1. Introduction to Agile

In February 2001, a group of 17 software experts gathered in Snowbird, Utah, to talk over the deplorable state of software development. At that time, most software was created using ineffective, heavyweight, high-ritual processes like Waterfall and overstuffed instances of the Rational Unified Process (RUP). The goal of these 17 experts was to create a manifesto that introduced a more effective, lighter-weight approach.

This was no mean feat. The 17 were people of varied experience and strong divergent opinions. Expecting such a group to come to consensus was a long shot. And yet, against all odds, consensus was reached, the Agile Manifesto was written, and one of the most potent and long-lived movements in the software field was born.

Movements in software follow a predictable path. At first there is a minority of enthusiastic supporters, another minority of enthusiastic detractors, and a vast majority of those who don’t care. Many movements die in, or at least never leave, that phase. Think of aspect-oriented programming, or logic programming, or CRC cards. Some, however, cross the chasm and become extraordinarily popular and controversial. Some even manage to leave the controversy behind and simply become part of the mainstream body of thought. Object Orientation (OO) is an example of the latter. And so is Agile.

Unfortunately, once a movement becomes popular, the name of that movement gets blurred through misunderstanding and usurpation. Products and methods having nothing to do with the actual movement will borrow the name to cash in on the name’s popularity and significance. And so it has been with Agile.

The purpose of this book, written nearly two decades after the Snowbird event, is to set the record straight. This book is an attempt to be as pragmatic as possible, describing Agile without nonsense and in no uncertain terms.

Presented here are the fundamentals of Agile. Many have embellished and extended these ideas—and there is nothing wrong with that. However, those extensions and embellishments are not Agile. They are Agile plus something else. What you will read here is what Agile is, what Agile was, and what Agile will inevitably always be.

History of Agile

When did Agile begin? Probably more than 50,000 years ago when humans first decided to collaborate on a common goal. The idea of choosing small intermediate goals and measuring the progress after each is just too intuitive, and too human, to be considered any kind of a revolution.

When did Agile begin in modern industry? It’s hard to say. I imagine the first steam engine, the first mill, the first internal combustion engine, and the first airplane were produced by techniques that we would now call Agile. That’s because taking small, measured steps is just too natural and human for it to have happened any other way.

So when did Agile begin in software? I wish I could have been a fly on the wall when Alan Turing was writing his 1936 paper.1 My guess is that the many “programs” he wrote in that paper were developed in small steps with plenty of desk checking. I also imagine that the first code he wrote for the Automatic Computing Engine, in 1946, was written in small steps, with lots of desk checking, and even some real testing.

1. Turing, A. M. 1936. On computable numbers, with an application to the Entscheidungsproblem [proof]. Proceedings of the London Mathematical Society, 2 (published 1937), 42(1):230–65. The best way to understand this paper is to read Charles Petzold’s masterpiece: Petzold, C. 2008. The Annotated Turing: A Guided Tour through Alan Turing’s Historic Paper on Computability and the Turing Machine. Indianapolis, IN: Wiley.

The early days of software are loaded with examples of behavior that we would now describe as Agile. For example, the programmers who wrote the control software for the Mercury space capsule worked in half-day steps that were punctuated by unit tests.

Much has been written elsewhere about this period. Craig Larman and Vic Basili wrote a history that is summarized on Ward Cunningham’s wiki,2 and also in Larman’s book, Agile & Iterative Development: A Manager’s Guide.3 But Agile was not the only game in town. Indeed, there was a competing methodology that had enjoyed considerable success in manufacturing and industry at large: Scientific Management.

2. Ward’s wiki, c2.com, is the original wiki: the first ever to have appeared on the internet. Long may it be served.

3. Larman, C. 2004. Agile & Iterative Development: A Manager’s Guide. Boston, MA: Addison-Wesley.

Scientific Management is a top-down, command-and-control approach. Managers use scientific techniques to ascertain the best procedures for accomplishing a goal and then direct all subordinates to follow their plan to the letter. In other words, there is big up-front planning followed by careful detailed implementation.

Scientific Management is probably as old as the pyramids, Stonehenge, or any of the other great works of ancient times, because it is impossible to believe that such works could have been created without it. Again, the idea of repeating a successful process is just too intuitive, and human, to be considered some kind of a revolution.

Scientific Management got its name from the works of Frederick Winslow Taylor in the 1880s. Taylor formalized and commercialized the approach and made his fortune as a management consultant. The technique was wildly successful and led to massive increases in efficiency and productivity during the decades that followed.

And so it was that in 1970 the software world was at the crossroads of these two opposing techniques. Pre-Agile (Agile before it was called “Agile”) took short reactive steps that were measured and refined in order to stagger, in a directed random walk, toward a good outcome. Scientific Management deferred action until a thorough analysis and a resulting detailed plan had been created. Pre-Agile worked well for projects that enjoyed a low cost of change and solved partially defined problems with informally specified goals. Scientific Management worked best for projects that suffered a high cost of change and solved very well-defined problems with extremely specific goals.

The question was, what kinds of projects were software projects? Were they high cost of change and well defined with specific goals, or were they low cost of change and partially defined with informal goals?

Don’t read too much into that last paragraph. Nobody, to my knowledge, actually asked that question. Ironically, the path we chose in the 1970s appears to have been driven more by accident than intent.

In 1970, Winston Royce wrote a paper4 that described his ideas for managing large-scale software projects. The paper contained a diagram (Figure 1.1) that depicted his plan. Royce was not the originator of this diagram, nor was he advocating it as a plan. Indeed, the diagram was set up as a straw man for him to knock down in the subsequent pages of his paper.

4. Royce, W. W. 1970. Managing the development of large software systems. Proceedings, IEEE WESCON, August: 1–9. Accessed at http://www-scf.usc.edu/~csci201/lectures/Lecture11/royce1970.pdf.

Nevertheless, the prominent placing of the diagram, and the tendency for people to infer the content of a paper from the diagram on the first or second page, led to a dramatic shift in the software industry.

Royce’s initial diagram looked so much like water flowing down a series of rocks that the technique became known as “Waterfall.”

Waterfall was the logical descendant of Scientific Management. It was all about doing a thorough analysis, making a detailed plan, and then executing that plan to completion.

Even though it was not what Royce was recommending, it was the concept people took away from his paper. And it dominated the next three decades.5

5. It should be noted that my interpretation of this timeline has been challenged in Chapter 7 of Bossavit, L. 2012. The Leprechauns of Software Engineering: How Folklore Turns into Fact and What to Do About It. Leanpub.

This is where I come into the story. In 1970, I was 18 years old, working as a programmer at a company named A. S. C. Tabulating in Lake Bluff, Illinois. The company had an IBM 360/30 with 16K of core, an IBM 360/40 with 64K of core, and a Varian 620/f minicomputer with 64K of core. I programmed the 360s in COBOL, PL/1, Fortran, and assembler. I wrote only assembler for the 620/f.

It’s important to remember what it was like being a programmer back in those days. We wrote our code on coding forms using pencils, and we had keypunch operators punch them onto cards for us. We submitted our carefully checked cards to computer operators who ran our compiles and tests during the third shift because the computers were too busy during the day doing real work. It often took days to get from the initial writing to the first compile, and each turnaround thereafter was usually one day.

The 620/f was a bit different for me. That machine was dedicated to our team, so we had 24/7 access to it. We could get two, three, perhaps even four turnarounds and tests per day. The team I was on was also composed of people who, unlike most programmers of the day, could type. So we would punch our own decks of cards rather than surrendering them to the vagaries of the keypunch operators.

What process did we use during those days? It certainly wasn’t Waterfall. We had no concept of following detailed plans. We just hacked away on a day-to-day basis, running compiles, testing our code, and fixing bugs. It was an endless loop that had no structure. It also wasn’t Agile, or even Pre-Agile. There was no discipline in the way we worked. There was no suite of tests and no measured time intervals. It was just code and fix, code and fix, day after day, month after month.

I first read about Waterfall in a trade journal sometime around 1972. It seemed like a godsend to me. Could it really be that we could analyze the problem up front, then design a solution to that problem, and then implement that design? Could we really develop a schedule based on those three phases? When we were done with analysis, would we really be one-third done with the project? I felt the power of the concept. I wanted to believe it. Because, if it worked, it was a dream come true.

Apparently I wasn’t alone, because many other programmers and programming shops caught the bug too. And, as I said before, Waterfall began to dominate the way we thought.

It dominated, but it didn’t work. For the next thirty years I, my associates, and my brother and sister programmers around the world, tried and tried and tried to get that analysis and design right. But every time we thought we had it, it slipped through our fingers during the implementation phase. All our months of careful planning were made irrelevant by the inevitable mad dash, made before the glaring eyes of managers and customers, to terribly delayed deadlines.

Despite the virtually unending stream of failures, we persisted in the Waterfall mindset. After all, how could this fail? How could thoroughly analyzing the problem, carefully designing a solution, and then implementing that design fail so spectacularly over and over again? It was inconceivable6 that the problem lay in that strategy. The problem had to lie with us. Somehow, we were doing it wrong.

6. Watch The Princess Bride (1987) to hear the proper inflection of that word.

The level to which the Waterfall mindset dominated us can be seen in the language of the day. When Dijkstra came up with Structured Programming in 1968, Structured Analysis7 and Structured Design8 were not far behind. When Object-Oriented Programming (OOP) started to become popular in 1988, Object-Oriented Analysis9 and Object-Oriented Design10 (OOD) were also not far behind. This triplet of memes, this triumvirate of phases, had us in its thrall. We simply could not conceive of a different way to work.

7. DeMarco, T. 1979. Structured Analysis and System Specification. Upper Saddle River, NJ: Yourdon Press.

8. Page-Jones, M. 1980. The Practical Guide to Structured Systems Design. Englewood Cliffs, NJ: Yourdon Press.

9. Coad, P., and E. Yourdon. 1990. Object-Oriented Analysis. Englewood Cliffs, NJ: Yourdon Press.

10. Booch, G. 1991. Object Oriented Design with Applications. Redwood City, CA: Benjamin-Cummings Publishing Co.

And then, suddenly, we could.

The Agile reformation began in the late 1980s or early 1990s. The Smalltalk community began showing signs of it in the ’80s. There were hints of it in Booch’s 1991 book on OOD.10 More resolution showed up in Cockburn’s Crystal Methods in 1991. The Design Patterns community started to discuss it in 1994, spurred by a paper written by James Coplien.11

11. Coplien, J. O. 1995. A generative development-process pattern language. Pattern Languages of Program Design. Reading, MA: Addison-Wesley, p. 183.

By 1995 Beedle,12 Devos, Sharon, Schwaber, and Sutherland had written their famous paper on Scrum.13 And the floodgates were opened. The bastion of Waterfall had been breached, and there was no turning back.

12. Mike Beedle was murdered on March 23, 2018, in Chicago by a mentally disturbed homeless man who had been arrested and released 99 times before. He should have been institutionalized. Mike Beedle was a friend of mine.

13. Beedle, M., M. Devos, Y. Sharon, K. Schwaber, and J. Sutherland. SCRUM: An extension pattern language for hyperproductive software development. Accessed at http://jeffsutherland.org/scrum/scrum_plop.pdf.

This, once again, is where I come into the story. What follows is from my memory, and I have not tried to verify it with the others involved. You should therefore assume that this recollection of mine has many omissions and contains much that is apocryphal, or at least wildly inaccurate. But Don’t Panic, because I’ve at least tried to keep it a bit entertaining.

I first met Kent Beck at that 1994 PLOP,14 where Coplien’s paper was presented. It was a casual meeting, and nothing much came of it. I met him next in February 1999 at the OOP conference in Munich. But by then I knew a lot more about him.

14. Pattern Languages of Programming was a conference held in the 1990s near the University of Illinois.

At the time, I was a C++ and OOD consultant, flying hither and yon helping folks to design and implement applications in C++ using OOD techniques. My customers began to ask me about process. They had heard that Waterfall didn’t mix with OO, and they wanted my advice. I agreed15 about the mixing of OO and Waterfall and had been giving this idea a lot of thought myself. I had even thought I might write my own OO process. Fortunately, I abandoned that effort early on because I had stumbled across Kent Beck’s writings on Extreme Programming (XP).

15. This is one of those strange coincidences that occur from time to time. There is nothing special about OO that makes it less likely to mix with Waterfall, and yet that meme was gaining a lot of traction in those days.

The more I read about XP, the more fascinated I was. The ideas were revolutionary (or so I thought at the time). They made sense, especially in an OO context (again, so I thought at the time). And so I was eager to learn more.

To my surprise, at that OOP conference in Munich, I found myself teaching across the hall from Kent Beck. I bumped into him during a break and said that we should meet for lunch to discuss XP. That lunch set the stage for a significant partnership. My discussions with him led me to fly out to his home in Medford, Oregon, to work with him to design a course about XP. During that visit, I got my first taste of Test-Driven Development (TDD) and was hooked.

At the time, I was running a company named Object Mentor. We partnered with Kent to offer a five-day boot camp course on XP that we called XP Immersion. From late 1999 until September 11, 2001,16 these courses were a big hit! We trained hundreds of folks.

16. The significance of that date should not be overlooked.

In the summer of 2000, Kent invited a quorum of folks from the XP and Patterns community to a meeting near his home. He called it the “XP Leadership” meeting. We rode boats on, and hiked the banks of, the Rogue River. And we met to decide just what we wanted to do about XP.

One idea was to create a nonprofit organization around XP. I was in favor of this, but many were not. They had apparently had an unfavorable experience with a similar group founded around the Design Patterns ideas. I left that session frustrated, but Martin Fowler followed me out and suggested that we meet later in Chicago to talk it out. I agreed.

So Martin and I met in the fall of 2000 at a coffee shop near the ThoughtWorks office where he worked. I described to him my idea to get all the competing lightweight process advocates together to form a manifesto of unity. Martin made several recommendations for an invitation list, and we collaborated on writing the invitation. I sent the invitation letter later that day. The subject was Light Weight Process Summit.

One of the invitees was Alistair Cockburn. He called me to say that he was just about to call a similar meeting, but that he liked our invitation list better than his. He offered to merge his list with ours and do the legwork to set up the meeting if we agreed to have the meeting at Snowbird ski resort, near Salt Lake City.

Thus, the meeting at Snowbird was scheduled.

Snowbird

I was quite surprised that so many people agreed to show up. I mean, who really wants to attend a meeting entitled, “The Light Weight Process Summit”? But here we all were, up in the Aspen room at the Lodge at Snowbird.

There were 17 of us. We have since been criticized for being 17 middle-aged white men. The criticism is fair up to a point. However, at least one woman, Agneta Jacobson, had been invited but could not attend. And, after all, the vast majority of senior programmers in the world, at the time, were middle-aged white men. The reasons for this are a story for a different time, and a different book.

The 17 of us represented quite a few different viewpoints, including 5 different lightweight processes. The largest cohort was the XP team: Kent Beck, myself, James Grenning, Ward Cunningham, and Ron Jeffries. Next came the Scrum team: Ken Schwaber, Mike Beedle, and Jeff Sutherland. Jon Kern represented Feature-Driven Development, and Arie van Bennekum represented the Dynamic Systems Development Method (DSDM). Finally, Alistair Cockburn represented his Crystal family of processes.

The rest of the folks were relatively unaffiliated. Andy Hunt and Dave Thomas were the Pragmatic Programmers. Brian Marick was a testing consultant. Jim Highsmith was a software management consultant. Steve Mellor was there to keep us honest because he was representing the Model-Driven philosophy, of which many of the rest of us were suspicious. And finally came Martin Fowler, who, although he had close personal connections to the XP team, was skeptical of any kind of branded process and sympathetic to all.

I don’t remember much about the two days that we met. Others who were there remember it differently than I do.17 So, I’ll just tell you what I remember, and I’ll advise you to take it as the nearly two-decade-old recollection of a 65-year-old man. I might miss a few details, but the gist is probably correct.

17. There was a recently published history of the event in The Atlantic: Mimbs Nyce, C. 2017. The winter getaway that turned the software world upside down. The Atlantic. Dec 8. Accessed at https://www.theatlantic.com/technology/archive/2017/12/agile-manifesto-a-history/547715/. As of this writing I have not read that article, because I don’t want it to pollute the recollection I am writing here.

It was agreed, somehow, that I would kick off the meeting. I thanked everyone for coming and suggested that our mission should be the creation of a manifesto that described what we believed to be in common about all these lightweight processes and software development in general. Then I sat down. I believe that was my sole contribution to the meeting.

We did the standard sort of thing where we wrote issues down on cards and then sorted the cards on the floor into affinity groupings. I don’t really know if that led anywhere. I just remember doing it.

I don’t remember whether the magic happened on the first day or on the second. It seems to me it was toward the end of the first day. It may have been the affinity groupings that identified the four values, which were Individuals and Interactions, Working Software, Customer Collaboration, and Responding to Change. Someone wrote these on the whiteboard at the front of the room and then had the brilliant idea to say that these are preferred to, but do not replace, the complementary values of processes, tools, documentation, contracts, and plans.

This is the central idea of the Agile Manifesto, and no one seems to remember clearly who first put it on the board. I seem to recall that it was Ward Cunningham. But Ward believes it was Martin Fowler.

Look at the picture on the agilemanifesto.org web page. Ward says that he took this picture to record that moment. It clearly shows Martin at the board with many of the rest of us gathered around.18 This lends credence to Ward’s notion that it was Martin who came up with the idea.

18. From left to right, in a semi-circle around Martin, that picture shows Dave Thomas, Andy Hunt (or perhaps Jon Kern), me (you can tell by the blue jeans and the Leatherman on my belt), Jim Highsmith, someone, Ron Jeffries, and James Grenning. There is someone sitting behind Ron, and on the floor by his shoe appears to be one of the cards we used in the affinity grouping.

On the other hand, perhaps it’s best that we never really know.

Once the magic happened, the whole group coalesced around it. There was some wordsmithing, and some tweaking and tuning. As I recall, it was Ward who wrote the preamble: “We are uncovering better ways of developing software by doing it and helping others do it.” Others of us made tiny alterations and suggestions, but it was clear that we were done. There was this feeling of closure in the room. No disagreement. No argument. Not even any real discussion of alternatives. Those four lines were it.

Individuals and interactions over processes and tools.

Working software over comprehensive documentation.

Customer collaboration over contract negotiation.

Responding to change over following a plan.

Did I say we were done? It felt like it. But of course, there were lots of details to figure out. For one thing, what were we going to call this thing that we had identified?

The name “Agile” was not a slam dunk. There were many different contenders. I happened to like “Light Weight,” but nobody else did. They thought it implied “inconsequential.” Others liked the word “Adaptive.” The word “Agile” was mentioned, and one person commented that it was currently a hot buzzword in the military. In the end, though nobody really loved the word “Agile,” it was just the best of a bunch of bad alternatives.

As the second day drew to a close, Ward volunteered to put up the agilemanifesto.org website. I believe it was his idea to have people sign it.

After Snowbird

The following two weeks were not nearly so romantic or eventful as those two days in Snowbird. They were mostly dominated by the hard work of hammering out the principles document that Ward eventually added to the website.

The idea to write this document was something we all agreed was necessary in order to explain and direct the four values. After all, the four values are the kinds of statements that everyone can agree with without actually changing anything about the way they work. The principles make it clear that those four values have consequences beyond their “Mom and apple pie” connotation.

I don’t have a lot of strong recollections about this period other than that we emailed the document containing the principles back and forth between each other and repeatedly wordsmithed it. It was a lot of hard work, but I think we all felt that it was worth the effort. With that done, we all went back to our normal jobs, activities, and lives. I presume most of us thought that the story would end there.

None of us expected the huge groundswell of support that followed. None of us anticipated just how consequential those two days had been. But lest I get a swelled head over having been a part of it, I continually remind myself that Alistair was on the verge of calling a similar meeting. And that makes me wonder how many others were also on the verge. So I content myself with the idea that the time was ripe, and that if the 17 of us hadn’t met on that mountain in Utah, some other group would have met somewhere else and come to a similar conclusion.

Agile Overview

How do you manage a software project? There have been many approaches over the years—most of them pretty bad. Hope and prayer are popular among those managers who believe that there are gods who govern the fate of software projects. Those who don’t have such faith often fall back on motivational techniques such as enforcing dates with whips, chains, boiling oil, and pictures of people climbing rocks and seagulls flying over the ocean.

These approaches almost universally lead to the characteristic symptoms of software mismanagement: Development teams who are always late despite working too much overtime. Teams who produce products of obviously poor quality that do not come close to meeting the needs of the customer.

The Iron Cross

The reason that these techniques fail so spectacularly is that the managers who use them do not understand the fundamental physics of software projects. This physics constrains all projects to obey an unassailable trade-off called the Iron Cross of project management. Good, fast, cheap, done: Pick any three you like. You can’t have the fourth. You can have a project that is good, fast, and cheap, but it won’t get done. You can have a project that is done, cheap, and fast, but it won’t be any good.

The reality is that a good project manager understands that these four attributes have coefficients. A good manager drives a project to be good enough, fast enough, cheap enough, and done as much as necessary. A good manager manages the coefficients on these attributes rather than demanding that all those coefficients be 100%. It is this kind of management that Agile strives to enable.

At this point I want to be sure that you understand that Agile is a framework that helps developers and managers execute this kind of pragmatic project management. However, such management is not made automatic, and there is no guarantee that managers will make appropriate decisions. Indeed, it is entirely possible to work within the Agile framework and still completely mismanage the project and drive it to failure.

Charts on the Wall

So how does Agile aid this kind of management? Agile provides data. An Agile development team produces just the kinds of data that managers need in order to make good decisions.

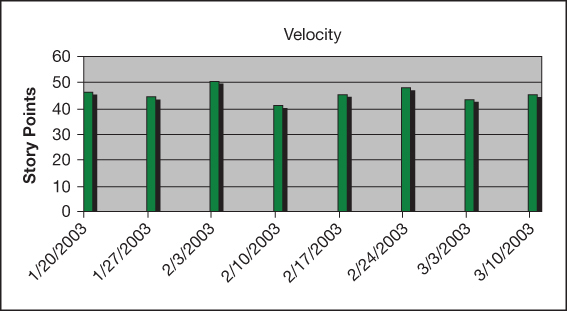

Consider Figure 1.2. Imagine that it is up on the wall in the project room. Wouldn’t this be great?

This graph shows how much the development team has gotten done every week. The measurement unit is “points.” We’ll talk about what those points are later. But just look at that graph. Anyone can glance at that graph and see how fast the team is moving. It takes less than ten seconds to see that the average velocity is about 45 points per week.

Anyone, even a manager, can predict that next week the team will get about 45 points done. Over the next ten weeks, they ought to get about 450 points done. That’s power! It’s especially powerful if the managers and the team have a good feel for the number of points in the project. In fact, good Agile teams capture that information on yet another graph on the wall.
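The arithmetic behind that prediction is simple enough to sketch. The Python below is a minimal illustration, not a prescribed tool: the function names are hypothetical, and the weekly point counts are made-up data chosen to average 45, not the values plotted in Figure 1.2. It assumes the naive model described above, in which the team keeps moving at its average pace.

```python
def average_velocity(weekly_points: list[int]) -> float:
    """Mean number of points completed per week (hypothetical helper)."""
    if not weekly_points:
        raise ValueError("need at least one week of data")
    return sum(weekly_points) / len(weekly_points)


def forecast_points(weekly_points: list[int], weeks_ahead: int) -> float:
    """Naive forecast: assume the team keeps its average pace."""
    return average_velocity(weekly_points) * weeks_ahead


# Hypothetical history of points completed per week.
history = [42, 47, 44, 46, 43, 48]
print(average_velocity(history))     # 45.0
print(forecast_points(history, 10))  # 450.0
```

A plain average is the simplest choice; a team might prefer a rolling average over the last few weeks so the forecast follows recent changes in pace.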

Figure 1.3 is called a burn-down chart. It shows how many points remain until the next major milestone. Notice how it declines each week. Note that each week it declines by fewer points than the velocity chart shows the team completing. This is because there is a constant background of new requirements and issues being discovered during development.

Notice that the burn-down chart has a slope that predicts when the milestone will probably be reached. Virtually anyone can walk into the room, look at these two charts, and come to the conclusion that the milestone will be reached in June at a rate of 45 points per week.
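Reading the milestone off the slope can also be sketched in a few lines. This is an illustration under stated assumptions: the readings and the date are hypothetical, the function names are made up, and the slope is taken from the first and last readings only (a least-squares fit would be a reasonable alternative). Because the burn-down already nets out newly discovered work, this rate is usually lower than the raw velocity.

```python
from __future__ import annotations

import math
from datetime import date, timedelta
from typing import Optional


def net_burn_rate(remaining_by_week: list[int]) -> float:
    """Average weekly drop in remaining points (the burn-down slope).

    Uses only the first and last readings, a simplifying assumption.
    """
    if len(remaining_by_week) < 2:
        raise ValueError("need at least two weekly readings")
    weeks = len(remaining_by_week) - 1
    return (remaining_by_week[0] - remaining_by_week[-1]) / weeks


def projected_milestone(remaining_by_week: list[int], last_reading: date) -> Optional[date]:
    """Date the remaining points reach zero if the slope holds, else None."""
    rate = net_burn_rate(remaining_by_week)
    if rate <= 0:
        return None  # the chart is flat or rising, so no projection
    weeks_left = math.ceil(remaining_by_week[-1] / rate)
    return last_reading + timedelta(weeks=weeks_left)


# Hypothetical weekly readings of points remaining until the milestone.
remaining = [600, 570, 545, 510, 485, 450]
print(net_burn_rate(remaining))                           # 30.0
print(projected_milestone(remaining, date(2024, 2, 26)))  # 2024-06-10
```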

Note that there is a glitch on the burn-down chart. The week of February 17 lost ground somehow. This might have been due to the addition of a new feature or some other major change to the requirements. Or it might have been the result of the developers re-estimating the remaining work. In either case, we want to know the effect on the schedule so that the project can be properly managed.
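Spotting such a glitch in the raw data is straightforward. The sketch below flags every week whose remaining-points reading rose relative to the week before. The readings are hypothetical (the February 17 jump only mirrors the example in the text), and the function name is made up for illustration. Deciding *why* a week lost ground, and what that does to the schedule, is still up to the people managing the project.

```python
from datetime import date


def weeks_that_lost_ground(readings: list[tuple[date, int]]) -> list[tuple[date, int]]:
    """Return (week, points added) for every week whose remaining-points
    reading is higher than the previous week's reading."""
    flagged = []
    for (_, prev), (week, curr) in zip(readings, readings[1:]):
        if curr > prev:
            flagged.append((week, curr - prev))
    return flagged


# Hypothetical burn-down readings: (week, points remaining).
readings = [
    (date(2024, 2, 3), 500),
    (date(2024, 2, 10), 470),
    (date(2024, 2, 17), 520),
    (date(2024, 2, 24), 490),
]
print(weeks_that_lost_ground(readings))
# [(datetime.date(2024, 2, 17), 50)]
```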

It is a critical goal of Agile to get those two charts on the wall. One of the driving motivations for Agile software development is to provide the data that managers need to decide how to set the coefficients on the Iron Cross and drive the project to the best possible outcome.

Many people would disagree with that last paragraph. After all, the charts aren’t mentioned in the Agile Manifesto, nor do all Agile teams use such charts. And, to be fair, it’s not actually the charts that matter. What matters is the data.

Agile development is first and foremost a feedback-driven approach. Each week, each day, each hour, and even each minute is driven by looking at the results of the previous week, day, hour, and minute, and then making the appropriate adjustments. This applies to individual programmers, and it also applies to the management of the entire team. Without data, the project cannot be managed.19

19. This is strongly related to John Boyd’s OODA loop, summarized here: https://en.wikipedia.org/wiki/OODA_loop. Boyd, J. R. 1987. A Discourse on Winning and Losing. Maxwell Air Force Base, AL: Air University Library, Document No. M-U 43947.

So even if you don’t get those two charts on the wall, make sure you get the data in front of managers. Make sure the managers know how fast the team is moving and how much the team has left to accomplish. And present this information in a transparent, public, and obvious fashion—like putting the two charts on the wall.

But why is this data so important? Is it possible to effectively manage a project without that data? For 30 years we tried. And this is how it went…

The First Thing You Know

What is the first thing you know about a project? Before you know the name of the project or any of the requirements, there is one piece of data that precedes all others. The Date, of course. And once The Date is chosen, The Date is frozen. There’s no point trying to negotiate The Date, because The Date was chosen for good business reasons. If The Date is September, it’s because there’s a trade show in September, or there’s a shareholders’ meeting in September, or our funding runs out in September. Whatever the reason, it’s a good business reason, and it’s not going to change just because some developers think they might not be able to make it.

At the same time, the requirements are wildly in flux and can never be frozen. This is because the customers don’t really know what they want. They sort of know what problem they want to solve, but translating that into the requirements of a system is never trivial. So the requirements are constantly being re-evaluated and re-thought. New features are added. Old features are removed. The UI changes form on a weekly if not daily basis.

This is the world of the software development team. It’s a world in which dates are frozen and requirements are continuously changing. And somehow in that context, the development team must drive the project to a good outcome.

The Meeting

The Waterfall model promised to give us a way to get our arms around this problem. To understand just how seductive and ineffective this was, I need to take you to The Meeting.

It is the first of May. The big boss calls us all into a conference room.

“We’ve got a new project,” the big boss says. “It’s got to be done November first. We don’t have any requirements yet. We’ll get them to you in the next couple of weeks.”

“Now, how long will it take you to do the analysis?”

We look at each other out of the corner of our eyes. No one is willing to speak. How do you answer a question like that? One of us murmurs: “But we don’t have any requirements yet.”

“Pretend you have the requirements!” hollers the big boss. “You know how this works. You’re all professionals. I don’t need an exact date. I just need something to put in the schedule. Keep in mind if it takes any more than two months we might as well not do this project.”

The words “Two months?” burble out of someone’s mouth, but the big boss takes it as an affirmation. “Good! That’s what I thought too. Now, how long will it take you to do the design?”

Again, astonished silence fills the room. You do the math. You realize that there are six months to November first. The conclusion is obvious. “Two months?” you say.

“Precisely!” the big boss beams. “Exactly what I thought. And that leaves us two months for the implementation. Thank you for coming to my meeting.”

Many of you reading this have been to that meeting. Those of you who haven’t, consider yourselves lucky.

The Analysis Phase

So we all leave the conference room and return to our offices. What are we doing? This is the start of the Analysis Phase, so we must be analyzing. But just what is this thing called analysis?

If you read books on software analysis you’ll find that there are as many definitions of analysis as there are authors. There is no real consensus on just what analysis is. It might be creating the work breakdown structure of the requirements. It might be the discovery and elaboration of the requirements. It might be the creation of the underlying data model, or object model, or… The best definition of analysis is: It’s what analysts do.

Of course, some things are obvious. We should be sizing the project and doing basic feasibility and human resources projections. We should be ensuring that the schedule is achievable. That is the least that our business would be expecting of us. Whatever this thing called analysis is, it’s what we are going to be doing for the next two months.

This is the honeymoon phase of the project. Everyone is happily surfing the web, doing a little day-trading, meeting with customers, meeting with users, drawing nice diagrams, and in general having a great time.

Then, on July 1, a miracle happens. We’re done with analysis.

Why are we done with analysis? Because it’s July 1. The schedule said we were supposed to be done on July 1, so we’re done on July 1. Why be late?

So we have a little party, with balloons and speeches, to celebrate our passage through the phase gate and our entry into the Design Phase.

The Design Phase

So now what are we doing? We are designing, of course. But just what is designing?

We have a bit more resolution about software design. Software design is where we split the project up into modules and design the interfaces between those modules. It’s also where we consider how many teams we need and what the connections between those teams ought to be. In general, we are expected to refine the schedule in order to produce a realistically achievable implementation plan.

Of course, things change unexpectedly during this phase. New features are added. Old features are removed or changed. And we’d love to go back and re-analyze these changes; but time is short. So we just sort of hack these changes into the design.

And then another miracle happens. It’s September 1, and we are done with the design. Why are we done with the design? Because it’s September 1. The schedule says we are supposed to be done, so why be late?

So: another party. Balloons and speeches. And we blast through the phase gate into the Implementation Phase.

If only we could pull this off one more time. If only we could just say we were done with implementation. But we can’t, because the thing about implementation is that it actually has to be done. Analysis and design are not binary deliverables. They do not have unambiguous completion criteria. There’s no real way to know that you are done with them. So we might as well be done on time.

The Implementation Phase

Implementation, on the other hand, has definite completion criteria. There’s no way to successfully pretend that it’s done.20

20. Though the developers of healthcare.gov certainly tried.

It’s completely unambiguous what we are doing during the Implementation Phase. We’re coding. And we’d better be coding like mad banshees too, because we’ve already blown four months of this project.

Meanwhile, the requirements are still changing on us. New features are being added. Old features are being removed or changed. We’d love to go back and re-analyze and re-design these changes, but we’ve only got weeks left. And so we hack, hack, hack these changes into the code.

As we look at the code and compare it to the design, we realize that we must have been smoking some pretty special stuff when we did that design because the code sure isn’t coming out anything like those nice pretty diagrams that we drew. But we don’t have time to worry about that because the clock is ticking and the overtime hours are mounting.

Then, sometime around October 15, someone says: “Hey, what’s the date? When is this due?” That’s the moment that we realize that there are only two weeks left and we’re never going to get this done by November 1. This is also the first time that the stakeholders are told that there might be a small problem with this project.

You can imagine the stakeholders’ angst. “Couldn’t you have told us this in the Analysis Phase? Isn’t that when you were supposed to be sizing the project and proving the feasibility of the schedule? Couldn’t you have told us this during the Design Phase? Isn’t that when you were supposed to be breaking up the design into modules, assigning those modules to teams, and doing the human resources projections? Why’d you have to tell us just two weeks before the deadline?”

And they have a point, don’t they?

The Death March Phase

So now we enter the Death March Phase of the project. Customers are angry. Stakeholders are angry. The pressure mounts. Overtime soars. People quit. It’s hell.

Sometime in March, we deliver some limping thing that sort of half does what the customers want. Everybody is upset. Everybody is demotivated. And we promise ourselves that we will never do another project like this. Next time we’ll do it right! Next time we’ll do more analysis and more design.

I call this Runaway Process Inflation. We’re going to do the thing that didn’t work, and do a lot more of it.

Hyperbole?

Clearly that story was hyperbolic. It grouped together into one place nearly every bad thing that has ever happened in any software project. Most Waterfall projects did not fail so spectacularly. Indeed some, through sheer luck, managed to conclude with a modicum of success. On the other hand, I have been to that meeting on more than one occasion, and I have worked on more than one such project, and I am not alone. The story may have been hyperbolic, but it was still real.

If you were to ask me how many Waterfall projects were actually as disastrous as the one described above, I’d have to say relatively few—on the other hand, it’s not zero, and it’s far too many. Moreover, the vast majority suffered similar problems to a lesser (or sometimes greater) degree.

Waterfall was not an absolute disaster. It did not crush every software project into rubble. But it was, and remains, a disastrous way to run a software project.

A Better Way

The thing about the Waterfall idea is that it just makes so much sense. First, we analyze the problem, then we design the solution, and then we implement the design.

Simple. Direct. Obvious. And wrong.

The Agile approach to a project is entirely different from what you just read, but it makes just as much sense. Indeed, as you read through this I think you’ll see that it makes much more sense than the three phases of Waterfall.

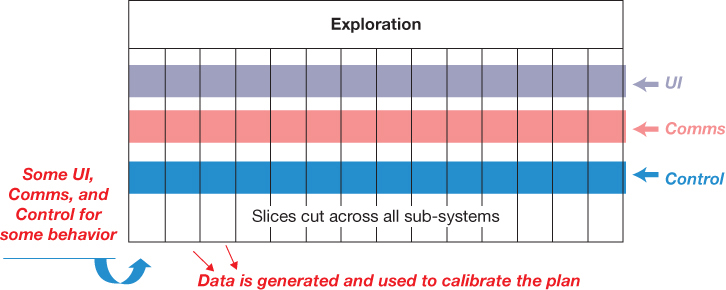

An Agile project begins with analysis, but it’s an analysis that never ends. In the diagram in Figure 1.4 we see the whole project. At the right is the end date, November 1. Remember, the first thing you know is the date. We subdivide that time into regular increments called iterations or sprints.21

21. Sprint is the term used in Scrum. I dislike the term because it implies running as fast as possible. A software project is a marathon, and you don’t want to sprint in a marathon.

The size of an iteration is typically one or two weeks. I prefer one week because too much can go wrong in two weeks. Other people prefer two weeks because they fear you can’t get enough done in one week.

Iteration Zero

The first iteration, sometimes known as Iteration Zero, is used to generate a short list of features, called stories. We’ll talk much more about this in future chapters. For now, just think of them as features that need to be developed. Iteration Zero is also used to set up the development environment, estimate the stories, and lay out the initial plan. That plan is simply a tentative allocation of stories to the first few iterations. Finally, Iteration Zero is used by the developers and architects to conjure up an initial tentative design for the system based on the tentative list of stories.

This process of writing stories, estimating them, planning them, and designing never stops. That’s why there is a horizontal bar across the entire project named Exploration. Every iteration in the project, from the beginning to the end, will have some analysis and design and implementation in it. In an Agile project, we are always analyzing and designing.

Some folks take this to mean that Agile is just a series of mini-Waterfalls. That is not the case. Iterations are not subdivided into three sections. Analysis is not done solely at the start of the iteration, nor is the end of the iteration solely implementation. Rather, the activities of requirements analysis, architecture, design, and implementation are continuous throughout the iteration.

If you find this confusing, don’t worry. Much more will be said about this in later chapters. Just keep in mind that iterations are not the smallest granule in an Agile project. There are many more levels. And analysis, design, and implementation occur in each of those levels. It’s turtles all the way down.

Agile Produces Data

Iteration one begins with an estimate of how many stories will be completed. The team then works for the duration of the iteration on completing those stories. We’ll talk later about what happens inside the iteration. For now, what do you think the odds are that the team will finish all the stories that they planned to finish?

Pretty much zero, of course. That’s because software is not a reliably estimable process. We programmers simply do not know how long things will take. This isn’t because we are incompetent or lazy; it’s because there is simply no way to know how complicated a task is going to be until that task is engaged and finished. But, as we’ll see, all is not lost.

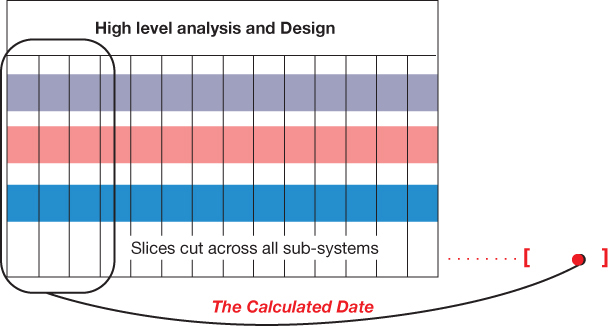

At the end of the iteration, some fraction of the stories that we had planned to finish will be done. This gives us our first measurement of how much can be completed in an iteration. This is real data. If we assume that every iteration will be similar, then we can use that data to adjust our original plan and calculate a new end date for the project (Figure 1.5).

This calculation is likely to be very disappointing. It will almost certainly exceed the original end date for the project by a significant factor. On the other hand, this new date is based upon real data, so it should not be ignored. It also can’t be taken too seriously yet because it is based on a single data point; the error bars around that projected date are pretty wide.
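The projection described above is simple arithmetic: divide the remaining work by the work completed per iteration and count forward from the start. The sketch below is a minimal illustration, assuming work is sized in points and that every future iteration matches the average measured so far. The function name, its parameters, and the sample figures (a May 1, 2023 start, one-week iterations, 260 planned points, 6 finished in the first iteration) are hypothetical.

```python
import math
from datetime import date, timedelta


def projected_end_date(start: date, iteration_days: int, total_points: float,
                       completed_points: float, iterations_done: int) -> date:
    """Project the finish date, assuming each future iteration completes the
    average amount of work measured so far (the team's velocity)."""
    if iterations_done < 1 or completed_points <= 0:
        raise ValueError("need at least one iteration with completed work")
    velocity = completed_points / iterations_done           # points per iteration
    iterations_left = math.ceil((total_points - completed_points) / velocity)
    total_iterations = iterations_done + iterations_left
    return start + timedelta(days=total_iterations * iteration_days)


# Hypothetical figures: 260 points planned for May 1 to November 1,
# one-week iterations, and only 6 points finished in the first iteration.
print(projected_end_date(date(2023, 5, 1), 7, 260, 6, 1))  # → 2024-03-04
```

With those made-up numbers the date slides from November 1 to early March. Because it rests on a single iteration, it is a warning sign rather than a forecast.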

To narrow those error bars, we should do two or three more iterations. As we do, we get more data on how many stories can be done in an iteration. We’ll find that this number varies from iteration to iteration but averages out to a relatively stable velocity. After four or five iterations, we’ll have a much better idea of when this project will be done (Figure 1.6).

As the iterations progress, the error bars shrink until there is no point in hoping that the original date has any chance of success.
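One way to put numbers on those error bars, offered as an illustrative assumption rather than a method the text prescribes, is to use the standard error of the mean velocity: the sample standard deviation divided by the square root of the number of iterations measured. Because that quantity tends to shrink as iterations accumulate, the projected range narrows. The function name, the remaining-work figure, and the sample velocities are hypothetical.

```python
import math
from statistics import mean, stdev


def projection_band(velocities: list[float], remaining: float) -> tuple[int, int, int]:
    """Return (optimistic, expected, pessimistic) iterations still needed.

    The band is the mean velocity plus or minus its standard error, so it
    narrows as more iterations are measured. At least two measurements are
    required, because a single data point says nothing about the spread.
    """
    if len(velocities) < 2:
        raise ValueError("need at least two iterations of velocity data")
    avg = mean(velocities)
    stderr = stdev(velocities) / math.sqrt(len(velocities))
    fast = avg + stderr
    slow = max(avg - stderr, 1e-9)  # guard against a zero or negative rate
    return (
        math.ceil(remaining / fast),
        math.ceil(remaining / avg),
        math.ceil(remaining / slow),
    )


# Hypothetical data: 170 points of work still remaining.
print(projection_band([6, 8], 170))        # → (22, 25, 29)
print(projection_band([6, 8, 6, 8], 170))  # → (23, 25, 27)
```

The expected value stays put while the spread tightens from seven iterations to four, which is the shrinking of error bars the text describes.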

Hope Versus Management

This loss of hope is a major goal of Agile. We practice Agile in order to destroy hope before that hope can kill the project.

Hope is the project killer. Hope is what makes a software team mislead managers about their true progress. When a manager asks a team, “How’s it going?” it is hope that answers: “Pretty good.” Hope is a very bad way to manage a software project. And Agile is a way to provide an early and continuous dose of cold, hard reality as a replacement for hope.

Some folks think that Agile is about going fast. It’s not. It’s never been about going fast. Agile is about knowing, as early as possible, just how screwed we are. The reason we want to know this as early as possible is so that we can manage the situation. You see, this is what managers do. Managers manage software projects by gathering data and then making the best decisions they can based on that data. Agile produces data. Agile produces lots of data. Managers use that data to drive the project to the best possible outcome.

The best possible outcome is not often the originally desired outcome. The best possible outcome may be very disappointing to the stakeholders who originally commissioned the project. But the best possible outcome is, by definition, the best they are going to get.

Managing the Iron Cross

So now we return to the Iron Cross of project management: good, fast, cheap, done. Given the data produced by the project, it’s time for the managers of that project to determine just how good, how fast, how cheap, and how done the project should be.

Managers do this by making changes to the scope, the schedule, the staff, and the quality.

Changing the Schedule

Let’s start with the schedule. Let’s ask the stakeholders if we can delay the project from November 1 to March 1. These conversations usually don’t go well. Remember, the date was chosen for good business reasons. Those business reasons probably haven’t changed. So a delay often means that the business is going to take a significant hit of some kind.

On the other hand, there are times when the business simply chooses the date for convenience. For example, maybe there is a trade show in November where they want to show off the project. Perhaps there’s another trade show in March that would be just as good. Remember, it’s still early. We’re only a few iterations into this project. We want to tell the stakeholders that our delivery date will be March before they buy the booth space at the November show.

Many years ago, I managed a group of software developers working on a project for a telephone company. In the midst of the project, it became clear that we were going to miss the expected delivery date by six months. We confronted the telephone company executives about this as early as we could. These executives had never had a software team tell them, early, that the schedule would be delayed. They gave us a standing ovation.

You should not expect this. But it did happen to us. Once.

Adding Staff

In general, the business is simply not willing to change the schedule. The date was chosen for good business reasons, and those reasons still hold. So let’s try to add staff. Everyone knows we can go twice as fast by doubling the staff.

Actually, this is exactly the opposite of the case. Brooks’ law22 states: Adding manpower to a late project makes it later.

22. Brooks, Jr., F. P. 1995 [1975]. The Mythical Man-Month. Reading, MA: Addison-Wesley. https://en.wikipedia.org/wiki/Brooks%27s_law.

What really happens is more like the diagram in Figure 1.7. The team is working along at a certain productivity. Then new staff is added. Productivity plummets for a few weeks as the new people suck the life out of the old people. Then, hopefully, the new people start to get smart enough to actually contribute. The gamble that managers make is that the area under that curve will be net positive. Of course you need enough time, and enough improvement, to make up for the initial loss.
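The gamble can be made concrete with a toy model. Everything in it is an assumption for illustration, not data: the original team delivers a fixed number of units per week, adding people at `hire_week` drops output to `dip_rate` for `ramp_weeks` weeks while the newcomers are brought up to speed, and afterward the larger team settles at `boosted_rate`. The function and all numbers are hypothetical.

```python
from typing import Optional


def cumulative_output(weeks: int, base_rate: float, hire_week: Optional[int] = None,
                      dip_rate: float = 0.0, ramp_weeks: int = 0,
                      boosted_rate: float = 0.0) -> float:
    """Total work delivered over `weeks` weeks under a toy staffing model."""
    total = 0.0
    for week in range(weeks):
        if hire_week is None or week < hire_week:
            total += base_rate        # original team, before any hiring
        elif week < hire_week + ramp_weeks:
            total += dip_rate         # newcomers being brought up to speed
        else:
            total += boosted_rate     # larger team after ramp-up
    return total


print(cumulative_output(26, 10))                          # no hire → 260.0
print(cumulative_output(26, 10, hire_week=4, dip_rate=5,
                        ramp_weeks=6, boosted_rate=13))   # early hire → 278.0
print(cumulative_output(26, 10, hire_week=16, dip_rate=5,
                        ramp_weeks=6, boosted_rate=13))   # late hire → 242.0
```

In this model the early hire pays off, while the identical hire made ten weeks before the deadline never recovers its initial loss, which is the point about needing enough time under the curve.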

Another factor, of course, is that adding staff is expensive. Often the budget simply cannot tolerate hiring new people. So, for the sake of this discussion, let’s assume that staff can’t be increased. That means quality is the next thing to change.

Decreasing Quality

Everyone knows that you can go much faster by producing crap. So, stop writing all those tests, stop doing all those code reviews, stop all that refactoring nonsense, and just code you devils, just code. Code 80 hours per week if necessary, but just code!

I’m sure you know that I’m going to tell you this is futile. Producing crap does not make you go faster; it makes you go slower. This is the lesson you learn after you’ve been a programmer for 20 or 30 years. There is no such thing as quick and dirty. Anything dirty is slow.

The only way to go fast is to go well.

So we’re going to take that quality knob and turn it up to 11. If we want to shorten our schedule, the only option is to increase quality.

Changing Scope

That leaves one last thing to change. Maybe, just maybe, some of the planned features don’t really need to be done by November 1. Let’s ask the stakeholders.

“Stakeholders, if you need all these features then it’s going to be March. If you absolutely have to have something by November then you’re going to have to take some features out.”

“We’re not taking anything out; we have to have it all! And we have to have it all by November first.”

“Ah, but you don’t understand. If you need it all, it’s going to take us till March to do it.”

“We need it all, and we need it all by November!”

This little argument will continue for a while because no one ever wants to give ground. But although the stakeholders have the moral high ground in this argument, the programmers have the data. And in any rational organization, the data will win.

If the organization is rational, then the stakeholders eventually bow their heads in acceptance and begin to scrutinize the plan. One by one, they will identify the features that they don’t absolutely need by November. This hurts, but what real choice does the rational organization have? And so the plan is adjusted. Some features are delayed.
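The data the programmers bring to this argument boils down to capacity: roughly the measured velocity times the number of iterations left before the date. A minimal sketch, assuming the stakeholders have already ranked the stories and that sizes are in points; the function name, story names, and numbers are hypothetical. It walks the list in priority order and keeps each story that still fits, so a small lower-priority story can slip in after a large one is deferred. Stopping at the first story that does not fit would be an equally reasonable policy.

```python
def fit_scope(stories: list[tuple[str, int]], velocity: float,
              iterations_left: int) -> tuple[list[str], list[str]]:
    """Split priority-ordered (name, points) stories into those that fit the
    remaining capacity and those that must be deferred past the date."""
    capacity = velocity * iterations_left
    keep: list[str] = []
    defer: list[str] = []
    for name, points in stories:
        if points <= capacity:
            keep.append(name)
            capacity -= points
        else:
            defer.append(name)
    return keep, defer


backlog = [("login", 8), ("reports", 20), ("export", 5), ("audit", 13)]
print(fit_scope(backlog, velocity=6, iterations_left=4))
# → (['login', 'export'], ['reports', 'audit'])
```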

Business Value Order

Of course, inevitably the stakeholders will find a feature that we have already implemented and then say, “It’s a real shame you did that one, we sure don’t need it.”

We never want to hear that again! So from now on, at the beginning of each iteration, we are going to ask the stakeholders which features to implement next. Yes, there are dependencies between the features, but we are programmers; we can deal with dependencies. One way or another we will implement the features in the order that the stakeholders ask.
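Dealing with dependencies while honoring the stakeholders’ order can be as simple as pulling each feature’s unmet prerequisites forward just before the feature itself. The sketch below does that with a depth-first walk, which is one choice among several and not a technique the text specifies; the function name, the feature names, and the dependency map are hypothetical.

```python
def build_order(priority: list[str], depends_on: dict[str, list[str]]) -> list[str]:
    """Follow the stakeholders' priority list, placing each feature's
    prerequisites immediately before it. Raises ValueError on a cycle."""
    order: list[str] = []
    placed: set[str] = set()
    in_progress: set[str] = set()

    def place(feature: str) -> None:
        if feature in placed:
            return
        if feature in in_progress:
            raise ValueError(f"dependency cycle involving {feature!r}")
        in_progress.add(feature)
        for prereq in depends_on.get(feature, []):
            place(prereq)             # build what this feature needs first
        in_progress.remove(feature)
        placed.add(feature)
        order.append(feature)

    for feature in priority:
        place(feature)
    return order


print(build_order(["search", "billing", "reports"], {"search": ["index"]}))
# → ['index', 'search', 'billing', 'reports']
```

A prerequisite that the stakeholders never asked for (here `index`) still gets built, but only at the moment a requested feature needs it.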

Here Endeth the Overview

What you have just read is the 20,000-foot view of Agile. A lot of details are missing, but this is the gist of it. Agile is a process wherein a project is subdivided into iterations. The output of each iteration is measured and used to continuously evaluate the schedule. Features are implemented in the order of business value so that the most valuable things are implemented first. Quality is kept as high as possible. The schedule is primarily managed by manipulating scope.

That’s Agile.

Circle of Life

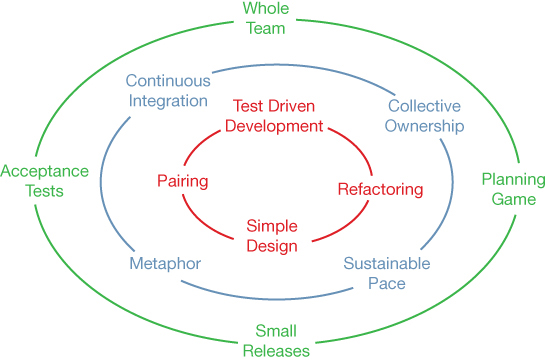

Figure 1.8 is Ron Jeffries’ diagram describing the practices of XP. This diagram is affectionately known as the Circle of Life.

Figure 1.8 The Circle of Life

I have chosen the practices of XP for this book because, of all the Agile processes, XP is the best defined, the most complete, and the least muddled. Virtually all other Agile processes are a subset of or a variation on XP. This is not to say that these other Agile processes should be discounted. You may, in fact, find them valuable for various projects. But if you want to understand what Agile is really all about, there is no better way than to study XP. XP is the prototype, and the best representative, of the essential core of Agile.

Kent Beck is the father of XP, and Ward Cunningham is the grandfather. The two of them, working together at Tektronix in the mid ’80s, explored many of the ideas that eventually became XP. Kent later refined those ideas into the concrete form that became XP, circa 1996. In 2000, Beck published the definitive work: Extreme Programming Explained: Embrace Change.23

23. Beck, K. 2000. Extreme Programming Explained: Embrace Change. Boston, MA: Addison-Wesley. There is a second edition with a 2005 copyright, but the first edition is my favorite and is the version that I consider to be definitive. Kent may disagree.

The Circle of Life is subdivided into three rings. The outer ring shows the business-facing practices of XP. This ring is essentially the equivalent of the Scrum24 process. These practices provide the framework for the way the software development team communicates with the business and the principles by which the business and development team manage the project.

24. Or at least as Scrum was originally conceived. Nowadays, Scrum has absorbed many more of the XP practices.

The Planning Game practice plays the central role of this ring. It tells us how to break down a project into features, stories, and tasks. It provides guidance for the estimation, prioritization, and scheduling of those features, stories, and tasks.

Small Releases guides the team to work in bite-sized chunks.

Acceptance Tests provide the definition of “done” for features, stories, and tasks. They show the team how to lay down unambiguous completion criteria.

Whole Team conveys the notion that a software development team is composed of many different functions, including programmers, testers, and managers, who all work together toward the same goal.

The middle ring of the Circle of Life presents the team-facing practices. These practices provide the framework and principles by which the development team communicates with, and manages, itself.

Sustainable Pace is the practice that keeps a development team from burning their resources too quickly and running out of steam before the finish line.

Collective Ownership ensures that the team does not divide the project into a set of knowledge silos.

Continuous Integration keeps the team focused on closing the feedback loop frequently enough to know where they are at all times.

Metaphor is the practice that creates and promulgates the vocabulary and language that the team and the business use to communicate about the system.

The innermost ring of the Circle of Life represents the technical practices that guide and constrain the programmers to ensure the highest technical quality.

Pairing is the practice that keeps the technical team sharing knowledge, reviewing, and collaborating at a level that drives innovation and accuracy.

Simple Design is the practice that guides the team to prevent wasted effort.

Refactoring encourages continual improvement and refinement of all work products.

Test Driven Development is the safety line that the technical team uses to go quickly while maintaining the highest quality.

These practices align very closely with the goals of the Agile Manifesto in at least the following ways:

Individuals and interactions, over processes and tools

Whole Team, Metaphor, Collective Ownership, Pairing, Sustainable Pace

Working software over comprehensive documentation

Acceptance Tests, Test Driven Development, Simple Design, Refactoring, Continuous Integration

Customer collaboration over contract negotiation

Small Releases, Planning Game, Acceptance Tests, Metaphor

Responding to change over following a plan

Small Releases, Planning Game, Sustainable Pace, Test Driven Development, Refactoring, Acceptance Tests

But, as we’ll see as this book progresses, the linkages between the Circle of Life and the Agile Manifesto are much deeper and more subtle than the simple preceding model.

Conclusion

So that’s what Agile is, and that’s how Agile began. Agile is a small discipline that helps small software teams manage small projects. But despite all that smallness, the implications and repercussions of Agile are enormous because all big projects are, after all, made from many small projects.

With every day that passes, software is ever more insinuated into the daily lives of a vast and growing subset of our population. It is not too extreme to say that software rules the world. But if software rules the world, it is Agile that best enables the development of that software.