CHAPTER 4

OS Hardening and Virtualization

This chapter covers the following subjects:

Hardening Operating Systems: This section details what you need to know to make your operating system strong as steel. Patches and hotfixes are vital. Reducing the attack surface is just as important, which can be done by disabling unnecessary services and uninstalling extraneous programs. Group policies, security templates, and baselining put on the finishing touches to attain that “bulletproof” system.

Virtualization Technology: This section delves into virtual machines and other virtual implementations with an eye on applying real-world virtualization scenarios.

Imagine a computer with a freshly installed server operating system (OS) placed on the Internet or in a DMZ that went live without any updating, service packs, or hotfixes. How long do you think it would take for this computer to be compromised? A week? Sooner? It depends on the size and popularity of the organization, but it won’t take long for a nonhardened server to be compromised. And it’s not just servers! Workstations, routers, switches: You name it; they all need to be updated regularly, or they will fall victim to attack. By updating systems frequently and by employing other methods such as group policies and baselining, we are hardening the system, making it tough enough to withstand the pounding that it will probably take from today’s technology...and society.

Another way to create a secure environment is to run operating systems virtually. Virtual systems allow for a high degree of security, portability, and ease of use. However, they are resource-intensive, so a balance needs to be found, and virtualization needs to be used according to the level of resources in an organization. Of course, these systems need to be maintained and updated (hardened) as well.

By utilizing virtualization properly and by implementing an intelligent update plan, operating systems and the relationships between operating systems can be more secure and last a long time.

Foundation Topics

Hardening Operating Systems

An operating system that has been installed out-of-the-box is inherently insecure. This can be attributed to several things, including initial code issues and backdoors, the age of the product, and the fact that most systems start off with a basic and insecure set of rules and policies. How many times have you heard of a default OS installation where the controlling user account was easily accessible and had a basic password, or no password at all? Although these types of oversights are constantly being improved upon, making an out-of-the-box experience more pleasant, new applications and new technologies offer new security implications as well. So regardless of the product, we must try to protect it after the installation is complete.

Hardening of the OS is the act of configuring an OS securely, updating it, creating rules and policies to help govern the system in a secure manner, and removing unnecessary applications and services. This is done to minimize OS exposure to threats and to mitigate possible risk.

Quick tip: There is no such thing as a “bulletproof” system as I alluded to in the beginning of the chapter. That’s why I placed the term in quotation marks. Remember, no system can ever truly be 100% secure. So, although it is impossible to reduce risk to zero, I’ll show some methods that can enable you to diminish current and future risk to an acceptable level.

This section demonstrates how to harden the OS through the use of patches and patch management, hotfixes, group policies, security templates, and configuration baselines. We then discuss a little bit about how to secure the file system and hard drives. But first, let’s discuss how to analyze the system and decide which applications and services are unnecessary, and then remove them.

Removing Unnecessary Applications and Services

Unnecessary applications and services use valuable hard drive space and processing power. More importantly, they can introduce vulnerabilities into an operating system. That’s why many organizations implement the concept of least functionality. This is when an organization configures computers and other information systems to provide only the essential functions. Using this method, a security administrator will restrict applications, services, ports, and protocols. This control, called CM-7, is described in more detail by NIST at the following link:

https://nvd.nist.gov/800-53/Rev4/control/CM-7

The United States Department of Defense describes this concept in DoD instruction 8551.01:

http://www.dtic.mil/whs/directives/corres/pdf/855101p.pdf

It’s this mindset that can help protect systems from threats that are aimed at insecure applications and services. For example, instant messaging programs can be dangerous. They might be fun for the user but usually are not productive in the workplace (to put it nicely); and from a security viewpoint, they often have backdoors that are easily accessible to attackers. Unless they are required by tech support, they should be discouraged and/or disallowed by rules and policies. Be proactive when it comes to these types of programs. If a user can’t install an IM program to a computer, then you will never have to remove it from that system. However, if you do have to remove an application like this, be sure to remove all traces that it ever existed. That is just one example of many, but it can be applied to most superfluous programs.

Another group of programs you should watch out for are remote control programs. Applications that enable remote control of a computer should be avoided if possible. For example, Remote Desktop Connection is a commonly used Windows-based remote control program. By default, this program uses inbound port 3389, which is well known to attackers—an obvious security threat. Consider using a different port if the program is necessary, and if not, make sure that the program’s associated service is turned off and disabled. Check if any related services need to be disabled as well. Then verify that their inbound ports are no longer functional, and that they are closed and secured. Confirm that any shares created by an application are disabled as well. Basically, remove all instances of the application or, if necessary, re-image the computer!
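The "verify that their inbound ports are no longer functional" step can be checked from another machine on the network. Here is a minimal sketch in Python, assuming you are authorized to probe the host; the function name is my own, and the address in the usage note is a placeholder from the documentation range, not a real system.

```python
import socket


def is_port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection performs the full TCP handshake, then we close it.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, unreachable hosts, and timeouts.
        return False
```

For example, is_port_open("192.0.2.10", 3389) should return False after Remote Desktop has been disabled on that (hypothetical) host. A firewall that silently drops packets will also produce False, so treat the result as one piece of evidence rather than proof that the service is gone.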

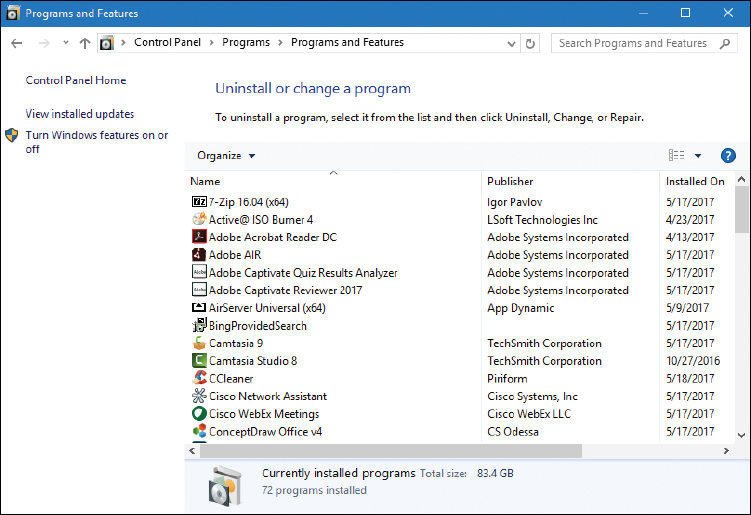

Personally, I use a lot of programs. But over time, some of them fall by the wayside and are replaced by better or newer programs. The best procedure is to check a system periodically for any unnecessary programs. For example, in Windows we can look at the list of installed programs by going to the Control Panel > Programs > Programs and Features, as shown in Figure 4-1. You can see a whopping 83.4 GB of installed programs, and the system in the figure is only a year old.

Notice in the figure that Camtasia Studio 8 and Camtasia 9 are installed. Because version 8 is an older version, a person might consider removing it. This can be done by right-clicking the application and selecting Uninstall. However, you must consider wisely. In this case, the program is required for backward compatibility because some of the older projects cannot be upgraded to a newer version of the program.

Programs such as this can use up valuable hard drive space, so if they are not necessary, it makes sense to remove them. This becomes more important when you deal with audio/video departments that use big applications such as Camtasia, Pro Tools, or Premiere Pro. The applications are always battling for hard drive space, and it can get ugly! Not only that, but many applications place a piece of themselves in the Notification Area in Windows. So, a part of the program is actually running behind the scenes using processor/RAM resources. If the application is necessary, there are often ways to eliminate it from the Notification Area, either by right-clicking it and accessing its properties, or by turning it off with a configuration program such as the Task Manager or Msconfig.

Consider that an app like this might also attempt to communicate with the Internet to download updates, or for other reasons. This makes the issue not only a resource problem but also a security concern, so the app should be removed if it is unused. Only software deemed necessary should be installed in the future.

Now, uninstalling applications on a few computers is feasible, but what if you have a larger network? Say, one with 1,000 computers? You can’t expect yourself or your computer techs to go to each and every computer locally and remove applications. That’s when centrally administered management systems come into play. Examples of these include Microsoft’s System Center Configuration Manager (SCCM), and the variety of mobile device management (MDM) suites available. These programs allow a security administrator to manage lots of computers’ software, configurations, and policies, all from a single workstation.

Of course, it can still be difficult to remove all the superfluous applications from every end-user computer on the network. What’s important to realize here is that applications are at their most dangerous when actually being used by a person. Given this mindset, you should consider the concept of application whitelisting and blacklisting. Application whitelisting, as mentioned in Chapter 3, “Computer Systems Security Part II,” is when you set a policy that allows only certain applications to run on client computers (such as Microsoft Word and a secure web browser). Any other application will be denied to the user. This works well in that it eliminates any possibility (excluding hacking) of another program being opened by an end user, but it can cause productivity problems. When an end user really needs another application, an exception would have to be made to the rule for that user, which takes time, and possibly permission from management. Application blacklisting, on the other hand, is when individual applications are disallowed. This can be a more useful (and more efficient) solution if your end users work with, and frequently add, a lot of applications. In this scenario, an individual application (say a social media or chat program) is disabled across the network. This and whitelisting are often performed from centralized management systems mentioned previously, and through the use of policies, which we discuss more later in this chapter (and later in the book).
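The difference between the two approaches comes down to what happens to an application that is not on the list. The following Python sketch illustrates only that decision logic; the names Mode and may_run and the example executables are hypothetical, and real products enforce this through policy rather than a script.

```python
from enum import Enum


class Mode(Enum):
    WHITELIST = "whitelist"   # only listed applications may run
    BLACKLIST = "blacklist"   # everything may run except listed applications


def may_run(app, mode, listed):
    """Decide whether an executable name is permitted under the given mode."""
    name = app.lower()  # compare case-insensitively, as Windows file names are
    if mode is Mode.WHITELIST:
        return name in listed
    return name not in listed
```

With a whitelist of {"winword.exe", "firefox.exe"}, a call such as may_run("chat.exe", Mode.WHITELIST, ...) is denied by default. With a blacklist of {"chat.exe"}, the same program is denied but every unlisted program is allowed, which is why blacklisting is easier to live with and weaker as a control.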

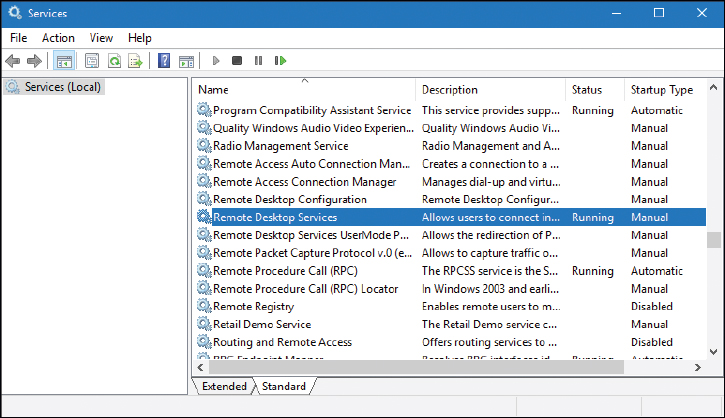

Removing applications is a great way to reduce the attack surface and increase performance on computers. But the underlying services are even more important. Services are used by applications and the OS. They, too, can be a burden on system resources and pose security concerns. Examine Figure 4-2 and note the highlighted service.

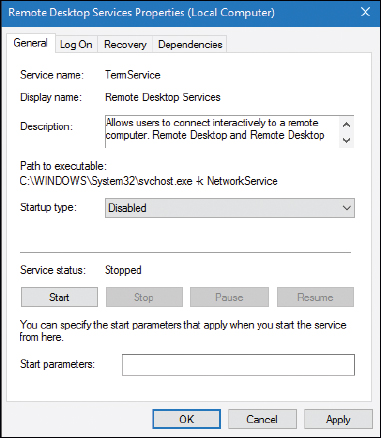

The highlighted service is Remote Desktop Services. You can see in the figure that it is currently running. If it is not necessary, we would want to stop it and disable it. To do so, just right-click the service, select Properties, click the Stop button, click Apply, and change the Startup Type drop-down menu to the Disabled option, as shown in Figure 4-3.

This should be done for all unnecessary services. By disabling services such as this one, we can reduce the risk of unwanted access to the computer and we trim the amount of resources used. This is especially important on Windows servers, because they run a lot more services and are a more common target. By disabling unnecessary services, we reduce the size of the attack surface.
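Deciding which services are "unnecessary" is easier if you keep an approved list and compare it against what is actually running. Here is a small Python sketch of that comparison. The approved list is a made-up example, not a recommended baseline; TermService and TlntSvr are the Windows service names for Remote Desktop Services and Telnet.

```python
def services_to_disable(running, approved):
    """Return running services that are not on the approved list, sorted."""
    return sorted(set(running) - set(approved))


# Hypothetical inventory from one server and a hypothetical approved list.
running_now = ["mpssvc", "TermService", "TlntSvr", "wuauserv"]
approved = ["mpssvc", "wuauserv"]

candidates = services_to_disable(running_now, approved)
```

In this example, candidates holds TermService and TlntSvr. Each candidate should still be reviewed by a person before it is stopped, because some services are dependencies of others.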

Note

Even though it is deprecated, you might see Windows XP running here and there. A system such as this might have the Telnet service running, which is a serious vulnerability. Normally, I wouldn’t use Windows XP as an example given its age (and the fact that Microsoft will not support it anymore), but in this case I must because of the insecure nature of Telnet and the numerous systems that will probably continue to run Windows XP for some time—regardless of the multitude of warnings. Always remember to stop and disable Telnet if you see it. Then, replace it with a secure program/protocol such as Secure Shell (SSH). Finally, consider updating to a newer operating system!

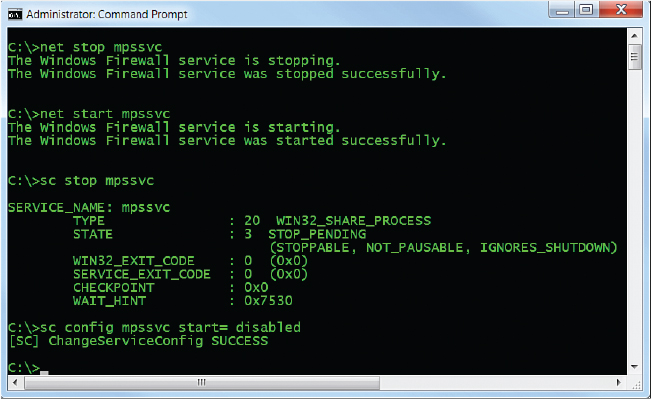

Services can be started and stopped in the Windows Command Prompt with the net start and net stop commands, as well as by using the sc command. Examples of this are shown in Figure 4-4.

Note

You will need to run the Command Prompt in elevated mode (as an administrator) to execute these commands.

In Figure 4-4 we have stopped and started the Windows Firewall service (which uses the service name mpssvc) by invoking the net stop and net start commands. Then, we used the sc command to stop the same service with the sc stop mpssvc syntax. It shows that the service stoppage was pending, but as you can see from Figure 4-5, it indeed stopped (and right away, I might add). Finally, we used a derivative of the sc command to disable the service, so that it won’t start again when the system is restarted. This syntax is

sc config mpssvc start= disabled

Note that there is a space after the equal sign, which is necessary for the command to work properly. Figure 4-5 shows the GUI representation of the Windows Firewall service.

You can see in the figure that the service is disabled and stopped. This is a good place to find out the name of a service if you are not sure. Or, you could use the sc query command.
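If you need to check the state of a service on many systems, the text that sc query prints can be parsed by a script. The sketch below is in Python and assumes English-language output in the usual layout; the sample text is illustrative and was typed in for this example rather than captured from a live system.

```python
import re

# Illustrative excerpt of what "sc query mpssvc" prints on an English system.
SAMPLE = """\
SERVICE_NAME: mpssvc
        TYPE               : 20  WIN32_SHARE_PROCESS
        STATE              : 1  STOPPED
        WIN32_EXIT_CODE    : 0  (0x0)
"""


def service_state(sc_output):
    """Return the state word (such as STOPPED or RUNNING), or None if absent."""
    match = re.search(r"^\s*STATE\s*:\s*\d+\s+(\w+)", sc_output, re.MULTILINE)
    return match.group(1) if match else None
```

On a real system the input could come from subprocess.run(["sc", "query", "mpssvc"], capture_output=True, text=True).stdout, run from an elevated prompt where required.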

In Linux, you can start, stop, and restart services in a variety of ways. Because there are many variants of Linux, how you perform these actions varies in the different GUIs that are available. So, in this book I usually stick with the command-line, which is generally the same across the board. You’ll probably want to display a list of services (and their status) in the command-line first. For example, in Ubuntu you can do this by typing the following:

service --status-all

For a list of upstart jobs with their status, use the following syntax:

initctl list

Note

In Linux, if you are not logged in as an administrator (and even sometimes if you are), you will need to type sudo before a command, and be prepared to enter an administrator password. Be ready to use sudo often.

Services can be stopped in the Linux command-line in a few ways:

By typing the following syntax:

/etc/init.d/<service> stop

where <service> is the service name. For example, if you are running an Apache web server, you would type the following:

/etc/init.d/apache2 stop

Services can also be started and restarted by replacing stop with start or restart.

By typing the following syntax in select versions:

service <service> stop

Some services require different syntax. For example, Telnet can be deactivated in Red Hat by typing chkconfig telnet off. Check the man pages within the command-line or online for your particular version of Linux to obtain exact syntax and any previous commands that need to be issued. Or use a generic Linux online man page; for example: http://linux.die.net/man/1/telnet.

In macOS/OS X Server, services can be stopped in the command line by using the following syntax:

sudo serveradmin stop <service>

However, this may not work on macOS or OS X client. For example, in 10.9 Mavericks you would simply quit processes either by using the Activity Monitor or by using the kill command in the Terminal.

Note

Ending the underlying process is sometimes necessary in an OS when the service can’t be stopped. To end processes in Windows, use the Task Manager or the taskkill command. The taskkill command can be used in conjunction with the executable name of the task or the process ID (PID) number. Numbers associated with processes can be found with the tasklist command. In Linux, use the kill command to end an individual process. To find out all process IDs currently running, use the syntax ps aux | less. (Press q to exit less; Ctrl+C interrupts most other running commands.) On mobile devices, the equivalent would be a force quit.
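Finding the PID is the step that tends to slow people down. In Windows, tasklist /FO CSV prints the process list in comma-separated form, which is easy to search from a script. The Python sketch below assumes the English column headings; the sample rows and PIDs are invented for illustration.

```python
import csv
import io

# Illustrative excerpt of "tasklist /FO CSV" output; the rows are invented.
SAMPLE = '''"Image Name","PID","Session Name","Session#","Mem Usage"
"System Idle Process","0","Services","0","8 K"
"notepad.exe","4321","Console","1","12,340 K"
"notepad.exe","5550","Console","1","11,020 K"
'''


def pids_for_image(tasklist_csv, image):
    """Return the PIDs of every process whose image name matches (any case)."""
    reader = csv.DictReader(io.StringIO(tasklist_csv))
    return [int(row["PID"]) for row in reader
            if row["Image Name"].lower() == image.lower()]
```

Each PID returned could then be passed to taskkill /PID followed by the number. The same idea applies in Linux by reading the PID column of ps aux before calling kill.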

Let’s not forget about mobile devices. You might need to force stop or completely uninstall apps from Android or iOS. Be ready to do so and lock them down from a centralized MDM.

Table 4-1 summarizes the various ways to stop services in operating systems.

Table 4-1 Summary of Ways to Stop Services

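| Operating System | Procedure to Stop Service |
| --- | --- |
| Windows | Access the Services console (services.msc) from the Run prompt. Use the net stop <service> command in the Command Prompt. Use the sc stop <service> command in the Command Prompt. |
| Linux | Use the syntax /etc/init.d/<service> stop. Use the syntax service <service> stop (in select versions). Use the syntax chkconfig <service> off (in select versions). |
| macOS/OS X | Use the kill command to end the process (or sudo serveradmin stop <service> on macOS/OS X Server). |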
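If you administer a mix of operating systems, the stop commands covered in this section can be kept in a simple lookup so that a script picks the right one. This Python sketch only builds the command; it does not run anything. The dictionary keys are labels I chose for the example, and only one command per OS is included.

```python
# One stop command per OS family, taken from the commands in this section.
STOP_COMMANDS = {
    "windows": ["net", "stop", "{service}"],
    "linux": ["service", "{service}", "stop"],
    "macos-server": ["sudo", "serveradmin", "stop", "{service}"],
}


def stop_command(os_name, service):
    """Return the argument list that stops a service on the given OS family."""
    template = STOP_COMMANDS[os_name.lower()]  # KeyError for unknown OS names
    return [part.format(service=service) for part in template]
```

For example, stop_command("linux", "apache2") returns the three arguments service, apache2, and stop, which could be handed to subprocess.run on that system with the appropriate privileges.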

Windows Update, Patches, and Hotfixes

To be considered secure, operating systems should have support for multilevel security, and be able to meet government requirements. An operating system that meets these criteria is known as a Trusted Operating System (TOS). Examples of certified Trusted Operating Systems include Windows 7, OS X 10.6, FreeBSD (with the TrustedBSD extensions), and Red Hat Enterprise Server. To be considered a TOS, the manufacturer of the system must have strong policies concerning updates and patching.

Even without being a TOS, operating systems should be updated regularly. For example, Microsoft recognizes the deficiencies in an OS, and possible exploits that could occur, and releases patches to increase OS performance and protect the system.

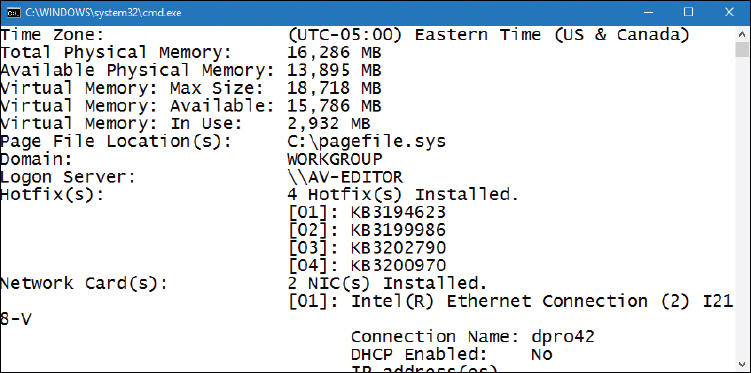

Before updating, the first thing to do is to find out the version number, build number, and the patch level. For example, in Windows you can find out this information by opening the System Information tool (open the Run prompt and type msinfo32.exe). It will be listed directly in the System Summary. You could also use the winver command. In addition, you can use the systeminfo command in the Command Prompt (a great information gatherer!) or simply the ver command.
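When you have to collect this information from several kinds of systems, a scripting language can report it in one consistent form. Here is a minimal Python sketch using the standard platform module. The function name is my own, and the exact strings returned vary by OS, so they should be compared against your organization's policy by a person or by a separate check.

```python
import platform


def os_summary():
    """Collect the OS name, release, and version string for this machine."""
    return {
        "system": platform.system(),    # for example "Windows" or "Linux"
        "release": platform.release(),  # for example "10" on Windows 10
        "version": platform.version(),  # build or kernel version string
    }


if __name__ == "__main__":
    for key, value in os_summary().items():
        print(f"{key}: {value}")
```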

Then, you should check your organization’s policies to see what level a system should be updated to. You should also test updates and patches on systems located on a clean, dedicated, testing network before going live with an update.

Windows uses the Windows Update program to manage updates to the system. Versions before Windows 10 include this feature in the Control Panel. It can also be accessed by typing wuapp.exe in the Run prompt. In Windows 10 it is located in the Settings section, or can be opened by typing ms-settings:windowsupdate in the Run prompt.

Updates are divided into different categories:

Security update: A broadly released fix for a product-specific security-related vulnerability. Security vulnerabilities are rated based on their severity, which is indicated in the Microsoft Security Bulletin as critical, important, moderate, or low.

Critical update: A broadly released fix for a specific problem addressing a critical, non-security–related bug.

Service pack: A tested, cumulative set of hotfixes, security updates, critical updates, and updates, as well as additional fixes for problems found internally since the release of the product. Service packs might also contain a limited number of customer-requested design changes or features. Note that Windows 7 and Windows Server 2008 R2 are the last of the Microsoft operating systems to use service packs. If possible, the testing of service packs should be done offline (with physical media). Disconnect the computer from the network by disabling the network adapter before initiating the SP upgrade.

Windows update: Recommended update to fix a noncritical problem certain users might encounter; also adds features and updates to features bundled into Windows.

Driver update: Updated device driver for installed hardware. It’s important to verify that any drivers installed will be compatible with a system. A security administrator should be aware of the potential to modify drivers through the use of driver shimming (the adding of a small library that intercepts API calls) and driver refactoring (the restructuring of driver code). By default, shims are not supposed to be used to resolve compatibility issues with device drivers, but it’s impossible to foresee the types of malicious code that may present itself in the future. So, a security administrator should be careful when updating drivers by making sure that the drivers are signed properly and first testing them on a closed system.

There are various options for the installation of Windows updates, including automatic install, defer updates to a future date, download with option to install, manual check for updates, and never check for updates. The types available to you will differ depending on the version of Windows. Automatic install is usually frowned upon by businesses, especially in the enterprise. Generally, you as the administrator want to specify what is updated, when it is updated, and to which computers it is updated. This eliminates problems such as patch level mismatch and network usage issues.

In some cases, your organization might opt to turn off Windows Update altogether. Depending on your version of Windows, this may or may not be possible within the Windows Update program. However, your organization might go a step further and specify that the Windows Update service be stopped and disabled, thereby disabling updates. This can be done in the Services console window (Run > services.msc) or from the Command Prompt with one of the methods discussed earlier in the chapter—the service name for Windows Update is wuauserv.

Patches and Hotfixes

The best place to obtain patches and hotfixes is from the manufacturer’s website. The terms patches and hotfixes are often used interchangeably. Windows updates are made up of hotfixes. Originally, a hotfix was defined as a single problem-fixing patch to an individual OS or application installed live while the system was up and running and without needing a reboot. However, this term has changed over time and varies from vendor to vendor. (Vendors may even use both terms to describe the same thing.) For example, if you run the systeminfo command in the Command Prompt of a Windows computer, you see a list of hotfixes similar to Figure 4-6. They can be identified with the letters KB followed by a number (typically six or seven digits). Hotfixes can be single patches to individual applications, or might affect the entire system.
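Because the hotfix identifiers follow a predictable pattern, they are easy to pull out of systeminfo output with a script. The Python sketch below assumes identifiers of six or seven digits after the letters KB; the sample text and the KB numbers in it are placeholders for illustration only.

```python
import re

# Illustrative excerpt of the hotfix portion of systeminfo output.
SAMPLE = """\
Hotfix(s):                 3 Hotfix(s) Installed.
                           [01]: KB4049411
                           [02]: KB4048951
                           [03]: KB958488
"""


def installed_hotfixes(systeminfo_text):
    """Return every KB identifier found in the text, in order of appearance."""
    return re.findall(r"\bKB\d{6,7}\b", systeminfo_text)
```

Comparing this list against the hotfixes your organization requires is a quick way to spot a system that has fallen behind.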

On the other side of the spectrum, a gaming company might define hotfixes as a “hot” change to the server with no downtime, and no client download is necessary. The organization releases these if they are critical, instead of waiting for a full patch version. The gaming world commonly uses the terms patch version, point release, or maintenance release to describe a group of file updates to a particular gaming version. For example, a game might start at version 1 and later release an update known as 1.17. The .17 is the point release. (This could be any number, depending on the amount of code rewrites.) Later, the game might release 1.32, in which .32 is the point release, again otherwise referred to as the patch version. This is common with other programs as well. For example, the aforementioned Camtasia program that is running on the computer shown in Figure 4-1 is version 9.0.4. The second dot (.4) represents very small changes to the program, whereas a patch version called 9.1 would be a larger change, and 10.0 would be a completely new version of the software. This concept also applies to blogging applications and forums (otherwise known as bulletin boards). As new threats are discovered (and they are extremely common in the blogging world), new patch versions are released. They should be downloaded by the administrator, tested, and installed without delay. Admins should keep in touch with their software manufacturers, either through phone or e-mail, or by frequenting their web pages. This keeps the admin “in the know” when it comes to the latest updates. And this applies to server and client operating systems, server add-ons such as Microsoft Exchange or SQL Server, Office programs, web browsers, and the plethora of third-party programs that an organization might use. Your job just got a bit busier!
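The version-numbering idea can be made concrete with a few lines of Python. This sketch assumes plain dotted numeric versions of up to three parts, as in the Camtasia example; the function names and the wording of the returned labels are my own, and vendors that use other schemes would need different handling.

```python
def parse_version(text):
    """Turn '9.0.4' into (9, 0, 4); missing parts are treated as zero."""
    parts = [int(p) for p in text.split(".")]
    parts += [0] * (3 - len(parts))
    return tuple(parts[:3])


def change_size(old, new):
    """Describe how big the jump is between two dotted version strings."""
    o, n = parse_version(old), parse_version(new)
    if n[0] != o[0]:
        return "new version"        # for example 9.0.4 to 10.0
    if n[1] != o[1]:
        return "larger change"      # for example 9.0.4 to 9.1
    if n[2] != o[2]:
        return "very small change"  # for example 9.0.3 to 9.0.4
    return "no change"
```

Comparing the parsed tuples also gives a correct ordering, so parse_version("9.0.4") is less than parse_version("9.1"), whereas comparing the raw strings would not be reliable (for example, "1.9" sorts after "1.17" as text).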

Of course, we are usually not concerned with updating games in the working world; they should be removed from a computer if they are found (unless perhaps you work for a gaming company). But multimedia software such as Camtasia is prevalent in some companies, and web-based software such as bulletin-board systems is also common and susceptible to attack.

Patches generally carry the connotation of a small fix in the mind of the user or system administrator, so larger patches are often referred to as software updates, service packs, or something similar. However, if you were asked to fix a single security issue on a computer, a patch would be the solution you would want. For example, there are various Trojans that attack older versions of Microsoft Office for Mac. To counter these, Microsoft released a specific patch for those versions of Office for Mac that disallows remote access by the Trojans.

Before installing an individual patch, you should determine if it perhaps was already installed as part of a group update. For example, you might read that a particular version of macOS or OS X had a patch released for iTunes, and being an enthusiastic iTunes user, you might consider installing the patch. But you should first find out the version of the OS you are running—it might already include the patch. To find this information, simply click the Apple menu and then click About This Mac.

Remember: All systems need to be patched at some point. It doesn’t matter if they are Windows, Mac, Linux, Unix, Android, iOS, hardware appliances, kiosks, automotive computers…need I go on?

Unfortunately, sometimes patches are designed poorly, and although they might fix one problem, they could possibly create another, which is a form of software regression. Because you never know exactly what a patch to a system might do, or how it might react or interact with other systems, it is wise to incorporate patch management.

Patch Management

It is not wise to go running around the network randomly updating computers, not to say that you would do so! Patching, like any other process, should be managed properly. Patch management is the planning, testing, implementing, and auditing of patches. Now, these four steps are ones that I use; other companies might have a slightly different patch management strategy, but each of the four concepts should be included:

Planning: Before actually doing anything, a plan should be set into motion. The first thing that needs to be decided is whether the patch is necessary and whether it is compatible with other systems. Microsoft Baseline Security Analyzer (MBSA) is one example of a program that can identify security misconfigurations on the computers in your network, letting you know whether patching is needed. If the patch is deemed necessary, the plan should consist of a way to test the patch in a “clean” network on clean systems, how and when the patch will be implemented, and how the patch will be checked after it is installed.

Testing: Before automating the deployment of a patch among a thousand computers, it makes sense to test it on a single system or small group of systems first. These systems should be reserved for testing purposes only and should not be used by “civilians” or regular users on the network. I know, this is asking a lot, especially given the amount of resources some companies have. But the more you can push for at least a single testing system that is not a part of the main network, the less you will be to blame if a failure occurs!

Implementing: If the test is successful, the patch should be deployed to all the necessary systems. In many cases this is done in the evening or over the weekend for larger updates. Patches can be deployed automatically using software such as Microsoft’s SCCM and third-party patch management tools.

Auditing: When the implementation is complete, the systems (or at least a sample of systems) should be audited; first, to make sure the patch has taken hold properly, and second, to check for any changes or failures due to the patch. SCCM and third-party tools can be used in this endeavor.
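The auditing phase can be sketched in a few lines of Python. This is only an illustration of the idea, not how SCCM or any particular patch management tool works: the host names, patch identifiers, and the `audit_patches` function are all hypothetical, and a real audit would pull the installed-patch inventory from your management tool.

```python
def audit_patches(required, installed_by_host):
    """Return {host: sorted list of required patches that are missing}."""
    missing = {}
    for host, installed in installed_by_host.items():
        gap = sorted(set(required) - set(installed))
        if gap:
            missing[host] = gap
    return missing


# Hypothetical inventory data, for illustration only.
required = ["KB0000001", "KB0000002"]
inventory = {
    "ws-01": ["KB0000001", "KB0000002"],
    "ws-02": ["KB0000001"],
}

# Hosts that appear in the result still need attention.
print(audit_patches(required, inventory))
```

Auditing only a sample of systems, as described above, would simply mean passing a subset of the inventory to the same function.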

Note

The concept of patch management, in combination with other application/OS hardening techniques, is collectively referred to as configuration management.

There are also Linux-based and Mac-based programs and services developed to help manage patching and the auditing of patches. Red Hat has services to help sys admins with all the RPMs they need to download and install, which can become a mountain of work quickly! And for those people who run GPL Linux, there are third-party services as well. A network with a lot of mobile devices benefits greatly from the use of a mobile device management (MDM) platform. But even with all these tools at an organization’s disposal, patch management is sometimes just too much for one person, or for an entire IT department, and an organization might opt to contract that work out.

Group Policies, Security Templates, and Configuration Baselines

Although they are important tasks, removing applications, disabling services, patching, hotfixing, and installing service packs are not the only ways to harden an operating system. Administrative privileges should be used sparingly, and policies should be in place to enforce your organization’s rules. A Group Policy is used in Microsoft and other computing environments to govern user and computer accounts through a set of rules. Built-in or administrator-designed security templates can be applied to these to configure many rules at one time, creating a “secure configuration.” Afterward, configuration baselines should be initiated to measure server and network activity.

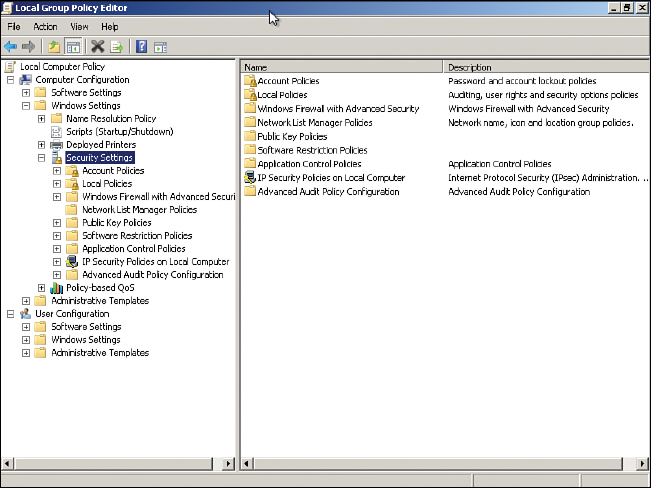

To access the Group Policy in Windows, go to the Run prompt and type gpedit.msc. This should display the Local Group Policy Editor console window. Figure 4-7 shows a typical example of this on a Windows client.

Although there are many configuration changes you can make, this figure focuses on the computer’s security settings that can be accessed by navigating to Local Computer Policy > Computer Configuration > Windows Settings > Security Settings. From here you can make changes to the password policies (for example, how long a password lasts before having to be changed), account lockout policies, public key policies, and so on. We talk about these different types of policies and the best way to apply them in future chapters. The Group Policy Editor in Figure 4-7 is known as the Local Group Policy Editor and only governs that particular machine and the local users of that machine. It is a basic version of the Group Policy Editor used by Windows Server domain controllers that have Active Directory loaded.

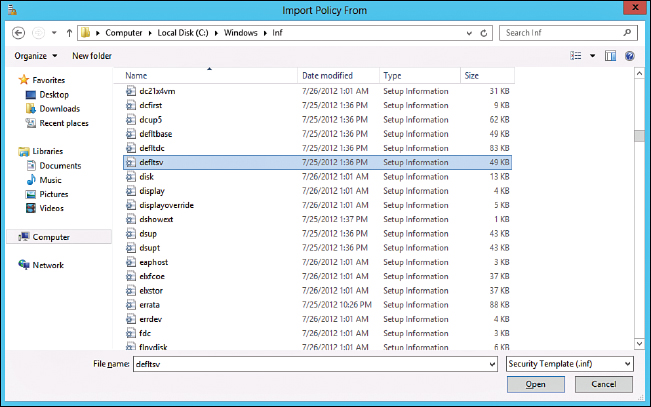

It is also from here that you can add security templates. Security templates are groups of policies that can be loaded in one procedure; they are commonly used in corporate environments. Different security templates have different security levels. These can be installed by right-clicking Security Settings and selecting Import Policy. This brings up the Import Policy From window. This technique of adding a policy template becomes much more important on Windows Server computers. Figure 4-8 shows an example of the Import Policy From window in Windows Server.

There are three main security templates in Windows Server: defltbase.inf (uncommon), defltsv.inf (used on regular servers), and defltdc.inf (used in domain controllers). By default, these templates are stored in %systemroot%\inf (among a lot of other .inf files).

Select the policy you desire and click Open. That establishes the policy on the server. It’s actually many policies that are written to many locations of the entire Local Security Policy window. Often, these policy templates are applied to organizational units on a domain controller. But they can be used for other types of systems and policies as well.
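Because a security template is a text file of settings, it can also be reviewed programmatically before it is imported. The following is a minimal sketch under several assumptions: the excerpt is hand-written for illustration (the section and key names follow the layout commonly seen in exported templates, but verify them against a real file), the `minimums` values are a hypothetical organizational standard, and real template files may use a different text encoding than a plain Python string.

```python
import configparser

# Hand-written excerpt for illustration; not copied from a real template.
TEMPLATE_EXCERPT = """
[System Access]
MinimumPasswordLength = 6
MaximumPasswordAge = 42
LockoutBadCount = 0
"""


def load_settings(text, section="System Access"):
    """Parse one section of INI-style template text into {key: int}."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.optionxform = str  # keep the original key capitalization
    parser.read_string(text)
    return {key: int(value) for key, value in parser[section].items()}


def policy_gaps(settings, minimums):
    """Return the keys whose value falls below the required minimum."""
    return sorted(k for k, floor in minimums.items() if settings.get(k, 0) < floor)


# Hypothetical standard: passwords of 8+ characters, lockout enabled (nonzero).
minimums = {"MinimumPasswordLength": 8, "LockoutBadCount": 1}
settings = load_settings(TEMPLATE_EXCERPT)
print(policy_gaps(settings, minimums))
```

A check like this only compares numbers; it does not replace reading the template and understanding what each policy does.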

Note

Policies are imported in the same manner in Server 2003, but the names are different. For example, the file securedc.inf is an information file filled with policy configurations more secure than the default you would find in a Windows Server 2003 domain controller that runs Active Directory. And hisecdc.inf is even more secure, perhaps too secure and limiting for some organizations. Server Templates for Server 2003 are generally stored in %systemroot%\Security\Templates. I normally don’t cover Server 2003 because it is not supported by Microsoft any longer, but you just might see it in the field!

In Windows Server you can modify policies, and add templates, directly from Server Manager > Security Configuration Wizard as well. If you save templates here, they are saved as .xml files instead of .inf files.

Group Policies are loaded with different Group Policy objects (GPOs). By configuring as many of these GPOs as possible, you implement OS hardening, ultimately establishing host-based security for your organization’s workstations.

Baselining is the process of measuring changes in networking, hardware, software, and so on. Creating a baseline consists of selecting something to measure and measuring it consistently for a period of time. For example, I might want to know what the average hourly data transfer is to and from a server. There are many ways to measure this, but I could possibly use a protocol analyzer to find out how many packets cross through the server’s network adapter. This could be run for 1 hour (during business hours, of course) every day for 2 weeks. Selecting different hours for each day would add more randomness to the final results. By averaging the results together, we get a baseline. Then we can compare future measurements of the server to the baseline. This can help us to define what the standard load of our server is and the requirements our server needs on a consistent basis. It can also help when installing additional computers on the network. The term baselining is most often used to refer to monitoring network performance, but it actually can be used to describe just about any type of performance monitoring. Baselining and benchmarking are extremely important when testing equipment and when monitoring already installed devices.

Any baseline deviation should be investigated right away. In most organizations, the baselines exist. The problem is that they are not audited and analyzed often enough by security administrators, causing deviations to go unnoticed until it is too late. Baselining becomes even more vital when dealing with a real-time operating system (RTOS). These systems require near 100% uptime and lightning-fast response with minimal, predictable latency. Be sure to monitor these carefully and often. We discuss baselining further in Chapter 13, “Monitoring and Auditing.”
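The averaging and comparison described above can be sketched as follows. The sample values are invented, and the rule used to decide what counts as a deviation (more than three standard deviations from the average) is an assumption chosen for the example; your organization may define deviation differently.

```python
from statistics import mean, stdev


def build_baseline(samples):
    """Return (average, standard deviation) for a list of measurements."""
    return mean(samples), stdev(samples)


def deviates(value, baseline, tolerance=3.0):
    """True if value is more than `tolerance` standard deviations from average."""
    avg, spread = baseline
    return abs(value - avg) > tolerance * spread


# Hypothetical packet counts: one 1-hour capture per business day for 2 weeks.
samples = [1000, 1100, 950, 1050, 1000, 980, 1020, 1010, 990, 1030]
baseline = build_baseline(samples)

print(deviates(1040, baseline))  # a reading close to the average
print(deviates(5000, baseline))  # a reading far above the average
```

The second reading is the kind of measurement that should trigger an investigation; the first falls within normal variation for this data set.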

Hardening File Systems and Hard Drives

You want more? I promise more. The rest of the book constantly refers to more advanced and in-depth ways to harden a computer system. But for this chapter, let’s conclude this section by giving a few tips on hardening a hard drive and the file system it houses.

First, the file system used dictates a certain level of security. On Microsoft computers, the best option is to use NTFS, which is more secure, enables logging (oh so important), supports encryption, and has support for a much larger maximum partition size and larger file sizes. Just about the only place where FAT32 and NTFS are on a level playing field is that they support the same number of file formats. So, by far, NTFS is the best option. If a volume uses FAT or FAT32, it can be converted to NTFS using the following command:

convert volume /FS:NTFS

For example, if I want to convert a USB flash drive named M: to NTFS, the syntax would be

convert M: /FS:NTFS

There are additional options for the convert command. To see these, simply type convert /? in the Command Prompt. NTFS enables file-level security and tracks permissions within access control lists (ACLs), which are a necessity in today’s environment. Most systems today already use NTFS, but you never know about flash-based and other removable media. A quick chkdsk command in the Command Prompt or right-clicking the drive in the GUI and selecting Properties can tell you what type of file system it runs.

Generally, the best file system for Linux systems is ext4. It allows for the best and most configurable security. To find out the file system used by your version of Linux, use the fdisk -l command or df -T command.
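If you need to check many mount points at once, the output of df -T can be parsed with a short script. The sketch below works on sample text that imitates typical df -T output (the devices and sizes are made up), and the list of file system types it flags is an assumption for this example: FAT-family file systems that do not store Linux file permissions. Mount points that contain spaces would need more careful parsing.

```python
# Sample text imitating `df -T` output; devices and sizes are invented.
SAMPLE_DF_OUTPUT = """\
Filesystem     Type     1K-blocks    Used Available Use% Mounted on
/dev/sda1      ext4      41152736 8000000  31000000  21% /
tmpfs          tmpfs       817000    1200    815800   1% /run
/dev/sdb1      vfat       7800000  100000   7700000   2% /media/usb
"""

# Assumption for this example: FAT-family types lack file-level permissions.
NO_PERMISSION_TYPES = {"vfat", "msdos", "exfat"}


def parse_df_types(df_output):
    """Map mount point -> file system type from `df -T` style text."""
    result = {}
    for line in df_output.strip().splitlines()[1:]:  # skip the header row
        fields = line.split()
        if len(fields) >= 7:
            result[fields[6]] = fields[1]
    return result


types = parse_df_types(SAMPLE_DF_OUTPUT)
weak = sorted(m for m, fs in types.items() if fs in NO_PERMISSION_TYPES)
print(types)
print(weak)
```

On a live system, the same function could be fed the text returned by `subprocess.run(["df", "-T"], capture_output=True, text=True).stdout`.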

System files and folders by default are hidden from view to protect a Windows system, but you never know. To permanently configure the system to not show hidden files and folders, navigate to the File Explorer (or Folder) Options dialog box. Then select the View tab, and under Hidden Files and Folders select the Don’t Show Hidden Files, Folders, or Drives radio button. To configure the system to hide protected system files, select the Hide Protected Operating System Files checkbox, located below the radio button previously mentioned. This disables the ability to view such files and folders as bootmgr and pagefile.sys. You might also need to secure a system by turning off file sharing, which in most versions of Windows can be done within the Network and Sharing Center.

In the past, I have made a bold statement: “Hard disks will fail.” But it’s all too true. It’s not a matter of if; it’s a matter of when. By maintaining and hardening the hard disk with various hard disk utilities, we attempt to stave off that dark day as long as possible. You can implement several strategies when maintaining and hardening a hard disk:

Remove temporary files: Temporary files and older files can clog up a hard disk, cause a decrease in performance, and pose a security threat. It is recommended that Disk Cleanup or a similar program be used. Policies can be configured (or written) to run Disk Cleanup every day or at logoff for all the computers on the network.

Periodically check system files: Every once in a while, it’s a good idea to verify the integrity of operating system files. A file integrity check can be done in the following ways:

— With the chkdsk command in Windows. This examines the disk and provides a report. It can also fix some errors with the /F option.

— With the SFC (System File Checker) command in Windows. This utility checks and, if necessary, replaces protected system files. It can be used to fix problems in the OS, and in other applications such as Internet Explorer. A typical command you might type is SFC /scannow. Use this if chkdsk is not successful at making repairs.

— With the fsck command in Linux. This command is used to check and repair a Linux file system. The synopsis of the syntax is fsck [ -sAVRTNP ] [ -C [ fd ] ] [ -t fstype ] [filesys ... ] [--] [ fs-specific-options ]. More information about this command can be found at the corresponding man page for fsck. A derivative, e2fsck, is used to check the ext2, ext3, and ext4 family of Linux file systems. Also, open source data integrity tools such as Tripwire can be downloaded for Linux.

Defragment drives: Applications and files on hard drives become fragmented over time. For a server, this could be a disaster, because the server cannot serve requests in a timely fashion if the drive is too thoroughly fragmented. Defragmenting the drive can be done with Microsoft’s Disk Defragmenter, with the command-line defrag command, or with other third-party programs.

Back up data: Backing up data is critical for a company. It is not enough to rely on a fault-tolerant array. Individual files or the entire system can be backed up to another set of hard drives, to optical discs, to tape, or to the cloud. Microsoft domain controllers’ Active Directory databases are particularly susceptible to attack; the System State for these operating systems should be backed up in case the server fails and Active Directory needs to be recovered in the future.

Use restoration techniques: In Windows, restore points should be created on a regular basis for servers and workstations. The System Restore utility (rstrui.exe) can fix issues caused by defective hardware or software by reverting to an earlier time. Registry changes made by hardware or software are reversed in an attempt to force the computer to work the way it did previously. Restore points can be created manually and are also created automatically by the OS before new applications, service packs, or hardware are installed. macOS and OS X use the Time Machine utility, which works in a similar manner. Though there is no similar tool in Linux, a user can back up the /home directory, as a compressed archive, to a separate partition. When these contents are decompressed to a new install, most of the Linux system and settings will have been restored. Another option in general is to use imaging (cloning) software. Remember that these techniques do not necessarily back up data, and that the data should be treated as a separate entity that needs to be backed up regularly.

Consider whole disk encryption: Finally, whole disk encryption can be used to secure the contents of the drive, making it harder for attackers to obtain and interpret its contents.
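The idea behind periodically checking system files, mentioned in the list above, is to record a known-good fingerprint of each file and compare against it later. The following is a minimal sketch of that general idea using SHA-256 hashes. It is not how SFC, chkdsk, fsck, or Tripwire work internally, and the function names and the throwaway demo file are made up for illustration.

```python
import hashlib
import os
import tempfile


def sha256_of(path):
    """Hash a file in chunks so large files do not have to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def snapshot(paths):
    """Record a fingerprint for each file."""
    return {path: sha256_of(path) for path in paths}


def changed_files(baseline, current):
    """Return files whose fingerprint no longer matches the baseline."""
    return sorted(p for p in baseline if current.get(p) != baseline[p])


# Demonstration on a throwaway file in a temporary folder.
with tempfile.TemporaryDirectory() as folder:
    target = os.path.join(folder, "example.conf")
    with open(target, "w") as handle:
        handle.write("setting=1\n")
    baseline = snapshot([target])
    unchanged = changed_files(baseline, snapshot([target]))

    with open(target, "w") as handle:
        handle.write("setting=2\n")
    modified = changed_files(baseline, snapshot([target]))

print(unchanged, len(modified))
```

In practice the baseline fingerprints would need to be stored somewhere an attacker cannot modify them, or the comparison proves nothing.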

A recommendation I give to all my students and readers is to separate the OS from the data physically. If you can have each on a separate hard drive, it can make things a bit easier just in case the OS is infected with malware (or otherwise fails). The hard drive that the OS inhabits can be completely wiped and reinstalled without worrying about data loss, and applications can always be reloaded. Of course, settings should be backed up (or stored on the second drive). If a second drive isn’t available, consider configuring the one hard drive as two partitions, one for the OS (or system) and one for the data. By doing this, and keeping a well-maintained computer, you are effectively hardening the OS.

Note

To clean out a system regularly, consider re-imaging it, or if a mobile device, resetting it. This takes care of any pesky malware by deleting everything and reinstalling to the point in time of the image, or to factory condition. While you will have to do some reconfigurations, the system will also run much faster because it has been completely cleaned out.

Virtualization Technology

Let’s define virtualization. Virtualization is the creation of a virtual entity, as opposed to a true or actual entity. The most common type of entity created through virtualization is the virtual machine—usually housing an OS. In this section we discuss types of virtualization, identify their purposes, and define some of the various virtual applications.

Types of Virtualization and Their Purposes

Many types of virtualization exist, from network and storage to hardware and software. The CompTIA Security+ exam focuses mostly on virtual machine software. The virtual machines (VMs) created by this software run operating systems or individual applications. The virtual operating system—also known as a guest or a virtual desktop environment (VDE)—is designed to run inside a real OS. So, the beauty behind this is that you can run multiple various operating systems simultaneously from just one PC. This has great advantages for programmers, developers, and systems administrators, and can facilitate a great testing environment. Security researchers in particular utilize virtual machines so they can execute and test malware without risk to an actual OS and the hardware it resides on. Nowadays, many VMs are also used in live production environments. Plus, an entire OS can be dropped onto a DVD or even a flash drive and transported where you want to go.

Of course, there are drawbacks. Processor and RAM resources and hard drive space are eaten up by virtual machines. And hardware compatibility can pose some problems as well. Also, if the physical computer that houses the virtual OS fails, the virtual OS will go offline immediately. All other virtual computers that run on that physical system will also go offline. There is added administration as well. Some technicians forget that virtual machines need to be updated with the latest service packs and patches just like regular operating systems.

Many organizations have policies that define standardized virtual images, especially for servers. As I alluded to earlier, the main benefit of having a standardized server image is that mandated security configurations will have been made to the OS from the beginning, creating a template, so to speak. This includes a defined set of security updates, service packs, patches, and so on, as dictated by organizational policy. So, when you load up a new instance of the image, a lot of the configuration work will already have been done, and just the latest updates to the OS and AV software need to be applied. This image can be used in a virtual environment, or copied to a physical hard drive as well. For example, you might have a server farm that includes two physical Windows Server systems and four virtual Windows Server systems, each running different tasks. It stands to reason that you will be working with new images from time to time as you need to replace servers or add them. By creating a standardized image once, and using it many times afterward, you can save yourself a lot of configuration time in the long run.

Virtual machines can be broken down into two categories:

System virtual machine: A complete platform meant to take the place of an entire computer, enabling you to run an entire OS virtually.

Process virtual machine: Designed to run a single application, such as a virtual web browser.

Whichever VM you select, the VM cannot cross the software boundaries set in place. For example, a virus might infect a computer when executed and spread to other files in the OS. However, a virus executed in a VM will spread through the VM but not affect the underlying actual OS. So, this provides a secure platform to run tests, analyze malware, and so on...and creates an isolated system. If there are adverse effects to the VM, those effects (and the VM) can be compartmentalized to stop the spread of those effects. This is all because the virtual machine inhabits a separate area of the hard drive from the actual OS. This enables us to isolate network services and roles that a virtual server might play on the network.

Virtual machines are, for all intents and purposes, emulators. The terms emulation, simulation, and virtualization are often used interchangeably. Emulators can also be web-based; for example, an emulator of a SOHO router’s firmware that you can access online. You might also have heard of much older emulators such as Basilisk, or the DOSBox, or a RAM drive, but nowadays, anything that runs an OS virtually is generally referred to as a virtual machine or virtual appliance.

A virtual appliance is a virtual machine image designed to run on virtualization platforms; it can refer to an entire OS image or an individual application image. Generally, companies such as VMware refer to the images as virtual appliances, and companies such as Microsoft refer to images as virtual machines. One example of a virtual appliance that runs a single app is a virtual browser. VMware developed a virtual browser appliance that protects the underlying OS from malware installations from malicious websites. If the website succeeds in its attempt to install the malware to the virtual browser, the browser can be deleted and either a new one can be created or an older saved version of the virtual browser can be brought online!

Other examples of virtualization include the virtual private network (VPN), which is covered in Chapter 10, “Physical Security and Authentication Models,” and virtual desktop infrastructure (VDI) and virtual local area network (VLAN), which are covered in Chapter 6, “Network Design Elements.”

Hypervisor

Most virtual machine software is designed specifically to host more than one VM. A related goal is that all VMs be able to communicate with each other quickly and efficiently. This concept is summed up by the term hypervisor. A hypervisor allows multiple virtual operating systems (guests) to run at the same time on a single computer. It is also known as a virtual machine manager (VMM). The term hypervisor is often used ambiguously. This is due to confusion concerning the two different types of hypervisors:

Type 1–Native: The hypervisor runs directly on the host computer’s hardware. Because of this it is also known as “bare metal.” Examples of this include VMware vCenter and vSphere, Citrix XenServer, and Microsoft Hyper-V. Hyper-V can be installed as a standalone product, known as Microsoft Hyper-V Server, or it can be installed as a role within a standard installation of Windows Server 2008 (R2) or higher. Either way, the hypervisor runs independently and accesses hardware directly, making both versions of Windows Server Hyper-V Type 1 hypervisors.

Type 2–Hosted: This means that the hypervisor runs within (or “on top of”) the operating system. Guest operating systems run within the hypervisor. Compared to Type 1, guests are one level removed from the hardware and therefore run less efficiently. Examples of this include VirtualBox, Windows Virtual PC (for Windows 7), Hyper-V (for Windows 8 and higher), and VMware Workstation.

Generally, Type 1 is a much faster and much more efficient solution than Type 2. It is also more elastic, meaning that environments using Type 1 hypervisors can usually respond to quickly changing business needs by adjusting the supply of resources as necessary. Because of this elasticity and efficiency, Type 1 hypervisors are the kind used by web-hosting companies and by companies that offer cloud computing solutions such as infrastructure as a service (IaaS). It makes sense, too. If you have ever run a powerful operating system such as Windows Server within a Type 2 hypervisor such as VirtualBox, you will have noticed that a ton of resources are being used that are taken from the hosting operating system. It is not nearly as efficient as running the hosted OS within a Type 1 environment. However, keep in mind that the hardware/software requirements for a Type 1 hypervisor are more stringent and more costly. Because of this, some developing and testing environments use Type 2–based virtual software.

Another type of virtualization you should be familiar with is application containerization. This allows an organization to deploy and run distributed applications without launching a whole virtual machine. It is a more efficient way of using the resources of the hosting system, plus it is more portable. However, there is a lack of isolation from the hosting OS, which can lead to security threats potentially having easier access to the hosting system.

Securing Virtual Machines

In general, the security of a virtual machine operating system is the equivalent to that of a physical machine OS. The VM should be updated to the latest service pack. If you have multiple VMs, especially ones that will interact with each other, make sure they are updated in the same manner. This will help to ensure patch compatibility between the VMs. A VM should have the newest AV definitions, perhaps have a personal firewall, have strong passwords, and so on. However, there are several things to watch out for that, if not addressed, could cause all your work compartmentalizing operating systems to go down the drain. This includes considerations for the virtual machine OS as well as the controlling virtual machine software.

First, make sure you are using current and updated virtual machine software. Update to the latest patch for the software you are using (for example, the latest version of Oracle VirtualBox). Configure any applicable security settings or options in the virtual machine software. Once this is done, you can go ahead and create your virtual machines, keeping in mind the concept of standardized imaging mentioned earlier.

Next, keep an eye out for network shares and other connections between the virtual machine and the physical machine, or between two VMs. Normally, malicious software cannot travel between a VM and another VM or a physical machine as long as they are properly separated. But if active network shares are between the two—creating the potential for a directory traversal vulnerability—then malware could spread from one system to the other.

If a network share is needed, map it, use it, and then disconnect it when you are finished. If you need network shares between two VMs, document what they are and which systems (and users) connect to them. Review the shares often to see whether they are still necessary. Be careful with VMs that use a bridged or similar network connection, instead of network address translation (NAT). This method connects directly with other physical systems on the network, and can allow for malware and attacks to traverse the “bridge” so to speak.
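The review of shares and network connections can be kept honest with a simple recurring check. The sketch below assumes a hypothetical inventory format (a list of dictionaries you maintain yourself); it does not query VirtualBox, Hyper-V, VMware, or any other product, and the field names are invented for the example.

```python
def review_vm(vm):
    """Return a list of findings for one VM record."""
    findings = []
    if vm.get("network_mode") == "bridged":
        findings.append("bridged adapter: confirm it is required")
    for share in vm.get("shares", []):
        if share.get("connected"):
            findings.append("share still connected: " + share["name"])
    return findings


# Hypothetical inventory records, for illustration only.
vms = [
    {"name": "test-vm", "network_mode": "nat", "shares": []},
    {
        "name": "lab-vm",
        "network_mode": "bridged",
        "shares": [{"name": "hostdocs", "connected": True}],
    },
]

report = {vm["name"]: review_vm(vm) for vm in vms}
for name, findings in report.items():
    print(name, findings)
```

A finding is a prompt to review, not proof of a problem: a bridged connection or an active share may be perfectly legitimate if it is documented and still needed.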

Any of these things can lead to a failure of the VM’s isolation. Consequently, if a user (or malware) breaks out of a virtual machine and is able to interact with the host operating system, it is known as virtual machine escape. Vulnerabilities to virtual hosting software include buffer overflows, remote code execution, and directory traversals. Just another reason for the security administrator to keep on top of the latest CVEs and close those unnecessary network shares and connections.

Going further, if a virtual host is attached to a network attached storage (NAS) device or to a storage area network (SAN), it is recommended to segment the storage devices off the LAN either physically or with a secure VLAN. Regardless of where the virtual host is located, secure it with a strong firewall and disallow unprotected protocols such as FTP and Telnet.

Consider disabling any unnecessary hardware from within the virtual machine such as optical drives, USB ports, and so on. If some type of removable media is necessary, enable the device, make use of it, and then disable it immediately after finishing. Also, devices can be disabled from the virtual machine software itself. The boot priority in the virtual BIOS should also be configured so that the hard drive is booted from first, and not any removable media or network connection (unless necessary in your environment).

Because VMs use a lot of the computer’s physical resources, a compromised VM can be a threat in the form of a denial-of-service attack. To mitigate this, set a limit on the amount of resources any particular VM can utilize, and periodically monitor the usage of VMs. However, be careful of monitoring VMs. Most virtual software offers the ability to monitor the various VMs from the main host, but this feature can also be exploited. Be sure to limit monitoring, enable it only for authorized users, and disable it whenever not necessary.
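As a rough illustration of checking resource limits, the following sketch flags any VM whose memory allocation exceeds a chosen share of the host’s RAM. The 50% cap, the VM names, and the memory figures are all assumptions made up for the example; actual limits are set in the virtualization software itself, and appropriate values depend on your environment.

```python
def over_limit(vms, host_ram_mb, max_share=0.5):
    """Return names of VMs whose RAM allocation exceeds max_share of host RAM."""
    cap = host_ram_mb * max_share
    return [vm["name"] for vm in vms if vm["ram_mb"] > cap]


def total_committed(vms):
    """Return the total RAM, in MB, allocated across all VMs."""
    return sum(vm["ram_mb"] for vm in vms)


# Hypothetical allocations on a host with 16 GB of RAM.
HOST_RAM_MB = 16384
vms = [
    {"name": "web-vm", "ram_mb": 4096},
    {"name": "db-vm", "ram_mb": 12288},
]

print(over_limit(vms, HOST_RAM_MB))
print(total_committed(vms), "MB committed of", HOST_RAM_MB)
```

The same pattern could be extended to processor cores or disk space if your inventory records them.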

Speaking of resources, the more VMs you have, the more important resource conservation becomes. Also, the more VMs that are created over time, the harder they are to manage. This can lead to virtualization sprawl. VM sprawl is when there are too many VMs for an administrator to manage properly. To help reduce the problem, a security administrator might create an organized library of virtual machine images. The admin might also use a virtual machine lifecycle management (VMLM) tool. This can help to enforce how VMs are created, used, deployed, and archived.

Finally, be sure to protect the raw virtual disk file. A disaster on the raw virtual disk can be tantamount to physical disk disaster. Look into setting permissions as to who can access the folder where the VM files are stored. If your virtual machine software supports logging and/or auditing, consider implementing it so that you can see exactly who started and stopped the virtual machine, and when. Otherwise, you can audit the folder where the VM files are located. Finally, consider making a copy of the virtual machine or virtual disk file—also known as a snapshot or checkpoint—encrypting the VM disk file, and digitally signing the VM and validating that signature prior to usage.
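Validating a virtual disk file before it is used can be sketched as follows. Note the substitution: this example uses a keyed hash (HMAC with SHA-256) from the Python standard library as a stand-in for a true digital signature, which would use a public/private key pair. The key, file name, and file contents are placeholders invented for the demonstration.

```python
import hashlib
import hmac
import os
import tempfile


def tag_file(path, key):
    """Compute an HMAC-SHA256 tag over the file contents."""
    mac = hmac.new(key, digestmod=hashlib.sha256)
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            mac.update(chunk)
    return mac.hexdigest()


def is_untampered(path, key, expected_tag):
    """Compare tags in constant time to validate the file before use."""
    return hmac.compare_digest(tag_file(path, key), expected_tag)


# Demonstration with a placeholder key and a throwaway file.
key = b"placeholder-key-for-demo-only"
with tempfile.TemporaryDirectory() as folder:
    disk = os.path.join(folder, "example-disk.img")
    with open(disk, "wb") as handle:
        handle.write(b"original virtual disk contents")
    tag = tag_file(disk, key)
    ok_before = is_untampered(disk, key, tag)

    with open(disk, "ab") as handle:
        handle.write(b"unexpected change")
    ok_after = is_untampered(disk, key, tag)

print(ok_before, ok_after)
```

With a keyed hash, anyone who holds the key can also forge a valid tag, so the key must be protected as carefully as the VM files themselves.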

Note

Enterprise-level virtual software such as Hyper-V and VMware vCenter/vSphere takes security to a whole new level. Much more planning and configuration is necessary for these applications. It’s not necessary to know for the Security+ exam, but if you want to gather more information on securing Hyper-V, see the following link:

https://technet.microsoft.com/en-us/library/dd569113.aspx

For more information on how to use and secure VMware, see the following link:

https://www.vmware.com/products/vsphere.xhtml#resources

Table 4-2 summarizes the various ways to protect virtual machines and their hosts.

Table 4-2 Summary of Methods to Secure Virtual Machines

| VM Security Topics | Security Methods |
| --- | --- |
| Virtualization updating | Update and patch the virtualization software. Configure security settings on the host. Update and patch the VM operating system and applications. |
| Virtual networking security and virtual escape protection | Remove unnecessary network shares between the VMs and the host. Review the latest CVEs for virtual hosting software to avoid virtual machine escape. Remove unnecessary bridged connections to the VM. Use a secure VLAN if necessary. |
| Disable unnecessary hardware | Disable optical drives. Disable USB ports. Configure the virtual BIOS boot priority for hard drive first. |
| Monitor and protect the VM | Limit the amount of resources used by a VM. Monitor the resource usage of the VM. Reduce VM sprawl by creating a library of VM images and using a VMLM tool. Protect the virtual machine files by setting permissions, logging, and auditing. Secure the virtual machine files by encrypting and digitally signing. |

One last comment: A VM should be as secure as possible, but in general, because the hosting computer is in a controlling position, it is likely to be more easily exploited, and a compromise to the hosting computer probably means a compromise to any guest operating systems it contains. Therefore, if possible, the host should be even more secure than the VMs it controls. So, harden your heart, harden the VM, and make the hosting OS solid as a rock.

Chapter Summary

This chapter focused on the hardening of operating systems and the securing of virtual operating systems. Out-of-the-box operating systems can often be insecure for a variety of reasons and need to be hardened to meet your organization’s policies, Trusted Operating System (TOS) compliance, and government regulations. But in general, they need to be hardened so that they are more difficult to compromise.

The process of hardening an operating system includes: removing unnecessary services and applications; whitelisting and blacklisting applications; using anti-malware applications; configuring personal software-based firewalls; updating and patching (as well as managing those patches); using group policies, security templates, and baselining; utilizing a secure file system and performing preventive maintenance on hard drives; and in general, keeping a well-maintained computer.

Well, that’s a lot of work, especially for one person. That makes the use of automation very important. Automate your work whenever you can through the use of templates, the imaging of systems, and by using specific workflow methods. These things, in conjunction with well-written policies, can help you (or your team) to get work done faster and more efficiently.

One great way to be more efficient (and possibly more secure) is by using virtualization, the creation of a virtual machine or other emulator that runs in a virtual environment, instead of requiring its own physical computer. It renders dual-booting pretty much unnecessary, and can offer a lot of options when it comes to compartmentalization and portability. The virtual machine runs in a hypervisor—either Type 1, which is also known as bare metal, or Type 2, which is hosted. Type 1 is faster and more efficient, but usually more expensive and requires greater administrative skill. Regardless of the type you use, the hypervisor, and the virtual machines it contains, needs to be secured.

The hosting operating system, if there is one, should be hardened appropriately. The security administrator should update the virtual machine software to the latest version and configure applicable security settings for it. Individual virtual machines should have their virtual BIOS secured, and the virtual machine itself should be hardened the same way a regular, or non-virtual, operating system would be. (That clarification is important, because many organizations today have more virtual servers than non-virtual servers! The term regular becomes inaccurate in some scenarios.) Unnecessary hardware should be disabled, and network connections should be very carefully planned and monitored. In addition, the administrator should consider setting limits on the resources a virtual machine can consume, monitoring the virtual machine (and its files), and protecting the virtual machine through file permissions and encryption. And of course, all virtual systems should be tested thoroughly before being placed into production. Security control testing helps ensure compatibility between VMs and virtual hosting software, reduces the chances of exploitation, and offers greater efficiency and less downtime in the long run.

Chapter 4 builds on Chapters 2 and 3. Most of the methods covered in Chapters 2 and 3 are expected to be implemented in addition to the practices listed in this chapter. By combining them with the software protection techniques we will cover in Chapter 5, “Application Security,” you will end up with a considerably more secure computer system.

Chapter Review Activities

Use the features in this section to study and review the topics in this chapter.

Review Key Topics

Review the most important topics in the chapter, noted with the Key Topic icon in the outer margin of the page. Table 4-3 lists a reference of these key topics and the page number on which each is found.

Table 4-3 Key Topics for Chapter 4

| Key Topic Element | Description | Page Number |
| --- | --- | --- |
|  | Services window of a Windows Computer |  |
|  | Remote Desktop Services Properties dialog box |  |
|  | Stopping and disabling a service in the Windows Command Prompt |  |
|  | Summary of ways to stop services |  |
| Bulleted list | Windows Update categories |  |
| Bulleted list | Patch management four steps |  |
|  | Local Group Policy Editor in Windows |  |
|  | Windows Server Import Policy From window |  |
| Bulleted list | Maintaining and hardening a hard disk |  |
| Step list | Keeping a well-maintained computer |  |
| Bulleted list | Types of hypervisors |  |
| Table 4-2 | Summary of methods to secure VMs |  |

Define Key Terms

Define the following key terms from this chapter, and check your answers in the glossary:

hardening, least functionality, application blacklisting, Trusted Operating System (TOS), hotfix, patch, patch management, Group Policy, security template, baselining, virtualization, virtual machine (VM), hypervisor, application containerization, virtual machine escape, virtualization sprawl

Complete the Real-World Scenarios

Complete the Real-World Scenarios found on the companion website (www.pearsonitcertification.com/title/9780789758996). You will find a PDF containing the scenario and questions, and also supporting videos and simulations.

Review Questions

Answer the following review questions. Check your answers with the correct answers that follow.

1. Virtualization technology is often implemented as operating systems and applications that run in software. Often, it is implemented as a virtual machine. Of the following, which can be a security benefit when using virtualization?

A. Patching a computer will patch all virtual machines running on the computer.

B. If one virtual machine is compromised, none of the other virtual machines can be compromised.

C. If a virtual machine is compromised, the adverse effects can be compartmentalized.

D. Virtual machines cannot be affected by hacking techniques.

2. Eric wants to install an isolated operating system. What is the best tool to use?

A. Virtualization

B. UAC

C. HIDS

D. NIDS

3. Where would you turn off file sharing in Windows?

A. Control Panel

B. Local Area Connection

C. Network and Sharing Center

D. Firewall properties

4. Which option enables you to hide the bootmgr file?

A. Enable Hide Protected Operating System Files

B. Enable Show Hidden Files and Folders

C. Disable Hide Protected Operating System Files

D. Remove the -R Attribute

5. Which of the following should be implemented to harden an operating system? (Select the two best answers.)

A. Install the latest updates.

B. Install Windows Defender.

C. Install a virtual operating system.

D. Execute PHP scripts.

6. What is the best (most secure) file system to use in Windows?

A. FAT

B. NTFS

C. DFS

D. FAT32

7. A customer’s SD card uses FAT32 as its file system. What file system can you upgrade it to when using the convert command?

A. NTFS

B. HPFS

C. ext4

D. NFS

8. Which of the following is not an advantage of NTFS over FAT32?

A. NTFS supports file encryption.

B. NTFS supports larger file sizes.

C. NTFS supports larger volumes.

D. NTFS supports more file formats.

9. What is the deadliest risk of a virtual computer?

A. If a virtual computer fails, all other virtual computers immediately go offline.

B. If a virtual computer fails, the physical server goes offline.

C. If the physical server fails, all other physical servers immediately go offline.

D. If the physical server fails, all the virtual computers immediately go offline.

10. Virtualized browsers can protect the OS that they are installed within from which of the following?

A. DDoS attacks against the underlying OS

B. Phishing and spam attacks

C. Man-in-the-middle attacks

D. Malware installation from Internet websites

11. Which of the following needs to be backed up on a domain controller to recover Active Directory?

A. User data

B. System files

C. Operating system

D. System State

12. Which of the following should you implement to fix a single security issue on the computer?

A. Service pack

B. Support website

C. Patch

D. Baseline

13. An administrator wants to reduce the size of the attack surface of a Windows Server. Which of the following is the best answer to accomplish this?

A. Update antivirus software.

B. Install updates.

C. Disable unnecessary services.

D. Install network intrusion detection systems.

14. Which of the following is a security reason to implement virtualization in your network?

A. To isolate network services and roles

B. To analyze network traffic

C. To add network services at lower costs

D. To centralize patch management

15. Which of the following is one example of verifying new software changes on a test system?

A. Application hardening

B. Virtualization

C. Patch management

D. HIDS

16. You have been tasked with protecting an operating system from malicious software. What should you do? (Select the two best answers.)

A. Disable the DLP.

B. Update the HIPS signatures.

C. Install a perimeter firewall.

D. Disable unused services.

E. Update the NIDS signatures.

17. You are attempting to establish host-based security for your organization’s workstations. Which of the following is the best way to do this?

A. Implement OS hardening by applying GPOs.

B. Implement database hardening by applying vendor guidelines.

C. Implement web server hardening by restricting service accounts.

D. Implement firewall rules to restrict access.

18. In Windows, which of the following commands will not show the version number?

A. Systeminfo

B. Wf.msc

C. Winver

D. Msinfo32.exe

19. During an audit of your servers, you have noticed that most servers have large amounts of free disk space and have low memory utilization. Which of the following statements will be correct if you migrate some of the servers to a virtual environment?

A. You might end up spending more on licensing, but less on hardware and equipment.

B. You will need to deploy load balancing and clustering.

C. Your baselining tasks will become simpler.

D. Servers will encounter latency and lowered throughput issues.

Answers and Explanations

1. C. By using a virtual machine (which is one example of a virtual instance), any ill effects can be compartmentalized to that particular virtual machine, usually without any ill effects to the main operating system on the computer. Patching a computer does not automatically patch virtual machines existing on the computer. Other virtual machines can be compromised, especially if nothing is done about the problem. Finally, virtual machines can definitely be affected by hacking techniques. Be sure to secure them!

2. A. Virtualization enables a person to install operating systems (or applications) in an isolated area of the computer’s hard drive, separate from the computer’s main operating system.

3. C. The Network and Sharing Center is where you can disable file sharing in Windows. It can be accessed indirectly from the Control Panel as well. By disabling file sharing, you disallow any (normal) connections to data on the computer. This can be very useful for computers with confidential information, such as an executive’s laptop or a developer’s computer.

4. A. To hide bootmgr, you either need to click the radio button for Don’t Show Hidden Files, Folders, or Drives or enable the Hide Protected Operating System Files checkbox.

5. A and B. Two ways to harden an operating system include installing the latest updates and installing Windows Defender. However, virtualization is a separate concept altogether; it can be used to create a compartmentalized OS, but needs to be secured and hardened just like any other OS. PHP scripts will generally not be used to harden an operating system. In fact, they can be vulnerabilities to websites and other applications.