Chapter 13. Cloud and Virtualization Technology Integration

This chapter covers the following topics:

Technical Deployment Models (Outsourcing/Insourcing/Managed Services/Partnership): This section covers cloud and virtualization considerations and hosting options, on-premise vs. hosted, and cloud service models.

Security Advantages and Disadvantages of Virtualization: This section describes Type 1 vs. Type 2 hypervisors, container-based virtualization, vTPM, hyperconverged infrastructure, virtual desktop infrastructure, and secure enclaves and volumes.

Cloud Augmented Security Services: This section covers anti-malware, vulnerability scanning, sandboxing, content filtering, cloud security brokers, Security as a Service, and managed security service providers.

Vulnerabilities Associated with Comingling of Hosts with Different Security Requirements: Topics include VMEscape, privilege elevation, live VM migration, and data remnants.

Data Security Considerations: This section describes vulnerabilities associated with a single server hosting multiple data types and vulnerabilities associated with a single platform hosting multiple data types/owners on multiple virtual machines.

Resources Provisioning and Deprovisioning: This section discusses virtual devices and data remnants.

This chapter covers CAS-003 objective 4.2.

Cloud computing is all the rage these days, and it comes in many forms. The basic idea of cloud computing is to make resources available in a web-based data center so the resources can be accessed from anywhere. When a company pays another company to host and manage this type of environment, we call it a public cloud solution. If the company hosts this environment itself, we call it a private cloud solution.

Virtualization is typically at the heart of cloud computing. Virtualization of servers has become a key part of reducing the physical footprint of data centers. The advantages include:

Reduced overall use of power in the data center

Dynamic allocation of memory and CPU resources to the servers

High availability provided by the ability to quickly bring up a replica server in the event of loss of the primary server

This chapter looks at cloud computing and virtualization and how these features are changing the network landscape.

Technical Deployment Models (Outsourcing/Insourcing/Managed Services/Partnership)

To integrate hosts, storage solutions, networks, and applications into a secure enterprise, an organization may use various technical deployment models, including outsourcing, insourcing, managed services, and partnerships. The following sections discuss cloud and virtualization considerations and hosting options, virtual machine vulnerabilities, secure use of on-demand/elastic cloud computing, data remnants, data aggregation, and data isolation.

Cloud and Virtualization Considerations and Hosting Options

Cloud computing allows enterprise assets to be deployed without the end user knowing where the physical assets are located or how they are configured. Virtualization involves creating a virtual device on a physical resource; a physical resource can hold more than one virtual device. For example, you can deploy multiple virtual computers on a Windows computer. But keep in mind that each virtual machine will consume some of the resources of the host machine, and the configuration of the virtual machine cannot exceed the resources of the host machine.
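The constraint in the last sentence can be sketched as a simple capacity check. This is only an illustration: the `Resources` type, the function names, and the numbers are hypothetical, and the sketch assumes no overcommitment of memory or CPU, which real hypervisors may allow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resources:
    """Hypothetical description of CPU, memory, and disk capacity."""
    cpus: int
    memory_gb: int
    disk_gb: int


def fits_on_host(host: Resources, vm: Resources) -> bool:
    """Return True if one VM's configuration does not exceed the host's resources."""
    return (vm.cpus <= host.cpus
            and vm.memory_gb <= host.memory_gb
            and vm.disk_gb <= host.disk_gb)


def remaining_after(host: Resources, vms: list) -> Resources:
    """Resources left on the host once each VM has taken its share (no overcommit assumed)."""
    return Resources(
        cpus=host.cpus - sum(v.cpus for v in vms),
        memory_gb=host.memory_gb - sum(v.memory_gb for v in vms),
        disk_gb=host.disk_gb - sum(v.disk_gb for v in vms),
    )


# Assumed example values: one host and two guest VMs.
host = Resources(cpus=8, memory_gb=32, disk_gb=1000)
vms = [Resources(2, 8, 100), Resources(4, 16, 200)]
left = remaining_after(host, vms)
oversized = Resources(cpus=16, memory_gb=8, disk_gb=100)
```

Here `fits_on_host(host, oversized)` is false because the VM asks for more CPUs than the host has, while `left` shows what remains for additional VMs.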

For the CASP exam, you must understand public, private, hybrid, community, multitenancy, and single-tenancy cloud options.

Public

A public cloud is the standard cloud computing model, in which a service provider makes resources available to the public over the Internet. Public cloud services may be free or may be offered on a pay-per-use model. An organization needs to have a business or technical liaison responsible for managing the vendor relationship but does not necessarily need a specialist in cloud deployment. Vendors of public cloud solutions include Amazon, IBM, Google, Microsoft, and many more. In a public cloud model, subscribers can add and remove resources as needed, based on their subscription.

Private

A private cloud is a cloud computing model in which a private organization implements a cloud in its internal enterprise, and that cloud is used by the organization’s employees and partners. Private cloud services require an organization to employ a specialist in cloud deployment to manage the private cloud.

Hybrid

A hybrid cloud is a cloud computing model in which an organization provides and manages some resources in-house and has others provided externally via a public cloud. This model requires a relationship with the service provider as well as an in-house cloud deployment specialist. Rules need to be defined to ensure that a hybrid cloud is deployed properly. Confidential and private information should be limited to the private cloud.

Community

A community cloud is a cloud computing model in which the cloud infrastructure is shared among several organizations from a specific group with common computing needs. In this model, agreements should explicitly define the security controls that will be in place to protect the data of each organization involved in the community cloud and how the cloud will be administered and managed.

Multitenancy

A multitenancy model is a cloud computing model in which multiple organizations share the resources. This model allows the service providers to manage resource utilization more efficiently. With this model, organizations should ensure that their data is protected from access by other organizations or unauthorized users. In addition, organizations should ensure that the service provider will have enough resources for the future needs of the organization. If multitenancy models are not properly managed, one organization can consume more than its share of resources, to the detriment of the other organizations involved in the tenancy.
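One way a provider might keep a single tenant from consuming more than its share is a hard per-tenant quota. The sketch below is a minimal illustration under that assumption; the `SharedPool` class, the tenant names, and the capacity units are hypothetical and do not describe any particular provider's mechanism.

```python
class SharedPool:
    """A pool of capacity units shared by several tenants, each with a hard quota."""

    def __init__(self, capacity: int, quotas: dict):
        # Refuse to promise tenants more than the pool can actually deliver.
        if sum(quotas.values()) > capacity:
            raise ValueError("quotas exceed pool capacity")
        self.capacity = capacity
        self.quotas = dict(quotas)
        self.used = {tenant: 0 for tenant in quotas}

    def allocate(self, tenant: str, units: int) -> bool:
        """Grant the request only if the tenant stays within its own quota."""
        if tenant not in self.quotas:
            raise KeyError(f"unknown tenant: {tenant}")
        if self.used[tenant] + units > self.quotas[tenant]:
            return False  # request denied; other tenants are unaffected
        self.used[tenant] += units
        return True


# Assumed example: two tenants sharing 100 units.
pool = SharedPool(capacity=100, quotas={"tenant_a": 60, "tenant_b": 40})
first = pool.allocate("tenant_a", 50)    # within quota
second = pool.allocate("tenant_a", 20)   # would exceed tenant_a's quota
third = pool.allocate("tenant_b", 40)    # tenant_b still gets its full share
```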

Single Tenancy

A single-tenancy model is a cloud computing model in which a single tenant uses a resource. This model ensures that the tenant organization’s data is protected from other organizations. However, this model is more expensive than the multitenancy model.

On-Premise vs. Hosted

Accompanying the movement to virtualization is a movement toward the placement of resources in an on-premise versus hosted environment. An on-premise cloud solution uses resources that are on the enterprise network or deployed from the enterprise’s data center. A hosted environment is provided by a third party and is deployed on the third party’s physical resources. Security professionals must understand the security implications of these two models, particularly if the cloud deployment will be hosted on third-party resources in a shared tenancy.

These are the biggest risks you face when placing resources in a public cloud:

Multitenancy can lead to the following:

Allowing another tenant or an attacker to see others’ data or to assume the identity of other clients

Residual data from old tenants being exposed in storage space assigned to new tenants

Mechanisms for authentication and authorization may be improper or inadequate.

Users may lose access due to inadequate redundancy and fault tolerance measures.

Shared ownership of data with the customer can limit the legal liability of the provider.

The provider may use data improperly (for example, with data mining).

Data jurisdiction is an issue: Where does the data actually reside, and what laws affect it, based on its location?

As you can see, in most cases, the customer depends on the provider to prevent these issues. Any agreement an organization enters into with a provider should address each of these concerns clearly.

Environmental reconnaissance testing should involve testing all these improper access issues. Any issues that are identified should be immediately addressed with the vendor.

Cloud Service Models

There is a trade-off to consider when a decision must be made between architectures. A private solution provides the most control over the safety of your data but also requires the staff and the knowledge to deploy, manage, and secure the solution. A public cloud puts your data’s safety in the hands of a third party, but that party is often more capable and knowledgeable about protecting data in such an environment and managing the cloud environment.

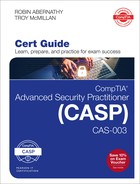

With a public solution, various levels of service can be purchased. Some of these levels include:

Software as a Service (SaaS): With SaaS, the vendor provides the entire solution, including the operating system, the infrastructure software, and the application. The vendor may provide an email system, for example, in which it hosts and manages everything for the contracting company. An example of this is a company that contracts to use Salesforce or Intuit QuickBooks using a browser rather than installing the application on every machine. This frees the customer company from performing updates and other maintenance of the applications.

Infrastructure as a Service (IaaS): With IaaS, the vendor provides the hardware platform or data center, and the company installs and manages its own operating systems and application systems. The vendor simply provides access to the data center and maintains that access. An example of this is a company hosting all its web servers with a third party that provides everything. With IaaS, customers can benefit from the dynamic allocation of additional resources in times of high activity, while those same resources are scaled back when not needed, which saves money.

Platform as a Service (PaaS): With PaaS, the vendor provides the hardware platform or data center and the software running on the platform, including the operating systems and infrastructure software. The company is still involved in managing the system. An example of this is a company that contracts with a third party to provide a development platform for internal developers to use for development and testing.

The relationship of these services to one another is shown in Figure 13-1.

Security Advantages and Disadvantages of Virtualization

Virtualization of servers has become a key part of reducing the physical footprint of data centers. The advantages include:

Reduced overall use of power in the data center

Dynamic allocation of memory and CPU resources to the servers

High availability provided by the ability to quickly bring up a replica server in the event of loss of the primary server

However, most of the same security issues that must be mitigated in the physical environment must also be addressed in the virtual network.

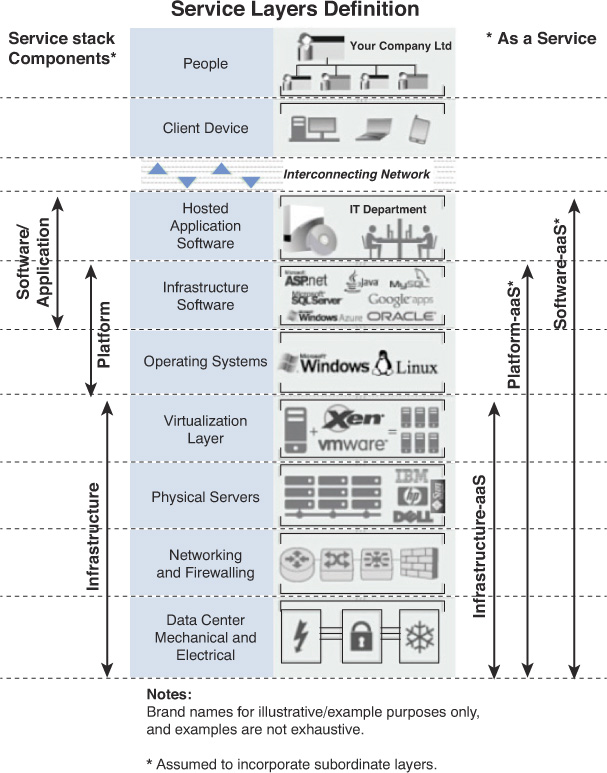

In a virtual environment, instances of an operating system are virtual machines (VMs). A host system can contain many VMs. Software called a hypervisor manages the distribution of resources (CPU, memory, and disk) to the virtual machines. Figure 13-2 shows the relationship between the host machine, its physical resources, the resident VMs, and the virtual resources assigned to them.

Keep in mind that in any virtual environment, each virtual server that is hosted on the physical server must be configured with its own security mechanisms. These mechanisms include antivirus and anti-malware software and all the latest service packs and security updates for all the software hosted on the virtual machine. Also, remember that all the virtual servers share the resources of the physical device.

When virtualization is hosted on a Linux machine, any sensitive application that must be installed on the host should be installed in a chroot environment. A chroot on a UNIX-based operating system is an operation that changes the root directory for the current running process and its children. A program that is run in such a modified environment cannot name (and therefore normally cannot access) files outside the designated directory tree.

Type 1 vs. Type 2 Hypervisors

There are two types of hypervisors. Let’s take a look at their differences.

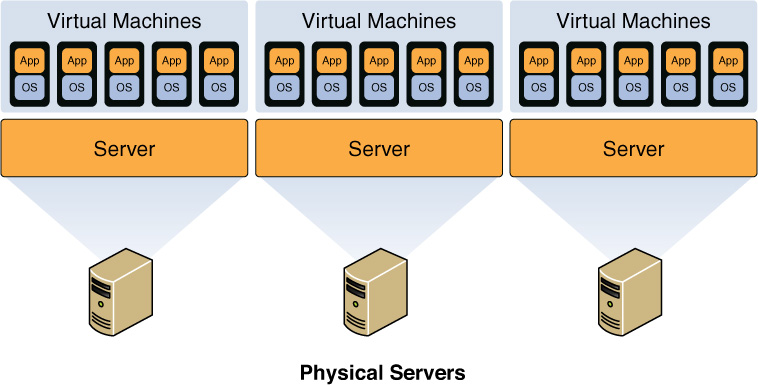

Type 1 Hypervisor

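The hypervisor that manages the distribution of the physical server’s resources can be either Type 1 or Type 2. A Type 1 hypervisor (also called a native or bare-metal hypervisor) runs directly on the host’s hardware to control the hardware and to manage guest operating systems. A guest operating system runs on another level above the hypervisor. Examples of Type 1 hypervisors are Citrix XenServer, Microsoft Hyper-V, and VMware vSphere.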

Type 2 Hypervisor

A Type 2 hypervisor runs within a conventional operating system environment. With the hypervisor layer as a distinct second software level, guest operating systems run at the third level above the hardware. VMware Workstation and VirtualBox exemplify Type 2 hypervisors. A comparison of the two approaches is shown in Figure 13-3.

Container-Based

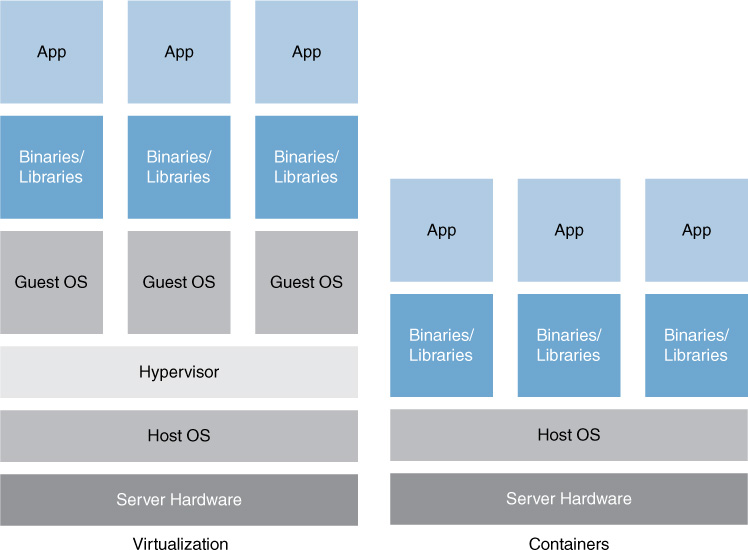

A newer approach to virtualization is referred to as container-based virtualization, also called operating system virtualization. This kind of server virtualization is a technique in which the kernel allows for multiple isolated user space instances. The instances are known as containers, virtual private servers, or virtual environments.

In this model, the hypervisor is replaced with operating system–level virtualization, where the kernel of an operating system allows multiple isolated user spaces or containers. A container is not a complete operating system instance but rather a partial instance of the same operating system. The containers in Figure 13-4 are the blue boxes just above the host OS level. Container-based virtualization is used mostly in Linux environments, and examples are the commercial Parallels Virtuozzo and the open source OpenVZ project.

vTPM

Virtual TPM (vTPM) is covered in Chapter 6, “Security Controls for Host Devices.”

Hyperconverged Infrastructure

A converged infrastructure is one in which the vendor integrates all storage, network, and compute gear into the same physical enclosure, simplifying the data center deployment. It provides a single management interface for these resources. Examples of this are the Dell System Managed system and the IBM PureFlex system.

Hyperconvergence takes this a step further, utilizing software to perform this integration without hardware changes. It utilizes virtualization as well. It integrates numerous services that are managed from a single interface. While this approach allows for expansion by simply adding more hardware without regard to vendor, the organization becomes somewhat tied to a specific vendor’s hyperconvergence solution. Examples of hyperconverged solutions are VMware EVO:RAIL and Nutanix NX with Acropolis and Prism.

Virtual Desktop Infrastructure

A virtual desktop infrastructure (VDI) hosts desktop operating systems within a virtual environment in a centralized server. Users access the desktops and run them from the server. VDI is covered in Chapter 5, “Network and Security Components, Concepts, and Architectures.”

Secure Enclaves and Volumes

Secure enclaves and secure volumes both have the same goal: to minimize the amount of time sensitive data is unencrypted as it is used. Secure enclaves are processors that process data in its encrypted state. This means that even those with access to the underlying hardware in the virtual environment are not able to access the data.

Secure enclaves are supported in Microsoft Azure and other systems. The concept is also used in Apple devices, where a secure processor prevents the main processor from accessing protected data. One well-known service that uses this processor is Touch ID.

Secure volumes accomplish this goal in a different way. A secure volume is unmounted and hidden until used. Only then is it mounted and decrypted. When edits are complete, the volume is encrypted and unmounted.

Cloud Augmented Security Services

Cloud computing is all the rage, and everyone is falling all over themselves to put their data “in the cloud.” However, security issues arise when you do this. Where is your data actually residing physically? Is it comingled with others’ data? How secure is it? It’s quite scary to trust the security of your data to others. The following sections look at issues surrounding cloud security.

Hash Matching

A method that has been used to steal data from a cloud infrastructure is a process called hash matching, or hash spoofing. A good example of this vulnerability is the case of the attack on the cloud vendor Dropbox.

Dropbox used hashes to identify blocks of data stored by users in the cloud as a part of the data deduplication process. These hashes, which are values derived from the data used to uniquely identify the data, are used to determine whether data has changed when a user connects, indicating consequently whether a synchronization process needs to occur.

The attack involved spoofing the hashes in order to gain access to arbitrary pieces of other customers’ data. Because the unauthorized access was granted from the cloud, the customer whose files were being distributed didn’t know it was happening.

Since this attack was discovered, Dropbox has addressed the issue through the use of stronger hashing algorithms, but hash matching can still be a concern with any private, public, or hybrid cloud solution.
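The weakness can be illustrated with a deliberately simplified model of hash-based deduplication. This is not Dropbox’s actual protocol; the `NaiveDedupStore` class, its method names, and the user names are hypothetical. The sketch only shows why treating knowledge of a hash as proof of ownership is risky.

```python
import hashlib


def block_hash(data: bytes) -> str:
    """Derive an identifier for a block of data from its contents."""
    return hashlib.sha256(data).hexdigest()


class NaiveDedupStore:
    """Toy deduplicating store: each distinct block is kept once, keyed by its hash."""

    def __init__(self):
        self.blocks = {}   # hash -> block contents
        self.owners = {}   # hash -> set of users linked to the block

    def upload(self, user: str, data: bytes) -> str:
        """Store the block (if new) and link it to the uploading user."""
        h = block_hash(data)
        self.blocks.setdefault(h, data)
        self.owners.setdefault(h, set()).add(user)
        return h

    def claim_by_hash(self, user: str, h: str) -> bool:
        """Deduplication shortcut: link an existing block to a user without an upload.

        The flaw being illustrated: the hash alone is accepted as proof that
        the user already possesses the data.
        """
        if h in self.blocks:
            self.owners[h].add(user)
            return True
        return False

    def download(self, user: str, h: str) -> bytes:
        if user not in self.owners.get(h, set()):
            raise PermissionError("user is not linked to this block")
        return self.blocks[h]


store = NaiveDedupStore()
victim_hash = store.upload("alice", b"alice's confidential file")

# An attacker who learns or spoofs the hash never uploads anything,
# yet ends up linked to Alice's block and can download it.
claimed = store.claim_by_hash("mallory", victim_hash)
leaked = store.download("mallory", victim_hash)
```

Note that nothing in this flow notifies Alice, which matches the point above that the affected customer does not see the access happening.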

Hashing can also be used for the forces of good. Antivirus software uses hashing to identify malware. Signature-based antivirus products look for matching hashes when looking for malware. The problem that has developed is that malware has evolved and can now change itself, thereby changing its hash value. This is leading to the use of what is called fuzzy hashing. Unlike typical hashing, in which an identical match must occur, fuzzy hashing looks for hashes that are close but not perfect matches.
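The difference between exact and fuzzy matching can be sketched as follows. This is a simplified stand-in and not the algorithm used by real fuzzy hashing tools: it compares sets of overlapping byte sequences (shingles) with a Jaccard similarity score. The function names, the sample byte strings, and the shingle length of 4 are assumptions chosen for illustration.

```python
import hashlib


def exact_hash(data: bytes) -> str:
    """Conventional hash: any change to the input yields a different value."""
    return hashlib.sha256(data).hexdigest()


def shingles(data: bytes, n: int = 4) -> set:
    """All overlapping n-byte sequences found in the data."""
    return {data[i:i + n] for i in range(len(data) - n + 1)}


def similarity(a: bytes, b: bytes, n: int = 4) -> float:
    """Jaccard similarity of the two shingle sets, from 0.0 (disjoint) to 1.0 (identical sets)."""
    sa, sb = shingles(a, n), shingles(b, n)
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)


# Hypothetical samples: a known-bad file, a slightly altered copy, and unrelated data.
original = b"MALWARE-PAYLOAD: connect to server and download stage two"
variant = b"MALWARE-PAYLOAD: connect to server and download stage 2!!"
unrelated = b"The quick brown fox jumps over the lazy dog near the river"

exact_match = exact_hash(original) == exact_hash(variant)
close_score = similarity(original, variant)
far_score = similarity(original, unrelated)
```

The altered copy no longer matches the exact hash, but its similarity score stays high, while the unrelated data scores low. A scanner built this way would flag anything above a chosen threshold rather than requiring an identical match.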

Anti-malware

Cloud antimalware products run not on your local computer but in the cloud, creating a smaller footprint on the client and utilizing processing power in the cloud. They have the following advantages:

They allow access to the latest malware data within minutes of the cloud antimalware service learning about it.

They eliminate the need to continually update your antimalware.

The client is small, and it requires little processing power.

Cloud antimalware products have the following disadvantages:

There is a client-to-cloud relationship, which means they cannot run in the background.

They may scan only the core Windows files for viruses and not the whole computer.

They are highly dependent on an Internet connection.

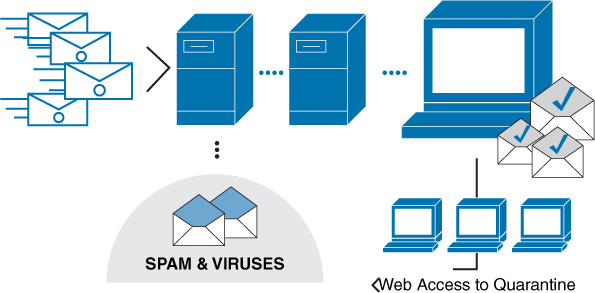

Antispam services can also be offered from the cloud. Vendors such as Postini and Mimecast scan your email and then store anything identified as problematic on their server, where you can look through the spam to verify that it is, in fact, spam. In this process, illustrated in Figure 13-5, the mail first goes through the cloud server, where any problematic mail is quarantined. Then the users can view the quarantined items through a browser at any time.

Vulnerability Scanning

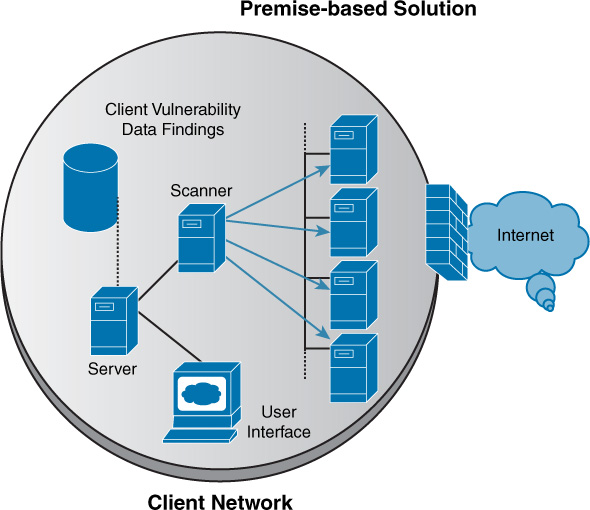

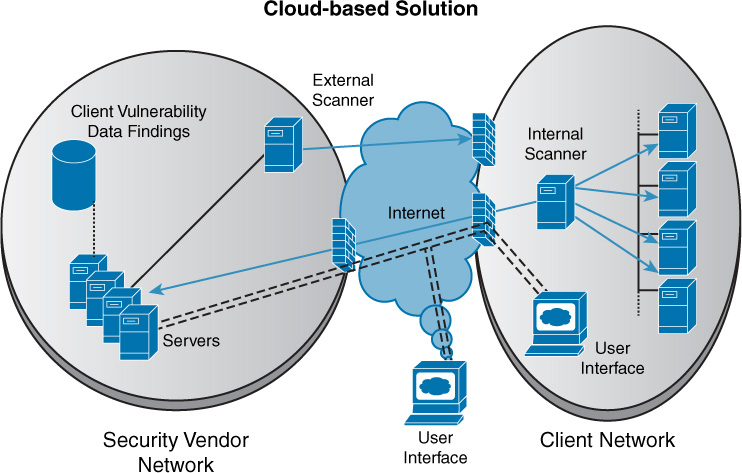

Cloud-based vulnerability scanning is a service that is performed from the vendor’s cloud and can be considered a good example of SaaS. The benefits are those derived from any SaaS offering: no equipment on the part of the subscriber and no footprint in the local network. Figure 13-6 shows a premise-based approach to vulnerability scanning, while Figure 13-7 shows a cloud-based solution. In the premise-based approach, the hardware and/or software vulnerability scanners and associated components are entirely installed on the client premises, while in the cloud-based approach, the vulnerability management platform is in the cloud. Vulnerability scanners for external vulnerability assessments are located at the solution provider’s site, with additional scanners on the premises.

The advantages of the cloud-based approach are:

Installation costs are low because there is no installation and configuration for the client to complete.

Maintenance costs are low as there is only one centralized component to maintain, and it is maintained by the vendor (not the end client).

Upgrades are included in a subscription.

Costs are distributed among all customers.

It does not require the client to provide onsite equipment.

However, there is a considerable disadvantage: Whereas premise-based deployments store data findings at the organization’s site, in a cloud-based deployment, the data is resident with the provider. This means the customer is dependent on the provider to ensure the security of the vulnerability data.

Sandboxing

Sandboxing is the segregation of virtual environments for security purposes. Sandboxed appliances have been used in the past to supplement the security features of a network. These appliances are used to test suspicious files in a protected environment. Cloud-based sandboxing has some advantages over sandboxing performed on the premises:

It is free of hardware limitations and is therefore scalable and elastic.

It is possible to track malware over a period of hours or days.

It can be easily updated with any operating system type and version.

It isn’t limited by geography.

The potential disadvantage is that many sandboxing products suffer incompatibility issues with many applications and other utilities, such as antivirus products.

Content Filtering

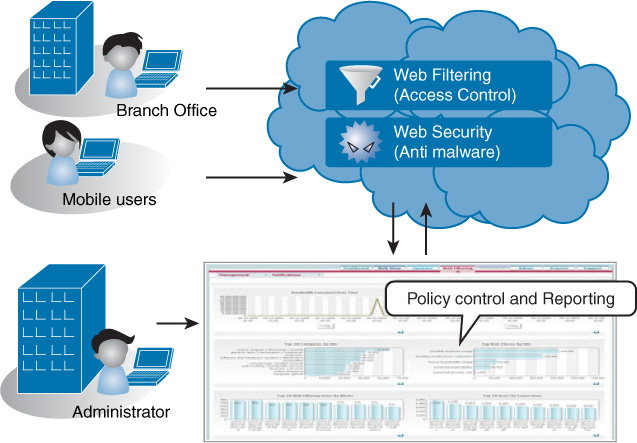

Filtering of web content can be provided as a cloud-based solution. In this case, all content is examined through the providers. The benefits are those derived from all cloud solutions: savings on equipment and support of the content filtering process while maintaining control of the process. This process is shown in Figure 13-8.

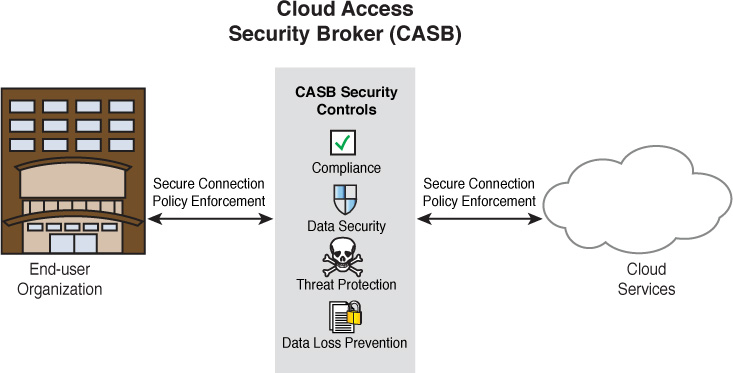

Cloud Security Broker

A cloud security broker, or cloud access security broker (CASB), is a software layer that operates as a gatekeeper between an organization’s on-premise network and the provider’s cloud environment. It can provide many services in this strategic position, as shown in Figure 13-9. Vendors in the cloud access security space include Skyhigh Networks and Netskope.

Security as a Service

Another cloud-based service is Security as a Service (SecaaS). Many organizations don’t have the skill sets to provide required security services, and it doesn’t make business sense to acquire them. For these organizations, it may make sense to engage a security provider, which offers the following benefits:

Cost savings

Consistent and uniform protection

Consistent virus definition updates

Greater security expertise

Faster user provisioning

Outsourcing of administrative tasks

Intuitive administrative interface

Managed Security Service Providers

Taking the idea of SecaaS a step further, managed security service providers (MSSPs) offer the option of fully outsourcing all information assurance to a third party. If an organization decides to deploy a third-party identity service, including cloud computing solutions, security practitioners must be involved in the integration of that implementation with internal services and resources. This integration can be complex, especially if the provider solution is not fully compatible with existing internal systems. Most third-party identity services provide cloud identity, directory synchronization, and federated identity. Examples of these services include the Amazon Web Services (AWS) Identity and Access Management (IAM) service and Oracle Identity Management.

Vulnerabilities Associated with Comingling of Hosts with Different Security Requirements

When guest systems are virtualized, they may share a common host machine. When this occurs and the systems sharing the host have varying security requirements, security issues can arise. The following sections look at some of these issues and some measures that can be taken to avoid them.

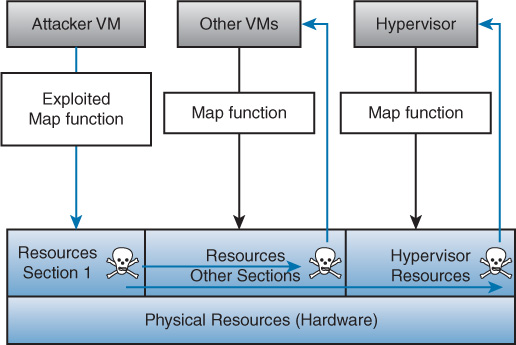

VMEscape

In a VMEscape attack, the attacker “breaks out” of a VM’s normally isolated state and interacts directly with the hypervisor. Since VMs often share the same physical resources, if the attacker can discover how his VM’s virtual resources map to the physical resources, he will be able to conduct attacks directly on the real physical resources. If he is able to modify his virtual memory in a way that exploits how the physical resources are mapped to each VM, the attacker can affect all the VMs, the hypervisor, and potentially other programs on that machine. Figure 13-10 shows the relationship between the virtual resources and the physical resources and how an attacker can attack the hypervisor and other VMs. To help mitigate a VMEscape attack, virtual servers should only be on the same physical server as others in their network segment.

Privilege Elevation

In some cases, the dangers of privilege elevation, or escalation, in a virtualized environment may be equal to or greater than those in a physical environment. When the hypervisor is performing its duty of handling calls between the guest operating system and the hardware, any flaws introduced to those calls could allow an attacker to escalate privileges in the guest operating system. A recent case of a flaw in VMware ESX Server, Workstation, Fusion, and View products could have led to escalation on the host. VMware reacted quickly to fix this flaw with a security update. The key to preventing privilege escalation is to make sure all virtualization products have the latest updates and patches.

Live VM Migration

One of the advantages of a virtualized environment is the ability of the system to migrate a VM from one host to another when needed. This is called a live migration. When VMs are on the network between secured perimeters, attackers can exploit the network vulnerability to gain unauthorized access to VMs. With access to VM images, attackers can plant malicious code in the images to launch attacks on the data centers that the VMs travel between. Often the protocols used for the migration are not encrypted, making a man-in-the-middle attack on the VM possible while it is in transit, as shown in Figure 13-11. The key to preventing man-in-the-middle attacks is encryption of the images, both where they are stored and while they are in transit.

Data Remnants

Sensitive data inadvertently replicated in VMs as a result of cloud maintenance functions or remnant data left in terminated VMs needs to be protected. Also, if data is moved, residual data may be left behind, and unauthorized users may be able to access it. Any remaining data in the old location should be shredded, but depending on the security practice, data remnants may persist. This can be a concern with confidential data in private clouds and any sensitive data in public clouds.

There are commercial products, such as those made by Blancco, that permanently remove data from PCs, servers, data center equipment, and smartphones. According to the vendor, data erased by Blancco cannot be recovered with any existing technology. Blancco also generates a report documenting each erasure for compliance purposes.
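The basic idea behind shredding a file can be sketched as overwriting its contents before deleting it. This is a minimal illustration, not a substitute for a certified erasure product: the function name, the single-pass default, and the sample data are assumptions, and on SSDs, copy-on-write file systems, or storage with snapshots, an in-place overwrite may not reach every physical copy of the data.

```python
import os
import tempfile


def overwrite_and_delete(path: str, passes: int = 1) -> None:
    """Overwrite a file in place with random bytes, flush to disk, then delete it."""
    size = os.path.getsize(path)
    chunk_size = 1024 * 1024  # write in 1 MiB chunks to limit memory use
    with open(path, "r+b") as f:
        for _ in range(passes):
            f.seek(0)
            remaining = size
            while remaining > 0:
                chunk = min(remaining, chunk_size)
                f.write(os.urandom(chunk))
                remaining -= chunk
            f.flush()
            os.fsync(f.fileno())  # ask the OS to push the overwrite to the device
    os.remove(path)


# Demonstration on a throwaway temporary file holding made-up data.
fd, demo_path = tempfile.mkstemp()
os.write(fd, b"confidential customer record")
os.close(fd)
overwrite_and_delete(demo_path)
```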

Data Security Considerations

In a cloud deployment, a single server or a single platform may hold multiple customers’ VMs. In both cases, these can present security vulnerabilities if not handled correctly. Let’s look at these issues.

Vulnerabilities Associated with a Single Server Hosting Multiple Data Types

In some virtualization deployments, a single physical server hosts multiple organizations’ VMs. All of the VMs hosted on a single physical computer must share the resources of that physical server. If the physical server crashes or is compromised, all of the organizations that have VMs on that physical server are affected. User access to the VMs should be properly configured, managed, and audited. Appropriate security controls, including antivirus, anti-malware, access control lists (ACLs), and auditing, must be implemented on each of the VMs to ensure that each one is properly protected. Other risks to consider include physical server resource depletion, network resource performance, and traffic filtering between virtual machines.

Driven mainly by cost, many companies outsource to cloud providers computing jobs that require a large number of processor cycles for a short duration. This situation allows a company to avoid a large investment in computing resources that will be used for only a short time. Assuming that the provisioned resources are dedicated to a single company, the main vulnerability associated with on-demand provisioning is traces of proprietary data that can remain on the virtual machine and may be exploited.

Let’s look at an example. Say that a security architect is seeking to outsource company server resources to a commercial cloud service provider. The provider under consideration has a reputation for poorly controlling physical access to data centers and has been the victim of social engineering attacks. The service provider regularly assigns VMs from multiple clients to the same physical resource. When conducting the final risk assessment, the security architect should take into consideration the likelihood that a malicious user will obtain proprietary information by gaining local access to the hypervisor platform.

Vulnerabilities Associated with a Single Platform Hosting Multiple Data Types/Owners on Multiple Virtual Machines

In some virtualization deployments, a single platform hosts multiple organizations’ VMs. If all of the servers that host VMs use the same platform, attackers will find it much easier to attack the other host servers once the platform is discovered. For example, if all physical servers use VMware to host VMs, any identified vulnerabilities for that platform could be used on all host computers. Other risks to consider include misconfigured platforms, separation of duties, and application of security policy to network interfaces.

If an administrator wants to virtualize the company’s web servers, application servers, and database servers, the following should be done to secure the virtual host machines: only access hosts through a secure management interface and restrict physical and network access to the host console.

Resources Provisioning and Deprovisioning

Just as when working with physical resources, the deployment of virtual solutions and the decommissioning of such virtual solutions should follow certain best practices. Provisioning is the process of adding a resource for usage, and deprovisioning is the process of removing a resource from usage. Provisioning and deprovisioning are important in both virtualization and cloud environments, especially if the enterprise is paying on a per-resource basis or based on the uptime of resources. Security professionals should ensure that the appropriate provisioning and deprovisioning procedures are documented and followed.
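A documented deprovisioning procedure is easier to follow when provisioned resources are tracked somewhere. The sketch below shows one possible shape for such a record, assuming billing by uptime; the `VirtualResource` type, the field names, and the seven-day review threshold are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class VirtualResource:
    """Hypothetical inventory record for a provisioned virtual resource."""
    name: str
    owner: str
    provisioned_at: datetime
    deprovisioned_at: Optional[datetime] = None

    def billable_hours(self, now: datetime) -> float:
        """Uptime in hours, counted until deprovisioning or until `now` if still running."""
        end = self.deprovisioned_at or now
        return (end - self.provisioned_at).total_seconds() / 3600


def stale_resources(inventory, now: datetime, max_age: timedelta):
    """Resources still provisioned after `max_age`: candidates for review or removal."""
    return [r for r in inventory
            if r.deprovisioned_at is None and now - r.provisioned_at > max_age]


# Assumed example data with fixed timestamps.
now = datetime(2024, 1, 10)
long_running = VirtualResource("batch-vm", "analytics", datetime(2024, 1, 1))
finished = VirtualResource("test-vm", "qa", datetime(2024, 1, 9),
                           deprovisioned_at=datetime(2024, 1, 9, 12))
inventory = [long_running, finished]
to_review = stale_resources(inventory, now, max_age=timedelta(days=7))
```

In this example only `long_running` is returned for review, because `finished` was already deprovisioned and stopped accruing billable hours.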

The following sections cover virtual devices and data remnants, which are two considerations for this process.

Virtual Devices

When virtual devices are provisioned in a cloud environment, there should be some method of securing access to the resources (such as VMs) such that the provider no longer has direct access. Access should be provided only to the customer and should be secured with some sort of identifying key or ID number. SLAs should be scrutinized to ensure that this actually happens.

Data Remnants

Data remnants are data that is left behind on a computer or another resource when that resource is no longer used. The best way to protect this data is to employ some sort of data encryption. If data is encrypted, it cannot be recovered without the original encryption key. If resources, especially hard drives, are reused frequently, an unauthorized user can access data remnants.

Administrators must understand the kind of data that is stored on physical drives. This helps them determine whether data remnants should be a concern. If the data stored on a drive is not private or confidential, the organization may not be concerned about data remnants. However, if the data stored on the drive is private or confidential, the organization may want to implement asset reuse and disposal policies.

SLAs of cloud providers must be examined to ensure that data remnants are destroyed using a method commensurate with the sensitivity of the data or that data is permanently encrypted and the key destroyed.

Exam Preparation Tasks

You have a couple of choices for exam preparation: the exercises here and the practice exams in the Pearson IT Certification test engine.

Review All Key Topics

Review the most important topics in this chapter, noted with the Key Topics icon in the outer margin of the page. Table 13-1 lists these key topics and the page number on which each is found.

Table 13-1 Key Topics for Chapter 13

Key Topic Element | Description | Page Number
List | Advantages of virtualization | 513
List | Risks of placing resources in a public cloud | 515
List | Cloud service models | 516
List | Advantages of cloud antivirus | 522
List | Advantages of cloud-based vulnerability scanning | 524
List | Advantages of cloud-based sandboxing | 525
List | Benefits of Security as a Service | 527

Define Key Terms

Define the following key terms from this chapter and check your answers in the glossary:

container-based virtualization

Infrastructure as a Service (IaaS)

managed security service provider (MSSP)

Security as a Service (SecaaS)

virtual desktop infrastructure (VDI)

Review Questions

1. Your organization has recently experienced issues with data storage. The servers you currently use do not provide adequate storage. After researching the issues and the options available, you determine that your organization’s data storage needs will grow exponentially over the next couple of years. However, within three years, data storage needs will return to the current demand level. Management wants to implement a solution that will provide for current and future needs without investing in hardware that will no longer be needed in the future. Which recommendation should you make?

Deploy virtual servers on the existing machines.

Contract with a public cloud service provider.

Deploy a private cloud service.

Deploy a community cloud service.

2. Management expresses concerns about using multitenant public cloud solutions to store organizational data. You explain that tenant data in a multitenant solution is quarantined from other tenants’ data using tenant IDs in the data labels. What is this condition called?

data remnants

data aggregation

data purging

data isolation

3. Which of the following is a cloud solution owned and managed by one company solely for that company’s use?

hybrid

public

private

community

4. Which of the following runs directly on the host’s hardware to control the hardware and to manage guest operating systems?

Type 1 hypervisor

Type 2 hypervisor

Type 3 hypervisor

Type 4 hypervisor

5. In which cloud service model does the vendor provide the hardware platform or data center, while the company installs and manages its own operating systems and application systems?

IaaS

SaaS

PaaS

SecaaS

6. Which of the following is not an advantage of virtualization?

reduced overall use of power in the data center

dynamic allocation of memory and CPU resources to the servers

ability to quickly bring up a replica server in the event of loss of the primary server

better security

7. In which attack does the attacker leave the VM’s normally isolated state and interact directly with the hypervisor?

VMEscape

cross violation

XSS

CSRF

8. Which of the following utilizes software to perform integration without hardware changes?

hyperconvergence

convergence

sandboxing

secure enclaves

9. Which of the following minimizes the amount of time that sensitive data is unencrypted as it is used?

secure enclaves

vTPM

TPM

hash matching

10. Which of the following is a software layer that operates as a gatekeeper between the organization’s on-premise network and a provider’s cloud environment?

SecaaS

CASB

MSSP

PaaP