Chapter 14. Authentication and Authorization Technology Integration

This chapter covers the following topics:

Authentication: This section covers certificate-based authentication, single sign-on, 802.1x, context-aware authentication, and push-based authentication.

Authorization: This section describes OAuth, XACML, and SPML.

Attestation: This section discusses the purpose of attestation.

Identity Proofing: Topics include various methods of proofing.

Identity Propagation: This section describes challenges and benefits of identity propagation.

Federation: This section discusses SAML, OpenID, Shibboleth, and WAYF.

Trust Models: This section covers RADIUS configurations, LDAP, and AD.

This chapter covers CAS-003 objective 4.3.

Identifying users and devices and determining the actions permitted by a user or device forms the foundation of access control models. While this paradigm has not changed since the beginning of network computing, the methods used to perform this important set of functions have changed greatly and continue to evolve.

While simple usernames and passwords once served the function of access control, in today’s world, more sophisticated and secure methods are developing quickly. Not only are such simple systems no longer secure, but the design of access credential systems today also emphasizes ease of use. The goal of techniques such as single sign-on and federated access control is to make a system as easy as possible for the users. This chapter covers evolving technologies and techniques that relate to authentication and authorization.

Authentication

To be able to access a resource, a user must prove her identity, provide the necessary credentials, and have the appropriate rights to perform the tasks she is completing. So there are two parts:

Identification: In the first part of the process, a user professes an identity to an access control system.

Authentication: The second part of the process involves validating a user with a unique identifier by providing the appropriate credentials.

When trying to differentiate between these two parts, security professionals should know that identification identifies the user, and authentication verifies that the identity provided by the user is valid. Authentication is usually implemented through a user password provided at login. The login process should validate the login after all the input data is supplied.

The most popular forms of user identification include user IDs or user accounts, account numbers, and personal identification numbers (PINs).

Authentication Factors

Once the user identification method has been established, an organization must decide which authentication method to use. Authentication methods are divided into five broad categories:

Knowledge factor authentication: Something a person knows

Ownership factor authentication: Something a person has

Characteristic factor authentication: Something a person is

Location factor authentication: Somewhere a person is

Action factor authentication: Something a person does

Authentication usually ensures that a user provides at least one factor from these categories, which is referred to as single-factor authentication. An example of this would be providing a username and password at login. Two-factor authentication ensures that the user provides factors from two of these categories. An example of two-factor authentication would be providing a username, password, and smart card at login. Three-factor authentication ensures that a user provides factors from three categories. An example of three-factor authentication would be providing a username, password, smart card, and fingerprint at login. For authentication to be considered strong authentication, a user must provide factors from at least two different categories. (Note that the username is the identification factor, not an authentication factor.)

You should understand that providing multiple authentication factors from the same category is still considered single-factor authentication. For example, if a user provides a username, password, and the user’s mother’s maiden name, single-factor authentication is being used. In this example, the user is still only providing factors that are something a person knows.
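The distinction between the number of credentials and the number of factor categories can be made concrete with a short sketch. The Python below is illustrative only: the credential names and the mapping of each credential to a category are assumptions made for this example, not part of any standard. The username is deliberately left out because it is identification, not authentication.

```python
from enum import Enum


class Factor(Enum):
    KNOWLEDGE = "something a person knows"
    OWNERSHIP = "something a person has"
    CHARACTERISTIC = "something a person is"
    LOCATION = "somewhere a person is"
    ACTION = "something a person does"


# Hypothetical mapping of credential types to factor categories.
CREDENTIAL_CATEGORY = {
    "password": Factor.KNOWLEDGE,
    "mothers_maiden_name": Factor.KNOWLEDGE,
    "pin": Factor.KNOWLEDGE,
    "smart_card": Factor.OWNERSHIP,
    "token_device": Factor.OWNERSHIP,
    "fingerprint": Factor.CHARACTERISTIC,
}


def distinct_factors(credentials):
    """Return the set of factor categories covered by the credentials."""
    return {CREDENTIAL_CATEGORY[c] for c in credentials}


def is_strong(credentials):
    """Strong authentication needs factors from at least two categories."""
    return len(distinct_factors(credentials)) >= 2
```

With this mapping, a password plus a mother’s maiden name covers only the knowledge category, so is_strong returns False, while a password plus a smart card covers two categories and returns True.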

Knowledge Factors

As briefly described above, knowledge factor authentication is authentication that is provided based on something a person knows. This type of authentication is referred to as a Type I authentication factor. While the most popular form of authentication used by this category is password authentication, other knowledge factors can be used, including date of birth, mother’s maiden name, key combination, or PIN.

Ownership Factors

As briefly described above, ownership factor authentication is authentication that is provided based on something that a person has. This type of authentication is referred to as a Type II authentication factor. Ownership factors can include the following:

Token devices: A token device is a handheld device that presents the authentication server with the one-time password. If the authentication method requires a token device, the user must be in physical possession of the device to authenticate. So although the token device provides a password to the authentication server, the token device is considered a Type II authentication factor because its use requires ownership of the device. A token device is usually implemented only in very secure environments because of the cost of deploying the token device. In addition, token-based solutions can experience problems because of the battery life span of the token device.

Memory cards: A memory card is a swipe card that is issued to a valid user. The card contains user authentication information. When the card is swiped through a card reader, the information stored on the card is compared to the information that the user enters. If the information matches, the authentication server approves the login. If it does not match, authentication is denied. Because the card must be read by a card reader, each computer or access device must have its own card reader. In addition, the cards must be created and programmed. Both of these requirements add complexity and cost to the authentication process, although the added security is often worth it. However, the data on a memory card is not protected, which is a weakness that organizations should consider before implementing this type of system. Memory-only cards are very easy to counterfeit.

Smart cards: A smart card accepts, stores, and sends data but can hold more data than a memory card. Smart cards, often known as integrated circuit cards (ICCs), contain memory like memory cards but also contain embedded chips like bank or credit cards. Smart cards use card readers. However, the data on a smart card is used by the authentication server without user input. To protect against lost or stolen smart cards, most implementations require the user to input a secret PIN, meaning the user is actually providing both Type I (PIN) and Type II (smart card) authentication factors.

Characteristic Factors

As briefly described above, characteristic factor authentication is authentication that is provided based on something a person is. This type of authentication is referred to as a Type III authentication factor. Biometric technology is the technology that allows users to be authenticated based on physiological or behavioral characteristics. Physiological characteristics include any unique physical attribute of the user, including iris, retina, and fingerprints. Behavioral characteristics measure a person’s actions in a situation, including voice patterns and data entry characteristics.

Additional Authentication Concepts

The following are some additional authentication concepts with which all security professionals should be familiar:

Time-Based One-Time Password algorithm (TOTP): This is an algorithm that computes a password from a shared secret and the current time. It is based on HOTP but turns the current time into an integer-based counter.

HMAC-Based One-Time Password algorithm (HOTP): This is an algorithm that computes a password from a shared secret that is used one time only. It uses an incrementing counter that is synchronized on both the client and the server to do this.

Single sign-on (SSO): This is provided when an authentication system requires a user to authenticate only once to access all network resources.
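The relationship between HOTP and TOTP described above can be sketched in a few lines of Python using only the standard library. This is a minimal illustration that follows the published algorithms (RFC 4226 and RFC 6238) with HMAC-SHA-1. The function names, the 30-second time step, and the 6-digit default are choices made for this example, and a production system should use a vetted library instead.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HOTP: HMAC-SHA-1 over an 8-byte big-endian counter, then truncate."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation: low nibble of last byte
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, now=None, step: int = 30, digits: int = 6) -> str:
    """TOTP: HOTP with the counter derived from the current time."""
    if now is None:
        now = time.time()
    return hotp(secret, int(now) // step, digits)
```

Using the test secret from RFC 4226 (the ASCII string 12345678901234567890), hotp(secret, 0) yields 755224. Note that totp does nothing more than turn the clock into the integer counter that hotp expects, which is exactly the difference between the two algorithms.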

Identity and Account Management

Identity and account management is vital to any authentication process. As a security professional, you must ensure that your organization has a formal procedure to control the creation and allocation of access credentials or identities. If invalid accounts are allowed to be created and are not disabled, security breaches will occur. Most organizations implement a method to review the identification and authentication process to ensure that user accounts are current. Questions that are likely to help in the process include:

Is a current list of authorized users and their access maintained and approved?

Are passwords changed at least every 90 days—or earlier, if needed?

Are inactive user accounts disabled after a specified period of time?

Any identity management procedure must include processes for creating, changing, and removing users from the access control system. When initially establishing a user account, a new user should be required to provide valid photo identification and should sign a statement regarding password confidentiality. User accounts must be unique. Policies should be in place to standardize the structure of user accounts. For example, all user accounts should be firstname.lastname or follow some other structure. This ensures that users in an organization will be able to determine a new user’s identification, mainly for communication purposes.

Once they are created, user accounts should be monitored to ensure that they remain active. Inactive accounts should be automatically disabled after a certain period of inactivity, based on business requirements. In addition, a termination policy should include formal procedures to ensure that all user accounts are disabled or deleted. Elements of proper account management include the following:

Establish a formal process for establishing, issuing, and closing user accounts.

Periodically review user accounts.

Implement a process for tracking access authorization.

Periodically rescreen personnel in sensitive positions.

Periodically verify the legitimacy of user accounts.

User account reviews are a vital part of account management. User accounts should be reviewed for conformity with the principle of least privilege (which is explained later in this chapter). User account reviews can be performed on an enterprisewide, systemwide, or application-by-application basis. The size of the organization will greatly affect which of these methods to use. As part of user account reviews, organizations should determine whether all user accounts are active.

Password Types and Management

As mentioned earlier in this chapter, password authentication is the most popular authentication method implemented today. But often password types can vary from system to system. It is vital that you understand all the types of passwords that can be used.

Some of the types of passwords that you should be familiar with include:

Standard word passwords: As the name implies, this type of password consists of a single word that often includes a mixture of upper- and lowercase letters. The advantage of this password type is that it is easy to remember. A disadvantage of this password type is that it is easy for attackers to crack or break, which can lead to a compromised account.

Combination passwords: These passwords, also called composition passwords, use a mix of dictionary words—usually two that are unrelated. Like standard word passwords, they can include upper- and lowercase letters and numbers. An advantage of this password type is that it is harder to break than a standard word password. A disadvantage is that it can be hard to remember.

Static passwords: This password type is the same for each login. It provides a minimum level of security because the password never changes. It is most often seen in peer-to-peer networks.

Complex passwords: This password type forces a user to include a mixture of upper- and lowercase letters, numbers, and special characters. For many organizations today, this type of password is enforced as part of the organization’s password policy. An advantage of this password type is that it is very hard to crack. A disadvantage is that it is harder to remember and can often be much harder to enter correctly.

Passphrase passwords: This password type requires that a long phrase be used. Because of the password’s length, it is easier to remember but much harder to attack, both of which are definite advantages. Incorporating upper- and lowercase letters, numbers, and special characters in this type of password can significantly increase authentication security.

Cognitive passwords: This password type is a piece of information that can be used to verify an individual’s identity. The user provides this information to the system by answering a series of questions based on her life, such as favorite color, pet’s name, mother’s maiden name, and so on. An advantage of this type is that users can usually easily remember this information. The disadvantage is that someone who has intimate knowledge of the person’s life (spouse, child, sibling, and so on) may be able to provide this information as well.

One-time passwords (OTPs): Also called a dynamic password, an OTP is used only once to log in to the access control system. This password type provides the highest level of security because it is discarded after it is used once.

Graphical passwords: Also called Completely Automated Public Turing test to tell Computers and Humans Apart (CAPTCHA) passwords, this type of password uses graphics as part of the authentication mechanism. One popular implementation requires a user to enter a series of characters that appear in a graphic. This implementation ensures that a human, not a machine, is entering the password. Another popular implementation requires the user to select the appropriate graphic for his account from a list of graphics.

Numeric passwords: This type of password includes only numbers. Keep in mind that the choices of a password are limited by the number of digits allowed. For example, if all passwords are four digits, then the maximum number of password possibilities is 10,000, from 0000 through 9999. Once an attacker realizes that only numbers are used, cracking user passwords will be much easier because the attacker will know the possibilities.
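The arithmetic behind the four-digit example generalizes: the number of possible passwords is the size of the character set raised to the power of the password length. The following Python sketch shows the calculation. The function name is hypothetical, and the 94-character figure is an assumption that corresponds to the printable ASCII characters other than the space.

```python
def keyspace(alphabet_size: int, length: int) -> int:
    """Number of possible passwords of exactly `length` characters."""
    return alphabet_size ** length


four_digit_pin = keyspace(10, 4)        # digits only, as in the example
eight_digit_pin = keyspace(10, 8)       # longer, but still digits only
eight_char_complex = keyspace(94, 8)    # assumed 94 printable characters
```

Here four_digit_pin evaluates to 10000, matching the range 0000 through 9999. Comparing eight_digit_pin with eight_char_complex shows how sharply a larger character set expands the search space at the same length.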

The simpler types of passwords are considered weaker than passphrases, one-time passwords, token devices, and login phrases. Once an organization has decided which type of password to use, the organization must establish its password management policies.

Password management considerations include, but may not be limited to:

Password life: How long a password will be valid. For most organizations, passwords are valid for 60 to 90 days.

Password history: How long before a password can be reused. Password policies usually remember a certain number of previously used passwords.

Authentication period: How long a user can remain logged in. If a user remains logged in for the specified period without activity, the user will be automatically logged out.

Password complexity: How the password will be structured. Most organizations require upper- and lowercase letters, numbers, and special characters.

Password length: How long the password must be. Most organizations require 8 to 12 characters.
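Several of these considerations can be expressed as simple checks. The Python sketch below is illustrative only: the class name, the default values (chosen from the ranges mentioned above), and the history size are assumptions, and the history check compares opaque stored values for simplicity, whereas real systems compare salted password hashes.

```python
import string
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class PasswordPolicy:
    # Illustrative defaults; each organization sets its own values.
    min_length: int = 8
    max_age_days: int = 90
    history_size: int = 5  # hypothetical number of remembered passwords

    def complexity_ok(self, password: str) -> bool:
        """Length plus upper- and lowercase letters, digits, and symbols."""
        return (
            len(password) >= self.min_length
            and any(c.islower() for c in password)
            and any(c.isupper() for c in password)
            and any(c.isdigit() for c in password)
            and any(c in string.punctuation for c in password)
        )

    def expired(self, last_changed: date, today: date) -> bool:
        """Password life: has the maximum age been exceeded?"""
        return today - last_changed > timedelta(days=self.max_age_days)

    def reuse_allowed(self, candidate: str, history: list) -> bool:
        """Password history: reject any of the most recent entries."""
        return candidate not in history[-self.history_size:]
```

A policy object built with the defaults rejects "password" for lacking uppercase letters, digits, and special characters, and treats a password last changed 105 days ago as expired.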

As part of password management, an organization should establish a procedure for changing passwords. Most organizations implement a service that allows a user to automatically reset his password before it expires. In addition, most organizations should consider establishing a password reset policy in cases where users have forgotten their passwords or the passwords have been compromised. A self-service password reset approach allows users to reset their own passwords, without the assistance of help desk employees. An assisted password reset approach requires that users contact help desk personnel for help changing passwords.

Password reset policies can also be affected by other organizational policies, such as account lockout policies. Account lockout policies are security policies that organizations implement to protect against attacks carried out against passwords. Organizations often configure account lockout policies so that user accounts are locked after a certain number of unsuccessful login attempts. If an account is locked out, the system administrator may need to unlock or reenable the user account. Security professionals should also consider encouraging organizations to require users to reset their passwords if their accounts have been locked. For most organizations, all the password policies, including account lockout policies, are implemented at the enterprise level on the servers that manage the network.

Note

An older term that you may need to be familiar with is clipping level. A clipping level is a configured baseline threshold above which violations will be recorded. For example, an organization may want to start recording any unsuccessful login attempts after the first one, with account lockout occurring after five failed attempts.
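The interaction between a clipping level and an account lockout threshold can be sketched as follows. This Python example mirrors the numbers in the note (recording begins after the first failure, and lockout occurs at five failures). The class and attribute names are hypothetical, and the sketch tracks a single account only.

```python
from dataclasses import dataclass, field


@dataclass
class LoginMonitor:
    clipping_level: int = 1      # failures above this count are recorded
    lockout_threshold: int = 5   # failures at which the account is locked
    failures: int = 0
    locked: bool = False
    audit_log: list = field(default_factory=list)

    def record_failure(self, user: str) -> None:
        if self.locked:
            return  # an administrator must unlock the account first
        self.failures += 1
        if self.failures > self.clipping_level:
            self.audit_log.append(f"failed login #{self.failures} for {user}")
        if self.failures >= self.lockout_threshold:
            self.locked = True

    def record_success(self) -> None:
        if not self.locked:
            self.failures = 0  # a successful login resets the counter
```

After five consecutive failures, the account is locked and the audit log holds four entries, because the first failure falls at the clipping level and is not recorded.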

Depending on which servers are used to manage the enterprise, security professionals must be aware of the security issues that affect user accounts and password management. Two popular server operating systems are Linux and Windows.

For UNIX/Linux, password information is stored in the /etc/passwd or /etc/shadow file. Because the /etc/passwd file is a text file that any user can read, you should ensure that any Linux servers use the /etc/shadow file, which stores the passwords as hashes and can be read only by privileged accounts. The root user in Linux is a default account that is given administrative-level access to the entire server. If the root account is compromised, all passwords should be changed. Access to the root account should be limited only to system administrators, and root login should be allowed only via a system console.

For Windows Server 2003 and earlier and all client versions of Windows that are in workgroups, the Security Accounts Manager (SAM) stores user passwords in a hashed format. It stores a password as a LAN Manager (LM) hash and/or an NT LAN Manager (NTLM) hash. However, known security issues exist with a SAM, especially with regard to the LM hashes, including the ability to dump the password hashes directly from the Registry. You should take all Microsoft-recommended security measures to protect this file. If you manage a Windows network, you should change the name of the default administrator account or disable it. If this account is retained, make sure that you assign a password to it. The default administrator account may have full access to a Windows server.

Most versions of Windows can be configured to disable the creation and storage of valid LM hashes when the user changes her password. In Windows Vista and later, LM hash storage is disabled by default; earlier versions of Windows stored LM hashes unless they were configured otherwise.

Physiological Characteristics

Physiological systems use a biometric scanning device to measure certain information about a physiological characteristic. You should understand the following physiological biometric systems:

Fingerprint scan: This type of scan usually examines the ridges of a finger for matching. A special type of fingerprint scan called minutiae matching is more microscopic; it records the bifurcations and other detailed characteristics. Minutiae matching requires more authentication server space and more processing time than ridge fingerprint scans. Users of fingerprint scanning systems may be concerned about how the fingerprint information will be used and shared.

Finger scan: This type of scan extracts only certain features from a fingerprint. Because a limited amount of the fingerprint information is needed, finger scans require less server space or processing time than any type of fingerprint scan.

Hand geometry scan: This type of scan usually obtains size, shape, or other layout attributes of a user’s hand but can also measure bone length or finger length. Two categories of hand geometry systems are mechanical and image-edge detection systems. Regardless of which category is used, hand geometry scanners require less server space and processing time than fingerprint or finger scans.

Hand topography scan: This type of scan records the peaks and valleys of the hand and its shape. This system is usually implemented in conjunction with hand geometry scans because hand topography scans are not unique enough if used alone.

Palm or hand scan: This type of scan combines fingerprint and hand geometry technologies. It records fingerprint information from every finger as well as hand geometry information.

Facial scan: This type of scan records facial characteristics, including bone structure, eye width, and forehead size. This biometric method uses eigenfeatures or eigenfaces.

Retina scan: This type of scan examines the retina’s blood vessel pattern. A retina scan is considered more intrusive than an iris scan.

Iris scan: This type of scan examines the colored portion of the eye, including all rifts, coronas, and furrows. Iris scans have greater accuracy than the other biometric scans.

Vascular scan: This type of scan examines the pattern of veins in the user’s hand or face. While this method can be a good choice because it is not very intrusive, physical injuries to the hand or face, depending on which the system uses, could cause false rejections.

Behavioral Characteristics

Behavioral systems use a biometric scanning device to measure a person’s actions. You should understand the following behavioral biometric systems:

Signature dynamics: This type of system measures stroke speed, pen pressure, and acceleration and deceleration while the user writes her signature. Dynamic signature verification (DSV) analyzes signature features and specific features of the signing process.

Keystroke dynamics: This type of system measures the typing pattern that a user uses when inputting a password or other predetermined phrase. In this case, if the correct password or phrase is entered but the entry pattern on the keyboard doesn’t match the stored value, the user will be denied access. Flight time, a term associated with keystroke dynamics, is the amount of time it takes to switch between keys. Dwell time is the amount of time you hold down a key.

Voice pattern or print: This type of system measures the sound pattern of a user saying certain words. When the user attempts to authenticate, he will be asked to repeat those words in different orders. If the pattern matches, authentication is allowed.
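The two keystroke dynamics measurements defined above, dwell time and flight time, can be computed from key press and release timestamps. The Python sketch below is illustrative only. The event format is hypothetical, and flight time is measured here from the release of one key to the press of the next, which is one common convention and an assumption of this example.

```python
def dwell_times(events):
    """Time each key is held down: release time minus press time."""
    return [release - press for _key, press, release in events]


def flight_times(events):
    """Time from releasing one key to pressing the next."""
    return [nxt[1] - cur[2] for cur, nxt in zip(events, events[1:])]


# (key, press_ms, release_ms) with made-up timings in milliseconds.
sample = [("p", 0, 90), ("a", 150, 230), ("s", 300, 370)]

dwell = dwell_times(sample)
flight = flight_times(sample)
```

A keystroke dynamics system would compare vectors such as dwell and flight against the values stored at enrollment and deny access when they differ by more than an allowed tolerance, even if the typed password is correct.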

Biometric Considerations

When considering biometric technologies, security professionals should understand the following terms:

Enrollment time: This is the time required to obtain the sample that is used by the biometric system. The enrollment process requires actions that must be repeated several times.

Feature extraction: This is the approach to obtaining biometric information from a collected sample of a user’s physiological or behavioral characteristics.

Accuracy: This is the most important characteristic of biometric systems. It is how correct the overall readings will be.

Throughput rate: This is the rate at which the biometric system will be able to scan characteristics and complete the analysis to permit or deny access. The acceptable rate is 6 to 10 subjects per minute. A single user should be able to complete the process in 5 to 10 seconds.

Acceptability: This describes the likelihood that users will accept and follow the system.

False rejection rate (FRR): This is a measurement of the percentage of valid users that will be falsely rejected by the system. This is called a Type I error.

False acceptance rate (FAR): This is a measurement of the percentage of invalid users that will be falsely accepted by the system. This is called a Type II error. Type II errors are more dangerous than Type I errors.

Crossover error rate (CER): This is the point at which FRR equals FAR. Expressed as a percentage, this is the most important metric.
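The relationship among FRR, FAR, and CER can be illustrated with a small calculation. The Python sketch below assumes that a biometric system produces a match score for each attempt and accepts the attempt when the score meets a threshold. The scores are made up for illustration, and the function names are hypothetical.

```python
def far(impostor_scores, threshold):
    """Fraction of invalid users accepted (Type II errors)."""
    return sum(s >= threshold for s in impostor_scores) / len(impostor_scores)


def frr(genuine_scores, threshold):
    """Fraction of valid users rejected (Type I errors)."""
    return sum(s < threshold for s in genuine_scores) / len(genuine_scores)


def crossover(genuine_scores, impostor_scores, thresholds):
    """Return (threshold, FAR, FRR) where FAR and FRR are closest."""
    best = min(
        thresholds,
        key=lambda t: abs(far(impostor_scores, t) - frr(genuine_scores, t)),
    )
    return best, far(impostor_scores, best), frr(genuine_scores, best)


# Made-up match scores in [0, 1]; higher means a closer match.
genuine = [0.91, 0.85, 0.78, 0.66, 0.95, 0.88, 0.59, 0.73, 0.82, 0.97]
impostor = [0.12, 0.35, 0.48, 0.61, 0.22, 0.70, 0.05, 0.41, 0.30, 0.18]
thresholds = [i / 100 for i in range(101)]

threshold, far_at_cer, frr_at_cer = crossover(genuine, impostor, thresholds)
```

For this sample data, FAR and FRR meet at 10 percent, which is the CER. Raising the threshold above the crossover point lowers FAR at the cost of a higher FRR, and lowering it has the opposite effect.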

Often when analyzing biometric systems, security professionals refer to a Zephyr chart that illustrates the comparative strengths and weaknesses of biometric systems. But you should also consider how effective each biometric system is and its level of user acceptance.

The following is a list of the most popular biometric methods, ranked by effectiveness, starting with the most effective:

Iris scan

Retina scan

Fingerprint

Hand print

Hand geometry

Voice pattern

Keystroke pattern

Signature dynamics

The following is a list of the most popular biometric methods, ranked by user acceptance, starting with the methods that are most popular:

Voice pattern

Keystroke pattern

Signature dynamics

Hand geometry

Hand print

Fingerprint

Iris scan

Retina scan

When considering FAR, FRR, and CER, remember that smaller values are better. FAR errors are more dangerous than FRR errors. Security professionals can use the CER for comparative analysis when helping their organization decide which system to implement. For example, voice print systems usually have higher CERs than iris scans, hand geometry, or fingerprints.

Dual-Factor and Multi-Factor Authentication

Factors from different categories, such as knowledge, characteristic, and behavioral factors, can be combined to increase the security of an authentication system. When this is done, it is called dual-factor or multi-factor authentication. Specifically, dual-factor authentication is a combination of two authentication factors (such as a knowledge factor and a behavioral factor), while multi-factor authentication is a combination of three or more factors. The following are examples:

Dual-factor: A password (knowledge factor) and an iris scan (characteristic factor)

Multi-factor: A PIN (knowledge factor), a retina scan (characteristic factor), and signature dynamics (behavioral factor)

Certificate-Based Authentication

The security of an authentication system can be raised significantly if the system is certificate based rather than password or PIN based. A digital certificate provides an entity—usually a user—with the credentials to prove its identity and associates that identity with a public key. At minimum, a digital certificate must provide the serial number, the issuer, the subject (owner), and the public key. Digital certificates are covered more completely in Chapter 15, “Cryptographic Techniques.”

Using certificate-based authentication requires the deployment of a public key infrastructure (PKI). PKIs include systems, software, and communication protocols that distribute, manage, and control public key cryptography. A PKI publishes digital certificates. Because a PKI establishes trust within an environment, a PKI can certify that a public key is tied to an entity and verify that a public key is valid. Public keys are published through digital certificates. PKI is discussed more completely in Chapter 15.

In some situations, it may be necessary to trust another organization’s certificates or vice versa. Cross-certification establishes trust relationships between certification authorities (CAs) so that the participating CAs can rely on the other participants’ digital certificates and public keys. It enables users to validate each other’s certificates when they are actually certified under different certification hierarchies. A CA for one organization can validate digital certificates from another organization’s CA when a cross-certification trust relationship exists.

Single Sign-on

In a single sign-on (SSO) environment, a user enters his login credentials once and can access all resources in the network. The Open Group Security Forum has defined many objectives for single sign-on systems. Some of the objectives for a user sign-on interface and user account management include the following:

The interface should be independent of the type of authentication information handled.

The creation, deletion, and modification of user accounts should be supported.

Support should be provided for a user to establish a default user profile.

The interface should be independent of any platform or operating system.

Advantages of an SSO system include:

Users are able to use stronger passwords.

User administration and password administration are simplified.

Resource access is much faster.

User login is more efficient.

Users need to remember the login credentials for only a single system.

Disadvantages of an SSO system include:

Once a user obtains system access through the initial SSO login, the user is able to access all resources to which he is granted access.

If a user’s credentials are compromised, attackers will have access to all resources to which the user has access.

While the discussion on SSO so far has mainly focused on how it is used for networks and domains, SSO can also be implemented in web-based systems. Enterprise access management (EAM) provides access control management for web-based enterprise systems. Its functions include accommodation of a variety of authentication methods and role-based access control. In this instance, the web access control infrastructure performs authentication and passes attributes in an HTTP header to multiple applications.

Regardless of the exact implementation, SSO involves a secondary authentication domain that relies on and trusts a primary domain to do the following:

Protect the authentication credentials used to verify the end user’s identity to the secondary domain for authorized use.

Correctly assert the identity and authentication credentials of the end user.

802.1x

802.1x is a standard that defines a framework for centralized port-based authentication. 802.1x is covered in Chapter 5, “Network and Security Components, Concepts, and Architectures.”

Context-Aware Authentication

Context-aware or context-dependent access control is based on subject or object attributes or environmental characteristics. These characteristics can include location or time of day. For example, administrators might implement a security policy that allows a user to log in only from a particular workstation during certain hours of the day.
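A policy that ties login to a particular workstation and a time window can be sketched in Python as follows. The user name, workstation name, hours, and function name are all hypothetical values chosen for illustration.

```python
from datetime import time

# Hypothetical policy: user -> (allowed workstation, start, end).
POLICY = {
    "jsmith": ("WS-042", time(8, 0), time(18, 0)),
}


def login_allowed(user: str, workstation: str, at: time) -> bool:
    """Allow login only from the assigned workstation within the window."""
    rule = POLICY.get(user)
    if rule is None:
        return False  # no rule for this user, so default to no access
    allowed_ws, start, end = rule
    return workstation == allowed_ws and start <= at <= end
```

In this sketch, the same valid user is refused when the request arrives from a different workstation or outside the permitted hours, because the decision depends on the context of the request as well as on the identity.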

Push-Based Authentication

Push authentication involves sending a notification (via a secure network) to a user’s device, usually a smartphone, when accessing a protected resource.

With push-based authentication, possessing the device itself becomes a prime method of authentication. To be successful, the device must be in the possession of a user who can answer a text message correctly for access.

Authorization

Once a user is authenticated, he or she must be granted rights and permissions to resources. The process is referred to as authorization. Identification and authentication are necessary steps in providing authorization. The next sections cover important components in authorization: access control models, access control policies, separation of duties, least privilege/need to know, and default to no access. In addition, several standards for performing the authorization function have emerged: OAuth, XACML, and SPML. The following sections discuss these standards.

Access Control Models

An access control model is a formal description of an organization’s security policy. Access control models are implemented to simplify access control administration by grouping objects and subjects. Subjects are entities that request access to an object or data within an object. Users, programs, and processes are subjects. Objects are entities that contain information or functionality. Computers, databases, files, programs, directories, and fields are objects. A secure access control model must ensure that information from a secure object cannot flow to an object with a lower classification.

The access control models and concepts that you need to understand include the following: discretionary access control, mandatory access control, role-based access control, rule-based access control, content-dependent access control, access control matrix, and access control list.

Discretionary Access Control

In discretionary access control (DAC), the owner of an object specifies which subjects can access the resource. DAC is typically used in local, dynamic situations. The access is based on the subject’s identity, profile, or role. DAC is considered to be a need-to-know control.

DAC can be an administrative burden because the data custodian or owner grants access privileges to the users. Under DAC, a subject’s rights must be terminated when the subject leaves the organization. Identity-based access control is a subset of DAC and is based on user identity or group membership.

Nondiscretionary access control is the opposite of DAC. In nondiscretionary access control, access controls are configured by a security administrator or another authority. The central authority decides which subjects have access to objects, based on the organization’s policy. In DAC, the system compares the subject’s identity with the object’s access control list.

Mandatory Access Control

In mandatory access control (MAC), subject authorization is based on security labels. MAC is often described as prohibitive because it is based on a security label system. Under MAC, all that is not expressly permitted is forbidden. Only administrators can change the category of a resource.

While MAC is more secure than DAC, DAC is more flexible and scalable than MAC. Because of the importance of security in MAC, labeling is required. Data classification reflects the data’s sensitivity. In a MAC system, a clearance is a privilege. Each subject and object is given a security or sensitivity label. The security labels are hierarchical. For commercial organizations, the levels of security labels could be confidential, proprietary, corporate, sensitive, and public. For government or military institutions, the levels of security labels could be top secret, secret, confidential, and unclassified.

In MAC, the system makes access decisions when it compares a subject’s clearance level with an object’s security label.
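The comparison of clearance and label can be sketched as follows. This is a simplified illustration that uses the government levels listed above and models only read access; real MAC systems also handle categories or compartments, which are omitted here.

```python
# Government-style hierarchy from the text, ordered lowest to highest.
LEVELS = ["unclassified", "confidential", "secret", "top secret"]

def mac_can_read(subject_clearance: str, object_label: str) -> bool:
    """Permit a read only when the clearance is at or above the object's label."""
    return LEVELS.index(subject_clearance) >= LEVELS.index(object_label)
```

Because the decision depends only on the two labels, neither the subject nor the object owner can change the outcome; only an administrator who relabels the resource can.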

Role-Based Access Control

In role-based access control (RBAC), each subject is assigned to one or more roles. Roles are hierarchical, and access control is defined based on the roles. RBAC can be used to easily enforce minimum privileges for subjects. An example of RBAC is implementing one access control policy for bank tellers and another policy for loan officers.
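The bank example might look like this in code. It is a sketch only: the user names and permission names are hypothetical, chosen to show that permissions attach to roles rather than to individual users.

```python
# Hypothetical roles and permissions for the bank example.
ROLE_PERMISSIONS = {
    "teller": {"view_account", "post_deposit"},
    "loan_officer": {"view_account", "approve_loan"},
}

# Users are assigned roles, never individual permissions.
USER_ROLES = {"dana": {"teller"}, "lee": {"loan_officer"}}

def rbac_allows(user: str, permission: str) -> bool:
    """Grant a permission if any of the user's roles carries it."""
    roles = USER_ROLES.get(user, set())
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)
```

When an employee changes jobs, only the entry in `USER_ROLES` changes, which is why this model suits organizations with high turnover.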

RBAC is not as secure as the previously described access control models because security is based on roles. RBAC usually has a much lower implementation cost than the other models and is popular in commercial applications. It is an excellent choice for organizations with high employee turnover. RBAC can effectively replace DAC and MAC because it allows you to specify and enforce enterprise security policies in a way that maps to the organization’s structure.

RBAC is managed in four ways. In non-RBAC, no roles are used. In limited RBAC, users are mapped to single application roles, but some applications do not use RBAC and require identity-based access. In hybrid RBAC, each user is mapped to a single role, which gives users access to multiple systems, but each user may be mapped to other roles that have access to single systems. In full RBAC, users are mapped to a single role, as defined by the organization’s security policy, and access to the systems is managed through the organizational roles.

Rule-Based Access Control

Rule-based access control facilitates frequent changes to data permissions. Using this method, a security policy is based on global rules imposed for all users. Profiles are used to control access. Many routers and firewalls use this type of access control and define which packet types are allowed on a network. Rules can be written to allow or deny access based on packet type, port number used, MAC address, and other parameters.
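A packet filter of this kind can be sketched as an ordered rule list in which the first matching rule wins. The rule fields and the two sample rules are assumptions for illustration; they do not reflect any particular vendor's syntax.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Rule:
    action: str                      # "allow" or "deny"
    protocol: Optional[str] = None   # None matches any protocol
    port: Optional[int] = None       # None matches any port

# Illustrative global rules applied to every user's traffic.
RULES = [
    Rule("deny", protocol="tcp", port=23),    # block Telnet
    Rule("allow", protocol="tcp", port=443),  # permit HTTPS
]

def evaluate(protocol: str, port: int, rules=RULES) -> str:
    """Return the action of the first matching rule, denying if none match."""
    for rule in rules:
        if rule.protocol not in (None, protocol):
            continue
        if rule.port not in (None, port):
            continue
        return rule.action
    return "deny"
```

The final `return "deny"` means that traffic not covered by any rule is rejected rather than passed.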

Content-Dependent Access Control

Content-dependent access control makes access decisions based on an object’s data. With this type of access control, the data that a user sees may change based on the policy and access rules that are applied.

Some security experts consider a constrained user interface another method of access control. An example of a constrained user interface is a shell, which is a software interface to an operating system that implements access control by limiting the system commands that are available. Another example is database views that are filtered based on user or system criteria. Constrained user interfaces can be content or context dependent, depending on how the administrator constrains the interface.

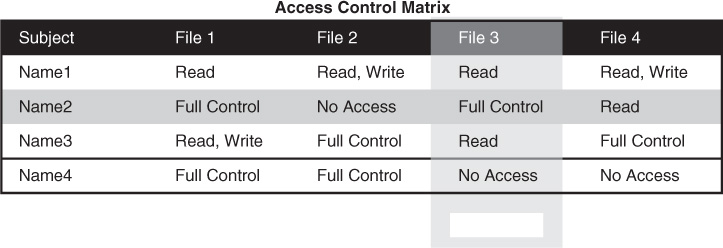

Access Control Matrix

An access control matrix is a table that consists of a list of subjects, a list of objects, and a list of the actions that a subject can take on each object. The rows in the matrix are the subjects, and the columns in the matrix are the objects. Common implementations of an access control matrix include a capability table and an access control list (ACL).

As shown in Figure 14-1, a capability table lists the access rights that a particular subject has to objects. A capability table is about the subject. A capability corresponds to a subject’s row from an access control matrix.

ACLs

An ACL corresponds to an object’s column from an access control matrix. An ACL lists all the access rights that subjects have to a particular object. An ACL is about the object. For example, in Figure 14-1, each file is an object, so the full ACL for File 3 comprises the column containing the permissions held by each user (shaded in the diagram).
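The row and column views can be sketched with a small nested dictionary. The subjects, objects, and rights below are hypothetical and are not taken from Figure 14-1.

```python
# Hypothetical matrix: rows are subjects, columns are objects.
MATRIX = {
    "user1": {"file1": {"read"}, "file2": {"read", "write"}, "file3": set()},
    "user2": {"file1": set(), "file2": {"read"}, "file3": {"read", "write"}},
}

def capability_list(subject: str) -> dict:
    """A subject's row: the rights that subject holds on every object."""
    return dict(MATRIX[subject])

def acl(obj: str) -> dict:
    """An object's column: the rights every subject holds on that object."""
    return {subject: row[obj] for subject, row in MATRIX.items()}
```

Both functions read the same data; the capability table answers "what can this subject do?" and the ACL answers "who can touch this object?"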

Access Control Policies

An access control policy defines the method for identifying and authenticating users and the level of access granted to users. Organizations should put access control policies in place to ensure that access control decisions for users are based on formal guidelines. If an access control policy is not adopted, an organization will have trouble assigning, managing, and administering access management.

Default to No Access

During the authorization process, you should configure an organization’s access control mechanisms so that the default level of security is to default to no access. This means that if nothing has been specifically allowed for a user or group, the user or group will not be able to access the resource. The best security approach is to start with no access and add rights based on a user’s need.

OAuth

Open Authorization (OAuth) is a standard for authorization that allows users to share private resources on one site with another site without handing over their credentials. It is sometimes described as the valet key for the Web. Just as a valet key gives the valet the ability to park your car but not to open the trunk, OAuth uses tokens to allow restricted access to a user’s data when a client application requires access. These tokens are issued by an authorization server. Although the exact flow of steps depends on the specific implementation, Figure 14-2 shows the general process steps.

OAuth is a good choice for authorization whenever one web application uses another web application’s API on behalf of the user. A good example would be a geolocation application integrated with Facebook. OAuth gives the geolocation application a secure way to get an access token for Facebook without revealing the Facebook password to the geolocation application.
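The valet-key idea can be sketched with a toy authorization server that hands out scoped tokens. This is not an implementation of the OAuth protocol: redirects, client registration, and token expiry are all omitted, and the class, method, and scope names are assumptions for illustration.

```python
import secrets

class AuthorizationServer:
    """Toy token issuer: maps opaque tokens to a user and a set of scopes."""

    def __init__(self):
        self._tokens = {}  # token -> (user, scopes)

    def issue_token(self, user: str, scopes) -> str:
        """Issue a random token limited to the scopes the user approved."""
        token = secrets.token_urlsafe(16)
        self._tokens[token] = (user, frozenset(scopes))
        return token

    def check(self, token: str, required_scope: str) -> bool:
        """The resource side asks whether this token covers the requested scope."""
        entry = self._tokens.get(token)
        return entry is not None and required_scope in entry[1]
```

The client application holds only the token, never the password, and the token works only for the scopes that were granted.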

XACML

Extensible Access Control Markup Language (XACML) is a standard for an access control policy language using Extensible Markup Language (XML). Its goal is to create an attribute-based access control system that decouples the access decision from the application or the local machine. It provides for fine-grained control of activities based on criteria including:

Attributes of the user requesting access (for example, all division managers in London)

The protocol over which the request is made (for example, HTTPS)

The authentication mechanism (for example, requester must be authenticated with a certificate)

XACML uses several distributed components, including:

Policy enforcement point (PEP): This entity is protecting the resource that the subject (a user or an application) is attempting to access. When it receives a request from a subject, it creates an XACML request based on the attributes of the subject, the requested action, the resource, and other information.

Policy decision point (PDP): This entity retrieves all applicable policies in XACML and compares the request with the policies. It transmits an answer (access or no access) back to the PEP.

XACML is valuable because it is able to function across application types. The process flow used by XACML is described in Figure 14-3.

XACML is a good solution when disparate applications that use their own authorization logic are in use in the enterprise. By leveraging XACML, developers can remove authorization logic from an application and centrally manage access using policies that can be managed or modified based on business need without making any additional changes to the applications themselves.
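The division of labor between the PEP and the PDP can be sketched as two functions. Real XACML expresses policies and requests in XML and supports richer decisions than the two shown here; the dictionary-based policy, the attribute names, and the function names are simplifying assumptions.

```python
# Hypothetical policy store: a request must match every attribute of a policy.
POLICIES = [
    {"role": "division_manager", "location": "London", "protocol": "HTTPS",
     "action": "read", "resource": "budget_report"},
]

def pdp_decide(request: dict) -> str:
    """Policy decision point: compare the request with each policy."""
    for policy in POLICIES:
        if all(request.get(key) == value for key, value in policy.items()):
            return "Permit"
    return "Deny"

def pep_handle(subject: dict, action: str, resource: str, protocol: str) -> bool:
    """Policy enforcement point: build the request, ask the PDP, enforce the answer."""
    request = {**subject, "action": action, "resource": resource, "protocol": protocol}
    return pdp_decide(request) == "Permit"
```

Because the application calls only `pep_handle`, a policy change in `POLICIES` takes effect without touching application code, which is the decoupling the text describes.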

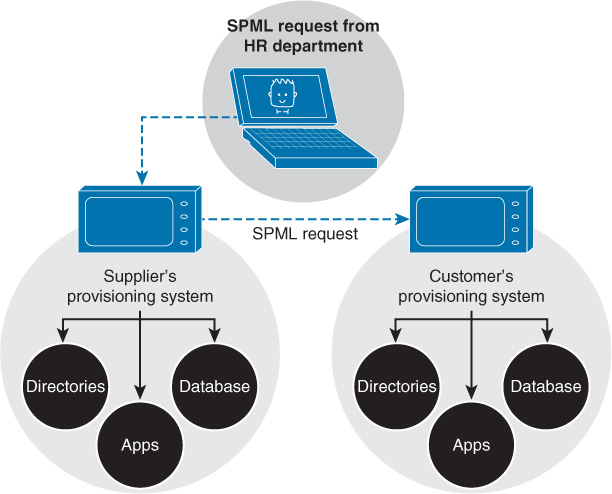

SPML

Another open standard for exchanging authorization information between cooperating organizations is Service Provisioning Markup Language (SPML). It is an XML-based framework developed by the Organization for the Advancement of Structured Information Standards (OASIS).

The SPML architecture has three components:

Request authority (RA): The entity that makes the provisioning request

Provisioning service provider (PSP): The entity that responds to the RA requests

Provisioning service target (PST): The entity that performs the provisioning

When a trust relationship has been established between two organizations with web-based services, one organization acts as the RA, and the other acts as the PSP. The trust relationship uses Security Assertion Markup Language (SAML) in a Simple Object Access Protocol (SOAP) header. The SOAP body transports the SPML requests/responses.

Figure 14-4 shows an example of how these SPML messages are used. In the diagram, a company has an agreement with a supplier to allow the supplier to access its provisioning system. When the supplier HR adds a user, an SPML request is generated to the supplier’s provisioning system so the new user can use the system. Then the supplier’s provisioning system generates another SPML request to create the account in the customer provisioning system.

Attestation

Attestation allows changes to a user’s computer to be detected by authorized parties. Alternatively, it allows a machine to be assessed for the correct version of software or for the presence of a particular piece of software on a computer. This function can play a role in defining what a user is allowed to do in a particular situation.

Let’s say, for example, that you have a server that contains the credit card information of customers. The policy being implemented calls for authorized users on authorized devices to access the server only if they are also running authorized software. In this case, these three goals need to be achieved. The organization will achieve these goals by:

Identifying authorized users by authentication and authorization

Identifying authorized machines by authentication and authorization

Identifying running authorized software by attestation

Attestation provides evidence about a target to an appraiser so the target’s compliance with some policy can be determined before access is allowed.

Attestation also has a role in the operation of a Trusted Platform Module (TPM) chip. TPM chips have an endorsement key (EK) pair that is embedded during the manufacturing process. This key pair is unique to the chip and is signed by a trusted CA. A TPM chip also contains an attestation identity key (AIK) pair. This key pair is generated and used to allow an application to perform remote attestation as to the integrity of the application. It allows a third party to verify that the software has not changed.
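The core appraisal step, checking that software has not changed, can be sketched as a hash comparison. This is a deliberately reduced illustration: in real remote attestation the measurement is signed with a TPM-held key so the appraiser can trust its origin, and that signing step is omitted here. The function names are assumptions.

```python
import hashlib

def measure(software_image: bytes) -> str:
    """Produce a measurement (SHA-256 digest) of the software being attested."""
    return hashlib.sha256(software_image).hexdigest()

def appraise(reported_measurement: str, approved_measurements: set) -> bool:
    """The appraiser accepts only measurements on its approved list."""
    return reported_measurement in approved_measurements
```

Any change to the software image, even a single byte, produces a different digest and causes the appraisal to fail.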

Identity Proofing

Identity proofing is an additional check performed during the identification part of authentication. An example of identity proofing is the presentation of secret questions to which only the individual undergoing authentication would know the answer. While the subject would still need to provide credentials such as a password, this additional step helps to mitigate instances in which a password has been compromised.

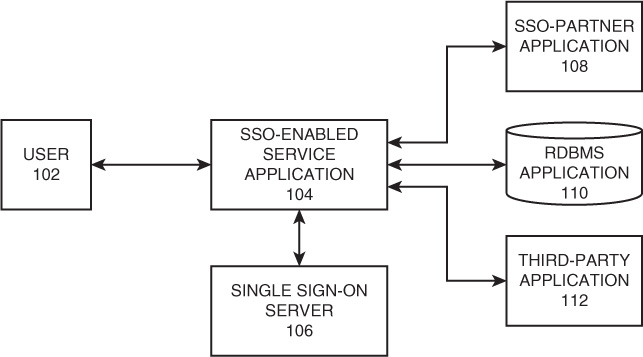

Identity Propagation

Identity propagation is the passing or sharing of a user’s or device’s authenticated identity information from one part of a multitier system to another. In most cases, each of the components in the system performs its own authentication, so identity propagation allows this to occur seamlessly. There are several approaches to performing identity propagation. Some systems, such as Microsoft’s Active Directory, use a proprietary method and tickets to perform identity propagation.

In some cases, not all of the components in a system may be SSO enabled (meaning a component can accept the identity token in its original format from the SSO server). In those cases, a proprietary method must be altered to communicate in a manner the third-party application understands. In the example in Figure 14-5, a user is requesting access to a relational database management system (RDBMS) application. The RDBMS server redirects the user to the SSO authentication server. The SSO server provides the user with an authentication token, which is then used to authenticate to the RDBMS server. The RDBMS server checks the token containing the identity information and grants access.

Now suppose that the application service receives a request to access the external third-party web application that is not SSO enabled. The application service redirects the user to the SSO server. Now when the SSO server propagates the authenticated identity information to the external application, it will not use the SSO token but will instead use an XML token.

Another example of a protocol that performs identity propagation is Credential Security Support Provider (CredSSP). It is often integrated into the Microsoft Remote Desktop terminal services environment to provide network-level authentication. Among the possible authentication or encryption types supported when implemented for this purpose are Kerberos, TLS, and NTLM.

Federation

A federated identity is a portable identity that can be used across businesses and domains. In federated identity management, each organization that joins the federation agrees to enforce a common set of policies and standards. These policies and standards define how to provision and manage user identification, authentication, and authorization. Providing disparate authentication mechanisms with federated IDs has the lowest up-front development cost compared to other methods, such as a PKI or attestation.

Federated identity management uses two basic models for linking organizations within the federation:

Cross-certification model: In this model, each organization certifies that every other organization is trusted. This trust is established when the organizations review each other’s standards. Each organization must verify and certify through due diligence that the other organizations meet or exceed standards. One disadvantage of cross-certification is that the number of trust relationships that must be managed can become problematic.

Trusted third-party (or bridge) model: In this model, each organization subscribes to the standards of a third party. The third party manages verification, certification, and due diligence for all organizations. This is usually the best model if an organization needs to establish federated identity management relationships with a large number of organizations.
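The scaling difference between the two models is simple arithmetic, sketched below. The sketch assumes each pair of organizations in the cross-certification model counts as one mutual trust relationship, and each organization in the bridge model has exactly one relationship with the third party.

```python
def cross_certification_links(n: int) -> int:
    """Pairwise trust relationships among n organizations: n(n-1)/2."""
    return n * (n - 1) // 2

def bridge_links(n: int) -> int:
    """With a trusted third party, each organization manages one relationship."""
    return n
```

For 10 organizations, cross-certification requires 45 relationships, while the bridge model requires 10; the gap widens quickly as the federation grows.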

SAML

Security Assertion Markup Language (SAML) is a security attestation model built on XML and SOAP-based services that allows for the exchange of authentication and authorization data between systems and supports federated identity management. The major issue it attempts to address is SSO using a web browser. When authenticating over HTTP using SAML, an assertion ticket is issued to the authenticating user.

Remember that SSO is the ability to authenticate once to access multiple sets of data. SSO at the Internet level is usually accomplished with cookies, but extending the concept beyond the Internet has resulted in many proprietary approaches that are not interoperable. The goal of SAML is to create a standard for this process.

A consortium called the Liberty Alliance proposed an extension to the SAML standard called the Liberty Identity Federation Framework (ID-FF). This is proposed to be a standardized cross-domain SSO framework and identifies what is called a circle of trust. Within the circle, each participating domain is trusted to document the following about each user:

The process used to identify a user

The type of authentication system used

Any policies associated with the resulting authentication credentials

Each member entity is free to examine this information and determine whether to trust it. Liberty contributed ID-FF to OASIS (a nonprofit, international consortium that creates interoperable industry specifications based on public standards such as XML and SGML). In March 2005, SAML v2.0 was announced as an OASIS standard. SAML v2.0 represents the convergence of Liberty ID-FF and other proprietary extensions.

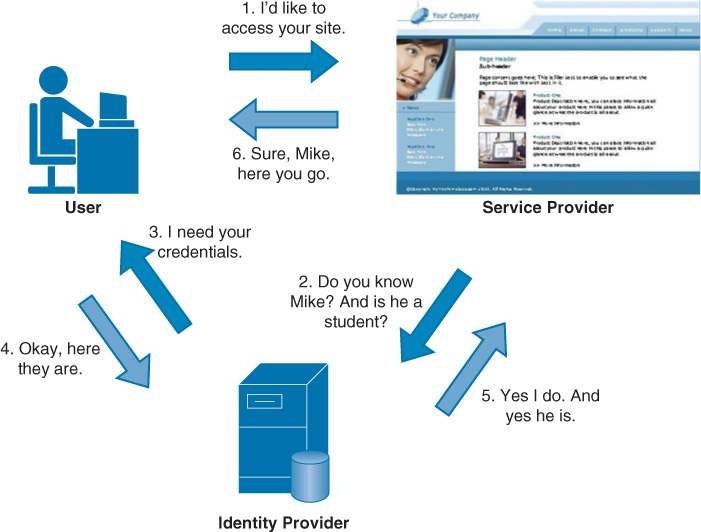

In an unauthenticated SAMLv2 transaction, the browser asks the service provider (SP) for a resource. The SP provides the browser with an XHTML form. The browser asks the identity provider (IdP) to validate the user and then provides the XHTML back to the SP for access. The <nameID> element in SAML can be provided as the X.509 subject name or as the Kerberos principal name.

To prevent a third party from identifying a specific user as having previously accessed a service provider through an SSO operation, SAML uses transient identifiers (which are valid only for a single login session and will be different each time the user authenticates again but will stay the same as long as the user is authenticated).
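The behavior of a transient identifier can be sketched as a random value bound to a login session. This illustrates only the stable-within-a-session, different-across-sessions property; the class name and the use of a session ID string as the lookup key are assumptions, not part of the SAML specification.

```python
import secrets

class TransientIdIssuer:
    """Issue a random identifier per login session instead of a stable user ID."""

    def __init__(self):
        self._by_session = {}  # session ID -> transient identifier

    def name_id(self, session_id: str) -> str:
        """Return the session's identifier, creating one on first use."""
        if session_id not in self._by_session:
            self._by_session[session_id] = secrets.token_hex(16)
        return self._by_session[session_id]
```

Because the value reveals nothing about the user and changes with every new session, a service provider cannot use it to link separate visits by the same person.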

SAML is a good solution in the following scenarios that an enterprise might face:

When you need to provide SSO (and at least one actor or participant is an enterprise)

When you need to provide access to a partner or customer application to your portal

When you can provide a centralized identity source

OpenID

OpenID is an open standard and decentralized protocol by the nonprofit OpenID Foundation that allows users to be authenticated by certain cooperating sites. The cooperating sites are called relying parties (RPs). OpenID allows users to log in to multiple sites without having to register their information repeatedly. A user selects an OpenID identity provider and uses his or her account to log in to any website that accepts OpenID authentication.

While OpenID solves the same issue as SAML, an enterprise may find these advantages in using OpenID:

It’s less complex than SAML.

It’s been widely adopted by companies such as Google.

On the other hand, you should be aware of the following shortcomings of OpenID compared to SAML:

With OpenID, auto-discovery of the identity provider must be configured for each user.

SAML has better performance.

SAML can initiate SSO from either the service provider or the identity provider, while OpenID can only be initiated from the service provider.

In February 2014, the third generation of OpenID, called OpenID Connect, was released. It is an authentication layer protocol that resides atop the OAuth 2.0 framework. (OAuth is covered earlier in this chapter.) It is designed to support native and mobile applications. It also defines methods of signing and encryption.

Shibboleth

Shibboleth is an open source project that provides single sign-on capabilities and allows sites to make informed authorization decisions for individual access of protected online resources in a privacy-preserving manner. Shibboleth allows the use of common credentials among sites that are a part of the federation. It is based on SAML. This system has two components:

Identity providers (IdPs), which supply the user information

Service providers (SPs), which consume this information before providing a service

Here is an example of SAML in action:

A user logs in to Domain A, using a PKI certificate that is stored on a smart card protected by an eight-digit PIN.

The credential is cached by the authenticating server in Domain A.

Later, the user attempts to access a resource in Domain B. This initiates a request to the Domain A authenticating server to somehow attest to the resource server in Domain B that the user is in fact who she claims to be.

Figure 14-6 illustrates the way the service provider obtains the identity information from the identity provider.

WAYF

Where Are You From (WAYF) is another SSO system that allows credentials to be used in more than one place. It has been used to allow a user from an institution that participates to log in by simply identifying the institution that is his home organization. That organization plays the role of identity provider to the other institutions.

When the user attempts to access a resource held by one of the participating institutions, if he is not already signed in to his home institution, he is redirected to his identity provider to do so. Once he authenticates (or if he is already logged in), the provider sends information about him (after asking for consent) to the resource provider. This information is used to determine the access to provide to the user.

When an enterprise needs to allow SSO access to information that may be located in libraries at institutions such as colleges, secondary schools, and governmental bodies, WAYF is a good solution and is gaining traction in these areas.

Trust Models

Over the years, advanced SSO systems have been developed to support network authentication. The following sections provide information on Remote Authentication Dial-In User Service (RADIUS), which allows you to centralize authentication functions for all network access devices. You will also be introduced to two standards for network authentication directories: Lightweight Directory Access Protocol (LDAP) and a common implementation of the service called Active Directory (AD).

RADIUS Configurations

When users are making connections to the network through a variety of mechanisms, they should be authenticated first. This could apply to users accessing the network through:

Dial-up remote access servers

VPN access servers

Wireless access points

Security-enabled switches

In the past, each of these access devices performed the authentication process locally on the device. The administrators needed to ensure that all remote access policies and settings were consistent across them all. When a password needed to be changed, it had to be done on all devices.

RADIUS is a networking protocol that provides centralized authentication and authorization. It can be run at a central location, and all of the access devices (wireless access point, remote access, VPN, and so on) can be made clients of the server. Whenever authentication occurs, the RADIUS server performs the authentication and authorization. This provides one location to manage the remote access policies and passwords for the network. Another advantage of using these systems is that the audit and access information (logs) are not kept on the access server.

RADIUS is a standard defined in RFC 2865, which obsoleted the earlier RFC 2138. It is designed to provide a framework that includes three components. The supplicant is the device seeking authentication. The authenticator is the device to which the supplicant is attempting to connect (for example, AP, switch, remote access server), and the RADIUS server is the authentication server. With regard to RADIUS, the device seeking entry is not the RADIUS client. The authenticating server is the RADIUS server, and the authenticator (for example, AP, switch, remote access server) is the RADIUS client.

In some cases, a RADIUS server can be the client of another RADIUS server. In that case, the RADIUS server is acting as a proxy client for its RADIUS clients.

Security issues with RADIUS are related to the shared secret used to encrypt the information between the network access device and the RADIUS server and the fact that this protects only the credentials and not other pieces of useful information, such as tunnel-group IDs or VLAN memberships. The protection afforded by the shared secret is not considered strong, and IPsec should be used to encrypt these communication channels. A protocol called RadSec that is under development shows promise for correcting this flaw.
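To make the shared-secret protection concrete, the sketch below follows the User-Password hiding scheme described in the RADIUS RFC: the password is padded to 16-byte blocks and XORed with an MD5 digest of the shared secret and the request authenticator. The function names are assumptions, and the code is for study only, not for use in a real RADIUS client.

```python
import hashlib

def hide_password(password: bytes, secret: bytes, authenticator: bytes) -> bytes:
    """XOR each 16-byte block with MD5(secret + previous block or authenticator)."""
    padded = password + b"\x00" * (-len(password) % 16)  # pad with nulls
    hidden, previous = b"", authenticator
    for i in range(0, len(padded), 16):
        digest = hashlib.md5(secret + previous).digest()
        block = bytes(p ^ d for p, d in zip(padded[i:i + 16], digest))
        hidden += block
        previous = block  # chain on the hidden block just produced
    return hidden

def reveal_password(hidden: bytes, secret: bytes, authenticator: bytes) -> bytes:
    """Reverse the hiding on the server side using the same shared secret."""
    clear, previous = b"", authenticator
    for i in range(0, len(hidden), 16):
        digest = hashlib.md5(secret + previous).digest()
        block = hidden[i:i + 16]
        clear += bytes(c ^ d for c, d in zip(block, digest))
        previous = block
    return clear.rstrip(b"\x00")
```

Only the password attribute is transformed this way. Other attributes in the packet travel in the clear, and the scheme's strength rests entirely on MD5 and the secrecy of the shared secret, which is why wrapping the channel in IPsec is recommended.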

LDAP

A directory service is a database designed to centralize data management regarding network subjects and objects. A typical directory contains a hierarchy that includes users, groups, systems, servers, client workstations, and so on. Because the directory service contains data about users and other network entities, it can be used by many applications that require access to that information. A common directory service standard is Lightweight Directory Access Protocol (LDAP), which is based on the earlier standard X.500.

X.500 uses Directory Access Protocol (DAP). In X.500, the distinguished name (DN) provides the full path in the X.500 database where the entry is found. The relative distinguished name (RDN) in X.500 is an entry’s name without the full path.

LDAP is simpler than X.500. LDAP supports DN and RDN, but it includes more attributes, such as the common name (CN), domain component (DC), and organizational unit (OU) attributes. Using a client/server architecture, LDAP uses TCP port 389 to communicate. If advanced security is needed, LDAP over SSL communicates via TCP port 636.
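The relationship between a DN, its RDN, and the CN, OU, and DC attributes can be sketched with a small parser. The example DN is hypothetical, and the parser assumes the DN contains no escaped commas or multivalued RDNs, which a real LDAP library would handle.

```python
def parse_dn(dn: str) -> list:
    """Split a DN into (attribute, value) pairs, most specific first."""
    pairs = []
    for component in dn.split(","):
        attribute, _, value = component.strip().partition("=")
        pairs.append((attribute.upper(), value))
    return pairs

def relative_dn(dn: str) -> str:
    """The RDN is the leftmost component: the entry's name without its path."""
    return dn.split(",")[0].strip()
```

For the DN `CN=Jane Doe,OU=Sales,DC=example,DC=com`, the RDN is `CN=Jane Doe`, and the remaining components give the path to the entry in the directory tree.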

AD

Microsoft’s implementation of LDAP is Active Directory (AD), which organizes directories into forests and trees. AD tools are used to manage and organize everything in an organization, including users and devices. This is where security is implemented, and its implementation is made more efficient through the use of Group Policy.

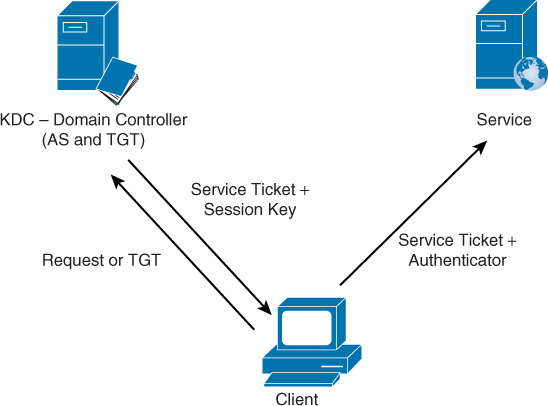

AD is also another example of an SSO system. It uses the same authentication and authorization system used in UNIX: Kerberos. This system authenticates a user once and then, through the use of a ticket system, allows the user to perform all actions and access all resources to which she has been given permission without the need to authenticate again.

The steps used in this process are shown in Figure 14-7. The user authenticates with the domain controller, which performs several other roles as well. First, it is the key distribution center (KDC), which runs the authentication service (AS) that verifies the user’s credentials. The KDC also runs the ticket-granting service (TGS), which issues the tickets a user needs to access a remote service or resource in the network.

After the user has been authenticated (when she logs on once to the network), she is issued a ticket-granting ticket (TGT). This is used to later request session tickets, which are required to access resources. At any point that she later attempts to access a service or resource, she is redirected to the ticket-granting service (TGS) running on the KDC. Upon presenting her TGT, she is issued a session, or service, ticket for that resource. The user presents the service ticket, which is signed by the KDC, to the resource server for access. Because the resource server trusts the KDC, the user is granted access.
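The ticket flow described above can be sketched with signed strings standing in for tickets. This is a teaching model, not Kerberos: the real protocol encrypts tickets with per-principal keys and includes timestamps and lifetimes. Here a single HMAC key represents the trust between the KDC and the resource server, and all names and credentials are hypothetical.

```python
import hashlib
import hmac

KDC_KEY = b"demo-kdc-key"               # hypothetical key trusted by resource servers
USERS = {"alice": "correct-password"}   # hypothetical account store

def _sign(payload: str) -> str:
    return hmac.new(KDC_KEY, payload.encode(), hashlib.sha256).hexdigest()

def _valid(ticket) -> bool:
    payload, tag = ticket
    return hmac.compare_digest(tag, _sign(payload))

def issue_tgt(user: str, password: str):
    """Log on once: check credentials and return a ticket-granting ticket."""
    if USERS.get(user) != password:
        raise PermissionError("authentication failed")
    payload = f"TGT|{user}"
    return payload, _sign(payload)

def issue_service_ticket(tgt, service: str):
    """Exchange a valid TGT for a ticket to one service, with no password needed."""
    if not _valid(tgt) or not tgt[0].startswith("TGT|"):
        raise PermissionError("invalid TGT")
    user = tgt[0].split("|", 1)[1]
    payload = f"SVC|{user}|{service}"
    return payload, _sign(payload)

def resource_accepts(ticket, service: str) -> bool:
    """The resource server accepts only a KDC-signed ticket naming this service."""
    payload, _ = ticket
    return _valid(ticket) and payload.startswith("SVC|") and payload.endswith("|" + service)
```

The password is used only in `issue_tgt`; every later access relies on tickets, which is what makes the system single sign-on.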

Exam Preparation Tasks

You have a couple of choices for exam preparation: the exercises here and the practice exams in the Pearson IT Certification test engine.

Review All Key Topics

Review the most important topics in this chapter, noted with the Key Topics icon in the outer margin of the page. Table 14-1 lists these key topics and the page number on which each is found.

Table 14-1 Key Topics for Chapter 14

| Key Topic Element | Description | Page Number |
| --- | --- | --- |
| List | Steps in authentication | 537 |
| List | Authentication factors | 538 |
| List | Ownership factors | 539 |
| List | Additional authentication concepts | 540 |
| List | Password types | 541 |
| List | Password management considerations | 543 |
| List | Physiological biometric systems | 544 |
| List | Behavioral biometric systems | 545 |
| List | Biometric considerations | 546 |
| List | Dual-factor and multi-factor authentication | 548 |
| List | XACML components | 555 |
| List | SPML architecture | 556 |
| List | Federated identity management models | 559 |

Define Key Terms

Define the following key terms from this chapter and check your answers in the glossary:

certificate-based authentication

content-dependent access control

context-dependent access control

discretionary access control (DAC)

Extensible Access Control Markup Language (XACML)

HMAC-Based One-Time Password algorithm (HOTP)

Lightweight Directory Access Protocol (LDAP)

mandatory access control (MAC)

policy enforcement point (PEP)

provisioning service provider (PSP)

provisioning service target (PST)

role-based access control (RBAC)

Security Assertion Markup Language (SAML)

Service Provisioning Markup Language (SPML)

Time-Based One-Time Password algorithm (TOTP)

trusted third-party (or bridge) model

Review Questions

1. Your company is examining its password policies and would like to require passwords that include a mixture of upper- and lowercase letters, numbers, and special characters. What type of password does this describe?

standard word password

combination password

complex password

passphrase password

2. You would like to prevent users from using a password again when it is time to change their passwords. What policy do you need to implement?

password life

password history

password complexity

authentication period

3. Your company implements one of its applications on a Linux server. You would like to store passwords in a location that can be protected using a hash. Where is this location?

/etc/passwd

/etc/passwd/hash

/etc/shadow

/etc/root

4. Your organization is planning the deployment of a biometric authentication system. You would like a method that records the peaks and valleys of the hand and its shape. Which physiological biometric system performs this function?

fingerprint scan

finger scan

hand geometry scan

hand topography

5. Which of the following is not a biometric system based on behavioral characteristics?

signature dynamics

keystroke dynamics

voice pattern or print

vascular scan

6. During a discussion of biometric technologies, one of your coworkers raises a concern that valid users will be falsely rejected by the system. What type of error is he describing?

FRR

FAR

CER

accuracy

7. The chief security officer wants to know the most popular biometric methods, based on user acceptance. Which of the following is the most popular biometric method, based on user acceptance?

voice pattern

keystroke pattern

iris scan

retina scan

8. When using XACML as an access control policy language, which of the following is the entity that is protecting the resource that the subject (a user or an application) is attempting to access?

PEP

PDP

FRR

RAR

9. Which of the following concepts provides evidence about a target to an appraiser so the target’s compliance with some policy can be determined before access is allowed?

identity propagation

authentication

authorization

attestation

10. Which single sign-on system is used in both UNIX and Microsoft Active Directory?

Kerberos

Shibboleth

WAYF

OpenID