Chapter 5. Application Security

This chapter covers the following subjects:

Securing the Browser: What is a computer without a web browser? Some might answer “worthless.” Well, a compromised browser is worse than no browser at all. The web browser must be secured to have a productive and enjoyable web experience.

Securing Other Applications: Organizations use many applications, and they each have their own group of security vulnerabilities. In this section, we spend a little time on common applications such as Microsoft Office and demonstrate how to make those applications safe.

Secure Programming: Programmers use many techniques when validating and verifying the security of their code. This important section covers a few basic concepts of programming security such as system testing, secure code review, and fuzzing.

Browser security should be at the top of any security administrator’s list. It’s another example of inherently insecure software “out of the box.” Browsers are becoming more secure as time goes on, but new malware always presents a challenge—as mentioned in Chapter 1, “Introduction to Security,” the scales are always tipping back and forth.

Most browsers have plenty of built-in options that you can enable to make them more secure, and third-party programs can help in this endeavor as well. This chapter covers web browsers in a generic fashion; most of the concepts we cover can be applied to just about any browser. However, users don’t just work with web browsers. They use office applications frequently as well, so these should be secured, too. Also, other applications, such as the command line, are great tools but can also be targets. Back office applications such as the ones that supply database information and e-mail are also vulnerable and should be hardened accordingly. And finally, any applications that are being developed within your dominion should be reviewed carefully for bugs.

Be sure to secure any application used or developed on your network. It takes only one vulnerable application to compromise the security of your network.

Let’s start with discussing how to secure web browsers.

Foundation Topics

Securing the Browser

There is a great debate as to which web browser to use. It doesn’t matter too much to me, because as a security guy I am going to spend a decent amount of time securing whatever it is that is in use. However, each does have advantages, and one might work better than another depending on the environment. So, if you are in charge of implementing a browser solution, be sure to plan for performance and security right from the start. I do make some standard recommendations to customers, students, and readers when it comes to planning and configuring browser security. Let’s discuss a few of those now.

The first recommendation is to not use the very latest version of a browser. (Same advice I always give for any application, it seems.) Let the people at the top of the marketing pyramid, the innovators, mess around with the “latest and greatest”; let those people find out about the issues, backdoors, and whatever other problems a new application might have; at worst, let their computer crash! For the average user, and especially for a fast-paced organization, the browser needs to be rock-solid; these organizations will not tolerate any downtime. I always allow for some time to pass before fully embracing and recommending software. I bring this up because most companies share the same view and implement this line of thinking as a company policy. They don’t want to be caught in a situation where they spent a lot of time and money installing something that is not compatible with their systems.

Note

Some browsers update automatically by default. While this is a nice feature that can prevent threats from exploiting known vulnerabilities, it might not correspond to your organization’s policies. This feature would have to be disabled in the browser’s settings or within a computer policy so that the update can be deferred until it is deemed appropriate for all systems involved.

The next recommendation is to consider what type of computer, or computers, will be running the browser. Generally, Macs run Safari. Linux computers run Firefox. Edge and Internet Explorer (IE) are common in the Windows market, but Firefox and Google Chrome work well on Windows computers, too. Some applications such as Microsoft Office are, by default, linked to the Microsoft web browser, so it is wise to consider other applications that are in use when deciding on a browser. Another important point is whether you will be centrally managing multiple client computers’ browsers. Edge and IE can be centrally managed through the use of Group Policy objects (GPOs) on a domain. I’ll show a quick demonstration of this later in the chapter.

When planning what browser to use, consider how quickly the browser companies fix vulnerabilities. Some are better than others and some browsers are considered to be more secure than others in general. It should also be noted that some sites recommend—or in some cases require—a specific browser to be used. This is a “security feature” of those websites and should be investigated before implementing other browsers in your organization.

When it comes to functionality, most browsers work well enough, but one browser might fare better for the specific purposes of an individual or group. By combining the functions a user or group requires in a browser, along with the security needed, you should come up with the right choice. You should remember that the web browser was not originally intended to do all the things it now does. In many cases, it acts as a shell for all kinds of other things that run inside of it. This trend will most likely continue. That means more new versions of browsers, more patching, and more secure code to protect those browsers. Anyway, I think that’s enough yappin’ about browsers—we’ll cover some actual security techniques in just a little bit.

General Browser Security Procedures

First, some general procedures should be implemented regardless of the browser your organization uses. These concepts can be applied to desktop browsers as well as mobile browsers.

Implement policies.

Train your users.

Use a proxy and content filter.

Secure against malicious code.

Each of these is discussed in more detail in the following sections.

Implement Policies

The policy could be hand-written, configured at the browser, implemented within the computer operating system, or better yet, configured on a server centrally. Policies can be configured to manage add-ons and to disallow access to websites that are known to be malicious, that have Flash content, or that use a lot of bandwidth. As an example, Figure 5-1 shows the Local Group Policy of a Windows 10 computer focusing on the settings of the two Microsoft browsers. You can open the Local Group Policy Editor by going to Run and typing gpedit.msc. Then navigate to

User Configuration > Administrative Templates > Windows Components

From there, depending on which browser you want to modify security settings for, you can access Internet Explorer (especially the Security Features subsection) or Microsoft Edge.

In the figure, you can see that the folder containing the Microsoft Edge policy settings is opened and that there are many settings that can be configured. The figure also shows Internet Explorer open at the top of the window. As you can see, there are many folders and consequently many more security settings for IE.

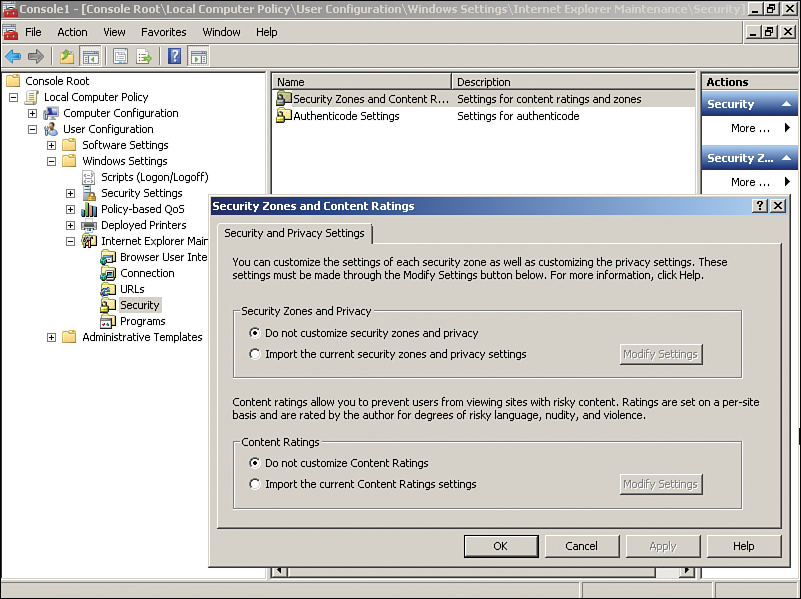

Of course, literally hundreds of settings can be changed for Internet Explorer. You can also modify Internet Explorer Maintenance Security by navigating to

User Configuration > Windows Settings > Internet Explorer Maintenance > Security

An example of this in Windows 7 is shown in Figure 5-2. The Security Zones and Content Ratings object was double-clicked to show the dialog box of the same name, which allows you to customize the Internet security zones of the browser. Some versions of Windows will not enable you to access the Local Computer Policy; however, if you don’t have access to those features in the OS, most security features can be configured directly within the browser.

Note

These are just examples. I use Windows 10 and Windows 7 as examples here because the chances are that you will see plenty of those systems over the lifespan of the CompTIA Security+ Certification (and most likely beyond). However, you never know what operating systems and browsers an organization will use in the future, so keep an open mind.

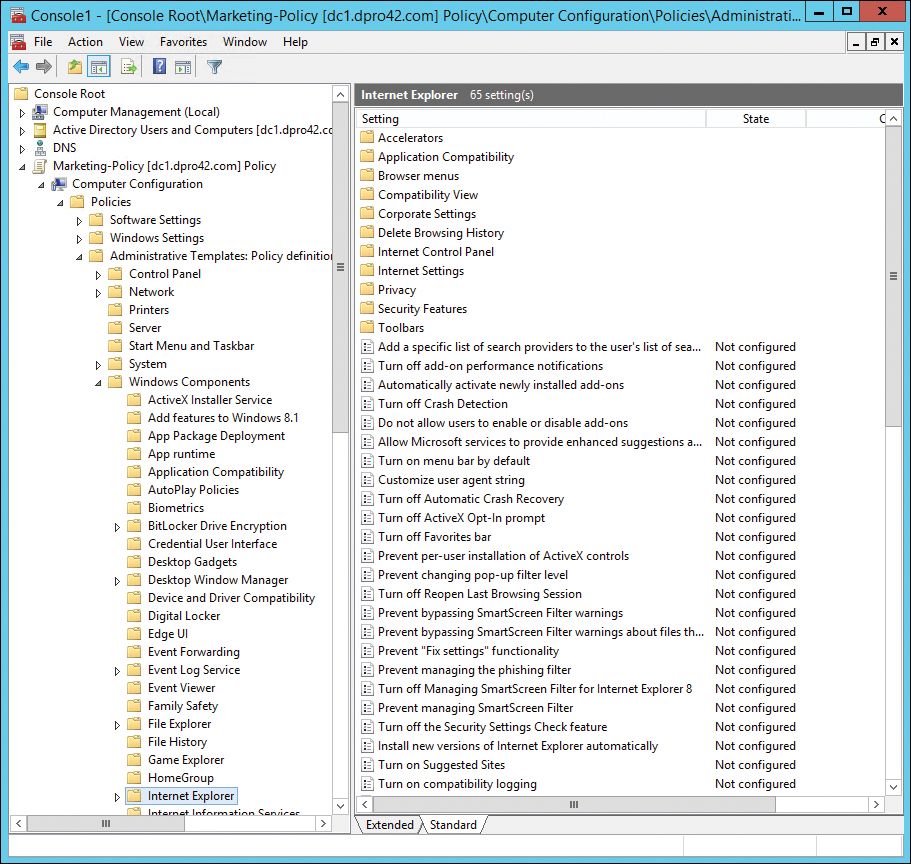

Now, you wouldn’t want to configure these policy settings on many more than a few computers individually. If you have multiple computers that need their IE security policies updated, consider using a template (described in Chapter 4, “OS Hardening and Virtualization”), or if you have a domain controller, consider making the changes from that central location. From there, much more in-depth security can be configured and deployed to the IE browsers within multiple computers. An example of the IE policies, as managed from a domain controller, is shown in Figure 5-3.

For this, I set up a Windows Server as a domain controller (controlling the domain dpro42.com), created an organizational unit (OU) named Marketing, and then created a Group Policy object named Marketing-Policy that I added to a Microsoft Management Console (MMC). From that policy, the Internet Explorer settings, which can affect all computers within the Marketing OU, can be accessed by navigating to

Computer Configuration > Policies > Administrative Templates > Windows Components > Internet Explorer

From here we can configure trusted and non-trusted sites, zones, and advanced security features in one shot for all the computers in the OU. A real time-saver!

Note

You should also learn about viewing and managing group policies with the Group Policy Management Console (GPMC). In Windows Server 2012 R2 and higher, you can access this by going to Administrative Tools > Group Policy Management or by going to Run and typing gpmc.msc. It can also be added to an MMC.

Train Your Users

Train your users on which websites to access, how to use search engines, and what to do if pop-ups appear on the screen. The more users you can reach with your wisdom, the better! Onsite training classes, webinars, and downloadable screencasts all work great. Or if your organization doesn’t have those kinds of resources, consider writing a web article to this effect—make it engaging and interesting, yet educational.

For example, explain to users the value of pressing Alt+F4 to close pop-up windows instead of clicking No or an X. Pop-ups could be coded in such a way that No actually means Yes, and the close-out X actually means “take me to more annoying websites!” Alt+F4 is a hard-coded shortcut key that usually closes applications. If that doesn’t work, explain that applications can be closed by ending the task or process, force stopping, or force quitting, depending on the OS being used.

Another example is to show users how to determine if their communications are secure on the web. Just looking for HTTPS in the address bar isn’t necessarily enough. A browser might show a green background in the address bar if the website uses a proper encryption certificate. Some browsers use a padlock in the locked position to show it is secure. To find out the specific security employed by the website to protect the session, click, or right-click, the padlock icon and select More Information or Connection, or something to that effect (depending on the browser). This will show the encryption type and certificate being used. We’ll talk more about these concepts in Chapter 14, “Encryption and Hashing Concepts,” and Chapter 15, “PKI and Encryption Protocols.”
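The same details the padlock dialog displays (protocol version, cipher suite, and certificate) can also be queried from a script, which is handy when auditing many sites. The following is a minimal sketch using Python's standard `ssl` and `socket` modules; the function names are hypothetical, and `www.example.com` is only a placeholder host. Only the parsing helper runs offline; the connection function needs network access.

```python
import socket
import ssl


def describe_peer_cert(cert: dict) -> dict:
    """Summarize the certificate dict returned by SSLSocket.getpeercert()."""

    def flatten(name) -> dict:
        # 'subject' and 'issuer' are tuples of RDNs; each RDN is a tuple of (key, value) pairs.
        return {key: value for rdn in name for key, value in rdn}

    subject = flatten(cert.get("subject", ()))
    issuer = flatten(cert.get("issuer", ()))
    return {
        "subject_cn": subject.get("commonName"),
        "issuer_org": issuer.get("organizationName"),
        "expires": cert.get("notAfter"),
    }


def inspect_tls(host: str, port: int = 443) -> dict:
    """Connect to host, verify its certificate, and report what protects the session."""
    context = ssl.create_default_context()  # checks the certificate chain and hostname
    with socket.create_connection((host, port), timeout=10) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            info = describe_peer_cert(tls.getpeercert())
            info["protocol"] = tls.version()  # e.g. 'TLSv1.3'
            info["cipher"] = tls.cipher()[0]  # negotiated cipher suite name
            return info


# Example (requires network access): print(inspect_tls("www.example.com"))
```

Because `create_default_context()` verifies the certificate chain and hostname, `inspect_tls` raises an `ssl.SSLCertVerificationError` for a site whose certificate a browser would also flag.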

Use a Proxy and Content Filter

HTTP proxies (known as proxy servers) act as a go-between for the clients on the network and the Internet. Simply stated, they cache website information for the clients, reducing the number of requests that need to be forwarded to the actual corresponding web server on the Internet. This is done to save time, make more efficient use of bandwidth, and help to secure the client connections. By using a content filter in combination with this, specific websites can be filtered out, especially ones that can potentially be malicious, or ones that can be a waste of man-hours, such as peer-to-peer (P2P) websites/servers. I know—I’m such a buzzkill. But these filtering devices are common in today’s networks; we talk more about them in Chapter 8, “Network Perimeter Security.” For now, it is important to know how to connect to them with a browser. Remember that the proxy server is a mediator between the client and the Internet, and as such the client’s web browser must be configured to connect to it. You can either have the browser automatically detect a proxy server or (and this is more common) configure it statically. Figure 5-4 shows a typical example of a connection to a proxy server.
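A static proxy setting in a browser tells it to hand every request to the proxy instead of contacting web servers directly. As a rough illustration of the same idea for a single program (not a browser setting), the sketch below uses Python's standard `urllib.request.ProxyHandler`; the proxy address `proxy.example.internal:8080` and the helper name are assumptions, so substitute your organization's proxy.

```python
import urllib.request

# Hypothetical proxy address; substitute your organization's proxy server.
PROXY = "http://proxy.example.internal:8080"


def build_proxied_opener(proxy_url: str) -> urllib.request.OpenerDirector:
    """Return an opener that sends HTTP and HTTPS requests through a static proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    # Passing our own ProxyHandler replaces the default one, which would
    # otherwise read proxy settings from the environment.
    return urllib.request.build_opener(handler)


opener = build_proxied_opener(PROXY)
# opener.open("http://www.example.com/") would now be relayed by the proxy.
```

This only affects the script that builds the opener; the browser itself still has to be configured through its own settings or through Group Policy.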

This setting can also be configured within an organizational unit’s Group Policy object on the domain controller. This way, it can be configured one time but affect all the computers within the particular OU.

Of course, any fancy networking configuration such as this can be used for evil purposes as well. The malicious individual can utilize various malware to write their own proxy configuration to the client operating system, thereby redirecting the unsuspecting user to potentially malevolent websites. So as a security administrator you should know how to enable a legitimate proxy connection used by your organization, but also know how to disable an illegitimate proxy connection used by attackers.
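One way to spot a rogue proxy is to compare what is actually configured against the proxies your organization has approved. The sketch below is a minimal, hypothetical example built on Python's `urllib.request.getproxies()`, which reads the `*_proxy` environment variables (and, on Windows, falls back to the Internet Settings section of the registry when none are set). The allowlist contents are assumptions, and malware that uses other mechanisms, such as an automatic configuration script, would not necessarily show up here.

```python
import urllib.request

# Hypothetical allowlist: the only proxy your organization has approved.
APPROVED_PROXIES = {"http://proxy.example.internal:8080"}


def find_unapproved_proxies(configured: dict, approved: set) -> dict:
    """Return the scheme -> proxy entries that are not on the approved list."""
    return {
        scheme: url
        for scheme, url in configured.items()
        # 'no' holds the no_proxy exclusion list, not a proxy address.
        if scheme != "no" and url.rstrip("/") not in approved
    }


if __name__ == "__main__":
    suspicious = find_unapproved_proxies(urllib.request.getproxies(), APPROVED_PROXIES)
    for scheme, url in suspicious.items():
        print(f"Unapproved {scheme} proxy configured: {url}")
```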

Note

When checking for improper proxy connections, a security admin should also check the hosts file for good measure. It is located in %systemroot%\System32\Drivers\etc.
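The hosts file maps names to IP addresses before DNS is consulted, so an attacker who adds a line such as `203.0.113.5 www.mybank.example` can quietly redirect a user. The sketch below (hypothetical helper names, Python standard library only) flags every entry that points a name at something other than a loopback or unspecified address. In this sketch, loopback and `0.0.0.0` entries are treated as simple blocking entries, although such entries can also be abused (for example, to block an antivirus vendor's update site), so a manual review is still worthwhile.

```python
import ipaddress
import os
from pathlib import Path, PureWindowsPath


def suspicious_hosts_entries(text: str) -> list:
    """Return (ip, hostname) pairs that map a name to a non-loopback address."""
    findings = []
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        ip, *names = line.split()
        try:
            addr = ipaddress.ip_address(ip)
            benign = addr.is_loopback or addr.is_unspecified  # 127.0.0.1, ::1, 0.0.0.0
        except ValueError:
            benign = False  # malformed address: worth a look anyway
        if not benign:
            findings.extend((ip, name) for name in names)
    return findings


def windows_hosts_path() -> PureWindowsPath:
    r"""Build %SystemRoot%\System32\drivers\etc\hosts (assumes C:\Windows if unset)."""
    root = os.environ.get("SystemRoot", r"C:\Windows")
    return PureWindowsPath(root) / "System32" / "drivers" / "etc" / "hosts"


if __name__ == "__main__" and os.name == "nt":
    text = Path(windows_hosts_path()).read_text(encoding="utf-8", errors="replace")
    for ip, name in suspicious_hosts_entries(text):
        print(f"{name} -> {ip}")
```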

Secure Against Malicious Code

Depending on your company’s policies and procedures, you might need to configure a higher level of security concerning ActiveX controls, Java, JavaScript, Flash media, phishing, and much more. We discuss these more as we progress through the chapter.

Web Browser Concerns and Security Methods

There are many ways to make a web browser more secure. Be warned, though, that the more a browser is secured, the less functional it becomes. For the average company, the best solution is to find a happy medium between functionality and security. However, if you are working in an enterprise environment or mission-critical environment, then you will need to lean much farther toward security.

Basic Browser Security

The first thing that you should do is to update the browser—that is, if company policy permits it. Remember to use the patch management strategy discussed earlier in the book. You might also want to halt or defer future updates until you are ready to implement them across the entire network.

Next, install pop-up blocking and other ad-blocking solutions. Many antivirus suites have pop-up blocking tools. There are also third-party solutions that act as add-ons to the browser. And of course, newer versions of web browsers will block some pop-ups on their own.

After that, consider security zones if your browser supports them. You can set the security level for the Internet and intranet zones, and specify trusted sites and restricted sites. In addition, you can set custom levels for security; for example, disable ActiveX controls and plug-ins, turn the scripting of Java applets on and off, and much more.

Note

For step-by-step demonstrations concerning web browser security, see my online real-world scenarios and corresponding videos that accompany this book.

As for mobile devices, another way to keep the browser secure, as well as the entire system, is to avoid jailbreaking or rooting the device. If the device has been misconfigured in this way, it is easier for attackers to assault the web browser and, ultimately, subjugate the system.

Cookies

Cookies can also pose a security threat. Cookies are text files placed on the client computer that store information about it, which could include your computer’s browsing habits and possibly user credentials. Cookies that remain on the computer after the browser is closed, often so that a person doesn’t have to log in to a website every time, are referred to as persistent cookies. By adjusting cookie settings, you can either accept all cookies, deny all cookies, or select one of several options in between. A high cookie security setting will usually block cookies that save information that can be used to contact the user, and cookies that do not have a privacy policy.
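Whether a cookie is persistent is decided by the website when it sends the cookie: if the `Set-Cookie` header carries an `Expires` or `Max-Age` attribute, the browser keeps it after the session ends; otherwise it is a session cookie that is discarded when the browser closes. The sketch below uses Python's standard `http.cookies.SimpleCookie` to make that distinction; the header value and cookie names are invented for illustration.

```python
from http.cookies import SimpleCookie


def classify_cookies(set_cookie_value: str) -> dict:
    """Label each cookie in a Set-Cookie style string as 'persistent' or 'session'."""
    jar = SimpleCookie()
    jar.load(set_cookie_value)
    result = {}
    for name, morsel in jar.items():
        # Expires or Max-Age lets the cookie outlive the browser session;
        # without either, the browser discards it on exit.
        persistent = bool(morsel["expires"] or morsel["max-age"])
        result[name] = "persistent" if persistent else "session"
    return result


# A "remember me" cookie kept for 30 days, plus a plain session ID.
print(classify_cookies("remember_me=abc123; Max-Age=2592000; sid=xyz789; Path=/"))
```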

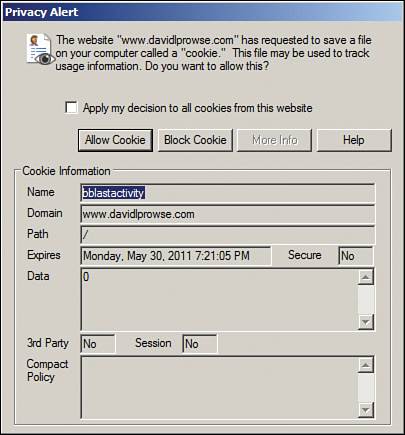

You can also override any automatic cookie handling that might occur by configuring the prompt option. This way, a user will be prompted when a website attempts to create a cookie. For example, in Figure 5-5 my website www.davidlprowse.com was accessed. The site automatically tried to create a cookie due to the bulletin board system code. The browser sees this, stops it before it occurs, and verifies with the user whether to accept it. This particular cookie is harmless, so in this case I would accept it. There is a learning curve for users when it comes to knowing which cookies to accept. I guarantee that once or twice they will block a cookie that subsequently blocks functionality of the website. In some cases, an organization deals with too many websites that have too many cookies, so this particular security configuration is not an option.

Tracking cookies are used by spyware to collect information about a web user’s activities. Cookies can also be the target of various attacks; namely, session cookies are targeted when an attacker attempts to hijack a session. There are several types of session hijacking. One common type is cross-site scripting (also known as XSS), in which the attacker manipulates a client computer into executing code that it treats as trusted, as if it came from the server the client was connected to. In this way, the attacker can acquire the client computer’s session cookie (allowing the attacker to steal sensitive information) or exploit the computer in other ways. We cover more about XSS later in this chapter, and more about session hijacking in Chapter 7, “Networking Protocols and Threats.”
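On the server side, two common mitigations make this kind of cookie theft harder: marking the session cookie `HttpOnly` so that page scripts (including injected ones) cannot read it through `document.cookie`, and escaping user-supplied text before placing it in a page so that injected markup is displayed rather than executed. The sketch below shows both with Python's standard `http.cookies` and `html` modules; the function names are hypothetical, `SameSite` support in `http.cookies` requires Python 3.8 or later, and these measures reduce, rather than eliminate, XSS risk.

```python
import html
import secrets
from http.cookies import SimpleCookie


def session_cookie_header(session_id: str) -> str:
    """Build a Set-Cookie value for a session ID with protective attributes."""
    jar = SimpleCookie()
    jar["sid"] = session_id
    jar["sid"]["httponly"] = True  # hidden from document.cookie, so injected script can't read it
    jar["sid"]["secure"] = True  # sent only over HTTPS
    jar["sid"]["samesite"] = "Strict"  # not sent with cross-site requests
    jar["sid"]["path"] = "/"
    return jar["sid"].OutputString()


def render_comment(user_text: str) -> str:
    """Escape user-supplied text so markup such as <script> is shown, not run."""
    return "<p>" + html.escape(user_text) + "</p>"


if __name__ == "__main__":
    print(session_cookie_header(secrets.token_urlsafe(32)))
    print(render_comment("<script>alert(document.cookie)</script>"))
```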

LSOs

Another concept similar to cookies is locally shared objects (LSOs), also called Flash cookies. These are data that Adobe Flash-based websites store on users’ computers, especially for Flash games. The privacy concern is that LSOs are used by a variety of websites to collect information about users’ browsing habits. However, LSOs can be disabled via the Flash Player Settings Manager (a.k.a. Local Settings Manager) in most of today’s operating systems. LSOs can also be deleted entirely with third-party software, or by accessing the user’s profile folder in Windows. For example, in Windows, a typical path would be

C:\Users\[Your Profile]\AppData\Roaming\Macromedia\Flash Player\#SharedObjects\[variable folder name]
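To review what is stored there before deleting anything, a script can walk that folder. LSOs are saved as files with a `.sol` extension inside per-site subfolders. The following is a minimal sketch using Python's `pathlib`; it assumes the folder layout shown above and that `%APPDATA%` points to the user's `AppData\Roaming` folder. The helper names are hypothetical, and deletion is left as a commented, deliberate step.

```python
import os
from pathlib import Path


def flash_shared_objects_dir(appdata: str) -> Path:
    r"""Return %APPDATA%\Macromedia\Flash Player\#SharedObjects for the given APPDATA."""
    return Path(appdata) / "Macromedia" / "Flash Player" / "#SharedObjects"


def find_lsos(root: Path) -> list:
    """List every .sol file (Flash local shared object) below root; empty if root is missing."""
    if not root.is_dir():
        return []
    return sorted(root.rglob("*.sol"))


if __name__ == "__main__" and "APPDATA" in os.environ:
    for lso in find_lsos(flash_shared_objects_dir(os.environ["APPDATA"])):
        print(lso)  # review first; lso.unlink() would delete it if policy allows
```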

Add-ons

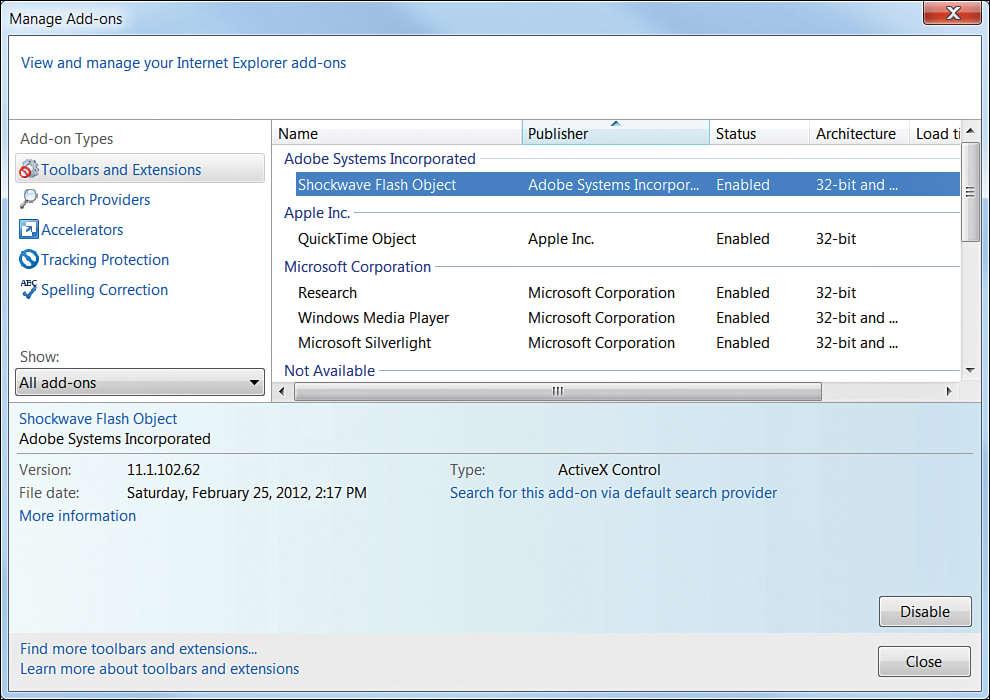

You can enable and disable add-on programs for your browser in the settings or the add-ons management utility. But be wary with add-ons. Many companies will disallow them entirely because they can be a risk to the browser and possibly to the entire system or even the network. For example, in Figure 5-6, the Shockwave Flash Object ActiveX control is selected on a computer running IE. There are scenarios where this control could cause the browser to close or perhaps cause the system to crash; it can be turned off by clicking Disable toward the bottom of the window. Many add-ons are ActiveX controls, and ActiveX could also be turned off altogether in the advanced settings of the web browser. Depending on the add-on and the situation, other ways to fix the problem include updating Flash and upgrading the browser.

ActiveX controls are small program building blocks used to allow a web browser to execute a program. They are similar to Java applets; however, Java applets can run on any platform, whereas ActiveX can run only on Internet Explorer (and Windows operating systems). You can see how a downloadable, executable ActiveX control or Java applet from a suspect website could possibly contain viruses, spyware, or worse. These are known as malicious add-ons—Flash scripts especially can be a security threat. Generally, you can disable undesirable scripts either from the advanced settings or by creating a custom security level or zone. If a particular script technology cannot be disabled within the browser, consider using a different browser, or a content filtering solution.

Advanced Browser Security

If you need to configure advanced settings in a browser, locate the settings or options section—this is usually found by clicking the three lines or three dots in the upper-right corner of the web browser. Then, locate the advanced settings. If you are using a Microsoft-based browser in Windows, you might also opt to go to the Control Panel (in icons mode), click Internet Options, and then select the Advanced tab of the Internet Properties dialog box.

Temporary browser files can contain lots of personally identifiable information (PII). You should consider automatically flushing the temp files from a system every day. For example, a hotel that offers Internet access as a service for guests might enable this function. This way, the histories of users are erased when they close the browser. On a related note, salespeople, field technicians, and other remote users should be trained to delete temporary files, cookies, and passwords when they are using computers on the road. In general, most companies discourage the saving of passwords by the browser. Some organizations make it a policy to disable that option. If you do save passwords, it would be wise to set a master password. This way, when saved passwords are necessary, the browser will ask for only the master password, and you don’t have to type or remember all the others. Use a password quality meter (for example, www.passwordmeter.com) to tell you how strong the password is. Personally, I don’t recommend allowing any web browser to store passwords. Period. But your organization’s policies may differ in this respect. You might also consider a secure third-party password vault for your users, but this comes with a whole new set of concerns. Choose wisely!
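Password quality meters combine many rules, but the simplest common heuristic is worth understanding: estimate entropy as the password length multiplied by log2 of the size of the character pool it draws from. The sketch below implements only that heuristic; it is not the algorithm used by www.passwordmeter.com, the rating thresholds are arbitrary illustrations, and non-ASCII characters are ignored. Note its big weakness: it knows nothing about dictionary words, so a password such as `Tr0ub4dor&3` scores far better than it deserves.

```python
import math
import string


def estimate_entropy_bits(password: str) -> float:
    """Rough estimate: length x log2(size of the character pool used)."""
    pool = 0
    if any(c in string.ascii_lowercase for c in password):
        pool += 26
    if any(c in string.ascii_uppercase for c in password):
        pool += 26
    if any(c in string.digits for c in password):
        pool += 10
    if any(c in string.punctuation or c == " " for c in password):
        pool += 33  # 32 ASCII punctuation marks plus the space character
    return len(password) * math.log2(pool) if pool else 0.0


def rate(password: str) -> str:
    """Map the estimate onto illustrative (arbitrary) strength bands."""
    bits = estimate_entropy_bits(password)
    if bits < 40:
        return "weak"
    if bits < 60:
        return "moderate"
    return "strong"
```

For instance, `password` is 8 lowercase letters, so it scores about 8 × 4.7 ≈ 37.6 bits and is rated weak.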

A company might also use the advanced settings of a browser to specify the minimum version of SSL or TLS that is allowed for secure connections. Some organizations also disable third-party browser extensions altogether from the advanced settings.
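Browsers expose this setting through their advanced options or policy templates. The same control exists for custom client software, and the sketch below shows how it can look in Python's standard `ssl` module, where `SSLContext.minimum_version` sets the floor (Python 3.7 or later). The choice of TLS 1.2 as the default minimum is an assumption for illustration; use whatever your policy specifies. Legacy SSL versions are not offered by Python's default client context in the first place.

```python
import ssl


def make_client_context(minimum: ssl.TLSVersion = ssl.TLSVersion.TLSv1_2) -> ssl.SSLContext:
    """Client context that verifies certificates and refuses protocols older than `minimum`."""
    context = ssl.create_default_context()  # certificate and hostname checks enabled
    context.minimum_version = minimum  # handshakes with servers offering only older versions fail
    return context
```

A context built this way can be passed to `wrap_socket()` or to `urllib.request.urlopen(url, context=...)`.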

You might also consider connecting through a VPN or running your browser within a virtual machine. These methods can help to protect your identity and protect the browser as well.

Of course, this section has only scraped the surface, but it gives you an idea of some of the ways to secure a web browser. Take a look at the various browsers available—they are usually free—and spend some time getting to know the security settings for each. Remember to implement Group Policies from a server (domain controller) to adjust the security settings of a browser for many computers across the network. It saves time and is therefore more efficient.

In Chapter 4 we mentioned that removing applications that aren’t used is important. But removing web browsers can be difficult, if not downright impossible, and should be avoided. Web browsers become one with the operating system, especially so in the case of Edge/IE and Windows. So, great care should be taken when planning whether to use a new browser—because it might be difficult to get rid of later on.

Keep in mind that the technology world is changing quickly, especially when it comes to the Internet, browsing, web browsers, and attacks. Be sure to periodically review your security policies for web browsing and keep up to date with the latest browser functionality, updates, security settings, and malicious attacks.

One last comment on browsers: sometimes a higher level of security can cause a browser to fail to connect to some websites. If a user cannot connect to a site, consider checking the various security settings such as trusted sites, cookies, and so on. If necessary, reduce the security level temporarily so that the user can access the site.

Securing Other Applications

Typical users shouldn’t have access to any applications other than the ones they specifically need. For instance, would you want a typical user to have full control over the Command Prompt or PowerShell in Windows? The answer is: Doubtful. Protective measures should be put into place to make sure the typical user does not have access.

One way to do this is to use User Account Control (UAC) on qualifying Windows operating systems. UAC is a security component of Windows Vista and newer, and Windows Server 2008 and newer. It keeps every user (besides the actual Administrator account) in standard user mode instead of as an administrator with full administrative rights, even if the person is a member of the administrators group. It is meant to prevent unauthorized access and avoid user error in the form of accidental changes. A user attempting to run the Command Prompt or PowerShell with administrative privileges will be stopped and asked for consent or credentials before continuing. This applies to other applications within Windows as well.

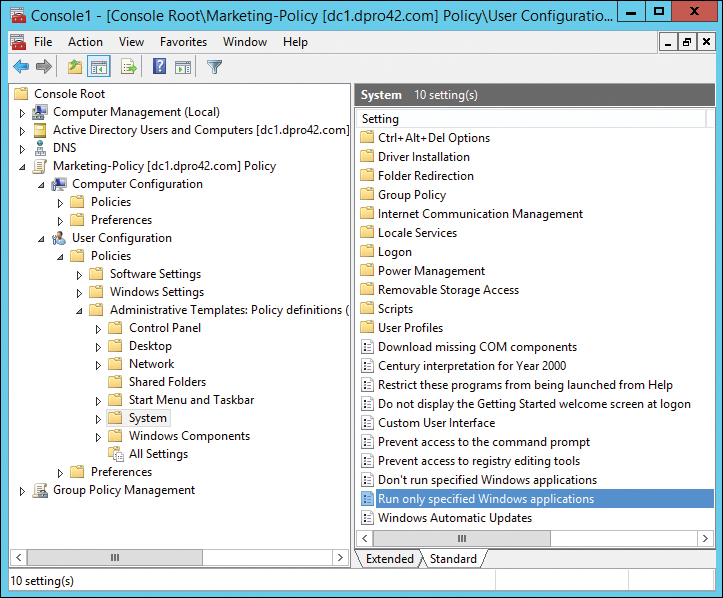

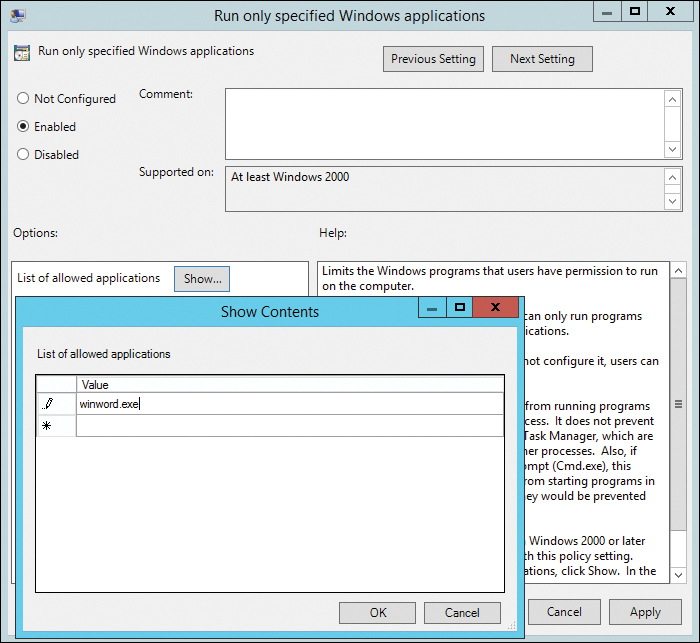

Another way to deny access to applications is to create a policy. For example, on a Windows Server, you can do this in two ways. The first way is to disallow access to specific applications; this policy is called Don’t Run Specified Windows Applications (a form of application blacklisting). However, the list could be longer than Florida, so another possibility would be to configure the Run Only Specified Windows Applications policy (a form of application whitelisting), as shown in Figure 5-7. This and the previously mentioned policy are adjacent to each other and can be found at the following path in Windows Server:

Policy (in this case we use the Marketing-Policy again) > User Configuration > Policies > Administrative Templates > System

When double-clicked, the policy opens and you can enable it and specify one or more applications that are allowed. All other applications will be denied to the user (if the user is logged on to the domain and is a member of the Marketing OU to which the policy is applied). Maybe you as the systems administrator decide that the marketing people should be using only Microsoft Word—a rather narrow view, but let’s use that just for the sake of argument. All you need to do is click the Enabled radio button, click the Show button, and in the Show Contents window add the application; in this case, Word, which is winword.exe. An example of this is shown in Figure 5-8.
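The difference between the two policies comes down to what happens to a program nobody thought to list. The sketch below models that logic in Python with hypothetical helper names; it assumes matching by executable file name, compared case-insensitively as Windows file names are, and reuses the Word-only example. Because this sketch compares by name alone, renaming an executable would defeat it; more robust allowlisting tools match on attributes such as publisher or file hash.

```python
from pathlib import PureWindowsPath

# Hypothetical allowlist mirroring the example above: Marketing may run only Word.
ALLOWED = {"winword.exe"}


def exe_name(path: str) -> str:
    """File name of a Windows path, lowercased for case-insensitive matching."""
    return PureWindowsPath(path).name.lower()


def allowed_by_allowlist(path: str, allowed: set = ALLOWED) -> bool:
    """Run Only Specified Windows Applications: anything not listed is denied."""
    return exe_name(path) in {entry.lower() for entry in allowed}


def allowed_by_blocklist(path: str, blocked: set) -> bool:
    """Don't Run Specified Windows Applications: anything not listed is permitted."""
    return exe_name(path) not in {entry.lower() for entry in blocked}
```

For example, a newly installed `newgame.exe` passes a blocklist that names only `cmd.exe` and `powershell.exe`, but it is denied by the allowlist.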

This is in-depth stuff, and the CompTIA Security+ exam probably won’t test you on exact procedures for this, but you should be aware that there are various ways to disallow access to just about anything you can think of. Of course, when it comes to Windows Server, the more practice you can get the better—for the exam, and for the real world.

Applications that users are allowed to work with should be secured accordingly. In general, applications should be updated, patched, or have the appropriate service packs installed. This is collectively known as application patch management, and it is part of the overall configuration management of an organization’s software environment. Table 5-1 shows some common applications and some simple safeguards that can be implemented for them.

Table 5-1 Common Applications and Safeguards

| Application Name | Safeguards |
|---|---|
| Outlook | Install the latest Office update or service pack. (This applies to all Office suite applications.) Keep Office up to date with Windows Update. (This also applies to all Office suite applications.) Consider an upgrade to a newer version of Office, if the currently used one is no longer supported. Increase the junk e-mail security level or use a whitelist. Read messages in plain text instead of HTML. Enable attachment blocking. Use a version that enables Object Model Guard functionality, or download it for older versions. Password protect any .PST files. Use strong passwords for Microsoft accounts if using web-based Outlook applications. Use encryption: Consider encrypting the authentication scheme, and possibly other traffic, including message traffic between Outlook clients and Exchange servers. Consider a digital certificate. Secure Password Authentication (SPA) can be used to secure the login, and S/MIME and PGP/GPG can be used to secure actual e-mail transmissions. Or, in the case of web-based e-mail, use SSL or TLS for encryption. |
| Word | Consider using passwords for opening or modifying documents. Use read-only or comments only (tracking changes) settings. Consider using a digital certificate to seal the document. |
| Excel | Use password protection on worksheets. Set macro security levels. Consider Excel encryption. |

Mobile apps should be secured as well. You might want to disable GPS to protect a mobile app, or the mobile device in general. You should also consider strong passwords for e-mail accounts and accounts to “app stores” and similar shopping portals. Watch for security updates for the mobile apps your organization uses.

We also need to give some thought to back office applications that run on servers. Database software, e-mail server software, and other back office “server” software need to be hardened and secured as well. High-level server application examples from Microsoft include SQL Server and Exchange Server—and let’s not forget FTP servers and web servers. These applications have their own set of configuration requirements and might be insecure out-of-the-box. For instance, a database server, FTP server, or other similar server will often have its own separate administrator account with a blank password by default. It is common knowledge what the names of these administrative user accounts are, and if they haven’t been secured, hacking the system becomes mere child’s play. Be sure to rename accounts (and disable unnecessary accounts) and configure strong passwords, just the way you would in an operating system.
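These checks lend themselves to a simple checklist script run against an exported account list. The sketch below is hypothetical throughout: the `Account` record stands in for whatever your server software actually reports, and the set of well-known names (for example, `sa` for SQL Server and `root` for many database and Unix-like systems) is only a starting point to extend for your own products.

```python
from dataclasses import dataclass

# Example well-known administrator names; extend this for the products you run.
DEFAULT_ADMIN_NAMES = {"admin", "administrator", "root", "sa"}


@dataclass
class Account:
    """Hypothetical account record exported from a server application."""
    name: str
    has_password: bool
    enabled: bool = True


def audit(accounts) -> list:
    """Flag enabled accounts that keep a well-known name or have a blank password."""
    findings = []
    for acct in accounts:
        if not acct.enabled:
            continue  # disabled accounts are already handled
        if acct.name.lower() in DEFAULT_ADMIN_NAMES:
            findings.append(f"{acct.name}: well-known default name, rename or disable it")
        if not acct.has_password:
            findings.append(f"{acct.name}: blank password")
    return findings
```

Running it on `[Account("sa", False), Account("svc_reports", True)]` flags both the default name and the blank password on `sa`, and nothing for the service account.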

One other thing to watch for in general is consolidation. Some organizations, in an attempt to save money, will merge several back office systems onto one computer. While this is good for the budget and uses fewer resources, it opens the floodgates for attack. The more services a server has running, the more open doorways exist to that system, and the more ways the server can fail. The most important services should be compartmentalized physically or virtually in order to reduce the size of the attack surface and lower the total number of threats to an individual system. We’ll discuss servers more in Chapter 6, “Network Design Elements.”

Organizations use many applications specific to their type of business. Stay on top of the various vendors that supply your organization with updates and new versions of software. Always test what effects one new piece of software will have on the rest of your installed software before implementation.

So far in this chapter we have discussed browser software, server-based applications, and other apps such as Microsoft Office. But that just scratches the surface. Whatever the application you use, attempt to secure it by planning how it will be utilized and deployed, updating it, and configuring it. Access the manufacturer’s website for more information on how to secure the specific applications you use. Just remember that users (and other administrators) still need to work with the program. Don’t make it so secure that a user gets locked out!

Secure Programming

We have mentioned several times that many applications are inherently insecure out-of-the-box. But this problem can be limited with the implementation of secure programming or secure coding concepts. Secure coding concepts can be defined as the best practices used during software development in an effort to increase the security of the application being built; they harden the code of the application. In this section we cover several types of secure coding concepts used by programmers, some of the vulnerabilities programmers should watch out for, and how to defend against them.

Software Development Life Cycle

To properly develop a secure application, the developer has to scrutinize it at every turn, and from every angle, throughout the life of the project. Over time, this idea manifested itself into the concept known as the software development life cycle (SDLC)—an organized process of planning, developing, testing, deploying, and maintaining systems and applications, and the various methodologies used to do so. A common SDLC model used by companies is the waterfall model. Using this model the SDLC is broken down into several sequential phases. Here’s an example of an SDLC’s phases based on the waterfall model:

1. Planning and analysis. Goals are determined, needs are assessed, and high-level planning is accomplished.

2. Software/systems design. The design of the system or application is defined and diagrammed in detail.

3. Implementation. The code for the project is written.

4. Testing. The system or application is checked thoroughly in a testing environment.

5. Integration. If multiple systems are involved, the application should be tested in conjunction with those systems.

6. Deployment. The system or application is put into production and is now available to end users.

7. Maintenance. Software is monitored and updated throughout the rest of its life cycle. If there are many versions and configurations, version control is implemented to keep everything organized.

This is a basic example of the phases in an SDLC methodology. This one builds on the original waterfall model and remains quite similar to it. However, it could be more or less complicated depending on the number of systems, the project goals, and the organization involved.

Note

The SDLC is also referred to as: software development process, systems development lifecycle, or application development life cycle. CompTIA uses the term software development life cycle in its Security+ objectives, but be ready for slightly different terms based on the same concept.

While variations of the waterfall model are commonplace, an organization might opt to use a different model, such as the V-shaped model, which stresses more testing, or rapid application development (RAD), which puts more emphasis on process and less emphasis on planning. Then there is the agile model, which breaks work into small increments and is designed to be more adaptive to change. The agile model has become more and more popular since 2001. It focuses on customer satisfaction, cooperation between developers and business people, face-to-face conversation, simplicity, and quick adjustments to change.

The increasing popularity of the agile model led to DevOps, and subsequently, the practice of secure DevOps. DevOps is a portmanteau of the terms software development and information technology operations. It emphasizes the collaboration of those two departments so that the coding, testing, and releasing of software can happen more efficiently—and hopefully, more securely. DevOps is similar to continuous delivery—which focuses on automation and quick execution—but from an organizational standpoint is broader and supports greater collaboration. A secure DevOps environment should include the following concepts: secure provisioning and deprovisioning of software, services, and infrastructure; security automation; continuous integration; baselining; infrastructure as code; and immutable systems. Immutable means unchanging over time. From a systems and infrastructure viewpoint it means that software and services are replaced instead of being changed. Rapid, efficient deployment of new applications is at the core of DevOps and it is one of the main tasks of all groups involved.

Core SDLC and DevOps Principles

The terms and models discussed in the previous section can be confusing at times because they are similar, and because there are gray areas between them that overlap. So, if you are ever confused while working in the field, it’s good to think back to the core fundamentals of security. From a larger perspective, a programmer/systems developer and the security administrator should always keep the CIA concept in mind:

Maintaining confidentiality: Only allowing users access to data to which they have permission

Preserving integrity: Ensuring data is not tampered with or altered

Protecting availability: Ensuring systems and data are accessible to authorized users when necessary

The CIA concepts are important when doing a secure code review, which can be defined as an in-depth code inspection procedure. It is often included by organizations as part of the testing phase of the SDLC but is usually conducted before other tests such as fuzzing or penetration tests, which we discuss more later in this chapter.

In general, quality assurance policies and procedures should be implemented while developing and testing code. This will vary from one organization to the next, but generally includes procedures that have been developed by a team, a set of checks and balances, and a large amount of documentation. By checking the documentation within a project, a developer can save a lot of time, while keeping the project more secure.

From a larger perspective, an organization might implement modeling as part of its software quality assurance program. By modeling, or simulating, a system or application, it can be tested, verified, validated, and finally accredited as acceptable for a specific purpose.

One secure and structured approach that organizations take is called threat modeling. Threat modeling enables you to prioritize threats to an application, based on their potential impact. This modeling process includes identifying assets to the system or application, uncovering vulnerabilities, identifying threats, documenting threats, and rating those threats according to their potential impact. The more risk, the higher the rating. Threat modeling is often incorporated into the SDLC during the design, testing, and deployment phases.
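The rating step can be made concrete with a minimal Python sketch that prioritizes a threat list. Several details are assumptions for illustration only: the 1-to-5 impact and likelihood scales, the impact-times-likelihood formula, and the sample threats. Real threat-modeling methodologies define their own scoring.

```python
from dataclasses import dataclass


@dataclass
class Threat:
    name: str
    impact: int      # assumed scale: 1 (minor) to 5 (severe)
    likelihood: int  # assumed scale: 1 (rare) to 5 (frequent)

    @property
    def risk(self) -> int:
        # Assumed rating formula: more impact or likelihood means more risk.
        return self.impact * self.likelihood


def prioritize(threats: list[Threat]) -> list[Threat]:
    """Return the threats ordered from highest to lowest risk rating."""
    return sorted(threats, key=lambda t: t.risk, reverse=True)


threats = [
    Threat("SQL injection in login form", impact=5, likelihood=4),
    Threat("Verbose error messages", impact=2, likelihood=5),
    Threat("Default admin password", impact=5, likelihood=3),
]
for t in prioritize(threats):
    print(f"{t.risk:>2}  {t.name}")
```

The documented, sorted list then tells the team where to spend design and testing effort first.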

Some other very important security principles that should be incorporated into the SDLC include

Principle of least privilege: Applications should be coded and run in such a way as to maintain the principle of least privilege. Users should only have access to what they need. Processes should run with only the bare minimum access needed to complete their functions. This principle can also be coupled with separation of privilege, where access to objects depends on more than one condition (for example, authentication plus an encrypted key).

Principle of defense in depth: The more security controls the better. The layering of defense in secure coding may take the form of validation, encryption, auditing, special authentication techniques, and so on.

Applications should never trust user input: Input should be validated carefully.

Minimize the attack surface area: Every additional feature that a programmer adds to an application increases the size of the attack surface and increases risk. Unnecessary functions should be removed, and necessary functions should require authorization.

Establish secure defaults: Out-of-the-box offerings should be as secure as possible. If possible, user password complexity and password aging default policies should be configured by the programmer, not the user. Permissions should default to no access and should be granted only as they are needed.

Provide for authenticity and integrity: For example, when deploying applications and scripts, use code signing, in which a cryptographic hash of the code (a verifiable checksum) is digitally signed. The digital signature verifies the author and/or the version of the code, provided the corresponding private key is secured properly.

Fail securely: At times, applications will fail. How they fail determines their security. Failure exceptions might reveal the programming language that was used to build the application or, worse, lead to access holes. Error-handling/exception-handling code should be checked thoroughly so that a malicious user can’t learn any additional information about the system. This error-handling logic is often planned first in pseudocode, an informal, plain-language description of what the code should do. For example, pseudocode for handling a program exception might state: “If a program module crashes, then restart the program module.”

Fix security issues correctly: Once found, security vulnerabilities should be thoroughly tested, documented, and understood. Patches should be developed to fix the problem, but not cause other issues or application regression.
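The checksum half of code signing can be sketched in Python with the standard hashlib and hmac modules. This minimal sketch rests on made-up inputs: the script contents and the published digest are invented, and the sketch shows only integrity checking. Proving who the author is requires verifying a digital signature with the publisher's public key, which is outside this sketch.

```python
import hashlib
import hmac


def sha256_of(data: bytes) -> str:
    """Return the hex SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_checksum(data: bytes, expected_hex: str) -> bool:
    """Check a downloaded script against the digest its publisher posted."""
    # compare_digest takes the same time no matter where the strings differ.
    return hmac.compare_digest(sha256_of(data), expected_hex.lower())


script = b'echo "deploying build 42"\n'      # hypothetical deployment script
published = sha256_of(script)                # value the publisher would post
tampered = script.replace(b"42", b"43")      # a one-character modification

print(verify_checksum(script, published))    # True
print(verify_checksum(tampered, published))  # False
```

Even a one-character change produces a completely different digest, so the tampered copy fails the check.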

There are some other concepts to consider. First is obfuscation, which is deliberately making source code more complicated so that it is harder for people to understand. This is done to conceal the code’s purpose in order to prevent tampering and/or reverse engineering. It is an example of security through obscurity. Other examples of security through obscurity include code camouflaging and steganography, both of which might be used to hide the true code being used. Another important concept is code checking, which involves limiting the reuse of code to that which has been approved for use, and removing dead code. It’s also vital to incorporate good memory management techniques. Finally, be very careful when using third-party libraries and software development kits (SDKs), and test them thoroughly before using them within a live application.

For the Security+ exam, the most important of the SDLC phases are maintenance and testing. In the maintenance phase, which doesn’t end until the software is removed from all computers, an application needs to be updated accordingly, corrected when it fails, and constantly monitored. We discuss more about monitoring in Chapter 13, “Monitoring and Auditing.” In the testing phase, a programmer (or team of programmers and other employees) checks for bugs and errors in a variety of ways. It’s imperative that you know some of the vulnerabilities and attacks to a system or application, and how to fix them and protect against them. The best way to prevent these attacks is to test and review code.

Programming Testing Methods

Let’s discuss the testing methods and techniques that can be implemented to seek out programming vulnerabilities and help prevent attacks from happening.

Programmers have various ways to test their code, including system testing, input validation, and fuzzing. By using a combination of these testing techniques during the testing phase of the SDLC, there is a much higher probability of a secure application as the end result.

White-box and Black-box Testing

System testing is generally broken down into two categories: black-box and white-box. Black-box testing utilizes people who do not know the system. These people (or programs, if automated) test the functionality of the system. Specific knowledge of the system code, and programming knowledge in general, is not required. The tester does not know about the system’s internal structure and is often given limited information about what the application or system is supposed to do. In black-box testing, one of the most common goals is to crash the program. If a user is able to crash the program by entering improper input, the programmer has probably neglected to thoroughly check the error-handling code and/or input validation.

On the other side of the spectrum, white-box testing (also known as transparent testing) is a way of testing the internal workings of the application or system. Testers must have programming knowledge and are given detailed information about the design of the system. They are given login details, production documentation, and source code. System testers might use a combination of fuzzing (covered shortly), data flow testing, and other techniques such as stress testing, penetration testing, and sandboxes. Stress testing is usually done on real-time operating systems, mission-critical systems, and software; it checks whether they have the robustness and availability required by the organization. A penetration test is a method of evaluating a system’s security by simulating one or more attacks on that system. We speak more about penetration testing in Chapter 12, “Vulnerability and Risk Assessment.” Sandboxing means running a web script (or other code) in its own isolated environment (often a virtual environment) so that it cannot interfere with other processes, often for testing. Sandboxing technology is frequently used to test unverified applications for malware, malignant code, and possible errors such as buffer overflows.

A third category that has become more common of late is gray-box testing, where the tester has internal knowledge of the system from which to create tests but conducts the tests the way a black-box tester would—at the user level.

Compile-Time Errors Versus Runtime Errors

Programmers and developers need to test for potential compile-time errors and runtime errors. Compile time refers to the duration of time during which the statements written in any programming language are checked for errors. Compile-time errors might include syntax errors in the code and type-checking errors. A programmer can check these without actually “running” the program, and instead checks it in the compile stage when it is converted into machine code.

A runtime error is a program error that occurs while the program is running. The term is often used in contrast to other types of program errors, such as syntax errors and compile-time errors. Runtime errors might include running out of memory, invalid memory address access, invalid parameter values, or buffer overflows and null pointer dereferences (to name a few). All of these typically surface only when the program is actually executed. Another potential runtime error can occur if there is an attempt to divide by zero. These types of errors result in a software exception, and both software and hardware exceptions need to be handled properly. On Windows, for example, structured exception handling (SEH) is a mechanism used to handle both types of exceptions. It gives the programmer complete control over how exceptions are handled and provides support for debugging.
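The following Python sketch handles a divide-by-zero runtime error so that the application fails securely. The function, the logger name `app`, and the messages are hypothetical. The point is the pattern: the exception is caught, the technical details go to an internal log, and the user sees only a generic message.

```python
import logging

logger = logging.getLogger("app")  # hypothetical application logger


def average_order_value(total_cents: int, order_count: int) -> str:
    """Return a user-facing message; internal details go only to the log."""
    try:
        avg = total_cents / order_count  # ZeroDivisionError when count is 0
        return f"Average order: {avg / 100:.2f}"
    except ZeroDivisionError:
        # Full traceback for developers; nothing revealing for the user.
        logger.exception("average_order_value failed: order_count=%r", order_count)
        return "Sorry, that value is unavailable right now."


print(average_order_value(1000, 4))  # Average order: 2.50
print(average_order_value(1000, 0))  # Sorry, that value is unavailable right now.
```

A user who triggers the error learns nothing about the language, the stack, or the variable names involved.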

Code issues and errors that occur in either compile time or run time could lead to vulnerabilities in the software. However, it’s the runtime environment that we are more interested in from a security perspective, because that more often is where the attacker will attempt to exploit software and websites.

Input Validation

Input validation is very important for website design and application development. Input validation, or data validation, is a process that ensures the correct usage of data—it checks the data that is inputted by users into web forms and other similar web elements. If data is not validated correctly, it can lead to various security vulnerabilities including sensitive data exposure and the possibility of data corruption. You can validate data in many ways, from coded data checks and consistency checks to spelling and grammar checks, and so on. Whatever data is being dealt with, it should be checked to make sure it is being entered correctly and won’t create or take advantage of a security flaw. If validated properly, bad data and malformed data will be rejected. Input validation should be done both on the client side and, more importantly, on the server side. Let’s look at an example next.

If an organization has a web page with a PHP-based contact form, the data entered by the visitor should be checked for errors, or maliciously typed input. The following is a piece of PHP code contained within a common contact form:

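```php
<?php
// Hypothetical wrapper so the check runs on its own: $domain is the part of
// the e-mail address after the "@" (the original form checks the whole address).
function isValidDomain(string $domain): bool
{
    $isValid = true;
    // The D modifier makes $ match only at the very end, so a trailing newline is rejected.
    if (!preg_match('/^[A-Za-z0-9.-]+$/D', $domain))
    {
        // character not valid in domain part
        $isValid = false;
    }
    return $isValid;
}
```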

This is part of a larger piece of code that checks the entire e-mail address a user enters into a form field. This particular snippet makes sure the user is not trying to enter a backslash (or another disallowed character) in the domain name portion of the e-mail address. Such characters are not allowed there and could be detrimental if used maliciously. Note that in the preg_match() line, the square brackets contain A-Za-z0-9.-, which tells the system which characters are allowed: uppercase and lowercase letters, numbers, periods, and dashes. Other characters, such as backslashes and dollar signs, are not allowed. The form’s supporting PHP files treat them as illegitimate data and do not pass them on through the system; instead, the user receives an error. However, a check like this protects only the path through that particular form, and the more error checking a form is programmed to do, the more chances there are for additional security holes. Therefore, independent server-side validation is even more important: any data passed on by the form should be checked again at the server. In fact, an attacker might not be using the form at all, and might instead attack the URL of the web page in some other manner. This checking can be done at the server within the database software, or through other means. By the way, the concept of combining client-side and server-side validation also applies to pages that use JavaScript.

This is just one basic example, but as mentioned previously, input validation is the key to preventing attacks such as SQL injection and XSS. All form fields should be tested for good input validation code, both on the client side and the server side. By combining the two, and checking every access attempt, you develop complete mediation of requests.
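The same server-side idea can be sketched in Python. The server re-checks the character allowlist from the PHP example, then passes the data to the database with a parameterized query, so the data is treated as data rather than as SQL. The table, column, and function names are hypothetical, and the 255-character limit is an assumed policy.

```python
import re
import sqlite3

# Same allowlist as the PHP check: letters, digits, periods, and hyphens only.
DOMAIN_RE = re.compile(r"[A-Za-z0-9.-]+")


def is_valid_domain(domain: str) -> bool:
    """Server-side re-check; fullmatch() requires the whole string to match."""
    return 0 < len(domain) <= 255 and DOMAIN_RE.fullmatch(domain) is not None


def save_contact(conn: sqlite3.Connection, name: str, domain: str) -> None:
    if not is_valid_domain(domain):
        raise ValueError("invalid domain")
    # The ? placeholders keep user input as data; it is never spliced into SQL text.
    conn.execute("INSERT INTO contacts (name, domain) VALUES (?, ?)", (name, domain))


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE contacts (name TEXT, domain TEXT)")
save_contact(conn, "Robert'); DROP TABLE contacts;--", "example.com")
print(conn.execute("SELECT COUNT(*) FROM contacts").fetchone()[0])  # 1
```

The injection attempt in the name field is simply stored as an odd-looking name, and the table survives.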

Note

Using input validation is one way to prevent sensitive data exposure, which occurs when an application does not adequately protect PII. This can also be prevented by the following: making sure that inputted data is never stored or transmitted in clear text; using strong encryption and securing key generation and storage; using HTTPS for authentication; and using a salt, which is random data used to strengthen hashed passwords. Salts are covered in more detail in Chapter 14.
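Python's standard `hashlib.pbkdf2_hmac` can show salted password hashing at work: it derives a key from a password plus a random per-user salt. This is a minimal sketch. The 16-byte salt length and the iteration count are assumptions; choose values that match your organization's policy and hardware.

```python
import hashlib
import hmac
import os

ITERATIONS = 600_000  # assumed work factor; tune for your policy and hardware


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, derived_key) using PBKDF2-HMAC-SHA256 and a fresh salt."""
    salt = os.urandom(16)  # random data, unique per stored password
    key = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return salt, key


def verify_password(password: str, salt: bytes, key: bytes) -> bool:
    """Re-derive the key with the stored salt and compare in constant time."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return hmac.compare_digest(candidate, key)
```

Each call draws a fresh salt. As a result, two users with the same password end up with different stored hashes, which defeats precomputed lookup tables.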

Static and Dynamic Code Analysis

Static code analysis is a type of debugging that is carried out by examining the code without executing the program. This can be done by scrutinizing code visually, or with the aid of specific automated tools (static code analyzers) based on the language being used. Static code analysis can reveal major issues in code, some serious enough to lead to disasters if left unfixed. While this is an important phase of testing, it should always be followed by some type of dynamic analysis, in which the program is actually executed while it is being tested; fuzz testing is one example. Dynamic analysis is meant to locate minor defects and vulnerabilities that static analysis may miss. The combination of static and dynamic analysis by an organization is sometimes referred to as glass-box testing, which is another name for white-box testing.
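A toy static analyzer shows what examining code without executing it looks like. This Python sketch parses source text with the standard `ast` module and flags calls to eval() and exec(). The rule set is an assumption; real static code analyzers apply many more language-specific rules.

```python
import ast

RISKY_CALLS = {"eval", "exec"}  # assumed rule set for this sketch


def find_risky_calls(source: str) -> list[tuple[int, str]]:
    """Parse source without running it and report (line, name) for risky calls."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in RISKY_CALLS:
                findings.append((node.lineno, node.func.id))
    return sorted(findings)


sample = '''
user = input("expr: ")
result = eval(user)
print(result)
'''
print(find_risky_calls(sample))  # [(3, 'eval')]
```

The sample's input() call is never run; the analyzer only reads the syntax tree.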

Fuzz Testing

Fuzz testing (also known as fuzzing, a form of dynamic analysis) is another smart concept. This is where random data is input into a computer program in an attempt to find vulnerabilities. This is often done without knowledge of the source code of the program. The program to be tested is run, has data input to it, and is monitored for exceptions such as crashes. This can be done with applications and operating systems. It is commonly used to check file parsers within applications such as Microsoft Word, and network parsers that are used by protocols such as DHCP. Fuzz testing can uncover full system failures, memory leaks, and error-handling issues. Fuzzing is usually automated (a program that checks a program) and can be as simple as sending a random set of bits to the software as input. However, designing the inputs that cause the software to fail can be a tricky business, and often a myriad of input variations needs to be tried to find vulnerabilities. Once the fuzz test is complete, the results are analyzed, the code is reviewed and made stronger, and the vulnerabilities that were found are removed. The stronger the fuzz test, the better the chances that the program will not be susceptible to exploits.
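Here is a minimal random fuzzer in Python. The parser under test, parse_record(), is hypothetical and contains a deliberate bug: it never rejects a count of 0. The fuzzer treats ValueError as a clean rejection of malformed input and records any other exception as a crash worth reviewing. The small alphabet and the iteration count are assumptions chosen so the bug shows up quickly.

```python
import random


def parse_record(data: bytes) -> tuple[str, int]:
    """Hypothetical parser under test; expects b"name:count".

    Deliberate bug: a count of 0 is not rejected before the division.
    """
    name, count = data.split(b":")  # wrong number of fields -> ValueError
    return name.decode("ascii"), 100 // int(count)


ALPHABET = b"a:0\xff"  # assumed small alphabet so interesting inputs appear often


def fuzz(target, iterations: int = 2000, seed: int = 1) -> list[tuple[bytes, Exception]]:
    """Feed random inputs to target and collect the ones that crash it."""
    rng = random.Random(seed)
    findings = []
    for _ in range(iterations):
        data = bytes(rng.choice(ALPHABET) for _ in range(rng.randint(1, 8)))
        try:
            target(data)
        except ValueError:
            pass  # malformed input rejected cleanly: expected behavior
        except Exception as exc:  # anything else is a crash worth reviewing
            findings.append((data, exc))
    return findings


crashes = fuzz(parse_record)
print(len(crashes), "crashing inputs; first:", crashes[0] if crashes else None)
```

Seeding the random generator makes a run repeatable, so a crashing input can be reproduced and the fix retested.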

As a final word on this, once code is properly tested and approved, it should be reused whenever possible. This helps to avoid “reinventing the wheel” and avoids common mistakes that a programmer might make that others might have already fixed. Just remember, code reuse is only applicable if the code is up to date and approved for use.

Programming Vulnerabilities and Attacks

Let’s discuss some program code vulnerabilities and the attacks that exploit them. This section gives specific ways to mitigate these risks during application development, hopefully preventing these threats and attacks from becoming realities.

Note

This is a rather short section, and covers a topic that we could fill several books with. It is not a seminar on how to program, but rather a concise description of programmed attack methods, and some defenses against them. The Security+ objectives do not go into great detail concerning these methods, but CompTIA does expect you to have a broad and basic understanding.

Backdoors

To begin, applications should be analyzed for backdoors. As mentioned in Chapter 2, “Computer Systems Security Part I,” backdoors are used in computer programs to bypass normal authentication and other security mechanisms in place. These can be avoided by updating the operating system and applications and firmware on devices, and especially by carefully checking the code of the program. If the system is not updated, a malicious person could take all kinds of actions via the backdoor. For example, a software developer who works for a company could install code through a backdoor that reactivates the user account after it was disabled (for whatever reason—termination, end of consulting period, and so on). This is done through the use of a logic bomb (in addition to the backdoor) and could be deadly once the software developer has access again. To reiterate, make sure the OS is updated, and consider job rotation, where programmers check each other’s work.

Memory/Buffer Vulnerabilities

Memory and buffer vulnerabilities are common. There are several types of these, but perhaps the most important is the buffer overflow. A buffer overflow occurs when a process stores data outside the memory that the developer intended. This could cause erratic behavior in the application, especially if the memory already had other data in it. Stacks and heaps are data structures that can be affected by buffer overflows. The stack is a key data structure necessary for the exchange of data between procedures. The heap contains data items whose size can be altered during execution. In languages such as C#, value types declared as local variables are typically stored on the stack, whereas reference types are stored on the heap. An ethical coder will try to keep these running efficiently. An unethical coder wanting to create a program vulnerability could, for example, omit input validation, which could allow a buffer overflow to affect heaps and stacks, which in turn could adversely affect the application or the operating system in question.

Let’s say a programmer allows for 16 bytes in a string variable. This won’t be a problem normally. However, if the programmer failed to verify that no more than 16 bytes could be copied over to the variable, that would create a vulnerability that an attacker could exploit with a buffer overflow attack. The buffer overflow can also be initiated by certain inputs. For example, corrupting the stack with no-operation (no-op, NOP, or NOOP) machine instructions, which when used in large numbers can start a NOP slide, can ultimately lead to the execution of unwanted arbitrary code, or lead to a denial-of-service (DoS) on the affected computer.

All this can be prevented by patching the system or application in question, making sure that the OS uses data execution prevention, and utilizing bounds checking, which is a programmatic method of detecting whether a particular variable is within design bounds before it is allowed to be used. It can also be prevented by using correct code, checking code carefully, and using the right programming language for the job in question (the right tool for the right job, yes?). Without getting too deep into the programming side of things, compilers can also place special values called “canaries” between a function’s local buffers and its return address on the stack. If a canary’s value has changed when the function returns, a buffer overflow is assumed and the program is halted before the corrupted data can be used.
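A Python sketch can model bounds checking for the 16-byte example. Python itself is memory safe, so this only models the check a C programmer would make before calling a copy routine such as memcpy(). The function name is hypothetical.

```python
BUFFER_SIZE = 16  # matches the 16-byte string variable in the example above


def copy_into_buffer(buffer: bytearray, data: bytes) -> None:
    """Copy data into a fixed-size buffer only if it fits (bounds checking)."""
    if len(data) > len(buffer):
        # Refuse the copy instead of writing past the end of the buffer.
        raise ValueError(f"input is {len(data)} bytes; buffer holds {len(buffer)}")
    buffer[:len(data)] = data  # same-length slice assignment keeps the size fixed


buf = bytearray(BUFFER_SIZE)
copy_into_buffer(buf, b"hello")        # fits: accepted
try:
    copy_into_buffer(buf, b"A" * 40)   # oversized, attacker-style input
except ValueError as err:
    print("rejected:", err)
```

The check happens before any byte is written, which is exactly where a missing check in C turns into an overflow.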

On a semi-related note, an integer overflow occurs when an arithmetic operation attempts to create a numeric value that is too big for the available memory space. In C and C++, unsigned integers wrap around to a small value, and signed integer overflow is undefined behavior, so the result can be an unexpected reset or other unpredictable results. The security ramification is that an integer overflow can make the program behave in unintended ways and possibly lead to a buffer overflow, for example when the wrapped value is used to size a memory allocation. This can be prevented or avoided by making overflows trigger an exception condition, or by using a model for automatically eliminating integer overflow, such as the CERT As-if Infinitely Ranged (AIR) integer model. More can be learned about this model at the following link:

http://www.cert.org/secure-coding/tools/integral-security.cfm
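The following Python sketch contrasts silent 32-bit wraparound with an addition that raises an exception instead. Python's own integers never overflow, so wrap_int32() only models what two's-complement hardware typically does. In C, signed overflow is undefined behavior and may not wrap predictably. Both function names are hypothetical.

```python
INT32_MIN, INT32_MAX = -2**31, 2**31 - 1


def wrap_int32(value: int) -> int:
    """Model silent two's-complement wraparound into the 32-bit range."""
    return (value + 2**31) % 2**32 - 2**31


def checked_add_int32(a: int, b: int) -> int:
    """Add two 32-bit values, raising an exception instead of wrapping."""
    result = a + b  # exact, because Python integers are unbounded
    if not INT32_MIN <= result <= INT32_MAX:
        raise OverflowError(f"{a} + {b} does not fit in 32 bits")
    return result


print(wrap_int32(INT32_MAX + 1))  # -2147483648
```

Making the overflow an exception condition, as the text recommends, turns a silent wrong value into an error the program must handle.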

Then there are memory leaks. A memory leak is a type of resource leak caused when a program does not release memory properly. The lack of freed-up memory can reduce the performance of a computer, especially in systems with shared memory or limited memory. A kernel-level leak can lead to serious system stability issues. The memory leak might happen on its own due to poor programming, or vulnerable code in the application might later be exploited by an attacker who sends specific packets to the system over the network. This type of error is more common in languages such as C or C++ that have no automatic garbage collection, but it can happen in any programming language. Several memory debuggers can be used to check for leaks. In addition, garbage collection libraries can be added to C, C++, or other languages that lack built-in collection, so that unused memory is reclaimed automatically.

Another potential memory-related issue deals with pointer dereferencing, for example, the null pointer dereference. Pointer dereferencing is common in programming: a pointer holds the memory address of some data (say, an integer), and dereferencing the pointer retrieves the data stored at that address. A null pointer dereference occurs when the program dereferences a pointer that it expects to be valid, but is null, which can cause the application to exit or the system to crash. Programs that contain a null pointer dereference therefore generate memory fault errors (such as segmentation faults). From a programmatic standpoint, the main way to prevent this is meticulous coding. Programmers can use special memory error analysis tools to enable error detection for a null pointer dereference. Once identified, the programmer can correct the code that may be causing the error(s). But this concept can also be used to attack systems over the network by initiating IP address-to-hostname resolutions (ones that the attacker hopes will fail), causing a null return. What this all means is that the network needs to be protected from attackers attempting this (and many other) programmatic and memory-based attacks via a network connection. We’ll discuss how to do that in Chapters 6 through 9.
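Python has no raw pointers, but None plays a similar role: calling a method on it raises an error at runtime. In this sketch, a hypothetical lookup table stands in for an IP-to-hostname resolver. The code checks for the null case before using the result instead of assuming the lookup succeeded.

```python
from typing import Optional

# Hypothetical table standing in for an IP-to-hostname resolver.
_HOSTS = {"192.0.2.10": "web01.example.com"}


def resolve(ip: str) -> Optional[str]:
    """Return the hostname, or None when resolution fails (the 'null' case)."""
    return _HOSTS.get(ip)


def banner_for(ip: str) -> str:
    host = resolve(ip)
    if host is None:  # check before use; host.upper() on None would raise
        return f"unknown host ({ip})"
    return f"connected to {host.upper()}"


print(banner_for("192.0.2.10"))    # connected to WEB01.EXAMPLE.COM
print(banner_for("198.51.100.7"))  # unknown host (198.51.100.7)
```

A lookup that an attacker deliberately makes fail now produces a harmless message instead of a crash.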

Note

For more information on null pointer dereferencing (and many other software weaknesses), see the Common Weakness Enumeration portion of MITRE: https://cwe.mitre.org/. Add that site to your favorites!

A programmer may make use of address space layout randomization (ASLR) to help prevent the exploitation of memory corruption vulnerabilities. ASLR randomly arranges the address spaces used by a program (or process), such as the locations of the stack, heap, and libraries. This can aid in protecting mobile devices (and other systems) from exploits caused by memory-management problems. While some attacks (such as side-channel attacks) can bypass ASLR and de-randomize how the address space is arranged, many systems employ some version of ASLR.

Arbitrary Code Execution/Remote Code Execution

Arbitrary code execution is when an attacker obtains control of a target computer through some sort of vulnerability, thus gaining the power to execute commands on that computer at will. Programs designed to exploit software bugs or other vulnerabilities in this way are often called arbitrary code execution exploits. These exploits inject “shellcode” that allows the attacker to run arbitrary commands on the target computer. When the attack is carried out over a network, it is known as remote code execution (RCE), and it can potentially allow the attacker to take full control of the remote computer and turn it into a zombie.

RCE commands can be sent to the target computer through a browser (for example, in a crafted URL) or by using the Netcat utility, among other methods. To defend against this, applications should be kept updated; if the application is being developed by your organization, it should be checked with fuzz testing and strong input validation (client side and server side) during the testing stage of the SDLC. If you have PHP running on a web server, it can be configured to disallow the inclusion and execution of remote files (for example, by leaving the allow_url_include directive in php.ini disabled). A web server (or other server) can also be configured to block access from specific hosts.
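Fuzz testing feeds large numbers of random or malformed inputs to a program and watches for crashes. The following minimal sketch shows the core loop; parse_port_buggy and parse_port_safe are hypothetical functions invented for this illustration, and the fuzzer treats ValueError as a deliberate rejection of bad input, so only other exception types count as crashes.

```python
import random
import string
from typing import Callable, List

def parse_port_buggy(text: str) -> int:
    """Hypothetical parser with a bug: it assumes the input is never empty."""
    if text[0] == "+":          # IndexError when text == ""
        text = text[1:]
    return int(text)            # ValueError for non-numeric input

def parse_port_safe(text: str) -> int:
    """Hypothetical parser that validates input and rejects it with ValueError."""
    if not text.isdigit():
        raise ValueError(f"not a port number: {text!r}")
    port = int(text)
    if not 0 < port < 65536:
        raise ValueError(f"port out of range: {port}")
    return port

def fuzz(target: Callable[[str], int], iterations: int = 500,
         seed: int = 1234) -> List[str]:
    """Feed random printable strings to target; return inputs that crashed it."""
    rng = random.Random(seed)   # fixed seed makes the run repeatable
    crashes = []
    for _ in range(iterations):
        length = rng.randint(0, 8)
        data = "".join(rng.choice(string.printable) for _ in range(length))
        try:
            target(data)
        except ValueError:
            pass                # expected, documented rejection
        except Exception:
            crashes.append(data)
    return crashes
```

Running fuzz(parse_port_buggy) reports the empty string as a crashing input, while fuzz(parse_port_safe) reports none. Production fuzzers are far more sophisticated (coverage-guided mutation, for example), but the principle is the same.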

Note

RCE is also very common with web browsers. All browsers have been affected at some point, though some instances are more publicized than others. To see proof of this, search the Common Vulnerabilities and Exposures (CVE) list for entries related to each web browser.

XSS and XSRF

Two web application vulnerabilities to watch out for are cross-site scripting (XSS) and cross-site request forgery (XSRF).

XSS holes are vulnerabilities that can be exploited with a type of code injection. Code injection is the exploitation of a computer programming bug or flaw by inserting and processing invalid information; it is used to change how the program executes. In an XSS attack, an attacker inserts malicious scripts into a web page in the hope of gaining elevated privileges and access to session cookies and other information stored by a user’s web browser. This code (often JavaScript) is usually injected from a separate “attack site.” It can also manifest itself as an embedded JavaScript image tag, as header manipulation (that is, manipulated HTTP response headers), or as another HTML-embedded image object within web-based e-mail. Programmers can defeat XSS attacks through output encoding (JavaScript escaping, CSS escaping, and URL encoding), by preventing the use of HTML tags, and through input validation: for example, checking forms and confirming that input from users does not contain HTML markup. On the user side, the chances of this attack succeeding can be reduced by increasing cookie security and by disabling scripts in the ways mentioned in the first section of this chapter, “Securing the Browser.” If XSS attacks by e-mail are a concern, the user could set the e-mail client to display text only.
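Output encoding means converting user-supplied text into a form the browser displays literally instead of executing. A minimal sketch using Python’s standard library follows: html.escape for text placed in an HTML page and urllib.parse.quote for text placed in a URL. The function names and the /search path are hypothetical; web frameworks usually perform this encoding automatically in their templates.

```python
import html
from urllib.parse import quote

def render_comment(comment: str) -> str:
    # HTML-encode user input so <, >, &, and quotes are shown, not executed.
    return f"<p>{html.escape(comment)}</p>"

def build_search_link(term: str) -> str:
    # URL-encode user input before placing it in a query string.
    # safe="" also encodes "/", so nothing passes through unencoded.
    return f"/search?q={quote(term, safe='')}"

print(render_comment("<script>alert('x')</script>"))
# <p>&lt;script&gt;alert(&#x27;x&#x27;)&lt;/script&gt;</p>
```

The injected script tag reaches the browser as harmless text, which is why output encoding is effective even when input validation misses something.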

The XSS attack exploits the trust a user’s browser has in a website. The converse of this, the XSRF attack, exploits the trust that a website has in a user’s browser. In this attack (also known as a one-click attack), the user’s browser is compromised and, typically without the user’s knowledge, transmits unauthorized commands to the website. The chances of this attack can be reduced by requiring unpredictable tokens on web pages that contain forms, by using special authentication techniques (possibly encrypted), by scanning .XML files (which could contain the code required for unauthorized access), and by submitting the cookie value twice instead of once (once as a cookie and once as a request parameter) while verifying that both submissions match.
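The token defense works because a forged request from another site cannot know the secret value embedded in the legitimate form. A minimal sketch follows, assuming a server-side session stored as a plain dictionary and a hypothetical csrf_token form field; real web frameworks ship their own CSRF protection, which should be preferred.

```python
import hmac
import secrets

def issue_csrf_token(session: dict) -> str:
    """Create an unpredictable token, remember it in the session, and return
    it so it can be embedded in a hidden form field."""
    token = secrets.token_urlsafe(32)
    session["csrf_token"] = token
    return token

def is_valid_submission(session: dict, form: dict) -> bool:
    """Accept the form only if it echoes back the token stored in the session."""
    expected = session.get("csrf_token")
    submitted = form.get("csrf_token")
    if not isinstance(expected, str) or not isinstance(submitted, str):
        return False
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode(), submitted.encode())

session = {}
token = issue_csrf_token(session)
print(is_valid_submission(session, {"csrf_token": token}))   # True
print(is_valid_submission(session, {"csrf_token": "forged"}))  # False
```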

More Code Injection Examples

Other examples of code injection include SQL injection, XML injection, and LDAP injection. Let’s discuss these briefly now.

Databases are just as vulnerable as web servers. The most common kind of database is the relational database, which is administered by a relational database management system (RDBMS). These systems are usually queried and managed with the Structured Query Language (SQL). An example of a SQL database is Microsoft’s SQL Server (pronounced “sequel”); it can act as the back end for a program written in Visual Basic or Visual C++. Another example is MySQL, a free, open source relational database often used in conjunction with websites that employ PHP pages.

One concern with SQL is the SQL injection attack, which can affect databases, ASP.NET applications, and blogging software (such as WordPress) that use MySQL as a back end. In these attacks, user input in web forms is not filtered correctly and is executed improperly, with the end result of gaining access to resources or changing data. For example, the login form for a web page that uses a SQL back end (such as a WordPress login page) can be insecure, especially if the front-end application is not updated. An attacker will attempt to access the database (from a form or in a variety of other ways), query the database, find out a user, and then inject code into the password portion of the SQL code, perhaps something as simple as the always-true condition X = X. This will allow any password for the user account to be used. If the login script was written properly (and validated properly), it should deflect this injected code. But if not, or if the application being used is not updated, it could be susceptible. SQL injection can be defended against by constraining user input, filtering user input, and using stored procedures. A stored procedure is a subroutine in an RDBMS, typically implemented as a data-validation or access-control mechanism, that bundles several SQL statements into one procedure that multiple applications can call; stored procedures are also used to save memory.
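The always-true trick is easy to reproduce. The following minimal sketch uses Python’s built-in sqlite3 module with a hypothetical users table. SQLite has no stored procedures, so the safe version uses a closely related defense instead: a parameterized query, in which the database treats user input strictly as data, never as SQL code. (Passwords are stored in plain text here only to keep the sketch short; real systems store salted hashes.)

```python
import sqlite3

def make_db() -> sqlite3.Connection:
    # Hypothetical in-memory table for the demonstration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (username TEXT, password TEXT)")
    conn.execute("INSERT INTO users VALUES ('alice', 's3cret')")
    return conn

def login_unsafe(conn: sqlite3.Connection, username: str, password: str) -> bool:
    # VULNERABLE: user input is spliced directly into the SQL text.
    query = ("SELECT 1 FROM users WHERE username = '" + username +
             "' AND password = '" + password + "'")
    return conn.execute(query).fetchone() is not None

def login_safe(conn: sqlite3.Connection, username: str, password: str) -> bool:
    # The ? placeholders keep input separate from the SQL statement.
    query = "SELECT 1 FROM users WHERE username = ? AND password = ?"
    return conn.execute(query, (username, password)).fetchone() is not None

conn = make_db()
attack = "' OR 'x'='x"
print(login_unsafe(conn, "alice", attack))  # True: the injected condition is always true
print(login_safe(conn, "alice", attack))    # False: the input is just a wrong password
```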

When using relational databases such as SQL Server and MySQL, a programmer works with the concept of normalization, which is the process of organizing data to avoid or reduce data redundancies and anomalies; it is a core concept within relational databases. There are, however, other databases that work on the principle of de-normalization and don’t use SQL (or use other query mechanisms in addition to SQL). Known as NoSQL databases, they offer a different mechanism for retrieving data than their relational counterparts. These are commonly found in data warehouses and in virtual systems provided by cloud-based services. Although they are usually resistant to SQL injection, there are NoSQL injection attacks as well. Because of the type of programming used in NoSQL, the potential impact of a NoSQL injection attack can be greater than that of a SQL injection attack. One example of a NoSQL injection attack is the JavaScript Object Notation (JSON) injection attack. NoSQL databases are also vulnerable to brute-force attacks (cracking of passwords) and connection pollution (a combination of XSS and code injection techniques). Methods to protect against NoSQL injection are similar to the methods mentioned for SQL injection. However, because NoSQL databases are often used within cloud services, a company’s security administrator might not have much control over the level of security that is implemented. In these cases, careful scrutiny of the service-level agreement (SLA) between the company and the cloud provider is imperative.
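In a MongoDB-style document database, a JSON login body such as {"username": "alice", "password": {"$ne": ""}} can turn the password check into “password is not empty,” which matches almost any account. A minimal input-validation sketch follows, assuming a JSON login body with hypothetical username and password fields; it rejects any value that is not a plain string before the value ever reaches a query.

```python
import json

def parse_login(body: str) -> dict:
    """Parse a JSON login body, accepting only plain string credentials."""
    data = json.loads(body)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    if not isinstance(data, dict):
        raise ValueError("login body must be a JSON object")
    creds = {}
    for field in ("username", "password"):
        value = data.get(field)
        # An object such as {"$ne": ""} would be interpreted as a query
        # operator by a MongoDB-style back end, so only strings are allowed.
        if not isinstance(value, str):
            raise ValueError(f"{field} must be a string")
        creds[field] = value
    return creds
```

This is the same “constrain user input” idea used against SQL injection, applied to the data types a NoSQL query accepts.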

LDAP injection is similar to SQL injection: the attacker again uses a web form input box to gain access, or exploits weak LDAP lookup configurations. The Lightweight Directory Access Protocol (LDAP) is a protocol used to access and maintain a directory of information such as user accounts or other types of objects. The best way to protect against this (and all code injection techniques, for that matter) is to incorporate strong input validation.
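In an LDAP search filter, the characters *, (, ), and \ (plus the NUL character) have special meaning, so input such as *)(uid=* can rewrite the filter. RFC 4515 defines a backslash-hex escape for each of them. A minimal sketch of escaping a value before building a filter follows; the uid attribute and the function names are assumptions for illustration, and mature LDAP client libraries provide their own escaping helpers.

```python
# RFC 4515 escapes for characters with special meaning in LDAP search filters.
_LDAP_FILTER_ESCAPES = {
    "\\": r"\5c",
    "*": r"\2a",
    "(": r"\28",
    ")": r"\29",
    "\0": r"\00",
}

def escape_ldap_filter_value(value: str) -> str:
    # Escape character by character so an escape is never escaped twice.
    return "".join(_LDAP_FILTER_ESCAPES.get(ch, ch) for ch in value)

def user_filter(username: str) -> str:
    # Hypothetical filter matching a single user by uid.
    return f"(uid={escape_ldap_filter_value(username)})"

print(user_filter("alice"))      # (uid=alice)
print(user_filter("*)(uid=*"))   # (uid=\2a\29\28uid=\2a)
```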

XML injection attacks can compromise the logic of XML (Extensible Markup Language) applications, for example, XML structures that store user account information. An attacker can use XML injection to create new users and possibly obtain administrative access. Applications can be tested for this weakness by attempting to insert XML metacharacters such as single and double quotes. XML injection can be prevented by filtering in only allowed characters (for example, A–Z only). This is an example of “default deny,” where only what you explicitly filter in is permitted; everything else is forbidden.
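The “default deny” approach can be expressed as a single allowlist pattern: input either matches it completely or is rejected. A minimal sketch follows, assuming a hypothetical rule that user names contain only the letters A–Z (either case) and are at most 32 characters long. As a second layer, the XML is built with ElementTree, which escapes any markup characters in text content.

```python
import re
import xml.etree.ElementTree as ET

# Hypothetical allowlist rule: letters only, 1 to 32 characters.
ALLOWED_NAME = re.compile(r"[A-Za-z]{1,32}")

def is_allowed_name(name: str) -> bool:
    # fullmatch: the entire string must match; anything else is denied.
    return ALLOWED_NAME.fullmatch(name) is not None

def build_user_xml(name: str) -> str:
    if not is_allowed_name(name):
        raise ValueError(f"rejected user name: {name!r}")
    user = ET.Element("user")
    ET.SubElement(user, "name").text = name
    return ET.tostring(user, encoding="unicode")

print(build_user_xml("alice"))  # <user><name>alice</name></user>
```

An injection attempt such as bob</name><role>admin</role><name> fails the allowlist check before any XML is produced.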

One thing to remember is that when attackers use code injection techniques, they are adding their own code to existing code or inserting their own code into a form. A variant of this is command injection, which doesn’t use new code; instead, an attacker executes system-level commands on a vulnerable application or OS. The attacker might enter the command (and other syntax) into an HTML form field or other web-based form to gain access to a web server’s password files.
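Command injection typically succeeds when an application passes form input to a shell, where characters such as ; and | separate commands. A minimal sketch of two defenses follows: validating the input against an allowlist, and running the program with an argument list instead of a shell. The ping feature, the hostname pattern, and the Linux/macOS-style -c flag are assumptions for illustration (Windows ping uses -n instead).

```python
import re
import subprocess
from typing import List

# Hypothetical allowlist: letters, digits, dots, and hyphens, starting and
# ending with a letter or digit (so input can't masquerade as an option).
HOSTNAME = re.compile(r"[A-Za-z0-9](?:[A-Za-z0-9.-]{0,251}[A-Za-z0-9])?")

def build_ping_command(host: str) -> List[str]:
    if not HOSTNAME.fullmatch(host):
        raise ValueError(f"rejected host: {host!r}")
    return ["ping", "-c", "1", host]

def ping(host: str) -> int:
    # A list argument without shell=True means no shell parses the input,
    # so ; | & and similar characters are never treated as command separators.
    return subprocess.run(build_ping_command(host), check=False).returncode
```

Input such as example.com; cat /etc/passwd is rejected by the allowlist, and even if a bad value slipped through, it would reach ping as a single literal argument rather than as a second command.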

Note

Though not completely related, another type of injection attack is DLL injection. This is when code is run within the address space of another process by forcing that process to load a dynamic link library (DLL). Ultimately, this can alter the program’s behavior in ways its developers did not intend. DLL injection can be uncovered through penetration testing, which we will discuss more in Chapter 12.

Once again, the best way to defend against code injection/command injection techniques in general is by implementing input validation during the development, testing, and maintenance phases of the SDLC.

Directory Traversal

Directory traversal, or the ../ (dot dot slash) attack, is a method of accessing unauthorized parent (or worse, root) directories. It is often used on Linux- or UNIX-based web servers that host PHP files, but it can also be perpetrated on Microsoft operating systems (in which case it would be ..\ or the “dot dot backslash” attack). It is designed to gain access to files such as ones that contain passwords. This can be prevented by updating the OS and by testing the code of files for vulnerabilities, for example with fuzzing. For instance, a PHP file on a Linux-based web server might have a vulnerable if or include statement, which when attacked properly could give the attacker access to higher directories and the /etc/passwd file.
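A common coding defense is to resolve the requested path to its final absolute form and confirm that it still lies inside the directory the application is meant to serve. A minimal sketch follows, using only Python’s os.path functions; safe_join is a hypothetical helper name, and resolving with realpath also defeats tricks that go through symbolic links.

```python
import os

def safe_join(base_dir: str, user_path: str) -> str:
    """Return the absolute path for user_path inside base_dir, or raise
    ValueError if the request would escape base_dir."""
    base = os.path.realpath(base_dir)
    # realpath collapses ../ sequences and resolves symbolic links.
    target = os.path.realpath(os.path.join(base, user_path))
    if os.path.commonpath([base, target]) != base:
        raise ValueError(f"path escapes base directory: {user_path!r}")
    return target
```

With a base directory of /var/www/files, a request for docs/readme.txt resolves inside the base, while ../../etc/passwd (or an absolute path such as /etc/passwd) raises ValueError instead of being opened.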

Zero Day Attack

A zero day attack is an attack executed on a vulnerability in software before that vulnerability is known to the creator of the software, and before the developer can create a patch to fix the vulnerability. It’s not a specific attack, but rather a group of attacks including viruses, Trojans, buffer overflow attacks, and so on. These attacks can cause damage even after the creator knows of the vulnerability, because it may take time to release a patch to prevent the attacks and fix the damage caused by them. The underlying vulnerabilities can sometimes be discovered beforehand through thorough analysis and fuzz testing.

Zero day attacks can be prevented by using newer operating systems that have protection mechanisms and by updating those operating systems. They can also be prevented by using multiple layers of firewalls and by using whitelisting, which only allows known good applications to run. Collectively, these preventive methods are referred to as zero day protection.
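Application whitelisting tools often identify “known good” programs by a cryptographic hash of the executable, so a modified or unknown file is blocked even if it carries a familiar name. A minimal sketch of that check follows, using SHA-256 from Python’s hashlib; the allowlist is a hypothetical set of hex digests, and real whitelisting products also consider publisher signatures and file paths.

```python
import hashlib
from pathlib import Path
from typing import Set

def sha256_of(path: Path) -> str:
    """Hash the file in chunks so large executables need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()

def is_allowed(path: Path, allowlist: Set[str]) -> bool:
    # Default deny: only files whose hash is explicitly listed may run.
    return sha256_of(path) in allowlist
```

If an attacker alters an approved program, its hash changes and is_allowed returns False, which is what lets whitelisting block malware that no signature database knows about yet.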

Table 5-2 summarizes the programming vulnerabilities/attacks we have covered in this section.

Table 5-2 Summary of Programming Vulnerabilities and Attacks

| Vulnerability | Description |
| --- | --- |
| Backdoor | Placed by programmers, knowingly or inadvertently, to bypass normal authentication and other security mechanisms in place. |
| Buffer overflow | When a process stores data outside the memory that the developer intended. |
| Remote code execution (RCE) | When an attacker obtains control of a target computer through some sort of vulnerability, gaining the power to execute commands on that remote computer. |
| Cross-site scripting (XSS) | Exploits the trust a user’s browser has in a website through code injection, often in web forms. |
| Cross-site request forgery (XSRF) | Exploits the trust that a website has in a user’s browser, which becomes compromised and transmits unauthorized commands to the website. |
| Code injection | When user input in database web forms is not filtered correctly and is executed improperly. SQL injection is a very common example. |
| Directory traversal | A method of accessing unauthorized parent (or worse, root) directories. |
| Zero day | A group of attacks executed on vulnerabilities in software before those vulnerabilities are known to the creator. |

The CompTIA Security+ exam objectives don’t expect you to be a programmer, but they do expect you to have a basic knowledge of programming languages and methodologies so that you can help to secure applications effectively. I recommend a basic knowledge of programming languages used to build applications, such as Visual Basic, C++, C#, Java, and Python, as well as web-based programming languages such as HTML, ASP, and PHP, plus knowledge of database programming languages such as SQL. This foundation knowledge will help you not only on the exam, but also as a security administrator when you have to act as a liaison to the programming team, or if you are actually involved in testing an application.

Chapter Review Activities

Use the features in this section to study and review the topics in this chapter.

Chapter Summary

Without applications, a computer doesn’t do much for the user. Unfortunately, applications are often the most vulnerable part of a system. The fact that there are so many of them and that they come from so many sources can make it difficult to implement effective application security. This chapter gave a foundation of methods you can use to protect your programs.

The key with most organizations is to limit the number of applications that are used. The fewer applications, the easier they are to secure and, most likely, the more productive users will be. We mentioned the whitelisting and blacklisting of applications in this chapter and in Chapter 4, and those methods can be very effective. However, there are some applications that users cannot do without. One example is the web browser. Edge, Internet Explorer, Firefox, Chrome, and Safari are very widely used. For mobile devices, Safari, Chrome, and the Android browser are common. One general rule for browsers is to be cautious about the latest version: stay on top of security updates for the version you are currently using, but always test a new version of a browser very carefully before implementing it.