Chapter 1. The Value of Real-Time Messaging

Real-time systems power many of the services we rely on today for banking, food, transportation, internet service, and communication, among others. They provide the infrastructure that makes many of our daily interactions with those services seem like magic. Apache Pulsar is one of these systems, and throughout this book, we’ll dig into what makes it unique. Before diving into the technical weeds of Pulsar, this chapter motivates building systems like it. It should provide some context on why we would take on the complexity of a real-time system with all of its moving parts.

Data In Motion

When I was eleven years old, I started a small business selling trading cards in my elementary school cafeteria. The business model was simple: I bought packs of Pokemon cards, figured out which ones were the most valuable by cross-checking internet forums, and then attempted to sell them to other kids during lunch break. What started as an exciting and profitable venture soon turned into a crowded space, with many other children entering the market and trying to make some spending money of their own. I went from taking home about $1 a day in profit to $.25, and I thought about quitting my business. I talked to my step-father about it one evening over dinner, looking for advice from someone who ran a small business too (although one much more profitable than mine). He listened intently, absorbed what I said, and took a deep breath. He explained that I needed a competitive advantage, something that would make me stand out in a crowded space with many other kids. I asked him what kind of things would give me a competitive advantage, and he chuckled. He told me I needed to figure it out for myself and, when I did, to come back and talk to him.

Figure 1-1. An example of Edgar’s ledger with the price of each card sold.

I puzzled over what I could be doing differently for weeks. Day after day I watched other children transact in the school cafeteria, and nothing came to me. One day, I talked to one of my friends named Edgar, who watched all the Pokemon card transactions more intently than I did. I asked Edgar what he was looking at, and he explained that he was keeping track of all the cards sold that day. He walked from table to table and kept a ledger (Figure 1-1) of all the transactions he witnessed. I asked Edgar if I could look through his notebook. I turned the pages and saw weeks’ worth of Pokemon card transactions. As I looked through his notebook, it dawned on me that I could use the data he collected to augment my selling strategy. I could use the information he gathered to figure out where there was an unmet demand for cards! I told Edgar to meet me after class to talk about the next steps and a business partnership.

When school was out, Edgar and I met and came up with a game plan. I pulled out all of my cards, and we went through and painstakingly created an inventory sheet. I cross-referenced my inventory with the sales Edgar collected in his notebook. With this information, I felt confident we could be competitive with the other kids and undercut them where it made sense. With our new inventory and pricing model, Edgar and I spent the next three weeks selling more cards and making plenty of money. Our daily profit slowly rose from $.25 a day up to around $.50 a day. We were still not at the $1 I started at, and now I had to share revenue with Edgar, so we were working much harder and making less money. We decided something had to give, and there had to be another way to make this process easier.

When Edgar and I talked about the limitations of our business, one aspect of it stuck out. There were only two of us and five tables where kids sold Pokemon cards. Frequently, we would begin our selling at the wrong table. The cards either Edgar or I had were not the cards other kids at the table wanted to buy. We would miss out on the market opportunity for the day, and often for a week or more while waiting for new customers. We needed a way to be at all five tables at once. Furthermore, we needed a way to communicate with each other in real time across tables. Edgar and I schemed for a few days and came up with the plan depicted in Figure 1-2.

Figure 1-2. A diagram of our card selling scheme. At each table, one member of our company had a walkie-talkie and we communicated the prices of transactions to each other over the walkie-talkie.

We recruited three other students who were trying to break into the Pokemon card selling market. We split the cards we wanted to sell evenly between the five of us, and each of us went to one of the five cafeteria tables to sell the cards in hand. Each of us also had a notebook and a walkie-talkie. When we overheard other kids selling their cards, we would report the sale to the other four in the group. We all kept the same ledger of prices, and if someone in our group had the card of interest, the person at the table would offer it at a lower price. With this strategy, we could always undercut the competition, and all five of us had a picture of the Pokemon card market for that day. With the new company strategy, our Pokemon card profits went from $.50 a day to $2.50 per day. Our teachers eventually shut down the business, and I haven’t sold Pokemon cards since.

This story illustrates the value of data in motion. Before we began collecting and broadcasting the Pokemon card transactions, the data had little value. It did not have a material impact on our ability to sell cards. Our walkie-talkie and ledger system was a simple real-time system. It enabled us, in real time, to communicate bids and asks across the entire market. Armed with that information, we could make informed decisions about our inventory and sell more cards than we ever had before. While our real-time system only enriched me by a few quarters a day, the system’s principles enable rich experiences throughout modern life.
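The walkie-talkie scheme is, in effect, a small publish/subscribe system: one observed sale is broadcast once, and every member of the crew updates an identical ledger. The sketch below is only an in-memory illustration of that idea, not how Pulsar works internally. The class names, the card name, and the five-cent undercut are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Seller:
    """One member of the crew, stationed at a single cafeteria table."""
    name: str
    inventory: set
    ledger: dict = field(default_factory=dict)  # card -> last observed price in cents

    def on_sale(self, card, price_cents):
        # Every seller records every broadcast sale, so all ledgers stay identical.
        self.ledger[card] = price_cents

    def offer_for(self, card, undercut_cents=5):
        # Offer below the last observed price, but only if we hold the card
        # and have actually heard a price for it. The undercut is hypothetical.
        if card in self.inventory and card in self.ledger:
            return max(self.ledger[card] - undercut_cents, 0)
        return None


class WalkieTalkieChannel:
    """Hypothetical in-memory stand-in for the shared radio channel."""

    def __init__(self):
        self.subscribers = []

    def subscribe(self, seller):
        self.subscribers.append(seller)

    def publish(self, card, price_cents):
        # One observation is delivered to every subscriber.
        for seller in self.subscribers:
            seller.on_sale(card, price_cents)


channel = WalkieTalkieChannel()
me = Seller("me", {"Charizard"})      # illustrative card names
edgar = Seller("Edgar", {"Pikachu"})
channel.subscribe(me)
channel.subscribe(edgar)

channel.publish("Charizard", 100)     # someone overhears a $1.00 sale
print(me.offer_for("Charizard"))      # 95
print(edgar.offer_for("Charizard"))   # None (Edgar does not hold the card)
```

The point of the design is that the observation is collected once and fanned out to everyone, which is the same property the next section calls resource efficiency.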

Resource Efficiency

In my trading card business, one of our company’s significant advantages was the ability to collect data once and share it with everyone in the company. That ability enabled us to take advantage of sales at the cafeteria table. In other words, it gave each person at the table a global outlook. This global outlook decentralized the information about sales, and it created redundancy in our network. Commonly, if one member of the crew was writing and missed an update, they could ask everyone else in the system what their current state of affairs was, and they would be able to update their outlook.

While my trading card business was small and inconsequential in the larger scheme of things, resource efficiency can be a boon for companies of any size. When you consider the modern enterprise, many events happen that have downstream consequences. Consider the simple meetings that every company has. Creating a calendar meeting requires scheduling time on multiple people’s calendars, booking a room, setting up video conferencing software, and often ordering refreshments. With tools like Google Calendar, we can schedule an appointment with thousands of people and coordinate it all with a few clicks and a short form (Figure 1-3). Once that event is created, emails are sent, calendars are tentatively booked, pizza is ordered, and rooms are reserved.

Figure 1-3. With event-driven architectures, complex tasks like scheduling meeting invites across multiple participants are made much easier.

Without the platforms to manage and choreograph the calendar invite, administrative overhead can grow like a tumor. Administrators would have to make phone calls, collect RSVPs, and put a sticky note on the door of a conference room. Real-time systems provide value in other systems we use every day, from Customer Relationship Management (CRM) to payroll systems.

Interesting Applications

Resource efficiency is one reason to utilize a messaging system, but the user experience may ultimately be a more compelling one. The software systems we rely on to manage our finances, run our businesses, and safeguard our health all benefit from user experience enhancements. These enhancements can come in several forms, but the most notable are (1) improving the design to make interfaces more comfortable to navigate and (2) doing more on behalf of the user. Exploring the second of these, programs that act on the user’s behalf quickly and accurately can take an everyday experience and turn it into something magical. Consider a bank account that saves money on your behalf when there is a credit in your account. Upon a debit clearing in your account, the program uses the balance and other context about your account to deposit money in your savings account. Over time you save money without ever feeling the pain of saving. Messaging systems are the backbone of systems like this one. This section explores a few examples in more detail and shows how a messaging platform enables their rich user experiences.

Banking

Banks provide the capital that powers much of our economy. To buy a home or car and, in many cases, start a business, you will likely need to borrow money from a bank. If I were to be kind, I would best describe the process of borrowing money from a financial institution as excruciating. In many cases, borrowing money requires printing out your bank statements so the bank can get an understanding of your monthly expenses. They may also use these printouts to verify your income and tax returns. In many cases, you may provide bank statements, pay stubs, tax returns, and other documents to pre-qualify for a loan and then provide the same copies two months later to get an actual loan. While this sounds painful and superfluous in an era of technology, the bank has good reason to be as thorough and intrusive as possible.

For a bank, lending you six figures’ worth of money comes at considerable risk. By performing extensive checks on your bank statements and other documents, they reduce the risk of approving you for a loan. Banks also face significant regulation, and lending without due diligence on your credit could cost them their license. To modernize this credit approval system, we need to think about the problem through a slightly different lens.

Figure 1-4. An example diagram of communicating credit card usage and risk to many downstream consumers.

When a customer prequalifies for a loan, the bank agrees to lend up to a certain dollar amount, contingent on the applicant having the same creditworthiness when they are ready to act on the loan. One product approach to this problem works as follows: after a customer prequalifies, the software system connected to the user’s financial institution sends notifications to the lending company for predetermined events. The lender is notified in real time if the potential borrower does anything that would jeopardize the loan closing. Based on the borrower’s behavior, the lender can update in real time how much the borrower can borrow and keep a clear picture of the probability of a successful close. This end-to-end flow is depicted in Figure 1-4. After the initial data collection from prequalification, a real-time pipeline of transactions and credit usage is sent to the lender, so there are no surprises.

This product is superior in many ways to the traditional process of completing a full application both at preapproval and at the time of the loan. It reduces the friction of closing the loan (where the lender makes money) and puts the borrower in control. For the financial institution providing the real-time data, it’s just a matter of routing data it already collects for another purpose to the lender. The efficiency gained and the value provided by this approach are clear.
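The routing step in this flow can be pictured as a filter over the financial institution’s existing event stream: only the predetermined event kinds are forwarded to the lender. The following is a minimal sketch under that reading. The event kinds, field names, and the `route_to_lender` function are hypothetical and do not come from any real banking API.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class AccountEvent:
    borrower_id: str
    kind: str      # hypothetical kinds, e.g. "purchase" or "new_credit_line"
    amount: float


# Hypothetical set of events the lender asked to be told about at prequalification.
WATCHED_KINDS = frozenset({"new_credit_line", "missed_payment"})


def route_to_lender(events: Iterable[AccountEvent],
                    notify: Callable[[AccountEvent], None],
                    watched_kinds=WATCHED_KINDS) -> int:
    """Forward only the predetermined event kinds; return how many were sent."""
    sent = 0
    for event in events:
        if event.kind in watched_kinds:
            notify(event)
            sent += 1
    return sent


lender_inbox = []
stream = [
    AccountEvent("b-1", "purchase", 42.0),
    AccountEvent("b-1", "new_credit_line", 5000.0),
]
route_to_lender(stream, lender_inbox.append)
print([e.kind for e in lender_inbox])  # ['new_credit_line']
```

Because the institution already produces these events for its own purposes, the lender’s feed is just one more consumer of the same stream.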

Medical

Hospital systems, medical staff, and medical software are under heightened scrutiny. This scrutiny often takes the form of compliance and authorization checks by affiliated government entities. When something goes wrong in the medical field, it can be much more devastating than losing money, as in our banking example. Mistakes in the medical field can lead to permanent injury or death for a patient, loss of license for a medical provider, or litigation for a hospital system. Because of this high level of scrutiny, visiting a doctor’s office for even routine work can feel slow and inefficient at best and blood-curdlingly frustrating at worst. There are many forms to fill out, there is a lot of waiting, and you are often asked the same questions multiple times by different people. Not only does this create inefficiency and frustration, but it also makes a doctor’s visit expensive.

How would a real-time system help the hospital? Some hospital systems in Utah are trying to tackle this problem. Of all patient complaints, the most common was that patients felt frustrated at giving their medical history more than once per visit. When a patient arrived for a visit, they would fill out a form with their health history. When they saw a medical assistant, they were asked many of the same questions they had answered on the entry form. When they finally saw the doctor, they were asked the same questions again. The health history provides a reasonable basis for the doctors to work from, and it can prevent common misdiagnoses and keep the provider from prescribing a drug the patient is allergic to. However, collecting health history repeatedly comes at the cost of clinic time, which translates into a poor patient experience and extra workload for the staff.

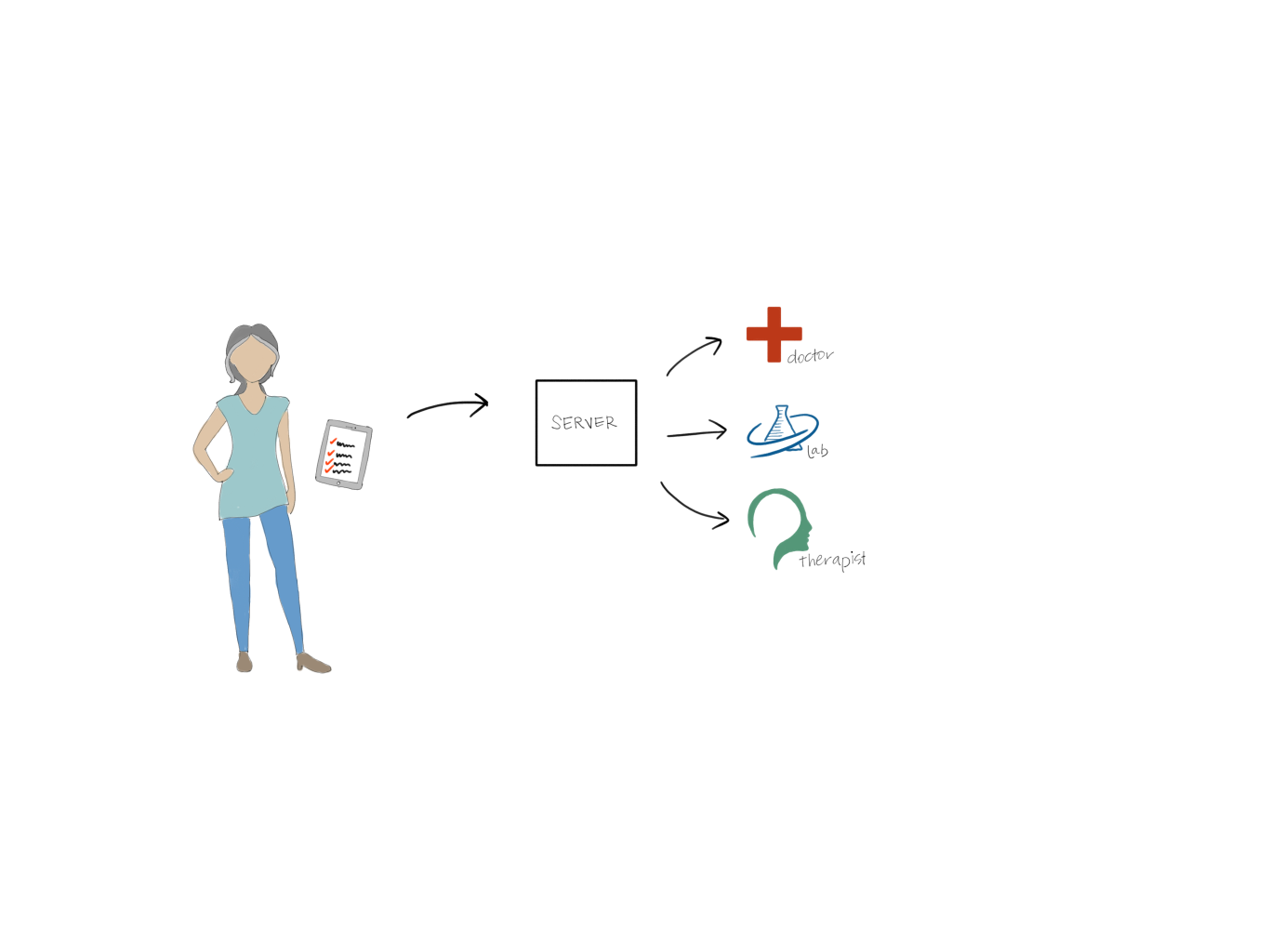

The software engineering department and health providers worked together to reimagine what a health history system would look like. They focused on one question: what if the information arrived just in time for the providers? The hospital re-engineered its patient record system, making it event-driven and real-time. When a patient arrived at the hospital, they entered their information on a tablet (Figure 1-5). Once complete, the data was saved to the system. Three minutes before the scheduled appointment time, the doctor would receive a notification to log on and check the patient’s information. The doctor would use those three minutes to end the previous appointment and start reading through the next patient’s chart. When the doctor arrived in the patient’s room, they knew everything they needed to start a conversation with the patient about their care.

Figure 1-5. How the health system automatically publishes patient information to the necessary parties, providing a better user experience.

After the visit, if the patient needed blood tests or other tests, the doctor would press a button, and the test order would be sent over to the lab. Similarly, when the patient checked into the lab, their information would auto-populate. While initially designed to prevent duplicate collection of medical history, the system had a far-reaching impact on the hospital system. Not only did the hospital system save money, but the patient experience improved drastically.

Security

Fraud and hacking on the internet are costly problems for governments and private companies. As more companies manage some part of their business online, the risks of being online quickly become apparent. Fighting hackers and fraudulent users is a problem without a one-size-fits-all solution. The hardest part of fighting hacking and fraud is the number of places, or vectors, in which an organization has to protect itself. Hacks can happen when an employee accidentally clicks on a phishing link or an engineer applies the wrong policy to their code. Defending against all of these attack vectors is expensive and requires specialized skills.

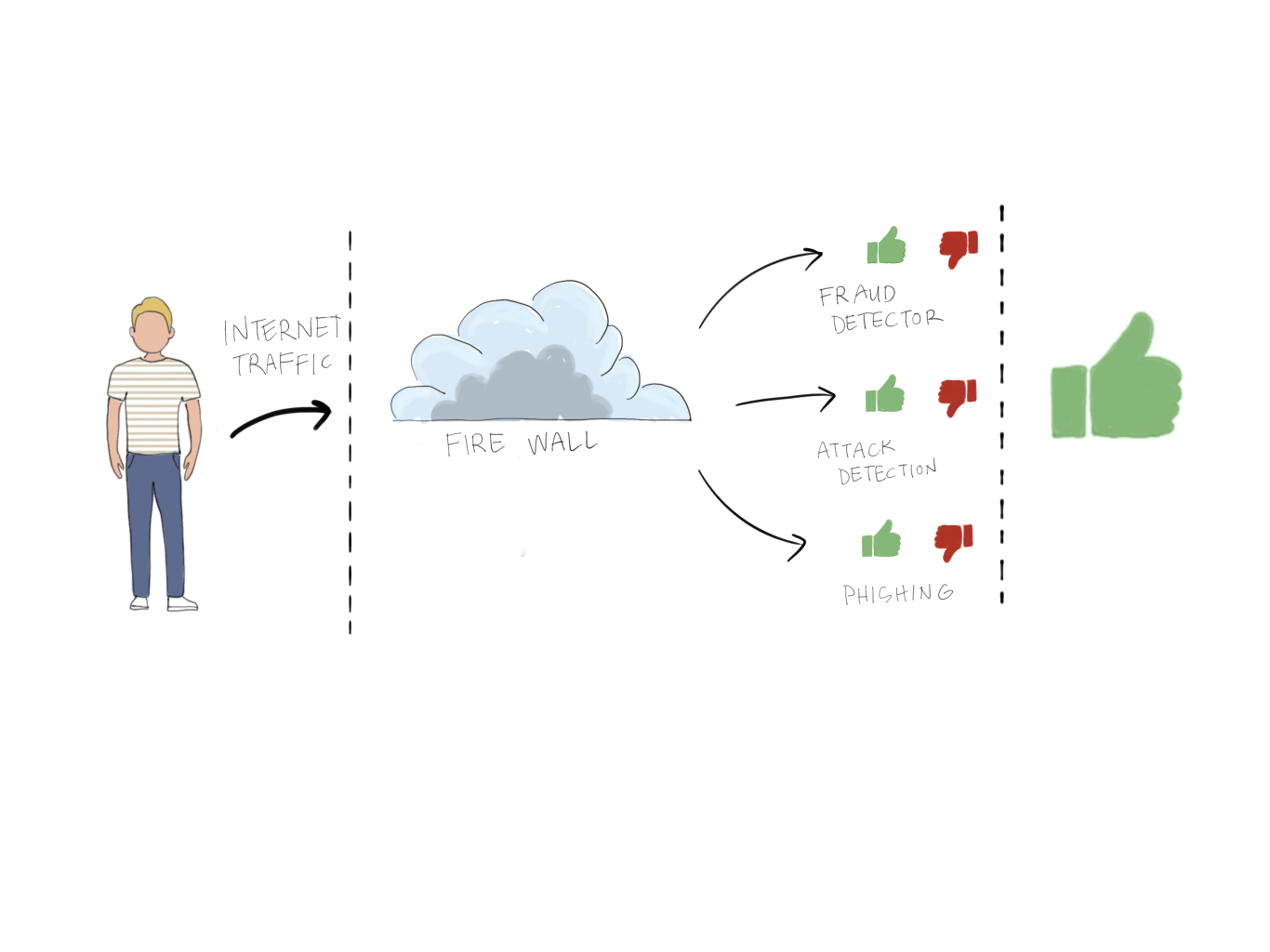

Real-time systems can reduce some of the cost and overhead of protecting an organization against fraud and hacks. Many organizations require a specialized approach to each threat vector they face. They may use one vendor for their firewall, another to protect their email, and a third to manage policies for their cloud computing. For many of these vendors, it’s not in their business interest to work well with other providers, even though many of them use the same data to detect different threats.

Some new product offerings in the market utilize the real-time nature of the data (internet traffic) and provide an all-in-one solution (Figure 1-6). These solutions treat each new connection as a threat. They use an event-driven system with machine learning, business logic, and other approaches to determine if a particular request is safe. This approach is also modular: the vendor can customize the threat protection for each customer based on their needs. This approach is superior to the alternatives in that it reuses data passing through the system and choreographs responses from multiple systems.

Figure 1-6. An example of a modern real-time fraud detection system.

Internet of Things

The use of smart speakers and other smart home appliances has been on the rise around the world. With the broader availability of internet service and the lower cost of producing internet-connected devices, many first-time smart device buyers have entered the market. In general, users of smart devices find utility in what these devices have to offer. I’ve been a smart home enthusiast for many years now, and while each camera, sensor, or speaker has utility on its own, the utility they have when working together is hard to rival. Working together takes a smart home from a collection of applications on a smartphone to a holistic system that can meet the user’s needs. Unfortunately, getting devices from a myriad of manufacturers to work together can be difficult. For the manufacturer, it may not be in their best business interest to make their product interoperable with other manufacturers’ products. There also may be sound technical reasons why a device doesn’t support a popular protocol. For a user, these decisions can be limiting and frustrating. I found these limitations too prohibitive and decided to build my own bridge between my consumer-grade smart home products.

The majority of consumer-grade smart home devices speak one of three protocols: Bluetooth, Wi-Fi, or Zigbee. Most of us are familiar with Bluetooth; it is a commonly used protocol for connecting devices ranging from computer keyboards and mice to hearing aids. Bluetooth doesn’t require any internet connectivity and is widely supported. Wi-Fi is a wireless networking protocol, most commonly used to connect devices to the internet. Zigbee is a low-energy communication protocol commonly used in smart home devices.

Suppose you had 20 smart home devices from different manufacturers. Some of them spoke Bluetooth, others Wi-Fi, and others Zigbee. If you wanted them all to work together to do something like monitoring your home, it would not easily be possible. However, if you could build a bridge that would translate Wi-Fi into Zigbee, Zigbee into Bluetooth, and so on, the possibilities would be endless. That’s the idea I worked with when designing the smart home bridge in Figure 1-7.

Figure 1-7. A diagram of my smart home system. Each device on the edge communicates with a different protocol, but they all publish their events to a centralized MQTT server, which can choreograph the events.

Each of my smart home devices was event-driven, meaning that when an event of importance to the device occurred, the device would publish that event to some centralized server. For all the smart home devices I owned, I could tap into their software and broadcast that same event to my event bridge. I used MQTT, a lightweight messaging protocol designed for Internet of Things applications. Now, whenever an event occurred in my home (like a door opening), it would be published natively to the manufacturer’s platform and also to my own. I built a small event-processing platform that would take events posted to MQTT and perform actions when predefined criteria were met. For example, if a door opened and no door-closed event arrived within 2 minutes, it would send a push notification to my wife. Or if the alarm system was armed and it detected someone was home, it would delay notifying for a few seconds.
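The door rule above can be sketched as a small piece of state kept between events. This is not the author’s actual platform, only one plausible way to express the rule. The `DoorWatcher` class, the `"open"`/`"closed"` payload values, and the use of plain numeric timestamps are assumptions. In a real deployment, `on_event` would be driven by whatever MQTT client delivers the messages, and `overdue` would be polled on a timer.

```python
from dataclasses import dataclass, field
from typing import Dict, List

OPEN_TIMEOUT_SECONDS = 120  # the 2-minute window described in the text


@dataclass
class DoorWatcher:
    """Tracks door events and reports doors left open past the timeout."""
    timeout: float = OPEN_TIMEOUT_SECONDS
    opened_at: Dict[str, float] = field(default_factory=dict)

    def on_event(self, door: str, state: str, timestamp: float) -> None:
        # "open"/"closed" are assumed payloads, not a manufacturer format.
        if state == "open":
            # Keep the earliest open time if the door is already open.
            self.opened_at.setdefault(door, timestamp)
        elif state == "closed":
            self.opened_at.pop(door, None)

    def overdue(self, now: float) -> List[str]:
        """Return doors that have been open for at least `timeout` seconds."""
        due = [d for d, t in self.opened_at.items() if now - t >= self.timeout]
        for d in due:
            # Forget the door so each open period triggers only one alert.
            del self.opened_at[d]
        return sorted(due)


watcher = DoorWatcher()
watcher.on_event("front", "open", timestamp=0)
watcher.on_event("garage", "open", timestamp=10)
watcher.on_event("garage", "closed", timestamp=50)
print(watcher.overdue(now=60))    # []
print(watcher.overdue(now=120))   # ['front']
```

Passing timestamps in explicitly, rather than reading a clock inside the class, keeps the rule easy to test and replay against recorded events.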

My smart home system provides much more utility to me now that the events are all codified in a reusable way. That is the power of real-time, and that’s also the power of event-driven architectures.

In this book, we’ll dive even deeper into the value proposition of these architectures and the limitations of adopting them. By the end of the book, I hope you can see why Apache Pulsar is an excellent choice to build an event-driven system. We’ll see how thoroughly the creators of Pulsar understood this space and why they made Pulsar. We’ll see how you can build event-driven systems that may power the next trading card marketplace, bank, or internet-connected security system.