Chapter 7. SharePoint Search

This is the only chapter in this book devoted to a single search application. The reason is the ubiquitous use of SharePoint in organizations of all sizes. These organizations can, by default, make use of the search application that is a core element of the SharePoint 2013 architecture, which probably makes it the most widely used of all search applications. However, even an IT team with experience of FAST Search Server for SharePoint 2010 may well be underprepared to take advantage of the much richer user experience in SharePoint 2013.

A Short History of SharePoint Search

The search functionality of SharePoint 2003 and SharePoint 2007 was very limited, and Microsoft realized that without a significant enhancement of search, it could not position SharePoint 2010 as an enterprise-level application suite against IBM and Oracle. In 2008, Microsoft bought the Norwegian company FAST Search and Transfer, and rushed through the development of FAST Search Server for SharePoint 2010, often referred to as FS4SP. FAST Search and Transfer had developed FAST ESP as a very powerful enterprise search application that ran on both Linux and Windows servers. Microsoft continued to support the original FAST ESP application, though it fairly quickly ceased to support the Linux version.

However, no further development was undertaken, and so from 2008 to 2011, the only version available was the 5.3 release from 2008. The FAST ESP enterprise search application continued to be offered, but full support ceased in June 2013, with some limited assistance available until 2018. Despite the limited installed base of both FAST ESP and FS4SP, FAST has achieved an almost mythical reputation for performance, and many SharePoint search managers refer to it in awe even though they have never used either product. Because of this lack of familiarity, they also significantly underestimate the technical and search support that these applications require.

Search in SharePoint 2010

Microsoft offered two search applications for SharePoint 2010: SharePoint Search 2010 and FAST Search Server for SharePoint 2010. Because of the naming convention adopted by Microsoft, the impression was created that FAST Search Server for SharePoint 2010 (FS4SP) was identical to the FAST ESP application, and many search managers and IT managers were convinced that they had the full power of FAST ESP available to them. FS4SP was available only through an Enterprise CAL contract and so came at a significant additional cost.

At the time of launch, FS4SP was positioned as a potential step toward a customer adopting FAST ESP as a broader-based search application, because it brought many of the search management features of FAST ESP into SharePoint. This created the impression that there would be an option to upgrade to FAST ESP. Many organizations were surprised and disappointed, first by Microsoft's failure to enhance FAST ESP beyond Version 5.3 and then by its announcement that full support for FAST ESP would cease in 2013.

For customers accustomed to the comparatively weak feature set of the search application in SharePoint 2007, the migration to SharePoint 2010 Search needed care but was not a major leap in terms of search administration. FS4SP was a much more challenging prospect, especially if the organization had little if any search management expertise. SharePoint 2010 Search can be implemented almost out of the box, but this is certainly not the case with FS4SP. The challenges lie not just in managing the backend servers but in developing an effective search user interface.

SharePoint 2013 Search

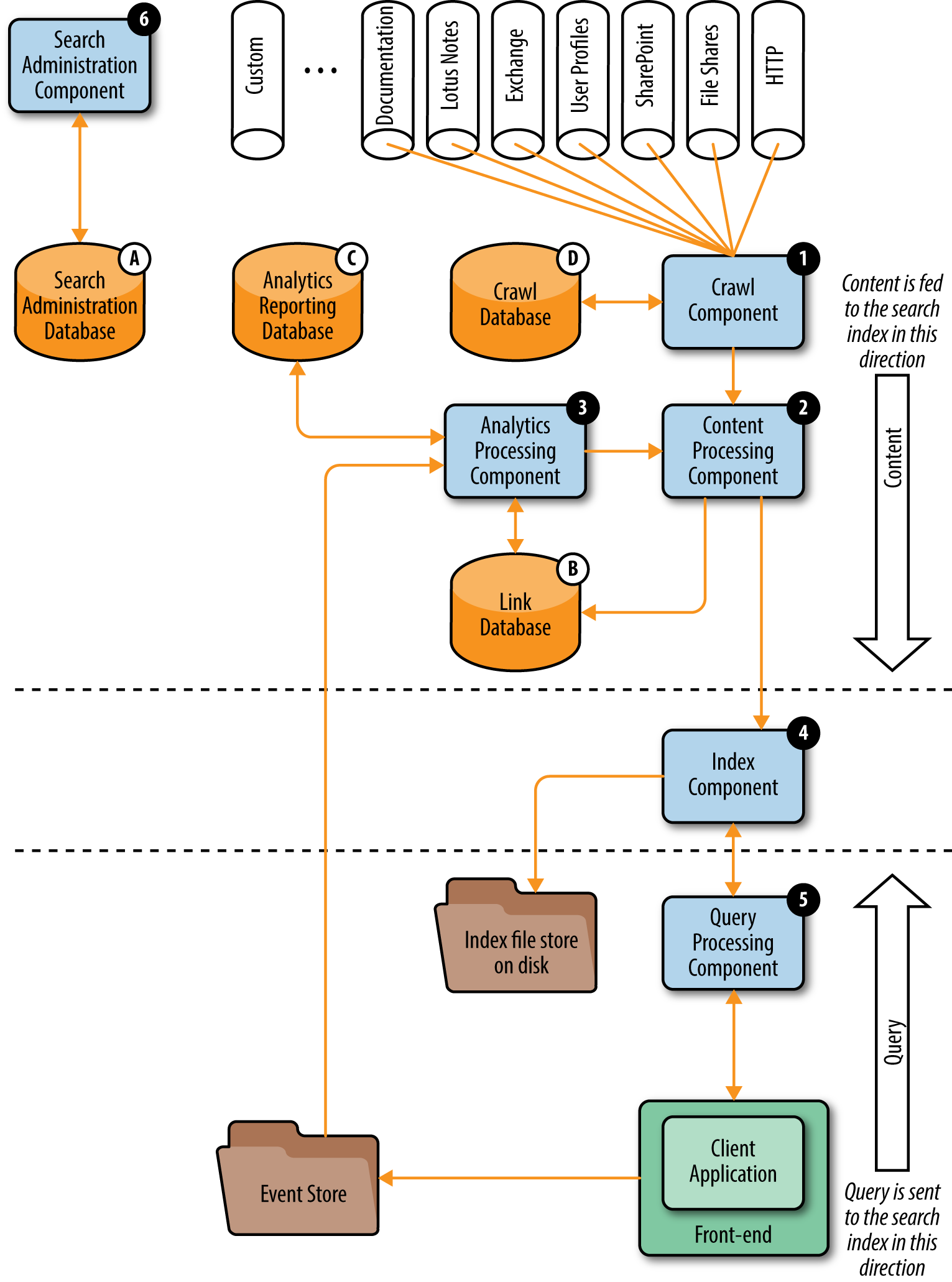

It is probably better to see SharePoint 2013 Search as a new product rather than an evolution, especially when compared to the Search Server application in SharePoint 2010. Microsoft has integrated search into all elements of the SharePoint 2013 platform rather than positioning it as one of the many elements within the overall application. Figure 7-1 is a schematic of the core modules of SharePoint 2013.

Figure 7-1. Schematic diagram of the search architecture of Microsoft SharePoint 2013

Some of the most important changes are:

- Complete integration of search within the SharePoint platform

- A simplification of the content processing pipeline

- Major changes to crawling and content processing

- The introduction of the Analytics Processing Component

- Substantial changes to the user interface

- Built to be a cloud-based application, offering hybrid search across on-premises SharePoint and Office 365

- More control at the site collection and site level

The need to support a cloud-based architecture is one reason why certain changes have been engineered into SharePoint 2013.

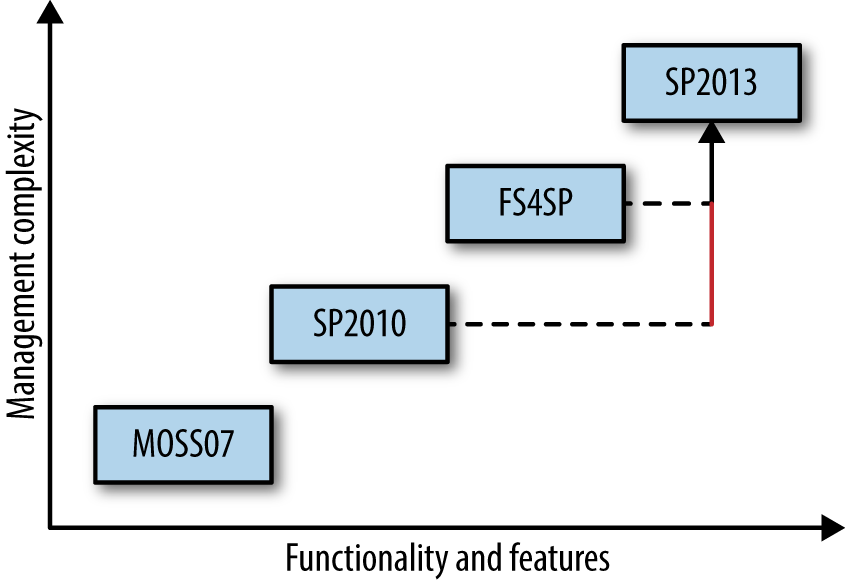

Figure 7-2 illustrates the situation that will be faced by search managers familiar with either of the two SharePoint 2010 applications.

Figure 7-2. With power comes management complexity

Arguably, the base management of SP2013 is easier than with FS4SP because so many of the options have been tied down, but with more options on the user side, the overall expertise base of the support team needs to be wider and more integrated with the business.

Particular attention needs to be paid to the migration from SharePoint 2010 Standard CAL search to SharePoint 2013 search. This requires very careful planning with both the IT team and the business to understand the implications for migration and for successful long-term search support. SharePoint users have long been familiar with the distinctive way that Microsoft uses terms such as List and Library, and the move from SharePoint 2010 to SharePoint 2013 brings further changes in terminology that need to be fully understood. This is especially the case for Best Bets, the promotion and demotion of results, synonyms, and scopes, which are now largely handled through Query Rules and, in the case of scopes, Result Sources. It is advisable not to assume that the same label achieves the same action in both versions.

Installation, Administration, and Maintenance

From a technical perspective, the initial planning of SP2013 search deployment has to start with the hardware infrastructure. All the search components are tightly integrated into SharePoint 2013, so that each search component will be on one of the servers in the SharePoint farm. Both the crawling and querying components can be scaled out to more servers to improve the performance, but this needs good planning and a very good understanding of capacity planning for search applications.

The challenge lies in keeping a balance between content volume (item count) within SharePoint 2013, the query load in Queries per Second (QPS), and the crawl load in Documents per Second (DPS). Because of the range of crawl options, the crawl load element needs careful assessment.
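A back-of-the-envelope calculation makes this balance more concrete. The sketch below assumes a hypothetical farm of 40 million items crawled at a sustained 25 DPS, with a peak load of 30 QPS and each index replica assumed to handle 12 QPS. These figures are illustrative only, not Microsoft sizing guidance; real capacity numbers have to come from testing on the actual farm.

```python
import math

SECONDS_PER_DAY = 86_400


def full_crawl_days(item_count: int, docs_per_second: float) -> float:
    """Elapsed days for a full crawl at a sustained crawl rate (DPS)."""
    return item_count / docs_per_second / SECONDS_PER_DAY


def replicas_needed(peak_qps: float, qps_per_replica: float) -> int:
    """Index replicas needed to absorb the peak query load, rounded up."""
    return math.ceil(peak_qps / qps_per_replica)


# Hypothetical figures, for illustration only
print(f"Full crawl: {full_crawl_days(40_000_000, 25):.1f} days")  # 18.5 days
print(f"Replicas for peak load: {replicas_needed(30, 12)}")       # 3
```

Even at this modest scale a full crawl runs for well over two weeks, so the crawl rate deserves as much planning attention as the query load.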

From a software perspective, installing the SharePoint 2013 Search infrastructure is relatively easy but again needs to be planned with care. It can be done either through the Central Administration UI or with repeatable scripts. Scripting needs more preparation, but it is probably the better approach.

Search administration can also be carried out either through the UI or by scripting, and scripting is becoming more important as the search platform and its environment grow more complex. On the one hand, scripts give access to many more features and options than the admin UI exposes. On the other hand, they provide a repeatable way of administering search, which is just as critical.

It is important to appreciate that search administrators can delegate many tasks to lower levels of the information architecture (site collections and sites). This reduces the load at the central administration level, but it also needs more attention and governance, because without enforced rules and policies the environment can easily turn into a “search silo.”

Crawl Management

Content freshness is one of the major measures of a search system. In SharePoint 2013, the most important improvement in content processing is a new type of crawl. Besides full and incremental crawls, there is a new “continuous crawl,” which by default runs every 15 minutes. It works on SharePoint content sources and enables changes (new or deleted items, and changes in content or metadata) to be added to the index within minutes. This new way of crawling is very agile: multiple crawl processes can run at once (improving performance and content freshness), and they can run in parallel with a full crawl on the same content source. However, a continuous crawl simply ignores and logs any errors it encounters.

Beyond content freshness, this can be very important for large-scale implementations, where it is not uncommon for a full crawl to take several weeks. In SharePoint 2010, no other crawl process could run in parallel with a full crawl, so content crawled at the beginning of a full crawl could not be refreshed until the full crawl had finished.

With continuous crawl, items that have already been indexed can be refreshed even if users modify them during a long full crawl. As a result, the index stays close to current throughout.
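The difference between the two models can be put into numbers. This sketch assumes a hypothetical two-week full crawl, a continuous crawl cycle aligned to 15-minute boundaries, and ignores processing time; the function names are illustrative only. It compares how long a change made early in the full crawl waits before it can reach the index.

```python
import math

CONTINUOUS_INTERVAL_MIN = 15  # default continuous crawl schedule, in minutes


def staleness_sp2010(modified_at: float, full_crawl_ends: float) -> float:
    """Minutes a change waits when nothing can crawl alongside the full crawl.

    This is a lower bound: a further crawl is still needed after the full crawl ends.
    """
    return max(0.0, full_crawl_ends - modified_at)


def staleness_continuous(modified_at: float,
                         interval: float = CONTINUOUS_INTERVAL_MIN) -> float:
    """Minutes until the next continuous crawl cycle picks the change up."""
    next_cycle = math.ceil(modified_at / interval) * interval
    return next_cycle - modified_at


full_crawl_minutes = 14 * 24 * 60  # hypothetical two-week full crawl
modified = 100                     # item edited 100 minutes into the full crawl
print(staleness_sp2010(modified, full_crawl_minutes))  # 20060
print(staleness_continuous(modified))                  # 5
```

Under these assumptions the edited item is stale for roughly two weeks in the SharePoint 2010 model, but for only a few minutes with continuous crawl.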

Analytics Management

A major change in SharePoint 2013 is the availability of an Analytics module. This module is not just a means of managing search logs but lies at the heart of many of the novel features of SharePoint 2013, such as the recommendation of content based on prior searches. The Analytics module also monitors which documents each user opens and assumes that a document that has been opened is of importance to that user. This and similar information is used in ranking a document in a results list. The analysis is carried out at the site collection level, which enables SharePoint 2013 to deliver highly personalized search results based on a user's role, as inferred from the site collections that the user works in.

However, the Analytics module only tracks events occurring to content that is being managed within SharePoint 2013. External content that is being indexed by SharePoint 2013 will not be tracked by the Analytics module, and this could have implications for relevance ranking.
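How a usage signal can shift a ranking is easy to illustrate. The following sketch is not SharePoint's ranking formula; it assumes a simple hypothetical blend in which a text-matching score is scaled by a log-dampened count of how often each item was opened. The weight of 0.1 and the sample results are invented for illustration.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Result:
    title: str
    text_score: float  # relevance from the text-matching model
    view_count: int    # how often users opened the item (a usage signal)


def usage_boosted_score(r: Result, weight: float = 0.1) -> float:
    # log1p dampens the boost so a popular item cannot swamp the text match;
    # an item with no recorded views keeps exactly its text score
    return r.text_score * (1 + weight * math.log1p(r.view_count))


def rank(results: List[Result]) -> List[str]:
    return [r.title for r in sorted(results, key=usage_boosted_score, reverse=True)]


results = [
    Result("Travel policy 2012", text_score=2.0, view_count=0),
    Result("Travel policy 2013", text_score=1.9, view_count=400),
    Result("Expenses FAQ", text_score=1.0, view_count=5000),
]
print(rank(results))  # ['Travel policy 2013', 'Travel policy 2012', 'Expenses FAQ']
```

In this model, content without usage events, such as external content that the Analytics module does not track, can only compete on its text score, which is the relevance risk described above.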

The Microsoft Developer Network site provides a good summary of how to develop customized ranking models for SharePoint 2013 search. The amount of applied mathematics in this post (e.g., around the BM25 rank feature model) illustrates why specialist expertise is required to support the power of SharePoint 2013 search.
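To give a flavor of that mathematics, here is a minimal sketch of classic single-field Okapi BM25. SharePoint 2013's ranking models use the field-weighted BM25F variant alongside many other rank features, so this is an illustration of the underlying idea only; the parameters k1 = 1.2 and b = 0.75 are common textbook defaults, not SharePoint's settings, and the idf form is the Lucene-style variant.

```python
import math
from collections import Counter
from typing import List


def bm25_scores(query: List[str], docs: List[List[str]],
                k1: float = 1.2, b: float = 0.75) -> List[float]:
    """Score each tokenized document against the query with Okapi BM25."""
    n = len(docs)
    avgdl = sum(len(d) for d in docs) / n
    # Document frequency: how many documents contain each term
    df = Counter(term for d in docs for term in set(d))
    scores = []
    for d in docs:
        tf = Counter(d)
        score = 0.0
        for term in query:
            if tf[term] == 0:
                continue
            # Lucene-style idf, which stays positive even for very common terms
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            # Term-frequency saturation (k1) and document-length normalization (b)
            norm = tf[term] * (k1 + 1) / (tf[term] + k1 * (1 - b + b * len(d) / avgdl))
            score += idf * norm
        scores.append(score)
    return scores


docs = [
    ["sharepoint", "search", "governance"],
    ["sharepoint", "migration", "plan", "sharepoint"],
    ["search", "relevance", "ranking", "search"],
]
print([round(s, 2) for s in bm25_scores(["search", "ranking"], docs)])
```

The third document scores highest because it matches both terms, and the rarer term "ranking" carries more weight than "search"; the second document matches neither and scores zero.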

Working with Metadata

Metadata has always been the “glue” of search solutions, and is essential for search-based applications. In SharePoint 2013, the concept of metadata management is broadly the same as in SharePoint 2010. Crawled properties are generated automatically from the metadata of the content source, while managed properties are created and controlled by search administrators. Managed properties can be mapped to the automatically created crawled properties so that they can be used in end-user scenarios. For example, a managed property can serve as a refiner (facet) displayed with the results, can be used to sort or filter the results, and can be queried directly.
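The mapping from crawled to managed properties can be modeled in a few lines. As we understand the SharePoint 2013 schema settings, a managed property can draw on several crawled properties and can be set to take content from the first non-empty one in a specified order; this sketch assumes that option. The property names (ows_Department, ows_Dept, Department) and sample items are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Optional

# Hypothetical crawled properties extracted from three documents
items: List[Dict[str, str]] = [
    {"ows_Department": "Finance"},
    {"ows_Dept": "Legal"},
    {"ows_Department": "Finance", "ows_Dept": "Fin"},
]

# Hypothetical managed property mapped to two crawled properties, in priority order
SCHEMA: Dict[str, List[str]] = {"Department": ["ows_Department", "ows_Dept"]}


def managed_value(item: Dict[str, str], crawled_names: List[str]) -> Optional[str]:
    """Return the first non-empty crawled property, following the mapping order."""
    for name in crawled_names:
        if item.get(name):
            return item[name]
    return None


def refiner_counts(docs: List[Dict[str, str]], managed_property: str) -> Counter:
    """Count values of a managed property, as a refiner (facet) would display them."""
    values = (managed_value(d, SCHEMA[managed_property]) for d in docs)
    return Counter(v for v in values if v is not None)


print(refiner_counts(items, "Department"))  # Counter({'Finance': 2, 'Legal': 1})
```

The third item shows why mapping order matters: its "Fin" value is ignored because ows_Department comes first, so the refiner shows one clean "Finance" entry rather than two variants.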

What has changed in SharePoint 2013 is the management and maintenance of the search schema. With the new delegated administration of search, managed properties can be handled not only at the global Central Administration level; site collection administrators also have the privileges to create and manage their own site collection-level managed properties. This can be very useful, as departments, projects, and other groups can have their own search metadata sets without affecting anyone else, but it still needs to be set within an overall governance approach to avoid inconsistencies in cross-site ranking.
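The layering of a site collection's own properties over the central schema can be pictured with Python's ChainMap. This is a conceptual model under stated assumptions, not how SharePoint stores its schema, and all property names are hypothetical.

```python
from collections import ChainMap

# Hypothetical managed properties defined centrally (Central Administration)
central = {"Department": "Text, refinable", "Author": "Text"}

# Hypothetical properties added by two different site collection administrators
projects_site = {"ProjectCode": "Text"}
legal_site = {"MatterNumber": "Text"}

# Each site collection sees its own properties layered over the central schema
projects_schema = ChainMap(projects_site, central)
legal_schema = ChainMap(legal_site, central)

print(sorted(projects_schema))        # ['Author', 'Department', 'ProjectCode']
print("ProjectCode" in legal_schema)  # False
```

In this model, ProjectCode exists only for the projects site collection, so anything that relies on it across site collections will silently miss content elsewhere. That is the kind of inconsistency an overall governance approach has to prevent.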

Working with Queries

Users who enter queries and use the search system will have different backgrounds, knowledge, personas, and expectations. They may also be interested in different content: salespeople look for customer-facing presentations, developers need technical documentation, and finance staff need proposals, invoices, and payment certificates. Their search “maturity level” may also differ.

Query Rules help search respond to the intent of users by pairing conditions with corresponding actions. When a query meets a rule's condition, the search system performs the actions specified in the rule to improve the relevance of the search results, for example by narrowing the results, changing the order in which results are displayed, displaying additional Result Blocks, or issuing additional queries or modifying the current one.
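The condition/action idea behind Query Rules can be sketched as follows. In SharePoint 2013, rules are defined through the administration UI rather than in code, so this only models the concept. The rule names, the promoted URL, and the use of a ContentType:Policy property restriction are hypothetical examples.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass
class QueryRule:
    name: str
    condition: Callable[[str], bool]                   # when does the rule fire?
    promoted: List[str] = field(default_factory=list)  # results pinned above the ranked list
    rewrite: Optional[Callable[[str], str]] = None     # optional change to the query text


def apply_rules(query: str, rules: List[QueryRule]) -> Tuple[str, List[str]]:
    """Apply each rule whose condition matches; return the final query and promoted results."""
    promoted: List[str] = []
    for rule in rules:
        if rule.condition(query):
            promoted.extend(rule.promoted)
            if rule.rewrite is not None:
                query = rule.rewrite(query)
    return query, promoted


# Hypothetical rules; the URL and property restriction are illustrative
rules = [
    QueryRule("Expense forms",
              condition=lambda q: "expenses" in q.lower().split(),
              promoted=["https://intranet.example.com/finance/expenses"]),
    QueryRule("Policies only",
              condition=lambda q: re.search(r"\bpolicy\b", q, re.IGNORECASE) is not None,
              rewrite=lambda q: f"{q} ContentType:Policy"),
]

print(apply_rules("travel expenses", rules))
print(apply_rules("leave policy", rules))
```

The first query gains a promoted result while keeping its text; the second is narrowed by a property restriction. A query that matches no condition passes through unchanged.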

The last query (and UI) improvement to mention is called “Content by Search.” This is a new way to provide dynamic content from the SharePoint search index, based on dynamic search queries. The query can be either entered by the user or generated automatically. The freshness of the items displayed depends on the latest crawl, which is why continuous crawl can be so critical in some scenarios, as discussed earlier.

UI Customizations

An important addition to the “look and feel” of search in SharePoint 2013 is the Hover Panel. This is a side panel that gets displayed when users hover the mouse over a search result. It displays a preview of the document (out of the box for Office documents and web pages stored in SharePoint), the outline, the most important properties, and actions to take on the item. The Hover Panel varies by the type of result.

In SharePoint 2013, customization of these UI elements is also much easier to develop and update. There is now no need to create and modify long and complex XML configurations. Instead, Display Templates control which managed properties to use, how to use and display them, and also the available actions. These templates are used in the search user interface, in the result set, and in Content-by-Search. They can be configured to display specific managed properties.
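Real Display Templates are HTML and JavaScript files, but the underlying idea can be modeled briefly in Python: each result type selects a template, and the template declares which managed properties it uses. The result types, templates, and property names below are hypothetical.

```python
from string import Template
from typing import Dict, List, Tuple

# Hypothetical result types: each maps to a template and the managed properties it uses
TEMPLATES: Dict[str, Tuple[Template, List[str]]] = {
    "Word": (Template("$Title (Word, by $Author)"), ["Title", "Author"]),
    "Person": (Template("$PreferredName - $JobTitle"), ["PreferredName", "JobTitle"]),
}
DEFAULT: Tuple[Template, List[str]] = (Template("$Title"), ["Title"])


def render(result: Dict[str, str]) -> str:
    """Pick the template for the result type and fill in only its declared properties."""
    template, props = TEMPLATES.get(result.get("ResultType", ""), DEFAULT)
    values = {p: result.get(p, "") for p in props}
    return template.substitute(values)


print(render({"ResultType": "Word", "Title": "Search strategy", "Author": "J. Smith"}))
print(render({"ResultType": "PDF", "Title": "Annual report"}))
```

The first call prints "Search strategy (Word, by J. Smith)"; the PDF result has no template of its own and falls back to the default, printing just its title. Changing what a result type displays means editing one template rather than a long XML configuration.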

On the Hover Panel, the most important aspects that can be customized are the properties to display and actions available by result type. Document previews also have some level of configuration. Finally, the way to display refiners can be also configured by Display Templates. With these options, we get an easy and powerful way to customize our search UI and are able to build up great search-based applications.

Managed Navigation

Managed navigation itself is not a search-based concept, although it can be used with search in some very elegant ways. It is a dynamic, taxonomy-based navigation that creates SEO-friendly URLs derived from the managed navigation structure. It provides an alternative to the traditional SharePoint navigation feature, and even makes it possible to create a global, farm-level navigation experience without any custom development. Moreover, this navigation experience can be combined with search: the landing page can be a search results page, with Content by Search, refiners, and more, and the query that drives the results is determined by where the user navigates. It is dynamic: as soon as a new term is added to the navigation term set, it is displayed as a new node, and users see the related items (results) immediately. This is highly intuitive and a good basis for creating a catalog-like experience or an interactive search-based application.
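The relationship between a term set, friendly URLs, and the queries behind each landing page can be sketched as a tree walk. This is a conceptual model only: the slug rules, the term labels, and the ProductCategory property used in the generated queries are all hypothetical, not SharePoint's own implementation.

```python
import re
from typing import Dict, Iterator, Tuple

TermTree = Dict[str, "TermTree"]


def slug(label: str) -> str:
    """Turn a term label into a URL segment, e.g. 'Cloud Search' -> 'cloud-search'."""
    return re.sub(r"[^a-z0-9]+", "-", label.lower()).strip("-")


def navigation_nodes(tree: TermTree,
                     parent: Tuple[str, ...] = ()) -> Iterator[Tuple[str, str]]:
    """Yield (friendly URL, query) for every term in the term set."""
    for label, children in tree.items():
        path = parent + (label,)
        url = "/" + "/".join(slug(part) for part in path)
        yield url, f'ProductCategory:"{label}"'
        yield from navigation_nodes(children, path)


terms: TermTree = {"Products": {"Search Appliances": {}, "Cloud Search": {}}}
print(dict(navigation_nodes(terms))["/products/cloud-search"])

# Adding a term immediately yields a new node with its own query
terms["Products"]["Connectors"] = {}
print("/products/connectors" in dict(navigation_nodes(terms)))  # True
```

Because the nodes are derived from the term set rather than stored separately, adding a term is enough to create a new navigation node and its search-driven landing page.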

When SharePoint 2013 is used to index either non-Microsoft content (e.g., PDFs) or content that is not being managed within SharePoint 2013 (e.g., the corporate website), it is important to test the extent to which these sources are being managed by SharePoint 2013. This testing needs to take into account the role of the Office Web Apps server, which provides the document previews in SharePoint 2013 search results. Just because a document can be previewed does not mean that the indexing and relevance rules are applied to it in the same way as to other content.

Search Governance

Effective governance is now recognized as an essential component of a SharePoint implementation. Search Server for SharePoint 2010 could operate out of the box and needed little in the way of search support. FAST Search Server for SharePoint 2010 needed careful management of the backend processing, but the options for the user interface and administration were more limited. In addition, FAST Search Server implementations were generally in large, multinational organizations which had at least some degree of internal expertise in search management.

This may well not be the case for SharePoint 2013, and the move to this version may well expose a lack of understanding within IT departments about the value and complexity of search. SharePoint 2013 needs to be implemented within a well-developed search strategy, especially as many organizations of all sizes will already be running other search applications.

For example, one must consider which types of content must be searchable and from which content sources. Decisions have to be made about versions (whether to search only the latest published version or each previous version as well), exclusions and inclusions, content freshness requirements and crawl schedule guidelines, and other factors. Out of the box, only the latest published versions can be indexed.
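Inclusion and exclusion decisions are typically implemented as crawl rules. This sketch assumes first-match-wins evaluation of wildcard patterns in a defined order, which is how SharePoint crawl rules are documented to behave as far as we are aware; the URLs and rules themselves are hypothetical.

```python
from fnmatch import fnmatchcase
from typing import List, Tuple

# Hypothetical crawl rules: (wildcard pattern, include?), evaluated in order
CRAWL_RULES: List[Tuple[str, bool]] = [
    ("http://intranet/sites/hr/private/*", False),  # exclude confidential HR content
    ("http://intranet/sites/hr/*", True),           # include the rest of the HR site
    ("http://intranet/archive/*", False),           # exclude the legacy archive
]


def should_crawl(url: str, rules: List[Tuple[str, bool]] = CRAWL_RULES,
                 default: bool = True) -> bool:
    """Return the decision of the first rule whose pattern matches the URL."""
    for pattern, include in rules:
        if fnmatchcase(url, pattern):
            return include
    return default


print(should_crawl("http://intranet/sites/hr/private/salaries.xlsx"))  # False
print(should_crawl("http://intranet/sites/hr/policies/leave.docx"))    # True
```

Rule order is itself a governance decision: if the broad HR include rule were placed above the private exclusion, the confidential content would be crawled.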

It is also very important to have policies for metadata (i.e., you need to determine what should be searchable, establish standards for displaying this metadata, and specify under what circumstances it can be used). In SharePoint 2013, the governance of metadata has an important new dimension. Because of multilevel search administration, policies will need to be developed for metadata at different levels (central administration, site collection), and we have to define what must be at which level. Enforcing these rules is the next challenge, though SharePoint 2013 does provide some tools for this. Rules are also needed to define who is responsible for each set of metadata and what actions should be taken to monitor the effectiveness of the metadata in searching.

With regard to relevance, decisions have to be made about which ranking models to use and the criteria for changing them or creating new ones.

Last, but not least, it is important to understand and make full use of the user interface. Search can be and is everywhere in SharePoint 2013, from the organization-wide Search Centers down to the single but complex Content by Search web parts. Governance is very critical to avoid having a poor search experience that takes time and effort to redress.

Search Support Team

For organizations that have invested in FS4SP, the migration to SharePoint 2013 search is in many respects not going to offer significant challenges to the search development team, but for organizations using a Standard CAL SharePoint 2010, there will be a requirement for a wider range of skills to get the best out of SharePoint 2013 search. Certainly the out-of-the-box implementation will provide some benefits, but to get the best out of the application will require investment in a search support team.

With SharePoint 2013, there is no “easy” option. Although there may not be the same requirement for developer support, the rich user interface and the range of analytics all require a skilled team of specialists on an ongoing basis. The following table (see note 1) summarizes the roles and responsibilities of a core support team for SharePoint 2013.

| | System Administration | Search Administration | Content Administration |
|---|---|---|---|
| Task | Capacity planning, installation, backup, monitoring | Crawls, property mapping, result sources, query rules | Metadata, content types, search-driven publishing |
| Working with | Central Administration, SQL, PowerShell, logs | Site collection administration, search reports | Site administration, Term Store |
| Needs to know | SharePoint architecture, performance testing, security | Information retrieval concepts, query syntax, query rules | Information architecture concepts, usability, catalogs |

In an organization of any size, the Search Administration and Content Administration roles will be full time, and the System Administration work needs to be undertaken by someone who can make it their top priority. In addition, there needs to be a Search Manager who monitors changes in business requirements and maintains a close relationship with business managers. This implies a minimum team of three or four people to support SharePoint 2013 search, and at least one further search administrator would be needed if SharePoint 2013 search is also used to index other content repositories.

The Challenges of SP2013

SharePoint 2013 is being positioned by Microsoft as an enterprise search application capable of federated search across multiple repositories out of the box. At the same time, the full benefits of the upgrades to the search technology can only be gained from managing the content totally within SharePoint 2013.

A major change in SharePoint 2013 is that there is no Pull API, which had enabled content from other applications to be selectively moved to SharePoint for indexing. To some extent, this serious omission has been overcome by the availability of continuous and incremental crawls, but this is not a complete answer. The number of connectors available from Microsoft to link SharePoint 2013 with other applications is currently quite limited. Other companies offer a wider range of connectors, but these require careful implementation and support. The presentation of search results from other repositories is also not very elegant. Most of these presentation issues can be resolved with additional configuration, giving a consistent user experience regardless of the source of the content. The exception is thumbnail document previews on the Hover Panel: unless content is managed within SharePoint 2013, thumbnail previews cannot be provided, although there are third-party apps that fill this gap.

Organizations currently using FAST Search Server for SharePoint 2010 would be well advised to check which elements of that application are not available in SharePoint 2013. In some cases, the SharePoint 2013 functionality is better, but that is not always so. With development effort, some of the weaknesses in SharePoint 2013 search can be addressed, but this might be beyond the capabilities of a company that does not have a team of experienced search developers.

Another option would be to use the range of applications from BA Insight. Over the last decade, this company has specialized in offering solutions that build on top of SharePoint. It clearly has a close working relationship with Microsoft while being an independent supplier of solutions, including a strong collection of connectors and tools for auto-classification and people search.

Migration from SharePoint 2010

The benefits and challenges of implementing SharePoint 2013 search have an important bearing on the migration routes from SharePoint 2010. Migrating from an Enterprise CAL SharePoint 2010 implementation using FS4SP will need a careful review of which FS4SP features are deprecated or no longer supported in SharePoint 2013. In particular, this will affect any highly customized search-based applications, to the extent that companies may well choose not to migrate these applications but to keep running them in SharePoint 2010. At least with an FS4SP to SharePoint 2013 migration, the in-house skills will be available to take advantage of the power of SharePoint 2013. There will, however, be changes in relevance rankings, and users may well find that content that usually appeared on the first page of results no longer does.

Migrating from a Standard CAL SharePoint 2010 implementation is not a trivial task. First of all, a team with the requisite skills needs to be allocated, or perhaps even recruited, ahead of the migration. What is emerging as a good migration path is to implement SharePoint 2013 and use it to index and search the SharePoint 2010 implementation. This will highlight areas where there seem to be changes to relevance rankings and give the development team an opportunity to learn not only the new functionality of SharePoint 2013 search but also the new terms used by Microsoft to describe many of the features.

Once this has been accomplished, the migration of the other components can be undertaken. Although there have been many changes to elements such as web content management, these are usually not visible to a user looking at a page of content. That is not the case for a user undertaking a search, who may initially have some difficulty making use of the new features of the user interface.

However, the upgrade to SP2013 search is usually accompanied by a concurrent migration of content from SP2010 to SP2013. Ideally, this opportunity should also be taken to remove redundant, obsolete, and trivial (ROT) content and introduce a more rigorous and consistent approach to metadata. For any organization, this will be a very significant project in which almost every task depends on another. There may be some opportunity to use software tools to support the operation, but almost inevitably someone will need to touch every content item. To give some indication of scale, if each content item takes a total of 10 minutes to check in, review, enrich, and migrate, a 40-hour week allows for 240 items, which works out to around 200 content items a week once a few difficult ones have been resolved. Dividing the total number of content items by 200 gives the number of person-weeks required, and the result can be quite frightening.
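The same arithmetic as a small calculator. It assumes one person working a 40-hour week, and the 50,000-item repository in the example is hypothetical; substitute the real item count to see the scale of the task.

```python
import math

MINUTES_PER_ITEM = 10  # check in, review, enrich, and migrate one item
HOURS_PER_WEEK = 40    # assumption: one person working a standard week
PRACTICAL_RATE = 200   # items per week once difficult items are allowed for


def theoretical_items_per_week(minutes_per_item: int = MINUTES_PER_ITEM) -> int:
    """Items one person could process in a week with no difficult cases."""
    return HOURS_PER_WEEK * 60 // minutes_per_item


def person_weeks(item_count: int, items_per_week: int = PRACTICAL_RATE) -> int:
    """Person-weeks needed to touch every item, rounded up to whole weeks."""
    return math.ceil(item_count / items_per_week)


print(theoretical_items_per_week())  # 240
print(person_weeks(50_000))          # 250 (hypothetical repository of 50,000 items)
```

At 250 person-weeks, even this modest hypothetical repository needs several people working for a year, before any search testing can begin.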

From a search perspective, testing the SP2013 search cannot effectively be carried out until all the content is migrated, and that can put a great deal of pressure on the search team to find and fix bugs and test out the user interfaces.

Future Directions in SharePoint Search

Microsoft has committed to releasing a further on-premises version of SharePoint in 2015, although its strategic focus is on cloud-based applications such as Office 365. In 2014, Microsoft released Office Graph as a graph database platform. Microsoft has designed it so that applications can be built on its APIs, and Delve is in effect one of these. For Microsoft, this is a new direction, and during 2015 more such applications are likely to be released. There are, of course, many benefits from adopting a cloud-based approach to enterprise applications, but there may be an impact on search performance. For large-scale applications, there could be less control over how the indexes are distributed and crawled, and there may also be limits on the number of documents that can be indexed and searched. Of greater importance may be that Microsoft cloud solutions are optimized for Microsoft applications, in the same way that SharePoint 2013 arguably makes it more difficult to manage the crawling and indexing of non-SharePoint content. Federating search across different cloud services is likely to be challenging, to say the least.

Microsoft offers three hybrid scenarios for joint SharePoint 2013 Server on-premise and SharePoint Online cloud implementations, each based on what Microsoft refers to as a hybrid topology:

- One-way outbound: SharePoint Server 2013 Search services can query the SharePoint Online search index and return federated results to SharePoint Server 2013 Search.

- One-way inbound: SharePoint Online Search services can query the SharePoint Server 2013 search index and return federated results to SharePoint Online Search.

- Two-way: Both SharePoint Server 2013 and SharePoint Online Search services can query the search index in the other environment and return federated results.

The factors that need to be taken into account in deciding which scenario to adopt include the following:

- The requirement for users to search, find, and use on-premises content and data when they are away from the office and probably using mobile devices

- The extent to which there is a need to access secure data from SharePoint Server 2013

- The implications of data privacy legislation for the storage location of data and information

- The extent to which the SharePoint Server 2013 implementation uses custom code

- Search latency arising from distributed storage and/or limited network bandwidth

- Integration and analysis of search logs

In early 2015, Microsoft announced the acquisitions of Equivio and Revolution Analytics. Equivio supports law firms with a set of tools that automatically generate relevance-based indexes from large streams of text, and Revolution Analytics is the leading commercial provider of software and services for R, a widely used programming language for statistical computing and predictive analytics. Although both are small-scale acquisitions, they indicate a commitment from Microsoft to respond to market requirements for innovative text and data analytics tools.

It will be essential to read all the Microsoft fine print over the next few years. This is not because Microsoft is hiding limitations in its applications, but because search is a very complex operation and is not something that can be bolted together at speed. This is especially the case if there is, or will be, a requirement to search across non-SharePoint applications. Connectors are difficult enough to implement in on-premises situations. Cloud applications are probably an order of magnitude more complex. Reading through the list of publicly available Microsoft Technical Research Reports will give a sense of the scale and likely direction of Microsoft in the search sector, remembering that anything really dazzling will not be on display.

Summary

Microsoft has progressively enhanced the functionality of the search application within SharePoint over the last decade. The main change from FS4SP in SharePoint 2010 to SP2013 has been the provision of a much richer user interface, but this also requires a different set of requirements to be gathered from the organization and a different set of skills within the SP2013 development and operations team. The migration from SP2010 to SP2013 search is usually undertaken within an overall migration project. Such projects are very complex to manage, and their schedules and resource requirements are difficult to forecast with any degree of certainty.

Further Reading

BA Insight publishes briefing papers on SharePoint 2013 search.

Mark Bennett, Jeff Fried, Miles Kehoe, and Natalya Voskresenskaya, Professional Microsoft Search: FAST Search, SharePoint Search and Search Server (Hoboken, NJ: 2010). This book is now out of print but provides not only a detailed account of SP2010 search but also advice on enterprise search management.

David Hobbs, Web Site Migration Handbook Version 2.

TechNet, Plan Search in SharePoint 2013 (and associated subsections).

1 Source: Jeff Fried, CTO, BA Insight at the Enterprise Search Summit, New York, May 2013.
