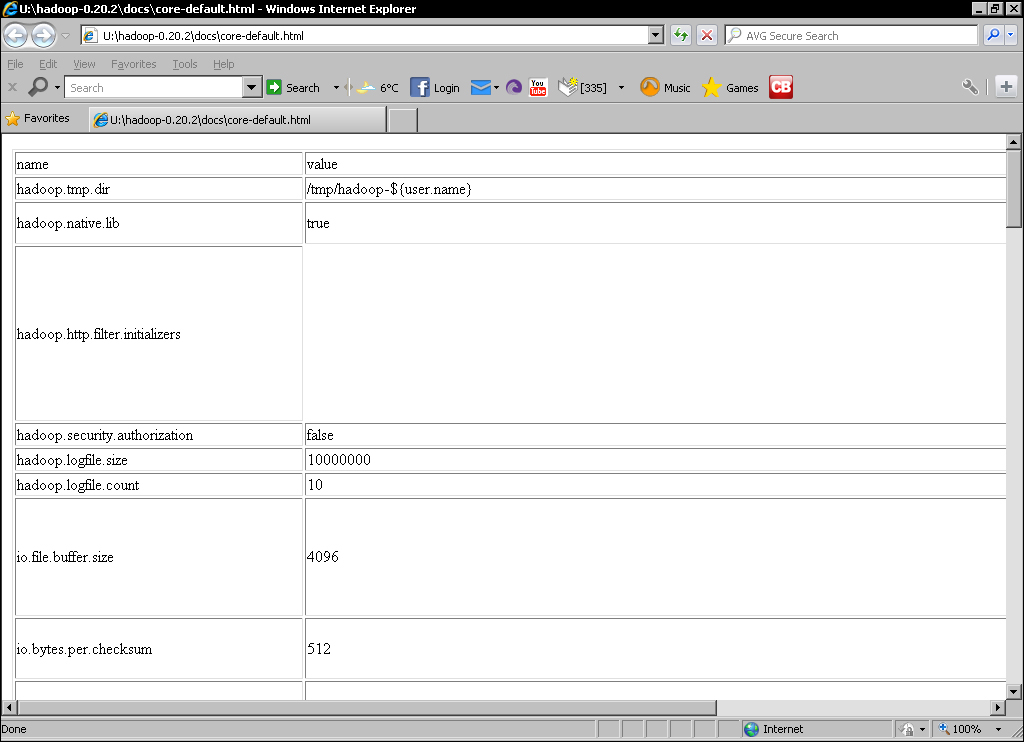

Fortunately, the XML documents are not the only way of looking at the default values; there are also more readable HTML versions, which we'll now take a quick look at.

These files are not included in the Hadoop binary-only distribution; if you are using that distribution, you can find them on the Hadoop website.

As you can see, each property has a name, default value, and a brief description. You will also see there are indeed a very large number of properties. Do not expect to understand all of these now, but do spend a little time browsing to get a flavor for the type of customization allowed by Hadoop.

When we have previously set properties in the configuration files, we have used an XML element of the following form:

```xml
<property>
  <name>the.property.name</name>
  <value>The property value</value>
</property>
```

There are two additional, optional XML elements we can add: description and final. A fully described property using these additional elements looks as follows:

```xml
<property>
  <name>the.property.name</name>
  <value>The default property value</value>
  <description>A textual description of the property</description>
  <final>Boolean</final>
</property>
```

The description element is self-explanatory; it holds the descriptive text we saw for each property in the preceding HTML files.
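To make the structure of these elements concrete, here is a small, stdlib-only Java sketch that reads property elements with the JDK's DOM parser and collects the name, the value, and the optional description and final elements. It is not how Hadoop itself loads its files: the class PropertyXmlReader and the record Property are hypothetical, and treating a missing final element as false is an assumption of this sketch.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

// Hypothetical reader for Hadoop-style <property> elements; not Hadoop's own loader.
public class PropertyXmlReader {

    /** One property element; description may be null, final defaults to false. */
    public record Property(String name, String value, String description, boolean isFinal) {}

    /** Text of the first child element with the given tag, or null if it is absent. */
    private static String childText(Element parent, String tag) {
        NodeList nodes = parent.getElementsByTagName(tag);
        return nodes.getLength() == 0 ? null : nodes.item(0).getTextContent().trim();
    }

    /** Parses every property element in a configuration document. */
    public static List<Property> parse(String xml) {
        try {
            Document doc = DocumentBuilderFactory.newInstance()
                    .newDocumentBuilder()
                    .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
            NodeList nodes = doc.getElementsByTagName("property");
            List<Property> result = new ArrayList<>();
            for (int i = 0; i < nodes.getLength(); i++) {
                Element p = (Element) nodes.item(i);
                result.add(new Property(
                        childText(p, "name"),
                        childText(p, "value"),
                        childText(p, "description"),
                        // Boolean.parseBoolean(null) is false, so a missing final element means "not final".
                        Boolean.parseBoolean(childText(p, "final"))));
            }
            return result;
        } catch (Exception e) {
            throw new IllegalArgumentException("Could not parse configuration XML", e);
        }
    }

    public static void main(String[] args) {
        String xml = """
                <configuration>
                  <property>
                    <name>hadoop.tmp.dir</name>
                    <value>/var/lib/hadoop</value>
                    <description>Root location for Hadoop files</description>
                    <final>true</final>
                  </property>
                  <property>
                    <name>the.property.name</name>
                    <value>The property value</value>
                  </property>
                </configuration>
                """;
        for (Property p : parse(xml)) {
            System.out.println(p);
        }
    }
}
```

The second property has neither a description nor a final element, so it comes back with a null description and isFinal set to false.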

The final element has a meaning similar to the final keyword in Java: a property marked final cannot be overridden by values in any other files or by other means, as we will see shortly. Use it for properties whose values you want to enforce cluster-wide, whether for performance, integrity, security, or other reasons.
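The rule can be illustrated with a simplified model, assuming that configuration resources are applied one after another and that a later resource may replace a value unless an earlier resource marked it final. LayeredConfig and its methods are hypothetical; Hadoop's Configuration class has its own loading logic, and this sketch only demonstrates the behavior described above.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

/**
 * Simplified model (not Hadoop's Configuration) of the "final" rule:
 * resources are applied in order, and once a resource marks a property
 * final, later resources can no longer change its value.
 */
public class LayeredConfig {

    /** A value from one resource, plus whether that resource marked it final. */
    public record Prop(String value, boolean isFinal) {}

    private final Map<String, String> values = new HashMap<>();
    private final Set<String> finalNames = new HashSet<>();

    /** Applies one resource on top of everything loaded so far. */
    public void addResource(Map<String, Prop> resource) {
        for (Map.Entry<String, Prop> e : resource.entrySet()) {
            String name = e.getKey();
            if (finalNames.contains(name)) {
                // An earlier resource pinned this property: keep its value.
                System.out.println("Ignoring override of final property " + name);
                continue;
            }
            values.put(name, e.getValue().value());
            if (e.getValue().isFinal()) {
                finalNames.add(name);
            }
        }
    }

    public String get(String name) {
        return values.get(name);
    }

    public static void main(String[] args) {
        LayeredConfig conf = new LayeredConfig();
        // Cluster-wide site file, loaded first: hadoop.tmp.dir is marked final.
        conf.addResource(Map.of(
                "hadoop.tmp.dir", new Prop("/var/lib/hadoop", true),
                "the.property.name", new Prop("site value", false)));
        // A later resource, for example a job's own settings, tries to change both.
        conf.addResource(Map.of(
                "hadoop.tmp.dir", new Prop("/tmp", false),
                "the.property.name", new Prop("job value", false)));
        System.out.println(conf.get("hadoop.tmp.dir"));    // /var/lib/hadoop
        System.out.println(conf.get("the.property.name")); // job value
    }
}
```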

You will see properties that control where Hadoop stores its data, both on the local disk and in HDFS. One property, hadoop.tmp.dir, is used as the basis for many others: it is the root location for all Hadoop files, and its default value is /tmp/hadoop-${user.name}, a directory under /tmp.

Unfortunately, many Linux distributions, including Ubuntu, are configured to remove the contents of /tmp on each reboot. This means that if you do not override this property, you will lose all your HDFS data the next time the host reboots. It is therefore worthwhile to set something like the following in core-site.xml:

```xml
<property>
  <name>hadoop.tmp.dir</name>
  <value>/var/lib/hadoop</value>
</property>
```
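Overriding this single property matters because other paths are built from it through ${...} references; in the Hadoop 1.x default files, for example, dfs.name.dir defaults to ${hadoop.tmp.dir}/dfs/name and dfs.data.dir to ${hadoop.tmp.dir}/dfs/data. The sketch below is a simplified, hypothetical stand-in for that substitution (VarExpansion is not part of Hadoop), showing how changing the root value moves every path derived from it.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/**
 * Simplified sketch (not Hadoop's code) of ${name} substitution in
 * configuration values. Each name is looked up as a Java system property
 * first, then among the configuration properties; unknown names are left as-is.
 */
public class VarExpansion {
    private static final Pattern VAR = Pattern.compile("\\$\\{([^}$\\s]+)\\}");
    // Cap on substitution passes so a self-referencing value cannot loop forever.
    private static final int MAX_PASSES = 20;

    public static String expand(String value, Map<String, String> props) {
        String current = value;
        for (int pass = 0; pass < MAX_PASSES; pass++) {
            Matcher m = VAR.matcher(current);
            StringBuilder out = new StringBuilder();
            boolean changed = false;
            while (m.find()) {
                String name = m.group(1);
                String replacement = System.getProperty(name);
                if (replacement == null) {
                    replacement = props.get(name);
                }
                if (replacement == null) {
                    replacement = m.group(); // unresolved: keep the literal ${name}
                } else {
                    changed = true;
                }
                m.appendReplacement(out, Matcher.quoteReplacement(replacement));
            }
            m.appendTail(out);
            current = out.toString();
            if (!changed) {
                break; // nothing left that can be resolved
            }
        }
        return current;
    }

    public static void main(String[] args) {
        Map<String, String> props = new HashMap<>();
        props.put("hadoop.tmp.dir", "/var/lib/hadoop");
        // Written the way the Hadoop 1.x default files define these two paths.
        props.put("dfs.name.dir", "${hadoop.tmp.dir}/dfs/name");
        props.put("dfs.data.dir", "${hadoop.tmp.dir}/dfs/data");
        System.out.println(expand(props.get("dfs.name.dir"), props)); // /var/lib/hadoop/dfs/name
        System.out.println(expand(props.get("dfs.data.dir"), props)); // /var/lib/hadoop/dfs/data
        // The stock default, /tmp/hadoop-${user.name}, resolves user.name from the JVM.
        System.out.println(expand("/tmp/hadoop-${user.name}", Map.of()));
    }
}
```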

Remember to ensure the location is writable by the user who will start Hadoop, and that the disk the directory is located on has enough space. As you will see later, there are a number of other properties that allow more granular control of where particular types of data are stored.
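Before pointing hadoop.tmp.dir at a new location, a quick check along these lines can confirm that the directory exists, is writable, and has enough usable space. TmpDirCheck is a hypothetical helper, not a Hadoop tool, and the 10 GiB threshold is an arbitrary example value; choose one that matches your expected data volume. Writability is checked for the user running the JVM, so run it as the user who will start Hadoop.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

/**
 * Hypothetical pre-flight check for a candidate hadoop.tmp.dir location.
 * Not a Hadoop tool; the free-space threshold below is only an example.
 */
public class TmpDirCheck {
    static final long EXAMPLE_MIN_FREE_BYTES = 10L * 1024 * 1024 * 1024; // 10 GiB

    /** Returns the problems found; an empty list means the directory looks usable. */
    public static List<String> check(Path dir, long minFreeBytes) {
        List<String> problems = new ArrayList<>();
        if (!Files.isDirectory(dir)) {
            problems.add(dir + " does not exist or is not a directory");
            return problems;
        }
        // Checked for the user running this JVM, so run it as the Hadoop user.
        if (!Files.isWritable(dir)) {
            problems.add(dir + " is not writable by " + System.getProperty("user.name"));
        }
        try {
            long usable = Files.getFileStore(dir).getUsableSpace();
            if (usable < minFreeBytes) {
                problems.add(dir + " has only " + usable / (1024 * 1024) + " MiB usable");
            }
        } catch (IOException e) {
            problems.add("could not read free space for " + dir + ": " + e.getMessage());
        }
        return problems;
    }

    public static void main(String[] args) {
        Path dir = Paths.get(args.length > 0 ? args[0] : "/var/lib/hadoop");
        List<String> problems = check(dir, EXAMPLE_MIN_FREE_BYTES);
        if (problems.isEmpty()) {
            System.out.println(dir + " looks usable as hadoop.tmp.dir");
        } else {
            problems.forEach(System.out::println);
        }
    }
}
```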

So far, we have used the configuration files to specify new values for Hadoop properties. This works, but it adds overhead if we are trying to find the best value for a property or are executing a job that requires special handling.

It is also possible to use the JobConf class to set configuration properties programmatically on the executing job. Two types of methods are supported. The first type is dedicated to setting a specific property, such as the methods we've seen for setting the job name and the input and output formats. This type also includes methods for properties such as the preferred number of map and reduce tasks for the job (setNumMapTasks() and setNumReduceTasks()).

In addition, there is a set of generic methods, such as the following:

```java
void set(String key, String value);
void setIfUnset(String key, String value);
void setBoolean(String key, boolean value);
void setInt(String key, int value);
```

These are more flexible and do not require a dedicated method for each property we wish to modify. However, they sacrifice compile-time checking: you can use an invalid property name or assign the wrong type to a property, and you will find out only at runtime, if at all.
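The trade-off shows up clearly in a small stand-in for these generic setters. GenericConf is hypothetical, not Hadoop's Configuration or JobConf: it stores every value as a string, as the generic signatures imply, and parses a value only when a typed getter reads it back. Its setNumReduceTasks() wrapper, which writes the Hadoop 1.x property name mapred.reduce.tasks, illustrates how a dedicated method can be a thin, type-safe layer over a generic one. In this sketch a misspelled key is silently accepted, and a non-numeric value surfaces only as a NumberFormatException when it is read.

```java
import java.util.HashMap;
import java.util.Map;

/**
 * Hypothetical stand-in (not Hadoop's class) for generic, string-keyed
 * setters, showing why they trade compile-time checking for flexibility.
 */
public class GenericConf {
    private final Map<String, String> props = new HashMap<>();

    public void set(String key, String value) { props.put(key, value); }

    /** Sets the value only if the key has no value yet. */
    public void setIfUnset(String key, String value) { props.putIfAbsent(key, value); }

    public void setBoolean(String key, boolean value) { set(key, Boolean.toString(value)); }

    public void setInt(String key, int value) { set(key, Integer.toString(value)); }

    /** Typed getter: the stored string is parsed only when it is read. */
    public int getInt(String key, int defaultValue) {
        String raw = props.get(key);
        return raw == null ? defaultValue : Integer.parseInt(raw.trim());
    }

    /** A "dedicated" setter: a thin, type-safe wrapper over a generic one. */
    public void setNumReduceTasks(int n) { setInt("mapred.reduce.tasks", n); }

    public static void main(String[] args) {
        GenericConf conf = new GenericConf();
        conf.setNumReduceTasks(4);
        conf.setIfUnset("mapred.reduce.tasks", "10");              // ignored: already set
        System.out.println(conf.getInt("mapred.reduce.tasks", 1)); // 4

        conf.setInt("mapred.reduse.tasks", 8);                     // misspelled key still compiles
        System.out.println(conf.getInt("mapred.reduce.tasks", 1)); // still 4

        conf.set("mapred.reduce.tasks", "eight");                  // wrong type still compiles
        try {
            conf.getInt("mapred.reduce.tasks", 1);
        } catch (NumberFormatException e) {
            System.out.println("Caught only at runtime: " + e.getMessage());
        }
    }
}
```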

Note

This ability to set property values both programmatically and in the configuration files is an important reason for being able to mark a property as final. For any property that no submitted job should be able to override, mark it as final in the master configuration files.