CHAPTER 8

Measuring Compliance and Conformance

Chapter 7 described metrics and sample security measurement projects that could be applied to specific (and often technical) security operations. This chapter shifts focus to measuring compliance with, and conformance to, the mandated ways of conducting those security operations.

These required approaches can be found in the laws, regulations, standards, contracts, service level agreements, and general best practice frameworks that are quickly crowding the security industry landscape. Some apply to specific industries or types of information, while others apply to everyone doing business in a certain way (such as publicly traded companies). And as most security managers, increasingly tasked with answering regulator and auditor questions, can tell you, their systems do not come with buttons you can push or command-line arguments you can enter that tell the system to “measure my compliance” (despite an increasing number of vendors that claim to be peddling just that function). Instead, CISOs and security directors face complexities that can often leave them scratching their heads (and in extreme cases fearing for their jobs).

The Challenges of Measuring Compliance

One very important reason compliance is so challenging to measure is that compliance is not one single thing, and this frustrates our efforts to simplify and bound the problem space. Compliance today is a fuzzy and subjective concept that involves a dynamic mix of new and changing regulations and rules, the personal interactions of organizations with auditors and regulatory agents, and the problems that accompany our lack of insight into the nature of our security operations themselves.

In most of the treatments of compliance-related security metrics I have reviewed, the metrics promoted appear to be similar to what you would expect for IT systems. They tend to be quantitative and narrowly focused on the specific controls required by particular compliance frameworks. The risk, however, is that this approach can create a “checkbox” mentality that favors simplistic but easily validated data points over exploring the actual complexities of IT security. In some cases, a myopic focus on controls causes metrics proponents to miss the real purpose of a particular compliance framework. ISO/IEC 27001 and 27002, two international standards for IT security, are good examples of this effect.

Confusion Among Related Standards

ISO/IEC 27001 and ISO/IEC 27002 are part of a family of international standards, developed jointly by the International Organization for Standardization (ISO) and the International Electrotechnical Commission (IEC), that address the security of information systems. These two standards have a long history of changes and development, with different names and designations used over the years, finally stabilizing as the 27001 and 27002 designations around 2005. The standards themselves are closely related. ISO/IEC 27001 acts as a standard for IT security: a defined set of requirements for an information security management system against which an organization can be formally certified. ISO/IEC 27002 is a framework of best practices and guidance in the form of control objectives and controls that can be used to build robust security architectures within an organization, even if that organization does not choose to become certified.

And here is where the confusion begins. In addition to the 27001 and 27002 standards, the ISO/IEC 27000 family includes a number of other standards that have been released or are being developed. Other 27000 family standards available from ISO include ISO/IEC 27004 (information security management measurements), ISO/IEC 27005 (guidelines for information security risk management), and ISO/IEC 27006 (guidance for certifying to the ISO/IEC 27001 standard). All ISO standards are available directly from ISO or other standards organizations such as the American National Standards Institute (ANSI). The standards are not free, however, as these organizations charge for licenses to use the documents.

ISO/IEC 27001 overlaps with ISO/IEC 27002 in that the control objectives and specific controls recommended in ISO/IEC 27002 are included in ISO/IEC 27001 as an annex to the standard. Because of this, many readers of ISO/IEC 27001 (including many security and compliance professionals) assume that 27001 is just a certifiable version of 27002 and that what is being certified is the presence of those controls. But the controls are written in a somewhat ambiguous way that leaves them open to the interpretation of both the organization implementing them and the auditors assessing them. Some security metrics experts have used this ambiguity to argue that ISO/IEC 27002 is a poor choice for measuring security. Their argument holds that the standard is written mainly from the perspective of the certification auditor and is too subjective to use in building metrics: over-focused on audit and under-focused on measurement, it provides no way of measuring objective success.

I disagree with this reading of ISO/IEC 27002, however, for two reasons having to do with whether and how we can measure compliance and conformance. First, the ISO/IEC 27002 standard that is critiqued as too audit-focused is not a certifiable standard. You cannot audit against it or become ISO/IEC 27002 certified, because the standard is not prescriptive: it mandates no particular controls and instead acts as a guidance document for information security. ISO/IEC 27001 is the auditable security standard in the 27000 family, and what makes it auditable is not the set of ISO/IEC 27002 controls included in its annex. ISO/IEC 27001 is a standard for a security management process, focused centrally on requirements for developing, implementing, reviewing, and improving an organization’s security management process. While the specific controls used to enforce that process are important, they are supplementary to the standard’s requirements. The requirements in ISO/IEC 27001 can be quite specific, although they may not always be expressed in numbers, and they require the implementing organization to do some of the intellectual heavy lifting needed to determine the best way to measure performance. ISO/IEC 27001 requirements include such things as documented risk assessment results, formal statements of selected controls and the justifications for those choices, and the implementation of defined written policies and procedures. You can quantify these things to an extent, but they do not always lend themselves to numerical baselines.

Auditing or Measuring?

This brings me to the second mistaken belief of quantitatively biased metrics experts. Some complain that ISO/IEC 27002 talks a lot about audit and very little about measurement. In their view, by focusing on audit, the standard places its emphasis on choosing and assessing controls rather than on monitoring and measuring them. This argument echoes Lord Kelvin and his assertion of quantitative bias (my paraphrase): “If I can’t easily turn what I am looking at into a number, then it has no real meaning.” As you may have guessed by now, I simply disagree with this narrow philosophical position. The definition of audit is “a systematic or methodical examination or review of something,” and this is awfully close to many definitions of measurement. Of course, if your definition of measurement is so narrow as to include only data or analysis that directly involves a quantity of something, you will disagree with me.

But my point here is not to rehash the numbers argument or even to debate the merits of the ISO/IEC 27000 standards or compliance auditing in general. My concern actually echoes that of others: if compliance can be this ambiguous, how are we supposed to measure (or audit) it at all? Some might say this is impossible, and that you should toss out compliance frameworks in favor of easily derived, quantitative performance indicators that can be retrofitted into what the auditors want to see in the results. I think this is a recipe for bad compliance and bad security, however, because it ignores the spirit of many of the frameworks in favor of low-level, “objective” metrics.

Granular data of this kind does not tell a story or provide context on its own; it still requires interpretation and “spin” to explain what it means. I believe that if you have to explain and interpret the data anyway, you might as well give some thought to context and meaning as part of your measurement. Interpretation and context are how we make sense of the individual observations with which we are bombarded every day, and they allow us to apply those objective data to a variety of situations.

By downplaying people’s role in measurement in favor of “just the facts,” you risk stifling intuition and creativity and turning your security metrics program into an uninteresting collection of statistics that are unlikely to move anyone to action. The same holds true for audit environments that have been reduced to nothing but present/not present control checklists. A real audit is like a detective story—the auditor attempts to understand and interpret the complexity of compliance, not just the trappings of controls. Real measurement is like science, with a researcher looking at ideas and theories beyond just what the data can immediately tell her. In both cases, we are searching for truth, which is not always reflected in the facts.

If this seems like I am getting romantic about something as boring as measuring and auditing IT security, then so be it! If your security metrics program doesn’t impart at least a little curiosity and wonder in addition to your successful audits and visibility into technical operations, then, frankly, you are not doing it right.

Confusion Across Multiple Frameworks

If it is so difficult to understand how an organization stacks up against two standards in the same family, such as ISO/IEC 27001 and 27002, consider the problems facing organizations that must adhere to multiple compliance frameworks, each of which may also change over time as it is revised. Theoretically, you can have every control included in ISO/IEC 27002 in place and functioning and still not be compliant with ISO/IEC 27001, because ISO/IEC 27001 compliance measures something other than the effectiveness of technical controls: it measures the comprehensiveness and maturity of the security management program, including people, process, and technology. To attain compliance with any standard or compliance framework, you must first understand what the framework actually requires, and this can differ in both direct and subtle ways across compliance regimes.

In my day job, I work with many clients regarding issues of IT Governance, Risk, and Compliance (IT GRC). IT GRC is a catch-all term that describes several areas of IT, risk, and security management and includes everything from regulatory compliance to assessing business risks associated with human and organizational information behaviors. Much of what IT GRC deals with is unclear and would drive a pure-quant metrics professional up the wall. But you cannot ignore the demands and drivers of IT GRC simply because you don’t think it lends itself to the kind of measurements you prefer.

Today’s security environment is increasingly subject to the influence and interference of many different stakeholders, including state and local governments (laws and regulations), industry associations (best practices and formal requirements), international bodies (standards), and even our partners and customers (contracts and satisfaction or retention). Like it or not, you need to figure out how to assess and measure these many aspects of your security, and automated, quantitative analyses of your log files or your vulnerability scanner report charts will simply not suffice in every case.

When working with my clients to help them achieve IT GRC goals, I focus on determining how to tie together the various compliance requirements they are facing into something approaching a cohesive set of needs. Today, many organizations handle compliance requirements with little or no coordination between the individual efforts to meet specific needs. The HR team may be running a Health Insurance Portability and Accountability Act (HIPAA) compliance program, for instance, while the security team is preparing for a Payment Card Industry Data Security Standard (PCI DSS) audit. These two teams may not communicate at all, and neither may be aware that the office of the CFO is busily engaged in Sarbanes-Oxley (SOX) requirements in preparation for an annual report to the board. As these projects grow and develop methods and metrics for their individual goals, they become silos within the enterprise; it becomes less and less likely that anyone involved in any particular initiative will look to collaborate with anyone outside his own team. Yet, in many cases, the controls and objectives of the frameworks they are separately implementing overlap considerably. One result of this fragmentation is tangible duplication of effort, wasting resources that could be used more effectively elsewhere. Another is that the uncoordinated initiatives can themselves create risks for the company, because compliance is not standardized across the entire organization.

Even if you want to apply straightforward, easy-to-collect metrics to your compliance problem space, you will have a hard time doing so if you don’t understand the actual boundaries and specific components of your compliance posture. To make matters more complex, not all the compliance obligations that affect security are security-specific. So to measure your compliance from a security perspective, you may not have the luxury of keeping your efforts localized to the security group; you’ll need to engage the finance, legal, and risk management organizations within the enterprise to address the actual compliance requirements. As with many problems that appear difficult to solve, your first step in measuring compliance and conformance is to measure (or assess) what compliance and conformance really mean for your environment. After that, you can get more specific about the kinds of compliance metrics you want to explore.

Vendors offer products in the IT GRC space that try to help automate the IT GRC process. These vendors’ solutions can be complex, providing enterprise-wide management of governance activities, risk-management efforts, and compliance efforts. But understanding which vendor or solution is right for your environment can be a challenge, because there is no universal agreement on exactly what constitutes a full IT GRC software solution, and some vendors focus on one area of IT GRC more than others.

The bottom line is that even expensive, well-designed software cannot provide you with a solution to a problem that you do not fully understand. And implementing large-scale automation to solve business problems carries its own risks, to which many with experience implementing enterprise resource planning (ERP) and customer relationship management (CRM) solutions over the years can attest.

Sample Measurement Projects for Compliance and Conformance

The following security measurement project (SMP) examples are an attempt to determine how to combine, or rationalize, several different compliance frameworks to reduce redundancies and duplication of effort. Later examples include projects to measure specific aspects of a few sample compliance concerns.

Creating a Rationalized Common Control Framework

Controls rationalization is a process by which multiple compliance frameworks are analyzed and equivalencies are mapped between the specific control requirements of one framework and those of another. The goal of the mapping process is to identify requirements that are the same across frameworks and then to document these relationships so that a single control can be leveraged to support multiple compliance initiatives. The control may exist in the security domain, within general IT management, or elsewhere within the organizational management structure. The point is to ensure coordination so that different groups are not implementing different controls that essentially overlap but are administered separately for each framework or compliance program.

The end result of this process is usually some form of common controls framework (CCF). A CCF is a documented conceptual map of the control requirements of an organization that identifies the equivalencies between different control frameworks. The CCF can be used as the basis for a unified enterprise compliance initiative that will meet the needs of the entire organization and eliminate the waste of silos and uncoordinated compliance activities. From a security metrics perspective, a CCF can then be used to develop the requirements-driven measurement projects for any particular control or control objective. But even before the development of the CCF, compliance-related metrics are available that can help define the success of the controls rationalization project.
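To make the idea of a CCF more concrete, here is a minimal Python sketch, assuming a CCF can be held as a simple dictionary from each common control to the framework requirements it has been documented as satisfying. The control names and requirement IDs (`H-1`, `P-1`, `S-1`, and so on) are hypothetical placeholders, not real HIPAA, PCI DSS, or SOX citations, and the helper functions are illustrative rather than part of any standard tool.

```python
from collections import defaultdict

# Hypothetical CCF: common control -> {framework: [requirement IDs it satisfies]}.
# All IDs are illustrative placeholders, not actual regulatory citations.
CCF = {
    "CC-01 Unique user IDs": {"HIPAA": ["H-1"], "PCI DSS": ["P-1"], "SOX": ["S-1"]},
    "CC-02 Encrypt stored data": {"HIPAA": ["H-2"], "PCI DSS": ["P-2"]},
    "CC-03 Change management": {"SOX": ["S-2"]},
}


def frameworks_supported(ccf, control):
    """Return the sorted names of the frameworks that one common control supports."""
    return sorted(ccf[control])


def shared_controls(ccf):
    """Return the common controls leveraged by more than one framework."""
    return [control for control, mapping in ccf.items() if len(mapping) > 1]


def reverse_index(ccf):
    """Map each (framework, requirement) pair back to the common controls covering it,
    so a team working on one framework can trace its requirements to the CCF."""
    index = defaultdict(list)
    for control, mapping in ccf.items():
        for framework, requirements in mapping.items():
            for requirement in requirements:
                index[(framework, requirement)].append(control)
    return dict(index)
```

Here `shared_controls(CCF)` returns the first two controls, which is the overlap a rationalization effort is looking for, while `CC-03` remains specific to SOX.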

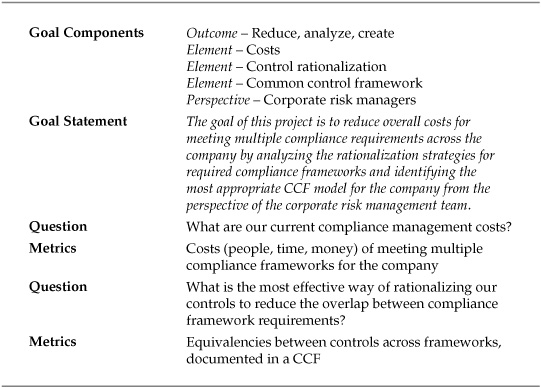

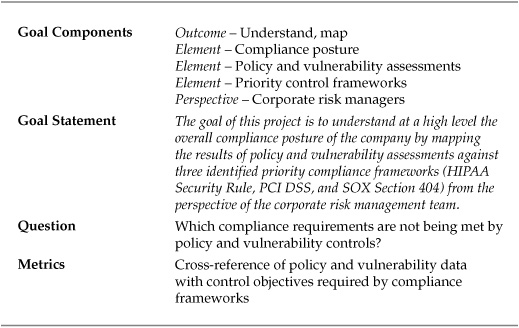

For this project, the entity conducting the study was a publicly traded hospital system. The hospital system’s risk management team was asked to review and streamline IT compliance costs, particularly from a security perspective, in the face of the current economic downturn. The general feeling was that compliance efforts were mushrooming, creating not only increased costs, but uncertainty regarding how well the hospital security program was meeting regulatory mandates. Table 8-1 shows a basic Goal-Question-Metric (GQM) template for the project.

Table 8-1. GQM Template for Rationalized CCF Project

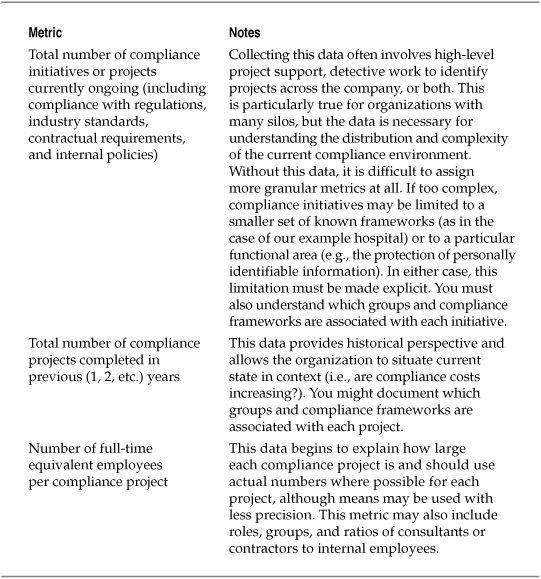
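A GQM template is essentially a small hierarchy: one goal, the questions that refine it, and the metrics that answer each question. The sketch below represents that hierarchy in Python, assuming nothing beyond the GQM structure itself. The goal text paraphrases the project goal described above; the questions and metric names are hypothetical examples, not the contents of Table 8-1.

```python
from dataclasses import dataclass, field


@dataclass
class Question:
    text: str
    metrics: list = field(default_factory=list)  # names of metrics that answer it


@dataclass
class GQMTemplate:
    goal: str
    questions: list = field(default_factory=list)

    def all_metrics(self):
        """Flatten the metrics across questions, keeping first-seen order
        and listing a metric shared by several questions only once."""
        ordered = []
        for question in self.questions:
            for metric in question.metrics:
                if metric not in ordered:
                    ordered.append(metric)
        return ordered


# Hypothetical example loosely based on the hospital project's stated goal.
template = GQMTemplate(
    goal="Review and streamline IT compliance costs from a security perspective",
    questions=[
        Question("What does the current compliance program cost?",
                 ["Staff hours per framework", "External audit fees per framework"]),
        Question("Which CCF strategy is most likely to reduce those costs?",
                 ["Estimated mapping effort per strategy", "Staff hours per framework"]),
    ],
)
```

Keeping each metric linked to the question it answers makes it easy to see when one metric serves more than one purpose, as the shared staff-hours metric does here.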

Metrics for Compliance Costs

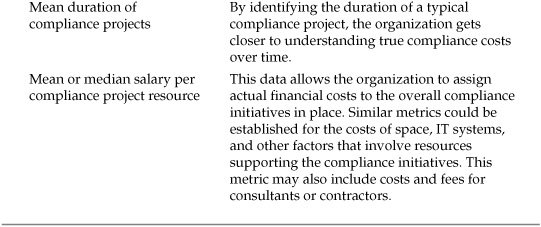

The first data that needed to be collected for this project involved the current costs of the compliance program. These metrics then provided a baseline against which to measure any increases or decreases in costs that might result from the adoption of a particular CCF. Table 8-2 lists a selection of the metrics used to develop this data. Note that these metrics have nothing to do with how well the compliance initiatives are performing, their effects on audits, or other compliance performance criteria. These are simply current state costs for the compliance efforts undertaken.

Table 8-2. Sample Metrics for Compliance Costs
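Once cost data is collected, establishing the baseline is mostly a matter of aggregation. The sketch below assumes each record is a (framework, cost category, annual amount) tuple and totals the records by either field; the categories and dollar figures are invented placeholders, not the metrics listed in Table 8-2.

```python
# Hypothetical annual cost records: (framework, cost category, amount in USD).
# Categories and figures are placeholders for illustration only.
records = [
    ("HIPAA", "staff time", 120_000),
    ("HIPAA", "external audit", 40_000),
    ("PCI DSS", "staff time", 90_000),
    ("PCI DSS", "external audit", 35_000),
    ("SOX", "staff time", 150_000),
    ("SOX", "tools", 25_000),
]

FIELD_POSITION = {"framework": 0, "category": 1}


def baseline(rows, by="framework"):
    """Total annual cost grouped by framework or by cost category."""
    position = FIELD_POSITION[by]
    totals = {}
    for row in rows:
        key, amount = row[position], row[2]
        totals[key] = totals.get(key, 0) + amount
    return totals
```

Grouping by category as well as by framework can show whether a cost such as staff time is being incurred separately in each compliance silo.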

Rationalizing Control Frameworks

Strategies for rationalizing control frameworks and creating a CCF vary, and not every strategy would be equally effective in meeting the hospital’s goal of reducing costs. So the next phase of the measurement project was the exploration of equivalencies between three compliance frameworks of most concern to the risk management team:

HIPAA: healthcare regulation covers patient data

PCI DSS: the hospital accepts point-of-sale credit card transactions

Sarbanes-Oxley Act: the hospital system is publicly traded

The actual frameworks are of less importance to this example project, but I will use them to illustrate the CCF strategies considered by the company. The hospital system reviewed three control mapping strategies as part of the project: normative, transitive, and granular. Each of the rationalization strategies had associated benefits and limitations that affected the risk management team’s decision.

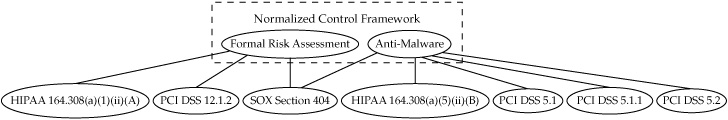

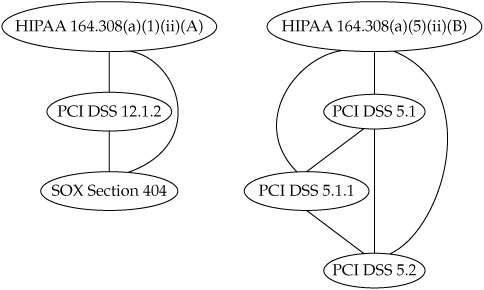

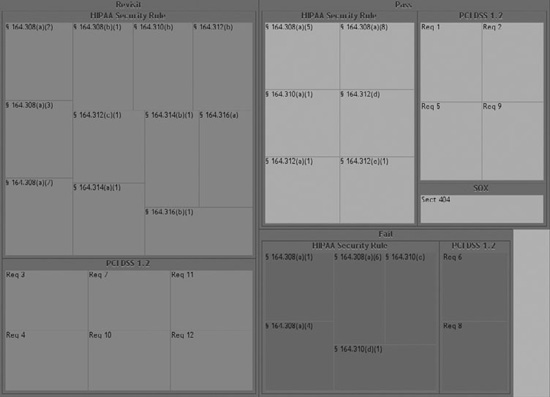

Normative Control Mapping In a normative mapping, all the control frameworks under consideration are analyzed and equivalencies are developed that map into a new “meta” framework that becomes the central set of controls for compliance. The goal is to develop a smaller, more streamlined controls catalog that still covers all the necessary requirements, but with a standardized set of controls that apply to everyone, regardless of their specific areas of concern or focus. Figure 8-1 shows an example of the concept for a small subset of the hospital system’s control requirements. The normative mapping arrangement would establish equivalence by assigning each source control to a new control within the normalized framework. The new framework would represent the unified set of controls that everyone in the company had to meet, and the various compliance projects would no longer need to concentrate on the specifics of HIPAA or of PCI DSS. Another advantage of this approach was greater flexibility in treating more ambiguous controls, such as those required under SOX, in a way that best met the goals of the organization.

Figure 8-1. Normative control mapping of HIPAA, PCI DSS, and SOX controls

The limitations of the normative mapping strategy included a need for standard, sometimes more generalized language to be used to address the controls of multiple frameworks. This raised concerns that in an audit situation the auditors would be looking for very precise terminology specific to the compliance requirements they were assessing. This would require careful scrutiny by the hospital’s corporate counsel and thorough documentation of the new controls framework so that mapping these controls back to the original framework requirements would be straightforward.
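The normative approach, and the trace-back documentation it requires, can be sketched as a mapping from each original requirement to exactly one new normalized control. In this hypothetical Python example the requirement IDs and the normalized control names (`N-1`, `N-2`, `N-3`) are placeholders; `trace_back` illustrates the kind of lookup an auditor of a single framework would need in order to connect the new controls to that framework’s own requirements.

```python
# Hypothetical normative ("meta") framework: each source requirement is assigned
# to exactly one normalized control. All IDs are illustrative placeholders.
NORMATIVE = {
    ("HIPAA", "H-1"): "N-1 Access control",
    ("PCI DSS", "P-1"): "N-1 Access control",
    ("SOX", "S-1"): "N-1 Access control",
    ("HIPAA", "H-2"): "N-2 Data protection",
    ("PCI DSS", "P-2"): "N-2 Data protection",
    ("SOX", "S-2"): "N-3 Change management",
}


def meta_catalog(mapping):
    """Group the original requirements under each normalized control."""
    catalog = {}
    for source, meta in mapping.items():
        catalog.setdefault(meta, []).append(source)
    return catalog


def trace_back(mapping, meta_control, framework):
    """List the original requirement IDs of one framework that a normalized
    control answers for, i.e. the mapping an auditor would ask to see."""
    return sorted(req for (fw, req), meta in mapping.items()
                  if meta == meta_control and fw == framework)
```

Six source requirements collapse into three normalized controls here, and the trace-back keeps each framework’s original terminology recoverable.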

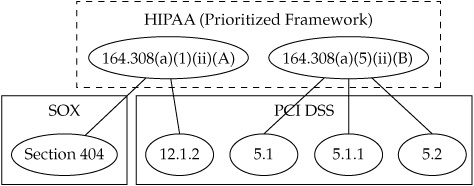

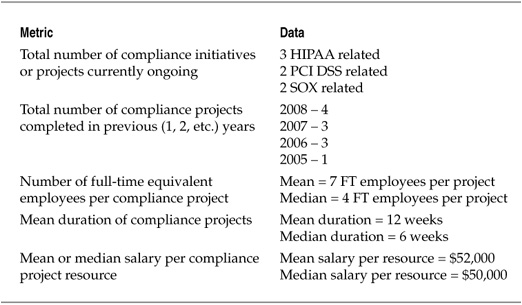

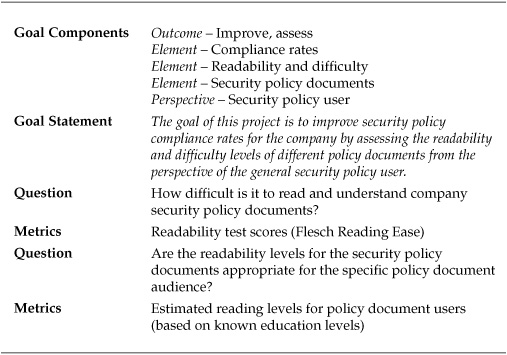

Transitive Control Mapping The transitive mapping strategy did not involve creating an entirely new controls framework; instead, it designated one of the existing frameworks as the “key” compliance requirement against which the others were mapped. HIPAA was chosen as the priority framework and therefore served as the central control set. Figure 8-2 shows the same sample set of previously examined controls reconfigured into a transitive control map. The risk managers thought this strategy had the benefit of requiring fewer resources up front to map between the various controls. Since no new framework was needed, most of the effort could be focused on identifying specific equivalencies between the HIPAA controls and the other frameworks. Controls that did not overlap would remain as they were and be handled by the specific teams responsible for that area of compliance. The assumption in this scenario was that the main goal would be a CCF of only those controls that overlapped, which would then be assigned and coordinated among the various teams.

Figure 8-2. Transitive control mapping of HIPAA, PCI DSS, and SOX controls

The risk managers also identified several limiting factors of the transitive mapping strategy. The first limitation involved the assumptions implicit in mapping the frameworks together. When a PCI DSS control was mapped to a HIPAA control, an equivalent relationship was established. The same thing occurred when a SOX control was mapped to the same HIPAA control. By mapping these two controls to the same HIPAA control, however, there was also an implied equivalence between the PCI DSS and the SOX control, although these controls were not explicitly mapped to one another. The risk management team saw in these implied relationships the potential for audit risks if controls that had not been mapped were implemented as though they were the same control, even if they met the primary control requirement.
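The implied-equivalence problem is easy to surface mechanically. The sketch below assumes a transitive map stored as a dictionary from each non-HIPAA control to the HIPAA control it was judged equivalent to; any two controls from different frameworks that share a hub control come out as an implied pair that nobody compared directly. Control IDs are hypothetical placeholders.

```python
from itertools import combinations

# Hypothetical transitive map: (framework, control) -> HIPAA hub control.
HUB_MAP = {
    ("PCI DSS", "P-1"): "H-1",
    ("SOX", "S-1"): "H-1",
    ("PCI DSS", "P-2"): "H-2",
}


def implied_pairs(hub_map):
    """Return (control_a, control_b, hub) for every pair of controls from
    different frameworks that are linked only through a shared hub control."""
    by_hub = {}
    for control, hub in hub_map.items():
        by_hub.setdefault(hub, []).append(control)
    pairs = []
    for hub, controls in by_hub.items():
        for a, b in combinations(sorted(controls), 2):
            if a[0] != b[0]:  # keep cross-framework pairs only
                pairs.append((a, b, hub))
    return pairs
```

Each pair returned is a candidate for explicit review before the two controls are implemented as though they were one.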

The second limitation was the inverse of the first. Because mapping ran only through HIPAA, equivalent controls in the other frameworks might go unidentified if they had no equivalent in the primary framework. This would mean that redundancies and duplicated efforts would continue among the compliance teams. The possibility of these false positive and false negative equivalences was viewed as the primary limiting factor of the strategy.
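The false-negative side can be estimated in a similar way. Assuming you have a list of every control in the non-hub frameworks, the hypothetical sketch below finds the controls that found no HIPAA equivalent and counts the cross-framework pairs among them; each such pair is a possible overlap that the transitive map never examines. IDs and counts are placeholders.

```python
from itertools import combinations

# Hypothetical inventory of non-hub controls and the subset mapped to HIPAA.
ALL_CONTROLS = {
    "PCI DSS": ["P-1", "P-2", "P-3"],
    "SOX": ["S-1", "S-2", "S-3"],
}
HUB_MAP = {("PCI DSS", "P-1"): "H-1", ("SOX", "S-1"): "H-1", ("PCI DSS", "P-2"): "H-2"}


def outside_hub(all_controls, hub_map):
    """Controls with no hub equivalent; they stay with their own teams."""
    return {fw: [c for c in ids if (fw, c) not in hub_map]
            for fw, ids in all_controls.items()}


def unexamined_pairs(all_controls, hub_map):
    """Number of cross-framework pairs among unmapped controls: possible
    overlaps that a transitive map never looks at."""
    leftovers = outside_hub(all_controls, hub_map)
    return sum(len(leftovers[a]) * len(leftovers[b])
               for a, b in combinations(leftovers, 2))
```

In this toy inventory, `P-3` could overlap with `S-2` or `S-3`, but the transitive map gives no way to find out without a direct comparison.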

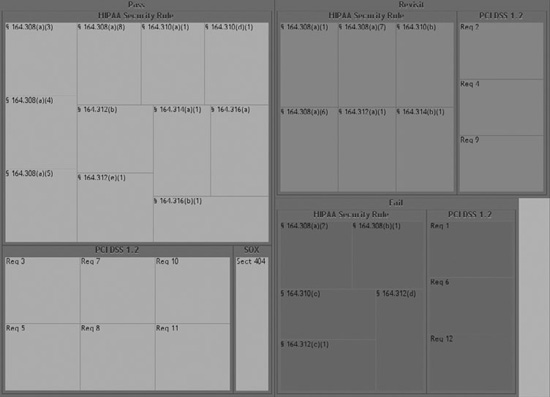

Granular Control Mapping Granular control mapping attempts a one-to-one cross-referencing of every control in every framework against every control in every other framework. All equivalencies are identified and documented. Figure 8-3 shows the sample of controls mapped under a granular strategy. In a granular map, nothing is left to chance, and every relationship between every control is identified and documented. This type of CCF can be used to analyze the effort and overlap involved in every aspect of the compliance frameworks the enterprise has undertaken and to determine exactly where equivalence occurs. But with more than two or three frameworks, the amount of analysis grows rapidly: each control added must be cross-referenced with every control in every other framework, so the number of comparisons grows roughly with the square of the total number of controls rather than in step with it. While the risk management team saw the benefit of formally establishing all the relationships between the controls, the project team quickly discounted this mapping strategy as a viable approach because the resources required to accomplish the mapping were seen as prohibitive.

Figure 8-3. Granular control mapping of HIPAA, PCI DSS, and SOX controls
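A little arithmetic shows why the granular approach looked prohibitive. If framework i has n_i controls, a full granular map compares every pair of controls drawn from different frameworks, which is the sum of n_i * n_j over all pairs of frameworks, while a transitive map compares each non-hub control only with the hub framework’s controls. The Python sketch below computes both; the control counts are hypothetical round numbers, not the actual sizes of HIPAA, PCI DSS, or SOX.

```python
from itertools import combinations


def granular_comparisons(sizes):
    """Every control compared with every control in every other framework:
    the sum of n_i * n_j over all pairs of frameworks."""
    return sum(a * b for a, b in combinations(sizes.values(), 2))


def transitive_comparisons(sizes, hub):
    """Each non-hub control compared only with the hub framework's controls."""
    return sizes[hub] * sum(n for fw, n in sizes.items() if fw != hub)


# Hypothetical control counts (placeholders, not actual framework sizes).
sizes = {"HIPAA": 50, "PCI DSS": 200, "SOX": 40}
```

With these placeholder counts the granular map needs 20,000 comparisons and the HIPAA-centered transitive map 12,000. Adding a fourth framework of 100 controls adds 29,000 granular comparisons but only 5,000 transitive ones.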

Choosing CCF Mapping Strategies

The results of the mapping exercises allowed the hospital risk managers to make a more informed decision about which rationalization strategy to choose. Of the three approaches available, one (the granular mapping) could be rejected immediately as too costly on its face. A careful analysis of the other two options helped the project team decide on the likely best approach for streamlining the company’s control requirements and reducing the cost of compliance efforts. At the conclusion of the project, the risk management team decided that a transitive mapping strategy that prioritized the HIPAA security regulations would be the optimal approach. This decision was made, in part, because of the limited number of frameworks chosen for the compliance initiative. The main job would be to map HIPAA requirements to PCI DSS requirements and to include SOX control requirements as necessary. The project team reasoned that the HIPAA and PCI DSS frameworks were similar enough that equivalence false positives and false negatives would remain at an acceptable level, while the cost savings from streamlining the controls would allow for the elimination of several initiative silos. Should new compliance frameworks or requirements be added at a later date, it was agreed that the measurement project and the mapping strategies would need to be revisited.

This type of security analysis will not meet a strictly quantitative definition of measurement and metrics. But if that is the case, it must be said that most scientific or research endeavors are not about measurement either. One of my main complaints about an overly simplistic definition of security metrics is that it makes gathering “facts” more important than trying to understand what those facts are supposed to explain. No scientist explains our need to explore space, to cure disease, or to create better computing technologies by spouting numbers and equations. Scientists start with a context in which those numbers begin to make sense, usually in the form of a problem statement, an expression of curiosity, or even the relatively simple telling of a story.

IT security metrics can and should be treated no differently. You cannot separate the “metrics” from the larger context of measurement in which they exist without losing your purpose or, worse, never understanding that purpose in the first place. Even IT security metrics proponents recognize this, whether they admit it or not. You will not find discussions of security metrics that promote facts, figures, and data on their own merits. Instead, books and articles situate and explain the importance of metrics in terms of the problem space of poorly understood security and stories of better articulating the business value of security. Stories matter, and facts don’t make stories any more than the entries in a dictionary make a novel. I don’t believe artificially separating the stories from the facts helps the process of security measurement.

Applying Cost Metrics to the CCF Mapping

The measurements and analyses undertaken during this project let the hospital’s risk management staff derive a baseline for one indicator of compliance performance, determine the cost of compliance initiatives for the company, and explore alternative compliance strategies that might reduce those costs. Table 8-3 lists the data collected for the compliance cost assessment.

After establishing some basic cost measurements around compliance as well as a reasonable strategy for CCF creation, the hospital’s risk management team was positioned to begin developing a quasi-experimental set of follow-on measurement projects that would compare the costs of compliance before and after the adoption of the new CCF. That follow-on work was not part of the immediate project, which was limited to measuring the baseline costs and assessing the strategy the project team felt was most likely to reduce those costs. The measurement project did not assess how well the compliance initiatives performed or whether the controls in place were effective, nor did it compare the resources spent on compliance with the results of formal regulatory or industry audits.

Table 8-3. Compliance Cost Data for CCF Project
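For the quasi-experimental follow-on projects, the core comparison would be a per-framework percent change against the baseline. The sketch below is a minimal version using assumed placeholder figures; because it is a simple before/after comparison with no control group, a change it reports is consistent with, but does not by itself prove, an effect of the CCF.

```python
def percent_change(before, after):
    """Percent change from the baseline; negative values indicate savings."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) * 100 / before


def compare_to_baseline(baseline_costs, follow_up_costs):
    """Per-framework percent change, for frameworks present in both periods."""
    return {fw: round(percent_change(baseline_costs[fw], follow_up_costs[fw]), 1)
            for fw in baseline_costs if fw in follow_up_costs}


# Hypothetical annual totals before and after CCF adoption (placeholders).
baseline_costs = {"HIPAA": 160_000, "PCI DSS": 125_000, "SOX": 175_000}
follow_up_costs = {"HIPAA": 148_000, "PCI DSS": 100_000, "SOX": 175_000}
```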

Although all of these are appropriate considerations around which to build metrics and measurement projects, remember that the goals of a security improvement program (and of the security process management framework in general) involve incremental and ongoing measurement and analysis. The hospital system could have chosen to conduct a much more extensive measurement project that attempted to define some of the listed compliance performance indicators, but the larger and more comprehensive the project becomes, the more difficult it is to manage. And there is no need for massive, comprehensive projects when you recognize your security metrics efforts as an incremental and ongoing process that never stops.

Mapping Assessments to Compliance Frameworks

Continuing to use the example of the hospital system, the next two example projects focus on specific aspects of compliance that the organization sought to measure and assess. In the first project, the risk management project team developed a high-level compliance map that would show how well or how poorly the company was managing the overall compliance posture for the three previously identified frameworks (HIPAA, PCI DSS, and SOX). In this project, the data results from two assessments—one of policy and another of security vulnerabilities—were used to provide a compliance scorecard to aid management decisions about where to focus compliance remediation efforts. The GQM template for this project is shown in Table 8-4.

Table 8-4. GQM Template for Assessment to Control Framework Mapping

The methodology used to complete this assessment was a detailed comparison and analysis of each required control framework with the results of previously conducted policy and vulnerability assessments. The policy assessment had resulted in data and findings regarding the structure and effectiveness of the company’s security policies, but most applicable to this measurement project was a detailed policy catalog that was developed during the review. The catalog included all security-related policy documents in place within the company, annotated with notes on the purpose and applicability of each policy document (based on interviews with security program personnel). The policy catalog provided a ready set of data that could be compared with the major requirements of each compliance framework identified by the company.
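The comparison of the policy catalog with framework requirements can be sketched as a coverage check. Assuming each catalog entry is annotated with the (framework, requirement) pairs it addresses, the hypothetical Python below lists the policies behind each requirement and flags requirements with no policy at all. Policy names and requirement IDs are placeholders.

```python
# Hypothetical policy catalog: policy document -> (framework, requirement) pairs
# it was annotated as addressing during the policy review. IDs are placeholders.
POLICY_CATALOG = {
    "Acceptable Use Policy": {("HIPAA", "H-1"), ("PCI DSS", "P-1")},
    "Data Classification Standard": {("HIPAA", "H-2")},
}
REQUIREMENTS = [("HIPAA", "H-1"), ("HIPAA", "H-2"), ("PCI DSS", "P-1"),
                ("PCI DSS", "P-2"), ("SOX", "S-1")]


def policies_for(catalog, requirements):
    """Map each requirement to the sorted policy documents that address it."""
    return {req: sorted(policy for policy, reqs in catalog.items() if req in reqs)
            for req in requirements}


def policy_gaps(catalog, requirements):
    """Requirements that no cataloged policy document addresses."""
    return [req for req, policies in policies_for(catalog, requirements).items()
            if not policies]
```

A finding such as the missing policy on compliance responsibility would appear in this kind of output as a requirement with no matching policy.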

The vulnerability assessment provided similar data based on a vendor’s assessment of physical and logical security within the company. The findings of the vulnerability assessment were analyzed against specific control requirements found in each compliance framework. In both cases, the primary analytical work was the measurement of relationships between the findings of the assessments and the control objectives of the required frameworks. Where necessary, the project team referred questions to corporate and outside counsel to ensure that the associations made were reasonable from a legal and regulatory perspective. Following is a sampling of specific findings that were used in the analysis:

Policy Assessment Findings

No policy document formally specifying responsibility for compliance requirements

No process for measuring contract performance regarding security of partners

Poorly documented and unenforceable standards for router configurations

Vulnerability Assessment Findings

Personal health data discovered on unprotected systems

Physical media containing personally identifiable information found unsecured

Multiple shared user IDs identified, including system administrator IDs
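The cross-referencing described above can be sketched as a simple index. This assumes an analyst has already tagged each finding with the framework requirements it bears on. The requirement labels (REQ-H1 and so on) and the finding-to-requirement tags are hypothetical placeholders. They are not actual HIPAA, PCI DSS, or SOX citations, and they are not the hospital system's real mappings.

```python
from collections import defaultdict

# Hypothetical cross-reference: each finding is tagged with the
# (framework, requirement) pairs it bears on. Labels are placeholders.
FINDINGS = {
    "No policy formally specifying responsibility for compliance": [
        ("HIPAA", "REQ-H1"), ("SOX", "REQ-S1"),
    ],
    "Personal health data discovered on unprotected systems": [
        ("HIPAA", "REQ-H2"),
    ],
    "Multiple shared user IDs, including administrator IDs": [
        ("PCI DSS", "REQ-P1"), ("SOX", "REQ-S2"),
    ],
}


def invert(findings):
    """Re-index findings by (framework, requirement) so analysts can see
    every finding that bears on a given control objective."""
    by_requirement = defaultdict(list)
    for finding, requirements in findings.items():
        for key in requirements:
            by_requirement[key].append(finding)
    return dict(by_requirement)


if __name__ == "__main__":
    for (framework, req), items in sorted(invert(FINDINGS).items()):
        print(f"{framework} {req}: {len(items)} finding(s)")
```

Indexing by requirement rather than by finding suits the scorecard view, because management decisions are made per control objective.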

The cross-referenced assessment and compliance data were used to construct several tree maps that provided an intuitive visualization of the hospital system’s compliance posture. In each tree map, compliance with a given required control objective for one of the regulatory frameworks was indicated in green, compliance failures were in red, and requirements that were partially met or required revisiting were in gray. The tree maps for the policy mapping and for the vulnerability assessment mapping are shown (in grayscale) in Figures 8-4 and 8-5, respectively.
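Before a tree map can be drawn, the per-requirement judgments have to be rolled up by framework. Here is a minimal sketch. It assumes the analysts have already assigned each requirement one of the three colors described above. The requirement labels and statuses are invented for illustration and do not reproduce the data behind Figures 8-4 or 8-5.

```python
from collections import Counter

# Hypothetical analyst judgments: (framework, requirement) -> status color.
# "green" = met, "red" = failed, "gray" = partially met or needs revisiting.
STATUS = {
    ("HIPAA", "REQ-H1"): "red",
    ("HIPAA", "REQ-H2"): "red",
    ("HIPAA", "REQ-H3"): "green",
    ("PCI DSS", "REQ-P1"): "red",
    ("PCI DSS", "REQ-P2"): "gray",
    ("SOX", "REQ-S1"): "gray",
    ("SOX", "REQ-S2"): "green",
}


def treemap_counts(status):
    """Aggregate requirement statuses into {framework: {color: count}},
    a nested structure that a tree-map tool can size and color."""
    out = {}
    for (framework, _req), color in status.items():
        out.setdefault(framework, Counter())[color] += 1
    return {fw: dict(counts) for fw, counts in out.items()}


if __name__ == "__main__":
    for framework, counts in treemap_counts(STATUS).items():
        print(framework, counts)
```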

This measurement project produced high-level, intuitive results that the project team intended to use with senior management to demonstrate the strengths and weaknesses of the company’s compliance posture. The project team was careful to explain the limitations of the findings: they were based on two specific sets of assessment data and did not reflect a complete review of all compliance requirements for which the company was responsible. But the project team did use the resulting information to stimulate ideas for other, similar assessments that could produce complementary results. Based on the findings, the team also recommended several follow-on measurement projects designed to explore in greater depth the relationships among policy architectures, vulnerabilities, and the compliance obligations that the company was failing to meet.

Figure 8-4. Tree map for cross-referenced policy assessment findings and compliance requirements

Analyzing the Readability of Security Policy Documents

The final security measurement project discussed in this chapter reinforces my position that not all IT security metrics are about the output of IT systems, how many of something exists, or how often an event occurs. IT security metrics can and should be as varied and creative as the elements and concepts we find across the field of information security. Sometimes vulnerabilities are subtle, and it takes an eye for new and innovative measurement ideas to get at them. This is particularly true in the case of compliance and conformance challenges, where the very nature of the security problem space is a mash-up of people, processes, and technologies.

Figure 8-5. Tree map for cross-referenced vulnerability assessment findings and compliance requirements

As part of my professional work, I provide clients with security policy assessments. Security policies are the bedrock of any effective IT security program, absolutely essential to success. Without an effective and well-written security policy architecture, any process or technical controls that you implement will have no guiding principles, no expectation of enforcement, and no baseline against which to measure success. Or at least this is the standard party line that everyone quotes while installing policy architectures that often prove fundamentally worthless.

If we all really believed that security policies were so important, why wouldn’t everyone put as much effort into constructing them, verifying them, and measuring their success as we put into our technical infrastructures? Most security policies I see seem to be written almost as afterthoughts, or they are copied whole cloth from freely available templates that are never customized to the unique environment and culture of the organization that adopts them. They are the epitome of checkbox compliance, designed primarily so that the organization can say “we have a security policy.”

We assess our security policies in much the same cavalier way. The typical security policy assessment involves finding someone who a) can read, and b) knows something about security, and turning them loose. They can, of course, refer to many guidelines from a variety of security resources and organizations that recommend best practices for security policies—but in the end, security policy development and assessment comes down to individual opinion more than just about any other element of the enterprise security architecture. And this means it is likely you are not measuring your security policy with any degree of rigor or depth. But how do you measure a policy document?

Johnny Can’t Read (the Security Policy)

A number of metrics can be applied to security policies, but this project focuses on one that I find particularly interesting: readability. One of the policy measurement activities I provide for clients is an assessment of the readability of the policies they have developed and expect everyone in the organization to follow in order to maintain IT security. I like readability as a metric because it reminds me of usability in other IT systems. We intuitively understand that systems that are difficult or impossible to use tend not to be used. But many of my clients don’t understand why so many of their employees seem to disregard the organization’s security policies. They don’t think of their security policy as something that has a usability factor. But most of my clients do understand when something is difficult to read.

Whether we are trying to read an updated privacy policy from a credit card company, a click-through license agreement when we install new software, or the latest Thomas Pynchon novel, we all know what a difficult text looks like. This is why most people never read any of these things. When security policies are difficult or impossible to read, many users simply give up on reading and understanding them. And if users make this choice with regard to the company’s security policy, regardless of whether or not they acknowledge that they read and understood it, they put the company at risk. It is cold comfort to fire someone for a policy violation after the damage has already been done. If the violation occurred because the policy was incomprehensible in the first place, then the punishment is unfair as well as untimely.

As with other metrics and data analysis methods that could benefit our field, readability is only innovative in that it hasn’t been widely implemented in IT security. But it is used in many other environments, from measuring the usability of military manuals (you need to make sure that 18-year-olds understand how to operate that tank) to healthcare (you need to make sure that 80-year-olds understand how to take their medicine). Studies have shown that the average reader in the United States reads and comprehends at a tenth-grade level or lower. As a result, many documents are written so that your reading skills need be no greater than that to comprehend the text. In some cases, the market takes care of it (most popular novels are written at about an eighth-grade level), while in other cases, readability must be mandated (many organizations require that manuals and other procedural instructions be written at a level no higher than high school to ensure that everyone can follow them). My experience with security policies is that they are almost never written with the average reader in mind. More often, they require higher levels of comprehension skills, often at the graduate or postgraduate levels, to understand them fully. Methods and tools are available for assessing readability of documents, including many freely available web tools, as well as basic features built into word processors such as Microsoft Word.
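Most of the grade-level tests these tools report rely on simple counts of sentences, words, and syllables. As one example, the sketch below computes the Flesch-Kincaid Grade Level, a widely used formula whose result approximates a U.S. school grade. The syllable counter is a rough vowel-group heuristic assumed for illustration. Dedicated readability tools use more careful rules, so their scores will differ somewhat. The function names are hypothetical.

```python
import re


def count_syllables(word):
    """Rough English syllable estimate (heuristic, not a dictionary lookup):
    count vowel groups, drop a trailing silent 'e', never return less than 1."""
    w = word.lower()
    count = len(re.findall(r"[aeiouy]+", w))
    if w.endswith("e") and not w.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_kincaid_grade(text):
    """Flesch-Kincaid Grade Level:
    0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59"""
    # Naive sentence split on terminal punctuation; ignore empty fragments.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (0.39 * (len(words) / len(sentences))
            + 11.8 * (syllables / len(words))
            - 15.59)


if __name__ == "__main__":
    print(round(flesch_kincaid_grade("The cat sat on the mat."), 2))
```

A result near 10 corresponds roughly to the tenth-grade level cited above. Very short, simple text can even produce a negative value, as the one-sentence example does.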

Measuring Readability as Part of Compliance

While typical compliance frameworks impose no formal requirements for the readability of security policies, it is reasonable to assume that any framework mandating that a policy be in place implicitly requires that the policy be easily understood and followed by every member of the organization to which it applies. This usually means that the more general the security policy, the easier it must be to read, since it affects a wide variety of people across the organization. Specialized policies for smaller audiences, particularly audiences assumed to be more educated (coders, IT specialists, or managers), can tolerate higher reading levels without losing effectiveness. But without a good understanding of policy audiences and users, an enterprise may put itself at risk of a policy failure or, worse, legal claims in the event that problems occur because of an inappropriately written policy document.
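The audience-dependent reasoning above can be expressed as a simple check. In this sketch the grade-level ceilings are hypothetical values chosen for illustration. The all-staff ceiling echoes the tenth-grade average mentioned earlier. An organization would set its own ceilings, for example from a documentation style guide. The policy names and measured grades in the usage line are also invented.

```python
# Hypothetical grade-level ceilings by audience (illustrative assumptions).
CEILINGS = {"all_staff": 10.0, "managers": 12.0, "it_specialists": 14.0}


def over_ceiling(policies, ceilings=CEILINGS):
    """Return the sorted names of policies whose measured grade level exceeds
    the ceiling for their intended audience.

    `policies` maps policy name -> (audience, measured grade level).
    """
    return sorted(
        name
        for name, (audience, grade) in policies.items()
        if grade > ceilings[audience]
    )


if __name__ == "__main__":
    print(over_ceiling({
        "general_security_policy": ("all_staff", 17.2),
        "router_configuration_standard": ("it_specialists", 13.0),
        "access_review_procedure": ("managers", 12.5),
    }))
```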

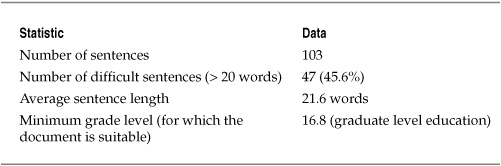

For continuity, I have kept this example project in the context of the hypothetical hospital system’s risk measurement activities. The project developed out of findings from the policy assessment described in the previous example project. During the policy assessment, the team noted that no standard style guide or manual existed for writing security policies, and one of the project staff proposed that the project team adopt the hospital’s style guide for writing medical procedures. Part of that style guide mandated ceilings on the reading comprehension levels necessary to follow the procedures, which motivated the project team to determine the readability levels of the security policies. The GQM template for the project can be found in Table 8-5.

Table 8-5. GQM Template for Policy Readability Assessment Project

Table 8-6. Sample Results for Readability Test of General Security Policy

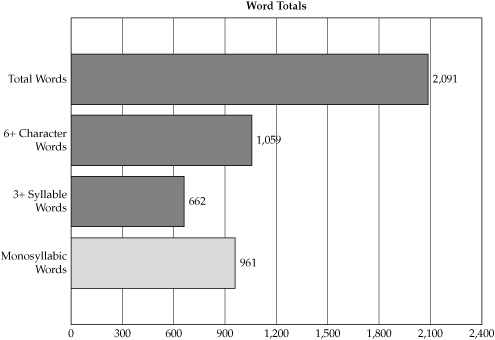

The reading difficulty tests for the security policies were conducted using Readability Studio, a commercial product for analyzing the readability of texts. Table 8-6 shows a selection of the resulting readability metrics for the hospital system’s general information security policy. This policy outlined the overall security program, and all employees of the company were required to read it and acknowledge that they understood it. Figure 8-6 provides a more detailed breakdown of the policy, comparing the number of difficult words with the total word count.
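A breakdown like the one in Figure 8-6 can be approximated with a short script. Readability Studio's exact definition of a "difficult" word is not given here. This sketch therefore assumes the common convention of counting words with three or more syllables, estimated with a rough vowel-group heuristic. The function names and the threshold parameter are hypothetical.

```python
import re


def syllables(word):
    """Rough heuristic: count vowel groups, minus a silent trailing 'e'."""
    w = word.lower()
    n = len(re.findall(r"[aeiouy]+", w))
    if w.endswith("e") and not w.endswith("le") and n > 1:
        n -= 1
    return max(n, 1)


def word_breakdown(text, threshold=3):
    """Count total words and 'difficult' words, where difficult is ASSUMED to
    mean `threshold` or more estimated syllables."""
    words = re.findall(r"[A-Za-z']+", text)
    difficult = [w for w in words if syllables(w) >= threshold]
    share = len(difficult) / len(words) if words else 0.0
    return {"total": len(words), "difficult": len(difficult), "share": share}


if __name__ == "__main__":
    print(word_breakdown("Users must protect confidential information."))
```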

Figure 8-6. Word breakdown for general information security policy document

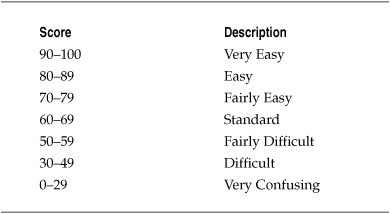

Table 8-7. Flesch Readability Ease Scores

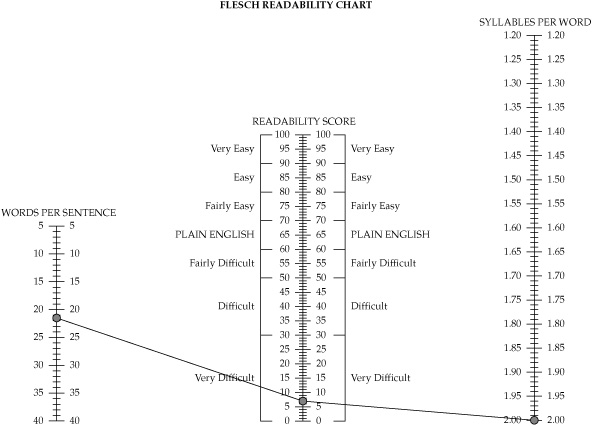

In addition to the basic statistical analyses of the lexical and grammatical structures of the security policy documents, the project team conducted a Flesch Reading Ease test on the security policies under review. The Flesch Reading Ease score is often used by government agencies, where it has become a standard test of the readability of technical manuals and other procedural documentation. The test calculates a score from two averages: sentence length (words per sentence) and word length (syllables per word). Table 8-7 lists the score levels for the Flesch test. Higher Flesch scores indicate easier reading, while lower scores mean a text is increasingly difficult to understand.
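The Flesch Reading Ease formula itself is short. The sketch below takes the three counts as inputs and maps a score to the descriptive bands commonly attributed to Flesch. Those band labels are an assumption here and may be worded differently from Table 8-7. The function names are hypothetical.

```python
def flesch_reading_ease(words, sentences, syllables):
    """Flesch Reading Ease:
    206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)"""
    if words <= 0 or sentences <= 0:
        raise ValueError("need at least one word and one sentence")
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


# Commonly cited bands (lower bound, label), checked from easiest down.
BANDS = [
    (90, "Very easy"),
    (80, "Easy"),
    (70, "Fairly easy"),
    (60, "Standard"),
    (50, "Fairly difficult"),
    (30, "Difficult"),
]


def band(score):
    """Return the descriptive label for a Reading Ease score."""
    for lower, label in BANDS:
        if score >= lower:
            return label
    return "Very confusing"


if __name__ == "__main__":
    score = flesch_reading_ease(words=100, sentences=5, syllables=150)
    print(round(score, 3), band(score))
```

The formula subtracts penalties for long sentences and long words, so extreme texts can score below 0 or above 100. The band lookup handles both cases.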

The Flesch test for the hospital’s security policy indicated that the document was very difficult to read and confusing, as shown in the Flesch score chart in Figure 8-7. This readability score, combined with the results of other tests that placed the minimum education level needed to read the document effectively at the graduate school level, indicated serious flaws in a security policy that was intended to be used by everyone in the company. If the hospital system were on par with the national average, with typical reading skills at the high school level, it was considered very likely that most employees would simply be unable to use the policy effectively, even though they had acknowledged that they read and understood it.

Readability Project Findings

The results of the readability metrics convinced the project team that they were dealing with a potentially serious, but quite unconventional, security problem. For most of the security and risk managers working on the project, security policies had traditionally been viewed as the responsibility of the employee who was required to read and acknowledge his or her understanding of the policy. The way the policies were written could even be interpreted as a sort of contract, as they specified sanctions up to and including termination for anyone who violated those policies.

Figure 8-7. Flesch readability chart for the general information security policy

The project team concluded that the company’s role in creating usable and appropriate policies had been neglected, and that two immediate risks resulted from this oversight. First, there was the real risk of security breaches caused by employees who did not understand their responsibilities under the security policies. The risk management team felt that the presence of the policy had provided a false sense of security, as the company assumed any violations were deliberate or the result of gross negligence rather than lack of comprehension. Second, the project team believed that the readability problems could expose the company to lawsuits if employees were fired for policy violations. In both cases, the readability study had measured elements of security risk that had previously gone completely unidentified.

As a result of the readability tests, the project team recommended a complete review and overhaul of the company’s policy documents. As part of this review, they reached out to both corporate counsel and technical documentation experts who designed policies and procedures in which readability was considered an important component of the documentation. As part of the ongoing security improvement program, the project team also recommended that follow-on security measurement projects be conducted after the redesign of the security policy to measure whether the new, more easily understood policies could be correlated with declines in security incidents and events across the company.
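A first pass at that follow-on measurement could be a before-and-after comparison of incident counts. This is a deliberately simpler stand-in for the correlation analysis the team recommended. It assumes monthly incident counts are available for comparable periods before and after the redesign, and it cannot by itself rule out other causes of a change. The function name and the sample counts in the usage line are hypothetical.

```python
from statistics import mean


def incident_change(before, after):
    """Percent change in mean monthly incident count after the redesign.

    A simple before/after comparison: it does not control for other
    changes in the environment and does not establish causation.
    """
    baseline, current = mean(before), mean(after)
    if baseline == 0:
        raise ValueError("baseline mean is zero; percent change undefined")
    return (current - baseline) / baseline * 100.0


if __name__ == "__main__":
    # Hypothetical monthly incident counts, for illustration only.
    print(incident_change([10, 12, 14], [9, 9, 9]))
```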

Summary

IT Governance, Risk, and Compliance (IT GRC) is a complex challenge and encompasses how you manage your security as a process, the controls that you choose to protect specific resources, and the many requirements that are imposed on you by laws, regulations, industry standards, and business contracts. Measuring compliance is particularly challenging because of the variation and uncertainty both among compliance frameworks and in the ways organizations and individuals interpret them. Even frameworks such as ISO/IEC 27001 and 27002, which are closely aligned, often cause confusion among security managers. Whether you call your activities an audit, measurement, or something entirely different, your goal is to understand fully the requirements you are obligated to meet and then to meet those requirements effectively and efficiently.

One exercise that is increasingly common in security is the use of rationalized common control frameworks (CCFs) that consolidate multiple compliance requirements into a single, more easily managed control system. There are several ways to rationalize control frameworks, including normalized, transitive, and granular strategies. Each strategy has strengths and limitations. A CCF can be used to break down silos in the organization’s compliance program and help the organization better coordinate and actively measure compliance efforts.

In addition to CCF mapping, an organization can undertake specific measurement projects around its compliance requirements, limited only by its imagination and creativity. The two projects examined in this chapter dealt with mapping the results of policy and technical assessments to the compliance requirements of an example hospital environment, and with assessing the readability of the hospital’s security policies. By taking a broad approach to security metrics in the context of regulatory compliance or conformance to other control structures, your organization can develop innovative measurement efforts that cover a wide variety of situations and security performance indicators.

Further Reading

Flesch, R. How to Write Plain English: A Book for Lawyers and Consumers. Barnes & Noble, 1981.

Hayden, L. Designing Common Control Frameworks: A Model for Evaluating Information Technology Governance, Risk, and Compliance Control Rationalization Strategies. Information Security Journal: A Global Perspective, 18(6), pp. 297-305, 2009.

National Assessment of Adult Literacy (NAAL). http://nces.ed.gov/naal/