23. Playing and Recording Sound and Video

You know your phone can make noise. It has a ringer, and you can hear voices from it. How can your applications make noise with your phone? The answer is the Mobile Media API (MMAPI).

You can do a lot more with MMAPI than just make noise. MMAPI has a very flexible design that makes it suitable for a wide variety of content, such as images, audio, and video. The Advanced Multimedia Supplements (AMMS) extend MMAPI’s capabilities with additional camera support, 3D audio, and more.

23.1. Boring Background Information

MSA’s multimedia APIs are composed of parts that are related in subtle ways. If you know that your applications are aimed at MSA devices, then skip this section and read the rest of the chapter, where you learn how to do stuff. If you want the whole story, drink some strong coffee and read this section.

The core of MSA’s multimedia capabilities is the MMAPI, defined by JSR 135. The basic idea is pretty simple. Ask for a Player for a certain type of content, and the API returns an object that knows how to render that content. If the device can’t handle the content, Manager throws a MediaException.

If APIs were tools, then MMAPI would be a shiny platinum ratcheted electric screwdriver that comes without any bits. You can’t do anything useful with MMAPI unless the implementation on the device supports useful content. It’s possible, though tragic, that a device could be able to play MP3 files from native applications, such as the Web browser, but not support playing MP3 files via MMAPI. In theory, it’s also possible that a device could have MMAPI without being actually able to play anything at all.

The MMAPI specification does not require support for any particular content type, although most MMAPI implementations expose whatever content types the device can handle. The conundrum of supported content is covered in a later section.

MIDP 2.1 includes a subset of MMAPI for playing tones and sampled audio. Because it is a strict subset of MMAPI, audio code written for MIDP should also work on an MMAPI device.

MIDP requires that devices be able to play tones, which at least means you can make some kind of noise. MIDP also requires support for one type of sampled audio, but only halfheartedly. The specification states that if the device can play sampled audio, then it must support 8-bit, 8 kHz, monophonic linear PCM WAV. This is roughly equivalent in sound quality to telephones from a century ago.
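To make that baseline concrete: at 8,000 samples per second, one byte per sample, and one channel, each second of audio costs 8,000 bytes, plus a 44-byte file header. The sketch below builds such a WAV file in memory, using a generated sine wave as the sample data. The class and method names are hypothetical, not part of MMAPI. Note that 8-bit PCM samples in WAV are unsigned, so the value 128 is silence.

```java
// Builds an in-memory WAV file in the MIDP baseline format:
// 8 kHz, 8-bit, mono, linear PCM. Class and method names are hypothetical.
class WavBuilder {
    static final int SAMPLE_RATE = 8000;  // samples per second
    static final int HEADER_SIZE = 44;    // canonical RIFF/WAVE header length

    // Generates a sine tone. 8-bit WAV samples are unsigned, centered on 128.
    static byte[] sineSamples(double hz, int millis, int amplitude) {
        if (amplitude < 0 || amplitude > 127) {
            throw new IllegalArgumentException("amplitude must be 0-127");
        }
        int n = SAMPLE_RATE * millis / 1000;
        byte[] samples = new byte[n];
        for (int i = 0; i < n; i++) {
            double v = Math.sin(2.0 * Math.PI * hz * i / SAMPLE_RATE);
            samples[i] = (byte) (128 + (int) (amplitude * v));
        }
        return samples;
    }

    // Prepends the 44-byte header; all multi-byte fields are little-endian.
    static byte[] toWav(byte[] samples) {
        int dataLen = samples.length;
        byte[] out = new byte[HEADER_SIZE + dataLen];
        int p = 0;
        p = ascii(out, p, "RIFF");
        p = le32(out, p, 36 + dataLen);   // size of everything after this field
        p = ascii(out, p, "WAVE");
        p = ascii(out, p, "fmt ");
        p = le32(out, p, 16);             // length of the fmt chunk
        p = le16(out, p, 1);              // format 1 = linear PCM
        p = le16(out, p, 1);              // one channel (mono)
        p = le32(out, p, SAMPLE_RATE);    // sample rate
        p = le32(out, p, SAMPLE_RATE);    // bytes per second = rate * 1 channel * 1 byte
        p = le16(out, p, 1);              // bytes per sample frame
        p = le16(out, p, 8);              // bits per sample
        p = ascii(out, p, "data");
        p = le32(out, p, dataLen);
        System.arraycopy(samples, 0, out, p, dataLen);
        return out;
    }

    private static int ascii(byte[] b, int p, String s) {
        for (int i = 0; i < s.length(); i++) b[p++] = (byte) s.charAt(i);
        return p;
    }

    private static int le16(byte[] b, int p, int v) {
        b[p++] = (byte) v;
        b[p++] = (byte) (v >> 8);
        return p;
    }

    private static int le32(byte[] b, int p, int v) {
        b[p++] = (byte) v;
        b[p++] = (byte) (v >> 8);
        b[p++] = (byte) (v >> 16);
        b[p++] = (byte) (v >> 24);
        return p;
    }
}
```

One second of a 440 Hz tone comes out to 8,044 bytes. On a device, you could wrap the result in a ByteArrayInputStream and pass it to Manager.createPlayer() with a WAV content type such as audio/x-wav, although the exact string a device accepts may vary.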

The MSA specification is a little more concrete, but not much. It requires MMAPI, and it requires support for the following content types:

- 8 kHz, 8-bit linear PCM audio in WAV. This is the same requirement as in MIDP, but this time it is unconditional.

- AMR-NB. Adaptive Multirate Narrowband (AMR-NB) is a sampled audio format suitable for voice.

- MIDI and SP-MIDI. MIDI is a venerable standard for representing music as a series of tones and durations. SP-MIDI is a variant suitable for small devices. It allows composers to specify portions that can be eliminated on devices that cannot play as many notes simultaneously.

All that is confusing enough, but now another character has come on stage, JSR 234 AMMS. AMMS is not those pills the bulky guy at the gym is offering. It’s an API that extends the capabilities of MMAPI. MSA requires AMMS, which means the API is present but its capabilities are mostly dependent on the underlying hardware. Here are a few examples, but not a complete list, of AMMS capabilities:

- If the device has a camera, then the AMMS camera APIs must be supported.

- If the device has a radio, then it must be available through AMMS.

- If the device has 3D audio capabilities, they must be available through AMMS.

In summary, MSA’s multimedia APIs come from JSR 135 MMAPI and JSR 234 AMMS. Tones, sampled WAV audio, AMR-NB, and MIDI content must all be supported.

MMAPI lives in javax.microedition.media and its child packages. The center of MMAPI is javax.microedition.media.Manager.

23.2. Tones

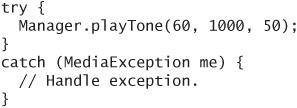

Playing a single tone is pretty easy, but you don’t get much control over how it sounds. Use the static playTone() method in Manager.

You need to specify a note number, which corresponds to a key on a piano. Middle C on a piano is note number 60. This is the same numbering scheme used in the Musical Instrument Digital Interface (MIDI) standard. You also need to say how long the note will be, in milliseconds, and how loud it should be, from 0, silent, to 100, loudest.

For example, Manager.playTone(60, 1000, 50) plays middle C for one second at half volume.
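Because playTone() takes note numbers rather than frequencies, it can help to convert between the two. The ToneControl documentation defines the relationship as note = ln(freq / 8.176) × SEMITONE_CONST, where SEMITONE_CONST = 12 / ln 2. The hypothetical helper below implements that formula and its inverse. It relies on Math.pow() and Math.log(), which CLDC 1.1 does not provide, so treat it as a desktop-side tool for working out note numbers rather than as MIDlet code.

```java
// Converts between MMAPI note numbers and frequencies, following the
// ToneControl formula: note = ln(freq / 8.176) * SEMITONE_CONST,
// with SEMITONE_CONST = 12 / ln(2). Hypothetical desktop-side helper.
class ToneMath {
    static final double NOTE_ZERO_HZ = 8.176;  // frequency of note number 0
    static final int MIDDLE_C = 60;

    // playTone() accepts note numbers from 0 to 127.
    static boolean isValidNote(int note) {
        return note >= 0 && note <= 127;
    }

    // Frequency in hertz of a note number: each semitone is a factor of 2^(1/12).
    static double frequencyOf(int note) {
        if (!isValidNote(note)) {
            throw new IllegalArgumentException("note out of range: " + note);
        }
        return NOTE_ZERO_HZ * Math.pow(2.0, note / 12.0);
    }

    // Nearest note number for a frequency in hertz.
    static int noteFor(double hz) {
        return (int) Math.round(12.0 * Math.log(hz / NOTE_ZERO_HZ) / Math.log(2.0));
    }
}
```

frequencyOf(ToneMath.MIDDLE_C) is about 261.6 Hz, and noteFor(440.0) gives 69, the A above middle C, which is the note number you would pass to playTone() for concert A.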

If the tone cannot be played, MediaException is thrown. This could happen if the device is busy playing some other sound. My Motorola RAZR is a milquetoast in this regard and can’t handle quickly repeated calls to playTone() without throwing a MediaException.

You can also play a tone sequence. This technique is described later in the chapter.

23.3. Using Players

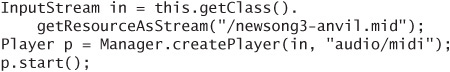

The story of MMAPI is very simple for simple tasks. First, ask Manager for a Player for some content. Manager either returns an appropriate Player for the content type or throws MediaException. Then start the Player.

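Here is how it works for a MIDI content file: open an InputStream for the file (for example, with getClass().getResourceAsStream() if the file is packaged in the MIDlet suite JAR), pass the stream and the content type audio/midi to Manager.createPlayer(), and call start() on the Player you get back.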

A Player has a distinct life cycle, represented by constants in the Player class:

- An UNREALIZED Player has just been created.

- REALIZED means the Player has located all the resources it needs. For example, a Player that is going to render some audio content from a Web server will initiate the communication with the server to become REALIZED.

- A Player gets ready to render content by acquiring device resources or filling buffers. After this work is done, the Player is PREFETCHED.

- When the Player is actually rendering content, it is STARTED. After the Player finishes rendering, it returns to the PREFETCHED state.

- Shut down a Player by calling close(), which releases resources and changes the state to CLOSED.

You can read more about Player’s life cycle in the API documentation. In the earlier example, calling start() rocketed the Player through the intermediate states of REALIZED and PREFETCHED to STARTED. To perform these steps explicitly, use the realize() and prefetch() methods. Note that these methods block until the requested state is reached, so you shouldn’t call them in an event callback thread.
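To make those transitions concrete, here is a small, hypothetical model of the life cycle in plain Java. It is not the MMAPI Player class and does no media work; it only records which states each call passes through. The state names follow Player’s constants, but the numeric values here are arbitrary, and deallocate() is left out for brevity.

```java
// Hypothetical model of MMAPI's Player life cycle, for illustration only.
// State names mirror Player's constants; the numeric values are arbitrary.
class LifeCycleModel {
    static final int CLOSED = 0, UNREALIZED = 1, REALIZED = 2, PREFETCHED = 3, STARTED = 4;

    private static final String[] NAMES =
        { "CLOSED", "UNREALIZED", "REALIZED", "PREFETCHED", "STARTED" };

    private int state = UNREALIZED;
    private final StringBuffer trace = new StringBuffer();

    int getState() { return state; }

    // Every state entered so far, e.g. "REALIZED -> PREFETCHED -> STARTED".
    String getTrace() { return trace.toString(); }

    void realize() {
        ensureOpen();
        if (state == UNREALIZED) enter(REALIZED);     // locate resources
    }

    void prefetch() {
        realize();                                    // realize first if needed
        if (state == REALIZED) enter(PREFETCHED);     // acquire device resources, fill buffers
    }

    void start() {
        prefetch();                                   // prefetch first if needed
        if (state == PREFETCHED) enter(STARTED);
    }

    // Models both stop() and reaching the end of the content.
    void stop() {
        ensureOpen();
        if (state == STARTED) enter(PREFETCHED);
    }

    void close() {
        if (state != CLOSED) enter(CLOSED);           // release everything
    }

    private void ensureOpen() {
        if (state == CLOSED) throw new IllegalStateException("Player is closed");
    }

    private void enter(int newState) {
        if (trace.length() > 0) trace.append(" -> ");
        trace.append(NAMES[newState]);
        state = newState;
    }
}
```

Calling start() on a fresh model leaves getTrace() reading REALIZED -> PREFETCHED -> STARTED, the implicit path described above. A following stop() drops it back to PREFETCHED, and after close() any further start() throws IllegalStateException, as a real Player does.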

You can prefetch() a Player to ensure that it can respond quickly when your application is ready to make a noise.

Manager has three createPlayer() methods. The one used in the previous example accepts an InputStream and a content type. You can also just specify a URL, in which case MMAPI will attempt to determine the content type itself and return an appropriate Player. The third createPlayer() method involves a DataSource, which is a lower-level object that you will probably never use. For more information, consult the API documentation.

Creating a Player from a URL is really simple: pass the URL string to Manager.createPlayer() and call start() on the Player it returns.

Of course, you need appropriate permission to make network connections. For efficient use of network content, you want a streaming protocol like Real-Time Streaming Protocol (RTSP). Unfortunately, the MIDP specification, the MMAPI specification, and the MSA specification are all silent on this subject, so support varies from device to device.

You can also use a file: URL to load content from a local file using the JSR 75 FileConnection API. The MSA specification defines three system properties that point to likely content locations:

- The fileconn.dir.tones property contains the location of ring tones and related audio.

- The fileconn.dir.music property points to a directory containing music content such as MP3 or AAC files.

- The fileconn.dir.recordings property contains the location of voice recordings.

23.4. Supported Content Types

You know that an MSA device can render WAV, AMR-NB, and MIDI content, but what else is available? At runtime, Manager can tell you all the content types and protocols it supports. Use the static getSupportedContentTypes() and getSupportedProtocols() methods to find out the device’s capabilities.

WAV files are bulky but could be appropriate for short sounds. AMR-NB files have poor sound quality but can be adequate for some applications, particularly podcasts or other voice recordings. AMR-NB is more portable than WAV because it has fewer encoding options. MIDI files are good as a compact representation of songs but are not appropriate for short sound effects.

For music clips, consider using Advanced Audio Coding (AAC) or AAC+, although neither is part of the MSA specification. AAC has been supported on many higher-end handsets for a couple of years. AAC is CD quality. AAC+ is even more compact but not yet as widely supported as AAC.

In video formats, .3gp is the most portable for Global System for Mobile Communications (GSM), and .3g2 is the most portable for Code Division Multiple Access (CDMA) networks. See Wikipedia for more information:

http://en.wikipedia.org/wiki/3GP

23.5. Threading and Listening

Media rendering usually happens in a separate thread. When you call start() on a Player, the content starts playing and control returns to your application. However, playing content usually is a heavy load for a small device, so don’t expect to do lots of other work at the same time.

A Player can notify your application of important events in its life. Just register a PlayerListener with the addPlayerListener() method. PlayerListener has a single callback method, playerUpdate(), which gets called when the Player reaches the end of its content, when it’s stopped, when the volume is changed, and more. Exactly which events trigger a call to playerUpdate() depends on the implementation.

Generally in your application, you can register a listener, then listen for a specific event and react to it appropriately. For example, you might wait for an END_OF_MEDIA event and then close() the corresponding Player to free system resources.

23.6. Taking Control

How do you pump up the volume? How can you change the rate of playback? How can you rewind, pause, and restart? The answer is controls, another useful abstraction in MMAPI.

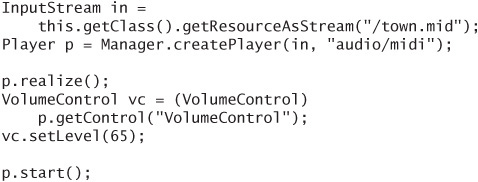

You can get a control from a Player by passing its class name to getControl(). The javax.microedition.media.control package contains a toolbox of control types. The Player must be REALIZED to supply controls. If the Player cannot supply a control of the requested type, it returns null.

For example, to set the playback volume for MIDI content to 65% of the maximum, ask the Player for a VolumeControl with getControl("VolumeControl") and call the control’s setLevel() method with 65.

You can retrieve all of a Player’s Controls by calling getControls(), which returns an array of Controls. Then you can test each one using instanceof to see if it’s the type of control you need.

MSA does require any audio player to include a VolumeControl, but you cannot rely on the presence of any other type of control.

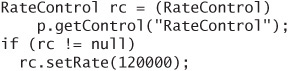

To make a MIDI file play back faster or slower, use a RateControl. Rates are expressed in thousandths of a percent, so the default rate is 100,000, and calling setRate(120000) on the RateControl makes MIDI content play at 120% of its usual rate.
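The milli-percent units are easy to get wrong, so here is the arithmetic as a small, hypothetical helper class. On a real device, RateControl reports its limits through getMinRate() and getMaxRate(), and setRate() returns the rate it actually applied, so it is worth checking that return value rather than assuming your request was honored.

```java
// Arithmetic for RateControl values, which are in thousandths of a percent
// ("milli-percent"): 100000 means normal speed. Hypothetical helper.
class RateMath {
    static final int NORMAL_RATE = 100000;

    // 120 (percent) -> 120000, the value you would pass to setRate().
    static int toMilliRate(int percent) {
        return percent * 1000;
    }

    // How long content of a given natural length takes to play at milliRate.
    // Uses long arithmetic because naturalMillis * 100000 overflows an int quickly.
    static long playbackMillis(long naturalMillis, int milliRate) {
        if (milliRate <= 0) throw new IllegalArgumentException("rate must be positive");
        return naturalMillis * NORMAL_RATE / milliRate;
    }
}
```

A 30-second MIDI clip played at toMilliRate(120) takes playbackMillis(30000, 120000), or 25,000 ms.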

My Motorola V3 returns null when I ask for a RateControl. Testing for the null return value prevents my application from utterly failing with an Application Error message. I can’t play my MIDI file at a 120% rate, but the application runs without barfing.

23.7. Playing Sampled Audio Content

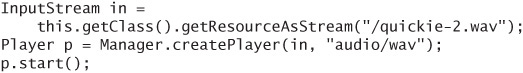

Now that you understand the basics of Players and Controls, playing sampled audio is easy. In fact, the code is nearly identical in many cases.

Just like before, supply a path to the content and kick off the Player with start().

The same rules apply here. You can ask for Controls to change how the audio is rendered. On MSA devices, you know at least a VolumeControl is available.

23.8. Playing Video Content

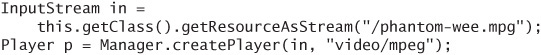

Video content is handled much the same way, except you have to do some magic to make it show up on the screen.

First, create a Player as usual, pointing it to some video content.

To show the video on the screen, you need a VideoControl. You can only get it if the Player is REALIZED.


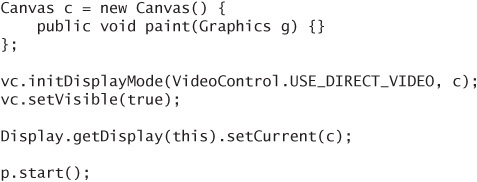

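Now you have two choices. You can show the video on a Canvas, or you can show it as an Item in a Form. To use a Canvas, call the VideoControl’s initDisplayMode() method with USE_DIRECT_VIDEO and your Canvas as arguments, then call setVisible(true), because direct video starts out invisible.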

MSA mandates support for VideoControl’s methods for setting the size and location of the video, as well as for setDisplayFullScreen(). Figure 23.1 shows video on a canvas in the Sun Java Wireless Toolkit:

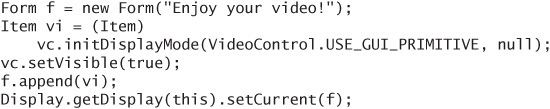

To show video on a Form instead, use this kind of code:

Figure 23.1. Video in a Canvas

The video Item behaves like any other item. As usual, you are free to add other kinds of items and commands to the Form.

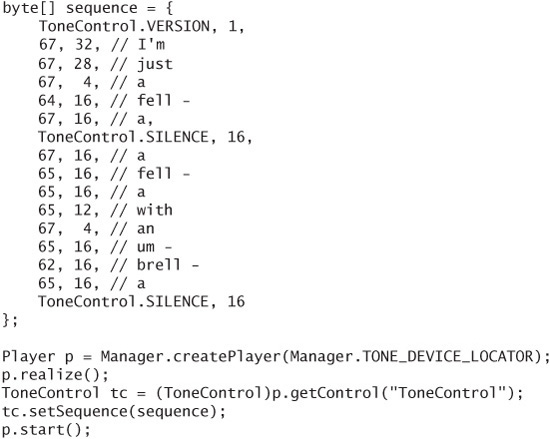

23.9. The Tone Sequence Player

Two special players, a tone sequence player and an interactive MIDI player, provide useful functionality.

The tone sequence player must be supported. It serves as a base level of audio functionality that must be present on all devices. To use the tone sequence player, call Manager’s createPlayer() method with a special string, Manager.TONE_DEVICE_LOCATOR. Then retrieve a ToneControl to work with the Player.

Tone sequences have a file format, which is described in the API documentation for ToneControl. You can either point Manager at a file (MIME type is audio/x-tone-seq) or define the tone sequence directly in your code.

The core of the tone sequence is a series of note number and duration pairs, although you can do some other stuff, such as define blocks, change volume, and set a tempo.

Here is an example that defines a simple tone sequence in the source code, then plays it:

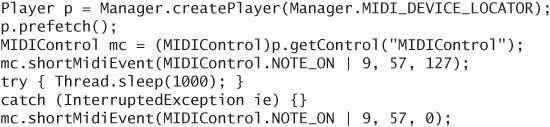
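A tone sequence is just a byte array, so here is a sketch of one. The constant values are the ones ToneControl defines (VERSION is -2, TEMPO is -3, and so on); on a device you would use ToneControl.VERSION and its siblings directly instead of redeclaring them. The tempo byte is a modifier that is multiplied by 4 to give beats per minute, and durations are measured against a resolution of 64 units per whole note by default, so 8 units is an eighth note. The class name and the melody are hypothetical.

```java
// Builds a byte array in the tone sequence format documented for
// javax.microedition.media.control.ToneControl. The constants redeclare
// ToneControl's values so this compiles without MMAPI.
class ToneSequence {
    static final byte VERSION = -2;
    static final byte TEMPO = -3;
    static final byte BLOCK_START = -5;
    static final byte BLOCK_END = -6;
    static final byte PLAY_BLOCK = -7;
    static final byte SET_VOLUME = -8;
    static final byte C4 = 60;              // middle C

    // Defines block 0 as an eighth-note C-D-E-C phrase, then plays it twice,
    // the second time at half volume.
    static byte[] simpleSequence() {
        byte tempo = 30;                    // 30 * 4 = 120 beats per minute
        byte d = 8;                         // eighth note at resolution 64
        byte D4 = (byte) (C4 + 2);
        byte E4 = (byte) (C4 + 4);
        return new byte[] {
            VERSION, 1,                     // must come first
            TEMPO, tempo,
            BLOCK_START, 0,                 // blocks are defined before events
            C4, d, D4, d, E4, d, C4, d,     // (note, duration) pairs
            BLOCK_END, 0,
            PLAY_BLOCK, 0,
            SET_VOLUME, 50,
            PLAY_BLOCK, 0
        };
    }

    // Milliseconds for a note lasting `units`, given beats per minute and
    // resolution (units per whole note). A whole note is four beats.
    static int durationMillis(int units, int bpm, int resolution) {
        return units * 4 * 60000 / (bpm * resolution);
    }
}
```

To play the sequence, create the Player with Manager.createPlayer(Manager.TONE_DEVICE_LOCATOR), realize() it, get the ToneControl with getControl("ToneControl"), pass the array to setSequence(), and call start(). The sequence has to be set before the Player is prefetched. At 120 beats per minute, durationMillis(8, 120, 64) works out to 250 ms per eighth note.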

23.10. The Interactive MIDI Player

The interactive MIDI player is not the same as the MIDI content player. MSA requires that devices be able to play MIDI content. The interactive MIDI player is more like the tone sequence player. If it’s available, you can play notes and send other MIDI events dynamically using MIDIControl.

Obtain the interactive MIDI player by calling Manager.createPlayer() with the special locator value MIDI_DEVICE_LOCATOR. If the interactive MIDI player is not supported on the device, you’ll get a MediaException.

Once you’ve got the interactive MIDI player, prefetch() it and get a MIDIControl. You can get information about the MIDI device’s sounds and sound banks, and you can send short and long messages directly to the device.

Here’s an example that plays a cymbal crash for one second.

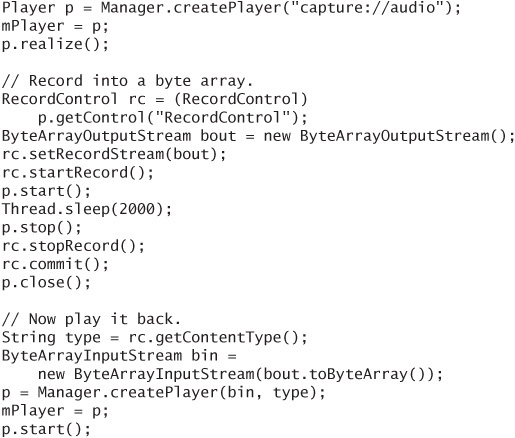
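The messages you send through MIDIControl are ordinary MIDI messages. Assuming the device uses the General MIDI percussion map, a cymbal crash is a note-on for key 49 on channel 10 (index 9 when counting from zero), followed a second later by the matching note-off. The helper class below is hypothetical; it only assembles and range-checks the three values that MIDIControl.shortMidiEvent(type, data1, data2) expects.

```java
// Builds the (type, data1, data2) triples passed to
// MIDIControl.shortMidiEvent(). Hypothetical helper; key 49 is
// Crash Cymbal 1 in the General MIDI percussion map on channel 10.
class MidiMessages {
    static final int NOTE_ON = 0x90;            // same value as MIDIControl.NOTE_ON
    static final int NOTE_OFF = 0x80;
    static final int PERCUSSION_CHANNEL = 9;    // "channel 10", counted from 0
    static final int CRASH_CYMBAL_1 = 49;

    // Status byte: command in the high nibble, channel (0-15) in the low nibble.
    static int status(int command, int channel) {
        if (channel < 0 || channel > 15) {
            throw new IllegalArgumentException("channel: " + channel);
        }
        return command | channel;
    }

    static int[] noteOn(int channel, int note, int velocity) {
        checkData(note);
        checkData(velocity);
        return new int[] { status(NOTE_ON, channel), note, velocity };
    }

    static int[] noteOff(int channel, int note) {
        checkData(note);
        return new int[] { status(NOTE_OFF, channel), note, 0 };
    }

    // MIDI data bytes are 7-bit values.
    private static void checkData(int value) {
        if (value < 0 || value > 127) {
            throw new IllegalArgumentException("data byte: " + value);
        }
    }
}
```

On a device, you would pass the three values from noteOn(PERCUSSION_CHANNEL, CRASH_CYMBAL_1, 127) to shortMidiEvent(), sleep for a second, and then send the note-off the same way.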

23.11. Recording Audio

If a device supports recording audio, the supports.audio.capture system property will have the value true. In this case, you can find out the content types that are supported for recording by examining the system property audio.encodings.
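The audio.encodings value is a list of formats separated by spaces, each written as parameters such as encoding=pcm&rate=8000&bits=8. The same syntax is used by the video.encodings and video.snapshot.encodings properties. The hypothetical helper below pulls out the distinct encoding names; the sample string in its comment is illustrative, not taken from a real device.

```java
import java.util.Vector;

// Parses capture-format lists such as
//   "encoding=pcm&rate=8000&bits=8 encoding=pcm&rate=16000 encoding=amr"
// (illustrative) from audio.encodings, video.encodings, or
// video.snapshot.encodings. Hypothetical helper.
class EncodingList {
    // Distinct encoding names, in order of first appearance.
    static String[] encodingNames(String property) {
        Vector names = new Vector();
        if (property == null) return new String[0];    // property not defined
        int i = 0, n = property.length();
        while (i < n) {
            while (i < n && property.charAt(i) == ' ') i++;   // skip delimiters
            int start = i;
            while (i < n && property.charAt(i) != ' ') i++;
            if (i > start) {
                String name = paramValue(property.substring(start, i), "encoding");
                if (name != null && !names.contains(name)) names.addElement(name);
            }
        }
        String[] out = new String[names.size()];
        names.copyInto(out);
        return out;
    }

    // Value of key in "k1=v1&k2=v2", or null if the key is absent.
    static String paramValue(String format, String key) {
        int pos = 0;
        while (true) {
            int amp = format.indexOf('&', pos);
            int end = amp < 0 ? format.length() : amp;
            String pair = format.substring(pos, end);
            int eq = pair.indexOf('=');
            if (eq > 0 && pair.substring(0, eq).equals(key)) return pair.substring(eq + 1);
            if (amp < 0) return null;
            pos = amp + 1;
        }
    }
}
```

You would feed it System.getProperty("audio.encodings"). If a name such as amr appears in the result, you could then request that format with a locator like capture://audio?encoding=amr.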

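To record audio, use a special locator capture://audio to create a Player. Then use RecordControl to control the recording. To record two seconds into a byte array, for instance, you realize the Player, get its RecordControl, pass a ByteArrayOutputStream to setRecordStream(), call startRecord() and start(), wait two seconds, and then call commit(). The bytes in the stream can then be played back through a new Player.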

A more accurate implementation would register a listener and wait until recording had actually begun (signaled by the RECORD_STARTED event) before kicking off the two-second timer. The simpler approach starts the timer right after the call to start().

Recording audio is a security-sensitive operation. You don’t want any old downloaded application recording your voice or the sounds around you. The device should ask for permission before recording. To avoid security prompts, you need to cryptographically sign your MIDlet suite and get the javax.microedition.media.control.RecordControl permission.

23.12. Capturing Video

If your device has a camera, the MSA specification requires that it be available via MMAPI. Capture means your application can show the camera’s output on the screen. In addition, you can record video (like a camcorder) or take snapshots of the video (like a camera).

If the system property supports.video.capture is true, then your device has a camera and you are in business.

For recording video, the video.encodings property contains a list of supported content types for captured video.

For taking snapshots, the video.snapshot.encodings property lists the image encodings that are supported.

Recording video is very similar to recording audio. You need to get a RecordControl, tell it where to store the video content, and start it and stop it.

Taking snapshots is even simpler. Use the getSnapshot() method in VideoControl. You have to supply an encoding, and you get back an array of bytes. It’s up to you what to do next. You could use one of the Image.createImage() methods, or you could write the byte array to a file or send it out to the network.

Using the camera is security-sensitive, just like recording audio, so your MIDlet suite needs permission to record video, to take snapshots, or both, depending on what it does.

23.13. You Can’t Make Everyone Happy

If you want to create an application that makes noise, what kind of content should you package with your application? Your answer will be the result of some tough balancing between application size, performance, and compatibility:

- Package one of the MSA-mandated formats: MIDI, WAV, or AMR-NB.

- Package several different kinds of resources with the application and select appropriate resources at runtime on the basis of the device’s capabilities. This technique is likely to result in a large MIDlet suite JAR file.

- On the basis of the device’s capabilities, download appropriate resources from the network. This option is attractive because you can keep the MIDlet suite JAR file small by packaging no content or some mostly compatible content. On the other hand, accessing the network is usually slow, and the first experience your users will have with your application is waiting for content to download from the network, hardly an auspicious beginning.

- Create customized MIDlet suite JAR files for different devices, or at least different groups of devices. This is a headache, but if it’s handled properly, it is a manageable problem.

Ideally, all your target devices implement MSA, and you can simply package MIDI, WAV, or AMR-NB content with your application. A more realistic scenario is that you aim your application at both MSA and pre-MSA devices and follow one of the other strategies described above.
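Selecting resources at runtime comes down to matching what the device reports against what you packaged. Here is a minimal sketch, assuming hypothetical resource paths and a preference order of your choosing. The content-type strings are common ones, but devices differ in how they name some formats (AAC in particular), so check the lists your target devices actually return.

```java
// Picks the most preferred packaged resource whose content type the device
// reports. Resource paths and the preference order are hypothetical.
class ContentChooser {
    // { content type, resource path in the JAR }, most preferred first.
    static final String[][] PREFERENCES = {
        { "audio/aac",   "/sounds/theme.aac" },
        { "audio/midi",  "/sounds/theme.mid" },
        { "audio/amr",   "/sounds/theme.amr" },
        { "audio/x-wav", "/sounds/theme.wav" }
    };

    // supported: e.g. the array from Manager.getSupportedContentTypes(null).
    // Returns { type, path }, or null if nothing matches.
    static String[] choose(String[] supported) {
        if (supported == null) return null;
        for (int i = 0; i < PREFERENCES.length; i++) {
            for (int j = 0; j < supported.length; j++) {
                if (PREFERENCES[i][0].equalsIgnoreCase(supported[j])) {
                    return PREFERENCES[i];
                }
            }
        }
        return null;
    }
}
```

With the chosen pair, you would open the resource with getResourceAsStream() and hand the stream and its content type to Manager.createPlayer(). A null result is the cue to fall back on another strategy, such as downloading content from the network.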

23.14. About MMMIDlet

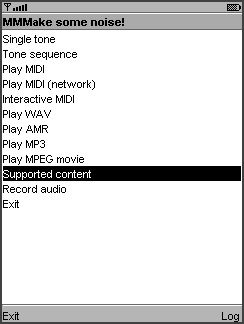

The example application for this chapter, MMMIDlet, is an extended version of the snippets of sample code sprinkled throughout this chapter (see Figure 23.2). It presents a menu of options that roughly correspond to the sections in this chapter.

Figure 23.2. The main menu of MMMIDlet

Two projects are included for this chapter. kb-ch23 contains the full spectrum of examples from this chapter. By contrast, kb-ch23-midp contains a pared-down MMMIDlet that will run on MIDP 2.0 devices without MMAPI.

MMMIDlet keeps the current Player as a member variable, mPlayer. If a new item is selected before the last one is finished, the current Player is stopped before the new one is created.

To aid in debugging, MMMIDlet also includes a log screen where information and messages about exceptions are printed.

The Play WAV item is implemented a little differently from the others. MMMIDlet attempts to play a WAV file using three different content types, just in case your device recognizes one and not another.

Choose Supported content from the menu to list all the content types your device can render. This is the output of Manager.getSupportedContentTypes(null) and is shown on MMMIDlet’s log screen.

23.15. Summary

The Mobile Media API (MMAPI) is the key to playing and recording sound and video content in MIDP applications. To play a sound or a video, ask Manager for an appropriate Player. You can identify the content with a URL or supply an InputStream and a MIME type. Players have a distinct life cycle, which is partly under your control. To begin playing content, call start(). Register a PlayerListener so you can be sure to call Player methods when the Player is in a known state.

A Player can have controls associated with it that are useful for manipulating the playback. One common control is VolumeControl. Playing video works much the same as playing audio, but you use a VideoControl to display the video on a Canvas or Form. MMAPI also enables recording audio and video and taking snapshots from a device’s camera. Although MMAPI is flexible and powerful, content capabilities can vary widely among devices. MSA requires support for MIDI, WAV, and AMR-NB.