How do stakeholders and the project team reach a common understanding of what to build? Most often, what to build is embodied in requirements, diagrams, and designs. How and when a team defines these vary widely. Rapid development approaches (e.g., eXtreme Programming[1] and MSF for Agile Software Development) use a well-structured build process to incrementally identify, build, test, and deploy solution features and functions (i.e., define a little, build a little). At the other end of the spectrum, more formal build approaches thoroughly plan and verify what needs to be done before proceeding with solution development. Both ends of the spectrum and everything in between have merit. The stakeholders and project team decide which approach best fits their solution delivery philosophy and project constraints.

For the purposes of this book, the following sections attempt to take an approach-neutral view of planning what to build.

Lead Advocacy Group: Architecture

Requirements iteratively gathered during planning further define and refine a solution described by high-level requirements gathered during an Envision Track. They collectively form a detailed description of a solution. As with high-level requirements, detailed requirements address various domains such as business, end users, operations, and systems requirements. They include specifying a solution’s behavior, how it interacts with its environment (be it other solutions, users, or administrators), features, functions, and expected operational characteristics (e.g., availability).

Requirements are costly to gather and maintain, especially because they often change before a team acts on them. These changes are caused in part by shifts in the business environment, in technology, and in project constraints. Compounding that, what is stated as a requirement sometimes turns out not to be what is expected. Therefore, a team should determine which requirements to collect and when.

Keep in mind that the purpose of requirements is to sufficiently, consistently, and clearly describe and understand a solution. Therefore, a team should gather the fewest requirements necessary to achieve that purpose. The challenge lies in determining that amount and in understanding how the team's blend of experience levels and abilities influences it.

Often, more experienced and skilled team members require less specificity. Conversely, team members with less experience and skill often require more specificity. The level of specificity is also influenced by tools and processes used to gather the requirements.

As mentioned earlier, a significant source of requirements is defining expected operational characteristics of a solution, commonly called qualities of service (QoS). For example, an availability requirement might be stated as "a solution shall provide service 99.999 percent of the time" (also referred to as achieving five 9s). Another example is a regulatory requirement stated as "a solution shall comply with HIPAA transactions standards."[2]
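To make an availability target such as five 9s concrete, it helps to convert the percentage into the downtime it permits. The following is a minimal sketch of that arithmetic; the function name `allowed_downtime_minutes` and the 365-day year are assumptions made for illustration, not part of any stated requirement.

```python
def allowed_downtime_minutes(availability_percent: float,
                             period_days: float = 365.0) -> float:
    """Return the minutes of downtime an availability target permits.

    Assumes a 365-day period by default and treats any outage,
    planned or unplanned, as downtime.
    """
    if not 0.0 <= availability_percent <= 100.0:
        raise ValueError("availability_percent must be between 0 and 100")
    total_minutes = period_days * 24 * 60
    return total_minutes * (1.0 - availability_percent / 100.0)


# Compare common targets, from two 9s up to five 9s.
for target in (99.0, 99.9, 99.99, 99.999):
    minutes = allowed_downtime_minutes(target)
    print(f"{target}% availability allows {minutes:.2f} minutes of downtime per year")
```

Under these assumptions, five 9s leaves a budget of roughly 5.26 minutes of downtime per year, which helps explain why stakeholders and the team need to agree on whether such a level is truly necessary.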

QoS are not a new concept. They are sometimes referred to as nonfunctional requirements and informally referred to as the "–ilities" because so many of them end that way (e.g., maintainability). QoS are commonly found within a Service Level Agreement (SLA). Because providing high QoS in all areas is often prohibitively costly, a project team and the stakeholders need to come to an agreement on what is necessary. Often an organization will be able to provide a basic level of QoS through good design principles.

Table 8-2 through Table 8-5 provide some common and emerging QoS to consider for a solution and its components.[3] Keep in mind that not all QoS are relevant to all solutions.

Table 8-2. Performance-Oriented Qualities of Service

| Performance | Description |
|---|---|
| Responsiveness | Allowable amount of delay when responding to an action, call, or event |
| Concurrency | Ability to perform well when operated concurrently with other aspects of a solution and with its environment |
| Efficiency | Capability to provide appropriate performance, relative to the resources used, under stated conditions |
| Fault tolerance | Capability to maintain a specified level of performance irrespective of faults or of infringement of system resources |
| Scalability | Ability to handle simultaneous operational load |
| Extensibility | Ability to extend a solution without significant rework |

Table 8-3. Trustworthiness-Oriented Qualities of Service

| Trustworthiness | Description |
|---|---|
| Security | Ability to prevent access and disruption by unauthorized users, viruses, worms, spyware, and other agents |
| Privacy | Ability to prevent unauthorized access or viewing of data |

Table 8-4. User Experience-Oriented Qualities of Service

| User Experience | Description |
|---|---|
| Accessibility | Extent to which individuals with disabilities have access to and use of information and data that is comparable to the access to and use by individuals without disabilities |
| Attractiveness | Measure of visual appeal to users |
| Compatibility | Conformance to conventions and expectations |
| Discoverability | Ability of a user to find and learn features of a solution |
| Ease of use | Cognitive efficiency with which a user can perform desired tasks |
| Localizability | Ability to be adapted to conform to the linguistic, cultural, and conventional needs and expectations of a specific group of users |

Table 8-5. Manageability-Oriented Qualities of Service

| Manageability | Description |
|---|---|
| Availability | Degree to which it is operational and accessible when required for use. Often expressed as a probability |
| Reliability | Capability to maintain a specified level of performance when used under specified conditions, commonly stated as mean time between failures (MTBF) |
| Installability and uninstallability | Capability to be installed in a specific environment and uninstalled without altering the environment’s initial state |
| Maintainability | Ease of modification to correct faults, improve performance or other attributes, or adapt to a changed environment |
| Monitorability | Extent to which health and operational data are automatically collected while operating |
| Operability | Extent to which a solution is controlled automatically while operating |
| Portability | Capability to be transferred from one environment to another |
| Recoverability | Capability to reestablish a specified level of performance and recover the data directly affected in the case of a failure |
| Testability | Degree to which the solution facilitates the establishment of test criteria and the performance of tests to determine whether those criteria have been met |
| Supportability | Extent to which operational problems are corrected |
| Reusability | Ability to repurpose solution components with little rework |
| Conformance | Adherence to applicable rules, regulations, policies, and standards |
| Interoperability | Capability to interact with other solutions in the environment |
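The availability and reliability entries in Table 8-5 are often linked in practice. One widely used approximation, which is not drawn from this chapter, estimates steady-state availability as MTBF divided by the sum of MTBF and mean time to repair (MTTR). The sketch below assumes that approximation; MTTR and the function name are introduced here purely for illustration.

```python
def steady_state_availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Estimate availability as MTBF / (MTBF + MTTR).

    Assumption: failures and repairs alternate, and the long-run
    fraction of time spent operating is what counts as availability.
    """
    if mtbf_hours <= 0:
        raise ValueError("mtbf_hours must be positive")
    if mttr_hours < 0:
        raise ValueError("mttr_hours must not be negative")
    return mtbf_hours / (mtbf_hours + mttr_hours)


# Hypothetical figures: a failure every 999 hours, one hour to restore service.
availability = steady_state_availability(mtbf_hours=999.0, mttr_hours=1.0)
print(f"Estimated availability: {availability:.3%}")
```

Seen this way, a team can pursue an availability target either by making failures rarer (reliability) or by restoring service faster (recoverability and supportability), which is one reason these qualities are negotiated together.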

In support of trade-off decision making and forming a versioned release strategy, a team needs a means to prioritize requirements (and features). There are many good approaches to prioritizing requirements.

A commonly used, simple approach is to sort the requirements into three categories: must have, should have, and nice to have. The must-have category contains requirements that are essential to a solution. Stakeholders, customers, and users would be dissatisfied if these requirements were not reflected in the delivered solution. The should-have category contains requirements that, although not critical to the success of a solution, have a compelling motivation to be in a solution. Stakeholders, customers, and users would not be pleased if these requirements were not reflected in the delivered solution. The nice-to-have category contains requirements that, although not necessary, would improve user experience and customer satisfaction. A similar, commonly used approach is referred to as MoSCoW (Must-, Should-, Could-, and Won’t-have requirements and features).
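The three-category scheme lends itself to a simple data structure that supports trade-off decisions for a versioned release. The following is a minimal sketch; the `Requirement` fields, the identifier scheme, the sample backlog, and the notion of a fixed `capacity` per release are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class Priority(IntEnum):
    """Three-category scheme; a lower value means a higher priority."""
    MUST_HAVE = 1
    SHOULD_HAVE = 2
    NICE_TO_HAVE = 3


@dataclass(frozen=True)
class Requirement:
    identifier: str  # hypothetical ID scheme, e.g. "REQ-1"
    summary: str
    priority: Priority


def order_by_priority(requirements):
    """Return requirements sorted must-have first; ties keep their input order."""
    return sorted(requirements, key=lambda r: r.priority)


def split_for_release(requirements, capacity):
    """Take the top `capacity` requirements for this release; defer the rest."""
    ordered = order_by_priority(requirements)
    return ordered[:capacity], ordered[capacity:]


# Hypothetical backlog used only to exercise the functions above.
backlog = [
    Requirement("REQ-1", "Export report as PDF", Priority.NICE_TO_HAVE),
    Requirement("REQ-2", "Authenticate users", Priority.MUST_HAVE),
    Requirement("REQ-3", "Audit log of changes", Priority.SHOULD_HAVE),
    Requirement("REQ-4", "Store customer records", Priority.MUST_HAVE),
]

in_scope, deferred = split_for_release(backlog, capacity=3)
for req in in_scope:
    print(req.priority.name, req.identifier, req.summary)
```

Counting requirements against a capacity is a deliberate simplification. A real team would more likely weigh estimated effort, and would treat any must-have item that falls outside the release as a signal to revisit scope rather than silently defer it.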

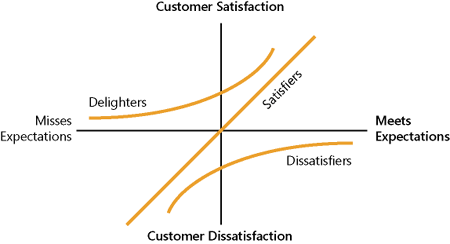

A more systematic approach to prioritizing requirements is to use Kano Analysis.[4] Kano Analysis holds that customer satisfaction is related to customer expectations surrounding the performance and functional completeness of a solution. This relationship is usually depicted as shown in Figure 8-2.

The horizontal axis indicates how fully the delivered solution meets customer expectations. The vertical axis indicates customer satisfaction. The three graphed lines convey three requirements categories, each relating customer satisfaction to customer expectations in a different way:

Dissatisfiers. Expected requirements that, when absent from a solution, generate significant dissatisfaction, whereas their presence only reduces that dissatisfaction toward a neutral feeling

Satisfiers. Requirements that generate proportional satisfaction with the quality and quantity of what is delivered

Delighters. Unanticipated requirements that when present in a solution generate significant satisfaction whereas their absence is acceptable and therefore does not generate dissatisfaction

Following this method, a team better understands customer satisfaction as related to the presence and absence of requirements and features. As such, when gathering requirements, ask respondents how they feel about different degrees of delivering on a requirement (i.e., from not delivering on the requirement, through moderately delivering, up to overdelivering on the requirement). The key is to find the optimal balance between requirement delivery and customer satisfaction.
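The qualitative shapes of the three categories can be sketched with simple curves. The functions below are illustrative stand-ins only, not formulas from Kano's work: delivery is assumed to range from 0.0 (not delivered) to 1.0 (fully delivered), satisfaction from -1.0 to 1.0, and the quadratic and linear forms are chosen merely to mimic the behavior described above.

```python
def _check(delivery: float) -> float:
    """Validate that delivery lies in the assumed 0.0 to 1.0 range."""
    if not 0.0 <= delivery <= 1.0:
        raise ValueError("delivery must be between 0.0 and 1.0")
    return delivery


def dissatisfier(delivery: float) -> float:
    """Absent: strong dissatisfaction. Fully delivered: only neutral."""
    return -((1.0 - _check(delivery)) ** 2)


def satisfier(delivery: float) -> float:
    """Satisfaction proportional to how much is delivered."""
    return 2.0 * _check(delivery) - 1.0


def delighter(delivery: float) -> float:
    """Absent: neutral. Present: increasingly strong satisfaction."""
    return _check(delivery) ** 2


# Tabulate the three toy curves at a few delivery levels.
print(f"{'delivery':>8} {'dissatisfier':>13} {'satisfier':>10} {'delighter':>10}")
for level in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"{level:>8.2f} {dissatisfier(level):>13.2f} "
          f"{satisfier(level):>10.2f} {delighter(level):>10.2f}")
```

Even in this toy form, the curves show why investing further in a dissatisfier can never lift satisfaction above neutral, whereas a modest investment in a delighter costs nothing if omitted and pays off once delivered.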

The Kano Analysis diagram can also be used to communicate graphically the expected proportions of delighters, satisfiers, and dissatisfiers in each iteration and in each release. As depicted in Figure 8-3, each iteration encompasses the indicated proportions of the three requirements categories. The figure also shows the expected proportion for the release, identified as the minimum acceptable level.

Lead Advocacy Group: Product Management

Part of forming a shared vision is presenting the requirements in a form that all team members can understand sufficiently, consistently, and clearly. This often involves a detailed description, as viewed from a user perspective, of what a solution will look like and how it will behave. With increasing usage of tools to retain and present this information, a growing trend is for teams not to capture this information in a physical document called a functional specification. Rather, the constructs of a functional specification are contained within a combination of tools and other documents.

A functional specification represents a detailed description of a solution, detailed usage scenarios, and design goals and contains detailed requirements specifying its behavior and how it interacts with its environment (be it other solutions, users, or administrators). Requirements cover all aspects of a solution and often come from various domains such as business, end users, operations, and systems requirements.

Because the principles and underpinnings of a functional specification remain valid, it is up to the team to decide whether a physical document is needed. Beyond describing a solution, a functional specification serves many purposes:

A contract between the team and stakeholders on what will be delivered

A basis for estimating work

A common reference point to synchronize the whole team

A logical way to break down the problem and modularize a solution

A path and structure to use for planning, scheduling, and building a solution

A functional specification is the basis for building the master project plan and schedule as well as a solution design. As such, once baselined, a functional specification should be changed only through change control.

[1] K. Beck, eXtreme Programming Explained: Embrace Change (Reading, MA: Addison-Wesley, 2000).

[2] Health Insurance Portability and Accountability Act of 1996 (HIPAA), Public Law 104–191, 104th Congress (August 21, 1996).

[3] Sam Guckenheimer, Software Engineering with Microsoft Visual Studio Team System (Upper Saddle River, NJ: Addison-Wesley Professional, 2006), 67–71.

[4] David Walden, "Kano’s Methods for Understanding Customer-Defined Quality: Introduction to Kano’s Methods," Center for Quality Management Journal 2, no. 4 (Fall 1993), 1–28.