Most of the Pentaho engines, including the engines mentioned earlier, were created as community projects and later adopted by Pentaho. The PDI engine is no exception: Pentaho Data Integration is the new name for the business intelligence tool born as Kettle.

Note

The name Kettle didn't come from the recursive acronym it has now (Kettle Extraction, Transportation, Transformation, and Loading Environment). It came from KDE Extraction, Transportation, Transformation, and Loading Environment, because the tool was originally planned to be written on top of KDE, as mentioned in the introduction to this book.

In April 2006, the Kettle project was acquired by the Pentaho Corporation, and Matt Casters, Kettle's founder, joined the Pentaho team as a Data Integration Architect.

When Pentaho announced the acquisition, James Dixon, the Chief Technology Officer, said:

We reviewed many alternatives for open source data integration, and Kettle clearly had the best architecture, richest functionality, and most mature user interface. The open architecture and superior technology of the Pentaho BI Platform and Kettle allowed us to deliver integration in only a few days, and make that integration available to the community.

By joining forces with Pentaho, Kettle benefited from a huge developer community, as well as from a company that would support the future of the project.

From that moment on, the tool has grown constantly. A new release becomes available every few months, bringing users better performance, improvements to existing functionality, new functionality, greater ease of use, and major changes in look and feel. The following is a timeline of the major events related to PDI since its acquisition by Pentaho:

- June 2006: PDI 2.3 is released. Numerous developers had joined the project, and bug fixes were contributed by people in various regions of the world. Among other changes, the version included enhancements for large-scale environments and multilingual capabilities.

- February 2007: Almost seven months after the last major revision, PDI 2.4 is released, including remote execution and clustering support (more on this in Chapter 13), enhanced database support, and a single designer for the two main elements you design in Kettle: jobs and transformations.

- May 2007: PDI 2.5 is released with many new features, the most important being advanced error handling.

- November 2007: PDI 3.0 emerges totally redesigned. Its core library was changed to achieve a massive gain in performance. The look and feel also changed completely.

- October 2008: PDI 3.1 is released as an easier-to-use tool, along with a lot of new functionality.

- April 2009: PDI 3.2 is released with a remarkably large number of changes for a minor version: new functionality, visualization improvements, performance improvements, and a huge pile of bug fixes. The main change in this version was the incorporation of dynamic clustering (see Chapter 13 for details).

- 2010: PDI 4.0 will be released, delivering mostly improvements in enterprise features such as version control.

Judging by its name, Pentaho Data Integration, you could think of PDI as a tool to integrate data.

If you look at its original name, K.E.T.T.L.E., you must conclude that it is a tool used for ETL processes, which, as you may know, are most frequently seen in data warehouse environments.

In fact, PDI not only serves as a data integrator or an ETL tool; it is so powerful that it is common to see it used for those and for many other purposes. Here are some examples.

Loading a data warehouse or a data mart involves many steps, and there are many variants depending on the business area or business rules. However, in every case, the process involves the following steps:

- Extracting information from one or more databases, text files, and other sources. The extraction process may include the task of validating and discarding data that doesn't match expected patterns or rules.

- Transforming the extracted data to meet the business and technical requirements of the target. Transformation implies tasks such as converting data types, doing some calculations, filtering irrelevant data, and summarizing.

- Loading the transformed data into the target database. Depending on the requirements, the loading may overwrite the existing information, or may add new information each time it is executed.
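To make the three stages concrete, here is a minimal sketch in plain Python, not in Kettle itself. It assumes a hypothetical CSV source with `id`, `name`, and `amount` columns and uses an in-memory SQLite table named `sales_summary` (also hypothetical) as the target. Extraction discards rows that fail validation, transformation converts types and summarizes, and loading either overwrites or appends.

```python
import csv
import io
import sqlite3

# Hypothetical source data; in a real process this would come from files or databases.
SOURCE_CSV = """id,name,amount
1,alice,10.5
2,bob,not-a-number
3,alice,4.5
4,carol,7
"""

def extract(text):
    """Read the rows and discard those whose amount is not numeric."""
    valid = []
    for row in csv.DictReader(io.StringIO(text)):
        try:
            float(row["amount"])
        except ValueError:
            continue  # discard data that doesn't match the expected pattern
        valid.append(row)
    return valid

def transform(rows):
    """Convert data types and summarize the amount per name."""
    totals = {}
    for row in rows:
        name = row["name"].strip().title()
        totals[name] = totals.get(name, 0.0) + float(row["amount"])
    return sorted(totals.items())

def load(conn, summary, overwrite=True):
    """Write the summary to the target table, replacing or appending rows."""
    conn.execute("CREATE TABLE IF NOT EXISTS sales_summary (name TEXT, total REAL)")
    if overwrite:
        conn.execute("DELETE FROM sales_summary")  # overwrite existing information
    conn.executemany("INSERT INTO sales_summary VALUES (?, ?)", summary)
    conn.commit()

conn = sqlite3.connect(":memory:")
load(conn, transform(extract(SOURCE_CSV)))
print(conn.execute("SELECT name, total FROM sales_summary ORDER BY name").fetchall())
```

The `overwrite` flag mirrors the choice described in the loading step: with `overwrite=False`, each run adds new rows instead of replacing the existing ones. In Kettle, the equivalent stages are designed graphically as steps of a transformation rather than written as code.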

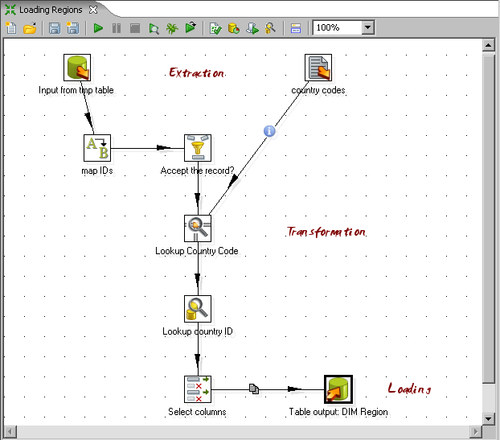

Kettle comes ready to do every stage of this loading process. The following sample screenshot shows a simple ETL designed with Kettle:

Imagine two similar companies that need to merge their databases in order to have a unified view of the data, or a single company that has to combine information from a main ERP application and a CRM application that are not connected to each other. These are just two of hundreds of examples where data integration is needed. Integrating data is not just a matter of gathering and mixing data; some conversion, validation, and transport of data have to be done. Kettle is meant to do all those tasks.

Why do we need data to be correct and accurate? There are many reasons: for the efficiency of the business, to generate trusted conclusions in data mining or statistical studies, to succeed when integrating data, and so on. Data cleansing is about ensuring that the data is correct and precise. This can be ensured by verifying whether the data meets certain rules, discarding or correcting the records that don't follow the expected pattern, setting default values for missing data, eliminating duplicated information, normalizing data to conform to minimum and maximum values, and so on. Kettle makes all of these tasks possible, thanks to its vast set of transformation and validation capabilities.
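The cleansing tasks listed above can be illustrated with a small, hypothetical rule set. This Python sketch is not how Kettle implements cleansing; it assumes customer records with `email`, `country`, and `age` fields, treats an email without `@` as invalid, uses `"unknown"` as the default country, identifies duplicates by email, and interprets "normalizing to minimum and maximum values" as clamping the age into an assumed 0 to 120 range.

```python
# Hypothetical cleansing rules; field names, defaults, and limits are assumptions.
DEFAULT_COUNTRY = "unknown"
MIN_AGE, MAX_AGE = 0, 120

def cleanse(records):
    seen_emails = set()
    clean = []
    for rec in records:
        email = (rec.get("email") or "").strip().lower()
        # Verify a rule: discard records whose email doesn't follow the expected pattern.
        if "@" not in email:
            continue
        # Eliminate duplicated information: keep only the first record per email.
        if email in seen_emails:
            continue
        seen_emails.add(email)
        # Set a default value for missing data.
        country = rec.get("country") or DEFAULT_COUNTRY
        # Clamp the age so it falls between the minimum and maximum values.
        age = min(max(int(rec.get("age", 0)), MIN_AGE), MAX_AGE)
        clean.append({"email": email, "country": country, "age": age})
    return clean

raw = [
    {"email": "Ann@Example.com", "country": "AR", "age": 34},
    {"email": "ann@example.com", "country": "AR", "age": 34},  # duplicate
    {"email": "no-at-sign", "country": "US", "age": 50},        # invalid email
    {"email": "bo@example.com", "country": None, "age": 150},   # missing country, age too high
]
for row in cleanse(raw):
    print(row)
```

Only two records survive: the first Ann record (the second is a duplicate once the email is lowercased) and Bo's record with its country defaulted and its age clamped to 120.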

Think of a company of any size that uses a commercial ERP application. One day the owners realize that the licences are consuming a significant share of their budget, so they decide to migrate to an open source ERP. The company will no longer have to pay for licences, but to make the change, they will have to migrate the information. Obviously, it is not an option to start from scratch or to type the information by hand. Kettle makes the migration possible, thanks to its ability to interact with most kinds of sources and destinations, such as plain files, commercial and free databases, and spreadsheets.

Sometimes you are required by government regulations to export certain data to be processed by legacy systems. You can't just print and deliver some reports containing the required data. The data has to follow a rigid format, with columns that obey certain rules (size, format, content) and separate header and trailer records, to name just some common demands. Kettle has the power to take raw data from the source and generate these kinds of ad hoc reports.
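As an illustration of such a rigid format, the following Python sketch produces a fixed-width file with a header record, detail records, and a trailer record. The layout is entirely hypothetical and not taken from any real regulation: each line starts with a record type code (`H`, `D`, or `T`), the detail fields are a zero-padded 6-digit ID, a left-aligned 10-character name, and a 9-digit amount in cents, and the trailer carries the record count and the total amount.

```python
from datetime import date

# Hypothetical fixed-width layout; record codes and field widths are assumptions.
def header(run_date):
    # Header record: "H" followed by the run date as YYYYMMDD.
    return "H" + run_date.strftime("%Y%m%d")

def detail(rec_id, name, amount_cents):
    # Enforce the size rule instead of silently truncating the field.
    if len(name) > 10:
        raise ValueError(f"name too long for 10-char field: {name!r}")
    return "D" + f"{rec_id:06d}" + f"{name:<10}" + f"{amount_cents:09d}"

def trailer(count, total_cents):
    # Trailer record: number of detail records and the sum of their amounts.
    return "T" + f"{count:06d}" + f"{total_cents:012d}"

def export(rows, run_date):
    lines = [header(run_date)]
    lines += [detail(*row) for row in rows]
    lines.append(trailer(len(rows), sum(r[2] for r in rows)))
    return "\n".join(lines)

print(export([(1, "ACME", 12550), (2, "GLOBEX", 900)], date(2009, 6, 30)))
```

Running it prints four lines: `H20090630`, the two 26-character detail records, and `T000002000000013450`, whose totals let the receiving system check that nothing was lost in transit.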

The previous examples show typical uses of PDI as a standalone application. However, Kettle may also be used as part of a process inside the Pentaho BI Platform. Embedded in the Pentaho application, Kettle can do many things: preprocessing data for an online report, sending emails on a schedule, or generating spreadsheet reports.

Note

You'll find more on this in Chapter 13. However, the use of PDI integrated with the BI Suite is beyond the scope of this book.