WCF provides support for queued calls using the NetMsmqBinding. With this binding, instead of transporting the messages over TCP, HTTP, or IPC, WCF transports the messages over MSMQ. WCF packages each SOAP message into an MSMQ message and posts it to a designated queue. Note that there is no direct mapping of WCF messages to MSMQ messages, just like there is no direct mapping of WCF messages to TCP packets. A single MSMQ message can contain multiple WCF messages, or just a single one, according to the contract session mode (as discussed at length later). In effect, instead of sending the WCF message to a live service, the client posts the message to an MSMQ queue. All that the client sees and interacts with is the queue, not a service endpoint. As a result, the calls are inherently asynchronous (because they will execute later, when the service processes the messages) and disconnected (because the service or client may interact with local queues).

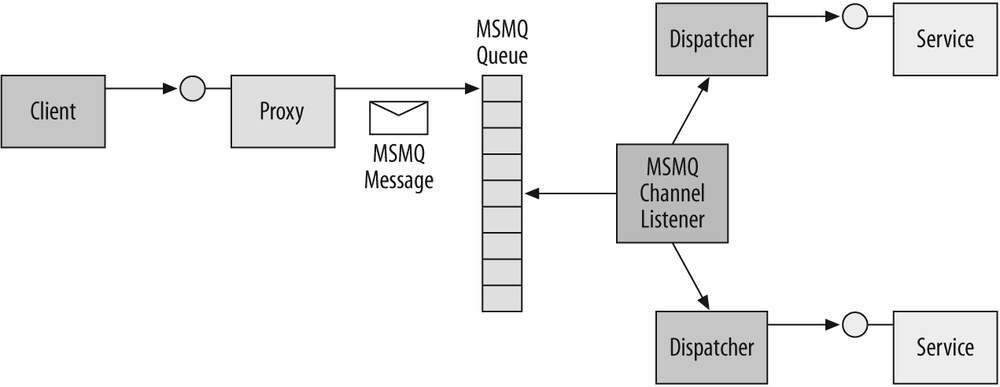

As with every WCF service, in the case of a queued service the client interacts with a proxy, as shown in Figure 9-2.

However, since the proxy is configured to use the MSMQ binding, it does not send the WCF message to any particular service. Instead, it converts the call (or calls) to an MSMQ message (or messages) and posts it to the queue specified in the endpoint's address. On the service side, when a service host with a queued endpoint is launched, the host installs a queue listener, which is similar conceptually to the listener associated with a port when using TCP or HTTP. The queue's listener detects that there is a message in the queue, de-queues the message, and then creates the host side's chain of interceptors, ending with a dispatcher. The dispatcher calls the service instance as usual. If multiple messages are posted to the queue, the listener can create new instances as fast as the messages come off the queue, resulting in asynchronous, disconnected, and concurrent calls.

If the host is offline, messages destined for the service will simply remain pending in the queue. The next time the host is connected, the messages will be played to the service. Obviously, if both the client and the host are alive and running and are connected, the host will process the calls immediately.

A potentially disconnected call made against a queue cannot possibly return any values, because no service logic is invoked at the time the message is dispatched to the queue. Not only that, but the call may be dispatched to the service and processed after the client application has shut down, when there is no client available to process the returned values. In much the same way, the call cannot return to the client any service-side exceptions, and there may not be a client around to catch and handle the exceptions anyway. In fact, WCF disallows using fault contracts on queued operations. Since the client cannot be blocked by invoking the operation (or rather, the client is blocked, but only for the brief moment it takes to queue up the message), the queued calls are inherently asynchronous from the client's perspective. All of these are the classic characteristics of one-way calls. Consequently, any contract exposed by an endpoint that uses the NetMsmqBinding can have only one-way operations, and WCF verifies this at service (and proxy) load time:

    //Only one-way calls allowed on queued contracts
    [ServiceContract]
    interface IMyContract
    {
        [OperationContract(IsOneWay = true)]
        void MyMethod( );
    }

Because the interaction with MSMQ is encapsulated in the binding, there is nothing in the service or client invocation code pertaining to the fact that the calls are queued. The queued service and client code look like any other WCF service and client code, as shown in Example 9-1.

Example 9-1. Implementing and consuming a queued service

    //////////////////////// Service Side ///////////////////////////
    [ServiceContract]
    interface IMyContract
    {
        [OperationContract(IsOneWay = true)]
        void MyMethod( );
    }
    class MyService : IMyContract
    {
        public void MyMethod( )
        {...}
    }
    //////////////////////// Client Side ///////////////////////////
    MyContractClient proxy = new MyContractClient( );
    proxy.MyMethod( );
    proxy.Close( );

When you define an endpoint for a queued service, the endpoint address must contain the queue's name and designation (that is, the type of the queue). MSMQ defines two types of queues: public and private. Public queues require an MSMQ domain controller installation or Active Directory integration and can be accessed across machine boundaries. Applications in production often require public queues due to the secure and disconnected nature of such queues. Private queues are local to the machine on which they reside and do not require a domain controller. Such a deployment of MSMQ is called a workgroup installation. During development, and for private queues they set up and administer, developers usually resort to a workgroup installation.

You designate the queue type (private or public) as part of the queued endpoint address:

    <endpoint
        address = "net.msmq://localhost/private/MyServiceQueue"
        binding = "netMsmqBinding"
        ...
    />

In the case of a public queue, you can omit the public designator and have WCF infer the queue type. With private queues, you must include the designator. Also note that there is no $ sign in the queue's type.

When you're using private queues in a workgroup installation, you typically disable MSMQ security on the client and service sides. Chapter 10 discusses in detail how to secure WCF calls, including queued calls. Briefly, the default MSMQ security configuration expects users to present certificates for authentication, and MSMQ certificate-based security requires an MSMQ domain controller. Alternatively, selecting Windows security for transport security over MSMQ requires Active Directory integration, which is not possible with an MSMQ workgroup installation. For now, Example 9-2 shows how to disable MSMQ security.

Example 9-2. Disabling MSMQ security

    <system.serviceModel>
        ...
        <endpoint name = ...
            address = "net.msmq://localhost/private/MyServiceQueue"
            binding = "netMsmqBinding"
            bindingConfiguration = "NoMSMQSecurity"
            contract = "..."
        />
        ...
        <bindings>
            <netMsmqBinding>
                <binding name = "NoMSMQSecurity">
                    <security mode = "None"/>
                </binding>
            </netMsmqBinding>
        </bindings>
    </system.serviceModel>

Tip

If you must for some reason enable security for development in a workgroup installation, you can configure the service to use message security with username credentials.
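
The binding settings of Example 9-2 can also be applied in code rather than in the config file. The following is a minimal sketch, assuming a .NET Framework project that references System.ServiceModel; the class name UnsecuredQueueBinding is hypothetical and exists only for illustration.

```csharp
using System.ServiceModel;

// Hypothetical helper, not part of WCF: builds a NetMsmqBinding that
// mirrors the "NoMSMQSecurity" binding section of Example 9-2.
public static class UnsecuredQueueBinding
{
    public static NetMsmqBinding Create()
    {
        // NetMsmqSecurityMode.None turns off both transport and message security
        return new NetMsmqBinding(NetMsmqSecurityMode.None);
    }
}
```

The returned binding can be passed wherever a binding is expected, such as a proxy constructor that accepts a binding and an address. Both the client and the service need the same setting, just as with the config file.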

On both the service and the client side, the queue must exist before client calls are queued up against it. There are several options for creating the queue. The administrator (or the developer, during development) can use the MSMQ control panel applet to create the queue, but that is a manual step that should be automated. The host process can use the API of System.Messaging to verify that the queue exists before opening the host. The class MessageQueue offers the Exists( ) method for verifying that a queue is created, and the Create( ) methods for creating a queue:

    public class MessageQueue : ...
    {
        public static MessageQueue Create(string path); //Nontransactional
        public static MessageQueue Create(string path,bool transactional);
        public static bool Exists(string path);
        public void Purge( );
        //More members
    }

If the queue is not present, the host process can first create it and then proceed to open the host. Example 9-3 demonstrates this sequence.

Example 9-3. Verifying a queue on the host

    ServiceHost host = new ServiceHost(typeof(MyService));
    if(MessageQueue.Exists(@".\private$\MyServiceQueue") == false)
    {
        MessageQueue.Create(@".\private$\MyServiceQueue",true);
    }
    host.Open( );

In this example, the host verifies against the MSMQ installation on its own machine that the queue is present before opening the host. If it needs to, the hosting code creates the queue. Note the use of the true value for the transactional queue, as discussed later. Note also the use of the $ sign in the queue designation.

The obvious problem with Example 9-3 is that it hardcodes the queue name, not once, but twice. It is preferable to read the queue name from the application config file by storing it in an application setting, although there are problems even with that approach. First, you have to constantly synchronize the queue name in the application settings and in the endpoint's address. Second, you still have to repeat this code every time you host a queued service. Fortunately, it is possible to encapsulate and automate the code in Example 9-3 in my ServiceHost<T>, as shown in Example 9-4.

Example 9-4. Creating the queue in ServiceHost<T>

    public class ServiceHost<T> : ServiceHost
    {
        protected override void OnOpening( )
        {
            foreach(ServiceEndpoint endpoint in Description.Endpoints)
            {
                endpoint.VerifyQueue( );
            }
            base.OnOpening( );
        }
        //More members
    }
    public static class QueuedServiceHelper
    {
        public static void VerifyQueue(this ServiceEndpoint endpoint)
        {
            if(endpoint.Binding is NetMsmqBinding)
            {
                string queue = GetQueueFromUri(endpoint.Address.Uri);
                if(MessageQueue.Exists(queue) == false)
                {
                    MessageQueue.Create(queue,true);
                }
            }
        }
        //Parses the queue name out of the address
        static string GetQueueFromUri(Uri uri)
        {...}
    }

In Example 9-4, ServiceHost<T> overrides the OnOpening( ) method of its base class. This method is called before opening the host, but after calling the Open( ) method. ServiceHost<T> iterates over the collection of configured endpoints and, for each endpoint, calls the extension method VerifyQueue( ) of the ServiceEndpoint type. If the binding used by the endpoint is NetMsmqBinding (that is, if queued calls are expected), the static extension method VerifyQueue( ) of QueuedServiceHelper parses the queue's name out of the endpoint's address and uses code similar to that in Example 9-3 to create the queue if needed.
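
Example 9-4 elides the body of GetQueueFromUri( ). The following is one possible sketch of such a parser, not the actual implementation: it assumes the address has the form net.msmq://machine/private/queue or net.msmq://machine/queue, maps localhost to the "." shorthand, and adds the $ sign that the MSMQ path requires for private queues. The class name QueuePathSketch is hypothetical.

```csharp
using System;

// Hypothetical stand-in for the elided GetQueueFromUri( ) helper.
public static class QueuePathSketch
{
    public static string GetQueueFromUri(Uri uri)
    {
        if (uri == null)
        {
            throw new ArgumentNullException("uri");
        }
        if (uri.Scheme != "net.msmq")
        {
            throw new ArgumentException("Expected a net.msmq address", "uri");
        }

        // MSMQ paths use "." for the local machine
        string machine = uri.Host == "localhost" ? "." : uri.Host;
        string[] parts = uri.AbsolutePath.Split(
            new[] { '/' }, StringSplitOptions.RemoveEmptyEntries);

        // net.msmq://machine/private/queue -> machine\private$\queue
        if (parts.Length == 2 &&
            parts[0].Equals("private", StringComparison.OrdinalIgnoreCase))
        {
            return machine + @"\private$\" + parts[1];
        }
        // net.msmq://machine/public/queue -> machine\queue
        if (parts.Length == 2 &&
            parts[0].Equals("public", StringComparison.OrdinalIgnoreCase))
        {
            return machine + @"\" + parts[1];
        }
        // net.msmq://machine/queue -> machine\queue (public designator omitted)
        if (parts.Length == 1)
        {
            return machine + @"\" + parts[0];
        }
        throw new ArgumentException("Unrecognized queue address: " + uri, "uri");
    }
}
```

The sketch does not unescape the path, so queue names containing characters that a URI escapes would need additional handling.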

Using ServiceHost<T>, Example 9-3 is reduced to:

    ServiceHost<MyService> host = new ServiceHost<MyService>( );
    host.Open( );

The client must also verify that the queue exists before dispatching calls to it. Example 9-5 shows the required steps on the client side.

Example 9-5. Verifying the queue by the client

    if(MessageQueue.Exists(@".\private$\MyServiceQueue") == false)
    {
        MessageQueue.Create(@".\private$\MyServiceQueue",true);
    }
    MyContractClient proxy = new MyContractClient( );
    proxy.MyMethod( );
    proxy.Close( );

Again, you should not hardcode the queue name and should instead read the queue name from the application config file by storing it in an application setting. And again, you will face the challenges of keeping the queue name synchronized in the application settings and in the endpoint's address, and of writing queue verification logic everywhere your clients use the queued service. You can use QueuedServiceHelper directly on the endpoint behind the proxy, but that forces you to create the proxy (or a ServiceEndpoint instance) just to verify the queue. You can, however, extend my QueuedServiceHelper to streamline and support client-side queue verification, as shown in Example 9-6.

Example 9-6. Extending QueuedServiceHelper to verify the queue on the client side

    public static class QueuedServiceHelper
    {
        public static void VerifyQueues( )
        {
            Configuration config = ConfigurationManager.OpenExeConfiguration(
                ConfigurationUserLevel.None);
            ServiceModelSectionGroup sectionGroup =
                ServiceModelSectionGroup.GetSectionGroup(config);
            foreach(ChannelEndpointElement endpointElement in
                sectionGroup.Client.Endpoints)
            {
                if(endpointElement.Binding == "netMsmqBinding")
                {
                    string queue = GetQueueFromUri(endpointElement.Address);
                    if(MessageQueue.Exists(queue) == false)
                    {
                        MessageQueue.Create(queue,true);
                    }
                }
            }
        }
        //More members
    }

Example 9-6 uses the type-safe programming model offered by the ConfigurationManager class to parse a configuration file. It loads the WCF section (the ServiceModelSectionGroup) and iterates over all the endpoints defined in the client config file. For each endpoint that is configured with the MSMQ binding, VerifyQueues( ) creates the queue if required.

Using QueuedServiceHelper, Example 9-5 is reduced to:

    QueuedServiceHelper.VerifyQueues( );
    MyContractClient proxy = new MyContractClient( );
    proxy.MyMethod( );
    proxy.Close( );

Note that the client application needs to call QueuedServiceHelper.VerifyQueues( ) just once anywhere in the application, before issuing the queued calls.

If the client is not using a config file to create the proxy (or is using a channel factory), the client can still use the extension method VerifyQueue( ) of the ServiceEndpoint class:

    EndpointAddress address = new EndpointAddress(...);
    Binding binding = new NetMsmqBinding(...); //Can still read binding from config
    MyContractClient proxy = new MyContractClient(binding,address);
    proxy.Endpoint.VerifyQueue( );
    proxy.MyMethod( );
    proxy.Close( );

When a host is launched, it may already have messages in queues, received by MSMQ while the host was offline, and the host will immediately start processing these messages. Dealing with this very scenario is one of the core features of queued services, as it enables you to have disconnected services. While this is, therefore, exactly the sort of behavior you would like when deploying a queued service, it is typically a hindrance in debugging. Imagine a debug session of a queued service. The client issues a few calls and the service begins processing the first call, but while stepping through the code you notice a defect. You stop debugging, change the service code, and relaunch the host, only to have it process the remaining messages in the queue from the previous debug session, even if those messages break the new service code. Usually, messages from one debug session should not seed the next one. The solution is to programmatically purge the queues when the host shuts down, in debug mode only. You can streamline this with my ServiceHost<T>, as shown in Example 9-7.

Example 9-7. Purging the queues on host shutdown during debugging

    public static class QueuedServiceHelper
    {
        public static void PurgeQueue(ServiceEndpoint endpoint)
        {
            if(endpoint.Binding is NetMsmqBinding)
            {
                string queueName = GetQueueFromUri(endpoint.Address.Uri);
                if(MessageQueue.Exists(queueName) == true)
                {
                    MessageQueue queue = new MessageQueue(queueName);
                    queue.Purge( );
                }
            }
        }
        //More members
    }
    public class ServiceHost<T> : ServiceHost
    {
        protected override void OnClosing( )
        {
            PurgeQueues( );
            //More cleanup if necessary
            base.OnClosing( );
        }
        [Conditional("DEBUG")]
        void PurgeQueues( )
        {
            foreach(ServiceEndpoint endpoint in Description.Endpoints)
            {
                QueuedServiceHelper.PurgeQueue(endpoint);
            }
        }
        //More members
    }

In this example, the QueuedServiceHelper class offers the static method PurgeQueue( ). As its name implies, PurgeQueue( ) accepts a service endpoint. If the binding used by that endpoint is the NetMsmqBinding, PurgeQueue( ) extracts the queue name out of the endpoint's address, creates a new MessageQueue object, and purges it. ServiceHost<T> overrides the OnClosing( ) method, which is called when the host shuts down gracefully. It then calls the private PurgeQueues( ) method. PurgeQueues( ) is marked with the Conditional attribute, using DEBUG as a condition. This means that while the body of PurgeQueues( ) always compiles, its call sites are conditioned on the DEBUG symbol. In debug mode only, OnClosing( ) will actually call PurgeQueues( ). PurgeQueues( ) iterates over all endpoints of the host, calling QueuedServiceHelper.PurgeQueue( ) on each.

Tip

The Conditional attribute is the preferred way in .NET for using conditional compilation and avoiding the pitfalls of explicit conditional compilation with #if.
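
To illustrate how the Conditional attribute affects call sites rather than the method itself, here is a small self-contained sketch. The symbol PURGE_DEMO and the class ConditionalDemo are hypothetical; the symbol is chosen so that it is not defined in an ordinary build.

```csharp
using System.Diagnostics;

public static class ConditionalDemo
{
    public static int Calls;

    // The method body always compiles. The compiler emits calls to it
    // only where the PURGE_DEMO symbol is defined at the call site.
    [Conditional("PURGE_DEMO")]
    static void Purge()
    {
        Calls++;
    }

    public static int Run()
    {
        Purge(); // Omitted by the compiler unless PURGE_DEMO is defined
        return Calls;
    }
}
```

In a build where PURGE_DEMO is not defined, Run( ) returns 0 because the call to Purge( ) is never emitted. With #if, the same effect requires wrapping every call site by hand.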

WCF requires you to always dedicate a queue per endpoint for each service. This means a service with two contracts needs two queues for the two corresponding endpoints:

    <service name = "MyService">
        <endpoint
            address = "net.msmq://localhost/private/MyServiceQueue1"
            binding = "netMsmqBinding"
            contract = "IMyContract"
        />
        <endpoint
            address = "net.msmq://localhost/private/MyServiceQueue2"
            binding = "netMsmqBinding"
            contract = "IMyOtherContract"
        />
    </service>

The reason is that the client actually interacts with a queue, not a service endpoint. In fact, there may not even be a service at all; there may only be a queue. Two distinct endpoints cannot share queues because they will get each other's messages. Since the WCF messages in the MSMQ messages will not match, WCF will silently discard those messages it deems invalid, and you will lose the calls. Much the same way, two polymorphic endpoints on two services cannot share a queue, because they will eat each other's messages.

WCF cannot exchange metadata over MSMQ. Consequently, it is customary for even a service that will always have only queued calls to also expose a MEX endpoint or to enable metadata exchange over HTTP-GET, because the service's clients still need a way to retrieve the service description and bind against it.
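
As a rough sketch of that arrangement, the following code builds a host that exposes one queued endpoint together with HTTP-GET metadata and a MEX endpoint over HTTP. It assumes a .NET Framework project that references System.ServiceModel. The base address http://localhost:8000/, the relative address MEX, and the class name QueuedHostWithMetadata are assumptions made for illustration, and the contract and service merely repeat Example 9-1 to keep the sketch self-contained.

```csharp
using System;
using System.ServiceModel;
using System.ServiceModel.Description;

[ServiceContract]
interface IMyContract
{
    [OperationContract(IsOneWay = true)]
    void MyMethod();
}

class MyService : IMyContract
{
    public void MyMethod() { }
}

// Hypothetical helper for illustration only
static class QueuedHostWithMetadata
{
    public static ServiceHost Create()
    {
        // The HTTP base address (an assumption) serves only the metadata
        ServiceHost host = new ServiceHost(typeof(MyService),
                                           new Uri("http://localhost:8000/"));

        // The business endpoint is queued
        host.AddServiceEndpoint(typeof(IMyContract),
                                new NetMsmqBinding(NetMsmqSecurityMode.None),
                                "net.msmq://localhost/private/MyServiceQueue");

        // The metadata behavior must be present before adding a MEX endpoint
        ServiceMetadataBehavior metadata = new ServiceMetadataBehavior();
        metadata.HttpGetEnabled = true;
        host.Description.Behaviors.Add(metadata);

        host.AddServiceEndpoint(typeof(IMetadataExchange),
                                MetadataExchangeBindings.CreateMexHttpBinding(),
                                "MEX");
        return host;
    }
}
```

The caller still has to verify that the queue exists and then call Open( ) on the returned host, as in Example 9-3.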