Chapter 9

Psychology in Attacks

The job isn't always just to “get in.” Usually there's work to be done after the initial breach; access is just the first hurdle. Following an initial compromise, you will try to gain traction and maintain your place within the environment. For example, after getting into a system, a pentester will try to escalate their privileges to administrator level so that they can install an application or modify, exfiltrate, or hide data. A physical pentester will attempt something similar, typically by moving deeper into the building until they reach the target asset, location, or data. It all starts with what is called gaining a foothold. This chapter looks at the tactics you, as an ethical attacker, can use to gain a foothold, and at some tactics that will help you establish a firmer one.

Setting the Scene: Why Psychology Matters

We've looked at the process of securing a contract or other legally binding agreement, specifically the scope and how it directly affects what you can do as an attacker. Scope does not hamper the mindset; rather, it should push your AMs to perform at a more creative level. We've also looked at what makes OSINT important and what your AMs should provide you with in terms of OSINT finds and searches, routes, and rabbit holes, specifically weaponization and leveraging through the tie-back method.

Now, though, I want to turn to what you as an ethical attacker (EA) must do to gain a foothold within an organization, maintain your position, and then strengthen it. In network pen testing, access can take various forms, and the successful attacker will often come up with multiple creative attack vectors. Once they have completed comprehensive recon and know all the ports, services, and apps, they may turn to vulnerability databases to look for known vulnerabilities and exploits. Their attack methodology will differ based on whether they have remote or local access and whether they have physical access to the network. But in any case, it's widely accepted that if an attacker can circumvent security, all bets are off. This last point is why your job as an EA is so vital, whether in the physical or the network category. The two are not mutually exclusive; they can be complementary, and extremely potent when used together. Of course, sometimes your client, your skill set, or your objective means that using both in tandem isn't an option, and only one type of attack will be performed. In any case, gaining a foothold and penetrating further into the organization (or operation) involve some identical tactics, regardless of the mode used.

My experience and conversations with people in the community tell me that gaining access physically as an attacker is no more anxiety-inducing than testing the network; it depends entirely on the person executing the attack. This is where offensive attacker mindsets (OAMs) and defensive attacker mindsets (DAMs) come into play (see Chapter 2, “Offensive vs. Defensive Attacker Mindset”). Contrary to popular opinion, it is advantageous to plan for something that might rely on seizing an opportunity in the moment. That sounds utterly absurd at first, but bear with me.

Think of a football team that trains for things that might never happen, such as an interception, a passing move, or a possession play, knowing all the while that the games they train for won't necessarily be the games they play. You too must plan this way. You must strategize how you will get access and then gain more. This type of planning is one of the most fundamental and vital steps you will take as an EA, even if the actual event(s) are nothing like what you imagined. Planning leads to flexibility. This method goes all the way back to one of the first mental models I talked about and have threaded throughout this book: a game of mental chess. Think through all the options you may have based on the information you've collected. Imagine the situation unfolding in all directions, and envisage your reactions to all the good and bad, positive and negative scenarios you can come up with (keep them grounded in reality, of course), because here's the thing: your reactions matter most. Most of the time, people will do and say whatever they feel they should. You must react the way you think you should. Going through the motions in your mind will help you react in the way that's best for the objective more than any other type of preparation. Even more important than your reactions is your reaction time. This is the key to attack psychology, because as a social engineer, for example, you are already reading a person's nonverbals, assessing and analyzing them. It's part and parcel of the job. Having your reaction prepared and ready in the nick of time may seem like a big ask, but I'd suggest we do this much of the time in social situations: we get ready to laugh when someone is enthusiastically telling us a colorful story; we get ready to be outraged when our friends tell us a scandalous story; we get in line with someone else's happiness as they tell us the reason for it. It's not always logical, but it's part of the human condition.
So being ready with a reaction when testing is not as big an ask as you may have first thought.

The same applies to network pen testing. Your reaction time matters there, too, because it plays heavily into the “thinking on your feet” approach that is so critical in that sort of testing. For example, password cracking is great, but it can take anywhere from minutes to years; a hacker who can problem-solve and react quickly might work out that the easiest thing to do is to go around it. Consider spoofing attacks: you may be able to take advantage of misconfigured workstations on the internal network to collect password hashes, which can then be taken offline and cracked. But you could also sidestep cracking altogether by searching for admin consoles that still use default credentials.
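To make the “minutes to years” range concrete, a rough worst-case estimate for exhaustive (brute-force) search is simply the keyspace divided by the guess rate. The sketch below assumes a hypothetical, fixed rate of 10 billion guesses per second; real rates vary enormously with the hash algorithm and hardware, and real-world cracking often relies on wordlists and rules rather than pure brute force. The function names are illustrative, not from any tool.

```python
SECONDS_PER_MINUTE = 60
SECONDS_PER_HOUR = 60 * SECONDS_PER_MINUTE
SECONDS_PER_DAY = 24 * SECONDS_PER_HOUR
SECONDS_PER_YEAR = 365 * SECONDS_PER_DAY

# Hypothetical guess rate (an assumption); real figures depend on hash type and hardware.
GUESSES_PER_SECOND = 10_000_000_000


def brute_force_seconds(charset_size: int, length: int, rate: float = GUESSES_PER_SECOND) -> float:
    """Worst-case time to try every password of exactly `length` characters."""
    keyspace = charset_size ** length  # every possible combination
    return keyspace / rate


def human_readable(seconds: float) -> str:
    """Express a duration in the largest unit that fits."""
    for name, size in (("years", SECONDS_PER_YEAR), ("days", SECONDS_PER_DAY),
                       ("hours", SECONDS_PER_HOUR), ("minutes", SECONDS_PER_MINUTE)):
        if seconds >= size:
            return f"{seconds / size:,.1f} {name}"
    return f"{seconds:,.1f} seconds"


if __name__ == "__main__":
    # (character set size, password length): lowercase only vs. all printable ASCII
    for charset, length in ((26, 8), (26, 10), (95, 8), (95, 12)):
        estimate = human_readable(brute_force_seconds(charset, length))
        print(f"{charset:>3} symbols, length {length:>2}: {estimate}")
```

Each extra character multiplies the work by the size of the character set, which is why a few more characters can move an estimate from seconds to days or years, and why a quick-thinking tester may look for a route around cracking altogether.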

Lateral movement techniques are plentiful and diverse, but they typically follow the same process: gain access to a low-privileged, poorly protected asset, escalate privileges, and seek out targets of interest on the network. You may need to decide on the type of lateral movement quickly, because you will nearly always have to do internal recon after initial access, and that can be noisy.

From another perspective, unethical hackers process information and use it unnervingly quickly. According to research by the Federal Trade Commission (FTC), it took only nine minutes before hackers tried to access the information from a fake data breach (https://www.consumer.ftc.gov/blog/2017/05/how-fast-will-identity-thieves-use-stolen-info). First, the FTC created a database of about 100 fake consumers. To make it seem legitimate, the FTC used popular names based on Census data, addresses from across the country, phone numbers that corresponded to the addresses, and intuitive email addresses based on that information, and it provided payment information, too. It then posted the data on two different occasions on a website that is used to make stolen credentials public. After the second posting, it took only nine minutes before the information was accessed. We have to think like this, too. This is how we, the ethical hackers, get ahead on both the offensive and defensive curves.

You must have a plan in mind for both ingress and egress but take the opportunities when they arise. It's also vital to note that access does not equal privilege escalation. You can have limited access both physically and on the network. However, it can be a little harder to cover your tracks if you have limited access on a physical job. I once entered a building through the back entrance. A very helpful man (a lucrative target within the environment) held it open for me as he left, presumably for the day. It feels pretty good when getting in is that easy.

Once inside, I saw that the only room open to me was the cafeteria, and the only open exit led back to the street. There were two more doors inside, but both were locked. One was a solid door at the top of a staircase; the other had a small window. Through it I could see all the way into reception, but I couldn't get there because I didn't have a card. Worse still, it was quite late in the day when I gained access, so I had missed the lunchtime folk passing through. The building lacked security personnel, so I did what any respectable social engineer testing physical security would do: I made myself a cup of coffee and sat at the table closest to the connecting door.

I did this for two reasons:

- If someone came in, I could swiftly get up and catch the door before it closed behind them.

- Coffee tastes better when it is free.

Eventually someone did come in, and I made it look as though I was just getting ready to leave anyway, getting through the door before it slammed shut. However, what is most notable is that my initial access did not guarantee privilege escalation; it merely upped the odds. I did not know the layout of the building because there was no OSINT or outside observation to tell me, so I couldn't plan a better route in. The back entrance seemed like the most attractive and least bold entry point. It was only on-the-spot thinking that led me to sit with a coffee, a very inconspicuous thing to be doing in a cafeteria, and to stay close to the door so that I could catch it, which in the end got me access. I didn't plan it, and it's not particularly brilliant, but it is a way of thinking: a way of anticipating progress and waiting for the payoff.

However, with a bit of planning and a few games of mental chess beforehand, I might have concluded that was the best route forward, or I may have chosen to leave and then try to gain access through the front door at a better time.

Ego Suspension, Humility & Asking for Help

There is something else that complements planning and execution, and it will be one of the most significant and powerful tools in your AMs' arsenal: ego suspension.

Ego suspension is the ability to suppress one's own wants, needs, and motivations and place priority on the other person. I consider it one of the most powerful techniques for building rapport, and in terms of AMs, it falls under the fifth skill, self-awareness. Oddly enough, as an attacker, you will likely find that you want your targets to perceive you as humble. In my experience, humility is often associated with honesty, and it is a way to fly easily under the radar. Effectively, ego suspension can neutralize your threat shadow (the activities, actions, contributions, and communications you undertake as an attacker, even though you are acting through a pretext).

Ego suspension is easy in theory. It's the act of intentionally placing the focus on the other person, which often serves to further increase your own trustworthiness. The ability to suspend one's ego leads to overall likeability, and this alone increases the probability that people will be open to your requests and appeals. There are other ways to get people to comply with your requests. For instance, you could use authority. However, not everyone can be intimidated, and even when someone can be, intimidation isn't always the most effective way to get what you want. If you can make people like you, then you have created a path along which the fruits of your labor may last longer. This all sounds great, doesn't it? By my reckoning, all you have to do to gain access to a secured building or network is not let your nerves get the best of you, use your OAMs and DAMs, employ critical thought and heuristic prowess as needed, gather some intel, walk in, place yourself in a lower position than your target, and watch them bend to your will.

Alas, no. That would be a pretty good outcome, but this book would then be more akin to a novel.

Ego suspension is a difficult beast to battle. That's because ego, no matter what flavor of it you have, is linked to who you are; it's a large chunk of your identity. The solution is not quite as simple as I'd like. Because ego is inseparably connected with who you are, suppressing it often directly conflicts with your mind's autopilot goals. Our ego lets us believe that people see us as we project ourselves to be; this is the ego's great delusion. Whether it be intelligent, intellectual, sharp-witted, reasoned, composed, competent, nice, moral, attractive, or easy-going, we all want to present ourselves in a certain way. As humans, we know that any time we speak or engage with others, judgment typically follows, and that judgment affects how we are treated.

It is a natural tendency to want others to think favorably of us, because this affects our self-esteem. Most people have been in a conversation that escalated to wild claims of understanding and skill in a domain, where no matter what you say, the person you're talking to knows what you know but knows it better. Perhaps you have even been this person. The stronger we feel about a subject, the more difficult it is to maintain an air of neutrality or ignorance. So when you are standing in front of a top executive in their headquarters, asking to plug a USB drive into their machine, and they say that they couldn't possibly allow that but that you could talk to their assistants, it can be hard to suppress gloating and a sense of smugness, which is a form of ego.

This is why ego suspension can be so difficult: it involves your self-esteem or sense of self-importance, which can be at odds with someone else's. Law 4 of AMs says everything you do is in favor of the objective. Someone standing in the way of what's important to you can make suspending your ego and sense of importance difficult. But however hard it can be, learning to suspend your ego is a critical skill for an EA; you will be much more effective in your interactions.

Ego suspension is also the base for many other tools you can use as an EA, ultimately leading to rapport and the target liking you, or at the very least viewing you as nonthreatening. The bottom line is, help people feel valued and they will help you; that starts with ego suspension.

Ego suspension falls firmly under self-awareness. In Ego Is the Enemy (Portfolio, 2016), author Ryan Holiday explores how ego hinders our development more often than not. Our ego makes us say, “It was just a mistake,” when in fact it is trying to protect itself by playing down our mistakes. Mistakes aren't patterns. They are typically made a couple of times, and the person making them learns from them. Mistakes that continue to happen are flaws. Record the mistakes you make most often on jobs, and note the reason for each. You can generally reduce the reasons to a lack of attention or focus, poverty of information, impatience, or a failure to suspend ego (lack of humility).

This level of introspection will take a certain amount of self-awareness. Self-awareness itself is the ability and tendency to pay attention to the way you think, feel, and behave. It is understanding our own emotions and moods as well as how we behave and act. Making the changes to become and stay self-aware will take humility and more self-awareness.

Remember, self-awareness is invisible; you cannot see whether someone is self-aware, but you will know whether they are because they will leave you feeling a certain way. Think of the “friend” who doesn't stop talking, doesn't ask you how you are, isn't interested in whatever is happening in your life at all. They only talk about their own life, their own circumstances, and they give you their own thoughts on matters, never asking for your input. They are most likely not very self-aware. When dealing with someone like this, I am often left feeling drained and sometimes dejected.

Increasing your self-awareness will allow you to adapt to your circumstances, adapt to your opponent's ego, and leave them feeling the way that best fits your objective and your circumstances. Most often, it's to your advantage to make someone feel as though they helped you, not that you won. But increasing your own self-awareness is both difficult and never-ending. Fear not, though; there are a few steps you can take to start the journey. Ask for feedback on yourself and take it well! Choose a solid relationship in your life and start small.

Identify your cognitive distortions (essentially, the ways we lie to ourselves). By diagnosing the irrational thoughts and beliefs that you unknowingly reinforce over time, you can free yourself of them, pruning them out of your psyche. One of the more common ones is All-or-Nothing Thinking, also known as Polarized or Black-and-White Thinking, which leads you to see things in terms of extremes: you are either perfect or a total failure, and so on.

Another is Overgeneralization, which makes you view a single event as an invariable rule, so that, for example, if someone lets you down once, they will always let you down. Another is Jumping to Conclusions, also known as Mind Reading. This distortion manifests as the inaccurate belief that you know what another person is thinking. Whilst you might have an idea and be able to infer it from time to time, this distortion is tied to the pessimistic and unfavorable interpretations that we jump to.

Magnification (Catastrophizing) or Minimization, also known as the Binocular Trick, can skew how you see reality, too. This distortion involves exaggerating or minimizing the meaning, importance, or likelihood of things. A pentester who is generally savvy and sharp but makes a mistake may magnify the importance of that mistake and believe that they are actually not good at their job (which could lead to or stem from imposter syndrome), while a pentester who always performs, is agile and quick, and can live off the land when tools cannot be used may minimize the importance of their skill and continue believing that they are simply lucky.

There's also Emotional Reasoning, which refers to accepting your emotions as fact. For simplicity's sake, I can reduce it to “I feel it, therefore it must be true.” This is common, but feelings are not facts, and they often change with nothing more than time.

Should Statements imposed on yourself (what you “should” or “must” do) are damaging because they introduce stress, which can lead to increased anxiety and avoidance behaviors. They are also notably damaging when applied to others, because by making these statements you are essentially imposing a set of expectations that will likely not be met. That can lead to anger and resentment, which are toxic in any professional or personal setting.

Control Fallacies are another category of cognitive distortion. This distortion can manifest in one of two ways: (1) the belief that you have no control over your life, no agency at all, and that you are a helpless victim of fate, or (2) the belief that you are in complete control of yourself and your surroundings. The latter is a distortion I am prone to.

Whichever way you lean, both are damaging, and both are equally inaccurate. No one is in complete control of everything that happens to them, although I'd like to believe that we are. And no one has absolutely no control over their situation.

The final one I will list is my least favorite and most recognizable from a self-awareness point of view: Always Being Right.

Those struggling with Imposter Syndrome or those with an anal-retentive personality may recognize this distortion: the belief, truly felt, that you must always be right. Being wrong is absolutely unacceptable, and you will fight to the figurative death to prove that you are right.

There's another benefit of self-awareness and ego suspension that should be noted: your ego will tell you that you have to be the best, you have to play your best character and role to date, you have to best the client so that they are on their knees by the time you depart. This is not accurate. You have to assess the client and outwit them. That's a game of data analysis and then acting based on the answers. You do not have to be James Bond. You don't have to play complicated openings or closing moves. Don't let your ego or your DAMs win in those moments.

Ego will also tell you that you don't have to go through the sewers, that you will find another way in…or that you don't have to hide in the bathroom for hours on end…or that you won't be the one to get shot on the engagement where the security guards have guns…or that you can pull off that southern accent… or that no one else could do what you do. Don't let ego do this to you. Use your DAMs, not your ego, to calculate risks.

Additionally, chess players study general opening principles, basic tactical and checkmate motifs, pawn structure, strategy, and endgames. The best chess players sidestep ego. They have to. You are never done learning chess; you will never know every move. It's not too dissimilar to our line of work as ethical attackers. The landscape will always be changing, and it's not enough just to change with it. We have to be aware of where it's headed, take preemptive action, and be okay with being off by a few degrees, while staying smart and sharp enough to correct our course.

Self-awareness in terms of ego suspension forces us to do one other thing: ensure that we are studying material appropriate for our level. You might have to study the basics of networks before you start on commands. You might have to study lock picking before you start scaling buildings. Even as you progress to things like bypassing alarms and using network security protocols, you will have to be able to recall the basics and stay up to date with the latest techniques for them.

Moreover, you will have to constantly find ways to test your knowledge. Doing so will help you know when you can move on to studying and practicing more complex concepts and skills, and it will keep you honest with yourself about what you actually retain. I recommend performing OSINT challenges, taking capture-the-flag and red team courses, writing phishes and getting feedback, and gathering OSINT on yourself and your loved ones.

Ego suspension and self-awareness mean that before you can get really good, you have to be comfortable being bad. They also mean that you should not be afraid to make mistakes. Get the basics, move forward as you need to, and refresh whenever possible.

Humility

Humility isn't just a virtue but also a trait. It relates heavily to the degree to which we value and promote our interests above others', and this is why it's such a powerful weapon in an attacker's arsenal. It helps you adhere to the fourth law: every move made is for the good of the objective.

Humility is also an important factor in recognizing that authority over a situation does not always have to be conspicuous or stated explicitly to be felt. If you are impersonating someone who is above your target in the hierarchy, the target will know that. You may not have to do anything more than tell them “your” name. Explicitly making the point that “you” are their superior is often unnecessary. Allow them to do some of the heavy lifting for you.

Humility also allows two other things that aren't usually associated with attackers but that are powerful when used by one:

- An ability to acknowledge mistakes and limitations

- Low self-focus

The last item in that list also ties back to law four. Your focus is the objective.

Asking for Help

Sometimes, in order to influence others, you will find it prudent and rewarding to begin with what appears to be vulnerability and openness, which then helps you play into assistance themes. The success of this technique relies on the human desire to help when asked.

Mutually beneficial and altruistic behavior is common throughout the animal kingdom, especially in species with complex social structures. Across many studies of mammals, from mouse to man, data suggests that we are profoundly shaped by our social environments and biologically inclined to help others. These tendencies motivate people to engage in what is called prosocial behavior. So simply asking for help can be effective, but you will need to have muted your ego, at least temporarily, to ask for help and to approach the person you wish to ask with a moderately conspicuous level of humility. In other words, you cannot let them see that you expect help just because you know that helping one another may be a natural instinct. The good news is that as humans, we're generally not good at suppressing instincts (helpful when considering targets); the bad news is that as humans, we're generally not good at suppressing instincts (not helpful when considering what we have to contend with internally as attackers).

Introducing the Target-Attacker Window Model

As I've shown, what the target thinks of you matters more than what you think of the target. You have to be able to read the target, but you don't have to be sold on their story, mainly because you are the one approaching them; their story is likely to stand. To be honest, I don't know if there's a brain in the world with enough bandwidth to run mental games of chess all the way to the moment you walk into the target environment, only to find out it's a front.

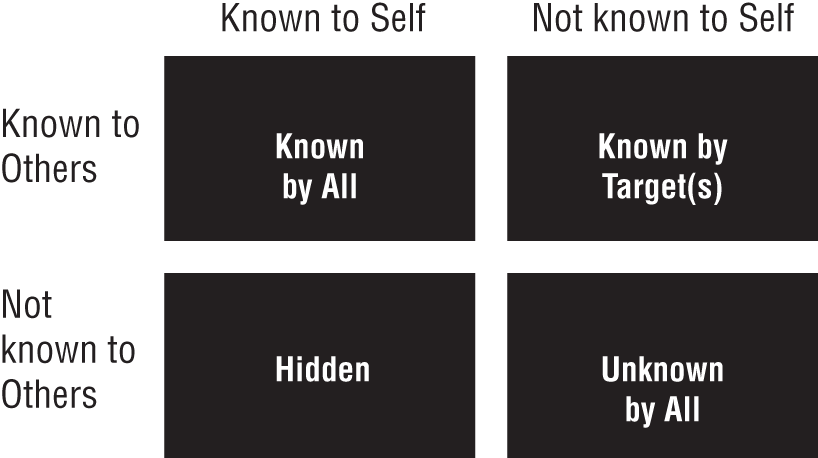

For you, the target is your obstacle, and how they perceive you matters immensely. The Target-Attacker Window Model (TAWM) addresses this. Specifically, the model focuses on why snuffing out ego is often difficult. The egotist may not know that their ego is at work; they may have a skewed self-perception or be deluded by ego and false entitlements. Being unable to identify your own ego at play is common, because ego is often invisible to the person bearing it. This would fall under the “Known by Target(s)” pane, which is covered in the next section, “Four TAWM Regions.”

TAWM is based on the Johari Window model, devised by two American psychologists named Joseph Luft and Harry Ingham. The Johari model was produced in 1955 whilst the two men were researching group dynamics at the University of California, Los Angeles. TAWM is made up of four panes and is a simple and useful tool for illustrating the attacker's vantage point as well as the target's. It can be used for much more than just identifying ego and, by extension, identifying ways to suppress it. This model can be used to assess and improve an attacker's ability to further disguise themselves and to gain the upper hand through knowledge. The model can also be used for understanding and training self-awareness, development, and target-attacker dynamics.

Four TAWM Regions

The TAWM window is a technique that helps ethical attackers better understand their relationship with themselves and their targets. It's made up of four quadrants that visualize the attacker's known or unknown information.

- Known by All: What is known by the attacker about themselves and is also known by others

- Known by Target(s): What is unknown by the attacker about themselves but is known by others

- Hidden: What the attacker knows about themselves that others do not know

- Unknown by All: What is unknown by the attacker about themselves and is also unknown by others

The TAWM window, depicted here, is based on a four-square grid.

In an attacker's field tactics, the two panes on the left are the most advantageous, whereas the two on the right are the most problematic; they are exactly why you cannot predict outcomes but can only stack the odds in your favor, which I will circle back to in a moment.
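Because the four panes come from just two yes/no questions (does the attacker know this about themselves, and do the targets know it?), the grid can be sketched as a tiny classifier. This is an illustrative encoding under stated assumptions, not part of the model as published: the names `Pane`, `classify`, and `is_left_column` are hypothetical, and the layout assumes Known by All and Hidden form the left column, as this section describes.

```python
from enum import Enum


class Pane(Enum):
    """The four TAWM panes, laid out as a 2x2 grid (assumed layout)."""
    KNOWN_BY_ALL = "Known by All"            # top left
    KNOWN_BY_TARGETS = "Known by Target(s)"  # top right
    HIDDEN = "Hidden"                        # bottom left
    UNKNOWN_BY_ALL = "Unknown by All"        # bottom right


def classify(known_to_attacker: bool, known_to_targets: bool) -> Pane:
    """Place an item in a pane by answering the two yes/no questions."""
    if known_to_attacker:
        return Pane.KNOWN_BY_ALL if known_to_targets else Pane.HIDDEN
    return Pane.KNOWN_BY_TARGETS if known_to_targets else Pane.UNKNOWN_BY_ALL


def is_left_column(pane: Pane) -> bool:
    """Left column = panes the attacker is aware of (the advantageous side)."""
    return pane in (Pane.KNOWN_BY_ALL, Pane.HIDDEN)


if __name__ == "__main__":
    # Illustrative items drawn from this chapter's own examples.
    items = {
        "your accent": (True, True),
        "your real objective": (True, False),
        "an ego you haven't noticed": (False, True),
    }
    for item, (self_aware, target_aware) in items.items():
        pane = classify(self_aware, target_aware)
        side = "left" if is_left_column(pane) else "right"
        print(f"{item}: {pane.value} ({side} column)")
```

Framing the panes this way makes the practical point explicit: anything that lands in the right-hand column is, by definition, something you cannot see yourself, which is why feedback and self-awareness work matter so much.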

For now, let's break the categories down. There are certain things that are apparent as you walk into a building. Unless you are disguised, the first person or people you meet, most often the first line of defense within an organization, know your gender and how you look physically. These two things are known to you, too. They are “Known by All.” There's nothing magical about this category. Everything you know that is also known by the outside world (upon interaction) is represented in this category. Known by All includes factors like accent, health status (broadly speaking), and confidence level. As an attacker, you want the faux reason you are there to be in this category, too. If you show up as a mechanic, look like one. If you show up as the boss, look like one based on that company's culture.

The pane directly below Known by All is Hidden. Hidden is both your objective and what your pretext conceals. Hidden is why you are there in the first place and the basis of the attack; it is also your AMs as applied to the attack. The Hidden pane is perhaps the most important set of things you are in charge of. It's everything you don't want revealed about yourself; it's the bolstering cause and effect of the operation and should be protected at all costs. It is the employment of every skill and law of the mindset.

Known by Target(s) is my least favorite category but perhaps the most important to play around with mentally. How your target thinks of their job, feels about their role, and what they know about their environment and perceive of you are all contained within this category. Those are all things that can go either for or against you. Scary, right? Well, I do have one piece of good news: the previous figure misrepresents the size of the panes. They are not all equal, because some have more influence than others. Realistically, the categories should be represented by their impact on the whole engagement. If I had started out like that, they would've looked more like the following graphic, give or take, based on the skills of each attacker and the security culture in each individual environment.

Ego suspension is a huge part of what I teach in my Advanced Practical Social Engineering course and is a fundamental skill. You can have every other facet of an attack down, but if your AMs can't handle the suspension of your ego, all bets are off. If you know your ego is at play, you will have an easier time of subduing it where and when advantageous. If you don't and your ego falls within the Known by Target(s) pane under Not Known to Self, you may find many of your interactions and engagements going awry, even if they are meticulously planned.

There's a simple reason ego suspension is so important: you cannot appeal to someone else's ego if your own is in the way. And most of the time, you need to appeal to your target's ego in order to influence and steer them in the direction you need them to take. Your ego will always want you to do and be the best, and it will push you to resist some of the less glamorous things you might have to do when executing an attack, like dumpster diving or entering through sewers or cargo bays. It might stop you from starting your vishing calls toward the bottom of the organizational chart, telling you that your aim is to get to the CEO and that you shouldn't waste your time with those lower in rank. Big mistake. Your job is to gain information and weaponize it. No target is too big or too small.

Target Psychology

Many attacks exploit psychology to a larger degree than technology. As computer security professional Bruce Schneier once said, “Only amateurs attack machines; professionals target people.” That's partly because, in many ways, attacking a person is easier than dancing with technology; most of the time, it also yields a far greater payout. I would go as far as to say that the most brilliant yet most terrifying attacks we have seen in our lifetimes, whether those that threaten the global supply chain, like the Maersk debacle, or those like Frank Abagnale's Pan Am pilot jaunt, contain a hefty element of AMs that rubs up against technology only slightly.

With Maersk, it is understood that the NotPetya malware got into corporate networks via a hijacked software update for a Ukrainian tax software tool and spread on its own from there, helping take down the world's largest shipping corporation. At the peak of infection, almost all connected assets owned by the corporation were touched directly or indirectly by the malware. Some of the affected computer screens read “repairing file system on C:” with a simple warning not to turn the machine off. Others were a little more menacing, reading “Oops, your important files are encrypted” accompanied by a demand to pay $300 worth of bitcoin in order for the files to be decrypted. This onscreen messaging even appeared on a single kiosk in a gift shop stationed within a basement in Copenhagen. People reportedly ran into conference rooms and unplugged machines in the middle of meetings, and hurdled over locked keycard gates, which had also been rendered useless by the malware.

Disconnecting Maersk's entire global network took the company's IT staff about two hours, while the malware itself had torn through the network far faster. Employees, rendered entirely idle without computers, servers, routers, or desk phones, were left without work. Maersk ships operated normally throughout the ordeal, but for roughly two days, affected terminals couldn't fulfil their function and move cargo. NotPetya affected Maersk's global business, too, and the IT costs were far from insignificant.

As I pointed out earlier, this all started with a software update its recipients had every reason to trust. But it's something more general that's the true point of reference here: as humans, when one party believes the intent is pure, a vulnerability is created. Your AMs must use this as its compass for doing bad (for the sake of good, of course). It must be able to create a vulnerability with that principle in mind and use it to your advantage time and time again. You create the vulnerability; you yourself are the exploit.

This rule applies to every vector I talked about in Chapter 8, “Attack Strategy.” With phishing, vishing, smishing, and in-person attacks, you only need the other party to believe that your intent is pure for a vulnerability to be created. You may not always be able to exploit it, but creating it is the first step in any case, and it stems, of course, from your pretext and your commitment to it for as long as it works for you.

Committing to a pretext is powerful in and of itself. Fairly recently, a Malaysian bank robber who used social engineering as his primary weapon in a string of thefts made off with $142,000 by pretending to be a fire extinguisher maintenance technician. He never broke his pretext, and what's more, he never bothered to make it more convincing than the words coming out of his mouth. Where you and I would typically dress the part of our chosen pretext, this man approached his target environment carrying a backpack and dressed casually in a T-shirt and shorts. He walked into the bank with a single document that he claimed was a floor plan for the building. According to local reports and CCTV footage, he simply showed the paper to a bank manager and requested permission to do an inspection. The manager refused him access when he couldn't produce any identification to corroborate his story. But even a bank manager needs lunch. While the manager was away, the attacker carried on checking extinguishers, and the whole environment continued as normal, with staff assisting customers and keeping business rolling. Working quickly but discreetly, the attacker got closer and closer to the safe room, needing only one thing to happen: for the head cashier to access the area. When that happened, he essentially tailgated his way to quite a bounty.

Niftily placing a magnet on the door's lock to prevent it from shutting fully, he waited until the coast was clear before entering. Once inside the secure area, he stuffed a hefty amount of cash into his backpack and walked away. In a bid to make a convincing escape, he approached a security guard and explained that he was leaving to get additional staff to help with the inspection. All told, he was inside the bank for less than 20 minutes and walked away with $142,000.

In a final short example, we look to Frank Abagnale, maybe my favorite social engineer of all time. His career predates the security industry, and he's been on both sides of the law. His AMs is sharp, and combined with his instincts, it allowed him to create precise conditions in which vulnerabilities arose, which he then exploited in plain sight. Frank Abagnale is most often associated with the Steven Spielberg movie Catch Me If You Can, in which Leonardo DiCaprio portrays him as a handsome, charming young man using somewhat ingenious crimes as paydays. Abagnale is a famous check forger, imposter, and con artist. He was between the ages of 15 and 21 when he committed most of his crimes. He was arrested multiple times in multiple countries, spending six months in a French prison, six months in a Swedish prison, and finally four years in a US prison in Atlanta, Georgia. That's generally the extent of what people know about him. But in 1971, Abagnale escaped prison using one of the most agile, AMs-centric methods you could ask for, and unlike in many of his other exploits, he had help this time.

Abagnale was transferred into prison by a US Marshal, who forgot to give the prison his detention commitment. This struck the administration as odd and led the guards to believe that he was a prison inspector sent by the FBI. Frank Abagnale was a sharp observer by this point, so he quickly took in the information and used it to create a vulnerability to use against the prison. He used his one phone call to speak to Jean Sebring (as she was named in his book), a friend of his, and asked her to forge a business card to back up the story the prison administrators had told themselves. Once the card was delivered to Abagnale, he donned the pretext they'd created for him and finally told the guards the “truth” they wanted to hear: that he was in fact an inspector sent by the FBI. The guards believed him and even bragged about how they had known all along. Ultimately, they allowed Frank to leave the facility.

Social engineering, for good or bad, is a force to be reckoned with when used in tandem with AMs, and the best way to wield this force is to understand elements of psychology, the traits we have in common, and how best to exploit them. So, with that in mind, let's look at target psychology, beyond what was discussed in relation to TAWM, and find out which human pressure points your AMs is best applied to. You should know by now that AMs has its benefits and that having a strong AMs puts you at the top of the food chain. But to truly have a well-rounded AMs, you will need two things, both of which every example in this book has incorporated:

- Empathy

- Knowing the pitfalls of human behavior as they pertain to security and, specifically, to you as an attacker

Empathy is important because it allows you to place yourself in the shoes of the target. This ability to empathize, even if it's just cognitive empathy, is strongly related to the ability to use human nature against those you are attacking. It even benefits those of us who only attack digital assets; knowing how to hide your tracks is often linked with knowing how to disguise them from humans. Being able to place yourself in someone else's shoes allows you to exploit that individual more easily.

Empathy is the birthplace of the principle I've been talking about in this chapter: as humans, when one party believes the intent is pure, a vulnerability is created. Every breach occurs because someone did something they weren't supposed to do, or somebody failed to do something they were supposed to do. This is the perfect counterpart to the basic premise of social engineering: people have certain predictable characteristics, such as an innate desire to be helpful, and when put under time pressure by someone they believe to be genuine, they are prone to bypassing basic security protocols.

Given this, I will look at three of the most potent and prevalent biases we can use to our advantage as attackers:

- Optimism bias

- Confirmation bias and motivated reasoning

- Framing effect

Optimism Bias

People tend to overestimate the probability of positive events and underestimate the probability of negative events happening to them in the future, as author Tali Sharot explains in her book The Optimism Bias (Vintage, 2012). For example, I may underestimate my risk of getting hit by a car and overestimate my future success on the stock market. That's optimism, right? I really don't want to be hit by a car, but I really do want to see my stock prices go up. Another example is that most newlyweds underestimate the likelihood that their marriage will end in divorce, even though, according to the American Psychological Association, an estimated 50 percent of all marriages in the United States end in divorce (https://www.apa.org/topics/divorce-child-custody).

Because people are optimistic, they tend to underestimate risks. As a result, they often engage in needlessly risky behavior, and you can capitalize on that as an attacker.

When targets receive emails designed to infect their machines with malware, they don't necessarily treat them with the suspicion they deserve. Somewhat counterintuitively, this is compounded by the fact that the security community has done a good job of raising awareness about the perils of clicking links in phishing emails. Countless resources explain how to spot a phish. As a result, most people perceive the danger of falling victim to a phish as reduced, which is, of course, paradoxical. Victims seem to reason that criminals must have found another way by now, because phishing is overdone, overused, and too easily recognized. The criminals, meanwhile, think the targets will fall for their phish in spite of those things. The loop continues and is likely an infinite one.

Optimism goes beyond the realm of technology, though. Thanks to their inherently optimistic nature, people expose themselves, and the companies they work for, to threats they could easily avoid. The illusion of invulnerability, either of the self or of the organization, will often present itself to you as an attacker in the form of security by theatrics: a placebo effect, or rather a nocebo effect, transpires. Targets within the environment will simply go through the process they've been instructed to follow without much critical thought. The possibility that you are a malicious actor either never occurs to them or washes over them so softly that it is basically useless. If you are presenting your pretext correctly, leveraging your mental agility and self-awareness and never breaking pretext, people will tend to believe the narrative you present and look for signs that it is legitimate. They will most often assume that they are not facing a real-life threat disguised with nothing more than a seemingly good reason to be there.

A number of factors can explain this optimism bias. The two most important that I have investigated are perceived control and being in a good mood.

Because perceived control bolsters this bias, you can see why ego suspension is so important, as is committing to your pretext (typically a non-authoritative one). Leaving the target with the misconception that they are in control is enormously important if you are to count on optimism bias in an attack.

And though other forms of positive illusions have been identified in psychology, including self-serving bias and wishful thinking, the illusion of control matters most to you as an attacker because of what it is: an exaggerated belief in one's capacity to control independent, external events. I tie this together with optimism bias, the tendency for people to believe that, compared to others, they are less likely to experience negative events and more likely to experience positive ones. It's like the gambler who thinks they have a better chance of winning than everyone else at the table, while everyone else at the table thinks the same thing.

When it comes to mood, people tend to be more optimistic in safe settings, but when it becomes critical to recognize threats and danger, even the greatest optimists are prone to revising their beliefs when faced with negative information. This is why pretexts in combination with commitment are so important: you must present the narrative in such a way that the target never has to think of it as anything other than what is being presented. If this is not an option, you might try circumventing the target altogether by applying AMs to neutralize technological defenses.

Although it is a hard argument to make that all people suffer from optimism bias, it's safe to say that many do and on many differing fronts. As an attacker, your best bet is to use optimism bias for yourself and against the target. Ironically, you must believe that your target suffers from this psychological phenomenon and act accordingly; adopt a well-rounded, comprehensive pretext and treat most people as if they are not expecting to be approached, influenced, and circumvented by you.

Confirmation Bias and Motivated Reasoning

Confirmation bias is the predisposition to search for, interpret, favor, and recall information in a way that confirms or strengthens your prior personal beliefs or assumptions. In other words, confirmation bias is why people see what they want to see.

Here's an example: You search online to back up what you think is true, like “Area 51 Aliens,” and take into consideration the press release in which the US Army Air Forces (USAAF) announced they'd recovered a “flying disc” from a ranch near Roswell, but you ignore the one after, in which USAAF stated it was actually weather balloon debris.

Some believe Area 51 is researching and experimenting on aliens and their spacecraft. Others theorize that the moon landing was staged at Area 51. If you search, you will find. Confirmation bias keeps you steady as you wade through the murky waters of information: it keeps you looking for, and agreeing only with, information that reinforces what you already believe.

Another example of confirmation bias is someone who forms an initial impression of a person and then interprets everything that person does in a way that confirms this initial impression, for good or bad. Consider the debate over gun control. Let's say Alice supports gun control. She seeks out news stories and opinion pieces that reaffirm her beliefs. When shootings occur, she looks at the facts of the situation in a way that supports her existing beliefs.

Bob, on the other hand, is as against gun control as any one person could be. He too seeks out news sources that fit his viewpoint. When shootings occur, he looks at the facts of the situation in a way that supports his existing beliefs.

These two people have polar opposite positions on the same subject. Even if they read the same story, their bias will likely shape the way they perceive the details, further confirming their beliefs.

Now imagine they meet for the first time in a place where gun control is the main issue, each of them passionately advocating for their own side of the debate. They share a tense and dramatic conversation and part ways. But, oh dear, something else happens: they meet again the next day as they are walking into work. Bob has just started working where Alice does and, worse still, she's going to be his buddy for the next few days to get him settled in.

Most likely, they can, at least ostensibly, put their differences aside to talk about the protocols that need to be followed. Alice will be able to show Bob how to get to the cafeteria and how to clock in, but there's a slim chance of her trusting Bob, wanting to be friends with him and wanting to know more about any of Bob's other beliefs. The same is true for Bob when considering Alice. They are each operating on the belief that they are opposed on one major front and will start looking for other facts to build their cases against each other. When Bob sees Alice drive out of the parking garage in a Prius and Alice looks over to see Bob's pickup truck, they will each build more beliefs about the other.

Confirmation bias can trick the mind into looking only for specific patterns based on a person's previous experience and understanding, instead of considering each event in isolation. The reason for this cognitive shortcut is easy to understand: evaluating evidence requires a great deal of mental energy, so our brains take shortcuts. This saves us time and prevents us from feeling overwhelmed by the constant bombardment of new information we surround ourselves with every day.

Biases are, after all, a wonderful vulnerability to tap into. You can most easily put this bias to good use if you are fully dedicated to your character and have assumed the role before you've even walked in the door. This is because as you approach people, they look for information that supports, rather than rejects, their preconceptions, typically interpreting evidence to confirm existing beliefs while discarding or discounting any incompatible data. If you are about to enter an art gallery as a very wealthy person looking to buy a piece of art, with some hidden objective (hopefully set by the client), then you cannot simply assume this role once you are talking with the target inside. You would have to be in character upon arrival on the street. Personally, I would arrive in a car, with a driver, dressed the part of my wealthy character, and I would be fully in the role before I even stepped out onto the pavement. By taking pretexting this seriously, you help confirmation bias bloom in your target and across the whole environment, which is only to your benefit.

With motivated reasoning, the tendency is to give weight to information that lets us reach the conclusion we want to reach. Accepting information that confirms our beliefs is far easier and less mentally taxing than reassessing and relearning, and contradicting information causes us to withdraw cognitively. Whereas confirmation bias is an inherent tendency to notice information that matches our established beliefs and ignore information that doesn't, motivated reasoning is our tendency to readily accept new information that agrees with our worldview and critically analyze information that doesn't. Used effectively against a target and environment, the two together are a very powerful force.

Using the two in tandem against a target is easier than it first seems. Confirmation bias stems from the direct influence of desire on beliefs: when people want a certain idea or concept to be true, they end up believing it to be true. Once someone has formed a view, they embrace information that confirms that view while ignoring, or rejecting, information that casts doubt on it. Confirmation bias suggests that most of us don't perceive circumstances objectively. This is why, when performing attacks in a team, you must most often play to what is typical within society, not what is politically correct. For instance, a target is more likely to assume that an older man accompanying me is my boss than that I am his. Neither AMs nor social engineering cares about what is politically correct. Neither does confirmation bias. Using confirmation bias as attackers demands that we use whatever is typical for the environment.

Motivated reasoning is suffused with emotion. Not only are motivated reasoning and confirmation bias often inseparable, but the positive or negative feelings about people, things, and ideas arise more rapidly than the target's conscious thoughts, in a matter of milliseconds, in fact. Many people, and therefore many targets, want to push threatening information away and reel in nonthreatening information. In our modern society, people have instinctual reflexes not only to tangible threats but to information, too. And although we are not driven solely by emotions and biases, reasoning often comes later. So, to avoid making the target “think” too much, it is often necessary that I show up as the assistant or the employee and not the boss, if culturally and traditionally that's more fitting.

Framing Effect

The framing effect is a cognitive bias that impacts our decision making when something is said or presented in different ways. In other words, we are influenced heavily by how something is presented. There are many subtypes of this bias, but I will concentrate on positive and negative frames.

At first glance, the framing effect doesn't seem much different from the other biases presented throughout this book or even throughout this chapter, but this bias is a little special. With the last three biases I've covered, I have painted an all-or-nothing type of situation: stay in character or you will get yourself caught; do what is expected, not what is politically correct, or you will get caught; and so on. The framing effect, by contrast, teaches you presentation, because this cognitive bias occurs even when the outcome of the two options is the same. When the options are framed differently, we choose the one that is framed favorably.

We've all been subject to marketing strategies such as ‘Don't miss out’ or ‘Get it before it's gone’. The frame is simple: make people feel like they are losing out on something. People tend to fear losses more than they value equivalent gains, and they take action to avoid losses. Negative frames are effective in certain scenarios because they can create urgency. For instance, telling the front-line staff of a building that their “audit” will be delayed three months and that “headquarters” won't be too pleased if you are turned away now might be enough to make them reconsider allowing you entry then and there.

Our fear of loss is strong, but we also tend to seek out positivity, and positive frames often work better at convincing people. For example: “I can be done and out of your hair in 30 minutes if I get going now. You won't see an auditor again until next year.” This also brings an artificial time constraint into play, which adds to the illusion of a positive outcome. An artificial time constraint is a manufactured restriction on time; using made-up time constraints can neutralize discomfort for the other party.

In an everyday example, how many times have you been sat next to someone on an airplane, on public transport, or in a public place and had them try to start a conversation with you? This can make many of us feel uncomfortable, not because of the interaction itself, but because we don't know how long the intended conversation will last… the whole flight? The entire length of the line at the DMV? This is why artificial time constraints work so well with positive framing: they create the illusion of a positive outcome in a short amount of time.

Either way, the result is the same: the security guard or receptionist can choose to let you in for 30 minutes now so that they don't have to wait months for their next turn at a safety audit, which may have broader effects on the company (audits are often mandatory), or you can have a teammate spoof a senior staff member's number and corner them into allowing you access. The results are the same. The framing is different.

Looked at this way, even when you know you will get what you want, you should frame it for the target so that they feel a particular way about it, because how people feel about something largely shapes how they react, and that reaction is something you can often count on.

Framing, when used positively, is about covering your tracks at a zero-distance range by giving the target the illusion of control: you're using a bias against them that results in them feeling good or in charge. The other way to do this is to create a problem and then offer a positive solution straight away, and it can be “personal” to you rather than contingent upon the target environment: “This is my first weekend with my kid in a month, and if I don't get out of here in 30 minutes I am going to be late and his mom/dad will make a big deal of it. You can really help me out if I can just get this job done.” This again gives someone a choice to make (and the illusion of control over the situation), and if they lean in and help you out, they also get to feel good about their choice.

As a note, I generally do not use negative framing, but I am not in charge of every attacker who reads this book looking to implement AMs, and negative frames can be effective.

Thin-Slice Assessments

A successful attack depends on a target's perception of the attacker's personality, motivations, trustworthiness, and affect. Person-perception research indicates that reliable and accurate assessments of these traits can be made based on very brief observations, or thin slices.

Thin slicing means making very quick inferences about the state, characteristics, or details of an individual or a situation from minimal amounts of information. It is a type of social cognition that proves beneficial in AMs, and it works both ways. You can and should get comfortable performing thin-slice assessments on your targets, and you should be aware that they are doing the same to you, whether consciously or not.

Thin slices of behavior is a term coined by researchers Nalini Ambady and Robert Rosenthal (https://tspace.library.utoronto.ca/handle/1807/33126). They discovered that very brief, silent video clips of dynamic behavior, ranging from 2 to 10 seconds, provided sufficient information for people with no special skills to evaluate a teacher's effectiveness, as measured against the teachers' actual end-of-course ratings from their students. Thin slices can be assessed through any available channel of communication, including the face, the body, speech, the voice, or any combination of these. Notably, static photographs would not qualify as thin slices.

Thin-slice judgments have been shown to accurately predict the effectiveness of doctors treating patients, the relationship status of interacting opposite-sex pairs, judgments of rapport between two people, courtroom judges' expectations of a defendant's guilt, and even testosterone levels in males.

In Blink: The Power of Thinking Without Thinking (Back Bay Books, 2007), Malcolm Gladwell shows art experts identifying a piece of art as a forgery in the first few seconds of examination. A tennis coach can know whether a player will fault on a serve in the half second before the ball is even struck, and a salesperson can read someone's emotions and future decisions on the basis of three seconds of observation. These are all examples of thin-slice assessments. However, the thin-slice methodology is useful only if relevant and valid information can be extracted from a person's behavior in that given moment. Many factors influence the accuracy of thin-slice judgments, including culture and exposure, individual differences in the ability to decode information accurately, differences in accuracy based on expertise, and the type of judgment being made. This is where AMs comes in, and it works both ways.

As human beings, we are wonderful storytellers. We tell stories about who we are, what we're doing, and why we are doing it. To pass someone else's thin-slice assessment of us, we must be such good storytellers that no one could possibly know we are deceiving them. To do this, consider everything visual and auditory about yourself: how you walk, your pace, your posture, your eye contact, your tone of voice, your rate of speech, the words you use, and even your blink rate. You have the upper hand—as long as the target believes your pretext, they are not judging your ability as an attacker; they are judging you as who you have presented yourself as.

Looking at it the other way, you must be able to perform a thin-slice assessment of your target. The more accurate your assessment, the better your chances of success as an attacker. You may need to alter your character's persona once your assessment results are in, for example, if you want your target to stay in, or reach, a more relaxed state than your intended interaction would otherwise allow for. They are judging and reacting to your behavioral stream, which is natural. You are using this against them, and using your sampling of their behavioral stream to carve out the right outcome for your objective.

What is interesting, and not at all helpful when writing a section of a book on it, is that there's no real guidance on just how to perform a thin-slice assessment. We know that thin slices are sampled from a channel or a combination of channels of communication; we know that people segment units of behavior and ascribe meaning to them, even though life is more a continuous stream than a series of isolated segments. People also tend to think that the better they know someone and the more information they have, the more reliable their perception of that person is. Paradoxically, this is why the first few moments of interaction under your pretext are so critical: they set the tone for how the target envisions you as a whole person, not just the person standing in front of them. Typically, we all tend to extend our first impressions of someone and infer from there.

Something else to note is that research has found that people are better at accurately judging targets from their own culture or from cultures similar to their own. Similarly, in-group advantages exist: members of a group are better at accurately extrapolating details about one another from thin slices of behavior. For example, a group of IT workers will likely be able to tell from a thin-slice assessment if you are only pretending to be a sys admin. Thin-slice judgments can be affected by people's expertise and competency, which is important to note as an attacker if you need to impersonate someone to fit in at such a granular level.

The type of judgment being made matters, too, which is somewhat helpful to keep in mind (and takes some of the pressure off). Thin-slice judgments are accurate only to the degree that the relevant traits are apparent to the assessor. In other words, it is possible to accurately assess how warm and likable a person appears to be, because these characteristics are swiftly revealed through behavior. Other traits, such as perseverance, typically reveal themselves only over time, depending on the situation you find a person in. It's harder to see such a trait in someone during one short interaction, or for them to see it in you. This is welcome news for us as attackers. It means that in a short engagement, a solid pretext will afford us enough cover to pass such assessments, with our own personality and traits having little bearing on the outcome.

Default to Truth

As Tim Levine, author of Duped: Truth-Default Theory and the Social Science of Lying and Deception (University of Alabama Press, 2019), has shown, humans tend to default to truth. Let me explain.

Overwhelmingly, our human tendency is to operate on the assumption that the people we are dealing with are straightforward and trustworthy. Although this instinct will always leave us open to deception, and even facilitates it, it also underpins nearly all of our initial interactions with one another and, as such, enables friendships to form, relationships to start, business to be conducted, and society to operate. Without a default to truth, none of that would be possible: if you don't begin in a state of trust, you can't have meaningful social interaction. There are people who do not operate with this tendency. If you have ever met someone who seems to subject every word you say and every action you take to acute analytical inspection, you will know it is tiring and difficult to build and cultivate any sort of meaningful, symbiotic relationship with them. The simplest processes in society could not take place as they do if we all functioned with such conspicuous distrust at every turn and in every encounter.

Moreover, you might expect evolution to have favored people with the ability to pick up the signs of deception, and yet here we are, still all pretty terrible at knowing whether someone is lying. We are able to pick up signs of comfort or discomfort, but we aren't able to pick out a lie. This is counterintuitive and, at first glance, seems to make no sense at all. Surely suspicion and caution would serve us best in this dangerous world. Alas, we are more inclined to assume someone is telling us the truth. It may be that evolution cannot give us the ability to tell whether a lie is being told because every lie comes with different signals and indicators, or because every person's tells are too different. Or it could be that always knowing when an individual is lying is not needed. Catastrophic lies are rare and are often told by a very small subset of people, so defaulting to truth makes sense for us in terms of building societies and thriving as a species. Or, my favorite theory: we haven't evolved to detect lies with precision because of all of these reasons combined.

Knowing that operating under this default to truth tendency is socially necessary, we can begin to look at ways to exploit it as attackers and, ultimately, to stop it from becoming such a vulnerability for businesses and people. We've discussed, thanks to Daniel Kahneman's brilliant work, especially Thinking, Fast and Slow (Farrar, Straus, and Giroux, 2013), how we can get past people as long as we don't snap them out of their “System 1” thinking (the automatic, intuitive, and unconscious mode) into System 2 (the slow, controlled, and analytical mode that relies on reasoning). This means we have to operate in a way that is congruent with our presented selves. It means adhering to law 3: never break pretext, and never uncloak yourself as a threat. The default to truth tendency, as defined by Tim Levine, is an extension of this. Snapping a target out of truth-default mode takes a large and conspicuous deviation from what you are presenting as. This is not to say a target won't have suspicions or doubts; they may. It is your job, via the laws and skills of AMs, to win them over. Keeping someone in truth-default mode means you cannot do anything so egregiously incongruent that they abandon it. People will forgive small incongruencies, because as humans we often rationalize things away. We start out by believing people, and we come to suspect deceit over truth only when our doubts and uncertainties grow to a point where we can no longer rationalize or explain the incongruencies away.

Imagine you had to analyze whether your grandfather was a spy. Let's assume he could speak multiple languages, was very private, did not like his picture to be taken, and did not want to be in the background of pictures, especially if they were going to be posted on social media. Imagine he was against social media altogether, but you often found him perusing it, looking at foreign countries (not under his own name, of course). Let's go further and imagine that whenever you asked him what his job was, his answer didn't quite add up: he traveled a lot for someone who was supposedly “an electrician.” Imagine you found items that you could identify as ways to bug people: devices that were small and nifty. Now imagine you asked him about all of those things, and he said his expectation of and need for privacy was a byproduct of his generation and upbringing…that he did not like the way he looked in pictures…and that he liked the thought of getting news from locals, something he remembered his own father, an immigrant without access to social media, longing for. Imagine he said he traveled because there was no work for an electrician at the rates he charged in the immediate vicinity. Moreover, he worked big jobs, generally on large buildings, where staying near the site was easier. And imagine he had a hobby of dismantling small, wily devices that predated your time on Earth. Great; truth-default mode says you will accept his explanations and rationalize away what he does. But actually, your grandfather is a spy. It's not until he is caught and on the news that you think to yourself, “I feel like I knew that the whole time.” Also, “How much is bail from the Soviets?”

Truth-default mode is a powerful cognitive bias. The only way to stop it from becoming a liability for businesses is to teach people, through behavioral security, that defense starts in the brain and that there are no circumstances in which security protocols can be bypassed. If the security protocols are lacking, then these too must be fixed. Outsiders, and more pointedly we as ethical attackers, are not the target's immediate family. We deserve no such rationalization.

Summary

- Humans are vulnerable to dishonesty and strong narratives. An attacker can leverage this knowledge for their own gain.

- Part of an attacker's arsenal is being able to think like the people and businesses they are targeting.

- Building knowledge of fundamental human tendencies and biases is vital.

- You can use many biases against your targets, not only the ones listed within this book. Use AMs to look at them and tie them to your objective as they pertain to the target.

Key Message

Use thin-slice assessments to your advantage in two ways: know how to perform them to get accurate readings, and know that they will likely be performed on you. Be prepared for that, and use every tool and technique at your disposal to avoid exposure as an imposter.