
Genetics versus Nutrition, Part One

Scientists have found the gene for shyness. They would have found it years ago, but it was hiding behind a couple of other genes.

—JONATHAN KATZ

In all things it is better to hope than to despair.

—JOHANN WOLFGANG VON GOETHE

In the last chapter, we saw how reductionism collapses in both theory and practice in the face of the awe-inspiring complexity of our enzymatic systems. We also saw how reductionist interventions usually aren’t necessary, providing we consume the right foods, as our biochemistry naturally moves us toward healthy homeostasis. But instead of turning their attention to nutrition and acknowledging the futility of efforts to manipulate enzymatic activity in a way that does more good than harm, reductionist researchers have focused upstream, on the template that is used to manufacture those amazing enzymes: deoxyribonucleic acid, or DNA.

Genetic medicine is the ultimate reductionist fantasy. It sidesteps all the messy big-picture factors that influence health and the development of disease, and focuses on millions and millions of tiny, deterministic elements with no room for fuzziness or randomness. It lets scientists point to a bit of DNA and say, “There, that’s why you got pancreatic cancer!” And despite all the evidence calling into question a direct link between genes and cancer (and most other chronic diseases), geneticists are now pointing to bits of DNA and asserting, “There, that’s why you’re probably going to get pancreatic cancer within the next forty years.” They’re racing gleefully into a future where they can identify, isolate, and “fix” that faulty gene, to conquer disease once and for all.

For the past fifty years, medical researchers have become increasingly fascinated with understanding, mapping, and manipulating our DNA. This fascination, as we’ll see over the next two chapters, has brought with it great cost, both economically and philosophically, to our beliefs about our power to influence health.

AN END TO DISEASE

Despite decades of disappointment, most of us still believe in the Big Promise of modern medicine: a world free from disease and early death, a paradise in which we no longer have to fear scourges like cancer, heart disease, diabetes, and so on.

To understand why we believe this, you need only look at the remarkable advances of twentieth-century medical science. In 1900, medicine could not reliably cure infection, transplant organs, keep people alive on respirators, replace failing kidneys with dialysis, or look deeply into our bodies with MRI and CT scans. The list of recent medical advances leads us to believe that our progress has been staggering. Why wouldn’t we assume that future breakthroughs will be even more remarkable? As computers and other technologies advance, it just makes sense that someday soon, all these discoveries and inventions will save us from both our folly and most, if not all, of the diseases that still plague humankind.

The medical establishment has fanned the flames and basked in the glow of our love affair with scientific progress. After all, our collective faith in the Big Promise has funded the War on Cancer, among many others. And popular culture has enshrined the image of the selfless, heroic researcher hot on the trail of the “cure” for cancer.

Trouble is, the medical establishment hasn’t had any real wins in a long time. Technology has advanced at a breakneck pace, but technologies that actually improve health outcomes have been hard to find. While death rates in developed countries plummeted in the early part of the twentieth century largely due to an understanding of hygiene,1 none of the ultra-expensive high-tech advances of the past fifty years have made a dent in overall rates of death and disease in first-world countries. And while medicine is now much better equipped to save someone’s life after an acute event like a car crash or a sudden heart attack than it was fifty years ago, we’re really no better at preventing chronic degenerative diseases like heart disease and cancer, often called “diseases of affluence,” than we were in the 1950s.

Yet we still look for the next medical knight on a white horse to ride to our rescue: the pill, the vaccine, the technology, the intervention that will disease-proof us and save us, not just from the diseases themselves, but from the pervasive fear of diseases that seem to strike randomly in our midst.

It’s the (apparent) randomness that scares us the most. I remember the fallout when Jim Fixx, author of the 1977 bestseller The Complete Book of Running, died of a heart attack at the age of fifty-two. The media reported his death with an air of ironic fatalism, as proof that death would find us no matter how fervently we pursued a healthy lifestyle.

What we really want from science is an end to randomness. We want to know why diseases strike some people and not others. We want to know how to protect ourselves against the scourges that have our names on them. We want, in short, to banish unpredictability.

In a reductionist universe, you’ll recall, unpredictability is not allowed. In a universe that is simply a mechanical expression of physical laws, everything is theoretically knowable. If we can’t predict in advance exactly who will get pancreatic cancer or heart disease, it’s simply because we haven’t collected enough data yet. We don’t yet have tools sensitive or powerful enough to lay bare the apparent mystery. But no fear—they’re coming! In fact, they’re just about here! The problem is, they’ve been “just about here” for the last forty years or so.

In recent years, one discipline has gained prominence over the rest as the one that will solve all our health problems and tell us all those things we don’t yet know. I’m speaking, of course, of the genetics revolution that began in the early 1950s and has been gathering steam (and money) ever since. You could argue that we are now living in the Age of Genetics. The mapping of the human genome and individual gene sequencing are the cutting edge of medical technology. DNA is the master code, right? Our entire biography and destiny, mapped out in a fantastically long and complicated blueprint. All the secrets of our development and our nature are contained in that DNA double helix: our physical appearance and function, our personality, our predisposition to various diseases. As computing power and speed increase, we continue to unravel these secrets. Soon, as a March 7, 2012 New York Times article trumpeted, the cost of individual gene sequencing will be as modest as that of a simple blood test, with “enormous consequences for human longevity.”2 The scientists at the Silicon Valley start-ups behind this push for fast, affordable sequencing operate from the assumption that the limiting factor in improving human health has been a lack of data. Typical of this faith is the statement of Larry Smarr, director of the California Institute of Telecommunications and Information Technology and a member of the scientific advisory board for Complete Genomics (one of Silicon Valley’s gene sequencing pioneers): “For all of human history, humans have not had the readout of the software that makes them alive. Once you make the transition from a data poor to data rich environment, everything changes.”3

These genetic crusaders view themselves as pioneers in a new age of enlightenment—specifically, reductionist enlightenment. Genes, in the genetic crusaders’ view, are simply human software. Just as a good programmer can read code and predict exactly what the program will do, eventually we’ll be able to look at genes and predict exactly what diseases we’ll develop, perhaps even what emotions we’ll experience from moment to moment.

The problem is, we can’t. Genes tell us what may happen, but not if or how. The increasing fascination with and funding of genetic technology is simply another medical dead end, another reductionist rabbit hole that will lead us no further toward preventing and reversing chronic illness.

GENETIC COMPLEXITY VERSUS REDUCTIONISM

As with nutrition, the discipline of genetics is unimaginably complex. This complexity has not filtered down to the public. Most of the population tends to think of genes as relatively fixed entities that cause us to look and function and behave in particular ways. The truth is far more interesting.

When I was on the farm, my brothers, Jack and Ron, and I each had a “self-propelled combine”—a big machine that harvested grain as we drove through the field (our way of helping our father earn money for our college education). In those days, combines were about as mechanically complex as any other machine on the market. I’ve forgotten how many belts and pulleys there were on my machine, but I remember well the 103 fittings that I had to fill with grease at the start of each and every day. For me it was an engineering marvel of ordered complexity. But these machines were only the beginning of the engineering marvels yet to come: ever-larger airplanes, massive ocean liners, talking radios in color (i.e., TVs), satellites and space stations, communication devices and systems, really fancy laboratory equipment, and now computers everywhere. Marvelous machines, marvelous minds! But as impressive as these engineering and technical feats may be in their complexity and order, they pale into insignificance when compared with the microcosmic universes of molecular genetics.

A SHORT LESSON IN GENETICS

As you may remember from high school biology, DNA is a long thread composed of two parallel strands that are gently twisted together into a double helix shape. Alternating sugar and phosphate molecules link to form the backbones of these adjacent strands (seen as ribbons in Figure 8-1).

Strung along these strands are four precisely arranged, or sequenced, nitrogen-containing bases, each of which is anchored to a deoxyribose unit of the strand. They are named adenine (A), thymine (T), guanine (G), and cytosine (C), and they project perpendicularly from each strand in a way that faces partner bases on the adjacent strand, thus facing inward and holding the strands together. The facing As and Ts of each strand have a chemical affinity for each other, thus forming base pairs; Gs and Cs form similar pairs.

The DNA molecule is unimaginably long and harbors these four bases in a sequence that is unique for each and every person who ever lived on the planet. Because these bases act like letters of an alphabet that create words, they have the capacity to create an enormous body of information.4

This unique DNA chain is clipped and packed into twenty-three pairs of chromosomes located within the nucleus of each of the 100 trillion cells in our bodies (which, individually, are small enough to sit comfortably on the tip of a pin). Our cells use DNA as a blueprint for doing their work. The bases on the twenty-three chromosome pairs (about three billion bases, in total) are grouped into aggregates (around 25,000 of them) called genes. And each of these genes, which may contain as few as 100 bases or as many as several million, ultimately directs the formation of a unique protein.

However, these genes do not translate into a protein directly. Instead, they do so through the intermediate formation of ribonucleic acid (RNA) (Figure 8-2), a similar strand of bases that mirrors a DNA strand.

FIGURE 8-2. The process of DNA expression into active proteins (e.g., enzymes)

The RNA base sequence serves in turn as a code for the selection of amino acids (about twenty amino acids are used in human protein production, each possessing a unique chemical structure) which, when combined into a long strand, form proteins. The bases on the RNA chains don’t code for these amino acids on a one-to-one basis, however. Instead, triplet sets of bases are used, with each triplet specifying a single amino acid. With four bases, it is possible to create sixty-four different triplet combinations, or codons. Because that is far more than the roughly twenty amino acids in use, some amino acids can be specified by more than one codon.
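The arithmetic behind the sixty-four codons can be checked in a few lines. What follows is only an illustrative sketch: the variable names are invented here, and the letter U stands for uracil, the base RNA carries in place of DNA's thymine (a detail not covered in the text above).

```python
from itertools import product

# Assumption for illustration: the four-letter RNA alphabet.
# RNA carries uracil (U) where DNA carries thymine (T).
RNA_BASES = "ACGU"

# "About twenty" amino acids, per the text.
AMINO_ACIDS = 20

# Every ordered triplet of bases is one possible codon.
codons = ["".join(triplet) for triplet in product(RNA_BASES, repeat=3)]
codon_count = len(codons)

# Pairs of bases would not be enough to name twenty amino acids.
pair_count = len(RNA_BASES) ** 2

print(f"possible pairs:    {pair_count}")   # 16, fewer than 20
print(f"possible triplets: {codon_count}")  # 64, more than 20
```

Pairs of bases would give only sixteen combinations, too few for twenty amino acids, while triplets give sixty-four. The surplus is what allows several codons to specify the same amino acid.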

In the early days of genetic research, scientists believed in a “one gene/one protein” hypothesis, in which each gene was responsible for expressing a single protein. If there were 25,000 genes, then that meant there were 25,000 proteins. However, recent work in the field makes it clear that this hypothesis is too simple. For instance, more than one gene can share in the making of a single protein, because some proteins are made up of more than one strand of amino acids, and each of those amino acid strands is produced by a separate gene. The number of possible proteins and their combinations is impossible to estimate. The complexity at this point is far beyond comprehension by the human mind.

And here’s another puzzle. Despite the fact that each of our cells contains the exact same genetic master template as every other cell in our bodies, these cells can do very different things. A liver cell is very different from a nerve cell or a cell on the inner surface of the intestine, both in form and in function. Their structural and functional differences depend solely on which segments of DNA bases are selected for expression within each cell. The act of selecting which bases to use among the three billion bases is an awesome display of nature at work.

To recap: relatively short segments of the DNA base sequence, called genes, are transcribed into comparable RNA sequences, which translate, in turn, into sequences of amino acids that are used to make proteins. These proteins then provide the structure and function of cells, acting as enzymes, hormones, and structural units. It is through the activity of these proteins that DNA manifests its destiny.
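For readers who think in code, the recap can be sketched as a toy program. Everything in it is a simplification chosen for illustration: the DNA snippet is made up, the function names are hypothetical, the codon table is a five-entry excerpt of the standard sixty-four-entry genetic code, and transcription is reduced to swapping T for U. It says nothing about the regulation and feedback that govern the real process.

```python
# Five-entry excerpt of the standard genetic code (the full table has 64).
# None marks a "stop" codon, which ends the chain instead of adding to it.
CODON_TABLE = {
    "AUG": "Met",
    "UUU": "Phe",
    "GGC": "Gly",
    "GCC": "Ala",
    "UAA": None,
}


def transcribe(dna: str) -> str:
    """Toy transcription: copy the DNA letters into RNA, with U replacing T."""
    return dna.replace("T", "U")


def translate(rna: str) -> list[str]:
    """Toy translation: read the RNA three bases at a time until a stop codon."""
    protein = []
    for start in range(0, len(rna) - 2, 3):
        amino_acid = CODON_TABLE[rna[start:start + 3]]
        if amino_acid is None:  # stop codon reached
            break
        protein.append(amino_acid)
    return protein


# Hypothetical 15-base gene fragment, invented for this example.
gene = "ATGTTTGGCGCCTAA"
rna = transcribe(gene)
protein = translate(rna)

print(rna)      # AUGUUUGGCGCCUAA
print(protein)  # ['Met', 'Phe', 'Gly', 'Ala']
```

The sketch runs strictly in one direction, from gene to RNA to protein. That is the "dominos falling in a row" picture, useful for seeing the steps but not a description of how a living cell actually behaves.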

That manifestation of destiny—the expression of genes, how they do what they do—operates through a series of enormously complex but very orderly processes. To investigate and understand these processes, researchers like to simplify them by thinking of seemingly discrete stages or events operating one after another, like dominos falling in a row. This simplification is helpful because it allows the details of each stage to be more easily investigated and visualized, but it is not entirely reliable. In reality, these stages or events are highly interconnected and communicative, a virtually seamless and extensively integrated stream of activities.

Every point in this process may be influenced by body biochemistry, diet, physical activity, medication, mood, and just about every other variable you can think of. Not only that: the so-called stages of genetic expression influence one another, too, feeding information backward and forward in an endlessly complex series of loops. These streams of events communicate with one another in many different ways at every stage of the process, as we saw with the series of enzymes (which are themselves one type of protein) in chapter seven. In addition, each change in activity rate can have more than one cause. The amounts of protein synthesized from DNA, for example, fluctuate according to how much is needed at any moment in time. When there is enough of one protein, its formation is slowed. But that slowing can itself be achieved in more than one way: the rate of DNA-to-RNA transcription can be altered, the rate of protein synthesis from RNA can be altered, or both.

This is the system that we are now tampering with, as if it were a human-made machine. Sure, we’ve mapped the human genome.5 But that mapping is only the first step. We can label genes with cryptic names all we want; that doesn’t mean we’ll magically know what those labels mean or how emergent structures like personality, preferences, predispositions—or disease—arise from them . . . assuming it’s even possible to do so.

THE GENETICIST’S DREAM

Despite the unimaginable complexity of genetics, geneticists stubbornly persist in advocating and pursuing a genetic research agenda as the future of health care. To reductionists, complexity is simply an invitation to throw more time and money at the problem. All we need is faster processing, or smarter programming, or more research. . . .

Geneticists are sure that we’ll crack the genetic basis of disease in a decade or two—if not sooner. And once we do, it will lead to a revolution in health care. Knowing the identity and function of genes involved in disease formation and treatment will let us refine drug development6 and economize clinical testing of the newly developed products. Drugs will be developed that are targeted either for specific disease-related events or, as recently announced, for individuals whose genes define their likely drug responsiveness. In doing so, drug side effects would be minimized and costs of clinical trials would be lessened. In fact, the Human Genome Program—the ambitious government-led research project that mapped all 20,000 to 25,000 human genes from 1990 to 2003—claims a more streamlined drug development process would have “the potential to dramatically reduce the estimated 100,000 deaths and 2 million hospitalizations that occur each year in the United States as the result of adverse drug response.”7

But that’s only the start of the benefits. Here are a few other verbatim quotations from their website that reflect the U.S. government’s “official” enthusiasm:

♦ “[A]dvance knowledge of a particular disease susceptibility will allow careful monitoring, and treatments can be introduced at the most appropriate stage to maximize their therapy.”8

♦ “Vaccines made of genetic material [. . .] promise all the benefits of existing vaccines without all the risks.”9

♦ “The cost and risk of clinical trials will be reduced by targeting only those persons capable of responding to a drug.”10

♦ All of these benefits and more “will promote a net decrease in the cost of health care.”11

NIH Director Dr. Francis Collins, who, with Dr. J. Craig Venter, led the remarkable sequencing of the human genome, and who used to direct NIH’s National Human Genome Research Institute, also talks frequently and with extraordinary enthusiasm about the promise of genetics research. He visualizes a time when individuals’ unique DNA profiles will not only establish their disease risks but also permit programs of prevention and treatment customized for each person. One size will not fit all, according to Collins and his colleagues.

These promises all sound inspiring and are said to be ushering in a whole new medical practice paradigm: genetics as the centerpiece of medicine’s future! And in fact, many of the promised outcomes of genetics no doubt will be very good. I’m not saying that genetic research is a complete waste of time. I actually find the Human Genome Project to be endlessly fascinating science. There’s no way a curious species like ourselves could have left that stone of indeterminate complexity unturned, given sufficient technology. And there’s no doubt that genetic interventions will help the 0.01 percent of the population who suffer from rare conditions brought about by faulty genes.

What they won’t do, however, is solve the basic problem: our society’s failing health. What I object to is our focus on genetics to the near exclusion of everything else. Currently, hundreds of billions of dollars are being spent on genetic testing and sequencing every year in the United States, without getting us any closer to solving our health-care crisis. Our society’s multibillion-dollar investment in genetics will help only a very small portion of the population, and even then only at enormous expense.

Once we’ve eliminated 90 percent of human diseases via nutrition and ended the financial drain of reductionist health care on our economy, then we can avail ourselves of the luxury of genetic testing and sequencing. Right now we have much more urgent things we can do that would benefit a much larger percentage of the population. We’re facing a perfect-storm health-care crisis right now. When the hurricane is blowing, you don’t redecorate the foyer; you nail plywood over the windows.

Or maybe I’m just jealous. I’ll leave that for you to decide. After all, while this new Age of Genetics was rising over the horizon, an Age of Nutrition was sinking below it.

THE DECLINE OF THE AGE OF NUTRITION

In 1955, I was in my first year of veterinary school at the University of Georgia, where my biochemistry professor was enthralled by the recent discovery of the DNA double helix and what it might mean for the future. I, too, was enthralled with this marvelous bit of biochemical and medical research—exactly what I’d envisioned as my cup of tea. When Cornell professor Clive McCay surprised me with an unsolicited telegram inviting me to drop veterinary medicine and come to Cornell to study this new field of “biochemistry” (of which the emerging discipline of genetics was then a part), I jumped at the opportunity. In my graduate research program at Cornell, I formally combined nutrition as a major field of study with biochemistry as a minor. In retrospect, I realize that I was witnessing not only the emergence of a new field, but a tectonic shift in the way science viewed human health.

From the early 1900s to the early 1950s, nutrition researchers were at the forefront of the struggle to improve human health. In the early twentieth century, scientists and medical professionals had begun investigating the causes of such diseases as beriberi, scurvy, pellagra, rickets, and other maladies. These diseases appeared to be linked in some way to food, but the exact mechanism was unclear. Eventually, researchers identified specific nutrients and posed the possibility that inadequate intake of these nutrients might be what leads to these diseases. Around 1912, the word vitamin was coined to refer to a substance in food, present in very small quantities, that was thought to be vital for sustaining life.

During the 1920s and 1930s, nutrition researchers identified a number of specific vitamins and other nutrients, including the “letter vitamins,” A through K. Amino acids, the building blocks of protein that are assembled from the DNA template, also were being studied to determine how their sequence and arrangement within polypeptide chains affected protein’s important, life-giving properties. In 1948, scientists stated with confidence that they had discovered the last vitamin, B12, based on the observation that it was possible to grow laboratory rats on diets composed only of chemically synthesized versions of these newly discovered food nutrients. Now that the elementary particles of nutrition had been found and catalogued, nutrition scientists believed, whole foods need not be eaten. Human beings could get everything they needed from pills, and hunger and malnutrition would be banished to the distant past.

Naturally, the findings from this impressive period of basic nutrition research filled our lectures when I started my research program at Cornell University in 1956. But news of these exciting nutrient discoveries had filtered down to the popular imagination years earlier. I remember, when I was a child, my mother gave my siblings and me spoonfuls of oil prepared from codfish liver daily because it contained the life-giving nutrient vitamin A (I can still taste that oil—ugh!). I also remember at about that same time my aunt telling my mother with considerable enthusiasm that someday we would not have to eat food because its main ingredients would be in the form of a few pills! Forget about the vegetables grown in my mom’s garden. (I remember my mother not taking kindly to that comment.) Protein was another nutrient independently gaining a reputation of epic proportions. On our dairy farm, we were certain that our milk was especially good for mankind (womankind had not yet been invented) because it was a source of high-quality protein that could make muscle and grow strong bones and teeth. Nutrition as a scientific discipline was riding high, although even then it was mostly focused on the discoveries and activities of individual nutrients.

Ironically, it was the reductionist nature of nutrition that provided the opening for the much more reductionist discipline of genetics to replace it as the best answer to the question of Why We Get Sick. All those fortified breakfast cereals and multivitamin pills weren’t turning us into a nation of decathletes and vigorous octogenarians. Nutrition as a reductionist science had hit a dead end. And genetics obligingly stepped up to replace it.

THE NATURE–NURTURE DEBATE

The power struggle between nutrition and genetics closely mimics that age-old debate concerning nature versus nurture. Does our initial “nature” at birth—our genes—predetermine which diseases we get later in life? Or are health and disease events a product of our environment, like the food we eat or toxins we’re exposed to—our “nurture”? Forms of the nature–nurture debate (or mindless shouting match) have been raging for millennia, at least since Aristotle characterized the human mind as a tabula rasa, or a blank slate to be filled by guidance and experience, in opposition to the prevailing view that humans were born with fixed “essential natures.”

Most health researchers agree that neither nature nor nurture acts alone in determining which diseases we get, if any. Both contribute. The debate centers around how much each contributes. But the truth is, it’s almost impossible to assign meaningful numbers to the relative contributions of genes and lifestyle, let alone the specific contribution of nutrition.

This uncertainty became clear to me many years ago when, from 1980 to 1982, I was on a thirteen-member expert committee of the National Academy of Sciences preparing a special report on diet, nutrition, and cancer,12 the first reasonably official report of its kind. Among other objectives, we were asked to estimate the proportion of cancers caused by diet versus those caused by everything else, including genetics, environmental toxins, and lifestyle, and through that, suggest how much cancer could be prevented by the food we eat.

Estimating the proportion of cancer prevented by diet was of considerable interest to those of us working on the project because, as had been noted in the media a year or so before, a report13 developed for the now-abolished Office of Technology Assessment of the U.S. Congress by two very distinguished scientists from the University of Oxford, Sir Richard Doll and Sir Richard Peto, had suggested that 35 percent of all cancers were preventable by diet. This surprisingly high estimate quickly became a politically charged issue, especially as this estimate was even higher than the 30 percent of cancers estimated to be preventable by not smoking. Most people had no idea that diet might be this important.

Our committee’s task of creating our own specific estimate of diet-preventable cancers proved to be impossible. I was assigned the task of writing a first draft of this risk assessment, and I quickly saw that this exercise made little or no sense. Any estimate of how much cancer could be prevented by diet that was based on a single number was likely to convey more certainty than it deserved. We also faced the dilemma of how to summarize the combined effects of the various factors that affect cancer risk. What were we to do, for example, if not smoking could prevent 90 percent of lung cancer (our current best guess), a proper diet could prevent 30 percent (there is such evidence), and avoiding air pollution could prevent 15 percent? Did we add these numbers together and conclude that 135 percent of lung cancer could be prevented?
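A few lines of arithmetic show why simple addition breaks down. The sketch below uses the three illustrative percentages from the example above. The second calculation rests on an assumption that is introduced here only for contrast and was not the committee's method: that each factor independently removes its share of whatever risk is left. Real risk factors overlap and interact, so neither figure should be read as an estimate.

```python
# Illustrative percentages taken from the lung cancer example in the text.
preventable = {
    "not smoking": 0.90,
    "proper diet": 0.30,
    "avoiding air pollution": 0.15,
}


def naive_sum(fractions):
    """Add the fractions as if each applied to a separate slice of cases."""
    return sum(fractions)


def combined_if_independent(fractions):
    """ASSUMPTION (not from the committee): each factor independently removes
    its share of whatever risk remains after the others have acted."""
    remaining = 1.0
    for fraction in fractions:
        remaining *= 1.0 - fraction
    return 1.0 - remaining


naive = naive_sum(preventable.values())
combined = combined_if_independent(preventable.values())

print(f"simple addition:       {naive:.0%}")       # 135%
print(f"independence assumed:  {combined:.2%}")    # 94.05%
```

Addition counts the same preventable cases more than once, which is how the total climbs past 100 percent. The second figure stays below 100 percent, but only because of an independence assumption nobody can verify. A different assumption would give a different number, which is the difficulty the committee faced.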

Aware of both of these difficulties (i.e., over-precision and inappropriate summation of risk), our committee declined to include a chapter that gave precise estimates of the reduced risk of cancer due to a healthy diet. We also knew that the previous report prepared for the Office of Technology Assessment14 did not fixate on a precise number for diet-preventable cancers; the 35 percent cited by the media was a result of sloppy reporting. In fact, the report’s authors had surveyed relevant professional diet and health communities and found that the estimates ranged broadly, from 10 percent to 70 percent. The seemingly definite figure of 35 percent was anything but conclusive. It was mostly suggested as a reasonable midpoint within this range, because a range of 10 percent to 70 percent would only confuse the public and discourage it from taking seriously diet’s effect on cancer development. A range that generous leaves plenty of room for personal biases to play.

I am convinced that our committee’s decision not to go down that path of estimating the size of such an unknowable risk was wise. Even today, writers incorrectly claim with far too much assurance that one-third of all cancers are preventable by dietary means, based on that University of Oxford report. Precise numbers are often over-interpreted, especially by those with a personal or professional agenda. And decades later, diet and health research communities still cannot agree on a precise figure.

The problem is, risk doesn’t actually exist as an objective reality. It changes constantly based on how much we know. For example, the television station that broadcast the Washington Nationals’ baseball games used to display a statistic they called “odds of winning.” If the Nationals were ahead 5-2 in the bottom of the fourth inning, their odds of winning that game might be 79 percent. But if the opposing team then scored a run in the top of the fifth inning, those odds might decline to 65 percent. A grand slam by the Nationals in the eighth inning would raise those odds again, perhaps to 97 percent. But a heroic rally in the top of the ninth could erase that lead and shift the odds yet again. The problem, of course, is that the odds of winning can’t be permanently pinned down. Every pitch, every swing of the bat, every change in cloud cover or drop in relative humidity could conceivably affect the final score. Depending on what the statistician who programmed the algorithm chose to include or ignore, the number could change dozens of times each second.

Like a bookmaker seeking precise quantification of risk to set odds on the outcome of a baseball game, individuals who care about their own health and that of their loved ones also seek the reassurance of specific percentages. They want to know with some confidence how to stay healthy and avoid chronic disease. But they don’t need misleadingly “accurate” aggregate numbers that predict nothing in any specific instance. The important takeaway from our report wasn’t how much cancer was preventable by diet, but that diet was a predominant factor.

What can we do, then, if we can assume neither a specific estimate nor a wide range of possible estimates? Do we just make up stuff? I am convinced that most people simply believe what they want to believe about cancer causation and prevention, according to which way the nature–nurture pendulum swings in their minds. In the absence of a reliable answer to the cancer prevention question, they fall back on personal nature or nurture biases.

HOPE (NUTRITION) VERSUS DESPAIR (GENES)

Where we stand on this continuum, consciously or unconsciously, influences our thinking about health and disease more than we realize. Do we simply accept the cards dealt to us, or do we consider the possibility that we can control our own destiny? If our health trajectory is mostly predetermined by our genes, then there’s no point in trying to be healthy. If our choices trump the cards we were dealt at birth, then there’s a reason for us to do what we can to achieve and maintain health.

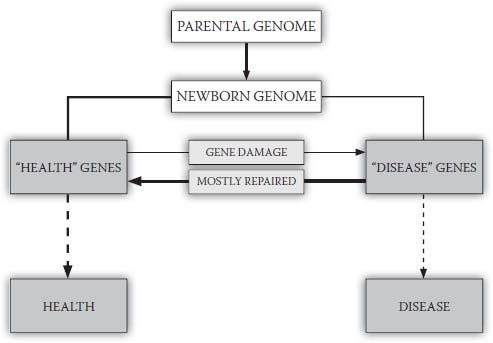

Most medical researchers fall on the nature side of the nature–nurture dichotomy, and affirm the primacy of genetics as the basis of disease. They mistakenly believe that genetics is what will allow us to better diagnose and predict disease risk, through the discovery of faulty genes or gene arrangements in DNA that may be causing disease. Basic to these beliefs is a theory fairly popular in the health sciences called genetic determinism. According to this theory, we can draw a more or less straight causal line between genes and their final health- or disease-related outcomes. In other words, genes operate fairly independently, continuing to “do their thing” with little impact from the environment and one’s lifestyle. A very simple representation of this process is shown in Figure 8-3.

In contrast, there is an alternative belief system to genetic determinism that I call nutritional determinism, wherein nutrition controls the expression of genes to cause health or disease outcomes, by turning on health genes and suppressing disease genes as shown in Figure 8-4. And this is the belief system to which, based on my years of research and that of others, I subscribe.

FIGURE 8-3. Genetic determinism

Health or disease occurrence is primarily determined by “health” and “disease” genes, which arise from the newborn’s genome plus damaged but unrepaired genes produced during life.

Certainly, there also are nonnutrient lifestyle factors that may control gene expression. There are also, of course, relatively rare diseases like Tay-Sachs and others that are entirely genetic in cause, for which nutrition may, at very best, be able to mitigate some of their symptoms—if that. Even nutrition is not a cure-all; there’s no diet that can regrow an amputated limb, as far as we know. However, I am suggesting that nutritional inputs are the primary factor in gene expression, and that in the vast majority of cases, the vast majority of the time, good nutrition has a much greater impact than anything else—including the most complicated and expensive genetic intervention.

Genes are the starting point for health and disease events; they are the “nature” part of the equation. But it is nutrition and other lifestyle factors, the “nurture” part, that control whether and how these genes are expressed. Nurture (i.e., nutrition) has far more influence on health and disease outcomes than nature (i.e., genes).

FIGURE 8-4. Nutritional determinism

Health or disease processes begin with “health” and “disease” genes, but nutritional practices control expression of these genes. Good nutrition blocks expression of disease genes, leaving health genes to produce health.

A belief in genetic determinism suggests that our future health and disease events are already predestined at birth and that, as we age, we simply move from one disease benchmark to another according to the genetic blueprint we inherited at conception. This encourages the impression that there is little or nothing that we can do to prevent serious diseases like cancer. In contrast, the belief that cancer and related diseases are dependent on nutritional practices can encourage a sense of hope and lead to healthier behavior. And as we’re about to see, this belief is not just wishful thinking; it’s supported by an overwhelming amount of wholistic evidence. Let’s now look at how nutrition and genetics compare when it comes to minimizing and repairing our damaged and misbehaving genes, and what our focus on a reductionist approach to disease means for our ability to prevent chronic diseases like cancer.