This chapter covers 30% of the Certified OpenStack Administrator exam requirements. It is one of the most important topics in the book. Without a solid knowledge of the network components, you will be unable to perform most exam tasks.

Neutron’s Architecture and Components

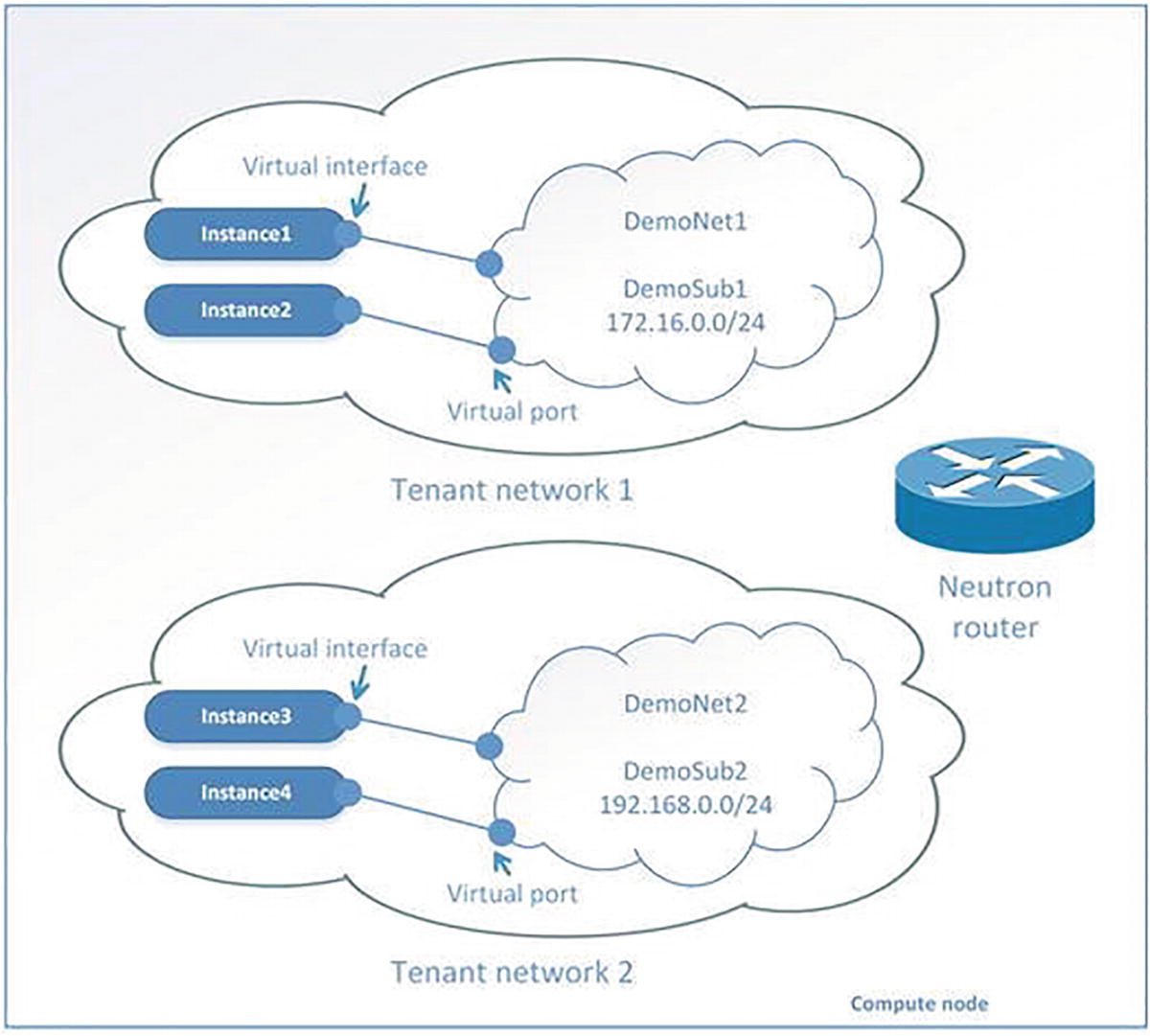

A diagram of the OpenStack networking service shows Tenant network 1 with instances 1 and 2 at the top, and Tenant network 2 with instances 3 and 4 at the bottom.

Logical objects in OpenStack’s networking service

A tenant network is a virtual network that provides connectivity between entities. The network consists of subnets, and each subnet is a logical subdivision of an IP network. A subnet can be private or public. Virtual machines can get access to an external world through the public subnet. If a virtual machine is connected only to the private subnet, then only other virtual machines from this network can access it. Only a user with an admin role can create a public network.
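As a minimal illustration of how a subnet subdivides an IP network, the following Python sketch uses the standard `ipaddress` module. The address ranges are hypothetical examples chosen for illustration; they are not values taken from this chapter.

```python
import ipaddress

# Hypothetical address block for a tenant network (an assumption for illustration).
tenant_block = ipaddress.ip_network("10.0.0.0/16")

# Split the block into /24 subnets and keep the first two.
subnets = list(tenant_block.subnets(new_prefix=24))[:2]

for subnet in subnets:
    hosts = list(subnet.hosts())
    print(subnet, "first host:", hosts[0], "last host:", hosts[-1])

# An address belongs to a subnet only if it falls inside that subnet's range.
instance_ip = ipaddress.ip_address("10.0.1.15")
print(instance_ip in subnets[0])  # False: 10.0.1.15 is outside 10.0.0.0/24
print(instance_ip in subnets[1])  # True: 10.0.1.15 is inside 10.0.1.0/24
```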

A router is a virtual network device that passes network traffic between different networks. A router can have one gateway and many connected subnets.

A security group is a set of ingress and egress firewall rules that can be applied to one or many virtual machines. It is possible to change a Security Group at runtime.
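The idea of a security group as a set of allow rules can be sketched in a few lines of Python. This is a toy model, not Neutron's implementation: the `Rule` type and the `is_allowed` function are hypothetical names, and the sketch assumes that traffic matching no rule is dropped.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional


@dataclass(frozen=True)
class Rule:
    """One allow rule, loosely modeled on a security group rule (hypothetical type)."""
    direction: str        # "ingress" or "egress"
    protocol: str         # for example "tcp" or "icmp"
    port: Optional[int]   # None means any port
    remote_cidr: str      # peer address range the rule applies to


def is_allowed(rules, direction, protocol, port, peer_ip):
    """Return True if any rule permits the packet; unmatched traffic is dropped."""
    for rule in rules:
        if (rule.direction == direction
                and rule.protocol == protocol
                and rule.port in (None, port)
                and ip_address(peer_ip) in ip_network(rule.remote_cidr)):
            return True
    return False


# Start with a group that only allows inbound SSH (addresses are invented).
rules = [Rule("ingress", "tcp", 22, "0.0.0.0/0")]
print(is_allowed(rules, "ingress", "tcp", 22, "203.0.113.7"))  # True
print(is_allowed(rules, "ingress", "tcp", 80, "203.0.113.7"))  # False

# Changing the group at runtime: adding a rule takes effect on the next check.
rules.append(Rule("ingress", "tcp", 80, "0.0.0.0/0"))
print(is_allowed(rules, "ingress", "tcp", 80, "203.0.113.7"))  # True
```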

A floating IP address is an IP address that can be associated with a virtual machine so that the instance has the same IP from the public network each time it boots.

A port is a virtual network port within OpenStack’s networking service. It is a connection between the subnet and vNIC or virtual router.

A vNIC (virtual network interface card) or VIF (virtual network interface) is an interface plugged into a port in a network.

A diagram represents the Neutron architecture, which consists of a control node, compute node, network node, and database.

Neutron’s architecture (OVS example)

Upstream documentation from docs.openstack.org defines several types of OpenStack nodes. Neutron is usually spread across three of them. The API service usually runs on the control node. Open vSwitch and the client-side Neutron agents usually run on the hypervisor or compute node. All server-side components of OpenStack’s networking service run on network nodes, which can act as gateways to an external network.

neutron-server is the main service of Neutron. It accepts API requests and routes them through the message bus to the OpenStack networking plug-ins for action.

neutron-openvswitch-agent receives commands from neutron-server and sends them to Open vSwitch (OVS) for execution. The neutron-openvswitch-agent uses the local GNU/Linux commands for OVS management.

neutron-l3-agent provides routing and network address translation (NAT) using standard GNU/Linux technologies like Linux Routing and Network Namespaces.

neutron-dhcp-agent manages dnsmasq, a lightweight Dynamic Host Configuration Protocol (DHCP) and caching DNS server. The neutron-dhcp-agent also starts proxies for the metadata server.

neutron-metadata-agent allows instances to get information such as hostname, SSH keys, and so on. Virtual machines can request this information over HTTP from the address http://169.254.169.254 at boot time. Usually, this happens with scripts like cloud-init (https://launchpad.net/cloud-init). The agent acts as a proxy to nova-api for retrieving metadata.
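A request to the metadata address can be sketched with the Python standard library. The URL path `/openstack/latest/meta_data.json` and the `hostname` and `public_keys` field names are not given in this chapter; they are assumptions based on the usual OpenStack metadata format. The sample document is invented, and `fetch_metadata` only works when it is run from inside an instance.

```python
import json
import urllib.request

# Assumed path under the metadata address named in the text.
METADATA_URL = "http://169.254.169.254/openstack/latest/meta_data.json"


def fetch_metadata(url=METADATA_URL, timeout=5):
    """Fetch and decode instance metadata; only reachable from inside an instance."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def summarize(metadata):
    """Pick out the items mentioned in the text: hostname and SSH key names."""
    return {
        "hostname": metadata.get("hostname"),
        "key_names": sorted(metadata.get("public_keys", {})),
    }


# Hypothetical sample shaped like a metadata response (all values invented).
sample = {
    "hostname": "apress-instance",
    "public_keys": {"apress-key": "ssh-rsa AAAA..."},
}
print(summarize(sample))
```

Inside a running instance, `summarize(fetch_metadata())` would apply the same summary to the live response.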

Neutron also uses Open vSwitch. Its configuration is discussed in the next section of this chapter. Some modern OpenStack distributions migrated to Open Virtual Networking (OVN) instead of OVS. OVN includes a DHCP service, L3 routing, and NAT. It replaces the OVS ML2 driver and the Neutron agent with the OVN ML2 driver. OVN does not use the Neutron agents at all. In OVN-enabled OpenStack, the ovn-controller service implements all functionality. Some gaps from ML2/OVS are still present (see https://docs.openstack.org/neutron/yoga/ovn/gaps.html). Note that the current OpenStack installation guide refers to OVS, but if you install the latest version of DevStack or PackStack, you get OVN.

You will not be tested on this knowledge on the exam. You may directly jump to the “Manage Network Resources” section. From an exam point of view, your experience should be the same.

OpenStack Neutron Services and Their Placement

| Service | Node Type | Configuration Files |
|---|---|---|
| neutron-server | Control | /etc/neutron/neutron.conf |
| neutron-openvswitch-agent | Network and Compute | /etc/neutron/plugins/ml2/openvswitch_agent.ini |
| neutron-l3-agent | Network | /etc/neutron/l3_agent.ini |
| neutron-dhcp-agent | Network | /etc/neutron/dhcp_agent.ini |
| neutron-metadata-agent | Network | /etc/neutron/metadata_agent.ini |
| Modular Layer 2 agent (it is not run as a daemon) | Network | /etc/neutron/plugins/ml2/ml2_conf.ini and /etc/neutron/plugin.ini (symbolic link to ml2_conf.ini) |

Open vSwitch’s Architecture

OVS is an important part of networking in the OpenStack cloud. The website for OVS with documentation and source code is https://www.openvswitch.org/. Open vSwitch is not a part of the OpenStack project. However, OVS is used in most implementations of OpenStack clouds. It has also been integrated into many other virtual management systems, including OpenQRM, OpenNebula, and oVirt. Open vSwitch can support protocols such as OpenFlow, GRE, VLAN, VXLAN, NetFlow, sFlow, SPAN, RSPAN, and LACP. It can operate in distributed configurations with a central controller.

openvswitch_mod.ko is a GNU/Linux kernel module that plays the role of an ASIC (application-specific integrated circuit) in hardware switches. This module is the traffic-processing engine.

ovs-vswitchd is a daemon in charge of management and data transmission logic.

ovsdb-server is a daemon used for the internal database. It also provides RPC (remote procedure call) interfaces to one or more Open vSwitch databases (OVSDBs).

br-int is the integration bridge. There is one on each node. This bridge acts as a virtual switch where all virtual network cards from all virtual machines are connected. OVS Neutron agent automatically creates the integration bridge.

br-ex is the external bridge for interconnection with external networks. In our example, the eth1 physical interface is connected to this bridge.

br-tun is the tunnel bridge. It is a virtual switch like br-int. It connects the GRE and VXLAN tunnel endpoints. As you can see in our example, it connects the node with the IP address 10.0.2.15 and two others with IP 10.0.2.20 and 10.0.2.30. In our example, a GRE tunnel was used.

The Tip in Chapter 5 explains how RegEx can be used in the grep command.

Open Virtual Networking (OVN)

OVN is an open source project launched by the Open vSwitch team. Open vSwitch (OVS) includes OVN starting with version 2.5. OVN has been released as a separate project since version 2.13.

Instead of the Neutron agents, it uses ovn-controller and OVS flows to support all functions.

The OVN northbound (NB) database stores the logical configuration, which it gets from the OVN ML2 plug-in. The database runs on the controller nodes and listens on port 6641/TCP.

The northbound service converts the logical network configuration from the northbound database to the logical path flows. The ovn-northd service populates the OVN southbound database with the logical path flows. The service runs on the controller nodes.

The southbound (SB) database listens on port 6642/TCP. The ovn-controller connects to the Southbound database to control and monitor network traffic. This service runs on all compute nodes.

The OVN metadata agent spawns HAProxy instances and manages the OVS interfaces, network namespaces, and HAProxy processes used to proxy metadata requests. It runs on all compute nodes.

The OpenFlow protocol configures Open vSwitch and defines how network traffic will flow. OpenFlow can dynamically rewrite flow tables, allowing it to add and remove network functions as required.
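The notion of a flow table that can be rewritten at runtime can be illustrated with a toy model. This sketch is not the OpenFlow wire protocol or any Open vSwitch API: the `Flow` and `FlowTable` classes, the field names, and the action labels are hypothetical, and the model assumes that a packet matching no flow is dropped.

```python
from dataclasses import dataclass, field


@dataclass
class Flow:
    priority: int
    match: dict    # header fields that must be equal, e.g. {"in_port": 1}
    action: str    # simplified action label, e.g. "output:2"


@dataclass
class FlowTable:
    flows: list = field(default_factory=list)

    def add(self, flow):
        """Install a new flow entry."""
        self.flows.append(flow)

    def remove(self, match):
        """Delete every flow entry with exactly this match."""
        self.flows = [f for f in self.flows if f.match != match]

    def lookup(self, packet):
        """Return the action of the highest-priority matching flow, or "drop"."""
        candidates = [
            f for f in self.flows
            if all(packet.get(key) == value for key, value in f.match.items())
        ]
        if not candidates:
            return "drop"
        return max(candidates, key=lambda f: f.priority).action


table = FlowTable()
table.add(Flow(0, {}, "normal"))  # low-priority catch-all entry
table.add(Flow(100, {"in_port": 1, "tcp_dst": 22}, "output:2"))

packet = {"in_port": 1, "tcp_dst": 22}
print(table.lookup(packet))  # output:2

# Rewriting the table changes how the same packet is handled.
table.remove({"in_port": 1, "tcp_dst": 22})
print(table.lookup(packet))  # normal
```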

Differences Between OVS and OVN

| Area | OVS | OVN |
|---|---|---|
| DHCP service | Provided by the dnsmasq service in dhcp-xxx namespaces | OpenFlow rules by ovn-controller |
| High availability of data plane | Implemented by creating a qrouter namespace | OpenFlow rules by ovn-controller |
| Communication | RabbitMQ broker | OVSDB protocol |
| Components of data plane | Veth, iptables, namespaces | OpenFlow rules |
| Metadata service | DHCP namespaces on controller nodes | ovnmeta-xxx namespace on compute nodes |

Managing Network Resources

If you can’t create a network with type flat, add flat to the type_drivers option in the /etc/neutron/plugins/ml2/ml2_conf.ini config file. After changes, you need to restart the Neutron service.
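The edit to `type_drivers` can be sketched with Python's `configparser`. The sample file content below is a hypothetical fragment, and the existing driver list in it is an assumption. The sketch works on a string in memory and does not touch the real file.

```python
import configparser
import io

# Hypothetical fragment of ml2_conf.ini; the existing driver list is an assumption.
SAMPLE = """\
[ml2]
type_drivers = vxlan,vlan
"""


def ensure_type_driver(config_text, driver="flat"):
    """Return config text whose [ml2] type_drivers option includes the driver."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(config_text)

    raw = parser.get("ml2", "type_drivers", fallback="")
    drivers = [name.strip() for name in raw.split(",") if name.strip()]
    if driver not in drivers:
        drivers.append(driver)

    if not parser.has_section("ml2"):
        parser.add_section("ml2")
    parser.set("ml2", "type_drivers", ",".join(drivers))

    output = io.StringIO()
    parser.write(output)
    return output.getvalue()


updated = ensure_type_driver(SAMPLE)
print(updated)
```

Note that `configparser` discards comments when it writes a file back, so on a real system it is usually safer to make this one-line change in a text editor and keep a backup of the original.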

A screenshot represents the procedure to create a network.

Network creation dialog in Horizon

A screenshot of the OpenStack dashboard shows the overview of the ext-net network.

Properties of the chosen network in Horizon

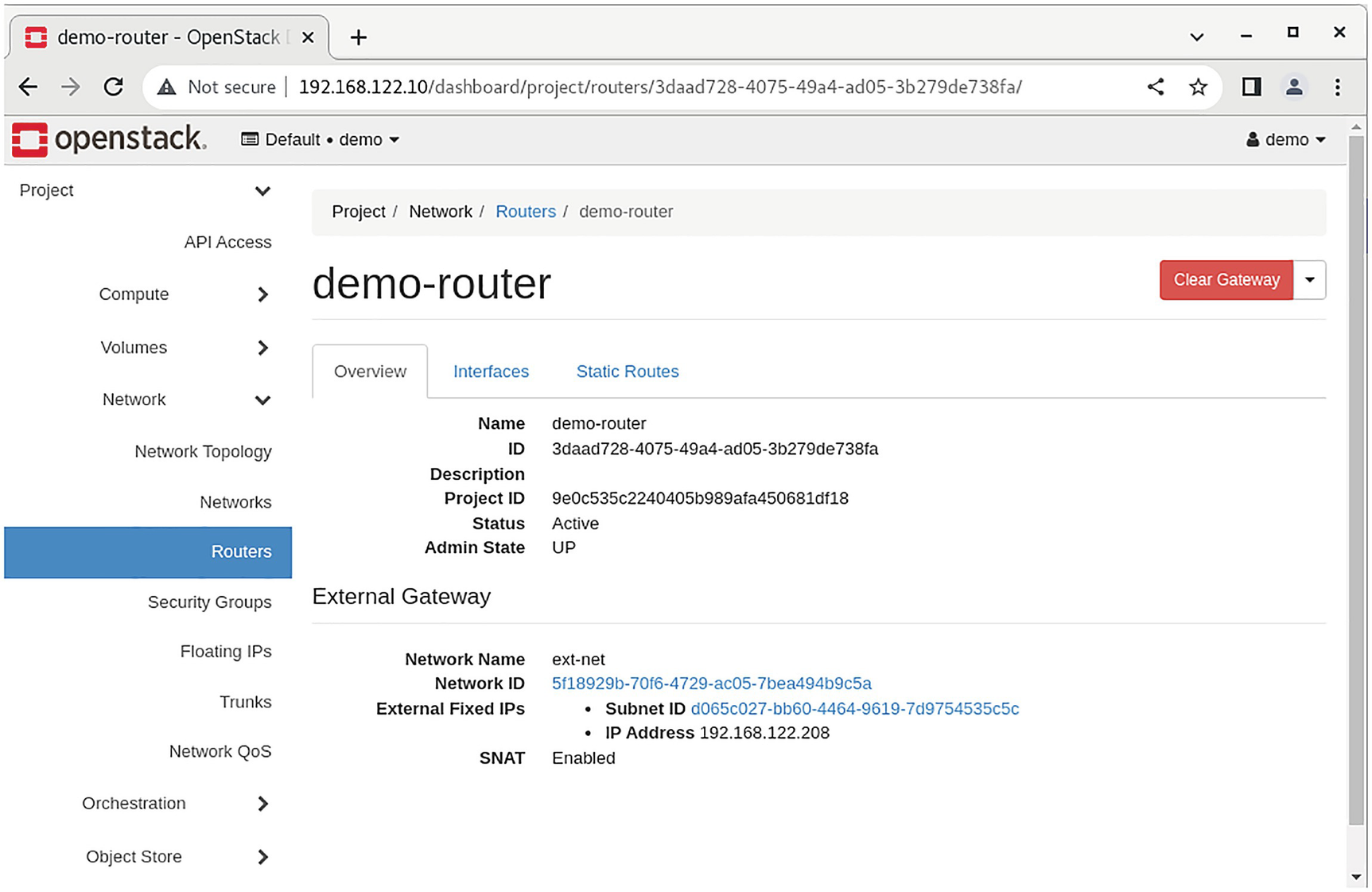

A screenshot of the OpenStack dashboard shows the overview of the demo-router.

Properties of a virtual router in Horizon

A screenshot of the OpenStack dashboard shows the network topology on the right.

Network Topology tab in Horizon

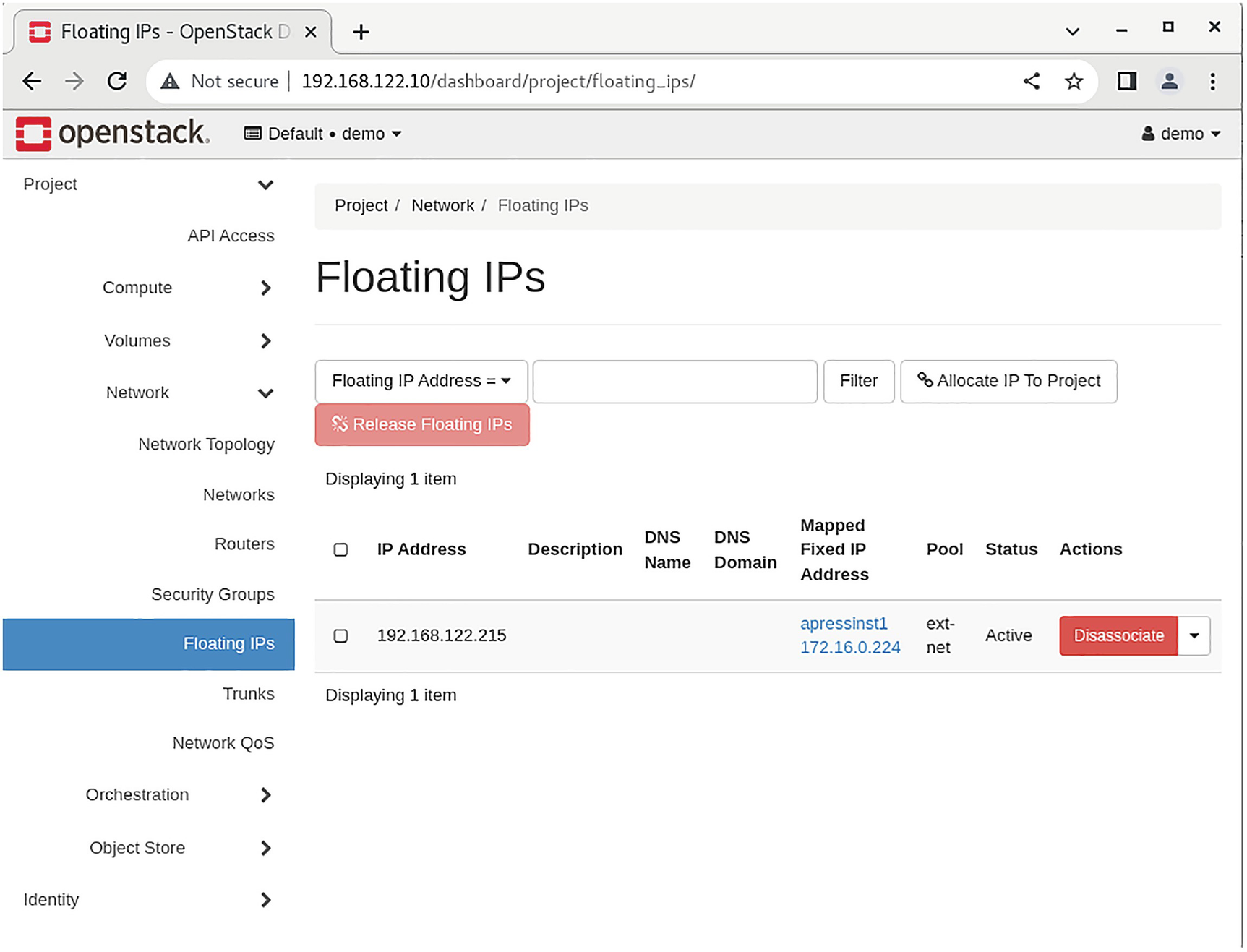

At this point, you have only one missing part. Your instances within the tenant network can connect to each other, but none of the instances can reach out to an external network. You need to add a floating IP from ext-net to the virtual machine.
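The role of a floating IP can be pictured as a one-to-one mapping between an address from the external network and an instance's private address. The following toy model is only a sketch of that idea: the `FloatingIPPool` class and all addresses are hypothetical, and it ignores details such as the gateway address of the external network.

```python
import ipaddress


class FloatingIPPool:
    """Toy model of an external network that hands out floating IPs."""

    def __init__(self, cidr):
        self._free = list(ipaddress.ip_network(cidr).hosts())
        self.associations = {}  # floating IP -> fixed (private) IP

    def allocate(self):
        """Reserve the next free address from the pool."""
        if not self._free:
            raise RuntimeError("no floating IPs left in the pool")
        return self._free.pop(0)

    def associate(self, floating_ip, fixed_ip):
        """Map a floating IP one-to-one onto an instance's fixed IP."""
        self.associations[floating_ip] = ipaddress.ip_address(fixed_ip)

    def translate_inbound(self, destination):
        """Return the fixed IP that inbound traffic is forwarded to, or None."""
        return self.associations.get(ipaddress.ip_address(destination))


# Invented address range standing in for ext-net.
pool = FloatingIPPool("203.0.113.0/28")
fip = pool.allocate()
pool.associate(fip, "10.0.0.5")
print(fip, "->", pool.translate_inbound(str(fip)))
```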

A screenshot of the OpenStack dashboard shows the floating IP address on the right.

Floating IPs tab in Horizon

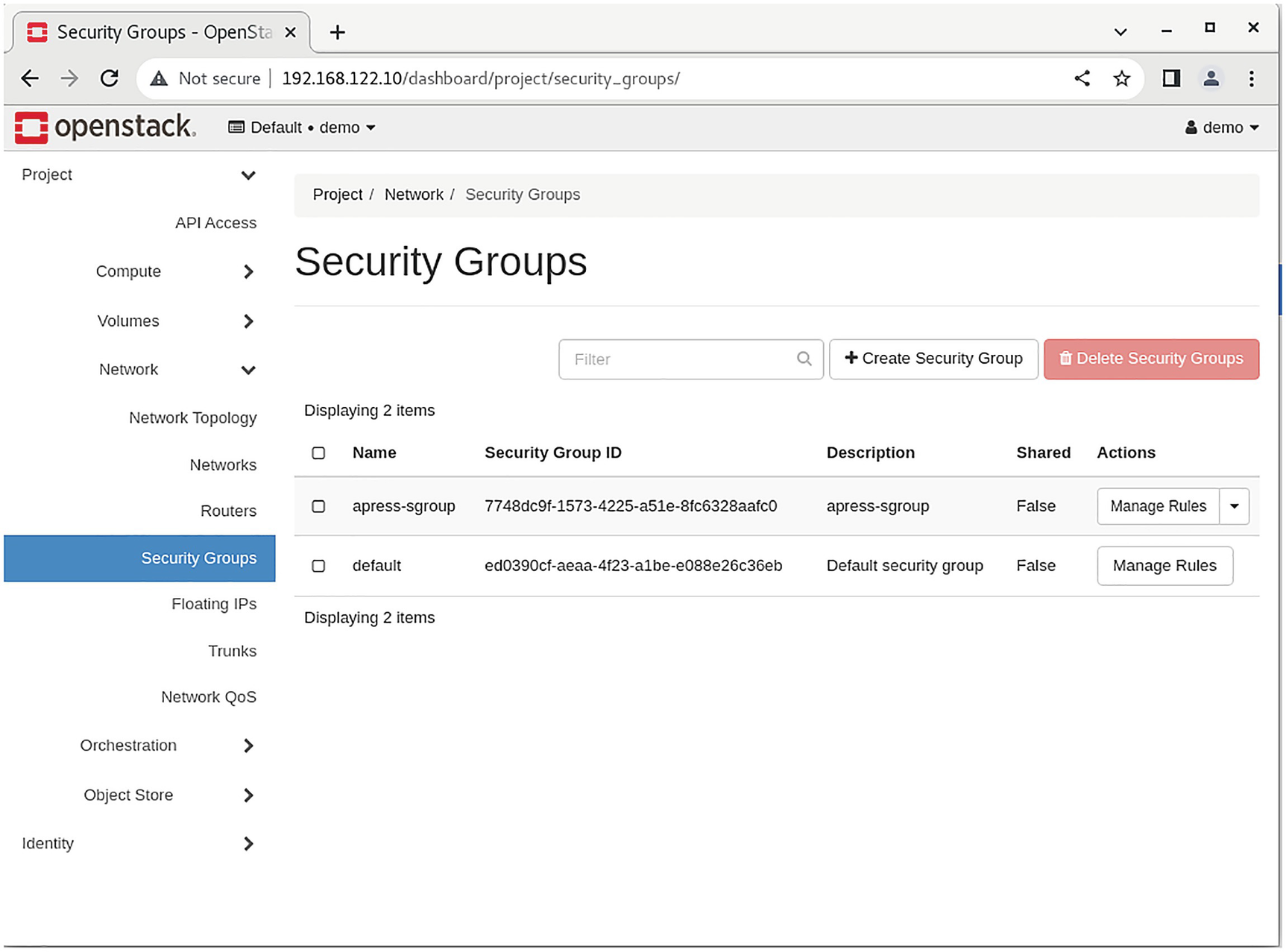

Managing Project Security Group Rules

A screenshot of the OpenStack dashboard shows the security groups on the right.

Security Groups tab in Horizon

Managing Quotas

A screenshot of the OpenStack dashboard shows the path to the network quotas.

Default network quotas

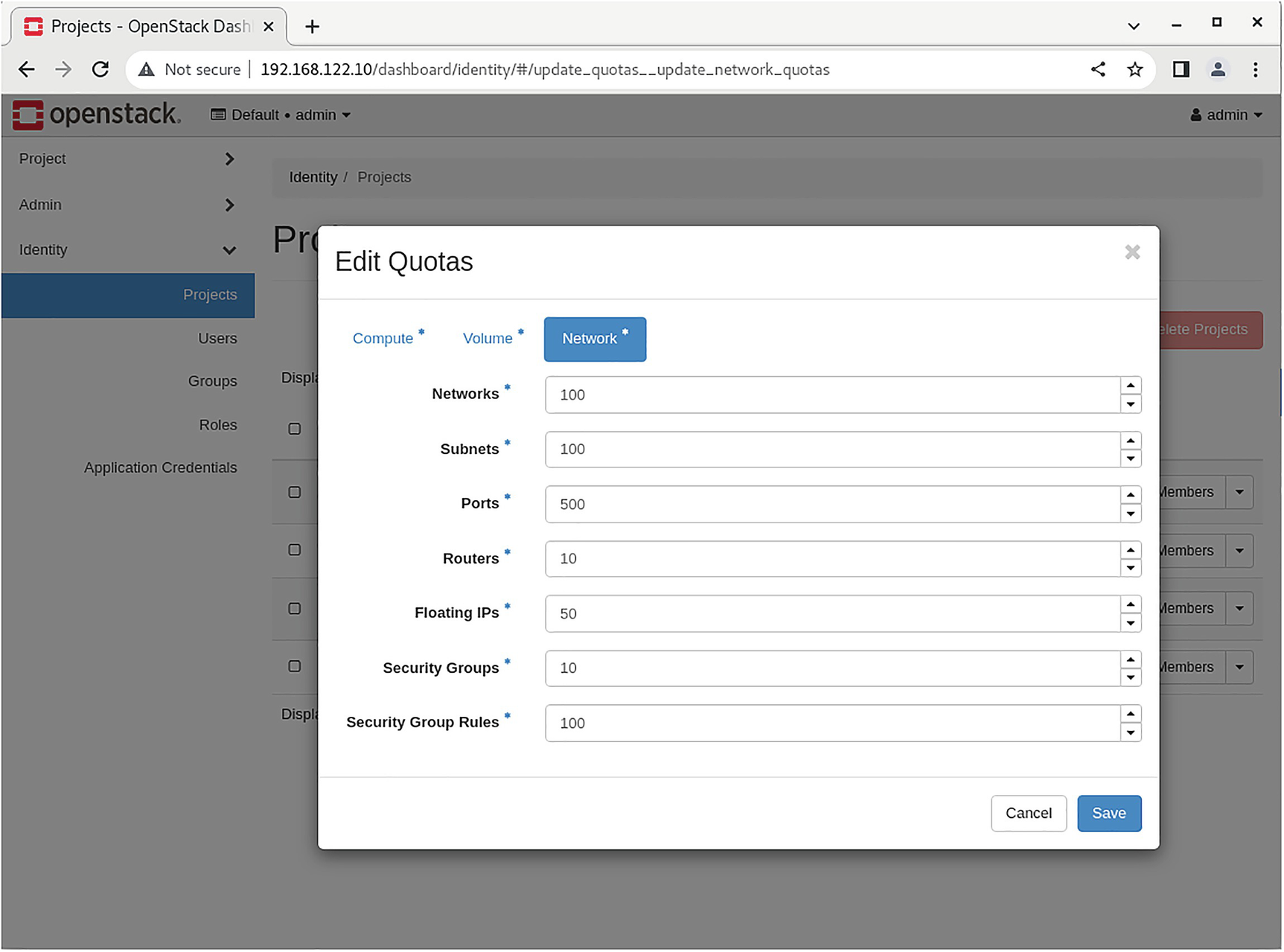

A screenshot of the OpenStack dashboard shows the Edit Quotas pop-up menu.

Checking quotas in Horizon

Verifying the Operation of the Network Service

Neutron consists of several components. Their configuration files were listed at the beginning of this chapter. The Neutron API service is bound to port 9696. The log file for the Neutron server is available at /var/log/neutron/server.log.
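One quick way to verify that something is listening on the API port is a plain TCP connection test. The sketch below uses only the Python standard library. The host name `localhost` is an assumption and should be replaced with the address of your control node. A successful connection only shows that the port is open, not that the API is healthy.

```python
import socket


def is_port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # "localhost" is an assumption; 9696 is the Neutron API port named in the text.
    print("Neutron API port reachable:", is_port_open("localhost", 9696))
```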

Summary

It is essential to study this chapter’s material to pass the exam. You may not need to dig into the differences between OVS and OVN, but you must know the practical aspects of using a network in OpenStack.

The next chapter covers OpenStack’s compute services.

Review Questions

1. Which service provides routing and NAT in OVS-enabled OpenStack?
   - A. neutron-server
   - B. neutron-openvswitch-agent
   - C. neutron-l3-agent
   - D. neutron-metadata-agent

2. Which checks the status of running Neutron agents?
   - A. neutron agent-list
   - B. openstack network agent show
   - C. openstack network agent list
   - D. neutron agents-list

3. Which is the Neutron API service config?
   - A. /etc/neutron/neutron.conf
   - B. /etc/neutron.conf
   - C. /etc/neutron/plugin.ini
   - D. /etc/neutron/api-server.conf

4. Which correctly adds a new rule to an existing security group?
   - A. openstack security group rule create --protocol tcp --dst-port 22 apress-sgroup
   - B. openstack sgroup rule create --protocol tcp --dst-port 22 apress-sgroup
   - C. openstack sgroup rule create apress-sgroup --protocol tcp --dst-port 22
   - D. openstack security-group rule create --protocol tcp --dst-port 22 apress-sgroup

5. Where is the Neutron API log file located?
   - A. /var/log/neutron/neutron.log
   - B. /var/log/neutron/server.log
   - C. /var/log/neutron/api.log
   - D. /var/log/neutron/api-server.log

Answers

1. C

2. C

3. A

4. A

5. B