Drools has historically been used, and will most likely continue to be used, to cover many different use cases. As a framework, it acts as a behavior injection component, so we can use it to inject all sorts of logic anywhere in our applications. We cannot cover every possible type of integration, so we will cover the most common cases here and discuss the natural evolution that Drools integration usually follows in diverse applications.

The most common first step for integrating Drools is embedding its dependencies and code inside our own application and using it as a library.

This is usually the first scenario because it is the quickest way to start using Drools: we just need to add the right dependencies and call the APIs directly wherever we need them. The following figure shows this first stage of integration:

First stage: Drools embedded inside our application

The first rule projects we defined in this book followed this structure. As you can see, Drools can interact with any and all layers of our application, depending on what we expect to accomplish with it:

- It could interact with the UI to provide complex form validations

- It could interact with data sources to load persisted data when a rule evaluation determines it is needed

- It could interact with outside services, to either load complex information into the rules or send messages to outside services about the outcome of the rules execution

During this first stage in the development of rule-based projects, all the components needed to run our rules are usually included in our application, including the rules. This means we will have to redeploy our application if we want to change the business rules running in it.

The first need that usually arises in these situations is to update the business logic inside the rules at a faster rate than the rest of the application. This leads us to upgrade our architecture by moving the business rules to an outside dependency, packaged as a KJAR. Components in our rule runtime can then dynamically load these rules from outside repositories, as the following diagram shows:

Second stage: Drools getting our rules from an external repository

In this structure, we moved the rules out of our application and take them from an external JAR instead. This change is not just a project rearrangement, because the JAR with all the rules is no longer a dependency at the moment we deploy our application. Instead, the Drools runtime reads the JAR directly from our Maven repository (local or remote) and loads the rules whenever it needs to. One way to do this is to let CDI inject our Kie-related objects with the appropriate version, using a @KReleaseId annotation:

    @Inject @KSession
    @KReleaseId(groupId = "org.drools.devguide",
                artifactId = "chapter-11-kjar",
                version = "0.1-SNAPSHOT")
    KieSession kSession;

Alternatively, we can directly build our Kie Container objects based on a specific version of our code. If done this way, we can use a KieScanner to let our runtime know it should monitor the repository for possible future changes, so that the runtime can change versions without even having to restart:

    KieServices ks = KieServices.Factory.get();
    // Build the container from the KJAR identified by these Maven coordinates
    KieContainer kContainer = ks.newKieContainer(
        ks.newReleaseId("org.drools.devguide",
            "chapter-11-kjar", "0.1-SNAPSHOT"));
    // Poll the Maven repository for newer versions every 10 seconds
    KieScanner kScanner = ks.newKieScanner(kContainer);
    kScanner.start(10_000);
    KieSession kSession = kContainer.newKieSession();

We should mention at this stage that nowhere else in the project have we added a direct dependency on the specific JAR from which we want our Kie Session to be built. Dependency resolution is managed entirely by the runtime, which is possible because we have org.kie:kie-ci as a dependency. You can see examples of these two cases in the chapter-11/chapter-11-ci project in the code bundle. Both examples are inside the KieCITest class.
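To picture what a scanner does on each polling pass, the following is a minimal, Drools-free sketch. It is not how KieScanner is implemented: `RuleRepository`, `HotSwappableRules`, and `scanNow` are hypothetical names, the "repository" is an in-memory map, and a rule is reduced to a simple predicate. It only illustrates the idea of checking for a newer version and swapping it in without restarting the application.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentSkipListMap;
import java.util.concurrent.atomic.AtomicReference;
import java.util.function.Predicate;

/** Hypothetical stand-in for a Maven repository: version number -> rule. */
final class RuleRepository {
    private final ConcurrentSkipListMap<Integer, Predicate<Integer>> versions =
            new ConcurrentSkipListMap<>();

    void publish(int version, Predicate<Integer> rule) {
        versions.put(version, rule);
    }

    /** Returns the highest published version, or null if nothing is published. */
    Map.Entry<Integer, Predicate<Integer>> latest() {
        return versions.lastEntry();
    }
}

/** Hypothetical stand-in for a container that a scanner keeps up to date. */
final class HotSwappableRules {
    private final RuleRepository repository;
    private final AtomicReference<Map.Entry<Integer, Predicate<Integer>>> active =
            new AtomicReference<>();

    HotSwappableRules(RuleRepository repository) {
        Map.Entry<Integer, Predicate<Integer>> initial = repository.latest();
        if (initial == null) {
            throw new IllegalStateException("No rule version has been published yet");
        }
        this.repository = repository;
        this.active.set(initial);
    }

    /** One polling pass: swap in a newer version if the repository has one. */
    boolean scanNow() {
        Map.Entry<Integer, Predicate<Integer>> newest = repository.latest();
        if (newest.getKey() > active.get().getKey()) {
            active.set(newest);
            return true;
        }
        return false;
    }

    int activeVersion() {
        return active.get().getKey();
    }

    /** Evaluates the currently active rule against an order total. */
    boolean qualifiesForDiscount(int orderTotal) {
        return active.get().getValue().test(orderTotal);
    }
}

final class HotSwapDemo {
    public static void main(String[] args) {
        RuleRepository repository = new RuleRepository();
        repository.publish(1, total -> total > 100);
        HotSwappableRules rules = new HotSwappableRules(repository);
        System.out.println("v" + rules.activeVersion() + ": " + rules.qualifiesForDiscount(80));

        // A new rule version is published while the application keeps running.
        repository.publish(2, total -> total > 50);
        rules.scanNow();
        System.out.println("v" + rules.activeVersion() + ": " + rules.qualifiesForDiscount(80));
    }
}
```

In a real setup the polling pass would run on a timer, which is the role the interval passed to the scanner's start method plays in the snippet above.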

Note: In the previous code snippet, we use a specific Maven release ID. However, just like in Maven, we can use ranges to define the version of the release we want to work with. Also, using SNAPSHOT and LATEST lets the Maven components work out the right version to obtain, instead of us pinning a single specific version.
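As a reminder of how Maven range notation reads, here is a small, simplified sketch of a range check. It is not Maven's or Drools' resolution code: `SimpleVersionRange` is a hypothetical class that only understands two-bound ranges over purely numeric dotted versions, so qualifiers such as SNAPSHOT and keywords such as LATEST are deliberately out of scope. Square brackets mark an inclusive bound, parentheses an exclusive one, and an empty bound means "unbounded".

```java
import java.util.Arrays;

/** Hypothetical, simplified range check for purely numeric dotted versions. */
final class SimpleVersionRange {
    private final int[] lower;            // null means no lower bound
    private final int[] upper;            // null means no upper bound
    private final boolean lowerInclusive; // '[' is inclusive, '(' is exclusive
    private final boolean upperInclusive; // ']' is inclusive, ')' is exclusive

    SimpleVersionRange(String spec) {
        String s = spec.trim();
        String[] bounds = s.substring(1, s.length() - 1).split(",", -1);
        if (bounds.length != 2) {
            throw new IllegalArgumentException("Expected two comma-separated bounds: " + spec);
        }
        lowerInclusive = s.charAt(0) == '[';
        upperInclusive = s.charAt(s.length() - 1) == ']';
        lower = bounds[0].trim().isEmpty() ? null : parse(bounds[0]);
        upper = bounds[1].trim().isEmpty() ? null : parse(bounds[1]);
    }

    /** Splits "1.2.3" into {1, 2, 3}; non-numeric parts are not supported. */
    static int[] parse(String version) {
        return Arrays.stream(version.trim().split("\\."))
                .mapToInt(Integer::parseInt)
                .toArray();
    }

    /** Compares segment by segment, treating missing segments as 0. */
    static int compare(int[] a, int[] b) {
        for (int i = 0; i < Math.max(a.length, b.length); i++) {
            int x = i < a.length ? a[i] : 0;
            int y = i < b.length ? b[i] : 0;
            if (x != y) {
                return Integer.compare(x, y);
            }
        }
        return 0;
    }

    boolean contains(String version) {
        int[] v = parse(version);
        if (lower != null) {
            int c = compare(v, lower);
            if (c < 0 || (c == 0 && !lowerInclusive)) {
                return false;
            }
        }
        if (upper != null) {
            int c = compare(v, upper);
            if (c > 0 || (c == 0 && !upperInclusive)) {
                return false;
            }
        }
        return true;
    }
}

final class VersionRangeDemo {
    public static void main(String[] args) {
        SimpleVersionRange range = new SimpleVersionRange("[0.1,1.0)");
        System.out.println(range.contains("0.5"));
        System.out.println(range.contains("1.0"));
    }
}
```

Read this way, a range such as `[0.1,1.0)` accepts 0.1 and anything after it up to, but not including, 1.0, while `[0.1,)` accepts 0.1 and every later version.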

This is a necessary step toward having an independent development life cycle for our business rules. This independence allows the rules to be developed, deployed, and managed as many times as needed without having to redeploy our applications.

The main disadvantage of having Drools embedded in our application at this stage is the number of dependencies needed to do all this. We started with only a few DRL files and about a dozen lightweight dependencies on our classpath, but by now our runtime directly includes several other dependencies that we might prefer to keep out of our classpath. This is the point where we start looking at moving our rule runtime into external components, which we will discuss in the next subsection.

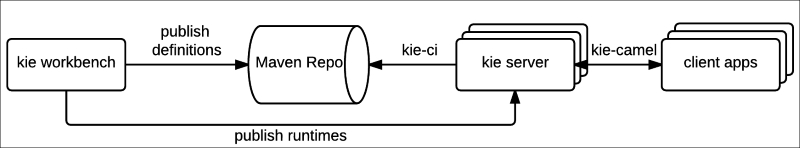

Once our business rule requirements start growing (dynamic rule reloading, more and more calls, and interactions with external systems), we reach the point where multiple applications would each need to replicate a lot of infrastructure in their own runtimes to get the same behavior for what could be common business logic. The natural transition at this point is to create knowledge-based services outside our application. Managing independent rule development life cycles becomes easier, as does replicating the service environment to meet higher demand. The following diagram shows a common way to design such services:

Third stage: Drools as a Service

In the previous diagram, we can notice two important aspects:

- As many client applications as desired can access the Drools runtime without adding much complexity to their own technology stack, because that complexity sits entirely on the Drools Service side.

- All the rules are stored in a Maven repository. This allows all environments that share business logic to work with the same business rules, and to receive the same version of that knowledge at the same time whenever the rules are modified.
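The first point can be sketched with nothing but the JDK. The example below is an illustration under loud assumptions, not a Drools service: `DiscountRuleService`, `DiscountClient`, the `/discount` endpoint, and the discount logic are all hypothetical, the HTTP server is the JDK's built-in `com.sun.net.httpserver.HttpServer`, and a one-line condition stands in for a real rule session. It requires Java 11 or later. What it shows is the boundary: clients only know a URL, while the business logic lives in one place.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.net.InetSocketAddress;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.charset.StandardCharsets;

/** Hypothetical rule service: the only component that knows the business logic. */
final class DiscountRuleService {
    private final HttpServer server;

    DiscountRuleService() {
        try {
            // Port 0 asks the operating system for any free port.
            server = HttpServer.create(new InetSocketAddress("localhost", 0), 0);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        server.createContext("/discount", exchange -> {
            // Expects a query string of the form "total=120".
            String query = exchange.getRequestURI().getQuery();
            int total = Integer.parseInt(query.substring("total=".length()));
            // Stand-in for inserting facts and firing rules in a real session.
            int discountPercent = total > 100 ? 10 : 0;
            byte[] body = Integer.toString(discountPercent).getBytes(StandardCharsets.UTF_8);
            exchange.sendResponseHeaders(200, body.length);
            try (OutputStream out = exchange.getResponseBody()) {
                out.write(body);
            }
        });
        server.start();
    }

    int port() {
        return server.getAddress().getPort();
    }

    void stop() {
        server.stop(0);
    }
}

/** Hypothetical client: it needs an HTTP client, but no rule engine dependencies. */
final class DiscountClient {
    private final HttpClient http = HttpClient.newHttpClient();
    private final String endpoint;

    DiscountClient(int port) {
        this.endpoint = "http://localhost:" + port + "/discount";
    }

    int discountFor(int total) {
        HttpRequest request =
                HttpRequest.newBuilder(URI.create(endpoint + "?total=" + total)).build();
        try {
            String body = http.send(request, HttpResponse.BodyHandlers.ofString()).body();
            return Integer.parseInt(body);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("Interrupted while calling the rule service", e);
        }
    }
}

final class RuleServiceDemo {
    public static void main(String[] args) {
        DiscountRuleService service = new DiscountRuleService();
        try {
            // Two independent "applications" share the same rule service.
            DiscountClient webShop = new DiscountClient(service.port());
            DiscountClient backOffice = new DiscountClient(service.port());
            System.out.println("Web shop discount: " + webShop.discountFor(120));
            System.out.println("Back office discount: " + backOffice.discountFor(80));
        } finally {
            service.stop();
        }
    }
}
```

Changing the discount condition here means changing only the service, which mirrors the reason for keeping the rule runtime and its dependencies out of every client application.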

Creating this sort of component makes the most sense when we want to add several custom configurations to our Drools runtime (for example, accessing external services in a legacy way) or when we want to provide custom responses to outside clients. We can use common integration tools, such as the Spring Framework (http://projects.spring.io/spring-framework/) and Apache Camel (http://camel.apache.org/), to integrate other components and environments with our Drools runtime. We will see how to configure these elements in later subsections.

However, when we only need to use the Drools runtime internally (where the specific structure of the response can be adjusted later), and the configuration is standard in terms of what Drools expects in a kmodule.xml, we might use a simpler approach. Drools provides a module called kie-server, which can be used to set up similar environments that are only responsible for running Drools rules and processes, taking the KJAR from outside sources, as the following diagram shows:

We will see how the kie-server is configured in a later section.

As you can see, there are multiple ways of integrating Drools, depending on our architecture, design, and performance-oriented decisions. Let's discuss some of the off-the-shelf integration tools available to make Drools interact with the rest of our components and applications.