Chapter 12. Host, Storage, Network, and Application Integration

This chapter covers the following topics:

Adapt Data Flow Security to Meet Changing Business Needs: This section covers issues affecting data flow security.

Standards: This section describes open standards, adherence to standards, competing standards, issues with lack of standards, and de facto standards.

Interoperability Issues: This section covers legacy systems and software/current systems, application requirements, software types (in-house developed, commercial, tailored commercial, open source), standard data formats, and protocols and APIs.

Resilience Issues: Topics include use of heterogeneous components, course of action automation/orchestration, distribution of critical assets, persistence and non-persistence of data, redundancy/high availability, and assumed likelihood of attack.

Data Security Considerations: This section describes data remnants, data aggregation, data isolation, data ownership, data sovereignty, and data volume.

Resources Provisioning and Deprovisioning: This section discusses users, servers, virtual devices, applications, and data remnants.

Design Considerations During Mergers, Acquisitions and Demergers/Divestitures: This section covers security issues that accompany mergers, acquisitions, and demergers/divestitures.

Network Secure Segmentation and Delegation: This section discusses the value and dangers of secure segmentation and delegation.

Logical Deployment Diagram and Corresponding Physical Deployment Diagram of All Relevant Devices: This section covers the use of logical and physical diagrams.

Security and Privacy Considerations of Storage Integration: This section discusses security issues related to the integration of storage.

Security Implications of Integrating Enterprise Applications: Applications include CRM, ERP, CMDB, CMS, integration enablers, directory services, DNS, SOA, and ESB.

This chapter covers CAS-003 objective 4.1.

Organizations must securely integrate hosts, storage, networks, and applications. It is a security practitioner’s responsibility to ensure that the appropriate security controls are implemented and tested. But this isn’t the only step a security practitioner must take. Security practitioners must also:

Secure data flows to meet changing business needs.

Understand standards.

Understand interoperability issues.

Use techniques to increase resilience.

Know how to segment and delegate a secure network.

Analyze logical and physical deployment diagrams of all relevant devices.

Design a secure infrastructure.

Integrate secure storage solutions within the enterprise.

Deploy enterprise application integration enablers.

All these points are discussed in detail in this chapter.

Adapt Data Flow Security to Meet Changing Business Needs

Business needs of an organization may change and require that security devices or controls be deployed in a different manner to protect data flow. As a security practitioner, you should be able to analyze business changes, look at how they affect security, and then deploy the appropriate controls.

To protect data during transmission, security practitioners should identify confidential and private information. Once this data has been properly identified, the following analysis steps should occur:

Step 1. Determine which applications and services access the information.

Step 2. Document where the information is stored.

Step 3. Document which security controls protect the stored information.

Step 4. Determine how the information is transmitted.

Step 5. Analyze whether authentication is used when accessing the information.

If it is, determine whether the authentication information is securely transmitted.

If it is not, determine whether authentication can be used.

Step 6. Analyze enterprise password policies, including password length, password complexity, and password expiration.

Step 7. Determine whether encryption is used to transmit data.

If it is, ensure that the level of encryption is appropriate and that the encryption algorithm is adequate.

If it is not, determine whether encryption can be used.

Step 8. Ensure that the encryption keys are protected.
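The analysis steps above can be captured as a simple checklist per data flow. The following is a minimal sketch, not a prescribed tool: the `DataFlow` class, its field names, and the sample values are all hypothetical and exist only to show how the answers to Steps 2, 5, 7, and 8 can be turned into a list of follow-up findings.

```python
from dataclasses import dataclass


@dataclass
class DataFlow:
    """One flow of confidential data; all field names are illustrative."""
    name: str
    stored_at: str                 # Step 2: where the information is stored
    authenticated: bool            # Step 5: is authentication used?
    credentials_encrypted: bool    # Step 5: is authentication data sent securely?
    encrypted_in_transit: bool     # Step 7: is the data encrypted in transit?
    keys_protected: bool           # Step 8: are the encryption keys protected?


def find_gaps(flow: DataFlow) -> list[str]:
    """Return the findings that need follow-up for a single flow."""
    gaps = []
    if not flow.authenticated:
        gaps.append("no authentication; determine whether it can be used")
    elif not flow.credentials_encrypted:
        gaps.append("authentication information is not securely transmitted")
    if not flow.encrypted_in_transit:
        gaps.append("no encryption in transit; determine whether it can be used")
    elif not flow.keys_protected:
        gaps.append("encryption keys are not protected")
    return gaps


# Hypothetical flow: authenticated and encrypted, but credentials sent in the clear.
payroll = DataFlow("payroll export", "hr-db01", True, False, True, True)
for gap in find_gaps(payroll):
    print(f"{payroll.name}: {gap}")
```

Recording each flow this way makes it easy to rerun the analysis when a business change adds or alters a flow.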

Security practitioners should adhere to the defense-in-depth principle to protect the confidentiality, integrity, and availability (CIA) of data across its entire life cycle. Applications and services should be analyzed to determine whether more secure alternatives can be used or whether inadequate security controls are deployed. Data at rest may require encryption to provide full protection and appropriate access control lists (ACLs) to ensure that only authorized users have access. For data transmission, secure protocols and encryption should be employed to prevent unauthorized users from being able to intercept and read data. The most secure level of authentication possible should be used in the enterprise. Appropriate password and account policies can protect against possible password attacks.
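As one illustration of employing secure protocols for data transmission, the sketch below configures a client-side TLS context with Python's standard `ssl` module. The choice of TLS 1.2 as the minimum version is an assumption made for the example; the appropriate floor depends on the organization's own policy.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a TLS client context that rejects older protocol versions."""
    # The default context already verifies server certificates and host names.
    ctx = ssl.create_default_context()
    # Assumed policy for this example: refuse anything older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


ctx = make_client_context()
print(ctx.minimum_version, ctx.verify_mode, ctx.check_hostname)
```

A context built this way can be passed to standard library clients that accept an `ssl.SSLContext`, so the protocol policy is defined in one place.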

Finally, security practitioners should ensure that confidential and private information is isolated from other information, including locating the information on separate physical servers and isolating data using virtual LANs (VLANs). Disable all unnecessary services, protocols, and accounts on all devices. Make sure that all firmware, operating systems, and applications are kept up-to-date, based on vendor recommendations and releases.

When new technologies are deployed based on the changing business needs of the organization, security practitioners should be diligent to ensure that they understand all the security implications and issues with the new technology. Deploying a new technology before proper security analysis has occurred can result in security breaches that affect more than just the newly deployed technology. Remember that changes are inevitable! How you analyze and plan for these changes is what will set you apart from other security professionals.

Standards

Standards describe how policies will be implemented within an organization. They are actions or rules that are tactical in nature, meaning they provide the steps necessary to achieve security. Just like policies, standards should be regularly reviewed and revised. Standards are usually established by a governing organization, such as the National Institute of Standards and Technology (NIST).

Because organizations need guidance on protecting their assets, security professionals must be familiar with the standards that have been established. Many standards organizations have been formed, including NIST, the U.S. Department of Defense (DoD), and the International Organization for Standardization (ISO).

The U.S. DoD Instruction 8510.01 establishes a certification and accreditation process for DoD information systems. It can be found at http://www.esd.whs.mil/Portals/54/Documents/DD/issuances/dodi/851001_2014.pdf.

The ISO works with the International Electrotechnical Commission (IEC) to establish many standards regarding information security. The ISO/IEC standards that security professionals need to understand are covered in Chapter 1, “Business and Industry Influences and Associated Security Risks.”

Security professionals may also need to research other standards, including standards from the European Union Agency for Network and Information Security (ENISA), European Union (EU), and U.S. National Security Agency (NSA). It is important that an organization research the many standards available and apply the most beneficial guidelines based on the organization’s needs.

The following sections briefly discuss open standards, adherence to standards, competing standards, lack of standards, and de facto standards.

Open Standards

Open standards are standards that are open to the general public. The general public can provide feedback on the standards and may use them without purchasing any rights to the standards or organizational membership. It is important that subject matter and industry experts help guide the development and maintenance of these standards.

Adherence to Standards

Organizations may opt to adhere entirely to both open standards and standards managed by a standards organization. Some organizations may even choose to adopt selected parts of standards, depending on the industry. Remember that an organization should fully review any standard and analyze how its adoption will affect the organization.

Legal implications can arise if an organization ignores well-known standards. Neglecting to use standards to guide your organization’s security strategy, especially if others in your industry do, can significantly impact your organization’s reputation and standing.

Competing Standards

Competing standards most often come into effect between competing vendors. For example, Microsoft often establishes its own standards for authentication. Many times, its standards are based on an industry standard with slight modifications to suit Microsoft’s needs. In contrast, Linux may implement standards, but because it is an open source operating system, changes may have been made along the way that may not fully align with the standards your organization needs to follow. Always compare competing standards to determine which standard best suits your organization’s needs.

Lack of Standards

In some new technology areas, standards are not formulated yet. Do not let a lack of formal standards prevent you from providing the best security controls for your organization. If you can find similar technology that has formal adopted standards, test the viability of those standards for your solution. In addition, you may want to solicit input from subject matter experts (SMEs). A lack of standards does not excuse your organization from taking every precaution necessary to protect confidential and private data.

De Facto Standards

De facto standards are standards that are widely accepted but not formally adopted. De jure standards are standards that are based on laws or regulations and are adopted by international standards organizations. De jure standards should take precedence over de facto standards. If possible, your organization should adopt security policies that implement both de facto and de jure standards.

Let’s look at an example. Suppose that a chief information officer’s (CIO’s) main objective is to deploy a system that supports the 802.11r standard, which will help wireless VoIP devices in moving vehicles. However, the 802.11r standard has not been formally ratified. The wireless vendor’s products do support 802.11r as it is currently defined. The administrators have tested the product and do not see any security or compatibility issues; however, they are concerned that the standard is not yet final. The best way to proceed would be to purchase the equipment now, as long as its firmware will be upgradable to the final 802.11r standard.

Interoperability Issues

When integrating solutions into a secure enterprise architecture, security practitioners must ensure that they understand all the interoperability issues that can occur with legacy systems/current systems, application requirements, and in-house versus commercial versus tailored commercial applications.

Legacy Systems and Software/Current Systems

Legacy systems are old technologies, computers, or applications that are considered outdated but provide a critical function in the enterprise. Often the vendor no longer supports the legacy systems, meaning that no future updates to the technology, computer, or application will be provided. It is always best to replace these systems as soon as possible because of the security issues they introduce. However, sometimes these systems must be retained because of the critical function they provide.

Some guidelines when retaining legacy systems include:

If possible, implement the legacy system in a protected network or demilitarized zone (DMZ).

Limit physical access to the legacy system to administrators.

If possible, deploy the legacy application on a virtual computer.

Employ ACLs to protect the data on the system.

Deploy the highest-level authentication and encryption mechanisms possible.

Let’s look at an example. Suppose an organization has a legacy customer relationship application that it needs to retain. The application requires the Windows 2000 operating system (OS), and the vendor no longer supports the application. The organization could deploy a Windows 2000 virtual machine (VM) and move the application to that VM. Users needing access to the application could use Remote Desktop to access the VM and the application.

Now let’s look at a more complex example. Say that an administrator replaces servers whenever budget money becomes available. Over the past several years, the company has been using 20 servers and 50 desktops from five different vendors. The management challenges and risks associated with this style of technology life cycle management include an increased failure rate (that is, a decreased mean time to failure) of legacy servers, OS variances, patch availability, and the ability to restore dissimilar hardware.

Application Requirements

Any application that is installed may require certain hardware, software, or other criteria that the organization does not use. However, with recent advances in virtual technology, the organization can implement a virtual machine that fulfills the criteria for the application through virtualization. For example, an application may require a certain screen resolution or graphics driver that is not available on any physical computers in the enterprise. In this case, the organization could deploy a virtual machine that includes the appropriate screen resolution or driver so that the application can be successfully deployed.

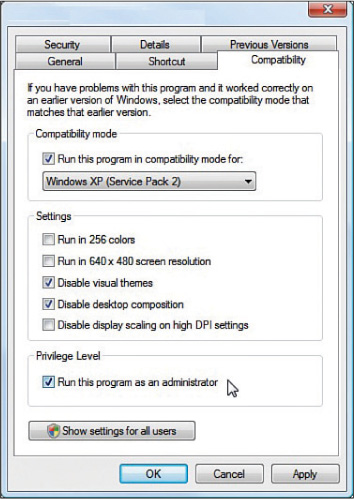

Keep in mind that some applications may require older versions of operating systems that are not available. In recent versions of Windows, you can choose to deploy an application in compatibility mode by using the Compatibility tab of the application’s executable file, as shown in Figure 12-1.

Software Types

Software can be of several types, and each type has advantages and disadvantages. This section looks at the major types of software.

In-house Developed

Applications can be developed in-house or purchased commercially. In-house developed applications can be completely customized to the organization, provided that developers have the necessary skills, budget, and time. Commercial applications may provide customization options to the organization. However, usually the customization is limited.

Organizations should fully research their options when a new application is needed. Once an organization has documented its needs, it can compare them to all the commercially available applications to see if any of them will work. It is usually more economical to purchase a commercial solution than to develop an in-house solution. However, each organization needs to fully assess the commercial application costs versus in-house development costs.

Commercial

Commercial software is well known and widely available; it is commonly referred to as commercial off-the-shelf (COTS) software. Information concerning vulnerabilities and viable attack patterns is typically shared within the IT community. This means that using commercial software can introduce new security risks in the enterprise. Also, it is difficult to verify the security of commercial software code because the source is not available to customers in most cases.

Tailored Commercial

Tailored commercial (or commercial customized) software is a new breed of software that comes in modules, the combination of which can be used to arrive at exactly the components required by the organization. It allows for customization by the organization.

Open Source

Open source software is free but comes with no guarantees and little support other than the help of the user community. It requires considerable knowledge and skill to apply to a specific enterprise but also affords the most flexibility.

Standard Data Formats

When integrating diverse applications in an enterprise, problems can arise with respect to data formats. Each application will have its own set of data formats specific to that software, as indicated by the filename extension. One challenge is securing these data types. Some methods of encryption work on some types but not others. One new development in this area is the Trusted Data Format (TDF), developed by Virtru.

TDF is essentially a protective wrapper that contains content. Whether you’re sending an email message, an Excel spreadsheet, or a cat photo, your files are encrypted and “wrapped” into a TDF file, which communicates with Virtru-enabled key stores to maintain access privileges.

Protocols and APIs

The use of diverse protocols and application program interfaces (APIs) is another challenge to interoperability. With networking, storage, and authentication protocols, support and understanding of the protocols in use is required of both endpoints. It should be a goal to reduce the number of protocols in use in order to reduce the attack surface. Each protocol has its own history of weaknesses to mitigate.

With respect to APIs, a host of approaches, including Simple Object Access Protocol (SOAP), Representational State Transfer (REST), and JavaScript Object Notation (JSON), are available, and many enterprises find themselves using all of them. It should be a goal to reduce the number of APIs in use in order to reduce the attack surface.

Resilience Issues

When integrating solutions into a secure enterprise architecture, security practitioners must ensure that the result is an environment that can survive both in the moment and for a prolonged period of time. Mission-critical workflows and the systems that support the services and applications required to maintain business continuity must be resilient. This section looks at issues impacting availability.

Use of Heterogeneous Components

Heterogeneous components refers to systems that use more than one kind of component. Heterogeneous components can be within a system or can be different physical systems, such as when both Windows and Linux systems must communicate to complete an organizational process. Probably the best example of heterogeneous components is a data warehouse.

A data warehouse is a repository of information from heterogeneous databases. It allows data from multiple sources not only to be stored in one place but also to be organized in such a way that redundancy of data is reduced (called data normalization). More sophisticated data mining tools can then be used to manipulate the data to discover relationships that may not have been apparent before. Along with the benefits they provide, data warehouses also present additional security challenges.

Another term is heterogeneous computing, which refers to systems that use more than one kind of processor or core. Such systems leverage the individual strengths of the different components by assigning each a task at which the processor excels. While this does typically boost performance, it makes the predictability of performance somewhat more difficult. As the ability to predict performance under various workloads is key to capacity planning, this can impact resilience in a negative way or may lead to overcapacity.

Course of Action Automation/Orchestration

While automation of tasks has been employed (at least through scripts) for some time, orchestration takes this a step further to automate entire workflows. One of the benefits of orchestration is the ability to build in logic that gives the systems supporting the workflow the ability to react to changes in the environment. This can be a key aid in supporting resilience of systems. Assets can be adjusted in real time to address changing workflows. For example, VMware vRealize is an orchestration product for the virtual environment that goes a step further and uses past data to predict workloads.

Distribution of Critical Assets

One strategy that can help support resiliency is to ensure that critical assets are not all located in the same physical location. Colocating critical assets leaves your organization open to the kind of nightmare that occurred in 2017 at the Atlanta airport. When a fire took out the main and backup power systems (which were located together), the busiest airport in the world went dark for over 12 hours. Distribution of critical assets certainly enhances resilience.

Persistence and Non-persistence of Data

Persistent data is data that is available even after you fully close and restart an app or a device. Conversely, non-persistent data is gone when an unexpected shutdown occurs. One strategy that has been employed to provide protection to non-persistent data is the hibernation process that a laptop undergoes when the battery runs down. Another example is system images that from time to time “save” all changes to a snapshot. Finally, the journaling process used by database systems records changes that are scheduled to be made to the database (recorded as transactions) and saves them to disk before they are even made. After a loss of power, the transaction log is read to apply the unapplied transactions. Security professionals must explore and utilize these various techniques to provide the highest possible level of protection for non-persistent data when integrating new systems.
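The journaling technique described above can be illustrated with a toy key-value store. This is a minimal sketch under simplifying assumptions: the `JournaledStore` class and the one-JSON-entry-per-line journal format are hypothetical, and real database engines add checkpoints, checksums, and log truncation that are omitted here.

```python
import json
import os
import tempfile


class JournaledStore:
    """Toy key-value store that logs each change to disk before applying it."""

    def __init__(self, journal_path: str):
        self.journal_path = journal_path
        self.data: dict[str, str] = {}
        self._replay()

    def _replay(self) -> None:
        # After a restart, re-apply every transaction recorded in the journal.
        if not os.path.exists(self.journal_path):
            return
        with open(self.journal_path, encoding="utf-8") as journal:
            for line in journal:
                entry = json.loads(line)
                self.data[entry["key"]] = entry["value"]

    def put(self, key: str, value: str) -> None:
        # 1. Record the intended change and force it to disk.
        with open(self.journal_path, "a", encoding="utf-8") as journal:
            journal.write(json.dumps({"key": key, "value": value}) + "\n")
            journal.flush()
            os.fsync(journal.fileno())
        # 2. Only then apply it to the in-memory (non-persistent) state.
        self.data[key] = value


path = os.path.join(tempfile.mkdtemp(), "journal.log")
store = JournaledStore(path)
store.put("balance", "100")
del store                          # simulate losing the in-memory state
recovered = JournaledStore(path)   # replaying the journal restores it
print(recovered.data)
```

Because the journal entry reaches disk before the in-memory change is made, a power loss between the two steps loses nothing that the replay cannot reconstruct.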

Redundancy/High Availability

Fault tolerance allows a system to continue operating properly in the event that components within the system fail. For example, providing fault tolerance for a hard drive system involves using fault-tolerant drives and fault-tolerant drive adapters. However, the benefit of any fault tolerance must be weighed against the cost of the redundant device or hardware. If security capabilities of information systems are not fault tolerant, attackers may be able to access systems if the security mechanisms fail. Organizations should weigh the cost of deploying a fault-tolerant system against the cost of a successful attack on that system. It may not be vital to provide a fault-tolerant security mechanism to protect public data, but it is very important to provide a fault-tolerant security mechanism to protect confidential data.

Availability means ensuring that data is accessible when and where it is needed. Only individuals who need access to data should be allowed access to that data. The two main instances in which availability is affected are (1) when attacks are carried out that disable or cripple a system and (2) when service loss occurs during and after disasters. Each system should be assessed in terms of its criticality to organizational operations. Controls should be implemented based on each system’s criticality level.

Availability is the opposite of destruction or isolation. Fault-tolerant technologies, such as RAID or redundant sites, are examples of controls that help improve availability.

Probably the most obvious influence on the resiliency of a new solution or system is the extent to which the system exhibits high availability, usually provided through redundancy of internal components, network connections, or data sources. Taken to the next level, some systems may need to be deployed in clusters in order to provide the ability to overcome the loss of an entire system. All new integrations should consider high-availability solutions and redundant components when indicated by the criticality of the operation the system supports.
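To see why redundancy raises availability, consider a rough calculation. The sketch below uses the common textbook approximation that a set of redundant components is unavailable only when every copy is down at once, which assumes that failures are independent. That assumption, the function name, and the 99 percent figure are illustrative and are not taken from this chapter.

```python
def combined_availability(component_availability: float, copies: int) -> float:
    """Availability of `copies` redundant components, any one of which suffices.

    Assumes failures are independent, which real deployments only approximate
    (shared power, shared software bugs, and so on break the assumption).
    """
    if not 0.0 <= component_availability <= 1.0 or copies < 1:
        raise ValueError("availability must be in [0, 1] and copies >= 1")
    # The set is down only if every copy is down at the same time.
    return 1.0 - (1.0 - component_availability) ** copies


for copies in (1, 2, 3):
    print(copies, round(combined_availability(0.99, copies), 6))
```

Under these assumptions, a second copy of a 99 percent available component lifts the combined figure to 99.99 percent, which is why the cost of each additional copy should be judged against the criticality of the system it protects.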

Assumed Likelihood of Attack

All new integrations should undergo risk analysis to determine the likelihood and impact of various vulnerabilities and threats. When attacks have been anticipated and controls have been applied, attacks can be avoided and their impact lessened. It is also critical to assess the extent to which interactions between new and older systems may create new vulnerabilities.

Data Security Considerations

The security of the data processed by any new system is perhaps one of the most important considerations during an integration. Data security must be considered during every stage of the data life cycle. This section discusses issues surrounding data security during integration.

Data Remnants

Data remnants are data that is left behind on a computer or another resource when that resource is no longer used. If resources, especially hard drives, are reused frequently, an unauthorized user can access data remnants. The best way to protect this data is to employ some sort of data encryption. If data is encrypted, it cannot be recovered without the original encryption key.

Administrators must understand the kind of data that is stored on physical drives so they can determine whether data remnants should be a concern. If the data stored on a drive is not private or confidential, the organization may not be concerned about data remnants. However, if the data stored on the drive is private or confidential, the organization may want to implement asset reuse and disposal policies.

Whenever data is erased or removed from storage media, residual data can be left behind. The data may be able to be reconstructed when the organization disposes of the media, resulting in unauthorized individuals or groups gaining access to data. Security professionals must consider media such as magnetic hard disk drives, solid-state drives, magnetic tapes, and optical media, such as CDs and DVDs. When considering data remanence, security professionals must understand three countermeasures:

Clearing: This includes removing data from the media so that data cannot be reconstructed using normal file recovery techniques and tools. With this method, the data is recoverable only using special forensic techniques.

Purging: Also referred to as sanitization, purging makes the data unreadable even with advanced forensic techniques. When this technique is used, data should be unrecoverable.

Destruction: Destruction involves destroying the media on which the data resides. Overwriting is a destruction technique that writes data patterns over the entire media, thereby eliminating any trace data. Degaussing, another destruction technique, involves exposing the media to a powerful, alternating magnetic field to remove any previously written data and leave the media in a magnetically randomized (blank) state. Encryption scrambles the data on the media, thereby rendering it unreadable without the encryption key. Physical destruction involves physically breaking the media apart or chemically altering it. For magnetic media, physical destruction can also involve exposure to high temperatures.

The majority of these countermeasures work for magnetic media. However, solid-state drives present unique challenges because they cannot be reliably overwritten: wear leveling may leave old copies of data in cells that the operating system can no longer address. Most solid-state drive vendors provide sanitization commands that can be used to erase the data on the drive. Security professionals should research these commands to ensure that they are effective. Another option for these drives is to erase the cryptographic key. Often a combination of these methods must be used to fully ensure that the data is removed.
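The overwriting technique mentioned above can be sketched at the file level as follows. This is an illustration only, with a hypothetical helper name and sample data. It overwrites a single file rather than an entire medium, and, as noted for solid-state drives, the storage device or file system may keep older copies of the blocks, so it is not a replacement for vendor sanitization commands or physical destruction.

```python
import os
import tempfile


def overwrite_file(path: str, passes: int = 1) -> None:
    """Overwrite a file's contents in place with random bytes.

    Illustrative only: on solid-state drives, journaling file systems, or
    snapshotted storage, the old blocks may survive this operation.
    """
    size = os.path.getsize(path)
    with open(path, "r+b") as handle:
        for _ in range(passes):
            handle.seek(0)
            handle.write(os.urandom(size))
            handle.flush()
            os.fsync(handle.fileno())   # push the new bytes to the device


# Demonstration on a throwaway temporary file.
fd, demo_path = tempfile.mkstemp()
os.write(fd, b"confidential payroll data")
os.close(fd)
overwrite_file(demo_path)
with open(demo_path, "rb") as handle:
    remaining = handle.read()
os.remove(demo_path)
print(b"confidential" in remaining)
```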

Data remanence is also a consideration when using any cloud-based solution for an organization. Security professionals should be involved in negotiating any contract with a cloud-based provider to ensure that the contract covers data remanence issues, although it is difficult to determine that the data is properly removed. Using data encryption is a great way to ensure that data remanence is not a concern when dealing with the cloud.

Data Aggregation

Data aggregation allows data from multiple resources to be queried and compiled together into a summary report. The account used to access the data needs to have appropriate permissions on all of the domains and servers involved. In most cases, these types of deployments incorporate a centralized data warehousing and mining solution on a dedicated server.

Security threats to databases usually revolve around unwanted access to data. Two security threats that exist in managing databases are the processes of aggregation and inference. Aggregation is the act of combining information from various sources. This can become a security issue with databases when a user does not have access to a given set of data objects as a whole but does have access to them individually (or at least has access to some of them) and is able to piece together the information to which he should not have access. The process of piecing together the information is called inference. Two types of access measures can be put in place to help prevent access to inferable information:

Content-dependent access control: With this type of measure, access is based on the sensitivity of the data. For example, a department manager might have access to the salaries of the employees in his or her department but not to the salaries of employees in other departments. The cost of this measure is increased processing overhead.

Context-dependent access control: With this type of measure, access is based on multiple factors to help prevent inference. Access control can be a function of factors such as location, time of day, and previous access history.
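The difference between the two measures can be shown with a short sketch based on the salary example above. Everything here is hypothetical: the `Request` fields, the office-hours window, and the query limit are assumptions chosen only to make the two kinds of check concrete.

```python
from dataclasses import dataclass
from datetime import time


@dataclass
class Request:
    user_department: str      # department the requesting manager runs
    record_department: str    # department of the salary record requested
    location: str             # for example, "office" or "remote"
    time_of_day: time
    recent_queries: int       # salary lookups this user has already made today


def content_allows(req: Request) -> bool:
    """Content-dependent: the decision depends on the data being requested."""
    return req.user_department == req.record_department


def context_allows(req: Request, max_queries: int = 20) -> bool:
    """Context-dependent: location, time of day, and previous access history."""
    in_hours = time(8, 0) <= req.time_of_day <= time(18, 0)
    return req.location == "office" and in_hours and req.recent_queries < max_queries


def is_allowed(req: Request) -> bool:
    return content_allows(req) and context_allows(req)


own_dept = Request("sales", "sales", "office", time(10, 30), 3)
other_dept = Request("sales", "finance", "office", time(10, 30), 3)
too_many = Request("sales", "sales", "office", time(10, 30), 50)
print(is_allowed(own_dept), is_allowed(other_dept), is_allowed(too_many))
```

Limiting the number of lookups is one way that access history can slow an attempt to assemble sensitive information piece by piece.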

Data Isolation

Data isolation in databases prevents data from being corrupted by two concurrent operations. Data isolation is used in cloud computing to ensure that tenant data in a multitenant solution is isolated from other tenants’ data, using tenant IDs in the data labels. Trusted login services are usually used as well. In both of these deployments, data isolation should be monitored to ensure that data is not corrupted. In most cases, some sort of transaction rollback should be employed to ensure that proper recovery can be made.
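Both ideas in this section, tenant IDs in the data labels and transaction rollback, can be sketched with Python's built-in `sqlite3` module. The table layout, tenant names, and helper functions below are hypothetical, and a production multitenant service would enforce the tenant scope in a trusted layer rather than rely on each caller to pass the correct ID.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE invoices (tenant_id TEXT NOT NULL, invoice_no INTEGER, amount REAL)"
)
conn.executemany(
    "INSERT INTO invoices VALUES (?, ?, ?)",
    [("tenant-a", 1, 120.0), ("tenant-a", 2, 80.0), ("tenant-b", 1, 999.0)],
)
conn.commit()


def invoices_for(tenant_id: str) -> list[tuple]:
    """Every query is scoped by the caller's tenant ID via a bound parameter."""
    rows = conn.execute(
        "SELECT invoice_no, amount FROM invoices "
        "WHERE tenant_id = ? ORDER BY invoice_no",
        (tenant_id,),
    )
    return rows.fetchall()


def adjust_amount(tenant_id: str, invoice_no: int, delta: float) -> None:
    """Apply a change inside a transaction; roll back if the result is invalid."""
    with conn:  # commits on success, rolls back if an exception is raised
        conn.execute(
            "UPDATE invoices SET amount = amount + ? "
            "WHERE tenant_id = ? AND invoice_no = ?",
            (delta, tenant_id, invoice_no),
        )
        (amount,) = conn.execute(
            "SELECT amount FROM invoices WHERE tenant_id = ? AND invoice_no = ?",
            (tenant_id, invoice_no),
        ).fetchone()
        if amount < 0:
            raise ValueError("amount cannot be negative")


try:
    adjust_amount("tenant-a", 2, -500.0)   # invalid change, so it is rolled back
except ValueError:
    pass
print(invoices_for("tenant-a"))
print(invoices_for("tenant-b"))
```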

Data Ownership

While most of the data an organization possesses may be created in-house, some of it is not. In many cases, organizations acquire data from others who generate such data as their business. These entities may retain ownership of the data and only license its use. When integrated systems make use of such data, consideration must be given to any obligations surrounding this acquired data. Service-level agreements (SLAs) that specify particular types of treatment or protection of the data should be followed.

The main responsibility of a data or information owner is to determine the classification level of the information she owns and to protect the data for which she is responsible. This role approves or denies access rights to the data. However, the data owner usually does not handle the implementation of the data access controls.

The data owner role is usually filled by an individual who understands the data best through membership in a particular business unit. Each business unit should have a data owner. For example, a human resources department employee better understands the human resources data than does an accounting department employee.

The data custodian implements the information classification and controls after they are determined by the data owner. Whereas the data owner is usually an individual who understands the data, the data custodian does not need any knowledge of the data beyond its classification levels. Although a human resources manager should be the data owner for the human resources data, an IT department member could act as the data custodian for the data.

Data Sovereignty

Information that has been converted and stored in binary digital form is subject to the laws of the country in which it is located. This concept is called data sovereignty. When an organization operates globally, data sovereignty must be considered. It can affect security issues such as selection of controls and ultimately could lead to a decision to locate all data centrally in the home country.

No organization operates within a bubble. All organizations are affected by laws, regulations, and compliance requirements. Organizations must ensure that they comply with all contracts, laws, industry standards, and regulations. Security professionals must understand the laws and regulations of the country or countries they are working in and the industry within which they operate. In many cases, laws and regulations are written in a manner whereby specific actions must be taken. However, in some cases, laws and regulations leave it up to the organization to determine how to comply.

The United States and the European Union both have established laws and regulations that affect organizations that do business within their area of governance. Security professionals should strive to understand these laws and regulations but may not have the knowledge and background to fully interpret them to protect their organization. In these cases, security professionals should work with legal representation regarding legislative or regulatory compliance.

Data Volume

Organizations should strive to minimize the amount of data they hold. More data means a larger attack surface. Data retention policies should be created that prescribe the destruction of data when it is no longer of use to the organization. Keep in mind that the creation of such policies should be driven by legal and regulatory requirements for the retention of data that might be relevant to the industry in which the enterprise operates.
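As a minimal sketch of how a retention policy might be applied, the following Python example flags records whose retention period has elapsed. The record categories, the retention periods, and the `records_due_for_destruction` helper are hypothetical; actual periods must come from the legal and regulatory requirements that apply to the organization.

```python
from datetime import date, timedelta

# Hypothetical retention periods in days, keyed by record category.
# Real values must come from applicable legal and regulatory requirements.
RETENTION_DAYS = {"invoice": 7 * 365, "web_log": 90}


def records_due_for_destruction(records, today):
    """Return the IDs of records whose retention period has fully elapsed."""
    due = []
    for record_id, category, created in records:
        limit = RETENTION_DAYS.get(category)
        if limit is None:
            continue  # Unknown category: leave it for manual review.
        if today - created > timedelta(days=limit):
            due.append(record_id)
    return due


sample = [
    ("r1", "web_log", date(2024, 1, 1)),
    ("r2", "web_log", date(2024, 5, 1)),
    ("r3", "invoice", date(2020, 1, 1)),
    ("r4", "contract", date(2010, 1, 1)),
]
print(records_due_for_destruction(sample, date(2024, 6, 1)))  # ['r1']
```

Records in a category that the policy does not cover are deliberately skipped rather than destroyed, because the policy, not the tool, should decide what happens to them.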

Resources Provisioning and Deprovisioning

One of the benefits of many cloud deployments is the ability to provision and deprovision resources as needed. This includes provisioning and deprovisioning users, servers, virtual devices, and applications. Depending on the deployment model used, your organization may have an internal administrator who handles these tasks, the cloud provider may handle these tasks, or you may have some hybrid solution where these tasks are split between an internal administrator and cloud provider personnel. Remember that any solution where cloud provider personnel must provide provisioning and deprovisioning may not be ideal because those people may not be immediately available to perform any tasks that you need.

Users

When provisioning (or creating) user accounts, it is always best to use an account template to ensure that all of the appropriate password policies, user permissions, and other account settings are applied to the newly created account.

When deprovisioning a user account, you should consider first disabling the account. Once an account is deleted, it may be impossible to access files, folders, and other resources that are owned by that user account. If the account is disabled instead of deleted, the administrator can reenable the account temporarily to access the resources owned by that account.

An organization should adopt a formal procedure for requesting the creation, disablement, or deletion of user accounts. In addition, administrators should monitor account usage to identify accounts that are no longer active so that those accounts can be disabled.
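The disable-before-delete approach can be sketched as follows. This is an illustration only: the `Account` class and the helper functions are hypothetical, and reassigning ownership before deletion is one possible design assumption, not a procedure prescribed by any particular directory product.

```python
from dataclasses import dataclass, field


@dataclass
class Account:
    username: str
    enabled: bool = True
    owned_resources: list = field(default_factory=list)


def deprovision(account):
    """Disable the account instead of deleting it, so owned resources stay reachable."""
    account.enabled = False
    return account


def reassign_resources(account, new_owner):
    """Move ownership of every resource to another account."""
    new_owner.owned_resources.extend(account.owned_resources)
    account.owned_resources.clear()


def can_delete(account):
    """Treat deletion as safe only once the account is disabled and owns nothing."""
    return not account.enabled and not account.owned_resources


alice = Account("alice", owned_resources=["/shares/q3-report.xlsx"])
manager = Account("manager")

deprovision(alice)
print(can_delete(alice))  # False: alice still owns a file
reassign_resources(alice, manager)
print(can_delete(alice))  # True
```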

Servers

Provisioning and deprovisioning servers should be based on organizational need and performance statistics. To determine when a new server should be provisioned, administrators must monitor the current usage of the server resources. Once a predefined threshold has been reached, procedures should be put in place to ensure that new server resources are provisioned. When those resources are no longer needed, procedures should also be in place to deprovision the servers. Once again, monitoring is key.
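A threshold-based decision of this kind might look like the following sketch. The threshold values and the `scaling_decision` function are assumptions made for illustration; each organization would set its own thresholds from its performance statistics.

```python
from statistics import mean

# Hypothetical thresholds, expressed as a fraction of CPU capacity in use.
PROVISION_THRESHOLD = 0.80
DEPROVISION_THRESHOLD = 0.30


def scaling_decision(cpu_samples):
    """Map the average of recent utilization samples to a provisioning action."""
    average = mean(cpu_samples)
    if average >= PROVISION_THRESHOLD:
        return "provision"
    if average <= DEPROVISION_THRESHOLD:
        return "deprovision"
    return "hold"


print(scaling_decision([0.85, 0.90, 0.88]))  # provision
print(scaling_decision([0.10, 0.20, 0.15]))  # deprovision
print(scaling_decision([0.50, 0.55, 0.60]))  # hold
```

Averaging several samples rather than reacting to a single reading keeps a brief spike from triggering a provisioning request.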

Virtual Devices

Virtual devices consume resources of the host machine. For example, the memory on a physical machine is shared among all the virtual devices that are deployed on that physical machine. Administrators should provision new virtual devices when organizational need demands. However, it is just as important that virtual devices be deprovisioned when they are no longer needed to free up the resources for other virtual devices.
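To illustrate why deprovisioning matters, the following sketch checks whether a host has enough memory for a new virtual device. The host size and the `can_provision` helper are hypothetical, and the check deliberately ignores hypervisor overhead and memory overcommitment.

```python
HOST_MEMORY_GB = 64  # Hypothetical physical memory on the host.


def can_provision(existing_vm_memory_gb, requested_gb, host_memory_gb=HOST_MEMORY_GB):
    """Return True if the requested allocation fits in the host's remaining memory."""
    return sum(existing_vm_memory_gb) + requested_gb <= host_memory_gb


running = [16, 16, 24]
print(can_provision(running, 16))  # False: only 8 GB remains

# Deprovisioning the 24 GB virtual device frees room for the new one.
running.remove(24)
print(can_provision(running, 16))  # True
```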

Applications

Organizations often need a variety of applications. It is important to maintain the licenses for any commercial applications that are used. Administrators must be notified when an organization no longer needs an application so that its licenses are not renewed, or so that they are renewed at a lower level if usage has simply decreased.

Data Remnants

When storage devices are deprovisioned or when they are prepared for reuse, consideration must be given to data remnants, as discussed earlier in this chapter.

Design Considerations During Mergers, Acquisitions and Demergers/Divestitures

When organizations merge, are acquired, or split, the enterprise design must be considered. In the case of mergers or acquisitions, each separate organization has its own resources, infrastructure, and model. As a security practitioner, you must ensure that the two organizations’ structures are analyzed thoroughly before deciding how to merge them. For demergers, you probably have to help determine how to best divide the resources. The security of data should always be a top concern.

Network Secure Segmentation and Delegation

An organization may need to segment its network to improve network performance, to protect certain traffic, or for a number of other reasons. Segmenting an enterprise network is usually achieved through the use of routers, switches, and firewalls. A network administrator may decide to implement VLANs using switches or may deploy a DMZ using firewalls. No matter how you choose to segment the network, you should ensure that the interfaces that connect the segments are as secure as possible. This may mean closing ports, implementing MAC filtering, and using other security controls. In a virtualized environment, you can implement separate physical trust zones. When the segments or zones are created, you can delegate separate administrators who are responsible for managing the different segments or zones.
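The idea of controlling the interfaces between segments can be sketched as a default-deny rule check. The segment names, address ranges, and allowed flow below are hypothetical; a real deployment would express the same policy in firewall or switch configuration.

```python
import ipaddress

# Hypothetical segments and their address ranges.
SEGMENTS = {
    "dmz": ipaddress.ip_network("192.0.2.0/24"),
    "internal": ipaddress.ip_network("10.0.0.0/16"),
}

# Allowed (source segment, destination segment, destination port) flows.
# Anything not listed is denied.
ALLOWED_FLOWS = {
    ("internal", "dmz", 443),
}


def segment_of(address):
    """Return the name of the segment that contains the address, or None."""
    ip = ipaddress.ip_address(address)
    for name, network in SEGMENTS.items():
        if ip in network:
            return name
    return None


def is_allowed(src, dst, port):
    """Permit traffic only when its segment pair and port are explicitly listed."""
    return (segment_of(src), segment_of(dst), port) in ALLOWED_FLOWS


print(is_allowed("10.0.5.9", "192.0.2.10", 443))  # True
print(is_allowed("192.0.2.10", "10.0.5.9", 443))  # False: DMZ may not initiate inward
print(is_allowed("10.0.5.9", "192.0.2.10", 22))   # False: port not listed
```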

Logical Deployment Diagram and Corresponding Physical Deployment Diagram of All Relevant Devices

For the CASP exam, security practitioners must understand two main types of enterprise deployment diagrams: logical deployment diagrams and physical deployment diagrams. A logical deployment diagram shows the architecture, including the domain architecture, with the existing domain hierarchy, names, and addressing scheme; server roles; and trust relationships. A physical deployment diagram shows the details of physical communication links, such as cable length, grade, and wiring paths; servers, with computer name, IP address (if static), server role, and domain membership; device location, such as printer, hub, switch, modem, router, or bridge, as well as proxy location; communication links and the available bandwidth between sites; and the number of users, including mobile users, at each site. A logical diagram usually contains less information than a physical diagram. While you can often create a logical diagram from a physical diagram, it is nearly impossible to create a physical diagram from a logical one.

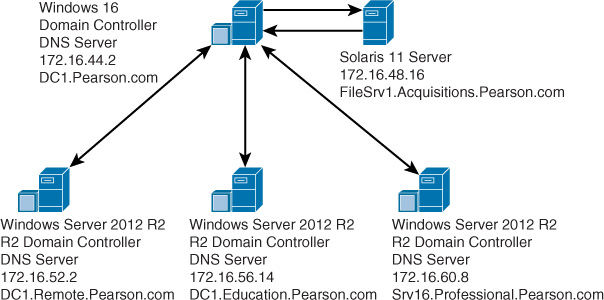

An example of a logical network diagram is shown in Figure 12-2.

As you can see, the logical diagram shows only a few of the servers in the network, the services they provide, their IP addresses, and their DNS names. The relationships between the different servers are shown by the arrows between them.

An example of a physical network diagram is shown in Figure 12-3.

A physical network diagram gives much more information than a logical one, including the cabling used, the devices on the network, the pertinent information for each server, and other connection information.

Security and Privacy Considerations of Storage Integration

When integrating storage solutions into an enterprise, security practitioners should be involved in the design and deployment to ensure that security issues are considered.

The following are some of the security considerations for storage integration:

Limit physical access to the storage solution.

Create a private network to manage the storage solution.

Implement ACLs for all data, paths, subnets, and networks.

Implement ACLs at the port level, if possible.

Implement multi-factor authentication.

Security practitioners should ensure that an organization adopts appropriate security policies for storage solutions to ensure that storage administrators prioritize the security of the storage solutions.

Security Implications of Integrating Enterprise Applications

Enterprise application integration enablers ensure that applications and services in an enterprise are able to communicate as needed. For the CASP exam, the primary concerns are understanding which enabler is needed in a particular situation or scenario and ensuring that the solution is deployed in the most secure manner possible. The solutions that you must understand include customer relationship management (CRM); enterprise resource planning (ERP); governance, risk, and compliance (GRC); enterprise service bus (ESB); service-oriented architecture (SOA); Directory Services; Domain Name System (DNS); configuration management database (CMDB); and content management systems (CMSs).

CRM

Customer relationship management (CRM) involves identifying customers and storing all customer-related data, particularly contact information and data on any direct contacts with customers. The security of CRM is vital to an organization. In most cases, access to the CRM system is limited to sales and marketing personnel and management. If remote access to the CRM system is required, you should deploy a VPN or similar solution to ensure that the CRM data is protected.

ERP

Enterprise resource planning (ERP) involves collecting, storing, managing, and interpreting data from product planning, product cost, manufacturing or service delivery, marketing/sales, inventory management, shipping, payment, and any other business processes. An ERP system is accessed by personnel for reporting purposes. ERP should be deployed on a secured internal network or DMZ. When deploying ERP, you might face objections because some departments may not want to share their process information with other departments.

CMDB

A configuration management database (CMDB) keeps track of the state of assets, such as products, systems, software, facilities, and people, as they exist at specific points in time, as well as the relationships between such assets. The IT department typically uses CMDBs as data warehouses.

CMS

A content management system (CMS) publishes, edits, modifies, organizes, deletes, and maintains content from a central interface. This central interface allows users to quickly locate content. Because edits occur from this central location, it is easy for users to view the latest version of the content. Microsoft SharePoint is an example of a CMS.

Integration Enablers

Enterprise application integration enablers ensure that applications and services in an enterprise can communicate as needed. The services listed in this section are all examples of such enablers.

Directory Services

Directory Services stores, organizes, and provides access to information in a computer operating system’s directory. With Directory Services, users can access a resource by using the resource’s name instead of its IP or MAC address. Most enterprises implement an internal Directory Services server that handles any internal requests. This internal server communicates with a root server on a public network or with an externally facing server that is protected by a firewall or other security device to obtain information on any resources that are not on the local enterprise network. Active Directory, DNS, and LDAP are examples of directory services.

DNS

Domain Name System (DNS) provides a hierarchical naming system for computers, services, and any resources connected to the Internet or a private network. You should enable Domain Name System Security Extensions (DNSSEC), which digitally signs DNS records so that a resolver can verify that a response came from the authoritative source and was not altered in transit. Transaction Signature (TSIG) is a separate cryptographic mechanism that uses a shared secret key and a keyed hash to authenticate DNS messages between two parties, such as zone transfers between servers and dynamic updates of resource records when a client’s IP address or hostname changes. The TSIG record is used to validate the sender of the message.

As a security measure, you can configure internal DNS servers to communicate only with root servers. When you configure internal DNS servers to communicate only with root servers, the internal DNS servers are prevented from communicating with any other external DNS servers.

The Start of Authority (SOA) record contains the information regarding a DNS zone’s authoritative server. A DNS record’s Time to Live (TTL) determines how long a DNS record will live in a cache before it needs to be refreshed. When a record’s TTL expires, the record is removed from the DNS cache. Poisoning the DNS cache involves inserting false records into a resolver’s cache. If you use a longer TTL, the cached record is refreshed less frequently, which gives an attacker fewer opportunities to inject a forged response.
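The TTL behavior can be illustrated with a toy cache. The `DnsCache` class is hypothetical and takes the current time as an argument so that the example is deterministic; it is not how any particular resolver is implemented.

```python
class DnsCache:
    """Toy resolver cache: an entry is dropped once its TTL has elapsed."""

    def __init__(self):
        self._entries = {}  # name -> (address, expiry time in seconds)

    def put(self, name, address, ttl, now):
        self._entries[name] = (address, now + ttl)

    def get(self, name, now):
        entry = self._entries.get(name)
        if entry is None:
            return None
        address, expires_at = entry
        if now >= expires_at:
            # TTL expired: remove the record so the next lookup must refresh it.
            del self._entries[name]
            return None
        return address


cache = DnsCache()
cache.put("www.example.com", "192.0.2.10", ttl=300, now=0)
print(cache.get("www.example.com", now=299))  # 192.0.2.10
print(cache.get("www.example.com", now=300))  # None
```

Each `None` result forces a fresh query to an upstream server, and that refresh is the moment at which a forged response could be accepted.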

Let’s look at a security issue that involves DNS. Suppose an IT administrator installs new DNS name servers that host the company mail exchanger (MX) records and resolve the web server’s public address. To secure the zone transfer between the DNS servers, the administrator uses only server ACLs. However, any secondary DNS servers would still be susceptible to IP spoofing attacks.
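An address-based ACL can be satisfied by a spoofed source address, whereas a keyed hash can be produced only by a party that holds the shared secret. The following sketch shows that keyed-hash idea using Python’s standard `hmac` module. It is not the TSIG wire format; the key, the message contents, and the `sign` and `verify` helpers are hypothetical.

```python
import hashlib
import hmac

# Hypothetical secret configured on both the primary and the secondary server.
SHARED_KEY = b"example-key-known-only-to-both-servers"


def sign(message, key):
    """Compute a keyed hash (HMAC-SHA256) over the message."""
    return hmac.new(key, message, hashlib.sha256).digest()


def verify(message, tag, key):
    """Accept the message only if the tag matches, using a constant-time compare."""
    return hmac.compare_digest(sign(message, key), tag)


request = b"AXFR example.com"
tag = sign(request, SHARED_KEY)

print(verify(request, tag, SHARED_KEY))                               # True
print(verify(request, sign(request, b"attacker-guess"), SHARED_KEY))  # False
print(verify(b"AXFR other.example", tag, SHARED_KEY))                 # False
```

A sender that merely forges its IP address cannot produce a valid tag without the key, which is why message authentication is a stronger control for zone transfers than ACLs alone.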

Another scenario could occur when a security team determines that someone from outside the organization has obtained sensitive information about the internal organization by querying the company’s external DNS server. The security manager should address the problem by implementing a split DNS server, allowing the external DNS server to contain only information about domains that the outside world should be aware of and enabling the internal DNS server to maintain authoritative records for internal systems.
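The effect of split DNS can be sketched as two views of the same namespace. For brevity, the example models both views in one function, whereas the scenario above uses separate external and internal servers. The network range, hostnames, and addresses are hypothetical.

```python
import ipaddress

INTERNAL_NETWORK = ipaddress.ip_network("10.0.0.0/8")  # Hypothetical internal range.

# The external view holds only records the outside world should see.
EXTERNAL_ZONE = {
    "www.example.com": "192.0.2.10",
    "mail.example.com": "192.0.2.25",
}

# The internal view adds records for internal systems.
INTERNAL_ZONE = {**EXTERNAL_ZONE, "hr-db.example.com": "10.1.2.3"}


def resolve(name, client_address):
    """Answer from the internal view only when the client is on the internal network."""
    client = ipaddress.ip_address(client_address)
    zone = INTERNAL_ZONE if client in INTERNAL_NETWORK else EXTERNAL_ZONE
    return zone.get(name)


print(resolve("hr-db.example.com", "10.4.4.4"))     # 10.1.2.3
print(resolve("hr-db.example.com", "203.0.113.7"))  # None
print(resolve("www.example.com", "203.0.113.7"))    # 192.0.2.10
```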

SOA

Service-oriented architecture (SOA) involves using software to provide application functionality as services to other applications. A service is a single unit of functionality, and services are combined to provide the entire functionality needed. This architecture often intersects with web services.

Let’s look at an SOA scenario. Suppose a database team suggests deploying an SOA-based system across the enterprise. The CIO decides to consult the security manager about the risk implications of adopting this architecture. The security manager should present to the CIO two concerns for the SOA system: users and services are distributed, often over the Internet, and SOA abstracts legacy systems as web services, which are often exposed to outside threats.

ESB

Enterprise service bus (ESB) involves designing and implementing communication between mutually interacting software applications in an SOA. It allows SOAP, Java, .NET, and other applications to communicate. An ESB solution is usually deployed on a DMZ to allow communication with business partners.

ESB is the most suitable solution for providing event-driven and standards-based secure software architecture.
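The event-driven idea behind an ESB can be sketched as a small publish/subscribe bus. This is a toy illustration with a hypothetical `ServiceBus` class and topic name; a real ESB product also handles protocol translation, routing, and security, none of which is modeled here.

```python
class ServiceBus:
    """Toy message bus: publishers and subscribers know only topic names."""

    def __init__(self):
        self._handlers = {}  # topic -> list of handler callables

    def subscribe(self, topic, handler):
        self._handlers.setdefault(topic, []).append(handler)

    def publish(self, topic, message):
        """Deliver the message to every subscriber and collect their results."""
        return [handler(message) for handler in self._handlers.get(topic, [])]


bus = ServiceBus()
received = []

# Two independent services react to the same event without knowing about each other.
bus.subscribe("order.created", received.append)
bus.subscribe("order.created", lambda msg: f"billing saw {msg['id']}")

results = bus.publish("order.created", {"id": 42})
print(results)   # [None, 'billing saw 42']
print(received)  # [{'id': 42}]
```

Because every message passes through the bus, it is also a natural place to enforce authentication and logging, which is one reason its placement and hardening matter.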

Exam Preparation Tasks

You have a couple of choices for exam preparation: the exercises here and the practice exams in the Pearson IT Certification test engine.

Review All Key Topics

Review the most important topics in this chapter, noted with the Key Topics icon in the outer margin of the page. Table 12-1 lists these key topics and the page number on which each is found.

Table 12-1 Key Topics for Chapter 12

| Key Topic Element | Description | Page Number |
| --- | --- | --- |
| List | Data analysis steps | 487 |
| List | Guidelines when retaining legacy systems | 491 |
| List | Software types | 493 |
| Figure 12-2 | Logical network diagram | 502 |
| Figure 12-3 | Physical network diagram | 503 |
| List | Security considerations for storage integration | 504 |

Define Key Terms

Define the following key terms from this chapter and check your answers in the glossary:

configuration management database (CMDB)

content management system (CMS)

customer relationship management (CRM)

enterprise resource planning (ERP)

service-oriented architecture (SOA)

tailored commercial (or commercial customized)

virtual local area network (VLAN)

Review Questions

1. Several business changes have occurred in your company over the past six months. You must analyze your enterprise’s data to ensure that data flows are protected. Which of the following guidelines should you follow? (Choose all that apply.)

Determine which applications and services access the data.

Determine where the data is stored.

Share encryption keys with all users.

Determine how the data is transmitted.

2. During a recent security analysis, you determined that users do not use authentication when accessing some private data. What should you do first?

Encrypt the data.

Configure the appropriate ACL for the data.

Determine whether authentication can be used.

Implement complex user passwords.

3. Your organization must comply with several industry and governmental standards to protect private and confidential information. You must analyze which standards to implement. Which standards should you consider?

open standards, de facto standards, and de jure standards

open standards only

de facto standards only

de jure standards only

4. Which of the following is a new breed of software that comes in modules allowing for customization by the organization?

tailored commercial

open source

in-house developed

commercial

5. Management expresses concerns about using multitenant public cloud solutions to store organizational data. You explain that tenant data in a multitenant solution is quarantined from other tenants’ data, using tenant IDs in the data labels. What is the term for this process?

data remnants

data aggregation

data purging

data isolation

6. You have been hired as a security practitioner for an organization. You ask the network administrator for any network diagrams that are available. Which network diagram would give you the most information?

logical network diagram

wireless network diagram

physical network diagram

DMZ diagram

7. Your organization has recently partnered with another organization. The partner organization needs access to certain resources. Management wants you to create a perimeter network that contains only the resources that the partner organization needs to access. What should you do?

Deploy a DMZ.

Deploy a VLAN.

Deploy a wireless network.

Deploy a VPN.

8. What concept prescribes that information that has been converted and stored in binary digital form is subject to the laws of the country in which it is located?

data sovereignty

data ownership

data isolation

data aggregation

9. Recently, salespeople within your organization have been having trouble managing customer-related data. Management is concerned that sales figures are being negatively affected as a result of this mismanagement. You have been asked to provide a suggestion to fix this problem. What should you recommend?

Deploy an ERP solution.

Deploy a CRM solution.

Deploy a GRC solution.

Deploy a CMS solution.

10. As your enterprise has grown, it has become increasingly hard to access and manage resources. Users often have trouble locating printers, servers, and other resources. You have been asked to deploy a solution that will allow easy access to internal resources. Which solution should you deploy?

Directory Services

CMDB

ESB

SOA