Chapter 6

Cloud Network Infrastructure

CERTIFICATION OBJECTIVES

6.01 Cloud Network Components

6.02 Network Protocols

6.03 Cloud Load Balancing

Two-Minute Drill

Q&A Self Test

Networks provide the foundation that supports cloud services. Careful planning and testing result in a solid cloud-based network infrastructure. This chapter starts by introducing you to a variety of methods through which your on-premises network and cloud networks can be connected, including site-to-site and client-to-site VPNs.

Next, cloud network components such as virtual networks, subnets, and their related IP addressing are discussed. We will then cover common network protocols used with both on-premises and cloud networking. Lastly, we’ll examine how load balancing improves the performance and resiliency of cloud-hosted applications.

CERTIFICATION OBJECTIVE 6.01

Cloud Network Components

Planning your cloud network environment shares many similarities with planning an on-premises network environment. Let’s take a look at some factors that you should consider:

How many subnets are needed?

Which IPv4 or IPv6 address ranges will be used?

Should any IT workloads be isolated from others?

Should any IT workloads be publicly accessible from the Internet?

Do remote users need secure VPN access to the company network?

Are custom routes required to send network traffic to a security appliance?

Fortunately for cloud customers, cloud service providers take advantage of software-defined networking (SDN), which means cloud customers don’t have to worry about the detailed complexities of configuring and managing a wide array of network hardware and software. Instead, cloud customers use intuitive graphical user interfaces (GUIs) or command-line tools that then configure the underlying network components.

SDN works well for cloud service providers because it allows their many cloud customers to provision network configurations across many underlying network infrastructure devices such as routers and switches. Without SDN, cloud customers would most likely submit their network configuration requests to cloud data center technicians for implementation. The data center technicians would have to be proficient in configuring a multitude of network devices, potentially from different vendors whose detailed configuration steps differ. Think of SDN as a centralized, easy-to-use software layer that sits on top of network infrastructure hardware.

Connecting to Cloud Environments

Whether you are an individual cloud customer or a member of a corporate enterprise using cloud services, one way or another, you need to connect to a cloud service provider. As you will recall from Chapter 1, an important characteristic of cloud computing is broad access to IT services over a network.

Internet Connectivity

The Internet is the most common way of directly connecting to a public CSP for creating and managing cloud resources, as well as the most common way for users to access those services. When cloud computing services are crucial for the organization’s survival, the organization must carefully examine the resiliency of its Internet connectivity. If the organization has a single Internet connection and it fails, then how will public cloud services be accessed? This problem is exacerbated in organizations that funnel all branch office Internet traffic through a single Internet hub location. If the central hub location fails in some way, all branch offices might be unable to contact the public CSP.

What can be done about this single point of failure? The answer is clear: give each on-premises location two Internet connections, ideally through different Internet service providers (ISPs). If one ISP experiences a network outage, the other is unlikely to fail at the same time. Addressing single points of failure is not always this simple, of course, and your organization’s risk appetite will determine how much importance is attributed to this scenario.

Dedicated Network Circuit

Another option besides the Internet is a dedicated private network link to the public CSP from your on-premises network, essentially a wide area network (WAN) link. Microsoft Azure calls this ExpressRoute, and Amazon Web Services (AWS) calls it Direct Connect; different names, same end result. Some IT workloads, such as Voice over IP (VoIP) packets, are sensitive to network disruptions and might require a guaranteed quality of service (QoS) to ensure packet loss is minimized. Figure 6-1 shows an example of provisioning an AWS Direct Connect link.

FIGURE 6-1 Creating an AWS Direct Connect link

You will need to check your CSP’s documentation to determine if it has a local point of presence to which your organization can establish a dedicated private network link.

There are a few potential benefits to using a dedicated network circuit to the public cloud:

Network traffic does not traverse the Internet.

Network bandwidth is predictable.

Network throughput is higher than through the Internet.

It may be less expensive than a fast Internet connection.

Cloud VPNs

Virtual private networks (VPNs) have been around for a long time. This solution creates an end-to-end encrypted tunnel over an untrusted network such as the Internet. Network traffic sent through the VPN tunnel is protected until it is decrypted at the other end of the VPN connection. This is a great way to connect

Branch offices over the Internet

Remote users to a private network over the Internet

On-premises networks to a cloud-based virtual network

When planning VPNs for connection to a public CSP such as Microsoft Azure, you need to consider the VPN type.

Site-to-Site VPNs

A site-to-site VPN configuration is used to extend your on-premises network into the cloud. VPN configurations require a minimum of two endpoints, and in this case, the endpoints are an on-premises VPN device and a cloud-based VPN device. Your on-premises VPN device could be hardware or software based and must have a public IP address to establish the tunnel with the cloud service provider’s VPN appliance.

Site-to-site VPNs allow network devices on both ends of the connection to communicate by sending traffic through the VPN tunnel. In other words, you don’t need to install and configure a VPN client on each device. Figure 6-2 shows the configuration of a Microsoft Azure Site-to-Site VPN. The pre-shared key specified in the Shared Key (PSK) field is used to establish the VPN tunnel between the two endpoints.

FIGURE 6-2 Microsoft Azure site-to-site IPsec VPN configuration

Multiple branch office locations can be connected to one another using a VPN. If one of those branch offices also has a VPN connection to the cloud, connected branch offices can also be configured to connect to the cloud through that VPN connection.

Client-to-Site VPNs

Some of today’s workforce works remotely, whether from home, from different office locations, or while traveling for business. A client-to-site (C2S) VPN, also called a point-to-site (P2S) VPN, connects an individual device to a VPN endpoint to establish an encrypted tunnel. This allows remote users to securely access IT services over an untrusted network. For example, you could allow a user working from home to connect securely to a cloud-deployed database through a VPN connection. Figure 6-3 shows a C2S VPN configuration in the Microsoft Azure cloud, which requires client devices to have a security certificate to authenticate to the VPN; that is why the public certificate data is specified. Also note the Download VPN Client button; this downloads a VPN client configuration .zip file for the Windows computer that will be the VPN client.

FIGURE 6-3 Microsoft Azure point-to-site VPN configuration

Some exam questions might test your knowledge of solutions that combine cloud network solutions. As an example, for the utmost in security and predictable bandwidth, the scenario might call for a site-to-site VPN configured on a dedicated network circuit.

Cloud Virtual Networks

Because of SDN, creating cloud-based virtual networks is straightforward as long as you plan carefully and research the options offered by various cloud service providers before choosing one. Cloud service providers use their own nomenclature for cloud virtual networks. For example, Microsoft Azure calls them VNets, while AWS calls them Virtual Private Clouds (VPCs).

Both VNets and VPCs contain one or more subnets, much like an on-premises physical network switch that can be carved into multiple virtual local area networks (VLANs). VLANs provide network isolation for security and/or reduced network congestion. You can also control whether or not traffic from one subnet can reach other subnets. Network isolation is commonly maintained between testing and production environments. This is true both on premises and in the cloud.

IP Addressing

Deploying cloud-based virtual networks requires specifying a TCP/IP address range that will be used, normally in what is called Classless Inter-Domain Routing (CIDR) format. CIDR format specifies an IP network address followed by a slash and the number of bits in a subnet mask, such as 10.1.0.0/16, where 10.1.0.0 is the network address with 16 bits in the subnet mask, or 255.255.0.0.

The IP address range used by a cloud-based virtual subnet must fall within the IP range assigned to the cloud-based virtual network, such as the following:

VNet1: 10.1.0.0/16

Subnet1: 10.1.1.0/24

The 10.1.1.0/24 network falls within the 10.1.0.0/16 network because its first 16 bits (10.1) match those of VNet1 and, starting from the left, we are adding more binary bits to address networks (8 bits beyond the 16 bits for VNet1, for a total of 24). In decimal notation, /16 is 255.255.0.0, and /24 is 255.255.255.0. In binary notation, /16 is 11111111.11111111.00000000.00000000, and /24 is 11111111.11111111.11111111.00000000.
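The containment rule can be checked with a few lines of Python. This is only a sketch that uses the standard-library ipaddress module; the helper name mask_in_binary and the extra 10.2.1.0/24 range are assumptions added for illustration and are not part of any cloud provider tooling.

```python
import ipaddress

# Address ranges taken from the VNet1 and Subnet1 example.
vnet = ipaddress.ip_network("10.1.0.0/16")
subnet = ipaddress.ip_network("10.1.1.0/24")


def mask_in_binary(network):
    """Return the subnet mask as four dot-separated groups of 8 binary digits."""
    return ".".join(f"{octet:08b}" for octet in network.netmask.packed)


# Show each mask in decimal and in binary notation.
print(vnet.netmask, mask_in_binary(vnet))
print(subnet.netmask, mask_in_binary(subnet))

# True when every address in the subnet also belongs to the virtual network.
print(subnet.subnet_of(vnet))

# A hypothetical range outside 10.1.0.0/16 is not a valid subnet of VNet1.
outside = ipaddress.ip_network("10.2.1.0/24")
print(outside.subnet_of(vnet))
```

Running a check like this against a planned address layout before deployment can catch a subnet range that falls outside the virtual network address space.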

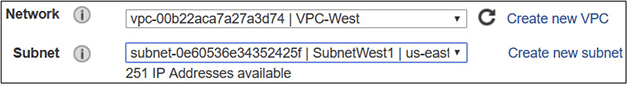

Preparation is everything—before deploying cloud resources, you need to plan into which network subnets resources will be deployed, as depicted in Figure 6-4.

FIGURE 6-4 Deploying an AWS virtual machine into a VPC and subnet

CERTIFICATION OBJECTIVE 6.02

Network Protocols

With cloud computing, everything old is now new. In other words, many old technologies used in on-premises environments work no differently now for cloud computing than they did 25 years ago—the only difference is those services are running on somebody else’s equipment that you connect to over a network. Table 6-1 lists common network protocols used both on premises and in the cloud.

TABLE 6-1 Common Network Protocols
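As a rough companion to the table, the default ports that this chapter's Two-Minute Drill associates with each protocol can be captured in a small lookup. The following Python sketch is illustrative only; the dictionary name, the helper firewall_rule_for, and the rule wording are assumptions and do not correspond to any real firewall product's syntax.

```python
# Default ports as listed in this chapter's Two-Minute Drill.
WELL_KNOWN_PORTS = {
    "SSH": ("TCP", 22),      # network device, Unix, and Linux remote management
    "RDP": ("TCP", 3389),    # Windows host remote management
    "HTTP": ("TCP", 80),
    "HTTPS": ("TCP", 443),
    "LDAP": ("TCP", 389),
    "SNMP": ("UDP", 161),    # network device monitoring
    "DNS": ("UDP", 53),      # client queries; TCP 53 is used server to server
}


def firewall_rule_for(protocol_name):
    """Hypothetical helper: describe the outbound rule a protocol needs."""
    transport, port = WELL_KNOWN_PORTS[protocol_name.upper()]
    return f"allow outbound {transport} {port}"


print(firewall_rule_for("ssh"))
print(firewall_rule_for("rdp"))
```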

Some network protocols such as DNS are integrated with CSP settings. An example is Microsoft Azure–provided DNS. When you deploy a new Azure VNet, by default, virtual machines deployed into subnets within that VNet can resolve names of other resources in the same VNet as well as Internet names. You can see this setting in Figure 6-5. Of course, you can configure custom DNS server IP addresses of your own, if you wish.

FIGURE 6-5 Microsoft Azure VNet DNS configuration

CERTIFICATION OBJECTIVE 6.03

Cloud Load Balancing

When an application is handling a high volume of client requests, it needs more horsepower to accommodate the increased workload than when demand is lower. Load balancing can be used to solve this problem.

You can configure internal load balancers for line-of-business applications used by employees, or you can configure external load balancers for public-facing applications. Either way, client requests for an app such as www.app1.com are resolved through DNS to the IP address of the load balancer. The load balancer is configured with multiple back-end virtual machines that actually run the application, as depicted in Figure 6-6.

The load balancer keeps track of how busy back-end servers are and also which back-end servers are no longer responding; client requests are routed by the load balancer to the least-busy responsive back-end server. You can configure horizontal autoscaling to add and remove virtual machines, providing the benefit of better application performance and increased resiliency against failure of a single server. You can base autoscaling on metrics such as number of received requests, CPU utilization, and so on.
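The routing decision described above can be sketched in Python. This is a simplified illustration, not how any particular cloud load balancer is implemented: the Backend class, the use of active request counts as the measure of "busy," and the responsive flag (standing in for a health probe result) are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    active_requests: int      # assumed measure of how busy the server is
    responsive: bool = True   # assumed result of the latest health check


def pick_backend(backends):
    """Return the responsive back end with the fewest active requests."""
    healthy = [b for b in backends if b.responsive]
    if not healthy:
        raise RuntimeError("no responsive back-end servers available")
    return min(healthy, key=lambda b: b.active_requests)


pool = [
    Backend("vm1", active_requests=12),
    Backend("vm2", active_requests=3, responsive=False),  # skipped: not responding
    Backend("vm3", active_requests=7),
]

chosen = pick_backend(pool)
chosen.active_requests += 1   # the new client request is now in flight
print(chosen.name)
```

Although vm2 has the fewest active requests, it is excluded because it is not responding, so the request goes to vm3.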

Autoscaling with a load balancer is considered horizontal scaling because VM nodes are added or removed. Vertical scaling is related to increasing or decreasing individual VM resources, such as the number of vCPUs or the amount of RAM.
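A horizontal autoscaling rule based on CPU utilization might look like the following Python sketch. The function name, the threshold percentages, and the minimum and maximum VM counts are hypothetical values chosen for illustration; actual autoscaling settings vary by cloud service provider.

```python
def desired_vm_count(current, avg_cpu_percent, scale_out_at=75, scale_in_at=25,
                     minimum=2, maximum=10):
    """Return how many VMs the pool should have after one scaling evaluation."""
    if avg_cpu_percent > scale_out_at:
        target = current + 1   # scale out: add a VM
    elif avg_cpu_percent < scale_in_at:
        target = current - 1   # scale in: remove a VM
    else:
        target = current       # utilization is within the configured thresholds
    # Never go below the minimum or above the maximum pool size.
    return max(minimum, min(maximum, target))


print(desired_vm_count(current=3, avg_cpu_percent=90))
print(desired_vm_count(current=3, avg_cpu_percent=10))
print(desired_vm_count(current=2, avg_cpu_percent=10))
```

Note that the rule changes only the number of VMs, which is what makes it horizontal scaling; the size of each VM stays the same.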

EXERCISE 6-1

Create a Microsoft Azure Virtual Network

In this exercise, you will create a virtual network and subnet in the Microsoft Azure cloud. This exercise depends on having first completed Exercise 1-1.

1. Using a web browser, sign in to https://portal.azure.com.

2. At the top of the navigation pane on the left, click the Create a Resource button.

3. In the Search field, type virtual network, select Virtual Network from the results, and click Create.

4. Configure the virtual network with the following settings (accept the default values for all other settings), as shown in Figure 6-7:

FIGURE 6-7 Microsoft Azure VNet creation

Name: VNet90

Address space: 10.3.0.0/16

Resource group: (New) ResGroup90

Subnet name: Subnet1

Address range: 10.3.1.0/24

5. Click Create.

EXERCISE 6-2

Configure a Microsoft Azure ExpressRoute Circuit

In this exercise, you will configure a dedicated link between an on-premises network and Azure through ExpressRoute. This exercise depends on having completed Exercise 1-1.

1. In the Azure portal, click Create a Resource in the upper left.

2. In the Search bar, type expressroute, select ExpressRoute from the list, and click Create.

3. Configure the ExpressRoute circuit with settings similar to the following example for my location, as shown in Figure 6-8:

FIGURE 6-8 Microsoft Azure ExpressRoute circuit creation

Name: HQ_Circuit1

Provider: Bell Canada

Peering Location: Toronto

Bandwidth: 100Mbps

Resource group: (New) ResGroup91

4. To complete the process in a production environment, you would contact the service provider to provision the circuit and link it to a virtual network through a virtual-network gateway.

INSIDE THE EXAM

Cloud Networking

Knowing when to use a specific cloud network configuration is the key to answering questions correctly on the CompTIA Cloud Essentials+ CLO-002 exam. Remember that some organizational requirements could be met by using a combination of cloud network solutions, such as a VPN to allow secure connections to the cloud to run a cloud-based, load-balanced application.

CERTIFICATION SUMMARY

This chapter discussed how to connect an on-premises network to a public cloud service provider over the Internet or using a dedicated network circuit. You also learned about providing secure connections to a public CSP through VPNs. Depending on your company’s needs, you can configure a site-to-site VPN linking your on-premises network to the cloud, or you can enable individual remote users to connect to the cloud through a client-to-site VPN connection.

You also learned about cloud virtual networks such as Microsoft Azure Virtual Networks and Amazon Web Services Virtual Private Clouds and how subnets in the cloud use an IP address range within the network range.

This chapter also described how many of the traditional network protocols, such as SSH, RDP, and DNS, that are used on premises are also used in a cloud computing environment for the same purposes.

The chapter wrapped up by explaining how load balancers can be implemented to provide quick and efficient access to cloud-hosted applications. Client requests to the app are directed to the load balancer, which then routes the requests to the least-busy responsive back-end server. The load-balanced solution can also be autoscaled to adjust the number of back-end virtual machines serving the application.

TWO-MINUTE DRILL

Cloud Network Components

Cloud customers can connect to public CSPs over the Internet or through a private dedicated network circuit.

Redundant Internet cloud connections should be used in case one connection fails.

Dedicated network circuits provide predictable bandwidth on a private network link.

Microsoft Azure dedicated network links are called ExpressRoute circuits.

Amazon Web Services dedicated network links are called Direct Connect dedicated connections.

VPNs create an encrypted tunnel between two endpoints over an untrusted network.

Branch office networks can be connected using a site-to-site VPN. If one branch office has VPN connectivity to the cloud, other branch offices could be configured to also have cloud access through the VPN.

On-premises networks can be securely connected to the public cloud via a site-to-site VPN.

Site-to-site VPNs require an on-premises VPN appliance with a public IP address.

Individual user devices can be securely connected to the public cloud using a client-to-site VPN.

Client-to-site VPNs do not require an on-premises VPN appliance.

Software-defined networking (SDN) spares cloud customers from needing detailed network hardware configuration knowledge when configuring cloud network components.

Cloud virtual networks contain subnets and are configured with a specific IP address space.

Microsoft Azure cloud-based virtual networks are called VNets.

Amazon Web Services cloud-based virtual networks are called VPCs.

Network Protocols

Traditional on-premises network protocols are also used in the cloud.

SSH uses TCP port 22 for network device, Unix, and Linux remote management.

RDP uses TCP port 3389 for Windows host remote management.

Cloud services are primarily accessible over HTTP (TCP port 80) and HTTPS (TCP port 443).

LDAP uses TCP port 389 when connecting to a network configuration database.

SNMP uses UDP port 161 when monitoring network devices.

DNS uses UDP port 53 for client requests.

DNS uses TCP port 53 for server-to-server communication.

Cloud Load Balancing

Client connectivity to an application goes through a load balancer.

Load-balanced applications perform better and are resilient to server failures.

Load balancers can serve internal or public-facing applications.

Load balancing uses back-end server pools consisting of VMs running the same application.

App requests are routed to the least-busy back-end server.

App requests are not routed to unresponsive back-end servers.

SELF TEST

The following questions will help you measure your understanding of the material presented in this chapter. As indicated, some questions may have more than one correct answer, so be sure to read all the answer choices carefully.

Cloud Network Components

1. Which term refers to configuring cloud networking without directly having to configure underlying network hardware?

A. Load balancing

B. Software-defined networking

C. Cloud-based routing

D. Cloud-based virtualization

2. Your company uses a dedicated network circuit for public cloud connectivity. You need to ensure that on-premises–to–public cloud connections are not exposed to other Internet users. What should you do?

A. Configure a site-to-site VPN

B. Nothing

C. Configure a client-to-site VPN

D. Enable HTTPS

3. What must be configured within a cloud-based network to allow cloud resources to communicate on the network?

A. Public IP address

B. VPN

C. Load balancer

D. Subnet

4. Which type of IP addressing notation uses a slash followed by the number of subnet mask bits?

A. SDN

B. CIDR

C. VPN

D. QoS

5. Which word is the most closely related to using a VPN?

A. Performance

B. Encryption

C. Anonymous

D. Updates

6. Which type of VPN links two networks together over the Internet?

A. Point-to-site

B. Branch-to-branch

C. Client-to-site

D. Site-to-site

7. Which common VPN type links a single device to a private network over the Internet?

A. Point-to-site

B. Branch-to-branch

C. Client-to-site

D. Site-to-site

Network Protocols

8. You need to configure your on-premises perimeter firewall to allow outbound Linux remote management. Which port should you open?

A. TCP 80

B. UDP 161

C. TCP 22

D. TCP 3389

9. Your Microsoft Azure virtual machine has been deployed into an Azure VNet that uses default DNS settings. You are unable to connect to www.site.com from within the VM. What is the most likely problem?

A. The VNet is configured with custom DNS servers.

B. Outbound TCP port 22 traffic is blocked.

C. www.site.com is down.

D. Azure virtual machines do not support Internet name resolution.

10. You are configuring your on-premises perimeter firewall to allow outbound Windows server remote management. Which port should you open?

A. 3389

B. 443

C. 445

D. 389

Cloud Load Balancing

11. Which of the following words are most closely related to load balancing? (Choose two.)

A. Security

B. Performance

C. Archiving

D. Resiliency

12. Which cloud feature automatically adds or removes virtual machines based on how busy an application is?

A. Load balancing

B. Elasticity

C. Autoscaling

D. Monitoring

13. Which term describes adding virtual machines to support a busy application?

A. Scaling in horizontally

B. Scaling out horizontally

C. Scaling down vertically

D. Scaling up horizontally

14. After a load balancer is put in place, users report that they can no longer access a web application. What is the most likely cause of the problem?

A. The load balancer DNS name is not resolving to the website name.

B. The website DNS name is not resolving to the load balancer.

C. TCP port 3389 is blocked in the cloud.

D. TCP port 389 is blocked in the cloud.

15. What normally occurs when a load balancer back-end server is unresponsive?

A. The load balancer deletes and re-creates the failed server.

B. The load balancer uses vertical scaling to add servers.

C. The load balancer does not route client requests to the unresponsive server.

D. The load balancer prevents client connections to the app.

SELF TEST ANSWERS

Cloud Network Components

1. B. Software-defined networking (SDN) hides the underlying complexities of network device configuration from the cloud user.

A, C, and D are incorrect. Load balancing distributes incoming client app requests among a pool of back-end servers supporting the app. Cloud-based routing is used to direct network traffic flow. Cloud-based virtualization allows VMs to run on CSP equipment.

2. B. Nothing needs to be done; dedicated network circuits are completely separate from Internet connections.

A, C, and D are incorrect. None of the options are correct because nothing needs to be done.

3. D. A subnet is created within a cloud-based virtual network to allow cloud resources to communicate on the network. The subnet IP address range must fall within the cloud-based virtual network address space.

A, B, and C are incorrect. A public IP address is used to provide connectivity to cloud resources over the Internet. VPNs provide encrypted tunnels between two endpoints over an untrusted network such as the Internet. Load balancing distributes incoming client app requests among a pool of back-end servers supporting the app.

4. B. Classless Inter-Domain Routing (CIDR) notation uses an IP network address prefix followed by a slash and the number of bits in the subnet mask.

A, C, and D are incorrect. Software-defined networking (SDN) hides the underlying complexities of network device configuration from the cloud user. VPNs provide encrypted tunnels between two endpoints over an untrusted network such as the Internet. Quality of service (QoS) provides a reasonable guaranteed level of network throughput with minimal packet loss for time-sensitive applications such as Voice over IP (VoIP).

5. B. Virtual private networks (VPNs) create an encrypted tunnel between two endpoints for the secure transmission of data.

A, C, and D are incorrect. The terms performance, anonymous, and updates are not closely related to VPNs.

6. D. Site-to-site VPNs can be used to connect different networks together over the Internet.

A, B, and C are incorrect. Point-to-site (P2S) VPN links allow individual client connectivity to a remote network, such as Microsoft Azure client-to-site VPN configurations. Branch-to-branch is not a common VPN term.

7. C. Client-to-site VPNs link individual devices to a VPN endpoint through an encrypted tunnel.

A, B, and D are incorrect. Point-to-site (P2S) VPN links allow individual client connectivity to a remote network, such as Microsoft Azure client-to-site VPN configurations. Branch-to-branch is not a common VPN term. Site-to-site VPNs can be used to connect different networks together over the Internet.

Network Protocols

8. ![]() C. Remote management of Linux hosts is normally performed using Secure Shell (SSH) over TCP port 22.

C. Remote management of Linux hosts is normally performed using Secure Shell (SSH) over TCP port 22.

![]() A, B, and D are incorrect. TCP port 80 is used by HTTP, UDP port 161 is used by SNMP, and TCP port 339 is used for Windows host remote management using RDP.

A, B, and D are incorrect. TCP port 80 is used by HTTP, UDP port 161 is used by SNMP, and TCP port 339 is used for Windows host remote management using RDP.

9. ![]() C. The most likely culprit of the listed items is that www.site.com is down.

C. The most likely culprit of the listed items is that www.site.com is down.

A, B, and D are incorrect. Custom DNS server references are not part of Azure VNet default settings. Connecting to a website uses TCP port 80 or 443, not TCP port 22, which is used for SSH. Azure virtual machines can resolve Internet names if the configuration allows it.

10. A. Windows host remote management occurs over TCP port 3389.

B, C, and D are incorrect. HTTPS uses TCP port 443, the Server Message Block (SMB) file-sharing protocol uses TCP port 445, and LDAP uses TCP port 389.
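The port numbers cited in the Network Protocols answers can be collected into a small lookup table for review. The following is a minimal sketch rather than a complete registry; the names `WELL_KNOWN_PORTS` and `remote_management_port` are hypothetical and chosen only for illustration.

```python
# Default ports cited in the answers above: protocol -> (transport, port).
WELL_KNOWN_PORTS = {
    "SSH": ("TCP", 22),      # Linux remote management
    "HTTP": ("TCP", 80),
    "SNMP": ("UDP", 161),
    "LDAP": ("TCP", 389),
    "HTTPS": ("TCP", 443),
    "SMB": ("TCP", 445),
    "RDP": ("TCP", 3389),    # Windows remote management
}


def remote_management_port(os_family: str) -> int:
    """Return the default remote management port for a host OS family."""
    # Hypothetical helper: maps an OS family to its usual management protocol.
    protocol = {"linux": "SSH", "windows": "RDP"}[os_family.lower()]
    return WELL_KNOWN_PORTS[protocol][1]
```

Calling `remote_management_port("Linux")` looks up SSH, and `remote_management_port("Windows")` looks up RDP. These are defaults only; administrators can configure services to listen on other ports.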

Cloud Load Balancing

11. B and D. Load balancing distributes client app requests to a pool of back-end servers to improve application performance and resiliency against server failures.

A and C are incorrect. Security and archiving are not closely related to load balancing.

12. C. Autoscaling (also called horizontal scaling) can be configured to add or remove virtual machines when application utilization is above or below configured thresholds.

A, B, and D are incorrect. Load balancing distributes client app requests to a pool of back-end servers to improve application performance and resiliency against server failures. Elasticity is a cloud computing characteristic that describes the rapid provisioning and deprovisioning of cloud resources. Monitoring is a passive activity that does not itself add or remove VMs for busy applications.
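The threshold-based autoscaling behavior described in answer 12 can be sketched in a few lines. This is an illustration only, not any cloud provider's actual autoscale engine; the function name `desired_vm_count`, the CPU metric, and the default thresholds and limits are all assumptions.

```python
def desired_vm_count(current: int, cpu_percent: float,
                     scale_out_above: float = 80.0,
                     scale_in_below: float = 20.0,
                     min_vms: int = 1, max_vms: int = 10) -> int:
    """Return the VM count an autoscale rule would request.

    All thresholds and limits are hypothetical example values.
    """
    if cpu_percent > scale_out_above:
        # Busy application: scale out by one VM, up to the maximum.
        return min(current + 1, max_vms)
    if cpu_percent < scale_in_below:
        # Idle application: scale in by one VM, down to the minimum.
        return max(current - 1, min_vms)
    # Utilization is between the thresholds: leave the pool unchanged.
    return current
```

With two VMs running at 90 percent CPU, the sketch requests a third VM; at 10 percent CPU it removes one, but never drops below `min_vms`.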

13. B. Scaling out horizontally means adding virtual machines in response to how busy an application is.

A, C, and D are incorrect. Scaling in means removing virtual machines, not adding VMs. Vertical scaling is related to individual VM resources such as vCPUs and RAM. Scaling up is vertical, not horizontal.

14. B. The DNS name used for web app connectivity most likely points to a now-defunct server IP address. The name must resolve to the load balancer’s IP address.

A, C, and D are incorrect. DNS names must ultimately resolve to an IP address before clients can connect. Port 3389 is used for RDP and port 389 is used for LDAP; neither of these is used to access a web application.

15. C. Unresponsive back-end servers no longer receive client requests through the load balancer.

A, B, and D are incorrect. Unresponsive back-end servers are not re-created by a load balancer. Adding servers is horizontal, not vertical, scaling. One unresponsive back-end server does not prevent the other servers from fulfilling client app requests.
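The behavior in answer 15 can be illustrated with a simple round-robin sketch in which servers marked unhealthy are skipped. This is a simplified model, not how any particular cloud load balancer is implemented; the class `RoundRobinBalancer` and the server names are hypothetical.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hypothetical round-robin balancer that skips unhealthy servers."""

    def __init__(self, servers):
        self.health = {server: True for server in servers}
        self._rotation = cycle(servers)
        self._count = len(servers)

    def mark(self, server, healthy):
        # In a real service, a health probe would set this status.
        self.health[server] = healthy

    def next_server(self):
        # Try each server at most once per request.
        for _ in range(self._count):
            server = next(self._rotation)
            if self.health[server]:
                return server
        raise RuntimeError("no healthy back-end servers")
```

If `web2` is marked unhealthy in a pool of `web1`, `web2`, and `web3`, requests alternate between `web1` and `web3`. The unresponsive server is neither re-created nor sent traffic, and the remaining servers keep serving clients.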