Incident response begins with the detection and identification of events. Detection, a function found in the NIST Cybersecurity Framework, should be deployed based on risks identified and potential attack patterns of known threats. Many of the capabilities discussed in this chapter play roles in other elements of incident response. Several provide automated detection and identification. Automation is desirable when it lowers costs, increases efficiency and is more reliable than manual processes. A significant use case for automation exists when technology correlates and detects behavior patterns and activity not always seen easily with the human eye. Considering the vast amounts of data produced by entities these days, detection requires automated means to support information security and incident response teams. As nice as automation is, automating everything is not possible, and some form of manual controls must also exist.

Data loss prevention (DLP)

Data capture, including NetFlow and full packets

End point detection and response

Intrusion detection systems (IDS)

Firewalls

Routers and switches

Domain name system (DNS)

Application and infrastructure monitoring

Security incident and event management (SIEM)

Implementing these technologies requires care. Not defining use cases—specific scenarios in which risks and security gaps the solution is designed to detect exist—causes implementation gaps and mismanagement. These capabilities possibly alert the organization to an event or incident, or are used to confirm whether an event or incident took place and must be investigated.

Building Detective Capabilities

To detect events, basic capabilities, and technology, people who understand these are needed. These technologies require resources to tune and maintain the implementation, so that the right events are detected and nonevents don’t waste resources with unnecessary investigations. The choice of whether to implement each comes down to financial and risk-based decisions. Implementing these types of capabilities takes time away from other priorities. The trick lies in balancing the choices, based on cost factors and gains in risk reduction.

Not all detection requires technology. End users are an example of how the human element can be very effective, such as noticing phishing e-mails first when other employees do not observe good e-mail hygiene.

Data Loss Protection

DLP is a necessary solution designed to detect sensitive data in motion, in use, and at rest. For example, if DLP is implemented at a healthcare organization, it is expected to address situations in which data is transmitted, stored and used in insecure ways. This might involve e-mailing patient data in clear text, storing it in SharePoint or OneDrive, printing it or saving it to a thumb drive. Many DLP solutions contain Health Insurance Accountability and Portability Act (HIPAA) rulesets out of the box. Other common out-of-the-box rules include financial, personally identifiable information (PII) and credit card data detection rules.

Implementing DLP

Matrix showing examples of data states

Matrix Outlining How Specific Data Types Must Be Handled for Given Scenarios When Detected by a DLP Solution

Data Type | E-mailed | Transmitted to Outsiders | Stored Internally | Destroyed |

|---|---|---|---|---|

Personally Identifiable | Encrypted | Requires Approval/ Encrypted | Encrypted/Secured | DOD Standards |

Intellectual Property | Encrypted | Requires Approval/Encrypted | Encrypted/Secured | DOD Standards |

Nonsensitive | None Specified | None Specified | None Specified | None Specified |

One choice available when considering the time and effort necessary to fine-tune DLP and investigate alerts is outsourcing the management of the solution. Entities of all sizes take advantage of services offered by DLP vendors and other third parties to offload these operations. This takes the day-to-day burdens away from the on-premise team, potentially at less cost than a full-time employee. It is important to establish the rules of engagement with the service provider, to ensure data monitoring is consistent with the sensitivity levels established.

Because impacts to business process and hardware performance is possible, communication to the user base and slow, deliberate roll-out of the solution makes sense. The last thing the information security team needs is an implementation resulting in a business slowdown.

Handling the Data Types

Data traverses the network and is transmitted to customers and business partners. DLP detects instances in which the data types in question are in motion. Sensitive data headed out of the organization, in clear text, is usually prohibited and must be prevented. Some data at rest requires storage in secure places. Allowing sensitive data to sit in SharePoint sites, One Drive, or, in some cases, on laptops, is usually a policy violation. DLP is used to identify instances in which data is stored in violation of policy. Again, the solution can simply alert, encrypt the data where it is located or move the data and leave behind a note with the new location where the data is at rest. The actions of encrypting or moving usually occur after the solution has been in place for a while and the likelihood of a false positive is lower.

End Point Detection and Response

FireEye Endpoint Security

Carbon Black’s Cb Response

Cybereason Total Enterprise Protection

Symantec Endpoint Protection

CrowdStrike Falcon Insight

This is a short list of players in this space, and differentiating each takes time and research. A full list can be found on the Gartner Peer Insights review.1

Analyzing Traffic

Packet capture aids incident response teams’ need to confirm whether suspected events exist. Organizations implement these solutions based on the incident response and monitoring strategy. Entities can choose to capture full packets, headers, and stream headers (the header and a portion of the packet contents), depending on program needs and the solutions offerings. NetFlow is another option. Developed by Cisco, NetFlow allows entities to capture data on the origination, destination, and amount of traffic. According to Michael Patterson, in his blog, Plixer, discussing the difference between NetFlow and packet capture, information provided by NetFlow is improving.2 Network devices enabled with NetFlow collect this data for review. On occasion, NetFlow information is necessary to identify where traffic is originating from in the network, to detect anomalous behavior. If DNS server activity spikes, NetFlow information captured coming into the DNS server informs analysts where traffic originated from inside the entity, so that the end point can be investigated further.

Capturing full packets is the ideal method to analyze traffic but is very costly. The volume of data storage is cost-prohibitive in both storage and maintenance. Full-packet capture requires strategic deployment and specific use cases. Therefore, NetFlow is an effective alternative during traffic analysis. This data can be used, not only for incident response, but infrastructure teams use it to troubleshoot performance issues. Most firewalls and routers on the market are capable of capturing NetFlow traffic.

There are many solutions to choose from when implementing this capability. The major network hardware manufacturers offer this capability, and niche players exist, adding threat intelligence on top of packet and NetFlow traffic analysis.

Security Incident and Event Management

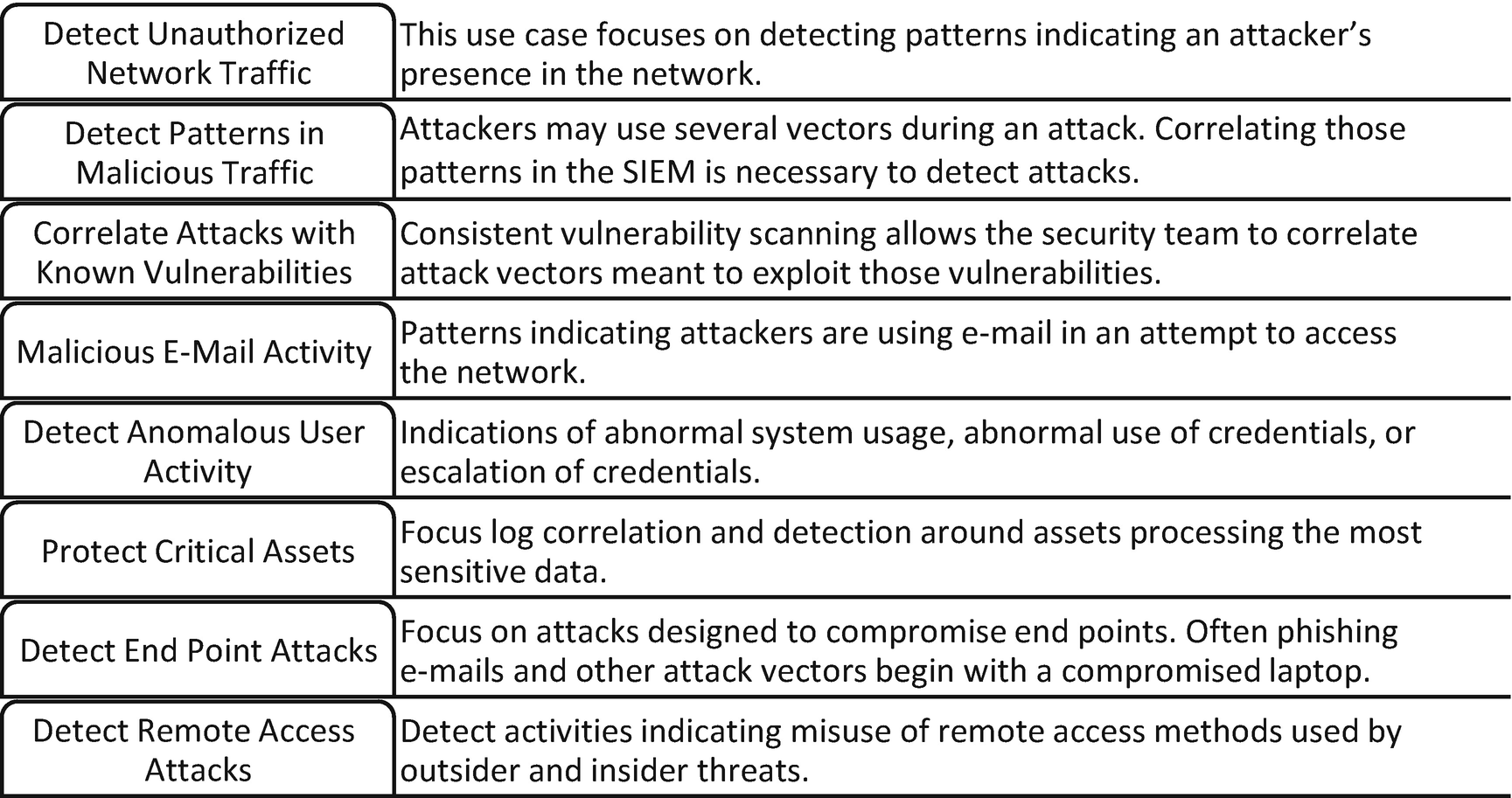

Examples of use cases that entities can use to correlate potential malicious activities

Once use cases are identified, determining what logs and information must be ingested into the SIEM is next. Over time, new use cases are identified, and feeding of the SIEM is updated. This is how the capability is matured.

Note

These are by no means the only use cases for using a SIEM solution. The examples shown are meant to teach readers how to build a SIEM process and fit it into the incident response process.

Entities can create use cases around detecting attacks against web assets, lateral movement, and other insider threat vectors. Over time, as the threats, vulnerabilities, and risks are better understood within the entity, new use cases are developed and monitored within the security operations center.

Empowering End Users

End users are a detection capability. E-mails directed at them intended to gain a foothold inside the entity land in in-boxes every day. When end users identify malicious e-mails, it prevents successful attacks and allows the security team to see the methods attackers are employing against them. How savvy the end users are determines how effective this group is at detecting attempts to infiltrate the network. One way to increase the effectiveness of the end users is through training with phishing simulations. These simulations must be done with the intent to partner with end users and not as a method to catch them doing something wrong and calling them out on it. The idea is to keep security and a cautiously suspicious eye on e-mail communication from unknown sources. It serves as another capability to detect events early.

Other Ways of Detecting and Identifying Events

Intrusion detection systems (IDS), firewalls, application and infrastructure logs, and database and operating systems are other sources of alerts and evidence used to confirm that an event or incident occurred.

Intrusion detection systems work at the network level, analyzing traffic traversing between hosts and at the host. These solutions are intended to identify unusual behavior and events known to be malicious. These solutions use known indicators and intelligence captured from past events by the solution provider, and advanced implementations use artificial intelligence or machine learning to understand what is normal inside a network and on a host. These devices can be stand-alone or come packaged within other solutions.

Firewalls are primarily a prevention capability, but traffic passing through it can be archived and used to correlate events. Discussed earlier in this chapter were NetFlow capabilities especially. The firewalls collect data related to all traffic coming into and out of the network and traffic blocked. This is important evidence when investigating all types of events.

Depending on the application, auditing features capture successful and unsuccessful login attempts, user activity and configuration changes. Not all applications offer this extensive logging, but if available, entities should utilize it.

Domain Name System (DNS) logs and cache capture connections to known malicious IP addresses and domains.

Routers and switches contain log data from traffic passing through used during investigations.

Events Captured by Windows Event Logs

Windows Event Category | Description |

|---|---|

Account Logon | Credential Validation |

Account Management | Changes to computer, user and group accounts |

Detailed Tracking | Encryption events, process creation, process termination and RPC events |

DS Access | Active Directory access |

Logon/Logoff | Successful logons and logoffs by user |

Object Access | Access to files, folders, applications, and registry |

Policy Change | Changes to audit policies |

Privilege Use | Audit the use of privileges |

System | Changes to security subsystem |

Some of these categories can add further granularity to the event logging. System administrators can evaluate these options and discuss with the team. If possible, forwarding these event logs to a SIEM solution facilitates efficient and reliable event correlation. Attacks often cause seemingly unrelated events to occur in multiple devices.

Events Captured by Linux Logging Capabilities

Linux Event Logged |

|---|

Successful User Login |

Failed User Login |

User Logoff |

Changes to User Accounts or Deleted Accounts |

Sudo Actions |

Service Failures |

Just as in the Windows scenario, the best use of the logs collected are via analysis by a SIEM or another log-correlation tool.

Depending on the database in use, some variance of events captured exists across the different types. Many database events of interest are like those mentioned in the Windows and Linux event logging: successful and failed login attempts, changes to accounts, changes to data structures and schemas and privileged actions. These events deliver clues to suspicious activity in these environments.

Identification of Security Events

Security events and incidents are detected in many ways. End users contact the help desk or security desk, if one exists. Detective capabilities trigger alerts, and outside entities, such as law enforcement, contact entities when evidence of a potential event are uncovered.

The early stages of an event are critical. When the moment comes, kicking off an investigation fluidly and without confusion is a must. Ignoring an alert or not following the correct process causes ineffectiveness. This is where the playbooks come in. No matter what type of event is taking place, a documented process should exist to conduct initial triage and any necessary escalation.

Triaging the event is important. Initial investigations focus on understanding if events are benign or part of a malicious campaign. Events of low consequence should be closed by the help or security desk without fanfare. Significant events are escalated for further analysis. Successful completion of these steps leads to successful responses.

Sensitivity of the assets involved in the event

Impact to business operations and ability to recover

Financial impact not related to downtime and lost productivity

The impact level derives from the considerations discussed here. If operations are impacted, the time required to restore them influences impact. Sensitive assets, such as customer data, intellectual property or confidential data, can be high-impact targets. Events causing legal fees and reputational damage are also examples of high-impact issues.

Data Impacts in This Example Are Measured on Three Levels

Rating | Definition |

|---|---|

High | Sensitive production data is impacted. |

Medium | Production data in less sensitive environments is impacted. |

Low | Data impacted is not sensitive. |

Ransomware outbreaks are trouble when production environments are involved. Some data instances have longer restoration times and do not cause issues when data is inaccessible. Impact to sensitive data environments creates negative consequences almost immediately. Nonsensitive data locations are of lower impact to the entity, mainly owing to the time it takes the IT team to restore this data. The definitions are based on the risk tolerance of an organization. Entities with less risk tolerance might consider the unsuccessful targeting of end users with ransomware a low-impact event. Others barely consider it an event.

Once the impact is known, follow-up actions are required. The incident response plan dictates what to do next. High- and medium-impact events should be escalated to the incident response leader. The correct playbook should be utilized and the incident response plan followed. Low-impact incidents might be handled at the security or help desk level, with communication to the incident response leader required.

Summary

Applications and application servers

Firewalls

Intrusion detection and prevention systems

Packet captures

End point detection and response

DNS security monitoring

DLP solutions

EDR solutions

Infrastructure (operating systems and databases)

Data needs a place to go and a way for analysis to occur. Security events spawn many indicators. These indicators may make it obvious that an attack is occurring, and others may be only hinting at this. The increased use of SIEM solutions was designed to identify all the subtle hints of an attack and alert cybersecurity teams. These represent just some of the capabilities organizations need to implement to identify potential security incidents.