Chapter 10. Mobile: It’s not just a city in Alabama anymore

WELCOME TO THE 21ST CENTURY — YOU MAY EXPERIENCE A SLIGHT SENSE OF VERTIGO

[shouting] PHENOMENAL COSMIC POWERS! [softly] Itty-bitty living space!

—ROBIN WILLIAMS AS THE GENIE IN ALADDIN, COMMENTING ON THE UPSIDE AND DOWNSIDE OF THE GENIE LIFESTYLE

Ahh, the smartphone.

Phones had been getting gradually smarter for years, gathering in desk drawers and plotting amongst themselves. But it wasn’t until the Great Leap Forward¹ that they finally achieved consciousness.

¹ Introduction of the iPhone, June 2007.

I, for one, was glad to welcome our tiny, time-wasting overlords. I know there was a time when I didn’t have a powerful touch screen computer with Internet access in my pocket, but it’s getting harder and harder to remember what life was like then.

And of course it was about this same time that the Mobile Web finally came into its own. There had been Web browsers on phones before, but they—to use the technical term—sucked.

The problem had always been—as the Genie aptly put it—the itty-bitty living space. Mobile devices meant cramped devices, squeezing Web pages the size of a sheet of paper into a screen the size of a postage stamp. There were various attempts at solutions, even some profoundly debased “mobile” versions of sites (remember pressing numbers to select numbered menu items?) and, as usual, the early adopters and the people who really needed the data muddled through.

But Apple married more computer horsepower (in an emotionally pleasing, thin, aesthetic package—why are thin watches so desirable?) with a carefully wrought browser interface. One of Apple’s great inventions was the ability to scroll (swiping up and down) and zoom in and out (pinching and...unpinching) very quickly. (It was the very quickly part—the responsiveness of the hardware—that finally made it useful.)

For the first time, the Web was fun to use on a device that you could carry with you at all times. With a battery that lasted all day. You could look up anything anywhere anytime.

It’s hard to overestimate what a sea change this was.

Of course, it wasn’t only about the Web. Just consider how many things the smartphone allowed you to carry in your pocket or purse at all times: a camera (still and video, and, for many people, the best one they’d ever owned), a GPS with maps of the whole world, a watch, an alarm clock, all of your photos and music, etc., etc.

It’s true: The best camera really is the one you have with you.

And think about the fact that for most people in emerging countries, in the same way they bypassed landlines and went straight to cellphones, the smartphone is their first—and only—computer.

There’s not much denying that mobile devices are the wave of the future, except for things where you need enormous horsepower (professional video editing, for example, at least for now) or a big playing surface (Photoshop or CAD).

What’s the difference?

So, what’s different about usability when you’re designing for use on a mobile device?

In one sense, the answer is: Not much. The basic principles are still the same. If anything, people are moving faster and reading even less on small screens.

But there are some significant differences about mobile that make for challenging new usability problems.

As I write this, Web and app design for mobile devices is still in its formative “Wild West” days in many ways. It’s going to take another few years for things to shake out, probably just in time for innovations that will force the whole cycle to start over again.

I’m not going to talk very much about specific best practices because many of the bright interface design ideas that will eventually become the prevailing conventions probably haven’t emerged yet. And of course the technology is going to keep changing under our feet faster than we can run.

“App” | xkcd.com

What I will do is tell you a few things that I’m sure will continue to be true. And the first one is...

It’s all about tradeoffs

One way to look at design—any kind of design—is that it’s essentially about constraints (things you have to do and things you can’t do) and tradeoffs (the less-than-ideal choices you make to live within the constraints).

To paraphrase Lincoln, the best you can do is please some of the people some of the time.²

² ...if, in fact, he ever actually said “You can fool some of the people all of the time, and all of the people some of the time, but you cannot fool all of the people all of the time.” One of the things I’ve learned from the Internet is that when it comes to memorable sayings attributed to famous people, 92% of the time they never said them. See en.wikiquote.org/wiki/Abraham_Lincoln.

There’s a well-established meme that suggests that rather than being the negative force that they often feel like, constraints actually make design easier and foster innovation.

And it’s true that constraints are often helpful. If a sofa has to fit in this space and match this color scheme, it’s sometimes easier to find one than if you just go shopping for any sofa. Having something pinned down can have a focusing effect, where a blank canvas with its unlimited options—while it sounds liberating—can have a paralyzing effect.

You may not buy the idea that constraints are a positive influence, but it really doesn’t matter: Whenever you’re designing, you’re dealing with constraints. And where there are constraints, there are tradeoffs to be made.

In my experience, many—if not most—serious usability problems are the result of a poor decision about a tradeoff.

For example, I don’t use CBS News on my iPhone.

I’ve learned over time that their stories are broken up into too-small (for me) chunks, and each one takes a long time to load. (If the pages loaded faster, I might not mind.) And to add insult to injury, on each new page you have to scroll down past the same photo to get to the next tiny morsel of text.

Here’s what the experience looks like:

Tap to open the story, then wait. And wait. And wait.

When the page finally loads, swipe to scroll down past the photo.

Read the two paragraphs of text, then tap Next and wait. And wait.

Repeat 8 times to read the whole story.

It’s so annoying that when I’m scanning Google News (which I do several times a day) and notice that the story I’m about to tap is linked to CBS News, I always click on Google’s “More stories” link to choose another source.

When I run into a problem like this, I know that it’s not there because the people who designed it didn’t think about it. In fact, I’m sure it was the subject of some intense debate that resulted in a compromise.

I don’t know what constraints were at work in this particular tradeoff. Since there are ads on the pages, it may have been a need to generate more page views. Or it could have something to do with the way the content is segmented for other purposes in their content management system. I have no idea. All I do know is that the choice they made didn’t place enough weight on creating a good experience for the user.

Most of the challenges in creating good mobile usability boil down to making good tradeoffs.

The tyranny of the itty-bitty living space

The most obvious thing about mobile screens is that they’re small. For decades, we’ve been designing for screens which, while they may have felt small to Web designers at the time, were luxurious by today’s standards. And even then, designers were working overtime trying to squeeze everything into view.

But if you thought Home page real estate was precious before, try accomplishing the same things on a mobile site. So there are definitely many new tradeoffs to be made.

One way to deal with a smaller living space is to leave things out: Create a mobile site that is a subset of the full site. Which, of course, raises a tricky question: Which parts do you leave out?

One approach was Mobile First. Instead of designing a full-featured (and perhaps bloated) version of your Web site first and then paring it down to create the mobile version, you design the mobile version first based on the features and content that are most important to your users. Then you add on more features and content to create the desktop/full version.

It was a great idea. For one thing, Mobile First meant that you would work hard to determine what was really essential, what people needed most. Always a good thing to do.

But some people interpreted it to mean that you should choose what to include based on what people want to do when they’re mobile. This assumed that when people accessed the mobile version they were “on the move,” not sitting at their desk, so they’d only need the kinds of features you’d use on the move. For example, you might want to check your bank balances while out shopping, but you wouldn’t be likely to reconcile your checkbook or set up a new account.

Of course, it turned out this was wrong. People are just as likely to be using their mobile devices while sitting on the couch at home, and they want (and expect) to be able to do everything. Or at least, everybody wants to do some things, and if you add them all up it amounts to everything.

If you’re going to include everything, you have to pay even more attention to prioritizing.

Things I want to use in a hurry or frequently should be close at hand. Everything else can be a few taps away, but there should be an obvious path to get to them.

In some cases, the lack of space on each screen means that mobile sites become much deeper than their full-size cousins, so you might have to tap down three, four, or five “levels” to get to some features or content.

This means that people will be tapping more, but that’s OK. With small screens it’s inevitable: To see the same amount of information, you’re going to be either tapping or scrolling a lot more. As long as the user continues to feel confident that what they want is further down the screen or behind that link or button, they’ll keep going.

Here’s the main thing to remember, though:

MANAGING REAL ESTATE CHALLENGES SHOULDN’T BE DONE AT THE COST OF USABILITY³

³ Thanks to Manikandan Baluchamy for this maxim.

Breeding chameleons

The siren song of one-design-fits-all-screen-sizes has a long history of bright hopes, broken promises, and weary designers and developers.

If there are two things I can tell you about scalable design (a/k/a dynamic layout, fluid design, adaptive design, and responsive design), they’re these:

It tends to be a lot of work.

It’s very hard to do it well.

In the past, scalable design—creating one version of a site that would look good on many different size screens—was optional. It seemed like a good idea, but very few people actually cared about it. Now that small screens are taking over, everybody cares: If you have a Web site, you have to make it usable on any size screen.

Developers learned long ago that trying to create separate versions of anything—keeping two sets of books, so to speak—is a surefire path to madness. It doubles the effort (at least) and guarantees that either things won’t be updated as frequently or the versions will be out of sync.

It’s still getting sorted out. This time, the problem has real revenue implications, so there will be technical solutions, but it will take time.

In the meantime, here are three suggestions:

Allow zooming. If you don’t have the resources to “mobilize” your site at all and you’re not using responsive design, you should at least make sure that your site doesn’t resist efforts to view it on a mobile device. There are few things more annoying than opening up a site on your phone and discovering that you can’t zoom in on the tiny text at all. (Well, all right. Actually there are a lot of things more annoying. But it’s pretty annoying.)

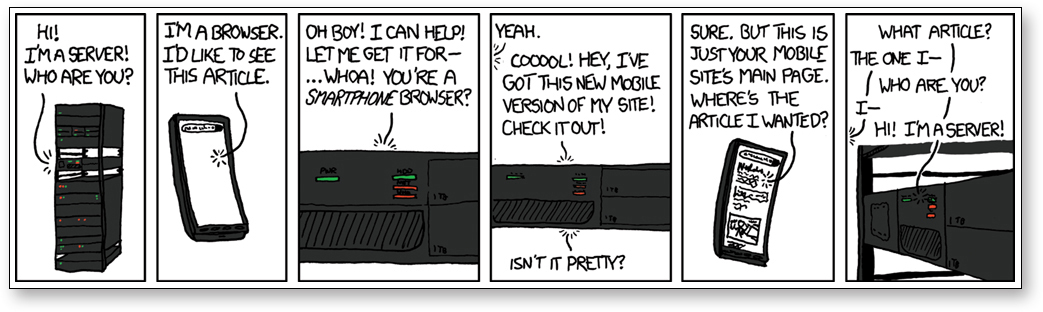

Don’t leave me standing at the front door. Another real nuisance: You tap on a link in an email or a social media site and instead of taking you to the article in question it takes you to the mobile Home page, leaving you to hunt for the thing yourself.

Always provide a link to the “full” Web site. No matter how fabulous and complete your mobile site is, you do need to give users the option of viewing the non-mobile version, especially if it has features and information that aren’t available in your mobile version. (The current convention is to put a Mobile Site/Full Site toggle at the bottom of every page.)

There are many situations where people will be willing to zoom in and out through the small viewport of a mobile device in return for access on the go to features they’ve become accustomed to using or need at that moment. Also, some people will prefer to see the desktop pages when using 7″ tablets with high-resolution screens in landscape mode.
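The first two suggestions lend themselves to a quick automated check. What follows is only a rough sketch in Python, not a recommended implementation. The function names `blocks_zoom` and `mobile_url_for` and the `m.example.com` host are made up for illustration, and the second function assumes your mobile site uses the same paths as your full site, which may not be true of yours. The first function looks at the `content` value of a page’s viewport meta tag and reports whether it switches off pinch-zoom (through `user-scalable=no` or a `maximum-scale` of 1 or less). The second rewrites a full-site link so it points at the same article on the mobile site instead of the mobile Home page.

```python
from urllib.parse import urlsplit, urlunsplit


def blocks_zoom(viewport_content: str) -> bool:
    """Return True if a viewport meta 'content' string prevents pinch-zoom."""
    settings = {}
    # Settings are separated by commas (some older pages use semicolons).
    for part in viewport_content.replace(";", ",").split(","):
        if "=" in part:
            key, value = part.split("=", 1)
            settings[key.strip().lower()] = value.strip().lower()

    # user-scalable=no (or 0) asks the browser to turn zooming off outright.
    if settings.get("user-scalable") in ("no", "0"):
        return True

    # A maximum-scale of 1 or less leaves no room to zoom in.
    try:
        return float(settings["maximum-scale"]) <= 1.0
    except (KeyError, ValueError):
        return False


def mobile_url_for(desktop_url: str, mobile_host: str) -> str:
    """Swap only the host, so a deep link still lands on the same article.

    Assumes (hypothetically) that both sites share the same path structure.
    """
    parts = urlsplit(desktop_url)
    return urlunsplit(
        (parts.scheme, mobile_host, parts.path, parts.query, parts.fragment)
    )


if __name__ == "__main__":
    print(blocks_zoom("width=device-width, initial-scale=1, user-scalable=no"))
    print(mobile_url_for("https://www.example.com/news/story-42", "m.example.com"))
```

You could run something like `blocks_zoom` over your page templates as part of a build step, and use something like `mobile_url_for` wherever your server decides to redirect phone users. If your mobile and full sites don’t share paths, you’d need a lookup table instead of a simple host swap.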

Don’t hide your affordances under a bushel

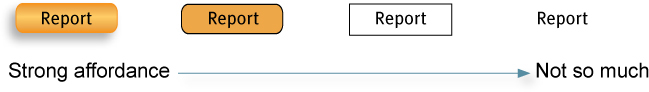

Affordances are visual clues in an object’s design that suggest how we can use it. (I mentioned them back in Chapter 3. Remember the doorknobs and the book by Don Norman? He popularized the term in the first edition of The Design of Everyday Things in 1988 and the design world quickly adopted it.⁴)

⁴ Unfortunately, the way they used it wasn’t exactly what he intended. He’s clarified it in the new edition of Everyday Things by proposing to call the clues “signifiers” instead, but it may be too late to put that genie back in the bottle. With apologies to Don, I’m going to keep calling them affordances here because (a) it’s still the prevailing usage, and (b) it makes my head hurt too much otherwise.

Affordances are the meat and potatoes of a visual user interface. For instance, the three-dimensional style of some buttons makes it clear they’re meant to be clicked. As with the scent of information for links, the clearer the visual cues, the less ambiguous the signal.

In the same way, a rectangular box with a border around it suggests that you can click in it and type something. If you had an editable text box without a border, the user could still click on it and type in it if he knew it was there. But it’s the affordance—the border—that makes its function clear.

For affordances to work, they need to be noticeable, and some characteristics of mobile devices have made them less noticeable or, worse, invisible. And by definition, affordances are the last thing you should hide.

This is not to say that all affordances need to hit you in the face. They just have to be visible enough that people can notice the ones they need to get their tasks done.

No cursor = no hover = no clue

Before touch screens arrived, Web design had come to rely heavily on a feature called hover—the ability of screen elements to change in some way when the user points the cursor at them without clicking.

But a capacitive touch screen (used on almost all mobile devices) can’t accurately sense that a finger is hovering above the glass, only when the finger has touched it. This is why they don’t have a cursor.⁵

⁵ Did you ever notice that the cursor was missing? I have to admit that I used my first iPhone for several months before it dawned on me that there was no cursor.

As a result, many useful interface features that depended on hover are no longer available, like tool tips, buttons that change shape or color to indicate that they’re clickable, and menus that drop down to reveal their contents without forcing you to make a choice.

As a designer, you need to be aware that these elements don’t exist for mobile users and try to find ways to replace them.

Flat design: Friend or foe?

Affordances require visual distinctions. But the recent trend in interface design (which may have waned by the time you read this) has moved in exactly the opposite direction: removing visual distinctions and “flattening” the appearance of interface elements.

It looks darned good (to some people, anyway), and it can make screens less cluttered-looking. But at what price?

In this case the tradeoff is between a clean, uncluttered look on one hand and providing sufficient visual information so people can perceive affordances on the other.

Unfortunately, Flat design has a tendency to take along with it not just the potentially distracting decoration but also the useful information that the more textured elements were conveying.

The distinctions required to draw attention to an affordance often need to be multi-dimensional: It’s the position of something (e.g., in the navigation bar) and its formatting (e.g., reversed type, all caps) that tell you it’s a menu item.

By removing a number of these distinctions from the design palette, Flat design makes it harder to differentiate things.

Flat design has sucked the air out of the room. It reminds me of the pre-color world in my favorite Calvin and Hobbes cartoon/comic/comic strip. (The rest of the cartoon is at the end of Chapter 13.)

CALVIN AND HOBBES © 1989 Watterson. Reprinted with permission of UNIVERSAL UCLICK. All rights reserved.

You can do all the Flat design you want (you may have to, it may be forced on you), but make sure you’re using all of the remaining dimensions to compensate for what you lose.

You actually can be too rich or too thin

...but computers can never be too fast. Particularly on mobile devices, speed just makes everything feel better. Slow performance equals frustration for users and loss of goodwill for publishers.

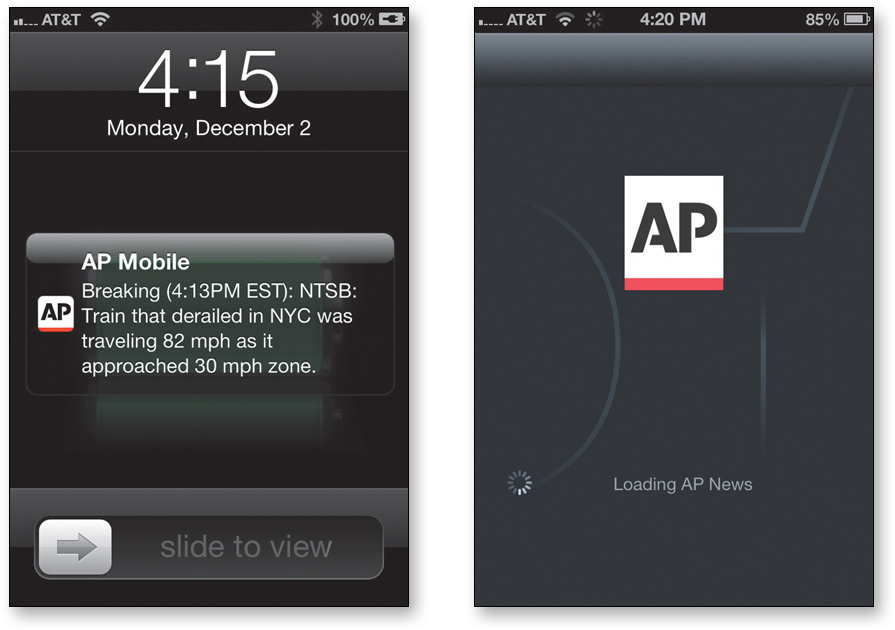

For instance, I prize the breaking news alerts from the AP (Associated Press) mobile app. They’re always the first hint I get of major news stories.

Unfortunately for AP, though, whenever I tap on one of their alerts, the app insists on loading a huge chunk of photos for all the other current top stories before showing me any details about the alert.

As a result, I’ve formed a new habit: When an AP alert arrives, I immediately open the New York Times site or Google News to see if they’ve picked up the story yet.

We’re all used to very fast connections nowadays, but we have to remember that mobile download speeds are unreliable. If people are at home or sitting at Starbucks, download speeds are probably good, but once they leave the comfort of Wi-Fi and revert to 4G or 3G or worse, performance can vary widely.

Be careful that your responsive design solutions aren’t loading up pages with huge amounts of code and images that are larger than necessary for the user’s screen.
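One way to keep images from being larger than necessary is to prepare each one in a few widths and serve the smallest that still fills the user’s screen. Here’s a minimal sketch of that choice in Python. The function name `pick_image_width` and the list of available widths are hypothetical, and it assumes you already know the screen width and pixel density of the device (which in practice the browser may work out for you).

```python
def pick_image_width(available_widths, screen_width_px, pixel_ratio=1.0):
    """Return the smallest available image width that still fills the screen.

    available_widths: widths (in pixels) of the versions you have prepared.
    screen_width_px:  width of the screen in CSS pixels.
    pixel_ratio:      device pixels per CSS pixel (2.0 on many phones).
    """
    needed = screen_width_px * pixel_ratio
    big_enough = [w for w in sorted(available_widths) if w >= needed]
    # If nothing is big enough, fall back to the largest version on hand.
    return big_enough[0] if big_enough else max(available_widths)


if __name__ == "__main__":
    print(pick_image_width([320, 640, 1280, 2560], 375, 2.0))
```

With versions at 320, 640, 1280, and 2560 pixels, a phone with a 375-pixel-wide screen at double density needs 750 pixels, so it gets the 1280 version and never downloads the 2560 one.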

Mobile apps, usability attributes of

You may remember that way back on page 9 I mentioned that I’d talk later about attributes that some people include in their definitions of usability: useful, learnable, memorable, effective, efficient, desirable, and delightful. Well, that time has arrived.

Personally, my focus has always been on the three that are central to my definition of usability:

A person of average (or even below average) ability and experience can figure out how to use the thing [i.e., it’s learnable] to accomplish something [effective] without it being more trouble than it’s worth [efficient].

I don’t spend much time thinking about whether things are useful because it strikes me as more of a marketing question, something that should be established before any project starts, using methods like interviews, focus groups, and surveys. Whether something is desirable seems like a marketing question too, and I’ll have more to say about that in the final chapter.

For now let’s talk about delight, learnability, and memorability and how they apply to mobile apps.

Delightful is the new black

What is this “delight” stuff, anyway?

Delight is a bit hard to pin down; it’s more one of those “I’ll know it when I feel it” kind of things. Rather than a definition, it’s probably easier to identify some of the words people use when describing delightful products: fun, surprising, impressive, captivating, clever, and even magical.⁶

⁶ My personal standard for a delightful app tends to be “does something you would have been burned at the stake for a few hundred years ago.”

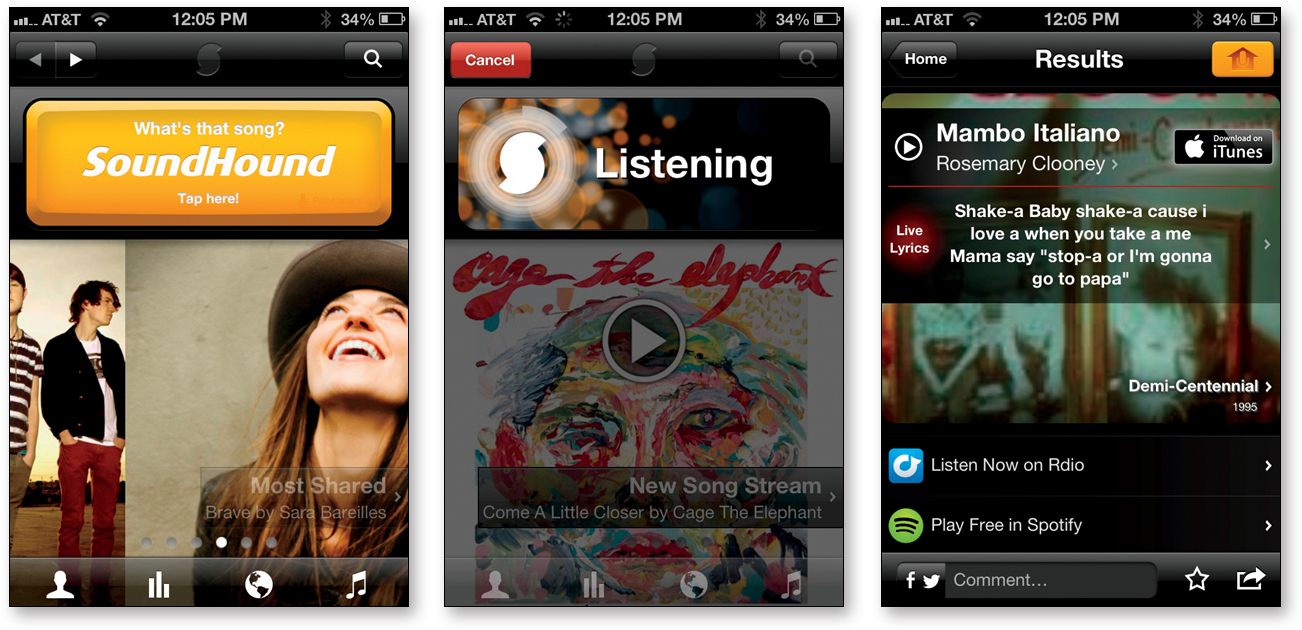

Delightful apps usually come from marrying an idea about something people would really enjoy being able to do, but don’t imagine is possible, with a bright idea about how to use some new technology to accomplish it.

SoundHound is a perfect example.

Not only can it identify that song that you hear playing wherever you happen to be, but it can display the lyrics and scroll them in sync with the song.

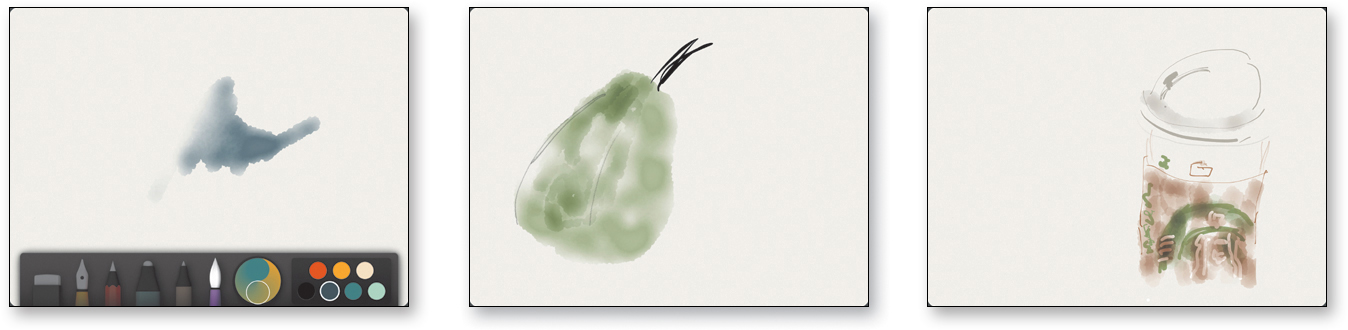

And Paper is not your average drawing app. Instead of dozens of tools with thousands of options, you get five tools with no options. And each one is optimized to create things that look good.

Building delight into mobile apps has become increasingly important because the app market is so competitive. Just doing something well isn’t good enough to create a hit; you have to do something incredibly well. Delight is sort of like the extra credit assignment of user experience design.

Making your app delightful is a fine objective. Just don’t focus so much attention on it that you forget to make it usable, too.

Apps need to be learnable

One of the biggest problems with apps is that if they have more than a few features they may not be very easy to learn.

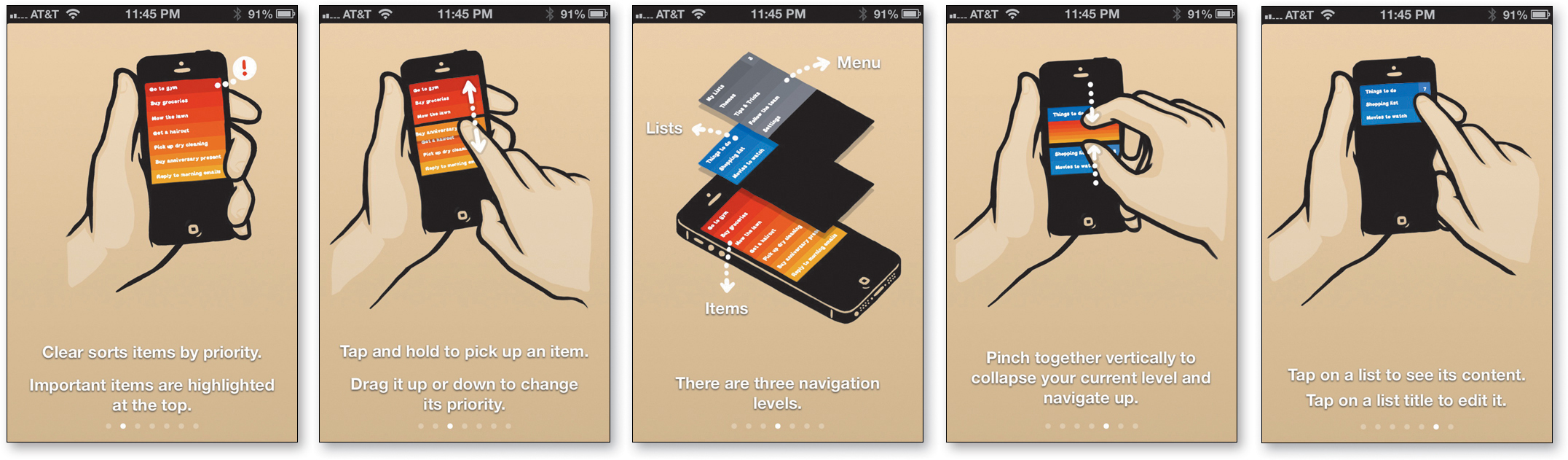

Take Clear, for example. It’s an app for making lists, like to-do lists. It’s brilliant, innovative, beautiful, useful, and fun to use, with a clean minimalist interface. All of the interactions are elegantly animated, with sophisticated sound effects. One reviewer said, “It’s almost like I’m playing a pinball machine while I’m staying productive.”

The problem is that one reason it’s so much fun to use is that they’ve come up with innovative interactions, gestures, and navigation, which means there’s a lot to learn.

With most apps, if you get any instructions at all it’s usually one or two screens when you first launch the app that give a few essential hints about how the thing works. But it’s often difficult or impossible to find them again to read later.

And if help exists at all (and you can find it), it’s often one short page of text, a link to the developer’s site with no help to be found, or a customer support page that gives you the email address where you can send your questions.

This can work for apps that are only doing a very few things, but as soon as you try to create something that has a lot of functionality—and particularly any functions that don’t follow familiar conventions or interface guidelines—it’s often not enough.

The people who made Clear have actually done a very good job with training compared to most apps. The first time you use it, you tap your way through a nicely illustrated ten-screen quick tour of the main features.

This is followed by an ingenious tutorial that’s actually just one of their lists.

Each item in the list tells you something to try, and by the time you’re done you’ve practiced using almost all of the features.

But when I’ve used it to do demo usability tests during my presentations, it hasn’t fared so well.

I give the participant/volunteer a chance to learn about the app by reading the description in the app store, viewing the quick tour, and trying the actions in the tutorial. Then I ask them to do the type of primary task the app is designed for: create a new list called “Chicago trip” with three items in it — Book hotel, Rent car, and Choose flight.

So far, no one has succeeded.

Even though it’s shown in the slide show on the way in, people don’t seem to get the concept that there are levels: the level of lists, the level of items in lists, and the level of settings. And even if they remember seeing it, they still can’t figure out how to navigate between levels. And if you can’t figure that out, you can’t get to the Help screens. Catch-22.

That’s not to say that no one in the real world learns how to use it. It gets great reviews and is consistently a best seller. But I have to wonder how many people who bought it have never mastered it, or how many more sales they could make if it were easier to learn.

And this is a company that’s put a lot of effort into training and help. Most don’t.

You need to do better than most, and usability testing will help you figure out how.

Apps need to be memorable, too

There’s one more attribute that’s important: memorability. Once you’ve figured out how to use an app, will you remember how to use it the next time you try or will you have to start over again from scratch?

I don’t usually talk much about memorability because I think the best way to make things easy to relearn is to make them incredibly clear and easy to learn in the first place. If it’s easy to learn the first time, it’s easy to learn the second time.

But it’s certainly a serious problem with some apps.

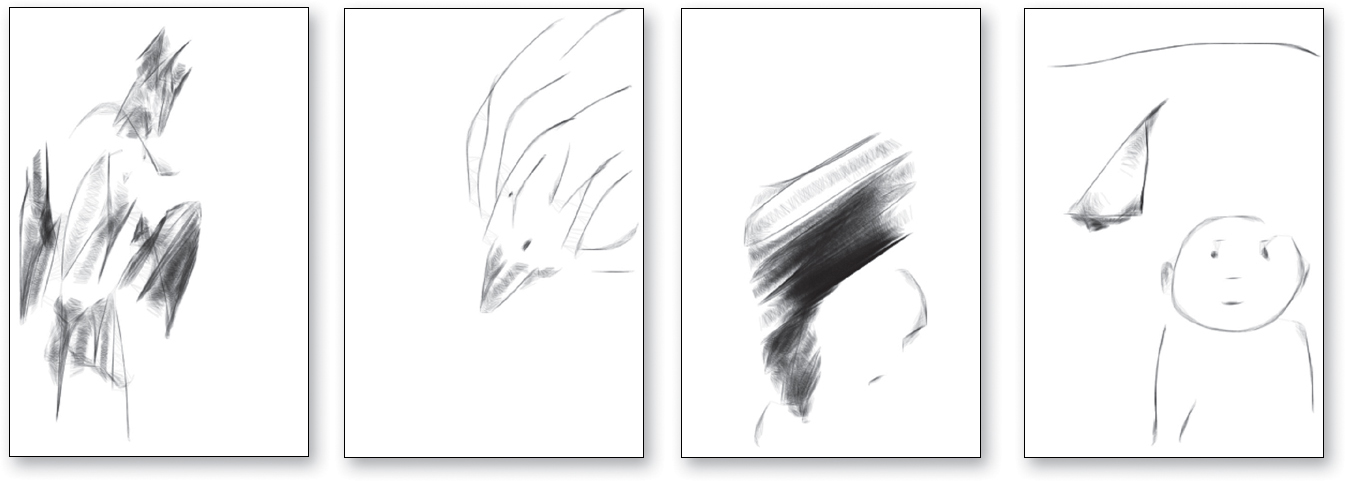

One of my favorite drawing apps is ASketch. I love this app because no matter what you try to draw and how crudely you draw it, it ends up looking interesting.

But for months, each time I opened it I couldn’t remember how to start a new drawing.

In fact, I couldn’t remember how to get to any of the controls. To maximize the drawing space there weren’t any icons on the screen.

I’d try all the usual suspects: double tap, triple tap, tap near the middle at the top or bottom of the screen, various swipes and multi-finger taps, and finally I’d hit on it. But by the next time I went to use it I’d forgotten what the trick was again.

Memorability can be a big factor in whether people adopt an app for regular use. Usually when you purchase one, you’ll be willing to spend some time right away figuring out how to use it. But if you have to invest the same effort the next time, it’s unlikely to feel like a satisfying experience. Unless you’re very impressed by what it does, there’s a good chance you’ll abandon it—which is the fate of most apps.

Life is cheap (99 cents) on mobile devices.

Usability testing on mobile devices

For the most part, doing usability testing on mobile devices is exactly the same as the testing I described in Chapter 9.

You’re still making up tasks for people to do and watching them try to do them. You still prompt them to say what they’re thinking while they work. You still need to keep quiet most of the time and save your probing questions for the end. And you should still try to get as many stakeholders as possible to come and observe the tests in person.

Almost everything that’s different when you’re doing mobile testing isn’t about the process; it’s about logistics.

The logistics of mobile testing

When you’re doing testing on a personal computer, the setup is pretty simple:

The facilitator looks at the same screen as the participant.

Screen sharing software allows the observers to see what’s happening.

Screen recording software creates a video of the session.

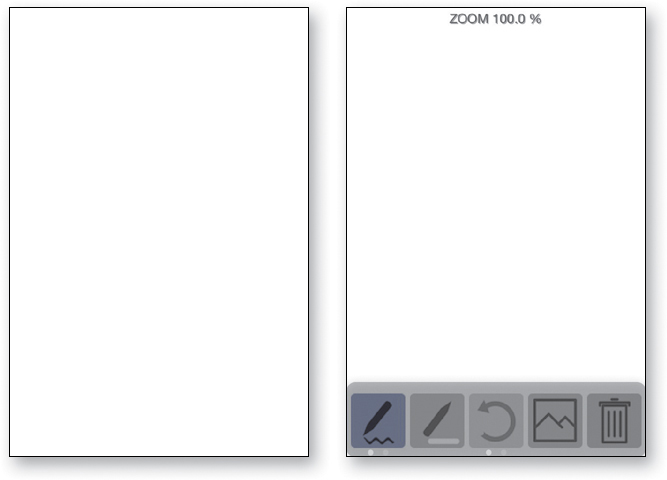

But if you’ve ever tried doing tests on mobile devices, you know that the setup can get very complicated: document cameras, Webcams, hardware signal processors, physical restraints (well, maybe not physical restraints, but “Don’t move the device beyond this point” markers to keep the participant within view of a camera), and even things called sleds and goosenecks.

Here are some of the issues you have to deal with:

Do you need to let the participants use their own devices?

Do they need to hold the device naturally, or can it be sitting on a table or propped up on a stand?

What do the observers need to see (e.g., just the screen, or both the screen and the participant’s fingers so they can see their gestures)? And how do you display it in the observation room?

How do you create a recording?

One of the main reasons why mobile testing is complicated is that some of the tools we rely on for desktop testing don’t exist yet for mobile devices. As of this writing, robust mobile screen recording and screen sharing apps aren’t available, mainly because the mobile operating systems tend to prohibit background processes. And the devices don’t really have quite enough horsepower to run them anyway.

I expect this to change before long. With so many mobile sites and apps to test, there are already a lot of companies trying to come up with solutions.

My recommendations

Until better technology-based solutions come along, here’s what I’d lean toward:

Use a camera pointed at the screen instead of mirroring. Mirroring is the same as screen sharing: It displays what’s on the screen. You can do it with software (like Apple’s AirPlay) or hardware (using the same kind of cable you use to play a video from your phone or tablet on a monitor or TV).

But mirroring isn’t a good way to watch tests done on touch screen devices, because you can’t see the gestures and taps the participant is making. Watching a test without seeing the participant’s fingers is a little like watching a player piano: It moves very fast and can be hard to follow. Seeing the hand and the screen is much more engaging.

If you’re going to capture fingers, there’s going to be a camera involved. (Some mirroring software will show dots and streaks on the screen, but it’s not the same thing.)

Attach the camera to the device so the user can hold it naturally. In some setups, the device sits on a table or desk and can’t be moved. In others, the participant can hold the device, but they’re told to keep it inside an area marked with tape. The only reason for restricting movement of the device is to make it easier to point a camera at it and keep it in view.

If you attach the camera to the device, the participant can move it freely and the screen will stay in view and in focus.

Don’t bother with a camera pointed at the participant. I’m really not a fan of the face camera. Some observers like seeing the participant’s face, but I think it’s actually a distraction. I’d much rather have observers focus on what’s happening on the screen, and they can almost always tell what the user is feeling from their tone of voice anyway.

Adding a second camera inevitably makes the configuration much more complicated, and I don’t think it’s worth the extra complexity. Of course, if your boss insists on seeing faces, show faces.

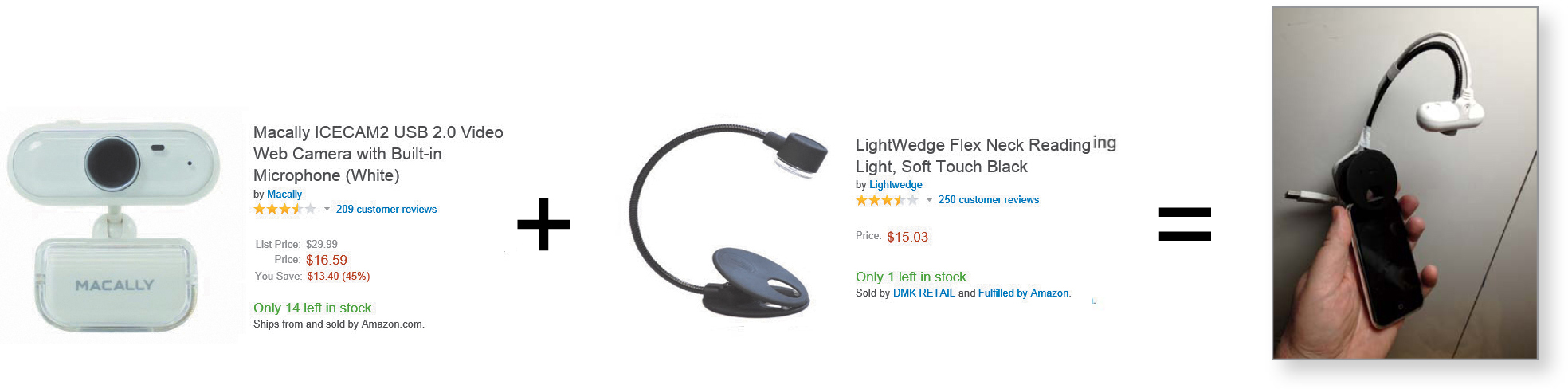

Proof of concept: My Brundlefly7 camera

7 Brundlefly is the word Jeff Goldblum’s character (Seth Brundle) in The Fly uses to describe himself after his experiment with a teleportation device accidentally merges his DNA with that of a fly.

Out of curiosity, I built myself a camera rig by merging a clip from a book light with a Webcam. It weighs almost nothing and captures the audio with its built-in microphone. Mine cost about $30 in parts and took about an hour to make. I’m sure somebody will manufacture something similar—only much better—before long. I’ll put instructions for building one yourself online at rocketsurgerymadeeasy.com.

Lightweight Webcam + lightweight clamp and gooseneck = Brundlefly

Attaching a camera to the device creates a very easy-to-follow view. The observers get a stable view of the screen even if the participant is waving it around.

I think it solves most of the objections to other mounted-camera solutions:

They’re heavy and awkward. It weighs almost nothing and barely changes the way the phone feels in your hand.

They’re distracting. It’s very small (smaller than it looks in the photo) and is positioned out of the participant’s line of sight, which is focused on the phone.

Nobody wants to attach anything to their phone. Sleds are usually attached to phones with Velcro or double-sided tape. This uses a padded clamp that can’t scratch or mar anything but still grips the device firmly.

One limitation of this kind of solution is that it is tethered: It requires a USB extension cable running from the camera to your laptop. But you can buy a long extension inexpensively.

The rest of the setup is very straightforward:

Connect the Brundlefly to the facilitator’s laptop via USB.

Open something like AMCap (on a PC) or QuickTime Player (on a Mac) to display the view from the Brundlefly. The facilitator will watch this view.

Share the laptop screen with the observers using screen sharing (GoToMeeting, WebEx, etc.).

Run a screen recorder (e.g., Camtasia) on the computer in the observation room. This reduces the burden on the facilitator’s laptop.

That’s it.

Finally...

In one form or another, it seems clear that mobile is where we’re going to live in the future, and it provides enormous opportunities to create great user experiences and usable things. New technologies and form factors are going to be introduced all the time, some of them involving dramatically different ways of interacting.8

8 Personally, I think talking to your computer is going to be one of the next big things. Recognition accuracy is already amazing; we just need to find ways for people to talk to their devices without looking, sounding, and feeling foolish. Someone who’s seriously working on the problems should give me a call; I’ve been using speech recognition software for 15 years, and I have a lot of thoughts about why it hasn’t caught on.

Just make sure that usability isn’t being lost in the shuffle. And the best way to do this is by testing.