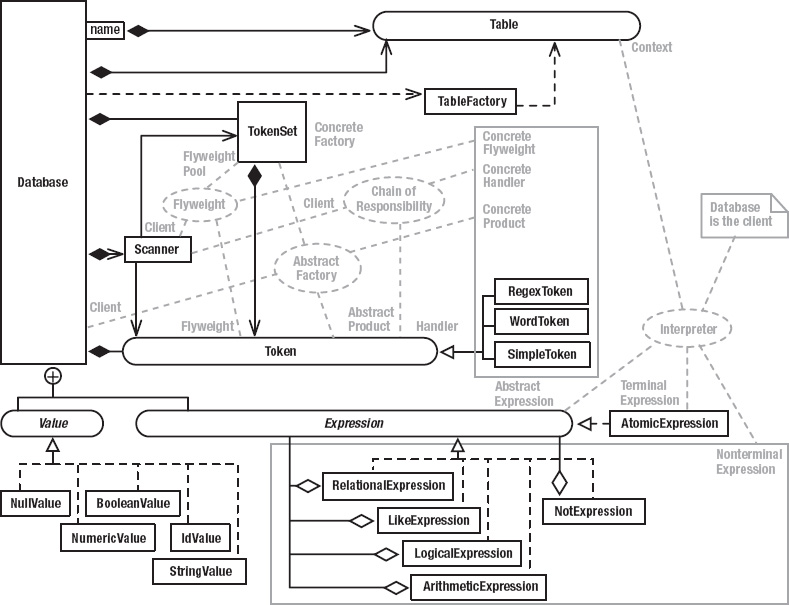

This chapter presents a complete subsystem that demonstrates all the remaining Gang-of-Four design patterns: a miniature SQL interpreter (and JDBC interface) that you can embed in your applications. This package is not a full-blown database but is a small in-memory database suitable for many client-side applications.

As was the case with the Game of Life game discussed in the previous chapter, I’ve set up a web page at http://www.holub.com/software/holubSQL/ that lists various links to SQL resources and provides an applet that lets you play with the interpreter I’m about to discuss. You can find the most recent version of the source code from this chapter on the same web page.

As was also the case with Life, I’ve opted to present a complete subsystem, so this chapter has a lot of code in it. As before, I don’t expect you to read every line—I’ve called out the important stuff in the text.

With the exception of “The Interpreter Pattern” section, you don’t need to know anything special to read this chapter. That section, which covers how the actual SQL interpreter and the parser that builds it works, is a doozy, though. After a lot of thought, I decided not to turn this chapter into a treatise on compiler writing, simply because the subject rarely comes up in normal programming. Moreover, the Interpreter Pattern section introduces only one design pattern, which is used only to build interpreters. If you’re not going to build an interpreter, you can safely skip it (both the pattern and the section). If you’re bold enough to proceed, however, I’m assuming (in that section only) that you know how write simple SQL statements, you know how to read an LL(1) BNF grammar, and you know how recursive-descent parsing works. The web page I just mentioned has links to SQL and JDBC resources if you need to learn that material, and it also links to a long introduction of formal grammars and recursive-descent parsing.

The Requirements

I originally wrote the small database engine in this chapter to handle the persistence layer for a client-side-only “shrink-wrap” application. My program used only a few tables and did only simple joins, and I didn’t want the size, overhead, and maintenance problems of a “real” database. I also didn’t need full-blown SQL—just a reasonable subset that supports table creation, modification, and simple queries (including joins) was sufficient. I didn’t need views, triggers, functions, and the other niceties of a real database. I did, however, need the tables that comprised the database to be stored in some human-readable ASCII format such as comma-separated values (preferred) or XML, and none of the databases that I could find satisfied this last requirement.

I rolled my own database for other reasons as well. The database needed to be “embedded” into the rest of the program rather than being a stand-alone server. I just didn’t want the hassle of installing (and maintaining) a stand-alone third-party database server that was likely to be an order of magnitude larger than the application itself. I wanted a small, lean implementation.

I wanted to talk to the database using JDBC so that I could replace it with something that was more fully featured if necessary. The database engine had to be written entirely in Java so that it was platform independent.

Finally, several times I’ve wanted to store a small amount of data in a database-like way, but without the overhead of an actual database. For example, it’s handy to put configuration options into a database-like data structure so that you can issue queries against the configuration. Using a database to keep track of ten configuration options was just too much overhead, however. I wanted the data structures that underlie the SQL interpreter to be useable in their own right as a sort of “collection,” but without the SQL.

I checked the web to see if there was anything that would do the job, but I couldn’t find anything at the time, so I rolled up my sleeves and wrote my own. (I’ve subsequently discovered a couple small public-domain SQL interpreters, but that’s life—it took less than two weeks to write the SQL engine you’re about to examine, so I didn’t waste any time.)

The Architecture

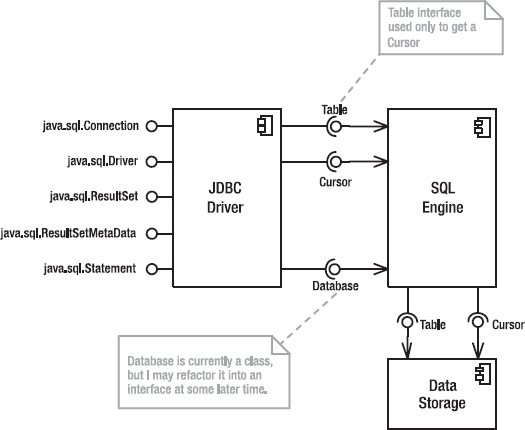

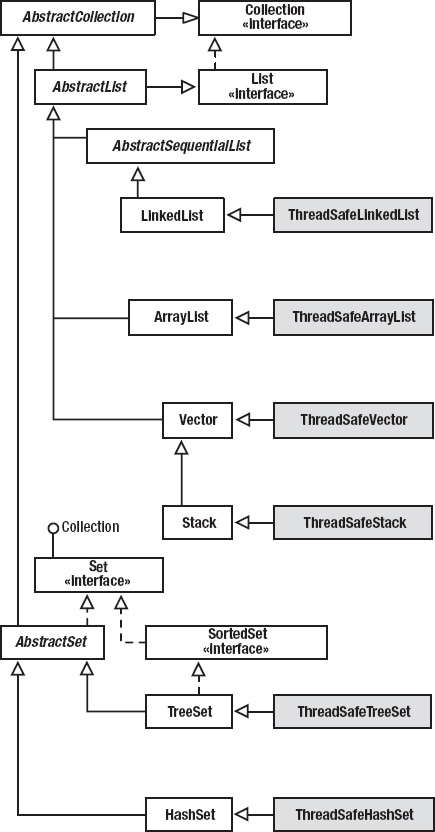

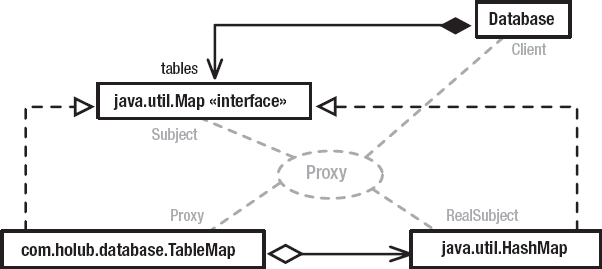

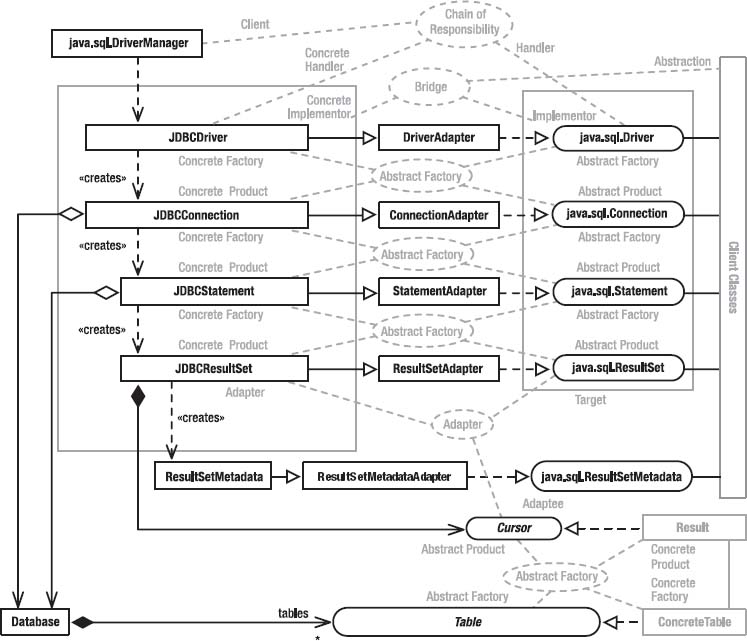

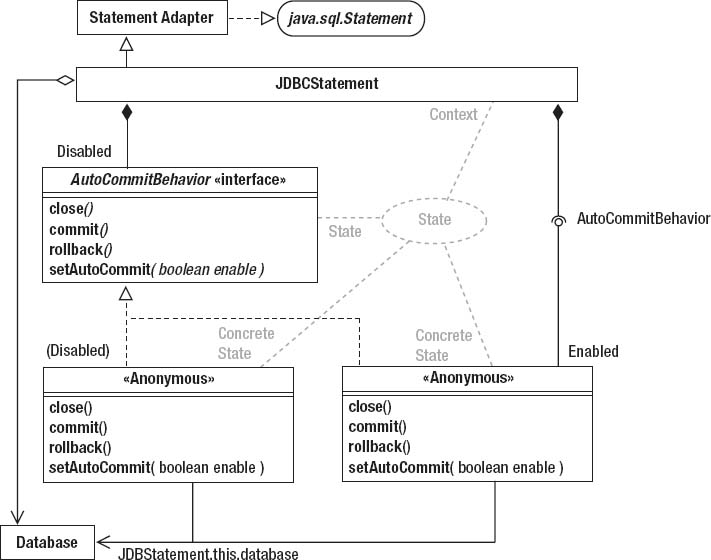

I approached the design of my small-database subsystem by breaking it into three distinct layers, each accessed through well-defined interfaces (see Figure 4-1). This use of interfaces to isolate subsystems from each other is a simple reification of the Bridge pattern, which I’ll discuss in greater depth later in the current chapter. The basic idea of Bridge is to separate subsystems with a set of interfaces so you can modify one subsystem without impacting the other.

The data-storage layer manages the actual data that comprises a table and also handles persistence. This layer exposes two interfaces: Table (which defines access to the table itself) and Cursor, which provides an iterator across rows in the table (an object that lets you visit each row of the table in sequence).

The data-storage classes are wrapped in a SQL-engine layer, which implements the SQL interpreter and uses the underlying data-storage classes to manage the actual data. This layer exposes result sets (the set of rows that result from a SQL select operation) as Table objects, and you can examine the result set with a Cursor, so these two interfaces isolate both “faces” of the subsystem. (Like Janus, one face looks backward at the data-storage layer, and the other face looks forward at the JDBC layer.)

Finally, a JDBC-driver layer wraps the SQL engine with classes that implement the various interfaces required by JDBC, so you can access my simple database just like you would any other database. Using the JDBC Bridge also lets you easily replace my simple database with something that’s more fully featured without having to modify your code. The JDBC layer completely hides the underlying Table and Cursor interfaces. (You won’t have to worry about JDBC-related stuff until you get to “The JDBC Layer” section toward the end of this chapter. Everything I discuss up to that point has nothing to do with JDBC. I’ll explain how JDBC works when you get to that section, and when I do get to it, JDBC classes will be clearly indicated by using their fully qualified class names: java.sql.Xxx. If you don’t see the java.sql, then the class is one of mine.)

The messaging between layers is effectively unidirectional. For example, the SQL engine knows about, and send messages to, the data-storage layer, but the data-storage layer knows nothing about the SQL engine and sends no messages to any of the objects that comprise the SQL engine. This one-way communication vastly simplifies maintenance because you know that the effects of a given change are limited. Since these three layers are completely independent of one another, I can also discuss them independently.

Figure 4-1. Database-classes architecture

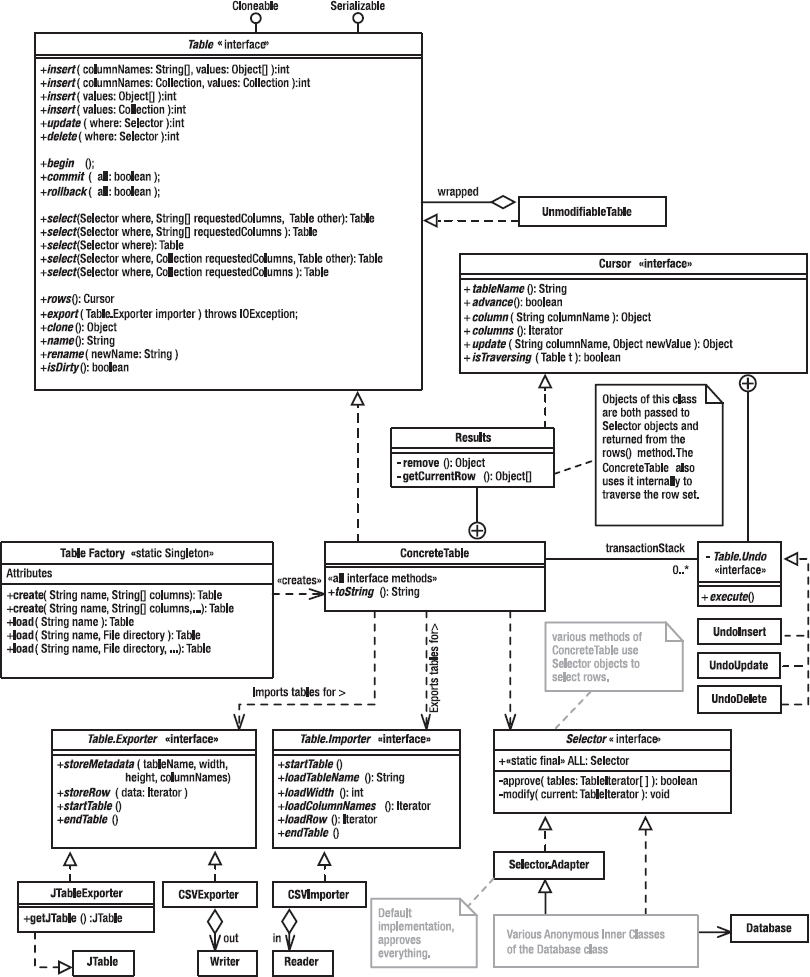

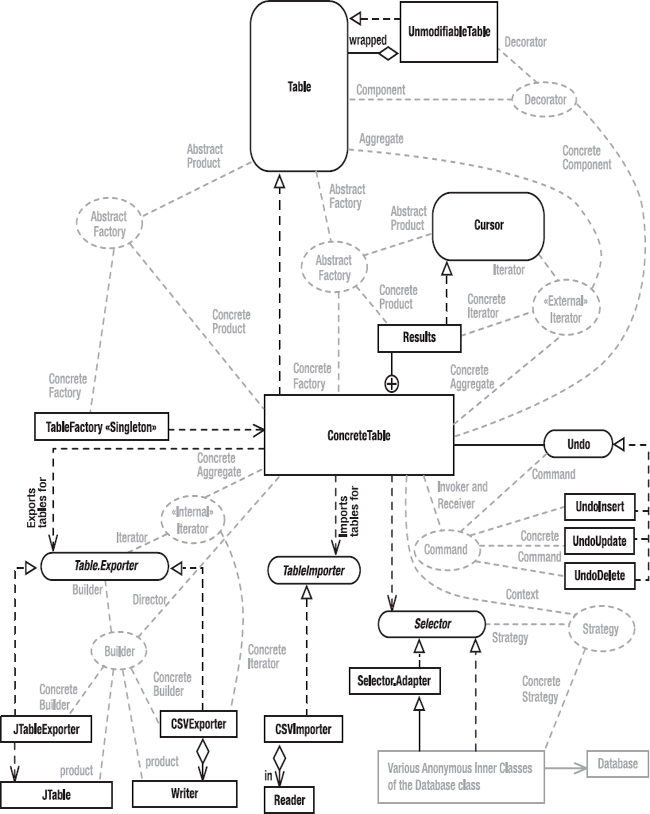

The Data-Storage Layer

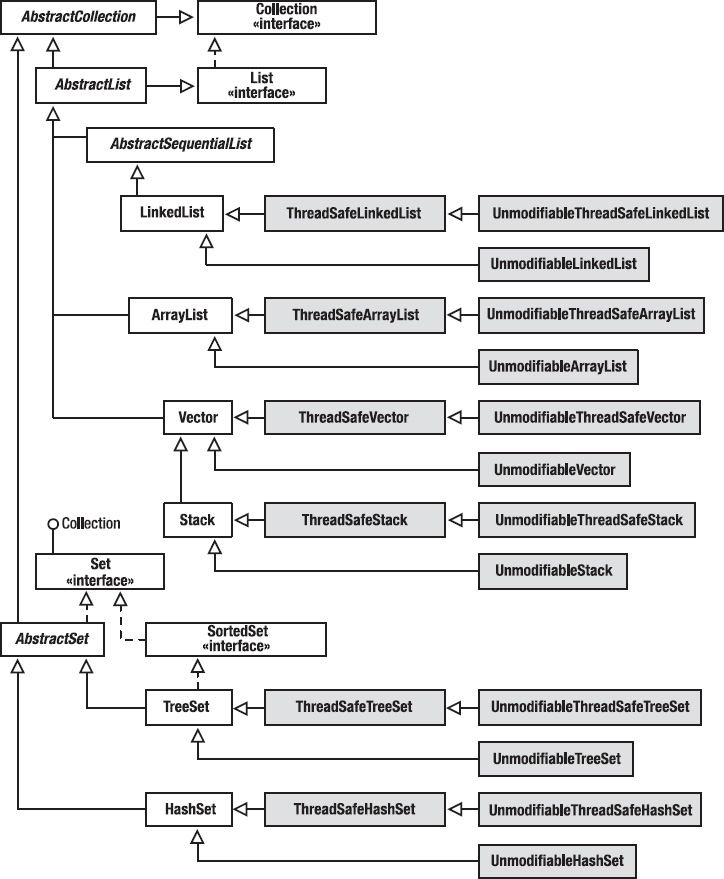

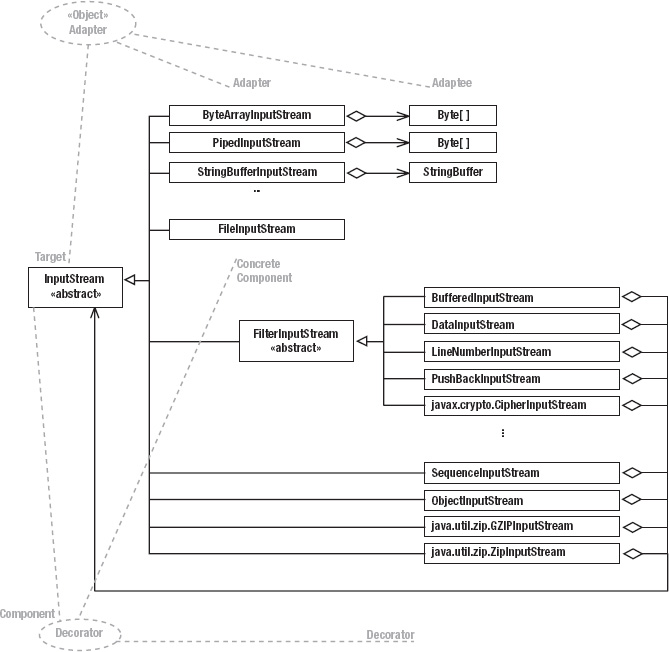

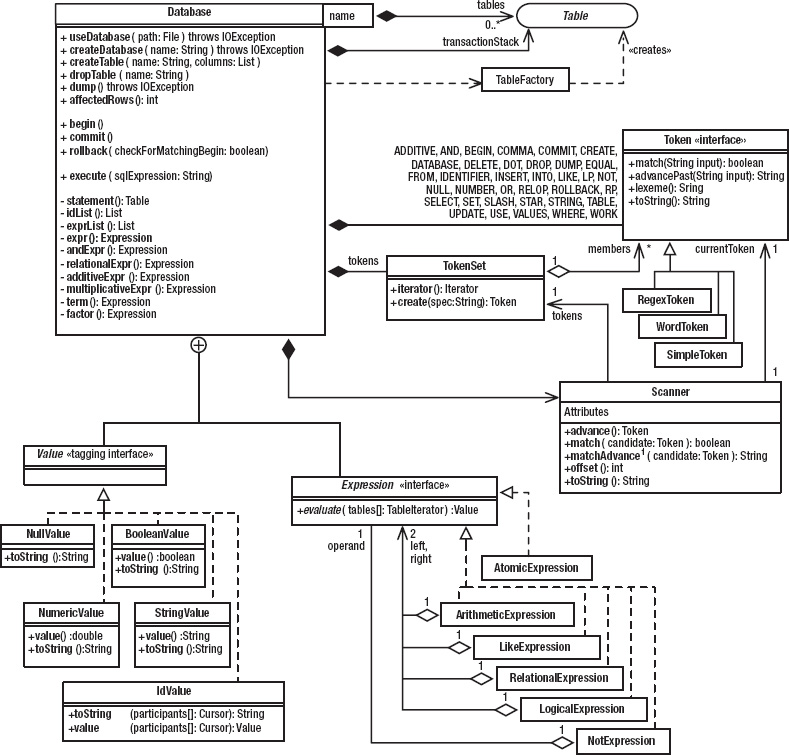

At the core of everything is the Table interface, its implementation (ConcreteTable), and various support classes and interfaces. As I did in the previous chapter, I’ll start with a couple of monstrous figures, which will seem confusing at first but make sense as I discuss the program one bit at a time. Figure 4-2 shows the static structure of the classes that comprise the data-storage layer: the Table interface and all its implementations and supporting classes. Figure 4-3 shows the design patterns. As was the case with Life, there are almost as many patterns as there are classes, which is to say that several classes participate in more than one pattern. You should bookmark these figures so that you can refer to them as I discuss the various patterns.

Figure 4-2. Data-storage layer: static structure

Figure 4-3. Data-storage layer: design patterns

The Table Interface

The Table interface (Listing 4-1) defines the methods you use to talk to a table. I’ve designed the Table so that it (and the underlying implementation) is useful as a data structure in its own right, without needing to wrap it with the SQL-related layers. Sometimes, you just need a searchable table rather than a whole database.

A Table is an in-memory data structure that you can use rather like a Collection. The interface doesn’t extend Collection, however, because a Table is really doing a different thing than a standard Collection—they just both happen to be data structures. There would be no way to implement most of the methods of the Collection interface in the Table. A Table supports the database notion of a column. You can think of a Table as a set of rows, each of which has several columns that are identified by name. It’s like a two-dimensional array, except that it’s searchable and the columns have names, not column indexes. (JDBC lets you specify a column by index, but I don’t do that in my own code, so I haven’t implemented the feature. Index-based access—as compared to named access—has given me grief when I’ve had to add columns to the database and the column indexes have changed as a consequence, so I don’t use them.)

Every cell (the intersection of a column and row) can hold an object. The rows in a Table are not ordered. You find a particular row by searching the table for rows whose columns match certain criteria (which you specify—more in a moment).

Table is an interface, not a class. An interface makes it possible to isolate the parts of the program that use the Table from the parts of the program that implement the Table. I can change everything about the implementation—even the concrete-class name—without any code on the “client” side of the interface changing. The comments in Listing 4-1 describe the various Table operations adequately, and I’ll have more to say about them when I look at the implementations.

Listing 4-1. Table.java

1 package com.holub.database;

2

3 import java.io.*;

4 import java.util.*;

5 import com.holub.database.Selector;

6

7 /** A table is a database-like table that provides support for

8 * queries.

9 */

10

11 public interface Table extends Serializable, Cloneable

12 {

13 /** Return a shallow copy of the table (the contents are not

14 * copied.

15 */

16 Object clone() throws CloneNotSupportedException;

17

18 /** Return the table name that was passed to the constructor

19 * (or read from the disk in the case of a table that

20 * was loaded from the disk.) This is a "getter," but

21 * it's a harmless one since it's just giving back a

22 * piece of information that it was given.

23 */

24 String name();

25

26 /** Rename the table to the indicated name. This method

27 * can also be used for naming the anonymous table that's

28 * returned from {@link #select select(...)}

29 * or one of its variants.

30 */

31 void rename( String newName );

32

33 /** Return true if this table has changed since it was created.

34 * This status isn't entirely accurate since it's possible

35 * for a user to change some object that's in the table

36 * without telling the table about the change, so a certain

37 * amount of user discipline is required. Returns true

38 * if you modify the table using a Table method (such as

39 * update, insert, etc.). The dirty bit is cleared when

40 * you export the table.

41 */

42 boolean isDirty();

43

44 /** Insert new values into the table corresponding to the

45 * specified column names. For example, the value at

46 * <code>values[i]</code> is put into the column specified

47 * in <code>columnNames[i]<code>. Columns that are not

48 * specified are initialized to <code>null.</code>.

49 *

50 * @return the number of rows affected by the operation.

51 * @throws IndexOutOfBoundsException One of the requested columns

52 * doesn't exist in either table.

53 */

54 int insert( String[] columnNames, Object[] values );

55

56 /** A convenience overload of {@link #insert(String[],Object[])} */

57

58 int insert( Collection columnNames, Collection values );

59

60 /** In this version of insert, values must have as many elements as there

61 * are columns, and the values must be in the order specified when the

62 * Table was created.

63 * @return the number of rows affected by the operation.

64 */

65 int insert( Object[] values );

66

67 /** A convenience overload of {@link #insert(Object[])}

68 */

69

70 int insert( Collection values );

71

72 /**

73 * Update cells in the table. The {@link Selector} object serves

74 * as a visitor whose <code>includeInSelect(...)</code> method

75 * is called for each row in the table. The return value is ignored,

76 * but the Selector can modify cells as it examines them. It's your

77 * responsibility not to modify primary-key and other constant

78 * fields.

79 * @return the number of rows affected by the operation.

80 */

81

82 int update( Selector where );

83

84 /** Delete from the table all rows approved by the Selector.

85 * @return the number of rows affected by the operation.

86 */

87

88 int delete( Selector where );

89

90 /** begin a transaction */

91 public void begin();

92

93 /** Commit a transaction.

94 * @throw IllegalStateException if no {@link #begin} was issued.

95 *

96 * @param all if false, commit only the innermost transaction,

97 * otherwise commit all transactions at all levels.

98 * @see #THIS_LEVEL

99 * @see #ALL

100 */

101 public void commit( boolean all ) throws IllegalStateException;

102

103 /** Roll back a transaction.

104 * @throw IllegalStateException if no {@link #begin} was issued.

105 * @param all if false, commit only the innermost transaction,

106 * otherwise commit all transactions at all levels.

107 * @see #THIS_LEVEL

108 * @see #ALL

109 */

110 public void rollback( boolean all ) throws IllegalStateException; 111

112 /** A convenience constant that makes calls to {@link #commit}

113 * and {@link #rollback} more readable when used as an

114 * argument to those methods.

115 * Use <code>commit(Table.THIS_LEVEL)</code> rather than

116 * <code>commit(false)</code>, for example.

117 */

118 public static final boolean THIS_LEVEL = false;

119

120 /** A convenience constant that makes calls to {@link #commit}

121 * and {@link #rollback} more readable when used as an

122 * argument to those methods.

123 * Use <code>commit(Table.ALL)</code> rather than

124 * <code>commit(true)</code>, for example.

125 */

126 public static final boolean ALL = true;

127

128 /**Described in the text on page 235*/

129

130 Table select(Selector where, String[] requestedColumns, Table[] other);

131

132 /** A more efficient version of

133 * <code>select(where, requestedColumns, null);</code>

134 */

135 Table select(Selector where, String[] requestedColumns );

136

137 /** A more efficient version of <code>select(where, null, null);</code>

138 */

139 Table select(Selector where);

140

141 /** A convenience method that translates Collections to arrays, then

142 * calls {@link #select(Selector,String[],Table[])};

143 * @param requestedColumns a collection of String objects

144 * representing the desired columns.

145 * @param other a collection of additional Table objects to join to

146 * the current one for the purposes of this SELECT

147 * operation.

148 */

149 Table select(Selector where, Collection requestedColumns,

150 Collection other);

151

152 /** Convenience method, translates Collection to String array, then

153 * calls String-array version.

154 */

155 Table select(Selector where, Collection requestedColumns );

156

157 /** Return an iterator across the rows of the current table.

158 */

159 Cursor rows();

160

161 /** Build a representation of the Table using the

162 * specified Exporter. Create an object from an

163 * {@link Table.Importer} using the constructor with an

164 * {@link Table.Importer} argument. The table's

165 * "dirty" status is cleared (set false) on an export.

166 * @see #isDirty

167 */

168 void export( Table.Exporter importer ) throws IOException;

169

170 /*******************************************************************

171 * Used for exporting tables in various formats. Note that

172 * I can add methods to this interface if the representation

173 * requires it without impacting the Table's clients at all.

174 */

175 public interface Exporter

176 { public void startTable() throws IOException;

177 public void storeMetadata(

178 String tableName,

179 int width,

180 int height,

181 Iterator columnNames ) throws IOException;

182 public void storeRow(Iterator data) throws IOException;

183 public void endTable() throws IOException;

184 }

185

186 /*******************************************************************

187 * Used for importing tables in various formats.

188 * Methods are called in the following order:

189 * <ul>

190 * <li><code>start()</code></li>

191 * <li><code>loadTableName()</code></li>

192 * <li><code>loadWidth()</code></li>

193 * <li><code>loadColumnNames()</code></li>

194 * <li><code>loadRow()<code> (multiple times)</li>

195 * <li><code>done()</code></li>

196 * </ul>

197 */

198 public interface Importer

199 { void startTable() throws IOException;

200 String loadTableName() throws IOException;

201 int loadWidth() throws IOException;

202 Iterator loadColumnNames() throws IOException;

203 Iterator loadRow() throws IOException;

204 void endTable() throws IOException;

205 }

206 }

The Bridge Pattern

The Table interface is part of the Bridge design pattern mentioned earlier. A Bridge separates subsystems from each other so that they can change independently. Unlike Facade (which you looked at in the previous chapter in the context of the menuing subsystem), a Bridge provides complete isolation between subsystems. Code on one side of the Bridge has no idea what’s on the other side. (Facade, you’ll remember, doesn’t hide the subsystem—it just simplifies access to it.)

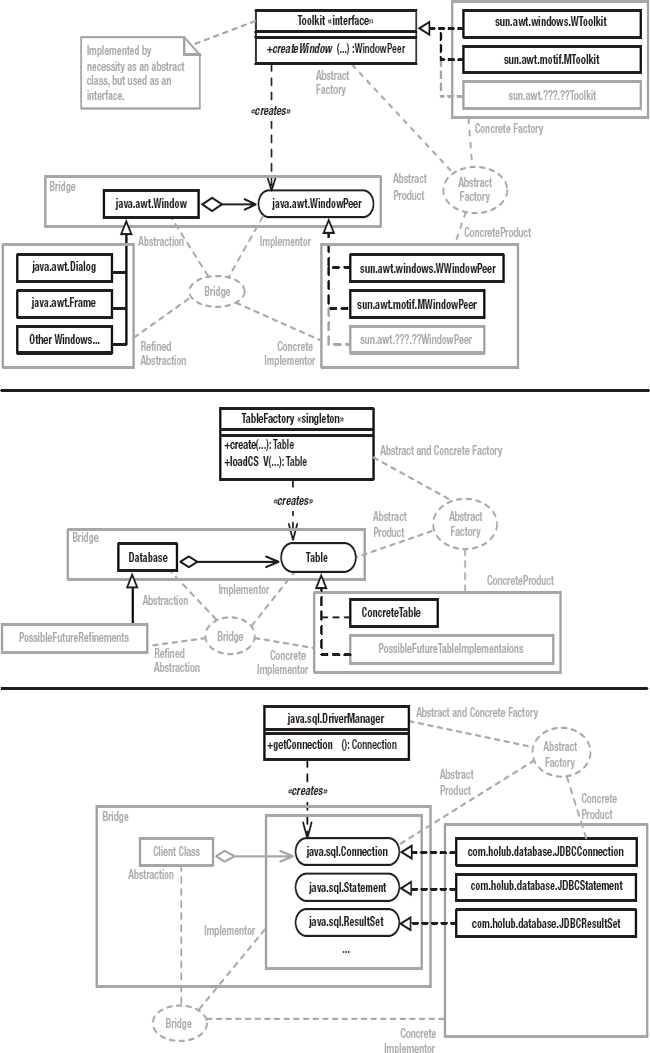

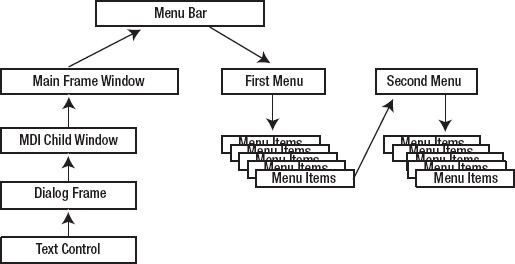

I’ll explain Bridge by presenting several examples, shown in Figure 4-4. The top section shows Java’s older AWT subsystem, and though you probably won’t be familiar with it unless you’re doing something such as PalmPilot programming, the architecture is a nice reification of the classic Gang-of-Four Bridge. The “client” classes (the ones that use java.awt.Window) can change completely without impacting the implementation of AWT. By the same token, the classes on the other side of the bridge (various “peer” classes that interface to the operating system’s GUI layer) can change without impacting the client classes. AWT leverages this ability to change in order to make the GUI model platform independent, so your PalmPilot application’s UI may well run on Windows, Linux, or some other operating system without modification.

The peer classes (the classes that implement the xxxPeer interfaces) are created at runtime using an Abstract Factory called java.awt.Toolkit. (Abstract Factory was discussed in Chapter 2, but to refresh your memory, a Collection is an Abstract Factory of Iterator objects. A Concrete Factory [ArrayList, for example] implements the Abstract-Factory interface to produce a Concrete Products [some implementation of Iterator whose class is unknown]. Other implementations of Collection may [or may not—you don’t know or care] produce different Concrete Products that implement the same Iterator interface in a way that makes sense for that particular data structure.) Toolkit is an abstract class used as an interface. (It has to be abstract because it needs to contain a static method.) The Singleton pattern is used to fetch a concrete instance of the Toolkit “interface.” That is, the Toolkit.getDefaultToolkit() method determines the operating environment at runtime (typically, by looking at a system property) and then instantiates a Toolkit implementer appropriate for that environment. I’ve shown the Windows and Motif variants in Figure 4-4, but a Toolkit implementation must exist for every environment on which a particular JVM runs.

The concrete Toolkit object acts as an Abstract Factory of “peer” objects. The peers are system-dependent implementers of various graphical objects. I’ve shown one such peer (the WindowPeer, which implements an unbordered stand-alone window) in Figure 4-4, but 27 peer interfaces exist and create the whole panoply of graphical objects (ButtonPeer, CanvasPeer, CheckboxPeer, and so on). For each of these interfaces, a system-dependent implementation exists that’s manufactured by the system-dependent concrete Toolkit in response to one of its createxxx(...) methods. For example, the Windows Toolkit (sun.awt.windows.WToolkit) will produce a Windows-specific peer (sun.awt.windows.WWindowPeer) when you call Toolkit. createWindow(). The Motif Toolkit (sun.awt.windows.MToolkit) will produce a Motif-specific peer (sun.awt.motif.MWindowPeer) when you call Toolkit.createWindow().

The Abstract Factory I’ve just described is used in concert with the Bridge pattern to isolate the mechanics of switching subsystems. The java.awt.Window class understands Toolkits and peers. When you create a Window, that object creates the appropriate peer. As long as you program in terms of Window objects, you don’t need to know that the peer exists. Consequently, everything on the other side of the bridge (all the peers) can change radically, even at runtime, without your program’s side of the bridge being impacted. By the same token, none of the peer implementations knows or cares how they’re used. Consequently, your program can change radically without the peer implementations knowing or caring.

It is commonplace for Abstract Factory and Bridge to be used together in the way I just described. You will rarely see a Bridge without an Abstract Factory helping to create the Concrete Implementor objects (for example, WWindowPeer and MWindowPeer).

Another example of Singleton, Abstract Factory, and Bridge working together includes the java.text.NumberFormat class, which is used to parse and print numbers in a Locale-specific way. When you call NumberFormat.getInstance(), you’re using Singleton to access an Abstract Factory that creates some subclass of NumberFormat that understands the current Locale. The NumberFormat “interface” serves as a Bridge that isolates your program from the rather complicated subsystem that deals with Locale-specific formatting. That subsystem could change (to support new Locales, for example), and your code wouldn’t know it.

Now let’s apply the notion of Bridge to the current problem. Referring again to Figure 4-4, the Table uses an Abstract-Factory/Bridge strategy much like AWT and NumberFormat, but things are simplified a bit. I’ll cover the Abstract-Factory issues in the next section, but the figure shows a small bridge consisting of the Database class (the core of the SQL engine, which I’ll describe shortly) and the Table interface. If you program in terms of Database objects, you don’t know or care that the Table exists. It’s possible, then, to completely change the underlying table implementation—even at runtime—without your code caring about the change. For example, at some future date I may introduce several kinds of Tables that store the underlying data in a way that’s particularly efficient for a particular data set. The Bridge, however, isolates your code from that change.

I’ll come back to Bridge (and to the third part of Figure 4-4) later in this chapter.

Creating a Table, Abstract Factory

Now let’s look at the Abstract-Factory component of the Table creation process. The following code creates a Table named people whose rows have three columns named last, first, and addrID (for address identifier—not a particularly readable name, but short column names are good because they improve search times):

Table people = TableFactory.create(

"people", new String[]{"last", "first", "addrId" } );

You can also create a table from data stored on the disk in comma-separated-value (CSV) format. The following call reads a table called address (which must be stored in a file named address) from the specified directory:

Table address = tableFactory.load( "address.csv", "c:/data/directory" );

The data is stored in CSV format, where every row of the table is on its own line and commas separate the column values from each other. Here’s a short sample:

address

addrId, street, city, state, zip

0, 12 MyStreet, Berkeley, CA, 99998

1, 34 Quarry Ln., Bedrock, AZ, 00000

The first line is the table name, the second line names the columns, and the remaining lines specify the rows.

Figure 4-4. The Bridge Pattern in AWT, com.holub.database, and JDBC

The TableFactory demonstrates the reification of Abstract Factory that I’ve used most often. Unlike the classic reification (as embodied by Collection and Iterator), there’s no interface in the Abstract Factory role of which Collection is an example. (More precisely, the TableFactory class serves as its own interface, as if Collection were an actual class, not an interface). You just have no need to complicate the code with a separate interface. What makes this reification an Abstract Factory is that it satisfies the same “intent” as a classic Abstract Factory: It provides a means of “creating families of related or dependant objects without specifying their concrete classes,” to quote the Gang of Four. TableFactory is another example of how reifications of a particular pattern can differ in form.

This particular Factory creates Table implementers, of which only one is currently present—the ConcreteTable, which I’ll discuss in subsequent sections. This particular reification of Abstract Factory is in some ways just the skeleton of a pattern, put in place primarily so that I can provide alternative Table implementations in the future. That is, this particular Factory isn’t producing a “family” of objects right now but is put into the code to make it easy to increase the size of the “family” to some number greater than 1 if the need arises. Putting a small factory into the code up front has virtually no cost but provides for a lot of down-the-line flexibility, so the potential payback is high. On the other hand, the Factory does add a small amount of extra complexity to the code.

The TableFactory source code is in Listing 4-2. For the most part, its methods do nothing but hide calls to new. The load()method (Listing 4-2, line 57) is interesting in that the method can potentially load a table from various file formats on the disk. I’ll explain the code in the load() method in greater depth in the next section, but let’s look now at what the method does rather than how it does it.

The load() method is passed a filename and location, and it creates a Table from the data it finds in that file. Right now, it examines the filename extension to determine the data format, but it could just as easily examine the contents of the file. It’s a trivial matter to make it load from an XML file rather than a CSV file, for example. It could even be passed a .sql file and load the Table by using the SQL. In other words, the method potentially isolates you completely from the underlying data format. The file read by the load() method may also specify a particular type of Table that could represent the data in a more efficient way than the current Table implementation, and the Factory could produce that specific Concrete Product. Since load() hides the complexity of creating a table, you can also think of TableFactory as a simple Facade |reification.

Listing 4-2. TableFactory.java

1 package com.holub.database;

2

3 import java.io.*;

4

5 public class TableFactory

6 {

7 /** Create an empty table.

8 * @param name the table name

9 * @param columns names of all the columns

10 * @return the table

11 */

12 public static Table create( String name, String[] columns )

13 { return new ConcreteTable( name, columns );

14 }

15

16 /** Create a table from information provided by a

17 * {@link Table.Importer} object.

18 */

19 public static Table create( Table.Importer importer )

20 throws IOException

21 { return new ConcreteTable( importer );

22 }

23

24 /** This convenience method is equivalent to

25 * <code>load(name, new File(".") );</code>

26 *

27 * @see #load(String,File)

28 */

29 public static Table load( String name ) throws IOException

30 { return load( name, new File(".") );

31 }

32

33 /** This convenience method is equivalent to

34 * <code>load(name, new File(location) );</code>

35 *

36 * @see #load(String,File)

37 */

38 public static Table load( String name, String location )

39 throws IOException

40 { return load( name, new File(location) );

41 }

42

43 /* Create a table from some form stored on the disk.

44 *

45 * <p>At present, the filename extension is used to determine

46 * the data format, and only a comma-separated-value file

47 * is recognized (the filename must end in .csv).

48 *

49 * @param the filename. The table name is the string to the

50 * left of the extension. For example, if the file

51 * is "foo.csv," then the table name is "foo."

52 * @param the directory within which the file is found.

53 *

54 * @throws java.io.IOException if the filename extension is not

55 * recognized.

56 */

57 public static Table load( String name, File directory )

58 throws IOException

59 {

60 if( !(name.endsWith( ".csv" ) || name.endsWith( ".CSV" )) )

61 throw new java.io.IOException(

62 "Filename (" +name+ ") does not end in "

63 +"supported extension (.csv)" );

64

65 Reader in = new FileReader( new File( directory, name ));

66 Table loaded = new ConcreteTable( new CSVImporter( in ));

67 in.close();

68 return loaded;

69 }

70 }

Creating and Saving a Table: Passive Iterators and Builder

Now let’s move onto the ConcreteTable implementation of Table. You can bring tables into existence in two ways. A run-of-the-mill constructor is passed a table name and an array of strings that define the column names. Here’s an example:

Table t = new ConcreteTable( "table-name",

new String[]{ "column1", "column2" } );

The sources for ConcreteTable up to and including this constructor definition are in Listing 4-3. The ConcreteTable represents the table as a list of arrays of Object (rowSet, line 32). The columnNames array (line 33) is an array of Strings that both define the column names and organize the Object arrays that comprise the rows. A one-to-one relationship exists between the index of a column name in the columnNames table and the index of the associated data in one of the rowSet arrays. If you find the column name X at columnNames[i], then you can find the associated data for column X at the ith position in one of these Object arrays.

The private indexOf method on line 49 is used internally to do this column-name-to-index mapping. It’s passed a column name and returns the associated index.

Note that everything is 0 indexed, which is intuitive to a Java programmer. Unfortunately, SQL is 1 indexed, so you have to be careful if you implement any of the SQL methods that specify column data using indexes. I’ve taken the coward’s way out and have not implemented any of the index-based JDBC methods. Be careful of off-by-one errors if you add these methods to my implementation.

Of the other fields at the top of the class definition, tableName does the obvious, and isDirty is true if the table has been modified. (I use this field to avoid unnecessary writes to disk). I’ll discuss transactionStack soon, when I discuss transaction processing.

Listing 4-3. ConcreteTable.java: Simple Table Creation

1 package com.holub.database;

2

3 import java.io.*;

4 import java.util.*;

5 import com.holub.tools.ArrayIterator;

6

7 /** The concrete class that implements the Table "interface."

8 * This class is not thread safe.

9 * Create instances of this class using {@link TableFactory} class,

10 * not <code>new</code>.

11 *

12 * <p>Note that a ConcreteTable is both serializable and "Cloneable,"

13 * so you can easily store it onto the disk in binary form

14 * or make a copy of it. Clone implements a shallow copy, however,

15 * so it can be used to implement a rollback of an insert or delete,

16 * but not an update.

17 */

18

19 /*package*/ class ConcreteTable implements Table

20 {

21 // Supporting clone() complicates the following declarations. In

22 // particular, the fields can't be final because they're modified

23 // in the clone() method. Also, the rows field has to be declared

24 // as a LinkedList (rather than a List) because Cloneable is made

25 // public at the LinkedList level. If you declare it as a List,

26 // you'll get an error message because clone()—for reasons that

27 // are mysterious to me—is declared protected in Object.

28 //

29 // Be sure to change the clone() method if you modify anything about

30 // any of these fields.

31

32 private LinkedList rowSet = new LinkedList();

33 private String[] columnNames;

34 private String tableName;

35

36 private transient boolean isDirty = false;

37 private transient LinkedList transactionStack = new LinkedList();

38

39 //----------------------------------------------------------------------

40 public ConcreteTable( String tableName, String[] columnNames )

41 { this.tableName = tableName;

42 this.columnNames = (String[]) columnNames.clone();

43 }

44

45 //----------------------------------------------------------------------

46 // Return the index of the named column. Throw an

47 // IndexOutOfBoundsException if the column doesn't exist.

48 //

49 private int indexOf( String columnName )

50 { for( int i = 0; i < columnNames.length; ++i )

51 if( columnNames[i].equals( columnName ) )

52 return i;

53

54 throw new IndexOutOfBoundsException(

55 "Column ("+columnName+") doesn't exist in " + tableName );

56 }

The second constructor (shown in Listing 4-4) is more interesting. Here, the constructor is passed an object that implements the Table.Importer interface, defined on line 198 of Listing 4-1. The constructor uses the Importer to import data into an empty table. The source code is in Listing 4-4 (p. 196). As you can see, the constructor just calls the various methods of the Importer in sequence to get table metadata (the table name, column names, and so forth). It then calls loadRow() multiple times to load the rows.

Each call to loadRow() returns a standard java.util.Iterator, which iterates across the data representing a single row in left-to-right order. By using an iterator (rather than an array), I’ve isolated myself completely from the way that the row data is stored internally. The Iterator returned from the Importer could even synthesize the data internally.

The load process finishes up with a call to done().

Listing 4-4. ConcreteTable.java Continued: Importing and Exporting

57 //----------------------------------------------------------------------

58 public ConcreteTable( Table.Importer importer ) throws IOException

59 { importer.startTable();

60

61 tableName = importer.loadTableName();

62 int width = importer.loadWidth();

63 Iterator columns = importer.loadColumnNames();

64

65 this.columnNames = new String[ width ];

66 for(int i = 0; columns.hasNext() ;)

67 columnNames[i++] = (String) columns.next();

68

69 while( (columns = importer.loadRow()) != null )

70 { Object[] current = new Object[width];

71 for(int i = 0; columns.hasNext() ;)

72 current[i++] = columns.next();

73 this.insert( current );

74 }

75 importer.endTable();

76 }

77 //----------------------------------------------------------------------

78 public void export( Table.Exporter exporter ) throws IOException

79 { exporter.startTable();

80 exporter.storeMetadata( tableName,

81 columnNames.length,

82 rowSet.size(),

83 new ArrayIterator(columnNames) );

84

85 for( Iterator i = rowSet.iterator(); i.hasNext(); )

86 exporter.storeRow( new ArrayIterator((Object[]) i.next()) );

87

88 exporter.endTable();

89 isDirty = false;

90 }

The CSVImporter class (Listing 4-5) demonstrates how to build an Importer. The following code shows a simplified version of how the TableFactory, discussed earlier, uses the CSVImporter to load a CSV file. The importer is created in the constructor call on the second line of the method. It’s passed a Reader to use for input. The ConcreteTable constructor then calls the various methods of the CSVImporter to initialize the table.

public static Table loadCSV( String name, File directory ) throws IOException

{

Reader in = new FileReader( new File( directory, name ));

Table loaded = new ConcreteTable( new CSVImporter( in ));

in.close();

return loaded;

}

As you can see in Listing 4-5, there’s not that much to building an importer. The startTable() method reads the table name first, and then it reads the metadata (the column names) from the second line and splits them into an array of String objects. The loadRow() method then reads the rows and then splits them. I’ll discuss the ArrayIterator class called on line 30 in a moment.

Listing 4-5. CSVImporter.java

1 package com.holub.database;

2

3 import com.holub.tools.ArrayIterator;

4

5 import java.io.*;

6 import java.util.*;

7

8 public class CSVImporter implements Table.Importer

9 { private BufferedReader in; // null once end-of-file reached

10 private String[] columnNames;

11 private String tableName;

12

13 public CSVImporter( Reader in )

14 { this.in = in instanceof BufferedReader

15 ? (BufferedReader)in

16 : new BufferedReader(in)

17 ;

18 }

19 public void startTable() throws IOException

20 { tableName = in.readLine().trim();

21 columnNames = in.readLine().split("\s*,\s*");

22 }

23 public String loadTableName() throws IOException

24 { return tableName;

25 }

26 public int loadWidth() throws IOException

27 { return columnNames.length;

28 }

29 public Iterator loadColumnNames() throws IOException

30 { return new ArrayIterator(columnNames);

31 }

32

33 public Iterator loadRow() throws IOException

34 { Iterator row = null;

35 if( in != null )

36 { String line = in.readLine();

37 if( line == null )

38 in = null;

39 else

40 row = new ArrayIterator( line.split("\s*,\s*"));

41 }

42 return row;

43 }

44

45 public void endTable() throws IOException

46 }

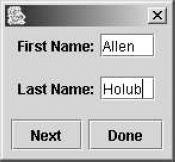

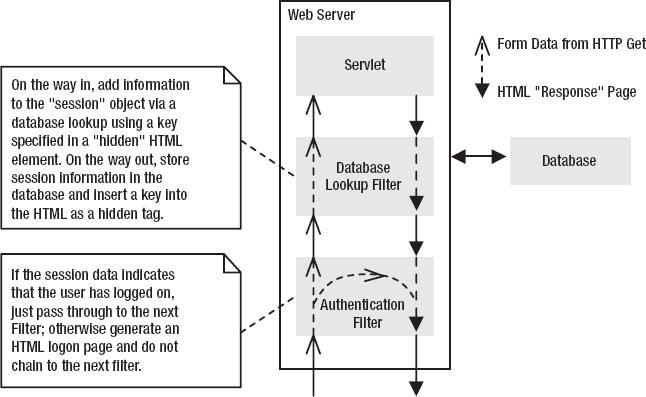

A more interesting importer is in Listing 4-6. The PeopleImporter loads a Table using the interactive UI pictured in Figure 4-5. You create a table that initializes itself interactively as follows:

Table t = TableFactory.create( new PeopleImporter() );

System.out.println( t.toString() );

System.exit(0);

My main intent is to illustrate the techniques used, so this class isn’t really production quality, but it demonstrates an effective way to separate a UI from the “business object.” I can completely change the structure of the user interface by changing the definition of the PeopleImporter. The Table implementation is completely unaffected by changes to the UI. In fact, the Table doesn’t even know that it’s being initialized from an interactive user interface.

Looking at the implementation, the getRowDataFromUser() method (Listing 4-6, line 46) creates the user interface, and the button handlers at the bottom of the method transfer the row data from the text-input fields to the rows LinkedList (declared on line 19). The balance of the class works much like the CSVImporter, but it transfers data from the rows array to the Table.

Figure 4-5. The PeopleImporter user interface

Listing 4-6. PeopleImporter.java

1 import com.holub.tools.ArrayIterator;

2 import com.holub.database.Table;

3 import com.holub.database.TableFactory;

4

5 import java.io.*;

6 import java.util.*;

7 import javax.swing.*;

8 import java.awt.*;

9 import java.awt.event.*;

10

11 /** A very simplistic demonstration of using Builder for

12 * interactive input. Doesn't do validation, error detection,

13 * etc. Also, I read all the user data, then import it to the

14 * table, rather than reading the UI on a per-row basis.

15 */

16

17 public class PeopleImporter implements Table.Importer

18 {

19 private LinkedList rows = new LinkedList();

20

21 public void startTable() throws IOException

22 { getRowDataFromUser();

23 }

24 public String loadTableName() throws IOException

25 { return "people";

26 }

27 public int loadWidth() throws IOException

28 { return 3;

29 }

30 public Iterator loadColumnNames() throws IOException

31 { return new ArrayIterator(

32 new String[]{"first", "last", "addrID"});

33 }

34 public Iterator loadRow() throws IOException

35 { try

36 { String[] row = (String[])( rows.removeFirst() );

37 return new ArrayIterator( row );

38 }

39 catch( NoSuchElementException e )

40 { return null;

41 }

42 }

43

44 public void endTable() throws IOException

45

46 private void getRowDataFromUser()

47 {

48 final JTextField first = new JTextField(" ");

49 final JTextField last = new JTextField(" ");

50 final JDialog ui = new JDialog();

51

52 ui.setModal( true );

53 ui.getContentPane().setLayout( new GridLayout(3,1) );

54

55 JPanel panel = new JPanel();

56

57 panel.setLayout( new FlowLayout() );

58 panel.add( new JLabel("First Name:") );

59 panel.add( first );

60 ui.getContentPane().add( panel );

61

62 panel = new JPanel();

63

64 panel.setLayout( new FlowLayout() );

65 panel.add( new JLabel("Last Name:") );

66 panel.add( last );

67 ui.getContentPane().add( panel );

68

69 JButton done = new JButton("Done");

70 JButton next = new JButton("Next");

71 panel = new JPanel();

72

73 done.addActionListener

74 ( new ActionListener()

75 { public void actionPerformed( ActionEvent e )

76 { rows.add

77 ( new String[]{ first.getText().trim(),

78 last.getText().trim() }

79 );

80 ui.dispose();

81 }

82 }

83 );

84

85 next.addActionListener

86 ( new ActionListener()

87 { public void actionPerformed( ActionEvent e )

88 { rows.add

89 ( new String[]{ first.getText().trim(),

90 last.getText().trim() }

91 );

92 first.setText("");

93 last.setText("");

94 }

95 }

96 );

97 panel.add( next );

98 panel.add( done );

99 ui.getContentPane().add( panel );

100

101 ui.pack();

102 ui.show();

103 }

104

105 public static class Test

106 { public static void main( String[] args ) throws IOException

107 { Table t = TableFactory.create( new PeopleImporter() );

108 System.out.println( t.toString() );

109 System.exit(0);

110 }

111 }

112 }

The other method of interest in Listing 4-4 is the export() method, which exports the table data similarly to the way the constructor imports the data. The export() method takes a Table.Exporter argument and sends the Exporter the metadata and the rows. (The Exporter interface is also an inner class of Table, defined on line 175 of Listing 4-1.)

As with the Importer constructor, the export() method first asks the Exporter to store the table metadata, and then it passes the Exporter the rows one at a time. As was the case with the Importer constructor, the export() method passes the Exporter a java.util.Iterator across the columns of a row rather than passing an Object array. This way, the Table implementor is not tied into a particular representation of the underlying data. (The ConcreteTable, for example, isn’t giving away the fact that it stores rows as Object arrays.)

The CSVExporter class in Listing 4-7 demonstrates how to build an Exporter. It’s passed a Writer and just writes the table data to that stream.

Listing 4-7. CSVExporter.java

1 package com.holub.database;

2

3 import java.io.*;

4 import java.util.*;

5

6 public class CSVExporter implements Table.Exporter

7 { private final Writer out;

8 private int width;

9

10 public CSVExporter( Writer out )

11 { this.out = out;

12 }

13

14 public void storeMetadata( String tableName,

15 int width,

16 int height,

17 Iterator columnNames ) throws IOException

18

19 { this.width = width;

20 out.write(tableName == null ? "<anonymous>" : tableName );

21 out.write("

");

22 storeRow( columnNames ); // comma-separated list of column IDs

23 }

24

25 public void storeRow( Iterator data ) throws IOException

26 { int i = width;

27 while( data.hasNext() )

28 { Object datum = data.next();

29

30 // Null columns are represented by an empty field

31 // (two commas in a row). There's nothing to write

32 // if the column data is null.

33 if( datum != null )

34 out.write( datum.toString() );

35

36 if( --i > 0 )

37 out.write(", ");

38 }

39 out.write("

");

40 }

41

42 public void startTable() throws IOException {/*nothing to do*/}

43 public void endTable() throws IOException {/*nothing to do*/}

44 }

A more interesting example of an exporter is the JTableExporter in Listing 4-8, which builds the UI in Figure 4-6. The code that created the UI in the Test class is at the bottom of Listing 4-8 (line 45), but here’s the essential stuff:

Table people = ...;

JFrame frame = ...;

JTableExporter tableBuilder = new JTableExporter();

people.export( tableBuilder );

frame.getContentPane().add(

new JScrollPane( tableBuilder.getJTable() ) );

You pass the Table’s export() method a JTableExporter() rather than a CSVExporter(). The JTableExporter() creates a JTable and populates it from the table data. You then extract the JTable from the exporter with the getJTable() call. (This “get” method is not really an accessor since the whole point of the JTableExporter is to create a JTable—I’m not giving away any surprising implementation details here.)

Figure 4-6. The JTableExporter user interface

Listing 4-8. JTableExporter.java

1 package com.holub.database;

2

3 import java.io.*;

4 import java.util.*;

5 import javax.swing.*;

6

7 public class JTableExporter implements Table.Exporter

8 {

9 private String[] columnHeads;

10 private Object[][] contents;

11 private int rowIndex = 0;

12

13 public void startTable() throws IOException { rowIndex = 0; }

14

15 public void storeMetadata( String tableName,

16 int width,

17 int height,

18 Iterator columnNames ) throws IOException

19 {

20 contents = new Object[height][width];

21 columnHeads = new String[width];

22

23 int columnIndex = 0;

24 while( columnNames.hasNext() )

25 columnHeads[columnIndex++] = columnNames.next().toString();

26 }

27

28 public void storeRow( Iterator data ) throws IOException

29 { int columnIndex = 0;

30 while( data.hasNext() )

31 contents[rowIndex][columnIndex++] = data.next();

32 ++rowIndex;

33 }

34

35 public void endTable() throws IOException {/*nothing to do*/}

36

37 /** Return the Concrete Product of this builder—a JTable

38 * initialized with the table data.

39 */

40 public JTable getJTable()

41 { return new JTable( contents, columnHeads );

42 }

43

44 public static class Test

45 { public static void main( String[] args ) throws IOException

46 {

47 Table people = TableFactory.create( "people",

48 new String[]{ "First", "Last" } );

49 people.insert( new String[]{ "Allen", "Holub" } );

50 people.insert( new String[]{ "Ichabod", "Crane" } );

51 people.insert( new String[]{ "Rip", "VanWinkle" } );

52 people.insert( new String[]{ "Goldie", "Locks" } );

53

54 javax.swing.JFrame frame = new javax.swing.JFrame();

55 frame.setDefaultCloseOperation( JFrame.EXIT_ON_CLOSE );

56

57 JTableExporter tableBuilder = new JTableExporter();

58 people.export( tableBuilder );

59

60 frame.getContentPane().add(

61 new JScrollPane( tableBuilder.getJTable() ) );

62 frame.pack();

63 frame.show();

64 }

65 }

66 }

The import/export code you’ve just been looking at is an example of the Builder design pattern. The basic idea of Builder is separate the code that creates an object from the object’s internal representation so that the same construction process can be used to create different sorts of representations. Put another way, Builder separates a “business object” (an object that models some domain-level abstraction) from implementation-specific details such as how to display that business object on the screen or put it in a database. Using Builder, a domain-level object can create multiple representations of itself without having to be rewritten. The business object has the role of Director in the pattern, and objects in the role of Concrete-Builder (which implement the Builder interface) create the representation. In the current example, ConcreteTable is the Director, Table.Exporter is the Builder, and CSVExporter is the Concrete Builder.

To my mind Table.Importer is also a Builder (of Table objects) and CSVImporter is the matching Concrete Builder, though this way of looking at things is rather backward since I’m turning multiple representations into a single object rather than the other way around. The implementation is certainly similar, however.

As you saw in the PeopleImporter and JTableExporter classes, Builder nicely solves the object-oriented UI conundrum mentioned way back in Chapter 2. If an object can’t expose implementation details (so you can’t have getter and setter methods), then how do you create a user interface, particularly if an object has to represent itself in different ways to different subsystems? An object could have a method that returns a JComponent representation of itself or of some attribute of itself, but what if you’re building a server-side UI and you need an HTML representation? What if you’re talking to a database and you need an XML or SQL representation? Adding a billion methods to the class, one for each possible representation (getXML(), getJComponent(), getSQL(), getHTML(), and so on) isn’t a viable solution—it’s too much of a maintenance hassle to go into the class definition every time a new business requirement needs a new representation..

As you just saw, however, a Builder is perfectly able to accommodate disparate representations, and adding a new Builder doesn’t require any modifications to the domain-level object at all. Any Table can be represented as a CSV list and also as a JComponent, all without having to modify the Table implementation. In fact, Builder provides a nice way to concentrate all the UI logic in a single place (the Concrete Builder) and to separate the UI logic from the “business logic” (all of which is in the Director).

Using Builder lets me support representations that don’t need to exist when the program is first written. Adding XMLImporter and XMLExporter implementations of Table.Importer and Table.Exporter is an easy matter. Once I create these new classes, a Table can now store itself in XML format and load itself from an XML file. Moreover, I’ve added XML export/import to every Table implementation (all of which have to be Directors), not just ConcreteTable.

The only difficulty with Builder is in the design of the Builder interface itself. This interface has to have methods that accommodate all displayable state information. Consequently, a tight coupling relationship exists between the objects in the Director and Builder roles, simply because the Builder interface has to have methods that are “tuned” for use by the Director. It’s possible that the Director could change in such a way that you would have to modify the Builder interface (and all the Concrete Builders, too) to accommodate the change. This problem is really the getter problem I discussed in Chapter 1. Builder, however, restricts the scope of the problem to a small number of classes (the Concrete Builders). I’d have a much worse situation if I were to put get/set methods in the Director (the Table). Unknown coupling relationships with random classes would be scattered all over the program. In any event, I haven’t often needed to make those sorts of changes in practice.

Populating the Table

The next order of business is to put some data into the table. Several methods are provided for this purpose. The simplest method, shown below, inserts a new row into the table. It’s up to you to assure that the order of array elements matches the order of columns specified when the table was created.

people.insert( new Object[]{ "Holub", "Allen", "1" } );

The foregoing method is delicate—it depends on a particular column ordering to work correctly. It’s too easy to get the column ordering wrong. A second overload of insert(...) solves the problem by requiring both column names and their contents. The columns don’t have to be in declaration order, but the two arrays much match. When firstArgument[i] specifies a column, secondArgument[i] must specify that column’s contents. Here’s an example:

people.insert( new String[]{ "addrId", "first", "last" },

new Object[]{ "1", "Fred", "Flintstone"} );

Also, Collection versions of both methods exist. The following code creates a row from the Collection elements:

List rowData = new ArrayList();

rowData.add("Flintstone");

rowData.add("Wilma");

rowData.add("1")

people.insert(rowData);

The following codes does the same thing, but with rows specified in an arbitrary order:

List columnNames = new ArrayList();

columnNames.add("addrId");

columnNames.add("first");

columnNames.add("last");

List rowData = new ArrayList();

rowData.add("1")

rowData.add("Pebbles");

rowData.add("Flintstone");

people.insert( columnNames, rowData );

This last method seems to be of dubious value, but it turns out that a two-collection variant is quite useful when building the SQL interpreter, as you’ll see later in the chapter.

Finally, I’ve provided a Map version where the keys are the column names and the values are the contents.

The source code for the methods that insert rows is in Listing 4-9. The overloads that don’t take column-name arguments just add a new object array to the rowSet. The other methods go through the column names in sequence, determine the index in the object array associated with that column (using indexOf(...)), and put the appropriate data into the appropriate element of the Object array that represents the row.

The actual inserting of the object array into the list representing the table is done by the doInsert() method (Listing 4-9, line 148). I’ll come back to the registerInsert(...) method that’s called at the top of doInsert() in a moment, when I talk about the undo system. For now, note that all insert operations mark the table as “dirty.” This boolean is initially false; it’s marked true by all operations that modify the table, and it’s reset to false by the export() method discussed earlier. A client class can determine the “dirty” state of the table by calling someTable.isDirty(). The SQL-engine layer uses this mechanism to avoid flushing to disk tables that have been read, but not modified, when you issue a SQL DUMP request.

The isDirty() method, by the way, is not a “get” method of the sort I was railing against in Chapter 1, even though it’s implemented in the simplest possible way to return a private field:

public boolean isDirty(){ return isDirty; }

The fact that the “dirty” state is stored in a boolean, and that isDirty() returns that boolean, is just an implementation convenience. I’m not exposing any implementation details (there’s no way that the client can determine how the table decides whether it’s dirty), and since there’s no setDirty(), there’s no way for the “dirty” state to be corrupted from outside. I can change the implementation and represent the “dirty” state in some other way without impacting the interface or the classes that use the interface.

If you find this explanation confusing, think about the design process. I decided at design time that a “dirty” state was necessary, so I provided an isDirty() method in the interface. Later, I added the simplest implementation possible. This way of working is fundamentally different from starting with a isDirty field in the class and then adding getDirty() and setDirty() as a matter of course. It would be a serious error for a setDirty() method to exist because, where that method present, a client object could break a Table object by making the Table think it didn’t need to be flushed to disk when it actually did; setDirty() has no valid use.

Listing 4-9. ConcreteTable.java Continued: Inserting Rows

91 //----------------------------------------------------------------------

92 // Inserting

93 //

94 public int insert( String[] intoTheseColumns, Object[] values )

95 {

96 assert( intoTheseColumns.length == values.length )

97 :"There must be exactly one value for "

98 +"each specified column" ;

99

100 Object[] newRow = new Object[ width() ];

101

102 for( int i = 0; i < intoTheseColumns.length; ++i )

103 newRow[ indexOf(intoTheseColumns[i]) ] = values[i];

104

105 doInsert( newRow );

106 return 1;

107 }

108 //----------------------------------------------------------------------

109 public int insert( Collection intoTheseColumns, Collection values )

110 { assert( intoTheseColumns.size() == values.size() )

111 :"There must be exactly one value for "

112 +"each specified column" ;

113

114 Object[] newRow = new Object[ width() ];

115

116 Iterator v = values.iterator();

117 Iterator c = intoTheseColumns.iterator();

118 while( c.hasNext() && v.hasNext() )

119 newRow[ indexOf((String)c.next()) ] = v.next();

120

121 doInsert( newRow );

122 return 1;

123 }

124 //----------------------------------------------------------------------

125 public int insert( Map row )

126 { // A map is considered to be "ordered," with the order defined

127 // as the order in which an iterator across a "view" returns

128 // values. My reading of this statement is that the iterator

129 // across the keySet() visits keys in the same order as the

130 // iterator across the values() visits the values.

131

132 return insert ( row.keySet(), row.values() );

133 }

134 //----------------------------------------------------------------------

135 public int insert( Object[] values )

136 { assert values.length == width()

137 : "Values-array length (" + values.length + ") "

138 + "is not the same as table width (" + width() +")";

139

140 doInsert( (Object[]) values.clone() );

141 return 1;

142 }

143 //----------------------------------------------------------------------

144 public int insert( Collection values )

145 { return insert( values.toArray() );

146 }

147 //----------------------------------------------------------------------

148 private void doInsert( Object[] newRow )

149 {

150 rowSet.add( newRow );

151 registerInsert( newRow );

152 isDirty = true;

153 }

Examining a Table: The Iterator Pattern

Now that you’ve populated the table, you may want to examine it. The design pattern is Iterator, of which Java’s java.util.Iterator class is a familiar reification. The basic idea is that Iterator provides a way to access the elements of some aggregate object (some data structure) without exposing the internal representation of the aggregate. Java’s Iterator is just one way to accomplish this end. Any reification that you invent that lets a client object examine an aggregate one element at a time is a reification of Iterator.

Another simple reification of Iterator is the ArrayIterator class, shown in Listing 4-10. This class just wraps an array with a class that implements the java.util.Iterator interface, so you can pass arrays to methods that take Iterator arguments. The ArrayIterator is also an example of the Adapter design pattern that I’ll discuss later. ArrayIterator adapts an array object to implement an interface that an array doesn’t normally implement.

Listing 4-10. ArrayIterator.java

1 package com.holub.tools;

2

3 import java.util.*;

4

5 /** A simple implementation of java.util.Iterator that enumerates

6 * over arrays. Use this class when you want to isolate the

7 * data structures used to hold some collection by passing an

8 * Enumeration to some method.

9 * <!-- ... -->

10 * @author Allen I. Holub

11 */

12

13 public final class ArrayIterator implements Iterator

14 {

15 private int position = 0;

16 private final Object[] items;

17

18 public ArrayIterator(Object[] items){ this.items = items; }

19

20 public boolean hasNext()

21 { return ( position < items.length );

22 }

23

24 public Object next()

25 { if( position >= items.length )

26 throw new NoSuchElementException();

27 return items[ position++ ];

28 }

29

30 public void remove()

31 { throw new UnsupportedOperationException(

32 "ArrayIterator.remove()");

33 }

34

35 /** Not part of the Iterator interface, returns the data

36 * set in array form. Modifying the returned array will

37 * not affect the iteration at all.

38 */

39 public Object[] toArray()

40 { return (Object[]) items.clone();

41 }

42 }

Though most iterators don’t give you control over the order of traversal (the ArrayIterator just goes through the array elements in order), no requirement exists that an iterator visit nodes in any particular order. A ReverseArrayIterator could traverse from high to low indexes, for example. A tree class may have inOrderIterator(), preOrderIterator(), and postOrderIterator() methods, all of which returned objects that implemented the Iterator interface, but those objects would traverse the tree nodes “in order,” root first or depth first. You can define an iterator to handle whatever ordering you like—even random ordering is okay—as long as the ordering is specified in the class contract. Some iterators—such as the tree iterators just discussed—may make requirements on the underlying data structure, however.

Iterators can also modify the underlying data structure. Some of the java.util.Iterator implementers support a remove() operation that lets you remove the current element from the underlying data structure, for example.

Iterator is used all over the ConcreteTable, but most important, it’s used to examine or modify the rows. Listing 4-11 defines the Cursor interface used by the Table, but let’s look at how it’s used before looking at the code. The following method prints all the rows of the Table passed into the method as an argument:

public void print( Table someTable )

{

Cursor current = someTable.rows();

while( current.advance() )

{ for( java.util.Iterator columns = current.columns(); columns.hasNext(); )

System.out.print( (String) columns.next() + " " );

}

}

The Cursor knows about columns, so you could print only the first- and last-name fields of some Table as follows:

public void printFirstAndLast( Table someTable )

{

for(Cursor current = someTable.rows(); current.advance() ;)

{

System.out.print( current.column("first").toString() + " " +

current.column("last").toString() + " " );

}

}

A Cursor can also update the contents of a row or delete the current row. (You actually modify the underlying table when you use these methods.) The following code changes the names of all people named Smith to Jones. It also deletes all rows representing people named Doe:

public void modify( Table people )

{

for( Cursor current = people.rows(); current.advance() ;)

{ if( current.column("last").equals( "Smith" ) )

current.update("last", "Jones" );

else if( current.column("last").equals( "Doe" ) )

current.delete();

}

}

This functionality works properly with the transaction system that I’ll discuss later in the chapter, so you can roll back modifications if necessary.

Listing 4-11. Cursor.java

1 package com.holub.database;

2

3 import java.util.Iterator;

4 import java.util.NoSuchElementException;

5

6 /** The Cursor provides you with a way of examining the

7 * tables that you create and the tables that are created

8 * as a result of a select or join operation. This is

9 * an "updateable" cursor, so you can modify columns or

10 * delete rows via the cursor without problems. (Updates

11 * and deletes done through the cursor <em>are</em> handled

12 * properly with respect to the transactioning system, so

13 * they can be committed or rolled back.)

14 */

15

16 public interface Cursor

17 {

18 /** Metadata method required by JDBC wrapper--Return the name

19 * of the table across which we're iterating. I am deliberately

20 * not allow access to the Table itself, because this would

21 * allow uncontrolled modification of the table via the

22 * iterator.

23 * @return the name of the table or null if we're iterating

24 * across a nameless table like the one created by

25 * a select operation.

26 */

27 String tableName();

28

29 /** Advances to the next row, or if this iterator has never

30 * been used, advances to the first row.

31 * @throws NoSuchElementException if this call would advance

32 * past the last row.

33 * @return true if the iterator is positioned at a valid

34 * element after the advance.

35 */

36 boolean advance() throws NoSuchElementException;

37

38 /** Return the contents of the requested column of the current

39 * row. You should

40 * treat the cells accessed through this method as read only

41 * if you ever expect to use the table in a thread-safe

42 * environment. Modify the table using {@link Table#update}.

43 *

44 * @throws IndexOutOfBoundsException --- the requested column

45 * doesn't exist.

46 */

47

48 Object column( String columnName );

49

50 /** Return a java.util.Iterator across all the columns in

51 * the current row.

52 */

53 Iterator columns();

54

55 /** Return true if the iterator is traversing the

56 * indicated table.

57 */

58 boolean isTraversing( Table t );

59

60 /** Replace the value of the indicated column of the current

61 * row with the indicated new value.

62 *

63 * @throws IllegalArgumentException if the newValue is

64 * the same as the object that's being updated.

65 *

66 * @return the former contents of the now-modified cell.

67 */

68 Object update( String columnName, Object newValue );

69

70 /** Delete the row at the current cursor position.

71 */

72 void delete();

73 }

The comments in Listing 4-11 describe the remainder of the methods in the interface adequately, so let’s move onto the implementation in Listing 4-12.

Cursors are extracted from a Table using a classic Gang-of-Four Abstract Factory. The Table interface defines an Abstract Cursor Factory; the ConcreteTable, which implements that interface, is the Concrete Factory; the Cursor interface defines the Abstract Product; the Concrete Product an instance of the Results class on line 161 of Listing 4-12. As you saw in the earlier examples, you get a Cursor by calling rows() (Listing 4-12, line 157), which just instantiates and returns a Results object.

Looking at the implementation, the Results object traverses the List of rows using a standard java.util.Iterator. The advance() method on line 169 of Listing 4-12 just delegates to the Iterator, for example.

The interesting methods are update() and delete(), at the end of the listing. Other than do what their names imply, both methods set the table’s “dirty” flag to indicate that something has changed. They also register the operation with the transaction-processing system (described on p. 226) by calling registerUpdate() or registerDelete().

Listing 4-12. ConcreteTable.java Continued: Traversing and Modifying

154 //----------------------------------------------------------------------

155 // Traversing and cursor-based Updating and Deleting

156 //

157 public Cursor rows()

158 { return new Results();

159 }

160 //----------------------------------------------------------------------

161 private final class Results implements Cursor

162 { private final Iterator rowIterator = rowSet.iterator();

163 private Object[] row = null;

164

165 public String tableName()

166 { return ConcreteTable.this.tableName;

167 }

168

169 public boolean advance()

170 { if( rowIterator.hasNext() )

171 { row = (Object[]) rowIterator.next();

172 return true;

173 }

174 return false;

175 }

176

177 public Object column( String columnName )

178 { return row[ indexOf(columnName) ];

179 }

180

181 public Iterator columns()

182 { return new ArrayIterator( row );

183 }

184185 public boolean isTraversing( Table t )

186 { return t == ConcreteTable.this;

187 }

188

189 // This method is for use by the outer class only and is not part

190 // of the Cursor interface.

191 private Object[] cloneRow()

192 { return (Object[])( row.clone() );

193 }

194

195 public Object update( String columnName, Object newValue )

196 {

197 int index = indexOf(columnName);

198

199 // The following test is required for undo to work correctly.

200 if( row[index] == newValue )

201 throw new IllegalArgumentException(

202 "May not replace object with itself");

203

204 Object oldValue = row[index];

205 row[index] = newValue;

206 isDirty = true;

207

208 registerUpdate( row, index, oldValue );

209 return oldValue;

210 }

211

212

213 public void delete()

214 { Object[] oldRow = row;

215 rowIterator.remove();

216 isDirty = true;

217

218 registerDelete( oldRow );

219 }

220 }

Passive Iterators

The iterators we’ve been looking at are called external (or active) iterators because they’re separate objects from the data structure they’re traversing.

The storeRow() method of the Builder discussed in the previous section is an example of another sort of Iterator. Rather than creating an iterator object that’s external to the data structure, the export() method effectively implements the traversal mechanism inside the data-structure class (the ConcreteTable). The export() method iterates by calling the Exporter’s storeRow() method multiple times. We’re still visiting every node in turn, so this is still the Iterator pattern, but things are now inside out.

This kind of iterator is known as an internal (or passive) iterator. The idea of a passive iterator is that you provide some data structure with a Command object, one method of which is called repetitively (once for each node) and is passed the “current” node.

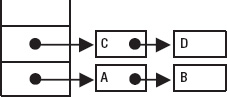

Passive iterators are quite useful when the data structure is inherently difficult to traverse. Consider the case of a simple binary tree such as the one shown in Listing 4-13. A passive iterator is a simple recursive function, easy to write. The traverseInOrder method (Listing 4-13, line 31) demonstrates a passive iterator. This textbook recursive-traversal algorithm just passes each node to the Examiner object (declared just above this method, on line 23) in turn. You could print all the nodes of a tree like this:

Tree t = new Tree();

//...

t.traverse

( new Examiner()

{ public void examine( Object currentNode )

{ System.out.println( currentNode.toString() );

}

}

);

Now consider the implementation of an active (external) iterator across a tree. The Tree class’s iterator() method (line 49) returns a standard java.util.Iterator that visits every node of the tree in order. My main point in showing you this code is to show you how opaque this code is. The algorithm is short but difficult to both understand and write. (In essence, I use a stack to keep track of parent nodes as I traverse the tree.)

Listing 4-13. Tree.java: A Simple Binary-Tree Implementation

1 import java.util.*;

2

3 /** This class demonstrates how to make both internal and external

4 * iterators across a binary tree. I've deliberately used a tree

5 * rather than a linked list to make the external in-order iterator

6 * more complicated.

7 */

8

9 public class Tree

10 {

11 private Node root = null;

12

13 private static class Node

14 { public Node left, right;

15 public Object item;

16 public Node( Object item )

17 { this.item = item;

18 }

19 }

20 //----------------------------------------------------------------- 21 // A Passive (internal) iterator

22 //

23 public interface Examiner

24 { public void examine( Object currentNode );

25 }

26

27 public void traverse( Examiner client )

28 { traverseInOrder( root, client );

29 }

30

31 private void traverseInOrder(Node current, Examiner client)

32 { if( current == null )

33 return;

34

35 traverseInOrder(current.left, client);

36 client.examine (current.item );

37 traverseInOrder(current.right, client);

38 }

39

40 public void add( Object item )

41 { if( root == null )

42 root = new Node( item );

43 else

44 insertItem( root, item );

45 }

46 //-----------------------------------------------------------------

47 // An Active (external) iterator

48 //

49 public Iterator iterator()

50 { return new Iterator()

51 { private Node current = root;

52 private LinkedList stack = new LinkedList();

53

54 public Object next()

55 {

56 while( current != null )

57 { stack.addFirst( current );

58 current = current.left;

59 }

60

61 if( stack.size() != 0 )

62 { current = (Node)

63 ( stack.removeFirst() );

64 Object toReturn=current.item;

65 current = current.right;

66 return toReturn;

67 } 68

69 throw new NoSuchElementException();

70 }

71

72 public boolean hasNext()

73 { return !(current==null && stack.size()==0);

74 }

75

76 public void remove()

77 { throw new UnsupportedOperationException();

78 }

79 };

80 }

81 //-----------------------------------------------------------------

82

83 private void insertItem( Node current, Object item )

84 { if(current.item.toString().compareTo(item.toString())>0)

85 { if( current.left == null )

86 current.left = new Node(item);

87 else

88 insertItem( current.left, item );

89 }

90 else

91 { if( current.right == null )

92 current.right = new Node(item);

93 else

94 insertItem( current.right, item );

95 }

96 }

97

98 public static void main( String[] args )

99 { Tree t = new Tree();

100 t.add("D");

101 t.add("B");

102 t.add("F");

103 t.add("A");

104 t.add("C");

105 t.add("E");

106 t.add("G");

107

108 Iterator i = t.iterator();

109 while( i.hasNext() )

110 System.out.print( i.next().toString() );

111

112 System.out.println("");

113

114 t.traverse( new Examiner()115 { public void examine(Object o)

116 { System.out.print( o.toString() );

117 }

118 } );

119 System.out.println("");

120 }

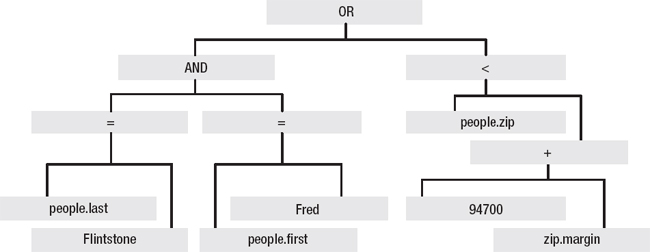

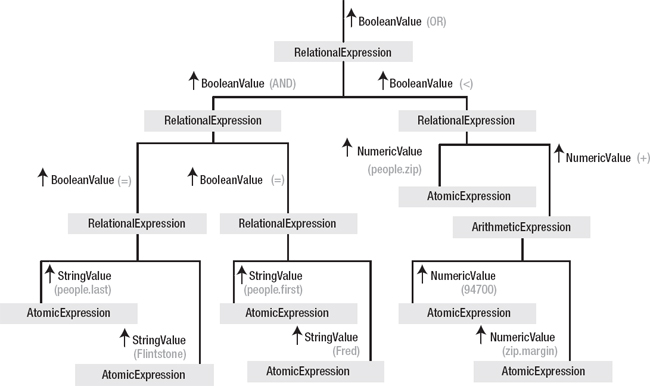

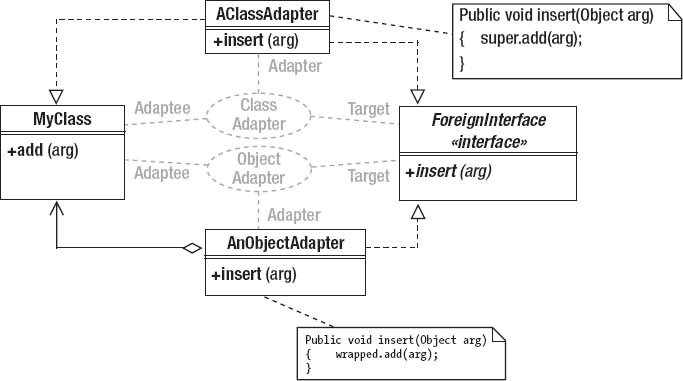

121 }