It is all about the data. There is value in the data, and as more information becomes available through mobile and IoT devices, we start to imagine what could be realized with the right data.

As with inventions, a need or purpose is what triggers ideas about what can be developed. Several areas already follow this pattern with data. Sports, retail, health care, manufacturing, marketing, and plenty of other industries see data as a company asset. The enterprise realizes the importance of data and has many data sources, but it needs direction on what to do with this information. Analyzing the data and knowing which questions to ask is where the competitive edge comes from.

Over the past few years, the office of the Chief Data Officer (CDO) has become an integral part of more enterprises. The role is to manage and provide governance for the highly valuable data assets of the company. Managing the data that flows through the company, and recognizing that there is more information to gather, requires a team focused strictly on the business needs for data, on architecting where data is available, and on ensuring the quality of the data.

DBAs who have been working on data services and other data workflows can find ways to transform into data professionals. They can provide guidance on how the data is captured, used, and distributed to other applications, and they bring the know-how of database processes needed to work with the data efficiently.

Data Quality

Data coming in through different sources will have to go through data quality processes. Controls and tagging are applied to the data to ensure that it is accurate, classified, and complete. Companies will have various types of data, all needing governance on how the data can be used and what needs to be secured. External data sources are also available that can fill in gaps and supply additional attributes to provide a complete data set.

Master Data Management (MDM) is generally considered the overall body of work for data quality. MDM can be part of any system that pulls data through work processes and offers data services as the system of record, or golden data set. There needs to be a catalog that provides details about the data classification, how the data should be used, and the source of the data. DBAs already support these processes and the management of data in the databases. They have the insight to improve data processing, and they know where data is available to include additional attributes.

Knowing the data models and data flows helps DBAs transform into data professionals who plan, develop, and integrate processes to support MDM and especially data quality. Data quality is a continuous process because new data is always arriving and new sources are made available. The quality work follows a development process, which can be viewed as the following steps:

Gather requirements for the needed data

Gather information about the sources

Write procedures to bring in data

Write procedures to fill in the required attributes and gaps

Write procedures to validate the data (remove duplicates, incomplete data)

Capture data issues

Write processes to address data issues

Perform cleansing processes

Validate against data requirements

This list includes writing the procedures and running through the process, which together make up the development and testing of the data quality workflow. After being tested, validated, and deployed, the procedures would run automatically. Some data issues might require manual intervention to figure out what the issue is, but the fix can then be included in the cleansing process. The business requirements can change, which means that the procedures and cleansing processes will need to be modified.
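The validation and issue-capture steps in the list above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the field names, the `REQUIRED_FIELDS` tuple, and the `validate_records` function are hypothetical, and a real workflow would more likely run as database procedures against the actual data requirements.

```python
from dataclasses import dataclass, field

# Hypothetical data requirements: every record needs these attributes.
REQUIRED_FIELDS = ("customer_id", "name", "email")


@dataclass
class QualityResult:
    clean: list = field(default_factory=list)   # records that passed validation
    issues: list = field(default_factory=list)  # captured data issues for review


def validate_records(records, required=REQUIRED_FIELDS, key="customer_id"):
    """Split incoming records into clean rows and captured issues."""
    result = QualityResult()
    seen = set()
    for record in records:
        # Incomplete data: a required attribute is missing or empty.
        missing = [f for f in required if record.get(f) in (None, "")]
        if missing:
            result.issues.append({"record": record, "issue": "incomplete", "fields": missing})
            continue
        # Duplicates: the same key has already been accepted.
        if record[key] in seen:
            result.issues.append({"record": record, "issue": "duplicate", "fields": [key]})
            continue
        seen.add(record[key])
        result.clean.append(record)
    return result


incoming = [
    {"customer_id": 1, "name": "Ana", "email": "ana@example.com"},
    {"customer_id": 1, "name": "Ana", "email": "ana@example.com"},
    {"customer_id": 2, "name": "Raj", "email": ""},
]
result = validate_records(incoming)
print(len(result.clean), "clean,", len(result.issues), "issues captured")
```

The captured issues are kept rather than discarded, so they can be reviewed manually and then folded into the cleansing process when the fix is understood.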

MDM is not a simple decision and requires effort from everyone involved in the data sources and the use of the data. These projects are developed based on the data sources needed, the business questions, and the processes that get the needed information into the right hands to act on it.

Tip

MDM is not just the job of the DBA, and it is difficult to do data cleansing if other processes are not on board with the quality of the data. Business requirements, development effort, and data professionals, along with the business, all need to work together to support MDM.

Working on these processes and developing code to be executed as part of data quality is a database developer type of role, and it is a transition for a DBA to focus specifically on these areas. The database system is going to require less hands-on maintenance and administration, which opens up this opportunity to look at data processes. Providing data sources and services is going to be critical for the enterprise’s business processes, and understanding the available data is going to lead to better questions. Better questions about the data will lead to new opportunities and hopefully provide a competitive edge with customers and other business partners.

Data quality and governance also supply the analytics and analysis of the data. Analytics are performed on many data sets to get answers to questions. There are plenty of uses for this in companies, but as an example, think of your spending habits and location details being collected by credit card issuers to detect potential misuse of credit cards and identity theft.

Sources that are not the golden source of data, or data that has not been cleansed, will not produce accurate analytics or analysis. If there are gaps in the location data for credit card use, the analysis might not be able to show that the card is being used in two different places, which could indicate fraud or other issues with the credit card account. Early detection depends on how fast the data can move through the analysis process. This is a logical place for a DBA to step in to help with performance and even look at how the analytics processes are performing. The DBA's knowledge can also be applied to cleansing and transforming the data that feeds the analytics.
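To make the credit card example concrete, here is a minimal Python sketch of how a location gap can hide a conflict. The record layout (`card`, `city`, `time`), the one-hour window, and the `flag_location_conflicts` function are assumptions made for illustration; real fraud detection is considerably more involved.

```python
from datetime import datetime, timedelta


def flag_location_conflicts(transactions, window=timedelta(hours=1)):
    """Return pairs of consecutive transactions on the same card that
    occur in different cities within the given time window."""
    by_card = {}
    for tx in transactions:
        by_card.setdefault(tx["card"], []).append(tx)

    flagged = []
    for txs in by_card.values():
        txs.sort(key=lambda t: t["time"])
        for prev, cur in zip(txs, txs[1:]):
            # A missing city cannot be compared, so the pair is skipped.
            if not prev["city"] or not cur["city"]:
                continue
            if prev["city"] != cur["city"] and cur["time"] - prev["time"] <= window:
                flagged.append((prev, cur))
    return flagged


t0 = datetime(2024, 1, 1, 12, 0)
complete = [
    {"card": "A", "city": "Denver", "time": t0},
    {"card": "A", "city": "Boston", "time": t0 + timedelta(minutes=20)},
]
with_gap = [
    {"card": "A", "city": "Denver", "time": t0},
    {"card": "A", "city": None, "time": t0 + timedelta(minutes=20)},
]

print(len(flag_location_conflicts(complete)))  # conflict is visible
print(len(flag_location_conflicts(with_gap)))  # gap hides the conflict
```

With complete data the two-city conflict is flagged. With the location missing on the second transaction, nothing is flagged, which is why cleansed and complete data matters before the analytics run.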

Data quality frameworks are available to work from, and they help match the data quality steps with business requirements. Figure 7-1 shows the process of going through a framework for data quality.

Figure 7-1 Data Quality Steps

Classification will also identify data that needs specific tagging as personal or sensitive. Classifying data allows other security and business processes to use that knowledge to make sure the data is properly secured and only made available to authorized users.
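As a rough illustration of how a classification can drive access decisions, the following Python sketch models a tiny catalog. The sensitivity levels, the catalog entries, and the `can_read` check are all hypothetical; actual catalogs and security tools define their own classification schemes.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    # Hypothetical classification levels, ordered from least to most restricted.
    PUBLIC = 1
    INTERNAL = 2
    PERSONAL = 3


@dataclass(frozen=True)
class CatalogEntry:
    name: str
    source: str
    sensitivity: Sensitivity
    system_of_record: bool


# Hypothetical catalog keyed by attribute name.
CATALOG = {
    "product.list_price": CatalogEntry("product.list_price", "erp_db", Sensitivity.PUBLIC, True),
    "customer.segment": CatalogEntry("customer.segment", "crm_db", Sensitivity.INTERNAL, True),
    "customer.email": CatalogEntry("customer.email", "crm_db", Sensitivity.PERSONAL, True),
}


def can_read(clearance: Sensitivity, attribute: str) -> bool:
    """Allow access only when the clearance covers the attribute's classification."""
    return clearance >= CATALOG[attribute].sensitivity


for attribute in CATALOG:
    print(attribute, can_read(Sensitivity.INTERNAL, attribute))
```

The point of the sketch is that the access rule never looks at the data itself, only at the classification recorded in the catalog, which is what lets security tooling reuse it.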

The CDO office and teams work to handle MDM and the data quality processes. They work with the business to understand what data sources are needed and the business requirements for data analytics. They take the sources of the data and provide the governance and workflows. The classification of the data is not just important for the business but extremely beneficial for technology processes. Classification can provide additional information about the data source, whether it is the source of record, and what security applies. Security tools can use the classification of the data for monitoring and access controls.

Data Integrations

The data sources do not just sit alone. They become even more powerful when they work together. There are enough challenges with data quality and understanding the details about the source of the data; now combine that with data coming from different platforms and with different descriptions of the data. Hopefully the CDO will be working toward standardizing the data definitions and classifications, so that the only remaining challenge is combining the data into a single source without losing data changes from the system of record.

DBAs have an opportunity here to maintain data sources, work out how to integrate the data, and design processes that allow for simple integrations with other sources. Data integration tools are available to catalog the business rules and keep the definitions of the integration process. They have ways to implement data quality procedures and keep system of record information. The DBA transformation can come with learning new tools such as Oracle Data Integrator (ODI). ODI is a tool specifically for creating the processes and procedures for data integrations. Integration is an ongoing process, and there is consistently new data that is required by the business or used to enhance current sources. These tools are additional to the database tools, but with an understanding of database objects, they are something that DBAs can master.

Not all of the data sources are going to be in databases. There are going to be different forms of data, such as APIs or files. There might be extract, transform, load (ETL) processes needed to use the source. It also helps to use the data from the sources closest to the golden copy to prevent duplication and synchronization issues.
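One way to picture combining sources while favoring the one closest to the golden copy is the short Python sketch below. The source names, the priority order, and the `merge_sources` function are assumptions for illustration only; an integration tool such as ODI would express these rules in its own way.

```python
# Hypothetical source names, ordered from closest to the golden copy to furthest.
SOURCE_PRIORITY = ["crm_db", "partner_api", "csv_export"]


def merge_sources(records_by_source, key="customer_id", priority=SOURCE_PRIORITY):
    """Combine records from several sources into one record per key.

    Lower-priority sources are applied first so that values from sources
    closer to the golden copy overwrite them. Empty values never overwrite
    existing data, so a lower-priority source can still fill gaps.
    """
    merged = {}
    for source in reversed(priority):
        for record in records_by_source.get(source, []):
            target = merged.setdefault(record[key], {})
            target.update({k: v for k, v in record.items() if v not in (None, "")})
    return merged


records_by_source = {
    "crm_db": [{"customer_id": 1, "email": "ana@example.com", "phone": None}],
    "csv_export": [{"customer_id": 1, "email": "old@example.com", "phone": "555-0100"}],
}
merged = merge_sources(records_by_source)
print(merged[1])
```

In this example the email comes from the system of record while the phone number, missing there, is filled in from the file export.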

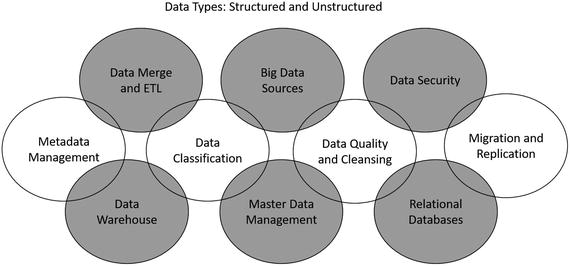

Figure 7-2 Data Integration Data and Processes

Figure 7-2 is a way to look at the processes and data sources that can make up data integrations. Designing a robust integration takes quite a bit of effort, because it takes in all of the details just discussed for data quality, conversations with the business to understand the data strategies, and exploration of the different data sources available.

Data professionals are going to work with data integration tools, test data quality, maintain business rules, and continue to review the rules and data sources. The ETL processes and the APIs that are produced are going to be constantly monitored, and challenges come with real-time data and the speed at which the data is needed for the business.

Big Data

Big Data is not about large amounts of data. It is about data coming in structured or unstructured form with high velocity. Big Data is going to use other technology platforms such as Hadoop, NoSQL, and Massively Parallel Processing (MPP) databases. The data can be collected from sources such as IoT devices, social media, and even several public data sets.

DBAs might already be pulling Big Data sources into relational databases to provide complete data sets to other systems. The opportunity here for the DBA is to learn the Big Data technologies. Hadoop is a platform that can process the data coming in from devices and other data sets. The focus is not necessarily the administration of the technologies or platforms, but how to use them and how to use the data sets to integrate with other database systems.

As we look at the data professional role, notice that these processes start with data quality, then proceed to data integrations, and then repeat data quality. Each step along the way might involve looking at data quality. The velocity and amount of data that comes in with Big Data sources challenge these processes: does data quality matter for the whole set, or are only certain pieces of the data important? The data might be used for analytics in a way where the processes just need to run, and not all of them require the complete data set. There are several details to consider when looking at these data sets, including whether they are available to augment the existing data collected through another Big Data process or held in relational databases. Also think about the security that is needed for the data. If it is public information, it might not need security processes in place until it is integrated with other data. This integration might become the company’s intellectual property.

Business intelligence is based on all of the data points that are coming in from Big Data sources and other data integrations, and algorithms can then help provide the data science around the details. Business intelligence is about asking the right questions and providing answers, or at least starting to provide direction for making decisions.

Data professionals manage the Big Data processes, using the new tools that come with them. Understanding how to use Hadoop, analytical processes, and NoSQL databases is going to be key for this area. Public data sources, along with the data that is collected, are going to be available to use as part of the business strategies for data and integrations.

Conclusion

DBAs know where data is available, or at least have an idea of when data moves. It is normally a process that they have been involved in from the beginning. Even if their involvement was not about what the data is used for, it could have been to improve the speed of the process, help make the appropriate connections, or load data into the database.

These interactions with the data are a foundation for moving more into the data field. However, most of the movement, migrations, and loading of the data should become automated processes. The databases still house the information, and being able to catalog the data and provide the metadata details builds up the picture of what the data is about and how it can be used.

The teams under the CDO are looking for that knowledge and understanding about how to use, move, and integrate data to meet business needs. The data is a valuable company asset that can be underutilized if the knowledge is not there on how to use it or what questions it can answer.

Information is captured at a single point in time; these are our data records. These records get fed into a system that gathers details around the information and provides knowledge and analysis about what the data means, how it applies to the business, and what can be learned from it. This is the knowledge that applications try to capture and translate into business processes. As data points change over time, they are captured and provide combined sets of data for decision making and business intelligence.

Collecting the data and applying the business rules give us insight into the data. The knowledge of the data builds over time as information is collected. Exploring the sources and deciding how they should be used are part of that knowledge, and the processes and workflows provide the other pieces for understanding the sources. It is the applications and business processes that make it possible to know what the data is telling us and how it applies to the business and even to technology processes.

DBAs have the opportunity to work even closer with the data. Data quality and integrations are part of the data processes that are run to provide accurate and complete data, answer questions, and provide business knowledge back to the business. Managing and using these data sources is what data professionals do. The DBA transformation comes with wanting to support the business strategies with the business intelligence provided by efficient, standardized, and accurate data processes. The data professional will perform these tasks to cleanse the data and attach the data sources to the correct workflows. Automation of the processes will not remove the need for DBAs or data professionals, because the data flows still need to be understood and mapped, and learning these processes is part of the education to become a data professional.