The xtensor library is a C++ library for numerical analysis with multidimensional array expressions. Containers of xtensor are inspired by NumPy, the Python array programming library. ML algorithms are mainly described using Python and NumPy, so this library can make it easier to move them to C++. The following container classes implement multidimensional arrays in the xtensor library.

The xarray type is a dynamically sized multidimensional array, as shown in the following code snippet:

std::vector<size_t> shape = { 3, 2, 4 };

xt::xarray<double, xt::layout_type::row_major> a(shape);

The xtensor type is a multidimensional array whose number of dimensions is fixed at compile time. The exact size of each dimension can be set at initialization, as shown in the following code snippet:

std::array<size_t, 3> shape = { 3, 2, 4 };

xt::xtensor<double, 3> a(shape);

The xtensor_fixed type is a multidimensional array whose full shape (the size of every dimension) is fixed at compile time, as shown in the following code snippet:

xt::xtensor_fixed<double, xt::xshape<3, 2, 4>> a;

The xtensor library also implements arithmetic operators with expression template techniques, as Eigen does (this is a common approach for math libraries implemented in C++). As a result, computation happens lazily: an arithmetic expression only describes the operation, and the actual result is calculated when the whole expression is evaluated, for example, when it is assigned to a container. The containers themselves are also expressions. The library provides the xt::eval function to force the evaluation of an expression.
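To make the idea of lazy evaluation more concrete, here is a minimal sketch of the expression template technique in plain C++. It is not how xtensor is implemented; the names Vec, Sum, add, and evaluate are hypothetical and exist only for this illustration. The point is that building an expression stores references only, and elements are computed when they are requested.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical minimal container: it owns its data and is itself an "expression".
struct Vec {
    std::vector<double> data;
    double operator[](std::size_t i) const { return data[i]; }
    std::size_t size() const { return data.size(); }
};

// Node that represents lhs + rhs without computing anything yet.
template <class L, class R>
struct Sum {
    const L& lhs;
    const R& rhs;
    // An element is computed only when it is requested.
    double operator[](std::size_t i) const { return lhs[i] + rhs[i]; }
    std::size_t size() const { return lhs.size(); }
};

// Building the expression is cheap: it only stores two references.
template <class L, class R>
Sum<L, R> add(const L& lhs, const R& rhs) {
    assert(lhs.size() == rhs.size());
    return Sum<L, R>{lhs, rhs};
}

// Forces the evaluation of any expression into a concrete container.
// This plays a role similar to the one xt::eval plays in the text above.
template <class E>
Vec evaluate(const E& expr) {
    Vec out;
    out.data.reserve(expr.size());
    for (std::size_t i = 0; i < expr.size(); ++i) {
        out.data.push_back(expr[i]);
    }
    return out;
}
```

Because a Sum object only refers to its operands, changing an operand after the expression is built changes what the expression yields. This is one reason to force evaluation when a stable result is needed.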

There are different kinds of container initialization in the xtensor library.

Initialization of xtensor arrays can be done with C++ initializer lists, as follows:

xt::xarray<double> arr1{{1.0, 2.0, 3.0},

{2.0, 5.0, 7.0},

{2.0, 5.0, 7.0}}; // initialize a 3x3 array

The xtensor library also has builder functions for special tensor types. The following snippet shows some of them:

std::vector<size_t> shape = {2, 2};

xt::ones<double>(shape); // array filled with ones

xt::zeros<double>(shape); // array filled with zeros

xt::eye<double>(shape); // matrix with ones on the diagonal

Also, we can map existing C++ arrays and containers into an xtensor container with the xt::adapt function. This function returns an object that uses the memory and values of the underlying object instead of copying them, as shown in the following code snippet:

std::vector<float> data{1,2,3,4};

std::vector<size_t> shape{2,2};

auto data_x = xt::adapt(data, shape);
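The following plain C++ sketch illustrates what "uses the memory of the underlying object" means. It is only a conceptual model, not xtensor code: MatrixRef and adapt_2d are hypothetical names, and the sketch assumes a contiguous row-major buffer.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical non-owning 2D adaptor: it stores a pointer, not a copy.
struct MatrixRef {
    float* data;
    std::size_t rows;
    std::size_t cols;

    // Element access writes straight into the adapted buffer.
    float& operator()(std::size_t r, std::size_t c) const {
        assert(r < rows && c < cols);
        return data[r * cols + c];
    }
};

// Wraps an existing vector as a rows x cols matrix without copying it.
MatrixRef adapt_2d(std::vector<float>& buffer, std::size_t rows, std::size_t cols) {
    assert(buffer.size() == rows * cols);
    return MatrixRef{buffer.data(), rows, cols};
}
```

With such an adaptor, a write through the adapted object is visible in the original vector, and the adaptor becomes invalid if the vector is destroyed or reallocated.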

We can use direct access to container elements, with the () operator, to set or change tensor values, as shown in the following code snippet:

std::vector<size_t> shape = {3, 2, 4};

xt::xarray<float> a = xt::ones<float>(shape);

a(2,1,3) = 3.14f;
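To show how a multi-index such as (2, 1, 3) can map to a position in memory, here is a small sketch in plain C++. It assumes a contiguous row-major buffer with no padding, which is the layout named by xt::layout_type::row_major in the earlier snippet; the function row_major_offset is a hypothetical helper, not part of xtensor.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Computes the flat offset of a multi-index in a contiguous row-major array.
// In row-major order, the last index varies fastest.
std::size_t row_major_offset(const std::vector<std::size_t>& shape,
                             const std::vector<std::size_t>& index) {
    assert(shape.size() == index.size());
    std::size_t offset = 0;
    for (std::size_t d = 0; d < shape.size(); ++d) {
        assert(index[d] < shape[d]);
        // Horner-style accumulation: scale by the current extent, then add the index.
        offset = offset * shape[d] + index[d];
    }
    return offset;
}
```

For the shape {3, 2, 4} used above, the index (2, 1, 3) maps to offset 2 * 8 + 1 * 4 + 3 = 23, which is the last of the 24 elements.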

The xtensor library implements element-wise arithmetic operations through overloads of standard C++ arithmetic operators such as +, -, and *. To use linear algebra operations such as the dot product, we have to build the application with the library named xtensor-blas. These operations are declared in the xt::linalg namespace.

The following code shows the use of arithmetic operations with the xtensor library:

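// Store the results in containers: with auto, these would be lazy
// expressions that cannot be assigned to later.
xt::xarray<double> a = xt::random::rand<double>({2,2});

xt::xarray<double> b = xt::random::rand<double>({2,2});

xt::xarray<double> c = a + b;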

a -= b;

c = xt::linalg::dot(a,b);

c = a + 5;

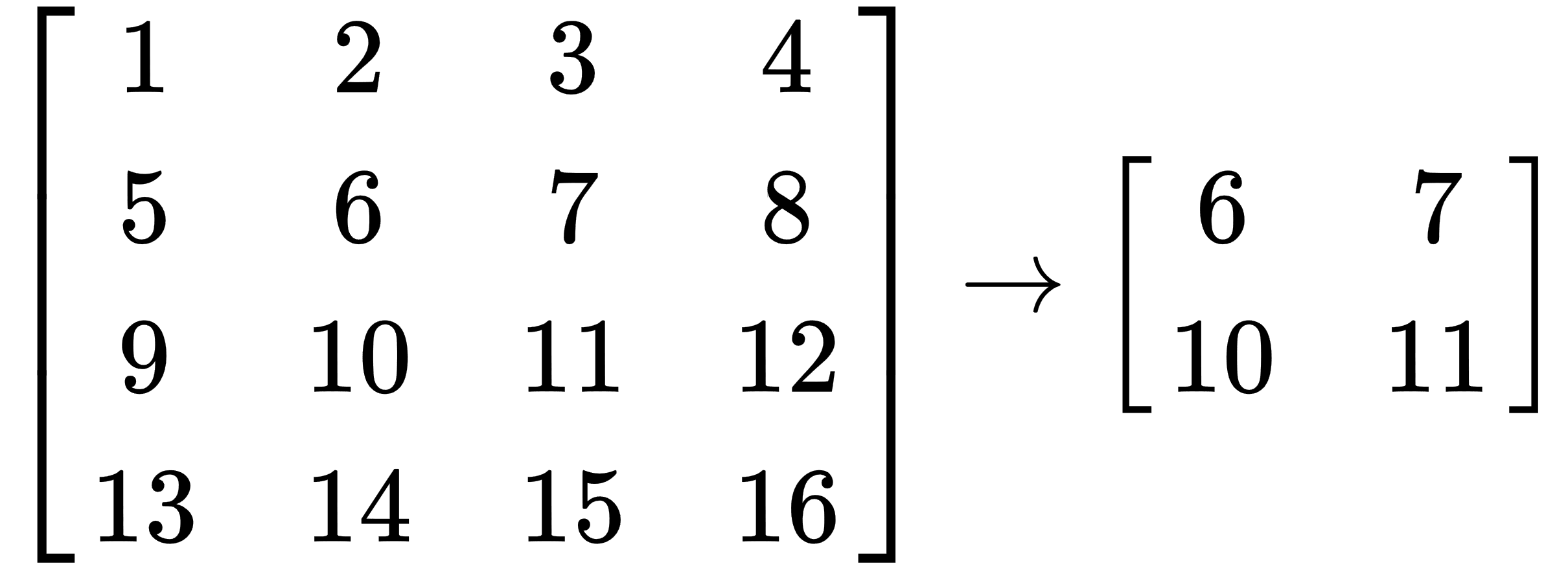

To get partial access to the xtensor containers, we can use the xt::view function. The following sample shows how this function works:

xt::xarray<int> a{{1, 2, 3, 4},

{5, 6, 7, 8},

{9, 10, 11, 12},

{13, 14, 15, 16}};

auto b = xt::view(a, xt::range(1, 3), xt::range(1, 3));

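This operation takes a rectangular block from the tensor. Each xt::range(1, 3) selects indices 1 and 2 (the upper bound is excluded), so the view covers rows 1 to 2 and columns 1 to 2, that is, the elements {{6, 7}, {10, 11}}.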
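The index arithmetic behind such a block can be sketched in plain C++ as follows. This is only a conceptual model under stated assumptions: BlockView is a hypothetical type that works on a vector of vectors and uses half-open [begin, end) ranges, whereas xtensor views work on its own containers.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

using Matrix = std::vector<std::vector<int>>;

// Hypothetical non-owning window over a rectangular block of a matrix.
// Row and column ranges are half-open: [begin, end).
struct BlockView {
    Matrix& source;
    std::size_t row_begin, row_end;
    std::size_t col_begin, col_end;

    std::size_t rows() const { return row_end - row_begin; }
    std::size_t cols() const { return col_end - col_begin; }

    // Local (r, c) is shifted by the block origin before indexing the source.
    int& operator()(std::size_t r, std::size_t c) const {
        assert(r < rows() && c < cols());
        return source[row_begin + r][col_begin + c];
    }
};
```

Because the window refers to the original storage, writing through it modifies the source matrix; no elements are copied when the block is created.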

The xtensor library implements automatic broadcasting in most cases. When an operation involves two arrays with different numbers of dimensions, it broadcasts the array with fewer dimensions across the leading dimensions of the other array. A dimension of size 1 is likewise stretched to match the corresponding dimension of the other array, so we can directly add a vector to a matrix. The following code sample adds a 2x1 column to a 2x2 matrix:

auto m = xt::random::rand<double>({2,2});

auto v = xt::random::rand<double>({2,1});

auto c = m + v;
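The shape of a broadcast result can be worked out with a short helper. The sketch below assumes NumPy-style broadcasting rules (shapes are aligned from the last dimension, and two sizes are compatible when they are equal or one of them is 1); broadcast_shape is a hypothetical function written for illustration, not an xtensor API.

```cpp
#include <cassert>
#include <cstddef>
#include <stdexcept>
#include <utility>
#include <vector>

// Returns the shape that results from broadcasting shapes a and b together.
// Throws std::invalid_argument when the shapes are not compatible.
std::vector<std::size_t> broadcast_shape(std::vector<std::size_t> a,
                                         std::vector<std::size_t> b) {
    // Make a the longer shape, then pad b with leading ones.
    if (a.size() < b.size()) {
        std::swap(a, b);
    }
    b.insert(b.begin(), a.size() - b.size(), 1);
    assert(a.size() == b.size());

    std::vector<std::size_t> out(a.size());
    for (std::size_t i = 0; i < a.size(); ++i) {
        if (a[i] == b[i] || b[i] == 1) {
            out[i] = a[i];
        } else if (a[i] == 1) {
            out[i] = b[i];
        } else {
            throw std::invalid_argument("shapes are not broadcastable");
        }
    }
    return out;
}
```

For the sample above, the shapes {2, 2} and {2, 1} broadcast to {2, 2}: the single column of v is reused for every column of m.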