Chapter 1: DevOps, SRE, and Google Cloud Services for CI/CD

DevOps is a mindset change that tries to balance release velocity with system reliability. It aims to increase an organization's ability to continuously deliver reliable applications and services at a high velocity when compared to traditional software development processes.

A common misconception about DevOps is that it is a technology. Instead, DevOps is a set of supporting practices (such as build, test, and deployment) that combines software development and IT operations. These practices establish a culture that breaks down the metaphorical wall between developers (who aim to push new features to production) and system administrators or operators (who aim to keep the code running in production).

Site Reliability Engineering (SRE) is Google's approach to align incentives between development and operations that are key to building and maintaining reliable engineering systems. SRE is a prescriptive way to implement DevOps practices and principles. Through these practices, the aim is to increase overall observability and reduce the level of incidents. The introduction of a Continuous Integration/Continuous Delivery (CI/CD) pipeline enables a robust feedback loop in support of key SRE definitions such as toil, observability, and incident management.

CI/CD is a key DevOps practice that helps to achieve this mindset change. CI/CD requires a strong emphasis on automation to build reliable software faster (in terms of delivering/deploying to production). Software delivery of this type requires agility, which is often achieved by breaking down existing components.

A cloud-native development paradigm is one where complex systems are decomposed into multiple services (such as microservices architecture). Each service can be independently tested and deployed into an isolated runtime. Google Cloud Platform (GCP) has well-defined services to implement cloud-native development and apply SRE concepts to achieve the goal of building reliable software faster.

In this chapter, we're going to cover the following main topics:

- DevOps 101 – evolution and life cycle

- SRE 101 – evolution; technical and cultural practices

- GCP's cloud-native approach to implementing DevOps

Understanding DevOps, its evolution, and life cycle

This section focuses on the evolution of DevOps and lists phases or critical practices that form the DevOps life cycle.

Revisiting DevOps evolution

Let's take a step back and think about how DevOps has evolved. Agile software development methodology refers to a set of practices based on iterative development where requirements and solutions are built through collaboration between cross-functional teams and end users. DevOps can be perceived as a logical extension of Agile. Some might even consider DevOps as an offspring of Agile. This is because DevOps starts where Agile logically stops. Let's explore what this means in detail.

Agile was introduced as a holistic approach for end-to-end software delivery. Its core principles are defined in the Agile Manifesto (https://agilemanifesto.org/), with specific emphasis on individuals and interactions over processes and tools, improved collaboration, incremental and iterative development, and responding to change over following a fixed plan. The initial Agile teams primarily had developers, but they quickly extended to include product management, customers, and quality assurance. If we factor in the impact of the increased focus on iterative testing and user acceptance testing, the result is a new capacity to deliver software faster to production.

However, Agile methodology created a new problem that resulted in the need for a new evolution. Once software is delivered to production, the operations team is primarily focused on system stability and upkeep. At the same time, development teams continue to add new features to the delivered software to meet customers' dynamic needs and to keep up with the competition.

Operators were always cautious for fear of introducing issues. Developers always insisted on pushing changes because these had been tested in their local setup, and they believed that it was the operators' responsibility to ensure that the changes worked in production. Operators, however, had little or no understanding of the code base. Similarly, developers had little or no understanding of operational practices. So essentially, developers were focused on shipping new features faster and operators were focused on stability. This forced developers to move slower in pushing new features out to production. This misalignment often caused tension within an organization.

Patrick Debois, an IT consultant who was working on a large data center migration project in 2007, experienced similar challenges when trying to collaborate with developers and operators. He coined the term DevOps and later continued this movement with Andrew Shafer. They considered DevOps as an extension of Agile. In fact, when it came to naming their first Google group for DevOps, they called it Agile System Administration.

The DevOps movement enabled better communication between software development and IT operations and effectively led to improved software with continuity being the core theme across operating a stable environment, consistent delivery, improved collaboration, and enhanced operational practices with a focus on innovation. This led to the evolution of the DevOps life cycle, which is detailed in the upcoming sub-section.

DevOps life cycle

DevOps constitutes phases or practices that in their entirety form the DevOps life cycle. In this section, we'll look at these phases in detail, as shown in the following diagram:

Figure 1.1 – Phases of the DevOps life cycle

There are six primary phases in a DevOps life cycle. They are as follows:

- Plan and build

- Continuous integration

- Continuous delivery

- Continuous deployment

- Continuous monitoring and operations

- Continuous feedback

The keyword here is continuous. If code is developed continuously, it will be followed with a need to continuously test, provide feedback, deploy, monitor, and operate. These phases will be introduced in the following sections.

Phase 1 – plan and build

In the planning phase, the core focus is to understand the vision and convert it into a detailed plan. The plan can be split into phases, otherwise known as epics (in Agile terminology). Each phase or epic can be scoped to achieve a specific set of functionalities, which could be further groomed as one or multiple user stories. This requires a lot of communication and collaboration between various stakeholders.

In the build phase, code is written in the language of choice and appropriate build artifacts are created. Code is maintained in a source code repository such as GitHub, Bitbucket, and others.

Phase 2 – continuous integration

CI is a software development practice where developers frequently integrate their code changes to the main branch of a shared repository. This is done, preferably, several times in a day, leading to several integrations.

Important note

Code change is considered the fundamental unit of software development. Since development is incremental in nature, developers keep changing their code.

Ideally, each integration is triggered by an automated build that also initiates automated unit tests, to detect any issues as quickly as possible. This avoids integration hell, or in other words, ensures that the application is not broken by introducing a code change or delta into the main branch.

Phase 3 – continuous delivery

Continuous delivery is a software development practice to build software such that a set of code changes can be delivered or released to production at any time. It can be considered an extension of CI and its core focus is on automating the release process to enable hands-free or single-click deployments.

The core purpose is to ensure that the code base is releasable and there is no regression break. It's possible that the newly added code might not necessarily work. The frequency to deliver code to production is very specific to the organization and could be daily, weekly, bi-weekly, and so on.

Phase 4 – continuous deployment

Continuous deployment is a software development practice where the core focus is to release automated deployments to production without human intervention. It aims to minimize the time elapsed between developers writing new line(s) of code and this new code being used by live users in production.

At its core, continuous deployment incorporates robust testing frameworks and encourages code deployment in a testing/staging environment after the continuous delivery phase. Automated tests can be run as part of the pipeline in the test/stage environment. In the event of no issues, the code can be deployed to production in an automated fashion. This removes the need for a formal release day and establishes a feedback loop to ensure that added features are useful to the end users.

Phase 5 – continuous monitoring and operations

Continuous monitoring is a practice that uses analytical information to identify issues with the application or its underlying infrastructure. Monitoring can be classified into two types: server monitoring and application monitoring.

Continuous operations is a practice where the core focus is to mitigate, reduce, or eliminate the impact of planned downtime, such as scheduled maintenance, or in the case of unplanned downtime, such as an incident.

Phase 6 – continuous feedback

Continuous feedback is a practice where the core focus is to collect feedback that improves the application/service. A common misconception is that continuous feedback happens only as the last phase of the DevOps cycle.

Feedback loops are present at every phase of the DevOps pipeline such that feedback is conveyed if a build fails due to a specific code check-in, a unit/integration test or functional test fails in a testing deployment, or an issue is found by the customer in production.

GitOps is one of the approaches to implement continuous feedback where a version control system has the capabilities to manage operational workflows, such as Kubernetes deployment. A failure at any point in the workflow can be tracked directly in the source control and that creates a direct feedback loop.

Key pillars of DevOps

DevOps can be categorized into five key pillars or areas:

- Reduce organizational silos: Bridge the gap between teams by encouraging them to work together toward a shared company vision. This reduces friction between teams and increases communication and collaboration.

- Accept failure as normal: In the continuous aspect of DevOps, failure is considered an opportunity to continuously improve. Systems/services are bound to fail, especially when more features are added to improve the service. Learning from failures mitigates reoccurrence. Fostering a culture that treats failure as normal will make team members more forthcoming.

- Implement gradual change: Implementing gradual change falls in line with the continuous aspect of DevOps. Small, gradual changes are not only easier to review but also easier to roll back in the event of an incident in production, which reduces the impact of the incident by returning to the last known working state.

- Leverage tooling and automation: Automation is key to implement the continuous aspect of CI/CD pipelines, which are critical to DevOps. It is important to identify manual work and automate it in a way that eventually increases speed and adds consistency to everyday processes.

- Measure everything: Measuring is a critical gauge for success. Monitoring is one way to measure and observe a system, and it provides important feedback for continuously improving that system.

This completes our introduction to DevOps where we discussed its evolution, life cycle phases, and key pillars. At the end of the day, DevOps is a set of practices. The next section introduces site reliability engineering, or SRE, which is essentially Google's practical approach to implementing DevOps key pillars.

SRE's evolution; technical and cultural practices

This section traces the evolution of SRE, defines SRE, discusses how SRE relates to DevOps by elaborating on the DevOps key pillars, details critical jargon, and introduces SRE's cultural practices.

The evolution of SRE

In the early 2000s, Google was building massive, complex systems to run its search and other critical services. Its main challenge was to run these services reliably. At the time, many companies had system administrators deploying software components as a service. The use of system administrators, otherwise known as the sysadmin approach, essentially focused on running the service by responding to events or updates as they occurred. This meant that if the service grew in traffic or complexity, there would be a corresponding increase in events and updates.

The sysadmin approach has its pitfalls, and these are represented by two categories of cost:

- Direct costs: Running a service with a team of system administrators included manual intervention. Manual intervention at scale is a major downside to change management and event handling. However, this manual approach was adopted by multiple organizations because there wasn't a recognized alternative.

- Indirect costs: System administrators and developers widely differed in terms of their skills, the vocabulary used to describe situations, and incentives. Development teams always want to launch new features and their incentive is to drive adoption. System administrators or ops teams want to ensure that the service is running reliably and often with a thought process of don't change something that is working.

Google did not want to pursue a manual approach because at their scale and traffic, any increase in demand would make it impractical to scale. The desire to regularly push more features to their users would ultimately cause conflict between developers and operators. Google wanted to reduce this conflict and remove the confusion with respect to desired outcomes. With this knowledge, Google considered an alternative approach. This new approach is what became known as SRE.

Understanding SRE

(Betsy Beyer, Chris Jones, Jennifer Petoff, & Niall Murphy, Site Reliability Engineering, O'REILLY)

The preceding is a quote from Ben Treynor Sloss, who started the first SRE team at Google in 2003 with seven software engineers. Ben, a software engineer himself up until that point, joined Google as the site reliability Tsar in 2003 and led the development and operations of Google's production software infrastructure, network, and user-facing services. He is currently the VP of engineering at Google. At that point in 2003, neither Ben nor Google had any formal definition for SRE.

SRE is a software engineering approach to IT operations. SRE is an intrinsic part of Google's culture. It's the key to running their massively complex systems and services at scale. At its core, the goal of SRE is to end the age-old battle between development and operations. This section introduces SRE's thought process and the upcoming chapters on SRE give deeper insights into how SRE achieves its goal.

A primary difference in Google's approach to building the SRE practice or team is the composition of the SRE team. A typical SRE team consists of 50-60% Google software engineers. The other 40-50% are personnel who have software engineering skills but in addition, also have skills related to UNIX/Linux system internals and networking expertise. The team composition forced two behavioral patterns that propelled the team forward:

- Team members were quickly bored of performing tasks or responding to events manually.

- Team members had the capability to write software and provide an engineering solution to avoid repetitive manual work even if the solution is complicated.

In simple terminology, SRE practices evolved when a team of software engineers ran a service reliably in production and automated systems by using engineering practices. This raises some critical questions. How is SRE different from DevOps? Which is better? This will be covered in the upcoming sub-sections.

From Google's viewpoint, DevOps is a philosophy rather than a development methodology. It aims to close the gap between software development and software operations. DevOps' key pillars clarify what needs to be done to achieve collaboration, cohesiveness, flexibility, reliability, and consistency.

SRE's approach toward DevOps' key pillars

DevOps doesn't put forward a clear path or mechanism for how it needs to be done. Google's SRE approach is a concrete or prescriptive way to solve problems that the DevOps philosophy addresses. Google describes the relationship between SRE and DevOps using an analogy:

(Google Cloud, SRE vs. DevOps: competing standards or close friends?, https://cloud.google.com/blog/products/gcp/sre-vs-devops-competing-standards-or-close-friends)

Let's look at how SRE implements DevOps and approaches the DevOps key pillars:

- Reduces organizational silos: SRE reduces organizational silos by sharing ownership between developers and operators. Both teams are involved in the product/service life cycle from the start. Together they define Service-Level Objectives (SLOs), Service-Level Indicators (SLIs), and error budgets and share the responsibility to determine the reliability, work priority, and release cadence of new features. This promotes a shared vision and improves communication and collaboration.

- Accepts failure as normal: SRE accepts failure as normal by conducting blameless postmortems, which include detailed analysis without any reference to a person. Blameless postmortems help to understand the reasons for failure, identify preventive actions, and ensure that a failure for the same reason doesn't reoccur. The goal is to identify the root cause and the process, not to focus on individuals. This helps to promote psychological safety. In most cases, failure is the result of a missed SLO or target, and incidents are tracked using specific indicators as a function of time, or SLIs.

- Implements gradual change: SRE implements gradual changes by limited canary rollouts and eventually reduces the cost of failures. Canary rollouts refer to the process of rolling out changes to a small percentage of users in production before making them generally available. This ensures that the impact is limited to a small set of users and gives us the opportunity to capture feedback on the new rollouts.

- Leverages tooling and automation: SRE leverages tooling and automation to reduce toil or the amount of manual repetitive work, and it eventually promotes speed and consistency. Automation is a force multiplier. However, this can create a lot of resistance to change. SRE recommends handling this resistance to change by understanding the psychology of change.

- Measures everything: SRE promotes data-driven decision making, encourages goal setting by measuring and monitoring critical factors tied to the health and reliability of the system. SRE also measures the amount of manual, repetitive work spent. Measuring everything is key for setting up SLOs and Service-Level Agreements (SLAs) and reducing toil.

This wraps up our introduction to SRE's approach to DevOps key pillars; we referred to jargon such as SLI, SLO, SLA, error budget, toil, and canary rollouts. These will be introduced in the next sub-section.

Introducing SRE's key concepts

SRE implements the DevOps philosophy via several key concepts, such as SLI, SLO, SLA, error budget, and toil.

Becoming familiar with SLI, SLO, and SLA

Before diving into the definitions of SRE terminology – specifically SLI, SLO, and SLA – this sub-section attempts to introduce this terminology through a relatable example.

Let's consider that you are a paid consumer for a video streaming service. As a paid consumer, you will have certain expectations from the service. A key aspect of that expectation is that the service needs to be available. This means when you try to access the website of the video streaming service via any permissible means, such as mobile device or desktop, the website needs to be accessible and the service should always work.

If you frequently encounter issues while accessing the service, either because the service is experiencing high traffic or the service provider is adding new features, or for any other reason, you will not be a happy consumer. Now, it is possible that some users can access this service at a moment in time but some users are unable to access it at the same moment in time. Those users who are able to access it are happy users and users who are unable to access it are sad users.

Availability

The first and most critical feature that a service should provide is availability. Service availability can also be referred to as its uptime. Availability is the ability of an application or service to run when needed. If a system is not running, then the system will fail.

Let's assume that you are a happy user. You can access the service. You can create a profile, browse titles, filter titles, watch reviews for specific titles, add videos to your watchlist, play videos, or add reviews to viewed videos. Each of these actions performed by you as a user can be categorized as a user journey. For each user journey, you will have certain expectations:

- If you try to browse titles under a specific category, say comedy, you would expect that the service loads the titles without any delay.

- If you select a title that you would like to watch, you would expect to watch the video without any buffering.

- If you would like to watch a livestream, you would expect the stream contents to be as fresh as possible.

Let's explore the first expectation. When you as a user try to browse titles under comedy, how fast is fast enough?

Some users might expect the results to be displayed within 1 second, some might expect them in 200 ms, and some others in 500 ms. So, the expectation needs to be quantifiable and for it to be quantifiable, it needs to be measurable. The expectation should be set to a value where most of the users will be happy. It should also be measured for a specific duration (say 5 minutes) and should be met over a period (say 30 days). It should not be a one-time event. If the expectation is not met over the period that users expect, the service provider takes on some accountability and addresses the users' concerns either by issuing a refund or adding extra service credits.

For a service to be reliable, the service needs to have key characteristics based on expectations from user journeys. In this example, the key characteristics that the user expects are latency, throughput, and freshness.

Reliability

Reliability is the ability of an application or service to perform a specific function within a specific time without failures. If a system cannot perform its intended function, then the system will fail.

So, to summarize the example of a video streaming service, as a user you will expect the following:

- The service is available.

- The service is reliable.

Now, let's introduce SRE terminology with respect to the preceding example before going into their formal definitions:

- Expecting the service to be available or expecting the service to meet a specific amount of latency, throughput, or freshness, or any other characteristic that is critical to the user journey, is known as SLI.

- Expecting the service to be available or reliable for a certain target level over a specific period is SLO.

- Expecting the service to meet a pre-defined customer expectation, the failure of which results in a refund or credits, is SLA.

Let's move on from this general understanding of these concepts and explore how Google views them by introducing SRE's technical practices.

SRE's technical practices

SRE specifically prescribes the usage of specific technical tools or practices that will help to define, measure, and track service characteristics such as availability and reliability. These are referred to as SRE technical practices and specifically refer to SLIs, SLOs, SLAs, error budget, and toil. These are introduced in the following sections with significant insights.

Service-Level Indicator (SLI)

Google SRE has the following definition for SLI:

(Betsy Beyer, Chris Jones, Jennifer Petoff, & Niall Murphy, Site Reliability Engineering, O'REILLY)

Most services consider latency or throughput as key aspects of a service based on related user journeys. An SLI is a specific measurement of these aspects where raw data is aggregated or collected over a measurement window and represented as a rate, average, or percentile.

Let's now look at the characteristics of SLIs:

- It is a direct measurement of a service performance or behavior.

- Refers to measurable metrics over time.

- Can be aggregated and turned to rate, average, or percentile.

- Used to determine the level of availability. SRE considers availability as the prerequisite to success.

SLI can be represented as a formula:

$$\text{SLI} = \frac{\text{good events}}{\text{valid events}} \times 100\%$$

For systems serving requests over HTTPS, validity is often determined by request parameters such as hostname or requested path to scope the SLI to a particular set of serving tasks, or response handlers. For data processing systems, validity is usually determined by the selection of inputs to scope the SLI to a subset of data. Good events refer to the expectations from the service or system.

Let's look at some examples of SLIs:

- Request latency: The time taken to return a response for a request should be less than 100 ms.

- Failure rate: The ratio of unsuccessful requests to all received requests should be less than 1%.

- Availability: Refers to the uptime check on whether a service is available or not at a particular point in time.
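
To make the ratio of good events to valid events concrete, the following is a minimal Python sketch rather than a definitive implementation. The Request record, the /browse path prefix used to decide validity, and the 100 ms threshold used to decide whether an event is good are all assumptions chosen for illustration; a real service would derive them from its own user journeys.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    path: str          # requested path, used here to decide validity
    status: int        # HTTP status code of the response
    latency_ms: float  # time taken to serve the request


def is_valid(req: Request) -> bool:
    # Assumption: only requests under the hypothetical /browse path are in scope.
    return req.path.startswith("/browse")


def is_good(req: Request, threshold_ms: float = 100.0) -> bool:
    # Assumption: a good event is a non-5xx response served under the threshold.
    return req.status < 500 and req.latency_ms < threshold_ms


def sli_percent(requests: list[Request]) -> float:
    """Return good events divided by valid events, as a percentage."""
    valid = [r for r in requests if is_valid(r)]
    if not valid:
        raise ValueError("no valid events in the measurement window")
    good = sum(1 for r in valid if is_good(r))
    return 100.0 * good / len(valid)


# A tiny, made-up measurement window.
window = [
    Request("/browse/comedy", 200, 80.0),
    Request("/browse/comedy", 200, 140.0),  # too slow: valid but not good
    Request("/browse/drama", 503, 20.0),    # server error: valid but not good
    Request("/browse/drama", 200, 95.0),
    Request("/healthz", 200, 1.0),          # out of scope: not a valid event
]
print(f"SLI = {sli_percent(window):.1f}%")
```

Note that the health check request is excluded from the denominator entirely, which is the point of scoping an SLI to valid events.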

Service-Level Objective (SLO)

Google SRE uses the following definition for SLO:

(Betsy Beyer, Chris Jones, Jennifer Petoff, & Niall Murphy, Site Reliability Engineering, O'REILLY)

Customers have specific expectations from a service and these expectations are characterized by specific indicators or SLIs that are tailored per the user journey. SLOs are a way to measure customer happiness and their expectations by ensuring that the SLIs are consistently met and are potentially reported before the customer notices an issue.

Let's now look at the characteristics of SLOs:

- Identifies whether a service is reliable enough.

- Directly tied to SLIs. SLOs are in fact measured by using SLIs.

- Can either be a single target or a range of values for the collection of SLIs.

- If the SLI refers to metrics over time, which details the health of a service, then SLOs are agreed-upon bounds on how often the SLIs must be met.

Let's see how they are represented as a formula:

$$\text{SLI} \le \text{target} \quad \text{OR} \quad \text{lower bound} \le \text{SLI} \le \text{upper bound}$$

An SLO can best be represented either as a specific target value or as a range of values for an SLI for a specific aspect of a service, such as latency or throughput, representing the acceptable lower bound and possible upper bound that is valid over a specific period. Given that SLIs are used to measure SLOs, SLIs should be within the target or between the range of acceptable values.

Let's look at some examples of SLOs:

- Request latency: 99.99% of all requests should be served under 100 ms over a period of 1 month or 99.9% of all requests should be served between 75 ms and 125 ms for a period of 1 month.

- Failure rate: 99.9% of all requests should be served successfully (that is, a failure rate of at most 0.1%) over a period of 1 year.

- Availability: The application should be usable for 99.95% of the time over 24 hours.
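
As a rough illustration of how an SLO such as the request latency examples above could be checked, here is a short Python sketch. It assumes that per-request latencies for the whole period are available as a simple list, which is a simplification; the function names and the sample data are hypothetical.

```python
def fraction_within(latencies_ms: list[float], lower_ms: float, upper_ms: float) -> float:
    """Fraction of requests whose latency lies in [lower_ms, upper_ms)."""
    if not latencies_ms:
        raise ValueError("no requests in the period")
    hits = sum(1 for value in latencies_ms if lower_ms <= value < upper_ms)
    return hits / len(latencies_ms)


def slo_met(
    latencies_ms: list[float],
    target_fraction: float,
    upper_ms: float,
    lower_ms: float = 0.0,
) -> bool:
    """True if at least target_fraction of requests fall inside the latency bounds."""
    return fraction_within(latencies_ms, lower_ms, upper_ms) >= target_fraction


# Made-up data for one period: 998 fast requests and 2 slow ones.
latencies = [90.0] * 998 + [150.0] * 2

# Single-target form: a given fraction of requests served under 100 ms.
print(slo_met(latencies, target_fraction=0.999, upper_ms=100.0))
print(slo_met(latencies, target_fraction=0.99, upper_ms=100.0))

# Range form: a given fraction of requests served between 75 ms and 125 ms.
print(slo_met(latencies, target_fraction=0.99, upper_ms=125.0, lower_ms=75.0))
```

The same data can satisfy a looser objective and violate a stricter one, which is why the target and the period are agreed upon explicitly.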

Service-Level Agreement (SLA)

Google SRE uses the following definition for SLAs:

(Betsy Beyer, Chris Jones, Jennifer Petoff, & Niall Murphy, Site Reliability Engineering, O'REILLY)

An SLA is an external-facing agreement that is provided to the consumer of a service. The agreement clearly lays out the minimum expectations that the consumer can expect from the service and calls out the consequences that the service provider needs to face if found in violation. The consequences are generally applied in terms of refund or additional credits to the service consumer.

Let's now look at the characteristics of SLAs:

- SLAs are based on SLOs.

- Signifies the business factor that binds the customer and service provider.

- Represents the consequences of what happens when availability or customer expectation fails.

- Are more lenient than SLOs: an SLA states the minimum expectations that the service should meet, so a stricter SLO triggers early alarms before the SLA is violated.

SLAs' priority in comparison to SLOs can be represented as follows:

Let's look at some examples of SLAs:

- Latency: 99% of all requests per day should be served under 150 ms; otherwise, 10% of the daily subscription fee will be refunded.

- Availability: The service should be available with an uptime commitment of 99.9% in a 30-day period; else 4 hours of extra credit will be added to the user account.
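
The idea that an SLO is stricter than its SLA, and therefore raises an alarm before the customer is owed anything, can be sketched in a few lines of Python. The thresholds used here (an internal SLO of 99.95% and an external SLA of 99.9%) and the status labels are assumptions for illustration only.

```python
def classify(availability_pct: float, slo_pct: float = 99.95, sla_pct: float = 99.9) -> str:
    """Classify measured availability against an internal SLO and an external SLA."""
    if sla_pct > slo_pct:
        raise ValueError("the SLA should not be stricter than the SLO")
    if availability_pct < sla_pct:
        return "sla_breached"  # consequences such as refunds or credits apply
    if availability_pct < slo_pct:
        return "slo_missed"    # early alarm: the team acts before the SLA is at risk
    return "ok"


for measured in (99.97, 99.92, 99.80):
    print(measured, classify(measured))
```

The gap between the two thresholds is the room the service provider has to react internally before a contractual consequence is triggered.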

Error budgets

Google SRE defines error budgets as follows:

(Betsy Beyer, Chris Jones, Jennifer Petoff, & Niall Murphy, Site Reliability Engineering, O'REILLY)

While a service needs to be reliable, it should also be mindful that if new features are not added to the service, then users might not continue to use it. A 100% reliable service will imply that the service will not have any downtime. This means that it will be increasingly difficult to add innovation via new features that could potentially attract new customers and lead to an increase in revenue. Getting to 100% reliability is expensive and complex. Instead, it's recommended to find the unique value for service reliability where customers feel that the service is reliable enough.

Unreliable systems can quickly erode users' confidence. So, it's critical to reduce the chance of system failure. SRE aims to balance the risk of unavailability with the goals of rapid innovation and efficient service operations so that users' overall happiness – with features, service, and performance – is optimized.

The error budget is basically the inverse of availability, and it tells us how unreliable our service is allowed to be. If your SLO says that 99.9% of requests should be successful in a given quarter, your error budget allows 0.1% of requests to fail. This unavailability can be generated because of bad pushes by the product teams, planned maintenance, hardware failures, and so on:

Important note

The relationship between the error budget and actual allowed downtime for a service is as follows:

If SLO = 99.5%, then error budget = 0.5% = 0.005

Allowed downtime per month = 0.005 * 30 days/month * 24 hours/day * 60 minutes/hour = 216 minutes/month
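
The same arithmetic can be reproduced for other availability levels with a small Python helper. This is only a sketch: the function name is hypothetical, and it assumes a 30-day month, as in the calculation above.

```python
def allowed_downtime_minutes(slo_percent: float, days: int = 30) -> float:
    """Allowed downtime in minutes for a given SLO over a period of `days` days."""
    if not 0.0 <= slo_percent <= 100.0:
        raise ValueError("slo_percent must be between 0 and 100")
    error_budget = (100.0 - slo_percent) / 100.0  # for example, 99.5% gives 0.005
    return error_budget * days * 24 * 60


for slo in (99.0, 99.5, 99.9, 99.99):
    print(f"SLO {slo}% allows {allowed_downtime_minutes(slo):.2f} minutes/month")
```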

The following table represents the allowed downtime for a specific time period to achieve a certain level of availability. For downtime information calculation for a specific availability level (other than the following mentioned), refer to https://availability.sre.xyz/:

Defining an error budget has several advantages. It allows teams to do the following:

- Release new features while keeping an eye on system reliability.

- Roll out infrastructure updates.

- Plan for inevitable failures in networks and other similar events.

Despite planning error budgets, there are times when a system can overshoot it. In such cases, there are a few things that occur:

- Release of new features is temporarily halted.

- Increased focus on dev, system, and performance testing.
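
One possible way to turn this policy into an automated check is sketched below in Python. It assumes a request-based SLO and that total and failed request counts for the current window are available; the function names and the simple rule of halting releases once the budget is spent are illustrative assumptions, not a prescribed SRE mechanism.

```python
def budget_remaining(total_requests: int, failed_requests: int, slo_percent: float) -> float:
    """Fraction of the error budget still unspent (negative when overshot)."""
    allowed_failures = total_requests * (100.0 - slo_percent) / 100.0
    if allowed_failures <= 0:
        raise ValueError("the error budget is zero; nothing can be spent")
    return 1.0 - failed_requests / allowed_failures


def can_release(total_requests: int, failed_requests: int, slo_percent: float) -> bool:
    """Hypothetical release gate: allow feature releases only while budget remains."""
    return budget_remaining(total_requests, failed_requests, slo_percent) > 0.0


# Made-up numbers: one million requests against a 99.9% SLO
# leaves room for roughly 1,000 failed requests in the window.
print(can_release(1_000_000, 400, 99.9))    # budget partly spent
print(can_release(1_000_000, 1_200, 99.9))  # budget overshot
```

A gate like this could run in a CI/CD pipeline before a deployment step, so that the decision to halt releases follows from the data rather than from negotiation between teams.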

Toil

Google SRE defines toil as follows:

(Betsy Beyer, Chris Jones, Jennifer Petoff, & Niall Murphy, Site Reliability Engineering, O'REILLY)

Here are the characteristics of toil:

- Manual: Act of manually initiating a script that automates a task.

- Repetitive: Tasks that are repeated multiple times.

- Automatable: Human executing a task instead of a machine, especially if a machine can execute with the same effectiveness.

- Tactical: Reactive tasks originating out of an interruption (such as pager alerts), rather than strategy-driven proactive tasks, are considered toil.

- No enduring value: Tasks that do not change the effective state of the service after execution.

- Linear growth: Tasks that grow linearly with an increase in traffic or service demand.

Toil is often confused with overhead, but the two are not the same. Overhead is administrative work that is not tied to running a service, whereas toil is repetitive work that can be reduced by automation. Examples of tasks that represent overhead and not toil are email, commuting, expense reports, and meetings. Automating toil helps to lower burnout, increase team morale, raise engineering standards, improve technical skills, standardize processes, and reduce human error.

Canary rollouts

SRE prescribes implementing gradual change by using canary rollouts, where the concept is to introduce a change to a small portion of users to detect any imminent danger.

To elaborate, when a large service needs to be sustained, it's preferable to apply a production change with unknown impact to a small portion of the service first in order to identify any potential issues. If any issues are found, the change can be reversed, and the impact or cost is much less than if the change had been rolled out to the whole service.

The following two factors should be considered when selecting the canary population:

- The size of the canary population should be small enough that it can be quickly rolled back in case an issue arises.

- The size of the canary population should be large enough that it is a representative subset of the total population.
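One common way to pick a canary population is to hash a stable user identifier into buckets and route only the lowest buckets to the new version. The following Python sketch assumes this hashing approach; the function name `in_canary`, the `salt` parameter, and the user ID format are hypothetical and are not taken from any Google Cloud API:

```python
import hashlib


def in_canary(user_id: str, canary_percent: float, salt: str = "rollout-1") -> bool:
    """Deterministically place roughly `canary_percent` of users in the canary."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # bucket in 0..9999
    return bucket < canary_percent * 100


# Estimate how large the canary population is for a 5% rollout.
users = [f"user-{i}" for i in range(100_000)]
share = sum(in_canary(u, 5.0) for u in users) / len(users)
print(f"canary share: {share:.2%}")
```

Because the assignment depends only on the salt and the user ID, a user stays in the same group across requests, and raising the percentage only adds users to the canary rather than reshuffling it. Rolling back amounts to setting the percentage to zero.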

This concludes a high-level overview of important SRE technical practices. The next section details SRE cultural practices that are key to embracing SRE across an organization and are also critical to efficiently handling change management.

SRE's cultural practices

Defining SLIs, SLOs, and SLAs for a service, using error budgets to balance velocity (the rate at which changes are delivered to production) and reliability, identifying toil, and using automation to eliminate toil together form SRE's technical practices. In addition to these technical practices, it is important to understand and build certain cultural practices that support them. Cultural practices are equally important for reducing silos within IT teams, as they can reduce the incompatible practices used by individuals within the team. The first cultural practice that will be discussed is the need for a unifying vision.

Need for a unifying vision

Every company needs a vision and a team's vision needs to align with the company's vision. The company's vision is a combination of core values, the purpose, the mission, strategies, and goals:

- Core values: Values refer to a team member's commitment to personal/organizational goals. They also reflect how members operate within a team by building trust and psychological safety. This creates a culture where the team is open to learning and willing to take risks.

- Purpose: A team's purpose refers to the core reason that the team exists in the first place. Every team should have a purpose in the larger context of the organization.

- Mission: A team's mission refers to a well-articulated, clear, compelling, and unified goal.

- Strategy: A team's strategy refers to a plan on how the team will realize its mission.

- Goals: A team's goal gives more detailed and specific insights into what the team wants to achieve. Google recommends the use of Objectives and Key Results (OKRs), which are a popular goal-setting tool in large companies.

Once a vision statement is established for the company and the team, the next cultural practice is to ensure there is efficient collaboration and communication within the team and across cross-functional teams. This will be discussed next.

Collaboration and communication

Communication and collaboration are critical given the complexity of services and the need for these services to be globally accessible. This also means that SRE teams should be globally distributed to support services in an effective manner. Here are some SRE prescriptive guidelines:

- Service-oriented meetings: SRE teams frequently review the state of the service and identify opportunities to improve and increase awareness among stakeholders. The meetings are mandatory for team members and typically last 30-60 minutes, with a defined agenda such as discussing recent paging events, outages, and any required configuration changes.

- Balanced team composition: SRE teams are spread across multiple countries and multiple time zones. This enables them to support a globally available system or service. The SRE team composition typically includes a technical lead (to provide technical guidance), a manager (who runs performance management), and a project manager, who collaborate across time zones.

- Involvement throughout the service life cycle: SRE teams are actively involved throughout the service life cycle across various stages such as architecture and design, active development, limited availability, general availability, and deprecation.

- Establish rules of engagement: SRE teams should clearly describe what channels should be used for what purpose and in what situations. This brings in a sense of clarity. SRE teams should use a common set of tools for creating and maintaining artifacts.

- Encourage blameless postmortem: SRE encourages a blameless postmortem culture, where the theme is to learn from failure and the focus is on identifying the root cause of the issue rather than on individuals. A well-written postmortem report can act as an effective tool for driving positive organizational changes since the suggestions or improvements mentioned in the report can help to tune up existing processes.

- Knowledge sharing: SRE teams prescribe knowledge sharing through specific means such as encouraging cross-training, creation of a volunteer teaching network, and sharing postmortems of incidents in a way that fosters collaboration and knowledge sharing.

Some of the preceding guidelines, such as knowledge sharing and the adoption of a common set of tools to reduce paging events or outages, can increase resistance among individuals and team members. They might also create a sense of insecurity. The next cultural practice elaborates on how to encourage psychological safety and reduce resistance to change.

Encouraging psychological safety and reducing resistance to change

SRE prescribes automation as an essential cornerstone for applying engineering principles and reducing manual work such as toil. Though eliminating toil through automation is a technical practice, automation often meets strong resistance, and some may resist it more than others. Individuals may feel as though their jobs are in jeopardy, or they may disagree that certain tasks need to be automated. SRE prescribes a cultural practice to reduce the resistance to change by building a psychologically safe environment.

In order to build a psychologically safe environment, it is first important to communicate the importance of a specific change. For example, if the change is to automate this year's job away, here are some ways in which automation adds value:

- Provides consistency.

- Provides a platform that can be extended and applied to more systems.

- Common faults can be easily identified and resolved more quickly.

- Reduces cost by identifying problems as early in the life cycle as possible, rather than finding them in production.

Once the reason for the change is clearly communicated, here are some additional pointers that will help to build a psychologically safe environment:

- Involve team members in the change. Understand their concerns and empathize as needed.

- Encourage critics to express their fears openly, as this gives team members the freedom to share their opinions.

- Set realistic expectations.

- Allow team members to adapt to new changes.

- Provide them with effective training opportunities and ensure that training is engaging and rewarding.

This completes an introduction to key SRE cultural practices that are critical to implementing SRE's technical practices. It also completes the section on SRE, where we introduced SRE, discussed its evolution, and elaborated on how SRE is a prescriptive way to practically implement the key pillars of DevOps. The next section discusses how to implement DevOps using Google Cloud services.

Cloud-native approach to implementing DevOps using Google Cloud

This section elaborates on how to implement DevOps using Google Cloud services with a focus on a cloud-native approach – an approach that uses cloud computing at its core to build highly available, scalable, and resilient applications.

Focus on microservices

A monolith application has a tightly coupled architecture and implements all possible features in a single code base along with the database. Though monolith applications can be designed with modular components, the components are still packaged at deployment time and deployed together as a single unit. From a CI/CD standpoint, this will potentially result in a single build pipeline. Fixing an issue or adding a new feature is an extremely time-consuming process since the impact is on the entire application. This decreases the release velocity and essentially is a nightmare for production support teams dealing with service disruption.

In contrast, a microservice application is based on service-oriented architecture. A microservice application divides a large program into several smaller, independent services. This allows the components to be managed by smaller teams as the components are more isolated in nature. The teams, as well as the services, can be independently scaled. Microservices fundamentally support the concept of incremental code change because the individual components are independently deployable. Given that microservices are feature-specific, in the event of an issue, fault detection and isolation are much easier and hence service disruptions can be handled quickly and efficiently. This also makes microservices much more suitable for CI/CD processes and works well with the theme of building reliable software faster!

Exam tip

Google Cloud provides several compute services that facilitate the deployment of microservices as containers. These include App Engine flexible environment, Cloud Run, Google Compute Engine (GCE), and Google Kubernetes Engine (GKE). From a Google Cloud DevOps exam perspective, the common theme is to build containers and deploy containers using GKE. GKE will be a major focus area and will be discussed in detail in the upcoming chapters.

Cloud-native development

Google promotes and recommends application development using the following cloud-native principles:

- Use microservice architectural patterns: As discussed in the previous sub-section, the essence is to build smaller independent services that could be managed separately and be scaled granularly.

- Treat everything as code: This principle makes it easier to track changes, see which version introduced a change, and roll back if required. It applies to source code, test code, automation code, and infrastructure as code.

- Build everything as containers: A container image can include the software dependencies needed by the application, specific language runtimes, and other software libraries. Containers can be run anywhere, making it easier to develop and deploy. This allows developers to focus on code, while ops teams spend less time debugging and diagnosing differences between environments.

- Design for automation: Automated processes can repair, scale, and deploy systems faster than humans. As a critical first step, a comprehensive CI/CD pipeline is required that can automate the build, testing, and deployment process. In addition, the services that are deployed as containers should be configured to scale up or down based on outstanding traffic. Real-time monitoring and logging should be used as a source for automation since they provide insights into potential issues that could be mitigated by building proactive actions. The idea of automation can also be extended to automate the entire infrastructure using techniques such as Infrastructure as Code (IaC).

- Design components to be stateless wherever possible: Stateless components are easy to scale up or down, to repair (by gracefully terminating and replacing a failed instance), and to roll back to an older version in case of issues. They also make load balancing a lot simpler since any instance can handle any request. Any need to store persistent data should be met outside the container, such as storing files using Cloud Storage, storing user sessions through Redis or Memcached, or using persistent disks for block-level storage.
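The stateless principle can be illustrated with a small Python sketch in which service instances hold no session data of their own and read it from a shared store instead. Everything here is an assumption made for illustration: `InMemoryStore` is a stand-in for an external store such as Redis or Memcached, and `SessionStore` and `Instance` are hypothetical names rather than part of any Google Cloud client library:

```python
from typing import Dict, Protocol


class SessionStore(Protocol):
    """Minimal interface an external session store is assumed to offer."""

    def get(self, key: str) -> int: ...

    def set(self, key: str, value: int) -> None: ...


class InMemoryStore:
    """Stand-in for an external store such as Redis or Memcached."""

    def __init__(self) -> None:
        self._data: Dict[str, int] = {}

    def get(self, key: str) -> int:
        return self._data.get(key, 0)

    def set(self, key: str, value: int) -> None:
        self._data[key] = value


class Instance:
    """A service instance that keeps no session state of its own."""

    def __init__(self, name: str, store: SessionStore) -> None:
        self.name = name
        self.store = store

    def handle(self, session_id: str) -> str:
        # Read-modify-write against the shared store; a real store would
        # typically offer an atomic increment instead.
        visits = self.store.get(session_id) + 1
        self.store.set(session_id, visits)
        return f"{self.name}: visit {visits}"


shared = InMemoryStore()
first, second = Instance("instance-a", shared), Instance("instance-b", shared)
print(first.handle("session-1"))   # served by one instance
print(second.handle("session-1"))  # a different instance continues the same session
```

Because the visit count lives in the shared store, either instance can serve the next request for a session, and an instance can be replaced without losing any session data.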

Google Cloud provides two approaches for cloud-native development – serverless and Kubernetes. The choice comes down to focus on infrastructure versus business logic:

- Serverless (via Cloud Run, Cloud Functions, or App Engine): Allows us to focus on the business logic of the application by providing a higher level of abstraction from an infrastructure standpoint.

- Kubernetes (via GKE): Provides higher granularity and control on how multiple microservices can be deployed, how services can communicate with each other, and how external clients can interact with these services.

Managed versus serverless service

Managed services allow operations related to updates, networking, patching, high availability, automated backups, and redundancy to be managed by the cloud provider. Managed services are not serverless because you must still specify a machine size, and the service requires a minimum number of VMs/nodes. For example, you must define the machine size while creating a Cloud SQL instance, but updates and patches can be configured to be managed by Google Cloud.

Serverless services are managed but do not require reserving a server upfront or keeping it running. The focus is on the business logic of the application with the possibility of running or executing code only when needed. Examples are Cloud Run, Cloud Storage, Cloud Firestore, and Cloud Datastore.

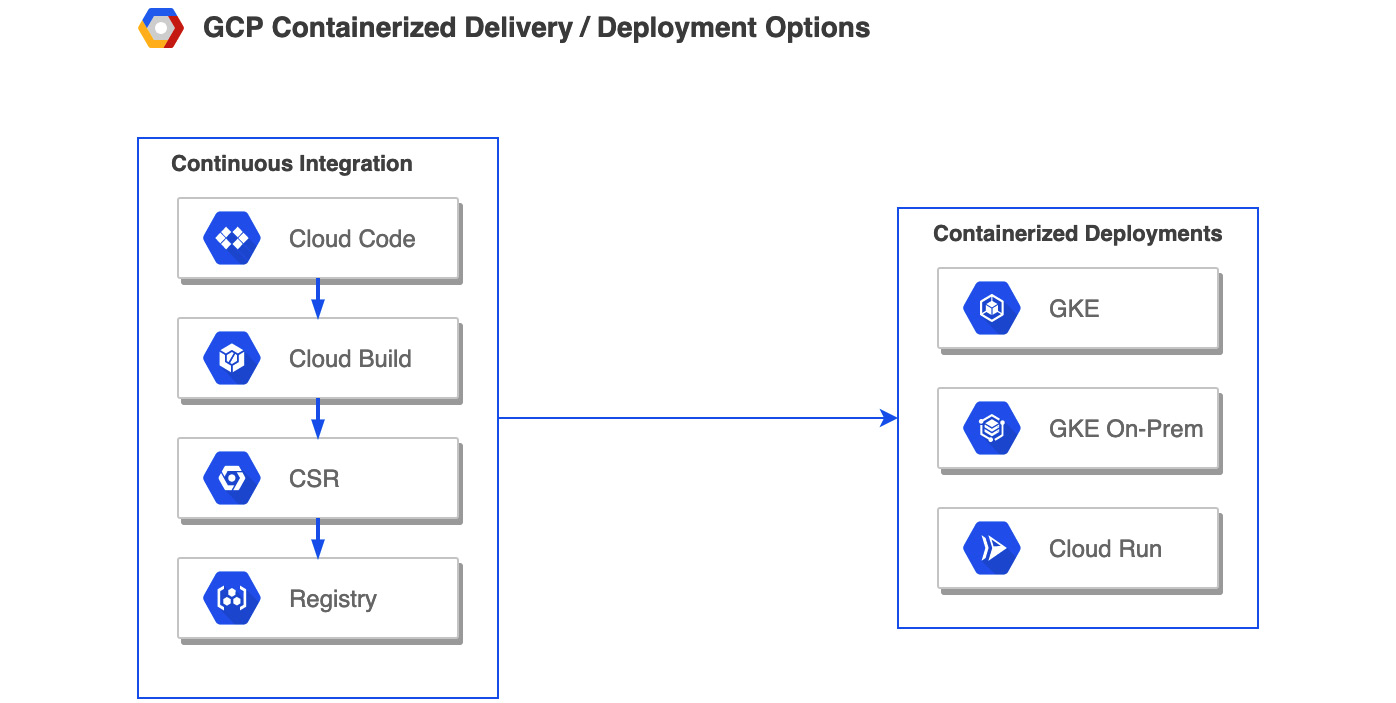

Continuous integration in GCP

Continuous integration forms the CI of the CI/CD process and at its heart is the culture of submitting smaller units of change frequently. Smaller changes minimize the risk, help to resolve issues quickly, increase development velocity, and provide frequent feedback. The following are the building blocks that make up the CI process:

- Make code changes: By using the IDE of choice and possible cloud-native plugins

- Manage source code: By using a single shared code repository

- Build and create artifacts: By using an automated build process

- Store artifacts: By storing artifacts such as container images in a repository for a future deployment process

Google Cloud has an appropriate service for each of the building blocks that allows us to build a GCP-native CI pipeline (refer to Figure 1.2). The following is a summary of these services, which will be discussed in detail in upcoming chapters:

Figure 1.2 – CI in GCP

Let's look at these stages in detail.

Cloud Code

This is the GCP service to write, debug, and deploy cloud-native applications. Cloud Code provides extensions to IDEs such as Visual Studio Code and the JetBrains suite of IDEs that allow you to rapidly iterate, debug, and run code on Kubernetes and Cloud Run. Key features include the following:

- Speed up development and simplify local development

- Extend to production deployments on GKE or Cloud Run and allow debugging deployed applications

- Deep integration with Cloud Source Repositories and Cloud Build

- Easy to add and configure Google Cloud APIs from built-in library manager

Cloud Source Repositories

This is the GCP service to manage source code. It provides Git version control to support the collaborative development of any application or service. Key features include the following:

- Fully managed private Git repository

- Provides one-way sync with Bitbucket and GitHub source repositories

- Integration with GCP services such as Cloud Build and Cloud Operations

- Includes universal code search within and across repositories

Cloud Build

This is the GCP service to build and create artifacts based on commits made to source code repositories such as GitHub, Bitbucket, or Google's Cloud Source Repositories. These artifacts can be container or non-container artifacts. The GCP DevOps exam's primary focus will be on container artifacts. Key features include the following:

- Fully serverless platform with no need to pre-provision servers or pay in advance for additional capacity. Will scale up and down based on load

- Includes Google and community builder images with support for multiple languages and tools

- Includes custom build steps and pre-created extensions to third-party apps that enterprises can easily integrate into their build process

- Focus on security with vulnerability scanning and the ability to define policies that can block the deployment of vulnerable images

Container/Artifact Registry

This is the GCP construct to store artifacts that include both container (Docker images) and non-container artifacts (such as Java and Node.js packages). Key features include the following:

- Seamless integration with Cloud Source Repositories and Cloud Build to upload artifacts to Container/Artifact Registry.

- Ability to set up a secure private build artifact storage on Google Cloud with granular access control.

- Create multiple regional repositories within a single Google Cloud project.

Continuous delivery/deployment in GCP

Continuous delivery/deployment forms the CD of the CI/CD process and at its heart is the culture of continuously delivering production-ready code or deploying code to production. This allows us to release software at high velocity without sacrificing quality.

GCP offers multiple services to deploy code, such as Compute Engine, App Engine, Kubernetes Engine, Cloud Functions, and Cloud Run. The focus of this book will be on GKE and Cloud Run. This is in alignment with the Google Cloud DevOps exam objectives.

The following figure summarizes the different stages of continuous delivery/deployment from the viewpoint of appropriate GCP services:

Figure 1.3 – Continuous delivery/deployment in GCP

Let's look at the two container-based deployments in detail.

Google Kubernetes Engine (GKE)

This is the GCP service to deploy containers. GKE is Google Cloud's implementation of the CNCF Kubernetes project. It's a managed environment for deploying, managing, and scaling containerized applications using Google's infrastructure. Key features include the following:

- Automatically provisions and manages a cluster's master-related infrastructure and abstracts away the need for a separate master node

- Automatic scaling of a cluster's node instance count

- Automatic upgrades of a cluster's node software

- Node auto-repair to maintain the node's health

- Native integration with Google's Cloud Operations for logging and monitoring

Cloud Run

This is a GCP-managed serverless platform that can deploy and run Docker containers. These containers can be deployed in either Google-managed Kubernetes clusters or on-premises workloads using Cloud Run for Anthos. Key features include the following:

- Abstracts away infrastructure management by automatically scaling up and down

- Only charges for exact resources consumed

- Native GCP integration with Google Cloud services such as Cloud Code, Cloud Source Repositories, Cloud Build, and Artifact Registry

- Supports event-based invocation via web requests from Google Cloud services such as Cloud Scheduler, Cloud Tasks, and Cloud Pub/Sub

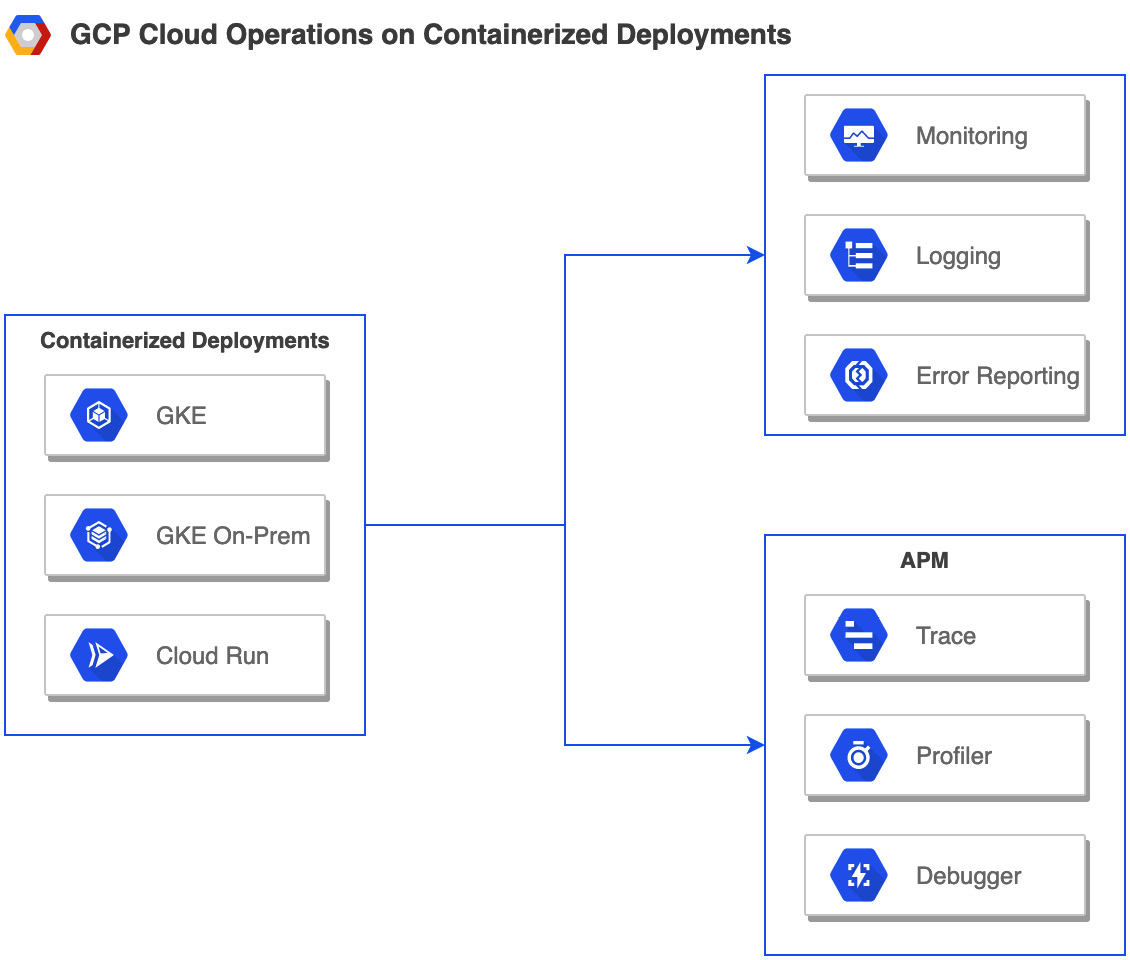

Continuous monitoring/operations on GCP

Continuous Monitoring/Operations forms the feedback loop of the CI/CD process and at its heart is the culture of continuously monitoring or observing the performance of the service/application.

GCP offers a suite of services that provide different aspects of Continuous Monitoring/Operations, aptly named Cloud Operations (formerly known as Stackdriver). Cloud Operations includes Cloud Monitoring, Cloud Logging, Error Reporting, and Application Performance Management (APM). APM further includes Cloud Debugger, Cloud Trace, and Cloud Profiler. Refer to the following diagram:

Figure 1.4 – Continuous monitoring/operations

Let's look at these operations- and monitoring-specific services in detail.

Cloud Monitoring

This is the GCP service that collects metrics, events, and metadata from Google Cloud and other providers. Key features include the following:

- Provides out-of-the-box default dashboards for many GCP services

- Supports uptime monitoring and alerting to various types of channels

- Provides easy navigation to drill down from alerts to dashboards to logs and traces to quickly identify the root cause

- Supports non-GCP environments with the use of agents

Cloud Logging

This is the GCP service that allows us to store, search, analyze, monitor, and alert on logging data and events from Google Cloud and Amazon Web Services. Key features include the following:

- A fully managed service that performs at scale with sub-second ingestion latency at terabytes per second

- Analyzes log data across multi-cloud environments from a single place

- Ability to ingest application and system log data from thousands of VMs

- Ability to create metrics from streaming logs and analyze log data in real time using BigQuery

Error Reporting

This is the GCP service that aggregates, counts, analyzes, and displays application errors produced from running cloud services. Key features include the following:

- Dedicated view of error details that include a time chart, occurrences, affected user count, first and last seen dates, and cleaned exception stack trace

- Lists out the top or new errors in a clear dashboard

- Constantly analyzes exceptions and aggregates them into meaningful groups

- Can translate the occurrence of an uncommon error into an alert for immediate attention

Application Performance Management

This is the GCP service that combines monitoring and troubleshooting capabilities of Cloud Logging and Cloud Monitoring with Cloud Trace, Cloud Debugger, and Cloud Profiler, to help reduce latency and cost and enable us to run applications more efficiently. Key features include the following:

- A distributed tracing system (via Cloud Trace) that collects latency data from your applications to identify performance bottlenecks

- Inspects a production application by taking a snapshot of the application state in real time, without stopping or slowing it down (via Cloud Debugger), and provides the ability to inject log messages as part of debugging

- Low-impact production profiling (via Cloud Profiler) using statistical techniques, to present the call hierarchy and resource consumption of relevant functions in an interactive flame graph

Bringing it all together – building blocks for a CI/CD pipeline in GCP

The following figure represents the building blocks that are required to build a CI/CD pipeline in GCP:

Figure 1.5 – GCP building blocks representing the DevOps life cycle

In the preceding figure, the section for Continuous Feedback/Analysis represents the GCP services that are used to analyze or store information obtained during Continuous Monitoring/Operations either from an event-driven or compliance perspective. This completes the section on an overview of Google Cloud services that can be used to implement the key stages of the DevOps life cycle using a cloud-native approach with emphasis on decomposing a complex system into microservices that can be independently tested and deployed.

Summary

In this chapter, we learned about DevOps practices that break down the metaphorical wall between developers (who constantly want to push features to production) and operators (who want to run the service reliably).

We learned about the DevOps life cycle, key pillars of DevOps, how Google Cloud implements DevOps through SRE, and Google's cloud-native approach to implementing DevOps. We learned about SRE's technical and cultural practices and were introduced to key GCP services that help to build the CI/CD pipeline. In the next chapter, we will take an in-depth look at SRE's technical practices such as SLI, SLO, SLA, and error budget.

Points to remember

The following are some important points:

- If DevOps is a philosophy, SRE is a prescriptive way of achieving that philosophy: class SRE implements DevOps.

- SRE balances the velocity of development features with the risk to reliability.

- SLA represents an external agreement and will result in consequences when violated.

- SLOs are a way to measure customer happiness and their expectations.

- SLIs are best expressed as the proportion of successful events to all valid events.

- Error budget is the inverse of availability and depicts how unreliable a service is allowed to be.

- Toil is manual work tied to a production system but is not the same as overhead.

- The need for unifying vision, communication, and collaboration with an emphasis on blameless postmortems and the need to encourage psychological safety and reduce resistance to change are key SRE cultural practices.

- Google emphasizes the use of microservices and cloud-native development for application development.

- Serverless services are managed, but managed services are not necessarily serverless.

Further reading

For more information on GCP's approach toward DevOps, read the following articles:

- DevOps: https://cloud.google.com/devops

- SRE: https://landing.google.com/sre/

- CI/CD on Google Cloud: https://cloud.google.com/docs/ci-cd

Practice test

Answer the following questions:

- Which of the following represents a sequence of tasks that is central to user experience and is crucial to service?

a) User story

b) User journey

c) Toil

d) Overhead

- If the SLO for the uptime of a service is set to 99.95%, what is the possible SLA target?

a) 99.99

b) 99.95

c) 99.96

d) 99.90

- Which of the following accurately describes the equation for SLI?

a) Good events / Total events

b) Good events / Total events * 100

c) Good events / Valid events

d) Good events / Valid events * 100

- Which of the following represents a carefully defined quantitative measure of some aspect of the level of service?

a) SLO

b) SLI

c) SLA

d) Error budget

- Select the option used to calculate the error budget.

a) (100 – SLO) * 100

b) 100 – SLI

c) 100 – SLO

d) (100 – SLI) * 100

- Which set of Google services accurately depicts the continuous feedback loop?

a) Monitoring, Logging, Reporting

b) Bigtable, Cloud Storage, BigQuery

c) Monitoring, Logging, Tracing

d) BigQuery, Pub-Sub, Cloud Storage

- In which of the following "continuous" processes are changes automatically deployed to production without manual intervention?

a) Delivery

b) Deployment

c) Integration

d) Monitoring

- Select the option that ranks the compute services from the service with the most management needs and the highest customizability to the service with the fewest management needs and the lowest customizability.

a) Compute Engine, App Engine, GKE, Cloud Functions

b) Compute Engine, GKE, App Engine, Cloud Functions

c) Compute Engine, App Engine, Cloud Functions, GKE

d) Compute Engine, GKE, Cloud Functions, App Engine

- Awesome Incorporated is planning to move their on-premises CI pipeline to the cloud. Which of the following services provides a private Git repository hosted on GCP?

a) Cloud Source Repositories

b) Cloud GitHub

c) Cloud Bitbucket

d) Cloud Build

- Your goal is to adopt SRE cultural practices in your organization. Select two options that could help to achieve this goal.

a) Launch and iterate.

b) Enable daily culture meetings.

c) Ad hoc team composition.

d) Create and communicate a clear message.

Answers

- (b) – User journey

- (d) – 99.90

- (d) – Good events / Valid events * 100

- (b) – SLI

- (c) – 100 – SLO

- (d) – BigQuery, Pub-Sub, Cloud Storage

- (b) – Deployment (forming continuous deployment)

- (b) – Compute Engine, GKE, App Engine, Cloud Functions

- (a) – Cloud Source Repositories

- (a) and (d) – Launch and iterate. Create and communicate a clear message.