Suppose that, while working on an application, you find several places that require data protection via locking; in other words, several critical sections:

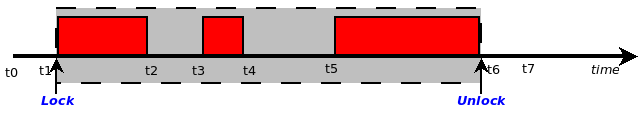

We have shown the critical sections (the places that, as we have learned, require synchronization, that is, locking) with the solid red rectangles on the timeline. The developer might well wonder: why not simplify this? Just take a single lock at time t1 and unlock it at time t6:

This does protect all the critical sections, but at the cost of performance. Think about it: each time a thread runs through the preceding code path, it must take the lock, perform the work, and then unlock. That's fine, but what about parallelism? It's effectively defeated; the code from t1 to t6 is now serialized. This kind of overzealous locking of all critical sections with one big fat lock is called coarse granularity locking.

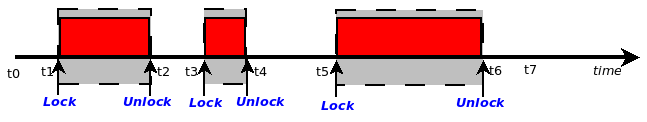
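To make the cost concrete, here is a minimal sketch of coarse granularity locking using POSIX threads. The counters `hits` and `misses`, the mutex `big_lock`, and the helper `run_coarse()` are hypothetical names invented for this illustration; the comments marked t1 and t6 correspond to the points on the timeline.

```c
#include <assert.h>
#include <pthread.h>

#define NITER    100000   /* iterations per thread (arbitrary) */
#define MAXTHRD  16       /* upper bound on worker threads (arbitrary) */

/* Hypothetical shared, writable data: two unrelated counters. */
static long hits, misses;
static pthread_mutex_t big_lock = PTHREAD_MUTEX_INITIALIZER;

static void *worker_coarse(void *arg)
{
    unsigned long *priv = arg;            /* thread-private slot */

    for (int i = 0; i < NITER; i++) {
        pthread_mutex_lock(&big_lock);    /* t1: take the one big lock */
        hits++;                           /* critical section */
        *priv += (unsigned long)i % 7;    /* private work: needs no lock,
                                             yet runs serialized anyway */
        misses++;                         /* critical section */
        pthread_mutex_unlock(&big_lock);  /* t6: release it */
    }
    return NULL;
}

/* Runs nthreads workers to completion and returns hits + misses. */
long run_coarse(int nthreads)
{
    pthread_t tid[MAXTHRD];
    unsigned long priv[MAXTHRD] = { 0 };

    assert(nthreads > 0 && nthreads <= MAXTHRD);
    hits = misses = 0;
    for (int i = 0; i < nthreads; i++) {
        int rc = pthread_create(&tid[i], NULL, worker_coarse, &priv[i]);
        assert(rc == 0);
        (void)rc;
    }
    for (int i = 0; i < nthreads; i++)
        pthread_join(tid[i], NULL);
    return hits + misses;
}
```

The result is correct (every increment is protected), but the thread-private work in the middle of the loop can never overlap with another thread's, because it sits inside the locked region.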

Recall our earlier discussion: code (text) is never an issue, so there is no need to lock it; lock only the places where writable shared data of any sort is being accessed. These are the critical sections! This gives rise to fine granularity locking: we take the lock only at the point in time where a critical section begins and unlock where it ends; the following diagram reflects this:

As we stated previously, a good rule of thumb to keep in mind is to lock data, not code.
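One way to put "lock data, not code" into practice is to keep each piece of shared data together with the lock that guards it. The following is a minimal sketch under that assumption, using POSIX threads; `struct counter`, `counter_inc()`, and `run_fine()` are hypothetical names made up for this illustration.

```c
#include <assert.h>
#include <pthread.h>

#define NITER    100000   /* iterations per thread (arbitrary) */
#define MAXTHRD  16       /* upper bound on worker threads (arbitrary) */

/* Each shared datum carries its own lock: the lock belongs to the data. */
struct counter {
    pthread_mutex_t lock;
    long value;
};

static struct counter hits   = { PTHREAD_MUTEX_INITIALIZER, 0 };
static struct counter misses = { PTHREAD_MUTEX_INITIALIZER, 0 };

/* The critical section is exactly the access to c->value, nothing more. */
static void counter_inc(struct counter *c)
{
    pthread_mutex_lock(&c->lock);
    c->value++;
    pthread_mutex_unlock(&c->lock);
}

static void *worker_fine(void *arg)
{
    unsigned long *priv = arg;            /* thread-private slot */

    for (int i = 0; i < NITER; i++) {
        counter_inc(&hits);               /* short critical section */
        *priv += (unsigned long)i % 7;    /* private work: no lock held */
        counter_inc(&misses);             /* short critical section */
    }
    return NULL;
}

/* Runs nthreads workers to completion and returns hits + misses. */
long run_fine(int nthreads)
{
    pthread_t tid[MAXTHRD];
    unsigned long priv[MAXTHRD] = { 0 };

    assert(nthreads > 0 && nthreads <= MAXTHRD);
    hits.value = misses.value = 0;
    for (int i = 0; i < nthreads; i++) {
        int rc = pthread_create(&tid[i], NULL, worker_fine, &priv[i]);
        assert(rc == 0);
        (void)rc;
    }
    for (int i = 0; i < nthreads; i++)
        pthread_join(tid[i], NULL);
    return hits.value + misses.value;
}
```

Because the two counters are unrelated, giving each its own mutex lets one thread update `hits` while another updates `misses`, and the private work runs with no lock held at all.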

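Straying too far in either direction is a mistake: too coarse a locking granularity yields poor performance because code that could run in parallel is serialized, but too fine a granularity can hurt as well, since every lock and unlock operation carries a cost of its own and a large number of small locks is harder to reason about.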
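As a rough illustration of the fine end of the spectrum, the sketch below contrasts taking a lock for every tiny update with accumulating privately and taking the lock once per thread. Both variants compute the same total; the first simply pays for one lock/unlock pair per item. The names `total`, `add_per_item()`, `add_batched()`, and `run_total()` are hypothetical, and no timing figures are claimed here; measure on your own system.

```c
#include <assert.h>
#include <pthread.h>

#define NITEMS   100000   /* items per thread (arbitrary) */
#define MAXTHRD  16       /* upper bound on worker threads (arbitrary) */

static long total;        /* shared, writable */
static pthread_mutex_t total_lock = PTHREAD_MUTEX_INITIALIZER;

/* Very fine: one lock/unlock pair for every single addition. */
static void *add_per_item(void *arg)
{
    (void)arg;
    for (long i = 1; i <= NITEMS; i++) {
        pthread_mutex_lock(&total_lock);
        total += i % 10;
        pthread_mutex_unlock(&total_lock);
    }
    return NULL;
}

/* Balanced: sum into a private variable, lock once to publish it. */
static void *add_batched(void *arg)
{
    long local = 0;

    (void)arg;
    for (long i = 1; i <= NITEMS; i++)
        local += i % 10;
    pthread_mutex_lock(&total_lock);
    total += local;
    pthread_mutex_unlock(&total_lock);
    return NULL;
}

/* Runs nthreads workers (batched or per-item) and returns the total. */
long run_total(int nthreads, int batched)
{
    pthread_t tid[MAXTHRD];

    assert(nthreads > 0 && nthreads <= MAXTHRD);
    total = 0;
    for (int i = 0; i < nthreads; i++) {
        int rc = pthread_create(&tid[i], NULL,
                                batched ? add_batched : add_per_item, NULL);
        assert(rc == 0);
        (void)rc;
    }
    for (int i = 0; i < nthreads; i++)
        pthread_join(tid[i], NULL);
    return total;
}
```

The batched variant still locks only the shared data, and only while that data is being touched; it just avoids paying the locking overhead more often than necessary.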