As a SQL Server DBA migrating to Azure SQL Database, you need an initial estimate of DTUs so that you can assign an appropriate service tier to the database. An appropriate service tier ensures that you meet most of your application's performance goals. Underestimating the service tier decreases performance, while overestimating it increases cost.

This chapter teaches you how to use the DTU Calculator to make an appropriate initial estimate of the service tier. Once the database is up and running, you can monitor its performance and change the service tier at any time.

Developed by Justin Henriksen, an Azure Solution Architect at Microsoft, the DTU Calculator can be used to determine the initial service tier for an Azure SQL Database. The calculator is available at https://dtucalculator.azurewebsites.net.

DTU Calculator Work Flow

The DTU Calculator works as shown in the following diagram:

Figure 2.2: DTU Calculator Work Flow

First, you have to set up a trace to record the following counters for at least an hour:

- Processor: % Processor Time

- Logical Disk: Disk Reads/sec

- Logical Disk: Disk Writes/sec

- Database: Log Bytes Flushed/sec
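For reference, the four counters above can be written as Windows performance-counter paths. The sketch below builds them; the `(_Total)` instances and the exact path strings are our assumption about what the utility samples, not something the DTU Calculator documents. The SQL Server object name depends on whether you run a default or a named instance, which is the same rule the configuration step later in this section applies to `SqlCategory`.

```python
def counter_paths(instance_name=None):
    """Return performance-counter paths for the four counters the trace records.

    instance_name: None for a default SQL Server instance, otherwise the name
    of a named instance (for example "Packtpub").
    Assumption: the (_Total) instance is sampled for every counter.
    """
    # SQL Server publishes its counters under "SQLServer" for a default
    # instance and under "MSSQL$<InstanceName>" for a named instance.
    sql_object = "SQLServer" if instance_name is None else f"MSSQL${instance_name}"
    return [
        "\\Processor(_Total)\\% Processor Time",
        "\\LogicalDisk(_Total)\\Disk Reads/sec",
        "\\LogicalDisk(_Total)\\Disk Writes/sec",
        "\\" + sql_object + ":Databases(_Total)\\Log Bytes Flushed/sec",
    ]


# Print the paths for a default instance.
for path in counter_paths():
    print(path)
```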

You can run a trace by using either the command-line utility or the PowerShell script provided on the DTU Calculator website.

Capture the counters while the server runs a workload similar to your production workload. The trace writes the counter values to a CSV file.

The DTU Calculator uses this report to analyze and suggest an initial Service Tier.
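Before uploading, it can help to sanity-check the trace yourself. The following is a minimal sketch that reports the mean and a nearest-rank 95th percentile for every numeric column of a trace CSV. It assumes a header row followed by one row per sample; the column names in the demo (`Time`, `cpu_pct`, `disk_reads`) are hypothetical, and this is not how the DTU Calculator itself analyzes the file.

```python
import csv
import io
import math
import statistics


def summarize_trace(csv_text):
    """Return {column: (mean, 95th percentile)} for each numeric column.

    Assumes a header row followed by one row per sample; non-numeric cells
    (for example a timestamp column) are ignored.
    """
    columns = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        for name, value in row.items():
            try:
                number = float(value)
            except (TypeError, ValueError):
                continue  # skip timestamps, blanks and malformed cells
            columns.setdefault(name, []).append(number)

    summary = {}
    for name, values in columns.items():
        ordered = sorted(values)
        # Nearest-rank 95th percentile: the ceil(0.95 * n)-th smallest value.
        rank = math.ceil(0.95 * len(ordered))
        summary[name] = (statistics.fmean(values), ordered[rank - 1])
    return summary


# Hypothetical two-sample trace, for illustration only.
trace = "Time,cpu_pct,disk_reads\n10:00:00,10,100\n10:00:01,30,200\n"
for counter, (mean, p95) in summarize_trace(trace).items():
    print(f"{counter}: mean={mean:.1f}, p95={p95:.1f}")
```

To inspect a real trace, read the CSV file produced by the utility into a string and pass it to `summarize_trace`.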

Let’s get back to Mike. Mike is unsure which service tier to select for his migration to Azure SQL Database, so he decides to use the DTU Calculator. The following steps describe how he can use the DTU Calculator to determine the initial service tier for his database:

- Open https://dtucalculator.azurewebsites.net and download the command-line utility to capture the performance counters.

  The `sql-perfmon-cl` folder contains two files, `SqlDtuPerfmon.exe` and `SqlDtuPerfmon.exe.config`. The first is the executable which, when run, captures the counters in a CSV file; the second is a configuration file that specifies the counters to be captured.

- Open `SqlDtuPerfmon.exe.config` in Notepad and make the following changes:

  - Change the SQL Server instance name to be monitored. Under the `SQL COUNTER` comment, modify the value of `SqlCategory` to match the instance name of your SQL Server:

    - If you are running a default instance, replace `MSSQL$SQL2016:Databases` with `SQLServer:Databases`.

    - If you are running a named instance, say `Packtpub`, replace `MSSQL$SQL2016:Databases` with `MSSQL$Packtpub:Databases`.

  - Set the output file path. If you want to change the output file, modify the value of the `CsvPath` key in the configuration file.

You are now ready to capture the performance trace.
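If you prefer to script the configuration change, the sketch below rewrites `SqlCategory` (and optionally `CsvPath`) using Python's standard `xml.etree.ElementTree`. It assumes the settings are standard .NET `appSettings` entries of the form `<add key="SqlCategory" value="..."/>`; we have not verified the exact layout of the shipped file, and the sample XML in the demo is hypothetical. Note that ElementTree drops XML comments, such as the `SQL COUNTER` comment, when it re-serializes the document, so editing the file by hand in Notepad remains the simpler option.

```python
import xml.etree.ElementTree as ET


def sql_category(instance_name=None):
    """Counter category for a default instance (None) or a named instance."""
    if instance_name is None:
        return "SQLServer:Databases"
    return f"MSSQL${instance_name}:Databases"


def update_config(config_xml, instance_name=None, csv_path=None):
    """Return config_xml with SqlCategory (and optionally CsvPath) rewritten.

    Assumes settings are <add key="..." value="..."/> elements.
    Note: ElementTree drops XML comments when it re-serializes the document.
    """
    root = ET.fromstring(config_xml)
    for setting in root.iter("add"):
        key = setting.get("key")
        if key == "SqlCategory":
            setting.set("value", sql_category(instance_name))
        elif key == "CsvPath" and csv_path is not None:
            setting.set("value", csv_path)
    return ET.tostring(root, encoding="unicode")


# Hypothetical configuration snippet, for illustration only.
sample = (
    "<configuration><appSettings>"
    '<add key="SqlCategory" value="MSSQL$SQL2016:Databases"/>'
    '<add key="CsvPath" value="sql-perfmon-log.csv"/>'
    "</appSettings></configuration>"
)
print(update_config(sample, instance_name="Packtpub"))
```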


- Double click the

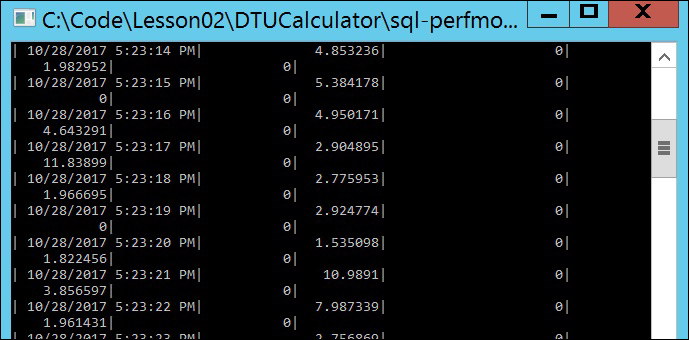

C:CodeLesson02DTUCalculatorsql-perfmon-clSqlDtuPerfmon.exefile to run the performance trace.A new command prompt will appear and will display the counters as they are being monitored and saved into the CSV file.

You should get a similar command-line window, as shown in the following screenshot:

- The next step is to upload the output file to the DTU Calculator website and analyze the results. To do this, follow these steps:

  - Open https://dtucalculator.azurewebsites.net/ and scroll down to the Upload the CSV file and Calculate section.

  - In the Cores text box, enter the number of cores on the machine on which you ran `SqlDtuPerfmon.exe` to capture the performance counters.

  - Click Choose file and, in the File Open dialog box, select the `C:\Code\Lesson02\DTUCalculator\sql-perfmon-log.csv` file.

  - Click the Calculate button to upload and analyze the performance trace.

- The DTU Calculator analyzes the trace and suggests the service tier to which you should migrate your database. It gives an overall recommendation and then breaks the recommendation down by CPU, IOPS, and log utilization alone.
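The per-resource recommendations have to be reconciled into the overall one. A simple rule that is consistent with the results shown in the following subsections (P2 for CPU, S2 for IOPS, Basic for log, P2 overall) is to take the most demanding per-resource tier. The sketch below assumes that rule; it is our reading of this example, not the calculator's documented algorithm, and the tier list is abridged.

```python
# Abridged list of DTU-model tiers, ordered from smallest to largest DTU
# allocation (Basic=5, S0=10, S1=20, S2=50, S3=100, P1=125, P2=250).
TIER_ORDER = ["Basic", "S0", "S1", "S2", "S3", "P1", "P2"]


def overall_tier(per_resource):
    """Pick the most demanding tier among per-resource recommendations.

    Assumption: the overall recommendation equals the largest per-resource
    recommendation; this is a simplification, not the calculator's algorithm.
    """
    if not per_resource:
        raise ValueError("need at least one per-resource recommendation")
    # Compare tiers by their position in TIER_ORDER (higher index = more DTUs).
    return max(per_resource.values(), key=TIER_ORDER.index)


print(overall_tier({"CPU": "P2", "IOPS": "S2", "Log": "Basic"}))
```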

Overall Recommendation

The DTU Calculator suggests migrating to the Premium – P2 tier, as it covers approximately 100% of the workload.

You can hover your mouse over different areas of the chart to see what percentage of the workload each tier covers.

Recommendation Based on CPU Utilization

The calculator recommends the Premium – P2 tier based on CPU utilization.

Recommendation Based on IOPS Utilization

Based solely on IOPS utilization, the DTU Calculator suggests the Standard – S2 tier, which covers approximately 90% of the workload; the remaining 10% of the workload would require a higher service tier. The decision is therefore yours: the Premium – P2 tier is more expensive but covers 100% of the workload, whereas the Standard – S2 tier is cheaper if you can accept about 10% of the workload running more slowly.
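To make the idea of coverage concrete, here is a minimal sketch that counts what fraction of trace samples stay within a tier's capacity and then picks the cheapest tier that meets a coverage target. This is not how the DTU Calculator is documented to work; the sample values and the S2/P2 limits are made-up numbers in arbitrary units, chosen only to reproduce the 90% versus 100% trade-off described above.

```python
def coverage(samples, tier_limit):
    """Return the fraction of samples at or below a tier's limit for one resource."""
    if not samples:
        raise ValueError("no samples")
    within = sum(1 for value in samples if value <= tier_limit)
    return within / len(samples)


def cheapest_tier(samples, tiers, target=1.0):
    """Return the first (cheapest) tier whose coverage meets the target.

    tiers: (name, limit) pairs ordered from cheapest to most expensive.
    Returns None if no tier reaches the target.
    """
    for name, limit in tiers:
        if coverage(samples, limit) >= target:
            return name
    return None


# Hypothetical IOPS samples and tier limits, for illustration only.
samples = [10, 20, 30, 40, 50, 60, 70, 80, 90, 200]
tiers = [("S2", 100), ("P2", 250)]
print(cheapest_tier(samples, tiers))       # P2: covers 100% of the samples
print(cheapest_tier(samples, tiers, 0.9))  # S2: covers 90%; one sample exceeds it
```

Lowering `target` from 1.0 to 0.9 corresponds to accepting that about 10% of the workload may run more slowly.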

Remember, this is only an estimate of the service tier; you can scale up to a higher service tier at any time to improve performance.

Recommendation Based on Log Utilization

Based solely on log utilization, the DTU Calculator recommends the Basic service tier. However, it also indicates that approximately 11% of the workload requires a higher service tier.

At this point, you could start with the Basic service tier and scale up as and when required, which would certainly save money. However, keep in mind that the Basic tier allows only 30 concurrent workers (requests) and has a maximum database size of 2 GB.
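As a rough pre-check before settling on Basic, the sketch below tests a database against the two Basic-tier limits mentioned above: a 2 GB maximum size and 30 concurrent requests. The function name and its inputs are hypothetical; you would measure the database size and peak concurrency on your source server yourself.

```python
# Basic-tier limits as described in this chapter.
BASIC_MAX_SIZE_GB = 2
BASIC_MAX_CONCURRENT_REQUESTS = 30


def basic_tier_blockers(db_size_gb, peak_concurrent_requests):
    """List the reasons a database would not fit the Basic tier (empty if it fits)."""
    blockers = []
    if db_size_gb > BASIC_MAX_SIZE_GB:
        blockers.append(f"size {db_size_gb} GB exceeds {BASIC_MAX_SIZE_GB} GB")
    if peak_concurrent_requests > BASIC_MAX_CONCURRENT_REQUESTS:
        blockers.append(
            f"{peak_concurrent_requests} concurrent requests exceed "
            f"{BASIC_MAX_CONCURRENT_REQUESTS}"
        )
    return blockers


print(basic_tier_blockers(db_size_gb=1.5, peak_concurrent_requests=45))
```

An empty list means neither limit rules out Basic; it says nothing about whether CPU or IOPS demand fits the tier, which the calculator's per-resource recommendations cover.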