1

Windows Container 101

In this chapter, we’re going to cover the foundations of Windows containers and why they are an essential topic for DevOps engineers and solution architects. The chapter will cover the following topics:

- Why are Windows containers an important topic?

- How Windows Server exposes container primitives

- How Windows Server implements resource controls for Windows containers

- Understanding Windows container base images

- Delving into Windows container licensing on AWS

- Summary

Why are Windows containers an important topic?

Have you ever asked yourself, “Why should I care about Windows containers?” Many DevOps engineers and solution architects excel in Linux containerization, helping their companies with re-platforming legacy Linux applications into containers to architect, deploy, and manage complex microservices environments. However, many organizations still run tons of Windows applications, such as ASP.NET websites or .NET Framework applications, which are usually left behind during the modernization journey.

Across the many customer engagements I have had, two main factors meant that DevOps engineers and solution architects didn’t consider Windows containers an option.

The first was a lack of Windows operating system expertise in the DevOps team. Windows and Linux are usually managed by different system administrators and teams, each using the tools that best fit their needs. For instance, a Windows system administrator will prefer System Center Configuration Manager (SCCM) as a configuration management solution. In contrast, a Linux system administrator would prefer Ansible.

Another example: a Windows system administrator would prefer System Center Operations Manager (SCOM) for deep insights, monitoring, and logging, whereas a Linux system administrator would prefer Nagios and an ELK stack. With the rapid growth of the Linux ecosystem toward containers, moving into a DevOps engineer role is a natural and fairly straightforward career shift for a Linux system administrator. Windows system administrators, on the other hand, aren’t exposed to all these tools and evolutions, which makes it a hard and drastic career shift: they first have to learn the Linux operating system (OS) and then the entire ecosystem around it.

The second factor is the misconception that every .NET Framework application should be refactored to .NET (formerly .NET Core). In almost all engagements where the .NET Framework is a topic, I’ve heard developers talking about the beauty of refactoring their .NET Framework application into .NET and leveraging all the benefits available in the Linux ecosystem, such as ARM processors and the rich set of Linux tools. While they are all 100% technically correct, as solution architects, we need to see the big picture, meaning the business investment behind it. We need to understand how much effort and money will be required to fully refactor the application and its dependencies to move off Windows, what will happen to the already purchased Windows Server licenses and management tools, and when the investment will break even. Sometimes, the annual IT budget is better spent on new projects than on refactoring 10-year-old applications, where the investment will take 5 or more years to break even without much innovation in the application itself.

Now that we understand the most common challenges for Windows container adoption and the opportunity in front of us, we’ll dig into the Windows Server primitives for Windows containers, resource controls, and Windows base images.

How Windows Server exposes container primitives

Containers are built on kernel primitives such as control groups, namespaces, union filesystems, and other OS functionalities. These work together to create process isolation: namespaces provide the isolation boundary, and control groups govern the resources of a collection of processes within a namespace.

Namespaces isolate named objects from unauthorized access. A named object gives processes a way to share object handles. Put simply, when a process needs to share a handle, it creates a named object, such as an event or mutex, in the kernel; other processes can then open that object by its name. An object namespace creates the boundary that defines which processes or container processes can access those named objects.

Control groups, or cgroups, are a Linux kernel feature that limits and isolates how much CPU, memory, disk I/O, and network a collection of processes can consume. That collection is the set of processes running in the container:

Figure 1.1 – How a container runtime interacts with the Linux kernel

However, when it comes to the Windows OS, this is an entirely different story; there is no cgroup, pid, net, ipc, mnt, or vfs. Instead, in the Windows world, we have job objects (the equivalent of cgroups), object namespaces, the registry, and so on. When Microsoft planned how to expose these low-level Windows kernel APIs so that container runtimes could easily consume them, it decided to create a new management service called the Host Compute Service (HCS). The HCS provides an abstraction over the Windows kernel APIs, reducing the creation of a Windows container to a single API call from the container runtime:

Figure 1.2 – How a container runtime interacts with the Windows kernel

Working directly with the HCS may be difficult as it exposes a C API. To make it easier for container runtime and orchestrator developers to consume the HCS from higher-level languages such as Go and C#, Microsoft released two wrappers:

- hcsshim is a Golang interface to launch and manage Windows containers using the HCS

- dotnet-computevirtualization is a C# class library to launch and manage Windows containers using the HCS

Now that you understand how Windows Server exposes container primitives and how container runtimes such as Docker Engine and containerd interact with the Windows kernel, let’s delve into how Windows Server implements resource controls at the kernel level for Windows containers.

How Windows Server implements resource controls for Windows containers

In order to understand how Windows Server implements resource controls for Windows containers, we first need to understand what a job object is. In the Windows kernel, a job object allows groups of processes to be managed as a unit, and Windows containers utilize job objects to group and track processes associated with each container.
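To make the idea of a job object more concrete, here is a minimal sketch that creates an anonymous job object and places the current process in it, using the Win32 functions `CreateJobObjectW`, `AssignProcessToJobObject`, and `IsProcessInJob` through Python's `ctypes`. The function name `current_process_in_new_job` is our own, chosen for illustration. This is not how a container runtime does it (runtimes go through the HCS); it only shows the grouping primitive itself. Note that a process cannot leave a job once assigned, so run this in a throwaway Python session.

```python
import ctypes
import sys


def current_process_in_new_job():
    """Create a job object, add this process to it, and confirm membership.

    Returns True or False on Windows, or None on any other platform.
    """
    if sys.platform != "win32":
        return None  # job objects exist only in the Windows kernel

    from ctypes import wintypes

    kernel32 = ctypes.WinDLL("kernel32", use_last_error=True)

    # Declare signatures so 64-bit handles are not truncated.
    kernel32.CreateJobObjectW.argtypes = [wintypes.LPVOID, wintypes.LPCWSTR]
    kernel32.CreateJobObjectW.restype = wintypes.HANDLE
    kernel32.GetCurrentProcess.argtypes = []
    kernel32.GetCurrentProcess.restype = wintypes.HANDLE
    kernel32.AssignProcessToJobObject.argtypes = [wintypes.HANDLE, wintypes.HANDLE]
    kernel32.AssignProcessToJobObject.restype = wintypes.BOOL
    kernel32.IsProcessInJob.argtypes = [
        wintypes.HANDLE,
        wintypes.HANDLE,
        ctypes.POINTER(wintypes.BOOL),
    ]
    kernel32.IsProcessInJob.restype = wintypes.BOOL
    kernel32.CloseHandle.argtypes = [wintypes.HANDLE]
    kernel32.CloseHandle.restype = wintypes.BOOL

    # An unnamed job with default security attributes.
    job = kernel32.CreateJobObjectW(None, None)
    if not job:
        raise ctypes.WinError(ctypes.get_last_error())
    try:
        me = kernel32.GetCurrentProcess()
        if not kernel32.AssignProcessToJobObject(job, me):
            raise ctypes.WinError(ctypes.get_last_error())

        in_job = wintypes.BOOL()
        if not kernel32.IsProcessInJob(me, job, ctypes.byref(in_job)):
            raise ctypes.WinError(ctypes.get_last_error())
        return bool(in_job.value)
    finally:
        kernel32.CloseHandle(job)


if __name__ == "__main__":
    print(current_process_in_new_job())
```

Once processes are grouped this way, limits applied to the job apply to every process in it, which is the property Windows containers rely on.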

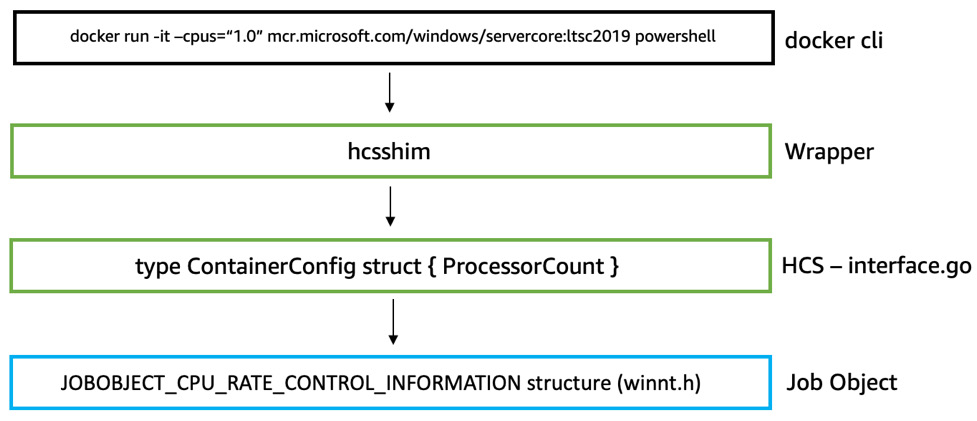

Resource controls are enforced on the parent job object associated with the container. When you run a Docker command that sets memory, CPU count, or CPU percentage limits, under the hood, you are asking the HCS to set these resource controls directly on the parent job object:

Figure 1.3 – Internal container runtime process to set resource controls

Resources that can be controlled include the following:

- The CPU/processor

- Memory/RAM

- Disk/storage

- Networking/throughput
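As a rough illustration of the memory, CPU count, and CPU percentage limits mentioned above, the following sketch assembles a `docker run` command line using the `--memory`, `--cpu-count`, and `--cpu-percent` options. The helper name `docker_run_with_limits`, the image reference, and the limit values are assumptions picked for the example; the sketch only builds the command and leaves running it to you.

```python
import shlex


def docker_run_with_limits(image, memory=None, cpu_count=None, cpu_percent=None):
    """Build a `docker run` argument list with optional resource limits."""
    args = ["docker", "run", "--rm"]
    if memory is not None:
        args += ["--memory", str(memory)]  # for example "2g"
    if cpu_count is not None:
        args += ["--cpu-count", str(cpu_count)]  # number of CPUs (Windows only)
    if cpu_percent is not None:
        args += ["--cpu-percent", str(cpu_percent)]  # share of CPU (Windows only)
    args.append(image)
    return args


if __name__ == "__main__":
    # Hypothetical example: a Server Core container capped at 2 GB and 2 CPUs.
    cmd = docker_run_with_limits(
        "mcr.microsoft.com/windows/servercore:ltsc2022",
        memory="2g",
        cpu_count=2,
    )
    print(shlex.join(cmd))
```

Each of these options ends up as a resource control that the HCS sets on the container's parent job object, as described earlier.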

The previous two sections gave us an understanding of how Windows Server exposes container primitives and how container runtimes such as Docker Engine and containerd interact with the Windows kernel. However, you shouldn’t worry too much about this. As a DevOps engineer or solution architect, it is essential to understand the concept and how it differs from Linux, but you will rarely work at the Windows kernel level when running Windows containers. The container runtime will take care of it for you.

Understanding Windows container base images

When packaging your Windows application into a Windows container, it is crucial to assess the dependencies it carries, such as Open Database Connectivity (ODBC) drivers, Dynamic-Link Libraries (DLLs), and additional applications. This entire package (meaning the application plus its dependencies) will dictate which Windows container base image must be used.

Microsoft offers four container base images. Each exposes a different Windows API set, which strongly influences the final container image size and on-disk footprint:

- Nano Server is the smallest Windows container base image available, exposing just enough APIs to support .NET Core or other modern open source frameworks. It is a great option for sidecar containers.

- Server Core is the most common Windows container base image available. It exposes the Windows API set to support the .NET Framework and common Windows Server features, such as IIS.

- Server is smaller than the Windows image but has the full Windows API set. It fits the same use cases as the Windows image described next, such as applications that require a DirectX graphics API.

- Windows is the largest image and exposes the full Windows API set. It is usually used for applications that require a DirectX graphics API and frameworks such as DirectML or Unreal Engine. There is a very cool community project specifically for this type of workload, which can be accessed at the following link: https://unrealcontainers.com/.

Important note

The Windows image is not available for Windows Server 2022; there, Server is the only option for workloads that require the full Windows API set.
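The guidance above can be condensed into a small rule-of-thumb helper. This is a sketch under stated assumptions, not an official selection algorithm: the function name `pick_base_image` and its parameters are hypothetical, the repository paths are the ones published on the Microsoft Container Registry with tags deliberately omitted, and the choice of the Windows image for hosts older than Windows Server 2022 is our assumption based on the note above.

```python
# Repository paths on the Microsoft Container Registry (tags omitted).
BASE_IMAGES = {
    "nanoserver": "mcr.microsoft.com/windows/nanoserver",
    "servercore": "mcr.microsoft.com/windows/servercore",
    "server": "mcr.microsoft.com/windows/server",
    "windows": "mcr.microsoft.com/windows",
}


def pick_base_image(needs_full_api=False,
                    needs_dotnet_framework_or_iis=False,
                    windows_server_2022=True):
    """Return the smallest base image repository that covers the stated needs."""
    if needs_full_api:
        # For example DirectX workloads. On Windows Server 2022 the Server
        # image is used; for older hosts we assume the Windows image.
        key = "server" if windows_server_2022 else "windows"
    elif needs_dotnet_framework_or_iis:
        key = "servercore"
    else:
        # .NET (formerly .NET Core) and other modern frameworks, sidecars.
        key = "nanoserver"
    return BASE_IMAGES[key]


if __name__ == "__main__":
    print(pick_base_image(needs_dotnet_framework_or_iis=True))
```

In practice, the deciding factor is the full dependency list of your application, so treat the result as a starting point and validate it by actually building and running the image.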

Enumerating the Windows container image sizes

You have probably already heard about how big Windows container images are compared to Linux ones. While the difference in size is, technically, enormous, it doesn’t bring any value to the discussion, since we won’t address Windows-specific needs with Linux, and vice versa. However, selecting the right Windows container base image directly affects the solution cost, especially the storage footprint, which in turn drives how much storage you must allocate on each container host.

Let’s delve into Windows container image sizes. The values in the following table are based on Windows Server 2022 container images:

| Image name | Image size | Extracted on disk |
| --- | --- | --- |
| Nano Server | 118 MB | 296 MB |
| Server Core | 1.4 GB | 4.99 GB |
| Windows Server | 3.28 GB | 10.8 GB |

Table 1.1 – Windows container image sizes

As discussed in the previous section, the difference in size reflects how much of the Windows API set is exposed to the container, addressing different application needs. The Extracted on disk column is crucial information because, on AWS, one of the pricing components of the block storage service, Amazon Elastic Block Store (EBS), is the amount of space provisioned. You pay for what you provision, whether it is used or not, so the extracted size influences the EBS volume size you will deploy on each container host.

We’ll cover this topic in greater detail in Chapter 14, Implementing a Container Image Cache Strategy.
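As a back-of-the-envelope illustration, the following sketch estimates the storage a container host needs just to hold extracted base images, using the Extracted on disk values from Table 1.1. The function name `min_volume_gb`, the `other_gb` allowance (OS, application layers, logs), and the 20% headroom are hypothetical values chosen for the example, not recommendations.

```python
import math

# Extracted on-disk sizes from Table 1.1, in GB (296 MB written as 0.296 GB).
EXTRACTED_GB = {
    "nanoserver": 0.296,
    "servercore": 4.99,
    "server": 10.8,
}


def min_volume_gb(base_images, other_gb=0.0, headroom=0.2):
    """Estimate the volume size to provision, rounded up to a whole GB.

    base_images: names of the base images cached on the host.
    other_gb:    assumed space for the OS, application layers, and logs.
    headroom:    assumed fraction of extra free space to keep.
    """
    total = sum(EXTRACTED_GB[name] for name in base_images) + other_gb
    return math.ceil(total * (1 + headroom))


if __name__ == "__main__":
    # Hypothetical host caching Server Core and Server, plus 30 GB for the rest.
    print(min_volume_gb(["servercore", "server"], other_gb=30))
```

For the hypothetical host in the example, the two base images alone account for 15.79 GB of the 45.79 GB total before headroom, which shows how much the base image choice weighs on the provisioned volume size.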

Delving into Windows container licensing on AWS

In AWS, there are two options to license Windows Server:

- License included: The Windows Server license cost is included in the per-second price of the Amazon EC2 Windows instance. The Windows Server edition is Datacenter, which gives you unlimited containers per host.

- Bring your own license (BYOL): You bring your existing Windows Server license as long as the licenses were acquired or added as a true-up under an active Enterprise Agreement that was signed prior to October 1, 2019. This option also requires an Amazon EC2 Dedicated Host.

I recommend checking which Windows Server 2019 license options are available within your organization and deciding based on how much money you can save with either the BYOL or the License included option.

Summary

In this chapter, we learned why Windows containers are an essential topic for organizations going through their modernization journey and why adopting them may be a challenge due to a lack of expertise. Then, we delved into how Windows Server exposes container primitives through the HCS and how container runtimes interact with the Windows kernel for resource controls. We also looked at the Windows container base images available, image sizing, and licensing.

In a nutshell, the use case for a Windows container is very straightforward; if it can’t be solved with Linux due to incompatibility or application dependencies/requirements, then go with Windows, period. To add more to that, in the same way that we shouldn’t use Windows containers to run a Go application, we shouldn’t even try to use a Linux container to run a .NET Framework application.

In Chapter 2, Amazon Web Services – Breadth and Depth, we will look at why AWS is the best choice for running Windows containers. You will learn how AWS Nitro improves container performance and get the information you need to choose which AWS container orchestrator makes sense for your use case.

Further reading

- Microsoft Windows container license FAQ: https://docs.microsoft.com/en-us/virtualization/windowscontainers/about/faq

- Microsoft Licensing on AWS: https://aws.amazon.com/windows/resources/licensing/

- StackOverflow 2022 Developer Survey: https://survey.stackoverflow.co/2022/