CHAPTER 3

AWS Identity and Access Management and AWS Service Security

In this chapter, you will

• Learn about the AWS shared responsibility security model

• Use AWS account security features

• Learn more about AWS Identity and Access Management (IAM)

• Learn how to manage AWS component security

• Learn how to secure your network

• Understand how to secure storage services

• Learn how to secure your databases: Amazon DynamoDB, Amazon RDS, Amazon Redshift, and Amazon ElastiCache

• Learn how to secure application services

• Understand how to improve security with AWS monitoring tools and services

AWS security is a very broad topic because of the many ways in which you can secure your AWS resources and the flow of Internet traffic to your cloud-based servers and applications.

The AWS Shared Responsibility Security Model

In an AWS cloud, security and compliance are a shared responsibility between AWS and its customers. AWS manages the infrastructure components, ranging from the host operating system and the virtualization layer, down to the physical security of the facilities that host the services. The customers are responsible for managing the guest OS, including applying all updates and security patches and other application software. This shared responsibility between you and AWS reduces the burden on you to secure your infrastructure in the AWS cloud and provides a stronger security posture.

Figure 3-1 illustrates the AWS shared responsibility security model. This separation of security responsibilities is often referred to as the security “of” the cloud versus security “in” the cloud.

Figure 3-1 The AWS shared responsibility model in the cloud

AWS Responsibility: Security of the Cloud

AWS is responsible for the security of the entire underlying infrastructure on which its customers run the AWS cloud services. Indeed, AWS considers protection of the infrastructure its main priority. The infrastructure consists of all the hardware and software, as well as the networking, storage, and physical facilities that support AWS cloud services. The shared responsibility model means that AWS manages the security for the following assets:

• Physical facilities

• Physical hardware

• Network infrastructure

• Virtualization infrastructure

AWS offers services such as IAM that you can use to manage users and user permissions in AWS services.

Auditing AWS Infrastructure Security

It’s not possible for all customers to visit the AWS data centers to ensure that the promised protection is indeed there. However, you can rest assured, because AWS offers several types of third-party auditor reports that verify AWS compliance with several computer security standards and regulations, such as Sarbanes-Oxley and PCI DSS.

AWS Global Infrastructure Security

AWS locates all its computing resources in a global infrastructure, which includes the physical data centers, network, hardware and host OS software, and virtualization software to support users of these resources.

Physical and Environment Security AWS houses its global data centers in nondescript physical buildings and strictly controls physical access at the perimeter using video surveillance, intrusion detection systems, and other physical security measures. AWS enforces two-factor authentication for authorized staff to access the data center floors, and all physical access to data centers is logged and audited.

AWS protects its data centers from disasters and failures via the following means:

• Decommissioning old storage devices AWS uses a formal decommissioning process that destroys data as part of the decommissioning process. Decommissioned magnetic storage devices are degaussed and physically destroyed.

• Fire detection and suppression AWS installs automatic fire detection and suppression equipment in its data centers.

• Redundant power supplies Data center electric power systems are fully redundant, using uninterruptible power supplies (UPS) and power generators to provide backup power in the event of electrical failures.

• Climate control Data centers are conditioned to maintain temperatures at the optimal levels to prevent overheating and reduce service outages.

Business Continuity Management Business continuity management involves providing high availability for the data centers and fast incident detection and response.

AWS builds its data centers as clusters in multiple geographical regions. If a data center fails, automated processes direct customer traffic to the unaffected data centers. Distributing your applications across multiple availability zones (AZs) and regions enhances resiliency against failures caused by natural disasters or system failures. The AZs inside each region are designed as independent failure zones by physically separating the zones and locating them in lower-risk flood plains. Data centers also use power from different grids run by different utilities to reduce the possibility of a single point of failure. The Amazon Incident Management team provides fast incident response by proactively detecting incidents and managing their resolution.

In addition, AWS has implemented various types of internal communications to teach employees about their individual roles and responsibilities, including orientation and training programs, video conferencing, and electronic messages. The customer support teams maintain a Service Health Dashboard to alert customers to issues having major impact. The AWS Security Center offers security and compliance details that pertain to AWS.

The AWS Compliance Program AWS follows strict security best practices and security compliance standards. When you set up your systems on top of the AWS infrastructure, you’ll share compliance responsibilities with AWS. AWS ties together governance-focused, audit-friendly service features with relevant compliance and audit standards to help you operate in an AWS security–controlled environment.

The AWS infrastructure is designed to align with a variety of IT security standards, including the following:

• Service Organization Controls (SOC 1)/Statement on Standards for Attestation Engagements (SSAE 16)/International Standard on Assurance Engagements (ISAE 3402) (formerly SAS 70)

• SOC 2

• SOC 3

• Federal Information Security Management Act (FISMA), DoD Information Assurance Certification and Accreditation Process (DIACAP), and Federal Risk and Authorization Management Program (FedRAMP)

• DoD CSM Levels 1–5

• PCI DSS Level 1

• ISO 9001/ISO 27001

• International Traffic in Arms Regulations (ITAR)

• Federal Information Processing Standard (FIPS 140-2)

• Multi-Tier Cloud Security Standard (MTCS) Level 3

In addition, the AWS platform complies with other industry-specific security standards such as these:

• Criminal Justice Information Services (CJIS)

• Cloud Security Alliance (CSA) standards

• Family Educational Rights and Privacy Act (FERPA)

• Health Insurance Portability and Accountability Act (HIPAA)

• Motion Picture Association of America (MPAA)

Securing its global infrastructure isn’t AWS’s only responsibility. AWS is fully responsible for securing all its managed services offerings, such as Amazon RDS, Amazon DynamoDB, and Amazon Elastic MapReduce (EMR). When you use any of the managed services, AWS takes care of the overall security configuration, such as patching the guest operating system and databases, firewall configuration, and many other security aspects. Your responsibility would be to take care of the access controls to your servers and databases.

If a third-party auditing service is auditing your organization and requires details about your physical network and virtualization infrastructure, you can ask your AWS representative to help the auditors get the information they need. The AWS representative will facilitate the audit. For auditing purposes, you’re responsible for the applications that you run on AWS EC2 as well as securing the OS, including managing the system administrators group.

If an external auditor requests a list of your users and their statuses for audit purposes (say, to determine whether you’re using Multi-Factor Authentication) you can generate a credentials report by signing into the AWS Management Console, opening the IAM console, and downloading the report. The credentials report shows all your users and their credential statuses, such as passwords, access keys, and MFA devices. You can also generate the credentials report from the command line, IAM APIs, or through AWS SDKs.
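The MFA check described above can be sketched in a few lines of Python once the report has been downloaded. This is a minimal illustration, not an official tool: the sample rows, account ID, and the `users_without_mfa` helper are hypothetical, and only a handful of the report's columns are shown. It assumes the report is the usual CSV with `user`, `password_enabled`, and `mfa_active` columns.

```python
import csv
import io

# Hypothetical sample in the credential report's CSV layout (most columns omitted).
SAMPLE_REPORT = """user,arn,password_enabled,mfa_active,access_key_1_active
<root_account>,arn:aws:iam::111122223333:root,not_supported,true,false
alice,arn:aws:iam::111122223333:user/alice,true,true,true
bob,arn:aws:iam::111122223333:user/bob,true,false,true
svc-backup,arn:aws:iam::111122223333:user/svc-backup,false,false,true
"""


def users_without_mfa(report_csv: str) -> list:
    """Return users who have a console password but no active MFA device."""
    rows = csv.DictReader(io.StringIO(report_csv))
    return [
        row["user"]
        for row in rows
        if row["password_enabled"] == "true" and row["mfa_active"] == "false"
    ]


print(users_without_mfa(SAMPLE_REPORT))  # ['bob']
```

The service user is skipped because it has no console password; whether such accounts should also be flagged is a policy decision for your organization.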

Customer’s Responsibility: Security in the Cloud

While AWS is responsible for the infrastructure and its support, you, as the customer, are responsible for everything you place in the cloud (think data!) or connect to the AWS cloud, in addition to securing the OSs, platforms, and data. AWS customer responsibility varies according to the services that the customer chooses. In the case of services categorized as Infrastructure as a Service (IaaS), such as Amazon EC2, Amazon VPC, and Amazon S3, the customer performs all the security configuration and management tasks.

If you deploy an EC2 instance, you’re responsible for managing the guest OS as well as the application software and utilities that you install on the EC2 instance. In addition, you’re responsible for configuring the security groups on each of the instances. As you’ll recall, a security group acts as a virtual firewall.
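To make the "virtual firewall" idea concrete, here is a small model of how inbound security group rules behave: traffic is allowed only when some rule matches, and everything else is dropped. The `InboundRule` class, the `is_inbound_allowed` function, and the example addresses are all hypothetical names for illustration; this is not how AWS implements security groups internally.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class InboundRule:
    protocol: str   # "tcp" or "udp"
    from_port: int  # first port in the allowed range
    to_port: int    # last port in the allowed range
    cidr: str       # allowed source address range


def is_inbound_allowed(rules, protocol, port, source_ip):
    """Allow traffic only if at least one rule matches; otherwise drop it."""
    addr = ipaddress.ip_address(source_ip)
    for rule in rules:
        if (rule.protocol == protocol
                and rule.from_port <= port <= rule.to_port
                and addr in ipaddress.ip_network(rule.cidr)):
            return True
    return False  # no matching rule: inbound traffic is denied


# Hypothetical group: HTTPS from anywhere, SSH only from one office range.
WEB_SG = [
    InboundRule("tcp", 443, 443, "0.0.0.0/0"),
    InboundRule("tcp", 22, 22, "203.0.113.0/24"),
]

print(is_inbound_allowed(WEB_SG, "tcp", 443, "198.51.100.7"))  # True
print(is_inbound_allowed(WEB_SG, "tcp", 22, "198.51.100.7"))   # False
```

Because the model contains only allow rules, narrowing the source range on a rule is the way to restrict access.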

The type and extent of security configuration you must perform depends on the specific AWS service and the importance of the data you store in the cloud. With EC2, for example, the customer is responsible for securing the following:

• Amazon Machine Images (AMIs)

• Guest operating systems (including updates and security patching)

• Applications

• Firewalls (security groups)

• Data (stored on disk and in transit)

• Credentials

• Policies and configuration

Regardless of the type of service, you must set up certain security elements such as IAM, Secure Sockets Layer/Transport Layer Security (SSL/TLS) for encrypting data in motion, and a strong logging framework (using AWS CloudTrail) to protect your cloud infrastructure and the data you store in it.

Sharing Security Responsibility for AWS Services

You can categorize security and shared responsibility for the AWS infrastructure and platform services into the following categories, each of which has a slightly different security ownership model:

• Infrastructure services These are the various compute services such as EC2, and associated services such as Amazon Elastic Block Store (EBS), Auto Scaling, and Amazon VPC. You control the OS and configure and manage the identity management system that enables access to the user layers of the virtualization stack.

• Container services These services typically live in separate EC2 or other infrastructure instances, and for the most part, you don’t manage the OS or the platform layer. You are responsible for setting up network controls such as firewall rules and managing the platform-level identity and access management separately from IAM.

• Abstracted services These services include high-level storage, database, and messaging services such as S3, Glacier, DynamoDB, Simple Queue Service (SQS), and Simple Notification Service (SNS). These are services in the platform layer on which you build cloud applications. You use AWS APIs to access the endpoints of these abstracted services. Abstracted services are offered on a multitenant platform that stores your data securely in an isolated fashion.

Responsibility for IT Controls and Compliance

The same shared responsibility model for securing the IT environment also applies to IT control. You follow a distributed control strategy in the AWS cloud for managing, operating, and verifying IT controls. AWS is responsible for managing the controls associated with the physical infrastructure.

There are three types of controls based on how they’re managed by AWS, you, and/or both:

• Inherited controls These are controls that you fully inherit from AWS, such as the physical and environment controls managed by AWS.

• Shared controls AWS provides the infrastructure requirements, and you must provide your own control implementation within your use of the AWS services. Here are examples:

• Patch management AWS is responsible for patching the infrastructure, but you are responsible for patching your guest OS and application software.

• Configuration management AWS configures its infrastructure devices, but you configure your own guest OS, databases, and applications.

• Customer-specific controls These controls are solely your responsibility, depending on the applications you deploy within AWS services. For example, Zone Security may require you to zone data within specific security environments.

Security for the AWS-Managed Services

Throughout this book, you’ll learn about various AWS-managed services, such as the Amazon Relational Database Service (Amazon RDS), where AWS fully manages the relevant service. AWS is responsible for the security configuration of all of its managed services. For a managed service such as a MySQL database running on RDS, you need to configure access controls with AWS IAM and manage the account credentials for the database user accounts.

Network Security

AWS secures its network infrastructure using several strategies:

• Secure network architecture Network devices such as firewalls and other boundary services use rule sets and access control lists (ACLs) to control network traffic flow to and from each managed network interface.

• Secure access points Strategically placed customer access points called API endpoints enable secure HTTP (HTTPS) access to your storage and compute instances.

• Transmission protection You can connect to AWS access points using SSL to protect against tampering and message forgery. If you need additional layers of network security, you can use Amazon Virtual Private Cloud (VPC), which provides a private subnet within the AWS cloud. VPCs offer the ability to use an IPsec virtual private network (VPN) to provide an encrypted tunnel for transmitting data between your data center and Amazon VPC.

Network Monitoring and Protection

AWS uses several automated monitoring systems to enhance service performance and availability. The monitoring is designed to catch unauthorized activities at incoming and outgoing communication points by monitoring server/network usage, port-scanning activities, application usage, and intrusion attempts.

AWS security monitoring tools help identify the following types of attacks.

Distributed Denial-of-Service (DDoS) Attacks AWS locates API endpoints on world-class infrastructure and uses proprietary DDoS mitigation techniques. It also multihomes its networks across providers to achieve diversified Internet access, which helps in situations such as a DDoS attack.

Man-in-the-Middle (MITM) Attacks AWS encourages its users to use SSL. All the AWS APIs are available via SSL-protected endpoints. EC2 AMIs generate new SSH host certificates when you first boot an instance and log them to the instance’s console. You can use the secure AWS APIs to retrieve the console output and check the host certificates before logging into the new instance for the first time.

IP Spoofing EC2 instances can’t send spoofed network traffic. The host-based firewall won’t permit an EC2 instance to send traffic with any source IP or MAC address other than its own IP/MAC address.

Port Scanning AWS stops and blocks all unauthorized port scanning. Since, by default, all inbound ports of EC2 instances are closed, port scanning isn’t effective with an EC2 instance. By configuring appropriate security groups, you can further minimize the threat of port scans. As a customer of AWS, you can request permission from AWS to conduct vulnerability scans that you need, but you must limit the scans to your own instances and must not violate the AWS Acceptable Use Policy.

Packet Sniffing by Other AWS Tenants Even EC2 instances that you own and that are located on the same physical host cannot listen to one another’s traffic. Even if you place a VM into promiscuous mode to receive or “sniff” traffic being sent to other VMs, the hypervisor won’t deliver any traffic that isn’t addressed to this instance. Well-known security attacks such as Address Resolution Protocol (ARP) cache poisoning aren’t possible in EC2 and VPC.

In addition to constant monitoring, AWS also performs regular vulnerability scans on the OS, web applications, and databases.

AWS Account Security Features

You can use various tools and features to protect your AWS account and AWS resources, as summarized in the following sections.

AWS Credentials

AWS uses several types of credentials for authentication to ensure that only authorized users and processes can access your accounts and resources.

AWS recommends that you regularly change your access keys and certificates. You can rotate the access keys of your AWS account as well as your IAM user accounts with the AWS IAM API.

Passwords

AWS requires passwords for accessing the AWS account, as well as the individual IAM user account and the AWS Support Center. You can change the passwords from the Security Credentials page. AWS recommends the creation of strong and hard-to-guess passwords.

You can set a password policy for your IAM user accounts to ensure the use of strong passwords and ensure that they’re changed on a regular basis. Several password policy options are available to you, such as preventing password reuse by users.

You can choose to require a password reset by the administrator when user passwords expire or allow users to manage their own passwords. The Password expiration requires administrator reset option in the console prevents IAM users from choosing a new password when their password expires. If you decide to enable this option, be sure to do one of the following, so you’re not locked out of your own AWS account when your password expires:

• Make sure you have the access keys, which will enable you to use the AWS CLI (or the AWS API) to reset your own password, even if you cannot log into the AWS Management Console.

• Alternatively or in addition, ensure that multiple administrators have the necessary administrative permissions to reset IAM user passwords. This way, even if you as the administrator don’t have the access keys, other administrators can reset your console password for you.
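The password policy options discussed in this section map onto the parameters of IAM's UpdateAccountPasswordPolicy operation. The sketch below only builds the parameter dictionary; the helper name `build_password_policy`, its defaults, and the range checks are illustrative assumptions (the ranges reflect the service limits as we understand them), and the actual API call is left as a comment because it needs credentials.

```python
def build_password_policy(min_length=14, max_age_days=90,
                          reuse_prevention=5, admin_reset_on_expiry=False):
    """Build keyword arguments for IAM's UpdateAccountPasswordPolicy operation."""
    # Assumed service limits: length 6-128, remembered passwords 1-24.
    if not 6 <= min_length <= 128:
        raise ValueError("min_length must be between 6 and 128")
    if not 1 <= reuse_prevention <= 24:
        raise ValueError("reuse_prevention must be between 1 and 24")
    return {
        "MinimumPasswordLength": min_length,
        "RequireSymbols": True,
        "RequireNumbers": True,
        "RequireUppercaseCharacters": True,
        "RequireLowercaseCharacters": True,
        "AllowUsersToChangePassword": True,
        "MaxPasswordAge": max_age_days,               # days until expiry
        "PasswordReusePrevention": reuse_prevention,  # passwords remembered
        # True corresponds to "requires administrator reset" on expiry.
        "HardExpiry": admin_reset_on_expiry,
    }


policy = build_password_policy()
print(policy)

# With boto3 installed and credentials configured (not executed here):
#   import boto3
#   boto3.client("iam").update_account_password_policy(**policy)
```

Leaving `HardExpiry` at `False` avoids the lockout scenario described above; set it to `True` only if one of the two safeguards is in place.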

AWS Multi-Factor Authentication

With Multi-Factor Authentication (MFA), a system checks for more than one authentication factor before granting access. This usually consists of a username/password combination (something you know) and the code from an authentication device (something you have). MFA provides an additional layer of security when users authenticate to the AWS cloud.

When you enable MFA, you need to provide a six-digit single-use code, in addition to a username/password, to gain access to your accounts. The single-use code may be provided by an authentication device you carry with you.
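Virtual authentication devices commonly produce these six-digit codes with the time-based one-time password (TOTP) algorithm from RFC 6238, which builds on HOTP (RFC 4226). The text does not say which algorithm a given device uses, so treat the following as a sketch of the general technique rather than a description of any particular device. The secret shown is the RFC's published test value.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226)."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at=None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over a time counter."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


# RFC test secret; at t=59 seconds the 30-second counter is 1.
print(totp(b"12345678901234567890", at=59))  # 287082
```

Because the code depends on the current 30-second window, the device and the server must keep reasonably synchronized clocks.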

You can enable MFA for all the IAM users that you create. You can also add MFA protection for access across AWS accounts. This enables a user in one AWS account to use an IAM role to access AWS resources in a different AWS account. Before the user can assume the role, you can require the user to use MFA.
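One way to require MFA before a role can be assumed is a condition in the role's trust policy. The sketch below builds such a policy document as a Python dictionary; the function name and the account ID are placeholders, and the policy is an example of the pattern, not a complete cross-account setup.

```python
import json


def mfa_required_trust_policy(trusted_account_id: str) -> dict:
    """Trust policy letting another account assume a role only with MFA."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"AWS": f"arn:aws:iam::{trusted_account_id}:root"},
            "Action": "sts:AssumeRole",
            # The request must have been authenticated with an MFA device.
            "Condition": {"Bool": {"aws:MultiFactorAuthPresent": "true"}},
        }],
    }


# 111122223333 is a placeholder account ID.
print(json.dumps(mfa_required_trust_policy("111122223333"), indent=2))
```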

IAM Access Keys

As you know, access keys consist of two components: an access key ID and a secret access key. A user can have a maximum of two active access keys to enable continued access even while the active key is being rotated. Users can list and rotate their own access keys.

As a best practice, you should regularly rotate all your IAM users’ access keys. You can view an access key’s usage history to determine whether the key is being used and remove unnecessary active keys from users who don’t use them. You can revoke an IAM user’s access by disabling their access keys, which makes the keys inactive. You can also delete the access keys of a user.
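A rotation check can be as simple as comparing each active key's creation date with a maximum age. The sketch below assumes key metadata shaped like the entries IAM returns when listing a user's access keys (`AccessKeyId`, `Status`, `CreateDate`); the 90-day threshold, the helper name, and the sample keys are illustrative assumptions.

```python
from datetime import datetime, timedelta, timezone


def keys_due_for_rotation(keys, max_age_days=90, now=None):
    """Return IDs of active access keys older than max_age_days."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    return [
        key["AccessKeyId"]
        for key in keys
        if key["Status"] == "Active" and key["CreateDate"] < cutoff
    ]


# Hypothetical key metadata; the IDs are documentation-style placeholders.
SAMPLE_KEYS = [
    {"AccessKeyId": "AKIAIOSFODNN7EXAMPLE", "Status": "Active",
     "CreateDate": datetime(2024, 1, 1, tzinfo=timezone.utc)},
    {"AccessKeyId": "AKIAI44QH8DHBEXAMPLE", "Status": "Active",
     "CreateDate": datetime(2024, 5, 1, tzinfo=timezone.utc)},
]

as_of = datetime(2024, 6, 1, tzinfo=timezone.utc)
print(keys_due_for_rotation(SAMPLE_KEYS, now=as_of))  # ['AKIAIOSFODNN7EXAMPLE']
```

Since a user can hold two keys at once, the usual sequence is to create the second key, switch applications to it, deactivate the old key, and delete it once nothing breaks.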

As described in Chapter 2, the IAM access keys are by default stored in the ~/.aws/credentials file on a Linux server. AWS recommends that you not use the root access keys and that you lock these keys.

You are required to sign all your API requests with a digital signature to verify your identity. The text of your request and your secret access key serve as inputs to the hash function used to calculate the digital signature. Your applications must calculate the digital signature, but when you use the AWS SDKs, the signature is calculated for you. If a request doesn’t reach AWS within 15 minutes of the request’s timestamp, AWS turns down the request.
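For readers who want to see what the SDKs do on their behalf, the following sketch shows the keyed-hash steps, assuming AWS Signature Version 4 (the text above does not name the signing version). It derives a signing key from the secret access key and signs a string; building the real "string to sign" from a canonical request is omitted, so the string used here is a placeholder. The `is_within_window` helper models the 15-minute check and is a hypothetical name.

```python
import hashlib
import hmac
from datetime import datetime, timedelta, timezone


def _hmac(key: bytes, msg: str) -> bytes:
    return hmac.new(key, msg.encode("utf-8"), hashlib.sha256).digest()


def derive_signing_key(secret_key, date_stamp, region, service) -> bytes:
    """Derive a Signature Version 4 signing key via chained HMAC-SHA256."""
    k_date = _hmac(("AWS4" + secret_key).encode("utf-8"), date_stamp)
    k_region = _hmac(k_date, region)
    k_service = _hmac(k_region, service)
    return _hmac(k_service, "aws4_request")


def sign(string_to_sign: str, signing_key: bytes) -> str:
    """Return the hex signature that is attached to the request."""
    return hmac.new(signing_key, string_to_sign.encode("utf-8"),
                    hashlib.sha256).hexdigest()


def is_within_window(request_time, received_time,
                     window=timedelta(minutes=15)) -> bool:
    """Model of the timestamp check: stale requests are rejected."""
    return abs(received_time - request_time) <= window


# Placeholder secret from AWS documentation examples; never use a real key here.
key = derive_signing_key("wJalrXUtnFEMI/K7MDENG+bPxRfiCYEXAMPLEKEY",
                         "20240115", "us-east-1", "iam")
print(sign("placeholder string to sign", key))

sent = datetime(2024, 1, 15, 10, 0, tzinfo=timezone.utc)
print(is_within_window(sent, sent + timedelta(minutes=20)))  # False
```

The timestamp window limits how long a captured request could be replayed, which is why clock drift on the client can cause otherwise valid requests to fail.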

AWS also recommends that you not embed the access keys in your code. Using IAM roles is a safe and easy way to manage access key distribution. IAM roles offer temporary credentials that are automatically loaded to the target instances and are automatically rotated several times daily.

Key Pairs

Instead of passwords, EC2 instances use a public/private key pair to sign in via SSH. The public key is embedded in the EC2 instance. You sign in securely with your private key. You can have AWS generate a key pair for you automatically when you launch an instance, or you can generate your own and load it.

In addition to EC2, Amazon CloudFront also uses key pairs. It does so when creating signed URLs of private content, such as when you distribute restricted content that someone paid for.

X.509 Certificates

X.509 certificates contain a public key and additional metadata such as the certificate expiry date and are associated with a private key. These certificates are used to sign SOAP-based requests. They are also used as SSL/TLS certificates for users who use HTTPS for encrypting their data.

To create a request, you use your private key to create a digital signature and include the signature in the request, along with your X.509 certificate. AWS verifies your identity by decrypting the signature with the public key embedded in your certificate.

As with key pairs, you can let AWS create the certificate and private key for you to download, or you can upload your own certificate. If you’re using the X.509 certificates for HTTPS, you can use a tool such as OpenSSL to create a unique private key, which you’ll then use to create a Certificate Signing Request (CSR) to submit to a certificate authority (CA) to get the server certificate. Using the AWS CLI, you can upload the certificate, private key, and certificate chain to IAM.

Individual User Accounts

You use AWS IAM to create and manage individual users in your AWS account. A user can be a person, an application, or a system that works with AWS resources programmatically, from the command line, or through the AWS Management Console.

You use IAM to define permission policies that control what your users can do with your AWS resources. Grant users the minimum permissions they need to do their job.
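As an illustration of granting only the minimum permissions, the sketch below builds a policy document that allows listing and reading a single S3 bucket and nothing else. The function name and bucket name are hypothetical; the point is the shape of a narrowly scoped policy, not a recommended policy for any specific workload.

```python
import json


def read_only_bucket_policy(bucket: str) -> dict:
    """Permission policy allowing read-only access to one S3 bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {   # Listing applies to the bucket itself.
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": f"arn:aws:s3:::{bucket}",
            },
            {   # Reading applies to the objects inside the bucket.
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": f"arn:aws:s3:::{bucket}/*",
            },
        ],
    }


# "example-reports" is a placeholder bucket name.
print(json.dumps(read_only_bucket_policy("example-reports"), indent=2))
```

Anything not listed, such as deleting objects or touching other buckets, stays denied because IAM denies by default.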

Secure HTTP Access Points

AWS recommends that you use HTTPS (which uses the SSL/TLS protocol, which in turn uses public key cryptography to prevent eavesdropping, tampering, and forgery) instead of HTTP for transmitting data. AWS services offer secure customer access points called API endpoints to help you establish HTTPS sessions.

Security Logs

AWS CloudTrail tracks and records all requests for AWS resources you own. The logs reveal the services accessed, actions performed, the time when the actions were performed, and the identity of the individual or service.

CloudTrail captures data about all API calls made to all your resources. It delivers its event logs every 5 minutes, and you can configure it so it stores aggregated log files from multiple regions into an Amazon S3 bucket. You can move the logs from S3 to Glacier for long-term auditing and compliance requirements.
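CloudTrail log files are JSON documents with a top-level `Records` list, so a first pass at analysis needs only the standard library. The sample record below is hand-written for illustration (real records carry many more fields), and `summarize_events` is a hypothetical helper that pulls out the who, what, and when mentioned above.

```python
import json

# Hand-written sample in the CloudTrail log layout; values are placeholders.
SAMPLE_LOG = json.dumps({"Records": [
    {"eventTime": "2024-01-15T10:00:00Z",
     "eventSource": "iam.amazonaws.com",
     "eventName": "CreateUser",
     "sourceIPAddress": "203.0.113.10",
     "userIdentity": {"type": "IAMUser", "userName": "alice"}},
    {"eventTime": "2024-01-15T10:05:00Z",
     "eventSource": "ec2.amazonaws.com",
     "eventName": "TerminateInstances",
     "sourceIPAddress": "203.0.113.10",
     "userIdentity": {"type": "Root"}},
]})


def summarize_events(log_text: str) -> list:
    """Return (time, identity, service, action) for each logged API call."""
    summary = []
    for record in json.loads(log_text)["Records"]:
        identity = record["userIdentity"]
        # Fall back to the identity type when no user name is present.
        who = identity.get("userName", identity.get("type", "unknown"))
        summary.append((record["eventTime"], who,
                        record["eventSource"], record["eventName"]))
    return summary


for event in summarize_events(SAMPLE_LOG):
    print(event)
```

A summary like this makes root-user activity easy to spot, which is usually worth an alert of its own.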

You can upload CloudTrail logs from S3 to your own log management solutions to analyze them and detect unusual patterns. The CloudWatch Logs service tracks systems, applications, and more, from EC2 instances and other sources in near real-time, helping you to detect unauthorized login attempts, for example, by monitoring the web server log files.

AWS Trusted Advisor Security Checks

AWS Trusted Advisor offers a set of best-practice checks to help increase your security and performance. The advisor inspects your AWS environment and offers recommendations to help you close security gaps.

Managing Cryptographic Keys for Encryption

Earlier, you learned how to safeguard your public/private key pairs that help you log into your EC2 instances. You also learned how X.509 certificates help AWS verify the authenticity of a requester: AWS uses the public key in the certificate to verify the digital signature that the requester created with the matching private key.

You use encryption in various services, such as databases. To protect your cryptographic keys, AWS offers two important services or tools: the AWS Key Management Service (KMS) and AWS CloudHSM, a cloud-based hardware security module (HSM) service.

The AWS Key Management Service

AWS KMS is a managed service that helps you create and manage the encryption keys that you use to encrypt your data stored in the AWS cloud. KMS helps you create, import, rotate, disable, and define the usage policies for encryption keys that you use.

KMS uses FIPS 140-2 validated hardware security modules to safeguard the keys. KMS is integrated with most AWS services, which makes it easy for you to encrypt data you store in these services with encryption keys that you fully control. It’s also well integrated with AWS CloudTrail, thus providing you with a record of all encryption key usage, which helps in meeting many regulatory and compliance needs.

Following are the key features of AWS KMS.

Centralized Key Management You can create and manage encryption keys from the AWS Management Console or with the AWS CLI and SDK, which offers you centralized control of your encryption keys. You can have KMS create the master keys or import them from somewhere else. These keys are stored in an encrypted format, and you can have KMS rotate the master keys once a year, without having to re-encrypt the previously encrypted data, since KMS keeps older master keys available.

Easy Encryption You can easily encrypt data through a one-click encryption in the AWS console or use the AWS SDK to incorporate encryption in your application code.

Audit Capabilities AWS CloudTrail can record all uses of a key that you store in KMS in a log file that it can deliver to an S3 bucket.

Scalability and High Availability AWS KMS scales automatically as your encryption needs grow over time. KMS stores multiple copies of the encrypted versions of your encryption keys to provide 99.999999999 percent durability. For high availability of the encryption keys, KMS is deployed in multiple AZs inside an AWS region.

Secure Safeguarding of Encryption Keys Your master keys are stored securely and cannot be exported out of KMS. No one, including AWS employees, can view the KMS service’s plain-text keys, which are never written to disk and are pulled into volatile memory during cryptographic operations. KMS uses a FIPS 140-2 validated hardware security module (HSM) to safeguard the keys.

Compliance AWS KMS has been certified under several compliance standards, including PCI DSS Level 1, ISO 27018, and ISO 9001.

Storing and Managing Encryption Keys

You can use encryption for protecting data in your databases, document signing, transaction processing, and for digital rights management (DRM). Encryption strategies require encryption keys. You can use your own processes for managing encryption keys in the AWS cloud or rely on server-side encryption with AWS key management and storage capabilities.

If you choose to manage encryption keys yourself, AWS recommends that you store keys in a tamper-resistant appliance such as an HSM. If you choose to store the keys on premises in an HSM, you can access the keys over secure links such as IPsec VPNs or AWS Direct Connect.

AWS offers an HSM service, AWS CloudHSM. If you use CloudHSM instead of your own on-premises HSM, you receive dedicated single-tenant access to CloudHSM appliances, which are resources in your Amazon VPC. The appliance has a private IP and runs in a private subnet. You connect to the appliance from EC2 servers via SSL/TLS, with two-way digital certificate authentication and 256-bit SSL encryption.

If you use CloudHSM, try to choose a CloudHSM service in the same region as your EC2 instance to decrease network latency and thus improve application performance.

CloudHSM appliances can securely store and process cryptographic keys for database encryption, Public Key Infrastructure (PKI), authentication and authorization, transaction processing, and so on. They support strong cryptographic algorithms such as AES and RSA.

Although AWS is responsible for managing, monitoring, and maintaining the health of the CloudHSM appliance, only you control your security keys and the operations performed by CloudHSM. A cryptographic domain is a logical and physical security boundary that restricts access to your encryption keys. AWS doesn’t have access to the cryptographic domain. You initialize and manage the cryptographic domain of CloudHSM.

When you start working with CloudHSM, you must set up one or more cryptographic partitions on it. A partition is a logical and physical security boundary that restricts access to your cryptographic keys. AWS can’t see inside the HSM partitions, although it has admin credentials to the appliance itself in order to monitor the appliance’s health and availability. Therefore, AWS can’t perform cryptographic operations with your keys.

CloudHSM also offers both physical and logical tamper detection and response capabilities that erase cryptographic key material and log the events when the HSM detects tampering.

AWS Identity and Access Management

AWS IAM is a web service that helps you secure access to your AWS resources. IAM helps you centrally manage your users, security credentials (passwords and access keys), and permissions policies that determine which AWS services and resources users can access. More precisely, IAM enables you to do the following:

• Create users and groups in your AWS account.

• Assign unique security credentials for users in your AWS account.

• Share AWS resources among your users.

• Control user access to AWS services and resources.

• Control each user’s permissions to perform tasks with AWS resources.

• Allow users in another AWS account to share your AWS resources.

• Create roles and define the users or services that can assume the roles.

IAM enables you to control how AWS products are administered, such as the creation and termination of EC2 instances. IAM controls the administrative tasks that you perform via the console, command line tools, or the AWS SDKs. You can work with IAM through the AWS Management Console, AWS CLI, and AWS SDKs.

It’s important to understand that AWS services such as EC2 and Amazon RDS have their own ways of securing access to their resources. These access methods are separate from IAM.

IAM has two main components:

• Identity Who is authenticated legitimately (signed in)

• Access management Who has the authorization (permissions) to use the AWS resources

How IAM Works

IAM provides the infrastructure that manages authentication and authorization for AWS accounts. IAM uses the following elements to perform its tasks:

• Principals

• Requests

• Authentication

• Authorization

• Actions or operations

• Resources

Principals

A principal is any entity that can act on an AWS resource. Initially, when you create your AWS account, you create the administrative IAM user as your first principal. Later, you can allow users and services to assume a role. You can also enable applications to access your AWS account programmatically. All users and roles (including federated users and applications) are AWS principals.

Figure 3-2 shows how principals perform actions on resources through authorization granted via IAM policies.

Figure 3-2 How principals perform actions on resources in IAM

Requests

Principals send requests via the AWS console, the AWS CLI, or the AWS API. A request contains information about the following:

• The principal (requestor), including the permissions granted to that principal via IAM policies

• The resources on which the user wants to perform the action, such as the name of a DynamoDB table or a tag associated with an Amazon EC2 instance

• The actions or operations that the principal wants to perform on the resources

AWS takes the request information such as principals, resources, actions, and environmental data (such as the IP address) and forms a request context, which it uses to evaluate the request and determine whether it should authorize it.

Authentication vs. Authorization

It’s important that you understand the difference between authentication and authorization. AWS must authenticate and authorize your request before it approves the actions in the request.

Before a principal can access a resource and perform actions on it, AWS must first authenticate the user. You authenticate from the console by signing in with your username and password. You authenticate through the API or CLI by providing your access key and secret key.

In authorization, IAM checks the request context for matching policies so it can allow or deny the request. Policies specify the permissions that are either allowed or denied for principals or resources.

Actions (Operations)

Once IAM authenticates the user and authorizes the request, AWS will approve the action/operation that you specify in your request. The operations that you can perform are defined by a service, so they can vary. For example, you can perform actions such as the following on a user resource:

• Create user

• Delete user

• Update user

Resources

A resource is an object that exists within a service, such as an EC2 instance or an S3 bucket. I discuss resources in more detail in the next section.

Managing the Identity Component of IAM

When you sign up for AWS, the first account that you create is an AWS account, and you use your e-mail address and password as credentials. You can use these credentials to log into the AWS Management Console. You can also create a set of access keys to use when you need to make programmatic calls to AWS via the CLI, the AWS SDKs, or API calls.

IAM enables you to create individual users in your AWS account with their own username/password. The users can log into the console using an account-specific URL. You can also create access keys for users to enable them to make programmatic calls to AWS resources.

You must understand two key things in the context of IAM:

• Principal This AWS entity can perform actions in AWS. A principal doesn’t have to be a specific human user; it can be the AWS account root user, an IAM user, or a role.

• Resource This object exists within a service, such as an EC2 instance or an S3 bucket. An Amazon Resource Name (ARN) uniquely identifies an AWS resource. AWS requires you to use an ARN so that you can unambiguously specify resources across all of AWS, such as when you create an IAM policy or an Amazon RDS tag. Following is the general format of an ARN:

arn:partition:service:region:account-id:resource

• partition The partition in which the resource is located. For standard AWS regions, the partition is aws. For resources that live in other partitions, the partition is aws-partitionname, as in aws-cn, which is the partition for resources located in the China (Beijing) AWS region.

• service Identifies the AWS product. For IAM resources, the service is always iam.

• region The region the resource lives in. For IAM resources, you leave the region blank.

• account-id The AWS account ID, such as 123456789012.

• resource The part of the ARN that identifies a specific resource.
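To make the colon-separated layout concrete, here is a minimal Python sketch that splits an ARN into the fields just listed. The names Arn and parse_arn are hypothetical helpers written for this illustration; they aren't part of any AWS SDK.

```python
from typing import NamedTuple


class Arn(NamedTuple):
    """The five fields that follow the literal 'arn' prefix."""
    partition: str
    service: str
    region: str
    account_id: str
    resource: str


def parse_arn(arn: str) -> Arn:
    """Split an ARN string into its component fields."""
    # maxsplit=5 keeps any colons inside the resource part intact,
    # for example 'job-definition/my-job-definition:1'.
    parts = arn.split(":", 5)
    if len(parts) != 6 or parts[0] != "arn":
        raise ValueError(f"not a well-formed ARN: {arn!r}")
    return Arn(*parts[1:])


user = parse_arn("arn:aws:iam::123456789012:user/Sam")
# The region field is empty for IAM resources.
print(user.service, repr(user.region), user.account_id, user.resource)
```

Because the parser insists on all six colon-separated fields, it also rejects an ARN in which the empty region field has been dropped by mistake.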

When working with AWS IAM, you must understand both the ARN format as well as IAM identifiers. I explain the two in the following sections.

IAM ARN Formats

An ARN can take slightly different formats, depending on the resource, as shown here:

arn:partition:service:region:account-id:resourcetype/resource

arn:partition:service:region:account-id:resourcetype/resource/qualifier

arn:partition:service:region:account-id:resourcetype/resource:qualifier

arn:partition:service:region:account-id:resourcetype:resource

arn:partition:service:region:account-id:resourcetype:resource:qualifier

Following are some examples of ARNs for various services.

AWS Batch

arn:aws:batch:us-east-1:123456789012:job-definition/my-job-definition:1

Amazon DynamoDB

arn:aws:dynamodb:us-east-1:123456789012:table/books_table

Amazon EC2

arn:aws:ec2:us-east-1:123456789012:dedicated-host/h-12345678

An AWS account

arn:aws:iam::123456789012:root

An IAM user in the AWS account

arn:aws:iam::123456789012:user/Sam

An IAM group

arn:aws:iam::123456789012:group/Developers

An IAM role

arn:aws:iam::123456789012:role/S3Access

You can specify all users, groups, or policies in your account by specifying a wildcard for the user, group, or policy portion of the ARN:

arn:aws:iam::123456789012:user/*

arn:aws:iam::123456789012:group/*

arn:aws:iam::123456789012:policy/*

IAM Identifiers

IAM employs different identifiers for users, roles, groups, policies, and server certificates. The three types of identifiers are friendly names and paths, IAM ARNs, and unique IDs.

Friendly Names and Paths When creating users, groups, or policies, or when you’re uploading server certificates, you can specify a friendly name for the entities, such as Sam, Administrators, ProdApp1, ManageCredentialsPermissions, or ProdServerCert.

When you use the AWS command line to create the IAM entities, you can optionally provide a path for an entity. The path can be a single path or a nested multiple path structure, such as a directory structure.

IAM ARNs Although many AWS resources have friendly names such as a user named Sam and a group named Administrators, you can’t use these in permission policies, which require that you specify the ARN format instead when referring to resources such as users and groups.

Unique IDs IAM assigns a unique ID to each user, group, role, policy, instance profile, and server certificate that it creates. The unique ID is a string of letters and numbers, such as the following: AIDAJQABLZS4A3QDU576Q.

You use friendly names or IAM ARNs mostly when working with IAM entities such as users and groups, but the unique ID is helpful when you can’t use friendly names. All IAM users have a unique ID, even if you assign an IAM user a previously used friendly name. In cases such as this, using unique IDs rather than friendly names helps tighten data access.

You can’t get unique IDs for an IAM entity from the IAM console. You can get the IDs via the AWS CLI or an IAM API call. In the AWS CLI, for example, the get-user, get-role, and get-group commands provide the unique IDs for a user, role, and group, respectively.

Managing the Authentication Component of IAM

AWS IAM enables you to manage secure access to your AWS resources and AWS services. The identity portion of AWS IAM deals with the creation and management of AWS users and groups. The access management part of IAM deals with how you use permission to allow and deny users access to your AWS resources.

With IAM, you can easily provide users secure access to your AWS resources. You can manage IAM users and their access by creating users in the AWS identity management system and assigning those users various types of security credentials, such as access keys, passwords, and MFA devices. In addition to helping you manage IAM users, IAM helps you manage access for federated users in your corporate directory (such as Active Directory), without having to create IAM user accounts for them.

You can access AWS services and resources via different types of identities: the AWS account root user, IAM users, and IAM roles, as explained in the following sections.

AWS Account Root Users

The AWS account root user is the identity (with your e-mail address and password as the credentials) set up when you first create an AWS account. The root user has full, unrestricted access to all AWS services and resources in your account. Because you can’t restrict the permissions granted to the root user, the best practice is to use the root user to create your first IAM user and lock up the root user credentials. Even if you’re the only person who works with your AWS account, it’s a best practice to create an IAM user and use that user’s identity (and credentials) when working with AWS.

IAM Users

IAM users are unique identities recognized by AWS services and applications. You create IAM users with specific permissions to access services and resources. For example, you can create an IAM user with permissions to create a hosted zone in Amazon Route 53, the AWS DNS service.

IAM helps you manage access to two broad sets of users: It supports IAM users you create and that are managed in AWS’s IAM system. In addition, it enables you to grant access to your AWS resources to federated users that are managed outside AWS in your corporate directory.

IAM users can sign in to the AWS Management Console and use the AWS CLI or APIs to make programmatic requests to AWS services. Although you can directly attach permission policies to an IAM user, the recommended way to grant permissions is by making the user part of a group that has the required permission policies.

A user doesn’t have to be a person: it can be an individual, system, or an application that requires access to an AWS service. Each user has a unique name and security credentials, such as a password and/or access keys (up to two access keys for use with the CLI or API).

Usernames can be a combination of up to 64 letters, digits, and the characters plus (+), equal (=), comma (,), period (.), at sign (@), underscore (_), and hyphen (-). Names aren’t distinguished based on case. So, for example, sam@mycloud is a valid username and so is sam-mycloud, but not sam#mycloud.
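The naming rule can be expressed as a short check. The following is a sketch rather than an official validator: is_valid_iam_username and names_collide are hypothetical helper names, and the underscore is included in the character set on the assumption that IAM accepts it.

```python
import re

# Letters, digits, and the characters _ + = , . @ -; between 1 and 64 characters.
_IAM_USERNAME = re.compile(r"[A-Za-z0-9_+=,.@-]{1,64}")


def is_valid_iam_username(name: str) -> bool:
    """Return True if the whole name uses only permitted characters and length."""
    return _IAM_USERNAME.fullmatch(name) is not None


def names_collide(first: str, second: str) -> bool:
    """Names aren't distinguished by case, so compare them case-insensitively."""
    return first.lower() == second.lower()


for candidate in ("sam@mycloud", "sam-mycloud", "sam#mycloud"):
    print(candidate, is_valid_iam_username(candidate))
```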

IAM Roles

An IAM role is similar to an IAM user, but unlike a user, a role doesn’t have long-term credentials such as a password or access keys. Instead, when an entity assumes a role, AWS issues temporary security credentials for that role session, which expire after a defined period (one hour by default, up to a maximum that you can set as high as 12 hours for the role). Once the temporary credentials expire, they can’t be reused; the role itself remains available to be assumed again. An IAM role defines a set of permissions for making AWS service requests, and its temporary credentials enable you to delegate access to users or services that need access to your AWS resources. You can create an IAM role with specific permissions that aren’t tied to a specific user or group. Rather, entities such as IAM users, applications, or even AWS services such as EC2 instances assume the roles. For applications on EC2 instances, the credentials are automatically delivered to the instances, so you don’t need to embed them in code, which isn’t safe. They are automatically rotated several times daily and stored securely.

You create a role similar to how you create an IAM user: you assign a name and attach an IAM policy to it. IAM roles can be used to solve several security problems. For example, say that you have 1000 employees in your organization for which you need to provide access to your new AWS cloud resources. The best way to do this is to create roles to authorize various users to access the different AWS resources. Creating roles is a best practice for AWS IAM.

An IAM role enables you to delegate access to trusted entities with defined permissions, without your having to share access keys long-term. Roles are helpful when you don’t want to create regular IAM users with persistent permissions for accessing resources in your AWS account. With an appropriate IAM role, an IAM user can access not only resources in their own AWS account, but also resources in other AWS accounts.

Before an IAM user, application, or service can use a role, you must grant the entity permission to switch to the role through a permissions policy that you attach to the IAM user or user group. Use the DurationSeconds parameter to specify the duration of the role session, with a minimum value of 900 seconds (15 minutes), up to the maximum CLI/API session duration for the role.
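As a small illustration of the DurationSeconds limits, the following sketch range-checks a requested duration before building the arguments for an AssumeRole request. RoleArn, RoleSessionName, and DurationSeconds are AssumeRole request parameters; the helper name assume_role_params and its role_max_session_seconds argument are hypothetical, and you would look up the role’s real maximum yourself.

```python
MIN_SESSION_SECONDS = 900  # 15 minutes, the minimum described above


def assume_role_params(role_arn: str, session_name: str,
                       duration_seconds: int,
                       role_max_session_seconds: int) -> dict:
    """Return keyword arguments for an AssumeRole call after checking the duration."""
    if not MIN_SESSION_SECONDS <= duration_seconds <= role_max_session_seconds:
        raise ValueError(
            f"DurationSeconds must be between {MIN_SESSION_SECONDS} "
            f"and {role_max_session_seconds}, got {duration_seconds}"
        )
    return {
        "RoleArn": role_arn,
        "RoleSessionName": session_name,
        "DurationSeconds": duration_seconds,
    }


params = assume_role_params(
    "arn:aws:iam::123456789012:role/S3Access", "demo-session", 900, 3600
)
print(params)
```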

IAM roles with temporary credentials are useful in the following cases, where you need to provide limited, controlled access.

Federated (Non-AWS) User Access You can assign a role to federated users (or applications) that don’t have an AWS account when they request access through an identity provider such as Active Directory, Lightweight Directory Access Protocol (LDAP), or Kerberos. The temporary AWS credentials are assigned to the roles provided with the identity federation between AWS and non-AWS users in your organization’s identity and access management system. With web identity federation, you don’t need to manage identities of your users. Instead, the users of your apps can sign in via an identity provider (IdP) such as Amazon, Facebook, Google, or any other OpenID Connect (OIDC)–compatible IdP and receive an authentication token, which they exchange for temporary credentials in AWS that map to an IAM role with permissions to use resources in your account. The IdP enables you to manage user identities outside of IAM and grant these external users permissions to your AWS resources.

Create Trust Between Organizations If your company supports Security Assertion Markup Language (SAML) 2.0, you can create trust between your organization (identity provider) and other organizations (service providers).

Allow Externally Authenticated Users to Log In Roles also enable users to sign in to your applications by logging into Amazon, Facebook, or Google. The users can use information from their successful authentication to assume the roles and, thus, the temporary security credentials for accessing your AWS resources. If you’re currently managing identities outside of AWS, you can use IAM IdPs instead of creating IAM users in your account. The best practice here is to use an IAM role that validates the calls to the DynamoDB database with IdPs.

Provide Cross-Account Access You can use roles to provide users with permissions in an AWS account to access your resources in another AWS account owned by your organization, thus ensuring that only temporary credentials are provided on an as-needed basis to the cross-account users. For details, see the section “Granting IAM Users Permissions to Switch to Other Roles” a bit later in this chapter.

Allow Applications to Access AWS Resources Applications running on EC2 instances require security credentials when they make requests to an AWS resource such as S3 buckets. Roles help you grant AWS services permissions to access your AWS resources. Instead of creating individual IAM accounts for each application and storing access keys on the EC2 instances where applications are running, you can use IAM roles as a way of granting temporary credentials for the applications when they make AWS API requests. This is especially useful when dealing with a large number of EC2 instances that are spawned dynamically, through AWS Auto Scaling.

IAM Groups

Use IAM groups to manage permissions that you want to grant to more than one user and to gather users based on their functional roles. Suppose, for example, that there are 50 IAM users in your AWS account. If your company introduces a new policy that changes the access of the IAM users, you don’t need to apply the new policy at the individual user level. You can add the new security policy to the group. Remember that there’s no default group in your AWS account. You create the groups as needed. An IAM user can be a member of more than one IAM group. When a user joins or leaves your organization, you can add or remove the user from the groups.

Granting IAM Users Permissions to Switch to Other Roles

You can grant your IAM users permission to switch to other roles in your AWS account. AWS recommends that you adopt this approach to enforce the principle of least privilege: grant users more advanced or more powerful permissions only for the times they need them to perform specific tasks, such as terminating an EC2 instance. This reduces your security exposure and minimizes or prevents accidental changes to critical environments. Auditors will also appreciate the fact that you granted role permissions only when you needed to.

A user in an AWS account can switch to another role in the same or a different AWS account. When users switch to a different role, their original permissions are suspended, and they’re restricted to just those actions and resources that are permitted by the role they switched to. The user’s original permissions are restored when the user exits the new role.

For example, suppose that you have a development and a production environment, and you create separate AWS accounts for the two environments. Your IAM users in the development account may occasionally need to access resources in the production account. Instead of creating separate identities in both environments for the users that need this type of access, you can enable cross-account access to those users. Here’s how to do this:

1. In the production account, create a role named ModifyApp, define a trust policy for that role, and attach it to the role. The trust policy defines the development account as a Principal, which allows authorized users from the development account to assume the ModifyApp role. Next, you define an IAM permissions policy for this role that specifies the actions that the IAM user is allowed to perform when the user assumes this IAM role. In this case, the permissions policy grants access to the production resources (such as an Amazon S3 bucket in the production environment). The role ARN for an account such as 123456789012 will then look like the following:

arn:aws:iam::123456789012:role/ModifyApp

2. As the AWS SysOps administrator, you must grant the users in the development account permission to switch to the role you’ve created in step 1 by granting the group (say, the Developers group) permission to call the AWS Security Token Service AssumeRole API for the ModifyApp role that you created in step 1. This enables the users in the Developers group to switch to the ModifyApp role in the production account.

3. The users in the Developers group can now request a switch to the ModifyApp role by choosing Switch Role in the AWS console, or they can use the AWS API or AWS CLI to do the same.

4. AWS STS verifies the request with the role’s trust policy and returns temporary credentials to the AWS console or to the application.

5. The temporary credentials returned by AWS STS grant the user access to the AWS resources in the production account. The AWS console uses these credentials on behalf of the user for subsequent console actions, such as accessing an S3 bucket in the production account. Similarly, if the user has switched the role through the AWS API or AWS CLI, the application uses the temporary credentials to update the S3 bucket(s) in the production account.
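The two policy documents behind steps 1 and 2 can be sketched as follows. They are built here as Python dictionaries and printed as JSON. The development account ID is a made-up placeholder, and the documents are a minimal illustration rather than a complete production setup.

```python
import json

DEV_ACCOUNT_ID = "111111111111"   # hypothetical development account ID
PROD_ACCOUNT_ID = "123456789012"  # production account ID from the role ARN example

# Step 1: trust policy attached to the ModifyApp role in the production account.
# It names the development account as the principal that may assume the role.
trust_policy = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Principal": {"AWS": f"arn:aws:iam::{DEV_ACCOUNT_ID}:root"},
        "Action": "sts:AssumeRole",
    }],
}

# Step 2: permissions policy attached to the Developers group in the
# development account, allowing its members to assume ModifyApp.
developers_policy = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Action": "sts:AssumeRole",
        "Resource": f"arn:aws:iam::{PROD_ACCOUNT_ID}:role/ModifyApp",
    }],
}

print(json.dumps(trust_policy, indent=2))
print(json.dumps(developers_policy, indent=2))
```

Both halves are needed: the trust policy says who may assume the role, and the group policy says that those users are permitted to ask.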

Managing IAM Authorization Policies

So far, you’ve learned about the identity part of AWS IAM. The second part of IAM, access management, also known as authorization, enables you to configure what users and other entities can do in your account. Authorization is how you specify permissions that allow or deny users access to AWS resources, as well as specify the actions they can perform in AWS.

You define these permissions with the help of IAM policies. Permissions policies help you control which users can access AWS resources and what actions they can perform on those resources. For example, let’s say that you want your IAM users to be able to access the IAM console only from within the organization, and not from outside. You create an IAM policy with a condition that denies access when the request’s source IP address, which the aws:SourceIp condition key carries, falls outside the organization’s address range.
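One way such a policy could look is sketched here as a Python dictionary, together with a small local check of the condition. The address range 203.0.113.0/24 is a placeholder from the documentation address block, and deny_applies is a hypothetical helper that only imitates the NotIpAddress test; it isn’t how AWS evaluates policies internally.

```python
import ipaddress
import json

ORG_CIDRS = ["203.0.113.0/24"]  # hypothetical corporate address range

deny_outside_org = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Deny",
        "Action": "iam:*",
        "Resource": "*",
        # The Deny takes effect when the caller's address is NOT in these ranges.
        "Condition": {"NotIpAddress": {"aws:SourceIp": ORG_CIDRS}},
    }],
}


def deny_applies(policy: dict, source_ip: str) -> bool:
    """Return True if a Deny statement's NotIpAddress condition matches source_ip."""
    address = ipaddress.ip_address(source_ip)
    for statement in policy["Statement"]:
        ranges = statement["Condition"]["NotIpAddress"]["aws:SourceIp"]
        inside = any(address in ipaddress.ip_network(cidr) for cidr in ranges)
        if statement["Effect"] == "Deny" and not inside:
            return True
    return False


print(json.dumps(deny_outside_org, indent=2))
print(deny_applies(deny_outside_org, "198.51.100.7"))   # address outside the range
print(deny_applies(deny_outside_org, "203.0.113.25"))   # address inside the range
```

Note that a Deny-only policy grants nothing by itself; users still need a separate policy that allows the IAM actions from inside the organization.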

All IAM users start with zero permissions. So, by default, a user can’t do anything in your AWS account until you explicitly attach a policy to that user or add that user to a group with the relevant permissions.

There are two broad types of policies:

• Permissions policies A permissions policy is an object that defines an entity’s or a resource’s permissions. These are mostly stored as JSON documents, which you can attach to an IAM identity or an AWS resource (such as an Amazon S3 bucket) to define their permissions (allow or deny).

• Permissions boundaries Permissions boundaries are policies that limit the maximum amount of permissions that a user or entity (principals) can have.

Earlier in the chapter, you learned about a request context. When a principal seeks to perform an action on an AWS resource such as an EC2 instance, IAM looks in the request context for matching permissions policies to determine whether to allow the request.

Permissions Policies

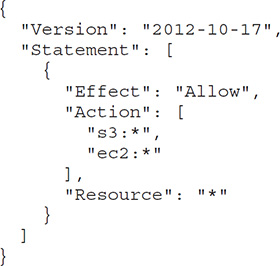

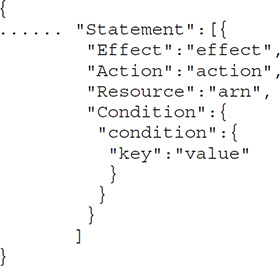

An IAM policy is a JSON-formatted document with one or more statements. A statement has the following structure and syntax:
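As a rough sketch, the general shape of a policy with one statement can be written as a Python dictionary and serialized to JSON. The values in angle brackets are placeholders for illustration only, not real names.

```python
import json

# Skeleton of a policy document containing a single statement.
statement_skeleton = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "<Allow or Deny>",
        "Action": "<service:ApiAction>",
        "Resource": "<resource ARN>",
        # Optional element: operator, then condition key, then value.
        "Condition": {"<condition operator>": {"<condition key>": "<value>"}},
    }],
}

print(json.dumps(statement_skeleton, indent=2))
```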

Following are the key elements of a statement:

• "Effect" This can take the value Allow or Deny. By default, users don’t have permissions to use resources or perform API actions, so all requests are denied. An explicit deny in the "Effect" element overrides any allows, and an explicit allow overrides the default (deny).

• "Action" This is the specific API action for which the policy grants or denies permission.

• "Resource" This is the resource that’s impacted by the action. You specify the resource by using its Amazon Resource Name (ARN).

• "Condition" This is an optional attribute that allows you to do things such as controlling when your policy is in effect. A condition has one or more key-value pairs, and when there are multiple keys in a condition, AWS evaluates them using a logical AND operation. All conditions must be met before permission is granted. For example, you can have ec2:AccepterVpc as the condition key, and "ec2:AccepterVpc":"vpc-arn" as the key-value pair. Here, vpc-arn is the VPC ARN of the accepter VPC in a VPC-peering connection. You can also set a policy condition that requests must originate from specific IP addresses, or that they use SSL, for example.

Following is an example of a permissions policy that permits all requests except those coming from specific IP addresses (NotIpAddress) to terminate EC2 instances using the AWS API or the AWS CLI. Remember that unless a permission is granted explicitly, AWS by default disallows all actions, so the users to whom you attach this policy won’t be able to terminate the EC2 instances via the console.

Here’s what you need to remember about how IAM authorizes requests through permissions policies:

• By default, all requests are denied.

• An explicit allow in a permissions policy overrides the default.

• A permissions boundary (an AWS Organizations service control policy [SCP] or a user or role boundary) or a policy used during AWS Security Token Service (STS) role assumption overrides the allow.

• An explicit deny in a permissions policy overrides any allows.

• If a single policy in a request includes a denied action, IAM denies the entire request.
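The core of these rules (default deny, explicit allow, explicit deny wins) can be sketched in a few lines of Python. This is a deliberately simplified model, not the real evaluation engine: it ignores conditions, permissions boundaries, and session policies, and it uses fnmatchcase as a case-sensitive approximation of policy wildcards. The function name decide and the sample statements are hypothetical.

```python
from fnmatch import fnmatchcase


def _as_list(value):
    """Policy elements may hold a single string or a list of strings."""
    return value if isinstance(value, list) else [value]


def _matches(statement: dict, action: str, resource: str) -> bool:
    """True if the statement's Action and Resource patterns cover the request."""
    return (any(fnmatchcase(action, a) for a in _as_list(statement["Action"]))
            and any(fnmatchcase(resource, r) for r in _as_list(statement["Resource"])))


def decide(statements: list, action: str, resource: str) -> str:
    decision = "Deny"  # by default, all requests are denied
    for statement in statements:
        if not _matches(statement, action, resource):
            continue
        if statement["Effect"] == "Deny":
            return "Deny"  # an explicit deny overrides any allows
        decision = "Allow"  # an explicit allow overrides the default
    return decision


statements = [
    {"Effect": "Allow", "Action": "s3:*", "Resource": "*"},
    {"Effect": "Deny", "Action": "s3:DeleteBucket", "Resource": "*"},
]
print(decide(statements, "s3:GetObject", "arn:aws:s3:::books/ch3"))
print(decide(statements, "s3:DeleteBucket", "arn:aws:s3:::books"))
print(decide(statements, "ec2:RunInstances", "*"))
```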

Types of Permissions Policies

There are four broad types of AWS permissions policies:

• Identity-based policies Attach managed or inline policies to IAM identities such as users, groups, and roles.

• Resource-based policies Attach inline policies to resources, such as an Amazon S3 bucket policy.

• AWS Organizations SCPs Apply permissions boundaries to an organizational unit (OU) or an AWS Organizations organization.

• Access control lists (ACLs) Specify the principals that can access various resources.

Identity-Based Permissions Policies You can attach permissions policies to IAM identities such as users, groups, and roles. There are two broad types of identity-based policies: managed policies and inline policies.

Managed policies are permissions policies that you can attach to users, groups, and roles in an AWS account. Managed policies offer various benefits, such as the following:

• Automatic updates AWS automatically updates AWS-managed policies when necessary, such as adding permissions for a new AWS service, and applies those changes to the principals.

• Reusability You can attach the same managed policy to multiple principals.

• Central change management A change to a managed policy applies to all principals to which you attach that policy.

• Versioning and rollback IAM stores up to five versions of customer-managed policies. You can revert to an older policy version if you need to.

• Permissions management delegation You can allow users to attach and detach permissions policies.

There are two types of managed policies: AWS-managed policies and customer-managed policies.

AWS-managed policies are standalone policies maintained by AWS. A standalone policy has its own ARN:

arn:aws:iam::aws:policy/IAMReadOnlyAccess

In this ARN, IAMReadOnlyAccess is the name of the AWS policy.

AWS recommends that you start with the AWS-managed policies since you don’t have to write the policies yourself. You simply assign the permissions to the user, groups, or roles. You can attach a single AWS-managed policy to principals in different AWS accounts. You typically use these policies for defining permissions for service administrators. You also use them to grant full (AmazonDynamoDBFullAccess) or partial access (AmazonEC2ReadOnlyAccess) to AWS services.

AWS-managed policies are especially useful when you design the policies to match common job functions, such as the PowerUserAccess policy, which grants full access to a user for every AWS service (with limited access to IAM and Organizations).

Here’s an example IAM policy for an IAM group that grants full access to all AWS services (similar to a full administrator access) for the IAM users who are members of this group:
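A policy of this kind can be sketched as follows, again as a Python dictionary printed as JSON. The variable name full_access_policy is hypothetical; the wildcard Action and Resource values are what make the grant cover every action on every resource.

```python
import json

# Allows every action ("*") on every resource ("*").
full_access_policy = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Action": "*",
        "Resource": "*",
    }],
}

print(json.dumps(full_access_policy, indent=2))
```

Because it grants everything, attach a policy like this only to a small, tightly controlled administrators group.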


Customer-managed policies are policies that you create and manage. These allow a more fine-grained control than AWS-managed policies. Customer-managed policies are also standalone policies that you attach to users, groups, and roles. Customer-managed policies enable you to enforce identical permissions to a group of users by attaching the policy to a group (or groups). You can easily edit a policy to apply policy changes to all members of the group(s).

An easy way to ensure that you’ve created a policy correctly is by copying an AWS-managed policy and customizing it. You can then assign the policy to one or more principals. As an AWS administrator, you can use IAM policies to control which users can create, update, and delete customer-managed policies in your AWS account. Following is an example policy that allows a user to create, update, and delete customer-managed policies in your AWS account:
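A sketch of such a policy follows. The action names are IAM API actions for working with managed policies and their versions; the choice to allow them on all resources ("*") is an assumption made to keep the illustration short, and you would normally narrow it.

```python
import json

# Lets a user create, inspect, version, and delete customer-managed policies.
manage_customer_policies = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Action": [
            "iam:CreatePolicy",
            "iam:CreatePolicyVersion",
            "iam:DeletePolicy",
            "iam:DeletePolicyVersion",
            "iam:GetPolicy",
            "iam:GetPolicyVersion",
            "iam:ListPolicies",
            "iam:ListPolicyVersions",
            "iam:SetDefaultPolicyVersion",
        ],
        "Resource": "*",  # assumption: not restricted to specific policy ARNs
    }],
}

print(json.dumps(manage_customer_policies, indent=2))
```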

The second major identity-based permissions policy type, inline policies, are policies that you can create and embed directly into a user, group, or role. Unlike managed policies (both AWS-managed and customer-managed), inline policies aren’t standalone policies. These policies are part of a specific principal, such as a user, group, or role. So when you delete the principal, the embedded policies in the principal entity are deleted automatically. Inline policies are helpful when you want to establish a strict one-to-one relationship between a permissions policy and a principal. Multiple principals can’t share the same inline policy; to reuse a policy across principals, use a managed policy instead.

Resource-Based Permissions Policies Resource-based policies attach inline policies to AWS resources, rather than to IAM identities. For example, you can attach policies to resources such as Amazon S3 buckets, Amazon SQS queues, and so on. With each resource, you specify which principal can access the resource and the actions they can perform on the resource. All resource-based policies are inline, not managed, policies.

AWS is composed of collections of resources such as IAM users and Amazon S3 buckets. User requests specify a resource, a principal, a principal account, and the action the user wants to perform on the resource. This information, along with other necessary request information, is part of the request context.

AWS Organizations SCPs AWS Organizations is a service that groups and manages all the AWS accounts that your organization owns. SCPs are JSON policies that apply permissions boundaries that control the maximum services and the actions that entities in an AWS Organizations organization, or an organizational unit (OU), can access. The permissions apply to the AWS account root user as well.

Access Control Lists Amazon S3 supports ACLs as a permission mechanism. An ACL is a list of permissions attached to an object, such as a file or an Amazon S3 bucket. The ACL specifies which users or processes can access the objects, as well as what operations they’re allowed to perform on the objects.

ACLs are independent of IAM policies and permissions, although you can use both together. ACLs are similar to resource-based permissions policies, but they are the only policy type that doesn’t use the JSON policy document structure.

Permissions Boundaries

Permissions boundaries change the effective permissions for a user or role. While permissions policies (identity, resource-based, ACLs) define permissions for the objects to which you attach them, permissions boundaries help you limit the maximum permissions for an IAM principal or AWS Organizations. Permissions boundaries don’t grant any access on their own, but they can limit permissions provided by permissions policies.

This example shows how you can allow the IAM user to perform actions only in Amazon S3 and Amazon EC2. Even if you create a policy allowing the action iam:CreateUser and grant the policy to this IAM user, the operation fails, since the permissions boundary doesn’t allow operations in IAM.

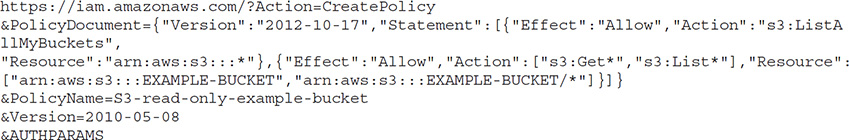
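The interaction between a permissions boundary and an identity-based policy amounts to an intersection: an action goes through only if both allow it. The sketch below models just that idea with action patterns. The identity policy contents and the helper names are hypothetical, and fnmatchcase is only an approximation of policy wildcard matching.

```python
from fnmatch import fnmatchcase

# Permissions boundary: Amazon S3 and Amazon EC2 actions only.
boundary_actions = ["s3:*", "ec2:*"]

# Hypothetical identity-based policy attached to the same user.
identity_actions = ["iam:CreateUser", "s3:GetObject"]


def allowed(action: str, patterns: list) -> bool:
    """True if any pattern in the list covers the action."""
    return any(fnmatchcase(action, pattern) for pattern in patterns)


def effectively_allowed(action: str) -> bool:
    """An action must be allowed by BOTH the identity policy and the boundary."""
    return allowed(action, identity_actions) and allowed(action, boundary_actions)


for action in ("iam:CreateUser", "s3:GetObject", "ec2:RunInstances"):
    print(action, effectively_allowed(action))
```

The last case shows the other half of the rule: the boundary covers ec2:RunInstances, but because no identity-based policy grants it, the boundary alone doesn’t make it usable.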

Creating IAM Policies

You can create policies from the command line or via the console. From the command line or an SDK, you create a managed policy with the IAM CreatePolicy action.
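From Python, the CreatePolicy action is exposed by the boto3 IAM client as create_policy. The sketch below wraps that call in a hypothetical helper, create_managed_policy, which takes the client as an argument so the code itself needs only the standard library. The policy name, the bucket name books-bucket, and the policy contents are made up for illustration.

```python
import json


def create_managed_policy(iam_client, name: str, document: dict) -> str:
    """Create a customer-managed policy and return its ARN."""
    response = iam_client.create_policy(
        PolicyName=name,
        PolicyDocument=json.dumps(document),  # the API expects a JSON string
    )
    return response["Policy"]["Arn"]


# Hypothetical policy: read-only access to one S3 bucket.
read_only_s3 = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Action": ["s3:GetObject", "s3:ListBucket"],
        "Resource": [
            "arn:aws:s3:::books-bucket",
            "arn:aws:s3:::books-bucket/*",
        ],
    }],
}

# Usage, assuming boto3 is installed and credentials are configured:
#   import boto3
#   arn = create_managed_policy(boto3.client("iam"), "S3ReadOnlySketch", read_only_s3)
#   print(arn)
```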

You can also create an IAM policy in the AWS console. Choose from one of the following methods to create a new IAM policy:

• Import Import and customize either an AWS-managed or a customer-managed policy that you’ve created.

• Visual editor Create the JSON document for the policy in the visual editor.

• JSON Create a policy using JSON syntax in the JSON tab. You’ll do this in Exercise 3-2 later in this chapter.

IAM Best Practices

AWS recommends the following best practices for securing your AWS resources.

Restrict and Protect the AWS Account Root User Access Key

Your AWS account root user access key (consisting of an access key ID and secret access key) gives access to all your AWS resources, including billing details. Therefore, you should not use your AWS account root user access key. Instead, use your account e-mail address and password to log into the console and create an IAM user that you can use for all administrative work. In addition, delete your AWS account root user access keys if you don’t need them (or rotate them regularly if you do), and use a strong password to protect the account.

Create Individual IAM Users

Create individual IAM users for working with your AWS account. This enables you to grant a different set of permissions for each IAM user, as needed.

Grant Least Privilege

Granting least privilege means that when you create IAM policies, you limit the privilege grants to the permissions that each user needs to perform a task. For example, if a user needs only read-only access to a resource, don’t grant the user write permissions. When granting permissions on the S3 service, for example, allow only a small set of required users to access Amazon S3 write actions, which enable a user to put objects into an S3 bucket or delete buckets. The Access Advisor tab in the IAM console shows which services each user, group, and role has accessed and when. This information helps you identify and remove unnecessary IAM policies.

Use Roles and Groups to Delegate and Assign Permissions

Instead of granting permissions directly to individual users, try to create groups based on job functions, and grant permissions for each group. Once you add users to a group, the users inherit all the permissions you assigned to the group. Groups help you easily grant and revoke permissions from users as their assignments in an organization change over time.

Using IAM roles rather than user credentials helps secure your AWS services. Applications running on an EC2 instance, for example, can use a role’s credentials to access AWS resources.

Use AWS-Defined Policies to Assign Permissions

Use the managed policies created by AWS to grant permissions. AWS maintains and updates these policies as it introduces new services.

Monitor Activity in Your AWS Account

Regularly review and monitor all your IAM policies to enhance your security. Ensure that the policies follow the principle of least privilege as the policies evolve over time.

AWS Component Security

Some AWS resources have their own security mechanism that operates beyond IAM, which controls access to management tasks that are performed from the console, command line, or AWS SDKs. The following sections summarize the product-specific security for important AWS services.

Amazon EC2 Security

In EC2, you log into the instances with a key pair (Linux) or use a username/password (Windows). You use security groups to control the traffic to the instance.

AWS offers the following recommendations as security best practices for Amazon EC2:

• Use identity federation, IAM roles, and IAM users to manage access to all AWS resources and APIs.

• Establish credentials-management policies and procedures for the creation, revocation, and rotation of AWS access.

• Implement the least permissive rules for your security group.

• Perform regular patches and updates of the OS and applications on your EC2 instances.

AWS offers multiple layers of security for EC2 instances, at the host and guest OS levels, and via firewalls and signed API calls. The following sections summarize the OS, network, and security features of EC2 instances.

Managing OS Access to EC2 Instances

In the shared responsibility model, you keep the OS credentials to your EC2 instances. AWS helps you with the initial access to the OS. When you launch an EC2 instance from a standard Amazon Machine Image (AMI), you must authenticate at the OS level to access and configure the instance. You can use secure remote access protocols such as Secure Shell (SSH) or Windows Remote Desktop Protocol (RDP) to access that instance.

Once you authenticate to your new EC2 instance, you can set up standard OS authentication mechanisms, such as local OS accounts, Microsoft Active Directory, and X.509 certificate authentication.

EC2 Key Pairs for Authentication to EC2 Instances

AWS provides asymmetric EC2 key pairs to enable you to authenticate to EC2 instances. You learned about key pairs in Chapter 2. Public key cryptography uses a public key to encrypt something such as a password, and the recipient uses the private key to decrypt the data.

The big difference between the AWS account and IAM user credentials versus the EC2 key pairs is this: you use your account and IAM credentials to manage access to other AWS services, whereas an EC2 key pair controls access to a specific EC2 instance that you launch in your account.

AWS can generate EC2 key pairs for you. When you launch an instance, AWS presents both the private key and the public key that are part of the key pair. You can generate new key pairs through EC2 anytime you want to.

On a Linux server, the public key is stored in the ~/.ssh/authorized_keys file. You must provide the private key when you connect to the instance. AWS doesn’t save the private key, so if you lose it, you must generate a new key pair. Therefore, it’s a good idea to secure the private key of the Amazon EC2 key pair.

Instead of having AWS generate a key pair for you, you can use a standard tool such as OpenSSH (with the ssh-keygen utility) to generate your own EC2 key pair. You import only the public key of the key pair into AWS and securely store the private key.

Each Linux instance launches with a default Linux system user account, such as ec2-user (for Amazon Linux and Red Hat) or ubuntu (for the Ubuntu operating system). Instead of granting multiple users access to a shared account such as ec2-user, enhance EC2 security by creating a separate user account for each user. Once you create the users, set up key pairs for the users to log into the instance.

Controlling Access to EC2 Instances

You can access all AWS services with your security credentials. In addition, you have unlimited use of your AWS resources, such as EC2 instances. However, you don’t have to share your own security credentials with other users. Instead, use IAM and other EC2 features to control EC2 resource usage by other users, services, and applications. Security groups control access to EC2 instances, and IAM helps you control how users use resources in your AWS account. Following is a summary of how you control access to EC2 instances.

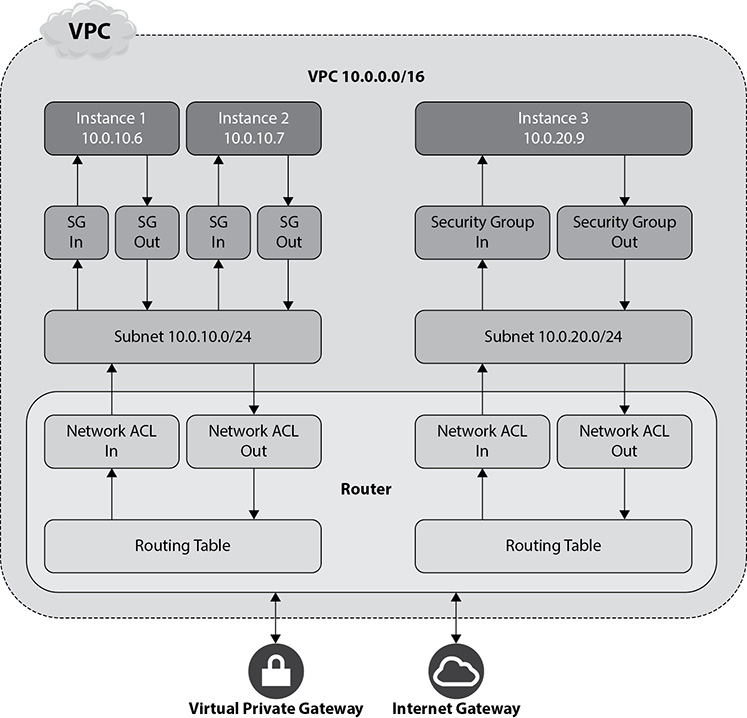

EC2 Security Groups for Linux Instances to Control Network Access

A security group is a virtual firewall that controls traffic into and out of one or more EC2 instances. If you don’t specify a custom security group when you launch an instance, the instance uses the default security group. Your AWS account has a default security group (named default) for the default VPC in each AWS region. The default rules for each default security group are as follows:

• Allow all inbound traffic from all the other instances associated with the default security group.

• Allow all outbound traffic from the instance.

You add rules to a security group to specify which traffic is allowed to reach an instance or instances. Security group rules are allow rules only, so any traffic that no rule permits is denied. AWS evaluates all the rules from a security group in determining whether to allow traffic to go to an EC2 instance.

Security groups control both inbound and outbound traffic at the instance level. You must set up rules in your security group to enable you to connect to a Linux instance from your IP address, via SSH. Here’s how you’d add the rule to the security group using the AWS CLI:

$ aws ec2 authorize-security-group-ingress --group-id security_group_id --protocol tcp --port 22 --cidr cidr_ip_range
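The same rule can be added from an SDK. The sketch below assumes the boto3 SDK, whose EC2 client exposes this action as `authorize_security_group_ingress`. The helper names are hypothetical, and the sketch validates the CIDR range locally before it builds the request.

```python
import ipaddress


def ssh_ingress_rule(cidr):
    """Build the IpPermissions entry that allows SSH (TCP port 22) from cidr."""
    # ip_network raises ValueError for a malformed range or one with host bits set.
    network = ipaddress.ip_network(cidr)
    if network.version != 4:
        raise ValueError("this sketch only handles IPv4 ranges")
    return {
        "IpProtocol": "tcp",
        "FromPort": 22,
        "ToPort": 22,
        "IpRanges": [{"CidrIp": str(network)}],
    }


def allow_ssh(ec2_client, group_id, cidr):
    """Add the SSH rule to a security group.

    ec2_client is assumed to be a boto3 EC2 client, i.e. boto3.client("ec2").
    """
    return ec2_client.authorize_security_group_ingress(
        GroupId=group_id,
        IpPermissions=[ssh_ingress_rule(cidr)],
    )
```

Passing a single address as a /32 range (for example, an address from your own network) keeps the rule as narrow as possible.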

If you create an EC2 instance in a VPC, you must specify a security group created for that VPC. A security group is associated with the network interfaces, and changing the security groups for an instance changes the security groups associated with the primary network interface (eth0).

EC2 Permission Attributes

You can specify which AWS accounts can use your AMIs and EBS snapshots. You configure an AMI’s LaunchPermission attribute to specify the AWS accounts that can access an AMI. The createVolumePermission attribute of an EBS snapshot helps you control which AWS accounts can use a snapshot.
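As a rough illustration, both attributes can be changed through an EC2 client. The sketch below assumes the boto3 SDK and its `modify_image_attribute` and `modify_snapshot_attribute` methods. The function names are hypothetical, and the caller supplies the AMI, snapshot, and account IDs.

```python
def share_ami(ec2_client, image_id, account_id):
    """Add one AWS account to an AMI's launch permissions."""
    return ec2_client.modify_image_attribute(
        ImageId=image_id,
        LaunchPermission={"Add": [{"UserId": account_id}]},
    )


def share_snapshot(ec2_client, snapshot_id, account_id):
    """Allow one AWS account to create volumes from an EBS snapshot."""
    return ec2_client.modify_snapshot_attribute(
        SnapshotId=snapshot_id,
        Attribute="createVolumePermission",
        OperationType="add",
        UserIds=[account_id],
    )
```

In both functions, `ec2_client` is assumed to be `boto3.client("ec2")`.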

IAM and EC2

You can use IAM with EC2 to control which users can perform tasks using specific EC2 API actions, and whether they can work with specific AWS resources.

By default, your IAM users can’t create or modify EC2 resources. They also can’t perform tasks with the EC2 API, the console, or the CLI. To enable your IAM users to work with EC2 resources and perform tasks, you must first create IAM policies that grant the users permission to use resources and perform API actions. You must then attach these policies to the users or groups that need the permissions.

An EC2 IAM policy contains two things:

• It must grant or deny permission to use one or more EC2 actions.

• It must specify the resources that can be used with the action. Because EC2 only partially supports resource-level permissions, for some EC2 API actions, you can’t specify the resources a user is allowed to work with for the action.

You can create an IAM group and attach a policy to that group. For EC2, you can use the following AWS-managed policies:

• PowerUserAccess

• ReadOnlyAccess

• AmazonEC2FullAccess

• AmazonEC2ReadOnlyAccess

Once you create a group with one or more of these AWS-managed policies, you can add users to that group. When you attach the policy to the group or user, it grants or denies permission to perform the tasks that you specify on specific resources in your AWS account.
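The group workflow can be sketched in a few calls. The code below assumes the boto3 SDK's IAM client methods `create_group`, `attach_group_policy`, and `add_user_to_group`. The function name is hypothetical, and the caller supplies the group and user names.

```python
# ARN of the AWS-managed policy named in the list above.
EC2_READ_ONLY_ARN = "arn:aws:iam::aws:policy/AmazonEC2ReadOnlyAccess"


def create_ec2_read_only_group(iam_client, group_name, user_names):
    """Create a group, attach the managed policy, and add existing users to it.

    iam_client is assumed to be a boto3 IAM client, i.e. boto3.client("iam").
    """
    iam_client.create_group(GroupName=group_name)
    iam_client.attach_group_policy(GroupName=group_name, PolicyArn=EC2_READ_ONLY_ARN)
    for user_name in user_names:
        # Each user inherits the group's permissions once added.
        iam_client.add_user_to_group(GroupName=group_name, UserName=user_name)
```

Attaching the policy to the group rather than to each user means a later change of policy is made in one place.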

The following EC2 IAM policy shows how you can control permissions granted to IAM users for EC2 instances. This policy is for requests from the AWS CLI or an AWS SDK. You can also create policies for working in the Amazon EC2 console. This policy enables a user to describe all EC2 instances (each chunk starting with "Effect": is a separate statement), but to start and stop only two specific instances and terminate instances only in a specific region and with a specific resource tag:
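A policy matching that description might look like the following sketch, written here as a Python dictionary and printed as JSON. The account ID, region, instance IDs, and the `purpose=test` tag are all placeholder assumptions chosen for illustration; substitute your own values.

```python
import json

# Placeholder values (assumptions for illustration only).
ACCOUNT_ID = "123456789012"
REGION = "us-east-1"
INSTANCE_IDS = ["i-1234567890abcdef0", "i-0598c7d356eba48d7"]


def instance_arn(instance_id):
    """Build the ARN of an EC2 instance in the placeholder account and region."""
    return f"arn:aws:ec2:{REGION}:{ACCOUNT_ID}:instance/{instance_id}"


ec2_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {   # Statement 1: describe every instance
            "Effect": "Allow",
            "Action": "ec2:DescribeInstances",
            "Resource": "*",
        },
        {   # Statement 2: start and stop only the two named instances
            "Effect": "Allow",
            "Action": ["ec2:StartInstances", "ec2:StopInstances"],
            "Resource": [instance_arn(i) for i in INSTANCE_IDS],
        },
        {   # Statement 3: terminate only tagged instances in one region
            "Effect": "Allow",
            "Action": "ec2:TerminateInstances",
            "Resource": instance_arn("*"),
            "Condition": {"StringEquals": {"ec2:ResourceTag/purpose": "test"}},
        },
    ],
}

print(json.dumps(ec2_policy, indent=2))
```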

This policy shows how to control access to EC2 instances. You can also create policies that control access to volumes, snapshots, reserved instances, and those that restrict access to specific AWS regions.

Securing the Operating System and Applications

In the shared responsibility model, you are responsible for both OS- and application-level security. AWS recommends that you standardize the OS and application builds and maintain the security configurations in a secure build repository. Furthermore, you should build preconfigured AMIs that satisfy security hardening standards that address known security vulnerabilities.

Best practices for OS and application security include the following:

• Rotate credentials such as access keys.

• Run regular privilege checks using the IAM Access Advisor and the access key last used information.

• Disable password-only access and use MFA to gain access to instances.

• Use bastion hosts to enforce control. A bastion host acts as a jump server that lets users hop into your AWS environment to access secure servers running within your private subnets. Ideally, all access to EC2 instances should be through a bastion host.

• Password-protect the .pem file on user servers.

• Restrict access to EC2 instances to a select range of IPs, using security groups (these act as firewalls).

• Use the SSH network protocol to secure logins to your Linux EC2 instances.

• Disable the root API access keys.

• Disable remote root login.

• Use command-line logging.

• Use sudo for privilege escalation.

• Generate your own key pairs, and don’t share them with other customers (or even with AWS).

• Delete unnecessary keys from the authorized keys file on your EC2 instances.

Securing the Hypervisor

EC2 uses a custom version of the Xen hypervisor, which uses paravirtualization for Linux VMs. Under a paravirtualized system, the guest OS has no privileged access to the CPU, since the guest runs in a less privileged CPU mode, called a ring. This demarcation of the guest and hypervisor means strong security for you.

Instance Isolation

AWS uses the Xen hypervisor to isolate the instances running on a physical machine. All network packets pass through the AWS firewall, which is in the hypervisor layer, helping to control traffic to the VM instance’s virtual network interfaces. AWS enforces instance isolation in multiple areas, such as CPU and memory. An instance’s neighbors thus have no more access to the instance than any other host on the Internet, meaning that you can treat them as if they were on separate physical hosts.

Your EC2 instances use virtualized disks and have no access to the raw disk devices. The disk virtualization layer resets all used storage blocks, so a customer’s data isn’t exposed to other users. Memory is returned to the free memory pool for new memory allocation only after the memory is fully scrubbed. The hypervisor scrubs all the memory it reallocates to users.

Who Secures the Host and Guest Operating Systems?

AWS uses specially designed, configured, and hardened servers to serve as administration hosts. It tightly controls access to these servers with MFA and logs and audits the access.

You, the customer, completely control your VM servers. You have root access or administrative control over all accounts and servers and the applications that run on these servers. AWS has no access rights to your VMs.

API Calls

Amazon EC2 API calls, such as those that terminate instances, must be signed with your AWS secret access key. This key could be the AWS account secret access key, or an IAM user’s secret access key.
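To give a sense of what signing involves, the sketch below derives a signing key from a secret access key using chained HMAC-SHA256 operations. It assumes AWS Signature Version 4, which this chapter does not name explicitly, and it omits the construction of the canonical request. The AWS CLI and SDKs perform all of this for you, so the function names here are hypothetical and the secret key is a documentation-style placeholder.

```python
import hashlib
import hmac


def _hmac_sha256(key, message):
    """Return the raw HMAC-SHA256 digest of message under key."""
    return hmac.new(key, message.encode("utf-8"), hashlib.sha256).digest()


def derive_signing_key(secret_access_key, date_stamp, region, service):
    """Derive a request signing key scoped to one date, region, and service."""
    k_date = _hmac_sha256(("AWS4" + secret_access_key).encode("utf-8"), date_stamp)
    k_region = _hmac_sha256(k_date, region)
    k_service = _hmac_sha256(k_region, service)
    return _hmac_sha256(k_service, "aws4_request")


def sign(signing_key, string_to_sign):
    """Return the hex signature that would accompany the request."""
    return hmac.new(signing_key, string_to_sign.encode("utf-8"), hashlib.sha256).hexdigest()


# Placeholder secret key; never hard-code a real one.
key = derive_signing_key("wJalrXUtnFEMI/K7MDENG+bPxRfiCYEXAMPLEKEY",
                         "20240101", "us-east-1", "ec2")
```

Because the date, region, and service are mixed into the key, a derived key is useful only for that scope, and the secret access key itself never travels with the request.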

Mandatory Firewall

The extent of security provided by a firewall depends on the network ports that you open and the duration for which you leave them open. EC2 comes with a mandatory inbound firewall that by default is in the deny-all mode—that is, no inbound traffic is allowed until you open the necessary ports. You can restrict network traffic by protocol, service, port, and IP address.

You can group instances into various classes, so you can assign different rules for the instances. For example, you can open port 80 (HTTP) and, optionally, port 443 (HTTPS) for all your web servers. Instances functioning as database servers that run the Oracle database would have port 1521 open.
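The deny-by-default behavior and the per-class rules can be modeled in a few lines of Python. This is a local illustration only, not an AWS API: the class names, the rule table, and the `10.0.0.0/16` source range for database traffic are hypothetical assumptions.

```python
import ipaddress

# Hypothetical rule table: (protocol, port, allowed source range) per instance class.
RULES_BY_CLASS = {
    "web": [("tcp", 80, "0.0.0.0/0"), ("tcp", 443, "0.0.0.0/0")],
    "database": [("tcp", 1521, "10.0.0.0/16")],  # Oracle listener, internal sources only
}


def is_inbound_allowed(instance_class, protocol, port, source_ip):
    """Return True only if some rule for the class explicitly allows the traffic."""
    source = ipaddress.ip_address(source_ip)
    for rule_protocol, rule_port, cidr in RULES_BY_CLASS.get(instance_class, []):
        if (protocol == rule_protocol
                and port == rule_port
                and source in ipaddress.ip_network(cidr)):
            return True
    return False  # deny-all default: nothing matched, so the traffic is dropped
```

For example, `is_inbound_allowed("web", "tcp", 80, "198.51.100.7")` is allowed, while the same source is refused on port 22 of a web server and on port 1521 of a database server.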