Chapter 2. Logical Asset Security

Terms you’ll need to understand:

Confidentiality

Integrity

Availability

SANs

Information lifecycle management

Privacy impact assessment

Data classification

Data destruction

Data remanence

Techniques you’ll need to master:

Proper methods for destruction of data

Development of documents that can aid in compliance with all local, state, and federal laws

The implementation of encryption and its use for the protection of data

International concerns of data management

Introduction

Asset security addresses the controls needed to protect data throughout its lifecycle. From the point of creation to the end of its life, data must be covered by controls that protect it adequately during each lifecycle phase. This chapter starts by reviewing the basic security principles of confidentiality, integrity, and availability and moves on to data management and governance.

A CISSP must know the importance of data security and how to protect data while it is in transit and at rest (in storage). A CISSP must understand that protection of data is much more important today than it was ten to fifteen years ago because data is no longer found only in paper form. Today, data can be found on local systems, RAID arrays, or even in the cloud. Regardless of where the data is stored, it must have adequate protection and be properly disposed of at the end of its useful life.

Basic Security Principles

Confidentiality, integrity, and availability (CIA), commonly referred to collectively as the CIA triad, are the basic building blocks of any good security program and define its goals for network, asset, information, and information system security. Although the abbreviation CIA might not be as intriguing as the United States government’s spy organization, it is a concept that security professionals must know and understand.

Confidentiality addresses the secrecy and privacy of information and prevents unauthorized persons from viewing sensitive information. A number of physical controls are used in the real world to protect the confidentiality of information, such as locked doors, armed guards, and fences. Administrative controls that can enhance confidentiality include information classification systems, which might, for example, require that sensitive data be encrypted. News reports have detailed several large-scale breaches of confidentiality that resulted from corporations misplacing or losing laptops, data, and even backup media containing customer account, name, and credit information. The simple act of encrypting this data could have prevented or mitigated the damage. Likewise, sending information in an encrypted format denies attackers the opportunity to intercept and sniff cleartext information.

Integrity is the second leg of the security triad. Integrity provides assurance that information is accurate and offers users a higher degree of confidence that the information they are viewing has not been tampered with. Integrity must be protected both at rest (in storage) and in transit. Information in storage can be protected by using access controls and audit controls. Cryptography can enhance this protection through the use of hashing algorithms. Real-life examples of this technology can be seen in programs such as Tripwire and md5sum. Likewise, integrity in transit can be protected primarily through cryptographic mechanisms such as hashing and digital signatures, supported by a public key infrastructure (PKI).
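The hashing idea behind file-integrity tools can be sketched in a few lines of Python. This is a minimal illustration only, not how Tripwire itself is implemented. It assumes SHA-256 in place of MD5, and the function names and sample data are hypothetical.

```python
import hashlib
import hmac


def sha256_digest(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def is_unmodified(data: bytes, known_good_digest: str) -> bool:
    """Compare the current digest with a baseline recorded earlier."""
    # compare_digest performs a constant-time comparison of the two strings.
    return hmac.compare_digest(sha256_digest(data), known_good_digest)


# Record a baseline while the data is known to be good (hypothetical content).
baseline = sha256_digest(b"quarterly-report-v1")

print(is_unmodified(b"quarterly-report-v1", baseline))  # True
print(is_unmodified(b"quarterly-report-v2", baseline))  # False
```

A digest on its own only detects change. If an attacker can replace both the data and the stored baseline, the check passes, which is why baselines are usually kept separately or signed.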

The concept of availability requires that information and systems be available when needed. Although many people think of availability only in electronic terms, availability also applies to physical access. If, at 2 a.m., you need access to backup media stored in a facility that allows access only from 8 a.m. to 5 p.m., you definitely have an availability problem. Availability in the world of electronics can manifest itself in many ways. Access to a backup facility 24 × 7 does little good if there are no updated backups to restore from.

Backups are the simplest way to ensure availability. Backups provide a copy of critical information, should data be destroyed or equipment fail. Failover equipment is another way to ensure availability. Systems such as redundant arrays of independent disks (RAID) and redundant sites (hot, cold, and warm) are two other examples. Disaster recovery is tied closely to availability because it’s all about getting critical systems up and running quickly.

Which link in the security triad is considered most important? That depends. In different organizations with different priorities, one link might take the lead over the other two. For example, your local bank might consider integrity the most important; however, an organization responsible for data processing might see availability as the primary concern, whereas an organization such as the NSA might value confidentiality the most. Finally, you should be comfortable seeing the triad in any form. Even though this book refers to it as CIA, others might refer to it as AIC, or as CAIN (where the “N” stands for nonrepudiation).

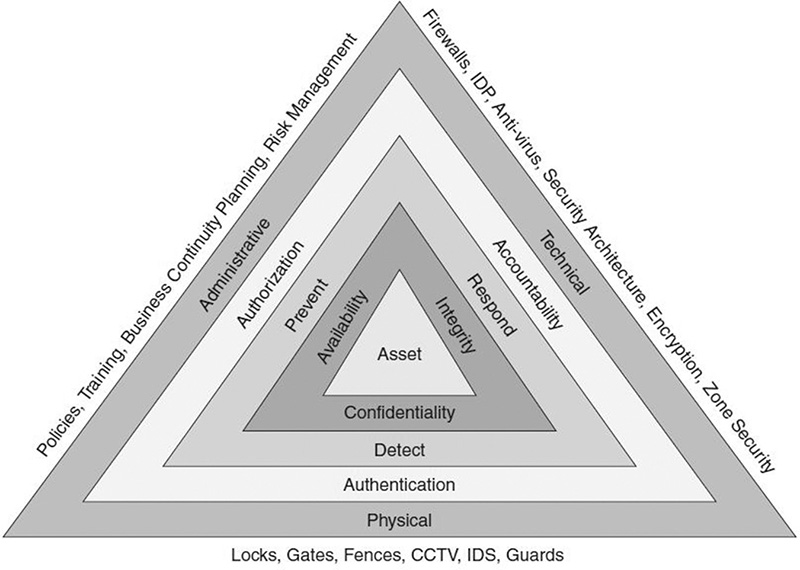

Security management does not stop at CIA. These are but three of the core techniques that apply to asset security. True security requires defense-in-depth. In reality, many techniques are required to protect the assets of an organization; take a moment to look over Figure 2.1.

Data Management: Determine and Maintain Ownership

Data management is not easy and has only become more complex over the last ten to fifteen years. Years ago, people only had to be concerned with paper documents and control might have only meant locking a file cabinet. Today, electronic data might be found on thumb drives, SAN storage arrays, laptop hard drives, mobile devices, or might even be stored in a public cloud.

Data Governance Policy

Generally, you can think of policies as high-level documents developed by management to transmit its guiding strategy and philosophy to employees. A data governance policy is a documented set of specifications intended to ensure that an organization’s digital assets and information are managed and controlled in an approved manner. Data governance programs generally address the following types of data:

Sets of master data

Metadata

Acquired data

Such specifications can involve directives for business process management (BPM) and enterprise risk planning (ERP), as well as security, data quality, and privacy. The goals of data governance are:

To establish appropriate responsibility for the management of data

To improve ease of access to data

To ensure that once data are located, users have enough information about the data to interpret them correctly and consistently

To improve the security of data, including confidentiality, integrity, and availability

Issues to consider include:

Cost—This can include the cost of providing access to the data as well as the cost to protect it.

Ownership—This includes concerns as to who owns the data or who might be a custodian. As an example, you may be the custodian of fifty copies of Microsoft Windows Server 2012, yet the code is owned by Microsoft. This is why users pay for a software license rather than ownership of the software itself, and typically have only the compiled “.exe” file and not the source code.

Liability—This refers to the financial and legal costs an organization would bear should data be lost, stolen, or hacked.

Sensitivity—This includes issues related to the sensitivity of data that should be protected against unwarranted disclosure. Examples include Social Security numbers, dates of birth, and medical history.

Ensuring Law/Legal Compliance—This includes items related to legal compliance. As examples, you must retain tax records for a minimum number of years, while you may retain customers’ information only for the time it takes to process a single transaction.

Process—This includes methods and tools used to transmit or modify the data.

Roles and Responsibility

Data security requires responsibility, so there must be a clear division of roles and responsibilities. This division is a tremendous help when dealing with security issues. Everyone should be subject to the organization’s security policy, including employees, management, consultants, and vendors. Specific roles have unique requirements; some key players and their general areas of responsibility are as follows:

Data Owner—Because senior management is ultimately responsible for data and can be held liable if it is compromised, the data owner is usually a member of senior management, or head of that department. The data owner is responsible for setting the data’s security classification. The data owner can delegate some day-to-day responsibility.

Data Custodian—Usually a member of the IT department. The data custodian does not decide what controls are needed, but does implement controls on behalf of the data owner. Other responsibilities include the day-to-day management of data, controlling access, adding and removing privileges for individual users, and ensuring that the proper controls have been implemented.

IS Security Steering Committee—These are individuals from various levels of management that represent the various departments of the organization. They meet to discuss and make recommendations on security issues.

Senior Management—These individuals are ultimately responsible for the security practices of the organization. Senior management might delegate day-to-day responsibility to another party, but cannot delegate overall responsibility for the security of the organization’s data.

Security Advisory Group—These individuals are responsible for reviewing security issues with the chief security officer and they are also responsible for reviewing security plans and procedures.

Chief Security Officer—The individual responsible for the day-to-day security of the organization and its critical assets.

Users—This is a role that most of us are familiar with because this is the end user in an organization. Users do have responsibilities; they must comply with the requirements laid out in policies and procedures.

Developers—These individuals develop code and applications for the organization. They are responsible for implementing the proper security controls within the programs they develop.

Auditor—This individual is responsible for examining the organization’s security procedures and mechanisms. The auditor’s job is to provide an independent, objective opinion as to the effectiveness of the organization’s security controls. How often this process is performed depends on the industry and its related regulations. As an example, the health care industry in the United States is governed by the Health Insurance Portability and Accountability Act (HIPAA) regulations and requires yearly reviews.

ExamAlert

The CISSP candidate might be tested on the concept that data access does not extend indefinitely. It is not uncommon for an employee to gain more and more access over time while moving to different positions within a company. Such poor management can endanger an organization. When employees are terminated, data access should be withdrawn. If unfriendly termination is known in advance, access should be terminated as soon as possible to reduce the threat of potential damage.
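The accumulation of access described above (often called privilege creep) can be illustrated with a short Python sketch. It is one possible way to model the idea, not a prescribed mechanism. The role names, permission strings, and function names are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical mapping of each role to the access that role actually needs.
ROLE_ENTITLEMENTS = {
    "accounts_payable": {"invoices:read", "invoices:write"},
    "payroll": {"salaries:read"},
}


@dataclass
class Account:
    username: str
    role: str
    permissions: set = field(default_factory=set)
    active: bool = True


def excess_permissions(account: Account) -> set:
    """Return permissions the account holds beyond what its current role needs."""
    return account.permissions - ROLE_ENTITLEMENTS[account.role]


def change_role(account: Account, new_role: str) -> None:
    """Replace, rather than add to, the permissions when a user changes roles."""
    account.role = new_role
    account.permissions = set(ROLE_ENTITLEMENTS[new_role])


def terminate(account: Account) -> None:
    """Withdraw all access when employment ends."""
    account.active = False
    account.permissions.clear()
```

Running `excess_permissions` across all accounts during a periodic access review would flag users who kept rights from earlier positions.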

Data Ownership

All data objects within an organization must have an owner. Objects without a data owner will be left unprotected. The process of assigning a data owner and set of controls to information is known as information lifecycle management (ILM). ILM is the science of creating and using policies for effective information management. ILM includes every phase of a data object from its creation to its end. This applies to any and all information assets.

ILM is focused on fixed content or static data. While data may not stay in a fixed format throughout its lifecycle, there will be times when it is static. As an example, consider this book: after it has been published, it will stay in a fixed format until the next version is released.

For the purposes of business records, there are five phases identified as being part of the lifecycle process. These include the following:

Creation and Receipt

Distribution

Use

Maintenance

Disposition
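The five phases listed above can be represented as an ordered enumeration. The sketch below assumes, purely for illustration, that a record moves through the phases strictly in the order listed; real records may revisit use and maintenance many times. The names `Phase` and `next_phase` are hypothetical.

```python
from enum import Enum
from typing import Optional


class Phase(Enum):
    CREATION_AND_RECEIPT = 1
    DISTRIBUTION = 2
    USE = 3
    MAINTENANCE = 4
    DISPOSITION = 5


def next_phase(phase: Phase) -> Optional[Phase]:
    """Return the phase that follows, or None once the record is disposed of."""
    if phase is Phase.DISPOSITION:
        return None
    # Assumption: phases advance one step at a time in the listed order.
    return Phase(phase.value + 1)
```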

Data owners typically have legal rights over the data and are typically responsible for understanding the intellectual property rights and copyright of their data. Intellectual property rights are agreed on and enforced worldwide by various organizations, including the United Nations Commission on International Trade Law (UNCITRAL), the European Union (EU), and the World Trade Organization (WTO). Intellectual property laws protect trade secrets, trademarks, patents, and copyrights:

Trade secret—A trade secret is a confidential design, practice, or method that must be proprietary or business related. For a trade secret to remain valid, the owner must take precautions to ensure the data remains secure. Examples include encryption, document marking, and physical security.

Trademark—A trademark is a symbol, word, name, sound, or thing that identifies the origin of a product or service in a particular trade. The ISC2 logo is an example of a trademarked logo. The term service mark is sometimes used to distinguish a trademark that applies to a service rather than to a product.

Patent—A patent documents a process or synthesis and grants the owner a legally enforceable right to exclude others from practicing or using the invention’s design for a defined period of time.

Copyright—A copyright is a legal device that provides the creator of a work of authorship the right to control how the work is used and protects that person’s expression on a specific subject. This includes reproduction rights, distribution rights, the right to create derivative works, and the right to public display, and it applies to works such as music.

Data Custodians

Data custodians are responsible for the safe custody, transport, and storage of data and the implementation of business rules. This can include the practice of due care and the implementation of good practices to protect intellectual assets such as patents or trade secrets. Some common responsibilities for a data custodian include the following:

Data owner identification—A data owner must be identified and known for each data set and be formally appointed. Too many times data owners do not know that they are data owners and do not understand the role and its responsibilities. In many organizations the data custodian or IT department by default assumes the role of data owner.

Data controls—Access to data is authorized and managed. Adequate controls must be in place to protect the confidentiality, integrity, and availability of the data. This includes administrative, technical, and physical controls.

Change control—A change control process must be implemented so that change and access can be audited.

End-of-life provisions or disposal—Controls must be in place so that when data is no longer needed or is not accurate it can be destroyed in an approved method.

Data Documentation and Organization

Data that is organized and structured is easier for users to understand and interpret. Data documentation should detail how data was created, what the context is for the data, the format of the data and its contents, and any changes that have occurred to the data. It’s important to document the following:

Data context

Methodology of data collection

Data structure and organization

Validity of data and quality assurance controls

Data manipulations through data analysis from raw data

Data confidentiality, access, and integrity controls

Data Warehousing

A data warehouse is a database that contains data from many other databases. This allows for trend analysis and marketing decisions through data analytics (discussed below). Data warehousing is used to enable a strategic view. Because of the amount of data stored in one location, data warehouses are tempting targets for attackers who can comb through and discover sensitive information.

Data Mining

Data mining is the process of analyzing data to find and understand patterns and relationships about the data (see Figure 2.2). There are many things that must be in place for data mining to occur. These include multiple data sources, access, and warehousing. Data becomes information, information becomes knowledge, and knowledge becomes intelligence through a process called data analytics, which is simply examination of the data. Metadata is best described as being “data about data”. As an example, the number 212 has no meaning by itself. But, when qualifications are added, such as to state the field is an area code, it is then understood the information represents an area code on Manhattan Island. Organizations treasure data and the relationships that can be deduced between individual elements. The relationships discovered can help companies understand their competitors and the usage patterns of their customers, and can result in targeted marketing. As an example, it might not be obvious why the diapers are at the back of the store by the beer case until you learn from data mining that after 10 p.m., more men than women buy diapers, and that they tend to buy beer at the same time.
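One very simple way to surface the kind of relationship described above is to count how often pairs of items appear in the same transaction. The Python sketch below is only an illustration of that idea, not a full data mining technique. The basket data is invented and the function name `pair_counts` is hypothetical.

```python
from collections import Counter
from itertools import combinations

# Hypothetical late-evening transactions; each set is one shopping basket.
baskets = [
    {"diapers", "beer"},
    {"diapers", "beer", "chips"},
    {"milk", "bread"},
    {"diapers", "wipes"},
    {"beer", "chips"},
]


def pair_counts(transactions):
    """Count how many baskets contain each pair of items."""
    counts = Counter()
    for basket in transactions:
        # Sort so each pair is always recorded in the same order.
        for pair in combinations(sorted(basket), 2):
            counts[pair] += 1
    return counts


counts = pair_counts(baskets)
print(counts[("beer", "diapers")])  # 2
```

Pairs with high counts relative to how often each item sells on its own are candidates for closer analysis.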

Knowledge Management

Knowledge management seeks to make intelligent use of all the data in an organization by applying wisdom to it. This is called turning data into intelligence through analytics. This skill attempts to tie together databases, document management, business processes, and information systems. The result is a huge store of data that can be mined to extract knowledge using artificial intelligence techniques. These are the three main approaches to knowledge extraction:

Classification approach—Used to discover patterns; can be used to reduce large databases to only a few individual records or data marts. Think of data marts as small slices of data from the data warehouse.

Probabilistic approach—Used to permit statistical analysis, often in planning and control systems or in applications that involve uncertainty.

Statistical approach—A number-crunching approach; rules are constructed that identify generalized patterns in the data.

Data Standards

Data standards provide consistent meaning to data shared among different information systems, programs, and departments throughout the product’s life cycle. Data standards are part of any good enterprise architecture. The use of data standards makes data much easier to use. As an example, say you get a new 850-lumen flashlight that uses two AA batteries. You don’t need to worry about what brand of battery you buy as all AA batteries are manufactured to the same size and voltage.

Tip

If you would like to see an example of a data standard, check out the Texas Education Agency. It requires all Texas school districts to submit data that conforms to the PEIMS data standard. Learn more at: tea.texas.gov/Reports_and_Data/Data_Submission/PEIMS/PEIMS_Data_Standards/PEIMS_Data_Standards/

Data Lifecycle Control

Data lifecycle control is a policy-based approach to managing the flow of an information system’s data throughout its life cycle from the point of creation to the point at which it is out of date and is destroyed or archived.
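A policy-based approach of this kind can be sketched as a lookup of retention rules followed by a decision. The Python example below is a minimal illustration under stated assumptions: the record types, retention periods, and the choice of which types are archived rather than destroyed are all hypothetical and would come from an organization's own policy and legal requirements.

```python
from datetime import date, timedelta

# Hypothetical retention periods in days, keyed by record type.
RETENTION_DAYS = {"tax_record": 7 * 365, "web_log": 90}

# Hypothetical policy: these types are archived, not destroyed, once out of date.
ARCHIVE_TYPES = {"tax_record"}


def lifecycle_action(record_type: str, created: date, today: date) -> str:
    """Decide what the policy requires for a record of the given age."""
    age = today - created
    if age <= timedelta(days=RETENTION_DAYS[record_type]):
        return "retain"
    return "archive" if record_type in ARCHIVE_TYPES else "destroy"
```

For example, `lifecycle_action("web_log", date(2024, 1, 1), date(2024, 6, 1))` falls outside the assumed 90-day window, so the sketch reports that the record should be destroyed.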

Data Audit

After all the previous tasks discussed in this chapter have been performed, the organization’s security-management practices will need to be evaluated periodically. This is accomplished by means of an audit process. The audit process can be used to verify that each individual’s responsibility is clearly defined. Employees should know their accountability and their assigned duties. Most audits follow a code or set of documentation. As an example, financial audits can be performed using the Committee of Sponsoring Organizations of the Treadway Commission (COSO) framework. IT audits typically follow the Information Systems Audit and Control Association (ISACA) Control Objectives for Information and related Technology (COBIT) framework. COBIT is designed around four domains:

Plan and organize

Acquire and implement

Deliver and support

Monitor and evaluate

Although the CISSP exam will not expect you to understand the inner workings of COBIT, you should understand that it is a framework to help provide governance and assurance. COBIT was designed for performance management and IT management and is considered a system of best practices. COBIT was created by ISACA and the IT Governance Institute (ITGI) in 1992.

Although auditors can use COBIT, it is also useful for IT users and managers designing controls and optimizing processes. It is designed around 34 key controls that address:

Performance concerns

IT control profiling

Awareness

Benchmarking

Audits are the only way to verify that the controls put in place are working, that the policies that were written are being followed, and that the training provided to the employees actually works. To learn more about COBIT, check out www.isaca.org/cobit/. Another set of documents that can be used to benchmark the infrastructure is the family of ISO 27000 standards.

Data Storage and Archiving

Organizations have a never-ending need for increased storage. My first 10-megabyte thumb drive is rather puny by today’s standards. Data storage can include:

Network attached storage (NAS)

Storage area network (SAN)

Cloud

Organizations should fully define their security requirements for data storage before a technology is deployed. For example, NAS devices are small, easy to use, and can be implemented quickly, but physical security is a real concern, as is implementing strong controls over the data. A SAN can be implemented with much greater security than a NAS. Cloud-based storage offers yet another option but also presents concerns such as:

Is it a private or public cloud?

Does it use physical or virtual servers?

How are the servers provisioned and decommissioned?

Is the data encrypted, and if so, what kind of encryption is used?

Where is the data actually stored?

How is the data transferred (data flow)?

Where are the encryption keys kept?

Are there co-tenants?

Keep in mind that storage integration also includes securing virtual environments, services, applications, appliances, and equipment that provide storage.

SAN

The Storage Networking Industry Association (SNIA) defines a SAN as “a data storage system consisting of various storage elements, storage devices, computer systems, and/or appliances, plus all the control software, all communicating in efficient harmony over a network.” A SAN appears to the client OS as a local disk or volume that is available to be formatted and used locally as needed.

Virtual SAN—A virtual SAN (VSAN) is a SAN that offers isolation among devices that are physically connected to the same SAN fabric. A VSAN is sometimes called fabric virtualization. VSANs were developed to support independent virtual fabrics on a single switch. VSANs improve consolidation and simplify management by allowing for more efficient SAN utilization. A VSAN will allow a resource on any individual VSAN to be shared by other users on a different VSAN without merging the SAN fabrics.

Internet Small Computer System Interface (iSCSI)—iSCSI is a SAN standard used for connecting data storage facilities and allowing remote SCSI devices to communicate. Many see it as a replacement for Fibre Channel because it does not require any special infrastructure and can run over existing IP LAN, MAN, or WAN networks.

Fibre Channel over Ethernet (FCoE)—FCoE is another transport protocol that is similar to iSCSI. FCoE can operate at speeds of 10 Gbps and rides directly on top of Ethernet. While it is fast, it has a disadvantage in that it is non-routable. iSCSI is, by contrast, routable because it operates higher up the stack, on top of TCP/IP.

Host Bus Adapter (HBA) Allocation—The host bus adapter is used to connect a host system to an enterprise storage device. HBAs can be allocated by either soft zoning or by persistent binding. Soft zoning is more permissive, whereas persistent binding decreases address space and increases network complexity.

LUN Masking—LUN masking is implemented primarily at the HBA level. It is an authorization scheme that makes logical unit numbers (LUNs) visible to some hosts but not to others. LUN masking implemented at this level is vulnerable to any attack that compromises the local adapter.

Redundancy (Location)—Location redundancy is the idea that content should be accessible from more than one location. An extra measure of redundancy can be provided by means of a replication service so that data is available even if the main storage backup system fails.

Secure Storage Management and Replication—Secure storage management and replication systems are designed to allow an organization to manage and handle all its data in a secure manner with a focus on the confidentiality, integrity, and availability of the data. The replication service allows the data to be duplicated in real time so that additional fault tolerance is achieved.

Multipath Solutions—Enterprise storage multipath solutions reduce the risk of data loss or lack of availability by setting up multiple routes between a server and its drives. The multipath software maintains a listing of all requests, passes them through the best possible path, and reroutes communication if a path fails.

SAN Snapshots—SAN snapshot software is typically sold with SAN solutions and offers a way to bypass typical backup operations. The snapshot software has the ability to temporarily stop writing to physical disk and then make a point-in-time backup copy. Snapshot software is typically fast and makes a copy quickly, regardless of the drive size.

Data De-Duplication (DDP)—Data de-duplication is the process of removing redundant data to improve enterprise storage utilization. Redundant data is not copied. It is replaced with a pointer to the one unique copy of the data. Only one instance of redundant data is retained on the enterprise storage media, such as disk or tape.
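To make the pointer idea behind data de-duplication concrete, here is a minimal Python sketch. It assumes fixed, already-split blocks and uses a SHA-256 digest as the pointer; the function names `deduplicate` and `restore` are illustrative only and do not correspond to any vendor's product.

```python
import hashlib

def deduplicate(blocks):
    """Keep one copy of each unique block and a pointer list for the rest."""
    store = {}      # digest -> the single retained copy of that block
    pointers = []   # one digest per input block, in original order
    for block in blocks:
        digest = hashlib.sha256(block).hexdigest()
        store.setdefault(digest, block)  # redundant data is not copied again
        pointers.append(digest)          # it is replaced with a pointer
    return store, pointers

def restore(store, pointers):
    """Rebuild the original sequence by following each pointer."""
    return [store[digest] for digest in pointers]

# Hypothetical sample data: three of the four blocks are identical.
blocks = [b"payroll-2015", b"logo bytes", b"payroll-2015", b"payroll-2015"]
store, pointers = deduplicate(blocks)
```

In this sample, four blocks are written but only two unique copies are retained, and the original sequence can still be rebuilt from the pointers.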

Data Security, Protection, Sharing, and Dissemination

Data security is the protection of data from unauthorized activity by authorized users and from access by unauthorized users. Although laws differ depending on which country an organization is operating in, organizations must make the protection of personal information in particular a priority. To understand the level of importance, consider that according to the Privacy Rights Clearinghouse (www.privacyrights.org), the total number of records containing sensitive personal information accumulated from security breaches in the United States between January 2005 and December 2015 is 895,531,860.

From a global standpoint, the international standard ISO/IEC 17799 (since renumbered as ISO/IEC 27002) covers data security. ISO 17799 makes clear that all data should have a data owner and a data custodian so that it is clear whose responsibility it is to secure and protect access to that data.

An example of a proprietary international information security standard is the Payment Card Industry Data Security Standard (PCI DSS). PCI DSS sets standards for any entity that handles cardholder information for credit cards, prepaid cards, and POS cards. PCI DSS is organized into six control objectives, each containing one or more requirements:

1. Build and Maintain a Secure Network

Requirement 1: Install and maintain a firewall configuration to protect cardholder data

Requirement 2: Do not use vendor-supplied defaults for system passwords and other security parameters

2. Protect Cardholder Data

Requirement 3: Protect stored cardholder data

Requirement 4: Encrypt transmission of cardholder data across open, public networks

3. Maintain a Vulnerability Management Program

Requirement 5: Use and regularly update anti-virus software

Requirement 6: Develop and maintain secure systems and applications

4. Implement Strong Access Control Measures

Requirement 7: Restrict access to cardholder data by business need-to-know

Requirement 8: Assign a unique ID to each person with computer access

Requirement 9: Restrict physical access to cardholder data

5. Regularly Monitor and Test Networks

Requirement 10: Track and monitor all access to network resources and cardholder data

Requirement 11: Regularly test security systems and processes

6. Maintain an Information Security Policy

Requirement 12: Maintain a policy that addresses information security

Privacy Impact Assessment

Another approach for organizations seeking to improve their protection of personal information is to develop an organization-wide policy based on a privacy impact assessment (PIA). A PIA should determine the risks and effects of collecting, maintaining, and distributing personal information in electronic-based systems. The PIA should be used to evaluate privacy risks and ensure that appropriate privacy controls exist. Existing data controls should be examined to verify that accountability is present and that compliance is built in every time new projects or processes are planned to come online. The PIA must include a review of the following items, because changes to any of them can adversely affect the CIA of privacy records:

Technology—Any time new systems are added or modifications are made, reviews are needed.

Processes—Business processes change, and even though a company might have a good change policy, the change management system might be overlooking personal information privacy.

People—Companies change employees and others with whom they do business. Any time business partners, vendors, or service providers change, the impact of the change on privacy needs to be reexamined.

Privacy controls tend to be overlooked for the same reason many security controls are. Management might have a preconceived idea that security controls will reduce the efficiency or speed of business processes. To overcome these types of barriers, senior management must make a strong commitment to protection of personal information and demonstrate its support. Risk-assessment activities aid in the process by informing stakeholders of the actual costs for the loss of personal information of clients and customers. These costs can include fines, lawsuits, lost customers, a damaged reputation, and even the company going out of business.

Information Handling Requirements

Organizations handle large amounts of information and should have policies and procedures in place that detail how information is to be stored. Think of policies as high-level documents, whereas procedures offer step-by-step instructions. Many organizations are within industries that fall under regulatory standards that detail how, and for how long, information must be retained.

One key concern with storage is to ensure that media is appropriately labeled. Media should be labeled so that the data librarian or individual in charge of media management can identify the media owner, when the content was created, the classification level, and when the content is to be destroyed. Figure 2.3 shows an example of appropriate media labeling.
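The four labeling fields named above (owner, creation date, classification level, and destruction date) can be modeled as a simple record. The following Python sketch is only an illustration; the `MediaLabel` class, its field names, and the sample values are assumptions made for this example and are not taken from any labeling standard.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class MediaLabel:
    owner: str            # who owns the media
    created: date         # when the content was created
    classification: str   # classification level of the content
    destroy_on: date      # when the content is to be destroyed

    def is_due_for_destruction(self, today: date) -> bool:
        """True once the scheduled destruction date has been reached."""
        return today >= self.destroy_on

    def render(self) -> str:
        """Text a media librarian could print onto a physical label."""
        return (f"OWNER: {self.owner} | CREATED: {self.created.isoformat()} | "
                f"CLASS: {self.classification} | "
                f"DESTROY: {self.destroy_on.isoformat()}")

# Hypothetical backup tape owned by the finance department.
label = MediaLabel("Finance", date(2016, 1, 15), "Confidential", date(2023, 1, 15))
```

A media librarian could run `is_due_for_destruction` against an inventory of such labels to find media that has reached the end of its retention period.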

Data Retention and Destruction

All data has a lifetime. Eventually it should be purged, released, or declassified. As an example, consider the JFK Records Act. The JFK Records Act was put in place to eventually declassify all records dealing with the assassination of President John F. Kennedy. The JFK Records Act states that all assassination records must finally be made public by 2017. This is an example of declassification, but sometimes data in an organization will never be released and will need to be destroyed.

If the data is held on hard drives, magnetic media, or thumb drives, the media must be sanitized. Sanitization is the process of clearing all identified content such that no data remnants can be recovered. Some of the methods used for sanitization are as follows:

Drive wiping—This is the act of overwriting all information on the drive. As an example, the seven-pass method commonly attributed to DoD 5220.22-M overwrites the drive with a special digital pattern through seven passes. Drive wiping allows the drive to be reused.

Zeroization—This process is usually associated with cryptographic processes. The term was originally used with mechanical cryptographic devices. These devices would be reset to 0 to prevent anyone from recovering the key. In the electronic realm, zeroization involves overwriting the data with zeros. Zeroization is defined as a standard in ANSI X9.17.

Degaussing—This process is used to permanently destroy the contents of a hard drive or magnetic media. Degaussing works by means of a powerful magnet whose field strength penetrates the media and reverses the polarity of the magnetic particles on the tape or hard disk. After media has been degaussed, it cannot be reused. The only method more secure than degaussing is physical destruction.
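The overwriting methods above (drive wiping and zeroization) can be illustrated at the file level. The Python sketch below is a teaching aid only: the function name `wipe_file` and its parameters are assumptions made for this example, it holds one file-sized buffer in memory, and overwriting a file in place does not guarantee sanitization on SSDs, journaling file systems, or copy-on-write storage. Real sanitization should use approved tools that work on the whole device.

```python
import os

def wipe_file(path, passes=7, zero_final=False):
    """Overwrite a file in place several times without changing its size.

    Each pass writes random bytes; if zero_final is True, the last pass
    writes zeros instead, mimicking zeroization.
    """
    size = os.path.getsize(path)
    with open(path, "r+b") as handle:
        for current in range(passes):
            handle.seek(0)
            is_last = current == passes - 1
            if zero_final and is_last:
                data = bytes(size)        # all zeros
            else:
                data = os.urandom(size)   # random ones and zeros
            handle.write(data)
            handle.flush()
            os.fsync(handle.fileno())     # ask the OS to push the pass to disk
```

Calling `wipe_file("old_keys.bin", passes=7)` on a hypothetical file would overwrite its contents seven times while leaving the file itself in place for reuse.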

Physical media should be protected with a level of control equal to electronic media. These issues are covered in much greater detail in Chapter 3, “Physical Asset Security.”

With the discussion of controls concluded, the next section focuses on auditing and monitoring. It is time to review some of the ways organizations can maintain accountability.

Note

Unless you’re a 1960s car enthusiast like I am, it might have been a while since you have seen a working 8-track player. The point is that technology changes and the requirement to be able to read and access old media is something to consider. Be it 8-tracks, laser discs, Zip drives, or floppy disks, stored media must be readable to be useful.

Data Remanence and Decommissioning

Object reuse is important because of the remaining information that may reside on a hard disk or any other type of media. Even when data has been erased, some information may remain. This is known as data remanence: the residual data that remains after data has been erased. Most media that may be reused will retain some amount of information after it has been erased. If the media is not going to be destroyed outright, best practice is to overwrite it with a minimum of seven passes of random ones and zeros.

When information is deemed too sensitive, assets such as hard drives, media, and other storage devices may not be reused, and the decision may be made to dispose of the asset. Asset disposal must be handled in an approved manner and be part of the system development life cycle. As an example, media that has been used to store sensitive or secret information should be physically destroyed. Before systems or data are decommissioned or disposed of, you must understand any existing legal requirements pertaining to records retention. When archiving information, you must consider the method for retrieving the information.

Classifying Information and Supporting Assets

Organizational information that is proprietary or confidential in nature must be protected. Data classification is a useful way to rank an organization’s informational assets. A well-planned data classification system makes it easy to store and access data. It also makes it easier for users of data to understand its importance. As an example, if an organization has a clean desk policy and mandates that company documents, memos, and electronic media not be left on desks, it can change people’s attitudes about the value of that information. However, whatever data classification system is used, it should be simple enough that all employees can understand it and execute it properly. Two common data classification plans are discussed next.

Data Classification

The two most common data-classification schemes are military and public. Organizations store and process so much electronic information about their customers and employees that it’s critical for them to take appropriate precautions to protect this information. The responsibility for the classification of data lies with the data owner. Both military and private data classification systems accomplish this task by placing information into categories and applying labels to data and clearances to people that access the data.

The first step of this process is to assess the value of the information. When the value is known, it becomes much easier to decide the amount of resources that should be used to protect the data. It would make no sense to spend more on protecting an asset than the asset is worth. By using this system, not all data is treated equally; data that requires more protection gets it, and funds are not wasted protecting data that does not need it.

Each level of classification established should have specific requirements and procedures. The military and commercial data-classification models have predefined labels and levels. When an organization decides which model to use, it can evaluate data placement by using criteria such as the following:

Data value

Data age

Laws pertaining to data

Regulations pertaining to disclosure

Replacement cost

Regardless of which model is used, the following questions will help determine the proper placement of the information:

Who owns the asset or data?

Who controls access rights and privileges?

Who approves access rights and privileges?

What level of access is granted to the asset or data?

Who currently has access to the asset or data?

Classification of data requires several steps:

1. Identify the data custodian.

2. Determine the criteria used for data classification.

3. Task the owner with classifying and labeling the information.

4. Identify any exceptions to the data classification policy.

5. Determine security controls to be applied to protect each category of information.

6. Specify a sunset or end-of-life policy and detail in a step-by-step manner how data will be reclassified or declassified. Reviews specifying retention and end of life should occur at specific periods of time.

7. Develop awareness program.

Military Data Classification

The military data-classification system is mandatory within the U.S. Department of Defense. This system has five levels of classification:

Top Secret—Grave damage if exposed.

Secret—Serious damage if exposed.

Confidential—Disclosure could cause damage.

Sensitive but Unclassified or Restricted—Disclosure should be avoided.

Unclassified or Official—If released, no damage should result.

Each classification represents a level of sensitivity. Sensitivity is the desired degree of secrecy that the information should maintain. If you hold a confidential clearance, it means that you could access unclassified, sensitive, or confidential information for which you have a need to know. Your clearance would not extend to the secret or top secret levels. The concept of need-to-know is similar to the principle of least privilege in that employees should have access only to information that they need to know to complete their assigned duties.
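The relationship between clearance, classification, and need to know can be expressed as a simple check. The Python sketch below is a simplified model for study purposes; the `Level` enumeration and the `can_access` function are names invented for this example, and real systems enforce these rules through formal access control models rather than a single comparison.

```python
from enum import IntEnum

class Level(IntEnum):
    """The five military levels, ordered from least to most sensitive."""
    UNCLASSIFIED = 0
    SENSITIVE_BUT_UNCLASSIFIED = 1
    CONFIDENTIAL = 2
    SECRET = 3
    TOP_SECRET = 4

def can_access(clearance: Level, label: Level, need_to_know: bool) -> bool:
    """Grant access only if the clearance covers the label AND a need to know exists."""
    return need_to_know and clearance >= label

# A user holding a confidential clearance:
user = Level.CONFIDENTIAL
allowed = can_access(user, Level.SENSITIVE_BUT_UNCLASSIFIED, need_to_know=True)
too_high = can_access(user, Level.SECRET, need_to_know=True)
no_need = can_access(user, Level.CONFIDENTIAL, need_to_know=False)
```

Notice that a sufficient clearance alone is not enough: without a need to know, access is still denied.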

Public/Private Data Classification

The public or commercial data classification is built on a four-level model:

Confidential—This is the highest level of sensitivity and disclosure could cause extreme damage to the organization.

Private—This information is for organization use only and its disclosure would damage the organization.

Sensitive—This information requires a greater level of protection to prevent loss of confidentiality.

Public—This information might not need to be disclosed, but if it is, it shouldn’t cause any damage.

Table 2.1 provides details about the military and public/private data-classification models.

Caution

Information has a useful life. Data classification systems need to build in mechanisms to monitor whether information has become obsolete. Obsolete information should be declassified or destroyed.

Asset Management and Governance

The job of asset management and governance is to align the goals of IT to the business functions of the organization, to track assets throughout their lifecycle, and to protect the assets of the organization. Asset management can be defined as any system that inventories, monitors, and maintains items of value. Assets can be both tangible and intangible. Assets can include the following:

Hardware

Software

Employees

Services

Reputation

Documentation

You can think of asset management as a structured approach of deploying, operating, maintaining, upgrading, and disposing of assets cost-effectively. Asset management is required for proper risk assessment. Before you can start to place a value on an asset, you must know what it is and what it is worth. Its value can be assessed either quantitatively or qualitatively. A quantitative approach requires:

1. Estimation of potential losses and determination of single loss expectancy (SLE)

2. Completion of a threat frequency analysis and calculation of the annual rate of occurrence (ARO)

3. Determination of the annual loss expectancy (ALE)
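The three quantitative steps above reduce to two multiplications. The Python sketch below uses the standard formulas SLE = asset value × exposure factor and ALE = SLE × ARO. The exposure factor (the fraction of the asset's value lost in a single incident) is not named in the steps above but is the usual input for SLE, and the dollar figures are invented purely for illustration.

```python
def single_loss_expectancy(asset_value: float, exposure_factor: float) -> float:
    """SLE: expected dollar loss from one occurrence of the threat."""
    return asset_value * exposure_factor

def annual_loss_expectancy(sle: float, aro: float) -> float:
    """ALE: expected dollar loss per year, given the annual rate of occurrence."""
    return sle * aro

# Hypothetical example: a $200,000 asset, 25% of which is lost per incident,
# with the incident expected once every two years (ARO = 0.5).
sle = single_loss_expectancy(200_000, 0.25)   # 50,000
ale = annual_loss_expectancy(sle, 0.5)        # 25,000
```

An ALE of $25,000 suggests that spending much more than that per year on a control for this one threat would be hard to justify.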

A qualitative approach does not place a dollar value on the asset and ranks it as high, medium, or low concern. The downside of performing qualitative evaluations is that you are not working with dollar values, so it is sometimes harder to communicate the results of the assessment to management.

One key asset is software. CISSP candidates should understand common issues related to software licensing. Because software vendors usually license their software rather than sell it, and license it for a number of users on a number of systems, software licenses must be accounted for by the purchasing organization. If users or systems exceed the licensed number, the organization can be held legally liable.

As we move into an age where software is being delivered over the Internet and not with media (CD), software asset management is an important concern.

Software Licensing

Intellectual property rights issues have always been hard to enforce. Just consider the uproar that Napster caused years ago as the courts tried to work out issues of intellectual property and the rights of individuals to share music and files. The software industry has long dealt with this same issue. From the early days of computing, some individuals have been swapping, sharing, and illegally copying computer software. The unauthorized copying and sharing of software is considered software piracy, which is illegal. Many don’t think that the copy of that computer game you gave a friend is hurting anyone. But software piracy is big business, and accumulated loss to the property’s owners is staggering. According to a 2008 report on intellectual property to the United States Congress, in just one raid in June 2007, the FBI recovered more than two billion dollars worth of illegal Microsoft and Symantec software. Internationally, losses from illegal software are estimated to be in excess of $200 billion.

Microsoft and other companies are actively fighting to protect their property rights. Some organizations have formed the Software Publishers Association, which is one of the primary bodies that work to enforce licensing agreements. The Business Software Alliance (BSA) and the Federation Against Software Theft are international groups targeting software piracy. These associations target organizations of all sizes, from small, two-person companies to large multinationals.

Software companies are making clear in their licenses what a user can and cannot do with their software. As an example, Microsoft Windows XP allowed multiple transfers of licenses, whereas Windows 8 and 10 have different transfer rules. Windows 8, for instance, allows only one transfer. The user license states, “The first user of the software may reassign the license to another device one time.” Some vendors even place limits on virtualization. License agreements can be distributed in several different ways, including the following:

Click-wrap license agreements—Found in many software products, these agreements require you to click through and agree to terms to install the software product. These are often called contracts of adhesion; they are “take it or leave it” propositions.

Master license agreements—Used by large companies that develop specific software solutions that specify how the customer can use the product.

Shrink-wrap license agreements—Created when software started to be sold commercially and named for the fact that breaking the shrink wrap signifies your acceptance of the license.

Even with licensing and increased policing activities by organizations such as the BSA, improved technologies make it increasingly easy to pirate software, music, books, and other types of intellectual property. These factors and the need to comply with two World Intellectual Property Organization (WIPO) treaties led to the passage of the 1998 Digital Millennium Copyright Act (DMCA). Here are some salient highlights:

The DMCA makes it a crime to bypass or circumvent antipiracy measures built into commercial software products.

The DMCA outlaws the manufacture, sale, or distribution of any equipment or device that can be used for code-cracking or illegally copying software.

The DMCA provides exemptions from anti-circumvention provisions for libraries and educational institutions under certain circumstances; however, for those not covered by such exceptions, the act provides criminal penalties of up to $1,000,000 and 10 years in prison for repeat offenses.

The DMCA provides Internet service providers exceptions from copyright infringement liability enabling transmission of information across the Internet.

Equipment Lifecycle

The equipment lifecycle runs from the time equipment is requested to the end of its useful life or when it is discarded. The equipment lifecycle typically consists of four phases:

Defining requirements

Acquisition and implementation

Operation and maintenance

Disposal and decommission

While some may think that much of the work is done once equipment has been acquired, that is far from the truth. There will need to be some established support functions. Routine maintenance is one important item. Without routine maintenance equipment will fail, and those costs can be calculated. Items to consider include:

Lost productivity

Delayed or canceled orders

Cost of repair

Cost of rental equipment

Cost of emergency services

Cost to replace equipment or reload data

Cost to pay personnel to maintain the equipment

Technical support is another consideration. The longer a piece of equipment has been in use, the more issues it may have. As an example, if you did a search for exploits for Windows 7 or Windows 10, which do you think would return more results? Most likely Windows 7. This all points to the need for more support the longer the resource has been in use.

Determine Data Security Controls

Any discussion on logical asset security must at some point discuss encryption. While there is certainly more to protecting data than just encrypting it, encryption is one of the primary controls used to protect data. Just consider all the cases of lost hard drives, laptops, and thumb drives that have made the news because they contained data that was not encrypted. In many cases encryption is not just a good idea; it is also mandated by law. CISSP candidates must ensure that corporate policies addressing where and how encryption will be used are well defined and being followed by all employees.

Let’s examine, at a high level, the two areas in which encryption can be used to protect data. These topics will be expanded on in Chapter 6, “The Application and Use of Cryptography.”

Data at Rest

Data at rest is information stored on some form of media that is not traversing a network or residing in temporary memory. Failure to properly protect data at rest can lead to attacks such as the following:

![]() Pod slurping, a technique for illicitly downloading or copying data from a computer. Typically used for data exfiltration.

Pod slurping, a technique for illicitly downloading or copying data from a computer. Typically used for data exfiltration.

![]() Various forms of USB (Universal Serial Bus) malware, including but not limited to USB Switchblade and Hacksaw.

Various forms of USB (Universal Serial Bus) malware, including but not limited to USB Switchblade and Hacksaw.

Other forms of malicious software, including but not limited to viruses, worms, Trojans, and various types of key loggers.

Data at rest can be protected via different technical and physical hardware or software controls that should be defined in your security policy. Some hardware offers the ability to build in encryption. A relatively new hardware security device for computers is called the trusted platform module (TPM) chip. The TPM is a “slow” cryptographic hardware processor which can be used to provide a greater level of security than software encryption. A TPM chip installed on the motherboard of a client computer can also be used for system state authentication. The TPM can also be used to store the encryption keys.

The TPM measures the system and stores the measurements as it traverses through the boot sequence. When queried, the TPM will return these values signed by a local private key. These values can be used to discover the status of a platform. The recognition of the state and validation of these values is referred to as attestation. Phrased differently, attestation allows one to confirm, authenticate, or prove a system to be in a specific state. Data can also be encrypted using these values. This process is referred to as sealing a configuration. In short, the TPM is also a tamper-resistant cryptographic module that can provide a means to report the system configuration to a policy enforcer or “health monitor.”

The TPM also provides the ability to encrypt information to a specific platform configuration by calculating hashed values based on items such as the system’s firmware, configuration details, and core components of the operating system as it boots. These values, along with a secret key stored in the TPM, can be used to encrypt information and only allow it to become usable in a specific machine configuration. This process is called sealing.
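The measure-then-seal idea described above can be illustrated with a short simulation. The sketch below is not a TPM API. It only mimics, in plain Python, how a register can be extended with the hash of each boot component, and how a key tied to the final value changes when any component changes. The function names, the component strings, the device secret, and the choice of SHA-256 and HMAC are all assumptions made for illustration.

```python
import hashlib
import hmac

def extend(register: bytes, component: bytes) -> bytes:
    """Fold one measurement into the register: new = H(old || H(component))."""
    measurement = hashlib.sha256(component).digest()
    return hashlib.sha256(register + measurement).digest()

def measure_boot(components: list[bytes]) -> bytes:
    """Simulate measuring each boot stage in order, starting from all zeros."""
    register = bytes(32)
    for component in components:
        register = extend(register, component)
    return register

def sealing_key(device_secret: bytes, register: bytes) -> bytes:
    """Derive a key bound to both the device secret and the measured state."""
    return hmac.new(device_secret, register, hashlib.sha256).digest()

# Hypothetical boot chains and device secret, for illustration only.
good_boot = [b"firmware v1", b"bootloader v1", b"kernel v1"]
tampered_boot = [b"firmware v1", b"bootloader MODIFIED", b"kernel v1"]
secret = b"secret-that-never-leaves-the-chip"

key_good = sealing_key(secret, measure_boot(good_boot))
key_again = sealing_key(secret, measure_boot(good_boot))
key_tampered = sealing_key(secret, measure_boot(tampered_boot))

print(key_good == key_again)     # same configuration yields the same key
print(key_good == key_tampered)  # a changed boot stage yields a different key
```

Because each value depends on everything measured before it, data encrypted under `key_good` in this toy model would not be recoverable on a machine whose boot chain had been altered, which is the property sealing relies on.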

The TPM is now addressed by ISO/IEC 11889-1:2009. It can also be used with other forms of data and system protection to provide a layered approach, referred to as defense in depth. For example, the TPM can help protect the actual system, while another set of encryption keys can be stored on a user’s common access card or smart card to decrypt and access the data set.

Another potential option that builds on this technology is self-encrypting hard drives (SEDs). These pieces of hardware offer many advantages over non-encrypted drives:

Compliance—SEDs have the ability to offer built-in encryption. This can help organizations meet the legal and regulatory requirements they must adhere to.

Strong security—SEDs make use of strong encryption. The contents of an SED are always encrypted and the encryption keys are themselves encrypted and protected in hardware.

Ease of use—Users only have to authenticate to the drive when the device boots up or when they change passwords/credentials. The encryption is not visible to the user.

Performance—Because the encryption is performed in the drive hardware and is not visible to the user, the system operates at full performance with no impact on user productivity.

Software encryption is another protection mechanism for data at rest. There are many options available, such as EFS, BitLocker, and PGP. Software encryption can be used on specific files, databases, or even entire RAID arrays that store sensitive data. With any software option, two things matter most: the encrypted data must remain inaccessible when access controls, such as usernames and passwords, are not satisfied, and the encryption keys themselves must be protected and updated on a regular basis.

Caution

Encryption keys should be stored separately from the data.

Data in Transit

Any time data is being processed or moved from one location to the next, it requires proper controls. The basic problem is that many protocols and applications send information via clear text. Services such as email, web, and FTP were not designed with security in mind and send information with few security controls and no encryption. Examples of insecure protocols include:

FTP—Clear-text username and password

Telnet—Clear-text username and password

HTTP—Clear text

SMTP—All data is passed in the clear
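The list above lends itself to a simple review check. The following sketch flags clear-text services in a host inventory and suggests an encrypted replacement. The replacements named here (SFTP or FTPS, SSH, HTTPS, and SMTP with STARTTLS) are not part of the list above; they are commonly used alternatives added for illustration, and the function name and sample inventory are hypothetical.

```python
# Clear-text protocols from the list above, each paired with a commonly
# used encrypted alternative (the alternatives are an illustrative addition).
CLEARTEXT_PROTOCOLS = {
    "ftp": "SFTP or FTPS",
    "telnet": "SSH",
    "http": "HTTPS",
    "smtp": "SMTP with STARTTLS",
}

def review_services(services: list[str]) -> dict[str, str]:
    """Return the clear-text services found, each with a suggested replacement."""
    findings = {}
    for name in services:
        key = name.strip().lower()
        if key in CLEARTEXT_PROTOCOLS:
            findings[key] = CLEARTEXT_PROTOCOLS[key]
    return findings

# Hypothetical inventory of services enabled on a host.
enabled = ["SSH", "Telnet", "HTTP", "HTTPS"]
for service, replacement in review_services(enabled).items():
    print(f"{service}: clear text, consider {replacement}")
```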

For data in transit that is not being protected by some form of encryption, there are many dangers, which include the following:

Eavesdropping

Sniffing

Data alteration

Today, many people connect to corporate networks from many different locations. Employees may connect via free Wi-Fi from coffee shops, restaurants, airports, or even hotels.

One way to protect this type of data in transit is by means of a virtual private network (VPN). VPNs are used to connect devices securely across the public Internet. Three protocols are commonly used to provide a tunneling mechanism in support of VPNs: Point-to-Point Tunneling Protocol (PPTP), Layer 2 Tunneling Protocol (L2TP), and IP Security (IPSec). VPN traffic is encrypted only when the chosen protocol, or a protocol paired with it, provides encryption. PPTP relies on Microsoft Point-to-Point Encryption (MPPE), which is native to Microsoft operating systems. L2TP offers no encryption of its own, and as such is usually used with IPSec and its Encapsulating Security Payload (ESP) to protect data in transit. IPSec can provide both tunneling and encryption.

Two types of tunnels can be implemented:

LAN-to-LAN tunnels—Users can tunnel transparently to each other on separate LANs.

Host-to-LAN tunnels—Mobile users can connect to the corporate LAN.

Having an encrypted tunnel is just one part of protecting data in transit. Another important concept is that of authentication. Almost all VPNs use digital certificates as the primary means of authentication. X.509 v3 is the de facto standard; it specifies certificate requirements and their contents. Much like a state driver’s license office, the Certificate Authority (CA) guarantees the authenticity of the certificate and its contents. These certificates act as an approval mechanism.
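As a small illustration of the CA's role, the sketch below uses Python's standard `ssl` module to build a client context that only accepts a peer whose X.509 certificate chains to a trusted CA and matches the expected host name. It shows certificate checking for TLS in general, not the configuration of any particular VPN product, and the function name is hypothetical.

```python
import ssl

def strict_client_context() -> ssl.SSLContext:
    """Build a TLS client context that insists on a CA-verified certificate."""
    context = ssl.create_default_context()    # loads the system's trusted CA certificates
    context.check_hostname = True              # certificate must match the host name
    context.verify_mode = ssl.CERT_REQUIRED    # reject peers without a valid certificate
    return context

context = strict_client_context()
print(context.verify_mode == ssl.CERT_REQUIRED)
print(context.check_hostname)
```

`ssl.create_default_context()` already enables both checks for client use; they are set explicitly here only to make the policy visible.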

Just as with other services, organizations need to develop policies to define who will have access to the VPN and what encryption mechanisms will be used. It’s important that VPN policies be designed to map to the organization’s security policy. As senior management is ultimately responsible, they must approve and support this policy.

Standard email is also very insecure and can be exposed while in transit. Standard email protocols such as SMTP, POP3, and IMAP all send data via clear text. To protect email in transit you must use encryption. Email protection mechanisms include PGP, Secure Multipurpose Internet Mail Extensions (S/MIME), and Privacy Enhanced Mail (PEM). Regardless of what is being protected, periodic auditing of sensitive data should be part of policy and should occur on a regular schedule.

Data in transit will also require a discussion of how the encryption will be applied. Encryption can be performed at different locations with different amounts of protection applied.

Link encryption—The data, including all header information, is encrypted on each link of the communication path. Because the headers are encrypted, each node must decrypt the routing information to forward the traffic and then encrypt it again. Source and destination addresses cannot be seen by someone sniffing traffic.

End-to-end encryption—Generally performed by the end user, so the data can pass through each node without further processing. However, source and destination addresses are passed in clear text, so they can be seen by someone sniffing traffic.
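The difference between the two approaches can be sketched with a toy model of a packet. The XOR routine below is only a placeholder and is not real encryption; the `Packet` type, the function names, the addresses, and the key values are all hypothetical and exist only to show which parts of the traffic a sniffer could read in each case.

```python
from dataclasses import dataclass

def toy_cipher(data: bytes, key: int) -> bytes:
    """XOR every byte with a one-byte key. A placeholder only, NOT real encryption."""
    return bytes(b ^ key for b in data)

@dataclass
class Packet:
    header: bytes   # routing information (source and destination)
    payload: bytes  # user data

def end_to_end(packet: Packet, user_key: int) -> Packet:
    """Only the payload is encrypted; the header stays readable at every hop."""
    return Packet(packet.header, toy_cipher(packet.payload, user_key))

def link_hop(packet: Packet, link_key: int) -> Packet:
    """Header and payload are both encrypted for one link."""
    return Packet(toy_cipher(packet.header, link_key),
                  toy_cipher(packet.payload, link_key))

def node_forward(on_wire: Packet, key_in: int, key_out: int) -> Packet:
    """A node decrypts with the incoming link key, then re-encrypts for the next link."""
    clear = link_hop(on_wire, key_in)   # XOR is its own inverse
    return link_hop(clear, key_out)

original = Packet(b"src=10.0.0.5;dst=10.0.9.9", b"quarterly results")

e2e_on_wire = end_to_end(original, user_key=0x5A)
link_on_wire = link_hop(original, link_key=0x21)
next_link = node_forward(link_on_wire, key_in=0x21, key_out=0x77)

print(e2e_on_wire.header == original.header)    # addresses readable by a sniffer
print(link_on_wire.header == original.header)   # addresses hidden from a sniffer
print(link_hop(next_link, 0x77) == original)    # far end of the next link recovers the packet
```

Note that in the link model the intermediate node briefly holds the packet in the clear, which is why every node on the path has to be trusted.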

Endpoint Security

No review of logical asset security would be complete without a discussion of endpoint security. Endpoint security consists of the controls placed on client or end user systems, such as control of USB and CD/DVD, antivirus, anti-malware, anti-spyware, and so on. The controls placed on a client system are very important.

Removable media—A common vector for malware propagation is via USB thumb drive. Malware such as Stuxnet, Conficker, and Flame all had the capability to spread by thumb drives. Removable drives should be restricted and turned off when possible.

Disk encryption—Encryption software such as EFS, which encrypts individual files and folders, and BitLocker, which encrypts entire volumes, can be used to protect the contents of desktop and laptop hard drives. Also, corporate smartphones and tablets should have encryption enabled.

Application whitelisting—This approach only allows known good applications and software to be installed, updated, and used. Whitelisting techniques can include code signing, digital certificates, known good cryptographic hashes, or trusted full paths and names. Blacklisting, alternatively, blocks known bad software from being downloaded and installed.

Host-based firewalls—Defense in depth dictates that the company should consider not just enterprise firewalls but also host-based firewalls.

Configuration lockdown—Not just anyone should have the ability to make changes to equipment or hardware. Configuration controls can be used to prevent unauthorized changes.

Antivirus—This is the most commonly deployed endpoint security product. While it is a needed component, antivirus has become much less effective over the last several years.
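Of the controls listed above, application whitelisting by known good cryptographic hash is straightforward to sketch. The example below is a minimal illustration, assuming SHA-256 as the hash; the byte strings stand in for the contents of approved executables, and the names `sha256_hex`, `ALLOWLIST`, and `is_allowed` are hypothetical.

```python
import hashlib

def sha256_hex(content: bytes) -> str:
    """Return the SHA-256 digest of a file's bytes as a hex string."""
    return hashlib.sha256(content).hexdigest()

# Hypothetical stand-ins for the bytes of approved executables.
approved_builds = [b"approved editor build 1", b"approved browser build 7"]
ALLOWLIST = frozenset(sha256_hex(build) for build in approved_builds)

def is_allowed(content: bytes, allowlist: frozenset = ALLOWLIST) -> bool:
    """Whitelisting: permit only content whose hash is on the known good list."""
    return sha256_hex(content) in allowlist

print(is_allowed(b"approved editor build 1"))    # on the list
print(is_allowed(b"approved editor build 1!"))   # a single added byte is rejected
```

Anything not on the list is denied by default, which is the reverse of blacklisting, where anything not on the list is permitted.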

One basic starting point is to implement the principle of least privilege. This concept can also be applied to each logical asset: each computer, system component or process should have the least authority necessary to perform its duties.

Baselines

A baseline can be described as a standard of security. Baselines are usually mapped to industry standards. As an example, an organization might specify that all computer systems be certified under Common Criteria at Evaluation Assurance Level (EAL) 3. Another example of baselining can be seen in NIST SP 800-53, which describes a tailored baseline as a starting point for determining the needed level of security, as seen in Figure 2.4.

IT structure analysis (survey)—Includes analysis of technical, operational, and physical aspects of the organization, division, or group.

Assessment of protection needs—Determination of the needed level of protection. This activity can be quantitative or qualitative.