1

THE PROBLEM WITH “HUMAN ERROR”

Disasters in complex systems – such as the destruction of the reactor at Three Mile Island, the explosion onboard Apollo 13, the destruction of the space shuttles Challenger and Columbia, the Bhopal chemical plant disaster, the Herald of Free Enterprise ferry capsizing, the Clapham Junction railroad disaster, the grounding of the tanker Exxon Valdez, crashes of highly computerized aircraft at Bangalore and Strasbourg, the explosion at the Chernobyl reactor, AT&T’s Thomas Street outage, as well as more numerous serious incidents which have only captured localized attention – have left many people perplexed. From a narrow, technology-centered point of view, incidents seem more and more to involve mis-operation of otherwise functional engineered systems. Small problems seem to cascade into major incidents. Systems with minor problems are managed into much more severe incidents. What stands out in these cases is the human element.

“Human error” is cited over and over again as a major contributing factor or “cause” of incidents. Most people accept the term human error as one category of potential causes for unsatisfactory activities or outcomes. Human error as a cause of bad outcomes is used in engineering approaches to the reliability of complex systems (probabilistic risk assessment) and is widely cited as a basic category in incident reporting systems in a variety of industries. For example, surveys of anesthetic incidents in the operating room have attributed between 70 and 75 percent of the incidents surveyed to the human element (Cooper, Newbower, and Kitz, 1984; Chopra, Bovill, Spierdijk, and Koornneef, 1992; Wright, Mackenzie, Buchan, Cairns, and Price, 1991). Similar incident surveys in aviation have attributed over 70 percent of incidents to crew error (Boeing, 1993). In general, incident surveys in a variety of industries attribute high percentages of critical events to the category “human error” (see for example, Hollnagel, 1993). The result is the widespread perception of a “human error problem.”

One aviation organization concluded that to make progress on safety:

We must have a better understanding of the so-called human factors which control performance simply because it is these factors which predominate in accident reports. (Aviation Daily, November 6, 1992)

The typical belief is that the human element is separate from the system in question and hence, that problems reside either in the human side or in the engineered side of the equation. Incidents attributed to human error then become indicators that the human element is unreliable. This view implies that solutions to a “human error problem” reside in changing the people or their role in the system. To cope with this perceived unreliability of people, the implication is that one should reduce or regiment the human role in managing the potentially hazardous system. In general, this is attempted by enforcing standard practices and work rules, by exiling culprits, by policing of practitioners, and by using automation to shift activity away from people. Note that this view assumes that the overall tasks and system remain the same regardless of the extent of automation, that is, the allocation of tasks to people or to machines, and regardless of the pressures managers or regulators place on the practitioners.

For those who accept human error as a potential cause, the answer to the question, what is human error, seems self-evident. Human error is a specific variety of human performance that is so clearly and significantly substandard and flawed when viewed in retrospect that there is no doubt that it should have been viewed by the practitioner as substandard at the time the act was committed or omitted. The judgment that an outcome was due to human error is an attribution that (a) the human performance immediately preceding the incident was unambiguously flawed and (b) the human performance led directly to the negative outcome.

But in practice, things have proved not to be this simple. The label “human error” is very controversial (e.g., Hollnagel, 1993). When precisely does an act or omission constitute an error? How does labeling some act as a human error advance our understanding of why and how complex systems fail? How should we respond to incidents and errors to improve the performance of complex systems? These are not academic or theoretical questions. They are close to the heart of tremendous bureaucratic, professional, and legal conflicts and are tied directly to issues of safety and responsibility. Much hinges on being able to determine how complex systems have failed and on the human contribution to such outcome failures. Even more depends on judgments about what means will prove effective for increasing system reliability, improving human performance, and reducing or eliminating bad outcomes.

Studies in a variety of fields show that the label “human error” is prejudicial and unspecific. It retards rather than advances our understanding of how complex systems fail and the role of human practitioners in both successful and unsuccessful system operations. The investigation of the cognition and behavior of individuals and groups of people, not the attribution of error in itself, points to useful changes for reducing the potential for disaster in large, complex systems. Labeling actions and assessments as “errors” identifies a symptom, not a cause; the symptom should call forth a more in-depth investigation of how a system comprising people, organizations, and technologies both functions and malfunctions (Rasmussen et al., 1987; Reason, 1990; Hollnagel, 1991b; 1993).

Consider this episode, which apparently involved a “human error” and which was the stimulus for one of the earliest developments in the history of experimental psychology. In 1796 the astronomer Maskelyne fired his assistant Kinnebrook because the latter’s observations did not match his own. This incident was one stimulus for another astronomer, Bessel, to examine empirically individual differences in astronomical observations. He found that there were wide differences across observers given the methods of the day and developed what was named the personal equation in an attempt to model and account for these variations (see Boring, 1950). The full history of this episode foreshadows the latest results on human error. The problem was not that one person was the source of errors. Rather, Bessel realized that the standard assumptions about inter-observer accuracies were wrong. The techniques for making observations at this time required a combination of auditory and visual judgments. These judgments were heavily shaped by the tools of the day – pendulum clocks and telescope hairlines – in relation to the demands of the task. In the end, the constructive solution was not dismissing Kinnebrook, but rather searching for better methods for making astronomical observations, re-designing the tools that supported astronomers, and re-designing the tasks to change the demands placed on human judgment.

The results of the recent intense examination of the human contribution to safety and to system failure indicate that the story of “human error” is markedly complex. For example:

- the context in which incidents evolve plays a major role in human performance,
- technology can shape human performance, creating the potential for new forms of error and failure,
- the human performance in question usually involves a set of interacting people,
- the organizational context creates dilemmas and shapes tradeoffs among competing goals,
- the attribution of error after-the-fact is a process of social judgment rather than an objective conclusion.

FIRST AND SECOND STORIES

Sometimes it is more seductive to see human performance as puzzling, as perplexing, rather than as complex. With the rubble of an accident spread before us, we can easily wonder why these people couldn’t see what is obvious to us now. After all, all the data was available! Something must be wrong with them. They need remediation. Perhaps they need disciplinary action to get them to try harder in the future. Overall, you may feel the need to protect yourself, your system, your organization from these erratic and unreliable other people.

Plus, there is a tantalizing opportunity, what seems like an easy way out – computerize, automate, proceduralize even more stringently – in other words, create a world without those unreliable people who aren’t sufficiently careful or motivated. Ask everybody else to try a little harder, and if that still does not work, apply new technology to take over (parts of) their work.

But where you may find yourself puzzled by erratic people, research sees something quite different. First, it finds that success in complex, safety-critical work depends very much on expert human performance, as real systems tend to run degraded, and plans/algorithms tend to be brittle in the face of complicating factors. Second, the research has discovered many common and predictable patterns in human-machine problem-solving and in cooperative work. There are lawful relationships that govern the different aspects of human performance, cognitive work, coordinated activity and, interestingly, our reactions to failure or the possibility of failure. These are not the natural laws of physiology, aerodynamics, or thermodynamics. They are the control laws of cognitive and social sciences (Woods and Hollnagel, 2006).

The misconceptions and controversies on human error in all kinds of industries are rooted in the collision of two mutually exclusive world views. One view is that erratic people degrade an otherwise safe system. In this view, work on safety means protecting the system (us as managers, regulators and consumers) from unreliable people. We could call this the Ptolemaic world view (the sun goes around the earth). The other world view is that people create safety at all levels of the socio-technical system by learning and adapting to information about how we all can contribute to success and failure. This, then, is a Copernican world view (the earth goes around the sun). Progress comes from helping people create safety. This is what the science says: help people cope with complexity to achieve success. This is the basic lesson from what is now called “New Look” research about error that began in the early 1980s, particularly with one of its founders, Jens Rasmussen.

We can blame and punish under whatever labels are in fashion but that will not change the lawful factors that govern human performance nor will it make the sun go round the earth. So are people sinners or are they saints? This is an old theme, but neither view leads anywhere near to improving safety. This book provides a comprehensive treatment of the Copernican world view or the paradigm that people create safety by coping with varying forms of complexity. It provides a set of concepts about how these processes break down at both the sharp end and the blunt end of hazardous systems.

You will need to shift your paradigm if you want to make real progress on safety in high-risk industries. This shift is, not surprisingly, extraordinarily difficult. Windows of opportunity can be created or expanded, but only if all of us are up to the sacrifices involved in building, extending, and deepening the ways we can help people create safety. The paradigm shift is a shift from a first story, where human error is the cause, to a second, deeper story, in which the normal, predictable actions and assessments which we call “human error” after the fact are the product of systematic processes inside of the cognitive, operational and organizational world in which people are embedded (Cook, Woods and Miller, 1998):

The First Story: Stakeholders claim failure is “caused” by unreliable or erratic performance of individuals working at the sharp end. These sharp-end individuals undermine systems which otherwise work as designed. The search for causes stops when they find the human or group closest to the accident who could have acted differently in a way that would have led to a different outcome. These people are seen as the source or cause of the failure – human error. If erratic people are the cause, then the response is to remove these people from practice, to provide remedial training to other practitioners, to urge other practitioners to try harder, and to regiment practice through policies, procedures, and automation.

The Second Story: Researchers, looking more closely at the system in which these practitioners are embedded, reveal the deeper story – a story of multiple contributors that create the conditions that lead to operator errors. Research results reveal systematic factors in both the organization and the technical artifacts that produce the potential for certain kinds of erroneous actions and assessments by people working at the sharp end of the system. In other words, human performance is shaped by systematic factors, and the scientific study of failure is concerned with understanding how these factors lawfully shape the cognition, collaboration, and ultimately the behavior of people in various work domains.

Research has identified some of these systemic regularities that generate conditions ripe with the potential for failure. In particular, we know about how a variety of factors make certain kinds of erroneous actions and assessments predictable (Norman, 1983, 1988). Our ability to predict the timing and number of erroneous actions is very weak, but our ability to foresee vulnerabilities that eventually contribute to failures is often good or very good.

Table 1.1 The contrast between first and second stories of failure

| First stories | Second stories |
|---|---|
| Human error (by any other name: violation, complacency) is seen as a cause of failure | Human error is seen as the effect of systemic vulnerabilities deeper inside the organization |
| Saying what people should have done is a satisfying way to describe failure | Saying what people should have done does not explain why it made sense for them to do what they did |
| Telling people to be more careful will make the problem go away | Only by constantly seeking out its vulnerabilities can organizations enhance safety |

MULTIPLE CONTRIBUTORS AND THE DRIFT TOWARD FAILURE

The research that leads to Second Stories found that doing things safely, in the course of meeting other goals, is always part of operational practice. As people in their different roles are aware of potential paths to failure, they develop failure-sensitive strategies to forestall these possibilities. When failures occurred against this background of usual success, researchers found multiple contributors each necessary but only jointly sufficient and a process of drift toward failure as planned defenses eroded in the face of production pressures and change. These small failures or vulnerabilities are present in the organization or operational system long before an incident is triggered. All complex systems contain such conditions or problems, but only rarely do they combine to create an accident. The research revealed systematic, predictable organizational factors at work, not simply erratic individuals.

This pattern occurs because, in high consequence, complex systems, people recognize the existence of various hazards that threaten to produce accidents. As a result, they develop technical, human, and organizational strategies to forestall these vulnerabilities. For example, people in health care recognize the hazards associated with the need to deliver multiple drugs to multiple patients at unpredictable times in a hospital setting and use computers, labeling methods, patient identification cross-checking, staff training and other methods to defend against misadministration. Accidents in such systems occur when multiple factors together erode, bypass, or break through the multiple defenses creating the trajectory for an accident. While each of these factors is necessary for an accident, they are only jointly sufficient. As a result, there is no single cause for a failure but a dynamic interplay of multiple contributors. The search for a single or root cause retards our ability to understand the interplay of multiple contributors.
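The logic of "each necessary but only jointly sufficient" can be made concrete with a deliberately simplified sketch. The defense names below are borrowed from the medication example above, but the model itself is an illustrative assumption: real systems are not a pure logical AND of independent defenses, and the function names are hypothetical.

```python
from itertools import product

# Illustrative defenses, taken from the medication example in the text.
# Treating them as independent on/off switches is a simplifying assumption.
DEFENSES = (
    "computer_check",
    "labeling",
    "patient_id_cross_check",
    "staff_training",
)


def accident_occurs(breached):
    """An accident happens only if every defense has been breached."""
    return all(breached[name] for name in DEFENSES)


def accident_states():
    """Enumerate every breached/intact combination that yields an accident."""
    states = []
    for combo in product([False, True], repeat=len(DEFENSES)):
        state = dict(zip(DEFENSES, combo))
        if accident_occurs(state):
            states.append(state)
    return states


def restoring_prevents_accident(name):
    """Start from the all-breached state and restore a single defense."""
    state = {d: True for d in DEFENSES}
    state[name] = False
    return not accident_occurs(state)
```

In this toy model only one of the sixteen combinations produces an accident, and restoring any single defense is enough to prevent it. That is why no one breach can be singled out as "the" cause, and why each contributor is also an opportunity for recovery.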

Because there are multiple contributors, there are also multiple opportunities to redirect the trajectory away from disaster. An important path to increased safety is enhanced opportunities for people to recognize that a trajectory is heading closer towards a poor outcome and increased opportunities to recover before negative consequences occur. Factors that create the conditions for erroneous actions or assessments, reduce error tolerance, or block error recovery degrade system performance and reduce the “resilience” of the system.

SHARP AND BLUNT ENDS OF PRACTICE

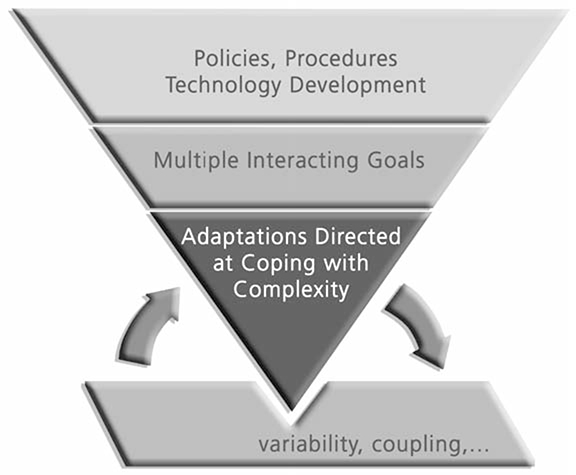

The second basic result in the Second Story is to depict complex systems such as health care, aviation and electrical power generation as having a sharp and a blunt end. At the sharp end, practitioners, such as pilots, spacecraft controllers, and, in medicine, nurses, physicians, technicians, and pharmacists, directly interact with the hazardous process. At the blunt end of the system, regulators, administrators, economic policy makers, and technology suppliers control the resources, constraints, and multiple incentives and demands that sharp-end practitioners must integrate and balance. As researchers have investigated the Second Story over the last 30 years, they realized that the story of both success and failure is (a) how sharp-end practice adapts to cope with the complexities of the processes they monitor, manage and control and (b) how the strategies of the people at the sharp end are shaped by the resources and constraints provided by the blunt end of the system.

Researchers have studied sharp-end practitioners directly through various kinds of investigations of how they handle different evolving situations. In these studies we see how practitioners cope with the hazards that are inherent in the system. System operations are seldom trouble-free. There are many more opportunities for failure than actual accidents. In the vast majority of cases, groups of practitioners are successful in making the system work productively and safely as they pursue goals and match procedures to situations. However, they do much more than routinely follow rules. They also resolve conflicts, anticipate hazards, accommodate variation and change, cope with surprise, work around obstacles, close gaps between plans and real situations, and detect and recover from miscommunications and misassessments.

Figure 1.1 The sharp and blunt ends of a complex system

ADAPTATIONS DIRECTED AT COPING WITH COMPLEXITY

In their effort after success, practitioners are a source of adaptability required to cope with the variation inherent in the field of activity, for example, complicating factors, surprises, and novel events (Rasmussen, 1986). To achieve their goals in their scope of responsibility, people are always anticipating paths toward failure. Doing things safely is always part of operational practice, and we all develop failure sensitive strategies with the following regularities:

1. People’s (groups’ and organizations’) strategies are tuned to anticipate the potential paths toward, and forms of, failure.

2. We are only partially aware of these paths.

3. Since the world is constantly changing, the paths are changing.

4. Our strategies for coping with these potential paths can be weak or mistaken. Updating and calibrating our awareness of the potential paths is essential for avoiding failures.

5. We can all be overconfident that we have anticipated all forms of failure, and overconfident that the strategies deployed are effective. As a result, we mistake success as built in rather than the product of effort.

6. Effort after success in a world of changing pressures and potential hazards is fundamental.

In contrast with the view that practitioners are the main source of unreliability in an otherwise successful system, close examination of how the system works in the face of everyday and exceptional demands shows that people in many roles actually “make safety” through their efforts and expertise. People actively contribute to safety by blocking or recovering from potential accident trajectories when they can carry out these roles successfully. To understand episodes of failure you have to first understand usual success – how people in their various roles learn and adapt to create safety in a world fraught with hazards, tradeoffs, and multiple goals.

RESILIENCE

People adapt to cope with complexity (Rasmussen, 1986; Woods, 1988). This notion is based on Ashby’s Law of Requisite Variety for control of any complex system. The law is most simply stated as only variety can destroy variety (Ashby, 1956). In other words, operational systems must be capable of sufficient variation in their potential range of behavior to match the range of variation that affects the process to be controlled (Hollnagel and Woods, 2005).
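One common way to write the law down is sketched here. The symbols are assumptions introduced for illustration, not notation from this chapter. Let $V_D$ be the number of distinct disturbances that can affect the process, $V_R$ the number of distinct responses available to the controller, and $V_O$ the number of distinct outcomes that result. Under Ashby's assumption that no single response maps two different disturbances to the same outcome, the best a controller can do is

$$
V_O \ge \frac{V_D}{V_R}
\qquad\text{or, in logarithmic form,}\qquad
\log V_O \ge \log V_D - \log V_R .
$$

For instance, if 12 distinct disturbances can occur and the operational system has only 3 distinct responses, at least 4 distinct outcomes remain possible. Holding the process to a single acceptable outcome would require at least 12 distinct responses.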

The human role at the sharp end is to “make up for holes in designers’ work” (Rasmussen, 1981); in other words, to be resilient or robust when events and demands do not fit preconceived and routinized paths (textbook situations). There are many forms of variability and complexity inherent in the processes of transportation, power generation, health care, or space operations. There are goal conflicts, dilemmas, irreducible forms of uncertainty, coupling, escalation, and always a potential for surprise. These forms of complexity can be modeled at different levels of analysis, for example complications that can arise in diagnosing a dynamic system, or in modifying a plan in progress, or those that arise in coordinating multiple actors representing different subgoals and parts of a problem. In the final analysis, the complexities inherent in the processes we manage and the finite resources of all operational systems create tradeoffs (Hollnagel, 2009).

Safety research seeks to identify factors that enhance or undermine practitioners’ ability to adapt successfully. In other words, research about safety and failure is about how expertise, in a broad sense distributed over the personnel and artifacts that make up the operational system, is developed and brought to bear to handle the variation and demands of the field of activity.

BLUNT END

When we look at the factors that degrade or enhance the ability of sharp-end practice to adapt to cope with complexity, we find the marks of organizational factors. Organizations that manage potentially hazardous technical operations remarkably successfully (or high reliability organizations) have surprising characteristics (Rochlin, 1999). Success was not related to how these organizations avoided risks or reduced errors, but rather how these high reliability organizations created safety by anticipating and planning for unexpected events and future surprises. These organizations did not take past success as a reason for confidence. Instead they continued to invest in anticipating the changing potential for failure because of the deeply held understanding that their knowledge base was fragile in the face of the hazards inherent in their work and the changes omnipresent in their environment.

Safety for these organizations was not a commodity, but a value that required continuing reinforcement and investment. The learning activities at the heart of this process depended on open flow of information about the changing face of the potential for failure. The high reliability organizations valued such information flow, used multiple methods to generate this information, and then used this information to guide constructive changes without waiting for accidents to occur. The human role at the blunt end then is to appreciate the changing match of sharp-end practice and demands, anticipating the changing potential paths to failure – assessing and supporting resilience or robustness.

BREAKDOWNS IN ADAPTATION

The theme that leaps out at the heart of these results is that failure represents breakdowns in adaptations directed at coping with complexity (see Woods and Branlat, in press for new results on how adaptive systems fail). Success relates to organizations, groups and individuals who are skillful at recognizing the need to adapt in a changing, variable world and in developing ways to adapt plans to meet these changing conditions despite the risk of negative side effects.

Studies continue to find two basic forms of breakdowns in adaptation (Woods, O’Brien and Hanes, 1987):

- Under-adaptation where rote rule following persisted in the face of events that disrupted ongoing plans and routines.
- Over-adaptation where adaptation to unanticipated conditions was attempted without the complete knowledge or guidance needed to manage resources successfully to meet recovery goals.

In these studies, either local actors failed to adapt plans and procedures to local conditions, often because they failed to understand that the plans might not fit actual circumstances, or they adapted plans and procedures without considering the larger goals and constraints in the situation. In the latter case, the breakdowns often involved missing the side effects of the changes made during replanning.

SIDE EFFECTS OF CHANGE

Systems exist in a changing world. The environment, organization, economics, capabilities, technology, and regulatory context all change over time. This backdrop of continuous systemic change ensures that hazards and how they are managed are constantly changing. Progress on safety concerns anticipating how these kinds of changes will create new vulnerabilities and paths to failure even as they provide benefits on other scores.

The general lesson is that as capabilities, tools, organizations and economics change, vulnerabilities to failure change as well – some decay, new forms appear. The state of safety in any system is always dynamic, and stakeholder beliefs about safety and hazard also change.

Another reason to focus on change is that systems usually are under severe resource and performance pressures from stakeholders (pressures to become faster, better, and cheaper all at the same time). First, change under these circumstances tends to increase coupling, that is, the interconnections between parts and activities, in order to achieve greater efficiency and productivity. However, research has found that increasing coupling also increases operational complexity and increases the difficulty of the problems practitioners can face. Second, when change is undertaken to improve systems under pressure, the benefits of change may be consumed in the form of increased productivity and efficiency and not in the form of a more resilient, robust and therefore safer system.

It is the complexity of operations that contributes to human performance problems, incidents, and failures. This means that changes, however well-intended, that increase or create new forms of complexity will produce new forms of failure (in addition to other effects). New capabilities and improvements become a new baseline of comparison for potential or actual paths to failure. The public doesn’t see the general course of improvement; they see dreadful failure against a background normalized by usual success.

Future success depends on the ability to anticipate and assess how unintended effects of economic, organizational and technological change can produce new systemic vulnerabilities and paths to failure. Learning about the impact of change leads to adaptations to forestall new potential paths to and forms of failure.

COMPLEXITY IS THE OPPONENT

First stories place us in a search to identify a culprit. The enemy becomes other people, those who we decide after-the-fact are not as well intentioned or careful as we are. On the other hand, stories of how people succeed and sometimes fail in their effort after success reveal different forms of complexity as the mischief makers. Yet as we pursue multiple interacting goals in an environment of performance demands and resource pressures these complexities intensify.

Thus, the enemy of safety is complexity. Progress is learning how to tame the complexity that arises from achieving higher levels of capability in the face of resource pressures (Woods, Patterson and Cook, 2006; Woods, 2005). This directs us to look at sources of complexity and changing forms of complexity and leads to the recognition that tradeoffs are at the core of safety (Hollnagel, 2009). Ultimately, Second Stories capture these complexities and lead us to the strategies to tame them. Thus, they are part of the feedback and monitoring process for learning and adapting to the changing pattern of vulnerabilities.

REACTIONS TO FAILURE: THE HINDSIGHT BIAS

The First Story is ultimately barren. The Second Story points the way to constructive learning and change. Why, then, does incident investigation usually stop at the First Story? Why does the attribution of human error seem to constitute a satisfactory explanation for incidents and accidents? There are several factors that lead people to stop with the First Story, but the most important is hindsight bias.

Incidents and accidents challenge stakeholders’ belief in the safety of the system and the adequacy of the defenses in place. Incidents and accidents are surprising, shocking events that demand explanation so that stakeholders can resume normal activities in providing or consuming the products or services from that field of practice. As a result, after the fact, stakeholders look back and make judgments about what led to the accident or incident. This is a process of human judgment where people – lay people, scientists, engineers, managers, and regulators – judge what “caused” the event in question.

In this psychological and social judgment process people isolate one factor from among many contributing factors and label it as the “cause” for the event to be explained. People tend to do this despite the fact that there are always several conditions for the event, each necessary but only jointly sufficient. Researchers try to understand the social and psychological factors that lead people to see one of these multiple factors as “causal” while relegating the other necessary conditions to background status. One of the early pieces of work on how people attribute causality is Kelley (1973). A more recent treatment of some of the factors can be found in Hilton (1990). From this perspective, “error” research studies the social and psychological processes which govern our reactions to failure as stakeholders in the system in question.

Our reactions to failure as stakeholders are influenced by many factors. One of the most critical is that, after an accident, we know the outcome. Working backwards from this knowledge, it is clear which assessments or actions were critical to that outcome. It is easy for us with the benefit of hindsight to say, “How could they have missed x?” or “How could they have not realized that x would obviously lead to y?” Fundamentally, this omniscience is not available to any of us before we know the results of our actions.

Knowledge of outcome biases our judgment about the processes that led up to that outcome. We react, after the fact, as if this knowledge were available to operators. This oversimplifies or trivializes the situation confronting the practitioners, and masks the processes affecting practitioner behavior before-the-fact. Hindsight bias blocks our ability to see the deeper story of systematic factors that predictably shape human performance.

The hindsight bias is one of the most reproduced research findings relevant to accident analysis and reactions to failure. It is the tendency for people to “consistently exaggerate what could have been anticipated in foresight” (Fischhoff, 1975). Studies have consistently shown that people have a tendency to judge the quality of a process by its outcome. Information about outcome biases their evaluation of the process that preceded it. Decisions and actions followed by a negative outcome will be judged more harshly than if the same process had resulted in a neutral or positive outcome.

Research has shown that hindsight bias is very difficult to remove. For example, the bias remains even when those making the judgments have been warned about the phenomenon and advised to guard against it. The First Story of an incident seems satisfactory because knowledge of outcome changes our perspective so fundamentally. One set of experimental studies of the biasing effect of outcome knowledge can be found in Baron and Hershey (1988). Lipshitz (1989) and Caplan, Posner and Cheney (1991) provide demonstrations of the effect with actual practitioners. See Fischhoff (1982) for one study of how difficult it is for people to ignore outcome information in evaluating the quality of decisions.

DEBIASING THE STUDY OF FAILURE

The First Story leaves us with an impoverished view of the factors that shape human performance. In this vacuum, folk models spring up about the human contribution to risk and safety. Human behavior is seen as fundamentally unreliable and erratic (even otherwise effective practitioners occasionally and unpredictably blunder). These folk models, which regard “human error” as the cause of accidents, mislead us. They create an environment where accidents are followed by a search for a culprit. The solutions then consist of punishment and exile for the apparent culprit, and increased regimentation or remediation for other practitioners, as if the cause resided in defects inside people.

These countermeasures are ineffective or even counterproductive because they miss the deeper systematic factors that produced the multiple conditions necessary for failure. Other practitioners, regardless of motivation levels or skill levels, remain vulnerable to the same systematic factors. If the incident sequence included an omission of an isolated act, the memory burdens imposed by task mis-design are still present as a factor ready to undermine execution of the procedure. If a mode error was part of the failure chain, the computer interface design still creates the potential for this type of miscoordination to occur. If a double bind was behind the actions that contributed to the failure, that goal conflict remains to perplex other practitioners.

Getting to Second Stories requires overcoming the hindsight bias. The hindsight bias fundamentally undermines our ability to understand the factors that influenced practitioner behavior. Knowledge of outcome causes reviewers to oversimplify the problem-solving situation practitioners face. The dilemmas, the uncertainties, the tradeoffs, the attentional demands, and double binds faced by practitioners may be missed or under-emphasized when an incident is viewed in hindsight. Typically, hindsight bias makes it seem that participants failed to account for information or conditions that “should have been obvious” or behaved in ways that were inconsistent with the (now known to be) significant information. Because of the hindsight bias, possessing knowledge of the outcome trivializes the situation confronting the practitioner, who cannot know the outcome before-the-fact, and makes the “correct” choice seem crystal clear.

The difference between everyday or “folk” reactions to failure and investigations of the factors that influence human performance is that researchers use methods designed to remove hindsight bias to see the factors that influenced the behavior of the people in the situation before the outcome is known (Dekker, 2006).

When your investigation stops with the First Story and concludes with the label “human error” (under whatever name), you lose the potential for constructive learning and change. Ultimately, the real hazards to your organization are inherent in the underlying system, and you miss them all if you just tell yourself and your stakeholders a First Story. Yet, independent of what you say, other people and other organizations are acutely aware of many of these basic hazards in their field of practice, and they actively work to devise defenses to guard against them. This effort to make safety is needed continuously. When these efforts break down and you see a failure, you stand to gain a lot of new information, not about the innate fallibilities of people, but about the nature of the threats to your complex systems and the limits of the countermeasures you have put in place.

HUMAN PERFORMANCE: LOCAL RATIONALITY

Why do people do what they do? How could their assessments and actions have made sense to them at the time? The concept of local rationality is critical for studying human performance. No pilot sets out to fly into the ground on today’s mission. No physician intends to harm a patient through their actions or lack of intervention. If they do intend this, we speak not of error or failure but of suicide or euthanasia.

After-the-fact, based on knowledge of outcome, outsiders can identify “critical” decisions and actions that, if different, would have averted the negative outcome. Since these “critical” points are so clear to you with the benefit of hindsight, you could be tempted to think they should have been equally clear and obvious to the people involved in the incident. These people’s failure to see what is obvious now to you seems inexplicable and therefore irrational or even perverse. In fact, what seems to be irrational behavior in hindsight turns out to be quite reasonable from the point of view of the demands practitioners face and the resources they can bring to bear.

People’s behavior is consistent with Simon’s (1969) principle of bounded rationality – that is, people use their knowledge to pursue their goals. What people do makes sense given their goals, their knowledge, and their focus of attention at the time. Human (and machine) problem-solvers possess finite capabilities. There are bounds to the data that they pick up or search out, limits to the knowledge that they possess, bounds to the knowledge that they activate in a particular context, and conflicts among the multiple goals they must achieve. In other words, people’s behavior is rational when viewed from the locality of their knowledge, their mindset, and the multiple goals they are trying to balance.

This means that it takes effort (which consumes limited resources) to seek out evidence, to interpret it (as relevant), and to assimilate it with other evidence. Evidence may come in over time, over many noisy channels. The underlying process may yield information only in response to diagnostic interventions. Time pressure, which compels action (or the de facto decision not to act), makes it impossible to wait for all evidence to accrue. Multiple goals may be relevant, not all of which are consistent. It may not be clear, in foresight, which goals are the most important ones to focus on at any one particular moment in time. Human problem-solvers cannot handle all the potentially relevant information, cannot activate and hold in mind all of the relevant knowledge, and cannot entertain all potentially relevant trains of thought. Hence, rationality must be local – attending to only a subset of the possible knowledge, lines of thought, and goals that could be, in principle, relevant to the problem (Simon, 1957; Newell, 1982).

Though human performance is locally rational, after-the-fact we (and the performers themselves) may see how that behavior contributed to a poor outcome. This means that “error” research, in the sense of understanding the factors that influence human performance, needs to explore how limited knowledge (missing knowledge or misconceptions), how a limited and changing mindset, and how multiple interacting goals shape the behavior of the people in evolving situations. All of us use local rationality in our everyday communications. As Bruner (1986, p. 15) put it:

We characteristically assume that what somebody says must make sense, and we will, when in doubt about what sense it makes, search for or invent an interpretation of the utterance to give it sense.

In other words, this type of error research reconstructs what the view was like (or would have been like) had we stood in the same situation as the participants. If we can understand how the participants’ knowledge, mindset, and goals guided their behavior, then we can see how they were vulnerable to breakdown given the demands of the situation they faced. We can see new ways to help practitioners activate relevant knowledge, shift attention among multiple tasks in a rich, changing data field, and recognize and balance competing goals.