
An Introduction to Asynchronous Programming

There are many holy grails in software development, but probably none so eagerly sought, and yet so woefully unachieved, as making asynchronous programming simple. This isn’t because the issues are currently unknown; rather, they are very well known, but just very hard to solve in an automated way. The goal of this book is to help you understand why asynchronous programming is important, what issues make it hard, and how to be successful writing asynchronous code on the .NET platform.

What Is Asynchronous Programming?

Most code that people write is synchronous. In other words, the code starts to execute, may loop, branch, pause, and resume, but given the same inputs, its instructions are executed in a deterministic order. Synchronous code is, in theory, straightforward to understand, as you can follow the sequence in which code will execute. It is possible of course to write code that is obscure, that uses edge case behavior in a language, and that uses misleading names and large dense blocks of code. But reasonably structured and well named synchronous code is normally very approachable to someone trying to understand what it does. It is also generally straightforward to write as long as you understand the problem domain.

The problem is that an application that executes purely synchronously may generate results too slowly or may perform long operations that leave the program unresponsive to further input. What if we could calculate several results concurrently or take inputs while also performing those long operations? This would solve the problems with our synchronous code, but now we would have more than one thing happening at the same time (at least logically if not physically). Writing systems that are designed to do more than one thing at a time is called asynchronous programming.

The Drive to Asynchrony

There are a number of trends in the world of IT that have highlighted the importance of asynchrony.

First, users have become more discerning about the responsiveness of applications. In times past, when a user clicked a button, they would be fairly forgiving if there was a slight delay before the application responded—this was their experience with software in general and so it was, to some degree, expected. However, smartphones and tablets have changed the way that users see software. They now expect it to respond to their actions instantaneously and fluidly. For a developer to give the user the experience they want, they have to make sure that any operation that could prevent the application from responding is performed asynchronously.

Second, processor technology has evolved to put multiple processing cores on a single processor package. Machines now offer enormous processing power. However, because they have multiple cores rather than one incredibly fast core, we get no benefit unless our code performs multiple concurrent actions that can be mapped onto those cores. Therefore, taking advantage of modern chip architecture inevitably requires asynchronous programming.

Last, the push to move processing to the cloud means applications need to access functionality that is potentially geographically remote. The added latency can cause operations that previously performed adequately when processed sequentially to miss their performance targets. Executing two or more of these remote operations concurrently may well bring the application back into acceptable performance. Doing so, however, requires asynchronous programming.
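To make the latency argument concrete, here is a minimal sketch in C#. The `FetchAsync` method is a hypothetical stand-in for a remote call: it simply waits for a fixed delay rather than contacting a real service, and all of the names are our own assumptions for illustration.

```csharp
using System;
using System.Linq;
using System.Threading.Tasks;

public static class RemoteCalls
{
    // Hypothetical stand-in for a remote call: the delay simulates network latency.
    public static async Task<int> FetchAsync(int id, int latencyMs)
    {
        await Task.Delay(latencyMs);
        return id * 10;
    }

    // Sequential: each call starts only after the previous one has finished,
    // so the total time is roughly the sum of the individual latencies.
    public static async Task<int[]> FetchSequentiallyAsync(int[] ids, int latencyMs)
    {
        var results = new int[ids.Length];
        for (int i = 0; i < ids.Length; i++)
        {
            results[i] = await FetchAsync(ids[i], latencyMs);
        }
        return results;
    }

    // Concurrent: all calls are in flight at the same time,
    // so the total time is roughly that of the slowest call.
    public static Task<int[]> FetchConcurrentlyAsync(int[] ids, int latencyMs)
    {
        return Task.WhenAll(ids.Select(id => FetchAsync(id, latencyMs)));
    }
}
```

Both methods return the same results in the same order. With three calls and a simulated latency of 200 ms each, the sequential version should take roughly 600 ms and the concurrent version roughly 200 ms.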

There are typically three models that we can use to introduce asynchrony: multiple machines, multiple processes, and multiple threads. All of these have their place in complex systems, and different languages, platforms, and technologies tend to favor a particular model.

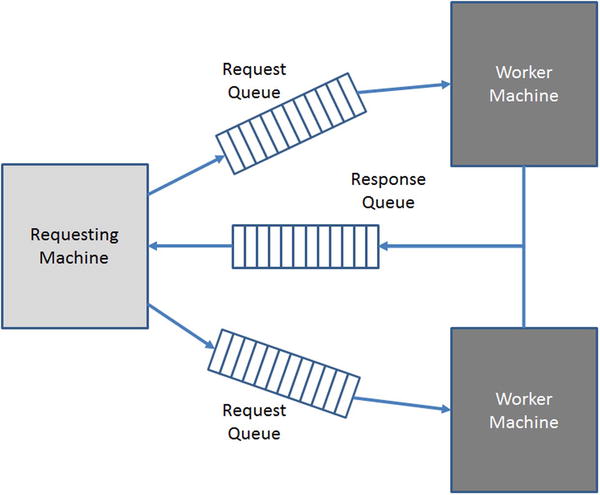

To use multiple machines, or nodes, to introduce asynchrony, we need to ensure that when we request the functionality to run remotely, we don’t do this in a way that blocks the requester. There are a number of ways to achieve this, but commonly we pass a message to a queue, and the remote worker picks up the message and performs the requested action. Any results of the processing need to be made available to the requester, which again is commonly achieved via a queue. As can be seen from Figure 1-1, the queues break blocking behavior between the requester and worker machines and allow the worker machines to run independently of one another. Because the worker machines rarely contend for resources, there are potentially very high levels of scalability. However, dealing with node failure and internode synchronization becomes more complex.

Figure 1-1. Using queues for cross-machine asynchrony

A process is a unit of isolation on a single machine. Multiple processes do have to share access to the processing cores, but do not share virtual memory address spaces and can run in different security contexts. It turns out that, by using queues, we can apply the same architecture we used for multiple machines to multiple processes on a single machine. In this case it is somewhat easier to deal with the failure of a worker process and to synchronize activity between processes.

There is another model for asynchrony with multiple processes where, to hand off long-running work to execute in the background, you spawn another process. This is the model that web servers have used in the past to process multiple requests. A CGI script is executed in a spawned process, having been passed any necessary data via command line arguments or environment variables.
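The hand-off to a spawned process can be sketched as follows. This is an illustration under assumptions rather than how any particular web server works: the child is simply the platform's command shell (`cmd.exe` on Windows, `/bin/sh` elsewhere), and the `GREETING` variable and `RunChild` method are hypothetical names of our own.

```csharp
using System;
using System.Diagnostics;
using System.Runtime.InteropServices;

public static class SpawnedWorker
{
    // Starts a child process, passing it data through its command line and an
    // environment variable, and returns whatever the child wrote to standard output.
    public static string RunChild(string greeting)
    {
        bool onWindows = RuntimeInformation.IsOSPlatform(OSPlatform.Windows);

        var info = new ProcessStartInfo
        {
            FileName = onWindows ? "cmd.exe" : "/bin/sh",
            // The command line tells the shell to echo the environment variable.
            Arguments = onWindows ? "/c echo %GREETING%" : "-c \"echo $GREETING\"",
            UseShellExecute = false,       // required to set variables and redirect output
            RedirectStandardOutput = true
        };
        info.Environment["GREETING"] = greeting;

        using (Process child = Process.Start(info))
        {
            // The child now runs independently; the parent could do other work
            // here before collecting the output.
            string output = child.StandardOutput.ReadToEnd();
            child.WaitForExit();
            return output.Trim();
        }
    }
}
```

Calling `SpawnedWorker.RunChild("hello")` should return `hello`, provided the assumed shell is available on the machine.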

Threads are independently schedulable sets of instructions with a package of nonshared resources. A thread is bounded within a process (a thread cannot migrate from one process to another), and all threads within a process share process-wide resources such as heap memory and operating system resources such as file handles and sockets. The queue-based approach shown in Figure 1-1 is also applicable to multiple threads, as it is in fact a general purpose asynchronous pattern. However, due to the heavy sharing of resources, using multiple threads benefits least from this approach. On the other hand, resource sharing makes it less complex to coordinate multiple worker threads and to handle thread failure.
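The queue-based pattern applied to threads might look like the following sketch, which uses `BlockingCollection<T>` for the request and result queues. The `QueueWorkers` class and the choice of squaring numbers as the "work" are assumptions made purely for illustration.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading;

public static class QueueWorkers
{
    // Squares each input on worker threads. Requests and results travel through
    // queues, so the requester never calls a worker directly.
    public static int[] SquareAll(int[] inputs, int workerCount)
    {
        using (var requests = new BlockingCollection<int>())
        using (var results = new BlockingCollection<int>())
        {
            var workers = new Thread[workerCount];
            for (int i = 0; i < workerCount; i++)
            {
                workers[i] = new Thread(() =>
                {
                    // Blocks until a request arrives; the loop ends once the queue
                    // has been marked complete and drained.
                    foreach (int n in requests.GetConsumingEnumerable())
                    {
                        results.Add(n * n);
                    }
                });
                workers[i].Start();
            }

            // Posting to the unbounded queue does not block the requester.
            foreach (int n in inputs)
            {
                requests.Add(n);
            }
            requests.CompleteAdding();

            foreach (Thread worker in workers)
            {
                worker.Join();
            }

            // Workers finish in no particular order, so sort for a stable result.
            int[] output = results.ToArray();
            Array.Sort(output);
            return output;
        }
    }
}
```

For example, `QueueWorkers.SquareAll(new[] { 3, 1, 2 }, 2)` returns the array `1, 4, 9`. The requester only ever talks to the queues, which is what allows the same shape to be used across threads, processes, or machines.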

Unlike processes on Unix, Windows processes are relatively heavyweight constructs when compared with threads. This is due to the loading of the Win32 runtime libraries and the associated registry reads (along with a number of cross-process calls to system components for housekeeping). Therefore, by design on Windows, we tend to prefer using multiple threads to create asynchronous processing rather than multiple processes. However, there is an overhead to creating and destroying threads, so it is good practice to try to reuse them rather than destroy one thread and then create another.
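In .NET the usual way to reuse threads is the thread pool. As a minimal sketch, the hypothetical `RunOnPool` method below runs a series of short work items with `Task.Run` and records which thread executed each one.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

public static class PoolReuse
{
    // Runs `count` short work items, one after another, on the thread pool.
    // Returns the managed ID of the thread that ran each item.
    public static int[] RunOnPool(int count, out bool allOnPoolThreads)
    {
        var ids = new int[count];
        bool allPool = true;

        for (int i = 0; i < count; i++)
        {
            ids[i] = Task.Run(() =>
            {
                if (!Thread.CurrentThread.IsThreadPoolThread)
                {
                    allPool = false;
                }
                return Thread.CurrentThread.ManagedThreadId;
            }).Result; // wait for this item before queuing the next
        }

        allOnPoolThreads = allPool;
        return ids;
    }
}
```

On a typical run the returned IDs contain many repeats, because the pool hands successive work items to threads it already has rather than creating a new thread each time. The exact set of IDs depends on the pool's state and is not predictable.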

In Windows, the operating system component responsible for mapping thread execution onto cores is called the Thread Scheduler. As we shall see, sometimes threads are waiting for some event to occur before they can perform any work (in .NET this state is known as WaitSleepJoin). Any thread not in the WaitSleepJoin state should be allocated some time on a processing core and, all things being equal, the thread scheduler will round-robin processor time among all of the threads currently running across all of the processes. Each thread is allotted a time slice and, as long as the thread doesn’t enter the WaitSleepJoin state, it will run until the end of its time slice.
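We can observe the waiting state from code. In the sketch below, a worker thread blocks on an event and the calling thread polls the worker's `ThreadState` until it includes the `ThreadState.WaitSleepJoin` flag. The `WaitingThread` class and the five-second timeout are assumptions for illustration.

```csharp
using System;
using System.Threading;

public static class WaitingThread
{
    // Starts a thread that blocks on an event and reports whether the thread
    // was observed in the WaitSleepJoin state before it was released.
    public static bool ObserveWaitState()
    {
        using (var signal = new ManualResetEvent(false))
        {
            var worker = new Thread(() => signal.WaitOne());
            worker.Start();

            // Poll until the worker has actually blocked, giving up after 5 seconds.
            // ThreadState is a flags enum, so test the bit rather than comparing for equality.
            bool observed = SpinWait.SpinUntil(
                () => (worker.ThreadState & ThreadState.WaitSleepJoin) != 0,
                TimeSpan.FromSeconds(5));

            signal.Set();   // release the worker so that it can finish
            worker.Join();
            return observed;
        }
    }
}
```

While the worker is blocked it consumes no processor time; the scheduler will not give it a time slice until the event is signaled.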

Things, however, are not often equal. Different processes can run with different priorities (there are six priorities ranging from idle to real time). Within a process a thread also has a priority; there are seven ranging from idle to time critical. The resulting priority a thread runs with is a combination of these two priorities, and this effective priority is critical to thread scheduling. The Windows thread scheduler does preemptive multitasking. In other words, if a higher-priority thread wants to run, then a lower-priority thread is ejected from the processor (preempted) and replaced with the higher-priority thread. Threads of equal priority are, again, scheduled on a round-robin basis, each being allotted a time slice.
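From managed code the thread-level priority is set through `Thread.Priority`. Note that the .NET `ThreadPriority` enumeration exposes only five levels (`Lowest`, `BelowNormal`, `Normal`, `AboveNormal`, and `Highest`), not the idle and time-critical extremes. The `RunAtPriority` helper below is a hypothetical name used only for this sketch.

```csharp
using System;
using System.Threading;

public static class Priorities
{
    // Starts a thread at the requested priority and returns the priority
    // that the thread reports for itself while it is running.
    public static ThreadPriority RunAtPriority(ThreadPriority priority)
    {
        ThreadPriority reported = ThreadPriority.Normal;

        var worker = new Thread(() => reported = Thread.CurrentThread.Priority);
        worker.Priority = priority; // may be set before the thread is started
        worker.Start();
        worker.Join();

        return reported;
    }
}
```

Calling `Priorities.RunAtPriority(ThreadPriority.BelowNormal)` returns `BelowNormal`. How that value is combined with the process priority, and whether it influences scheduling at all, is up to the operating system; the discussion above describes the behavior on Windows.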

You may be thinking that lower-priority threads could be starved of processor time. However, in certain conditions, the priority of a thread will be boosted temporarily to try to ensure that it gets a chance to run on the processor. Priority boosting can happen for a number of reasons (e.g., user input). Once a boosted thread has had processor time, its priority is lowered by one level each time it completes a time slice until it returns to its normal value.

Threads and Resources

Although two threads share some resources within a process, they also have resources that are specific to themselves. To understand the impact of executing our code asynchronously, it is important to understand when we will be dealing with shared resources and when a thread can guarantee it has exclusive access. This distinction becomes critical when we look at thread safety, which we do in depth in Chapter 4.

There are a number of resources to which a thread has exclusive access. When the thread uses these resources it is guaranteed to not be in contention with other threads.

The Stack

Each thread gets its own stack. This means that local variables and parameters in methods, which are stored on the stack, are never shared between threads. The default stack size is 1 MB, so a thread consumes a nontrivial amount of resource in just its allocated stack.
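The following sketch illustrates the point about local variables. Every thread runs the same `CountTo` method, yet each gets its own copy of the local `count`. The class and method names are assumptions for illustration, and the second argument to the `Thread` constructor shows how a smaller stack than the default can be requested.

```csharp
using System;
using System.Threading;

public static class StackLocals
{
    // `count` and `i` are locals, so each thread that runs this method
    // works on its own copies, held on that thread's own stack.
    static int CountTo(int limit)
    {
        int count = 0;
        for (int i = 0; i < limit; i++)
        {
            count++;
        }
        return count;
    }

    public static int[] CountOnThreads(int threadCount, int limit)
    {
        var totals = new int[threadCount];
        var threads = new Thread[threadCount];

        for (int t = 0; t < threadCount; t++)
        {
            int slot = t; // fresh variable per iteration, so each lambda sees its own index

            // Request a 256 KB stack for this thread instead of the default size.
            threads[t] = new Thread(() => totals[slot] = CountTo(limit), 256 * 1024);
            threads[t].Start();
        }

        foreach (Thread thread in threads)
        {
            thread.Join();
        }
        return totals;
    }
}
```

Every element of the returned array equals `limit`, however many threads run concurrently, because no thread can see another thread's `count`. The `totals` array itself lives on the heap and is shared, but each thread writes only to its own element.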

Thread Local Storage

On Windows we can define storage slots in an area called thread local storage (TLS). Each thread has an entry for each slot in which it can store a value. This value is specific to the thread and cannot be accessed by other threads. TLS slots are limited in number, which at the time of writing is guaranteed to be at least 64 per process but may be as high as 1,088.
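In managed code, per-thread storage is most easily reached through `ThreadLocal<T>` (or the `[ThreadStatic]` attribute). How these map onto the underlying Win32 TLS slots is an implementation detail of the runtime. The sketch below uses hypothetical names to show that each thread sees its own value.

```csharp
using System;
using System.Threading;

public static class TlsDemo
{
    // Each thread that touches Counter.Value gets its own value, initialized to 0.
    static readonly ThreadLocal<int> Counter = new ThreadLocal<int>(() => 0);

    // Thread i increments its own counter incrementsPerThread[i] times
    // and then records the value it sees.
    public static int[] IncrementOnThreads(int[] incrementsPerThread)
    {
        var seen = new int[incrementsPerThread.Length];
        var threads = new Thread[incrementsPerThread.Length];

        for (int t = 0; t < threads.Length; t++)
        {
            int slot = t; // fresh variable per iteration for the lambda to capture
            threads[t] = new Thread(() =>
            {
                for (int i = 0; i < incrementsPerThread[slot]; i++)
                {
                    Counter.Value++; // safe without locking: no other thread can see this value
                }
                seen[slot] = Counter.Value;
            });
            threads[t].Start();
        }

        foreach (Thread thread in threads)
        {
            thread.Join();
        }
        return seen;
    }
}
```

For example, `TlsDemo.IncrementOnThreads(new[] { 3, 5 })` returns `3, 5`: although both threads use the same `Counter` field, neither thread's increments are visible to the other.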

Registers

A thread has its own copy of the register values. When a thread is scheduled on a processing core, its copy of the register values is restored onto the core’s registers. This allows the thread to continue processing from the point at which it was preempted (its instruction pointer is restored) with the register state identical to when it was last running.

Resources Shared by Threads

There is one critical resource that is shared by all threads in a process: heap memory. In .NET all reference types are allocated on the heap and therefore multiple threads can, if they have a reference to the same object, access the same heap memory at the same time. This can be very efficient but is also the source of potential bugs, as we shall see in Chapter 4.
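A minimal sketch of shared heap access follows. Several threads hold a reference to the same `Tally` object and update the same field. The names are assumptions for illustration, and `Interlocked.Increment` is used here only so that the result is predictable.

```csharp
using System;
using System.Threading;

public class Tally
{
    public int Value; // stored on the heap, inside the Tally object
}

public static class SharedHeap
{
    // Every thread increments the same field of the same heap object.
    public static int IncrementFromThreads(int threadCount, int incrementsPerThread)
    {
        var shared = new Tally();
        var threads = new Thread[threadCount];

        for (int t = 0; t < threadCount; t++)
        {
            threads[t] = new Thread(() =>
            {
                for (int i = 0; i < incrementsPerThread; i++)
                {
                    // Atomic read-modify-write on the shared field.
                    Interlocked.Increment(ref shared.Value);
                }
            });
            threads[t].Start();
        }

        foreach (Thread thread in threads)
        {
            thread.Join();
        }
        return shared.Value;
    }
}
```

`SharedHeap.IncrementFromThreads(4, 1000)` returns 4000. If the atomic increment were replaced with a plain `shared.Value++`, concurrent updates could be lost and the total could come out lower, which is exactly the class of bug that sharing heap memory makes possible.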

For completeness we should also note that threads, in effect, share operating system handles. In other words, if a thread performs an operation that produces an operating system handle under the covers (e.g., accesses a file, creates a window, loads a DLL), then that handle is not automatically released when the thread ends. If no other thread in the process takes action to close the handle, then it will not be returned until the process exits.

Summary

We’ve shown that asynchronous programming is increasingly important, and that on Windows we typically achieve asynchrony via the use of threads. We’ve also shown what threads are and how they get mapped onto cores so they can execute. You therefore have the groundwork to understand how Microsoft has built on top of this infrastructure to provide .NET programmers with the ability to run code asynchronously.

This book, however, is not intended as an API reference—the MSDN documentation exists for that purpose. Instead, we address why APIs have been designed the way they have and how they can be used effectively to solve real problems. We also show how we can use Visual Studio and other tools to debug multithreaded applications when they are not behaving as expected.

By the end of the book, you should have all the tools you need to introduce asynchronous programming to your world and understand the options available to you. You should also have the knowledge to select the most appropriate tool for the asynchronous job in hand.