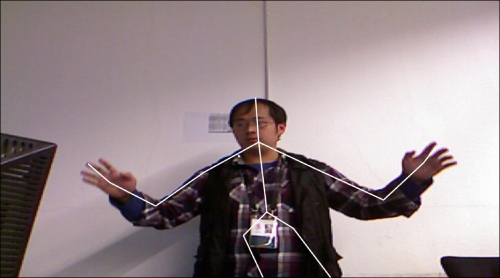

Before we consider using the skeleton for gesture-based interaction, we should first print out all the skeletal joint data to get an intuitive look at the Kinect skeleton positions. The data can then be merged with our color image so that we can see how well the two match in real time.

We will first draw the skeleton with a series of lines to see how Kinect defines all the skeletal bones.

- The Microsoft Kinect SDK uses `NUI_SKELETON_POSITION_COUNT` (equal to 20 in the current SDK version) to represent the number of joints in one skeleton, so we define an array to store their positions.

  ```cpp
  GLfloat skeletonVertices[NUI_SKELETON_POSITION_COUNT][3];
  ```

- Add the following lines to the `update()` function to fetch a skeleton frame.

  ```cpp
  NUI_SKELETON_FRAME skeletonFrame = {0};
  hr = context->NuiSkeletonGetNextFrame( 0, &skeletonFrame );
  if ( SUCCEEDED(hr) )
  {
      // Traverse all possible skeletons in tracking
      for ( int n=0; n<NUI_SKELETON_COUNT; ++n )
      {
          // Check each skeleton data to see if it is tracked
          NUI_SKELETON_DATA& data = skeletonFrame.SkeletonData[n];
          if ( data.eTrackingState==NUI_SKELETON_TRACKED )
          {
              updateSkeletonData( data );
              break;  // in this demo, only handle one skeleton
          }
      }
  }
  ```

- We declare a new function named `updateSkeletonData()` with one `NUI_SKELETON_DATA` argument for handling the specified skeleton data. Now let's fill it.

  ```cpp
  POINT coordInDepth;
  USHORT depth = 0;

  // Traverse all joints
  for ( int i=0; i<NUI_SKELETON_POSITION_COUNT; ++i )
  {
      // Obtain joint position and transform it to depth space
      NuiTransformSkeletonToDepthImage(
          data.SkeletonPositions[i],
          &coordInDepth.x, &coordInDepth.y,
          &depth, NUI_IMAGE_RESOLUTION_640x480 );

      // Transform all coordinates to [0, 1] and set them
      // to the array we defined before.
      // We will discuss the transformation later
      skeletonVertices[i][0] = (GLfloat)coordInDepth.x / 640.0f;
      skeletonVertices[i][1] = 1.0f - (GLfloat)coordInDepth.y / 480.0f;
      skeletonVertices[i][2] = (GLfloat)NuiDepthPixelToDepth(depth) * 0.00025f;
  }
  ```

- Before rendering the skeleton data we retrieved in `updateSkeletonData()`, we have to define the skeleton index array so that OpenGL knows how to connect these joint points. Because we will only draw the skeleton as a reference, it is sufficient to draw the points using the `GL_LINES` mode, which indicates that every two points are connected to form a line segment.

- Using the human skeleton figure we just saw, we can quickly write out the definition as follows:

  ```cpp
  // Every two indices will form a line segment
  // All Kinect enumerations here should be self-explanatory
  GLuint skeletonIndices[38] =
  {
      NUI_SKELETON_POSITION_HIP_CENTER, NUI_SKELETON_POSITION_SPINE,
      NUI_SKELETON_POSITION_SPINE, NUI_SKELETON_POSITION_SHOULDER_CENTER,
      NUI_SKELETON_POSITION_SHOULDER_CENTER, NUI_SKELETON_POSITION_HEAD,
      // Left arm
      NUI_SKELETON_POSITION_SHOULDER_LEFT, NUI_SKELETON_POSITION_ELBOW_LEFT,
      NUI_SKELETON_POSITION_ELBOW_LEFT, NUI_SKELETON_POSITION_WRIST_LEFT,
      NUI_SKELETON_POSITION_WRIST_LEFT, NUI_SKELETON_POSITION_HAND_LEFT,
      // Right arm
      NUI_SKELETON_POSITION_SHOULDER_RIGHT, NUI_SKELETON_POSITION_ELBOW_RIGHT,
      NUI_SKELETON_POSITION_ELBOW_RIGHT, NUI_SKELETON_POSITION_WRIST_RIGHT,
      NUI_SKELETON_POSITION_WRIST_RIGHT, NUI_SKELETON_POSITION_HAND_RIGHT,
      // Left leg
      NUI_SKELETON_POSITION_HIP_LEFT, NUI_SKELETON_POSITION_KNEE_LEFT,
      NUI_SKELETON_POSITION_KNEE_LEFT, NUI_SKELETON_POSITION_ANKLE_LEFT,
      NUI_SKELETON_POSITION_ANKLE_LEFT, NUI_SKELETON_POSITION_FOOT_LEFT,
      // Right leg
      NUI_SKELETON_POSITION_HIP_RIGHT, NUI_SKELETON_POSITION_KNEE_RIGHT,
      NUI_SKELETON_POSITION_KNEE_RIGHT, NUI_SKELETON_POSITION_ANKLE_RIGHT,
      NUI_SKELETON_POSITION_ANKLE_RIGHT, NUI_SKELETON_POSITION_FOOT_RIGHT,
      // Others
      NUI_SKELETON_POSITION_SHOULDER_CENTER, NUI_SKELETON_POSITION_SHOULDER_LEFT,
      NUI_SKELETON_POSITION_SHOULDER_CENTER, NUI_SKELETON_POSITION_SHOULDER_RIGHT,
      NUI_SKELETON_POSITION_HIP_CENTER, NUI_SKELETON_POSITION_HIP_LEFT,
      NUI_SKELETON_POSITION_HIP_CENTER, NUI_SKELETON_POSITION_HIP_RIGHT
  };
  ```

- Now in the `render()` function, we render the skeleton lines along with the color image. The `drawIndexedMesh()` function is declared in `GLUtilities.h` for quick and convenient use.

  ```cpp
  glDisable( GL_TEXTURE_2D );
  glLineWidth( 5.0f );

  VertexData skeletonData = { &(skeletonVertices[0][0]), NULL, NULL, NULL };
  drawIndexedMesh( WITH_POSITION, NUI_SKELETON_POSITION_COUNT, skeletonData,
                   GL_LINES, 38, skeletonIndices );
  ```

- Compile the program, run it, and see what we have just done!

The skeleton along with the color image (only the upper body)

- Please note that only the author's upper body is visible to the Kinect camera here, so the lower-body part of the skeletal mapping may be incorrect and may jitter. The shoulders and arms, however, are tracked very well.
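To make the `GL_LINES` pairing concrete, the following is a minimal sketch of how an index array like `skeletonIndices` is consumed two entries at a time. It does not use OpenGL or the Kinect SDK: `Joint`, `Segment`, and `buildSegments()` are hypothetical names introduced only for illustration, and the real drawing is still done by `drawIndexedMesh()`.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Hypothetical stand-in for one row of skeletonVertices: (x, y, z).
using Joint = std::array<float, 3>;
// One line segment: its start and end joint positions.
using Segment = std::pair<Joint, Joint>;

// Pair up consecutive indices the way GL_LINES does: (0,1), (2,3), ...
std::vector<Segment> buildSegments(const std::vector<Joint>& joints,
                                   const std::vector<unsigned>& indices)
{
    // GL_LINES needs an even number of indices to form whole segments.
    assert(indices.size() % 2 == 0);

    std::vector<Segment> segments;
    for (std::size_t i = 0; i + 1 < indices.size(); i += 2)
    {
        // Every index must refer to an existing joint.
        assert(indices[i] < joints.size() && indices[i + 1] < joints.size());
        segments.emplace_back(joints[indices[i]], joints[indices[i + 1]]);
    }
    return segments;
}
```

With the 38 indices defined earlier, this pairing yields 19 line segments, one for each bone connecting the 20 joints.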

Some new functions we used in this example are listed as follows:

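| Function/method name | Parameters | Description |
|---|---|---|
| `NuiSkeletonGetNextFrame()` | `DWORD` wait time in milliseconds, `NUI_SKELETON_FRAME*` output frame | Gets skeleton data of the current frame and sets it to the `NUI_SKELETON_FRAME` structure passed by pointer. |
| `NuiTransformSkeletonToDepthImage()` | `Vector4` skeleton-space position, `LONG*` depth x, `LONG*` depth y, `USHORT*` depth value, `NUI_IMAGE_RESOLUTION` resolution | Returns the depth space coordinates and depth value for the given skeleton-space position. |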

In this example, we will transform the joint positions to depth space, and then to [0, 1] using the following functions:

```cpp
NuiTransformSkeletonToDepthImage(
    data.SkeletonPositions[i],
    &coordInDepth.x, &coordInDepth.y,
    &depth, NUI_IMAGE_RESOLUTION_640x480 );
skeletonVertices[i][0] = (GLfloat)coordInDepth.x / 640.0f;
skeletonVertices[i][1] = 1.0f - (GLfloat)coordInDepth.y / 480.0f;
skeletonVertices[i][2] = (GLfloat)NuiDepthPixelToDepth(depth) * 0.00025f;
```

The original skeleton positions are stored in `data.SkeletonPositions` to define all the necessary joints in world space. The Microsoft Kinect SDK uses a right-handed coordinate system with values in meters to manage all the positions. The origin is at the sensor pinhole, and the z axis is pointing from the sensor to the view field. So when we lift our hands horizontally (x axis), the coordinates of our left and right hands may be:

Left hand: (-0.8, 0.2, 2.0), and right hand: (0.8, 0.2, 2.0)

The actual values won't be so stable or symmetrical, but they indicate that the person is standing about 2 meters away from the Kinect device and that their arm span is about 1.6 meters.
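The arithmetic behind those two numbers can be checked with a few lines of code. This is only an illustrative sketch: `Vec3` and `distanceBetween()` are hypothetical helpers, not part of the Kinect SDK, and the hand positions are the idealized values quoted above.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical stand-in for a skeleton-space position, in meters.
struct Vec3 { float x, y, z; };

// Euclidean distance between two skeleton-space points, in meters.
float distanceBetween(const Vec3& a, const Vec3& b)
{
    const float dx = a.x - b.x;
    const float dy = a.y - b.y;
    const float dz = a.z - b.z;
    return std::sqrt(dx * dx + dy * dy + dz * dz);
}
```

For the left hand at (-0.8, 0.2, 2.0) and the right hand at (0.8, 0.2, 2.0), only the x components differ, so the hand-to-hand distance is 0.8 - (-0.8) = 1.6 meters, while the shared z value of 2.0 is the distance from the sensor plane.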

It can be inferred from the code segment that, after the positions are mapped to depth space, every joint's x and y values are in image coordinates (640 x 480), and the z value is the actual depth, which is equivalent to the depth image pixel at the same location. As we know, Kinect can detect depth values from 0.8 meters to at most 4 meters, so we divide the return value of `NuiDepthPixelToDepth()` by 4000 millimeters (that is, multiply it by 0.00025) to re-project the joint's z value to [0, 1]. This assumes that z = 0 is the camera lens plane (which a joint can never reach) and z = 1 is the farthest detectable distance.
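The mapping to [0, 1] can be isolated into a small function to see what it does to a few sample points. The sketch below assumes the input is already in depth space (pixel coordinates at 640 x 480, depth in millimeters); `DepthPoint`, `Normalized`, and `normalizeDepthPoint()` are hypothetical names used only here. The y flip is assumed to account for image rows running from top to bottom while the rendered coordinates run from bottom to top.

```cpp
#include <cassert>

// Hypothetical depth-space point: pixel coordinates plus depth in millimeters.
struct DepthPoint { long x; long y; unsigned short depthMm; };
// Hypothetical normalized point, each component nominally in [0, 1].
struct Normalized { float x, y, z; };

Normalized normalizeDepthPoint(const DepthPoint& p)
{
    Normalized n;
    n.x = static_cast<float>(p.x) / 640.0f;          // image width
    n.y = 1.0f - static_cast<float>(p.y) / 480.0f;   // image height, flipped vertically
    n.z = static_cast<float>(p.depthMm) * 0.00025f;  // same as dividing by 4000 mm
    return n;
}
```

A joint at the image center and 2 meters away, (320, 240, 2000), maps to approximately (0.5, 0.5, 0.5), and the nearest detectable depth of 800 millimeters maps to a z value of about 0.2.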

The Microsoft Kinect SDK also provides joint orientation data, which could be useful if we want to control the virtual body more precisely. Local or world space orientations can be obtained by calling the following function in the updateSkeletonData() function:

```cpp
NUI_SKELETON_BONE_ORIENTATION rotations[NUI_SKELETON_POSITION_COUNT];
NuiSkeletonCalculateBoneOrientations( &data, rotations );
```

The function writes the orientation data of each joint to the array. Try to retrieve it and display it on screen by yourself (for example, by drawing a small axis at each joint position).
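As a starting point for that exercise, here is a minimal sketch of turning one rotation into the end points of three short axis lines. It assumes the joint's rotation has already been copied out of the SDK structure as a unit quaternion; `Quat`, `Vec3`, `rotate()`, and `jointAxes()` are hypothetical helpers written for this illustration and are not SDK functions.

```cpp
#include <array>
#include <cassert>
#include <cmath>

struct Quat { float x, y, z, w; };  // hypothetical unit rotation quaternion
struct Vec3 { float x, y, z; };     // hypothetical 3D point or direction

Vec3 cross(const Vec3& a, const Vec3& b)
{
    return { a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x };
}

// Rotate v by the unit quaternion q: v' = v + w*t + u x t, with t = 2 (u x v).
Vec3 rotate(const Quat& q, const Vec3& v)
{
    // The formula is only valid for (approximately) unit-length quaternions.
    assert(std::fabs(q.x * q.x + q.y * q.y + q.z * q.z + q.w * q.w - 1.0f) < 1e-3f);
    const Vec3 u = { q.x, q.y, q.z };
    const Vec3 c = cross(u, v);
    const Vec3 t = { 2.0f * c.x, 2.0f * c.y, 2.0f * c.z };
    const Vec3 ut = cross(u, t);
    return { v.x + q.w * t.x + ut.x, v.y + q.w * t.y + ut.y, v.z + q.w * t.z + ut.z };
}

// End points of the rotated x, y, and z axes, each starting at origin.
std::array<Vec3, 3> jointAxes(const Vec3& origin, const Quat& q, float length)
{
    const Vec3 unit[3] = { {1.0f, 0.0f, 0.0f}, {0.0f, 1.0f, 0.0f}, {0.0f, 0.0f, 1.0f} };
    std::array<Vec3, 3> ends;
    for (int i = 0; i < 3; ++i)
    {
        const Vec3 d = rotate(q, unit[i]);
        ends[i] = { origin.x + length * d.x, origin.y + length * d.y, origin.z + length * d.z };
    }
    return ends;
}
```

Each pair of `origin` and `ends[i]` could then be drawn as one line segment in `GL_LINES` mode, for instance in red, green, and blue for the x, y, and z axes.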