Organizations typically focus on building applications and data-driven software to harvest value from their data. Application security mainly attempts to prevent data or code from being stolen. Application security considerations include hardware, software, and procedures to minimize security vulnerabilities.

The previous chapter explored how to set up your cloud infrastructure and strategies to make it secure. This chapter covers the following topics:

Securing application access

Data classification

Securing data access

Data encryption patterns

Securing Application Access

In traditional application development and environments, securing data and software applications relies on the on-premises network perimeter and physical access to the data. With the current trend of software developers working from home on their own devices (Bring Your Own Device, or BYOD) and using mobile and cloud applications, most of the workload happens outside the company's network.

A circular model depicts zero trust security, which includes a partnership with identity providers, trusted application access, app layer security, and infrastructure security.

Zero trust cloud security

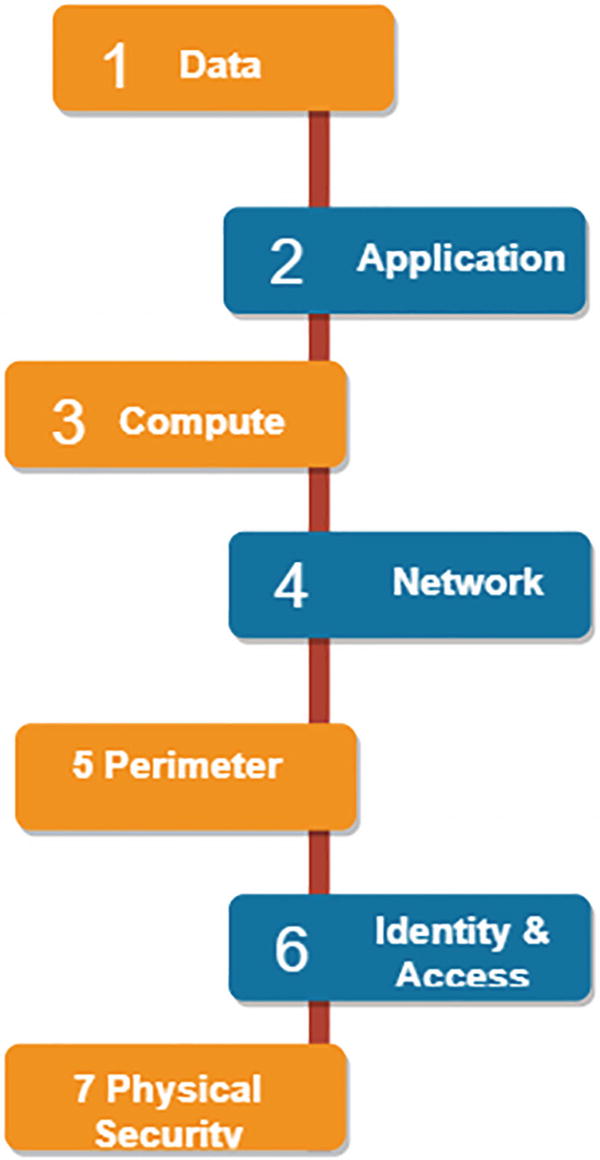

The layered security approach with defense-in-depth: With layered security, you can more easily detect attempts to gain unauthorized access to the data. Since there are multiple layers, if one layer is breached, another layer is in place to prevent exposure.

Regarding the PaaS and SaaS components of Microsoft Azure, Microsoft has a layered approach to security for physical data centers as well as across all Azure services. It protects information from intruders and prevents the data/information from being stolen.

Confidentiality : Only authorized users can access the application. With the principle of least privilege, an entity or ID should be given only those privileges that are absolutely required to complete its tasks. Only individuals or non-personal entities who have explicit access can use the application. This includes securing user passwords, email content, or certificates.

Integrity: To achieve integrity of an application, the goal is to prevent unauthorized changes to the data while it is at rest or in transit. The standard approach when sharing data is that the sender creates a unique fingerprint of the data using a hashing algorithm. The hash is then sent to the receiver along with the data. The receiver checks the integrity of the data by recalculating the hash and comparing it to the one received, to see if any data was lost or changed in transit.

Availability: Cloud providers need to make sure that all services are available to authorized users. Denial of service attacks are a major reason applications become unavailable to end users. Another major cause of unavailability is natural disasters.
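The hash-based integrity check described above can be sketched in a few lines. This is a minimal illustration using Python's standard library, assuming SHA-256 as the hashing algorithm; the function names and the sample payload are hypothetical and not taken from any Azure service.

```python
import hashlib
import hmac


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 digest of the payload as a hex string."""
    return hashlib.sha256(data).hexdigest()


def verify(data: bytes, expected_digest: str) -> bool:
    """Recompute the digest on the receiver side and compare in constant time."""
    return hmac.compare_digest(fingerprint(data), expected_digest)


# Sender: compute the fingerprint and send it along with the data.
payload = b"quarterly-report-v1"
sent_digest = fingerprint(payload)

# Receiver: an unmodified payload verifies, a modified one does not.
print(verify(payload, sent_digest))
print(verify(b"quarterly-report-v2", sent_digest))
```

A plain hash only detects changes when the digest itself arrives over a trusted channel. If an attacker could replace both the data and the digest, a keyed construction such as HMAC or a digital signature would be needed instead.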

Now that you’ve read about the basics of application security, let’s look at the security layers. You can think of layered security as a set of concentric rings, with the data to be secured at the center.

Data: In a cloud environment, data normally resides in one of the following places:

1. Database
2. Virtual machine disk
3. SaaS application such as Microsoft 365
4. Cloud storage

Applications: You need to make sure that your applications are secure and free of vulnerabilities. Sensitive information should be stored in a secure storage mechanism. Integrating security into the application development lifecycle will lessen security vulnerabilities in the code. See Figure 5-2.

A model diagram depicts the security layers, which include seven layers such as data, application, computer, network, perimeter, identity and access, and physical security.

Security layers

Compute: All compute resources should be kept secure by making sure that malware protection is in place and patches are applied on time.

Networking: Resource communication should be limited by using segmentation and access control. Inbound and outbound traffic should be denied by default and limited traffic should be open based on requirements.

Perimeter: To avoid denial of service for end users, you can use distributed denial of service (DDoS) protection. You can also use perimeter firewalls to identify and alert on attacks against the network.

Identity and access: The main goal of this security layer is to make sure that identities are secure and use a single sign-on and multifactor authentication for login and access management.

Physical security: Physical security of the building and controlled access to the computer hardware in the data center are a top priority above all other security layers.

Now that you have an understanding of application security access, the next section covers identity management in detail.

Identity Management

Digital identities are an integral part of enterprise organizations, whether they work in the cloud or on-premises. Earlier, identity and access services were restricted to operate only within the company’s internal environment, so protocols like LDAP and Kerberos were designed and implemented for that setting.

Single sign-on: In a normal scenario, users have to manage multiple usernames and passwords to access applications, systems, or services. More identities mean more usernames and passwords to remember, which becomes difficult for users. With single sign-on, users need to remember only one user ID and password. Access across applications is granted to one identity, which simplifies the security model. You can use Azure AD to enable single sign-on; it can combine multiple data sources into the security graph.

Multifactor authentication (MFA): With multifactor authentication, you can add a layer of security to the application by requiring two or more elements for full authentication. The elements come from these categories:

Something you know (for example, password)

Something you have (for example, security token)

Something you are (for example, biometrics such as fingerprints or face recognition)

It increases the security of the identity by limiting the impact of confidential information being leaked. Azure AD has built-in multifactor authentication capability. Basic authentication features are available to Microsoft 365 and Azure AD administrators at no cost.
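To make the "something you have" factor concrete, the following is a minimal sketch of a time-based one-time password (TOTP) check, the kind of code generated by many authenticator apps and hardware tokens. It follows the public RFC 6238 algorithm with HMAC-SHA-1 and is not a description of how Azure AD implements MFA internally; the `authenticate` helper and its parameters are hypothetical.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA-1)."""
    if at_time is None:
        at_time = time.time()
    counter = int(at_time) // step  # number of elapsed time steps
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation offset (RFC 4226)
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def authenticate(password_ok: bool, submitted_code: str, secret: bytes,
                 at_time: Optional[float] = None) -> bool:
    """Require both factors: a verified password and a valid one-time code."""
    code_ok = hmac.compare_digest(submitted_code, totp(secret, at_time))
    return password_ok and code_ok


# Shared secret from the RFC test vectors; a real secret would be random.
RFC_TEST_SECRET = b"12345678901234567890"
print(totp(RFC_TEST_SECRET, at_time=59))  # 287082 per the RFC test vectors
```

Because the code changes every 30 seconds and depends on a secret held by the device, a leaked password alone is not enough to pass `authenticate`.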

A block diagram with 7 layers of Azure A D multifactor authentication. It flows from physical, data link, network, transport, session, presentation, and application.

Figure 5-3. Azure AD multifactor authentication

The next section takes a quick tour of data classification.

Data Classification

Data classification sorts data to determine and assign value to it. It categorizes the data by sensitivity and business impact to identify the risks. Since the data is classified, you can manage the data to protect the sensitive information from loss. It is a process of linking metadata with every asset in a digital estate, which identifies the type of data.

Non-business : Personal data that doesn’t belong to the organization

Public : Data that is freely available and approved for public use

General : Data that is available but not approved for public use

Confidential : Data that can create issues if it is shared with other people

Highly confidential : Business data that can create major issues for an organization if it is overshared

Achieve standards of data privacy and audit regulatory compliance

Access sensitive data in a controlled manner and audit data access for security purposes

Harden the security of databases that contain sensitive data

A model diagram depicts the data discovery and classification processes with discovery, classification, and protection, which includes identifying sensitive data, classification by default, access control, and so on.

Figure 5-4. Data discovery and classification

Discovery and recommendations : A classification engine scans the database and detects the columns in the database that might contain personal sensitive information. Based on the data, it provides a recommendation to review and apply classification using the Azure Portal. See Figure 5-5.

A screenshot depicts the Azure S Q L D B, in security, data discovery, and classification selected, which has two options and learn more links at the end.

Data discovery and classification in Azure SQL DB

Labels : Using this feature, you can apply labels to the columns based on the metadata attribute available in the SQL database. You can take into account the metadata attributes in the SQL database based on the compliance and auditing requirements. See Figure 5-6.

A screenshot depicts the Azure S Q L D B data classification with display names of public, general, confidential, and so on, with states and descriptions for each.

Labelling in Azure SQL DB

Query execution sensitivity: Once you execute the query, sensitivity of the query result is determined for auditing purposes.

Dashboards : The SQL user can view the classification of the underlying data in visualized dashboards. You can also download reports in Excel format for auditing and governance purposes. See Figure 5-7.

A screenshot depicts the Azure S Q L D B data classification dashboard with classified columns, tables containing sensitive data, unique information types, and label and information type distribution.

Azure SQL DB data classification dashboards
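The discovery and labeling steps described above can be pictured with a small sketch. It assumes a classification engine that inspects column names only and maps them to the sensitivity labels listed earlier in this section; the patterns, the `recommend` function, and the sample column names are hypothetical and do not reproduce the discovery logic Azure SQL actually uses.

```python
import re
from enum import IntEnum
from typing import Dict, Iterable


class Label(IntEnum):
    """Sensitivity labels, ordered from least to most sensitive."""
    NON_BUSINESS = 0
    PUBLIC = 1
    GENERAL = 2
    CONFIDENTIAL = 3
    HIGHLY_CONFIDENTIAL = 4


# Hypothetical discovery rules: most sensitive patterns are checked first.
RULES = [
    (re.compile(r"ssn|passport|credit_?card|password", re.I),
     Label.HIGHLY_CONFIDENTIAL),
    (re.compile(r"email|phone|birth|address|salary", re.I),
     Label.CONFIDENTIAL),
]


def recommend(columns: Iterable[str]) -> Dict[str, Label]:
    """Return a recommended label for each column that looks sensitive."""
    recommendations = {}
    for column in columns:
        for pattern, label in RULES:
            if pattern.search(column):
                recommendations[column] = label
                break  # keep the first (most sensitive) match
    return recommendations


for name, label in recommend(["CustomerEmail", "OrderId", "CreditCardNumber"]).items():
    print(f"{name}: {label.name}")
```

As in the portal workflow, the output is only a recommendation: a reviewer would still accept or dismiss each suggested label before it is applied.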

Azure SQL offers the SQL Information Protection Policy as well as the Microsoft Information Protection policy to classify data. You can use either of them to discover and classify your data.

SQL Information Protection Policy: Data discovery and classification has a built-in set of sensitivity labels and information types that come with discovery logic. You can define and customize the classification in one central place for your entire Azure organization. This central place is Microsoft Defender for Cloud, as part of the security policy. Only administrators of the management group can perform this activity. See Figure 5-8.

A screenshot depicts the Azure S Q L D B information protection with save, discard, create a label, manage information types, import or export, and information protection policy with two options.

Azure SQL DB Information Protection

A model diagram depicts the Azure S Q L D B information protection policy labels, which have display names, public, general, confidential, confidential G D P R, highly confidential, and highly confidential G D P R.

Azure SQL DB Information Protection Policy Labels

1. First go to the Azure Portal using the link: https://portal.azure.com/

2. Go to the Azure database and open the Data Discovery and Classification option from the Security section. The Overview tab has a summary of classification from the SQL database.

3. It also has an option to download the report in Excel. Click the Export button in the top pane to do this. See Figure 5-10.

A screenshot depicts the Azure S Q L D B information protection with save, discard, create a label, manage information types, and import or export options.

Azure SQL DB Data Discovery and Classification: Export option

4. In order to classify your data, select the Classification tab from the Data Discovery and Classification page. This classification engine scans the data and identifies any columns that contain personal and sensitive information.

5. Next, apply the classification recommendations as follows. First select the recommendation panel from the bottom pane.

A screenshot depicts the Azure S Q L D B classification recommendations with overview and classification options where classification is selected, and 49 columns with classification recommendations are highlighted.

Figure 5-11. Azure SQL DB classification recommendations

You can accept the recommendations for a specific column or ignore them based on your choices. In order to apply the recommendations, choose the Accept Selected Recommendations option.

On the other hand, Microsoft Information Protection policy labels provide a simple way for end users to classify sensitive data across various Microsoft applications. These sensitivity labels are created and maintained in the Microsoft 365 compliance center.

Let’s now go through the process of securing data access.

Securing Data Access

A model diagram depicts the cloud data security with a cloud data store which includes information protection, access management, network security, and threat protection.

Cloud data security

Centrally monitoring security events in the logs

Encrypting data at rest and in transit

Guaranteeing authenticated and authorized access to data

Handling centralized identity management for securely accessing stored data

Data Protection

Data at rest : You need to protect the data that exists statically or physically inside the cloud storage.

Data in transit : When data is being transferred to components or locations over the network or across the service bus, it must be secured.

Three circular models depict the data in different conditions, such as data at rest, in transit, and data in use.

Data at rest or in transit

Access Control

Securing and administering data stored in the cloud is a combination of identity access management and control.

- Centralized identity management. Centralized identity management enables much easier authentication and authorization to manage enterprise applications.

A block diagram of identity access management and control. It flows from the cloud with the public, hybrid and private, data security and privacy, data integrity, data confidentiality, data availability, and data privacy with hardware and software.

Figure 5-14. Identity and Access Management (IAM) in Azure

Password management

Multifactor authentication (MFA): Multifactor authentication is an electronic authentication method in which a user can access the application or data only after providing two or more pieces of evidence: what the user knows, such as a password; what the user has, such as a token; and what the user is, verified using biometrics.

Role-based access control (RBAC): Role-based access control is a method for controlling what users can do within a company's IT applications. Instead of granting permissions to individual users, RBAC assigns permissions to roles and then assigns roles to end users. See Figure 5-15.

A screenshot represents sales as a customer database, finance as payroll, and engineering is the codebase of role-based access control.

Role-based access control

Conditional access policies

Monitor suspicious activities
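The role-based access control item in the list above can be sketched as a simple lookup. The roles mirror the departments shown in the figure (sales, finance, engineering); the user names, permission strings, and the `is_allowed` function are hypothetical and only illustrate the idea, not the Azure RBAC API.

```python
from typing import Dict, Set

# Permissions are attached to roles, never directly to users.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "sales": {"customer_db:read"},
    "finance": {"payroll:read", "payroll:write"},
    "engineering": {"codebase:read", "codebase:write"},
}

# Users receive access only through the roles assigned to them.
USER_ROLES: Dict[str, Set[str]] = {
    "alice": {"sales"},
    "bob": {"finance", "engineering"},
}


def is_allowed(user: str, permission: str) -> bool:
    """Return True if any of the user's roles grants the permission."""
    roles = USER_ROLES.get(user, set())
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)


print(is_allowed("alice", "customer_db:read"))
print(is_allowed("alice", "payroll:read"))
```

Unknown users and unknown roles resolve to an empty set, so the check denies by default, which is in line with the principle of least privilege discussed earlier in the chapter.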

Another important consideration for protecting data is to select the right key management solution. Azure Key Vault (AKV) safeguards keys, certificates, and secrets that can be used by cloud applications and services. Azure Key Vault is designed to store application keys and secrets. It is not intended to be a store for user passwords.

Grant access to the users, groups, or application for specific purposes.

Azure has many built-in roles, and you can also create custom roles as required. You can assign these roles to users or groups as needed.

Control users who have access to the data or application.

Access to Key Vault is managed through two separate interfaces: the management plane and the data plane. For example, if you want to grant an application data plane access using Key Vault access policies, then no management plane access is needed for the application.

Store authentication certificates in the Key Vault: Azure Resource Manager (ARM) can securely deploy the certificates stored inside the Key Vault to Azure VMs when the VMs are deployed. You can set up the required access policies for the Key Vault to grant access to the certificate.

You can use Azure Disk Encryption to encrypt the disks attached to Windows or Linux VMs. Azure Storage uses Azure Storage encryption to encrypt data at rest; encryption, decryption, and key management are transparent to end users. Azure SQL Database and Azure Synapse Analytics use Transparent Data Encryption (TDE) to perform real-time encryption and decryption of the database, related backups, and transaction log files without requiring any changes to the application. SQL databases also have a feature called Always Encrypted to protect sensitive data at rest and on the server. This prevents database administrators (DBAs), cloud database operators, and other highly privileged but unauthorized users from accessing encrypted data directly from the server.

Network Security for Data Access

In order to protect data in transit, you can use Secure Sockets Layer (SSL)/Transport Layer Security (TLS) certificates while exchanging data across locations. You can isolate the communication channel between the on-premises and cloud infrastructure using a virtual private network (VPN) or ExpressRoute (ER).
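On the client side, protecting data in transit mostly means refusing weak protocol versions and unverified certificates. The following is a minimal sketch using Python's standard `ssl` module; the choice of TLS 1.2 as the minimum version and the `make_client_context` helper name are assumptions made for illustration.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client TLS context that verifies the server and rejects old protocols."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Refuse SSL 3.0, TLS 1.0, and TLS 1.1 connections.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_context()
print(context.verify_mode == ssl.CERT_REQUIRED, context.check_hostname)
```

The resulting context can be passed to standard clients, for example `http.client.HTTPSConnection(host, context=context)`, so that every connection made through it applies the same policy.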

Using network security groups (NSGs), you can reduce the attack surface, because a network security group contains a list of security rules that allow or deny inbound or outbound network traffic based on source address, source port, destination address, destination port, and protocol. VMs in two Azure virtual networks can communicate with each other using VNet peering. Network traffic between the peered virtual networks is private.
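The way a list of security rules turns into an allow or deny decision can be sketched as follows. The sketch assumes rules are evaluated in priority order (lowest number first, as in Azure NSGs), that the first matching rule wins, and that traffic matching no rule is denied. The `Rule` class and `evaluate` function are hypothetical, and the model is simplified to source address, destination port, and protocol.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Iterable, Optional


@dataclass(frozen=True)
class Rule:
    priority: int             # lower number is evaluated first
    action: str               # "allow" or "deny"
    source: str               # source address range in CIDR notation
    dest_port: Optional[int]  # None matches any destination port
    protocol: str             # "tcp", "udp", or "*" for any


def evaluate(rules: Iterable[Rule], src_ip: str, dest_port: int, protocol: str) -> str:
    """Return the action of the first matching rule, or "deny" if none match."""
    for rule in sorted(rules, key=lambda r: r.priority):
        if (ip_address(src_ip) in ip_network(rule.source)
                and rule.dest_port in (None, dest_port)
                and rule.protocol in ("*", protocol)):
            return rule.action
    return "deny"  # deny by default


rules = [
    Rule(100, "allow", "10.0.0.0/24", 443, "tcp"),  # HTTPS from the app subnet
    Rule(200, "deny", "0.0.0.0/0", None, "*"),      # everything else
]
print(evaluate(rules, "10.0.0.5", 443, "tcp"))
print(evaluate(rules, "10.0.0.5", 22, "tcp"))
```

Keeping the explicit deny-all rule at a high priority number, plus the implicit default in `evaluate`, reflects the guidance from the networking layer earlier in the chapter: deny traffic by default and open only what is required.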

Monitoring

Three model diagrams represent the continuous access, security, and defense of the Microsoft defender for the cloud.

Microsoft Defender for Cloud

A flow diagram represents the logs, to log query to dashboards, power shell, and A P I of the log analytics workspace.

Log analytics workspace

A screenshot represents the data management, security, intelligent performance, recommendations 3, security alerts 0, findings, Microsoft subscription, recommendations, and description.

Microsoft Defender for Cloud

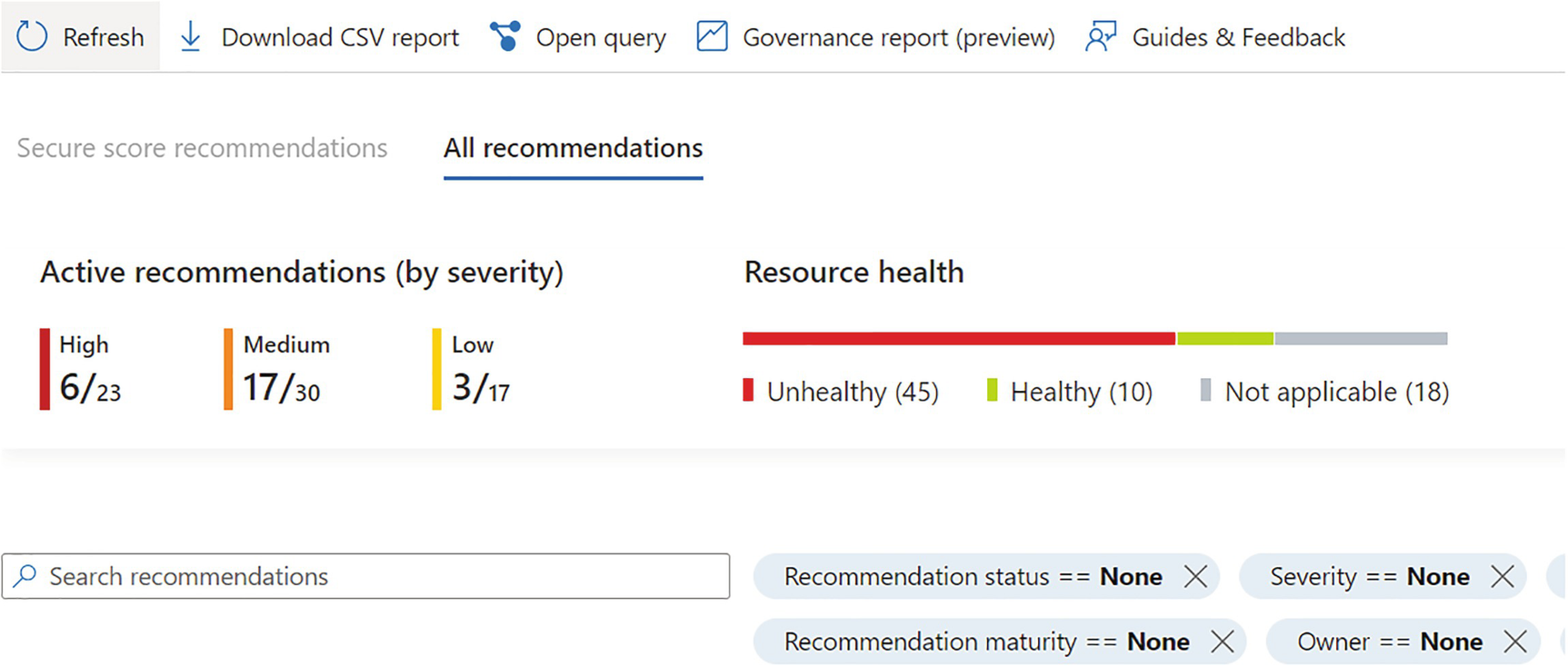

A screenshot depicts the advanced threat protection with all recommendations selected, including active recommendations, resource health, recommendation status, maturity, severity, and owner.

Advanced Threat Protection

Data Encryption Patterns

A model diagram depicts the data encryption in which a device extracts data from five different entities like cloud storage, memory, network, or other devices.

Data encryption

Use identity-based storage access controls

Encrypt the virtual disks for the virtual machines

Use secure hash algorithms (such as SHA-256) to verify data integrity alongside encryption

Protect data in transit by using encrypted network channels like HTTPS or TLS for client-server communication

Use an additional key encryption key (KEK) to protect the data encryption key (DEK)

Microsoft Azure has built-in data encryption features in many layers and also participates in data processing. Microsoft recommends you enable the encryption capability for all Microsoft services to protect and secure your data.

Identity-based access control: You can enable access to the storage service using Azure Active Directory or key-based authentication, such as a storage account access key or a shared access signature (SAS).

Built-in storage encryption : All cloud storage data is by default encrypted. Data can’t be read by the tenant if it hasn’t been written by the tenant. With this feature, you can make sure that data isn’t leaked.

Region-based controls: Data remains in the selected region, where three copies of the data are maintained by default; geo-redundant options additionally replicate it to a paired secondary region. Azure Storage has detailed activity logging available, depending on the configuration.

Firewall features : Azure Firewall provides an additional layer of access control and storage threat protection to detect abnormal activities related to access.
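The idea behind key-based delegated access, such as a shared access signature, is that the holder of the account key signs a statement of what is allowed and until when, and the service verifies that signature on each request. The following is a simplified sketch of that idea using an HMAC; the string-to-sign layout, function names, and sample values are hypothetical and do not reproduce Azure's actual SAS token format.

```python
import base64
import hashlib
import hmac
import time
from typing import Optional


def sign(account_key: bytes, resource: str, permissions: str, expiry: int) -> str:
    """Sign the granted resource, permissions, and expiry time with the account key."""
    message = f"{resource}\n{permissions}\n{expiry}".encode()
    digest = hmac.new(account_key, message, hashlib.sha256).digest()
    return base64.urlsafe_b64encode(digest).decode()


def is_valid(account_key: bytes, resource: str, permissions: str, expiry: int,
             signature: str, now: Optional[float] = None) -> bool:
    """Accept the request only if the token is unexpired and the signature matches."""
    now = time.time() if now is None else now
    if now > expiry:
        return False
    expected = sign(account_key, resource, permissions, expiry)
    return hmac.compare_digest(expected, signature)


key = b"hypothetical-account-key"
expiry = 1_700_000_000  # fixed Unix timestamp used for the example
token = sign(key, "/container/report.csv", "r", expiry)
print(is_valid(key, "/container/report.csv", "r", expiry, token, now=expiry - 60))
print(is_valid(key, "/container/report.csv", "rw", expiry, token, now=expiry - 60))
```

Because the permissions and expiry are part of the signed message, a client cannot widen its access or extend the lifetime without invalidating the signature, and the account key itself never leaves the signer.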

Azure’s Key Management Parameters

A table depicts the key management parameters of Azure which includes Microsoft-managed keys, customer-managed keys, and customer-provided keys.

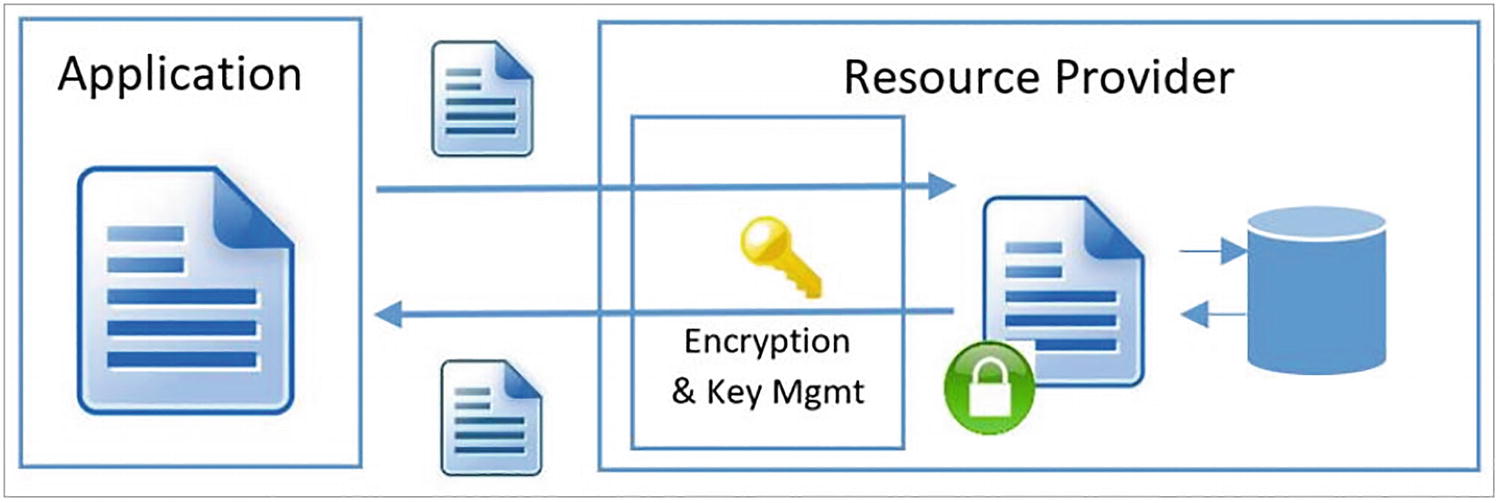

Server-side encryption using service-managed keys: In this method, encryption is performed by the Azure service itself, that is, by the Microsoft Azure resource provider. For example, Azure Storage receives the data in plain text, and encryption and decryption are performed automatically by the service when you write data to or read data from the Azure storage account. Resource providers can use their own encryption key or a custom encryption key, depending on the storage encryption configuration. See Figure 5-21.

A model diagram depicts the client-side encryption, which includes the application, resource provider, and encryption and key management.

Figure 5-21. Server-side encryption

Client encryption model: In this model, encryption is performed outside Azure by the service or client application. With this setup, Azure resource providers receive an encrypted blob of data, but they can’t decrypt the data or access the encryption keys. See Figure 5-22.

A model diagram depicts Client-side encryption. Application and resource providers are depicted in the diagram.

Client-side encryption

Some Azure services store the root key encryption key (KEK) in the Azure Key Vault and store the encrypted data encryption key (DEK) in the internal location where the data resides.

Conclusion

This chapter discussed securing the data stored in the cloud. You also learned about the various ways to classify the data and make it available for downstream users and applications in a secure manner. You also learned about the various data encryption patterns and related models when working with public cloud providers like Azure, Google, AWS, and so on.