Now that we understand the structure of this web page we will investigate three different approaches to scraping its data, firstly with regular expressions, then with the popular BeautifulSoup module, and finally with the powerful lxml module.

If you are unfamiliar with regular expressions or need a reminder, there is a thorough overview available at https://docs.python.org/2/howto/regex.html.

To scrape the area using regular expressions, we will first try matching the contents of the <td> element, as follows:

>>> import re

>>> url = 'http://example.webscraping.com/view/UnitedKingdom-239'

>>> html = download(url)

>>> re.findall('<td class="w2p_fw">(.*?)</td>', html)

['<img src="/places/static/images/flags/gb.png" />',

'244,820 square kilometres',

'62,348,447',

'GB',

'United Kingdom',

'London',

'<a href="/continent/EU">EU</a>',

'.uk',

'GBP',

'Pound',

'44',

'@# #@@|@## #@@|@@# #@@|@@## #@@|@#@ #@@|@@#@ #@@|GIR0AA',

'^(([A-Z]\d{2}[A-Z]{2})|([A-Z]\d{3}[A-Z]{2})|([A-Z]{2}\d{2}[A-Z]{2})|([A-Z]{2}\d{3}[A-Z]{2})|([A-Z]\d[A-Z]\d[A-Z]{2})|([A-Z]{2}\d[A-Z]\d[A-Z]{2})|(GIR0AA))$',

'en-GB,cy-GB,gd',

'<div><a href="/iso/IE">IE </a></div>']

This result shows that the <td class="w2p_fw"> tag is used for multiple country attributes. To isolate the area, we can select the second element, as follows:

>>> re.findall('<td class="w2p_fw">(.*?)</td>', html)[1]

'244,820 square kilometres'

This solution works but could easily fail if the web page is updated. Consider what happens if the table is changed so that the area is no longer in the second row. If we just need to scrape the data now, future changes can be ignored. However, if we want to rescrape this data in future, we want our solution to be as robust against layout changes as possible. To make this regular expression more robust, we can include the parent <tr> element, which has an ID, so it ought to be unique:

>>> re.findall('<tr id="places_area__row"><td class="w2p_fl"><label for="places_area" id="places_area__label">Area: </label></td><td class="w2p_fw">(.*?)</td>', html)

['244,820 square kilometres']

This iteration is better; however, there are many other ways the web page could be updated that would still break the regular expression. For example, double quotation marks might be changed to single, extra space could be added between the <td> tags, or the places_area__label ID could be changed. Here is an improved version that tries to support these various possibilities:

>>> re.findall(r'<tr id="places_area__row">.*?<td\s*class=["\']w2p_fw["\']>(.*?)</td>', html)

['244,820 square kilometres']

This regular expression is more future-proof but is difficult to construct and is becoming unreadable. Also, there are still other minor layout changes that would break it, such as a title attribute being added to the <td> tag.

From this example, it is clear that regular expressions provide a quick way to scrape data but are too brittle and will easily break when a web page is updated. Fortunately, there are better solutions.
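As a rough illustration of this brittleness, the following sketch runs the strict and the more tolerant patterns from above against three hand-written variants of the area row. The SAMPLES strings are made up for this illustration (they are not downloaded from the example site), and the snippet is written as a standalone script for Python 3 rather than as an interpreter session.

```python
import re

# The two patterns from the text: one tied to the exact markup, one more tolerant.
STRICT = ('<tr id="places_area__row"><td class="w2p_fl">'
          '<label for="places_area" id="places_area__label">Area: </label></td>'
          '<td class="w2p_fw">(.*?)</td>')
TOLERANT = r'<tr id="places_area__row">.*?<td\s*class=["\']w2p_fw["\']>(.*?)</td>'

LABEL = '<label for="places_area" id="places_area__label">Area: </label>'

# Hypothetical variants of the same table row (assumptions for this sketch).
SAMPLES = {
    'original': ('<tr id="places_area__row"><td class="w2p_fl">' + LABEL +
                 '</td><td class="w2p_fw">244,820 square kilometres</td></tr>'),
    'single quotes': ("<tr id=\"places_area__row\"><td class='w2p_fl'>" + LABEL +
                      "</td><td class='w2p_fw'>244,820 square kilometres</td></tr>"),
    'title attribute': ('<tr id="places_area__row"><td class="w2p_fl">' + LABEL +
                        '</td><td class="w2p_fw" title="Area">'
                        '244,820 square kilometres</td></tr>'),
}

# For each variant, record what the strict and tolerant patterns find.
results = {}
for name, html in SAMPLES.items():
    results[name] = (re.findall(STRICT, html), re.findall(TOLERANT, html))
    print(name, results[name])
```

Under these assumptions, the strict pattern only matches the original markup, the tolerant pattern also survives the change of quotes, and neither survives the added title attribute.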

Beautiful Soup is a popular module that parses a web page and then provides a convenient interface to navigate content. If you do not already have this module, the latest version can be installed using this command:

pip install beautifulsoup4

The first step with Beautiful Soup is to parse the downloaded HTML into a soup document. Most web pages do not contain perfectly valid HTML and Beautiful Soup needs to decide what is intended. For example, consider this simple web page of a list with missing attribute quotes and closing tags:

<ul class=country>

<li>Area

<li>Population

</ul>

If the Population item is interpreted as a child of the Area item instead of the list, we could get unexpected results when scraping. Let us see how Beautiful Soup handles this:

>>> from bs4 import BeautifulSoup

>>> broken_html = '<ul class=country><li>Area<li>Population</ul>'

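>>> # parse the HTML with the lxml parser (requires lxml to be installed), which gives the repaired output below; the built-in 'html.parser' would instead nest the second <li> inside the first and would not add <html> or <body>

>>> soup = BeautifulSoup(broken_html, 'lxml')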

>>> fixed_html = soup.prettify()

>>> print fixed_html

<html>

<body>

<ul class="country">

<li>Area</li>

<li>Population</li>

</ul>

</body>

</html>

Here, BeautifulSoup was able to correctly interpret the missing attribute quotes and closing tags, as well as add the <html> and <body> tags to form a complete HTML document. Now, we can navigate to the elements we want using the find() and find_all() methods:

>>> ul = soup.find('ul', attrs={'class':'country'})

>>> ul.find('li') # returns just the first match

<li>Area</li>

>>> ul.find_all('li') # returns all matches

[<li>Area</li>, <li>Population</li>]

Note

For a full list of available methods and parameters, the official documentation is available at http://www.crummy.com/software/BeautifulSoup/bs4/doc/.
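To see why a parser has to decide what is intended for the broken list used above, it can help to look at the raw tag events before any tree is built. This sketch uses the standard-library html.parser.HTMLParser from Python 3 (not Beautiful Soup); the TagLogger class is a hypothetical helper written only for this illustration.

```python
from html.parser import HTMLParser


class TagLogger(HTMLParser):
    """Hypothetical helper: records start and end tags in the order seen."""

    def __init__(self):
        super().__init__()
        self.events = []

    def handle_starttag(self, tag, attrs):
        self.events.append(('start', tag, dict(attrs)))

    def handle_endtag(self, tag):
        self.events.append(('end', tag))


logger = TagLogger()
logger.feed('<ul class=country><li>Area<li>Population</ul>')
logger.close()

for event in logger.events:
    print(event)
```

The event stream contains two <li> start tags and no </li> end tag, so it is up to the tree builder to decide where each list item ends.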

Now, using these techniques, here is a full example to extract the area from our example country:

>>> from bs4 import BeautifulSoup

>>> url = 'http://example.webscraping.com/places/view/United-Kingdom-239'

>>> html = download(url)

>>> soup = BeautifulSoup(html, 'html.parser')

>>> # locate the area row

>>> tr = soup.find(attrs={'id':'places_area__row'})

>>> td = tr.find(attrs={'class':'w2p_fw'}) # locate the area tag

>>> area = td.text # extract the text from this tag

>>> print area

244,820 square kilometres

This code is more verbose than regular expressions but easier to construct and understand. Also, we no longer need to worry about minor layout changes, such as extra whitespace or tag attributes.

Lxml is a Python wrapper on top of the libxml2 XML parsing library written in C, which helps make it faster than Beautiful Soup but also harder to install on some computers. The latest installation instructions are available at http://lxml.de/installation.html.

As with Beautiful Soup, the first step is parsing the potentially invalid HTML into a consistent format. Here is an example of parsing the same broken HTML:

>>> import lxml.html

>>> broken_html = '<ul class=country><li>Area<li>Population</ul>'

>>> tree = lxml.html.fromstring(broken_html) # parse the HTML

>>> fixed_html = lxml.html.tostring(tree, pretty_print=True)

>>> print fixed_html

<ul class="country">

<li>Area</li>

<li>Population</li>

</ul>

As with BeautifulSoup, lxml was able to correctly parse the missing attribute quotes and closing tags, although it did not add the <html> and <body> tags.

After parsing the input, lxml has a number of different options to select elements, such as XPath selectors and a find() method similar to Beautiful Soup. We will use CSS selectors here and in future examples, because they are more compact and can be reused later in Chapter 5, Dynamic Content, when parsing dynamic content. Also, some readers will already be familiar with them from their experience with jQuery selectors.

Here is an example using the lxml CSS selectors to extract the area data:

>>> tree = lxml.html.fromstring(html)

>>> td = tree.cssselect('tr#places_area__row > td.w2p_fw')[0]

>>> area = td.text_content()

>>> print area

244,820 square kilometres

The key line is the one that calls cssselect() with the CSS selector. This line finds a table row element with the places_area__row ID, and then selects the child table data tag with the w2p_fw class.

CSS selectors are patterns used for selecting elements. Here are some examples of common selectors you will need:

Select any tag: *

Select by tag <a>: a

Select by class of "link": .link

Select by tag <a> with class "link": a.link

Select by tag <a> with ID "home": a#home

Select by child <span> of tag <a>: a > span

Select by descendant <span> of tag <a>: a span

Select by tag <a> with attribute title of "Home": a[title=Home]

Note

The CSS3 specification was produced by the W3C and is available for viewing at http://www.w3.org/TR/2011/REC-css3-selectors-20110929/.

Lxml implements most of CSS3, and details on unsupported features are available at https://pythonhosted.org/cssselect/#supported-selectors.

Note that, internally, lxml converts the CSS selectors into an equivalent XPath.
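To make the relationship between CSS selectors and XPath more concrete, here is a hand-written XPath that plays the role of the tr#places_area__row > td.w2p_fw selector. This is only a sketch: it uses the standard-library xml.etree.ElementTree (Python 3) on a made-up, well-formed fragment rather than lxml on the real page, and the XPath is an approximation written by hand, not the expression lxml generates internally. In particular, it compares the whole class attribute, whereas a CSS class selector matches one class among several.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed fragment modelled on the country page.
SAMPLE = (
    '<table>'
    '<tr id="places_area__row">'
    '<td class="w2p_fl"><label>Area: </label></td>'
    '<td class="w2p_fw">244,820 square kilometres</td>'
    '</tr>'
    '<tr id="places_population__row">'
    '<td class="w2p_fl"><label>Population: </label></td>'
    '<td class="w2p_fw">62,348,447</td>'
    '</tr>'
    '</table>'
)

root = ET.fromstring(SAMPLE)

# "tr with this id" followed by "/td with this class" mirrors the
# child combinator (>) in the CSS selector.
xpath = ".//tr[@id='places_area__row']/td[@class='w2p_fw']"
matches = root.findall(xpath)
area = matches[0].text
print(area)
```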

To help evaluate the trade-offs of the three scraping approaches described in this chapter, it would help to compare their relative efficiency. Typically, a scraper would extract multiple fields from a web page. So, for a more realistic comparison, we will implement extended versions of each scraper that extract all the available data from a country's web page. To get started, we need to return to Firebug to check the format of the other country features, as shown here:

Firebug shows that each table row has an ID starting with places_ and ending with __row. Then, the country data is contained within these rows in the same format as the earlier area example. Here are implementations that use this information to extract all of the available country data:

FIELDS = ('area', 'population', 'iso', 'country', 'capital', 'continent', 'tld', 'currency_code', 'currency_name', 'phone', 'postal_code_format', 'postal_code_regex', 'languages', 'neighbours')

import re

def re_scraper(html):
    results = {}
    for field in FIELDS:
        results[field] = re.search('<tr id="places_%s__row">.*?<td class="w2p_fw">(.*?)</td>' % field, html).groups()[0]
    return results

from bs4 import BeautifulSoup

def bs_scraper(html):
    soup = BeautifulSoup(html, 'html.parser')
    results = {}
    for field in FIELDS:
        results[field] = soup.find('table').find('tr', id='places_%s__row' % field).find('td', class_='w2p_fw').text
    return results

import lxml.html

def lxml_scraper(html):
    tree = lxml.html.fromstring(html)
    results = {}
    for field in FIELDS:
        results[field] = tree.cssselect('table > tr#places_%s__row > td.w2p_fw' % field)[0].text_content()
    return results

Now that we have complete implementations for each scraper, we will test their relative performance with this snippet:

import time

NUM_ITERATIONS = 1000 # number of times to test each scraper

html = download('http://example.webscraping.com/places/view/United-Kingdom-239')

for name, scraper in [('Regular expressions', re_scraper),
                      ('BeautifulSoup', bs_scraper),
                      ('Lxml', lxml_scraper)]:
    # record start time of scrape
    start = time.time()
    for i in range(NUM_ITERATIONS):
        if scraper == re_scraper:
            # clear the regex cache so the patterns are recompiled each time
            re.purge()
        result = scraper(html)
        # check scraped result is as expected
        assert(result['area'] == '244,820 square kilometres')
    # record end time of scrape and output the total
    end = time.time()
    print '%s: %.2f seconds' % (name, end - start)

This example will run each scraper 1000 times, check whether the scraped results are as expected, and then print the total time taken. The download function used here is the one defined in the preceding chapter. Note the line calling re.purge(); by default, the regular expression module caches compiled patterns, and this cache needs to be cleared to make a fair comparison with the other scraping approaches.
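The effect of the regular expression cache can be observed in isolation with a small sketch such as the following. It is written for Python 3, uses a made-up SAMPLE_HTML fragment instead of the downloaded page, and the time_search helper is a hypothetical name introduced only for this illustration. The timings it prints depend on the machine, so no particular values should be expected.

```python
import re
import time

# Hypothetical fragment modelled on the area row of the country page.
SAMPLE_HTML = ('<table><tr id="places_area__row"><td class="w2p_fl">Area: </td>'
               '<td class="w2p_fw">244,820 square kilometres</td></tr></table>')
PATTERN = '<tr id="places_area__row">.*?<td class="w2p_fw">(.*?)</td>'


def time_search(iterations, purge):
    """Run the same search repeatedly, optionally clearing the re cache first."""
    start = time.perf_counter()
    for _ in range(iterations):
        if purge:
            re.purge()  # forces the pattern to be compiled again
        area = re.search(PATTERN, SAMPLE_HTML).group(1)
    elapsed = time.perf_counter() - start
    return area, elapsed


area_cached, cached_seconds = time_search(1000, purge=False)
area_purged, purged_seconds = time_search(1000, purge=True)
print('cached: %.4f seconds' % cached_seconds)
print('purged: %.4f seconds' % purged_seconds)
```

Both runs extract the same value; only the time spent recompiling the pattern differs.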

Here are the results from running this script on my computer:

$ python performance.py

Regular expressions: 5.50 seconds

BeautifulSoup: 42.84 seconds

Lxml: 7.06 seconds

The results on your computer will quite likely be different because of the different hardware used. However, the relative difference between the approaches should be similar. The results show that Beautiful Soup is over six times slower than the other two approaches when used to scrape our example web page. This result could be anticipated because lxml and the regular expression module were written in C, while BeautifulSoup is pure Python. Interestingly, lxml performed comparably to regular expressions, even though lxml has the additional overhead of having to parse the input into its internal format before searching for elements. When scraping many features from a web page, this initial parsing overhead is spread across more fields and lxml becomes even more competitive. It really is an amazing module!

The following table summarizes the advantages and disadvantages of each approach to scraping:

| Scraping approach | Performance | Ease of use | Ease of installation |
|---|---|---|---|
| Regular expressions | Fast | Hard | Easy (built-in module) |
| Beautiful Soup | Slow | Easy | Easy (pure Python) |
| Lxml | Fast | Easy | Moderately difficult |

If the bottleneck in your scraper is downloading web pages rather than extracting data, using a slower approach, such as Beautiful Soup, would not be a problem. Or, if you just need to scrape a small amount of data and want to avoid additional dependencies, regular expressions might be an appropriate choice. However, in general, lxml is the best choice for scraping, because it is fast and robust, while regular expressions and Beautiful Soup are only useful in certain niches.

Now that we know how to scrape the country data, we can integrate this into the link crawler built in the preceding chapter. To allow reusing the same crawling code to scrape multiple websites, we will add a callback parameter to handle the scraping. A callback is a function that will be called after certain events (in this case, after a web page has been downloaded). This scrape callback will take a url and html as parameters and optionally return a list of further URLs to crawl. Here is the implementation, which is simple in Python:

def link_crawler(..., scrape_callback=None):
    ...
    links = []
    if scrape_callback:
        links.extend(scrape_callback(url, html) or [])
    ...

The new code for the scraping callback is the scrape_callback parameter and the if block that calls it in the preceding snippet. The full source code for this version of the link crawler is available at https://bitbucket.org/wswp/code/src/tip/chapter02/link_crawler.py.
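The callback mechanism can be tried out without any network access using a toy crawler. The following is a minimal sketch for Python 3, not the link crawler from the preceding chapter: PAGES, toy_crawler, and record_views are hypothetical names, and the in-memory PAGES dictionary stands in for real downloads.

```python
import re

# Hypothetical in-memory "site": URL -> HTML. Stands in for real downloads.
PAGES = {
    '/index': '<a href="/view/1">one</a>',
    '/view/1': '<td class="w2p_fw">244,820 square kilometres</td>',
    '/view/2': '<td class="w2p_fw">1 square kilometre</td>',
}


def toy_crawler(seed_url, scrape_callback=None):
    """Visit pages breadth-first, calling scrape_callback on each one."""
    queue = [seed_url]
    seen = set(queue)
    visited = []
    while queue:
        url = queue.pop(0)
        html = PAGES.get(url)
        if html is None:
            continue
        visited.append(url)
        links = re.findall(r'href="(.*?)"', html)
        if scrape_callback:
            # The callback may return None, hence the "or []".
            links.extend(scrape_callback(url, html) or [])
        for link in links:
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return visited


scraped = []


def record_views(url, html):
    """Example callback: remember view pages and suggest one extra URL."""
    if '/view/' in url:
        scraped.append(url)
    if url == '/view/1':
        return ['/view/2']  # extra URL supplied by the callback
    # other pages implicitly return None


visited = toy_crawler('/index', scrape_callback=record_views)
print(visited)
print(scraped)
```

The page /view/2 is not linked from any other page in PAGES, so it is only reached because the callback returned it.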

Now, this crawler can be used to scrape multiple websites by customizing the function passed to scrape_callback. Here is a modified version of the lxml example scraper that can be used for the callback function:

def scrape_callback(url, html):
    if re.search('/view/', url):
        tree = lxml.html.fromstring(html)
        row = [tree.cssselect('table > tr#places_%s__row > td.w2p_fw' % field)[0].text_content() for field in FIELDS]
        print url, row

This callback function would scrape the country data and print it out. Usually, when scraping a website, we want to reuse the data, so we will extend this example to save results to a CSV spreadsheet, as follows:

import csv
import re
import lxml.html

class ScrapeCallback:
    def __init__(self):
        self.writer = csv.writer(open('countries.csv', 'w'))
        self.fields = ('area', 'population', 'iso', 'country', 'capital', 'continent', 'tld', 'currency_code', 'currency_name', 'phone', 'postal_code_format', 'postal_code_regex', 'languages', 'neighbours')
        self.writer.writerow(self.fields)

    def __call__(self, url, html):
        if re.search('/view/', url):
            tree = lxml.html.fromstring(html)
            row = []
            for field in self.fields:
                row.append(tree.cssselect('table > tr#places_{}__row > td.w2p_fw'.format(field))[0].text_content())
            self.writer.writerow(row)

To build this callback, a class was used instead of a function so that the state of the csv writer could be maintained. This csv writer is instantiated in the constructor, and then written to multiple times in the __call__ method. Note that __call__ is a special method that is invoked when an object is "called" as a function, which is how the scrape_callback is used in the link crawler. This means that scrape_callback(url, html) is equivalent to calling scrape_callback.__call__(url, html). For further details on Python's special class methods, refer to https://docs.python.org/2/reference/datamodel.html#special-method-names.
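The same idea can be exercised without lxml or a file on disk. The sketch below is written for Python 3 and makes several assumptions for the sake of a self-contained example: InMemoryCallback is a hypothetical class, it extracts fields with the regular expression from earlier in the chapter instead of lxml, it writes to an io.StringIO buffer instead of countries.csv, and the html string is a made-up fragment.

```python
import csv
import io
import re


class InMemoryCallback:
    """Hypothetical callable object that keeps a csv writer between calls."""

    def __init__(self, fields, output):
        self.fields = fields
        self.writer = csv.writer(output)
        self.writer.writerow(self.fields)  # header row, written once

    def __call__(self, url, html):
        if not re.search('/view/', url):
            return  # only country pages are scraped
        row = []
        for field in self.fields:
            pattern = ('<tr id="places_%s__row">.*?'
                       '<td class="w2p_fw">(.*?)</td>' % field)
            match = re.search(pattern, html)
            row.append(match.group(1) if match else '')
        self.writer.writerow(row)


# Made-up fragment modelled on the country page.
html = ('<table><tr id="places_area__row"><td class="w2p_fl">Area: </td>'
        '<td class="w2p_fw">244,820 square kilometres</td></tr>'
        '<tr id="places_population__row"><td class="w2p_fl">Population: </td>'
        '<td class="w2p_fw">62,348,447</td></tr></table>')

buffer = io.StringIO()
callback = InMemoryCallback(('area', 'population'), buffer)

callback('/view/United-Kingdom-239', html)           # called like a function
callback.__call__('/view/United-Kingdom-239', html)  # the equivalent explicit form
callback('/index/1', html)                           # ignored: not a view page

lines = buffer.getvalue().splitlines()
print(lines)
```

Both calling styles add an identical row, and the header is written only once because the writer lives on the object rather than being recreated on each call.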

Here is how to pass this callback to the link crawler:

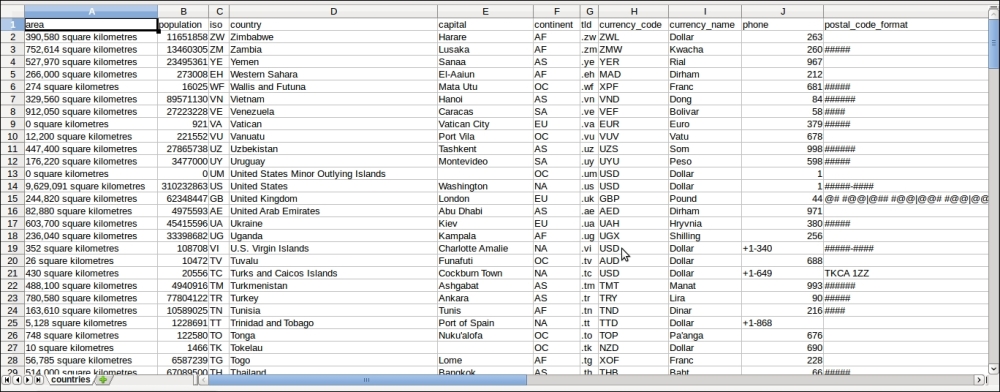

link_crawler('http://example.webscraping.com/', '/(index|view)', max_depth=-1, scrape_callback=ScrapeCallback())

Now, when the crawler is run with this callback, it will save results to a CSV file that can be viewed in an application such as Excel or LibreOffice:

Success! We have completed our first working scraper.