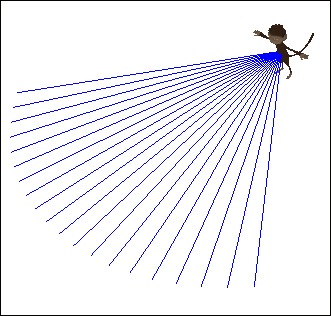

No matter how clever our AI is, it needs some senses to become aware of its surroundings. In this recipe, we'll build an AI that can look in a configurable arc in front of it. It builds upon the AI control from the previous recipe, but the implementation should work well for many other patterns as well. The screenshot shows Jaime with a visible representation of his line of sight.

To get our AI to sense something, we need to modify the AIControl class from the previous recipe by performing the following steps:

- We need to define some values: a float called `sightRange`, for how far the AI can see, and an angle representing the field of view (to one side) in radians.

- With this done, we create a `sense()` method. Inside we define a Quaternion called `aimDirection` that will be the ray direction relative to the AI's `viewDirection` field.

- We convert the angle to a Quaternion and multiply it with `viewDirection` to get the direction of the ray, as shown in the following code:

  ```java
  rayDirection.set(viewDirection);
  aimDirection.fromAngleAxis(angleX, Vector3f.UNIT_Y);
  aimDirection.multLocal(rayDirection);
  ```

- We check whether the ray collides with any of the objects in our `targetableObjects` list using the following code:

  ```java
  CollisionResults col = new CollisionResults();
  for(Spatial s: targetableObjects){
    s.collideWith(ray, col);
  }
  ```

- If this happens, we set the target to be this object and exit the sensing loop, as shown in the following code. Otherwise, it should continue searching for it:

  ```java
  if(col.size() > 0){
    target = col.getClosestCollision().getGeometry();
    foundTarget = true;
    break;
  }
  ```

- If the `sense` method returns true, the AI now has a target, and should switch to the `Follow` state. We add a check for this in the `controlUpdate` method and the `Idle` case, as shown in the following code:

  ```java
  case Idle:
    if(!targetableObjects.isEmpty() && sense()){
      state = State.Follow;
    }
    break;
  ```

The AI begins in an idle state. As long as it has some items in the `targetableObjects` list, it will run the `sense` method on each update. If it sees anything, it will switch to the `Follow` state and stay there until it loses track of the target.
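The state behavior described here can be sketched in plain Java without any engine classes. This is only an illustration: `IdleFollowSketch`, the `update(boolean)` signature, and the string list are hypothetical stand-ins, and the transition back to `Idle` when the target is lost is an assumption, since the recipe leaves that part to the previous one.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-in for the AI control; not the recipe's AIControl class.
class IdleFollowSketch {
    enum State { Idle, Follow }

    State state = State.Idle;
    // Strings replace the Spatial objects held by the real list.
    final List<String> targetableObjects = new ArrayList<>();

    // senseResult plays the role of the value returned by sense().
    void update(boolean senseResult) {
        switch (state) {
            case Idle:
                // Only sense when there is something that could be seen.
                if (!targetableObjects.isEmpty() && senseResult) {
                    state = State.Follow;
                }
                break;
            case Follow:
                // Assumption: losing sight of the target drops back to Idle.
                if (!senseResult) {
                    state = State.Idle;
                }
                break;
        }
    }
}
```

Passing the sensing result in as a parameter keeps the transitions easy to test separately from the ray casting.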

The `sense` method consists of a `for` loop that sends rays in an arc representing a field of view. Each ray is limited by `sightRange` and the loop will exit if a ray has collided with anything from the `targetableObjects` list.
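To make the sweep concrete, here is a minimal engine-free sketch of the same idea on the XZ plane. It is not the recipe's implementation: `SenseSweepSketch`, `Target`, and `hitDistance` are hypothetical names, targets are simplified to circles, and the rotation about the Y axis is written out by hand instead of using a Quaternion.

```java
import java.util.List;

class SenseSweepSketch {
    /** Hypothetical target: a circle on the XZ plane standing in for a Spatial. */
    static final class Target {
        final String name;
        final float x, z, radius;
        Target(String name, float x, float z, float radius) {
            this.name = name; this.x = x; this.z = z; this.radius = radius;
        }
    }

    // Stands in for FastMath.QUARTER_PI * 0.1f, the step used in the recipe's loop.
    static final float STEP = (float) (Math.PI / 4) * 0.1f;

    /** Distance along a unit-length ray to the circle, or -1 on a miss. */
    static float hitDistance(float ox, float oz, float dx, float dz, Target t) {
        float mx = t.x - ox, mz = t.z - oz;
        float along = mx * dx + mz * dz;                  // centre projected onto the ray
        float perpSq = mx * mx + mz * mz - along * along; // squared distance from ray to centre
        float rSq = t.radius * t.radius;
        if (along < 0f || perpSq > rSq) {
            return -1f; // target centre is behind the origin, or the ray passes outside the circle
        }
        return Math.max(0f, along - (float) Math.sqrt(rSq - perpSq));
    }

    /** Sweeps rays from -angle to +angle around a unit-length view direction. */
    static Target sense(float ox, float oz, float viewX, float viewZ,
                        float angle, float sightRange, List<Target> targets) {
        for (float a = -angle; a < angle; a += STEP) {
            float cos = (float) Math.cos(a), sin = (float) Math.sin(a);
            // Rotate the view direction about the Y axis by a.
            float dx = viewX * cos + viewZ * sin;
            float dz = -viewX * sin + viewZ * cos;
            Target closest = null;
            float best = sightRange; // hits beyond sightRange are ignored
            for (Target t : targets) {
                float d = hitDistance(ox, oz, dx, dz, t);
                if (d >= 0f && d <= best) {
                    best = d;
                    closest = t;
                }
            }
            if (closest != null) {
                return closest; // first ray that hits anything ends the sweep
            }
        }
        return null;
    }
}
```

As in the recipe, the sweep stops at the first ray that hits something and reports the closest hit on that ray, so a target seen by an early ray wins over a nearer one that only a later ray would reach.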

Currently, it's very difficult to visualize the results. Exactly what does the AI see? One way of finding out is to create a `Line` for each ray we cast and to remove these lines before each new cast. The following steps will give us a way of seeing the extent of an AI's vision:

- First of all, we need to define an array for the lines; it should have the same capacity as the number of rays we're going to cast. Inside the `for` loop, add the following code at the start and end:

  ```java
  for(float x = -angle; x < angle; x+= FastMath.QUARTER_PI * 0.1f){
    // Remove the line left over from the previous cast
    if(debug && sightLines[i] != null){
      ((Node)getSpatial().getParent()).detachChild(sightLines[i]);
    }
    // ...Our sight code here...
    // Attach a fresh line for the ray we just cast
    if(debug){
      Geometry line = makeDebugLine(ray);
      sightLines[i++] = line;
      ((Node)getSpatial().getParent()).attachChild(line);
    }
  }
  ```

- The `makeDebugLine` method that we mentioned previously will look like the following code:

  ```java
  private Geometry makeDebugLine(Ray r){
    // The line runs from the ray origin to the limit of the AI's sight
    Line l = new Line(r.getOrigin(), r.getOrigin().add(r.getDirection().mult(sightRange)));
    Geometry line = new Geometry("", l);
    line.setMaterial(TestAiControl.lineMat);
    return line;
  }
  ```

This simply takes each ray and makes something that can be seen by human eyes.
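The capacity of the line array has to match the number of loop iterations, which depends on the angle and the step. One cautious way to get that number, sketched below, is to run the same loop once and count. `SightLineCapacitySketch` and `countRays` are hypothetical names, and `STEP` only stands in for `FastMath.QUARTER_PI * 0.1f`.

```java
class SightLineCapacitySketch {
    // Stands in for FastMath.QUARTER_PI * 0.1f, the step used in the sensing loop.
    static final float STEP = (float) (Math.PI / 4) * 0.1f;

    /** Counts the iterations of the sensing loop for a given half-angle in radians. */
    static int countRays(float angle) {
        int rays = 0;
        // Same bounds and increment as the sensing loop, so float rounding matches.
        for (float x = -angle; x < angle; x += STEP) {
            rays++;
        }
        return rays;
    }
}
```

Counting with the loop itself avoids an off-by-one from dividing `2 * angle` by the step, because accumulated float rounding can add an extra iteration. The array could then be allocated with `countRays(angle)` elements. The recipe does not show where the index `i` is reset, so presumably it is set back to 0 at the start of each `sense` call.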