Building Predictive Maintenance Models

Leading manufacturers are now investing in predictive maintenance, which has the potential to reduce costs while increasing margins and customer satisfaction. Although traditional statistical and manufacturing techniques have helped, the industry is still plagued by serious quality issues and the high cost of business disruption when components fail. Advances in machine learning offer a unique opportunity to improve customer satisfaction and reduce service downtime. This chapter shows, through examples, how you can use Microsoft Azure Machine Learning to build, validate, and deploy a predictive maintenance model.

Overview

According to Ahmet Duyar, an expert in fault detection and former Visiting Researcher at NASA, a key cause of reduced productivity in the manufacturing industry is low asset effectiveness that results from equipment breakdowns and unnecessary maintenance interventions. In the US alone, the cost of excess maintenance and lost productivity is estimated at $740B, so we clearly need better approaches to maintenance (Duyar, 2011).

Predictive maintenance techniques are designed to predict when maintenance should be performed on a piece of equipment even before it breaks down. By accurately predicting the failure of a component you can reduce unplanned downtime and extend the lifetime of your equipment. Predictive maintenance also offers cost savings since it increases the efficiency of repairs: an engineer can target repair work to the predicted failure and complete the work faster as a result. They don’t need to spend too much time trying to find the cause of the equipment failure. With predictive maintenance, plant operators can be more proactive and fix issues even before their equipment breaks down.

It is worth clarifying the difference between predictive and preventive maintenance since the two are often confused. Preventive maintenance refers to scheduled maintenance that is typically planned in advance. While useful and in many cases necessary, preventive maintenance can be expensive and ineffective at catching issues that develop in between scheduled appointments. In contrast, predictive maintenance aims to predict failures before they happen.

Let’s use car servicing as an example to illustrate the difference. When you buy a car, the dealer typically recommends regular services based on time or mileage. For instance, some car manufacturers recommend a full service after 6,000 and 10,000 miles. This is a good example of preventive maintenance. As you approach 6,000 miles, your dealer will send a reminder for you to schedule your full service. In contrast, through predictive maintenance, many car manufacturers would prefer to monitor the performance of your car on an ongoing basis through data relayed by sensors from your car to a database system. With this data, they can detect when your transmission or timing belt is beginning to show signs of impending failure and will call you in for maintenance, regardless of your car’s mileage.

Predictive maintenance is a form of non-destructive monitoring that occurs during the normal operation of the equipment. Sensors installed on the equipment collect valuable data that can be used to predict and prevent failures.

Current techniques for predictive maintenance include vibration analysis, acoustical analysis, infrared monitoring, oil analysis, and model-based condition monitoring. Vibration analysis uses sensors such as accelerometers installed on a motor to determine when it is operating abnormally. According to Ahmet Duyar, vibration analysis is the most widely used approach to condition monitoring, accounting for up to 85% of all systems sold. Acoustical analysis uses sonic or ultrasound analysis to detect abnormal friction and stress in rotating machines. While sonic techniques can detect problems in mechanical machines, ultrasound is more flexible and can detect issues in both mechanical and electrical machines. Infrared analysis has the widest range of applications, spanning low- to high-speed equipment as well as mechanical and electrical devices.

Model-based condition monitoring uses mathematical models to predict failures. First developed by NASA, this technique has a learning phase in which it learns the characteristics of normal operating conditions. On completion of the learning phase, the system enters the production phase where it monitors the equipment’s condition. It compares the performance of the equipment to the data collected in the learning phase, and will flag an issue if it detects a serious deviation from the normal operation of the machine. This is a form of anomaly detection where the monitoring system flags an issue when the machine deviates significantly from normal operating conditions.
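The learning and production phases described above can be sketched in a few lines of Python. This is only an illustration, not the technique NASA developed: it assumes a single sensor, summarizes normal operation with a mean and standard deviation, and flags readings more than three standard deviations away. The function names, the threshold, and the sample readings are all hypothetical.

```python
from statistics import mean, stdev


def learn_baseline(normal_readings):
    """Learning phase: summarize readings taken under normal operation."""
    return mean(normal_readings), stdev(normal_readings)


def flag_anomalies(readings, baseline, threshold=3.0):
    """Production phase: return the indices of readings that deviate strongly."""
    center, spread = baseline
    if spread == 0:
        # A perfectly flat baseline: any different value counts as a deviation.
        return [i for i, x in enumerate(readings) if x != center]
    return [i for i, x in enumerate(readings)
            if abs(x - center) / spread > threshold]


# Hypothetical vibration amplitudes (arbitrary units) from a healthy motor.
baseline = learn_baseline([1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 1.1, 0.9, 1.0])

# New readings observed in production; only the third one is far from normal.
alerts = flag_anomalies([1.0, 1.05, 2.5, 0.98], baseline)
```

A real monitoring system would typically model many sensors jointly and account for operating conditions such as load and speed; the single-sensor rule here only conveys the idea of comparing production data to a learned baseline.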

Note Refer to the following resources for more details on predictive maintenance:

http://en.wikipedia.org/wiki/Predictive_maintenance and

Ahmet Duyar, “Simplifying predictive maintenance”, Orbit Vol. 31 No.1, pp. 38-45, 2011.

The Business Problem

Imagine that you are a data scientist at a semiconductor manufacturer. Your employer wants you to do the following:

- Build a model that predicts yield failure on their manufacturing process, and

- Through your analysis provide the factors that lead to yield failures in their process.

This is a very important business problem for semiconductor manufacturers since their process can be complex and involves several stages from raw sand to the final integrated circuits. Given the complexity, there are several factors that can lead to yield failures downstream in the manufacturing process. Identifying the most important factors will help process engineers to improve the yield and reduce error rates and cost of production, leading to increased productivity.

Note You will need to have an account on Azure Machine Learning. Please refer to Chapter 2 for instructions to set up your new account if you do not have one yet.

In Chapter 1, you saw that the data science process typically follows these five steps.

- Define the business problem

- Data acquisition and preparation

- Model development

- Model deployment

- Monitoring model performance

Having defined the business problem, you will explore data acquisition and preparation, model development, evaluation, and deployment in the remainder of this chapter.

Data Acquisition and Preparation

Let’s explore how to load data from source systems and analyze the data in Azure Machine Learning.

The Dataset

For this exercise, you will use the SECOM dataset from the University of California at Irvine’s machine learning database. This dataset from the semiconductor manufacturing industry was provided by Michael McCann and Adrian Johnston.

Note The SECOM dataset is available at the University of California at Irvine’s Machine Learning Repository. The dataset contains 1,567 examples, each with 591 features. Of the 1,567 examples, 104 represent yield failures.

The features or columns represent sensor readings from 590 points in the manufacturing process. The dataset also includes a timestamp and the yield result (i.e., a simple pass or fail) for each example.

Refer to http://archive.ics.uci.edu/ml/machine-learning-databases/secom/.

Azure Machine Learning enables you to load data from several sources including your local machine, the Web, SQL Database on Azure, Hive tables, or Azure Blob storage.

Loading Data from Your Local File System

The easiest way to load data is from your local machine. You can do this through the following steps.

- Point your browser to https://studio.azureml.net and log into your workspace in Azure Machine Learning.

- Next, click the Experiments item from the menu on the left pane.

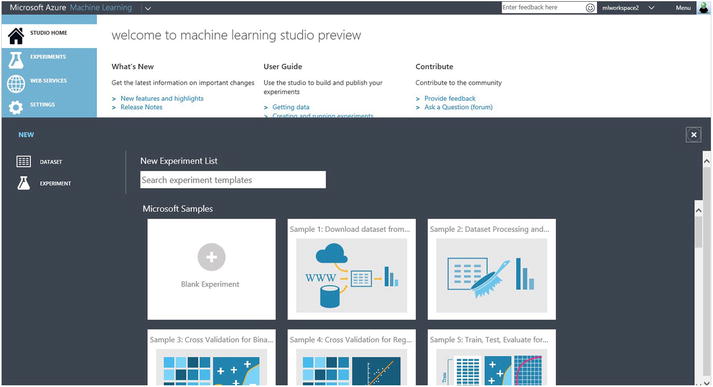

- Click the +New button at the bottom left side of the window, and then select Dataset from the new menu shown in Figure 8-1.

Figure 8-1. Loading a new dataset from your local machine

- When you choose the option From Local File you will be prompted to select the data file to load from your machine.

Loading Data from Other Sources

You can load data from non-local sources using the Reader module in Azure Machine Learning. Specifically, with the Reader module you can load data from the Web, SQL Database on Azure, Azure Table, Azure Blob storage, or Hive tables. To do this you will have to create a new experiment before calling the Reader module. Use the following steps to load data from any of the above sources with the Reader module.

- Point your browser to https://studio.azureml.net and log into your workspace in Azure Machine Learning.

- Next, click the Experiments item from the menu on the left pane.

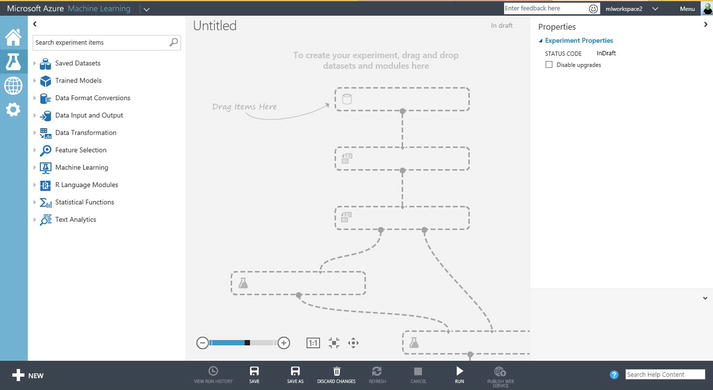

- Click the +New button at the bottom left side of the window, and then select Experiment from the new menu. A new experiment is launched with a blank canvas, as shown in Figure 8-2.

Figure 8-2. Starting a new experiment in Azure Machine Learning

- Open the menu item Data Input and Output and you will see two modules, Reader and Writer.

- Drag the Reader module from the menu to any position on the canvas.

- Click the Reader module in the canvas and its properties will be shown on the right pane under Reader. Now specify the data source in the drop-down menu and then enter the values of all required parameters. The Account Name and Account Key refer to a storage account on Microsoft Azure. You can obtain these parameters from the Azure portal as follows:

- Log in to Microsoft Azure at http://azure.microsoft.com.

- On the top menu, click Portal. This takes you to the Azure portal.

- Select storage from the menu on the left pane. This lists all your storage accounts in the right pane.

- Click the storage account that contains the data you need for your machine learning model.

- At the bottom of the screen, click the key icon labeled Manage Access Keys. Your storage account name and access keys will be displayed. Use the Primary Access Key as your Account Key in the Reader module in Azure Machine Learning.

Note You can load several datasets from multiple sources into Azure Machine Learning using the above instructions. Note, however, that each Reader module only loads a single dataset at a time.

For this project, import the two SECOM datasets from the UCI Machine Learning database and join them into a new file stored locally. The first dataset only has the sensor readings, while the second dataset has the labels (i.e. the yield data). Then load the joined dataset into Azure Machine Learning using the first approach above.
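The join described above can be done with any tool; the following Python sketch shows one way. It rests on several assumptions that are not stated in this chapter and should be checked against the downloaded files: that the sensor file is whitespace-separated with missing readings written as NaN, that each row of the label file holds a label followed by a quoted timestamp, and that both files list examples in the same order. The sensor_N column names are hypothetical; Yield_Pass_Fail matches the target column name used later in this chapter.

```python
import csv


def join_secom(sensor_lines, label_lines):
    """Join sensor readings and labels row by row; both inputs share row order."""
    # Sensor rows are whitespace-separated; missing readings stay as the text "NaN".
    sensor_rows = [line.split() for line in sensor_lines if line.strip()]
    # Label rows hold a label and a quoted timestamp separated by a space.
    label_rows = [row for row in csv.reader(label_lines, delimiter=" ") if row]
    if len(sensor_rows) != len(label_rows):
        raise ValueError("sensor and label inputs have different row counts")

    n_sensors = len(sensor_rows[0])
    header = ["Time", "Yield_Pass_Fail"] + [f"sensor_{i + 1}" for i in range(n_sensors)]
    joined = [header]
    for readings, label_row in zip(sensor_rows, label_rows):
        label, timestamp = label_row[0], label_row[1]
        joined.append([timestamp, label] + readings)
    return joined


# Two made-up rows in the assumed layout, used only to show the shape of the result.
sample = join_secom(
    ["3030.93 2564.00 NaN", "3095.78 2465.14 100.0"],
    ['-1 "19/07/2008 11:55:00"', '1 "19/07/2008 12:32:00"'],
)
```

To produce the local file, you could pass two open file objects to join_secom and write the result with csv.writer(...).writerows(...). The resulting CSV can then be uploaded with the From Local File option.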

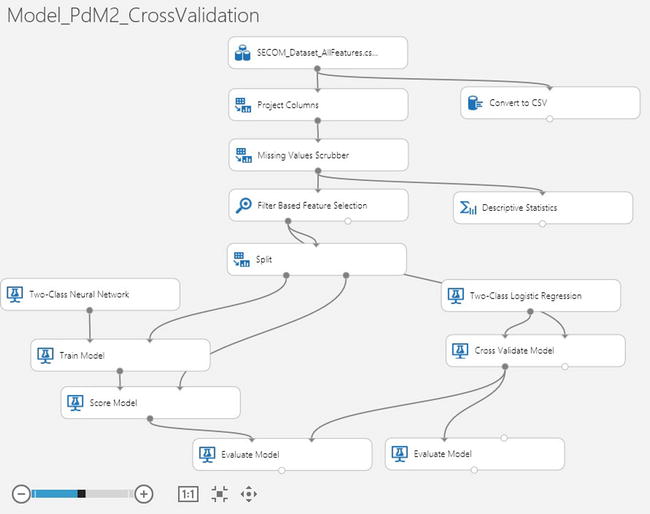

Figure 8-3 shows the first part of the experiment that covers data preparation. The data is loaded and preprocessed for missing values using the Missing Values Scrubber module. Following this, summary statistics are obtained and the Filter Based Feature Selection module is used to determine the most important variables for prediction.

Figure 8-3. Preprocessing the SECOM dataset

Figure 8-4 shows a snapshot of the SECOM data as seen in Azure Machine Learning. As you can see, there are 1,567 rows and 592 columns in the dataset. In addition, Azure Machine Learning also shows the number of unique values per feature and the number of missing values. You can see that many of the sensors have missing values. Further, some of the sensors have a constant reading for all rows. For example, sensor #6 has a reading of 100 for all rows. Such features will have to be eliminated as they add no value to the prediction since there is no variation in their readings.

Figure 8-4. Visualizing the SECOM dataset in Azure Machine Learning
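The two checks mentioned above, counting missing values and spotting constant sensors, are easy to reproduce outside the tool. The sketch below is a stand-alone illustration in plain Python and is not how Azure Machine Learning computes its summary; the function names and the tiny sample table are hypothetical.

```python
import math


def is_missing(value):
    """Treat None and NaN as missing."""
    return value is None or (isinstance(value, float) and math.isnan(value))


def profile_columns(rows):
    """Return (missing_counts, constant_columns) for a list of equal-length rows."""
    n_cols = len(rows[0])
    missing = [0] * n_cols
    distinct = [set() for _ in range(n_cols)]
    for row in rows:
        for j, value in enumerate(row):
            if is_missing(value):
                missing[j] += 1
            else:
                distinct[j].add(value)
    # A column with at most one distinct non-missing value has no variation.
    constant = [j for j in range(n_cols) if len(distinct[j]) <= 1]
    return missing, constant


def drop_columns(rows, to_drop):
    """Return a copy of the rows without the listed column indices."""
    drop = set(to_drop)
    return [[v for j, v in enumerate(row) if j not in drop] for row in rows]


# Hypothetical readings: the middle column is constant, the last has a gap.
rows = [
    [0.5, 100.0, float("nan")],
    [0.7, 100.0, 2.0],
    [0.6, 100.0, 3.0],
]
missing, constant = profile_columns(rows)
cleaned = drop_columns(rows, constant)
```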

Feature Selection

Feature selection is critical in this case since it provides the answer to the second part of your business problem. Indeed, with over 590 features, you must perform feature selection to identify the subset of features that are useful for building a good model and to eliminate irrelevant and redundant features. With so many features, you risk the curse of dimensionality. If there are a large number of features, the learning problem can be difficult because the many “extra” features can confuse the learning algorithm and cause it to have high variance. It’s also important to note that machine learning algorithms are very computationally intensive, and reducing the number of features can greatly reduce the time required to train the model, enabling the data scientist to perform experiments in less time. Through careful feature selection, you can find the most influential variables for the prediction. Let’s see how to do feature selection in Azure Machine Learning.

To perform feature selection in Azure Machine Learning, drag the module named Filter Based Feature Selection from the list of modules in the left pane. You can find this module by either searching for it in the search box or by opening the Feature Selection category. To use this module, you need to connect it to a dataset as the input. Figure 8-5 shows how to use it to perform feature selection on the SECOM dataset. Before running the experiment, use the Launch column selector in the right pane to define the target variable for prediction. In this case, choose the column Yield_Pass_Fail as the target since this is what you wish to predict. When you are done, set the number of desired features to 100. This instructs the feature selector in Azure Machine Learning to find the top 100 variables.

Figure 8-5. Feature selection in Azure Machine Learning

You also need to choose the scoring method that will be used for feature selection. Azure Machine Learning offers the following options for scoring:

- Pearson correlation

- Mutual information

- Kendall correlation

- Spearman correlation

- Chi Squared

- Fisher score

- Count-based

The correlation methods find the set of variables that are highly correlated with the output, but have low correlation among themselves. The correlation is calculated using Pearson, Kendall, or Spearman correlation coefficients, depending on the option you choose.

The Fisher score uses the Fisher criterion from statistics to rank variables. In contrast, the mutual information option is an information-theoretic approach that uses mutual information to rank variables. Mutual information measures the statistical dependence between each variable and the outcome variable.

Finally, the Chi Squared option selects the best features using a test for independence; in other words, it tests whether each variable is independent of the outcome variable. It then ranks the variables using the Chi Squared test.
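To make the idea of filter-based scoring concrete, here is a minimal Python sketch that ranks features by the absolute Pearson correlation with the target and keeps the top k. It is a simplification and not the implementation behind the Filter Based Feature Selection module: it scores each feature independently and does not penalize correlation among the selected features. The column names and values are made up.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0  # a constant column carries no signal
    return cov / sqrt(vx * vy)


def top_k_features(columns, target, k):
    """Rank named columns by |Pearson r| with the target and keep the top k."""
    scores = {name: abs(pearson(values, target)) for name, values in columns.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]


# Hypothetical sensor columns and a binary target (1 = yield failure).
columns = {
    "sensor_a": [1.0, 2.0, 3.0, 4.0],  # rises with the target
    "sensor_b": [5.0, 5.0, 5.0, 5.0],  # constant, so it scores zero
    "sensor_c": [1.0, 2.0, 1.0, 3.0],  # weakly related to the target
}
target = [0, 0, 1, 1]
selected = top_k_features(columns, target, k=2)
```

Swapping pearson for another scoring function, such as a rank correlation or a mutual information estimate, changes the ranking criterion without changing the overall filter structure.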

Note See http://en.wikipedia.org/wiki/Feature_selection#Correlation_feature_selection or http://jmlr.org/papers/volume3/guyon03a/guyon03a.pdf for more information on feature selection strategies.

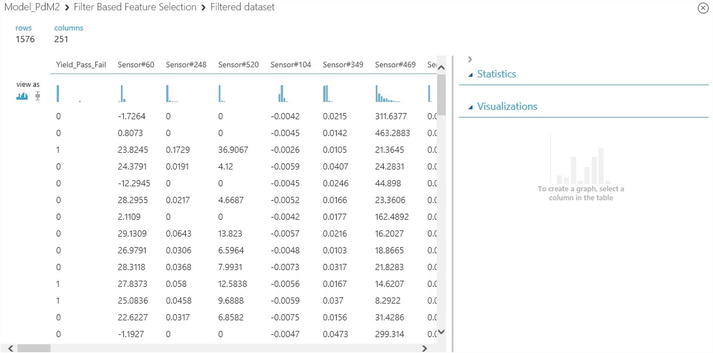

When you run the experiment, the Filter Based Feature Selection module produces two outputs. First, the filtered dataset lists the actual data for the most important variables. Second, the module shows a list of the variables by importance with the scores for each selected variable. Figure 8-6 shows the results of feature selection. In this case, you set the number of features to 100 and use mutual information for scoring and ranking the variables. Figure 8-6 shows 101 columns since the result set includes the target variable (i.e., Yield_Pass_Fail) plus the top 100 variables, including sensor #60, sensor #248, sensor #520, sensor #104, etc. The last row of the results shows the score for each selected variable. Since the variables are ranked, the scores decrease from left to right.

Figure 8-6. The results of feature selection for the SECOM dataset showing the top variables

Note that the selected variables will vary based on the scoring method, so it is worth experimenting with different scoring methods before choosing the final set of variables. The Chi Squared and mutual information scoring methods produce a similar ranking of variables for the SECOM dataset.

Training the Model

Having completed the data preprocessing, the next step is to train the model to predict the yield. Since the response variable is binary, you can treat this as a binary classification problem. You can use any of the two-class classification algorithms in Azure Machine Learning such as two-class logistic regression, two-class boosted decision trees, two-class decision forest, two-class neural networks, etc.

Note All predictive maintenance problems are not created equal. Some problems will require different techniques besides classification. For instance, if the goal is to determine when a part will fail, you will need to use survival analysis. Alternatively, if the goal is to predict energy consumption, you may use a forecasting technique or a regression method that predicts continuous outcomes. Hence you need to understand the business problem in order to find the most appropriate technique to use. One size does not fit all!

Figure 8-7 shows the full experiment to predict the yield from SECOM data. The top half of the experiment, up to the Split module, implements the data preprocessing phase. The Split module splits the data into two samples, a training sample comprising 70% of the initial dataset, and a test sample with the remaining 30%.

Figure 8-7. An experiment for predicting the yield from SECOM data
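The effect of the Split module can be imitated with a simple random split, sketched below in Python. The function name and the seed are hypothetical, and the sketch makes no claim about how the Split module itself shuffles rows.

```python
import random


def split_rows(rows, train_fraction=0.7, seed=1):
    """Shuffle the rows reproducibly and cut them into training and test samples."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    cut = int(round(len(shuffled) * train_fraction))
    return shuffled[:cut], shuffled[cut:]


# Row indices stand in for the 1,567 SECOM examples.
train_rows, test_rows = split_rows(range(1567))
```

With only 104 failures among 1,567 examples, a purely random split can leave very few failures in the test sample, so a stratified split that preserves the failure rate in both samples may be worth considering.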

In this experiment, you compare two classification algorithms to find the best predictor of yield. The left branch after the Split module uses the two-class boosted decision tree, while the right branch uses the two-class logistic regression algorithm that is widely used in statistics. Each of these algorithms is trained with the same training sample and tested with the same test sample. You use the module named Train Model to train each algorithm, and the Score Model module for testing. The module named Evaluate Model is used to evaluate the accuracy of these two classification models.
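For readers who want to see what training and scoring a two-class model involves, the following Python sketch fits a plain logistic regression by batch gradient descent and then scores new rows. It is a teaching aid under simplifying assumptions (no regularization, a fixed learning rate, one made-up feature) and is not the algorithm used by the Two-Class Logistic Regression module.

```python
import math


def sigmoid(z):
    """Logistic function, written to avoid overflow for large negative z."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_proba(w, row):
    """Score one row: probability of the positive class (w[0] is the bias)."""
    return sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], row)))


def train_logistic(X, y, learning_rate=0.1, epochs=2000):
    """Fit weights (bias first) by batch gradient descent on the log loss."""
    n, d = len(X), len(X[0])
    w = [0.0] * (d + 1)
    for _ in range(epochs):
        grad = [0.0] * (d + 1)
        for row, label in zip(X, y):
            error = predict_proba(w, row) - label
            grad[0] += error
            for j, value in enumerate(row):
                grad[j + 1] += error * value
        w = [wj - learning_rate * g / n for wj, g in zip(w, grad)]
    return w


# Hypothetical one-feature training sample: larger readings tend to fail (label 1).
X = [[0.1], [0.4], [0.5], [1.6], [1.9], [2.2]]
y = [0, 0, 0, 1, 1, 1]
w = train_logistic(X, y)

low_risk = predict_proba(w, [0.2])
high_risk = predict_proba(w, [2.0])
```

Here train_logistic plays the role of Train Model and predict_proba the role of Score Model: the first produces a fitted model, and the second applies it to rows the model has not seen.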

Model Testing and Validation

Once the model is trained, the next step is to test it with a hold-out sample to avoid over-fitting and evaluate model generalization. We have shown how we performed this using a 30% sample for testing the trained model. Another strategy to avoid over-fitting and evaluate model generalization is cross-validation, which was discussed in Chapter 6. By default, Azure Machine Learning uses 10-fold cross-validation. With this approach, you use 10 hold-out samples instead of one for testing. To perform cross-validation for this problem you can simply replace any pair of the Train Model and Score Model modules with the module named Cross Validate Model. Figure 8-8 shows how to perform cross-validation with the Two-Class Logistic Regression portion of this experiment. You will also need to use the whole dataset from the Filter Based Feature Selection as your input dataset. For cross-validation there is no need to split the data into training and test sets with the Split module since the module named Cross Validate Model will do the required data splits.

Figure 8-8. A modified experiment with cross-validation
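The data splits behind 10-fold cross-validation can be sketched as follows. This Python example only generates the index sets and is an assumption about the general technique, not a description of how the Cross Validate Model module partitions data; the function name and seed are hypothetical.

```python
import random


def k_fold_indices(n_rows, k=10, seed=1):
    """Yield (train_idx, test_idx) pairs; every row is held out exactly once."""
    indices = list(range(n_rows))
    random.Random(seed).shuffle(indices)
    # Deal the shuffled indices into k folds of nearly equal size.
    folds = [indices[i::k] for i in range(k)]
    for i, held_out in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, held_out


folds = list(k_fold_indices(1567, k=10))
```

Each of the ten pairs would be used to train a model on the larger index set and evaluate it on the held-out set, and the ten evaluation results are then averaged.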

Model Performance

The Evaluate Model module is used to measure the performance of a trained model. This module takes two datasets as inputs. The first is a scored dataset from a tested model. The second is an optional dataset for comparison. After running the experiment, you can check your model’s performance by clicking the small circle at the bottom of the module named Evaluate Model. This module provides the following methods to measure the performance of a classification model such as the yield prediction model:

- The receiver operating characteristic, or ROC curve, which plots the true positive rate against the false positive rate

- The lift curve (also known as the gains curve), which plots the number of true positives vs. the positive rate

- Precision vs. recall chart

- Confusion matrix that shows type I and II errors

Figure 8-9 shows the ROC curves for the yield prediction models you built earlier. The ROC curve visually shows the performance of a predictive binary classification model. The diagonal line from (0,0) to (1,1) on the chart shows the performance of random guessing; so if you randomly selected the yield, your response would be on this diagonal line. A good predictive model should do much better than random guessing. Hence, on the ROC curve, a good model should lie above the diagonal line. The ideal or perfect model that is 100% accurate will have a vertical line from (0,0) to (0,1), followed by a horizontal line from (0,1) to (1,1).

Figure 8-9. Two ROC curves comparing the performance of logistic regression and boosted decision tree models for yield prediction

One way to measure the performance from the ROC curve is to measure the area under the curve (AUC). The higher the area under the curve, the better the model’s performance. The ideal model will have an AUC of 1.0, while random guessing will have an AUC of 0.5. The logistic regression model you built has an AUC of 0.667 while the boosted decision tree model has a slightly higher AUC of 0.741! In this experiment, the two-class boosted decision tree had the following parameters:

- Maximum number of leaves per tree = 50

- Minimum number of training instances required to form a leaf = 10

- Learning rate = 0.4

- Number of trees constructed = 1000

- Random number seed = 1
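The area under the ROC curve discussed above has a convenient interpretation: it equals the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counted as one half. The Python sketch below computes AUC this way. The labels and scores are made up for illustration and are unrelated to the 0.667 and 0.741 reported in this chapter.

```python
def roc_auc(labels, scores):
    """AUC as the chance that a random positive outscores a random negative."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(positives) * len(negatives))


# Hypothetical labels (1 = yield failure) and model scores.
labels = [1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.4, 0.3, 0.1]
auc = roc_auc(labels, scores)
```

The pairwise loop is quadratic in the number of examples, which is fine for a dataset of this size; a rank-based formula gives the same value more efficiently on large datasets.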

In addition, Azure Machine Learning provides the confusion matrix as well as precision and recall rates for both models. By clicking each of the two curves, the tool shows the confusion matrix, precision, and recall rates for the selected model. If you click the bottom curve or the legend named “scored dataset to compare,” the tool shows the performance data for the logistic regression model.

Figure 8-10 shows the confusion matrix for the logistic regression model. The confusion matrix has the following four cells:

- True positives: These are cases where yield failed and the model correctly predicts this.

- True negatives: In the historical dataset, the yield passed, and the model correctly predicts a pass.

- False positives: In this case, the model incorrectly predicts that the yield would fail when in fact it was a pass. This is commonly referred to as Type I error. The boosted decision tree model you built had only two false positives.

- False negatives: Here the model incorrectly predicts that the yield would pass when in reality it failed. This is also known as Type II error. The boosted decision tree model had 27 false negatives.

Figure 8-10. Confusion matrix and more performance metrics

In addition, Figure 8-10 shows the accuracy, precision, and recall of the model. Here are the formulas for these metrics:

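Precision is the rate of true positives in the results, that is, the fraction of predicted yield failures that are actual failures:

$$\text{Precision} = \frac{tp}{tp + fp}$$

Recall is the percentage of actual yield failures that the model identifies and is measured as

$$\text{Recall} = \frac{tp}{tp + fn}$$

Finally, the accuracy measures how well the model correctly identifies both yield failures and passes, shown as

$$\text{Accuracy} = \frac{tp + tn}{tp + tn + fp + fn}$$

where tp = true positive, tn = true negative, fp = false positive, and fn = false negative. The F1 score is the harmonic mean of precision and recall, $F_1 = 2 \cdot \text{Precision} \cdot \text{Recall} / (\text{Precision} + \text{Recall})$. In this case, it is quite low since the recall is very low.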
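These metrics follow directly from the four cells of the confusion matrix, as the short Python sketch below shows. The counts passed in are hypothetical and are not the values from Figure 8-10; they were chosen to show how a model can have high accuracy and still have low recall when failures are rare.

```python
def classification_metrics(tp, tn, fp, fn):
    """Compute precision, recall, accuracy, and F1 from confusion matrix counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"precision": precision, "recall": recall,
            "accuracy": accuracy, "f1": f1}


# Hypothetical counts for a test sample of 470 rows with 35 actual failures.
metrics = classification_metrics(tp=5, tn=430, fp=5, fn=30)
```

With these made-up counts the accuracy is above 92% even though the model finds only one in seven failures, which is why recall and the F1 score deserve attention on imbalanced problems such as yield failure prediction.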

Model Deployment

When you build and test a predictive model that meets your needs, you can use Azure Machine Learning to deploy it into production for business use. A key differentiator of Azure Machine Learning is the ease of deployment in production. Once a model is complete, it can be deployed very easily into production as a web service. Once deployed, the model can be invoked as a web service from multiple devices including servers, laptops, tablets, or even smart phones.

The following two steps are required to deploy a model into production.

- Publish your experiment into the staging environment in Azure Machine Learning Studio.

- From the Azure Management portal, move the experiment from the staging environment into production.

Let’s review these steps in detail and see how they apply to your finished model built in the previous sections.

Publishing Your Model into Staging

To deploy your model into staging, follow these steps in Azure Machine Learning Studio.

- Save your trained model using the Save As button at the bottom of Azure Machine Learning Studio. Rename it to a new name of your choice.

- Run the experiment.

- Right-click the output of the training module (e.g. Train Model) and select the option Save As Trained Model.

- Delete any modules that were used for training (e.g. the Split, Two-Class Boosted Decision Tree, Train Model, Evaluate Model).

- Connect the newly saved model directly to the Score Model module.

- Re-run your experiment.

Before you delete the training modules, your experiment should appear as shown in Figure 8-11.

Figure 8-11. Predictive model before the training modules were deleted

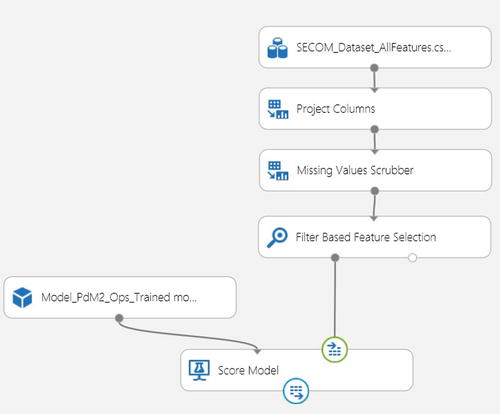

After deleting the training modules and replacing them with the saved training model, the experiment should now appear as shown in Figure 8-12.

Figure 8-12. The experiment that uses the saved training model

- Next, set your publishing input and output data ports. To do this,

- Right-click the right input port of the module named Score Model. Select the option Set As Publish Input.

- Right-click the output port of the Score Model module and select Set As Publish Output.

After these two steps, you will see two circles highlighting the chosen publish input and output on the Score Model module. This is shown in Figure 8-12.

- Once you assign the publish input and output ports, run the experiment and then publish it into staging by clicking Publish Web Service at the bottom of the screen.

Moving Your Model from Staging into Production

At this point your model is now in the staging environment, but is not yet running in production. To publish it into production, you need to move it from the staging environment to the production environment through the following steps.

- Configure your new web service in Azure Machine Learning Studio and make it ready for production as follows:

- In Azure Machine Learning Studio, click the menu called Web Services on the right pane. It will show a list of all your web services.

- Click the name of the new service you just created. Test it by clicking the Test URL.

- Click the configuration tab, and then select yes for the option Ready For Production? Click the Save button at the bottom of the window. Now your model is ready to be published in production.

- Now switch to Azure Management Portal and publish your web service into production as follows:

- Select Machine Learning on the right-hand pane in Azure Management Portal.

- Choose the workspace with the experiment you want to deploy in production.

- Click the name of your workspace, and then click the tab named Web Services.

- Choose the +Add button at the bottom of the Azure Management Portal window.

Congratulations, you have just published your machine learning model into production. If you click your model from the Web Services tab, you will see details such as the number of predictions made by your model over a seven-day window. The service also shows the APIs you can use to call your model as a web service either in a request/response or batch execution mode. In addition, you also get sample code you can use to invoke your new web service in C#, Python, or R.
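As a rough illustration of the request/response mode, the Python sketch below builds an HTTPS request with a JSON body and a bearer token. Everything specific in it is an assumption: the URL and API key are placeholders, the column names are made up, and the JSON layout is only illustrative, since the exact request schema depends on your service. Treat the sample code generated on your web service's API help page as the authoritative reference.

```python
import json
import urllib.request


def build_scoring_request(url, api_key, column_names, rows):
    """Build (but do not send) a POST request for a scoring web service."""
    # Assumed payload layout; replace it with the schema shown for your service.
    body = {
        "Inputs": {"input1": {"ColumnNames": column_names, "Values": rows}},
        "GlobalParameters": {},
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": "Bearer " + api_key,
        },
        method="POST",
    )


request = build_scoring_request(
    url="https://example.invalid/score",       # placeholder, not a real endpoint
    api_key="YOUR_API_KEY",                     # placeholder
    column_names=["sensor_60", "sensor_248"],   # hypothetical column names
    rows=[["0.5", "1.2"]],
)
# With real values in place, the call would be sent with:
# response = urllib.request.urlopen(request)
```

Keeping request construction separate from sending makes it easy to inspect the body and headers before any call is made against a production service.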

Summary

You began this chapter by exploring predictive maintenance, which shows the business opportunity and the promise of machine learning. Using the SECOM semiconductor manufacturing data from the University of California at Irvine’s Machine Learning Data Repository, you learned how to build and deploy a predictive maintenance solution in Azure Machine Learning. In this step-by-step guide, we explained how to perform data preprocessing and analysis, which are critical for understanding the data. With that understanding of the data, you used a two-class logistic regression and a two-class boosted decision tree algorithm to perform classification. You also saw how to evaluate the performance of your models and avoid over-fitting. Finally, you saw how to deploy the finished model in production as a machine learning web service on Microsoft Azure.