Cloud Computing

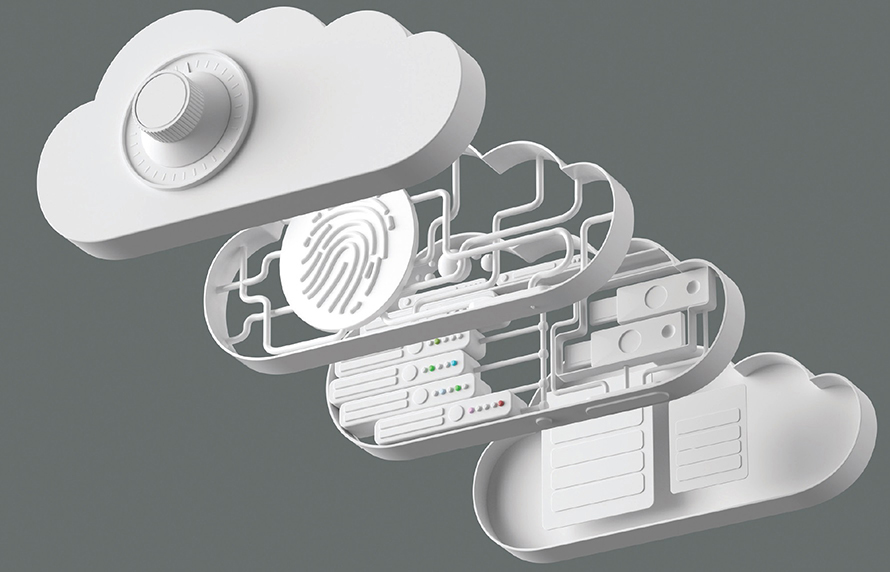

Cloud computing is becoming more and more prevalent because of multiple business factors. It has many economic and technical advantages over traditional IT in many use cases, but that is not to say it comes without problems. Securing cloud systems is in one respect no different from securing a traditional IT system: protect the data from unauthorized reading and manipulation. However, the tools, techniques, and procedures vary greatly from standard IT, and while clouds can be secure, it is by the application of the correct set of security controls, not by happenstance.

Cloud Computing

Cloud computing is a common term used to describe computer services provided over a network. These computing services are computing, storage, applications, and services that are offered via the Internet Protocol. One of the characteristics of cloud computing is transparency to the end user. This improves usability of this form of service provisioning. Cloud computing offers much to the user: improvements in performance, scalability, flexibility, security, and reliability, among other items. These improvements are a direct result of the specific attributes associated with how cloud services are implemented.

Security is a particular challenge when data and computation are handled by a remote party, as in cloud computing. The specific challenge is how to allow data outside the enterprise and yet remain in control over how the data is used, and the common answer is encryption. When data is properly encrypted before it leaves the enterprise, external storage can still be performed securely.

There are many different cloud deployment models. Clouds can be created by many entities, both internal and external to an organization. Many commercial cloud services are available from a variety of firms, ranging from Google and Amazon to smaller, local providers. Internally, an organization’s own services can replicate the advantages of cloud computing while improving the utility of limited resources. The promise of cloud computing is improved utility and is marketed under the concepts of Platform as a Service (PaaS), Software as a Service (SaaS), and Infrastructure as a Service (IaaS).

There are pros and cons to cloud-based computing, and for each use the economic factors may differ (issues of cost, contracts, and so on). For someone standing up a test project who does not want to incur the hardware costs of buying servers that may outlive the project, “renting” space in the cloud makes sense. When multiple sites are involved and distributing data and backup solutions are a concern, cloud services offer advantages. However, with less control come other costs, such as forensics, incident response, archiving data, long-term contracts, and network connectivity. For each case, a business analysis must be performed to determine the correct choice between cloud options and on-premises computing.

Cloud Characteristics

NIST defines cloud computing as a system that enables ubiquitous, convenient, on-demand network access to a shared pool of configurable computing resources that can be rapidly provisioned and released with minimal management effort or service provider interaction. Five essential characteristics are associated with the cloud model: on-demand self-service, broad network access, resource pooling, rapid elasticity and scalability, and measured service.

On-Demand Self-Service

A cloud customer can unilaterally and automatically provision computing capabilities, such as server time and network storage, as needed, without requiring human interaction with each service provider, as provided under the terms of the cloud service agreement.

Broad Network Access

Cloud capabilities are available over the network and accessed through standard mechanisms that promote use by heterogeneous thin- or thick-client platforms. Reliance on standard network protocols and methods enables a level of architectural flexibility in accessing the cloud capabilities.

Resource Pooling

The cloud provider’s computing resources are pooled to serve multiple consumers using a multitenant model, with different physical and virtual resources dynamically assigned and reassigned according to customer demand. This enables the first characteristic, self-service, and the next, rapid elasticity and scalability. There is a sense of location independence in that the cloud is accessed via the network. Examples of resources that can be pooled include storage, processing, memory, and network bandwidth.

Rapid Elasticity and Scalability

Cloud capabilities can be elastically provisioned and released, in some cases automatically, to match resources commensurate with demand. For example, a spike in web traffic may increase workloads, requiring more resources. Adding or removing instances in this way is called horizontal scaling, or scaling out or in. Scalability refers to the ability to handle an increasing workload by changing the resources given to an existing system. This is also called vertical scaling, or scaling up or down. Vertical scaling can involve more or less CPU power, RAM, and/or network bandwidth. To the customer, the capabilities available for provisioning often appear to be unlimited and can be appropriated in any quantity at any time.
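
The horizontal scaling decision described above can be sketched as a small calculation. This is a minimal illustration, not any provider's autoscaling API: the function name, the per-instance capacity figure, and the instance limits are all assumptions made for the example.

```python
import math

def desired_instances(requests_per_second, capacity_per_instance,
                      min_instances=1, max_instances=20):
    """Return how many identical instances are needed for the current load.

    All parameters are hypothetical; a real service agreement would set
    the minimum and maximum.
    """
    if capacity_per_instance <= 0:
        raise ValueError("capacity_per_instance must be positive")
    # Round up so the pool is never short of capacity.
    needed = math.ceil(requests_per_second / capacity_per_instance)
    # Clamp to the agreed limits (scale in no lower, scale out no higher).
    return max(min_instances, min(max_instances, needed))

print(desired_instances(450, 100))   # 5
print(desired_instances(20, 100))    # 1
print(desired_instances(5000, 100))  # 20
```

The clamp is what keeps elasticity from becoming an unbounded cost: demand beyond the agreed maximum is simply not provisioned.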

Measured Service

Cloud systems control and optimize resource use by leveraging a metering capability at some level of abstraction appropriate to the type of service (for example, storage, processing, bandwidth, and active user accounts). This can be done automatically via cloud-side automation, adjusting as necessary per the cloud service agreement. Resource usage can be monitored, controlled, and reported, providing transparency for both the provider and customer of the utilized service.
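
Metering can be pictured as totaling usage records per resource type and then pricing the totals. The sketch below assumes hypothetical resource names and prices (expressed in whole micro-dollars to avoid rounding issues); it is not drawn from any real provider's billing scheme.

```python
from collections import defaultdict

# Hypothetical prices in micro-dollars per unit, chosen only for illustration.
RATES_MICRO_USD = {"cpu_seconds": 20, "storage_gb_hours": 100, "egress_gb": 50_000}

def summarize_usage(records):
    """Total the metered quantity for each resource type."""
    totals = defaultdict(int)
    for resource, quantity in records:
        totals[resource] += quantity
    return dict(totals)

def compute_charges(totals, rates=RATES_MICRO_USD):
    """Convert metered totals into charges (micro-dollars) per resource."""
    return {resource: qty * rates[resource] for resource, qty in totals.items()}

# Example usage records as (resource, quantity) pairs.
records = [("cpu_seconds", 3600), ("egress_gb", 2), ("cpu_seconds", 400)]
totals = summarize_usage(records)
charges = compute_charges(totals)
print(totals)
print(sum(charges.values()) / 1_000_000, "USD")
```

Because both parties can compute the same totals from the same records, this kind of report is what gives the provider and the customer a shared view of what was used.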

Cloud Computing Service Models

Clouds can be created by many entities, both internal and external to an organization. Commercial cloud services are already available and offered by a variety of firms, as large as Google and Amazon and as small as local providers. Internal services can replicate the advantages of cloud computing while improving the utility of limited resources. The promise of cloud computing is improved utility and, as such, is marketed under the concepts of Infrastructure as a Service, Platform as a Service, Software as a Service, and Anything as a Service.

Infrastructure as a Service (IaaS)

Infrastructure as a Service (IaaS) is a marketing term used to describe cloud-based systems that are delivered as a virtual solution for computing. Rather than firms needing to build data centers, IaaS allows them to contract for utility computing, as needed. IaaS is specifically marketed on a pay-per-use basis, scalable directly with need.

Platform as a Service (PaaS)

Platform as a Service (PaaS) is a marketing term used to describe the offering of a computing platform in the cloud. Multiple sets of software working together to provide services, such as database services, can be delivered via the cloud as a platform. PaaS offerings generally focus on security and scalability, both of which are characteristics that fit with cloud and platform needs.

Software as a Service (SaaS)

Software as a Service (SaaS) is the offering of software to end users from within the cloud. Rather than installing software on client machines, SaaS acts as software on demand, where the software runs from the cloud. This has a couple of advantages: updates can be seamless to end users, and integration between components can be enhanced. Common examples of SaaS are products offered via the Web as subscription services, such as Microsoft Office 365 and Adobe Creative Cloud.

Anything as a Service (XaaS)

With the growth of cloud services, applications, storage, and processing, the scale provided by cloud vendors has opened up new offerings that are collectively called Anything as a Service (XaaS). The wrapping of the previously mentioned SaaS and IaaS components into a particular service (say, Disaster Recovery as a Service) creates a new marketable item.

Be sure you understand the differences between the cloud computing service models Platform as a Service, Software as a Service, Infrastructure as a Service, and Anything as a Service.

Level of Control in the Hosting Models

One way to examine the differences between the cloud models and on-premises computing is to look at who controls what aspect of the model. In Figure 18.1, you can see that the level of control over the systems goes from complete self-control in on-premises computing to complete vendor control in XaaS.

Figure 18.1 Comparison of the level of control in the various hosting models

Infrastructure as Code

Infrastructure as code is the use of machine-readable definition files as well as code to manage and provision computer systems. By making the process of management programmable, there are significant scalability and flexibility advantages. Rather than having to manage physical hardware configurations using interactive configuration tools, infrastructure as code allows for this to be done programmatically. A good example of this is in the design of software-defined networking.
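
One way to picture infrastructure as code is a machine-readable description of the desired systems plus a program that works out what must change to reach it. The sketch below is an assumption-laden illustration: the resource names, fields, and the `plan` function are hypothetical and do not correspond to any particular tool.

```python
def plan(desired, actual):
    """Compare desired and actual state and list the changes needed."""
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != spec:
            actions.append(("update", name))
    for name in sorted(actual):
        if name not in desired:
            actions.append(("delete", name))
    return actions

# The desired state is the "definition file"; it could live in version control.
desired = {
    "web-server": {"size": "small", "count": 2},
    "database": {"size": "large", "count": 1},
}
# The actual state is what is currently deployed (hypothetical values).
actual = {
    "web-server": {"size": "small", "count": 1},
    "old-cache": {"size": "small", "count": 1},
}

for action in plan(desired, actual):
    print(action)
```

Keeping the definition declarative means the same file can be applied repeatedly: once the actual state matches, the plan is empty.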

Services Integration

Services integration is the connection of infrastructure and software elements to provide specific services to a business entity. Connecting processing, storage, databases, web, communications, and other functions into an integrated comprehensive solution is the goal of most IT organizations. Cloud-based infrastructure is the ideal environment to achieve this goal. Through predesigned scripts, the cloud provider can manage services integration in a much more scalable fashion than individual businesses. For a business, each integration is a one-off creation, whereas the cloud services provider can capitalize on the reproducibility of doing the same integrations for many customers. With this scale and experience come cost savings and reliability.

Cloud Types

Depending on the size and particular needs of an organization, there are four basic types of cloud: public, private, hybrid, and community.

Private

If your organization is highly sensitive to sharing resources, you might want to consider the use of a private cloud. Private clouds are essentially reserved resources used only for your organization—your own little cloud within the cloud. This service will be considerably more expensive, but it should also carry less exposure and should enable your organization to better define the security, processing, and handling of data that occurs within your cloud.

Public

The term public cloud refers to when the cloud service is rendered over a system that is open for public use. In most cases, there is little operational difference between public and private cloud architectures, but the security ramifications can be substantial. Although public cloud services will separate users with security restrictions, the depth and level of these restrictions, by definition, will be significantly less in a public cloud.

Hybrid

A hybrid cloud structure is one where elements are combined from private, public, and community cloud structures. When examining a hybrid structure, you need to remain cognizant that operationally these differing environments may not actually be joined, but rather used together. Sensitive information can be stored in the private cloud and issue-related information can be stored in the community cloud, all of which is accessed by an application. This makes the overall system a hybrid cloud system.

Community

A community cloud system is one where several organizations with a common interest share a cloud environment for the specific purposes of the shared endeavor. For example, local public entities and key local firms may share a community cloud dedicated to serving the interests of community initiatives. This can be an attractive cost-sharing mechanism for specific data-sharing initiatives.

Be sure to understand and recognize the different cloud systems—private, public, hybrid, and community.

On-premises vs. Hosted vs. Cloud

Systems can exist in a wide array of places—from on premises, to hosted, to in the cloud. On-premises means the system resides locally in the building of the organization. Whether it’s a virtual machine (VM), storage, or even a service, if the solution is locally hosted and maintained, it is referred to as “on-premises.” The advantage is that the organization has total control of the system and generally has high connectivity to it. The disadvantage is that it requires local resources and is not necessarily easy to scale. Off-premises or hosted services refers to having the services hosted somewhere else, commonly in a shared environment. Using a third party for hosted services provides you a set cost based on the amount of those services you use. This has cost advantages, especially when scale is included—does it make sense to have all the local infrastructure, including personnel, for a small, informational-only website? Of course not; you would have that website hosted. Storage works the opposite with scale. Small-scale storage needs are easily met in-house, whereas large-scale storage needs are typically either hosted or in the cloud.

On-premises means the system is on your site. Off-premises means it is somewhere else—a specific location. The phrase “in the cloud” refers to having the system distributed across a remotely accessible infrastructure via a network, with specific cloud characteristics, such as scalability and so on. This is true for both on- and off-premises.

Cloud Service Providers

Cloud service providers (CSPs) come in many sizes and shapes, with a myriad of different offerings, price points, and service levels. There are the mega-cloud providers, Amazon, Google, Microsoft, and Oracle, which have virtually no limit to the size they can scale to when needed. There are smaller firms, with some offering reselling from the larger clouds and others hosting their own data centers. Each of these has a business offering, and the challenge is determining which offering best fits the needs of your project or company. Many issues have to be resolved around which services are being provided and which are not, as well as price points and contractual terms. One important thing to remember: if something isn’t in the contract, it won’t be done. Take security items, for example: if you want the cloud provider to offer specific security functionality, it must be in the package you subscribe to; otherwise, you won’t receive this functionality.

Transit Gateway

A transit gateway is a network connection that is used to interconnect virtual private clouds (VPCs) and on-premises networks. Using transit gateways, organizations can define and control communication between resources on the cloud provider’s network and their own infrastructure. Transit gateways are unique to each provider and are commonly implemented to support the administration of the provider’s cloud environment.

Cloud Security Controls

Cloud security controls are a shared issue—one that is shared between the user and the cloud provider. Depending on your terms of service with your cloud provider, you will share responsibilities for software updates, access control, encryption, and other key security controls. Figure 18.1 previously illustrated the differing levels of shared responsibility in various cloud models. What is important to remember is to define the requirements up front and have them written into the service agreement with the cloud provider, because unless they are part of the package, they will not occur.

High Availability Across Zones

Cloud computing environments can be configured to provide nearly full-time availability (that is, a high availability system). This is done using redundant hardware and software that make the system available despite individual element failures. When something experiences an error or failure, the failover process moves the processing performed by the failed component to the backup component elsewhere in the cloud. This process is transparent to users as much as possible, creating an image of a high availability system to users. Architecting these failover components across zones provides high availability across zones. As with all cloud security issues, the details are in your terms of service with your cloud provider; you cannot just assume the system will be high availability. It must be specified in your terms and architected in by the provider.

Zones can be used for replication and provide load balancing as well as high availability.
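
The failover step can be sketched as choosing a healthy replica, preferring the usual zone and falling back to another zone when it has failed. The replica names, zone labels, and health flags below are hypothetical; real providers expose health and zone information through their own services.

```python
def pick_active(replicas, preferred_zone):
    """Choose a healthy replica, preferring the given zone."""
    healthy = [r for r in replicas if r["healthy"]]
    if not healthy:
        raise RuntimeError("no healthy replica in any zone")
    for replica in healthy:
        if replica["zone"] == preferred_zone:
            return replica["name"]
    # Preferred zone is down: fail over to the first healthy replica elsewhere.
    return healthy[0]["name"]

replicas = [
    {"name": "app-a", "zone": "zone-1", "healthy": False},
    {"name": "app-b", "zone": "zone-2", "healthy": True},
    {"name": "app-c", "zone": "zone-3", "healthy": True},
]
print(pick_active(replicas, "zone-1"))  # app-b
```

The sketch only works because replicas exist in more than one zone; if every replica sat in zone-1, a zone failure would leave nothing to fail over to.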

Resource Policies

When you are specifying the details of a cloud engagement, how much processing power, what apps, what security requirements, how much storage, and access control are all resources. Cloud-based resources are controlled via a set of policies. This is basically your authorization model projected into the cloud space. Management of these items is done via resource policies. Each cloud service provider has a different manner of allowing you to interact with their menu of services, but in the end, you are specifying the resource policies you want applied to your account. Through resource policies you can define what, where, or how resources are provisioned. This allows your organization to set restrictions, manage the resources, and manage cloud costs.
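
A resource policy that restricts what, where, and how much can be provisioned can be sketched as a set of limits checked against each request. The policy fields, region names, and sizes here are assumptions for illustration only; each provider has its own policy language.

```python
# Hypothetical policy: field names and values are illustrative only.
POLICY = {
    "allowed_regions": {"us-east", "eu-west"},
    "allowed_sizes": {"small", "medium"},
    "max_storage_gb": 500,
}

def check_request(request, policy=POLICY):
    """Return a list of policy violations; an empty list means allowed."""
    violations = []
    if request["region"] not in policy["allowed_regions"]:
        violations.append("region not allowed")
    if request["size"] not in policy["allowed_sizes"]:
        violations.append("instance size not allowed")
    if request["storage_gb"] > policy["max_storage_gb"]:
        violations.append("storage exceeds limit")
    return violations

print(check_request({"region": "us-east", "size": "small", "storage_gb": 100}))
print(check_request({"region": "ap-south", "size": "xlarge", "storage_gb": 900}))
```

Returning every violation, rather than stopping at the first, makes it easier for the requester to fix a rejected provisioning request in one pass.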

The integration between the enterprise identity access management (IAM) system and the cloud-based IAM system is a configuration element of utmost importance when setting up the cloud environment. The policies set the permissions for the cloud objects. Once the resource policies from the enterprise are extended into the cloud environment and set up, they must be maintained. The level of integration between the cloud-based IAM system and the enterprise-based IAM system will determine the level of work required for regular maintenance activities.

Secrets Management

Data that is in the cloud is still data that is on a server and is therefore, by definition, remotely accessible. Hence, it is important to secure the data using encryption. A common mistake is to leave data unencrypted on the cloud. It seems hardly a week goes by without a report of unencrypted data being exposed from a cloud instance. Fingers always point to the cloud provider, but in most cases the blame is on the end user. Cloud providers offer encryption tools and management services, yet too many companies don’t implement them, which sets the scene for a data breach.

Secrets management is the term used to denote the policies and procedures employed to connect the IAM systems of the enterprise and the cloud to enable communication with the data. Storing sensitive data, or in most cases virtually any data, in the cloud without putting in place appropriate controls to prevent access to the data is irresponsible and dangerous. Encryption is a failsafe—even if security configurations fail and the data falls into the hands of an unauthorized party, the data can’t be read or used without the keys. It is important to maintain control of the encryption keys. The security of the keys, which can be done outside the primary cloud instance and elsewhere in the enterprise, is how the secrecy is managed.

Secrets management is an important aspect of maintaining cloud security. The secrets used for system-to-system access should be maintained separately from other configuration data and handled according to the strictest principles of confidentiality because these secrets allow access to the data in the cloud.

Use of a secrets manager can enable secrets management by providing a central trusted storage location for certificates, passwords, and even application programming interface (API) keys.
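
Keeping secrets separate from other configuration data can be sketched as follows. This example assumes the secret is injected through an environment variable; the variable name, endpoint, and key value are hypothetical, and a production system would more likely fetch the value from a dedicated secrets manager.

```python
import os

def get_secret(name, env=os.environ):
    """Fetch a secret from the environment, never from the config file."""
    value = env.get(name)
    if not value:
        raise KeyError(f"secret {name!r} is not set")
    return value

def masked(secret, visible=4):
    """Return a log-safe form that shows only the last few characters."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]

# Non-secret configuration can live in version control.
config = {"storage_endpoint": "https://storage.example.com", "timeout_s": 30}

# The secret is supplied at run time; a stand-in environment is used here.
fake_env = {"STORAGE_API_KEY": "abcd1234efgh5678"}
api_key = get_secret("STORAGE_API_KEY", env=fake_env)
print(masked(api_key))  # ************5678
```

Failing loudly when the secret is missing is a deliberate choice: a silent fallback to a default key is exactly the kind of misconfiguration that leads to exposed data.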

Integration and Auditing

The integration of the appropriate level and quantity of security controls is a subject that is always being audited. Are the controls appropriate? Are they placed and used correctly? Most importantly, are they effective? These are standard IT audit elements in the enterprise. The moving of computing resources to the cloud does not change the need or intent of audit functions.

Cloud computing audits have become a standard as enterprises are realizing that unique cloud-based risks exist with their data being hosted by other organizations. To address these risks, organizations are using specific cloud computing audits to gain assurance and to understand the risk of their information being lost or released to unauthorized parties. These cloud-specific audits have two sets of requirements: one being an understanding of the cloud security environment as deployed, and the second being related to the data security requirements. The result is that cloud computing audits can be in different forms, such as SOC 1 and SOC 2 reporting, HITRUST, PCI, and FedRAMP. For each one of these data-specific security frameworks, additional details are based on the specifics of the cloud environment and the specifics of the security controls employed by both the enterprise and the cloud vendor.

Storage

Cloud-based data storage was one of the first uses of cloud computing. Security requirements related to storage in the cloud environment are actually based on the same fundamentals as in the enterprise environment. Permissions to access and modify data need to be defined, set, and enforced. A means to protect data from unauthorized access is generally needed, and encryption is the key answer, just as it is in the enterprise. The replication of data across multiple different systems as part of the cloud deployment and the aspects of high availability elements of a cloud environment can complicate the securing of data.

Permissions

Permissions for data access and modifications are handled in the same manner as in an on-premises IT environment. Identity access management (IAM) systems are employed to manage the details of who can do what with each object. The key to managing this in the cloud is the integration of the on-premises IAM system with the cloud-based IAM system.

Encryption

Encryption of data in the cloud is one of the foundational elements to securing one’s data when it is on another system. Data should be encrypted when stored in the cloud, and the keys should be maintained by the enterprise, not the cloud provider. Keys should be managed in accordance with the same level of security afforded keys in the enterprise.

Replication

Data may replicate across the cloud as part of a variety of cloud-based activities. From shared environments to high availability systems, including their backup systems, data in the cloud can seem to be fluid, moving across multiple physical systems. This level of replication is yet another reason that data should be encrypted for security. The act of replicating data across multiple systems is part of the resiliency of the cloud, in that single points of failure will not have the same effects that occur in the standard IT enterprise. Therefore, this is one of the advantages of the cloud.

High Availability

High availability storage works in the same manner as high availability systems described earlier in the chapter. Having multiple different physical systems working together to ensure your data is redundantly and resiliently stored is one of the cloud’s advantages. What’s more, the cloud-based IAM system can use encryption protections to keep your data secret, while high availability keeps it available.

Network

Cloud-based systems are made up of machines connected using a network. Typically this network is under the control of the cloud service provider (CSP). While you may be given network information, including addresses, the networks you see might actually be encapsulated on top of another network that is maintained by the service provider. In this fashion, many cloud service providers offer a virtual network that delivers the required functions without providing direct access to the actual network environment.

Virtual Networks

Most networking in cloud environments is via a virtual network operating in an overlay on top of a physical network. The virtual network can be used and manipulated by users, whereas the actual network underneath cannot. This gives the cloud service provider the ability to manage and service network functionality independent of the cloud instance with respect to a user. The virtual network technology used in cloud environments can include software-defined networking (SDN) and network function virtualization (NFV) as elements that make it easier to perform the desired networking tasks in the cloud.

Public and Private Subnets

Just as in traditional IT systems, there is typically a need for public-facing subnets, where the public/Internet can interact with servers, such as mail servers, web servers, and the like. There is also a need for private subnets, where access is limited to specific addresses, preventing direct access to secrets such as datastores and other important information assets. The cloud comes with the capability of using both public-facing and private subnets; in other words, just because something is “in the cloud” does not change the business architecture of having some machines connected to the Internet and some not. Now, being “in the cloud” means that, in one respect, the Internet is used for all access. However, in the case of private subnets, the cloud-based IAM system can determine who is authorized to access which parts of the cloud’s virtual network.

Segmentation

Segmentation is the network process of separating network elements into segments and regulating traffic between the segments. The presence of a segmented network creates security barriers for unauthorized accessors through the inspection of packets as they move from one segment to another. This can be done in a multitude of ways—via MAC tables, IP addresses, and even tunnels, with devices such as firewalls and secure web gateways inspecting at each connection. The ultimate in segmentation is the zero-trust environment, where microsegmentation is used to continually invoke the verification of permissions and controls. All of these can be performed in a cloud network. Also, as with the other controls already presented, the details are in the service level agreement (SLA) with the cloud service provider.
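
Regulating traffic between segments by IP address can be sketched with Python's standard `ipaddress` module. The segment names, address ranges, and allowed flows below are hypothetical; the default-deny treatment of unknown addresses is a design assumption in the spirit of zero trust.

```python
import ipaddress

# Hypothetical segments and the flows permitted between them.
SEGMENTS = {
    "web": ipaddress.ip_network("10.0.1.0/24"),
    "app": ipaddress.ip_network("10.0.2.0/24"),
    "db": ipaddress.ip_network("10.0.3.0/24"),
}
ALLOWED_FLOWS = {("web", "app"), ("app", "db")}

def segment_of(address):
    """Return the name of the segment containing the address, or None."""
    ip = ipaddress.ip_address(address)
    for name, network in SEGMENTS.items():
        if ip in network:
            return name
    return None

def is_allowed(src, dst):
    """Allow traffic within a segment or along an explicitly permitted flow."""
    src_seg, dst_seg = segment_of(src), segment_of(dst)
    if src_seg is None or dst_seg is None:
        return False  # unknown addresses are denied by default
    if src_seg == dst_seg:
        return True
    return (src_seg, dst_seg) in ALLOWED_FLOWS

print(is_allowed("10.0.1.5", "10.0.2.9"))   # True: web to app
print(is_allowed("10.0.1.5", "10.0.3.7"))   # False: web may not reach db
```

Note that the flows are directional: the web segment can reach the app segment, but nothing here lets the database segment initiate traffic back toward the web tier.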

API Inspection and Integration

APIs are software interfaces that allow various software components to communicate with each other. This is true in the cloud just as it is in the traditional IT enterprise. Because cloud environments accept virtually all requests across the Web, there is a need to verify information before it can be used. One key element in this solution is presented later in the chapter: the next-generation secure web gateway. This system analyzes information transfers at the application layer to verify authenticity and correctness.

Content inspection refers to the examination of the contents of a request to an API by applying rules to determine whether a request is legitimate and should be accepted. As APIs act to integrate one application to another, errors in one application can be propagated to other components, thus creating bigger issues. The use of API content inspection is an active measure to prevent errors from propagating through a system and causing trouble.
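
Content inspection of an API request can be sketched as a set of rules applied to the request body before it is passed on. The field names, type rules, and size limit below are hypothetical; a real gateway would apply a schema agreed for the specific API.

```python
import json

# Hypothetical rules: the exact fields an order request must contain.
RULES = {"customer_id": int, "email": str, "quantity": int}

def inspect_request(body, max_bytes=1024):
    """Return (accepted, reason) for a raw JSON request body."""
    if len(body.encode("utf-8")) > max_bytes:
        return False, "body too large"
    try:
        data = json.loads(body)
    except json.JSONDecodeError:
        return False, "not valid JSON"
    if not isinstance(data, dict):
        return False, "expected a JSON object"
    if set(data) != set(RULES):
        return False, "unexpected or missing fields"
    for field, expected in RULES.items():
        value = data[field]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            return False, f"bad type for {field}"
    if data["quantity"] < 1:
        return False, "quantity must be positive"
    return True, "ok"

print(inspect_request('{"customer_id": 7, "email": "a@example.com", "quantity": 2}'))
print(inspect_request('{"customer_id": 7, "email": "a@example.com", "quantity": 2, "admin": true}'))
```

Rejecting unexpected fields, not just malformed ones, is what stops an error or injected value in one application from being silently passed along to the next.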

Cloud security controls provide the same functionality as normal network security controls; they just do it in a different environment. The cloud is not a system without controls.

Compute

The cloud has become a service operation where applications can be deployed, providing a form of cloud-based computing. The compute aspects of a cloud system have the same security issues as a traditional IT system; in other words, the fact that a compute element is in the cloud does not make it any more or less secure. What has to happen is that security requirements need to be addressed as data comes and goes from the compute element.

Security Groups

Security groups are composed of the set of rules and policies associated with a cloud instance. These rules can be network rules, such as rules for passing a firewall, or they can be IAM rules with respect to who can access or interact with an object on the system. Security groups are handled differently by each cloud service provider, but in the end they provide a means of managing permissions in a limited granularity mode. Different providers have different limits, but in the end the objective is to place users into groups rather than to perform individual checks for every access request. This is done to manage scalability, which is one of the foundational elements of cloud computing.
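
The idea of placing users into groups, rather than checking each user individually, can be sketched as a lookup through group membership. The group names, users, and actions are hypothetical, and this covers only the IAM side of security groups, not network rules.

```python
# Hypothetical groups and memberships, for illustration only.
GROUP_PERMISSIONS = {
    "admins": {"read", "write", "delete"},
    "analysts": {"read"},
}
USER_GROUPS = {
    "alice": {"admins"},
    "bob": {"analysts"},
}

def is_permitted(user, action):
    """Allow the action if any of the user's groups grants it."""
    groups = USER_GROUPS.get(user, set())
    return any(action in GROUP_PERMISSIONS.get(group, set()) for group in groups)

print(is_permitted("alice", "delete"))  # True
print(is_permitted("bob", "write"))     # False
print(is_permitted("mallory", "read"))  # False: unknown users get nothing
```

Adding a thousand analysts changes only the membership table, not the permission rules, which is the scalability benefit the text describes.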

Dynamic Resource Allocation

A cloud-based system has certain hallmark characteristics besides just being on another computer. Among these characteristics is providing scalable, reliable computing in a cost-efficient manner. Having a system whose resources can grow and shrink as the compute requirements change, without the need to buy new servers, expand systems, and so on, is one of the primary advantages of the cloud. Cloud service providers offer more than just bare hardware. One of the values associated with the cloud is its ability to grow as the load increases and to shrink (thus saving costs) as the load decreases. Cloud service providers manage this using dynamic resource allocation software that monitors the levels of performance. In accordance with the service agreement, they can act to increase resources incrementally as needed.
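
The monitor-and-adjust loop behind dynamic resource allocation can be sketched as a step function that adds or removes one unit of resource per monitoring cycle. The utilization thresholds and unit limits are assumptions for the example, standing in for values a service agreement would define.

```python
def adjust(current, cpu_utilization, low=0.30, high=0.75,
           min_units=1, max_units=10):
    """Return the new number of resource units after one monitoring cycle."""
    if cpu_utilization > high and current < max_units:
        return current + 1  # load is high: grow by one increment
    if cpu_utilization < low and current > min_units:
        return current - 1  # load is low: shrink to save cost
    return current

units = 2
history = []
for load in [0.80, 0.90, 0.50, 0.20, 0.10, 0.10]:
    units = adjust(units, load)
    history.append(units)
print(history)  # [3, 4, 4, 3, 2, 1]
```

The gap between the low and high thresholds keeps the allocation steady under moderate load instead of flapping up and down on every measurement.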

Instance Awareness

Just as enterprises have moved to the cloud, so too have attackers. Command-and-control networks can be spun up in cloud environments, just as they are on real enterprise hardware. This creates a situation where a cloud is communicating with another cloud, and how does the first cloud understand if the second cloud is legit? Instance awareness is the name of a capability that must be enabled on firewalls, secure web gateways, and cloud access security brokers (CASBs) to determine if the next system in a communication chain is legitimate or not. Take a cloud-enabled service such as Google Drive, Microsoft OneDrive, or Box, or any other cloud-based storage. Do you block them all? Or do you determine by instance which are legit and which are not? This is a relatively new and advanced feature, but one that is becoming increasingly important to prevent data disclosures and other issues from integrating cloud apps with unauthorized endpoints.
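
Deciding by instance, rather than blocking or allowing a whole service, can be sketched as matching both the service host and the tenant in a request URL against a list of sanctioned instances. The host name, the tenant-in-path URL layout, and the tenant identifier are all hypothetical; real services identify instances in different ways.

```python
from urllib.parse import urlsplit

# Hypothetical (host, tenant) pairs the organization has sanctioned.
SANCTIONED = {
    ("storage.example.com", "acme-corp"),
}

def is_sanctioned(url):
    """Allow only known instances of a cloud service, not the whole service."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    segments = [s for s in parts.path.split("/") if s]
    # Assumption: the first path segment names the tenant (instance).
    tenant = segments[0].lower() if segments else ""
    return (host, tenant) in SANCTIONED

print(is_sanctioned("https://storage.example.com/acme-corp/report.pdf"))     # True
print(is_sanctioned("https://storage.example.com/personal-123/report.pdf"))  # False
```

The second URL points at the same service but a different instance, which is the case a simple domain allow list cannot distinguish.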

Virtual Private Cloud (VPC) Endpoint

A virtual private cloud endpoint allows connections to and from a virtual private cloud instance. VPC endpoints are virtual elements that can scale. They are also redundant and typically highly available. A VPC endpoint provides a means to connect a VPC to other resources without going out over the Internet. View it as a secure tunnel to directly access other cloud-based resources without exposing the traffic to other parties. VPC endpoints can be programmable to enable integration with IAM and other security solutions, enabling cross-cloud connections securely.

A VPC endpoint provides a means to connect a VPC to other resources without going out over the Internet. In other words, you don’t need additional VPN connection technologies or even an Internet gateway.

Container Security

Container security is the process of implementing security tools and policies to ensure your container is running as intended. Container technology allows applications and their dependencies to be packaged together into one operational element. This element, also commonly called a manifest, can be version-controlled, deployed, replicated, and managed across an environment. Containers can contain all the necessary OS elements for an application to run; they can be considered self-contained compute platforms. Security can be designed into the containers, as well as enforced in the environment in which the containers run. Running containers in cloud-based environments is a common occurrence because the ease of managing and deploying the containers fits the cloud model well. Most cloud providers have container-friendly environments that enable the necessary cloud environment security controls as well as allow the container to make its own security decisions within the container.

Cloud-based computing has requirements to define who can do what (security groups) and what can happen and when (dynamic resource allocation) as well as to manage the security of embedded entities such as containers. Some of these controls are the same as in traditional IT (security groups and containers), while other controls are unique to the cloud environment (dynamic resource allocations).

Security as a Service

Just as one can get Software as a Service or Infrastructure as a Service, one can contract with a security firm for Security as a Service, which is the outsourcing of security functions to a vendor that has advantages in scale, costs, or speed. Security is a complex, wide-ranging cornucopia of technical specialties, all working together to provide appropriate risk reductions in today’s enterprise. This means there are technical people, management, specialized hardware and software, and fairly complex operations, both routine and in response to incidents. Any or all of this can be outsourced to a security vendor, and firms routinely examine vendors for solutions where the business economics make outsourcing attractive.

Different security vendors offer different specializations—from network security to web application security, e-mail security, incident response services, and even infrastructure updates. These can all be managed from a third party. Depending on architecture, needs, and scale, these third-party vendors can oftentimes offer a compelling economic advantage for part of a security solution.

Several types of items are delivered as a service—software, infrastructure, platforms, cloud access, and security—each with a specific deliverable and value proposition.

Managed Security Service Provider (MSSP)

A managed service provider (MSP) is a company that remotely manages a customer’s IT infrastructure. A managed security service provider (MSSP) does the same thing for security: it is a third party that manages a customer’s security services. For each of these services, the devil is in the details. The scope of the engagement is defined by the contract: what the contract specifies is what the third party provides, and nothing else. For example, if you don’t have managing backups as part of the contract, either you do it yourself or you have to modify the contract. Managed services provide the strength of a large firm but at a fraction of the cost that a small firm would have to pay to get that scale. So, obviously, there are advantages. However, the downside is flexibility, as there is no room for change without renegotiating the contract for services.

Cloud Security Solutions

Cloud security solutions are similar to traditional IT security solutions in one simple way: there is no easy, magic solution. Security is achieved through multiple actions designed to ensure the security policies are being followed. Whether in the cloud or in an on-premises environment, security requires multiple activities, with metrics, reporting, management, and auditing to ensure effectiveness. With respect to the cloud, some specific elements need to be considered, mostly in interfacing existing enterprise IT security efforts with the methods employed in the cloud instance.

Cloud Access Security Broker (CASB)

Cloud access security brokers (CASBs) are integrated suites of tools or services focused on cloud security, offered as Security as a Service or by third-party managed security service providers (MSSPs). CASB vendors provide a range of security services designed to protect cloud infrastructure and data. CASBs act as security policy enforcement points between cloud service providers and their customers to enact enterprise security policies as the cloud-based resources are utilized.

A CASB is a security policy enforcement point that is placed between cloud service consumers and cloud service providers to manage enterprise security policies as cloud-based resources are accessed. A CASB can be an on-premises or cloud-based item; the key is that it exists between the cloud provider and customer connection, thus enabling it to mediate all access. Enterprises use CASB vendors to address cloud service risks, enforce security policies, and comply with regulations. A CASB solution works wherever the cloud services are located, even when they are beyond the enterprise perimeter and out of the direct control of enterprise operations. CASBs work at both the bulk and microscopic scale. They can be configured to block some types of access like a sledgehammer, while also operating as a scalpel, trimming only specific elements. They do require an investment in the development of appropriate strategies in the form of data policies that can be enforced as data moves to and from the cloud.

A CASB is a security policy enforcement point that is placed between cloud service consumers and cloud service providers to manage enterprise security policies as cloud-based resources are accessed.
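To make the "sledgehammer" and "scalpel" modes of a CASB concrete, the following Python sketch evaluates each request against two kinds of data policy. It is an illustration under stated assumptions: the service names, the data labels, and the `Request` structure are hypothetical, and a real CASB would obtain labels from a data classification engine rather than from the caller.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    user: str
    service: str            # hypothetical service name, e.g. "file-share"
    action: str             # e.g. "upload" or "download"
    data_labels: frozenset  # labels assumed to come from a data classifier


# Sledgehammer: every request to these services is refused.
BLOCKED_SERVICES = {"unsanctioned-share"}

# Scalpel: only uploads carrying these labels are refused.
RESTRICTED_LABELS = {"pii", "source-code"}


def decide(req: Request) -> str:
    """Return 'allow' or 'deny' for a request passing through the broker."""
    if req.service in BLOCKED_SERVICES:
        return "deny"
    if req.action == "upload" and req.data_labels & RESTRICTED_LABELS:
        return "deny"
    return "allow"
```

Because every request passes through `decide`, the broker mediates all access. The policy sets are the data policies that the enterprise must invest in developing.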

Application Security

When applications are provided by the cloud, application security is part of the equation. Again, this immediately becomes an issue of potentially shared responsibility based on the cloud deployment model chosen. If the customer has the responsibilities for securing the applications, then the issues are the same as in the enterprise, with the added twist of maintaining software on a different platform—the cloud. Access to the application for updating as well as auditing and other security elements must be considered and factored into the business decision behind the model choice.

If the cloud service provider is responsible, there can be economies of scale, and the providers have the convenience of having their own admins maintain the applications. However, with that comes the cost, and the issues of auditing to ensure it is being done correctly. At the end of the day, the concept of what needs to be done with respect to application security does not change just because it is in the cloud. What does change is who becomes responsible for it and how it is accomplished in the remote environment. As with other elements of potential shared responsibilities, this is something that needs to be determined before the cloud agreement is signed; if it is not in the agreement, then it is solely on the user to provide the responses.

Firewall Considerations in a Cloud Environment

Firewalls are needed in cloud environments in the same manner they are needed in traditional IT environments. In cloud computing, the network perimeter has essentially disappeared; it is a series of services running outside the traditional IT environment and connected via the Internet. To the cloud, the user’s physical location and the device they’re using no longer matter. The cloud needs a firewall blocking all unauthorized connections to the cloud instance. In some cases, this is built into the cloud environment; in others, it is up to the enterprise or cloud customer to provide this functionality.

Cost

The first question on every manager’s mind is cost—what will this cost me? There are cloud environments that are barebones and cheap, but they also don’t come with any built-in security functionality, such as a firewall, leaving it up to the customer to provide. Therefore, this needs to be included in the cost comparisons to cloud environments with built-in firewall functionality. The cost of a firewall is not just in the procurement but also the deployment and operation. And all of these factors need to be included, not only for firewalls around the cloud perimeter, but internal firewalls used for segmentation as well.

Need for Segmentation

As previously discussed, segmentation can provide additional opportunities for security checks between critical elements of a system. Take the database servers that hold the crown jewels of a corporation’s data, be that intellectual property (IP), business information, or customer records. Each enterprise has its own flavor, but all have critical records whose loss or disclosure would be a serious problem. Segmenting off this element of the network and only allowing access to a small set of defined users at the local segment is a strong security protection against attackers traversing your network. It can also act to keep malware and ransomware from hitting these critical resources. Firewalls are used to create the segments, and the need for them must be managed as part of the requirements package for designing and creating the cloud environment.
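A segmentation rule of this kind can be sketched as a default-deny check in front of the database segment. The address ranges and the port below are assumptions chosen for illustration only; they do not come from any particular cloud provider.

```python
import ipaddress

# Hypothetical addressing plan for the example.
DB_SEGMENT = ipaddress.ip_network("10.0.20.0/24")          # protected database segment
ALLOWED_SOURCES = [ipaddress.ip_network("10.0.10.0/28")]   # small set of defined hosts
DB_PORT = 5432                                             # assumed database port


def permit(src: str, dst: str, port: int) -> bool:
    """Default-deny rule for the firewall fronting the database segment."""
    src_ip = ipaddress.ip_address(src)
    dst_ip = ipaddress.ip_address(dst)
    if dst_ip not in DB_SEGMENT or port != DB_PORT:
        return False
    # Only the defined source networks may reach the segment.
    return any(src_ip in net for net in ALLOWED_SOURCES)
```

An attacker who compromises a host outside the allowed range cannot reach the database segment directly, which is the traversal protection the paragraph describes.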

Open Systems Interconnection (OSI) Layers

The Open Systems Interconnection (OSI) layers act as a means of describing the different levels of communication across a network. From the physical layer (layer 1) to the network layer (layer 3) is the standard realm of networking. Layer 4, the transport layer, is where TCP and UDP function, and the layers above it, up through layer 7, the application layer, are where applications work. This is relevant with respect to firewalls because most modern application-level attacks do not occur at layers 1–3, but rather happen in layers 4–7. This leaves traditional IT firewalls poorly equipped to see and stop most modern attacks. Modern next-generation firewalls and secure web gateways operate higher in the OSI model, including up to the application layer, to make access decisions. These devices are more powerful and require significantly more information and effort to use effectively, but with integrated security orchestration, automation, and response (SOAR) frameworks and systems, they are becoming valuable components in the security system. Because cloud networking is a virtualized function, many of the old network-based attacks will not function on the cloud network, but the need is still there for the higher-level detection of the next-generation devices. These are the typical firewall and security appliances that are considered essential in cloud environments.

Modern next-generation firewalls and secure web gateways operate higher in the OSI model, using application layer data to make access decisions.
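The difference between filtering on lower-layer information and filtering on application layer data can be illustrated with a toy example. The `Flow` structure and the short list of suspicious markers are hypothetical; actual next-generation firewalls use far richer inspection than simple substring matching.

```python
from dataclasses import dataclass


@dataclass
class Flow:
    src_ip: str
    dst_port: int    # transport layer (layer 4) information
    http_path: str   # application layer (layer 7) information


ALLOWED_PORTS = {80, 443}
# Toy signatures for illustration only.
SUSPICIOUS_MARKERS = ("../", "<script", "' or ")


def port_filter(flow: Flow) -> bool:
    """Traditional check: permit based on the destination port alone."""
    return flow.dst_port in ALLOWED_PORTS


def application_filter(flow: Flow) -> bool:
    """Next-generation style check: also inspect the application payload."""
    if not port_filter(flow):
        return False
    path = flow.http_path.lower()
    return not any(marker in path for marker in SUSPICIOUS_MARKERS)
```

A path traversal request sent to port 443 passes `port_filter` but is refused by `application_filter`, which is why devices that can see application layer data catch attacks that port-based rules miss.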

Cloud-native Controls vs. Third-party Solutions

When one is looking at cloud security automation and orchestration tools, there are two sources. First is the set provided by the cloud service provider. These cloud-native controls vary by provider and by specific offering that an enterprise subscribes to as part of the user agreement and service license. Third-party tools also exist that the customer can license and deploy in the cloud environment. The decision is one that should be based on a comprehensive review of requirements, including both capabilities and cost of ownership. This is not a simple binary A-or-B choice; there is much detail to consider. How will each integrate with the existing security environment? How will the operation be handled, and who will have to learn what tools to achieve the objectives? This is a complete review of the people, processes, and technologies, because any of the three can make or break either of these deployments.

Virtualization

Virtualization technology is used to enable a computer to have more than one OS present and, in many cases, operating at the same time. Virtualization is an abstraction of the OS layer, creating the ability to host multiple OSs on a single piece of hardware. To enable virtualization, a hypervisor is employed. A hypervisor is a low-level program that allows multiple operating systems to run concurrently on a single host computer. Hypervisors use a thin layer of code to allocate resources in real time. The hypervisor acts as the traffic cop that controls I/O and memory management. One of the major advantages of virtualization is the separation of the software and the hardware, creating a barrier that can improve many system functions, including security. The underlying hardware is referred to as the host machine, and on it is a host OS. Either the host OS has built-in hypervisor capability or an application is needed to provide the hypervisor function to manage the virtual machines (VMs). The virtual machines are typically referred to as guest OSs. Two types of hypervisors exist: Type I and Type II.

A hypervisor is the interface between a virtual machine and the host machine hardware. Hypervisors comprise the layer that enables virtualization.

Type I

Type I hypervisors run directly on the system hardware. They are referred to as native, bare-metal, or embedded hypervisors in typical vendor literature. Type I hypervisors are designed for speed and efficiency, as they do not have to operate through another OS layer. Examples of Type I hypervisors include KVM (Kernel-based Virtual Machine, a Linux implementation), Xen (Citrix Linux implementation), Microsoft Windows Server Hyper-V (a headless version of the Windows OS core), and VMware’s vSphere/ESXi platforms. All of these Type I hypervisors are designed for the high-end server market in enterprises and are designed to allow multiple VMs on a single set of server hardware. These platforms come with management tool sets to facilitate VM management in the enterprise.

Type II

Type II hypervisors run on top of a host operating system. In the beginning of the virtualization movement, Type II hypervisors were the most popular. Administrators could buy the VM software and install it on a server they already had running. Typical Type II hypervisors include Oracle’s VirtualBox and VMware’s VMware Player. These are designed for limited numbers of VMs, typically running in a desktop or small server environment.

Virtual Machine (VM) Sprawl Avoidance

Sprawl is the uncontrolled spreading and disorganization caused by lack of an organizational structure when many similar elements require management. Just as you can lose track of a file in a large file directory and have to hunt for it, you can lose track of a VM among many others that have been created. VMs basically are files that contain a copy of a working machine’s disk and memory structures. Creating a new VM is a simple process. If an organization has only a couple of VMs, keeping track of them is relatively easy. But as the number of VMs grows rapidly over time, sprawl can set in. VM sprawl is a symptom of a disorganized structure. An organization needs to implement VM sprawl avoidance through policy. It can avoid VM sprawl through naming conventions and proper storage architectures so that the files are in the correct directory/folder, making finding the correct VM easy and efficient. But as in any filing system, it works only if everyone routinely follows the established policies and procedures to ensure that proper VM naming and filing are performed.
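A naming policy is easier to follow when it can be checked automatically. The following sketch audits a list of VM names against a convention. The convention itself (environment, team, role, and a two-digit number separated by hyphens) is a hypothetical example, not a standard; an organization would substitute its own pattern.

```python
import re

# Hypothetical convention: <env>-<team>-<role>-<two-digit number>, e.g. prod-fin-db-01
NAME_PATTERN = re.compile(r"(prod|test|dev)-[a-z]+-[a-z]+-\d{2}")


def find_nonconforming(vm_names):
    """Return the sorted VM names that do not follow the naming convention."""
    return sorted(name for name in vm_names if not NAME_PATTERN.fullmatch(name))
```

Running a check like this on a schedule gives administrators a list of VMs to rename or retire before sprawl sets in.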

One of the strongest business cases for an integrated VM management tool such as ESXi Server from VMware is its ability to enable administrators to manage VMs and avoid sprawl. Being able to locate and use resources when required is an element of security, specifically availability, and sprawl causes availability issues.

VM Escape Protection

When multiple VMs are operating on a single hardware platform, one concern is VM escape, which is where software, either malware or an attacker, escapes from one VM to the underlying OS. Once the VM escape occurs, the attacker can attack the underlying OS or resurface in a different VM. When you examine the problem from a logical point of view, both VMs use the same RAM, the same processors, and so forth; the difference is one of timing and specific combinations. While the VM system is designed to provide protection, as with all things of larger scale, the devil is in the details. Large-scale VM environments have specific modules designed to detect escape and provide VM escape protection to other modules.

VM sprawl and VM escape are different, and each poses specific issues to the deployment environment.

VDI/VDE

Virtual desktop infrastructure (VDI) and virtual desktop environment (VDE) are terms used to describe the hosting of a desktop environment on a central server. There are several advantages to this desktop environment. From a user perspective, their “machine” and all of its data are persisted in the server environment. This means that a user can move from machine to machine and have a singular environment following them around. And because the end-user devices are just simple doors back to the server instance of the user’s desktop, the computing requirements at the edge point are considerably lower and can be performed on older machines. Users can utilize a wide range of machines, even mobile phones, to access their desktop and get their work finished. Security can be a very large advantage of VDI/VDE. Because all data, even when being processed, resides on servers inside the enterprise, there is nothing to compromise if a device is lost.

Fog Computing

Cloud computing has been described by pundits as using someone else’s computer. If this is the case, then fog computing is using someone else’s computers. Fog computing is a distributed form of cloud computing, in which the workload is performed on a distributed, decentralized architecture. Originally developed by Cisco, fog computing moves some of the work into the local space to manage latency issues, with the cloud being less synchronous. In this form, it is similar to edge computing, which is described in the next section.

Fog computing is an architecture where devices mediate processing between local hardware and remote servers. It regulates which information is sent to the cloud and which is processed locally, with results returned to the user immediately and forwarded to the cloud subject to the cloud’s latency. One can view fog computing as using intelligent gateways that handle immediate needs while managing the cloud’s more efficient data storage and processing. This makes fog computing an adjunct to cloud, not a replacement.
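The intelligent gateway idea can be sketched as follows. This is a simplified model under stated assumptions: the `FogGateway` class, the alarm threshold, and the batch size are hypothetical, and the `cloud_uploads` list stands in for a call to a real cloud service.

```python
from statistics import mean


class FogGateway:
    """Answers locally at once and forwards summarized batches to the cloud."""

    def __init__(self, alarm_threshold: float, batch_size: int):
        self.alarm_threshold = alarm_threshold
        self.batch_size = batch_size
        self.buffer = []         # readings waiting to be summarized
        self.cloud_uploads = []  # stand-in for a cloud API call

    def ingest(self, reading: float) -> str:
        """Process one reading locally; upload a batch average when the buffer is full."""
        self.buffer.append(reading)
        if len(self.buffer) >= self.batch_size:
            self.cloud_uploads.append(mean(self.buffer))
            self.buffer.clear()
        # The immediate decision never waits on the cloud.
        return "alarm" if reading > self.alarm_threshold else "ok"
```

The user gets the alarm decision immediately, while the cloud receives only the batch averages for storage and later processing.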

Edge Computing

Edge computing refers to computing performed at the edge of a network. Edge computing has been driven by network vendors who have processing power on the network and want new markets rather than just relying on existing markets. Edge computing is similar to fog computing in that it is an adjunct to existing computing architectures—one that is designed for speed. The true growth in edge computing has occurred with the Internet of Things (IoT) revolution. This is because edge computing relies on what one defines as “the edge,” coupled with the level of processing needed. In many environments, the actual edge is not as large as one might think, and what some would call edge computing is better accomplished using fog computing. But when you look at a system such as IoT, where virtually every device may be an edge, then the issue of where to do computing comes into play—on the tiny IoT device with limited resources or at the nearest device with computing power. This has led networking companies to create devices that can manage the data flow and do the computing on the way.

Edge computing brings processing closer to the edge of the network, which optimizes web applications and IoT devices.

Thin Client

A thin client is a lightweight computer, with limited resources, whose primary purpose is to communicate with another machine. Thin clients can be very economical when they are used to connect to more powerful systems. Rather than having 32 GB of memory, a top-level processor, a high-end graphics card, and a large storage device on every desktop, where most of the power goes unused, the thin client allows access to a server where the appropriate resources are available and can be shared. With cloud computing and virtualization, where processing, storage, and even the apps themselves exist on servers in the cloud, what is needed is a device that connects to that power and acts as an input/output device.

Containers

Virtualization enables multiple OS instances to coexist on a single hardware platform. The concept of containers is similar, but rather than having multiple independent OSs, a container holds the portions of an OS that it needs separate from the kernel. Therefore, multiple containers can share an OS, yet have separate memory, CPU, and storage threads, guaranteeing that they will not interact with other containers. This allows multiple instances of an application or different applications to share a host OS with virtually no overhead. This also allows portability of the application to a degree separate from the OS stack. Multiple major container platforms exist, such as Docker. Rather than adopt a specific industry solution, the industry has coalesced around a standard form called the Open Container Initiative (OCI), designed to enable standardization and the market stability of the environment. Different vendors in the container space have slightly different terminologies, so you need to check with your specific implementation by vendor to understand the exact definition of container and cell in their environment.

You can think of containers as the evolution of the VM concept to the application space. A container consists of an entire runtime environment bundled into one package: an application, including all its dependencies, libraries, and other binaries, and the configuration files needed to run it. This eliminates the differences between development, test, and production environments, because everything the application depends on travels inside the container in a standard form. By containerizing the application platform, including its dependencies, any differences in OS distributions, libraries, and underlying infrastructure are abstracted away and rendered moot.

Containers are a form of operating system virtualization. They are a packaged-up combination of code and dependencies that help applications run quickly in different computing environments.

Microservices/API

An application programming interface (API) is a means for specifying how one interacts with a piece of software. Let’s use a web service as an example: if it uses the Representational State Transfer (REST) API, then the defined interface is a set of four actions expressed in HTTP:

• GET Get a single item or a collection.

• POST Add an item to a collection.

• PUT Edit an item that already exists in a collection.

• DELETE Delete an item in a collection.
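The four actions above can be sketched as a small in-memory dispatcher. This is an illustration only: the `ItemCollection` class and its `handle` method are hypothetical names, no network server is involved, and the status codes shown are conventional HTTP choices rather than requirements of any specific service.

```python
class ItemCollection:
    """In-memory collection that maps the four HTTP actions to operations."""

    def __init__(self):
        self._items = {}
        self._next_id = 1

    def handle(self, method, item_id=None, body=None):
        """Return a (status_code, payload) pair for the given action."""
        if method == "GET":
            if item_id is None:
                return 200, dict(self._items)      # the whole collection
            if item_id in self._items:
                return 200, self._items[item_id]   # a single item
            return 404, None
        if method == "POST":
            new_id = self._next_id
            self._next_id += 1
            self._items[new_id] = body             # add an item
            return 201, new_id
        if method == "PUT":
            if item_id not in self._items:
                return 404, None
            self._items[item_id] = body            # edit an existing item
            return 200, body
        if method == "DELETE":
            if item_id not in self._items:
                return 404, None
            del self._items[item_id]               # delete an item
            return 204, None
        return 405, None                           # action not in the interface
```

A caller needs to know only the four actions and the shape of an item. How the collection is stored behind the interface can change without affecting the caller.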

Microservices is a different architectural style. Rather than defining the inputs and outputs, microservices divide a system into a series of small modules that can be coupled together to produce a complete system. Each of the modules in a microservices architecture is designed to be lightweight, with simple interfaces and structurally complete. This allows for more rapid development and maintenance of code.

Serverless Architecture

When an infrastructure is established “on premises,” the unit of computing power is a server. To set up e-mail, you set up a server. To set up a website, you set up a server. The same issues exist for storage: Need storage? Buy disks. Yes, these disks can all be shared, but in the end, computing is servers, and storage is disks. With the cloud, this all changes. The cloud is like the ultimate shared resource, and with many large providers, you don’t specify servers or disks; instead, you specify capacity. The provider then spins up the required resources. This serverless architecture simplifies a lot of things and adds significant capabilities. By specifying the resources needed in terms of processing power, the cloud provider can spin up the necessary resources. Because you are in essence renting from a large pool of resources, this gives you the ability to have surge capacity, where for a period of time you increase capacity for some specific upturn in usage. One of the operational advantages of this is that cloud providers can make these changes via automated scripts that can occur almost instantaneously, as opposed to the on-premises problem of procurement and configuration. This architecture also supports service integration, thus expanding the utility of computing to the business.

Serverless architecture is a way to develop and run applications and services without owning and managing an infrastructure. Servers are still used, but they are owned and managed “off-premises.”

Chapter 18 Review

Chapter Summary

After reading this chapter and completing the exercises, you should understand the following about cloud computing and virtualization concepts.

Explore cloud security controls

• Cloud computing is characterized by the capabilities of on-demand self-service, resource pooling, rapid elasticity and scalability, and measured service.

• Cloud deployments use security controls just like normal IT deployments.

Compare and contrast cloud security solutions

• Cloud computing service models include IaaS, PaaS, SaaS, and XaaS.

• Cloud models can be on-premises, hosted, private, public, community, or hybrid.

Learn about cloud native controls versus third-party solutions

• Transit gateways connect clouds to customers.

• The connection between a cloud and a user is managed by a CASB.

Explore virtualization

• Hypervisors come in two types: Type I (on bare metal) and Type II (on a host OS).

• Enterprise VM deployments at scale can result in VM sprawl.

• Software can leave a VM and cross to other VMs via VM escape mechanisms.

• Containers are a means of packaging and managing apps, in a manner similar to how VMs manage OS instances.

Key Terms

Anything as a Service (XaaS)

cloud access security brokers (CASBs)

cloud computing

cloud service providers (CSPs)

community cloud

container security

edge computing

fog computing

high availability

hybrid cloud

hypervisor

Infrastructure as a Service (IaaS)

infrastructure as code

instance awareness

managed security service provider (MSSP)

on-premises

Platform as a Service (PaaS)

private cloud

public cloud

secrets management

Security as a Service

serverless architecture

Software as a Service (SaaS)

transit gateway

Type I hypervisors

Type II hypervisors

virtual desktop environment (VDE)

virtual desktop infrastructure (VDI)

virtual private cloud endpoint

virtualization

VM escape

VM sprawl

Key Terms Quiz

Use terms from the Key Terms list to complete the sentences that follow. Don’t use the same term more than once. Not all terms will be used.

1. _______________ is a distributed form of cloud computing, where the workload is performed on a distributed, decentralized architecture.

2. A(n) _______________ allows connections to and from a virtual private cloud instance.

3. A(n) _______________ structure is one where elements are combined from private, public, and community cloud structures.

4. A(n) _______________ is a network connection that is used to interconnect virtual private clouds (VPCs) and on-premises networks.

5. Specifying compute requirements in terms of resources needed (for example, processing power and storage) is an example of _______________.

6. _______________ is the infrastructure needed to enable the hosting of a desktop environment on a central server.

7. _______________ is the term used to denote the policies and procedures employed to connect the IAM systems of the enterprise and the cloud to enable communication with the data.

8. _______________ is the term used to describe the offering of a computing platform in the cloud.

9. When software, either malware or an attacker, escapes from one VM to the underlying OS, this is referred to as _______________.

10. The name of a capability that must be enabled on firewalls, secure web gateways, and cloud access security brokers (CASBs) to determine if the next system in a communication chain is legitimate or not is called _______________.

Multiple-Choice Quiz

1. The policies and procedures employed to connect the IAM systems of the enterprise and the cloud to enable communication with the data are referred to as what?

A. API inspection and integration

B. Secrets management

C. Dynamic resource allocation

D. Container security

2. You have deployed a network of Internet-connected sensors across a wide geographic area. These sensors are small, low-power IoT devices, and you need to perform temperature conversions and collect the data into a database. The calculations would be best managed by which architecture?

A. Fog computing

B. Edge computing

C. Thin client

D. Decentralized database in the cloud

3. Resource policies involve all of the following except?

A. Permissions

B. IAM

C. Cost

D. Access

4. Why is VM sprawl an issue?

A. VM sprawl uses too many resources on parallel functions.

B. The more virtual machines in use, the harder it is to migrate a VM to a live server.

C. Virtual machines are so easy to create that you end up with hundreds of small servers only performing a single function.

D. When servers are no longer physical, it can be difficult to locate a specific machine.

5. Which of the following is a security policy enforcement point placed between cloud service consumers and cloud service providers to manage enterprise security policies as cloud-based resources are accessed?

A. SWG

B. VPC endpoint

C. CASB

D. Resource policies

6. You are planning to move some applications to the cloud, including your organization’s accounting application, which is highly customized and does not scale well. Which cloud service model is best for this application?

A. SaaS

B. PaaS

C. IaaS

D. None of the above

7. Which is the most critical element in understanding your current cloud security posture?

A. Cloud service agreement

B. Networking security controls

C. Encryption

D. Application security

8. One of the primary resources in use at your organization is a standard database that many applications tie into. Which cloud service model is best for this kind of application?

A. SaaS

B. PaaS

C. IaaS

D. None of the above

9. Which cloud deployment model has the fewest security controls?

A. Private

B. Public

C. Hybrid

D. Community

10. What is the primary downside of a private cloud model?

A. Restrictive access rules

B. Cost

C. Scalability

D. Lack of vendor support

Essay Quiz

1. Compare and contrast VMs and containers.

2. What are the defining characteristics of cloud computing? What does it enable in the enterprise? How and when is it advantageous over standalone servers?

Lab Projects

• Lab Project 18.1

Experiment with virtual computing. Using a virtual machine solution (VirtualBox, VMware, or some other method), create a series of three virtual machines and connect them together using the virtual networking method provided by the VM vendor. Then experiment with using one machine as a source and another as the target (for example, web browser and web server or FTP client and server), with the third machine monitoring the activity via Wireshark and other network monitoring tools.

• Lab Project 18.2

Obtain a free account from one of the cloud service providers (Amazon, Oracle, or Microsoft) and create an instance of a data store and service in the cloud. Then explore how you connect and how things operate. How do you manage the instance, provisioning, security, and so on?