C H A P T E R 15

Walkthroughs, Code Reviews, and Inspections

Our objective with Inspections is to reduce the Cost of Quality by finding and removing defects earlier and at a lower cost. While some testing will always be necessary, we can reduce the costs of test by reducing the volume of defects propagated to test.

—Ron Radice (2002)

When you catch bugs early, you also get fewer compound bugs. Compound bugs are two separate bugs that interact: you trip going downstairs, and when you reach for the handrail it comes off in your hand.

—Paul Graham (2001)

Here's a shocker: your main quality objective in software development is to get a working program to your user that meets all their requirements and has no defects. That's right: your code should be perfect. It meets all the user's requirements and it has no errors in it when you deliver it. Impossible, you cry? Can't be done? Well, software quality assurance is all about trying to get as close to perfection as you can – albeit within time and budget. (You knew there was a catch, didn't you?)

Software quality is usually discussed from two different perspectives, the user's and the developer's. From the user's perspective, quality has a number of characteristics – things that your program must do in order to be accepted by the user – among which are:1

- Correctness: The software has to work, period.

- Usability: It has to be easy to learn and easy to use.

- Reliability: It has to stay up and be available when you need it.

- Security: The software has to prevent unauthorized access and it has to protect your data.

- Adaptability: It should be easy to add new features.

____________

1 McConnell, S. Code Complete 2: A Practical Handbook of Software Construction. (Redmond, WA: Microsoft Press, 2004.)

From the developer's perspective, things are a bit different. The developer wants to see the following:

- Maintainability: It has to be easy to make changes to the software.

- Portability: It has to be easy to move the software to a different platform.

- Readability: Many developers won't admit this, but you do need to be able to read the code.

- Understandability: The code needs to be designed in such a way that a new developer can understand how it all hangs together.

- Testability: Well, at least the testers think that your code should be easy to test. Code that is created in a modular fashion, with short functions that do only one thing, is much easier to understand and test than code that is all just one big main() function.

Software Quality Assurance (SQA) has three legs to it:

- Testing: Finding the errors that surface while your program is executing, also known as dynamic analysis.

- Debugging: Getting all the obvious errors out of your code, the ones that are found by testing it.

- Reviews: Finding the errors that are inherently in your code as it sits there, also known as static analysis.

Many developers – and managers – think that you can test your way to quality. You can't. As we saw in the last chapter, tests are limited. You often can't explore every code path, you can't test every possible data combination, and often your tests themselves are flawed. Tests can only get you so far. As Edsger Dijkstra famously said, “…program testing can be a very effective way to show the presence of bugs, but it is hopelessly inadequate for showing their absence.”2

Reviewing your code – reading it and looking for errors on the page – provides another mechanism for making sure that you've implemented the user's requirements and the resulting design correctly. In fact, most development organizations that use a plan-driven methodology will not only review code, they'll also review the requirements document, the architecture, the design specification, the test plan, the tests themselves, and the user documentation. In short, all the work products produced by the software development organization. Organizations that use an agile development methodology don't necessarily have all the documents mentioned above, but they do have requirements, user stories, user documentation, and especially code to review. In this chapter we'll focus on reviewing your code.

__________

2 Dijkstra, E. “The Humble Programmer.” CACM 15(10): 859-866. 1972.

Walkthroughs, Reviews, and Inspections – Oh My!

Testing alone is not a particularly effective way of finding errors in your code. In many cases, the combination of unit testing, integration testing, and system testing will only find about 50% or so of the errors in your program.3 But, if you add some type of code review (reading the code to find errors) to your testing regimen you can bring that percentage up to between 93% and 99% of all the errors in your code. Now that's an objective to shoot for.

There are three types of reviews that are typically done, and they work their way up from very informal techniques, to very formal methodologies. These reviews are typically done either right after you've got a clean compile of your code and before you unit test, or right after you finish your unit testing. It's better to do the reviews right after unit testing. Then you've got your changes made, you've got a clean compile, and you've done the first round of testing. That's a great time to have someone else take a look at your code.

Walkthroughs

Walkthroughs, also known as desk checks or code reads, are the least formal type of a review. Walkthroughs are normally used to confirm small changes to code, say a line or two, that you have just made to fix an error. If you've just added a new method to a class, or you've changed more than about 10 lines of code, under no circumstances should you do a walkthrough. Do a code review instead.

Walkthroughs involve two or at most three people: the author of the code and the reviewer. The author's job in a walkthrough is to explain to the reviewer what the change is supposed to do and to point out where the change was made. The reviewer's job is to understand the change and then read the code. Once the reviewer reads the code she makes one of two judgments; either she agrees that the change is correct, or she does not. If not, the author has to go back, fix the code again, and then do another walkthrough. If the reviewer thinks the change is correct, then the author can integrate the changed code back into the code base for integration testing.

If you're using an agile methodology and you're pair programming, a code walkthrough will happen naturally as you are implementing a task. The driver is writing the code and the navigator is looking over her shoulder, checking for errors and thinking ahead. In this case it's acceptable to use a walkthrough for a larger piece of code, but for a complete task, or better yet, for each user story that is implemented, you should do a code review or an inspection.

Code Reviews

Code reviews, on the other hand, are somewhat more formal than a walkthrough. Code reviews are what most software developers do. You should always do a code review if you've changed a substantial amount of code, or if you've added new code to an existing program. As mentioned, agile programmers should do code reviews when they finish a user story. Code reviews are real meetings.

There are usually between three and five attendees at a code review. The people who attend a code review should each bring a different perspective to the meeting.

____________

3 McConnell, 2004.

- The moderator of the code review is usually the author. It's the moderator's job to call the meeting, send out the work to be reviewed well before the meeting time, and to run the code review meeting. The moderator may also take notes at the meeting.

- There should be one or more developers at the meeting; someone who is working on the same project as the author. This person will bring detailed knowledge of the project to the meeting and assume that perspective.

- There should be a tester at the code review. This person brings the testing perspective and not only reads the code being reviewed, but thinks about ways the code should be tested.

- Finally, there should be an experienced developer present who is not on the same project as the author. This person is the “disinterested third-party” who represents the quality perspective. Their job at the code review is to understand the code and get the author to explain the changes clearly. This person provides a more strategic vision about the code and how it fits into the project.

Oh, and no managers are allowed at code reviews. The presence of a manager changes the dynamics of the meeting and makes the code review less effective. People who might be willing to honestly critique a piece of code among peers will clam up in the presence of a manager; this doesn't help find errors. No managers, please.

The objective of a code review is to find errors in the code. It is not to fix them. Code reviews are informal enough that some discussion of fixes may occur, but that should be kept to a minimum. Before the code review meeting, all the participants should go over the materials sent out by the moderator and prepare a list of errors they find. This step is critical to making the review meeting efficient and successful. Do your homework!

This list should be given to the moderator at the beginning of the meeting. The author (who may also be the moderator) goes through the code changes, explaining them and how they either fix the error they were intended to fix, or add the new feature that was required. If an error or a discussion leads the review meeting off into code that was not in the scope of the original review – Stop! Be very careful about moving off into territory that hasn't been pre-read. You should treat any code not in the scope of the review as a black box. Schedule another meeting instead. Remember, the focus of the code review is on a single piece of code and finding errors in that piece of code. Don't be distracted.

A computer and projector are essential at the code review so that everyone can see what's going on all the time. A second computer should be used so that someone (usually the author) can take notes about errors found in the code. A code review should not last more than about two hours or review more than about 200–500 lines of code because everyone's productivity will begin to suffer after about that amount of time or reading.

After the code review, the notes are distributed to all the participants and the author is charged with fixing all the errors that were found during the review. If you run out of time, then another review is scheduled. While metrics aren't required for code reviews, the moderator should at least keep track of how many errors were found, how many lines of code were reviewed, and if appropriate, the severity of each of the errors. These metrics are very useful to gauge productivity and should be used in planning the next project.

Code Inspections

Code inspections are the most formal type of review meeting. The sole purpose of an inspection is to find defects in a document. Inspections can be used to review planning documents, requirements, designs, or code, in short, any work product that a development team produces. Code inspections have specific rules regarding how many lines of code to review at once, how long the review meeting must be, and how much preparation each member of the review team should do, among other things. Inspections are typically used by larger organizations because they take more time and effort than walkthroughs or code reviews. They are also used for mission and safety-critical software where defects can cause harm to users. The most widely known inspection methodology was invented by Michael Fagan in 1976. Fagan's process was the first formal software inspection process proposed and as such, has been very influential. Most organizations that use inspections use a variation of the original Fagan software code inspection process.4 Code inspections have several very important criteria, including:

- Inspections use checklists of common error types to focus the inspectors.

- The focus of the inspection meeting is solely on finding errors; no solutions are permitted.

- Reviewers are required to prepare beforehand; the inspection meeting will be canceled if everyone isn't ready.

- Each participant in the inspection has a distinct role.

- All participants have had inspection training.

- The moderator is not the author and has had special training in addition to the regular inspection training.

- The author is always required to follow up on errors reported in the meeting with the moderator.

- Metrics data is always collected at an inspection meeting.

Inspection Roles

The following are the roles used in code inspections:

- Moderator: The moderator gets all the materials from the author, decides who the other participants in the inspection should be, and is responsible for sending out all the inspection materials and scheduling and coordinating the meeting. Moderators must be technically competent; they need to understand the inspection materials and keep the meeting on track. The moderator schedules the inspection meeting and sends out the checklist of common errors for the reviewers to peruse. They also follow-up with the author on any errors found in the inspection, so they must understand the errors and the corrections. Moderators attend an additional inspection-training course to help them prepare for their role.

- Author: The author distributes the inspection materials to the moderator. If an Overview meeting is required, the author chairs it and explains the overall design to the reviewers. Overview meetings are discouraged in code inspections, because they can “taint the evidence” by injecting the author's opinions about the code and the design before the inspection meeting. Sometimes, however, if many of the reviewers are not familiar with the project an Overview meeting is necessary. The author is also responsible for all rework that is created as a result of the inspection meeting. During the inspection the author answers questions about the code from the reviewers, but does nothing else.

- Reader: The reader's role is to read the code. Actually, the reader is supposed to paraphrase the code, not read it. This implies that the reader has a good understanding of the project, its design and the code in question. The reader does not explain the code; he just paraphrases it. The author should answer any questions about the code. That said, if the author has to explain too much of the code that is usually considered a defect to be fixed; the code should be refactored to make it simpler.

- Reviewers: The reviewers do the heavy lifting in the inspection. A reviewer can be anyone with an interest in the code who is not the author. Normally reviewers are other developers from the same project. As in code reviews it's usually a good idea to have a senior person who is not on the project also be a reviewer. There are usually between two and four reviewers in an inspection meeting. Reviewers must do their pre-reading of the inspection materials and are expected to come to the meeting with a list of errors that they have found. This list is given to the Recorder.

- Recorder: Every inspection meeting has a recorder. The recorder is one of the reviewers and is the person who takes notes at the inspection meeting. The recorder merges the defect lists of the reviewers and classifies and records errors found during the meeting. The recorder prepares the inspection report and distributes it to the meeting participants. If the project is using a defect management system, then it is up to the Recorder to enter defect reports for all major defects from the meeting into the system.

- Managers: As with code reviews, managers are not invited to code inspections.

__________

4 Fagan, M. “Design and Code Inspections to Reduce Errors in Program Development.” IBM Systems Journal 15(3): 182-211. 1976.

Inspection Phases and Procedures

Fagan inspections have seven phases that must be followed for each inspection:5

- Planning

- The Overview meeting

- Preparation

- The Inspection meeting

- The Inspection report

- Rework

- Follow up

______________

5 Fagan, M. “Advances in Software Inspections.” IEEE Trans on Software Engineering 12(7): 744-751. 1986.

Planning

In the Planning phase, the moderator organizes and schedules the meeting and picks the participants. The moderator and the author get together to discuss the scope of the inspection materials – for code inspections typically between 200 and 500 uncommented lines of code will be reviewed. The author then distributes the code to be inspected to the participants.

The Overview Meeting

An Overview meeting is necessary if several of the participants are unfamiliar with the project or its design and they need to come up to speed before they can effectively read the code. If an Overview meeting is necessary, the author will call it and run the meeting. The meeting itself is mostly a presentation by the author of the project architecture and design. As mentioned, Overview meetings are discouraged, because they have a tendency to taint the evidence. Like the Inspection meeting itself, Overview meetings should last no longer than two hours.

Preparation

In the Preparation phase, each reviewer reads the work to be inspected. Preparation should take no more than 2–3 hours. The amount of work to be inspected should be between 200 and 500 uncommented lines of code or between 30 and 80 pages of text. A number of studies have shown that reviewers can typically review about 125–200 lines of code per hour. In Fagan inspections, the preparation phase is required. The inspection meeting can be canceled if the reviewers have not done their preparation. The amount of time each reviewer spent in preparation is one of the metrics that is gathered at the inspection meeting.

The Inspection Meeting

The moderator is in charge of the Inspection meeting. Her job during the meeting is to keep the meeting on track and focused. The Inspection meeting should last no more than two hours. If there is any material that has not been inspected at the end of that time, a new meeting is scheduled. At the beginning of the meeting, the reviewers turn in their list of previously discovered errors to the recorder.

During the meeting the reader paraphrases the code and the reviewers follow along. The author is there to clarify any details and answer any questions about the code and otherwise does nothing. The recorder writes down all the defects reported, their severity and their classification. Solutions to problems are strongly discouraged. Participants are encouraged to have a different meeting to discuss solutions.

We should look for a minute at defect types and severity as reported in a Fagan inspection. Fagan specifies only two types of defects: minor and major. Minor defects are typically typographic errors, errors in documentation, small user interface errors, and other miscellany that don't cause the software to fail. All other errors are major defects. This is a bit extreme. Two levels are usually not sufficient for most development organizations. Most organizations will have at least a five level defect structure:

- Fatal: Yes, your program dies; can you say core dump?

- Severe: A major piece of functionality fails and there is no workaround for the user. Say that in a first-person shooter game, the software doesn't allow you to re-load your main weapon and doesn't let you switch weapons in the middle of a fight. That's bad.

- Serious: The error is severe, but with a workaround for the user. The software doesn't let you re-load your main weapon, but if you switch weapons and then switch back you can re-load.

- Trivial: A small error, either wrong documentation or something like a minor user interface problem. For example, a text box is 10 pixels too far from its prompt in a form.

- Feature request: A brand new feature for the program is desired. This isn't an error; it's a request from the user (or marketing) for new functionality in the software. In a game this could be new weapons, new character types, new maps or surroundings, and so on. This is version 2.

In most organizations, software is not allowed to ship to a user with known severity 1 and 2 errors still in it. But severity 3 errors really make users unhappy, so realistically, no known severity 1 through 3 errors are allowed to ship. Ideally, of course, no errors ship, right?

In a Fagan inspection meeting it is usually up to the recorder to correctly classify the severity of the major defects found in the code. This classification can be changed later. In the Fagan inspection process all severity 1 through 3 defects are required to be fixed.

Inspection Report

Within a day of the meeting, the recorder distributes the Inspection report to all participants. The central part of the report is the defects that were found in the code at the meeting.

The report also includes metrics data, including

- The number of defects found

- The number of each type of defect by severity and type

- The time spent in preparation; total time in person-hours and time per participant

- The time spent in the meeting; clock time and total person-hours

- The number of uncommented lines of code or pages reviewed

Rework and Follow Up

The author fixes all the severity 1 through 3 defects found during the meeting. If enough defects were found, or if enough refactoring or code changes had to occur, then another inspection is scheduled. How much is enough? Amounts vary. McConnell says 5% of the code,6 but this author has typically used 10% of the code inspected. So if you inspected 200 lines of code and you had to change 20 or more of them in the rework, then you should have another inspection meeting. If it's less than 10%, the author and the moderator can do a walkthrough. Regardless of how much code is changed, the moderator must check all the changes as part of the follow up. As part of the rework another metric should be reported – the amount of time required by the author to fix each of the defects reported. This metric, plus the number of defects found during the project are critical to doing accurate planning and scheduling for the next project. This metric is easier to keep track of if developers use a defect tracking system.

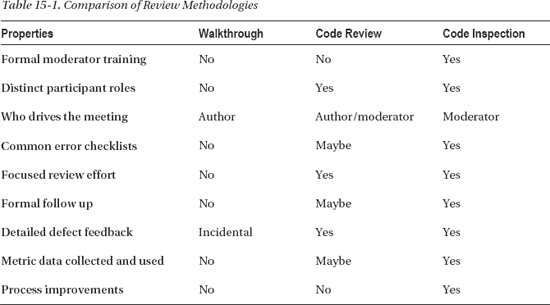

Summary of Review Methodologies

Table 15-1 summarizes the characteristics of the three review methodologies we've examined. Each has its place and you should know how each of them works. The important thing to remember is that reviews and testing go hand in hand and both should be used to get your high-quality code out the door.

___________

6 McConnell, 2004.

Defect Tracking Systems

Most software development organizations and many open source development projects will use an automated defect tracking system to keep track of defects found in their software and to record requests for new features in the program. One of the most popular open source defect tracking systems is Bugzilla (www.bugzilla.org).

Defect tracking systems keep track of a large amount of information about each defect found and entered. A typical defect tracking system will keep track of at least the following:

- The number of the defect (assigned by the tracking system itself)

- The current state of the defect in the system (Open, Assigned, Resolved, Integrated, Closed)

- The fix that was made to correct the error

- The files that were changed to make the fix

- What baseline the fix was integrated into

- What tests were written and where they are stored (ideally, the tests are stored along with the fix)

- The result of the code review or inspection

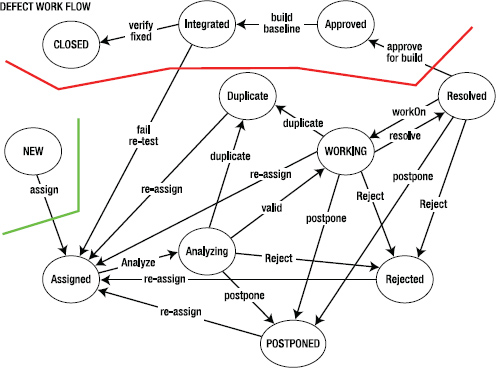

Defect tracking systems assume that at any given time a defect report is in some state that reflects where it is in the process of being fixed. A typical defect tracking system can have upwards of ten (10) states for each defect report.

Figure 16-1 shows the states of a typical defect tracking system and the flow of a defect report through the system. In brief, all defects start out as New. They are then assigned to a developer for Analysis. The developer decides whether the reported defect is:

- A duplicate of one already in the system

- Not a defect and so should be rejected

- A real defect that should be worked on by someone

- A real defect whose resolution can be postponed to a later date

Defects that are worked on are eventually fixed and move to the Resolved state. The fix must then be subjected to a code review. If the code review is successful, the defect fix is then Approved. From Approved, the fix is scheduled for integration into the next baseline of the product, and if the integration tests of that baseline are successful, the defect is Closed. Whew!

Figure 15-1. Defect tracking system workflow

Conclusion

A second set of eyes on your code is always a good thing. Code that is reviewed by others is improved and brings you closer to the Platonic ideal of defect-free software. Walkthroughs, code reviews, and formal code inspections each have their place in the array of tools used to improve code quality. The more of these tools you have in your toolbox, the better programmer you are. The combination of reviews, debugging and unit testing will find the vast majority of defects in your code7 and is the best thing that a developer can do to help release great code.

References

Ackerman, A., et al. (1989). “Software Inspections: An Effective Verification Process.” IEEE Software 6(3): 31-36. 1989.

Dijkstra, E. “The Humble Programmer.” CACM 15(10): 859-866. 1972.

Doolan, P. “Experience with Fagan's Inspection Method.” Software - Practice & experience 22(2): 173-182. 1992.

Dunsmore, A., M. Roper, et al. “Practical Code Inspection Techniques for Object-Oriented Systems: An Experimental Comparison.” IEEE Software 20(4): 21-29. 2003.

Fagan, M. “Design and Code Inspections to Reduce Errors in Program Development.” IBM Systems Journal 15(3): 182-211. 1976.

Fagan, M. “Advances in Software Inspections.” IEEE Trans on Software Engineering 12(7): 744-751. 1986.

Martin, R. C. Agile Software Development: Principles, Patterns, and Practices. (Upper Saddle River, NJ: Prentice Hall, 2003.)

McConnell, S. Code Complete 2: A Practical Handbook of Software Construction. (Redmond, WA: Microsoft Press, 2004.)

____________

7 McConnell, 2004.