CHAPTER 10

Setting Up Your Editing Universe

For me the dialogue edit is not only about words. Everything you hear is important, and before working on effects it's important and inspiring to listen and listen again to those “sync” sounds that belong to the unique moment of the shoot.

Cécile Chagnaud, film editor/sound editor/sound designer

Une goutte de sang; Les petits pains du peuple

You have all the materials you need, or at least everything you'll ever get. Now you have to organize your workspace. There's a huge temptation to dive in and get to work, but dialogue editing is a process that benefits handsomely from good preparation. A little time spent now will pay off manifold. I promise.

Different jobs require their own approaches, but here is a pretty reliable list of what you need to do to prepare each reel's session for editing. Each task will be discussed in detail later.

- If you're working by reels, make a session template that includes all of the tracks that you anticipate using for the entire dialogue editing process.

- Import AAF and/or auto-assembly. Verify the sync.[1]

- Copy the AAF and/or auto-assembly onto the session's dialogue tracks. Disable and hide the original AAF and auto-assembly tracks.

- Remove redundant clips where relevant.

- Add reference tones.

- Add sync pops to match your head and tail leaders.

- Mark the boundaries of the scenes on each reel.

The Monitor Chain

To make wise decisions, you have to be able to hear properly and to trust your monitors and your room. This means setting up and maintaining the cleanest, shortest, and most consistent monitor chain possible. Using the best amplifier and speakers you can manage, and avoiding unnecessary electronics in the signal path, will help you far more than you realize. Don't underestimate the impact of the room size, shape, and sound isolation. “It's only dialogue so the listening environment isn't critical” is an argument bound to blow up in your face.

Within reason, always work at the same listening level and check the level of your monitor chain as often as you can remember. Aligning monitor levels for dialogue editing is a moving target. According to Dolby Laboratories, these are the standard room alignments for film and television mix theaters:

For film work, test noise at reference level should produce an SPL of 85 dBC for each of the main front channels (Left, Center, Right) and 82 dBC for each Surround channel. The lower Surround level is specific to film-style mixing rooms. For television work, test noise at reference level is typically set to produce an SPL ranging from 79 to 82 dBC for each of the main five channels. The lower reference level for television reflects the lower average listening levels preferred by the consumer (typically 70 to 75 dBC).[2]

If your editing room is not unusually large, don't dare monitor at 85 dBC, unless you already know sign language. The size of editing rooms and the fact that unmixed dialogue is wildly dynamic will likely encourage you to monitor at 78 dBC or less. Find a level that works for you, and then measure it so that you know where you stand. But don't make yourself crazy. Remember, too, that you're not mixing, so if you must raise the volume to edit a soft whisper or lower it to survive a horror movie scream, then do so.

Forgo the Filter

Despite temptation, don't monitor your dialogue through a filter. Some editors listen through a high-pass filter in order to eliminate the low-frequency mess that often causes shot mismatches. Their argument is that since low frequency is usually reduced in the dialogue premix—across all dialogue tracks—there's no harm in doing so. You're not going to hear those low frequencies anyway, right? Well, maybe. Unless you edit dialogue in a film mix room, you don't have the bass response necessary to make good bass-related room tone decisions. If you filter your monitor chain, you're likely going to encounter surprises in the mix.

Moreover, dialogue editing is about resolving problems through editing. It's not about filtering. This sounds pedantic and inflexible, but it's the only way to look at it if you want good results. If you can get a scene to work despite unsteady bass in the room tone, you stand a far better chance of good results in the dialogue premix. Cutting through a filter is akin to washing dishes without your much-needed eyeglasses. Of course it goes easier; you just can't see the spots you're missing.[3]

Sync Now!

Before you begin editing or organizing your material, make certain that every region of your session is in sync with the guide track, and then with picture. Normal human laziness will tell you that since there will be ample opportunity to check sync later, there's no reason to bother now. Here are some reasons you should sync now rather than later:

- Your session is now as simple as it will ever be. As you work, it will become more complicated, heavier, and more difficult to manipulate. Plus, once you begin editing, clips and EDL times will no longer match.

- Syncing the original AAF session before you copy it means that you'll always be able to use the safety copy as an absolute sync reference.

- Doubts about sync will make you crazy. If you know your tracks are in sync, you never need to worry. This leaves you free to edit, to create, and to think about things more important than the silly sync Schnauzer gnawing at your ankles.

It's a joy to edit knowing that the film is really in sync.

Know What You're Syncing To

Import the QT movie's audio onto a new track, lock it, and use it as your sync reference. Syncing to the guide track doesn't necessarily mean that the sound is in sync with the picture; that depends on what went on in the picture department. However, you need to start somewhere, and the film's guide track is a comforting place to begin.

You probably won't yet be able to determine the absolute sync of the film—the actual relationship between picture and sound—until you get the final online, color-corrected file. This final picture is astonishingly sharp (depending, of course, on the film), thus revealing all sorts of details you've never before seen. But there's a price: the process of conforming, adding visual effects, and making it all look pretty will likely throw chunks of the film out of sync. This means that what you sync now you may have to sync again. But do your best before you start editing.

Normally, the OMF won't have any local sync variations. You can usually select the entire OMF and move it back and forth until it phases with the reference. Easy. But when syncing the auto-assembly, you may encounter weird, unpredictable sync offsets for each region of your session. Resyncing this sort of mess can be slow and ugly. However, there are software solutions for such problems.

Titan was the software package we used in Chapter 7 to assemble a file-based project. The original sound was recorded on a hard disk recorder, and we used Titan to recreate the picture editor's project, using the original BWF files. Titan has several other utilities up its sleeve. One of them is Fix Sync, which automatically aligns an auto-assembly to a guide track. The process is sample-accurate, whether or not you used Titan for the conform.
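Titan's own algorithm isn't described here, so what follows is only a generic illustration of how automatic alignment to a guide track can work: a brute-force cross-correlation that tries every lag within a window and keeps the one where the two signals agree best. It assumes both signals are mono lists of float samples at the same sample rate. `find_offset` and its parameters are hypothetical names, not part of Titan or any other product.

```python
import random


def find_offset(reference, candidate, max_lag):
    """Return the lag, in samples, at which candidate best matches reference.

    A positive result means the candidate is late (slide it earlier by that
    many samples); a negative result means it is early.
    """
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i, ref in enumerate(reference):
            j = i + lag  # candidate sample that would line up with reference[i]
            if 0 <= j < len(candidate):
                score += ref * candidate[j]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


if __name__ == "__main__":
    rng = random.Random(1)
    guide = [rng.uniform(-1.0, 1.0) for _ in range(400)]
    late_region = [0.0] * 12 + guide[:-12]  # same audio, 12 samples late
    lag = find_offset(guide, late_region, max_lag=50)
    print(lag, "samples =", lag / 48000 * 1000, "ms at 48 kHz")
```

Twelve samples at 48 kHz is a quarter of a millisecond, far finer than a frame, which is why a sample-accurate tool is so much faster than nudging by ear. For reel-length material you'd want an FFT-based correlation; this nested loop only shows the idea.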

If you don't have Titan or another auto-sync program, you'll have to do this by hand and ear. When you have varying offsets and you don't know in which direction each region will vary from the reference, you're probably better off trying a sync plan like this:

- Make an edit group of all tracks of the auto-assembly.

- Output the auto-assembly to one side of a stereo output. Output the guide track to the other side. It doesn't matter if you put the auto-assembly on the right or left, but always do it the same way so that you don't have to think about it. I always put the reference on the left.

- Play one auto-assembly region and the guide track together. Adjust the volumes so that left and right are equal. If the sync offset is big, it will be pretty clear if the auto-assembly region is late or early. Sometimes small sync differences are hard to hear when played in a “panned-out” manner. In this case, listen to the stereo image. If the auto-assembly is on the right and the stereo image is “pulling” to the right, then the auto-assembly region is early. When sync differences are very slight, you tend to favor the earlier signal, perceiving it as louder.[4] Nudge the auto-assembly region until the sound is centered in the stereo field and you hear the phasing sound that indicates sync.

- If you're using an external mixer to monitor, pan the two channels to center and listen for absolute phasing.

- Repeat this process for all regions.

Setting Up Your Editing Workspace

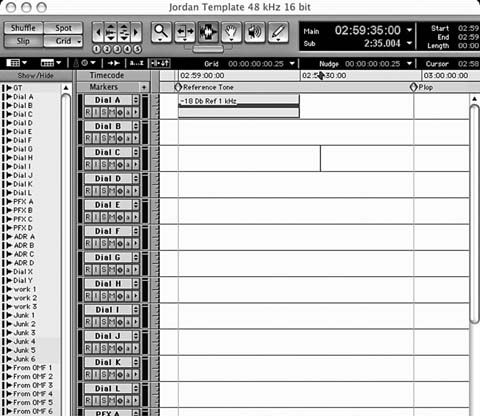

Once you have an in-sync OMF and auto-assembly, choose the one you want to use for your edit. If you go with the auto-assembly, then make the OMF tracks inactive and hide them. Copy the auto-assembly tracks to your empty dialogue tracks and then, as you did with the OMF tracks, deactivate and hide the original auto-assembly (see Figure 10.1).

Why go to such trouble? What will these copies buy you? The OMF/AAF copy, even if you don't use its sounds, is a useful reference. Fades, temporary sound effects and music, and volume automation are all intact and will help you understand what the picture editor was trying to accomplish.

Plus, non-timecoded material, such as “edit room ADR,” will likely be in the OMF but probably won't show up in the auto-assembly. When you start editing a scene, listen to the OMF tracks to get into the editor's head. Then you can go back to the virgin auto-assembly tracks with a good idea of the editor's artistic and storytelling hopes for the scene.

The copy of the auto-assembly will come in handy if you inadvertently offset or delete a clip. Just unhide the track and copy the missing region into your dialogue tracks in sync. You can also use these unaltered regions as guides if you need to conform your edit to a new picture version. Unlike your elegantly overlapping edited sounds, these virgin regions have the same start and stop times as the EDL, so you can use them to calculate offsets and figure out what the picture editor did to make the change.
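Because the virgin auto-assembly regions keep their EDL start times, working out how far the picture editor moved a shot is plain timecode arithmetic. A minimal sketch, assuming 24 fps non-drop-frame timecode written as HH:MM:SS:FF; the helper names and the example timecodes are hypothetical:

```python
FPS = 24  # assumption: 24 fps non-drop-frame timecode


def tc_to_frames(tc, fps=FPS):
    """Convert 'HH:MM:SS:FF' to an absolute frame count."""
    hh, mm, ss, ff = (int(part) for part in tc.split(":"))
    return ((hh * 60 + mm) * 60 + ss) * fps + ff


def frames_to_tc(frames, fps=FPS):
    """Convert a (possibly negative) frame count back to 'HH:MM:SS:FF'."""
    sign = "-" if frames < 0 else ""
    frames = abs(frames)
    seconds, ff = divmod(frames, fps)
    minutes, ss = divmod(seconds, 60)
    hh, mm = divmod(minutes, 60)
    return f"{sign}{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"


def region_shift(old_start, new_start, fps=FPS):
    """Frames a region moved between picture versions (negative = earlier)."""
    return tc_to_frames(new_start, fps) - tc_to_frames(old_start, fps)


if __name__ == "__main__":
    # Hypothetical example: a shot that started at 01:02:10:12 in the old cut
    # starts at 01:02:07:00 in the new one.
    shift = region_shift("01:02:10:12", "01:02:07:00")
    print(shift, frames_to_tc(shift))  # -84 -00:00:03:12
```

Applying the same shift to your edited regions for that shot (including their handles) moves them to the new picture position.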

Figure 10.1 Auto-assembly regions appear on the dialogue tracks; copies are disabled and hidden (Assy 1, Assy 2, …, Assy 8). OMF tracks are disabled and soon to be hidden. They contain the picture editor's level automation, temporary SFX, and music. Note that the top track contains a locked mono guide track from the video file.

Working With Just an OMF

Often you won't have an auto-assembly, just an OMF. If that's the case, make a copy of the OMF (after you've confirmed its sync) and then make the copied tracks inactive and hide them. Delete the picture editor's volume and pan automation, as they make room tone editing next to impossible. Keep all picture-cutting automation in the hidden copy of the OMF, and you'll have a convenient reference when you can't figure out what the picture editor was thinking.

Before the film gets to you it is subjected to many screenings—director, focus groups, producer, and the like—so its temporary soundtrack must be listenable, and that requires volume automation. Most likely the picture editor did all sorts of volume rides while cutting the film. However, when you're editing dialogue, these numerous ups and downs can shackle you to existing level changes. If you're on an insanely quick job, say a 45-minute documentary with only two days for production sound editing, you may choose to keep the picture editor's automation and just “make it nice.”

Labeling Your Tracks

Each track must be named. This ought to be pretty obvious, but even the most obvious things in life often need to be said a few times. Of course, labeling your tracks means deciding how you want to organize your work, and this involves understanding the complexity of the film, the capacity of the rerecording mixing desk, the rerecording mixer's preferences, and the habits of your supervising sound editor. Busy films or films with lots of perspective cuts need more tracks; action films need extra PFX tracks; poorly organized, channel-rich OMFs mandate more “junk” tracks. Table 10.1 shows a suggested dialogue template for small films.

Some people like to use letters to name tracks. Others prefer numbers. I like letters for tracks that will make it to the dialogue premix (e.g., Dialogue, ADR, PFX, X) and numbers for tracks I created just for my convenience (Junk, Work, etc.). As long as your rerecording mixer is content, it really doesn't matter which you use.

Work and Junk Tracks

Some workstations allow you to work on several timelines at once and to have numerous open sessions. Most DAWs, however, present all of your work on one timeline, as though you're working on a piece of multitrack recording tape. One of the downsides of a single timeline is that you don't have a “safe” area to work in without worrying about damaging your session. That's why I always create several extra tracks where nothing of value lives.

It's here, on the “work” tracks, that I open long files with no worry that I'll eradicate another region well offscreen. (The danger is not that you'll delete a region that you can see; after all, if you're paying attention, you'll see it. The risk lies outside your screen, where you can cause all sorts of unseen damage.) The work tracks are also where I record new sounds into the workstation and where I perform any editing operation that calls for rippling the track, as in Pro Tools' shuffle mode, which is very convenient but famous for knocking tracks out of sync.

I also open several new tracks, inelegantly entitled “junk” (if this name seems too demeaning for these valuable storage tracks, try “outs,” “removed,” or something along those lines). Any sound I don't want in the mix but still want to have around just for safety I put on a junk track. As you work your way through the film, there are some regions you can delete with total confidence. For example, if two or more channels of a clip are precisely the same, you can throw away one of them with a clean conscience. You'll never need it again.

On the other hand, if you're editing a scene with a boom and three radio microphones, you may decide to use only the boom but you probably aren't fearless enough to toss the radio mics. Junk tracks are great places to store—in sync—these unwanted regions without clogging up your sessions. I also use them to store alternate takes as I reconstruct a shot. Plus, they're a convenient place to keep room tone for a scene.

Neither “work” nor “junk” is a standard industry name or concept. Too bad. To my way of thinking and working, this model serves well. Use it or find something else that works for you.

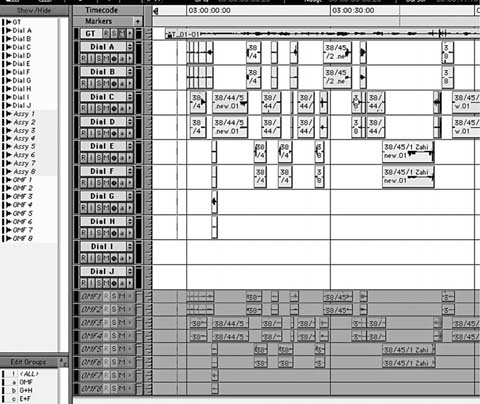

Templates

If you edit a lot of films, or if you're embarking on a 12-reel feature, you'd do well to make a master template session that you can open, offset, name, and use as the basis of each reel's session. If you're a master typist, this isn't necessary, but if you get tired of typing “Dial A,” “Dial B,” and so on, over and over again for each track in each session, make a template and be done with it.

A template, shown in Figure 10.2, is a generic session containing the named tracks as well as the head beeps and the reference tones. It beats rebuilding sessions from scratch, and it helps ensure that each reel has the same track sequence, which is something rerecording mixers appreciate. Here are some tips on making templates:

- Properly set the sample rate and bit depth to match the film.

- Build your template for reel 1. Session start: 00:57:00:00.[5] Reference tone: 00:59:00:00–00:59:30:00. Head sync pop: 00:59:58:00 (if the picture timecode “hour” rolls over at FFOA; if the hour is at “picture start,” the sync pop will fall at 01:00:06:00). From reel 2 onward, reset the session start (R2 = 01:57:00:00; R3 = 02:57:00:00; etc.). Also move the reference tone and sync pop to the appropriate timecode locations for each reel.

- Open about 30 mono tracks and label them accordingly. Include the work and junk tracks.

- Save a copy of your template on the internal hard drive of the computer and a USB flash drive, since your next film will probably need a very similar template.

- Import both the OMF and the auto-assembly of each reel into a copy of this session.
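The timecode positions in the template tips above follow a fixed pattern relative to each reel's hour, so they can be computed rather than retyped. A sketch, assuming 24 fps non-drop timecode and that reel n's hour mark is n:00:00:00, as in the reel 1 example; `reel_layout` is a hypothetical helper:

```python
FPS = 24  # assumption: 24 fps non-drop-frame timecode


def frames_to_tc(frames, fps=FPS):
    """Convert a non-negative frame count to 'HH:MM:SS:FF'."""
    seconds, ff = divmod(frames, fps)
    minutes, ss = divmod(seconds, 60)
    hh, mm = divmod(minutes, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"


def reel_layout(reel, hour_at_ffoa=True, fps=FPS):
    """Session start, reference tone, and head pop positions for one reel."""
    hour = reel * 3600 * fps  # reel 1 -> 01:00:00:00, reel 2 -> 02:00:00:00
    positions = {
        "session_start": hour - 3 * 60 * fps,  # three minutes before the hour
        "tone_start": hour - 60 * fps,         # one minute before the hour
        "tone_end": hour - 30 * fps,           # 30 seconds of tone
    }
    if hour_at_ffoa:
        positions["head_pop"] = hour - 2 * fps  # two seconds before FFOA
    else:
        # Hour sits at picture start; FFOA is 8 s later, so the pop is at +6 s.
        positions["head_pop"] = hour + 6 * fps
    return {name: frames_to_tc(f, fps) for name, f in positions.items()}


if __name__ == "__main__":
    for reel in (1, 2, 3):
        print(reel, reel_layout(reel))
```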

Reels or Supersession?

Some editors like to combine all of the dialogue reel sessions into one giant timeline (a supersession) so that they have the entire film before them without quitting one reel's session to access another. For dialogue, I think this is a bad idea. For background or SFX editors, there are some worthwhile reasons to work this way. After all, if you're building the backgrounds for a scene that takes place at a location visited many times throughout the film, it's nice to be able to cut and paste between reels. Effects editors, too, can benefit from having the timeline of the entire film before them.

But dialogue editing issues are local, not global across the whole film. There's rarely a need to steal sounds from another scene, and even when you do it's not hard to find the file and import it. I organize my work into one session per reel. Here are some reasons:

- Short sessions are quicker to work with, they load faster, and they make the computer happier.

- If a one-reel session is damaged or corrupted, it's less catastrophic than when a six-reeler is wrecked.

- The horizontal scroll bar has much better resolution in short sessions.

- A version change in one reel is easier to deal with than it would be in a composite session. You can modify the affected reel, change its name to reflect the version number change, and nothing else is affected.

There is, however, one advantage for dialogue supersessions. If you anticipate that the picture department will rebalance the reels, moving scenes from one reel to another, a supersession may save you some grief. However, good reconform software will chip away at this advantage.

Eliminating Redundant Regions

Back when the two-track Nagra was a location standard, sound mixers would record a boom microphone on one track and a mix of the other mics on the other. When a scene merited nothing more than a boom, the same signal was placed on both tracks. This redundancy provided a bit of protection from edge damage. DAT came along and largely wiped out analogue field recording, but not necessarily its habits. For no real reason, many location mixers continued to print onto two channels, whether mono shots recorded with one boom microphone or split tracks (see Figure 10.3). The result: almost all the sound from the OMF came in the form of pairs.

Now that you're working in a double-system hard disk recorder universe, you won't often run into this situation. But if the sound on your project was captured direct-to-camera or to another two-track format, this redundancy ought to interest you. Before you start editing, find these dual mono tracks and delete one side of the pair. The duplicate track does you no good whatsoever and can cause all sorts of trouble, including the following:

- Processing differences between the two tracks could result in unequal latencies and hence phasing.

- Two identical tracks mean twice the work, twice the fades, double the click removal, and more tracks consumed.

- It is much harder to organize your scene when lugging around these useless tracks, and it's difficult to glance at the scene's layout and know what's going on when you have unnecessary material.

And remember, there's nothing to gain from having two tracks with the same sound.

You could, of course, rely on your ears to compare the two sides of each region, or on your eyes to evaluate the waveform of one side of a pair against the other. But there are a few thousand clips in a film, and life is too short to wear yourself out on such repetitive and boring chores. Somewhere in your studio you have the tools to help you hunt down these annoying dual mono files. Barring anything simpler, you can use a plugin to figure out if two clips are the same (see Figure 10.4).

Figure 10.3 A dual mono event (left) and a split track event.

Figure 10.4 Confirm that two tracks share the same information by inserting a very low latency plugin into one of the tracks and inverting the phase.

Figure 10.5 If the channels phase-cancel, they are identical. Delete one side of the pair. If the channels are not identical, don't do anything. You don't yet know enough about the scene to begin choosing microphones.

- Insert a zero-latency plugin. I like to use a one-band equalizer (EQ) because it sits near the top of the list of options (so it's easy to get to), its interface is charmingly low-tech, and it's free of latency. Pro Tools' Trim plugin is also useful. If your DAW allows you to reverse the phase of a track without resorting to a plugin, then by all means do so.

- Open the inserted plugin, flip the phase, and copy the plugin to every odd track.

- Temporarily disable volume automation for all tracks so you can make volume adjustments to the track without “fighting” the automation.

- Solo the two tracks you want to check. If they're identical, you should hear almost complete phase cancellation. Adjust the volume on either of the sides of the pair to perfect it.

- If you're unsure if the two tracks are phase-canceling, mute one. If the sound suddenly becomes much louder, with more low frequency and fuller fidelity, you have a match. You can delete either side of the pair—they're similar enough to phase, so it doesn't really matter which one.

- If the sound doesn't get louder (or even gets quieter) when you mute one side of the pair, then it's not a dual mono pair. Leave it alone. At this point, don't try to choose which track to use (see Figure 10.5).

- Repeat this for each paired region.

- Don't forget to turn on your automation and remove the phase reverse insert when you finish.
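The same phase-flip test can be run offline. A sketch, assuming the two channels of a clip are available as equal-length lists of float samples: it finds the least-squares gain that best matches one side to the other (the equivalent of trimming the volume until the null is deepest), subtracts, and reports how far the residue sits below the original. The −60 dB threshold and the function names are assumptions for illustration, not an industry standard.

```python
import math
import random


def null_depth_db(left, right):
    """Level of (left - gain * right) relative to left, in dB.

    Subtracting is the same as summing with one side's polarity flipped.
    `gain` is the least-squares level match, like trimming one fader.
    """
    if len(left) != len(right) or not left:
        raise ValueError("channels must have the same, non-zero length")
    energy_left = sum(a * a for a in left)
    energy_right = sum(b * b for b in right)
    if energy_left == 0.0 or energy_right == 0.0:
        raise ValueError("cannot compare a silent channel")
    gain = sum(a * b for a, b in zip(left, right)) / energy_right
    residue = sum((a - gain * b) ** 2 for a, b in zip(left, right))
    if residue == 0.0:
        return float("-inf")  # perfect cancellation
    return 10 * math.log10(residue / energy_left)


def is_dual_mono(left, right, threshold_db=-60.0):
    """True if the two channels cancel to at least threshold_db."""
    return null_depth_db(left, right) <= threshold_db


if __name__ == "__main__":
    tone = [math.sin(2 * math.pi * 1000 * n / 48000) for n in range(480)]
    rng = random.Random(7)
    noise = [rng.uniform(-1.0, 1.0) for _ in range(480)]
    print(is_dual_mono(tone, [0.8 * s for s in tone]))  # same sound, lower level
    print(is_dual_mono(tone, noise))                    # different sounds
```

As with the plugin method, a pair that fails the test is simply left alone; choosing between microphones comes later.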

Scenes

A film is based on scenes. A scene usually tells a freestanding mini-story and is limited by time, location, or story issues. Each mini-story has a life of its own, with its own rules, quirks, and personality. Together, the scenes tell the greater story of the film. Unless your project is Rope or Timecode or Russian Ark, you'll know the boundaries of a scene when you see them. If you're not sure when a scene changes, talk to the director or the supervising sound editor (you may think it impossible not to know when a scene changes, but some transitions are ambiguous and need to be addressed).

Dialogue editors have a special relationship with scenes, for within their confines we try to make everything seem continuous, smooth, and believable. At their edges, however, we usually want to slap the viewers a bit to indicate that something new is happening. As a result, scene changes are almost always quick and at times brutal. By marking scene boundaries you will better understand the flow of a scene, and you'll likely be better organized.

- Mark scenes before you begin editing and you needn't hunt around for the scene boundary while you're passionately editing. You can keep up a good creative pace.

- Before you begin editing, the scene changes in your OMF will probably be hard cuts, centered on the transition and easy to spot. But as you edit and create crossfades, you'll lose the location of the original edit unless you identified it with a memory marker.

- Markers make it easier to apply standard scene transition durations (one frame, two frames, etc.), since you have a reference around which to build your crossfades. You can always choose a different transition, but the marker gives you a clue of what you are doing, regardless of how closely you're zoomed in.

- Markers make it easier to prepare for the mix.

- If you establish the scene breaks and place markers, the rest of the editing crew will be able to use your marks. This saves time and ensures that all scene transitions will be sharp and even and on the correct frame, not staggered messes that lack energy.

Figure 10.6 Markers (top) are added to indicate scene changes. Most workstations provide a list of markers or locator points (right) that can be used to navigate through your session.

As shown in Figure 10.6, you should apply short, sensible names to the scene markers, so that you can use the markers list to instantly navigate to a scene. For example, “(1) Sc33 INT car Sarah,” “(2) Sc17 EXT Bob runs,” and “(3) Sc45 INT kitchen fight.” Make up your own naming system as long as you can pack all the information you need into a few characters.

Beeps, Tones, and Leaders

In the days of analogue recording, every piece of tape, magnetic film, or optical track carried a series of alignment tones at its head. With these tones (usually 1 kHz, 10 kHz, and 100 Hz) as references, a tape could be played back properly on any well-maintained machine, anywhere in the world. A set of standard (and very expensive) alignment tapes was used to ensure that the machines were set up for proper recording and playback. Every assistant in the industry spent more time than he cares to remember aligning analogue machines. The system was amazingly simple, and it worked.

Today analogue machines are all but unheard of and machine alignment is a lost art. With digital, there's little to align. Unfortunately, the myth that there is nothing to align has resulted in misplaced complacency. Plus, the fact that a maintenance engineer once had to regularly align analogue recorders kept him in touch with the machines. That daily or weekly interaction was an opportunity to learn of other looming problems.

Even though you're working in digital, you still have to place alignment tones (often called “reference tones”) on your tracks. Why?

- You need a reference tone to align the monitor chain in your edit room.

- If you make a rough mix of your work as a guide track for the effects, backgrounds, Foley, or music editors, it should have a reference tone attached so that other editors will know your program levels.

- When you bring the tracks to the mix, each track's reference tone ensures that you've routed and patched the sound correctly. You can also see if you have a bad connection (a −6 dB tone level indicates a missing leg on a balanced connection). If a particular track is not going to be used on a reel, a missing tone on it will tell the mixer to ignore it during that reel.

Making a Reference Tone

A large studio probably has a ready collection of reference, or calibration, tones, either on the internal drive of each computer or on the network. Ask. A studio's “Tones Folder” will likely have an assortment of options such as these:

- Sync pop 48 kHz, 16 bit

- Sync pop 48 kHz, 24 bit

- 1 K reference @ −18 dB, 48 kHz, 24 bit

- 1 K reference @ −20 dB, 48 kHz, 24 bit

Make sure to pick the reference tone and sync pop to match the sample rate and bit depth of your session, as well as the reference level of the studio and the local film community. Nowadays, most facilities use one of these references:

−18 dBFS = 0 VU = +4 dBu

−20 dBFS = 0 VU = +4 dBu

This means that, in a properly set up audio chain, a digital reference of −20 dBFS (decibels relative to full scale, the standard digital scale with 0 as the absolute highest value) equals 0 on an analogue VU meter, which equals +4 dBu, or 1.23 volts RMS.
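The numbers in that chain can be checked directly. Taking 0 dBu as 0.775 V RMS (the usual reference, since 0.775 V into 600 Ω dissipates 1 mW), and treating the −6 dB missing-leg symptom mentioned earlier as a halving of the differential signal on a balanced line:

```latex
\begin{aligned}
V_{+4\,\mathrm{dBu}} &= 0.775\,\mathrm{V} \times 10^{4/20} \approx 0.775\,\mathrm{V} \times 1.585 \approx 1.23\,\mathrm{V_{RMS}} \\
-20\,\mathrm{dBFS} = +4\,\mathrm{dBu} &\;\Rightarrow\; 0\,\mathrm{dBFS} = +4 + 20 = +24\,\mathrm{dBu} \\
-18\,\mathrm{dBFS} = +4\,\mathrm{dBu} &\;\Rightarrow\; 0\,\mathrm{dBFS} = +4 + 18 = +22\,\mathrm{dBu} \\
\text{one balanced leg lost:}\quad 20\log_{10}\tfrac{1}{2} &\approx -6.02\,\mathrm{dB}
\end{aligned}
```

In other words, the choice between −18 and −20 dBFS decides how much digital headroom sits above 0 VU: 18 dB versus 20 dB.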

Debates over the “right” reference level are longstanding, passionate, and often personal, so this is not the place to get into it.[6] Bottom line: before you begin your project, determine the reference level of the original field recordings and talk to your studio engineer to learn the local reference level. Odds are it will be −18 or −20 dBFS. If your studio doesn't provide reference tones, make your own. Most workstations provide a way to make a reference tone. It's not the best choice, but it will do in a pinch.
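If you do end up making your own tone outside the workstation, the job is small. A sketch using only Python's standard library, assuming the tone's level is defined by its peak relative to digital full scale (meters and standards don't all agree on how a sine's dBFS level is read, so verify the file against your studio's reference before trusting it). The function names are hypothetical; the 30-second default mirrors the template's tone length.

```python
import io
import math
import wave


def make_tone(freq_hz=1000.0, level_dbfs=-20.0, seconds=30.0, rate=48000):
    """Return 24-bit integer samples of a sine peaking at level_dbfs."""
    full_scale = 2 ** 23 - 1  # largest positive 24-bit sample value
    amplitude = full_scale * 10 ** (level_dbfs / 20)
    count = int(round(seconds * rate))
    return [
        int(round(amplitude * math.sin(2 * math.pi * freq_hz * n / rate)))
        for n in range(count)
    ]


def to_wav_bytes(samples, rate=48000):
    """Pack mono 24-bit samples into an in-memory WAV file."""
    frames = b"".join(s.to_bytes(3, "little", signed=True) for s in samples)
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(3)  # 3 bytes = 24 bit
        wav.setframerate(rate)
        wav.writeframes(frames)
    return buffer.getvalue()


if __name__ == "__main__":
    data = to_wav_bytes(make_tone(level_dbfs=-20.0))  # 30 s of 1 kHz
    print(len(data), "bytes")  # write these bytes to a .wav file to use them
```

Calling `make_tone(seconds=1 / 24)` gives a one-frame 1 kHz burst (2,000 samples at 48 kHz) of the kind used for a sync pop; the pop's level isn't specified here, so match whatever your studio's pop file uses.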

To complicate matters, certain “light” or native workstations use converters that are aligned in a nonstandard manner and cannot be calibrated internally. If you're working in an all-digital environment, this doesn't pose any problems. But when you're moving from DAW to mixer in the analogue world, you must use a bogus reference level in the workstation. Experiment.

Using Your Reference Tone for Daily Alignment

Unless you're listening directly from the output of your workstation and using internal loudness meters, each editing day should begin with a quick alignment of your monitor chain. This is particularly true if you're working in a space you're sharing with others—goodness knows what happened during the night! Running a tone through your monitor mixer and external meters will ensure that you're always working at the same monitor level and that your meters can be trusted. Send a known reference through your system; if the reference tone doesn't behave as you expect, you'll know to look for problems.

If there's a minor inconsistency between the two stereo channels, trim your monitor console accordingly. But if in your analogue console you notice a disparity of 3 or 6 dB, there's a problem in the chain. Talk to the studio tech rather than compensate with the trim pots.

The Sync Pop

The importance of the sync pop (which is also called “plop” or “beep,” depending on where you live) can't be overstated when you're working on a film project. Filmmaking is technically a very sophisticated industry with a workflow that more-or-less guarantees sync. Until the very end, that is. When the final film negative is joined with the finished soundtrack, the visible sync pop on the optical negative is manually aligned with a known location on the film. This hasn't changed in 75 years.

But it's changing now. More cinema releases on DCP mean fewer and fewer on film. DCP labs aren't interested in a sync pop; in fact, they usually don't want one. They rely on timecode, so the relevance of the sync pop is under attack. However, even if you know that the film will never see celluloid, there are some decent reasons to use beeps up until the soundtrack is ready for print mastering:

- You can use the pops (head or tail) to help resync the sound if needed.

- You'll periodically make reference mixes for the other editors. If, however unlikely, one of them is working on a DAW that won't accept your file's metadata (which includes timecode), she has to use the pop for sync reference.

- A sync pop and leader are useful in determining if the video offset in your DAW is correct. Syncheck is more effective for checking this, but the sync pop is better than nothing.

- Can anyone really say that a title will never be released on film?

- It's just the way it is, at least until film is dead altogether. Reels must have reference tones plus head and tail pops. If you don't do them, you'll come across as a video/MIDI geek and the film people won't take you seriously.

The head sync pop is one frame long and it begins two seconds before the start of each reel. So if your first frame of action (FFOA) on reel 2 is 2:00:00:00, the sync pop will fall at 1:59:58:00. Many film editors place the “hour mark” (1:00:00:00, 2:00:00:00, etc.) at the picture start of the leader. The picture start is 12 feet, or eight seconds (at 24 fps), before the FFOA. If it's at 2:00:00:00, place the sync pop at 2:00:06:00; the FFOA will then be at 2:00:08:00. If the leader was correctly placed by the picture department, the final “flashed” number of the countdown will coincide with your pop.

The placement of the tail sync pop is a bit more enigmatic. It depends on the kind of tail leaders you're using (some productions don't bother with tail leaders and merely place another head leader at the end of the reel!). If the videotape or video file doesn't have a tail leader, put a pop exactly two seconds (48 film frames, 50 PAL video frames, or 60 NTSC video frames) after the last frame of action (LFOA) and hope for the best.
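Placing the pops is frame arithmetic, and the only thing that changes between film, PAL, and NTSC is how many frames make two seconds. A sketch, assuming non-drop-frame timecode at a whole-number rate (24, 25, or 30 fps; 29.97 drop-frame is deliberately not handled) and reading "two seconds after the LFOA" as the LFOA's timecode plus two seconds of frames. The helper names and example timecodes are hypothetical.

```python
def tc_to_frames(tc, fps):
    """Convert 'HH:MM:SS:FF' to an absolute frame count at fps."""
    hh, mm, ss, ff = (int(part) for part in tc.split(":"))
    return ((hh * 60 + mm) * 60 + ss) * fps + ff


def frames_to_tc(frames, fps):
    """Convert a non-negative frame count back to 'HH:MM:SS:FF'."""
    seconds, ff = divmod(frames, fps)
    minutes, ss = divmod(seconds, 60)
    hh, mm = divmod(minutes, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"


def head_pop(ffoa, fps=24):
    """One-frame head pop starting two seconds before the FFOA."""
    return frames_to_tc(tc_to_frames(ffoa, fps) - 2 * fps, fps)


def tail_pop(lfoa, fps=24):
    """Tail pop two seconds after the LFOA, for reels without a tail leader."""
    return frames_to_tc(tc_to_frames(lfoa, fps) + 2 * fps, fps)


if __name__ == "__main__":
    print(head_pop("02:00:00:00"))  # hour at FFOA
    print(head_pop("02:00:08:00"))  # hour at picture start, FFOA 8 s later
    for fps in (24, 25, 30):
        print(fps, "fps:", 2 * fps, "frames ->", tail_pop("02:19:42:10", fps))
```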

SMPTE Leader Versus Academy Leader

Just as many DCP labs don't want sync pops before the FFOA, they also shun leaders. But as long as film's around, we'll continue to see the familiar countdown. There are two kinds of head leader: SMPTE and Academy. Both allow the sound and picture to remain synchronous throughout the postproduction process. Each has a “picture start” mark 12 feet before the FFOA, which projectionists used to cross over from one projector to the other at reel changes.7 The head leader is also used to line up a film projector with mag recorders and players for mixing, and you'll need it if you ever want to look at your mix on a Steenbeck or other film editing table.

So, what's the difference between the two leaders? Nothing, except that the SMPTE counts in seconds whereas the Academy counts in feet. The Academy will pop on the number three; the SMPTE will pop on two. As long as you placed your sync pop two seconds before the FFOA (48 film frames, 50 PAL frames, 60 NTSC frames), it will fall at the right place no matter which leader your picture editor used. That's because at 24 fps, 35 mm film travels 90 ft/min, or 1.5 ft/sec, so 3 feet equals 2 seconds.
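The feet-to-seconds reasoning can be checked in a few lines. This sketch assumes standard 35 mm 4-perf film at 16 frames per foot, running at 24 fps.

```python
# Sketch: 35 mm 4-perf film (16 frames per foot) running at 24 fps.
FRAMES_PER_FOOT = 16
FPS = 24

def feet_to_seconds(feet: float) -> float:
    """Running time of a length of 35 mm film at 24 fps."""
    return feet * FRAMES_PER_FOOT / FPS

feet_per_minute = FPS * 60 / FRAMES_PER_FOOT  # 90.0 ft/min
feet_per_second = feet_per_minute / 60        # 1.5 ft/sec

print(feet_per_minute, feet_per_second)  # 90.0 1.5
print(feet_to_seconds(3))   # 2.0: Academy "3" and SMPTE "2" mark the same frame
print(feet_to_seconds(12))  # 8.0: picture start to FFOA
```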

The start mark will always be 12 feet, or 8 seconds, before the FFOA on a reel. If not, something's wrong. Talk to the supervising sound editor or picture editor. Just don't ignore the problem.
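If you're prepping many reels, a quick check like the following can flag a misplaced leader before it costs you a day. It's a hypothetical helper, not a DAW feature, and assumes 24 fps non-drop timecode.

```python
# Hypothetical sanity check for a reel's head leader, assuming 24 fps non-drop timecode.
FPS = 24

def tc_to_frames(tc: str, fps: int = FPS) -> int:
    h, m, s, f = (int(p) for p in tc.split(":"))
    return ((h * 60 + m) * 60 + s) * fps + f

def check_leader(picture_start: str, sync_pop: str, ffoa: str, fps: int = FPS) -> list[str]:
    """Return a list of problems; an empty list means the leader lines up."""
    ffoa_frames = tc_to_frames(ffoa, fps)
    problems = []
    # Picture start should be 12 ft = 8 s before the FFOA.
    gap = ffoa_frames - tc_to_frames(picture_start, fps)
    if gap != 8 * fps:
        problems.append(f"picture start is {gap} frames before FFOA, expected {8 * fps}")
    # Head pop should be 2 s before the FFOA.
    gap = ffoa_frames - tc_to_frames(sync_pop, fps)
    if gap != 2 * fps:
        problems.append(f"sync pop is {gap} frames before FFOA, expected {2 * fps}")
    return problems

print(check_leader("2:00:00:00", "2:00:06:00", "2:00:08:00"))  # []
print(check_leader("2:00:00:00", "2:00:06:00", "2:00:08:12"))  # two problems
```

A non-empty result is your cue to talk to the picture department, not to quietly slide the pop.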

Wild Sound

Most of the audio from the shoot is sync sound, recorded with the camera rolling. However, a decent location mixer will try to take the extra time to record additional sounds that were missed, were unavailable while shooting the scene, or are simply interesting. You can't rely solely on sync recordings to put together a proper scene, so these extra sounds are lifesavers. Here are just a few of the reasons to record wild sound:

- For dialogue that couldn't be recorded during the shoot: for example, two characters talking to each other. In the rain. Under umbrellas. A long shot of a couple of nudists is another good example.

- For shots ruined during the filming. A plane flies over, a train goes by. Perhaps the location mixer's pleas for another take were ignored, but she had the good sense to call the actors aside and record the scene wild.

- For specialty sounds difficult to recreate with sound effects. If there's an unusual car or motorcycle in a scene, a good location mixer will record its sounds, knowing that the production may never find them elsewhere.

- For room tone. The location mixer, if at all possible, will record room tone for each scene—the sound of a scene without talking, footsteps, phone rings, and the like; in other words, silence.

- For location-specific sounds. If the scene takes place in a jail cell, for example, the sound recordist may capture special details such as the door, the springs on the bed, or the toilet, all within the special acoustics of that unique space.

- For sounds that will be useful to solve certain dialogue editing problems. For example, if one side of a scene has lots of cloth rustle that is lacking in the other shots, a particularly observant sound mixer will grab some wild rustle to cover the gaps. This warrants flowers.

“But,” you may ask, “aren't all of these sounds replaced by ADR or Foley later?” Often yes. However, at times a bit of wild dialogue will save a scene from the pain and suffering (and save the production the expense) of ADR. A “save” created from alternate takes and wild sound will usually be more effective and believable than an ADR line helped along with Foley.

As you organize the wild tracks from the shoot, copy the nondialogue recordings and give them to the sound effects editor. There's nothing like the real thing when it comes to building realistic-sounding scenes.

Finding Wild Sound

Wild sound is captured whenever the opportunity arises, so it's not neatly organized in one section of the recordings. To find wild sound, you first have to learn to read a sound report, the log of sounds recorded during the shoot. There are many kinds of sound reports, depending on the recording format, the source of the paperwork, and the temperament of the location mixer.

Figure 10.7 shows an example of a sound report for two-track recordings. It's pretty simple, yet it provides all the critical information. The location mixer fills in scene, take, and timecode for each shot, along with other useful information. “Left: boom, right: radio mic mix” is a typical comment. Assume the information is the same on subsequent shots until another statement supersedes it. There may also be notes such as “plane over last half” or “GT” (guide track, which means that the recording isn't good enough for use in the final track but can be used when you loop the shot or replace it with wild dialogue), or perhaps “PU.”

Today you'll rarely see a sound report like this because it's (1) hand-written and (2) based on two-track recordings, which are becoming less common. That machine-generated sound reports are better for dialogue editors is self-evident; not only can you actually read them, but you can convert them to any format you choose, thus making sorting and searching manageable (see Figure 10.8).

When you begin a project, you don't know anything about the sounds you'll be working with. Collecting wild sound before you start to edit will save you grief later, and it's an efficient way to get to know the raw material of the film. As you dig through the dailies, be on the lookout for notes or abbreviations that point you to wild sound (see Table 10.2).
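With a machine-generated report exported as CSV, a short script can do the first pass of this hunt for you. This is a sketch under several assumptions: the column names "Scene", "Take", and "Notes" are hypothetical, and the markers (WT, RT, “wild,” “room tone”) are borrowed from the log in Figure 10.9, not a complete list. Adjust both to match your production's paperwork. The sample rows are invented for illustration.

```python
import csv
import io
import re

# Assumed wild-sound markers; extend with whatever your location mixer actually writes.
WILD_MARKERS = re.compile(r"\b(WT|RT|WILD|ROOM TONE)\b", re.IGNORECASE)

def find_wild_entries(report_csv: str) -> list[tuple[str, str, str]]:
    """Return (scene, take, notes) for rows whose notes point to wild sound."""
    reader = csv.DictReader(io.StringIO(report_csv))
    return [
        (row["Scene"], row["Take"], row["Notes"])
        for row in reader
        if WILD_MARKERS.search(row.get("Notes") or "")
    ]

# Invented sample report with hypothetical column names.
sample = """Scene,Take,Notes
12,3,plane over last half
12,4,
12,WT1,wild Sam + Dana together
22,RT,room tone hospital room
"""

for entry in find_wild_entries(sample):
    print(entry)
```

Treat the result as a list of leads to check against the actual report, not as a finished log.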

Figure 10.8 Sound report generated by a hard disk recorder.

Using the Wild Sound Log

You need an organized log of the film's wild sound. If you receive a text file or PDF of the sound reports, organize them by scene as shown in Figure 10.9, using a spreadsheet or word processing program.

If you didn't get electronic sound reports, or if they are somehow worthless, you can still create an organized wild sound log; it's just a bit more work. Start by making a session called “Wild Sound Files.” Use the sound reports to locate wild sound within each shooting day folder. Add these sound files to the timeline and rename the clips to something that makes sense to you and to any other editors who will be working with the production sound. Then create files from these clips. What you're left with is a folder filled with properly named wild sound files.

Most workstations allow you to make a text file of the contents of a session, from which you can create a list of these wild sounds. Once you create a text file, you'll almost certainly have to do some editing to remove unwanted details and organize the information in a way that makes sense to you. You can use a spreadsheet or word processing program for this clean-up. Figure 10.9 is an example of a wild sound list generated from a Pro Tools session. Keep something like it handy while editing.
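Some of that clean-up can be scripted. The sketch below assumes the session text export contains a tab-delimited track listing whose header row includes EVENT, a REGION NAME (or CLIP NAME) column, and DURATION, and it keeps only those three columns. That layout is an assumption; check it against what your workstation actually writes. The sample export is invented for illustration.

```python
import csv

def session_text_to_log(export_text: str) -> list[tuple[str, str, str]]:
    """Keep EVENT, clip name, and DURATION from an assumed tab-delimited track listing."""
    lines = export_text.splitlines()
    # Find the track listing's header row; everything before it is session metadata.
    start = next((i for i, line in enumerate(lines)
                  if "EVENT" in line and "DURATION" in line), None)
    if start is None:
        raise ValueError("no track listing header found")
    # The listing is assumed to end at the first blank line.
    table = []
    for line in lines[start:]:
        if not line.strip():
            break
        table.append(line)
    log = []
    for row in csv.DictReader(table, delimiter="\t"):
        # Strip padding from column names and values; skip overflow columns (key None).
        row = {k.strip(): (v or "").strip() for k, v in row.items() if k}
        name = row.get("REGION NAME") or row.get("CLIP NAME")
        if name:
            log.append((row["EVENT"], name, row["DURATION"]))
    return log

# Invented sample export in the assumed layout.
export = (
    "SESSION NAME:\tWild Sound Files\n"
    "\n"
    "CHANNEL\tEVENT\tREGION NAME\tSTART TIME\tEND TIME\tDURATION\tSTATE\n"
    "1\t1\t01 RT, ver 1 INT bedroom night\t01:00:00:00\t01:00:54:20\t00:00:54:20\tUnmuted\n"
    "1\t2\t01 RT, ver 2\t01:01:00:00\t01:01:47:14\t00:00:47:14\tUnmuted\n"
)

for entry in session_text_to_log(export):
    print(entry)
```

From here, `csv.writer` can write the tuples to a file your spreadsheet will open.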

Nessie's Revenge

Wild Sound Log

| EVENT | REGION NAME | DURATION |
|---|---|---|
| 1 | 01 RT, ver 1 INT bedroom night | 00:00:54:20 |
| 2 | 01 RT, ver 2 | 00:00:47:14 |
| 3 | 02 RT park night, some WT footsteps | 00:00:39:23 |
| 4 | 03-06 RT Shula's house | 00:00:30:21 |
| 5 | 12–15 wild Sam + Dana together | 00:00:21:03 |
| 6 | 12–15 wild text, good rustle @ 01:01:01:01 | 00:00:46:01 |
| 7 | 12–16 wild Dana lines, take 1 | 00:01:01:12 |
| 8 | 12–16 wild Dana lines, take 2 | 00:00:25:00 |
| 9 | 12–19 wild Sam lines | 00:00:33:12 |
| 10 | 12+20–22 wild car in and out | 00:03:31:17 |
| 11 | 12 wild Jeweler lines | 00:01:07:15 |
| 12 | 22 RT hospital room | 00:00:55:23 |
| 13 | 24 wild Rebecca lines 01 | 00:00:46:14 |
| 14 | 24 wild Rebecca lines 02 | 00:00:50:08 |
| 15 | 55–57 wild text 01 | 00:00:37:03 |
| 16 | 55–57 wild text 02 | 00:00:41:14 |
| 17 | 55–57 wild text 03 with mask | 00:00:39:12 |

Figure 10.9 Wild sound log. In this example, wild sound from the film was imported into a session. A text file of the session was imported into a spreadsheet to make for easy finds and sorts.
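Once the log is in a file, you can ask it which wild recordings belong to the scene you're about to cut. This sketch assumes the leading field of each name lists scene numbers, with a hyphen or en dash marking an inclusive range and “+” joining groups (so “12+20–22” means scenes 12, 20, 21, and 22). That reading of the naming is an assumption; if your naming scheme means something else, change `scenes_in` accordingly.

```python
import re

# Leading field: digits, "+", hyphen, or en dash, followed by whitespace (assumed convention).
SCENE_FIELD = re.compile(r"^\s*([\d+\-\u2013]+)\s")

def scenes_in(name: str) -> set[int]:
    """Parse the scene numbers a wild-sound name claims to cover."""
    match = SCENE_FIELD.match(name)
    if not match:
        return set()
    scenes = set()
    for part in match.group(1).split("+"):
        bounds = re.split(r"[-\u2013]", part)
        if len(bounds) == 2 and all(bounds):
            scenes.update(range(int(bounds[0]), int(bounds[1]) + 1))
        elif len(bounds) == 1 and bounds[0]:
            scenes.add(int(bounds[0]))
    return scenes

def wild_for_scene(names: list[str], scene: int) -> list[str]:
    """Return the wild-sound names whose scene field covers the given scene."""
    return [name for name in names if scene in scenes_in(name)]

log = [
    "03-06 RT Shula's house",
    "12–15 wild Sam + Dana together",
    "12–16 wild Dana lines, take 1",
    "12+20–22 wild car in and out",
    "22 RT hospital room",
]
print(wild_for_scene(log, 16))  # ['12–16 wild Dana lines, take 1']
print(wild_for_scene(log, 22))  # car in and out, plus hospital room tone
```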

By now you may be wondering, “Why go to all the trouble of loading the wild sound and creating a list before you know if you'll need it?” After all, you may well get through a scene not using it at all. You'll fix the bad lines from alternate takes, piece together some missing rustle from a later section of the scene, and replace off-mic wide-shot dialogue with close-up sound. Fine. However, if you look at the list of wild sounds before you start editing a scene, you'll better understand the problems encountered when the scene was shot.

I keep the wild sound list taped next to the monitor so that I have no excuse but to check it before I begin each scene. There's probably a reason the wild recordings were made, so being familiar with them may be useful. Knowing about the wild sound might powerfully alter the way you look at the scene, or it may make no difference whatsoever. But you never know if you don't look.

_______________

1. The AAF will almost certainly be in sync with the edited guide track, but it's worth checking. An assembly will more likely exhibit region-by-region sync problems. Use Titan's Fix Sync to sort this out.

2. See “Dolby Model 564 Multichannel Audio Decoder User's Manual” www.dolby.com/uploadedFiles/Assets/US/Doc/Professional/139_DP564_UserManual.pdf. Pay particular attention to Chapter 5.

3. There are times when you have to filter a soundfile before you can edit it. A very low-frequency tone (say less than 40 Hz) may make glitch-free editing all but impossible, so removing it may be necessary before proceeding. Insert a high-pass filter or process it offline: make a copy of the fully edited clip, move it to an adjacent track, and mute it before processing the troublesome clips.

4. This phenomenon is called the “Haas effect” or “precedence effect” and is used in many psychoacoustic processors and algorithms.

5. Session start times are usually fixed within a film community, but vary from one culture to another. Unless you have a very good reason, use the same session start as your colleagues.

6. For a thorough explanation of the mysteries of exchanging signals between analogue and digital domains, see Hugh Robjohns, “The Ins and Outs of Interfacing Analogue and Digital Equipment,” Sound on Sound (May 2000).

7. Modern theaters don't screen films from two crossover projectors. Instead, when a film arrives at the cinema, the projectionist strips the film of its leaders and splices together the reels. The reels are combined onto one huge horizontal platter (affectionately called a “cakestand”) for continuous projection, so the projectionist needn't babysit the projector so closely and instead can control several rooms' screenings simultaneously. This is much more cost effective for the cinema owners. The bad news is that there's no longer a skilled projectionist keeping close tabs on focus, framing, and sound.