Let’s begin by thinking about some problems.

Think back over the past 24 hours. Note down some of the problems you encountered during that period. Think about the problems you solved and the ones you didn’t; the little problems and the bigger problems; problems that people presented to you and problems that you invented for yourself.

Go ahead, do it now, before reading on. Don’t turn the page until you’ve come up with some ideas.

My guess is that, whatever you noted down, you haven’t mentioned a whole host of problems that you solved without thinking about them:

- You may have mentioned that you lost the car keys; but you didn’t mention that you were able to find the sugar in the kitchen cupboard.

- You may have mentioned that you discovered a problem with your computer; but you didn’t mention that you changed a light bulb.

- You may have mentioned that someone made a demand that you weren’t able to fulfil; but you didn’t mention that you were able to meet the demands of half a dozen other people during the day.

- You may have mentioned that you felt overwhelmed with problems at one point; but you didn’t mention that you cleared the ‘to-do’ list with which you started the day.

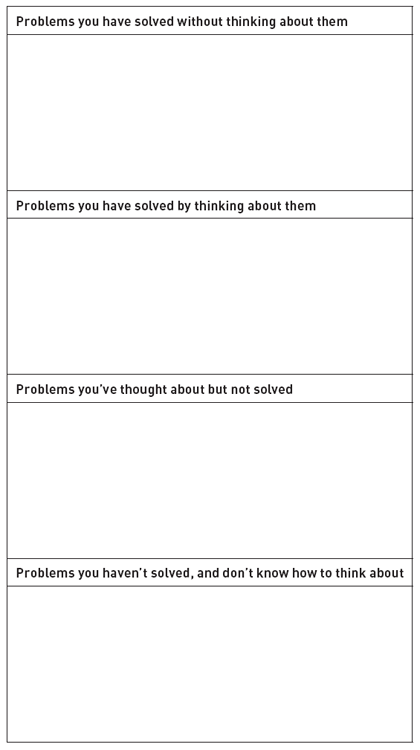

To make this point, allocate each of the problems of the past 24 hours to one of the four boxes in the figure above.

The top box should contain the most problems – by far.

Perhaps it was hard to fill in that top box because you simply didn’t notice most of the problems you were solving. We focus, not surprisingly, on the problems we can’t solve; we tend to ignore the problems we solve very successfully.

Think back over that 24-hour period again. Ask yourself: ‘What problems did I solve that I wouldn’t have been able to solve when I was two years old?’ In the years since you were a small child, you have become one of the most proficient and effective problem-solvers on the planet.

We should celebrate our success as problem-solvers. Human beings aren’t specialized; we can’t fly like eagles or swim like dolphins. It’s our versatility – our ability to solve different problems in different ways – that makes us, arguably, the best problem-solvers on the planet.

What’s the secret of your versatility as a problem-solver?

Pattern-matching: the heart of all problem-solving

Let’s begin with perception. How do we make sense of the world? The simple answer is: by pattern-matching. The human brain processes information in parallel. Think of it as ‘bottom-up’ processing and ‘top-down’ processing:

- Bottom-up processing. The brain doesn’t recognize objects directly. Different parts of the brain respond to different features: shape, colour, sound, touch and so on. The neural networks that respond to all these different features – the myriad connections between our brain cells – operate independently of each other, and in parallel.

- Top-down processing. Meanwhile, other parts of the brain are doing ‘top-down’ processing: providing the mental models that organize information into patterns and give it meaning. As you read, for example, bottom-up processing recognizes the shapes of letters, while top-down processing provides the mental models that combine the shapes into the patterns of recognizable words.

These two kinds of processing engage in continuous, mutual feedback. It’s a kind of internal conversation within the brain. Bottom-up processing constantly supplies new information so that we can modify our mental models. Meanwhile, top-down processing is constantly integrating incoming information into existing mental models.

Our mental models make sense of the world for us. Indeed, they create our world. As Joe Griffin and Ivan Tyrrell explain in their book, Human Givens:

“These metaphorical templates are the basis of all animal and human perception. Without them no world would exist for us. They organize our reality.”

Where do our mental models come from? Many of them we learn through experience; some seem to be ‘hard-wired’ into us at birth. Newborn babies, for example, can recognize faces and expressions. They can even copy actions, sticking out their tongues when they see someone else doing so, even though they can’t know what the grown-up is doing – or even what a tongue is.

The brain is always guessing when it pattern-matches. Incoming information is often garbled, ambiguous or incomplete. How can my brain distinguish your voice from all the other noises in a crowded room? Or a flower from a picture of a flower? How does it recognize a tune from just a few notes? The answer is that top-down processing filters incomplete information through existing mental models and completes the pattern.

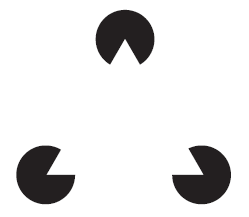

Visual illusions demonstrate how the brain makes these calculated guesses. In Figure 1.1 we can see a white triangle, even though the image contains no triangles. The brain’s top-down processing completes the incoming information by imposing a triangle – its best guess of what’s there. (The triangle is named after Gaetano Kanizsa, an Italian psychologist and artist.)

Figure 1.1 A Kanizsa triangle

This process is called perceptual completion, and it’s not limited to visual information. When you hear the Beatles sing ‘All You Need Is Love’, it’s hard not to sing the answering call (‘Da da da-da DAAAHH!’). The moment you smell that particular scent, you’re swept back to your first meeting with that special person. One sip of a Campari and soda, and I’m sitting once more on the waterfront in Venice. Perceptual completion continually helps us to create meaning from the merest wisps of information.

Ulric Neisser and the perceptual cycle

We make sense of the world, then, by matching incoming information to mental models. But pattern-matching is more than simply responding passively to incoming information. We make sense of the world because sense-making helps us to be more effective in the world.

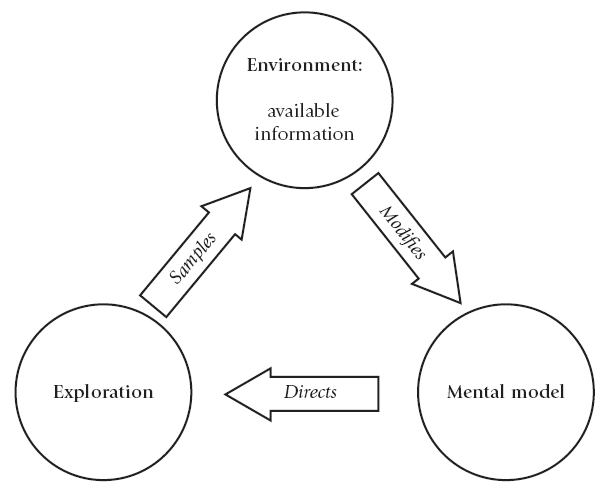

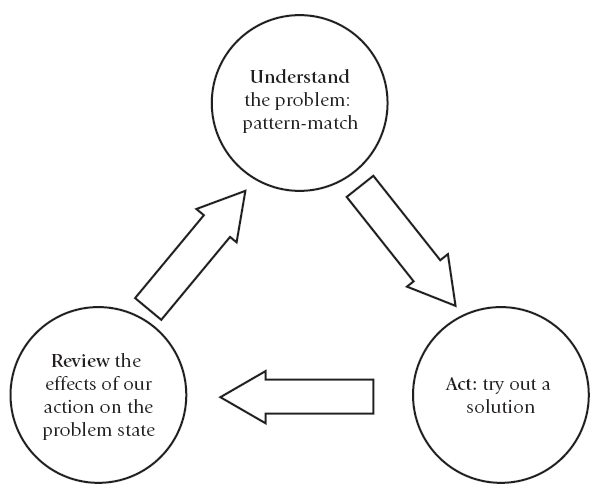

Ulric Neisser was an American psychologist and member of the National Academy of Sciences who died in 2012. In his book, Cognition and Reality (1976), Neisser suggested that we use mental models (he calls them schemata) to explore the world: they act as filters through which we can select information that will be helpful to us (see Figure 1.2). A schema, says Neisser, is ‘not only the plan but also the executor of the plan. It is a pattern of action as well as a pattern for action’.

Figure 1.2 Neisser’s perceptual cycle

For Neisser, sense-making and taking action are part of one continuous cycle. Sense-making begins with exploration: searching our environment for information we can use to exploit a situation to our advantage. If the information we discover modifies our mental model, we can then use the adapted model to be even more effective the next time we encounter a similar situation.

The important point is that the cycle begins with exploration. Our natural urge is not to solve problems, but to look for solutions. Every organism must explore its environment if it’s to survive. The default mode of every living thing – humans included – is to hunt around in our environment, searching for information we can use to succeed.

Human beings are not so much problem-solvers as solution-seekers.

Intuitive problem-solving

It may not feel like it at the end of a stressful day ‘fire-fighting’ at work, but the history of your own success as a problem-solver is proof of this principle. Look back at that list you made at the start of this chapter: think about all those problems you solved, without thinking about them, as you made your way through the world.

When we’re about a year old, walking on two legs is a problem. We want to get about, but we don’t know how. Something impels us to try out solutions – perhaps by imitating others, perhaps by following genetically imprinted patterns in our brains – and, before long, we’ve solved the problem. It’s the Neisser cycle at work.

As we grow up, we learn language by listening actively, trying out sounds in various combinations, monitoring the responses when we use them, and adapting the combinations. It’s a process of continuous learning: we nearly all learn new words, and new ways of expressing ourselves, throughout our lives. It’s another classic example of the Neisser cycle.

And we solve a host of other problems in the same way. From catching a ball to holding a conversation, from learning to whistle to maintaining a friendship, the problem-solving process tends to be the same. Encounter the problem; try out a solution; monitor the results; understand the problem more fully; try another solution. (See Figure 1.3.)

We could call this intuitive problem-solving. Intuition operates without conscious or deliberate thought. When we solve problems intuitively, we do so without thinking.

Intuitive problem-solving is characterized by three key principles:

- Making sense of the problem (by pattern-matching) always dictates a solution.

- Solving the problem always means doing something.

- Solving the problem is also simultaneously a way of understanding the problem better.

Figure 1.3 Intuitive problem-solving

In other words, in intuitive problem-solving, understanding the problem and solving it are the same thing. Intuitive problem-solving always combines the problem with the solution. The match of information to mental model is the solution.
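For readers who think in code, the cycle described above can be sketched as a simple loop. This is only an illustration, not a model of how the brain works: the names `intuitive_solve` and `throw_ball`, the numeric ‘effort’ and the rule for adjusting the next attempt are all assumptions invented for the example.

```python
def intuitive_solve(act, low, high, max_attempts=20):
    """Try a solution, monitor the result, adjust, and try again.

    `act` is a hypothetical stand-in for doing something in the world.
    It takes an attempt and returns feedback: -1 (too little),
    1 (too much) or 0 (it worked).
    """
    history = []  # what each attempt taught us about the problem
    for _ in range(max_attempts):
        attempt = (low + high) // 2          # best guess from the current model
        feedback = act(attempt)              # solving always means doing something
        history.append((attempt, feedback))  # acting also improves understanding
        if feedback == 0:
            return attempt, history
        if feedback < 0:
            low = attempt + 1                # adapt the model: aim higher next time
        else:
            high = attempt - 1               # adapt the model: aim lower next time
    return None, history                     # still stuck after max_attempts


def throw_ball(effort, needed=7):
    """Hypothetical world: the throw succeeds only at the right effort."""
    if effort < needed:
        return -1
    if effort > needed:
        return 1
    return 0


solution, history = intuitive_solve(throw_ball, low=0, high=10)
print(solution)  # 7
print(history)   # [(5, -1), (8, 1), (6, -1), (7, 0)]
```

Notice that the loop has no separate ‘understand the problem’ step: each attempt is both an action and a piece of learning.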

The limitations of intuitive problem-solving

Intuitive problem-solving has proved remarkably successful. After all, it’s kept the species going for a few hundred thousand years. Because pattern-matching is more or less unconscious, it’s super-sensitive. It picks up subtle cues in a situation, it reads signals in others’ behaviour very efficiently and it can spot discrepancies that might be signs of danger. Because pattern-matching is wired directly into our bodies’ systems, it triggers action very efficiently. Above all, pattern-matching helps us solve problems quickly. It’s fast.

But intuitive problem-solving isn’t without its limitations. Here are some of the most important.

Intuitive problem-solving makes us specialists

As we go through life matching mental models to new experiences, we reinforce the mental models that work successfully. The more successful a mental model becomes, the less likely we are to use alternative mental models in similar situations.

As a result, intuitive problem-solving tries to solve the same problem the same way every time. Faced with new information, it likes to ‘bolt on’: to assimilate new information into an already existing mental model. It’s easier to learn butterfly stroke if you can already swim freestyle. A French person can probably learn Italian more easily than Mandarin. ‘Bolting on’ is the easy mental option. We prefer ‘bolt-on’ solutions because they need less thinking.

The danger, of course, is that a ‘bolt-on’ solution may not be the best one. ‘Bolting on’ may be more efficient than reinvention, but it may not provide the most effective course of action. (Information technology provides many examples of this conundrum.) By extending existing mental models and refining them, we build expertise in specific fields. Our knowledge and skill tend to deepen more readily than they broaden. The field may be huge – the breadth and scope of a whole language, for example – or it may be highly localized, such as the ecology of lichen or pottery techniques in Bhutan.

Specialization is a double-edged sword. It helps us solve problems in our chosen field, but it can limit our thinking when problems fail to sit comfortably within that field. As specialists, we can become more expert but potentially less creative.

Intuitive problem-solving is vulnerable to biases

It’s very difficult to catch ourselves making errors when we’re practising intuitive problem-solving. All that selective pattern-matching means that we simply don’t notice when we miss something, or interpret it inaccurately.

Intuitive problem-solving usually makes reasonable sense of reality, but sometimes it can be wildly wrong. For hundreds of years it was intuitively obvious to most people that the Sun orbited the Earth. (A survey in 2008 suggested that a third of all Americans still believe this.) Many people find it hard to believe that heavy and light objects will fall to the ground at the same rate. Our intuitive judgement is prone to take our perceptions as true, and to forget that they are the result of mental models selecting information from the environment. As a result, intuitive problem-solving is vulnerable to a whole range of biases that can distort our perception and judgement. (We look at some of them in Chapter 10.)

Intuitive problem-solving can generate false solutions

Intuitive problem-solving can sometimes make us see meaning where none exists. The brain hates randomness: if it can use a mental model to find meaningful patterns in chaotic arrangements of information, it will do so. We see images in photographs or clouds; we read significance into the astrology columns in the newspapers; we generate conspiracy theories to account for threats or appalling tragedies.

The fancy name for this capacity to see significance where none exists is pareidolia (psychologists also call the broader tendency apophenia). Some people call it magical thinking because we might explain a problem by identifying a cause that doesn’t exist, or by making false correlations between sets of data. We may even resort to blame or superstition in an effort to make sense of the inexplicable. (There’s more on blame in Chapter 4.)

Can you see what it is yet?

In an experiment reported in Science in 2008, a team from the University of Texas in Austin and Northwestern University in Evanston, Illinois, asked people what patterns they could see in random arrangements of dots or stock market information.

Half the participants had been made to feel a lack of control – either by being given negative feedback unrelated to the way they performed the task or by being asked to remember situations in which they had lost control. These participants tended to see patterns in random information more readily.

The moral of the story is that we’re more likely to engage in magical thinking when we feel powerless. When we sense that we lack control in a situation, we can start to see connections between unconnected phenomena and patterns in chaos. We can begin to see meaning where none exists.

Pareidolia can become a serious problem if we feel vulnerable. A gambler on a losing streak, for example, may construct all sorts of illusory patterns to convince himself that he should make the big bet. Workers threatened with redundancy may read all sorts of significance into managerial pronouncements. Anyone feeling exposed or anxious in high-risk, ambiguous or emotionally charged situations – trading on the stock market, perhaps, or declaring war – can succumb to pareidolia.

Intuitive problem-solving can be positively unhelpful, then, if we aren’t feeling mentally or emotionally resilient.

Stuckness (and its opposite)

Intuitive problem-solving usually knows what to do. But sometimes it breaks down. And that’s when we notice that we’ve got a problem.

We can define having a problem as:

knowing we want to do something, but not knowing what to do.

This isn’t my definition. I’ve taken it from one of the classic texts on the subject: Human Problem Solving, by Allen Newell and Herbert Simon. Newell and Simon put it like this:

“A person is confronted with a problem when he wants something and does not know immediately what series of actions he can perform to get it.”

We notice we have a problem when we’re stuck.

The anatomy of stuckness

Stuckness is a fascinating phenomenon. Other animals, for example, very rarely get stuck, and very young humans usually manage to avoid it. Stuckness is a condition that seems to emerge when our capacity to think reaches a certain level of sophistication. Here are some of its more intriguing features.

Stuckness is made up of two elements: our desire to do something and our inability to do it. Both elements are important, and in thinking about a problem we need to retain sight of both. Our inability to solve a problem drives our search to understand more about the problem; but our desire drives our search to solve it.

There are two kinds of stuckness. We can call them focused stuckness and unfocused stuckness:

- You experience focused stuckness when you can’t take your mind off a problem, when it occupies your thinking at the expense of everything else. You’re staring at the coffee table where you know the car keys should be sitting – and they’re not there. You try repeatedly to follow a computer protocol and it refuses to work. You find it impossible to shake off the memory of an offensive customer who upset you earlier in the day. (The problem described by Robert M. Pirsig in Chapter 24 of Zen and the Art of Motorcycle Maintenance – from which I quote at the start and end of this book – is a classic example of focused stuckness.)

Focused stuckness makes us brood.

- You experience unfocused stuckness when you’re overwhelmed by a crowd of problems, when you can’t concentrate. You have a dozen demands on your time and you’ve no idea where to start. You’re responsible for completing a project and need to accommodate the actions and demands of a large group of people. You feel you’re spending your day ‘fire-fighting’ rather than getting any real work done.

Unfocused stuckness makes us panic.

Stuckness makes problem-solving conscious. The intuitive problem-solving cycle operates unconsciously. The moment we experience stuckness, we become conscious of our situation.

Stuckness opens up a space in the problem-solving cycle. A gap opens up in our mind between the problem and solution. Problem-solving becomes a two-part process: investigate the problem and generate a solution.

We can fill the problem-solving space with two kinds of thinking. Intuitive problem-solving can still be useful to us, even if we feel it’s broken down. Because we’re now consciously thinking about the problem, we can reflect on our intuitive responses: we can question them, develop them and guide them. We can start to guide our intuition consciously. And we can now also think about the problem more consciously: using logic, evaluation and analysis. As well as intuitive problem-solving, we can now also start to do rational problem-solving.

Solutions unstick our thinking

Solutions are what we do to escape stuckness. The word ‘solve’ itself contains the clue to this idea. It comes from the Latin solvere, meaning ‘to loosen’. To solve a problem is to unknot our thinking about it and create movement: to become unstuck. A solution is both the settling of a problem and a fluid created by mixing solids with liquids (dissolving them).

A solution, then, is:

a course of action that unsticks stuckness.

We often talk about ‘looking for a solution’, ‘searching for the answer’. But if a solution to a problem is a course of action, we can’t find it; we can only do it.

The two stages of problem-solving

The aim of problem-solving is to escape from stuckness.

When we’re stuck, a space opens up in our thinking: a gap between understanding a problem and generating a solution. In that gap, we can begin to do a new kind of problem-solving. We can begin to think deliberately: to test our perceptions and intuitions, to examine the evidence, to look at the problem in different ways and to contemplate alternative solutions.

The first step in unsticking our thinking is to split it into two stages:

Stage 1: Identify the problem

Stage 2: Decide what to do

In Stage 1, we investigate the problem. We gather information about the problem and try to make sense of it. The output of Stage 1 is a representation of the problem. We show the problem to ourselves (we re-present it) using language or symbols, a picture or a model. We might name the problem as ‘financial’ or ‘administrative’. We might simplify the problem: a circuit diagram represents the complex wiring of an electrical system; a map represents the complexity of a landscape; a model represents an aircraft.

In Stage 2, we generate a solution. We examine the information we’ve gathered and use it to decide what to do. The output of Stage 2 is action. We work with our representation of the problem: we use financial tools to solve financial problems, and administrative systems to solve administrative problems. We can use the circuit diagram to repair the electrical system; we can use the map to find our way out of a forest; we can put the model of the aircraft in a wind-tunnel and observe its aerodynamic behaviour.

Intuitive problem-solving: the magic of reframing

As we’ve seen, intuitive problem-solving, effective though it is, has some serious limitations. It tends to make us specialists, which can limit our thinking. It’s vulnerable to biases, which can influence our judgement without us noticing. And it can offer false solutions by generating illusory information.

But intuitive problem-solving has one feature that can unstick our thinking. We can demonstrate this feature using a second visual illusion. (It’s called a Rubin vase, after the Danish psychologist Edgar Rubin – see Figure 1.4.)

Figure 1.4 The Rubin vase

Looked at one way, this is a white vase on a black background. Looked at another, it’s two heads in profile on a white background. Most people can switch effortlessly between the two images, because the image cunningly matches two mental models, and the brain can switch from one to the other.

The Rubin vase demonstrates, in a very simple way, our ability to reframe. Faced with new information, intuitive problem-solving can compare it against different mental models and see which fits best. Switching mental models allows us to find the most appropriate thing to do.

In other words, reframing allows us to think about problems in context.

Reframing originally evolved as a way of assessing risk: it helped us decide very fast, for example, whether the rustling in the bushes was likely to be a dangerous predator or just the wind at play. And that allowed us to conserve valuable energy.

We reframe whenever we have to decide what to do in complicated or ambiguous situations. Reframing helps us drive, for example: anticipating how that cyclist might shoot out between two lorries, or how that small child might chase its ball into the road. Reframing helps us understand metaphors: if someone tells us to ‘pull the door to behind us’ when we leave a building, reframing tells us that we’re not supposed to wrench the door off its hinges and drag it behind us down the street. Complicated conversations are impossible without the ability to reframe: how else would we be able to adjust our remarks to Richard, knowing that Bharti is behind him listening to every word? (Situations like these can be difficult for people on the autistic spectrum, many of whom find reframing hard.)

Reframing tends to operate instantaneously. We can switch between the two images in the Rubin vase at once; there’s no intermediate stage where we’re trying to work out rationally how one image differs from another. The suddenness of reframing makes it feel magical. We’re baffled; and then, suddenly, the pattern of the solution forms itself in our mind. The moment of intuitive insight often comes with a little emotional explosion: we might cry ‘Aha!’ or burst out laughing. (Intuitive problem-solving is always closely linked to emotion, as we shall see in Chapter 2.)

Reframing is one of the most powerful of intuitive problem-solving techniques. At various points throughout this book, especially in Chapter 9, we’ll see how we can develop our ability to reframe to help us look at problems in different ways.

Rational problem-solving: thinking deliberately

Rational problem-solving operates by deliberate, conscious thought. Three key principles dictate its workings:

- Understanding the problem and solving the problem are two distinct forms of thinking.

- Making sense of the problem always means testing our understanding against objective criteria and evidence.

- Generating a solution always involves building feasibility into the action we propose to take.

Rational problem-solving uses a range of tools and techniques to understand problems and build feasible solutions: measurement, analysis, comparison, logic, evaluation. It also uses models: not mental models, but constructed, objective models that represent the problem we’re working on.

Solving problems: two approaches compared

| Intuitive problem-solving | Rational problem-solving |
| --- | --- |
| Purpose: to discover what to do | Purpose: to work out what’s true |
| Recognizes the truth | Works out the truth logically |
| Pattern-matches | Challenges pattern-matches |
| Accepts assumptions | Questions assumptions |
| Trusting: what you see is what there is | Sceptical: what you see is never the whole truth |
| Finds evidence for hypothesis | Seeks evidence to disprove hypothesis |
| Seeks similarities | Seeks differences |
| Combines information into patterns | Analyses information into constituent parts |
| Spontaneous | Deliberate |
| Discontinuous | Incremental |
| Instantaneous | Slow |
| Polarized: either/or | Discriminating: many possibilities |
| Discards irrelevant information | Investigates evidence exhaustively |
| Decisive | Cautious |
| Acts swiftly | Pauses |
| The solution explains the problem | The solution removes the problem |

The curse of the right answer

Intuitive and rational problem-solving have quite different objectives:

- The aim of intuitive problem-solving is to identify what to do.

- The aim of rational problem-solving is to work out what’s true.

But we often confuse the two objectives. And one of the results of that confusion is something I like to call the curse of the right answer.

The idea that a problem must have a correct answer is one of the key features of rational problem-solving. In ancient Greek, the word πρόβλημα [‘problema’] meant ‘a question posed for a solution’. It particularly referred to a puzzle in logic: a question as to whether a statement is true, to be answered using reasoning.

This idea of a problem as a question that must be answered persists to this day. We solve crosswords and Sudoku in the newspaper; we buy books of problems and join pub quiz teams. Our education system is still dominated by an examination system in which the principal means of succeeding is finding correct answers: a system that can decide our course through life.

Find the right answer and you have won. Fail to find the right answer, and you’re a loser.

Of course, very few problems in real life have single, correct answers. Or, to put the point another way: we sometimes confuse two ideas of a solution:

- a solution as a conclusion; and

- a solution as a course of action.

We see this confusion most clearly when we talk about ‘fixing’ a problem. A ‘fix’ is a course of action that’s also a ‘correct’ answer: it seems to satisfy both demands of a solution. ‘Fix’: the very opposite of the idea of a solution as fluid or dynamic. A ‘fix’ is static, permanent – fixed, indeed. ‘I want to fix the problem so it stays fixed’, say many of the participants on my problem-solving courses.

A ‘fix’ puts something right; which means that it must have been wrong to begin with. And so is born the idea that a problem is something ‘wrong’. And, by a kind of intuitive association, we see what’s wrong as bad. So problems in the real world – unlike the puzzles in the newspaper or the questions on the television quiz show – come to be seen as undesirable things to have, and probably someone’s fault. (Notice the link between the two meanings of the word: ‘fault’ as something wrong, and ‘fault’ as being responsible for something wrong.) All of which generates a powerful emotional response.

Going ballistic

Interestingly, the ‘-blem’ part of the word ‘problem’ derives from other words to do with throwing: it’s related to the Greek word βάλλειν [‘ballein’], meaning ‘to throw’. ‘Pro-’ suggests the idea of ‘forward’; so a problem is something ‘thrown at us’. And that Greek word ‘ballein’ is also apparently the root of the word ‘ballistic’ – which is, of course, what we may go when faced with a problem.

This is the curse of the right answer. It’s a curse because it severely limits our abilities as problem-solvers.

If we see a problem as ‘bad’ or ‘wrong’, the rational solution must be to put it right; to ‘fix’ it. We might remove what’s wrong, or repair it. Alternatively, we might look for the cause of what went wrong and try to fix that.

Some problems can be fixed, of course. If an electric bulb has stopped working, I can replace it. If the chain on my bicycle breaks, I can repair it. If my computer repeatedly shows a mysterious message when I boot up, I may be able to find the piece of software that is causing the problem and remove, repair or replace it.

But not every problem can be fixed. We may not be able to remove what’s wrong without removing something of value (how can we destroy a tumour without destroying healthy tissue or organs?). Repairing a fault may cause some other element of the system to fail (the new, highly sensitive fuse box installed in our cellar tripped the moment we turned on the old hob in our kitchen). Many problems have no identifiable causes (many medical problems are of this kind); some have multiple causes (social, political or economic problems often fall into this category).

We need to beware the curse of the right answer. Not all problems can be fixed; not all problems are things that are wrong or bad. Not all solutions are answers; solutions to practical problems are courses of action, which cannot be right or wrong but only successful or unsuccessful. Answers are final; courses of action have consequences.

Rational problem-solving, then, comes to our aid when we’re stuck. But it can never entirely replace intuitive problem-solving. And indeed, as we’ll see, there is a form of intuition beyond rationality that can complement and transcend even the subtle play of reasoning. By combining the best of both approaches, we can solve problems more skilfully – and make wiser decisions.

In brief

Intuitive problem-solving uses mental models to explore the world and decide how to act. Intuitive problem-solving is discontinuous. It happens suddenly, not incrementally. In intuitive problem-solving:

- making sense of the problem (by pattern-matching) always dictates a solution;

- solving the problem always means doing something; and

- solving the problem is also simultaneously a way of understanding the problem better.

Intuitive problem-solving has three main limitations:

- It tends to make us specialists as problem-solvers.

- It’s vulnerable to biases that can cloud our understanding.

- It can generate false solutions.

When intuitive problem-solving breaks down, we become stuck. We can define having a problem as knowing we want to do something, but not knowing what to do.

- Stuckness is made up of two key elements: our desire to do or achieve something, and our inability to do it.

- There are two kinds of stuckness: focused and unfocused.

- Stuckness makes problem-solving conscious. Stuckness opens up a space in the problem-solving cycle.

- We can fill the problem-solving space with two kinds of thinking.

A solution is a course of action that unsticks our thinking. The first step in unsticking our thinking is to split it into two stages:

Stage 1: Identify the problem

Stage 2: Decide what to do

Intuitive problem-solving includes contextual thinking, which allows us to view problems in different ways.

Rational problem-solving uses deliberate, step-by-step, conscious thought. In rational problem-solving:

- understanding the problem and solving the problem are two distinct forms of thinking;

- making sense of the problem always means testing our understanding against objective criteria and evidence; and

- generating a solution always involves building feasibility into the action we propose to take.

The principal limitation of rational problem-solving is the curse of the right answer.