Chapter 1

Overview of Operations Management

Before we delve into the System Center 2012 Operations Manager product, we must explain what operations management is, what it defines, and why you need it. As an IT manager, you are not responsible for all key business activities within the company. When those activities are being processed on your servers, however, you become a critical piece of the puzzle in overall IT systems management. You may control the database servers, for example, but they house information that is critical to the day-to-day operation of the billing department. Suddenly, you start to see how everything ties together. A missing or damaged link in the chain, or the unplanned removal of a link, may cause much more damage than you originally thought.

This is just one of the many reasons Microsoft created the Microsoft Operations Framework (MOF), based on the Information Technology Infrastructure Library (ITIL). The idea behind MOF and ITIL is to create a complete team structure with the ultimate goal of service excellence. Numerous groups fall under the IT department tag, but we often see many of them acting as separate departments rather than as one cohesive unit. Desktop support, application developers, server support, storage administrators, and so forth are all members of IT, but they do not always work together as closely as they should.

Operations Manager is much more than just a centralized console view of the events and processes in your network. It was built with ITIL and MOF in mind, and so we would like to start the book with a background of both these IT service management standards.

In this chapter, you will learn to:

- Understand IT service management

- Explore the IT Infrastructure Library (ITIL) and Microsoft Operations Framework (MOF)

- Explore the Dynamic Systems Initiative

- Define cloud computing

- Understand the IT as a Service (ITaaS) model

- Define the Microsoft System Center 2012 products

- Define operations management

Understanding IT Service Management

ITIL and MOF were introduced as a way to deliver consistent IT service management (ITSM). Some of the key objectives of ITSM are:

- To align IT services with current and future needs of the business and its customers

- To improve the quality of IT services delivered

- To reduce the long-term cost of service provisioning

Think of ITSM as a conduit between the business and the technology that helps run the business. Without that conduit in place, neither side can function properly. ITSM is process-focused rather than vendor-specific or technology-centered.

Exploring ITIL

In the early 1980s, computing technology evolved from a centralized IT organization model to distributed computing and geographically dispersed resources. This distributed model brought greater flexibility, but it also led to deteriorating and inconsistent processes for technology delivery and support. The UK Office of Government Commerce (OGC) identified the need for consistent practices across all aspects of the service life cycle to deliver organizational effectiveness and efficiency, as well as predictable service levels. As a result, ITIL was born. ITIL is now the most widely adopted ITSM framework in the world.

ITIL version 1 was published between 1989 and 1995. The original ITIL volumes, consisting of 31 books total, provided a cohesive set of best practices for ITSM. These books were created by industry leaders of the time, and their best practices gave direction and guidance for providing high-quality IT facilities and services to support IT.

In 2000 and 2001, the initial version was revised to become ITIL v2. This second version consolidated the original 31 publications into 7 more closely connected and consistent books within an overall framework.

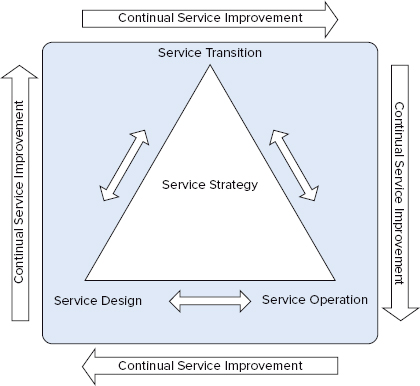

In June 2007, ITIL v3 was published. This enhanced and further consolidated third version consists of five core books covering the service life cycle, as shown in Figure 1.1. These books were updated in July 2011 for consistency.

Figure 1.1 The service life cycle

The five core volumes that make up ITIL v3 each cover a stage of the service life cycle. Table 1.1 shows these volumes and their key areas.

Table 1.1: The ITIL v3 volumes

| Volume | Key management areas |
|---|---|
| Service Strategy | Service portfolio, demand, financial |
| Service Design | Service catalog, service level, availability, capacity, supplier, IT service continuity, information security |
| Service Transition | Change, release, configuration, service knowledge |
| Service Operation | Event, incident, request fulfillment, access, problem |
| Continual Service Improvement | 7-step improvement process, service measurement, service reporting |

There is much more to ITIL than just the books, however. ITIL as a whole includes the books, certification, ITIL consultants and services, and ITIL-based training and user groups. The IT Service Management Forum (itSMF) is the primary contributor and promoter for ITIL and is made up of more than 6,000 member companies covering in excess of 40,000 individuals spread over 53 autonomous chapters worldwide.

The itSMF International Executive Board is the separate international entity that provides overall steering and support functions to existing and emerging chapters. You can find more information about them at their website: http://itsmfi.org.

You can find a lot more in-depth information about ITIL on the official website: www.itil-officialsite.com.

Exploring the MOF

IT organizations are continuously challenged to deliver better IT services at lower cost in a turbulent environment, and ITIL is the best-known management framework developed to deal with this challenge. The MOF is Microsoft’s structured approach to the same goal.

In 1999, Microsoft created the first version of MOF (MOF v1). The key focus of developing MOF was two-pronged:

- To provide a framework specifically designed for managing Microsoft technologies

- To give IT professionals the knowledge and processes required to manage Microsoft platforms cost-effectively and thus achieve high reliability and security

In early 2008, MOF v4, based on ITIL v3, was released, and it is the current version of the framework. MOF v4 was built to respond to new IT challenges, such as demonstrating the business value of IT, responding to regulatory requirements, and improving organizational capability.

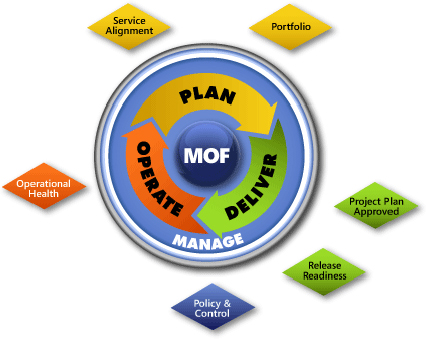

The MOF guidance includes all of the activities and processes involved in managing an IT service from its conception through development, operation, and maintenance to its eventual retirement. MOF organizes these activities and processes into service management functions (SMFs), which are grouped together in phases that mirror the IT service life cycle. Each SMF is anchored within a life cycle phase and contains a unique set of goals and outcomes supporting the objectives of that phase. An IT service's readiness to move from one phase to the next is confirmed by management reviews (MRs), which ensure that goals are achieved in an appropriate fashion and that the goals of IT are aligned with the goals of the organization.

MOF includes a great number of resources that are available to help you achieve mission-critical system reliability, manageability, supportability, and availability with Microsoft products and technologies. These resources are in the form of whitepapers, operations guides, assessment tools, best practices, case studies, templates, support tools, courseware, and services. All of these resources are available on the official MOF website at www.microsoft.com/mof.

How MOF Expands

While ITIL addresses IT operations as a whole, MOF is built around the service solution. It focuses on the release and life cycle of a service solution, such as an application or infrastructure deployment.

Since ITIL was based on a philosophy of “adopt and adapt,” Microsoft decided to use it as its basis for MOF. Although Microsoft supports ITIL from a process perspective, the company decided to make some changes and add a few things when they built MOF. One of these changes was moving to a “prescriptive” process model. Microsoft defines the ITIL process model as “descriptive.” It has more of a “what to do, when and why” approach, whereas MOF has a “prescriptive,” or “how to do,” approach.

The MOF v4 Model

Information in this section about the Microsoft Operations Framework 4.0 is provided with permission from Microsoft Corporation (© 2009 Microsoft Corporation).

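The MOF v4 life cycle areas are:

- Plan phase

- Deliver phase

- Operate phase

- Manage layer, which underpins the other three phases throughout the life cycle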

Service Management Functions

MOF also introduced the concept of SMFs. Each life cycle area of the MOF model contains SMFs that define the processes, people, and activities needed to align IT services to the requirements of the business.

Even though each SMF can be interpreted as a stand-alone set of processes, it’s important to understand how the SMFs in all the phases work to ensure that service delivery is at the desired quality and risk level. In some phases (such as Deliver), the SMFs are performed consecutively, whereas in other phases (such as Operate), the SMFs may be performed simultaneously to create the outputs for the phase. Figure 1.3 shows the SMFs within the MOF v4 model and their placement within each of the phases.

As Table 1.2 illustrates, there are currently 16 SMFs that describe the series of management functions performed in an IT environment. All of these SMFs map to an ITIL-based best practice for performing each function.

Table 1.2: MOF v4 SMF placement

| Phase or layer | SMFs |
|---|---|
| Plan phase | Business/IT alignment, reliability, policy, financial management |
| Deliver phase | Envision, project planning, build, stabilize, deploy |
| Operate phase | Operations, service monitoring and control, customer service, problem management |
| Manage layer | Governance, risk, and compliance; change and configuration; team |
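The placement in Table 1.2 can also be written down as a simple mapping, which makes the count of 16 SMFs easy to check. This is only an illustrative Python sketch; the names `MOF_V4_SMFS`, `smf_count`, and `phase_of` are our own and are not part of any Microsoft tooling.

```python
from __future__ import annotations

# Table 1.2 expressed as data: each phase (or the Manage layer) maps to its SMFs.
MOF_V4_SMFS = {
    "Plan": ["Business/IT alignment", "Reliability", "Policy", "Financial management"],
    "Deliver": ["Envision", "Project planning", "Build", "Stabilize", "Deploy"],
    "Operate": ["Operations", "Service monitoring and control",
                "Customer service", "Problem management"],
    "Manage": ["Governance, risk, and compliance", "Change and configuration", "Team"],
}


def smf_count(model: dict[str, list[str]]) -> int:
    """Total number of SMFs across all phases and the Manage layer."""
    return sum(len(smfs) for smfs in model.values())


def phase_of(smf: str, model: dict[str, list[str]]) -> str | None:
    """Return the phase or layer in which an SMF is anchored, or None if unknown."""
    for phase, smfs in model.items():
        if smf in smfs:
            return phase
    return None


print(smf_count(MOF_V4_SMFS))           # 16
print(phase_of("Deploy", MOF_V4_SMFS))  # Deliver
```

Because each SMF is anchored in exactly one phase or layer, a lookup such as `phase_of` returns a single answer.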

Management Reviews

For each phase in the life cycle, MRs serve to bring together information and people to determine the status of IT services and to establish readiness to move forward in the life cycle. These reviews help ensure that business objectives are being met and that initiatives, projects, and services are on track to deliver expected value. The scope of MRs can be either project-specific or broad.

During an MR, the criteria that a service must meet to move through the life cycle are reviewed against actual progress. Figure 1.4 shows the MRs and their placement within the MOF v4 model.

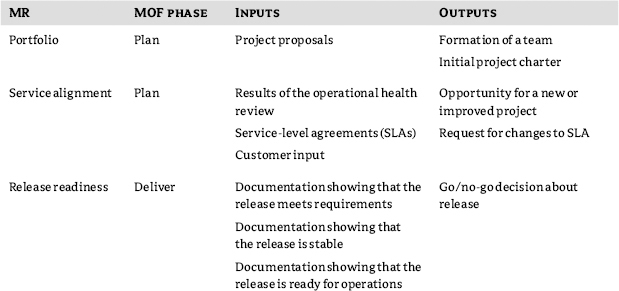

Table 1.3 shows the six MOF v4 management reviews, their placement within the IT service life cycle, and their inputs and outputs.

Table 1.3: MOF v4 management reviews

Exploring the Dynamic Systems Initiative

As software becomes more and more complex, introducing new components and systems to the infrastructure, the IT environment in turn becomes increasingly diverse and heterogeneous. For example, an inventory application might move from a client-server design, to a multitier design, to a web service-based application. As the application grows and more users start using it, the decision is made to install a hardware load balancer in front of it. Then the data is moved to a storage area network (SAN) to give the IT department better control over backup and recovery options. The result is an IT environment in which the definition of a distributed application has evolved to include much more than just the software.

All of these changes result in various teams in the IT department being involved with this application. You quickly see how a change to the application can affect more than just the application developers. You now have to coordinate changes with the web server team, the database administrators, the networking team, and the storage team.
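One way to picture this is as a dependency graph in which every component has an owning team. The Python sketch below is a hypothetical illustration modeled loosely on the inventory application described above; the component names, team names, and the `teams_to_notify` helper are invented for the example. Starting from a changed component, it collects everything that component relies on and everything that relies on it, then returns the owning teams.

```python
from collections import deque

# Hypothetical example: the components each component directly depends on.
DEPENDS_ON = {
    "inventory_app": ["web_server", "database"],
    "load_balancer": ["inventory_app", "network"],
    "web_server": ["network"],
    "database": ["san", "network"],
    "san": [],
    "network": [],
}

# Hypothetical example: which team owns each component.
OWNER = {
    "inventory_app": "application developers",
    "load_balancer": "networking team",
    "web_server": "web server team",
    "database": "database administrators",
    "san": "storage team",
    "network": "networking team",
}


def _reachable(start, edges):
    """Breadth-first search: every node reachable from start by following edges."""
    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def teams_to_notify(changed):
    """Owners of the changed component, of what it relies on, and of what relies on it."""
    # Reverse the graph so we can also walk from a component to its dependents.
    dependents = {component: [] for component in DEPENDS_ON}
    for component, deps in DEPENDS_ON.items():
        for dep in deps:
            dependents[dep].append(component)
    affected = {changed} | _reachable(changed, DEPENDS_ON) | _reachable(changed, dependents)
    return sorted({OWNER[c] for c in affected})


print(teams_to_notify("inventory_app"))
```

For a change to the application itself, every team in this small example is affected; a change to the SAN, by contrast, does not reach the web server team.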

Whether these teams consist of one person (you) or dozens of people each, you can see how complex the infrastructure can become and why these distributed systems need to be managed. With so many teams working on a system over its lifetime, capturing the knowledge each team has in machine-readable form would make it possible to automate many of the well-defined management tasks that are handled manually today. This not only drives down support costs but also reduces the risk of mistakes and omissions that arise when people carry out every step of even a single best-practice management process by hand. This concept of capturing and reusing knowledge over the life of the system and each manageable component is at the heart of the management aspects of the Dynamic Systems Initiative (DSI).

The DSI is an industry strategy led by Microsoft to effect these fundamental changes. It is a plan to build software that incorporates ITSM capabilities and MOF best practices to match IT capabilities and operations with business needs.

DSI solutions not only address the complexity of enterprise IT infrastructures but also deliver enterprise-like capabilities to small and medium-sized businesses in a simple and cost-effective way.

DSI helps IT organizations deliver end-to-end offerings that will:

- Increase productivity and reduce costs across the entire IT organization

- Reduce time and effort required to troubleshoot and maintain systems

- Improve system compliance with business and IT policies

- Increase responsiveness to changing business demands

DSI also delivers dynamic systems technology to businesses. There are three architectural elements of the dynamic systems technology strategy:

- Virtualized infrastructure

- Knowledge-driven management

- Design for operations

These three architectural elements are the building blocks for dynamic systems. Virtualized infrastructure mobilizes resources and brings elasticity to the infrastructure. Knowledge-driven management is the mechanism for putting those resources to work to meet dynamic business demands, and design for operations ensures that systems are built with operational excellence as a requirement from the start.

SDM versus SML

Originally, Microsoft implemented the System Definition Model (SDM) as the standard schema within DSI. SDM was developed to capture a consistent model of IT resources.

SDM is an Extensible Markup Language (XML)-based language and modeling platform through which a schematic blueprint for effective management of distributed systems can be created. SDM models can be consumed by specific management systems, such as members of the System Center product family and third-party management products; they can also be hosted by each component of the system to enable local self-management by the components themselves. Just as a distributed system is a set of related software and hardware resources running on one or more computers that are working together to accomplish a common function, SDM models combine to form a common management definition of that distributed system, created out of the resultant sum of its component parts.
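To make the idea of composable, XML-based models more concrete, the sketch below parses a tiny XML description of a distributed application and combines its component definitions into one management view. The element and attribute names (`system`, `component`, `name`, `type`, `host`) are invented for illustration; this is not the actual SDM or SML schema. The sketch uses only Python's standard `xml.etree.ElementTree` module.

```python
import xml.etree.ElementTree as ET

# Hypothetical, simplified model of a distributed system. NOT the real SDM/SML schema.
MODEL_XML = """
<system name="Inventory">
  <component name="InventoryWeb" type="WebApplication" host="web01"/>
  <component name="InventoryDb" type="Database" host="sql01"/>
  <component name="InventoryStore" type="StorageVolume" host="san01"/>
</system>
"""


def load_system(xml_text):
    """Parse the model and return the system name plus its component definitions."""
    root = ET.fromstring(xml_text.strip())
    components = [
        {"name": c.get("name"), "type": c.get("type"), "host": c.get("host")}
        for c in root.findall("component")
    ]
    return root.get("name"), components


def hosts_to_manage(components):
    """The distributed system's footprint is the union of its parts' hosts."""
    return sorted({c["host"] for c in components})


name, parts = load_system(MODEL_XML)
print(name, hosts_to_manage(parts))  # Inventory ['san01', 'sql01', 'web01']
```

The point of the sketch is the composition: no single component definition describes the whole system, but a management tool reading all of them can derive a combined view.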

In July 2006, Microsoft, along with other major hardware and software corporations, published a draft of a new specification defining a consistent way to express how heterogeneous computer networks, applications, servers, and other IT resources are described—or modeled—in XML. Based extensively on SDM, this new specification was called the System Modeling Language (SML). SML provides a consistent method for hardware manufacturers and software developers to define how infrastructure, applications, and services are modeled.

Microsoft has since realigned all of its work on the SDM platform with SML and has renamed the platform the SML platform. This approach will give IT departments end-to-end solutions that are integrated across applications, operating systems, hardware, and management tools, and it will provide reduced costs, improved reliability, and increased responsiveness across the entire IT life cycle.

Tying It All Together

Microsoft drew on ITIL, MOF, and DSI to create a suite of products known as System Center 2012 that helps you align with the best practices set forth in those frameworks. The System Center suite helps IT organizations capture and use information to design more manageable systems and automate IT operations. It does this by integrating systems management tools with knowledge of the systems, which helps you with day-to-day operations of the environment, reduces the time you spend troubleshooting, and improves planning capabilities.

With System Center 2012, you are empowered with the tools that can help you build, migrate to, and manage your cloud infrastructures—both private and public. To fully realize the potential of System Center as a whole suite, you need to understand the various cloud deployment models, the cloud service models, and the capabilities of each of the System Center products.

Cloud Computing Defined

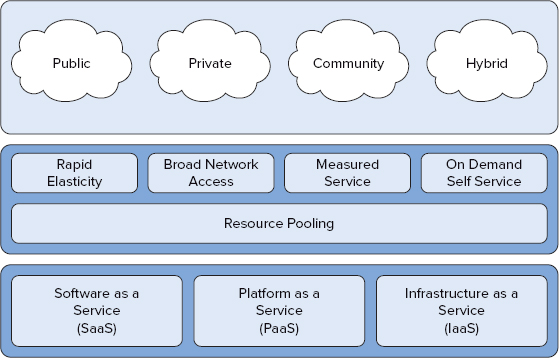

The National Institute of Standards and Technology (NIST) is a widely recognized authority on standards and guidelines for the cloud computing model. In September 2011, it released the final version of its cloud computing definition, which states that:

Cloud computing is a model for enabling ubiquitous, convenient, on-demand network access to a shared pool of configurable computing resources (e.g., networks, servers, storage, applications, and services) that can be rapidly provisioned and released with minimal management effort or service provider interaction. This cloud model is composed of five essential characteristics, three service models, and four deployment models.

Figure 1.5 shows a graphical representation of the NIST definition of cloud computing.

Figure 1.5 The NIST cloud computing model

Essential Cloud Characteristics

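NIST specifies five essential cloud characteristics within its cloud computing definition:

- On-demand self-service: A consumer can provision computing capabilities, such as server time and network storage, automatically as needed, without requiring human interaction with the service provider.

- Broad network access: Capabilities are available over the network and accessed through standard mechanisms that support a variety of thin and thick client platforms, such as mobile phones, tablets, laptops, and workstations.

- Resource pooling: The provider's computing resources are pooled to serve multiple consumers using a multi-tenant model, with physical and virtual resources dynamically assigned and reassigned according to consumer demand.

- Rapid elasticity: Capabilities can be provisioned and released elastically, in some cases automatically, to scale outward and inward rapidly in line with demand.

- Measured service: Cloud systems automatically control and optimize resource use by metering it at a level of abstraction appropriate to the type of service, so that usage can be monitored, controlled, and reported.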

The source for this information is the website of the National Institute of Standards and Technology. See http://csrc.nist.gov/publications/nistpubs/800-145/SP800-145.pdf.

Cloud Service Models

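Along with the five essential cloud characteristics, NIST defines three service models:

- Software as a Service (SaaS): The consumer uses the provider's applications running on a cloud infrastructure. The consumer does not manage the underlying network, servers, operating systems, storage, or even individual application capabilities, apart from limited user-specific configuration settings.

- Platform as a Service (PaaS): The consumer deploys applications that it has created or acquired onto the cloud infrastructure, using programming languages, libraries, services, and tools supported by the provider. The consumer controls the deployed applications and possibly the configuration of the hosting environment, but not the underlying infrastructure.

- Infrastructure as a Service (IaaS): The consumer provisions processing, storage, networks, and other fundamental computing resources on which it can deploy and run arbitrary software, including operating systems and applications. The consumer controls the operating systems, storage, and deployed applications, and possibly has limited control of select networking components, but does not manage the underlying cloud infrastructure.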

The source for this information is the website of the National Institute of Standards and Technology. See http://csrc.nist.gov/publications/nistpubs/800-145/SP800-145.pdf.
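The three service models differ chiefly in how much of the stack the consumer controls. The sketch below encodes a simplified reading of the NIST definitions in Python; the layer names and the `provider_manages` helper are our own shorthand, and real offerings can blur these boundaries (NIST notes, for example, that IaaS consumers may have limited control of select networking components).

```python
# Simplified layers of a hosted stack, ordered from the top (application) down.
LAYERS = ["application", "runtime_and_middleware", "operating_system",
          "virtualization", "hardware"]

# Layers the consumer controls under each NIST service model (simplified reading).
CONSUMER_CONTROLS = {
    "SaaS": set(),                   # at most limited user-specific app settings
    "PaaS": {"application"},         # deployed apps, possibly hosting settings
    "IaaS": {"application", "runtime_and_middleware", "operating_system"},
}


def provider_manages(model):
    """Layers left entirely to the provider, in top-to-bottom order."""
    controlled = CONSUMER_CONTROLS[model]
    return [layer for layer in LAYERS if layer not in controlled]


for model in ("SaaS", "PaaS", "IaaS"):
    print(model, provider_manages(model))
```

Moving from SaaS to IaaS shifts progressively more of the stack from the provider to the consumer.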

Cloud Deployment Models

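With the five essential characteristics and three service models explained, the NIST publication defines four deployment models:

- Private cloud: The cloud infrastructure is provisioned for exclusive use by a single organization comprising multiple consumers, such as business units. It may be owned, managed, and operated by the organization, a third party, or a combination of them, and it may exist on or off premises.

- Community cloud: The cloud infrastructure is provisioned for exclusive use by a specific community of consumers from organizations that have shared concerns, such as mission, security requirements, policy, and compliance considerations.

- Public cloud: The cloud infrastructure is provisioned for open use by the general public. It exists on the premises of the cloud provider.

- Hybrid cloud: The cloud infrastructure is a composition of two or more distinct cloud infrastructures (private, community, or public) that remain unique entities but are bound together by technology that enables data and application portability.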

The source for this information is the website of the National Institute of Standards and Technology. See http://csrc.nist.gov/publications/nistpubs/800-145/SP800-145.pdf.

For more information on all of the special publications that the NIST has released, download the whitepapers from csrc.nist.gov/publications/PubsSPs.html.

Understanding IT as a Service

Now that you understand the characteristics, service models, and deployment models of a cloud environment, it is time to look at a service model that Microsoft and its partners have defined and around which the System Center 2012 suite is built. Microsoft sees cloud computing as a transformation of the industry in which we work. Its goal was to deliver a strategy that lets you focus on your business and the IT services that run it from a holistic management perspective rather than through an individual, component-based design. This model is called IT as a Service (ITaaS), and it gives organizations more flexibility in using IT to meet their business needs than has been available before. In short, System Center 2012 helps deliver ITaaS through the various products in the suite. It enables you to manage your environment in the same way your users consume its services, and you can create service maps based on your service catalog.

As an example, in System Center 2012 Operations Manager you can create a distributed application that models the Messaging Service environment. This distributed application can encompass all of the components that make up your Messaging Service: the Microsoft Exchange mail servers (both physical and virtual), the network hardware gateways, and even the end-user client perspectives. The health state of each of these individual components rolls up to a single top-level icon that represents the health state of the entire Messaging Service. Built-in processes and health roll-up policies determine whether a particular alert should change the state of the entire Messaging Service from healthy to warning or even to critical. With this ITaaS model, you move away from the silo-based handling of individual component alerts and notifications and instead see the whole service as a single entity, which gives you the transparency you need across the service catalog.
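The roll-up idea described above can be sketched in a few lines of Python. This is a simplified model, not Operations Manager's implementation or API. It assumes three ordered states (healthy, warning, critical) and two illustrative policies: worst state of any member, and a percentage-based policy of our own design. Operations Manager's roll-up monitors offer comparable policy choices, but the function and server names here are hypothetical.

```python
from enum import IntEnum


class Health(IntEnum):
    """Ordered health states; a higher value is worse."""
    HEALTHY = 0
    WARNING = 1
    CRITICAL = 2


def worst_of(states):
    """Worst-state policy: the service is as unhealthy as its worst member."""
    return max(states, default=Health.HEALTHY)


def percentage_policy(states, min_healthy_pct):
    """Simplified percentage policy (our own variant): stay healthy while at least
    min_healthy_pct percent of members are healthy, otherwise report the worst state."""
    states = list(states)
    if not states:
        return Health.HEALTHY
    healthy = sum(1 for s in states if s == Health.HEALTHY)
    if 100 * healthy / len(states) >= min_healthy_pct:
        return Health.HEALTHY
    return worst_of(states)


# Hypothetical Messaging Service: two mailbox servers, a gateway, a client probe.
messaging = {
    "exchange-mbx-01": Health.HEALTHY,
    "exchange-mbx-02": Health.CRITICAL,
    "smtp-gateway": Health.HEALTHY,
    "client-perspective": Health.HEALTHY,
}

print(worst_of(messaging.values()).name)               # CRITICAL
print(percentage_policy(messaging.values(), 75).name)  # HEALTHY
```

The choice of policy is a business decision: with a worst-of policy a single failed mailbox server turns the whole service critical, whereas a percentage policy tolerates it as long as enough redundant members remain healthy.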

As you progress through this book, you will learn much more about distributed applications, alerting, health states, and roll-up policies within Operations Manager.

System Center 2012

Before we look at the System Center 2012 line of products, let’s first explore the System Center management disciplines that were introduced by Microsoft to help define IT service management:

- Operations management

- Change management

- Configuration management

- Data protection management

- Service management

- Virtual machine management

- Orchestration management

- Security management

- Incident management

- Problem management

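Through internal development, close work with partners, and acquisitions of software from other companies, Microsoft has addressed each of these disciplines with a product in the System Center 2012 suite. The suite includes the following eight core products:

- System Center 2012 Operations Manager

- System Center 2012 Configuration Manager

- System Center 2012 Data Protection Manager

- System Center 2012 Virtual Machine Manager

- System Center 2012 Orchestrator

- System Center 2012 Service Manager

- System Center 2012 App Controller

- System Center 2012 Endpoint Protection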

System Center 2012 Operations Manager

The key benefits are:

- Monitors infrastructure, network, applications, transactions, and code

- Monitors both public and private clouds

- Monitors physical and virtual environments

- Provides central monitoring and diagnostics of Microsoft and non-Microsoft platforms, including Unix, Linux, and VMware

System Center 2012 Configuration Manager

The key benefits are:

- Empowers user productivity

- Unifies management and security infrastructure

- Simplifies IT administration

- Manages a wide range of mobile devices

System Center 2012 Data Protection Manager

The key benefits are:

- Provides centralized management and integration with the Operations Manager console

- Optimizes SharePoint and Hyper-V item-level recovery (ILR)

- Offers certificate-based authentication for nondomain backups

System Center 2012 Virtual Machine Manager

The key benefits are:

- Provides heterogeneous hypervisor support to manage Microsoft, VMware, and Citrix environments

- Offers Server App-V integration

- Provides service templates

- Enables private cloud creation and management

System Center 2012 Orchestrator

The key benefits are:

- Optimizes and extends your existing investments

- Delivers flexible and reliable services

- Lowers costs and improves predictability

- Lets you connect systems from different vendors without having to know how to use scripting and programming languages

System Center 2012 Service Manager

Service Manager can provide increased productivity, reduced costs, improved resolution times, and tighter compliance with IT standards. It includes the core process management packs for incident and problem resolution, change control, and configuration and knowledge management. It uses a central configuration management database (CMDB) to automatically connect knowledge and information from Operations Manager, Configuration Manager, Orchestrator, Virtual Machine Manager, and Active Directory Domain Services. Within Microsoft's systems management solutions, Service Manager addresses incident, problem, and change management.

The key benefits are:

- Provides datacenter management and integration efficiency

- Offers incident ticketing and problem management

- Provides user-centric support through the Self-Service Portal

- Manages IT governance, risk, and compliance (IT GRC)

System Center 2012 App Controller

The key benefits are:

- Connects both public and private clouds

- Provides centralized management of multiple Virtual Machine Manager deployments and Azure subscriptions

- Contains a central library shared across clouds

- Allows for quick deployment of service templates to the cloud

System Center 2012 Endpoint Protection

Built on System Center 2012 Configuration Manager, Endpoint Protection provides a single, integrated platform that helps you reduce costs by using your existing client management infrastructure to deploy and manage endpoint protection. The unified infrastructure also improves visibility into the security and compliance of your client systems. Endpoint Protection addresses the security management discipline.

The key benefits are:

- Implements role-based management across security and operations

- Features industry-leading malware detection

- Provides a single infrastructure for client management and security

- Improves visibility for identifying and remediating vulnerabilities

Defining Operations Management

There is often some confusion about what operations management means. The Microsoft System Center 2012 suite covers a wide range of "management" ground, and the line that most often blurs is the one between systems management and operations management. We will look at the difference between the two.

Systems Management

Systems management typically refers to using software to centrally manage large groups of computer systems. Such software provides the tools to control and measure the configuration of both hardware and software in the environment.

System Center 2012 Configuration Manager and System Center 2012 Endpoint Protection are the Microsoft products that function in the systems management space. Configuration Manager provides remote control, patch management, software distribution, hardware and software inventory, user activity, and capacity monitoring. Endpoint Protection provides antivirus and vulnerability detection and protects against both known and unknown threats.

Operations Management

Now that you have an understanding of the System Center products that provide systems management, we will concentrate on operations management. Operations management is mainly focused on ensuring that business operations are efficient and effective through processes that are aimed at improving the reliability and availability of IT systems and services. You accomplish this by gathering information from your current systems, having the right people in place to decipher that data, and having proper procedures in place to carry out any tasks that may arise if there is a current or potential problem in your environment.

The Microsoft product that addresses this need is System Center 2012 Operations Manager, which is based on MOF, which in turn is based on ITIL. Operations Manager is a product that allows centralized monitoring of numerous computers and services on a network. Let’s break down the components of operations management now and apply them to the Operations Manager product.

Gathering Information

Operations Manager can gather information on all the components that come together to provide a service to your business. These components can include software such as Windows Server, SQL Server, Exchange, SharePoint, VMware, and Hyper-V. They can also include hardware components such as servers, SANs, routers, switches, and even the air-conditioning units that keep your datacenters at the right temperature.

When all of this data is collated into Operations Manager, it can provide transparency across your IT service catalog by bringing all the components that make up each service into individual distributed applications, which are essentially a top-level view of each service.

Having the Right People in Place

Once you have modeled your IT services in Operations Manager, you need to ensure that the right people are in place to oversee their management and to make sure that the collected data is interpreted and acted on correctly.

These people will most likely have roles that encompass the various security access levels within your company and will map to the Operations Manager user roles of administrators, advanced operators, operators, and read-only operators, to name a few.

This team will be the eyes of the business into what is going on each day within the company IT infrastructure and service catalog.
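A minimal Python sketch of how such roles can map to what each person is allowed to do follows. The permission names and sets below are a simplified, assumed reading of the four roles named above, in which each role builds on the one before it; they are not an exhaustive or authoritative list of Operations Manager permissions, and the `can` helper is hypothetical.

```python
# Assumed, simplified permissions; each role extends the one before it.
READ_ONLY = {"view_alerts", "view_dashboards"}
OPERATOR = READ_ONLY | {"resolve_alerts", "run_tasks"}
ADVANCED_OPERATOR = OPERATOR | {"override_monitors_and_rules"}
ADMINISTRATOR = ADVANCED_OPERATOR | {"manage_user_roles", "import_management_packs"}

ROLE_PERMISSIONS = {
    "Read-only Operator": READ_ONLY,
    "Operator": OPERATOR,
    "Advanced Operator": ADVANCED_OPERATOR,
    "Administrator": ADMINISTRATOR,
}


def can(role, action):
    """True if the given role includes the requested action; unknown roles get nothing."""
    return action in ROLE_PERMISSIONS.get(role, set())


print(can("Operator", "resolve_alerts"))       # True
print(can("Read-only Operator", "run_tasks"))  # False
```

Layering the roles this way mirrors how responsibilities usually grow within an operations team: everyone can see the data, fewer people can act on it, and fewer still can change how it is collected.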

Having Proper Procedures in Place

When the information has been gathered and the team has been put together to decipher the data, proper procedures need to be put in place to enable the implementation of specific tasks in the event of a critical service failure.

When distributed applications have been deployed for each service, each service is defined as a single object within Operations Manager. Service level agreements (SLAs) can then be configured for each of these service objects to provide reportable statistics on how the service is performing across the business. With procedures such as these in place, it is much easier to identify which services are operating at full efficiency and which ones need attention or additional support to bring them in line with the SLAs for the rest of the IT service catalog.
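As a worked example of the kind of statistic an SLA report produces, availability is often expressed as the share of a reporting period during which the service was not in an outage. For a 30-day period of 43,200 minutes, a 99.9 percent target allows 43.2 minutes of downtime. The Python sketch below assumes that simple definition and target; Operations Manager lets you define your own service level objectives, so treat the formula, the 99.9 percent figure, the example dates, and the `availability_pct` helper as illustrative assumptions.

```python
from datetime import datetime, timedelta


def availability_pct(period_start, period_end, outages):
    """Percentage of the period not covered by outage intervals.

    Assumes outages are (start, end) pairs that do not overlap one another.
    """
    total = (period_end - period_start).total_seconds()
    down = 0.0
    for start, end in outages:
        # Clip each outage to the reporting period before counting it.
        s, e = max(start, period_start), min(end, period_end)
        if e > s:
            down += (e - s).total_seconds()
    return 100.0 * (total - down) / total


start = datetime(2012, 4, 1)
end = start + timedelta(days=30)  # 43,200 minutes
outages = [(datetime(2012, 4, 10, 2, 0), datetime(2012, 4, 10, 2, 30))]  # 30 minutes down

pct = availability_pct(start, end, outages)
print(f"{pct:.3f}% (target 99.9%): {'met' if pct >= 99.9 else 'missed'}")
```

Here a single 30-minute outage leaves the service at about 99.931 percent, just inside the 43.2-minute allowance.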

The Result

Operations Manager provides the information you need to reduce the time and effort spent managing your IT infrastructure. It gives you a proactive way to identify potential problems, and it is a powerful tool for getting the most out of your environment.