Chapter 9

Creating and Managing Virtual Machines

The VMware ESXi hosts are installed, vCenter Server is running, the networks are blinking, the storage is carved, and the VMFS volumes are formatted. Let the virtualization begin! With the virtual infrastructure in place, you as the administrator must shift your attention to deploying the virtual machines.

Understanding Virtual Machines

It is common for IT professionals to refer to a Windows or Linux system running on an ESXi host as a virtual machine (VM). Strictly speaking, this term is not 100% accurate. Just as a physical machine is bare-metal hardware before the installation of an operating system, a VM is an empty shell before the installation of a guest operating system (the term “guest operating system” is used to denote an operating system instance installed into a VM). From an everyday usage perspective, though, you can go on calling the Windows or Linux system a VM. Any references you see to “guest operating system” (or “guest OS”) are references to instances of Windows, Linux, or Solaris—or any other supported operating system—installed in a VM.

If a VM is not an instance of a guest OS running on a hypervisor, then what is a VM? The answer to that question depends on your perspective. Are you “inside” the VM, looking out? Or are you “outside” the VM, looking in?

Examining Virtual Machines from the Inside

From the perspective of software running inside a VM, a VM is really just a collection of virtual hardware resources selected for the purpose of running a guest OS instance.

So, what kind of virtual hardware makes up a VM? By default, VMware ESXi presents the following fairly generic hardware to the VM:

- Phoenix BIOS

- Intel 440BX motherboard

- Intel PCI AHCI controller

- IDE CD-ROM drive

- BusLogic parallel SCSI, LSI Logic parallel SCSI, or LSI Logic SAS controller

- AMD or Intel CPU, depending on the physical hardware

- Intel E1000 or Intel E1000e network adapter

- Standard VGA video adapter

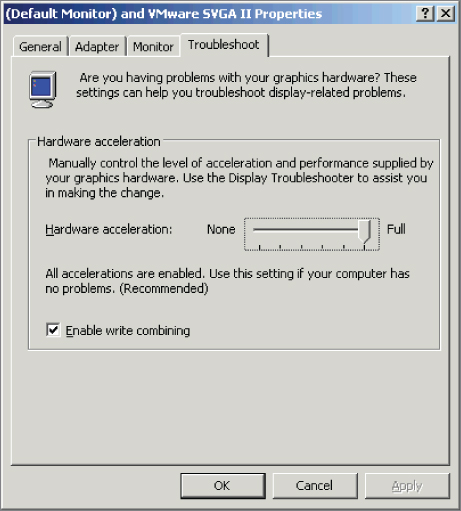

VMware selected this generic hardware to provide the broadest level of compatibility across all supported guest OSs. As a result, it's possible to use commercial off-the-shelf drivers when installing a guest OS into a VM. Figure 9.1 shows a few examples of VMware vSphere providing virtual hardware that looks like standard physical hardware. Both the network adapter and the storage adapter (identified as an Intel(R) 82574L Gigabit Network Connection and an LSI SAS 3000 series adapter, respectively) have corresponding physical counterparts, and drivers for these devices are available in many modern guest OSs.

FIGURE 9.1 VMware ESXi provides both generic and virtualization-optimized hardware for VMs.

However, VMware vSphere may also present virtual hardware that is unique to the virtualized environment. Look back at the display adapter in Figure 9.1. There is no such physical card as a VMware SVGA 3D display adapter; this is a device that is unique to the virtualized environment. These virtualization-optimized devices, also known as paravirtualized devices, are designed to operate efficiently within the virtualized environment created by the vSphere hypervisor. Because these devices have no corresponding physical counterpart, guest OS–specific drivers should optimally be provided. VMware Tools, described later in this chapter in the section “Installing VMware Tools,” satisfies this function and provides virtualization-optimized drivers to run these devices.

A physical machine might have a certain amount of memory installed, a certain number of network adapters, or a particular number of disk devices, and the same goes for a VM. A VM can include the following types and numbers of virtual hardware devices:

- Processors: between 1 and 128 processors with vSphere Virtual SMP (the number of processors depends on your vSphere licenses)

- Memory: maximum of 6 TB of RAM

- SCSI controller: maximum of 4 SCSI controllers

- SATA controller: maximum of 4 SATA controllers

- Network adapter: maximum of 10 network adapters

- Parallel port: maximum of 3 parallel ports

- Serial port: maximum of 32 serial ports

- Floppy drive: maximum of 2 floppy disk drives on a single floppy disk controller

- A single USB controller with up to 20 USB devices connected

- Keyboard, video card, and mouse

Hard drives are not included in this list, because VM hard drives are generally added as SCSI or AHCI devices. With up to 4 SCSI controllers and 15 devices per controller, a VM can have a total of 60 SCSI devices (or 256 devices when using PVSCSI controllers, which support 64 devices each); it's possible to boot only from 1 of the first 8. Each VM can also have a maximum of 4 SATA controllers with 30 devices per controller, for a total of 120 possible virtual hard drives or CD/DVD drives. If you are using IDE hard drives, then the VM is subject to the limit of 4 IDE devices per VM.
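The per-VM totals follow directly from multiplying the controller count by the devices each controller supports. The short Python sketch below simply restates that arithmetic using the limits quoted in this section; the dictionary layout and function name are our own illustration, not part of any VMware tool.

```python
# Per-controller limits as quoted in this chapter (illustrative structure only).
CONTROLLER_LIMITS = {
    "scsi": {"controllers": 4, "devices_per_controller": 15},
    "pvscsi": {"controllers": 4, "devices_per_controller": 64},
    "sata": {"controllers": 4, "devices_per_controller": 30},
}


def max_devices(kind: str) -> int:
    """Return the maximum number of devices of the given kind per VM."""
    limits = CONTROLLER_LIMITS[kind]
    return limits["controllers"] * limits["devices_per_controller"]


for kind in CONTROLLER_LIMITS:
    print(f"{kind}: up to {max_devices(kind)} devices per VM")
```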

There's another perspective on VMs besides what the guest OS instance sees. There's also the external perspective—what does the hypervisor see?

Examining Virtual Machines from the Outside

To better understand what a VM is, you must consider more than just how a VM appears from the perspective of the guest OS instance (that is, from the “inside”), as we've just done. You must also consider how a VM appears from the “outside.” In other words, you must consider how the VM appears to the ESXi host running the VM.

From the perspective of an ESXi host, a VM consists of several types of files stored on a supported storage device. The two most common files that compose a VM are the configuration file and the virtual hard disk file. The configuration file—hereafter referred to as the VMX file—is a plain-text file identified by a .vmx filename extension, and it functions as the virtual resource recipe of the VM. The VMX file defines the virtual hardware that resides in the VM. The number of processors, the amount of RAM, the number of network adapters, the associated MAC addresses, the networks to which the network adapters connect, and the number, names, and locations of all virtual hard drives are stored in the configuration file.

Listing 9.1 shows a sample VMX file for a VM named Win2k16-01.

Reading through the Win2k16-01.vmx file, you can determine the following facts about this VM:

- From the guestOS line, you can see that the VM is configured for a guest OS referred to as “windows9srv-64”; this corresponds to Windows Server 2016 64-bit.

- Based on the memsize line, you know the VM is configured for 8 GB of RAM.

- The scsi0:0.fileName line tells you the VM's hard drive is located in the file Win2k16-01.vmdk.

- The VM has a floppy drive configured, based on the presence of the floppy0 lines, but it does not start connected (see floppy0.startConnected).

- The VM has a single network adapter configured to the Distributed Virtual Switch “dvportgroup-37” port group, based on the ethernet0 lines.

- Based on the ethernet0.generatedAddress line, the VM's single network adapter has an automatically generated MAC address of 00:50:56:b1:17:84.
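Because a VMX file is plain text made up of key = "value" lines, it is easy to read these settings programmatically. The following Python sketch parses a small fragment that we assembled from the settings described above; it is a hypothetical excerpt for illustration, not the full listing, and the assumption that memsize is expressed in megabytes is ours.

```python
import re

# Hypothetical VMX fragment built from the settings discussed above;
# a real VMX file contains many more lines.
SAMPLE_VMX = '''
guestOS = "windows9srv-64"
memsize = "8192"
scsi0:0.fileName = "Win2k16-01.vmdk"
floppy0.startConnected = "FALSE"
ethernet0.generatedAddress = "00:50:56:b1:17:84"
'''

# Matches lines of the form: key = "value"
_VMX_LINE = re.compile(r'^\s*([^=\s]+)\s*=\s*"(.*)"\s*$')


def parse_vmx(text: str) -> dict[str, str]:
    """Parse VMX-style key = "value" lines into a dictionary."""
    settings = {}
    for line in text.splitlines():
        match = _VMX_LINE.match(line)
        if match:  # silently skip blank or unrecognized lines
            settings[match.group(1)] = match.group(2)
    return settings


vmx = parse_vmx(SAMPLE_VMX)
print("Guest OS:", vmx["guestOS"])
# Assumption: memsize is given in MB, so 8192 corresponds to 8 GB.
print("RAM (GB):", int(vmx["memsize"]) // 1024)
print("First disk:", vmx["scsi0:0.fileName"])
```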

Although the VMX file is important, it is only the structural definition of the virtual hardware that composes the VM. It does not store any actual data from the guest OS instance running inside the VM. A separate type of file, the virtual hard disk file, performs that role.

The virtual hard disk file, identified by a .vmdk filename extension and hereafter referred to as the VMDK file, holds the actual data stored by a VM. Each VMDK file represents a disk device. For a VM running Windows, the first VMDK file would typically be the storage location for the C: drive. For a Linux system, it would typically be the storage location for the root, boot, and a few other partitions. Additional VMDK files can be added to provide additional storage locations for the VM, and each VMDK file will appear as a physical hard drive to the VM.

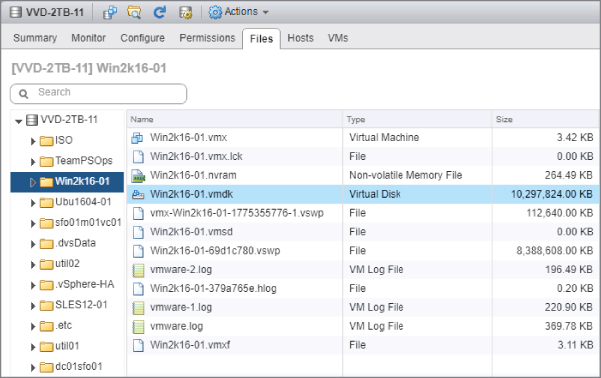

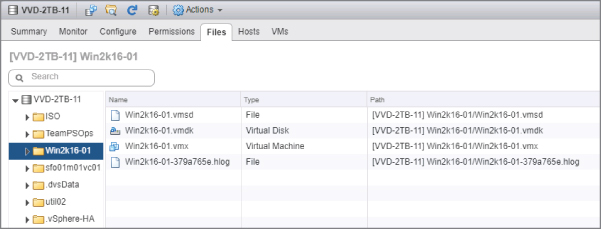

Although we refer to a virtual hard disk file as a VMDK file, in reality there are two different files that compose a virtual hard disk. Both of them use the .vmdk filename extension, but each performs a very different role: one is the VMDK descriptor file, and the other is the VMDK flat file. There's a good reason why we—and others in the virtualization space—refer to a virtual hard disk file as a VMDK file, though, and Figure 9.2 helps illustrate why.

FIGURE 9.2 The file browser in the vSphere Web Client shows only a single VMDK file.

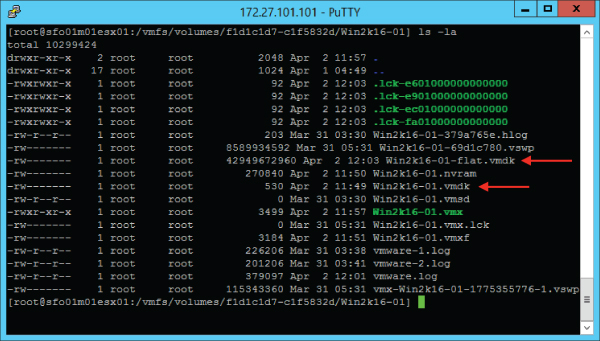

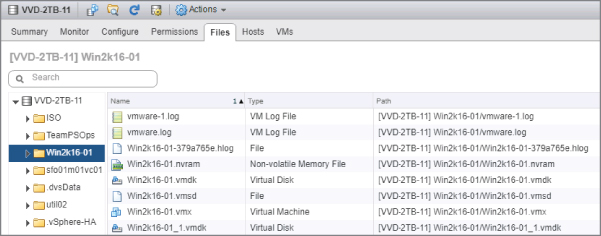

Looking closely at Figure 9.2, you'll see only a single VMDK file listed. In actuality, though, there are two files, but to see them you must go to a command-line interface. From there, as shown in Figure 9.3, you'll see the two different VMDK files: the VMDK descriptor (the smaller of the two) and the VMDK flat file (the larger of the two and the one that has -flat in the filename).

FIGURE 9.3 There are actually two VMDK files for every virtual hard disk in a VM, even though the vSphere Web Client shows only a single file.

Of these two files, the VMDK descriptor file is a plain-text file and is human-readable; the VMDK flat file is a binary file and is not human-readable. The VMDK descriptor file contains only configuration information and pointers to the flat file; the VMDK flat file contains the actual data for the virtual hard disk. Naturally, this means that the VMDK descriptor file is typically very small, whereas the VMDK flat file could be as large as the configured virtual hard disk in the VMX. So, a 40 GB virtual hard disk could mean a 40 GB VMDK flat file, depending on other configuration settings you'll see later in this chapter.
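The naming relationship between the two files can be expressed in a few lines of Python. This is only a sketch of the -flat naming convention described above; the function names and the datastore path in the usage example are hypothetical.

```python
from pathlib import PurePosixPath


def flat_file_for(descriptor: str) -> str:
    """Given a VMDK descriptor path, return the matching flat-file path."""
    path = PurePosixPath(descriptor)
    if path.suffix != ".vmdk":
        raise ValueError(f"not a VMDK file: {descriptor}")
    # The flat file shares the descriptor's base name, with -flat appended.
    return str(path.with_name(f"{path.stem}-flat.vmdk"))


def is_flat_file(name: str) -> bool:
    """Return True if the filename follows the -flat.vmdk convention."""
    return PurePosixPath(name).name.endswith("-flat.vmdk")


print(flat_file_for("Win2k16-01.vmdk"))
# Hypothetical datastore path, used only to show that directories are preserved.
print(flat_file_for("/vmfs/volumes/datastore1/Win2k16-01/Win2k16-01.vmdk"))
```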

Listing 9.2 shows the contents of a sample VMDK descriptor file.

There are several other types of files that make up a VM. For example, when the VM is running there will most likely be a VSWP file, which is a VMkernel swap file. You'll learn more about VMkernel swap files in Chapter 11, “Managing Resource Allocation.” There will also be an NVRAM file, which stores the VM's BIOS settings.

Now that you have a feel for what makes up a VM, let's get started creating some VMs.

Creating a Virtual Machine

Creating VMs is a core part of using VMware vSphere, and VMware has made the process as easy and straightforward as possible. Let's walk through the process, and we'll explain the steps along the way.

Perform the following steps to create a VM from scratch:

- If it's not already running, launch the vSphere Web Client, and connect to a vCenter Server instance. If a vCenter Server instance is not available, launch the vSphere Host Client and connect directly to an ESXi host.

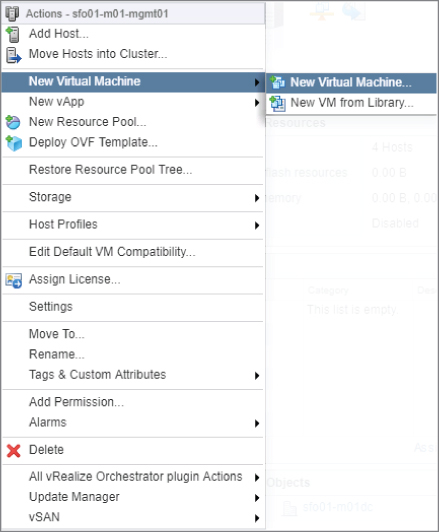

- In the inventory tree, right-click the name of a datacenter, a cluster, a resource pool, or an individual ESXi host, and select the New Virtual Machine option, as shown in Figure 9.4.

FIGURE 9.4 You can launch the New Virtual Machine Wizard from the context menu of a vCenter datacenter, virtual datacenter, an ESXi cluster, or an individual ESXi host.

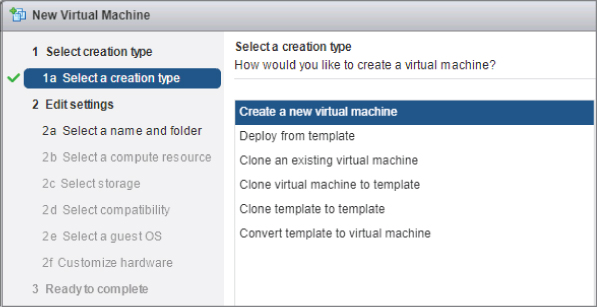

- When the New Virtual Machine Wizard opens, select Create A New Virtual Machine, shown in Figure 9.5, and then click Next.

FIGURE 9.5 Options for creating a new virtual machine when using the vSphere Web Client

- Type a name for the VM, select a location in the inventory list where the VM should reside, and click Next.

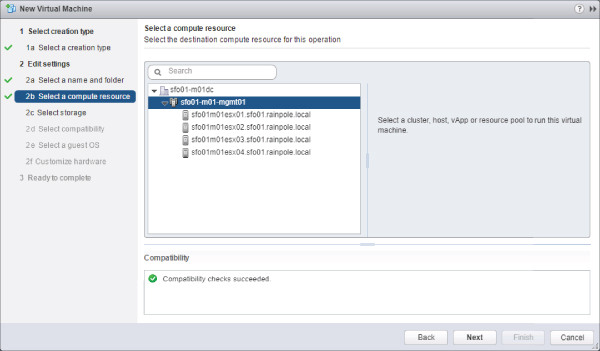

- If you selected a cluster without vSphere DRS enabled or you are running vSphere DRS in manual mode, you'll need to select a specific host within the cluster on which to create the VM. Select an ESXi host or cluster from the list and then click Next, as shown in Figure 9.6.

FIGURE 9.6 The compute resource selected here is a DRS-enabled cluster. When the VM is powered on, DRS will select the best ESXi host to run the VM.

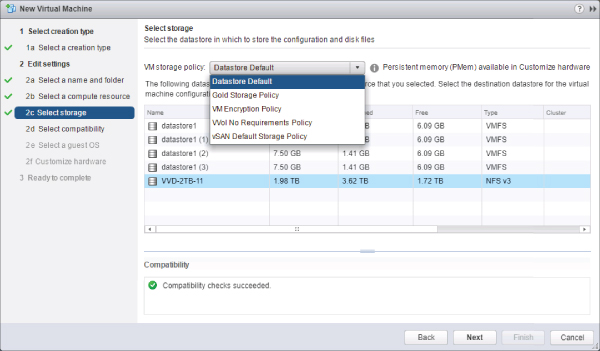

- Select a datastore where the VM files will be located and click Next.

As you can see in Figure 9.7, the vSphere Web Client shows a fair amount of information about the datastores (size, provisioned space, free space, type of datastore). However, the vSphere Web Client doesn't show information such as IOPS capacity or other performance statistics. In Chapter 6, we discussed storage service levels, which allow you to create VM storage policies based on storage attributes provided to vCenter Server by the storage vendor (as well as user-defined storage attributes created and assigned by the vSphere administrator). In Figure 9.7, you can see the VM Storage Policy drop-down list, which lists the currently defined storage service levels.

FIGURE 9.7 You can use storage service levels to help automate VM storage placement decisions when you create a new VM.

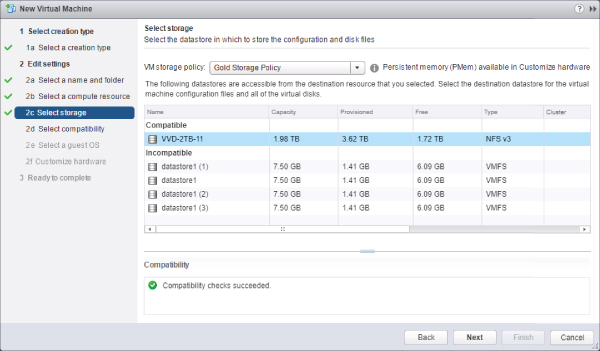

When you select a storage service level, the datastore listing will separate into two groups: compatible and incompatible. Compatible datastores are datastores whose attributes or capabilities satisfy the storage service level as defined in the VM Storage Policies; incompatible datastores are datastores whose attributes do not meet the criteria specified in the storage service level. Figure 9.8 shows a storage service level selected and a compatible datastore selected for this VM's storage.

FIGURE 9.8 When using VM storage policies, select a compatible datastore to ensure that the VM's storage needs are properly satisfied.

For more information on VM storage policies, refer to Chapter 6.

- Select a VMware VM version. vSphere 6.7 introduces a new VM hardware version, version 14. As with earlier versions of vSphere, previous VM hardware versions are also supported. If the VM you are creating will be shared with ESXi hosts running on earlier versions, then choose the appropriate version to match the lowest version host. For example, if the VM will be used only with vSphere 6.0, then choose ESXi 6.0 and later (VM version 11). Click Next.

- Select the drop-down box that corresponds to the operating system family, select the correct operating system version, and then click Next. As you'll see later in this chapter, this helps the vSphere Web Client provide recommendations for certain values later in the wizard.

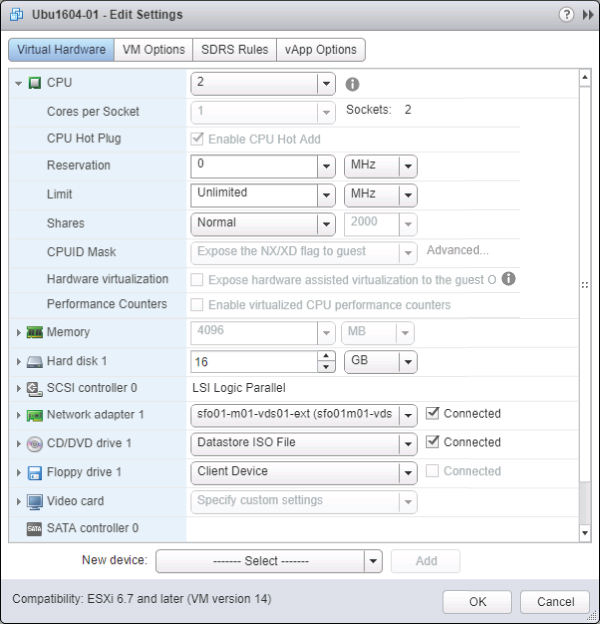

- At this point, you are taken to the Customize Hardware screen where you can customize the virtual hardware that will be presented to your virtual machine. To start, you'll choose how many virtual CPUs will be presented to your virtual machine. Select the number of virtual CPUs by using the drop-down box next to CPU. When you finish configuring virtual CPUs, click Next to continue.

You can select between 1 and 128 virtual CPU sockets, depending on your vSphere license. Additionally, you can choose the number of cores per virtual CPU socket. The total number of cores supported per VM with VM hardware version 14 is 128. The number of cores available per virtual CPU socket will change based on the number of virtual CPU sockets selected. For specific information about how many virtual cores are available per virtual CPU socket, refer to docs.vmware.com.

Keep in mind that the operating system you will install into this VM must support the selected number of virtual CPUs. Also keep in mind that more virtual CPUs doesn't necessarily translate into better performance, and in some cases larger values may negatively impact performance.
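The relationship between sockets, cores per socket, and the 128-core ceiling can be sketched in Python as follows. This is a simplified model that considers only the per-VM total quoted above; it assumes the ceiling is the only constraint and ignores licensing and guest OS limits, and the function names are our own.

```python
# Total cores per VM with VM hardware version 14, as stated in the text.
MAX_VCPUS = 128


def total_vcpus(sockets: int, cores_per_socket: int) -> int:
    """Return the total vCPU count, rejecting topologies above the ceiling."""
    if sockets < 1 or cores_per_socket < 1:
        raise ValueError("sockets and cores per socket must be at least 1")
    total = sockets * cores_per_socket
    if total > MAX_VCPUS:
        raise ValueError(f"{total} cores exceeds the {MAX_VCPUS}-core limit")
    return total


def max_cores_per_socket(sockets: int) -> int:
    """Simplified assumption: cores per socket is bounded only by the total."""
    if not 1 <= sockets <= MAX_VCPUS:
        raise ValueError("socket count must be between 1 and 128")
    return MAX_VCPUS // sockets


print(total_vcpus(sockets=2, cores_per_socket=8))
print(max_cores_per_socket(sockets=4))
```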

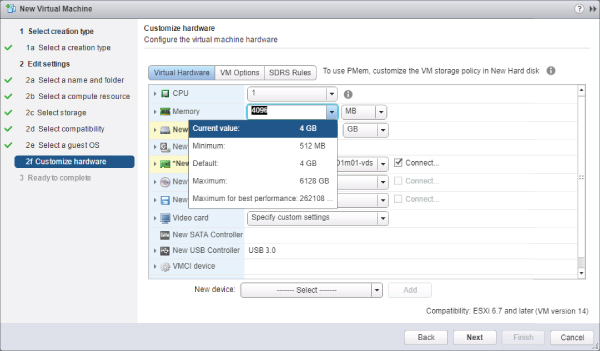

- Configure the VM with the determined amount of RAM by typing in the desired memory value, as shown in Figure 9.9. The default memory sizing is listed in megabytes (MB), so it may be easier to change it to gigabytes (GB) so that you do not need to know the precise number of megabytes. When you've selected the amount of RAM you want allocated to the VM, click Next.

FIGURE 9.9 Based on guest OS selection, the vSphere Web Client provides some basic guidelines on the amount of memory you should configure for the VM.

As shown in Figure 9.9, the vSphere Web Client displays recommendations about the minimum and recommended amounts of RAM based on the earlier selection of operating system and version. This is one of the reasons the selection of the correct guest OS is important when creating a VM.

The amount of RAM configured on this page is the amount of RAM the guest OS reflects in its system properties, and it is the maximum amount that a guest OS will ever be able to use. Think of it as the virtual equivalent of the amount of physical RAM installed in a system. Just as a physical machine cannot use more memory than is physically installed in it, a VM cannot access more memory than it is configured to use.

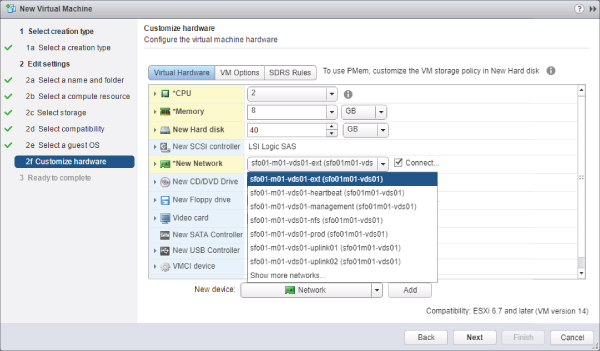

- Select the number of network adapters, the type of each network adapter, and the network to which each adapter will connect. Figure 9.10 shows a screen shot of configuring virtual NICs.

FIGURE 9.10 You can configure a VM with up to 10 network adapters, of the same or different types, that reside on the same or different networks as needed.

- Select New SCSI Controller to expand the selection area, and then click the drop-down box to choose the appropriate SCSI adapter for the operating system selected on the Select A Guest OS page of the Create New Virtual Machine Wizard.

The correct default driver should already be selected based on the previously selected operating system. For example, the LSI Logic parallel adapter is selected automatically when Windows Server 2003 is selected as the guest OS, but the LSI Logic SAS adapter is selected when Windows Server 2008, 2012, or 2016 is chosen as the guest OS. We provided some additional details on the different virtual SCSI adapters in Chapter 6.

- A virtual hard disk is configured automatically when you create a new virtual machine. If you need to add a new virtual hard disk, select the New Device drop-down box at the bottom of the screen, as shown in Figure 9.11.

FIGURE 9.11 A virtual disk is configured automatically when you create a new virtual machine. You can also add additional virtual disks by using the New device option.

You are presented with the following options for adding a virtual disk to your VM.

- The New Hard Disk option allows the user to create a new virtual disk (a VMDK file) that will house the guest OS's files and data. Since a virtual hard disk is already added by default when a new virtual machine is created, this option is useful if the virtual machine needs two disks (such as when an operating system drive and a data drive are required).

- The Existing Hard Disk option allows a VM to be created using a virtual disk that is already configured with a guest OS or other data and that resides in an available datastore.

- The RDM Disk option allows a VM to have raw SAN LUN access. Raw device mappings (RDMs) are discussed in a bit more detail in Chapter 6.

Since a virtual hard disk is already configured by default, we'll use it to install our guest OS and we won't need to add another virtual disk.

- When you're either adding a new virtual hard disk or using the one provided by default, options are available for the creation of the new virtual disk. Select New Hard Disk to expand the selection area and access these options, as shown in Figure 9.12. First, configure the desired disk size for the VM hard drive. The maximum size will be determined by the format of the datastore on which the virtual disk is stored. Next, select the appropriate Disk Provisioning option:

FIGURE 9.12 vSphere 6 offers a number of different Disk Provisioning options when you're creating new virtual disks.

- To create a virtual disk with all space allocated at creation but not pre-zeroed, select Thick Provision Lazy Zeroed. In this case, the VMDK flat file will be the same size as the specified virtual disk size. A 40 GB virtual disk means a 40 GB VMDK flat file.

- To create a virtual disk with all space allocated at creation and pre-zeroed, select Thick Provision Eager Zeroed. This option is required in order to support vSphere Fault Tolerance. This option also means a “full-size” VMDK flat file that is the same size as the size of the virtual hard disk.

- To create a virtual disk with space allocated on demand, select the Thin Provision option. In this case, the VMDK flat file will grow depending on the amount of data actually stored in it, up to the maximum size specified for the virtual hard disk.

Depending on your storage platform, storage type, and storage vendor's support for vSphere's storage integration technologies like VAAI or VASA, some of these options might be grayed out. For example, an NFS datastore that does not support the VAAIv2 extensions will have these options grayed out, as only thin-provisioned VMDKs are supported. (VAAI and VASA are discussed in greater detail in Chapter 6.)
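To compare how the three Disk Provisioning options affect the size of the VMDK flat file, consider the following Python sketch. It is a deliberately simplified model: it assumes a thin-provisioned flat file is exactly as large as the data written to it and ignores block granularity and any storage array behavior. The option strings and function name are hypothetical.

```python
GB = 1024 ** 3

THICK_OPTIONS = ("thick_lazy_zeroed", "thick_eager_zeroed")


def flat_file_size(provisioned_bytes: int, written_bytes: int, provisioning: str) -> int:
    """Estimate the flat-file size for a virtual disk (simplified model)."""
    if provisioning in THICK_OPTIONS:
        # All space is allocated at creation, regardless of data written.
        return provisioned_bytes
    if provisioning == "thin":
        # Space is allocated on demand, up to the configured disk size.
        return min(written_bytes, provisioned_bytes)
    raise ValueError(f"unknown provisioning option: {provisioning}")


# A 40 GB virtual disk holding 12 GB of guest data.
for option in (*THICK_OPTIONS, "thin"):
    size_gb = flat_file_size(40 * GB, 12 * GB, option) // GB
    print(f"{option}: {size_gb} GB flat file")
```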

There are two options for the location of the new virtual disk. These options are available by selecting the drop-down box next to the Location field. Keep in mind that these options control physical location, not logical location; they will directly affect the datastore and/or directory where files are stored for use by the hypervisor.

- The option Store With The Virtual Machine will place the file in the same subdirectory as the configuration file and the rest of the VM files. This is the most commonly selected option and makes managing the VM files easier.

- The Browse option allows you to browse the available datastores and store the virtual disk file separately from the rest of the VM files. You'd typically select this option when adding new virtual hard disks to a VM or when you need to separate the operating system virtual disk from a data virtual disk.

You can configure other options, such as shares, limits, or Virtual Flash sizing (discussed in greater detail in Chapter 6) for the virtual machine you are creating, if required.

- The Virtual Device Node option lets you specify the SCSI node, IDE controller, or SATA controller to which the virtual disk is connected. The Disk Mode option allows you to configure a virtual disk in Independent mode, as shown in Figure 9.13. The disk mode is not normally altered, so you can typically accept the default values provided.

FIGURE 9.13 You can configure the virtual disk on a number of different SCSI adapters and SCSI IDs, and you can configure it as an independent disk.

- The Virtual Device Node drop-down box reflects the 15 different SCSI nodes available on each of the four SCSI adapters a VM supports. When using Para-virtualized SCSI (PVSCSI), the limit is 64 devices per controller. When you're using an IDE controller, this drop-down list shows the four different IDE nodes that are available. When you're using a SATA controller, this drop-down list shows the 30 different SATA nodes that are available.

- By not selecting the Independent mode option, you ensure that the virtual disk remains in the default state that allows VM snapshots to be created. If you select the Independent check box, you can configure the virtual disk as a persistent disk, in which changes are written immediately and permanently to the disk, or as a nonpersistent disk, which discards all changes when the VM is powered off.

When you are done adding or modifying the configuration of the virtual machine, select Next to continue.

- Complete a final review of the VM configuration. If anything is incorrect, go back and make changes. As you can see in Figure 9.14, the steps on the left side of the wizard are links that allow you to jump directly to an earlier point in the wizard and make changes.

FIGURE 9.14 Reviewing the configuration of the New Virtual Machine Wizard ensures the correct settings for the VM and prevents mistakes that require deleting and re-creating the VM.

As you can see, the process for creating a VM is pretty straightforward. What's not so straightforward, though, are some of the values that should be used when creating new VMs. What are the best values to use?

Choosing Values for Your New Virtual Machine

Choosing the right values to use for the number of virtual CPUs, the amount of memory, or the number or types of virtual NICs when creating your new VM can be difficult. Fortunately, there's lots of documentation out there on CPU and RAM sizing as well as networking for VMs, so our only recommendation is to right-size the VMs based on your needs (see the sidebar “Provisioning Virtual Machines Is Not the Same as Provisioning Physical Machines,” later in this chapter).

For areas other than the ones we just described, the guidance isn't quite so clear. Out of all the options available during the creation of a new VM, four areas tend to consistently generate questions from both new and experienced users alike:

- How can I find out how to size my VMs?

- How should I handle naming my VMs?

- How big should I make the virtual disks?

- Does my virtual machine need high-end graphics?

Let's talk about each of these questions in a bit more detail.

Sizing Virtual Machines

You might be hoping that we'll give you specific guidance here about how to size your virtual machines. Unfortunately virtual machine sizing differs greatly depending on the environment, the applications installed on the virtual machines, performance requirements, and many other factors. Instead, it's better to discuss a methodology you can use to understand the resource utilization requirements (CPU, memory, disk, and network) of your physical servers before redeploying them as virtual machines.

Simply sizing your virtual machines to the same specifications used for physical servers can lead to oversizing (or undersizing) virtual machines unnecessarily. Both oversizing and undersizing a virtual machine can lead to performance problems for that virtual machine and for other virtual machines on the same ESXi host. Not correctly sizing your virtual machines can negatively impact consolidation ratios, too, ultimately requiring your cluster(s) to scale up or scale out.

Instead, a process called capacity planning can help you understand how to size your virtual machines. With capacity planning you learn over time how your current physical servers are utilized and then use that information to size your virtual machines. A typical capacity planning exercise takes place over a two-to-four-week period and uses tools to automatically monitor and report on the performance of physical servers. By monitoring your servers over time, such as over a 30-day period, you can capture normal business cycles such as end-of-month processing that you might otherwise miss if you monitor for only a short time.

Two of the most common tools used for capacity planning are free, though as you'll see, one of them is not available to everyone. Perhaps the most well-known is a product by VMware called Capacity Planner. The other product, Microsoft Assessment and Planning Toolkit, may not be as well known, but it's still a useful tool.

Both tools produce similar results, such as average and maximum utilization values for CPU, memory, disk, network, and other more specific performance counters. Capacity Planner is customizable and allows you to add custom performance counters to monitor beyond the standard Windows or application counters. Microsoft Assessment and Planning Toolkit is less customizable, but it includes advanced reporting for Microsoft applications (like SQL Server or SharePoint Server) that is useful if you're looking to virtualize these applications.

The process for using these tools is also similar for both. After running the capacity planning analysis over time, you review the results to understand the actual utilization of your servers. These tools also allow you to produce reports that tell you how many ESXi hosts (or in the case of Microsoft Assessment and Planning Toolkit, Hyper-V hosts, though the results are applicable to ESXi as well) you'll need to support the environment. For example, if you monitor 70 total physical servers, the tools may tell you that, based on the actual utilization of each server, you need only 7 total ESXi hosts to support those servers as virtual machines. Your results will vary depending on the actual utilization in your environment.

Capacity planning is such a useful exercise because it tells you the true utilization of your servers before you convert them to virtual machines. Let's say you have a physical server with two eight-core CPUs and 64 GB of RAM, and that server runs Microsoft SQL Server. You might think that because SQL Server is typically an important application, the server must be fully utilized. In reality, a capacity planning exercise may reveal that the server uses only two CPU cores and 8 GB of RAM. When you virtualize that server, you can reduce the resources down to what the server actually uses and save resources for other virtual machines.
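The core idea of right-sizing from observed utilization can be illustrated with a small Python sketch. The sample values, the 25 percent headroom, and the function names below are all hypothetical; dedicated capacity planning tools collect far more data and apply their own models.

```python
import math
from statistics import mean


def summarize(samples: list[float]) -> dict[str, float]:
    """Return the average and peak of a series of utilization samples."""
    return {"average": mean(samples), "peak": max(samples)}


def recommended_vcpus(core_usage_samples: list[float], headroom: float = 0.25) -> int:
    """Suggest a vCPU count: observed peak cores in use plus headroom, rounded up.

    The 25% default headroom is an illustrative assumption, not a VMware guideline.
    """
    peak = max(core_usage_samples)
    return max(1, math.ceil(peak * (1 + headroom)))


# Hypothetical samples: number of CPU cores actually in use at each interval
# on a 16-core physical server.
cores_in_use = [0.75, 1.0, 1.5, 0.5, 1.25]

print(summarize(cores_in_use))
print("Suggested vCPUs:", recommended_vcpus(cores_in_use))
```

In this made-up data set the 16-core server never uses more than 1.5 cores, so the sketch suggests a much smaller virtual CPU allocation than the physical specification would imply.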

Whether you're just starting out on your virtualization journey or you're moving on to virtualizing more critical applications, capacity planning will provide valuable information for properly sizing your virtual machines. Without performing a capacity planning exercise, you are mostly just guessing at how many ESXi hosts you'll need to support the environment or how to properly size your virtual machines.

Naming Virtual Machines

Choosing the display name for a VM might seem like a trivial assignment, but you must ensure that an appropriate naming strategy is in place. We recommend making the display names of VMs match the hostnames configured in the guest OS being installed. For example, if you intend to use the name Server1 in the guest OS, the VM display name should match Server1.

It's important to note that if you use spaces in the VM display name (which is allowed), then using command-line tools to manage VMs becomes a bit tricky because you must quote or escape the spaces on the command line. In addition, because DNS hostnames cannot include spaces, using spaces in the VM name would create a disparity between the VM name and the guest OS hostname. Ultimately, this means you should avoid using spaces and special characters that are not allowed in standard DNS naming strategies to ensure similar names both inside and outside the VM. Aside from whatever policies might be in place from your organization, this is usually a matter of personal preference.
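If you want to enforce this recommendation before creating VMs, a simple check along the following lines can help. This Python sketch tests a proposed display name against the standard DNS hostname label rules (letters, digits, and hyphens only; no leading or trailing hyphen; 63 characters at most). The function name is hypothetical, and your organization's naming policy may be stricter.

```python
import re

# One DNS hostname label: letters, digits, hyphens; no leading/trailing hyphen;
# between 1 and 63 characters.
_DNS_LABEL = re.compile(r"(?!-)[A-Za-z0-9-]{1,63}(?<!-)")


def is_dns_safe(vm_name: str) -> bool:
    """Return True if the VM display name is also a valid DNS hostname label."""
    return _DNS_LABEL.fullmatch(vm_name) is not None


for name in ("Server1", "Win2k16-01", "Web Server 1", "-bad-name"):
    print(f"{name!r}: {'ok' if is_dns_safe(name) else 'avoid'}")
```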

The display name assigned to a VM also becomes the name of the folder in the VMFS volume where the VM files will live. At the file level, the associated configuration (VMX) and virtual hard drive (VMDK) files will assume the name supplied in the display name text box during VM creation. Refer to Figure 9.15, where you can see that the user-supplied name of Win2k16-01 is reused for both the folder name and the filenames for the VM. If a VM happens to be renamed at any stage, all the associated files will retain the original name until a Storage vMotion occurs on the VM. Once the Storage vMotion is complete, the majority of the files associated with that VM adhere to the new VM name. Note that file and VM names are case sensitive. You will learn more detail about Storage vMotion in Chapter 12, “Balancing Resource Utilization.”

FIGURE 9.15 The display name assigned to a VM is used in a variety of places.

Sizing Virtual Machine Hard Disks

The answer to the third question—how big to make the hard disks in your VM—is a bit more complicated. There are many different approaches, but some best practices facilitate the management, scalability, and backup of VMs. First, you should create VMs with multiple virtual disk files to separate the operating system from the custom user/application data. Separating the system files and the user/application data will make it easy to increase the number of data drives in the future and allow a more practical backup strategy. A system drive of 30 GB to 40 GB, for example, usually provides ample room for installation and continued growth of the operating system. The data drives across different VMs will vary in size because of underlying storage system capacity and functionality, the installed applications, the function of the system, and the number of users who connect to the computer. However, because the extra hard drives are not operating system data, it will be easier to adjust those drives when needed.

Keep in mind that additional virtual hard drives will pick up on the same naming scheme as the original virtual hard drive. For example, a VM named Win2k16-01 that has an original virtual hard disk file named Win2k16-01.vmdk will name the new virtual hard disk file Win2k16-01_1.vmdk. For each additional file, the last number will be incremented, making it easy to identify all virtual disk files related to a particular VM. Figure 9.16 shows a VM with two virtual hard disks so that you can see how vSphere handles the naming for additional virtual hard disks.

FIGURE 9.16 vSphere automatically appends a number to the VMDK filename for additional virtual hard disks.
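To make the naming pattern concrete, here is a minimal Python sketch that lists the VMDK filenames you would expect for a VM with a given number of disks. The helper name is hypothetical, and the sketch assumes all disks were created with the VM under its current name and live in the VM's own folder.

```python
def vmdk_filenames(vm_name: str, disk_count: int) -> list[str]:
    """List the expected VMDK filenames for a VM with disk_count disks.

    The first disk takes the VM name; each additional disk appends
    _1, _2, and so on, as described in the text.
    """
    if disk_count < 1:
        raise ValueError("a VM needs at least one virtual disk")
    names = [f"{vm_name}.vmdk"]
    names += [f"{vm_name}_{index}.vmdk" for index in range(1, disk_count)]
    return names


print(vmdk_filenames("Win2k16-01", 2))
```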

In Chapter 10, “Using Templates and vApps,” we'll revisit the process of creating VMs to see how to use templates to implement and maintain an optimal VM configuration that separates the system data from the user/application data. At this point, though, now that you've created a VM, you're ready to install the guest OS into the VM.

Virtual Machine Graphics

Depending on what kind of virtual machines you're deploying in your environment, you may need to think about graphics performance. For backend systems, such as database systems or email platforms, the graphics performance of the virtual machine is not important and is not something you typically have to worry about. If you're deploying a virtual desktop infrastructure (VDI), however, the graphics performance and capabilities of the virtual machine are likely to be a key consideration.

For VDI solutions like VMware Horizon View, end users no longer run a full desktop or laptop but instead connect to their virtual desktop (running on vSphere) from a variety of endpoint devices. These devices could be laptops, desktops, thin or zero clients, or even tablets and smartphones. The virtual desktop often acts as a complete desktop replacement for end users, so the desktop needs to perform as well as (or better than) the physical hardware that is being replaced.

In order to provide high-end graphics capabilities to virtual machines, vSphere 6 introduced Virtual Shared Graphics Acceleration (vSGA) and in vSphere 6.5 vGPUs were introduced in partnership with Nvidia. This technology allows you to install physical graphics cards of a specific type into your ESXi host and then offload the processing of 3D rendering to the physical graphics cards instead of the host CPUs. This offloading helps to reduce overall CPU utilization by allowing hardware that is purpose-built for rendering graphics to perform the processing. Additional functionality in vSphere 6.7 has been introduced for users of vGPUs with regard to VM mobility. Provided the latest hardware and driver VIBs are loaded in each ESXi host, VMs can now be vMotioned between hosts without needing to be powered off.

Although the 3D rendering settings are configured in the settings of a virtual machine, they are intended only for use with VMware Horizon. If you are using a VDI solution other than VMware Horizon, speak to the vendor to learn if 3D rendering on vSphere is supported.

Installing a Guest Operating System

A new VM is analogous to a physical computer with an empty hard drive. All the components are there but without an operating system. After creating the VM, you're ready to install a supported guest OS. The following OSs are some of the more commonly installed guest OSs supported by ESXi (this is not a comprehensive list as there are over 200 supported OSs listed on the vSphere 6.7 Guest OS Compatibility Guide):

- Windows XP, Vista, 7/8/10

- Windows Server 2000/2003/2008/2012/2016/2019

- Red Hat Enterprise Linux 3/4/5/6/7

- CentOS 4/5/6/7

- SUSE Linux Enterprise Server 8/9/10/11/12

- Debian Linux 6/7/8/9

- Oracle Linux 4/5/6/7

- Sun Solaris 10/11

- FreeBSD 7/8/9/10

- Ubuntu Linux

- CoreOS

- Apple OS X/macOS

Installing any of these supported guest OSs follows the same common order of steps for installation on a physical server, but the nuances and information provided during the install of each guest OS might vary greatly. Because of the differences involved in installing different guest OSs or different versions of a guest OS, we won't go into any detail on the actual guest OS installation process. We'll leave that to the guest OS vendor. Instead, we'll focus on guest OS installation tasks that are specific to a virtualized environment.

Working with Installation Media

In the physical world, administrators typically put the OS installation media in the physical server's optical drive, install the OS, and then are done with it. Well, in a virtual world, the process is similar, but here's the issue—where do you put the CD when the server is virtual? There are a couple of ways to handle it. One way is quick and easy, and the other takes a bit longer but pays off later.

VMs can access data stored on optical disks in one of three ways (Figure 9.17 shows the Datastore ISO File option selected):

FIGURE 9.17 VMs can access optical disks physically located on the vSphere Web Client system, located on the ESXi host, or stored as an ISO image.

- Client Device This option allows an optical drive local to the computer running the vSphere Web Client to be mapped into the VM. For example, if you are using the vSphere Web Client on your corporate-issued laptop, you can simply insert a CD/DVD into your local optical drive and map that into the VM with this option. This is the quick-and-easy method referenced earlier.

- Host Device This option maps the ESXi host's optical drive into the VM. VMware administrators would have to insert the CD/DVD into the server's optical drive in order for the VM to have access to the disk. This option is only available from the Hardware Portlet in the VM's Summary tab as shown in Figure 9.18.

FIGURE 9.18 The Summary tab of a VM allows the connection of a VM's virtual CD/DVD drive to the physical CD/DVD drive in the ESXi host that is running the VM.

- Datastore or Library ISO File These last two options map an ISO image (see the sidebar “ISO Image Basics”) to the VM. Although using an ISO image typically requires an additional step—creating the ISO image from the physical disk—nearly all server software is being distributed as an ISO image that can be leveraged directly from within your vSphere environment.

ISO images are the recommended way to install a guest OS because they are faster than using an actual optical drive and can be quickly mounted or dismounted with little effort.

Before you can use an ISO image to install the guest OS, though, you must first put it in a location that ESXi can access. Generally, this means uploading it directly into a datastore accessible to your ESXi hosts or into a feature introduced in vSphere 6.0, the Content Library.

Perform these steps to upload an ISO image into a datastore:

- Use the vSphere Web Client to connect to a vCenter Server instance or browse to the vSphere Host Client to connect to an individual ESXi host.

- From the vSphere Web Client menu bar, select Storage.

- Right-click the datastore to which you want to upload the ISO image and select Browse Files from the context menu.

- Select the destination folder in the datastore where you want to store the ISO image. Use the Create A New Folder button (it looks like a folder with a green plus symbol) if you need to create a new folder in which to store the ISO image.

- From the toolbar in the Files screen, click the Upload button (it looks like a disk with a green arrow pointing into the disk). From the dialog box that appears, select the ISO image as shown in Figure 9.19 and click Open.

FIGURE 9.19 Use the Upload button to upload ISO images for use when installing guest OSs.

The vSphere Web Client uploads the file into the selected folder in that datastore.

You can find out how to perform a similar action with Content Libraries in Chapter 10. After the ISO image is uploaded to an available datastore or into a Content Library, you're ready to install a guest OS using that ISO image.
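Once an ISO image is on a datastore, vSphere tools generally refer to it with a bracketed datastore name followed by the path relative to the datastore root. The short Python sketch below builds such a string; the helper name, the datastore name, and the folder and file names are all hypothetical and used only for illustration.

```python
import posixpath


def datastore_path(datastore: str, *parts: str) -> str:
    """Build a '[datastore] folder/file' style path string."""
    relative = posixpath.join(*parts) if parts else ""
    # rstrip() drops the trailing space when no relative path is given.
    return f"[{datastore}] {relative}".rstrip()


# Hypothetical datastore, folder, and ISO names.
print(datastore_path("datastore1", "ISO", "install.iso"))
```

Keeping ISO images together in one folder on a shared datastore makes strings like this predictable, which helps if you later script the attachment of installation media.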

Using the Installation Media

Once you have the installation media in place—by using the local CD/DVD-ROM drive on the computer where you are running the vSphere Web Client or by creating and uploading an ISO image into a datastore—you're ready to use that installation media to install a guest OS into the VM.

Perform the following steps to install a guest OS using an ISO file on a shared datastore:

- Use the vSphere Web Client to connect to a vCenter Server instance or use the vSphere Host Client to connect to an individual ESXi host where a VM has been created.

- If you're not already in the Inventory Trees or VMs And Templates view, use the menu bar to select Home ⇒ Inventory Trees ⇒ VMs And Templates (the second of four icons above the inventory tree).

- In the inventory tree, expand the tree to locate the new VM, and then right-click it. Select the Edit Settings menu option. The Edit Settings dialog box opens.

- Expand the CD/DVD Drive 1 hardware option to expose the additional properties.

- Change the drop-down box to Datastore ISO File, and select the Connect At Power On check box. If you fail to select that check box, the VM will not boot from the selected ISO image.

- Click the Browse button to browse a datastore for the ISO file of the guest OS.

- Navigate through the available datastores until you find the ISO file of the guest OS to be installed. After you select the ISO file, the properties page is configured similar to the one shown previously in Figure 9.17. Click OK to close the VM's Edit Settings dialog box.

- Right-click the virtual machine and select Power On from the menu. Alternatively, you can use the Actions drop-down option on the properties page of the virtual machine or simply click the Power On green arrow on the content area title bar. Since there is no existing OS on the VM's virtual hard disk, the VM boots from the mounted ISO image and begins the installation of the guest OS.

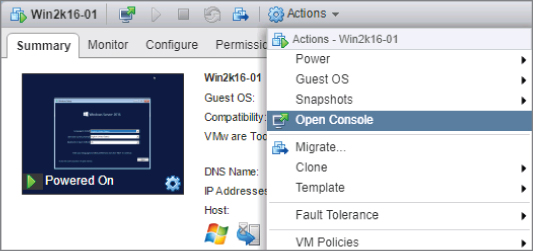

- Click the VM's console to open a remote session. Alternatively, you can use the Open Console option from the Properties page of the virtual machine as shown in Figure 9.20.

FIGURE 9.20 You can open the console to a VM in several ways: through the Summary screen, the content area toolbar, or the Actions menu.

- Follow the onscreen instructions to complete the guest OS installation. These will vary depending on the specific guest OS you are installing; refer to the documentation for that guest OS for specific details regarding installation.

Working in the Virtual Machine Console

Working within the VM console is like working at the console of a physical system. From here, you can do anything you need to do to the VM: you can access the VM's BIOS and modify settings, you can turn the power to the VM off (and back on again), and you can interact with the guest OS you are installing or have already installed into the VM. We'll describe most of these functions later in this chapter in the sections “Managing Virtual Machines” and “Modifying Virtual Machines,” but there is one thing that we want to point out now.

The vSphere Web Client must have a way to know if the keystrokes and mouse clicks you're generating go to the VM or if they should be processed by the vSphere Web Client itself. To do this, it uses the concept of focus. When you click within a VM console, that VM will have the focus: all of the keystrokes and the mouse clicks will be directed to that VM. Until you have VMware Tools installed (a process we'll describe in the next section), you usually have to manually tell the vSphere Web Client when you want to shift focus out of the VM. To do this, you use the vSphere Web Client's special keystroke: Ctrl+Alt. When you press Ctrl+Alt, the VM relinquishes control of the mouse and keyboard and returns it to the vSphere Web Client. Keep that in mind when you are trying to use your mouse and it won't travel beyond the confines of the VM console window. Just press Ctrl+Alt, and the VM will release control. Some of the more modern guest OS installations will relinquish control when you move the mouse to the edge of the console window, but it is handy to know the keystroke in case this is not automatic.

Once you've installed the guest OS, you should then install and configure VMware Tools. We discuss VMware Tools installation and configuration in the next section.

Installing VMware Tools

Although VMware Tools is not installed by default, the package is an important part of a VM. VMware Tools offers several great benefits without any detriments. Recall from the beginning of this chapter that VMware vSphere offers certain virtualization-optimized (or paravirtualized) devices to VMs in order to improve performance. In many cases, these paravirtualized devices do not have device drivers present in a standard installation of a guest OS. The device drivers for these devices are provided by VMware Tools, which is just one more reason why VMware Tools is an essential part of every VM and guest OS installation.

In other words, installing VMware Tools should be standard practice and not an optional step in the deployment of a VM. The VMware Tools package provides the following benefits:

- Optimized NIC drivers.

- Optimized SCSI drivers.

- Enhanced video and mouse drivers.

- VM heartbeat.

- VSS support to enable guest quiescing for snapshots and backups. Many VMware and third-party applications and tools rely on the VMware Tools VSS integration.

- Enhanced memory management.

- API access for VMware utilities (such as PowerCLI) to reach into the guest OS.

VMware Tools also helps streamline and automate the management of VM focus so you can move into and out of VM consoles easily and seamlessly without the Ctrl+Alt keyboard command.

The VMware Tools package is available for Windows, Linux, NetWare, Solaris, OS X, and FreeBSD; however, the installation methods vary because of the differences in the guest OSs. In all cases, the installation of VMware Tools can start when you select the option to install VMware Tools from the vSphere Web Client. Do you recall our discussion earlier about ISO images and how ESXi uses them to present CDs/DVDs to VMs? That's exactly the functionality being leveraged in this case. When you select to install VMware Tools, vSphere will mount an ISO as a CD/DVD for the VM, and the guest OS will reflect a mounted CD-ROM that has the installation files for VMware Tools.

As we mentioned previously, the exact process for installing VMware Tools will depend on the guest OS. Because Windows and Linux make up the largest portion of VMs deployed on VMware vSphere in most cases, those are the two examples we'll discuss. First, we'll walk you through installing VMware Tools into a Windows-based guest OS.

Installing VMware Tools in Windows

Perform these steps to install VMware Tools into Windows Server 2012 running as a guest OS in a VM (the steps for other versions of Windows are similar):

- Use the vSphere Web Client to connect to a vCenter Server instance or use the vSphere Host Client to connect to an individual ESXi host.

- If you aren't already in the Inventory Trees or VMs And Templates inventory view, use Home ⇒ Inventory Trees or Home ⇒ VMs And Templates to navigate to one of these views.

- Right-click the VM in the inventory tree and select Open Console. You can also use the Open Console option on the properties page of the virtual machine.

- If you aren't already logged into the guest OS in the VM, select Send Ctrl+Alt+Delete and log into the guest OS.

- Right-click the virtual machine and select Guest OS ⇒ Install VMware Tools. A dialog box providing additional information appears. Click Mount to mount the VMware Tools ISO and dismiss the dialog box.

- An AutoPlay dialog box appears, prompting the user for action. Select the option Run Setup64.exe.

If the AutoPlay dialog box does not appear, open Windows Explorer and double-click the CD/DVD drive icon. The AutoPlay dialog box should then appear.

- Click Next on the Welcome To The Installation Wizard For VMware Tools page.

- Select the appropriate setup type for the VMware Tools installation, and click Next.

For most situations, you will choose the Typical radio button. The Complete installation option installs all available features, whereas the Custom installation option allows for the greatest level of feature customization.

- Click Install.

During the installation, you may be prompted one or more times to confirm the installation of third-party device drivers; select Install for each of these prompts.

If the AutoPlay dialog box appears again, simply close the dialog box and continue with the installation.

- After the installation is complete, click Finish.

- Click Yes to restart the VM immediately, or click No to manually restart the VM at a later time.

To install the enhanced VMware video driver and improve the graphical console performance on older Windows VMs, perform the following steps:

- From the Start menu, select Run. In the Run dialog box, type `devmgmt.msc` and click OK. This will launch the Device Manager console.

- Expand the Display Adapters entry.

- Right-click the Standard VGA Graphics Adapter or VMware SVGA II item, and select Update Driver Software.

- Click Browse My Computer For Driver Software.

- Using the Browse button, navigate to the following directory:

`C:\Program Files\Common Files\VMware\Drivers\wddm_video`

Then click Next.

- After a moment, Windows will report that it has successfully installed the driver for the VMware SVGA 3D (Microsoft Corporation – WDDM) device. Click Close.

- Restart the VM when prompted.

After Windows restarts in the VM, you should notice improved performance when using the graphical console. Note that this procedure is no longer required in Windows Server 2012 and newer. The VMware SVGA 3D driver is automatically installed along with VMware Tools.

For older versions of Windows, such as Windows Server 2003, you can further improve the responsiveness of the VM console by configuring the hardware acceleration setting. It is, by default, set to None; setting it to Full provides a much smoother console session experience. The VMware Tools installation routine reminds you to set this value at the end of the installation, but if you choose not to set hardware acceleration at that time, it can easily be set later. We highly recommend that you optimize the graphical performance of the VM's console. (Note that Windows XP has this value set to Full by default.)

Perform the following steps to adjust the hardware acceleration in a VM running Windows Server 2003 (or Windows XP, in case the value has been changed from the default):

- Right-click an empty area of the Windows Desktop, and select the Properties option.

- Select the Settings tab, and click the Advanced button.

- Select the Troubleshooting tab.

- Move the Hardware Acceleration slider to the Full setting on the right, as shown in Figure 9.21.

FIGURE 9.21 Changing the hardware acceleration feature of an older Windows guest OS is a common and helpful adjustment for improving mouse performance.

Now that the VMware Tools installation is complete and the VM is rebooted, the system tray displays the VMware Tools icon, the letters VM in a small gray box (Windows Taskbar settings might hide the icon). The icon in the system tray indicates that VMware Tools is installed and operational.

In previous versions of vSphere, double-clicking the VMware Tools icon in the system tray would bring up a set of configurable options. As of vSphere 5.1, that interface has been removed and replaced with the informational screen shown in Figure 9.22. Previously you could configure time synchronization, show or hide VMware Tools from the Taskbar, and select scripts to suspend, resume, shut down, or turn on a VM.

FIGURE 9.22 As of vSphere 5.1, you can no longer configure properties in VMware Tools by interacting with the icon in the system tray.

VMware now provides command-line-based tools that will allow you to configure these settings. You can access these by browsing to the installation directory of VMware Tools.
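As one hedged example of using those command-line tools, the Python sketch below looks for the VMware Tools toolbox command inside the guest and asks it for the current time synchronization status. It assumes the utility is named `vmware-toolbox-cmd` on Linux and `VMwareToolboxCmd` on Windows; the wrapper function and its `tools_dir` parameter are our own, intended for cases where the installation directory is not on the PATH.

```python
import shutil
import subprocess
from typing import Optional


def toolbox_timesync_status(tools_dir: Optional[str] = None) -> Optional[str]:
    """Return the output of 'timesync status', or None if the tool is not found.

    tools_dir: optional directory to search instead of PATH, such as the
    VMware Tools installation directory inside the guest (an assumption;
    adjust to your installation).
    """
    exe = None
    for name in ("vmware-toolbox-cmd", "VMwareToolboxCmd"):
        exe = shutil.which(name, path=tools_dir)
        if exe:
            break
    if exe is None:
        return None
    # check=False: a non-zero exit code is reported, not raised.
    result = subprocess.run(
        [exe, "timesync", "status"],
        capture_output=True,
        text=True,
        check=False,
    )
    return result.stdout.strip()


print(toolbox_timesync_status())
```

The sketch only queries the status. Whether to enable or disable synchronization is a design decision for your environment.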

As with previous versions of VMware Tools, time synchronization between the guest OS and the host is disabled by default. You'll want to use caution when enabling time synchronization between the guest OS and the ESXi host because Windows domain members rely on Kerberos for authentication and Kerberos is sensitive to time differences between computers. A Windows-based guest OS that belongs to an Active Directory domain is already configured with a native time synchronization process against the domain controller in its domain that holds the PDC Emulator operations master role. If the time on the ESXi host is different from the time on the PDC Emulator operations master domain controller, the guest OS could end up moving outside the 5-minute window allowed by Kerberos. When the 5-minute window is exceeded, Kerberos will experience errors with authentication and replication.
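The 5-minute window is easy to reason about with a small calculation. The following Python sketch compares a guest clock reading against a reference clock (for example, the PDC Emulator) and reports whether the difference stays within the allowed skew. The function and constant names are hypothetical, and the sketch assumes you have already obtained both timestamps by some other means.

```python
from datetime import datetime, timedelta, timezone

# Allowed clock skew described in the text.
KERBEROS_MAX_SKEW = timedelta(minutes=5)


def within_kerberos_skew(
    guest_time: datetime,
    reference_time: datetime,
    max_skew: timedelta = KERBEROS_MAX_SKEW,
) -> bool:
    """Return True if the two clocks differ by no more than max_skew."""
    return abs(guest_time - reference_time) <= max_skew


# Illustrative timestamps only.
reference = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
print(within_kerberos_skew(reference + timedelta(minutes=4), reference))
print(within_kerberos_skew(reference - timedelta(minutes=6), reference))
```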

You can take a few approaches to managing time synchronizations in a virtual environment. The first approach involves not using VMware Tools time synchronization and relying instead on the W32Time service and a PDC Emulator with a Registry edit that configures synchronization with an external time server. Another approach involves disabling the native time synchronization across the Windows domain and then relying on the VMware Tools feature. A third approach might be to synchronize the VMware ESXi hosts and the PDC Emulator operations master with the same external time server and then to enable the VMware Tools option for synchronization. In this case, both the native W32Time service and VMware Tools should be adjusting the time to the same value.

VMware has a few Knowledge Base articles that contain the latest recommendations for timekeeping. For Windows-based guest OS installations, refer to http://kb.vmware.com/kb/1318 or refer to the older, but still relevant document “Timekeeping in VMware Virtual Machines” at the following location:

http://www.vmware.com/files/pdf/Timekeeping-In-VirtualMachines.pdf

We've shown you how to install VMware Tools into a Windows-based guest operating system, so now we'd like to walk through the process for a Linux-based guest OS.

Installing VMware Tools in Linux

A number of versions (or distributions) of Linux are available and supported by VMware vSphere. While they are all called “Linux,” they do have subtle differences from one distribution to another that make it difficult to provide a single set of steps that would apply to all Linux distributions. In this section, we'll describe two different methods for installing VMware Tools. First, we'll show you a simple installation process, using Open VM Tools and a package manager. For this example, we will use Ubuntu 16.04 LTS. After that, we'll use SuSE Linux Enterprise Server (SLES) version 11 to show you how to install VMware Tools using an ISO mounted from the ESXi host. SuSE is a popular enterprise-focused distribution of Linux, and version 11 doesn't include Open VM Tools.

INSTALLING VMWARE TOOLS USING OPEN VM TOOLS

Open VM Tools (OVT) is nearly the same as the normal VMware Tools, but it's packaged and updated slightly differently. Like the normal VMware Tools that ships with ESXi and can be installed from an ISO, OVT is a set of services, modules, and drivers that allow you to manage your Linux-based VMs more seamlessly. OVT has one distinct difference: the way you install and update it. Because Open VM Tools is an open source project that's available to anyone, each Linux distribution can package it so that installation and updates go through that distribution's own package manager. A number of Linux distributions include Open VM Tools:

- Fedora

- Debian

- OpenSUSE

- Ubuntu

- Red Hat Enterprise Linux

- SUSE Linux

- CentOS

- Oracle Linux

To install Open VM Tools into a VM running Ubuntu 16.04 LTS, perform the following steps:

- Use the vSphere Web Client to connect to a vCenter Server instance or use the vSphere Host Client to connect to an individual ESXi host.

- You will need access to the console of the VM onto which you're installing VMware Tools. Right-click the VM and select Open Console.

- Log into the Linux guest OS using an account with appropriate permissions or the ability to escalate permissions (`sudo <command>`) to install OVT.

- To ensure you are getting the most up-to-date version, run `sudo apt-get update`.

- Once your package manager is updated, install Open VM Tools by running `sudo apt-get install open-vm-tools`.

- If this is the first time OVT is being installed on this VM, you will need to reboot for it to start up correctly. Future updates should not require a reboot.

INSTALLING VMWARE TOOLS IN LINUX USING AN ISO

Perform the following steps to install VMware Tools into a VM running the 64-bit version of SLES 11 as the guest OS:

- Use the vSphere Web Client to connect to a vCenter Server instance or use the vSphere Host Client to connect to an individual ESXi host.

- You will need access to the console of the VM onto which you're installing VMware Tools. Right-click the VM and select Open Console.

- Log into the Linux guest OS using an account with appropriate permissions. This will typically be the root account or an equivalent (some Linux distributions, including Ubuntu, disable the root account but provide an administrative account you can use).

- Right-click the virtual machine and choose Guest OS ⇒ Install VMware Tools. Click Mount in the dialog box that pops up.

- Assuming that you have a graphical user environment running in the Linux VM, a file system browser window will open to display the contents of the VMware Tools ISO that was automatically mounted behind the scenes.

- Open a Linux terminal window. In many distributions, you can right-click a blank area of the file system browser window and select Open In Terminal.

- If you are not already in the same directory as the VMware Tools mount point, change directories to the location of the VMware Tools mount point using the following command (the exact path may vary from distribution to distribution and from version to version; this is the path for SLES 11). The path contains a space, so it must be quoted:

`cd "/media/VMware Tools"`

- Extract the compressed TAR file (with the `.tar.gz` filename extension) to a temporary directory, and then change to that temporary directory using the following commands:

`tar -zxf VMwareTools-x.y.z-xxxxxxx.tar.gz -C /tmp`

`cd /tmp/vmware-tools-distrib`

- In the `/tmp/vmware-tools-distrib` directory, use the `sudo` command to run the `vmware-install.pl` Perl script with the following command:

`sudo ./vmware-install.pl`

Enter the current account's password when prompted.

- The installer will provide a series of prompts for information such as where to place the binary files, where the init scripts are located, and where to place the library files. Default answers are provided in brackets; you can just press Enter unless you need to specify a different value that is appropriate for this Linux system.

- After the installation is complete, the VMware Tools ISO will be unmounted automatically. You can remove the temporary installation directory using these commands:

`cd`

`rm -rf /tmp/vmware-tools-distrib`

- Reboot the Linux VM for the installation of VMware Tools to take full effect.

The steps described here were performed on a VM running SLES 11 64-bit. Because of variations within different versions and distributions of Linux, the commands you need to install VMware Tools within another distribution may not exactly match what we've listed here. However, these steps do provide a general guideline of what the procedure looks like.

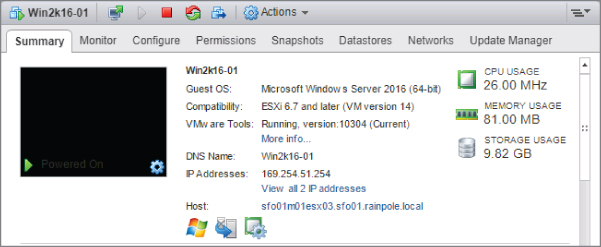

After VMware Tools is installed, the Summary tab of a VM object identifies the status of VMware Tools as well as other information such as operating system, CPU, memory, DNS (host) name, IP address, and current ESXi host. Figure 9.23 shows a screen shot of this information for the Windows Server 2016 VM into which we installed VMware Tools earlier.

FIGURE 9.23 You can view details about VMware Tools, DNS name, IP address, and so forth from the Summary tab of a VM object.

If you are upgrading to vSphere 6.7 from a previous version of VMware vSphere, you will have outdated versions of VMware Tools running in your guest OSs. You'll want to upgrade these in order to get the latest drivers. In Chapter 4, “Maintaining VMware vSphere,” we discuss the use of vSphere Update Manager to assist in this process, but you can also do it manually.

For Windows-based guest OSs, the process of upgrading VMware Tools is as simple as right-clicking a VM and selecting Guest OS ⇒ Upgrade VMware Tools. Select the option labeled Automatic Tools Upgrade and click OK. vCenter Server will install the updated VMware Tools and automatically reboot the VM, if necessary.

For other guest OSs, upgrading VMware Tools typically means running through the install process again, or running through an update in the Linux package manager. For more information, refer to the instructions for installing VMware Tools on SLES and Ubuntu.

Creating VMs is just one aspect of managing VMs. In the next sections, we look at some additional VM management tasks.

Managing Virtual Machines

In addition to creating VMs, vSphere administrators must perform a range of other tasks. Although most of these tasks are relatively easy to figure out, we include them here for completeness.

Adding or Registering Existing VMs

Creating VMs from scratch, as described earlier, is only one way of getting VMs into the environment. It's entirely possible that you, as a vSphere administrator, might receive pre-created VMs from another source. Suppose you receive the files that compose a VM—notably, the VMX and VMDK files—from another administrator and you need to put that VM to use in your environment. You've already seen how to use the vSphere Web Client–based file browser to upload files into a datastore, but what needs to happen once it's in the datastore? In this case, you need to register the VM. The process of registering the VM adds it to the vCenter Server (or ESXi host) inventory and allows you to then manage the VM.

Perform the following steps to add (or register) an existing VM into the inventory:

- Use the vSphere Web Client to connect to a vCenter Server instance or use the vSphere Host Client to connect to an individual ESXi host.

- A VM can be registered from a number of different views within the vSphere Web Client. The Storage inventory view, though, is probably the most logical place to do it. Navigate to the Storage inventory view by using the menu bar or the navigation bar.

- Right-click the datastore containing the VM you want to register. From the context menu, select Register VM as shown in Figure 9.24.

FIGURE 9.24 You invoke the Register Virtual Machine Wizard by right-clicking the datastore and selecting Register VM.

- Use the file browser to navigate to the folder where the VMX file for the VM resides. Select the correct VMX file and click OK.

- The Register Virtual Machine Wizard prepopulates the name of the VM. It does this by reading the contents of the VMX file. Accept the name or type a new one; then select a logical location within the inventory and click Next.

- Choose the cluster on which you'd like to run this VM and click Next.

- If you selected a cluster for which VMware DRS is not enabled or is set to Manual, you must also select the specific host on which the VM will run. Choose a specific host and click Next.

- Review the settings. If everything is correct, click Finish; otherwise, use the hyperlinks on the left side of the wizard or the Back button to go back and make any necessary changes.

When the Register Virtual Machine Wizard is finished, the VM will be added to the vSphere Web Client inventory. From here, you're ready to manipulate the VM in whatever fashion you need, such as powering it on.
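The wizard's prepopulated name comes from the VMX file, which is a plain-text list of key and quoted-value pairs. As a simplified sketch of that lookup, the Python below parses VMX-style text and returns the `displayName` entry. The parser, its function names, and the sample content are ours and are illustrative only; a real VMX file contains many more settings.

```python
import re
from typing import Optional

# Matches lines of the form: key = "value"
_VMX_LINE = re.compile(r'^\s*([^#\s=]+)\s*=\s*"(.*)"\s*$')


def parse_vmx(text: str) -> dict[str, str]:
    """Parse VMX-style text into a dictionary of settings."""
    settings: dict[str, str] = {}
    for line in text.splitlines():
        match = _VMX_LINE.match(line)
        if match:
            settings[match.group(1)] = match.group(2)
    return settings


def display_name(text: str) -> Optional[str]:
    """Return the displayName value, or None if the key is absent."""
    return parse_vmx(text).get("displayName")


# Abbreviated, hypothetical sample content.
SAMPLE_VMX = '''\
.encoding = "UTF-8"
displayName = "Win2k16-01"
memSize = "4096"
scsi0:0.fileName = "Win2k16-01.vmdk"
'''

print(display_name(SAMPLE_VMX))
```

Reading the name this way before registration can help you spot a VM whose folder name and stored display name no longer match, which happens when a VM was renamed but never moved with Storage vMotion.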

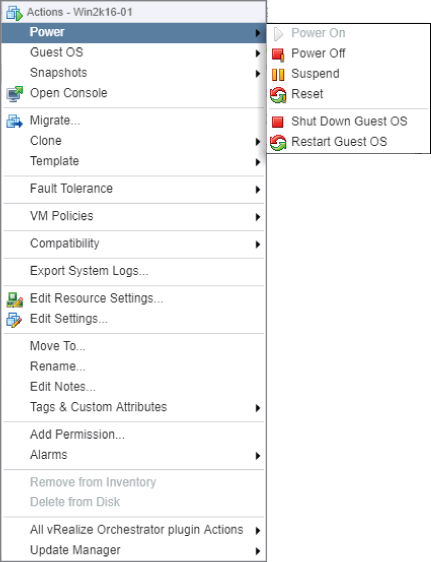

Changing VM Power States

There are six different commands involved in changing the runlevel and power state of a VM. Figure 9.25 shows these six commands on the context menu displayed when you right-click a VM and select Power.

FIGURE 9.25 The Power submenu allows you to power on, power off, suspend, or reset a VM as well as interact with the guest OS if VMware Tools is installed.

By and large, these commands are self-explanatory, but there are a few subtle differences in some of them:

- Power On and Power Off These function exactly as their names suggest. They are equivalent to toggling the virtual power button on the VM without any interaction with the guest OS (if one is installed).

- Suspend This command suspends the VM. When you resume the VM, it will start back right where it was when you suspended it.

- Reset This command will reset the VM, which is not the same as rebooting the guest OS. This is the virtual equivalent of pressing the Reset button on the front of the computer.

- Shut Down Guest OS This command works only if VMware Tools is installed, and it works through VMware Tools to invoke an orderly shutdown of the guest OS. To avoid file system or data corruption in the guest OS instance, you should use this command whenever possible.

- Restart Guest OS Like the Shut Down Guest OS command, this command requires VMware Tools and initiates a reboot of the guest OS in a graceful fashion.
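The differences among the six commands can be summarized as a small state machine. The sketch below is a simplified Python model, not a call into any vSphere API. The class name and the transition table are assumptions made for illustration, and real vSphere behavior has more nuances than this table captures.

```python
from dataclasses import dataclass

# command: (states it may be issued from, resulting state, needs VMware Tools)
# This transition table is a simplification for illustration only.
COMMANDS = {
    "Power On": ({"poweredOff", "suspended"}, "poweredOn", False),
    "Power Off": ({"poweredOn"}, "poweredOff", False),
    "Suspend": ({"poweredOn"}, "suspended", False),
    "Reset": ({"poweredOn"}, "poweredOn", False),
    "Shut Down Guest OS": ({"poweredOn"}, "poweredOff", True),
    "Restart Guest OS": ({"poweredOn"}, "poweredOn", True),
}


@dataclass
class VirtualMachine:
    state: str = "poweredOff"
    tools_installed: bool = False

    def run(self, command: str) -> str:
        allowed_from, new_state, needs_tools = COMMANDS[command]
        if self.state not in allowed_from:
            raise ValueError(f"{command} is not valid while {self.state}")
        if needs_tools and not self.tools_installed:
            raise RuntimeError(f"{command} requires VMware Tools in the guest")
        self.state = new_state
        return self.state


vm = VirtualMachine(tools_installed=True)
print(vm.run("Power On"))            # poweredOn
print(vm.run("Shut Down Guest OS"))  # poweredOff
```

The two guest-aware commands end in the same power state as Power Off and Reset. What differs is the path: they go through VMware Tools, so the guest OS gets a chance to shut down cleanly.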

Removing VMs

If you have a VM that you need to keep but that doesn't have to be listed in the VM inventory, you can remove the VM from the inventory. This keeps the VM files intact, and the VM can be re-added to the inventory (that is, registered) at any time later on using the procedure described earlier in this chapter, in the section “Adding or Registering Existing VMs.”

To remove a VM, right-click a powered-off VM and, from the context menu, select Remove From Inventory. Select Yes in the Confirm Remove dialog box, and the VM will be removed from the inventory. You can use the vSphere Web Client file browser to verify that the files for the VM are still intact in the same location on the datastore.

Deleting VMs

If you have a VM that you no longer need at all—meaning you don't need it listed in the inventory and you don't need the files maintained on the datastore—you can completely remove the VM. Be careful, though; this is not something that you can undo!

To delete a VM entirely, right-click a powered-off VM and select Delete from Disk from the context menu. The vSphere Web Client will prompt you for confirmation, reminding you that you are deleting the VM and its associated base disks (VMDK files). Click Yes to continue removing the files from both inventory and the datastore. Once the process is done, you can once again use the vSphere Web Client file browser to verify that the VM's files are gone.
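The difference between Remove From Inventory and Delete from Disk is easy to lose track of, so here is a toy Python model of the two operations. The `Environment` class, its methods, and the VM names are hypothetical. The sketch only illustrates which bookkeeping each operation touches.

```python
class Environment:
    """Toy model: an inventory of registered VMs plus files on a datastore."""

    def __init__(self):
        self.inventory = {}   # VM name -> power state
        self.datastore = {}   # VM name -> list of file names

    def add_vm(self, name, files):
        self.inventory[name] = "poweredOff"
        self.datastore[name] = list(files)

    def _require_powered_off(self, name):
        if self.inventory[name] != "poweredOff":
            raise RuntimeError(f"{name} must be powered off first")

    def remove_from_inventory(self, name):
        self._require_powered_off(name)
        del self.inventory[name]      # files stay on the datastore

    def delete_from_disk(self, name):
        self._require_powered_off(name)
        del self.inventory[name]
        del self.datastore[name]      # files are gone; this cannot be undone


env = Environment()
env.add_vm("web01", ["web01.vmx", "web01.vmdk"])
env.add_vm("web02", ["web02.vmx", "web02.vmdk"])
env.remove_from_inventory("web01")
env.delete_from_disk("web02")
print(sorted(env.inventory))  # []
print(sorted(env.datastore))  # ['web01']
```

Because the files for `web01` survive, that VM could be registered again later, whereas nothing remains of `web02`.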

Adding existing VMs, removing VMs from inventory, and deleting VMs are all relatively simple tasks. The task of modifying VMs, though, is significant enough to warrant its own section.

Modifying Virtual Machines

Just as physical machines require hardware upgrades or changes, a VM might require virtual hardware upgrades or changes to meet changing performance demands. Perhaps a new memory-intensive client-server application requires an increase in memory, or a new data-mining application requires a second processor or additional network adapters for bandwidth-heavy FTP traffic. In each of these cases, the VM requires a modification of the virtual hardware configured for the guest OS to use. Of course, this is only one task that an administrator charged with managing VMs could be responsible for completing. Other tasks might include leveraging vSphere's snapshot functionality to protect against a potential issue with the guest OS inside a VM. We describe both of these tasks in the following sections, starting with how to change the hardware of a VM.

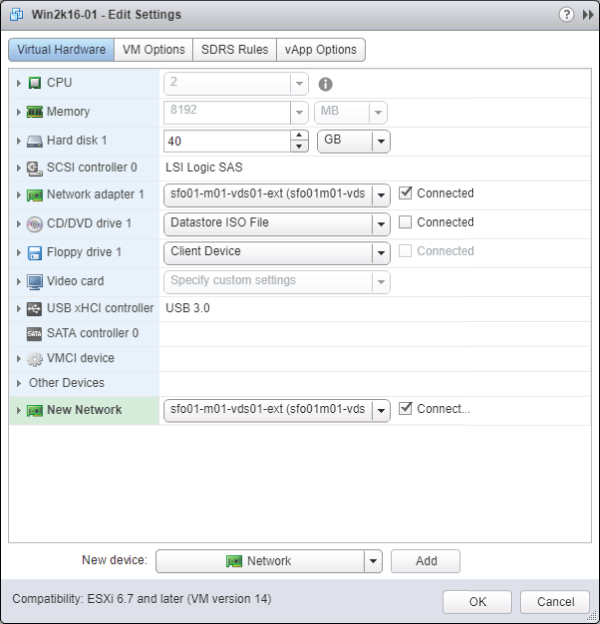

Changing Virtual Machine Hardware

In most cases, modifying a VM requires that the VM be powered off. There are exceptions to this rule, as shown in Figure 9.26. You can hot-add a USB controller, a SATA controller, an Ethernet adapter, a hard disk, or a SCSI device. Later in this chapter, you'll see that some guest OSs also support the addition (and subtraction) of virtual CPUs or RAM while they are powered on as well. Not all guest OS versions will see the new hardware configuration right away—you may need to reboot for the changes to take effect.

FIGURE 9.26 Users can add some types of hardware while the VM is powered on. If virtual hardware cannot be added while the VM is powered on, the operation will fail.

When you're adding new virtual hardware to a VM using the vSphere Web Client, the options are similar to those used while creating a VM. For example, to add a new virtual hard disk to an existing VM, you would use the New Device drop-down box at the bottom of the Virtual Machine Edit Settings dialog box. In Figure 9.26, you see that you can add a virtual hard disk to a VM while it is powered on. From there, the vSphere Web Client uses the same steps shown earlier in this chapter in Figure 9.11, Figure 9.12, and Figure 9.13. The only difference is that now you're adding a new virtual hard disk to an existing VM. As an example, we'll go through the steps to add an Ethernet adapter to a VM (the steps are the same regardless of whether the VM is actually running).

Perform these steps to add an Ethernet adapter to a VM:

- Launch the vSphere Web Client and connect to a vCenter Server instance or use the vSphere Host Client to connect to an individual ESXi host.

- If you aren't already in an inventory view that displays VMs, switch to the Inventory Trees or VMs And Templates view using the Home Inventories menu.

- Right-click the VM to which you want to add the Ethernet adapter, and select Edit Settings.

- Select the New Device drop-down box at the bottom of the screen and select Network. Click the Add button next to the New Device drop-down box to add the Ethernet adapter to the virtual machine.

- Expand the New Network option to gain access to additional properties.

- Select the network adapter type, the network to which it should be connected, and whether the network adapter should be connected at power-on, as shown in Figure 9.27.

FIGURE 9.27 To add a new network adapter, you must select the adapter type, the network, and whether it should be connected at power-on.

- Review the settings and click OK.

Besides adding new virtual hardware, users can make other changes while a VM is powered on. For example, you can mount and unmount CD/DVD drives, ISO images, and floppy disk images while a VM is turned on. We described the process for mounting an ISO image as a virtual CD/DVD drive earlier in this chapter, in the section “Installing a Guest Operating System.” You can also assign and reassign adapters to virtual networks while a VM is running. All of these tasks are performed in the VM Properties dialog box, which you access by selecting Edit Settings from the context menu for a VM.

If you are running Windows Server 2008 or above, or any modern Linux distribution, you also gain the ability to add virtual CPUs or RAM to a VM while it is running. To use this functionality, you must first enable it. In a somewhat ironic twist, the VM for which you want to enable hot-add must be powered off.

To enable hot-add of virtual CPUs or RAM, perform these steps:

- Launch the vSphere Web Client, if it is not already running, and connect to a vCenter Server instance.

- Navigate to either the Inventory Trees or VMs And Templates inventory view.

- If the VM for which you want to enable hot-add is currently running, right-click the VM and select Power ⇒ Shut Down Guest OS. The VM must be shut down in order to enable hot-add functionality.

- Right-click the VM and select Edit Settings.

- On the Virtual Hardware tab, select CPU to expand the available options. Select the Enable CPU Hot Add check box in the CPU Hot Plug option.

- To enable memory hot-add, select Memory to expand the available options. Select the Enable check box in the Memory Hot Plug option to enable hot-plug memory.

- Click OK to save the changes to the VM.

Once this setting has been configured, you can add RAM or virtual CPUs to the VM when it is powered on. Figure 9.28 shows a powered-on VM that has memory hot-add enabled. Figure 9.29 shows a powered-on VM that has CPU hot-plug enabled; you can change the number of virtual CPU sockets, but you can't change the number of cores per virtual CPU socket.

FIGURE 9.28 The ability to add memory to a VM that is already powered on is restricted to VMs with memory hot-add enabled.

FIGURE 9.29 With CPU hot-plug enabled, more virtual CPU sockets can be configured, but the number of cores per CPU cannot be altered.

Once these features are enabled, you can use the same procedure to add hardware as you would when a VM is turned off. Keep in mind (and test) that even when the guest OS supports adding hardware on the fly, the applications running on it may not. An application that sizes itself against the available resources only at startup won't benefit from hot-added CPUs or RAM until it is stopped and restarted.

Aside from the changes described so far, configuration changes to a VM can take place only when the VM is in a powered-off state. When a VM is powered off, all of the various configuration options are available to change: RAM, virtual CPUs, or adding or removing other hardware components such as CD/DVD drives or floppy drives.
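The rules in this section for adding hardware can be condensed into a single check. The following Python sketch encodes only what the text states. The function name and the change identifiers are made up for illustration, and the model ignores the other powered-on changes mentioned earlier, such as mounting ISO images or reassigning networks.

```python
# Devices the text lists as hot-addable; identifiers are invented for this sketch.
HOT_ADD_DEVICES = {
    "usb_controller",
    "sata_controller",
    "ethernet_adapter",
    "hard_disk",
    "scsi_device",
}


def change_allowed(change, powered_on, cpu_hot_add=False, mem_hot_add=False):
    """Return True if the hardware change can be made in the current power state."""
    if not powered_on:
        return True                 # everything is configurable when powered off
    if change in HOT_ADD_DEVICES:
        return True
    if change == "cpu_sockets":
        return cpu_hot_add          # needs CPU hot-add enabled beforehand
    if change == "memory":
        return mem_hot_add          # needs memory hot-add enabled beforehand
    return False                    # e.g. cores per socket, the hot-add settings


print(change_allowed("memory", powered_on=True))                    # False
print(change_allowed("memory", powered_on=True, mem_hot_add=True))  # True
print(change_allowed("cores_per_socket", powered_on=True,
                     cpu_hot_add=True))                             # False
```

Note that enabling the hot-add settings themselves falls into the last branch, which mirrors the ironic twist described earlier: the VM has to be powered off to turn the feature on.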

As you can see, running your operating system in a VM offers advantages when it comes time to reconfigure hardware, even enabling such innovative features as CPU hot-plug. There are other advantages to using VMs too; one of these advantages is a vSphere feature called snapshots.

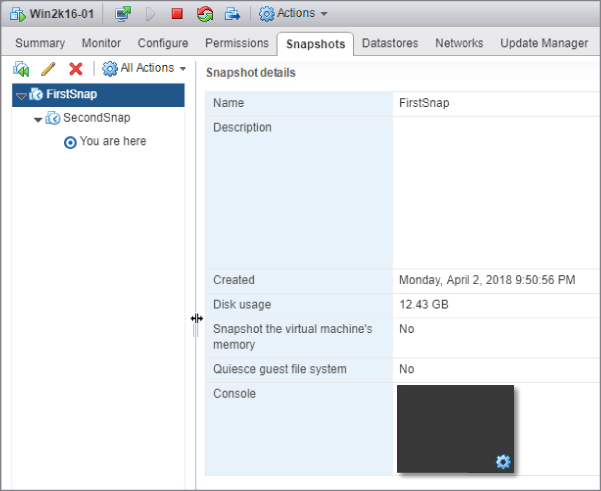

Using Virtual Machine Snapshots

VM snapshots allow administrators to create point-in-time checkpoints of a VM. The snapshot captures the state of the VM at a specific point in time. Administrators can then revert the VM to its pre-snapshot state if the changes made since the snapshot should be discarded. Or, if the changes should be preserved, the administrator can commit the changes and delete the snapshot.

This functionality can be used in a variety of ways. Suppose you'd like to install the latest vendor-supplied patch for the guest OS instance running in a VM but you want to be able to recover in case the patch installation runs amok. By taking a snapshot before installing the patch, you can revert to the snapshot in the event the patch installation doesn't go well. You've just created a safety net for yourself. Keep in mind that snapshots do not affect RDM virtual hard disks or in-guest mounted iSCSI or NFS file systems. Also remember that snapshots are made on a per-VM basis. If you have a multitier application that is spread across multiple virtual machines, you may encounter application inconsistencies when reverting snapshots.

Earlier versions of vSphere did not allow Storage vMotions to occur when a snapshot was present, but this limitation was removed in vSphere 5.

Perform the following steps to create a snapshot of a VM:

- Use the vSphere Web Client to connect to a vCenter Server instance or use the vSphere Host Client to connect to an individual ESXi host.

- Navigate to either the Inventory Trees or VMs And Templates inventory view.

- Right-click the VM in the inventory tree and select Snapshots ⇒ Take Snapshot.

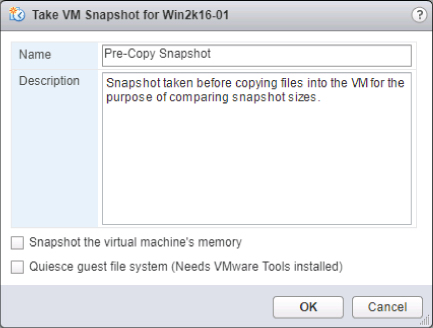

- Provide a name and description for the snapshot, as shown in Figure 9.30, and then click OK.

FIGURE 9.30 Providing names and descriptions for snapshots is an easy way to manage multiple historical snapshots.

As shown in Figure 9.30, there are two options when taking snapshots:

- The option Snapshot The Virtual Machine's Memory specifies whether the RAM of the VM should also be included in the snapshot. When this option is selected, the current contents of the VM's RAM are written to a file ending in a .vmsn filename extension.

- The option Quiesce Guest File System (Needs VMware Tools Installed) controls whether the guest file system will be quiesced (or quieted) so that it is considered consistent. A special command is sent to the OS to flush all buffers and commit them to disk instead of holding them in memory. This can help ensure that the OS and application data within the guest file system is intact in the snapshot.

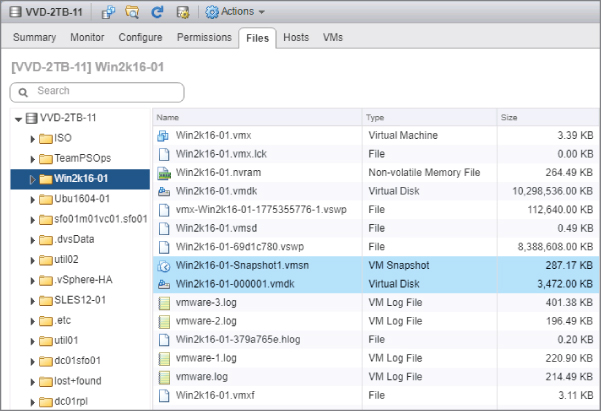

When a snapshot is taken, depending on the previous options, some additional files are created on the datastore, as shown in Figure 9.31.

FIGURE 9.31 When a snapshot is taken, some additional files are created on the VM's datastore.

It is a common misconception for administrators to think of snapshots as full copies of VM files. As you can clearly see in Figure 9.31, a snapshot is not a full copy of a VM. VMware's snapshot technology consumes minimal space while still letting you revert to a previous snapshot, because it allocates only enough space to store the changes rather than making a full copy.
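The change-only behavior can be pictured with a toy copy-on-write model. The Python sketch below is an illustration under simplifying assumptions: it tracks blocks in dictionaries rather than delta disk files, it handles only a linear chain of snapshots, and the class and method names are hypothetical.

```python
class SnapshotDisk:
    """Toy copy-on-write disk: layers[0] is the base disk, the rest are deltas."""

    def __init__(self):
        self.layers = [{}]          # each layer maps block number -> data

    def write(self, block, data):
        self.layers[-1][block] = data        # writes always land in the top layer

    def read(self, block):
        for layer in reversed(self.layers):  # the newest layer wins
            if block in layer:
                return layer[block]
        return None

    def take_snapshot(self):
        self.layers.append({})      # freeze existing layers, start an empty delta

    def revert(self):
        if len(self.layers) > 1:
            self.layers[-1] = {}    # discard changes made since the last snapshot

    def delete_all_snapshots(self):
        merged = {}
        for layer in self.layers:   # commit deltas into the base, oldest first
            merged.update(layer)
        self.layers = [merged]


disk = SnapshotDisk()
disk.write(0, "guest OS")
disk.take_snapshot()
disk.write(1, "vendor patch")
print(len(disk.layers[-1]))   # 1: the delta holds only the changed block
disk.revert()
print(disk.read(1))           # None: the patch is gone
print(disk.read(0))           # guest OS
```

The delta layer grows only as blocks change after the snapshot, which is why a fresh snapshot takes up so little space. Deleting the snapshots commits those changes into the base layer instead of discarding them.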

To demonstrate snapshot technology and illustrate its behavior (for practice only), we performed the following steps:

- We created a VM with a default installation of Windows Server 2016 with a single hard drive (recognized by the guest OS as drive C:). The virtual hard drive was thin provisioned on a VMFS volume with a maximum size of 40 GB.

- We took a snapshot named FirstSnap.

- We added approximately 3 GB of data to drive C:, represented as WIN2K16-01.vmdk.

- We took a second snapshot named SecondSnap.

- Once again, we added approximately 3 GB of data to drive C:, represented as WIN2K16-01.vmdk.

Review Table 9.1 for the results we recorded after each step. Note that these results were recorded as part of our example and may differ from your results if you perform a similar test.

TABLE 9.1: Snapshot demonstration results

| | VMDK SIZE | NTFS SIZE | NTFS FREE SPACE |
| --- | --- | --- | --- |
| Start (pre-first snapshot) | | | |
| WIN2K16-01.vmdk (C:) | 8.6 GB | 40 GB | 31 GB |