20 Stage 2: Mitigation

Ignorance remains bliss only so long as it is ignorance; as soon as one learns one is ignorant, one begins to want not to be so. The desire to know, when you realize you do not know, is universal and probably irresistible.

—Charles Van Doren

In this chapter, I describe the practices that characterize the mitigation stage of risk management evolution. An introduction to risk concepts occurs at the mitigation stage. Contact is made with individuals from other organizations, who share their experiences in risk management. Through this initial contact comes an awareness of risks and the realization that what we do not know may hurt us. People’s knowledge of risk management is limited. They seek independent expertise to help them get started managing risk. This is what we observe in the mitigation stage project. The project name is not given due to the first rule of risk assessment: Do not attribute any information to an individual or project group. In accordance with this rule, I use job categories (instead of individual names) to discuss the people in the project. The people and practices of the project are authentic.

This chapter answers the following questions:

What are the practices that characterize the mitigation stage?

What is the primary project activity to achieve the mitigation stage?

What can we observe at the mitigation stage?

20.1 Mitigation Project Overview

The project is a $10 million defense system upgrade that has a 21-month development schedule. Software (30 KLOC) is being developed in Ada on SUN Sparc10s using Verdix software tools. Custom hardware is being developed with embedded code. The project organization and the system concept of operation are described below.

20.1.1 Review the Project Organization

The project is staffed through a functional matrix organization, with a functional manager responsible for staffing, performance appraisal, training, and planning promotions. After assignment to the project, the staff take direction through delegation from the project manager. The project manager believes that having two bosses is a problem. From his perspective, there is no apparent incentive for functional management to make projects successful.1

1 The project manager speculates that the real incentive for functional management is to keep people off overhead.

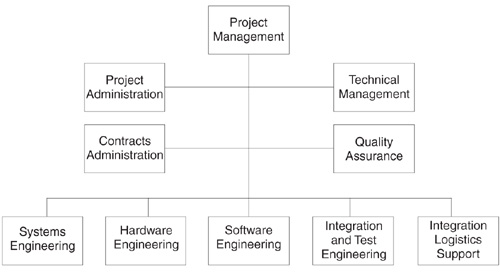

The organization chart in Figure 20.1 depicts the relationship among the project personnel. The relationship between systems and software engineering is a cooperative one. Systems engineering is responsible for the overall technical integrity, system concept, system analysis, requirements management, functional allocation, interface definition, and test and evaluation of the system. Software engineering participates in definition and allocation of system-level requirements, project planning, and code estimation. It is led by a chief software engineer, who is responsible for overseeing software production, managing software requirements and resources, specialized training, and development tools. Quality assurance works with the project personnel but has an independent reporting chain. Test engineers control the formal baseline with support from software engineers until system sell-off.

Contractually, the project’s organization is the prime contractor, who is responsible for requirements analysis through development and sell-off. A subcontractor will train customer personnel and perform operations and maintenance. A preferred list of vendors is used for other procurements to promote a cooperative supplier relationship.

Figure 20.1 Project organization chart. The project personnel worked within a matrix management division of a Fortune 500 company.

20.1.2 Review the System Concept of Operation

The latest technology is being used to replace the 1970s-vintage command processor. The project will replace existing equipment, increase system performance, and condense existing hardware by a factor of seven. Important system-level requirements have been derived from over 30 existing specifications, both hardware and software. The concept of operation is that embedded software will control all system functionality. The majority of system requirements map to software functionality. There are key performance requirements for command and authentication processing. The high-technology system does not have much of a human-machine interface. The system’s primary function is to process digital data for the next 10 to 15 years. It must also have the capability for future expansion to the next-generation system.

20.2 Risk Assessment Preparation

An organization standard procedure requires the identification of risk and problem analysis and corrective actions as a component of monthly project reviews. The operating instruction does not require the use of a particular method to identify and analyze risk. Through the organization’s process improvement activities, a new policy was written that requires a risk assessment at the beginning of a project. The project team began preparation for their risk assessment two months after contract award. The key activities to prepare for a risk assessment are the following:

Obtain commitment from top management.

Coordinate risk assessment logistics.

Communicate assessment benefits to the project participants.

20.2.1 Obtain Executive Commitment

Risk management is about the future of present decisions [Charette89]. The project made the decision to conduct a risk assessment during an executive briefing that communicated the benefits of such an assessment. Individuals from the SEI, whose work involves developing, evaluating, and transitioning risk management methods, made the presentation. SEI representatives explained the concepts of software risk, risk assessment, and risk management to the project manager and the organization’s engineering director. The decision by the engineering director and the project manager to participate as a pilot project reflects a commitment to provide staff time for the assessment. The signed risk assessment agreement provides the foundation for future collaboration with the SEI.

20.2.2 Coordinate the Risk Assessment Logistics

After the managers signed up for the risk assessment, the task of coordinating logistics was delegated. Assessment team members were selected and team roles and responsibilities allocated. A site coordinator was chosen to select and invite the participants2 and to schedule the required facilities. The site coordinator used email to communicate with the participants regarding the risk assessment schedule.

2 Approximately 25 percent of the project personnel were invited to participate in the interview sessions.

20.2.3 Brief the Project Participants

Before the risk assessment, a participants’ briefing was held to provide information and answer questions on the risk assessment activities. The risk assessment objectives—to identify software risks and train mechanisms for risk identification—and benefits—to prevent surprises, make informed decisions, and focus on building the right product—were explained. The risk assessment principles were emphasized:

Commitment. Demonstrate commitment by signing the risk assessment agreement.

Confidentiality. Emphasize confidentiality by making a promise of no attribution to individuals.

Communication. Encourage communication of risks during the risk assessment.

Consensus. Show teamwork through the consensus process that the risk assessment team operates by.

20.3 Risk Assessment Training

To conduct the risk assessment as professionals, assessment team members spent two days in training at the SEI.3 (The risk assessment training material was that used by the SEI in 1993, which was revised as Software Risk Evaluation [Sisti94].) The following major activities were used to train assessment team members:

3 Just-in-time training is best. Team training occurred two weeks before the risk assessment.

Review the project profile.

Learn risk management concepts.

Practice risk assessment methods.

20.3.1 Prepare the Assessment Team Members

Expectations for the risk assessment training included understanding the techniques used, learning how risk assessment fits into the risk management paradigm [VanScoy92], and determining the value of risk assessment to the business bottom line. A team-building exercise was used to interview and then to introduce the assessment team members.

The project profile was presented to ensure that assessment team members understood the project context. The project overview covered the operational view of the product, how the project was structured, contractual relationships, organization roles, software staff, software characteristics, and project history. The project profile was used to set the context and vocabulary for communicating with the project team during the risk assessment.

20.3.2 Learn the SEI Taxonomy Method

A taxonomy is a scheme that partitions a body of knowledge and defines the relationships among the pieces. It is used for classifying and understanding the body of knowledge. A software development risk taxonomy was developed at the SEI to facilitate the systematic and repeatable identification of risks [Carr93]. The SEI taxonomy maps the characteristics of software development into three levels, thereby describing areas of software development risk. The risk identification method uses a taxonomy-based questionnaire to elicit risks in each taxonomic group. Risk areas are systematically addressed using the SEI taxonomy as a risk checklist. An interview protocol is used to interview project participants in peer groups. Peer groups create a nonjudgmental and nonattributive environment, so that tentative or controversial views can be heard.

20.3.3 Practice the Interview Session

With an understanding of the SEI taxonomy method, the risk assessment team practiced for an interview session by acting out each of the required roles:

Interviewer. The interviewer conducts the group discussion using an interview protocol in a question-and-answer format.

Interviewee. The interviewees (project participants) identify risks in response to questions posed by the interviewer.

Recorder. The recorder documents the risk statements and results of the preliminary risk analysis.

Observer. The observer notes details of the discussion, the group dynamics, and overall process effectiveness. The observer monitors the time to ensure the meeting ends on schedule.

20.4 Project Risk Assessment

From the risk assessment team’s perspective, there was a tremendous amount of information to collect, correlate, and synthesize in a limited amount of time. From the project participants’ perspective, this would be a chance to say what they thought, to hear what other people thought, and to discuss upcoming project issues. The major activities to perform the risk assessment are the following:

Conduct the interview sessions.

Filter the identified risks.

Report the assessment findings.

Obtain assessment team feedback.

Obtain project management feedback.

20.4.1 Conduct the Interview Sessions

Three interview sessions were conducted that lasted three hours each, with between two and five project participants per interview session. Project participants were interviewed in peer groups with no reporting relationships. They considered risks that applied to the project as a whole, not just their own individual tasks. The first interview group consisted of project managers, the second of task leaders, and the third of engineering personnel.

The project managers identified twice as many project risks as either process or product risks. The task leaders identified more product risks than project risks by a factor of 10. The engineering personnel identified the same number of product risks as process risks. These results are not a coincidence; they follow in part from the design of the SEI taxonomy method [Carr93].

Each interview session began with a set of questions structured according to a different taxonomy class:

Product engineering, the intellectual and physical aspects of producing a product. In the interview session, task leaders began by responding to questions from this class of the taxonomy.

Development environment, the process and tools used to engineer a software product and the work environment. In the interview session, engineering personnel began by responding to questions from this class of the taxonomy.

Program constraints, the programmatic risk factors that restrict, limit, or regulate the activities of producing a product. In the interview session, project managers began by responding to questions from this class of the taxonomy.

20.4.2 Filter the Identified Risks

On average, over six issues were identified by each participant. For each identified risk on the candidate risk list, interviewees were asked to evaluate the risk according to four criteria: risk consequence, likelihood, time frame, and locus of control. These criteria determine whether the issue is documented as a risk. The risk evaluation is determined by a series of yes-no questions:

Consequence. Does this risk have a significant consequence? Will the outcome affect performance, function, or quality?

Likelihood. Is this risk likely to happen? Have you seen this occur in other circumstances? Are there conditions and circumstances that make this risk more likely?

Time frame. Is the time frame for this risk near term? Does this require a long lead-time solution? Must we act soon?

Locus of control. Is this risk within the project control? Does it require a technical solution? Does it require project management action?
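The yes-no evaluation lends itself to a simple tabulation. The following Python fragment is a minimal sketch of how the answers for a group of candidate risks might be summarized as the percentage answered "yes" for each criterion. The names `RiskEvaluation` and `percent_yes` and the seven sample issues are hypothetical; they are assumptions made for illustration, not part of the SEI method or the project's data.

```python
from dataclasses import dataclass

# The four filter criteria described in the text.
CRITERIA = ("consequence", "likelihood", "time_frame", "locus_of_control")


@dataclass(frozen=True)
class RiskEvaluation:
    """Yes-no answers to the four filter questions for one candidate risk."""
    statement: str
    consequence: bool
    likelihood: bool
    time_frame: bool
    locus_of_control: bool


def percent_yes(evaluations):
    """Return, per criterion, the rounded percentage of risks answered 'yes'."""
    total = len(evaluations)
    if total == 0:
        raise ValueError("at least one evaluation is required")
    return {
        name: round(100 * sum(getattr(e, name) for e in evaluations) / total)
        for name in CRITERIA
    }


# Hypothetical answers for seven candidate risks (illustrative only).
evaluations = [
    RiskEvaluation("issue A", True, True, True, True),
    RiskEvaluation("issue B", True, False, False, True),
    RiskEvaluation("issue C", True, False, False, True),
    RiskEvaluation("issue D", True, False, False, True),
    RiskEvaluation("issue E", True, False, False, False),
    RiskEvaluation("issue F", True, False, False, False),
    RiskEvaluation("issue G", False, False, False, False),
]

summary = percent_yes(evaluations)
for name in CRITERIA:
    print(f"{name}: {summary[name]} percent")
```

Sorting a list of such evaluations by any one criterion is then a one-line operation, which is one way the consolidated responses could be ordered for discussion.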

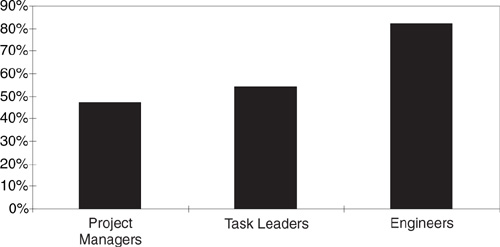

Responses were consolidated from all interview sessions and results tabulated by sorting the risk evaluation criteria, which helps to prioritize the issues. A significant difference was observed in response to the question regarding the project locus of control. Figure 20.2 shows this disparate perception of control among the interview sessions. The following risks were evaluated as outside the project’s locus of control:

Project managers. This group believed that 47 percent of their identified risks were in the project’s control. They evaluated staff expertise, technology, and customer issues (e.g., government-furnished equipment) as outside the project locus of control.

Figure 20.2 Perception of project control. Of the risks they identified, project managers evaluated 47.1 percent in the project’s control, task leaders 54.5 percent, and engineers 82.3 percent. Engineers were more likely to perceive that risks were in the project’s control, because there were two levels of management above them that could resolve the issues. The issues facing project managers (the project externals) were less likely to be perceived as in their control. If project managers included two levels of management above them (i.e., functional management and the customer), they would have a higher percentage of control over their project.

Task leaders. This group believed that 55 percent of their identified risks were in the project’s control. They evaluated a new development environment and process, customer closure on requirement definitions, and the inefficient interface with functional management as outside the project locus of control.

Engineering personnel. This group believed that 82 percent of their identified risks were within the project control. They evaluated vendor-supplied hardware, limitations of time, deliverable formats, acceptance criteria, and potential morale problems due to layoffs as outside the project locus of control.

The risks were classified according to the SEI taxonomy. Sorting the risk by the taxonomy classification provided a grouping of issues raised in a specific area. Grouping similar risks identified by all the interview groups helped to summarize the findings and prepare the results briefing.
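Grouping by taxonomy class can be sketched in a few lines of Python. The three class names come from the interview sessions described earlier; the function name `group_by_class`, the sample risk statements, and the class assigned to each statement are assumptions made for illustration and do not reproduce the assessment team's actual classification.

```python
from collections import defaultdict

# The three taxonomy classes named in the interview sessions.
TAXONOMY_CLASSES = (
    "Product engineering",
    "Development environment",
    "Program constraints",
)


def group_by_class(risks):
    """Group (statement, taxonomy_class) pairs by class, in taxonomy order."""
    groups = defaultdict(list)
    for statement, taxonomy_class in risks:
        if taxonomy_class not in TAXONOMY_CLASSES:
            raise ValueError(f"unknown taxonomy class: {taxonomy_class}")
        groups[taxonomy_class].append(statement)
    # Emit only the classes that received at least one risk.
    return {name: groups[name] for name in TAXONOMY_CLASSES if name in groups}


# Hypothetical classification of a few risk statements (illustrative only).
risks = [
    ("Incomplete requirements", "Product engineering"),
    ("New development environment and process", "Development environment"),
    ("Vendor-supplied hardware", "Program constraints"),
    ("Unclear acceptance criteria", "Product engineering"),
]

grouped = group_by_class(risks)
for taxonomy_class, statements in grouped.items():
    print(f"{taxonomy_class}: {len(statements)} risk(s)")
```

Keeping the groups in taxonomy order means that risks raised by different interview groups in the same area appear side by side, which is the property the summary of findings relies on.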

20.4.3 Report the Assessment Findings

A summary of the risk assessment activities and results was presented to the project team. Findings were summarized in ten briefing slides, each containing the topic area at risk, several risk issues with specific supporting examples, and consequences to the project. There was surprise from the engineering director, who did not expect to hear about so many risks so soon after contract award. The tone of the meeting was mostly somber. No positive comments or solutions were presented. The project manager was assured there would be a point of contact for the project to continue risk management of the baselined risks.

20.4.4 Obtain Assessment Team Feedback

A meeting was held with the risk assessment team to provide feedback on the assessment experience. Observations of what worked were discussed and documented:

Cooperative project management. There was value added by interviewing the project manager and the functional manager together. For example, the project manager learned that he could obtain a waiver for organizational standard processes that did not suit the project. The functional manager became sensitive to the staff skill mix required by the project.

Peer group synergy. The reason to interview peer groups is that they share common concerns and communicate at the same level in the project hierarchy. People can empathize better with others if they know what the others’ concerns are.

Earlier is better. A direct relationship exists between the time assigned to a task and the attribution associated with an individual responsible for the task. Project teams should perform a risk assessment as soon as possible to avoid retribution to the individual team members.4

4 Avoiding retribution is not necessary in advanced stages of risk management evolution.

Observations of what did not work were also discussed and documented:

Required solutions. Most engineers and managers felt competent when they were solving problems. The presentation of risks without a corresponding solution made the project team feel uncomfortable.

Negative results. Presenting risks without a plan in place to address the critical risks left the project team with a negative feeling. Positive comments can make the project team feel good about taking the first step to resolving risks. An announcement should be made at the risk assessment results briefing about a meeting to move the results forward into risk management.

Abandoning risks. Everyone should resist the temptation to address an easy risk first and abandon a difficult risk because it was not in the perceived locus of control. The team could expand its locus of control by including the functional management and the customer as part of the project team.

20.4.5 Obtain Project Management Feedback

Three months after the risk assessment, the SEI representative returned to facilitate a discussion on the value of the risk assessment to the project and to the organization. The most important points the project manager made are summarized below:

The customer’s reaction was positive. The customer reaction to reviewing assessed risks at the system requirements review was positive. The customer wanted to keep the issues out in front, to avoid surprises, and to work toward the same set of goals.

There is no reward for reducing risk. Customers were sensitive to the consequences for the risks presented, but the customers themselves did not receive a grade on decreasing risk.

Risks are programmatic in nature. The top 16 risks had little to do with software. The risk assessment identified risk projectwide.

Take the time to do risk management. Within the organization, there needs to be pressure to take the time to do risk management. Otherwise, the problems at hand, meetings, and tomorrow take precedence.

The most important points made by the organization assessment team members are summarized below:

Collaboration saved time and money. Collaborative risk assessment was of great benefit to learning and leveraging existing methods to improve the risk management process [Ulrich93].

Proposals need risk assessment. Proposal teams should use the risk assessment method during the proposal phase. Early identification means there is more time to work the issues.

Risk assessment is the first step. Lack of follow-through on risk items gives a bad name to volunteering risk items. Risk assessment is not sufficient to resolve risk.

20.5 Project Risk Management

The independent risk assessment paved the way for the project to continue risk management. As a result of the risk assessment activities, the project possessed the following assets:

Senior management commitment.

A baseline of identified risks.

The nucleus of a risk-aware project team.

From the organizational perspective, there were several opportunities that existed after the risk assessment:

Support the project in managing identified risks.

Develop and refine methods of systematic risk management.

Share lessons learned throughout the organization.

20.5.1 Pilot the Risk Management Process

The task leaders began to present the top risks at project reviews. They decided to address risks every other week at their top-level staff meeting on Monday morning for half an hour. Every month at their all-hands meeting, risks were reviewed with the entire project team. For these meetings, an independent facilitator from the software engineering process group (SEPG) led the group discussion on risks. The project manager later gave testimonial to the value of the facilitator role: “A facilitator was invaluable. The facilitator gives a common, consistent approach. It served as a forcing function. The project would not have otherwise done it.”

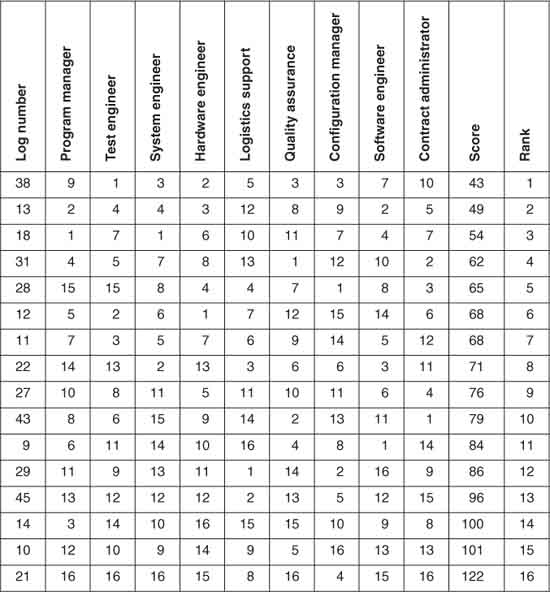

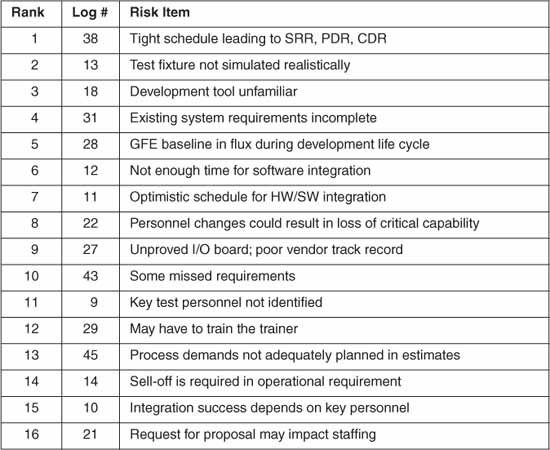

Prioritization of the risks occurred using the nominal group technique [Brassard94], a consensus approach that involved the project manager and task leaders. Each person ranked all the critical risks from 1 to N. As shown in Table 20.1, the results of the individual risk scores showed unilateral disagreement. Risk action plans were informal: discussed but not documented.

Table 20.1 Ranking of Identified Risks

20.5.2 Sign the Technical Collaboration Agreement

Six months after the follow-up visit with the SEI representative, a technical collaboration agreement (TCA) was signed with the SEI. The focus of the collaboration was to participate in the development and deployment of the self-administered risk assessment process. The organization had learned many useful techniques and had leveraged the SEI material through reuse on over ten projects and proposals. High marks were received on the risk section of one winning proposal, to the tune of over $100 million in new business. Software risk management was a niche capability that appeared to have competitive advantage.

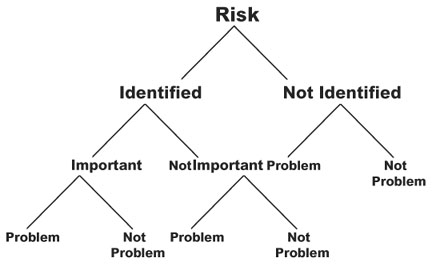

Figure 20.3 A risk may turn into a problem. Risk exists regardless of whether anyone identifies it. A risk consequence does not depend on an assessment of its importance. Only the action taken to resolve risk may prevent a risk from becoming a problem.

20.6 Project Risk Retrospective

One year after the risk assessment, the project manager presented the project lessons learned. The project was in the hardware-software integration and testing phase—behind schedule, but making good progress. There were no known major technical issues but several minor issues. The project manager said they had stopped performing risk management when they reached the test phase because the remaining risks had already turned into problems. How did some of the project risks turn into problems? As shown in Figure 20.3, there are three distinct paths a risk can take to end up as a problem:

Identify a high risk and do nothing about it.

Identify a low risk and later it changes into a high risk.

Do not identify a risk and later it becomes a problem.

20.6.1 Identify Risk as Important

Risks that are identified and assessed as important have the greatest chance of being resolved. Unless risk assessment (see Table 20.2) is followed up with risk control, it would be naive to think that simply acknowledging a risk’s importance would be sufficient to prevent it from becoming a problem.

The risk of building the wrong product was identified as important due to incomplete requirements. Several statements were captured during the risk assessment that supported this as a legitimate issue. Some requirements were missed during the proposal phase. Existing system requirements were incomplete due to inadequate documentation, interface documents that were not approved, and inaccessible domain experts. To compound the issue, requirements for software reliability analysis, data formats, system flexibility, and acceptance criteria were unclear. Each risk was evaluated as having a significant impact that was likely to occur. The combined evaluation for these issues was 100 percent impact, 100 percent likelihood, 83 percent time frame, and 100 percent locus of control.

Requirements risk was prioritized by the project as incomplete requirements (ranked 4) and unclear requirements (ranked 10). These risks were addressed, and the priority was later reassessed as incomplete requirements (ranked 7) and unclear requirements (ranked 16). Both risk issues decreased in relative importance because the project perceived that progress had been made in resolving them. The consequence that had been described in the risk assessment findings briefing never materialized. The project manager reported that some requirements baseline design concepts were not solidified by the preliminary design review and that some requirements documentation was developed out of the preferred order, but that this problem was largely due to staffing issues.

20.6.2 Identify Risk as Not Important

Risks that are identified and assessed as not important may change in significance over time. The dynamic nature of risk does not depend on an assessment of its importance. Our assumption that we have correctly assessed a risk merely adds to the risk that a significant risk will be overlooked. Risks with significant consequences may not appear important when the likelihood is low or the time frame is later.

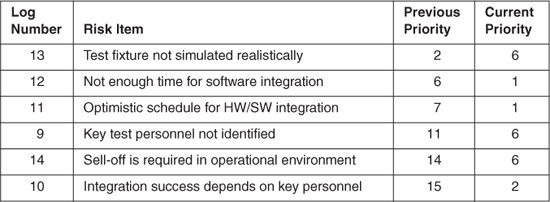

Such was the case with the seven issues identified in test and integration during the project risk assessment. The combined evaluation for these issues was 86 percent impact, 14 percent likelihood, 14 percent time frame, and 57 percent locus of control. Six of the seven issues were presented at the results briefing. Two months later, the project manager and the task leaders reviewed the baseline risks at a staff meeting. One risk was accepted when the group reached consensus that it could live with the risk consequence, and the other six were reevaluated. The reevaluation raised the importance given to these six issues to 100 percent impact, 17 percent likelihood, 50 percent time frame, and 100 percent locus of control. Two weeks later, the project manager and eight task leaders ranked each risk using a nominal group technique. Priority was determined by risk score, a summation of all ratings from 1 to N. There was no correlation in these ratings. The project manager mentioned that the risk scores showed unilateral disagreement about the different concerns in the project.
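The arithmetic in this paragraph can be sketched in a few lines of Python. The text does not say how the combined evaluation was calculated; the sketch assumes it is the share of issues rated significant on each attribute, which reproduces the reported figures (for example, 6 of 7 issues is 86 percent). The per-issue flags, the function names, and the three sample raters are hypothetical. The risk score follows the description given: each rater orders the risks from 1 to N, and the ranks are summed, with the lowest total assumed to mean the highest priority.

```python
ATTRIBUTES = ("impact", "likelihood", "time_frame", "locus_of_control")


def make_issues(n, counts):
    """Build n hypothetical issues; counts[a] of them are flagged significant on attribute a."""
    return [{a: idx < counts[a] for a in ATTRIBUTES} for idx in range(n)]


def combined_evaluation(issues):
    """Assumed rule: percent of issues rated significant on each attribute."""
    n = len(issues)
    return {a: round(100 * sum(1 for i in issues if i[a]) / n) for a in ATTRIBUTES}


def rank_sum(rankings):
    """Nominal group scoring: sum each risk's rank (1 = most important) across raters."""
    scores = {}
    for ordering in rankings:
        for rank, risk in enumerate(ordering, start=1):
            scores[risk] = scores.get(risk, 0) + rank
    # Lowest total first (assumed to be the highest priority).
    return sorted(scores.items(), key=lambda kv: kv[1])


# Seven baseline issues, then the six that were reevaluated (flag counts are hypothetical).
baseline = make_issues(7, {"impact": 6, "likelihood": 1, "time_frame": 1, "locus_of_control": 4})
reevaluated = make_issues(6, {"impact": 6, "likelihood": 1, "time_frame": 3, "locus_of_control": 6})

# Three hypothetical raters ordering three risks; the project had nine raters.
priorities = rank_sum([["A", "B", "C"], ["B", "A", "C"], ["C", "A", "B"]])

print(combined_evaluation(baseline))
print(combined_evaluation(reevaluated))
print(priorities)
```

When raters disagree as much as the three in this sample do, the totals cluster together and the resulting order says little, which is consistent with the lack of correlation the project observed.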

The next month, the quality assurance specialist issued a phase readiness review memo to the project personnel to report on the readiness to proceed into the detailed design phase. The project manager, chief system engineer, chief software engineer, and chief hardware engineer were interviewed. There were some concerns expressed about not having a full-time test engineer on the project. This was not viewed as an immediate risk, but the recommendation was that it be studied. The six test and integration issues were monitored over six months. As Table 20.3 shows, most of the issues had increased in their relative priority since the initial risk ranking. Over time, these issues were assigned to various individuals, combined as similar issues, and changed from test issues to issues of schedule and staff.

The project manager noted that they had underestimated the need for a software test engineer. Problems occurred as a result (e.g., conflicts over limited test bed resources, and a compressed site installation schedule). A $500,000 per month spend rate would overrun the project if they were unable to close on the schedule and destaff the project. The bottom line is that risks, even when they are identified early, may become problems when they are not viewed as important enough to resolve.
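To put the spend rate in proportion, the following minimal sketch relates a schedule slip to the $10 million project value given in the project overview. It assumes the $500,000 monthly spend rate stays constant for every month of slip, which the text does not state; the function names and the three-month slip are hypothetical.

```python
PROJECT_VALUE = 10_000_000  # dollars, from the project overview
MONTHLY_SPEND = 500_000     # dollars per month, from the project manager's remark


def slip_cost(months_late, monthly_spend=MONTHLY_SPEND):
    """Added cost of a schedule slip, assuming a constant spend rate."""
    return months_late * monthly_spend


def slip_cost_percent(months_late):
    """Slip cost expressed as a percentage of the project value."""
    return 100 * slip_cost(months_late) / PROJECT_VALUE


# A hypothetical three-month slip.
print(slip_cost(3), slip_cost_percent(3))
```

Under this assumption, each month of delay without destaffing costs 5 percent of the project value.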

Table 20.2 List of Assessed Risks

Table 20.3 Priority Changes of Test and Integration Issues

20.6.3 Do Not Identify Risk

Risks that are not identified have the greatest chance of becoming problems. The project experienced problems in an area the participants had not been concerned about: hardware. The team was still struggling to finalize mechanical product design and drafting well beyond the critical design review, and it had difficulty completing and debugging the custom circuit card design. Staffing was a probable cause of the problems experienced in hardware. The hardware task leader arrived late to the team, was pulled away part-time to a previous job, and did not have experience in coordinating manufacturing, material, product design, and drafting. The result was that the project lost focus in hardware design, and when other functions tried to help, their areas got into trouble. A vicious cycle of replanning and workarounds ensued. Perhaps the problems the project experienced in hardware could have been minimized if they had been identified early as risks and perceived to be important.

20.7 Summary and Conclusions

In this chapter, I described the practices that characterize the mitigation stage of risk management evolution. These practices include risk identification with subjective and superficial risk evaluation. A risk list is prioritized and updated infrequently. Risks are assigned to either the project manager or the task leaders. There is no evidence of documented risk action plans. The major activity is risk assessment, with little follow-through for risk control.

The primary project activity to achieve the mitigation stage is a risk assessment that is conducted early in the project’s life cycle. The risk assessment is the key to introducing risk management concepts at a time in the life cycle when no blame is assigned to individuals. The involvement of the entire project personnel is minimal, but they become more aware of the risks inherent in their plans and work.

At the mitigation stage, we observed the following:

A lack of volunteers. Identifying risk is a relatively new concept for most of the staff, and they are not very comfortable raising issues without a corresponding solution. One of the task leaders said during the risk assessment, “Participating in the risk process is risky. It is less risky to share risks with the customer than management.”

Improvement costs. You pay a price to learn a new process. There is a cost to develop the process, and another cost to tailor it to meet the needs of the project. There may be time spent resisting the process and trying to build a case against its use. You must invest the time to learn the process before you will see productivity increase.

Testimonials. After the risk assessment, the project manager said, “Risk assessment helps flush out potential problems early, so that action may be taken to avoid problems. It brings the staff together to work on project issues as a whole, rather than as individual concerns, which results in team building.” After the project development, the project manager said, “We have not learned our lessons, and continue to repeat history. We see the problems but do not have good solutions that work.”

20.8 Questions for Discussion

1. List four resources a manager should provide for a risk assessment. Discuss why a manager would provide these resources.

2. Do you think there are any disadvantages of using a software risk taxonomy to identify risks? Explain your answer.

3. Discuss three classes of software risk. How do these classes describe the different roles and responsibilities of people on software projects?

4. List ten general categories of software risk that you would expect to find early in the project. Would you expect that most projects that perform a risk assessment early in their life cycle have the same logical grouping of risk? Explain your answer.

5. Discuss three emotions you would feel if you were the project manager and the risk assessment findings had nothing positive to report.

6. Do you agree that interviewing people in peer groups is synergistic? Discuss why you do or do not agree.

7. Are all risks programmatic in nature? Discuss the differences between risk assessment results in the requirements phase versus the coding phase.

8. What are three opportunities that exist after a risk assessment? Describe your plan to create these opportunities.

9. List five barriers to risk control. Discuss a contingency plan for each barrier.

10. What is the difference between ignorance and awareness? Explain your answer.

20.9 References

[Brassard94] Brassard M, Ritter D. The Memory Jogger II. Methuen, MA: GOAL/QPC, 1994.

[Carr93] Carr M, Konda S, Monarch I, Ulrich F, Walker C. Taxonomy-based risk identification. Technical report CMU/SEI-93-TR-6. Pittsburgh, PA: Software Engineering Institute, Carnegie Mellon University, 1993.

[Charette89] Charette R. Software Engineering Risk Analysis and Management. New York: McGraw-Hill, 1989.

[Sisti94] Sisti F, Joseph S. Software risk evaluation. Technical report CMU/SEI-94-TR-19. Pittsburgh, PA: Software Engineering Institute, Carnegie Mellon University, 1994.

[Ulrich93] Ulrich F, et al. Risk identification: Transition to action. Panel discussion. Proc. Software Engineering Symposium, Pittsburgh, PA: Software Engineering Institute, Carnegie Mellon University, August 1993.

[Van Doren91] Van Doren C. A History of Knowledge. New York: Ballantine, 1991.

[VanScoy92] VanScoy R. Software development risk: Problem or opportunity. Technical report CMU/SEI-92-TR-30. Pittsburgh, PA: Software Engineering Institute, Carnegie Mellon University, 1992.