ANN nodes are simplified (mathematical) versions of what is displayed in Figure 8.1. They gather numerical inputs, scale each one by a weight, sum the weighted inputs (frequently along with a constant, the bias), pass the sum through a given function, and output the result. That result could be the final output given by an output node, or it could be handed over to another node (and sometimes back to the very same node, in a recurrent setting). The following diagram illustrates the functioning of an ANN node:

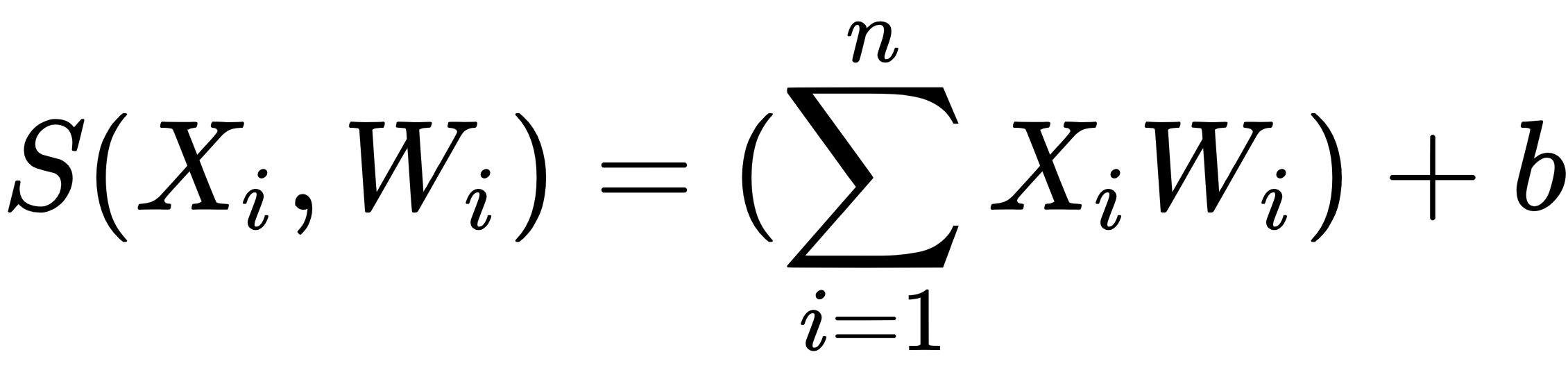

In the preceding diagram, the node receives three different inputs (X1, X2, and X3). Each is multiplied by its weight (W1, W2, and W3), and the products are summed. S(Xi,Wi) takes care of the sum, and this function can be expressed as follows:

$$S(X_i, W_i) = \sum_{i=1}^{3} X_i W_i + b$$

The letter b represents a bias; a few architectures won't have one. After everything is summed, the result goes through an activation function, which the diagram represents as the F(S) function inside the triangle. The result of that function is output as Yi, which can be handed to another node or serve as the final output.
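
To make the flow through a single node concrete, here is a minimal Python sketch of the weighted sum followed by an activation. The function names (`weighted_sum`, `sigmoid`, `node_output`), the sample values, and the choice of a sigmoid for F(S) are assumptions made for illustration; the diagram itself does not fix a particular activation function.

```python
import math


def weighted_sum(inputs, weights, bias=0.0):
    """S(Xi, Wi): each input times its weight, summed, plus the bias b."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    return sum(x * w for x, w in zip(inputs, weights)) + bias


def sigmoid(s):
    """One possible activation F(S); assumed here purely as an example."""
    return 1.0 / (1.0 + math.exp(-s))


def node_output(inputs, weights, bias=0.0, activation=sigmoid):
    """Y = F(S): apply the activation function to the weighted sum."""
    return activation(weighted_sum(inputs, weights, bias))


# Hypothetical values for X1..X3, W1..W3, and b.
x = [1.0, 2.0, 3.0]
w = [0.5, -1.0, 0.5]
b = 0.0

s = weighted_sum(x, w, b)  # 0.5 - 2.0 + 1.5 + 0.0
y = node_output(x, w, b)   # sigmoid applied to s
print(s, y)
```

With these made-up numbers the weighted inputs cancel out, so S is 0 and the sigmoid returns 0.5. Passing `bias=0.0` (the default) mimics an architecture without a bias term, and swapping the `activation` argument changes F(S) without touching the summation step.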

Activation functions play a very special role in NN design.