Wget is a part of the GNU project and is included in most of the major Linux distributions, including Kali Linux. It has the ability to recursively download a web page for offline browsing, including conversion of links and downloading of non-HTML files.

In this recipe, we will use Wget to download pages that are associated with an application in our vulnerable_vm.

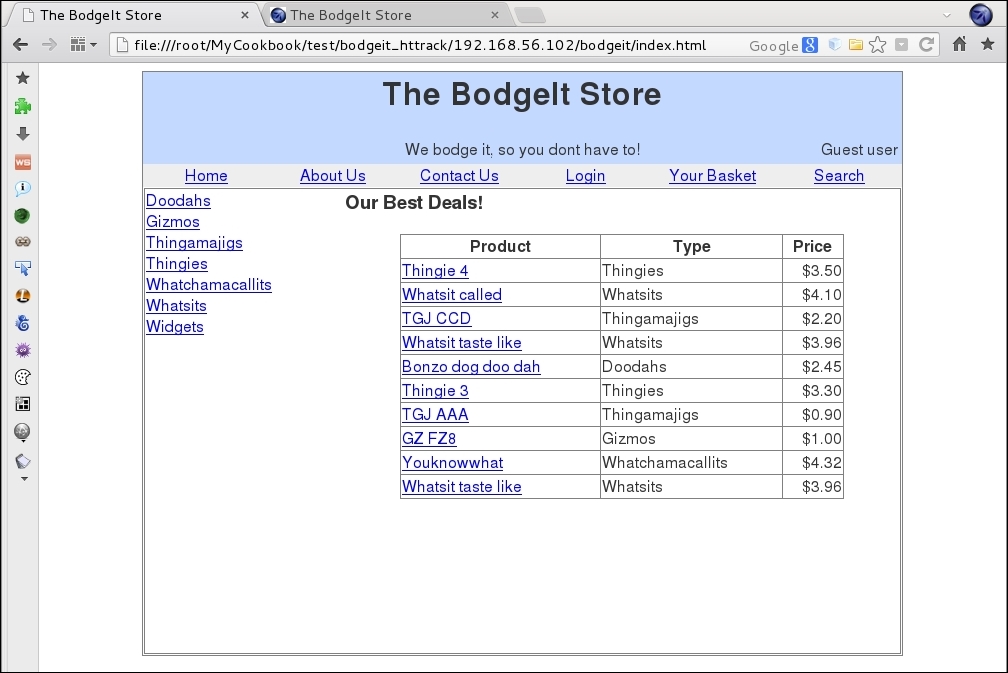

All recipes in this chapter require vulnerable_vm to be running. In this book's scenario, it has the IP address 192.168.56.102.

- Let's make the first attempt to download the page by calling Wget with a URL as the only parameter:

wget http://192.168.56.102/bodgeit/

As we can see, it only downloaded the index.html file to the current directory, which is the start page of the application.

- We will have to use some options to tell Wget to save all the downloaded files to a specific directory and to copy all the files contained in the URL that we set as the parameter. Let's first create a directory to save the files:

mkdir bodgeit_offline

- Now, we will recursively download all files in the application and save them in the corresponding directory:

wget -r -P bodgeit_offline/ http://192.168.56.102/bodgeit/

As mentioned earlier, Wget is a tool created to download HTTP content. With the -r parameter we made it act recursively; that is, it follows all the links in every page it downloads and downloads those pages too. The -P option sets the directory prefix, which is the directory under which Wget saves the downloaded content; by default, it is the current directory.
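To check where the recursive download ended up, the following sketch lists everything saved under the directory prefix. Note that list_mirror is a hypothetical helper name introduced here for illustration, not part of Wget, and that it assumes the bodgeit_offline directory used in this recipe. With Wget's default settings, a recursive download recreates the site's hierarchy under a directory named after the host, so the start page would be expected at bodgeit_offline/192.168.56.102/bodgeit/index.html.

```shell
# Hypothetical helper (not part of Wget): list every file saved under
# the directory prefix, as sorted paths relative to that prefix.
# Usage: list_mirror [DIR]   (DIR defaults to bodgeit_offline)
list_mirror() {
    prefix="${1:-bodgeit_offline}"
    # The subshell keeps the caller's working directory unchanged.
    ( cd "$prefix" && find . -type f | sort )
}
```

Calling list_mirror with no arguments after the download should print one path per saved file, which makes it easy to see how far the recursion went.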

There are some other useful options to be considered when using Wget:

- -l: When downloading recursively, it may be necessary to limit how deep Wget goes when following links. This option, followed by the number of levels we want to go to, sets that limit (the default is 5 levels).
- -k: After the files are downloaded, Wget rewrites the links in them to point to the corresponding local files, making it possible to browse the site locally.
- -p: This option makes Wget download all the files needed to display a page properly, such as images and stylesheets. Requisites hosted on other sites are fetched only when host spanning (-H) is also enabled.
- -w: This option makes Wget wait the specified number of seconds between one download and the next. It is useful when the server has a mechanism to detect or block automated browsing.
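The options above can be combined with -r and -P in a single call. The following is a minimal sketch, not a definitive recipe: mirror_site is a hypothetical wrapper name, and the default depth of 2 and wait of 1 second are assumptions chosen for illustration, not values taken from this recipe.

```shell
# Hypothetical wrapper (not part of Wget): mirror a site for offline
# browsing using the options discussed above.
# Usage: mirror_site URL [DIR] [DEPTH] [WAIT_SECONDS]
mirror_site() {
    if [ -z "$1" ]; then
        echo "usage: mirror_site URL [DIR] [DEPTH] [WAIT_SECONDS]" >&2
        return 1
    fi
    url="$1"
    dir="${2:-bodgeit_offline}"   # directory prefix (-P)
    depth="${3:-2}"               # assumed default depth for this sketch
    wait_s="${4:-1}"              # assumed default pause between downloads
    # -r recurse, -l limit depth, -k convert links for local browsing,
    # -p fetch page requisites, -w wait between requests, -P set prefix
    wget -r -l "$depth" -k -p -w "$wait_s" -P "$dir" "$url"
}
```

For example, mirror_site http://192.168.56.102/bodgeit/ bodgeit_offline 3 2 would follow links up to three levels deep and pause two seconds between downloads.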