One of the advantages of ROS is that it has tons of packages that can be reused in our applications. In our case, what we want is to implement an object recognition and detection system. The find_object_2d package (http://wiki.ros.org/find_object_2d) implements SURF, SIFT, FAST, and BRIEF feature detectors (https://goo.gl/B8H9Zm) and descriptors for object detection. Using the GUI provided by this package, we can mark the objects we want to detect and save them for future detection. The detector node will detect the objects in camera images and publish the details of the object through a topic. Using a 3D sensor, it can estimate the depth and orientation of the object.

Installing this package is pretty easy. Here is the command to install it on Ubuntu 16.04 and ROS Kinetic:

$ sudo apt-get install ros-kinetic-find-object-2d

Here is the procedure to run the detector nodes with a webcam. To detect an object using a webcam, we first need to install the usb_cam package, which was discussed in Chapter 2, Face Detection and Tracking Using ROS, OpenCV, and Dynamixel Servos.

- Start

roscore:$ roscore - Plug your USB camera into your PC, and launch the ROS

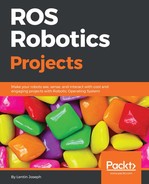

usb_camdriver:$ roslaunch usb_cam usb_cam-test.launchThis will launch the ROS driver for USB web cameras, and you can list the topics in this driver using the

rostopic listcommand. The list of topics in the driver is shown here:

Figure 3: Topics being published from the camera driver

- From the topic list, we are going to use the raw image topic from the camera, which is published on the /usb_cam/image_raw topic. If you see this topic, the next step is to run the object detector node. The following command will start the object detector node:

$ rosrun find_object_2d find_object_2d image:=/usb_cam/image_raw

This command will open the object detector window, shown in Figure 4, in which we can see the camera feed and the feature points on the objects.

- So how can we use it for detecting an object? Here are the procedures to perform a basic detection using this tool:

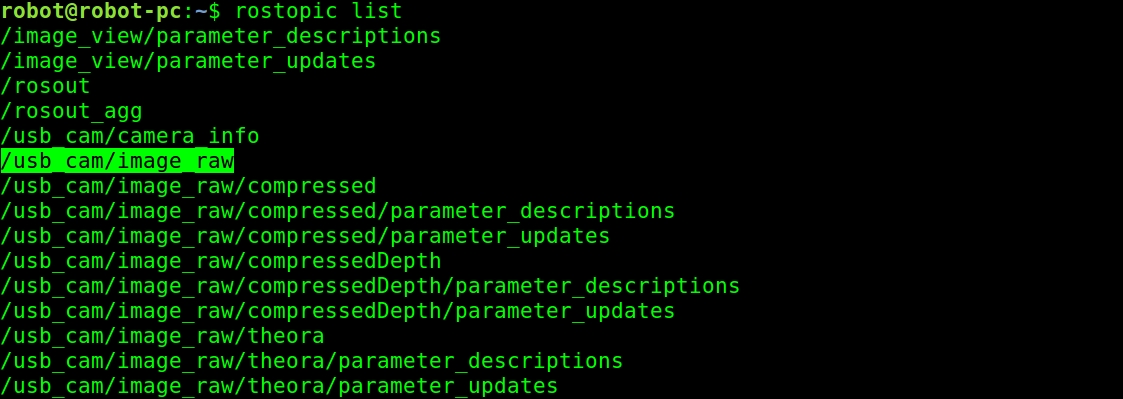

Figure 4: The Find-Object detector window

- Right-click on the left-hand side panel (Objects) of this window, and you will get an option to Add objects from scene. If you choose this option, you will be asked to mark the object in the current scene, and once the marking is complete, the detector will start tracking the marked object in the scene. The previous screenshot shows the first step, which is taking a snap of the scene containing the object.

- After aligning the object toward the camera, press the Take Picture button to take a snap of the object:

Figure 5: The Add object wizard for taking a snap of the object

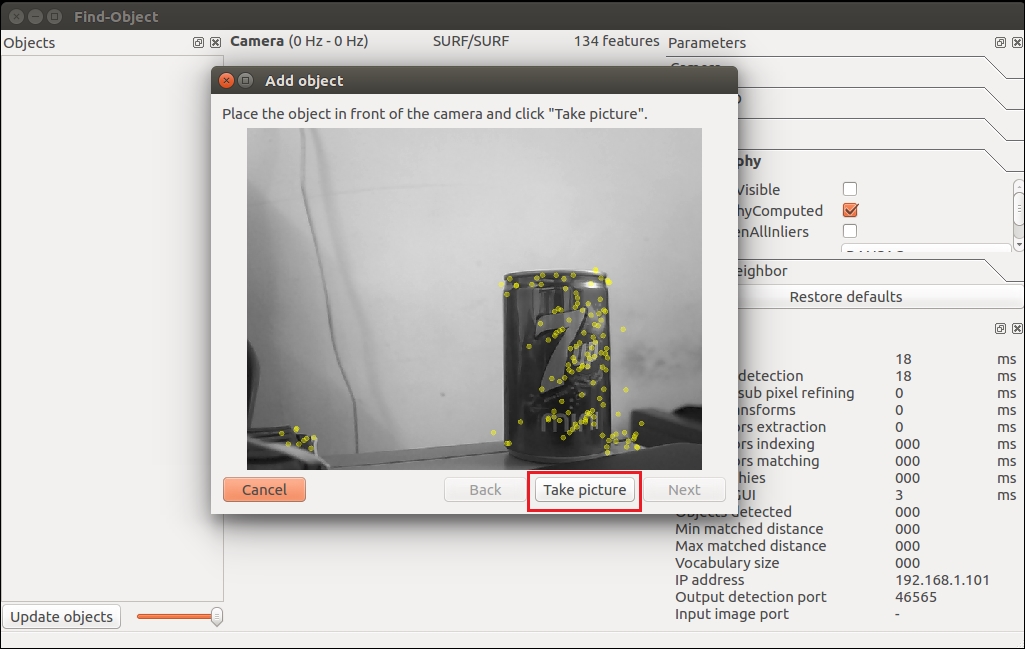

- The next window is for marking the object from the current snap. The following figure shows this. We can use the mouse pointer to mark the object. Click on the Next button to crop the object, and you can proceed to the next step:

Figure 6: The Add object wizard for marking the object

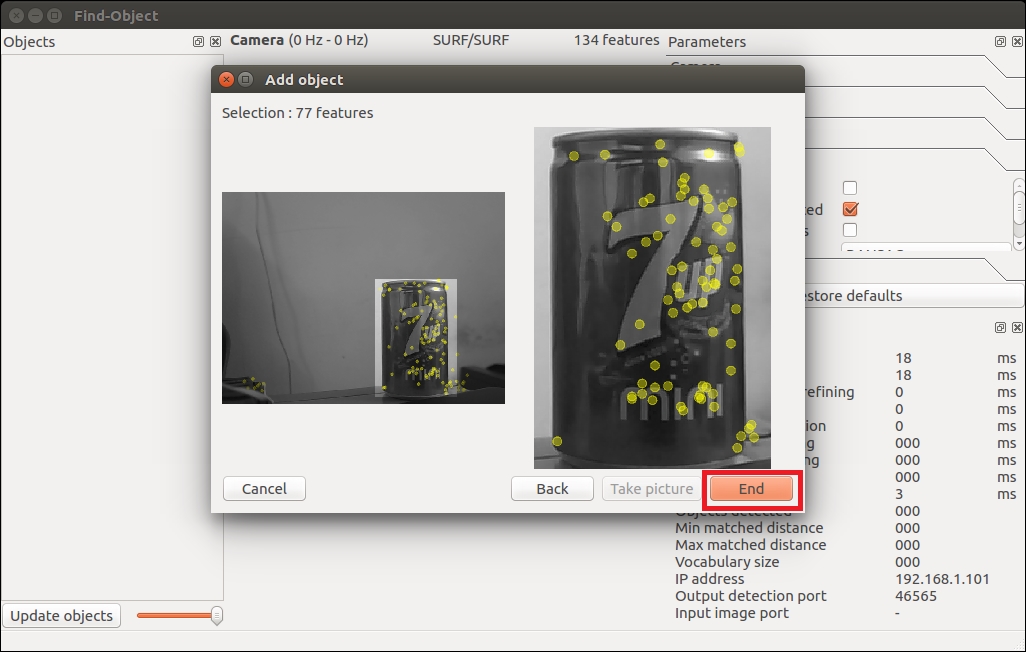

- After cropping the object, it will show you the total number of feature descriptors on the object, and you can press the End button to add the object template for detection. The following figure shows the last stage of adding an object template to this detector application:

Figure 7: The last step of the Add object wizard

- Congratulations! You have added an object for detection. Immediately after adding the object, you will be able to see the detection shown in the following figure. You can see a bounding box around the detected object:

Figure 8: The Find-Object wizard starting the detection

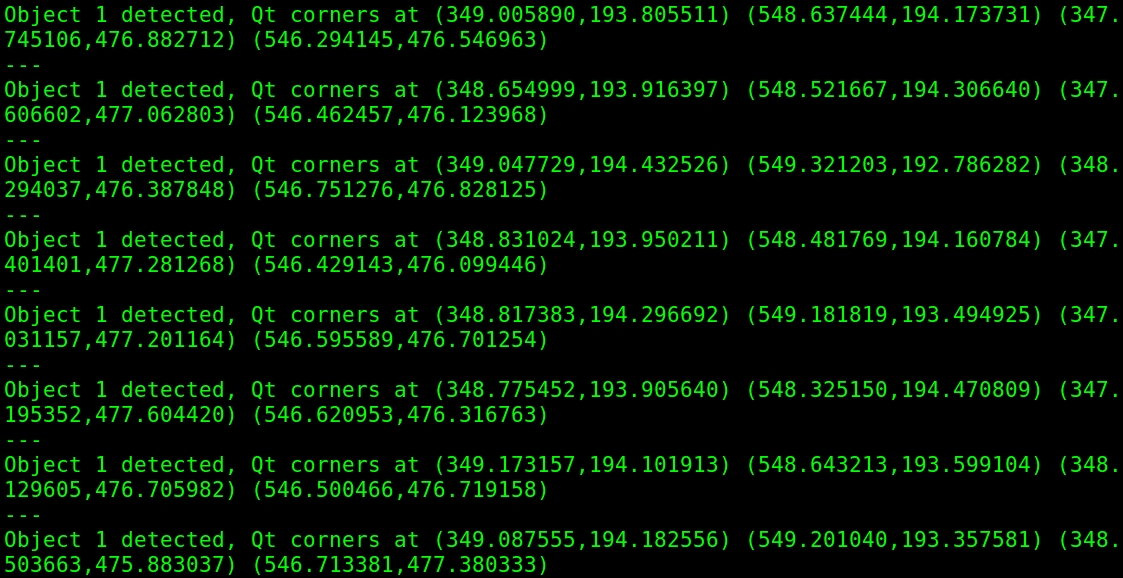

- Is that enough? What about the position of the object? We can retrieve the position of the object using the following command:

$ rosrun find_object_2d print_objects_detected

Figure 9: The object details

- You can also get the complete information about the detected object from the /objects topic. The topic publishes a multi-array that consists of the width and height of the object and the homography matrix used to compute the position and orientation of the object and its scale and shear values. You can echo the /objects topic to get output like this:

Figure 10: The /objects topic values

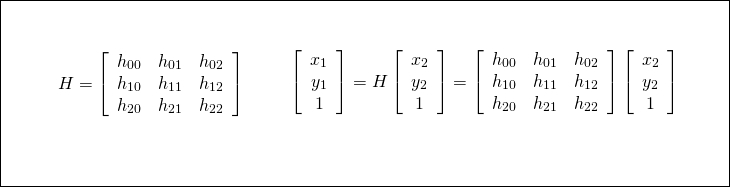
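To make the structure of the /objects data easier to see, here is a minimal Python sketch that splits the flat array into one record per detection. It assumes the layout described on the find_object_2d wiki page (object ID, width, height, then nine homography values for each detected object); the names Detection and parse_objects are hypothetical helpers introduced only for this illustration, and the sample values are made up.

```python
from collections import namedtuple

# One detection as laid out on the /objects topic.
# ASSUMPTION: 12 floats per object = id, width, height, 9 homography values.
Detection = namedtuple("Detection", ["object_id", "width", "height", "homography"])

FIELDS_PER_OBJECT = 12


def parse_objects(data):
    """Split the flat float array from /objects into Detection records."""
    if len(data) % FIELDS_PER_OBJECT != 0:
        raise ValueError("expected a multiple of 12 values, got %d" % len(data))
    detections = []
    for start in range(0, len(data), FIELDS_PER_OBJECT):
        chunk = data[start:start + FIELDS_PER_OBJECT]
        detections.append(Detection(
            object_id=int(chunk[0]),
            width=chunk[1],
            height=chunk[2],
            homography=tuple(chunk[3:12]),
        ))
    return detections


# Made-up sample: object 1, a 200 x 150 pixel template,
# identity homography shifted by (40, 25) pixels.
sample = [1.0, 200.0, 150.0,
          1.0, 0.0, 0.0,
          0.0, 1.0, 0.0,
          40.0, 25.0, 1.0]

for det in parse_objects(sample):
    print("object %d: %.0f x %.0f" % (det.object_id, det.width, det.height))
```

In a ROS node, the same function could be called from a subscriber callback with the data field of the received message. An empty array simply means that nothing was detected in that frame.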

- We can compute the new position and orientation from the following equations:

Figure 11: The equation to compute object position

Here, H is the homography 3x3 matrix, (x1, y1) is the object's position in the stored image, and (x2, y2) is the computed object position in the current frame.
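The mapping from (x1, y1) to (x2, y2) can be sketched in a few lines of Python. This is an illustration under one stated assumption, not the package's own code: the nine values are taken in the order used by Qt's QTransform (h11, h12, h13, h21, h22, h23, h31, h32, h33), where h31 and h32 hold the translation, which is how the print_objects_detected node appears to consume them. The function names map_point and object_corners are hypothetical.

```python
def map_point(h, x1, y1):
    """Map (x1, y1) in the stored image to (x2, y2) in the current frame.

    ASSUMPTION: h holds the 3x3 homography in QTransform order
    (h11, h12, h13, h21, h22, h23, h31, h32, h33), with h31/h32 = dx/dy.
    """
    h11, h12, h13, h21, h22, h23, h31, h32, h33 = h
    # Projective divisor; it equals 1 for a purely affine transform.
    w = float(h13 * x1 + h23 * y1 + h33)
    x2 = (h11 * x1 + h21 * y1 + h31) / w
    y2 = (h12 * x1 + h22 * y1 + h32) / w
    return x2, y2


def object_corners(h, width, height):
    """Return the four corners of the object's bounding quad in the frame."""
    corners = [(0.0, 0.0), (width, 0.0), (width, height), (0.0, height)]
    return [map_point(h, x, y) for x, y in corners]


# Made-up example: identity homography shifted by (40, 25) pixels.
shift = (1.0, 0.0, 0.0,
         0.0, 1.0, 0.0,
         40.0, 25.0, 1.0)
for corner in object_corners(shift, 200.0, 150.0):
    print("corner: (%.1f, %.1f)" % corner)
```

Mapping the four corners of the stored template, rather than a single point, gives the quadrilateral that the detector draws around the object, from which orientation and scale can be read.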

Note

You can check out the source code of the print_objects_detected node to see how the conversion using the homography matrix is done.

Here is the source code of this node: https://github.com/introlab/find-object/blob/master/src/ros/print_objects_detected_node.cpp.

Using a webcam, we can only find the 2D position and orientation of an object, but what should we use if we need the 3D coordinates of the object? We could simply use a depth sensor like the Kinect and run these same nodes. For interfacing the Kinect with ROS, we need to install some driver packages. The Kinect can deliver both RGB and depth data. Using RGB data, the object detector detects the object, and using the depth value, it computes the distance from the sensor too.

Here are the dependent packages for working with the Kinect sensor:

- If you are using the Xbox Kinect 360, which is the first Kinect, you have to install the following package to get it working:

$ sudo apt-get install ros-kinetic-openni-launch

- If you have Kinect version 2, you need a different driver package, which is available on GitHub and may have to be built from source. The following link points to the ROS package for the V2 driver, which also includes the installation instructions: https://github.com/code-iai/iai_kinect2

- If you are using the Asus Xtion Pro or another PrimeSense device, you may need to install the following driver to work with this detector:

$ sudo apt-get install ros-kinetic-openni2-launch

In this book, we will be working with the Xbox Kinect, which is the first version of Kinect.

Before starting the Kinect driver, plug its USB cable into your PC and make sure that the Kinect is powered through its adapter. Once everything is connected, you can launch the driver using the following command:

$ roslaunch openni_launch openni.launch depth_registration:=true

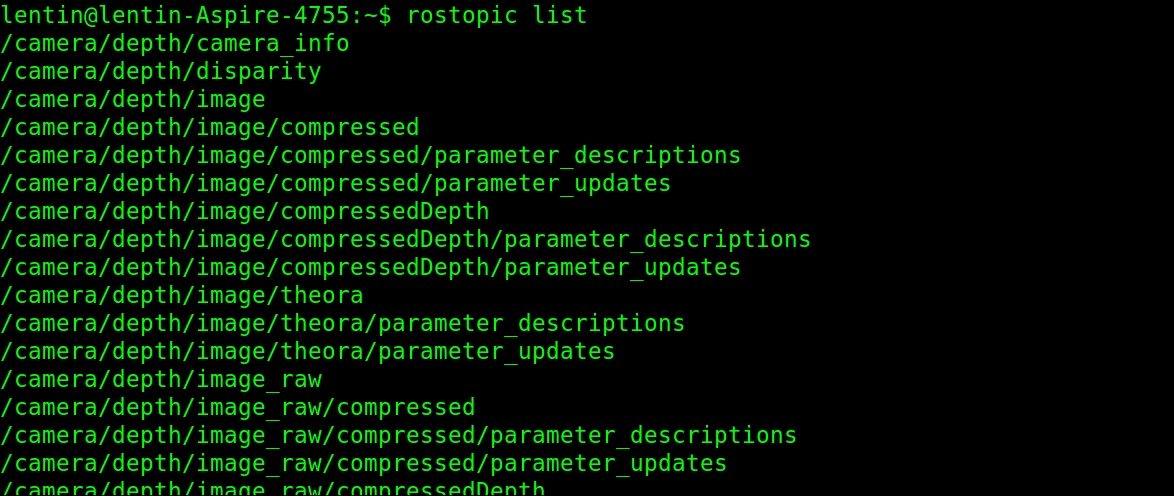

- If the driver is running without errors, you should get the following list of topics:

Figure 12: List of topics from the Kinect openNI driver

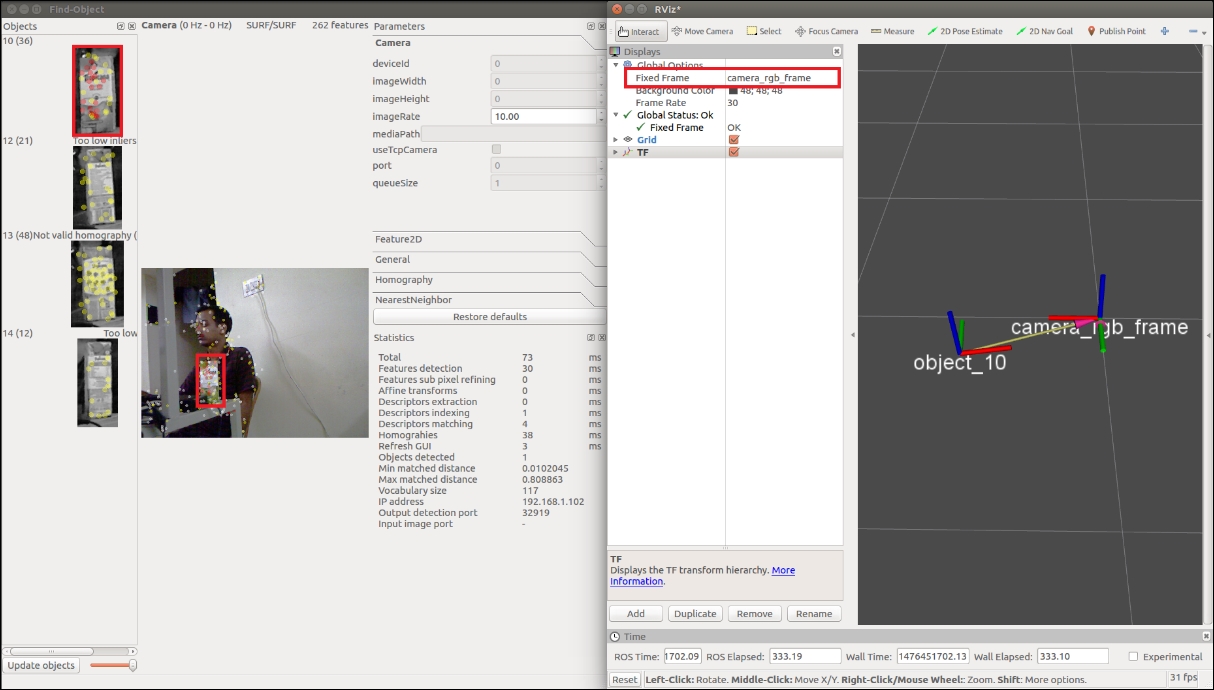

- If you are getting this, start the object detector and mark the object as you did for the 2D object detection. The procedure is the same, but in this case, you will get the 3D coordinates of the object. The following diagram shows the detection of the object and its TF data on Rviz. You can see the side view of the Kinect and the object position in Rviz.

Figure 13: Object detection using Kinect

- To start the object detection, you have to perform some tweaks in the existing launch file given by this package. The name of the launch file for object detection is find_object_3d.launch.

Note

You can directly view this file from the following link: https://github.com/introlab/find-object/blob/master/launch/find_object_3d.launch.

This launch file is written for an autonomous robot that detects objects while navigating its surroundings.

- In our case, there is no robot, so we can modify this file so that the TF information is published with respect to the Kinect's camera_rgb_frame, which is shown in the previous diagram. Here is the launch file definition we want for the demo:

    <launch>
      <node name="find_object_3d" pkg="find_object_2d" type="find_object_2d" output="screen">
        <param name="gui" value="true" type="bool"/>
        <param name="settings_path" value="~/.ros/find_object_2d.ini" type="str"/>
        <param name="subscribe_depth" value="true" type="bool"/>
        <param name="objects_path" value="" type="str"/>
        <param name="object_prefix" value="object" type="str"/>
        <remap from="rgb/image_rect_color" to="camera/rgb/image_rect_color"/>
        <remap from="depth_registered/image_raw" to="camera/depth_registered/image_raw"/>
        <remap from="depth_registered/camera_info" to="camera/depth_registered/camera_info"/>
      </node>
    </launch>

In this code, we just removed the static transform required for the mobile robot. You can also change the object_prefix parameter to name the detected object.

Using the following commands, you can modify this launch file, which is already installed on your system:

$ roscd find_object_2d/launch

$ sudo gedit find_object_3d.launch

Now, you can remove the unwanted lines of code and save your changes. After saving this launch file, launch it to start detection:

$ roslaunch find_object_2d find_object_3d.launch

You can mark the object and it will start detecting the marked object.

- To visualize the TF data, you can launch Rviz, set the fixed frame to /camera_link or /camera_rgb_frame, and add a TF display from the left panel of Rviz.

- You can run Rviz using the following command:

$ rosrun rviz rviz

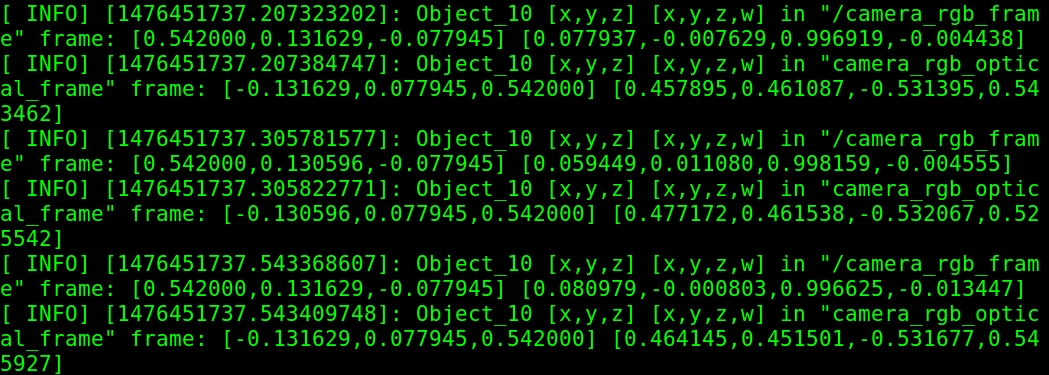

In addition to the published TF, we can also see the 3D position of the object in the terminal running the detector. The detected position values are shown in the following screenshot:

Figure 14: Printing the 3D object's position
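The same 3D position can also be read from TF in your own node. The following Python sketch is a rough illustration rather than part of the package: it assumes the detector names each object frame as the object_prefix value followed by an underscore and the object ID (for example, object_1), and that camera_rgb_frame is the reference frame, as in the launch file used here. The helper names object_frame and print_object_position are hypothetical.

```python
def object_frame(prefix, object_id):
    """Build the TF frame name of a detected object.

    ASSUMPTION: frames follow the '<object_prefix>_<id>' pattern.
    """
    return "%s_%d" % (prefix, object_id)


def print_object_position(object_id, prefix="object",
                          reference="camera_rgb_frame"):
    """Look up and print the object's position relative to the camera."""
    # Imported here so that object_frame() works without a ROS install.
    import rospy
    import tf

    rospy.init_node("object_position_printer")
    listener = tf.TransformListener()
    target = object_frame(prefix, object_id)
    # Wait up to 5 seconds for the detector to publish the transform.
    listener.waitForTransform(reference, target,
                              rospy.Time(0), rospy.Duration(5.0))
    (x, y, z), _rotation = listener.lookupTransform(reference, target,
                                                    rospy.Time(0))
    print("%s: x=%.3f y=%.3f z=%.3f" % (target, x, y, z))
```

With the Kinect driver and the detector running, calling print_object_position(1) from a Python shell or script would print the position of the object with ID 1, provided that object is currently detected; otherwise the lookup raises a TF exception after the timeout.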