Chapter 6

I/O System Overview

6.1 I/O Mapping from User to Device

Computers store and retrieve data through supporting peripheral I/O devices. These devices typically include mass-storage devices, such as moving-head disk drives, magnetic-tape drives, and network interfaces. Storage devices such as disks and tapes are accessed through I/O controllers that manage the operation of their slave devices according to I/O requests from the CPU.

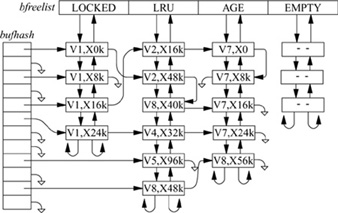

Many hardware device peculiarities are hidden from the user by high-level kernel facilities, such as the filesystem and socket interfaces. Other such peculiarities are hidden from the bulk of the kernel itself by the I/O system. The I/O system consists of buffer-caching systems, general device-driver code, and drivers for specific hardware devices that must finally address peculiarities of the specific devices. The various I/O systems are summarized in Fig. 6.1 (on page 194).

There are four main kinds of I/O in 4.4BSD: the filesystem, the character-device interface, the block-device interface, and the socket interface with its related network devices. The character and block interfaces appear in the filesystem name space. The character interface provides unstructured access to the underlying hardware, whereas the block device provides structured access to the underlying hardware. The network devices do not appear in the filesystem; they are accessible through only the socket interface. Block and character devices are described in Sections 6.2 and 6.3 respectively. The filesystem is described in Chapters 7 and 8. Sockets are described in Chapter 11.

A block-device interface, as the name indicates, supports only block-oriented I/O operations. The block-device interface uses the buffer cache to minimize the number of I/O requests that require an I/O operation, and to synchronize with filesystem operations on the same device. All I/O is done to or from I/O buffers that reside in the kernel’s address space. This approach requires at least one memory-to-memory copy operation to satisfy a user request, but also allows 4.4BSD to support I/O requests of nearly arbitrary size and alignment.

Figure 6.1 Kernel I/O structure.

A character-device interface comes in two styles that depend on the characteristics of the underlying hardware device. For some character-oriented hardware devices, such as terminal multiplexers, the interface is truly character oriented, although higher-level software, such as the terminal driver, may provide a line-oriented interface to applications. However, for block-oriented devices such as disks and tapes, a character-device interface is an unstructured or raw interface. For this interface, I/O operations do not go through the buffer cache; instead, they are made directly between the device and buffers in the application’s virtual address space. Consequently, the size of the operations must be a multiple of the underlying block size required by the device, and, on some machines, the application’s I/O buffer must be aligned on a suitable boundary.

Internal to the system, I/O devices are accessed through a fixed set of entry points provided by each device’s device driver. The set of entry points varies according to whether the I/O device supports a block- or character-device interface. For a block-device interface, a device driver is described by a bdevsw structure, whereas for character-device interface, it accesses a cdevsw structure. All the bdevsw structures are collected in the block-device table, whereas cdevsw structures are similarly organized in a character-device table.

Devices are identified by a device number that is constructed from a major and a minor device number. The major device number uniquely identifies the type of device (really of the device driver) and is the index of the device’s entry in the block- or character-device table. Devices that support both block- and character-device interfaces have two major device numbers, one for each table. The minor device number is interpreted solely by the device driver and is used by the driver to identify to which, of potentially many, hardware devices an I/O request refers. For magnetic tapes, for example, minor device numbers identify a specific controller and tape transport. The minor device number may also specify a section of a device—for example, a channel of a multiplexed device, or optional handling parameters.

Device Drivers

A device driver is divided into three main sections:

1. Autoconfiguration and initialization routines

2. Routines for servicing I/O requests (the top half)

3. Interrupt service routines (the bottom half)

The autoconfiguration portion of a driver is responsible for probing for a hardware device to see whether the latter is present and to initialize the device and any associated software state that is required by the device driver. This portion of the driver is typically called only once, when the system is initialized. Autoconfiguration is described in Section 14.4.

The section of a driver that services I/O requests by the system is invoked because of system calls or for the virtual-memory system. This portion of the device driver executes synchronously in the top half of the kernel and is permitted to block by calling the sleep() routine. We commonly refer to this body of code as the top half of a device driver.

Interrupt service routines are invoked when the system fields an interrupt from a device. Consequently, these routines cannot depend on any per-process state and cannot block. We commonly refer to a device driver’s interrupt service routines as the bottom half of a device driver.

In addition to these three sections of a device driver, an optional crash-dump routine may be provided. This routine, if present, is invoked when the system recognizes an unrecoverable error and wishes to record the contents of physical memory for use in postmortem analysis. Most device drivers for disk controllers, and some for tape controllers, provide a crash-dump routine. The use of the crash-dump routine is described in Section 14.7.

I/O Queueing

Device drivers typically manage one or more queues of I/O requests in their normal operation. When an input or output request is received by the top half of the driver, it is recorded in a data structure that is placed on a per-device queue for processing. When an input or output operation completes, the device driver receives an interrupt from the controller. The interrupt service routine removes the appropriate request from the device’s queue, notifies the requester that the command has completed, and then starts the next request from the queue. The I/O queues are the primary means of communication between the top and bottom halves of a device driver.

Because I/O queues are shared among asynchronous routines, access to the queues must be synchronized. Routines that make up the top half of a device driver must raise the processor priority level (using splbio(), spltty(), etc.) to prevent the bottom half from being entered as a result of an interrupt while a top-half routine is manipulating an I/O queue. Synchronization among multiple processes starting I/O requests also must be done. This synchronization is done using the mechanisms described in Section 4.3.

Interrupt Handling

Interrupts are generated by devices to signal that an operation has completed or that a change in status has occurred. On receiving a device interrupt, the system invokes the appropriate device-driver interrupt service routine with one or more parameters that identify uniquely the device that requires service. These parameters are needed because device drivers typically support multiple devices of the same type. If the interrupting device’s identity were not supplied with each interrupt, the driver would be forced to poll all the potential devices to identify the device that interrupted.

The system arranges for the unit-number parameter to be passed to the interrupt service routine for each device by installing the address of an auxiliary glue routine in the interrupt-vector table. This glue routine, rather than the actual interrupt service routine, is invoked to service the interrupt; it takes the following actions:

1. Save all volatile registers.

2. Update statistics on device interrupts.

3. Call the interrupt service routine with the appropriate unit number parameter.

4. Restore the volatile registers saved in step 1.

5. Return from the interrupt.

Because a glue routine is interposed between the interrupt-vector table and the interrupt service routine, device drivers do not need to be concerned with saving and restoring machine state. In addition, special-purpose instructions that cannot be generated from C, which are needed by the hardware to support interrupts, can be kept out of the device driver; this interposition of a glue routine permits device drivers to be written without assembly language.

6.2 Block Devices

Block devices include disks and tapes. The task of the block-device interface is to convert from the user abstraction of a disk as an array of bytes to the structure imposed by the underlying physical medium. Although the user may wish to write a single byte to a disk, the hardware can read and write only in multiples of sectors. Hence, the system must arrange to read in the sector containing the byte to be modified, to replace the affected byte, and to write back the sector to the disk. This operation of converting random access to an array of bytes to reads and writes of disk sectors is known as block I/O. Block devices are accessible directly through appropriate device special files, but are more commonly accessed indirectly through the filesystem (see Section 8.2).

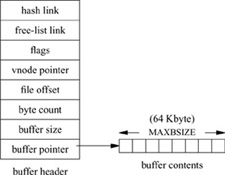

Processes may read data in sizes smaller than a disk block. The first time that a small read is required from a particular disk block, the block will be transferred from the disk into a kernel buffer. Later reads of parts of the same block then require only copying from the kernel buffer to the memory of the user process. Multiple small writes are treated similarly. A buffer is allocated from the cache when the first write to a disk block is made, and later writes to part of the same block are then likely to require only copying into the kernel buffer, and no disk I/O.

In addition to providing the abstraction of arbitrary alignment of reads and writes, the block buffer cache reduces the number of disk I/O transfers required by filesystem accesses. Because system-parameter files, commands, and directories are read repeatedly, their data blocks are usually in the buffer cache when they are needed. Thus, the kernel does not need to read them from the disk every time that they are requested.

If the system crashes while data for a particular block are in the cache but have not yet been written to disk, the filesystem on the disk will be incorrect and those data will be lost. (Critical system data, such as the contents of directories, however, are written synchronously to disk, to ensure filesystem consistency; operations requiring synchronous I/O are described in the last subsection of Section 8.2.) So that lost data are minimized, writes are forced periodically for dirty buffer blocks. These forced writes are done (usually every 30 seconds) by a user process, update, which uses the sync system call. There is also a system call, fsync, that a process can use to force all dirty blocks of a single file to be written to disk immediately; this synchronization is useful for ensuring database consistency or before removing an editor backup file.

Most magnetic-tape accesses are done through the appropriate raw tape device, bypassing the block buffer cache. When the cache is used, tape blocks must still be written in order, so the tape driver forces synchronous writes for them.

Entry Points for Block-Device Drivers

Device drivers for block devices are described by an entry in the bdevsw table. Each bdevsw structure contains the following entry points:

open |

Open the device in preparation for I/O operations. A device’s open entry point will be called for each open system call on a block special device file, or, internally, when a device is prepared for mounting a filesystem with the mount system call. The open() routine will commonly verify the integrity of the associated medium. For example, it will verify that the device was identified during the autoconfiguration phase and, for tape and disk drives, that a medium is present and online. |

strategy |

Start a read or write operation, and return immediately. I/O requests to or from filesystems located on a device are translated by the system into calls to the block I/O routines bread() and bwrite(). These block I/O routines in turn call the device’s strategy routine to read or write data not in the cache. Each call to the strategy routine specifies a pointer to a buf structure containing the parameters for an I/O request. If the request is synchronous, the caller must sleep (on the address of the buf structure) until I/O completes. |

Close a device. The close() routine is called after the final client interested in using the device terminates. These semantics are defined by the higher-level I/O facilities. Disk devices have nothing to do when a device is closed, and thus use a null close() routine. Devices that support access to only a single client must mark the device as available once again. Closing a tape drive that was open for writing typically causes end-of-file marks to be written on the tape and the tape to be rewound. |

|

dump |

Write all physical memory to the device. The dump entry point saves the contents of memory on secondary storage. The system automatically takes a dump when it detects an unrecoverable error and is about to crash. The dump is used in a postmortem analysis of the problem that caused the system to crash. The dump routine is invoked with the processor priority at its highest level; thus, the device driver must poll for device status, rather than wait for interrupts. All disk devices are expected to support this entry point; some tape devices do as well. |

psize |

Return the size of a disk-drive partition. The driver is supplied a logical unit and is expected to return the size of that unit, typically a disk-drive partition, in DEV_BSIZE blocks. This entry point is used during the bootstrap procedure to calculate the location at which a crash dump should be placed and to determine the sizes of the swap devices. |

Sorting of Disk I/O Requests

The kernel provides a generic disksort() routine that can be used by all the disk device drivers to sort I/O requests into a drive’s request queue using an elevator sorting algorithm. This algorithm sorts requests in a cyclic, ascending, cylinder order, so that requests can be serviced with a minimal number of one-way scans over the drive. This ordering was originally designed to support the normal read-ahead requested by the filesystem as well as to counteract the filesystem’s random placement of data on a drive. With the improved placement algorithms in the current filesystem, the effect of the disksort() routine is less noticeable; disksort() produces the largest effect when there are multiple simultaneous users of a drive.

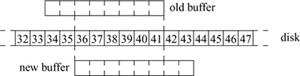

The disksort() algorithm is shown in Fig. 6.2. A drive’s request queue is made up of one or two lists of requests ordered by cylinder number. The request at the front of the first list indicates the current position of the drive. If a second list is present, it is made up of requests that lie before the current position. Each new request is sorted into either the first or the second list, according to the request’s location. When the heads reach the end of the first list, the drive begins servicing the other list.

Disk sorting can also be important on machines that have a fast processor, but that do not sort requests within the device driver. In this situation, if a write of several Kbyte is honored in order of queueing, it can block other processes from accessing the disk while it completes. Sorting requests provides some scheduling, which more fairly distributes accesses to the disk controller.

Figure 6.2 Algorithm for disksort().

Disk Labels

Many disk controllers require the device driver to identify the location of disk sectors that are to be transferred by their cylinder, track, and rotational offset. For maximum throughput efficiency, this information is also needed by the filesystem when deciding how to lay out files. Finally, a disk may be broken up into several partitions, each of which may be used for a separate filesystem or swap area.

Historically, the information about the geometry of the disk and about the layout of the partitions was compiled into the kernel device drivers. This approach had several flaws. First, it was cumbersome to have all the possible disk types and partitions compiled into the kernel. Any time that a disk with a new geometry was added, the driver tables had to be updated and the kernel recompiled. It was also restrictive in that there was only one choice of partition table for each drive type. Choosing a different set of tables required modifying the disk driver and rebuilding the kernel. Installing new tables also required dumping all the disks of that type on the system, then booting the new kernel and restoring them onto the new partitions. Disks with different partition layouts could not be moved from one system to another. An additional problem arose when nonstandard partition tables were used; new releases from the vendor had to have the partition tables modified before they could be used on an existing system.

For all these reasons, 4.4BSD and most commercial UNIX vendors added disk labels. A disk label contains detailed geometry information, including cylinder, track, and sector layout, along with any other driver-specific information. It also contains information about the partition layout and usage, the latter describing partition usage: type of filesystem, swap partition, or unused. For the fast filesystem, the partition usage contains enough additional information to enable the filesystem check program (fsck) to locate the alternate superblocks for the filesystem.

Having labels on each disk means that partition information can be different for each disk, and that it carries over when the disk is moved from one system to another. It also means that, when previously unknown types of disks are connected to the system, the system administrator can use them without changing the disk driver, recompiling, and rebooting the system.

The label is located near the beginning of each drive—usually, in block zero. It must be located in the first track, because the device driver does not know the geometry of the disk until the driver has read the label. Thus, it must assume that the label is in cylinder zero, track zero, at some valid offset within that track. Most architectures have hardware (or first-level) bootstrap code stored in read-only memory (ROM). When the machine is powered up or the reset button is pressed, the CPU executes the hardware bootstrap code from the ROM. The hardware bootstrap code typically reads the first few sectors on the disk into the main memory, then branches to the address of the first location that it read. The program stored in these first few sectors is the second-level bootstrap. Having the disk label stored in the part of the disk read as part of the hardware bootstrap allows the second-level bootstrap to have the disk-label information. This information gives it the ability to find the root filesystem and hence the files, such as the kernel, needed to bring up 4.4BSD. The size and location of the second-level bootstrap are dependent on the requirements of the hardware bootstrap code. Since there is no standard for disk-label formats and the hardware bootstrap code usually understands only the vendor label, it is often necessary to support both the vendor and the 4.4BSD disk labels. Here, the vendor label must be placed where the hardware bootstrap ROM code expects it; the 4.4BSD label must be placed out of the way of the vendor label but within the area that is read in by the hardware bootstrap code, so that it will be available to the second-level bootstrap.

6.3 Character Devices

Almost all peripherals on the system, except network interfaces, have a character-device interface. A character device usually maps the hardware interface into a byte stream, similar to that of the filesystem. Character devices of this type include terminals (e.g., /dev/tty00), line printers (e.g, /dev/lp0), an interface to physical main memory (/dev/mem), and a bottomless sink for data and an endless source of end-of-file markers (/dev/null). Some of these character devices, such as terminal devices, may display special behavior on line boundaries, but in general are still treated as byte streams.

Devices emulating terminals use buffers that are smaller than those used for disks and tapes. This buffering system involves small (usually 64-byte) blocks of characters kept in linked lists. Although all free character buffers are kept in a single free list, most device drivers that use them limit the number of characters that can be queued at one time for a single terminal port.

Devices such as high-speed graphics interfaces may have their own buffers or may always do I/O directly into the address space of the user; they too are classed as character devices. Some of these drivers may recognize special types of records, and thus be further from the plain byte-stream model.

The character interface for disks and tapes is also called the raw device interface; it provides an unstructured interface to the device. Its primary task is to arrange for direct I/O to and from the device. The disk driver isolates the details of tracks, cylinders, and the like from the rest of the kernel. It also handles the asynchronous nature of I/O by maintaining and ordering an active queue of pending transfers. Each entry in the queue specifies whether it is for reading or writing, the main-memory address for the transfer, the device address for the transfer (usually a disk sector number), and the transfer size (in bytes).

All other restrictions of the underlying hardware are passed through the character interface to its clients, making character-device interfaces the furthest from the byte-stream model. Thus, the user process must abide by the sectoring restrictions imposed by the underlying hardware. For magnetic disks, the file offset and transfer size must be a multiple of the sector size. The character interface does not copy the user data into a kernel buffer before putting them on an I/O queue. Rather, it arranges to have the I/O done directly to or from the address space of the process. The size and alignment of the transfer is limited by the physical device. However, the transfer size is not restricted by the maximum size of the internal buffers of the system, because these buffers are not used.

The character interface is typically used by only those system utility programs that have an intimate knowledge of the data structures on the disk or tape. The character interface also allows user-level prototyping; for example, the 4.2BSD filesystem implementation was written and largely tested as a user process that used a raw disk interface, before the code was moved into the kernel.

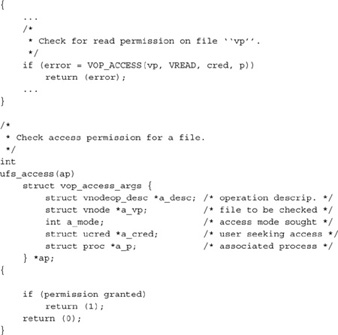

Character devices are described by entries in the cdevsw table. The entry points in this table (see Table 6.1 on page 202) are used to support raw access to block-oriented devices, as well as normal access to character-oriented devices through the terminal driver. Because of the diverse requirements of these two types of devices, the set of entry points is the union of two disjoint sets. Raw devices support a subset of the entry points that correspond to those entry points found in a block-device driver, whereas character devices support the full set of entry points. Each is described in the following sections.

Raw Devices and Physical I/O

Most raw devices differ from block devices only in the way that they do I/O. Whereas block devices read and write data to and from the system buffer cache, raw devices transfer data to and from user data buffers. Bypassing the buffer cache eliminates the memory-to-memory copy that must be done by block devices, but also denies applications the benefits of data caching. In addition, for devices that support both raw- and block-device access, applications must take care to preserve consistency between data in the buffer cache and data written directly to the device; the raw device should be used only when the block device is idle. Raw-device access is used by many filesystem utilities, such as the filesystem check program, fsck, and by programs that read and write magnetic tapes—for example, tar, dump, and restore.

Table 6.1 Entry points for character and raw device drivers.

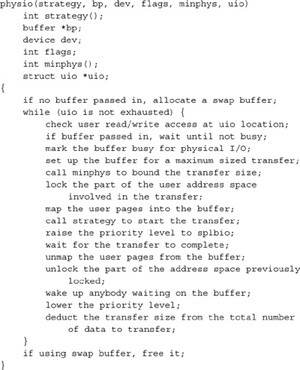

Because raw devices bypass the buffer cache, they are responsible for managing their own buffer structures. Most devices borrow swap buffers to describe their I/O. The read and write routines use the physio() routine to start a raw I/O operation (see Fig. 6.3). The strategy parameter identifies a block-device strategy routine that starts I/O operations on the device. The buffer indicated by bp is used by physio() in constructing the request(s) made to the strategy routine. The device, read – write flag, and uio parameters completely specify the I/O operation that should be done. The minphys() routine is called by physio() to adjust the size of each I/O transfer before the latter is passed to the strategy routine; this call to minphys() allows the transfer to be done in sections, according to the maximum transfer size supported by the device.

Raw-device I/O operations request the hardware device to transfer data directly to or from the data buffer in the user program’s address space described by the uio parameter. Thus, unlike I/O operations that do direct memory access (DMA) from buffers in the kernel address space, raw I/O operations must check that the user’s buffer is accessible by the device, and must lock it into memory for the duration of the transfer.

Character-Oriented Devices

Character-oriented I/O devices are typified by terminal multiplexers, although they also include printers and other character- or line-oriented devices. These devices are usually accessed through the terminal driver, described in Chapter 10. The close tie to the terminal driver has heavily influenced the structure of character-device drivers. For example, several entry points in the cdevsw structure exist for communication between the generic terminal handler and the terminal multiplexer hardware drivers.

Figure 6.3 Algorithm for physical I/O.

Entry Points for Character-Device Drivers

A device driver for a character device is defined by an entry in the cdevsw table. This structure contains many of the same entry points found in an entry in the bdevsw table.

Open or close a character device. The open() and close() entry points provide functions similar to those of a block device driver. For character devices that simply provide raw access to a block device, these entry points are usually the same. But some block devices do not have these entry points, whereas most character devices do have them. |

|

read |

Read data from a device. For raw devices, this entry point normally just calls the physio() routine with device-specific parameters. For terminal-oriented devices, a read request is passed immediately to the terminal driver. For other devices, a read request requires that the specified data be copied into the kernel’s address space, typically with the uiomove() routine, and then be passed to the device. |

write |

Write data to a device. This entry point is a direct parallel of the read entry point: Raw devices use physio(), terminal-oriented devices call the terminal driver to do this operation, and other devices handle the request internally. |

ioctl |

Do an operation other than a read or write. This entry point originally provided a mechanism to get and set device parameters for terminal devices; its use has expanded to other types of devices as well. Historically, ioctl() operations have varied widely from device to device. 4.4BSD, however, defines a set of operations that is supported by all tape devices. These operations position tapes, return unit status, write end-of-file marks, and place a tape drive off-line. |

select |

Check the device to see whether data are available for reading, or space is available for writing, data. The select entry point is used by the select system call in checking file descriptors associated with device special files. For raw devices, a select operation is meaningless, since data are not buffered. Here, the entry point is set to seltrue(), a routine that returns true for any select request. For devices used with the terminal driver, this entry point is set to ttselect(), a routine described in Chapter 10. |

stop |

Stop output on a device. The stop routine is defined for only those devices used with the terminal driver. For these devices, the stop routine halts transmission on a line when the terminal driver receives a stop character—for example, “^S”—or when it prepares to flush its output queues. |

mmap |

Map a device offset into a memory address. This entry point is called by the virtual-memory system to convert a logical mapping to a physical address. For example, it converts an offset in /dev/mem to a kernel address. |

reset |

Reset device state after a bus reset. The reset routine is called from the bus-adapter support routines after a bus reset is made. The device driver is expected to reinitialize the hardware to set into a known state—typically the state it has when the system is initially booted. |

6.4 Descriptor Management and Services

For user processes, all I/O is done through descriptors. The user interface to descriptors was described in Section 2.6. This section describes how the kernel manages descriptors, and how it provides descriptor services, such as locking and selecting.

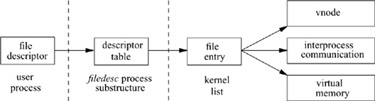

System calls that refer to open files take a file descriptor as an argument to specify the file. The file descriptor is used by the kernel to index into the descriptor table for the current process (kept in the filedesc structure, a substructure of the process structure for the process) to locate a file entry, or file structure. The relations of these data structures are shown in Fig. 6.4.

The file entry provides a file type and a pointer to an underlying object for the descriptor. For data files, the file entry points to a vnode structure that references a substructure containing the filesystem-specific information described in Chapters 7, 8, and 9. The vnode layer is described in Section 6.5. Special files do not have data blocks allocated on the disk; they are handled by the special-device filesystem that calls appropriate drivers to handle I/O for them. The 4.4BSD file entry may also reference a socket, instead of a file. Sockets have a different file type, and the file entry points to a system block that is used in doing interprocess communication. The virtual-memory system supports the mapping of files into a process’s address space. Here, the file descriptor must reference a vnode that will be partially or completely mapped into the user’s address space.

Open File Entries

The set of file entries is the focus of activity for file descriptors. They contain the information necessary to access the underlying objects and to maintain common information.

The file entry is an object-oriented data structure. Each entry contains a type and an array of function pointers that translate the generic operations on file descriptors into the specific actions associated with their type. In 4.4BSD, there are two descriptor types: files and sockets. The operations that must be implemented for each type are as follows:

Figure 6.4 File-descriptor reference to a file entry.

• Write to the descriptor

• Select on the descriptor

• Do ioctl operations on the descriptor

• Close and possibly deallocate the object associated with the descriptor

Note that there is no open routine defined in the object table. 4.4BSD treats descriptors in an object-oriented fashion only after they are created. This approach was taken because sockets and files have different characteristics. Generalizing the interface to handle both types of descriptors at open time would have complicated an otherwise simple interface.

Each file entry has a pointer to a data structure that contains information specific to the instance of the underlying object. The data structure is opaque to the routines that manipulate the file entry. A reference to the data structure is passed on each call to a function that implements a file operation. All state associated with an instance of an object must be stored in that instance’s data structure; the underlying objects are not permitted to manipulate the file entry themselves.

The read and write system calls do not take an offset in the file as an argument. Instead, each read or write updates the current file offset in the file according to the number of bytes transferred. The offset determines the position in the file for the next read or write. The offset can be set directly by the lseek system call. Since more than one process may open the same file, and each such process needs its own offset for the file, the offset cannot be stored in the per-object data structure. Thus, each open system call allocates a new file entry, and the open file entry contains the offset.

Some semantics associated with all file descriptors are enforced at the descriptor level, before the underlying system call is invoked. These semantics are maintained in a set of flags associated with the descriptor. For example, the flags record whether the descriptor is open for reading, writing, or both reading and writing. If a descriptor is marked as open for reading only, an attempt to write it will be caught by the descriptor code. Thus, the functions defined for doing reading and writing do not need to check the validity of the request; we can implement them knowing that they will never receive an invalid request.

Other information maintained in the flags includes

• The no-delay (NDELAY) flag: If a read or a write would cause the process to block, the system call returns an error (EWOULDBLOCK) instead.

• The asynchronous (ASYNC) flag: The kernel watches for a change in the status of the descriptor, and arranges to send a signal (SIGIO) when a read or write becomes possible.

Other information that is specific to regular files also is maintained in the flags field:

• Information on whether the descriptor holds a shared or exclusive lock on the underlying file: The locking primitives could be extended to work on sockets, as well as on files. However, the descriptors for a socket rarely refer to the same file entry. The only way for two processes to share the same socket descriptor is for a parent to share the descriptor with its child by forking, or for one process to pass the descriptor to another in a message.

• The append flag: Each time that a write is made to the file, the offset pointer is first set to the end of the file. This feature is useful when, for example, multiple processes are writing to the same log file.

Each file entry has a reference count. A single process may have multiple references to the entry because of calls to the dup or fcntl system calls. Also, file structures are inherited by the child process after a fork, so several different processes may reference the same file entry. Thus, a read or write by either process on the twin descriptors will advance the file offset. This semantic allows two processes to read the same file or to interleave output to the same file. Another process that has independently opened the file will refer to that file through a different file structure with a different file offset. This functionality was the original reason for the existence of the file structure; the file structure provides a place for the file offset intermediate between the descriptor and the underlying object.

Each time that a new reference is created, the reference count is incremented. When a descriptor is closed (any one of (1) explicitly with a close, (2) implicitly after an exec because the descriptor has been marked as close-on-exec, or (3) on process exit), the reference count is decremented. When the reference count drops to zero, the file entry is freed.

The AF_LOCAL domain interprocess-communication facility allows descriptors to be sent between processes. While a descriptor is in transit between processes, it may not have any explicit references. It must not be deallocated, as it will be needed when the message is received by the destination process. However, the message might never be received; thus, the file entry also holds a message count for each entry. The message count is incremented for each descriptor that is in transit, and is decremented when the descriptor is received. The file entry might need to be reclaimed when all the remaining references are in messages. For more details on message passing in the AF_LOCAL domain, see Section 11.6.

The close-on-exec flag is kept in the descriptor table, rather than in the file entry. This flag is not shared among all the references to the file entry because it is an attribute of the file descriptor itself. The close-on-exec flag is the only piece of information that is kept in the descriptor table, rather than being shared in the file entry.

Management of Descriptors

The fcntl system call manipulates the file structure. It can be used to make the following changes to a descriptor:

• Duplicate a descriptor as though by a dup system call.

• Get or set the close-on-exec flag. When a process fork s, all the parent’s descriptors are duplicated in the child. The child process then exec s a new process. Any of the child’s descriptors that were marked close-on-exec are closed. The remaining descriptors are available to the newly executed process.

• Set the descriptor into nonblocking mode. If any data are available for a read operation, or if any space is available for a write operation, an immediate partial read or write is done. If no data are available for a read operation, or if a write operation would block, the system call returns an error showing that the operation would block, instead of putting the process to sleep. This facility was not implemented for regular files in 4.4BSD, because filesystem I/O is always expected to complete within a few milliseconds.

• Force all writes to append data to the end of the file, instead of at the descriptor’s current location in the file.

• Send a signal to the process when it is possible to do I/O.

• Send a signal to a process when an exception condition arises, such as when urgent data arrive on an interprocess-communication channel.

• Set or get the process identifier or process-group identifier to which the two I/O – related signals in the previous steps should be sent.

• Test or change the status of a lock on a range of bytes within an underlying file. Locking operations are described in the next subsection.

The implementation of the dup system call is easy. If the process has reached its limit on open files, the kernel returns an error. Otherwise, the kernel scans the current process’s descriptor table, starting at descriptor zero, until it finds an unused entry. The kernel allocates the entry to point to the same file entry as does the descriptor being duplicated. The kernel then increments the reference count on the file entry, and returns the index of the allocated descriptor-table entry. The fcntl system call provides a similar function, except that it specifies a descriptor from which to start the scan.

Sometimes, a process wants to allocate a specific descriptor-table entry. Such a request is made with the dup2 system call. The process specifies the descriptor-table index into which the duplicated reference should be placed. The kernel implementation is the same as for dup, except that the scan to find a free entry is changed to close the requested entry if that entry is open, and then to allocate the entry as before. No action is taken if the new and old descriptors are the same.

The system implements getting or setting the close-on-exec flag via the fcntl system call by making the appropriate change to the flags field of the associated descriptor-table entry. Other attributes that fcntl can get or set manipulate the flags in the file entry. However, the implementation of the various flags cannot be handled by the generic code that manages the file entry. Instead, the file flags must be passed through the object interface to the type-specific routines to do the appropriate operation on the underlying object. For example, manipulation of the nonblocking flag for a socket must be done by the socket layer, since only that layer knows whether an operation can block.

The implementation of the ioctl system call is broken into two major levels. The upper level handles the system call itself. The ioctl call includes a descriptor, a command, and pointer to a data area. The command argument encodes what the size is of the data area for the parameters, and whether the parameters are input, output, or both input and output. The upper level is responsible for decoding the command argument, allocating a buffer, and copying in any input data. If a return value is to be generated and there is no input, the buffer is zeroed. Finally, the ioctl is dispatched through the file-entry ioctl function, along with the I/O buffer, to the lower-level routine that implements the requested operation.

The lower level does the requested operation. Along with the command argument, it receives a pointer to the I/O buffer. The upper level has already checked for valid memory references, but the lower level may do more precise argument validation because it knows more about the expected nature of the arguments. However, it does not need to copy the arguments in or out of the user process. If the command is successful and produces output, the lower level places the results in the buffer provided by the top level. When the lower level returns, the upper level copies the results to the process.

File-Descriptor Locking

Early UNIX systems had no provision for locking files. Processes that needed to synchronize access to a file had to use a separate lock file. A process would try to create a lock file. If the creation succeeded, then the process could proceed with its update; if the creation failed, the process would wait, and then try again. This mechanism had three drawbacks:

1. Processes consumed CPU time by looping over attempts to create locks.

2. Locks left lying around because of system crashes had to be removed (normally in a system-startup command script).

3. Processes running as the special system-administrator user, the superuser, are always permitted to create files, and so were forced to use a different mechanism.

Although it is possible to work around all these problems, the solutions are not straightforward, so a mechanism for locking files was added in 4.2BSD.

The most general locking schemes allow multiple processes to update a file concurrently. Several of these techniques are discussed in [Peterson, 1983]. A simpler technique is to serialize access to a file with locks. For standard system applications, a mechanism that locks at the granularity of a file is sufficient. So, 4.2BSD and 4.3BSD provided only a fast whole-file locking mechanism. The semantics of these locks include allowing locks to be inherited by child processes and releasing locks only on the last close of a file.

Certain applications require the ability to lock pieces of a file. Locking facilities that support a byte-level granularity are well understood [Bass, 1981]. Unfortunately, they are not powerful enough to be used by database systems that require nested hierarchical locks, but are complex enough to require a large and cumbersome implementation compared to the simpler whole-file locks. Because byte-range locks are mandated by the POSIX standard, the developers added them to 4.4BSD reluctantly. The semantics of byte-range locks come from the lock’s initial implementation in System V, which included releasing all locks held by a process on a file every time a close system call was done on a descriptor referencing that file. The 4.2BSD whole-file locks are removed only on the last close. A problem with the POSIX semantics is that an application can lock a file, then call a library routine that opens, reads, and closes the locked file. Calling the library routine will have the unexpected effect of releasing the locks held by the application. Another problem is that a file must be open for writing to be allowed to get an exclusive lock. A process that does not have permission to open a file for writing cannot get an exclusive lock on that file. To avoid these problems, yet remain POSIX compliant, 4.4BSD provides separate interfaces for byte-range locks and whole-file locks. The byte-range locks follow the POSIX semantics; the whole-file locks follow the traditional 4.2BSD semantics. The two types of locks can be used concurrently; they will serialize against each other properly.

Both whole-file locks and byte-range locks use the same implementation; the whole-file locks are implemented as a range lock over an entire file. The kernel handles the other differing semantics between the two implementations by having the byte-range locks be applied to processes whereas the whole-file locks are applied to descriptors. Because descriptors are shared with child processes, the whole-file locks are inherited. Because the child process gets its own process structure, the byte-range locks are not inherited. The last-close versus every-close semantics are a small bit of special-case code in the close routine that checks whether the underlying object is a process or a descriptor. It releases locks on every call if the lock is associated with a process, and only when the reference count drops to zero if the lock is associated with a descriptor.

Locking schemes can be classified according to the extent that they are enforced. A scheme in which locks are enforced for every process without choice is said to use mandatory locks, whereas a scheme in which locks are enforced for only those processes that request them is said to use advisory locks. Clearly, advisory locks are effective only when all programs accessing a file use the locking scheme. With mandatory locks, there must be some override policy implemented in the kernel. With advisory locks, the policy is left to the user programs. In the 4.4BSD system, programs with superuser privilege are allowed to override any protection scheme. Because many of the programs that need to use locks must also run as the superuser, 4.2BSD implemented advisory locks, rather than creating an additional protection scheme that was inconsistent with the UNIX philosophy or that could not be used by privileged programs. The use of advisory locks carried over to the POSIX specification of byte-range locks and is retained in 4.4BSD.

The 4.4BSD file-locking facilities allow cooperating programs to apply advisory shared or exclusive locks on ranges of bytes within a file. Only one process may have an exclusive lock on a byte range, whereas multiple shared locks may be present. Both shared and exclusive locks cannot be present on a byte range at the same time. If any lock is requested when another process holds an exclusive lock, or an exclusive lock is requested when another process holds any lock, the lock request will block until the lock can be obtained. Because shared and exclusive locks are only advisory, even if a process has obtained a lock on a file, another process may access the file if it ignores the locking mechanism.

So that there are no races between creating and locking a file, a lock can be requested as part of opening a file. Once a process has opened a file, it can manipulate locks without needing to close and reopen the file. This feature is useful, for example, when a process wishes to apply a shared lock, to read information, to determine whether an update is required, then to apply an exclusive lock and to update the file.

A request for a lock will cause a process to block if the lock cannot be obtained immediately. In certain instances, this blocking is unsatisfactory. For example, a process that wants only to check whether a lock is present would require a separate mechanism to find out this information. Consequently, a process can specify that its locking request should return with an error if a lock cannot be obtained immediately. Being able to request a lock conditionally is useful to daemon processes that wish to service a spooling area. If the first instance of the daemon locks the directory where spooling takes place, later daemon processes can easily check to see whether an active daemon exists. Since locks exist only while the locking processes exist, locks can never be left active after the processes exit or if the system crashes.

The implementation of locks is done on a per-filesystem basis. The implementation for the local filesystems is described in Section 7.5. A network-based filesystem has to coordinate locks with a central lock manager that is usually located on the server exporting the filesystem. Client lock requests must be sent to the lock manager. The lock manager arbitrates among lock requests from processes running on its server and from the various clients to which it is exporting the filesystem. The most complex operation for the lock manager is recovering lock state when a client or server is rebooted or becomes partitioned from the rest of the network. The 4.4BSD system does not have a network-based lock manager.

Multiplexing I/O on Descriptors

A process sometimes wants to handle I/O on more than one descriptor. For example, consider a remote login program that wants to read data from the keyboard and to send them through a socket to a remote machine. This program also wants to read data from the socket connected to the remote end and to write them to the screen. If a process makes a read request when there are no data available, it is normally blocked in the kernel until the data become available. In our example, blocking is unacceptable. If the process reads from the keyboard and blocks, it will be unable to read data from the remote end that are destined for the screen. The user does not know what to type until more data arrive from the remote end; hence, the session deadlocks. Conversely, if the process reads from the remote end when there are no data for the screen, it will block and will be unable to read from the terminal. Again, deadlock would occur if the remote end were waiting for output before sending any data. There is an analogous set of problems to blocking on the writes to the screen or to the remote end. If a user has stopped output to their screen by typing the stop character, the write will block until they type the start character. In the meantime, the process cannot read from the keyboard to find out that the user wants to flush the output.

Historic UNIX systems have handled the multiplexing problem by using multiple processes that communicate through pipes or some other interprocess-communication facility, such as shared memory. This approach, however, can result in significant overhead as a result of context switching among the processes if the cost of processing input is small compared to the cost of a context switch. Furthermore, it is often more straightforward to implement applications of this sort in a single process. For these reasons, 4.4BSD provides three mechanisms that permit multiplexing I/O on descriptors: polling I/O, nonblocking I/O, and signal-driven I/O. Polling is done with the select system call, described in the next subsection. Operations on nonblocking descriptors complete immediately, partially complete an input or output operation and return a partial count, or return an error that shows that the operation could not be completed at all. Descriptors that have signaling enabled cause the associated process or process group to be notified when the I/O state of the descriptor changes.

There are four possible alternatives that avoid the blocking problem:

1. Set all the descriptors into nonblocking mode. The process can then try operations on each descriptor in turn, to find out which descriptors are ready to do I/O. The problem with this approach is that the process must run continuously to discover whether there is any I/O to be done.

2. Enable all descriptors of interest to signal when I/O can be done. The process can then wait for a signal to discover when it is possible to do I/O. The drawback to this approach is that signals are expensive to catch. Hence, signal-driven I/O is impractical for applications that do moderate to large amounts of I/O.

3. Have the system provide a method for asking which descriptors are capable of doing I/O. If none of the requested descriptors are ready, the system can put the process to sleep until a descriptor becomes ready. This approach avoids the problem of deadlock, because the process will be awakened whenever it is possible to do I/O, and will be told which descriptor is ready. The drawback is that the process must do two system calls per operation: one to poll for the descriptor that is ready to do I/O and another to do the operation itself.

4. Have the process notify the system of all the descriptors that it is interested in reading, then do a blocking read on that set of descriptors. When the read returns, the process is notified on which descriptor the read completed. The benefit of this approach is that the process does a single system call to specify the set of descriptors, then loops doing only reads [Accetta et al, 1986].

The first approach is available in 4.4BSD as nonblocking I/O. It typically is used for output descriptors, because the operation typically will not block. Rather than doing a select, which nearly always succeeds, followed immediately by a write, it is more efficient to try the write and revert to using select only during periods when the write returns a blocking error. The second approach is available in 4.4BSD as signal-driven I/O. It typically is used for rare events, such as for the arrival of out-of-band data on a socket. For such rare events, the cost of handling an occasional signal is lower than that of checking constantly with select to find out whether there are any pending data.

The third approach is available in 4.4BSD via the select system call. Although less efficient than the fourth approach, it is a more general interface. In addition to handling reading from multiple descriptors, it handles writes to multiple descriptors, notification of exceptional conditions, and timeout when no I/O is possible.

The select interface takes three masks of descriptors to be monitored, corresponding to interest in reading, writing, and exceptional conditions. In addition, it takes a timeout value for returning from select if none of the requested descriptors becomes ready before a specified amount of time has elapsed. The select call returns the same three masks of descriptors after modifying them to show the descriptors that are able to do reading, to do writing, or to provide an exceptional condition. If none of the descriptors has become ready in the timeout interval, select returns showing that no descriptors are ready for I/O.

Implementation of Select

The implementation of select, like that of much other kernel functionality, is divided into a generic top layer and many device- or socket-specific bottom pieces.

At the top level, select decodes the user’s request and then calls the appropriate lower-level select functions. The top level takes the following steps:

1. Copy and validate the descriptor masks for read, write, and exceptional conditions. Doing validation requires checking that each requested descriptor is currently open by the process.

2. Set the selecting flag for the process.

3. For each descriptor in each mask, poll the device by calling its select routine. If the descriptor is not able to do the requested I/O operation, the device select routine is responsible for recording that the process wants to do I/O. When I/O becomes possible for the descriptor—usually as a result of an interrupt from the underlying device—a notification must be issued for the selecting process.

4. Because the selection process may take a long time, the kernel does not want to block out I/O during the time it takes to poll all the requested descriptors. Instead, the kernel arranges to detect the occurrence of I/O that may affect the status of the descriptors being polled. When such I/O occurs, the select-notification routine, selwakeup(), clears the selecting flag. If the top-level select code finds that the selecting flag for the process has been cleared while it has been doing the polling, and it has not found any descriptors that are ready to do an operation, then the top level knows that the polling results are incomplete and must be repeated starting at step 2. The other condition that requires the polling to be repeated is a collision. Collisions arise when multiple processes attempt to select on the same descriptor at the same time. Because the select routines have only enough space to record a single process identifier, they cannot track multiple processes that need to be awakened when I/O is possible. In such rare instances, all processes that are selecting must be awakened.

5. If no descriptors are ready and the select specified a timeout, the kernel posts a timeout for the requested amount of time. The process goes to sleep, giving the address of the kernel global variable selwait. Normally, a descriptor will become ready and the process will be notified by selwakeup(). When the process is awakened, it repeats the polling process and returns the available descriptors. If none of the descriptors become ready before the timer expires, the process returns with a timed-out error and an empty list of available descriptors.

Each of the low-level polling routines in the terminal drivers and the network protocols follows roughly the same set of steps. A piece of the select routine for a terminal driver is shown in Fig. 6.5. The steps involved in a device select routine are as follows:

1. The socket or device select entry is called with flag of FREAD, FWRITE, or 0 (exceptional condition). The example in Fig. 6.5 shows the FREAD case; the others cases are similar.

2. The poll returns success if the requested operation is possible. In Fig. 6.5, it is possible to read a character if the number of unread characters is greater than zero. In addition, if the carrier has dropped, it is possible to get a read error. A return from select does not necessarily mean that there are data to read; rather, it means that a read will not block.

3. If the requested operation is not possible, the process identifier is recorded with the socket or device for later notification. In Fig. 6.5, the recording is done by the selrecord() routine. That routine first checks to see whether the current process was the one that was recorded previously for this record; if it was, then no further action is needed. The second if statement checks for a collision. The first part of the conjunction checks to see whether any process identifier is recorded already. If there is none, then there is no collision. If there is a process identifier recorded, it may remain from an earlier call on select by a process that is no longer selecting because one of its other descriptors became ready. If that process is still selecting, it will be sleeping on sel-wait (when it is sleeping, the address of the sleep event is stored in p_wchan). If it is sleeping on some other event, its p_wchan will have a value different from that of selwait. If it is running, its p_wchan will be zero. If it is not sleeping on selwait, there is no collision, and the process identifier is saved in si_pid.

Figure 6.5 Select code to check for data to read in a terminal driver.

4. If multiple processes are selecting on the same socket or device, a collision is recorded for the socket or device, because the structure has only enough space for a single process identifier. In Fig. 6.5, a collision occurs when the second if statement in the selrecord() function is true. There is a tty structure for each terminal line (or pseudoterminal) on the machine. Normally, only one process at a time is selecting to read from the terminal, so collisions are rare.

Selecting processes must be notified when I/O becomes possible. The steps involved in a status change awakening a process are as follows:

1. The device or socket detects a change in status. Status changes normally occur because of an interrupt (e.g., a character coming in from a keyboard or a packet arriving from the network).

2. Selwakeup() is called with a pointer to the selinfo structure used by sel-record() to record the process identifier, and with a flag showing whether a collision occurred.

3. If the process is sleeping on selwait, it is made runnable (or is marked ready, if it is stopped). If the process is sleeping on some event other than selwait, it is not made runnable. A spurious call to selwakeup() can occur when the process returns from select to begin processing one descriptor and then another descriptor on which it had been selecting also becomes ready.

4. If the process has its selecting flag set, the flag is cleared so that the kernel will know that its polling results are invalid and must be recomputed.

5. If a collision has occurred, all sleepers on selwait are awakened to rescan to see whether one of their descriptors became ready. Awakening all selecting processes is necessary because the selrecord() routine could not record all the processes that needed to be awakened. Hence, it has to wake up all processes that could possibly have been interested. Empirically, collisions occur infrequently. If they were a frequent occurrence, it would be worthwhile to store multiple process identifiers in the selinfo structure.

Movement of Data Inside the Kernel

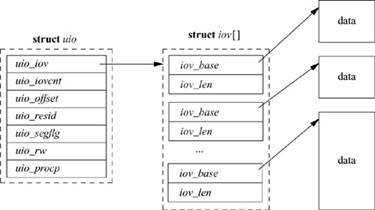

Within the kernel, I/O data are described by an array of vectors. Each I/O vector or iovec has a base address and a length. The I/O vectors are identical to the I/O vectors used by the readv and writev system calls.

The kernel maintains another structure, called a uio structure, that holds additional information about the I/O operation. A sample uio structure is shown in Fig. 6.6; it contains

• A pointer to the iovec array

• The number of elements in the iovec array

• The file offset at which the operation should start

• The sum of the lengths of the I/O vectors

• A flag showing whether the source and destination are both within the kernel, or whether the source and destination are split between the user and the kernel

• A flag showing whether the data are being copied from the uio structure to the kernel (UIO_WRITE)or from the kernel to the uio structure (UIO_READ)

• A pointer to the process whose data area is described by the uio structure (the pointer is NULL if the uio structure describes an area within the kernel)

All I/O within the kernel is described with iovec and uio structures. System calls such as read and write that are not passed an iovec create a uio to describe their arguments; this uio structure is passed to the lower levels of the kernel to specify the parameters of an I/O operation. Eventually, the uio structure reaches the part of the kernel responsible for moving the data to or from the process address space: the filesystem, the network, or a device driver. In general, these parts of the kernel do not interpret uio structures directly. Instead, they arrange a kernel buffer to hold the data, then use uiomove() to copy the data to or from the buffer or buffers described by the uio structure. The uiomove() routine is called with a pointer to a kernel data area, a data count, and a uio structure. As it moves data, it updates the counters and pointers of the iovec and uio structures by a corresponding amount. If the kernel buffer is not as large as the areas described by the uio structure, the uio structure will point to the part of the process address space just beyond the location completed most recently. Thus, while servicing a request, the kernel may call uiomove() multiple times, each time giving a pointer to a new kernel buffer for the next block of data.

Character device drivers that do not copy data from the process generally do not interpret the uio structure. Instead, there is one low-level kernel routine that arranges a direct transfer to or from the address space of the process. Here, a separate I/O operation is done for each iovec element, calling back to the driver with one piece at a time.

Historic UNIX systems used global variables in the user area to describe I/O. This approach has several problems. The lower levels of the kernel are not reentrant, because there is exactly one context to describe an I/O operation. The system cannot do scatter-gather I/O, since there is only a single base and size variable per process. Finally, the bottom half of the kernel cannot do I/O, because it does not have a user area.

The one part of the 4.4BSD kernel that does not use uio structures is the block-device drivers. The decision not to change these interfaces to use uio structures was largely pragmatic. The developers would have had to change many drivers. The existing buffer interface was already decoupled from the user structure; hence, the interface was already reentrant and could be used by the bottom half of the kernel. The only gain was to allow scatter-gather I/O. The kernel does not need scatter-gather operations on block devices, however, and user operations on block devices are done through the buffer cache.

6.5 The Virtual-Filesystem Interface

In 4.3BSD, the file entries directly referenced the local filesystem inode. An inode is a data structure that describes the contents of a file; it is more fully described in Section 7.2. This approach worked fine when there was a single filesystem implementation. However, with the advent of multiple filesystem types, the architecture had to be generalized. The new architecture had to support importing of filesystems from other machines including other machines that were running different operating systems.

One alternative would have been to connect the multiple filesystems into the system as different file types. However, this approach would have required massive restructuring of the internal workings of the system, because current directories, references to executables, and several other interfaces used inodes instead of file entries as their point of reference. Thus, it was easier and more logical to add a new object-oriented layer to the system below the file entry and above the inode. This new layer was first implemented by Sun Microsystems, which called it the virtual-node, or vnode, layer. Interfaces in the system that had referred previously to inodes were changed to reference generic vnodes. A vnode used by a local filesystem would refer to an inode. A vnode used by a remote filesystem would refer to a protocol control block that described the location and naming information necessary to access the remote file.

Contents of a Vnode

The vnode is an extensible object-oriented interface. It contains information that is generically useful independent of the underlying filesystem object that it represents. The information stored in a vnode includes the following:

• Flags are used for locking the vnode and identifying generic attributes. An example generic attribute is a flag to show that a vnode represents an object that is the root of a filesystem.

• The various reference counts include the number of file entries that are open for reading and/or writing that reference the vnode, the number of file entries that are open for writing that reference the vnode, and the number of pages and buffers that are associated with the vnode.

• A pointer to the mount structure describes the filesystem that contains the object represented by the vnode.

• Various information is used to do file read-ahead.

• A reference to an NFS lease is included; see Section 9.3.

• A reference to state about special devices, sockets, and FIFOsis included.

• There is a pointer to the set of vnode operations defined for the object. These operations are described in the next subsection.

• A pointer to private information needed for the underlying object is included. For the local filesystem, this pointer will reference an inode; for NFS, it will reference an nfsnode.

• The type of the underlying object (e.g., regular file, directory, character device, etc.) is given. The type information is not strictly necessary, since a vnode client could always call a vnode operation to get the type of the underlying object. However, because the type often is needed, the type of underlying objects does not change, and it takes time to call through the vnode interface, the object type is cached in the vnode.

• There are clean and dirty buffers associated with the vnode. Each valid buffer in the system is identified by its associated vnode and the starting offset of its data within the object that the vnode represents. All the buffers that have been modified, but have not yet been written back, are stored on their vnode dirty-buffer list. All buffers that have not been modified, or have been written back since they were last modified, are stored on their vnode clean list. Having all the dirty buffers for a vnode grouped onto a single list makes the cost of doing an fsync system call to flush all the dirty blocks associated with a file proportional to the amount of dirty data. In 4.3BSD, the cost was proportional to the smaller of the size of the file or the size of the buffer pool. The list of clean buffers is used to free buffers when a file is deleted. Since the file will never be read again, the kernel can immediately cancel any pending I/O on its dirty buffers, and reclaim all its clean and dirty buffers and place them at the head of the buffer free list, ready for immediate reuse.

• A count is kept of the number of buffer write operations in progress. To speed the flushing of dirty data, the kernel does this operation by doing asynchronous writes on all the dirty buffers at once. For local filesystems, this simultaneous push causes all the buffers to be put into the disk queue, so that they can be sorted into an optimal order to minimize seeking. For remote filesystems, this simultaneous push causes all the data to be presented to the network at once, so that it can maximize their throughput. System calls that cannot return until the data are on stable store (such as fsync) can sleep on the count of pending output operations, waiting for the count to reach zero.

The position of vnodes within the system was shown in Fig. 6.1. The vnode itself is connected into several other structures within the kernel, as shown in Fig. 6.7. Each mounted filesystem within the kernel is represented by a generic mount structure that includes a pointer to a filesystem-specific control block. All the vnodes associated with a specific mount point are linked together on a list headed by this generic mount structure. Thus, when it is doing a sync system call for a filesystem, the kernel can traverse this list to visit all the files active within that filesystem. Also shown in the figure are the lists of clean and dirty buffers associated with each vnode. Finally, there is a free list that links together all the vnodes in the system that are not being used actively. The free list is used when a filesystem needs to allocate a new vnode, so that the latter can open a new file; see Section 6.4.

Vnode Operations

Vnodes are designed as an object-oriented interface. Thus, the kernel manipulates them by passing requests to the underlying object through a set of defined operations. Because of the many varied filesystems that are supported in 4.4BSD, the set of operations defined for vnodes is both large and extensible. Unlike the original Sun Microsystems vnode implementation, that in 4.4BSD allows dynamic addition of vnode operations at system boot time. As part of the booting process, each filesystem registers the set of vnode operations that it is able to support. The kernel then builds a table that lists the union of all operations supported by any filesystem. From that table, it builds an operations vector for each filesystem. Supported operations are filled in with the entry point registered by the filesystem. Filesystems may opt to have unsupported operations filled in with either a default routine (typically a routine to bypass the operation to the next lower layer; see Section 6.7), or a routine that returns the characteristic error “operation not supported” [Heidemann & Popek, 1994].

Figure 6.7 Vnode linkages. D—dirty buffer; C—clean buffer.

In 4.3BSD, the local filesystem code provided both the semantics of the hierarchical filesystem naming and the details of the on-disk storage management. These functions are only loosely related. To enable experimentation with other disk-storage techniques without having to reproduce the entire naming semantics, 4.4BSD splits the naming and storage code into separate modules. This split is evident at the vnode layer, where there are a set of operations defined for hierarchical filesystem operations and a separate set of operations defined for storage of variable-sized objects using a flat name space. About 60 percent of the traditional filesystem code became the name-space management, and the remaining 40 percent became the code implementing the on-disk file storage. The naming scheme and its vnode operations are described in Chapter 7. The disk-storage scheme and its vnode operations are explained in Chapter 8.

Pathname Translation

The translation of a pathname requires a series of interactions between the vnode interface and the underlying filesystems. The pathname-translation process proceeds as follows:

1. The pathname to be translated is copied in from the user process or, for a remote filesystem request, is extracted from the network buffer.

2. The starting point of the pathname is determined as either the root directory or the current directory (see Section 2.7). The vnode for the appropriate directory becomes the lookup directory used in the next step.

3. The vnode layer calls the filesystem-specific lookup() operation, and passes to that operation the remaining components of the pathname and the current lookup directory. Typically, the underlying filesystem will search the lookup directory for the next component of the pathname and will return the resulting vnode (or an error if the name does not exist).

4. If an error is returned, the top level returns the error. If the pathname has been exhausted, the pathname lookup is done, and the returned vnode is the result of the lookup. If the pathname has not been exhausted, and the returned vnode is not a directory, then the vnode layer returns the “not a directory” error. If there are no errors, the top layer checks to see whether the returned directory is a mount point for another filesystem. If it is, then the lookup directory becomes the mounted filesystem; otherwise, the lookup directory becomes the vnode returned by the lower layer. The lookup then iterates with step 3.

Although it may seem inefficient to call through the vnode interface for each pathname component, doing so usually is necessary. The reason is that the underlying filesystem does not know which directories are being used as mount points. Since a mount point will redirect the lookup to a new filesystem, it is important that the current filesystem not proceed past a mounted directory. Although it might be possible for a local filesystem to be knowledgeable about which directories are mount points, it is nearly impossible for a server to know which of the directories within its exported filesystems are being used as mount points by its clients. Consequently, the conservative approach of traversing only a single pathname component per lookup() call is used. There are a few instances where a filesystem will know that there are no further mount points in the remaining path, and will traverse the rest of the pathname. An example is crossing into a portal, described in Section 6.7.

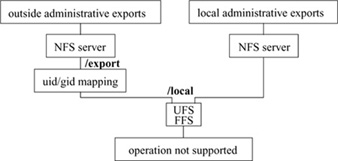

Exported Filesystem Services

The vnode interface has a set of services that the kernel exports from all the filesystems supported under the interface. The first of these is the ability to support the update of generic mount options. These options include the following:

Do not execute any files on the filesystem. This option is often used when a server exports binaries for a different architecture that cannot be executed on the server itself. The kernel will even refuse to execute shell scripts; if a shell script is to be run, its interpreter must be invoked explicitly. |

|

nosuid |

Do not honor the set-user-id or set-group-id flags for any executables on the filesystem. This option is useful when a filesystem of unknown origin is mounted. |

nodev |

Do not allow any special devices on the filesystem to be opened. This option is often used when a server exports device directories for a different architecture. The values of the major and minor numbers are nonsensical on the server. |

Together, these options allow reasonably secure mounting of untrusted or foreign filesystems. It is not necessary to unmount and remount the filesystem to change these flags; they may be changed while a filesystem is mounted. In addition, a filesystem that is mounted read-only can be upgraded to allow writing. Conversely, a filesystem that allows writing may be downgraded to read-only provided that no files are open for modification. The system administrator can forcibly downgrade the filesystem to read-only by requesting that any files open for writing have their access revoked.