9

Compression

9.1 Introduction to compression

Compression, bit rate reduction and data reduction are all terms which mean basically the same thing in this context. In essence the same (or nearly the same) information is carried using a smaller quantity or rate of data. It should be pointed out that, in audio, compression traditionally means a process in which the dynamic range of the sound is reduced. Provided the context is clear, the two meanings can co-exist without a great deal of confusion.

There are several reasons why compression techniques are popular:

(a) Compression extends the playing time of storage devices such as file servers.

(b) Compression allows miniaturization. With less data to store, the same playing time is obtained with smaller hardware. This is the approach used in the DV video tape format, DVD and MiniDisc.

(c) Tolerances can be relaxed. With less data to record, storage density can be reduced making equipment which is more resistant to adverse environments and which requires less maintenance.

(d) In communication systems, compression allows a reduction in bandwidth, hence the use in digital television broadcasting. Where the communication is billed by the bit, compression will result in a reduction in cost. Compression may also make possible some process which would be impracticable without it, such as Internet video.

(e) If a given bandwidth is available to an uncompressed signal, compression allows faster than real-time transmission in the same bandwidth.

(f) If a given bandwidth is available, compression allows a better-quality signal in the same bandwidth.

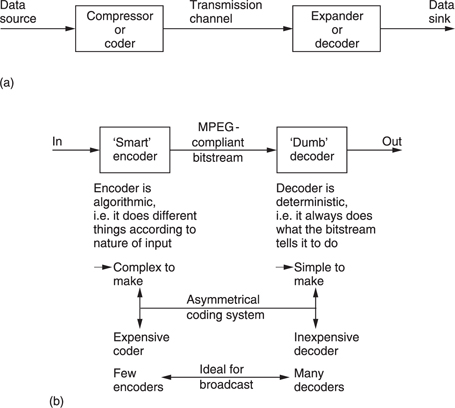

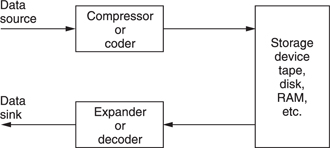

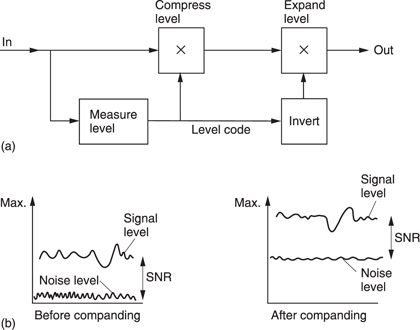

Compression is summarized in Figure 9.1. It will be seen in (a) that the data rate is reduced at source by the compressor. The compressed data are then passed through a communication channel and returned to the original rate by the expander. The ratio between the source data rate and the channel data rate is called the compression factor. The term coding gain is also used. Sometimes a compressor and expander in series are referred to as a compander. The compressor may equally well be referred to as a coder and the expander a decoder in which case the tandem pair may be called a codec.
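The definition of compression factor given above can be put as a one-line calculation. The following is only a minimal sketch; the function name and the example data rates are assumptions chosen for illustration and do not come from Figure 9.1.

```python
def compression_factor(source_rate_bps, channel_rate_bps):
    """Ratio between the source data rate and the channel data rate."""
    if channel_rate_bps <= 0:
        raise ValueError("channel rate must be positive")
    return source_rate_bps / channel_rate_bps


# Assumed example rates: a 270 Mbit/s source carried in a 6 Mbit/s channel.
factor = compression_factor(270e6, 6e6)
print(f"compression factor {factor:.0f}:1")
```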

In audio and video compression, where the encoder is more complex than the decoder, the system is said to be asymmetrical, as in Figure 9.1(b). The encoder needs to be algorithmic or adaptive whereas the decoder is ‘dumb’ and carries out fixed actions. This is advantageous in applications such as broadcasting where the number of expensive complex encoders is small but the number of simple inexpensive decoders is large. In point-to-point applications or in recorders the advantage of asymmetrical coding is not so great.

Although there are many different coding techniques, all of them fall into one or other of two categories: lossless and lossy. In lossless coding, the data from the expander are identical bit-for-bit with the original source data. The so-called ‘stacker’ programs which increase the apparent capacity of disk drives in personal computers use lossless codecs. Clearly with computer programs the corruption of a single bit can be catastrophic. Lossless coding is generally restricted to compression factors of around 2:1.

It is important to appreciate that a lossless coder cannot guarantee a particular compression factor and the communications link or recorder used with it must be able to function with the variable output data rate. Source data which result in poor compression factors on a given codec are described as difficult. It should be pointed out that the difficulty is often a function of the codec. In other words data which one codec finds difficult may not be found difficult by another. Lossless codecs can be included in bit-error-rate testing schemes. It is also possible to cascade or concatenate lossless codecs without any special precautions.

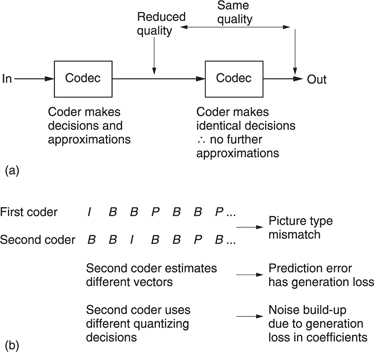

In lossy coding, data from the expander are not identical bit-for-bit with the source data and as a result comparing the input with the output is bound to reveal differences. Lossy codecs are not suitable for computer data, but are used in audio and video as they allow greater compression factors than lossless codecs. Successful lossy codecs are those in which the errors are arranged so that a human viewer or listener finds them subjectively difficult to detect. Thus lossy codecs must be based on an understanding of psychoacoustic and psychovisual perception and are often called perceptive codes.

In perceptive coding, the greater the compression factor required, the more accurately must the human senses be modelled. Perceptive coders can be forced to operate at a fixed compression factor. This is convenient for practical transmission applications where a fixed data rate is easier to handle than a variable rate. The result of a fixed compression factor is that the subjective quality can vary with the ‘difficulty’ of the input material. Perceptive codecs should not be concatenated indiscriminately especially if they use different algorithms. As the reconstructed signal from a perceptive codec is not bit-for-bit accurate, clearly such a codec cannot be included in any bit error rate testing system as the coding differences would be indistinguishable from real errors.

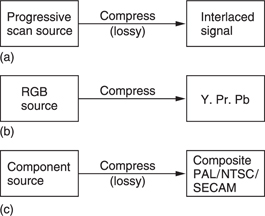

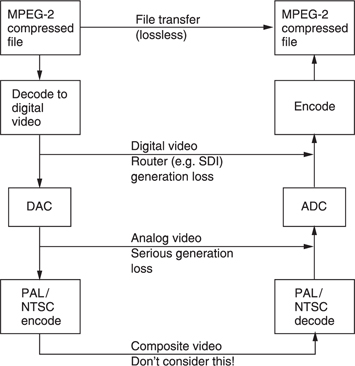

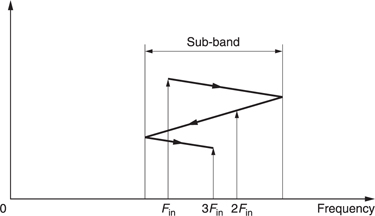

Although the adoption of digital techniques to images is recent, compression itself is as old as television. Figure 9.2 shows some of the compression techniques used in traditional television systems.

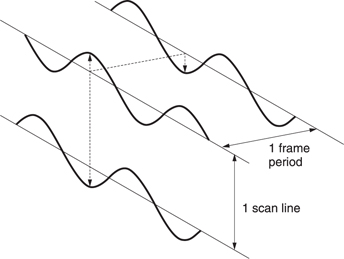

One of the oldest techniques is interlace which has been used in analog television from the very beginning as a primitive way of reducing bandwidth. As was seen in Chapter 7, interlace is not without its problems, particularly in motion rendering. MPEG supports interlace simply because legacy interlaced signals exist and there is a requirement to compress them. This should not be taken to mean that it is a good idea.

The generation of colour difference signals from RGB in video represents an application of perceptive coding. The human visual system (HVS) sees no change in quality although the bandwidth of the colour difference signals is reduced. This is because human perception of detail in colour changes is much less than in brightness changes. This approach is sensibly retained in MPEG.

Composite video systems such as PAL, NTSC and SECAM are all analog compression schemes which embed a subcarrier in the luminance signal so that colour pictures are available in the same bandwidth as monochrome. In comparison with a progressive scan RGB picture, interlaced composite video has a compression factor of 6:1.

In a sense MPEG can be considered to be a modern digital equivalent of analog composite video as it has most of the same attributes. For example, the eight-field sequence of a PAL subcarrier which makes editing difficult has its equivalent in the GOP (group of pictures) of MPEG [1].

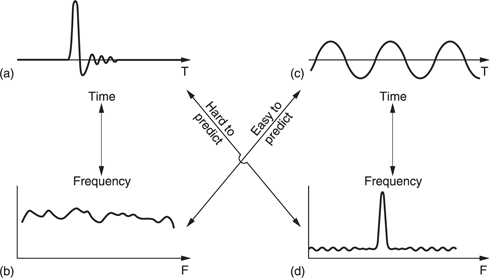

In a PCM digital system the bit rate is the product of the sampling rate and the number of bits in each sample and this is generally constant. Nevertheless the information rate of a real signal varies. In all real signals, part of the signal is obvious from what has gone before or what may come later and a suitable receiver can predict that part so that only the true information actually has to be sent. If the characteristics of a predicting receiver are known, the transmitter can omit parts of the message in the knowledge that the receiver has the ability to re-create it. Thus all encoders must contain a model of the decoder.
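The idea that the transmitter can omit what the receiver is able to predict can be sketched with a very simple differential coder. The predictor used here (repeat the previous sample) and all the names are assumptions made for illustration; the point is only that encoder and decoder share the same prediction rule, so the encoder effectively contains a model of the decoder.

```python
def predict(history):
    """Hypothetical predictor shared by both ends: repeat the last value."""
    return history[-1] if history else 0


def encode(samples):
    """Send only the difference between each sample and its prediction."""
    history, residuals = [], []
    for sample in samples:
        residuals.append(sample - predict(history))
        history.append(sample)  # what the decoder will have rebuilt
    return residuals


def decode(residuals):
    """Rebuild each sample by adding the residual to the same prediction."""
    history = []
    for residual in residuals:
        history.append(predict(history) + residual)
    return history


samples = [100, 101, 103, 104, 104, 105, 107, 108]
residuals = encode(samples)
print(residuals)  # [100, 1, 2, 1, 0, 1, 2, 1]
assert decode(residuals) == samples
```

After the first value, the residuals are small numbers which could be described with far fewer bits than the original samples.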

One definition of information is that it is the unpredictable or surprising element of data. Newspapers are a good example of information because they only mention items which are surprising. Newspapers never carry items about individuals who have not been involved in an accident as this is the normal case. Consequently the phrase ‘no news is good news’ is remarkably true because if an information channel exists but nothing has been sent then it is most likely that nothing remarkable has happened.

The difference between the information rate and the overall bit rate is known as the redundancy. Compression systems are designed to eliminate as much of that redundancy as practicable or perhaps affordable. One way in which this can be done is to exploit statistical predictability in signals. The information content or entropy of a sample is a function of how different it is from the predicted value. Most signals have some degree of predictability. A sine wave is highly predictable, because all cycles look the same. According to Shannon's theory, any signal which is totally predictable carries no information. In the case of the sine wave this is clear because it represents a single frequency and so has no bandwidth.
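The relationship between bit rate, entropy and redundancy can be illustrated numerically. The sketch below estimates entropy from symbol frequencies alone, which is a simplifying assumption: it ignores the dependence between successive samples, so it is only a rough stand-in for the predictability discussed above. The function names are hypothetical.

```python
import math
from collections import Counter


def entropy_bits_per_symbol(symbols):
    """Estimate entropy from how often each symbol value occurs."""
    counts = Counter(symbols)
    total = len(symbols)
    return sum((n / total) * math.log2(total / n) for n in counts.values())


def redundancy_bits_per_symbol(symbols, bits_per_symbol):
    """Redundancy is the bit rate actually used minus the information rate."""
    return bits_per_symbol - entropy_bits_per_symbol(symbols)


constant = [7] * 16        # totally predictable: carries no information
varied = [0, 1, 2, 3] * 4  # four equally likely values

print(entropy_bits_per_symbol(constant))        # 0.0
print(entropy_bits_per_symbol(varied))          # 2.0
print(redundancy_bits_per_symbol(varied, 8))    # 6.0
```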

At the opposite extreme a signal such as noise is completely unpredictable and as a result all codecs find noise difficult. There are two consequences of this characteristic. First, a codec which is designed using the statistics of real material should not be tested with random noise because it is not a representative test. Second, a codec which performs well with clean source material may perform badly with source material containing superimposed noise. Most practical compression units require some form of preprocessing before the compression stage proper and appropriate noise reduction should be incorporated into the preprocessing if noisy signals are anticipated. It will also be necessary to restrict the degree of compression applied to noisy signals.

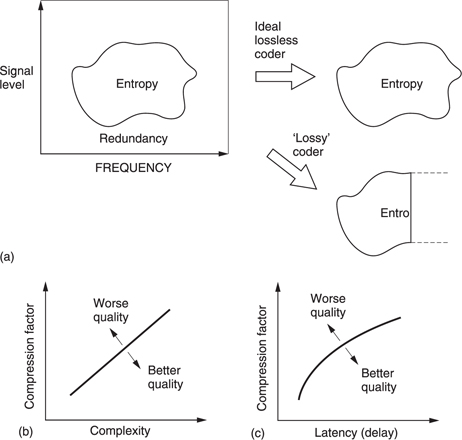

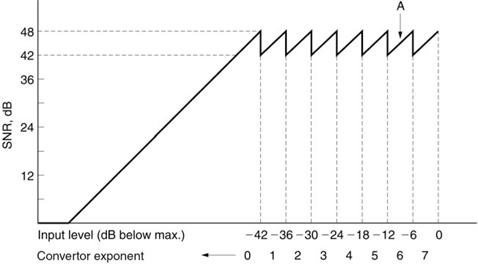

All real signals fall part-way between the extremes of total predictability and total unpredictability or noisiness. If the bandwidth (set by the sampling rate) and the dynamic range (set by the wordlength) of the transmission system are used to delineate an area, this sets a limit on the information capacity of the system. Figure 9.3(a) shows that most real signals occupy only part of that area. The signal may not contain all frequencies, or it may not have full dynamics at certain frequencies.

Entropy can be thought of as a measure of the actual area occupied by the signal. This is the area that must be transmitted if there are to be no subjective differences or artifacts in the received signal. The remaining area is called the redundancy because it adds nothing to the information conveyed. Thus an ideal coder could be imagined which miraculously sorts out the entropy from the redundancy and sends only the former. An ideal decoder would then re-create the original impression of the information quite perfectly.

Figure 9.3 (a) A perfect coder removes only the redundancy from the input signal and results in subjectively lossless coding. If the remaining entropy is beyond the capacity of the channel some of it must be lost and the codec will then be lossy. An imperfect coder will also be lossy as it fails to keep all entropy. (b) As the compression factor rises, the complexity must also rise to maintain quality. (c) High compression factors also tend to increase latency or delay through the system.

As the ideal is approached, the coder complexity and the latency or delay both rise. Figure 9.3(b) shows how complexity increases with compression factor. This can be seen in the relative complexities of MPEG-1, 2 and 4. Figure 9.3(c) shows how increasing the codec latency can improve the compression factor. Obviously we would have to provide a channel which could accept whatever entropy the coder extracts in order to have transparent quality. As a result moderate coding gains which only remove redundancy need not cause artifacts and result in systems which are described as subjectively lossless.

If the channel capacity is not sufficient for that, then the coder will have to discard some of the entropy and with it useful information. Larger coding gains which remove some of the entropy must result in artifacts. It will also be seen from Figure 9.3 that an imperfect coder will fail to separate the redundancy and may discard entropy instead, resulting in artifacts at a suboptimal compression factor.

A single variable-rate transmission or recording channel is inconvenient and unpopular with channel providers because it is difficult to police. The requirement can be overcome by combining several compressed channels into one constant rate transmission in a way which flexibly allocates data rate between the channels. Provided the material is unrelated, the probability of all channels reaching peak entropy at once is very small and so those channels which are at one instant passing easy material will free up transmission capacity for those channels which are handling difficult material. This is the principle of statistical multiplexing.
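The principle of statistical multiplexing can be sketched as a rate allocator. The proportional sharing rule and the ‘demand’ figures below are assumptions made for illustration; practical multiplexers may use quite different policies.

```python
def allocate(channel_rate, demands):
    """Share a constant channel rate between programmes in proportion to
    the instantaneous difficulty (demand) of each one."""
    total = sum(demands)
    if total == 0:
        # Nothing is difficult: share the channel equally.
        return [channel_rate / len(demands)] * len(demands)
    return [channel_rate * demand / total for demand in demands]


# Assumed figures: a 24 Mbit/s multiplex and three programmes, the second
# of which is handling difficult material at this instant.
rates = allocate(24.0, [2, 6, 4])
print(rates)  # [4.0, 12.0, 8.0]
```

The total always equals the channel rate, so the channel provider sees a constant rate although each programme sees a variable one.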

Where the same type of source material is used consistently, e.g. English text, then it is possible to perform a statistical analysis on the frequency with which particular letters are utilized. Variable-length coding is used in which frequently used letters are allocated short codes and letters which occur infrequently are allocated long codes. This results in a lossless code. The well-known Morse code used for telegraphy is an example of this approach. The letter e is the most frequent in English and is sent with a single dot. An infrequent letter such as z is allocated a long complex pattern. It should be clear that codes of this kind which rely on a prior knowledge of the statistics of the signal are only effective with signals actually having those statistics. If Morse code is used with another language, the transmission becomes significantly less efficient because the statistics are quite different; the letter z, for example, is quite common in Czech.

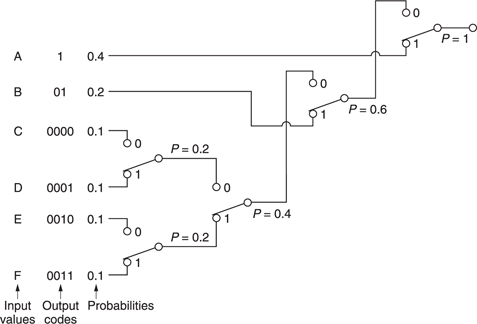

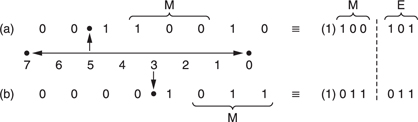

The Huffman code [2] is one which is designed for use with a data source having known statistics and shares the same principles with the Morse code. The probability of the different code values to be transmitted is studied, and the most frequent codes are arranged to be transmitted with short wordlength symbols. As the probability of a code value falls, it will be allocated longer wordlength. The Huffman code is used in conjunction with a number of compression techniques and is shown in Figure 9.4.

The input or source codes are assembled in order of descending probability. The two lowest probabilities are distinguished by a single code bit and their probabilities are combined. The process of combining probabilities is continued until unity is reached and at each stage a bit is used to distinguish the path. The bit will be a zero for the most probable path and one for the least. The compressed output is obtained by reading the bits which describe which path to take going from right to left.
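The combining process described above can be sketched in a few lines. This is a minimal illustration rather than a reproduction of Figure 9.4: the symbol probabilities are assumed values, and the function name is hypothetical. At each stage the two lowest probabilities are combined, with a one prefixed to the less probable path and a zero to the more probable.

```python
import heapq
from itertools import count


def huffman_code(probabilities):
    """Return {symbol: bit string} for a dict of symbol probabilities."""
    tiebreak = count()  # avoids comparing dicts when probabilities are equal
    heap = [(p, next(tiebreak), {sym: ""}) for sym, p in probabilities.items()]
    heapq.heapify(heap)
    if len(heap) == 1:
        return {sym: "0" for sym in probabilities}
    while len(heap) > 1:
        p_low, _, low = heapq.heappop(heap)    # least probable path
        p_high, _, high = heapq.heappop(heap)  # next least probable path
        merged = {sym: "1" + code for sym, code in low.items()}
        merged.update({sym: "0" + code for sym, code in high.items()})
        heapq.heappush(heap, (p_low + p_high, next(tiebreak), merged))
    return heap[0][2]


# Assumed source statistics, chosen only for illustration.
probabilities = {"A": 0.5, "B": 0.25, "C": 0.125, "D": 0.125}
codes = huffman_code(probabilities)
average = sum(probabilities[s] * len(codes[s]) for s in probabilities)
print(codes)
print(f"average wordlength {average} bits against 2 bits fixed-length")
```

With these statistics the most probable symbol receives a one-bit code and the two least probable receive three-bit codes, giving an average of 1.75 bits per symbol.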

In the case of computer data, there is no control over the data statistics. Data to be recorded could be instructions, images, tables, text files and so on, each having its own code value distribution. In this case a coder relying on fixed source statistics will be completely inadequate. Instead a system is used which can learn the statistics as it goes along. The Lempel-Ziv-Welch (LZW) lossless codes are in this category. These codes build up a conversion table between frequent long source data strings and short transmitted data codes at both coder and decoder and initially their compression factor is below unity as the contents of the conversion tables are transmitted along with the data. However, once the tables are established, the coding gain more than compensates for the initial loss. In some applications, a continuous analysis of the frequency of code selection is made and if a data string in the table is no longer being used with sufficient frequency it can be deselected and a more common string substituted.
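A table-building coder of this kind can be sketched as follows. This is a minimal illustration of one common arrangement of LZW, not a description of any particular product: the table starts with all single-byte strings, it is never sent as such, and the decoder rebuilds it from the code stream. Table size limits and the deselection of unused strings are omitted.

```python
def lzw_compress(data):
    """Replace repeated byte strings with integer codes, learning as it goes."""
    table = {bytes([i]): i for i in range(256)}
    current = b""
    codes = []
    for byte in data:
        candidate = current + bytes([byte])
        if candidate in table:
            current = candidate               # keep extending a known string
        else:
            codes.append(table[current])      # send the longest known string
            table[candidate] = len(table)     # learn the new, longer string
            current = bytes([byte])
    if current:
        codes.append(table[current])
    return codes


def lzw_decompress(codes):
    """Rebuild the same table from the code stream and recover the data."""
    if not codes:
        return b""
    table = {i: bytes([i]) for i in range(256)}
    previous = table[codes[0]]
    output = [previous]
    for code in codes[1:]:
        if code in table:
            entry = table[code]
        elif code == len(table):
            entry = previous + previous[:1]   # string defined by this very code
        else:
            raise ValueError("invalid LZW code")
        output.append(entry)
        table[len(table)] = previous + entry[:1]
        previous = entry
    return b"".join(output)


text = b"TOBEORNOTTOBEORTOBEORNOT"
codes = lzw_compress(text)
assert lzw_decompress(codes) == text
print(len(text), "bytes in,", len(codes), "codes out")
```

On very short inputs there is little or no gain because the table has not yet learned any long strings; the advantage appears as repetition accumulates.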

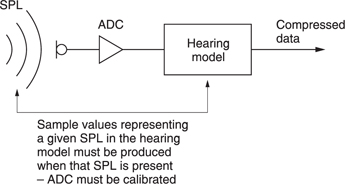

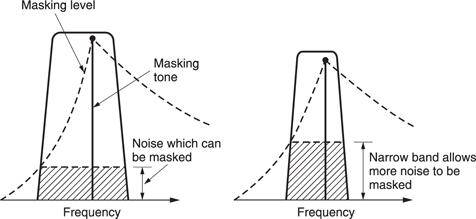

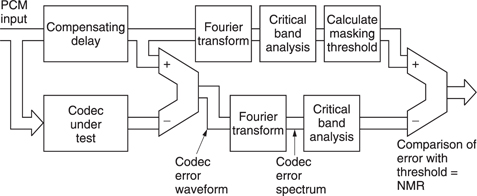

Lossless codes are less common for audio and video coding where perceptive codes are permissible. The perceptive codes often obtain a coding gain by shortening the wordlength of the data representing the signal waveform. This must increase the noise level and the trick is to ensure that the resultant noise is placed at frequencies where human senses are least able to perceive it. As a result although the received signal is measurably different from the source data, it can appear the same to the human listener or viewer at moderate compression factors. As these codes rely on the characteristics of human sight and hearing, they can only be fully tested subjectively.

The compression factor of such codes can be set at will by choosing the wordlength of the compressed data. Whilst mild compression will be undetectable, with greater compression factors, artifacts become noticeable. Figure 9.3 shows that this is inevitable from entropy considerations.
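The trade-off between wordlength and noise can be demonstrated with a crude requantizer. The sketch below assumes a 16-bit source and a test sine wave of arbitrary frequency; it shows only that the noise level rises as the wordlength is shortened, and makes no attempt to place the noise where it is least perceptible, which is what a real perceptive coder would do.

```python
import math


def requantize(samples, in_bits, out_bits):
    """Shorten the wordlength by rounding each sample to a coarser step."""
    step = 2 ** (in_bits - out_bits)
    return [round(s / step) * step for s in samples]


def snr_db(original, processed):
    """Signal-to-noise ratio of the processed signal, in decibels."""
    signal = sum(x * x for x in original)
    noise = sum((x - y) ** 2 for x, y in zip(original, processed))
    return math.inf if noise == 0 else 10 * math.log10(signal / noise)


# Assumed test signal: a sine wave held in 16-bit words.
source = [round(20000 * math.sin(2 * math.pi * 0.0123 * n)) for n in range(4096)]

for out_bits in (12, 8, 4):
    shortened = requantize(source, 16, out_bits)
    print(f"{out_bits:2d} bits: factor {16 / out_bits:.1f}:1, "
          f"SNR {snr_db(source, shortened):.1f} dB")
```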

9.2 Compression standards

Standards are important in compression because without a suitable decoder, compressed data are meaningless. The MPEG standards are possibly the most well known, but they are certainly not the only standards. In video recording, the DV standard is also important. There are also a number of obsolescent standards which predated MPEG, and various proprietary schemes.

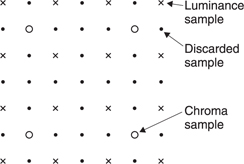

MPEG is actually an acronym for the Moving Picture Experts Group which was formed by the ISO (International Organization for Standardization) to set standards for audio and video compression and transmission. The first compression standard for audio and video was MPEG-1 [3,4]. MPEG-1 was initially designed to allow pictures and sound to be carried in the standard bit rate of a Compact Disc. Achieving such a low bit rate at the time required considerable spatial and temporal subsampling of standard definition television down to what is known as SIF (source intermediate format). Figure 9.5 shows that SIF discards every other field in the input to halve the picture rate and eliminate interlace. Discarding alternate picture lines in this way halves vertical resolution and the horizontal resolution is also halved to match. Chroma data are further downsampled. MPEG-1 was of limited quality and application and the subsequent MPEG-2 standard was considerably broader in scope and of wider appeal. For example, MPEG-2 supports interlace and a much wider range of picture sizes and bit rates. The MPEG-4 standard uses the tools of MPEG-2 but adds to them to allow even higher compression factors. MPEG standards are largely backward compatible such that an MPEG-2 decoder can understand MPEG-1 data and an MPEG-4 decoder can understand both MPEG-2 and MPEG-1 data.

Figure 9.5 In MPEG-1, the input format is known as SIF (source intermediate format) and is created from interlaced video by discarding alternate fields and downsampling those that remain

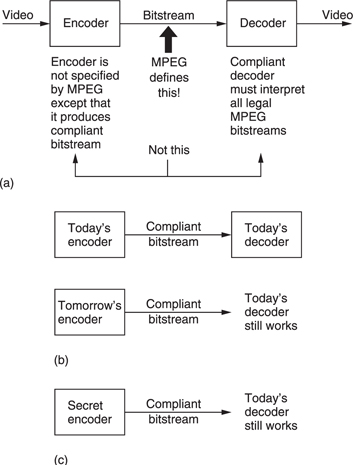

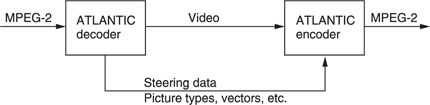

The approach of the ISO to standardization in MPEG is novel because it is not the encoder which is standardized. Figure 9.6(a) shows that instead the way in which a decoder shall interpret the bitstream is defined. A decoder which can successfully interpret the bitstream is said to be compliant. Figure 9.6(b) shows that the advantage of standardizing the decoder is that over time encoding algorithms can improve yet compliant decoders will continue to function with them.

Figure 9.6 (a) MPEG defines the protocol of the bitstream between encoder and decoder. The decoder is defined by implication, the encoder is left very much to the designer. (b) This approach allows future encoders of better performance to remain compatible with existing decoders. (c) This approach also allows an encoder to produce a standard bitstream while its technical operation remains a commercial secret.

Manufacturers can supply encoders using algorithms which are proprietary and their details do not need to be published. A useful result is that there can be competition between different encoder designs which means that better designs will evolve. The user will have greater choice because different levels of cost and complexity can exist in a range of coders yet a compliant decoder will operate with them all.

MPEG is, however, much more than a compression scheme as it also standardizes the protocol and syntax under which it is possible to combine or multiplex audio data with video data to produce a digital equivalent of a television program. Many such programs can be combined in a single multiplex and MPEG defines the way in which such multiplexes can be created and transported. The definitions include the metadata which decoders require to demultiplex correctly and which users will need to locate programs of interest. As with all video systems there is a requirement for synchronizing or genlocking and this is particularly complex when a multiplex is assembled from many signals which are not necessarily synchronized to one another.

The applications of audio and video compression are limitless and the ISO has done well to provide standards which are appropriate to the wide range of possible compression products.

MPEG embraces video pictures from the tiny screen of a videophone to the high-definition images needed for electronic cinema. Audio coding stretches from speech-grade mono to multichannel surround sound.

Figure 9.7 shows the use of a codec with a recorder. The playing time of the medium is extended in proportion to the compression factor. In the case of tapes, the access time is improved because the length of tape needed for a given recording is reduced and so it can be rewound more quickly.

Figure 9.7 Compression can be used around a recording medium. The storage capacity may be increased or the access time reduced according to the application.

In the case of DVD (digital video disk, aka digital versatile disk) the challenge was to store an entire movie on one 12 cm disk. The storage density available with today's optical disk technology is such that recording of conventional uncompressed video would be out of the question.

In communications, the cost of data links is often roughly proportional to the data rate and so there is simple economic pressure to use a high compression factor. However, it should be borne in mind that implementing the codec also has a cost which rises with compression factor and so a degree of compromise will be inevitable. It should also be appreciated that the cost of communications is a moving target. The adoption of optical fibres has made bandwidth essentially limitless between major centres, leaving the bandwidth issue primarily in the so-called ‘last mile’ from such centres to the home. In the case of Video-On-Demand, technology such as ADSL (see Chapter 12) exists to convey data to the home on existing copper telephone lines, but the available bit rate is insufficient for entertainment-grade video without compression.

In workstations designed for the editing of audio and/or video, the source material is stored on hard disks for rapid access. Whilst top-grade systems may function without compression, many systems use compression to offset the high cost of disk storage. When a workstation is used for off-line editing, a high compression factor can be used and artifacts will be visible in the picture.

This is of no consequence as the picture is only seen by the editor who uses it to make an EDL (edit decision list) which is no more than a list of actions and the timecodes at which they occur. The original uncompressed material is then conformed to the EDL to obtain high-quality edited work. When on-line editing is being performed, the output of the workstation is the finished product and clearly a lower compression factor will have to be used.

Perhaps it is in broadcasting where the use of compression will have its greatest impact. There is only one electromagnetic spectrum and pressure from other services such as cellular telephones makes efficient use of bandwidth mandatory. Analog television broadcasting is an old technology and makes very inefficient use of bandwidth. Its replacement by a compressed digital transmission will be inevitable for the practical reason that the bandwidth is needed elsewhere.

Fortunately in broadcasting there is a mass market for decoders and these can be implemented as low-cost integrated circuits. Fewer encoders are needed and so it is less important if these are expensive. Whilst the cost of digital storage goes down year on year, the cost of electromagnetic spectrum goes up. Consequently in the future the pressure to use compression in recording will ease whereas the pressure to use it in radio communications will increase.

9.3 Profiles, levels and layers

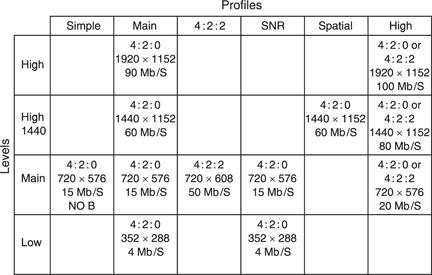

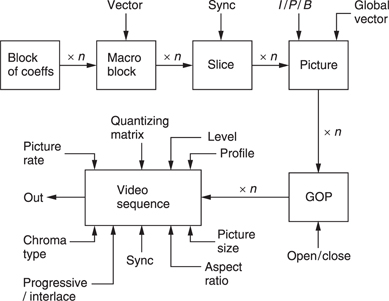

MPEG-2 has too many applications to solve with a single standard and so it is subdivided into Profiles and Levels. Put simply a Profile describes a degree of complexity whereas a Level describes the picture size or resolution which goes with that Profile. Not all Levels are supported at all Profiles. Figure 9.8 shows the available combinations. In principle there are 24 of these, but not all have been defined. An MPEG decoder having a given Profile and Level must also be able to decode lower profiles and levels.

The simple profile does not support bidirectional coding and so only I and P pictures will be output. This reduces the coding and decoding delay and allows simpler hardware. The simple profile has only been defined at Main level (SP@ML).

The Main Profile is designed for a large proportion of uses. The low level uses a low-resolution input having only 352 pixels per line. The majority of broadcast applications will require the MP@ML (Main Profile at Main Level) subset of MPEG which supports SDTV (standard definition television).

The High-1440 level is a high-definition scheme which doubles the definition compared to main level. The high level not only doubles the resolution but maintains that resolution with 16:9 format by increasing the number of horizontal samples from 1440 to 1920.

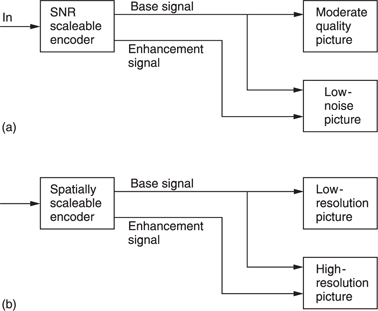

In compression systems using spatial transforms and requantizing it is possible to produce scaleable signals. A scaleable process is one in which the input results in a main signal and a ‘helper’ signal. The main signal can be decoded alone to give a picture of a certain quality, but if the information from the helper signal is added some aspect of the quality can be improved.

Figure 9.9(a) shows that in a conventional MPEG coder, by heavily requantizing coefficients a picture with moderate signal-to-noise ratio results. If, however, that picture is locally decoded and subtracted pixel by pixel from the original, a ‘quantizing noise’ picture would result. This can be compressed and transmitted as the helper signal. A simple decoder decodes only the main ‘noisy’ bitstream, but a more complex decoder can decode both bitstreams and combine them to produce a low-noise picture. This is the principle of SNR scaleability.
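The main and helper signals of SNR scaleability can be sketched on a row of pixel values. This is a simplified illustration under stated assumptions: requantizing is applied directly to pixels rather than to transform coefficients, the step sizes are arbitrary, and the pixel values are invented.

```python
def requantize(values, step):
    """Round each value to the nearest multiple of the step size."""
    return [round(v / step) * step for v in values]


def max_error(reference, picture):
    return max(abs(r - p) for r, p in zip(reference, picture))


original = [52, 55, 61, 66, 70, 61, 64, 73]      # assumed pixel values

main = requantize(original, 16)                   # heavily requantized picture
noise = [o - m for o, m in zip(original, main)]   # 'quantizing noise' picture
helper = requantize(noise, 4)                     # helper, more finely coded

simple_decoder = main                             # decodes main bitstream only
complex_decoder = [m + h for m, h in zip(main, helper)]

print("main only  :", simple_decoder, "max error", max_error(original, main))
print("main+helper:", complex_decoder,
      "max error", max_error(original, complex_decoder))
```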

As an alternative, Figure 9.9(b) shows that by coding only the lower spatial frequencies in a HDTV picture a main bitstream can be made which an SDTV receiver can decode. If the lower-definition picture is locally decoded and subtracted from the original picture, a ‘definition-enhancing’ picture would result. This can be coded into a helper signal. A suitable decoder could combine the main and helper signals to re-create the HDTV picture. This is the principle of spatial scaleability.
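Spatial scaleability can be sketched in one dimension on a single picture line. The filters used here (averaging pixel pairs to downsample, repeating pixels to upsample) are crude assumptions chosen for brevity, and the helper signal is left uncompressed so that the reconstruction is exact.

```python
def downsample(line):
    """Halve the resolution by averaging adjacent pixel pairs."""
    return [(line[i] + line[i + 1]) // 2 for i in range(0, len(line) - 1, 2)]


def upsample(line):
    """Return to full resolution by repeating each pixel."""
    return [pixel for pixel in line for _ in range(2)]


hd_line = [10, 12, 40, 44, 90, 80, 30, 30]  # assumed high-definition line

main = downsample(hd_line)                   # lower-definition main signal
locally_decoded = upsample(main)
helper = [h - p for h, p in zip(hd_line, locally_decoded)]

# A simple decoder displays `main`; a suitable decoder adds the helper.
rebuilt = [p + d for p, d in zip(upsample(main), helper)]
assert rebuilt == hd_line
print(main)    # [11, 42, 85, 30]
print(helper)  # [-1, 1, -2, 2, 5, -5, 0, 0]
```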

The High profile supports both SNR and spatial scaleability as well as allowing the option of 4:2:2 sampling (see section 7.14).

The 4:2:2 profile has been developed for improved compatibility with existing digital television production equipment. This allows 4:2:2 working without requiring the additional complexity of using the high profile. For example, a HP@ML decoder must support SNR scaleability which is not a requirement for production.

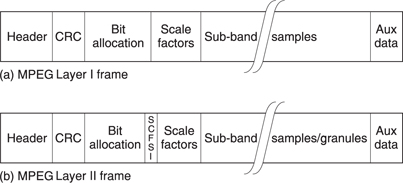

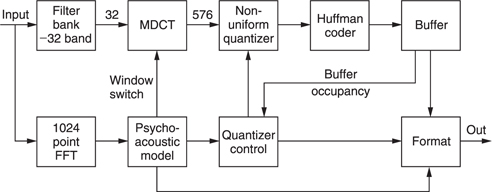

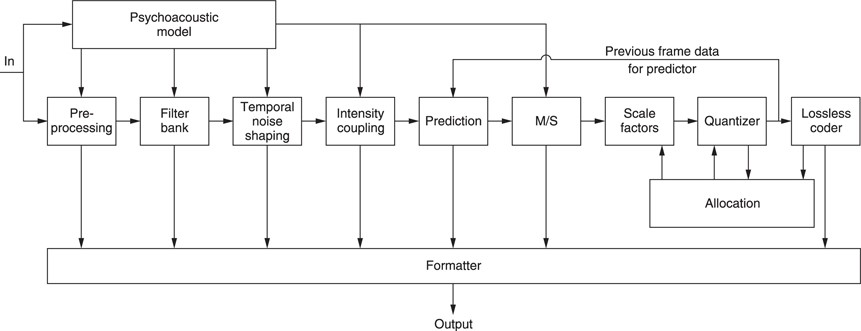

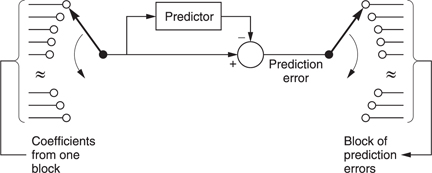

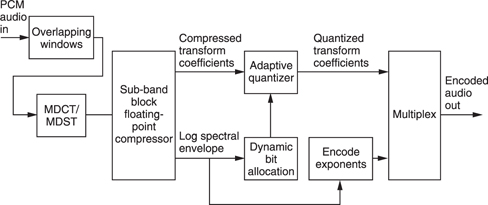

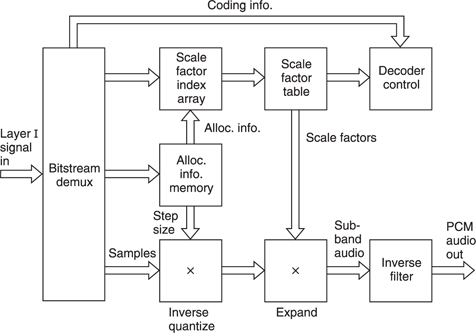

In MPEG audio the different requirements are met using layers. Layer I is the least complex, but gives the lowest compression factor, Layer II is more complex, but maintains quality at lower bit rates, whereas Layer III is extremely complex and is optimized for very high compression factors. Subsequently MPEG-AAC (advanced audio coding) was developed which allows better audio quality through increased complexity. However, AAC is not compatible with the earlier systems.

9.4 Spatial and temporal redundancy in MPEG

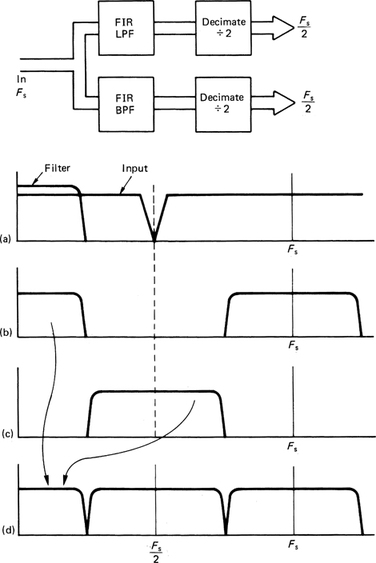

Video signals exist in four dimensions: these are the attributes of the sample, the horizontal and vertical spatial axes and the time axis. Compression can be applied in any or all of those four dimensions. MPEG assumes eight-bit colour difference signals as the input, requiring rounding if the source is ten-bit. The sampling rate of the colour signals is less than that of the luminance. This is done by downsampling the colour samples horizontally and generally vertically as well. Essentially an MPEG system has three parallel simultaneous channels, one for luminance and two colour difference, which after coding are multiplexed into a single bitstream.
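The saving obtained by downsampling the colour difference signals can be worked out with simple arithmetic. The picture size of 720 x 576 is an assumption made for this example, as is the function name.

```python
def samples_per_picture(width, height, chroma_h_div, chroma_v_div):
    """Luminance samples plus two colour difference channels, each
    downsampled by the given horizontal and vertical factors."""
    luminance = width * height
    colour_difference = 2 * (width // chroma_h_div) * (height // chroma_v_div)
    return luminance + colour_difference


WIDTH, HEIGHT = 720, 576  # assumed picture size

no_downsampling = samples_per_picture(WIDTH, HEIGHT, 1, 1)
horizontal_only = samples_per_picture(WIDTH, HEIGHT, 2, 1)
both_axes = samples_per_picture(WIDTH, HEIGHT, 2, 2)

print(no_downsampling, horizontal_only, both_axes)  # 1244160 829440 622080
```

Halving the colour sampling on both axes halves the total number of samples before any further compression is applied.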

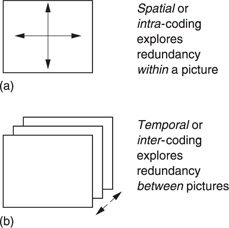

Figure 9.10 (a) Spatial or intra-coding works on individual images. (b) Temporal or inter-coding works on successive images.

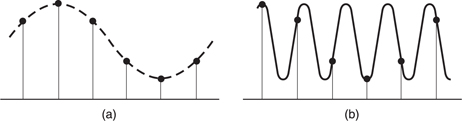

Figure 9.10(a) shows that when individual pictures are compressed without reference to any other pictures, the time axis does not enter the process which is therefore described as intra-coded (intra = within) compression. The term spatial coding will also be found. It is an advantage of intra-coded video that there is no restriction to the editing which can be carried out on the picture sequence. As a result compressed VTRs intended for production use spatial coding. Cut editing may take place on the compressed data directly if necessary. As spatial coding treats each picture independently, it can employ certain techniques developed for the compression of still pictures. The ISO JPEG (Joint Photographic Experts Group) compression standards [5,6] are in this category. Where a succession of JPEG coded images are used for television, the term ‘Motion JPEG’ will be found.

Greater compression factors can be obtained by taking account of the redundancy from one picture to the next. This involves the time axis, as Figure 9.10(b) shows, and the process is known as inter-coded (inter = between) or temporal compression.

Temporal coding allows a higher compression factor, but has the disadvantage that an individual picture may exist only in terms of the differences from a previous picture. Clearly editing must be undertaken with caution and arbitrary cuts simply cannot be performed on the MPEG bitstream. If a previous picture is removed by an edit, the difference data will then be insufficient to re-create the current picture.

Intra-coding works in three dimensions on the horizontal and vertical spatial axes and on the sample values. Analysis of typical television pictures reveals that whilst there is a high spatial frequency content due to detailed areas of the picture, there is a relatively small amount of energy at such frequencies. Often pictures contain sizeable areas in which the same or similar pixel values exist. This gives rise to low spatial frequencies. The average brightness of the picture results in a substantial zero frequency component. Simply omitting the high-frequency components is unacceptable as this causes an obvious softening of the picture.

A coding gain can be obtained by taking advantage of the fact that the amplitude of the spatial components falls with frequency. It is also possible to take advantage of the eye's reduced sensitivity to noise in high spatial frequencies. If the spatial frequency spectrum is divided into frequency bands the high-frequency bands can be described by fewer bits not only because their amplitudes are smaller but also because more noise can be tolerated. The wavelet transform and the discrete cosine transform used in MPEG allow two-dimensional pictures to be described in the frequency domain. These were discussed in Chapter 3.
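The way a transform concentrates picture energy at low frequencies, so that high-frequency coefficients can be coarsely requantized, can be sketched in one dimension. This is a simplified illustration: a real coder transforms two-dimensional blocks, and the pixel values and the step sizes below are assumptions, not values from any standard.

```python
import math

N = 8  # block length


def scale(k):
    return math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)


def dct(block):
    """Orthonormal discrete cosine transform of an N-sample block."""
    return [scale(k) * sum(x * math.cos(math.pi * (n + 0.5) * k / N)
                           for n, x in enumerate(block))
            for k in range(N)]


def idct(coefficients):
    """Inverse transform: back from frequency coefficients to samples."""
    return [sum(scale(k) * c * math.cos(math.pi * (n + 0.5) * k / N)
                for k, c in enumerate(coefficients))
            for n in range(N)]


block = [100, 102, 104, 107, 110, 112, 115, 117]  # assumed smooth picture area
steps = [4 + 4 * k for k in range(N)]             # coarser at high frequency

quantized = [round(c / s) for c, s in zip(dct(block), steps)]
rebuilt = idct([q * s for q, s in zip(quantized, steps)])

print(quantized)                      # mostly zeros at high frequencies
print([round(v) for v in rebuilt])    # close to the original block
```

Most of the requantized coefficients are zero, so very little data need be sent, yet the rebuilt block differs from the original by only a small amount.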

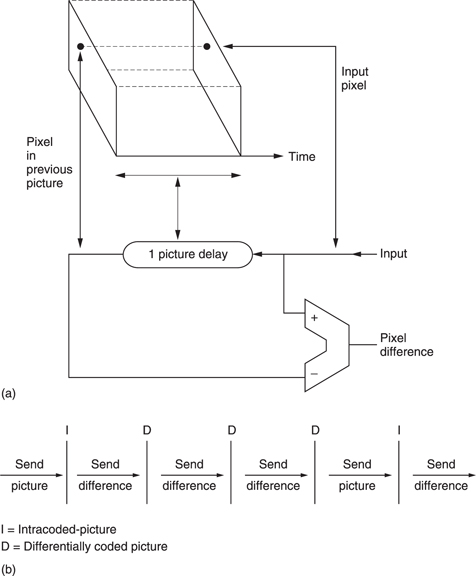

Inter-coding takes further advantage of the similarities between successive pictures in real material. Instead of sending information for each picture separately, inter-coders will send the difference between the previous picture and the current picture in a form of differential coding. Figure 9.11 shows the principle. A picture store is required at the coder to allow comparison to be made between successive pictures and a similar store is required at the decoder to make the previous picture available. The difference data may be treated as a picture itself and subjected to some form of transform-based spatial compression.
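The differential principle can be illustrated with a short Python sketch. It assumes, purely for illustration, that each picture is a flat list of sample values; the function names `encode_differences` and `decode_differences` are hypothetical, and no spatial compression of the difference data is attempted.

```python
def encode_differences(pictures):
    """Send the first picture in absolute form, then picture-to-picture differences."""
    store = None  # picture store at the coder
    stream = []
    for picture in pictures:
        if store is None:
            stream.append(list(picture))
        else:
            stream.append([cur - prev for cur, prev in zip(picture, store)])
        store = list(picture)
    return stream


def decode_differences(stream):
    """Rebuild each picture by adding the difference data to the stored previous picture."""
    store = None  # matching picture store at the decoder
    pictures = []
    for data in stream:
        if store is None:
            picture = list(data)
        else:
            picture = [prev + diff for prev, diff in zip(store, data)]
        pictures.append(picture)
        store = picture
    return pictures
```

Where successive pictures are similar, most of the difference values are zero or small, which is what makes them cheap to code. The sketch also makes the weakness plain: each decoded picture depends on every one before it.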

The simple system of Figure 9.11(a) is of limited use as in the case of a transmission error, every subsequent picture would be affected. Channel switching in a television set would also be impossible. In practical systems a modification is required. One approach is the so-called ‘leaky predictor’ in which the next picture is predicted from a limited number of previous pictures rather than from an indefinite number. As a result errors cannot propagate indefinitely. The approach used in MPEG is that periodically some absolute picture data are transmitted in place of difference data.

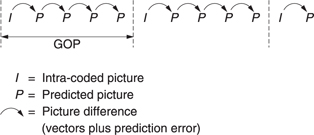

Figure 9.11(b) shows that absolute picture data, known as I or intra pictures are interleaved with pictures which are created using difference data, known as P or predicted pictures. The I pictures require a large amount of data, whereas the P pictures require less data. As a result the instantaneous data rate varies dramatically and buffering has to be used to allow a constant transmission rate. The leaky predictor needs less buffering as the compression factor does not change so much from picture to picture.

The I picture and all the P pictures prior to the next I picture are called a group of pictures (GOP). For a high compression factor, a large number of P pictures should be present between I pictures, making a long GOP. However, a long GOP delays recovery from a transmission error. The compressed bitstream can only be edited at I pictures as shown.

In the case of moving objects, although their appearance may not change greatly from picture to picture, the data representing them on a fixed sampling grid will change and so large differences will be generated between successive pictures. It is a great advantage if the effect of motion can be removed from difference data so that they reflect only the changes in appearance of a moving object since a much greater coding gain can then be obtained. This is the objective of motion compensation.

In real television program material objects move around before a fixed camera or the camera itself moves. Motion compensation is a process which effectively measures motion of objects from one picture to the next so that it can allow for that motion when looking for redundancy between pictures. Chapter 7 showed that moving pictures can be expressed in a three-dimensional space which results from the screen area moving along the time axis. In the case of still objects, the only motion is along the time axis. However, when an object moves, it does so along the optic flow axis which is not parallel to the time axis. The optic flow axis joins the same point on a moving object as it takes on various screen positions.

It will be clear that the data values representing a moving object change with respect to the time axis. However, looking along the optic flow axis the appearance of an object changes only if it deforms, moves into shadow or rotates. For simple translational motions the data representing an object are highly redundant with respect to the optic flow axis. Thus if the optic flow axis can be located, coding gain can be obtained in the presence of motion.

A motion-compensated coder works as follows. An I picture is sent, but is also locally stored so that it can be compared with the next input picture to find motion vectors for various areas of the picture. The I picture is then shifted according to these vectors to cancel inter-picture motion. The resultant predicted picture is compared with the actual picture to produce a prediction error also called a residual. The prediction error is transmitted with the motion vectors. At the receiver the original I picture is also held in a memory. It is shifted according to the transmitted motion vectors to create the predicted picture and then the prediction error is added to it to re-create the original. When a picture is encoded in this way MPEG calls it a P picture.
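A minimal sketch of this process for a single block is given below. It assumes pictures held as lists of rows, whole-pixel vectors, and an exhaustive search which minimizes the sum of absolute differences; that estimator is only one possibility and all of the names are illustrative.

```python
def fetch_block(ref, top, left, size, vec):
    """Read a size x size block from ref, displaced by vec = (dy, dx)."""
    dy, dx = vec
    return [[ref[top + dy + r][left + dx + c] for c in range(size)]
            for r in range(size)]


def sad(a, b):
    """Sum of absolute differences between two equally sized blocks."""
    return sum(abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb))


def estimate_vector(ref, cur_block, top, left, size, search=2):
    """Exhaustive search over +/- search pixels (an assumed estimator)."""
    height, width = len(ref), len(ref[0])
    best = None
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            inside = (0 <= top + dy and top + dy + size <= height and
                      0 <= left + dx and left + dx + size <= width)
            if not inside:
                continue
            cost = sad(fetch_block(ref, top, left, size, (dy, dx)), cur_block)
            if best is None or cost < best[0]:
                best = (cost, (dy, dx))
    return best[1]


def encode_p_block(ref, cur, top, left, size):
    """Return the vector and the prediction error (residual) for one block."""
    cur_block = [row[left:left + size] for row in cur[top:top + size]]
    vec = estimate_vector(ref, cur_block, top, left, size)
    predicted = fetch_block(ref, top, left, size, vec)
    residual = [[c - p for c, p in zip(rc, rp)]
                for rc, rp in zip(cur_block, predicted)]
    return vec, residual


def decode_p_block(ref, top, left, size, vec, residual):
    """Shift the stored reference by the vector, then add the prediction error."""
    predicted = fetch_block(ref, top, left, size, vec)
    return [[p + e for p, e in zip(rp, re)]
            for rp, re in zip(predicted, residual)]
```

Because the residual is always added at the decoder, the reconstruction is exact in this sketch whichever vector the estimator chooses; a poor vector simply produces a larger residual.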

The concept of sending a prediction error is a useful approach because it allows both the motion estimation and compensation to be imperfect.

A good motion-compensation system will send just the right amount of vector data. With insufficient vector data, the prediction error will be large, but transmission of excess vector data will also cause the bit rate to rise. There will be an optimum balance which minimizes the sum of the prediction error data and the vector data.

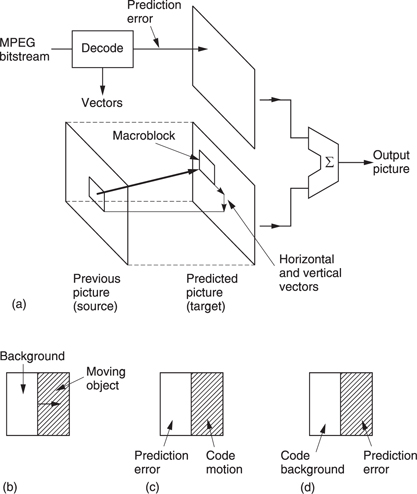

In MPEG-2 the balance is obtained by dividing the screen into areas called macroblocks which are 16 luminance pixels square. Each macroblock is steered by a vector. The boundaries of a macroblock are fixed and so the vector does not move the macroblock. Instead the vector tells the decoder where to look in another frame to find pixel data to fetch to the macroblock. Figure 9.12(a) shows this concept. The shifting process is generally done by modifying the read address of a RAM using the vector. This can shift by one-pixel steps. MPEG-2 vectors have half-pixel resolution so it is necessary to interpolate between pixels from RAM to obtain half-pixel shifted values.
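The address modification and half-pixel interpolation might be sketched as follows. The vector is assumed to be expressed in half-pixel units, the interpolation is a simple rounded average of the neighbouring pixels, and the block is assumed to lie far enough inside the picture that no read falls off the edge. The function name and the rounding rule are assumptions made for illustration.

```python
def fetch_half_pel(ref, top, left, size, vec):
    """Fetch a block from ref using a vector (vy, vx) in half-pixel units."""
    vy, vx = vec
    iy, fy = vy >> 1, vy & 1  # whole-pixel part and half-pixel flag, vertical
    ix, fx = vx >> 1, vx & 1  # whole-pixel part and half-pixel flag, horizontal
    block = []
    for r in range(size):
        row = []
        for c in range(size):
            y, x = top + iy + r, left + ix + c  # modified read address
            a = ref[y][x]
            b = ref[y][x + fx]
            d = ref[y + fy][x]
            e = ref[y + fy][x + fx]
            # With both flags clear this returns a; with one flag set it is
            # the rounded mean of two pixels; with both, of four pixels.
            row.append((a + b + d + e + 2) // 4)
        block.append(row)
    return block
```

A vector of (0, 1) thus reads values midway between horizontally adjacent pixels, while (0, 2) is an ordinary one-pixel shift.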

Figure 9.12 (a) In motion compensation, pixel data are brought to a fixed macroblock in the target picture from a variety of places in another picture. (b) Where only part of a macroblock is moving, motion compensation is non-ideal. The motion can be coded (c), causing a prediction error in the background, or the background can be coded (d) causing a prediction error in the moving object.

Real moving objects will not coincide with macroblocks and so the motion compensation will not be ideal but the prediction error makes up for any shortcomings. Figure 9.12(b) shows the case where the boundary of a moving object bisects a macroblock. If the system measures the moving part of the macroblock and sends a vector, the decoder will shift the entire block making the stationary part wrong. If no vector is sent, the moving part will be wrong. Both approaches are legal in MPEG-2 because the prediction error sorts out the incorrect values. An intelligent coder might try both approaches to see which required the least prediction error data.

The prediction error concept also allows the use of simple but inaccurate motion estimators in low-cost systems. The greater prediction error data are handled using a higher bit rate. On the other hand, if a precision motion estimator is available, a very high compression factor may be achieved because the prediction error data are minimized. MPEG-2 does not specify how motion is to be measured; it simply defines how a decoder will interpret the vectors. Encoder designers are free to use any motion-estimation system provided that the right vector protocol is created. Chapter 3 contrasted a number of motion-estimation techniques.

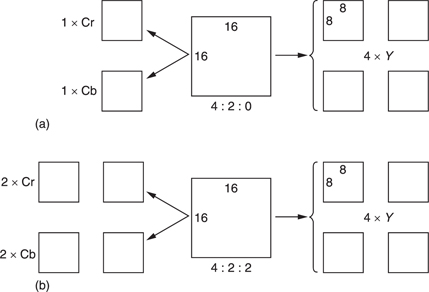

Figure 9.13(a) shows that a macroblock contains both luminance and colour difference data at different resolutions. Most of the MPEG-2 Profiles use a 4:2:0 structure which means that the colour is downsampled by a factor of two in both axes. Thus in a 16 × 16 pixel block, there are only 8 × 8 colour difference sampling sites. MPEG-2 is based upon the 8 × 8 DCT (see section 3.9) and so the 16 × 16 block is the screen area which contains an 8 × 8 colour difference sampling block. Thus in 4:2:0 in each macroblock there are four luminance DCT blocks, one R - Y DCT block and one B - Y DCT block, all steered by the same vector.

Figure 9.13 The structure of a macroblock. (A macroblock is the screen area steered by one vector.) (a) In 4:2:0, there are two chroma DCT blocks per macroblock whereas in 4:2:2 (b) there are four. 4:2:2 needs 33 per cent more data than 4:2:0.

In the 4:2:2 Profile of MPEG-2, shown in Figure 9.13(b), the chroma is not downsampled vertically, and so there is twice as much chroma data in each macroblock which is otherwise substantially the same.
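The block counts, and the 33 per cent figure quoted in the caption of Figure 9.13, follow from simple arithmetic, which the sketch below reproduces. The function name and the table of subsampling factors are illustrative assumptions.

```python
MACROBLOCK = 16  # luminance pixels per side
DCT_BLOCK = 8    # samples per side of one DCT block

# (horizontal, vertical) chroma downsampling factors
SUBSAMPLING = {"4:2:0": (2, 2), "4:2:2": (2, 1)}


def dct_blocks_per_macroblock(chroma_format):
    """Count luminance plus colour difference DCT blocks in one macroblock."""
    h_div, v_div = SUBSAMPLING[chroma_format]
    luma = (MACROBLOCK // DCT_BLOCK) ** 2
    chroma_each = ((MACROBLOCK // h_div) // DCT_BLOCK) * ((MACROBLOCK // v_div) // DCT_BLOCK)
    return luma + 2 * chroma_each  # two colour difference signals


blocks_420 = dct_blocks_per_macroblock("4:2:0")  # 4 luminance + 2 chroma = 6
blocks_422 = dct_blocks_per_macroblock("4:2:2")  # 4 luminance + 4 chroma = 8
extra_percent = round((blocks_422 / blocks_420 - 1) * 100)  # 33
```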

In MPEG-4 the motion-compensation process is taken further. The macroblock approach of MPEG-2 will always result in prediction errors because object boundaries seldom coincide with macroblock boundaries. MPEG-4 overcomes this limitation because its motion compensation is object based. Essentially in MPEG-4 it is possible to describe an arbitrarily shaped object which can then be steered with vectors.

9.5 I and P coding

Predictive (P) coding cannot be used indefinitely, as it is prone to error propagation. A further problem is that it becomes impossible to decode the transmission if reception begins part-way through. In real video signals, cuts or edits can be present across which there is little redundancy and which make motion estimators throw up their hands.

In the absence of redundancy over a cut, there is nothing to be done but to send the new picture information in absolute form. This is called I coding where I is an abbreviation of intra coding. As I coding needs no previous picture for decoding, then decoding can begin at I coded information.

MPEG is effectively a toolkit and there is no compulsion to use all the tools available. Thus an encoder may choose whether to use I or P coding, either once and for all or dynamically on a macroblock-by-macroblock basis.

For practical reasons, an entire frame may be encoded as I macroblocks periodically. This creates a place where the bitstream might be edited or where decoding could begin.

Figure 9.14 shows a typical application of the Simple Profile of MPEG-2. Periodically an I picture is created. Between I pictures are P pictures which are based on the picture before. These P pictures predominantly contain macroblocks having vectors and prediction errors. However, it is perfectly legal for P pictures to contain I macroblocks. This might be useful where, for example, a camera pan introduces new material at the edge of the screen which cannot be created from an earlier picture.

Figure 9.14 A Simple Profile MPEG-2 signal may contain periodic I pictures with a number of P pictures between.

Note that although what is sent is called a P picture, it is not a picture at all. It is a set of instructions to convert the previous picture into the current picture. If the previous picture is lost, decoding is impossible. An I picture together with all of the pictures before the next I picture form a Group of Pictures (GOP).

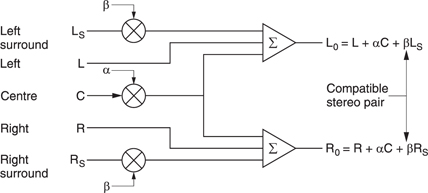

9.6 Bidirectional coding

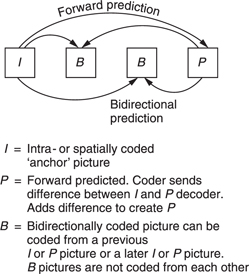

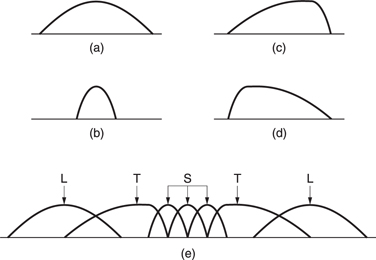

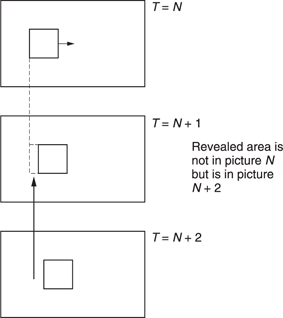

Motion-compensated predictive coding is a useful compression technique, but it does have the drawback that it can only take data from a previous picture. Where moving objects reveal a background this is completely unknown in previous pictures and forward prediction fails. However, more of the background is visible in later pictures. Figure 9.15 shows the concept. In the centre of the diagram, a moving object has revealed some background. The previous picture can contribute nothing, whereas the next picture contains all that is required.

Bidirectional coding is shown in Figure 9.16. A bidirectional or B macroblock can be created using a combination of motion compensation and the addition of a prediction error. This can be done by forward prediction from a previous picture or backward prediction from a subsequent picture. It is also possible to use an average of both forward and backward prediction. On noisy material this may result in some reduction in bit rate. The technique is also a useful way of portraying a dissolve.

Figure 9.15 In bidirectional coding the revealed background can be efficiently coded by bringing data back from a future picture.

The averaging process in MPEG-2 is a simple linear interpolation which works well when only one B picture exists between the reference pictures before and after. A larger number of B pictures would require weighted interpolation but MPEG-2 does not support this.
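The three prediction options can be compared in a short sketch. It assumes that the forward and backward predictions have already been motion compensated and are supplied as flat lists of sample values, and that the average is rounded to the nearest integer; the names and the selection criterion (smallest sum of absolute errors) are illustrative rather than anything MPEG prescribes.

```python
def predict_b(forward, backward, mode):
    """Form a B prediction from the forward and/or backward predictions."""
    if mode == "forward":
        return list(forward)
    if mode == "backward":
        return list(backward)
    # Simple linear interpolation: the rounded mean of the two predictions.
    return [(f + b + 1) // 2 for f, b in zip(forward, backward)]


def best_mode(actual, forward, backward):
    """Pick the mode giving the smallest prediction error."""
    def cost(mode):
        predicted = predict_b(forward, backward, mode)
        return sum(abs(a - p) for a, p in zip(actual, predicted))
    return min(("forward", "backward", "average"), key=cost)
```

In a dissolve, where the actual picture lies midway between the two references, the averaged prediction has the smallest error. Where background has just been revealed, only the backward prediction contains the required data and so it is selected.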

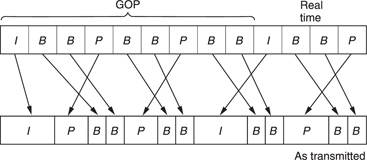

Typically two B pictures are inserted between P pictures or between I and P pictures. As can be seen, B pictures are never predicted from one another, only from I or P pictures. A typical GOP for broadcasting purposes might have the structure IBBPBBPBBPBB. Note that the last B pictures in the GOP require the I picture in the next GOP for decoding and so the GOPs are not truly independent. Independence can be obtained by creating a closed GOP which may contain B pictures but which ends with a P picture. It is also legal to have a B picture in which every macroblock is forward predicted, needing no future picture for decoding.

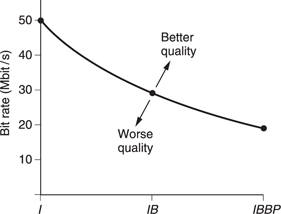

Bidirectional coding is very powerful. Figure 9.17 is a constant quality curve showing how the bit rate changes with the type of coding. On the left, only I or spatial coding is used, whereas on the right an IBBP structure is used. This means that there are two bidirectionally coded pictures in between a spatially coded picture (I) and a forward predicted picture (P). Note how for the same quality the system which only uses spatial coding needs two and a half times the bit rate that the bidirectionally coded system needs.

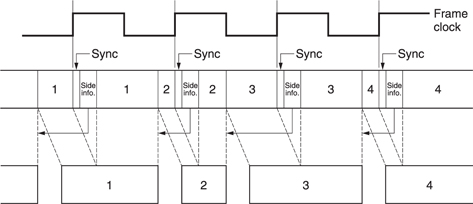

Clearly information in the future has yet to be transmitted and so is not normally available to the decoder. MPEG-2 gets around the problem by sending pictures in the wrong order. Picture reordering requires delay in the encoder and a delay in the decoder to put the order right again. Thus the overall codec delay must rise when bidirectional coding is used. This is quite consistent with Figure 9.3 which showed that as the compression factor rises the latency must also rise.

Figure 9.18 shows that although the original picture sequence is IBBPBBPBBIBB . . ., this is transmitted as IPBBPBBIBB . . . so that the future picture is already in the decoder before bidirectional decoding begins. Note that the I picture of the next GOP is actually sent before the last B pictures of the current GOP.
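The reordering can be sketched as follows. The coder holds back each B picture until the next I or P picture has been sent, and the decoder holds each I or P picture until the B pictures which precede it on the screen have been output. The function names are hypothetical and pictures are represented only by labels whose first character is the picture type.

```python
def transmission_order(display):
    """Reorder pictures so each B picture follows both of its references."""
    out, held_b = [], []
    for picture in display:
        if picture[0] == "B":
            held_b.append(picture)  # wait for the future reference picture
        else:
            out.append(picture)     # send the I or P picture first
            out.extend(held_b)      # then the B pictures which depend on it
            held_b = []
    out.extend(held_b)              # trailing B pictures with no later reference
    return out


def display_order(transmitted):
    """Undo the reordering at the decoder."""
    out, held_reference = [], None
    for picture in transmitted:
        if picture[0] == "B":
            out.append(picture)
        else:
            if held_reference is not None:
                out.append(held_reference)
            held_reference = picture
    if held_reference is not None:
        out.append(held_reference)
    return out
```

Applied to the sequence IBBPBBPBBI this gives IPBBPBBIBB, in agreement with Figure 9.18. The holding of pictures at both ends is the source of the additional codec delay.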

Figure 9.18 Comparison of pictures before and after compression showing sequence change and varying amount of data needed by each picture type. I, P, B pictures use unequal amounts of data.

Figure 9.18 also shows that the amount of data required by each picture is dramatically different. I pictures have only spatial redundancy and so need a lot of data to describe them. P pictures need fewer data because they are created by shifting the I picture with vectors and then adding a prediction error picture. B pictures need the least data of all because they can be created from I or P.

With pictures requiring a variable length of time to transmit, arriving in the wrong order, the decoder needs some help. This takes the form of picture-type flags and time stamps.

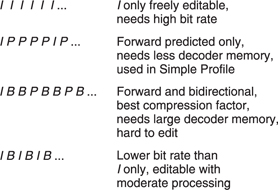

9.7 Coding applications

Figure 9.19 shows a variety of GOP structures. The simplest is the III .. sequence in which every picture is intra-coded. Pictures can be fully decoded without reference to any other pictures and so editing is straightforward. However, this approach requires about two and one half times the bit rate of a full bidirectional system. Bidirectional coding is most useful for final delivery of post-produced material either by broadcast or on prerecorded media as there is then no editing requirement. As a compromise the IBIB .. structure can be used which has some of the bit rate advantage of bidirectional coding but without too much latency. It is possible to edit an IBIB stream by performing some processing. If it is required to remove the video following a B picture, that B picture could not be decoded because it needs I pictures either side of it for bidirectional decoding. The solution is to decode the B picture first, and then re-encode it with forward prediction only from the previous I picture. The subsequent I picture can then be replaced by an edit process. Some quality loss is inevitable in this process but this is acceptable in applications such as ENG and industrial video.

Figure 9.19 Various possible GOP structures used with MPEG. See text for details.

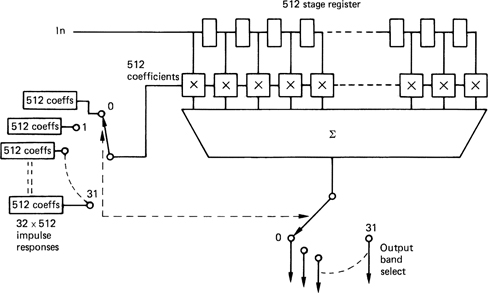

9.8 Spatial compression

Spatial compression in MPEG is used in I pictures on actual picture data and in P and B pictures on prediction error data. MPEG uses the discrete cosine transform described in section 3.7. The DCT works on blocks and in MPEG these are 8 × 8 pixels. Section 5.7 showed how the macroblocks of the motion-compensation structure are designed so they can be broken down into 8 × 8 DCT blocks. In a 4:2:0 macroblock there will be six DCT blocks whereas in a 4:2:2 macroblock there will be eight.

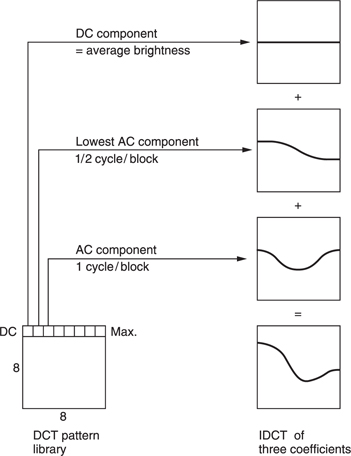

Figure 9.20 shows the table of basis functions or wave table for an 8 × 8 DCT. Adding these two-dimensional waveforms together in different proportions will give any original 8 × 8 pixel block. The coefficients of the DCT simply control the proportion of each wave which is added in the inverse transform. The top-left wave has no modulation at all because it conveys the DC component of the block. This coefficient will be a unipolar (positive only) value in the case of luminance and will typically be the largest value in the block as the spectrum of typical video signals is dominated by the DC component.

Figure 9.20 The discrete cosine transform breaks up an image area into discrete frequencies in two dimensions. The lowest frequency can be seen here at the top-left corner. Horizontal frequency increases to the right and vertical frequency increases downwards.

Increasing the DC coefficient adds a constant amount to every pixel. Moving to the right the coefficients represent increasing horizontal spatial frequencies and moving downwards the coefficients represent increasing vertical spatial frequencies. The bottom-right coefficient represents the highest diagonal frequencies in the block. All these coefficients are bipolar, where the polarity indicates whether the original spatial waveform at that frequency was inverted.

Figure 9.21 shows a one-dimensional example of an inverse transform. The DC coefficient produces a constant level throughout the pixel block. The remaining waves in the table are AC coefficients. A zero coefficient would result in no modulation, leaving the DC level unchanged. The wave next to the DC component represents the lowest frequency in the transform which is half a cycle per block. A positive coefficient would make the left side of the block brighter and the right side darker whereas a negative coefficient would do the opposite. The magnitude of the coefficient determines the amplitude of the wave which is added. Figure 9.21 also shows that the next wave has a frequency of one cycle per block, i.e. the block is made brighter at both sides and darker in the middle.

Figure 9.21 A one-dimensional inverse transform. See text for details.

Consequently an inverse DCT is no more than a process of mixing various pixel patterns from the wave table where the relative amplitudes and polarity of these patterns are controlled by the coefficients. The original transform is simply a mechanism which finds the coefficient amplitudes from the original pixel block.
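The mixing process can be demonstrated in one dimension with a few lines of Python. The sketch assumes an eight-point DCT with orthonormal scaling, which is one common convention; the function names are illustrative.

```python
import math

N = 8  # samples per block in one dimension


def basis(k):
    """The k-th wave of the one-dimensional wave table."""
    scale = math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)
    return [scale * math.cos((2 * n + 1) * k * math.pi / (2 * N)) for n in range(N)]


def forward_dct(pixels):
    """Find how much of each wave is present in the pixel block."""
    return [sum(p * w for p, w in zip(pixels, basis(k))) for k in range(N)]


def inverse_dct(coeffs):
    """Mix the waves in the proportions given by the coefficients."""
    out = [0.0] * N
    for k, coeff in enumerate(coeffs):
        wave = basis(k)
        for n in range(N):
            out[n] += coeff * wave[n]
    return out
```

With only the first AC coefficient set to a positive value, the output is positive at the left of the block and negative at the right. With only the second AC coefficient set, the output is positive at both sides and negative in the middle.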

The DCT itself achieves no compression at all. Sixty-four pixels are converted to sixty-four coefficients. However, in typical pictures, not all coefficients will have significant values; there will often be a few dominant coefficients. The coefficients representing the higher two-dimensional spatial frequencies will often be zero or of small value in large areas, due to blurring or simply plain undetailed areas before the camera.

Statistically, the further from the top-left corner of the wave table the coefficient is, the smaller will be its magnitude. Coding gain (the technical term for reduction in the number of bits needed) is achieved by transmitting the low-valued coefficients with shorter wordlengths. The zero-valued coefficients need not be transmitted at all. Thus it is not the DCT which compresses the data, it is the subsequent processing. The DCT simply expresses the data in a form which makes the subsequent processing easier.

Higher compression factors require the coefficient wordlength to be further reduced using requantizing. Coefficients are divided by some factor which increases the size of the quantizing step. The smaller number of steps which results permits coding with fewer bits, but of course with an increased quantizing error. The coefficients will be multiplied by a reciprocal factor in the decoder to return to the correct magnitude.
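Requantizing amounts to a division in the coder and a multiplication in the decoder, as the sketch below shows. It assumes integer coefficients and rounding to the nearest level, which is a simplification of what a practical coder does; the function names are illustrative.

```python
def requantize(coeffs, step):
    """Divide each coefficient by the step size, rounding to the nearest level."""
    levels = []
    for coeff in coeffs:
        magnitude = (abs(coeff) + step // 2) // step
        levels.append(magnitude if coeff >= 0 else -magnitude)
    return levels


def reconstruct(levels, step):
    """Multiply back in the decoder to return to the correct magnitude."""
    return [level * step for level in levels]
```

With a step of 8, the coefficients 236, −23, 11, −7, 3, −2, 1, 0 become the levels 30, −3, 1, −1, 0, 0, 0, 0, which need fewer bits, and are reconstructed as 240, −24, 8, −8, 0, 0, 0, 0. The quantizing error never exceeds half a step.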

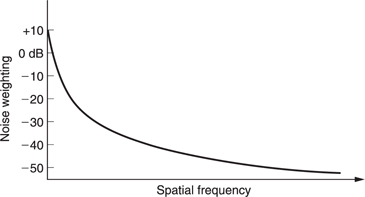

Inverse transforming a requantized coefficient means that the frequency it represents is reproduced in the output with the wrong amplitude. The difference between original and reconstructed amplitude is regarded as a noise added to the wanted data. Figure 9.22 shows that the visibility of such noise is far from uniform. The maximum sensitivity is found at DC and falls thereafter. As a result the top-left coefficient is often treated as a special case and left unchanged. It may warrant more error protection than other coefficients.

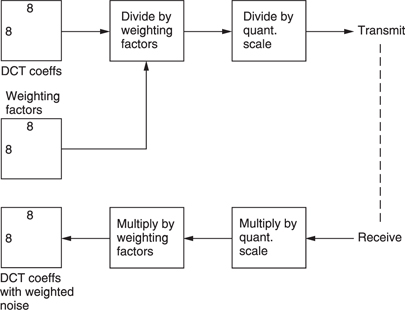

MPEG takes advantage of the falling sensitivity to noise. Prior to requantizing, each coefficient is divided by a different weighting constant as a function of its frequency. Figure 9.23 shows a typical weighting process. Naturally the decoder must have a corresponding inverse weighting. This weighting process has the effect of reducing the magnitude of high-frequency coefficients disproportionately. Clearly different weighting will be needed for colour difference data as colour is perceived differently.
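A sketch of frequency-dependent weighting follows. The weights shown are illustrative values which simply rise with frequency and should not be read as the contents of any standard table; the function names and the single `scale` parameter are likewise assumptions. One row of coefficients is used for brevity.

```python
ILLUSTRATIVE_WEIGHTS = [8, 16, 19, 22, 26, 27, 29, 34]  # assumed, rising with frequency


def round_divide(value, divisor):
    """Integer division rounded to the nearest level."""
    magnitude = (abs(value) + divisor // 2) // divisor
    return magnitude if value >= 0 else -magnitude


def encode_weighted(coeffs, weights, scale=1):
    """Divide each coefficient by its own weight times the common scale."""
    return [round_divide(c, w * scale) for c, w in zip(coeffs, weights)]


def decode_weighted(levels, weights, scale=1):
    """Apply the corresponding inverse weighting in the decoder."""
    return [level * w * scale for level, w in zip(levels, weights)]
```

If every coefficient in the row has the value 40, the levels sent are 5, 3, 2, 2, 2, 1, 1, 1. The high-frequency coefficients are reduced the most, and after inverse weighting they carry the largest errors.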

P and B pictures are decoded by adding a prediction error image to a reference image. That reference image will contain weighted noise. One purpose of the prediction error is to cancel that noise to prevent tolerance build-up. If the prediction error were also to contain weighted noise this result would not be obtained. Consequently prediction error coefficients are flat weighted.

Figure 9.23 Weighting is used to make the noise caused by requantizing different at each frequency.

When forward prediction fails, such as in the case of new material introduced in a P picture by a pan, P coding would set the vectors to zero and encode the new data entirely as an unweighted prediction error. In this case it is better to encode that material as an I macroblock because then weighting can be used and this will require fewer bits.

Requantizing increases the step size of the coefficients, but the inverse weighting in the decoder results in step sizes which increase with frequency. The larger step size increases the quantizing noise at high frequencies where it is less visible. Effectively the noise floor is shaped to match the sensitivity of the eye. The quantizing table in use at the encoder can be transmitted to the decoder periodically in the bitstream.

9.9 Scanning and run-length/variable-length coding

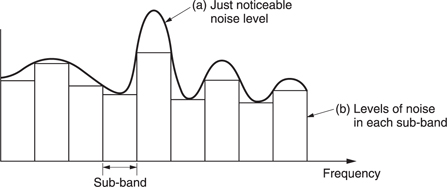

Study of the signal statistics gained from extensive analysis of real material is used to measure the probability of a given coefficient having a given value. This probability turns out to be highly non-uniform, suggesting the possibility of a variable-length encoding for the coefficient values. On average, the higher the spatial frequency, the lower the value of a coefficient will be. This means that the value of a coefficient falls as a function of its radius from the DC coefficient.

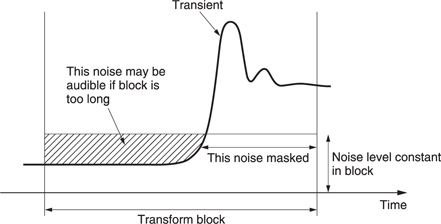

Typical material often has many coefficients which are zero valued, especially after requantizing. The distribution of these also follows a pattern. The non-zero values tend to be found in the top-left corner of the DCT block, but as the radius increases, not only do the coefficient values fall, but it becomes increasingly likely that these small coefficients will be interspersed with zero-valued coefficients. As the radius increases further it is probable that a region where all coefficients are zero will be entered.

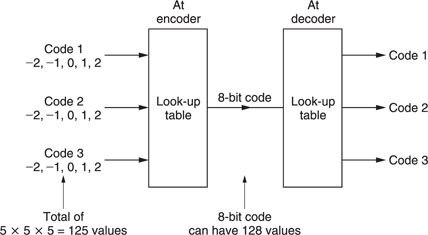

MPEG uses all these attributes of DCT coefficients when encoding a coefficient block. By sending the coefficients in an optimum order, by describing their values with Huffman coding and by using run-length encoding for the zero-valued coefficients it is possible to achieve a significant reduction in coefficient data which remains entirely lossless. Despite the complexity of this process, it does contribute to improved picture quality because for a given bit rate lossless coding of the coefficients must be better than requantizing, which is lossy. Of course, for lower bit rates both will be required.

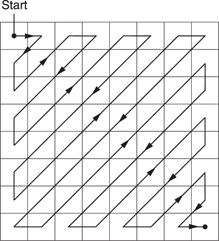

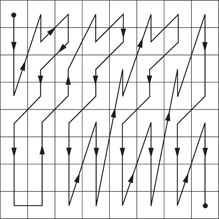

It is an advantage to scan in a sequence where the largest coefficient values are scanned first. Then the next coefficient is more likely to be zero than the previous one. With progressively scanned material, a regular zig-zag scan begins in the top-left corner and ends in the bottom-right corner as shown in Figure 9.24. Zig-zag scanning means that significant values are more likely to be transmitted first, followed by the zero values. Instead of coding these zeros, a unique ‘end of block’ (EOB) symbol is transmitted.
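The regular zig-zag order can be generated by walking the anti-diagonals of the block and reversing direction on alternate diagonals, as in the sketch below. The function names are illustrative, and positions are given as (row, column) pairs.

```python
def zigzag_order(n=8):
    """Return the (row, column) positions of an n x n block in zig-zag order."""
    order = []
    for s in range(2 * n - 1):  # s = row + column identifies one anti-diagonal
        diagonal = [(r, s - r) for r in range(n) if 0 <= s - r < n]
        if s % 2 == 0:
            diagonal.reverse()  # even diagonals run from bottom-left to top-right
        order.extend(diagonal)
    return order


def zigzag_scan(block):
    """Read a square coefficient block out in zig-zag order."""
    return [block[r][c] for r, c in zigzag_order(len(block))]
```

The scan starts (0,0), (0,1), (1,0), (2,0), (1,1), (0,2) and finishes at (7,7), so a block whose significant coefficients are clustered at the top-left is read out with those values first and a long run of zeros last.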

As the zig-zag scan approaches the last finite coefficient it is increasingly likely that some zero value coefficients will be scanned.

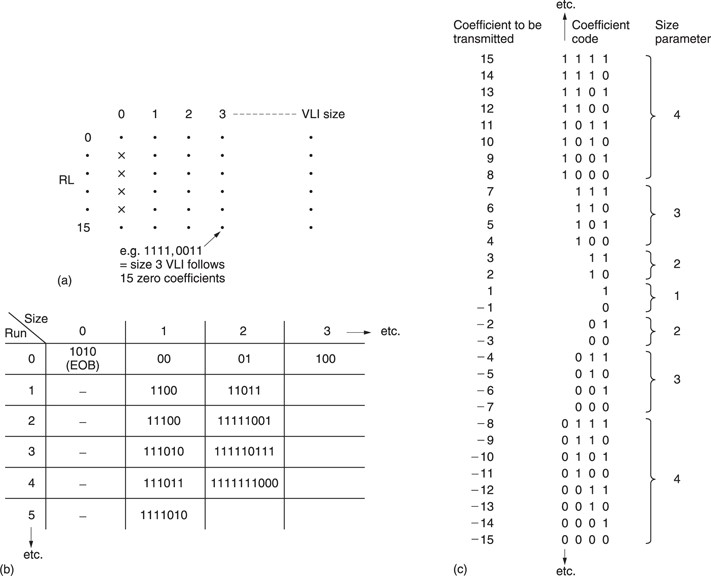

Instead of transmitting the coefficients as zeros, the zero-run-length, i.e. the number of zero-valued coefficients in the scan sequence, is encoded into the next non-zero coefficient which is itself variable-length coded. This combination of run-length and variable-length coding is known as RLC/VLC in MPEG.

The DC coefficient is handled separately because it is differentially coded and this discussion relates to the AC coefficients. Three items need to be handled for each coefficient: the zero-run-length prior to this coefficient, the wordlength and the coefficient value itself. The wordlength needs to be known by the decoder so that it can correctly parse the bitstream. The wordlength of the coefficient is expressed directly as an integer called the size.

Figure 9.25(a) shows that a two-dimensional run/size table is created. One dimension expresses the zero-run-length; the other the size. A run length of zero is obtained when adjacent coefficients are non-zero, but a code of 0/0 has no meaningful run/size interpretation and so this bit pattern is used for the end-of-block (EOB) symbol.

In the case where the zero-run-length exceeds 14, a code of 15/0 is used signifying that there are fifteen zero-valued coefficients. This is then followed by another run/size parameter whose run-length value is added to the previous fifteen.
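The formation of run/size parameters can be sketched as follows, taking the description above literally: a 15/0 code stands for fifteen zero-valued coefficients and 0/0 is the end-of-block symbol. The function names are illustrative, and each symbol is shown as a (run, size, value) tuple before any Huffman coding is applied.

```python
def size_of(value):
    """Wordlength needed for the magnitude of a non-zero coefficient."""
    return abs(value).bit_length()


def run_size_symbols(ac_coeffs):
    """Convert zig-zag ordered AC coefficients into (run, size, value) symbols."""
    last = max((i for i, c in enumerate(ac_coeffs) if c != 0), default=-1)
    symbols, run = [], 0
    for coeff in ac_coeffs[:last + 1]:
        if coeff == 0:
            run += 1
            continue
        while run > 14:
            symbols.append((15, 0, None))  # fifteen zeros, more to follow
            run -= 15
        symbols.append((run, size_of(coeff), coeff))
        run = 0
    symbols.append((0, 0, None))  # end of block
    return symbols


def expand_symbols(symbols, length=63):
    """Rebuild the coefficient sequence from the symbols."""
    out = []
    for run, size, value in symbols:
        if (run, size) == (0, 0):
            break
        if size == 0:
            out.extend([0] * 15)
        else:
            out.extend([0] * run)
            out.append(value)
    out.extend([0] * (length - len(out)))  # zeros implied by end of block
    return out
```

A sequence of 5, two zeros, −3, seventeen zeros, 1 and then zeros to the end of the block is thus described by only five symbols: 0/3, 2/2, 15/0, 2/1 and EOB.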

The run/size parameters contain redundancy because some combinations are more common than others. Figure 9.25(b) shows that each run/size value is converted to a variable-length Huffman codeword for transmission. As was shown in section 1.5, the Huffman codes are designed so that short codes are never a prefix of long codes so that the decoder can deduce the parsing by testing an increasing number of bits until a match with the look-up table is found.

Having parsed and decoded the Huffman run/size code, the decoder then knows what the coefficient wordlength will be and can correctly parse that.
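The parsing procedure might look like the following sketch. The codeword table is invented for the purpose of illustration and is not the table used by MPEG; only its prefix-free property matters here. The bitstream is represented as a string of '0' and '1' characters, and the coefficient bits are returned uninterpreted.

```python
# Hypothetical prefix-free table mapping codewords to (run, size).
RUN_SIZE_TABLE = {
    "00": (0, 1),
    "01": (0, 2),
    "100": (1, 1),
    "1010": (0, 0),  # end of block
    "1011": (2, 1),
}


def parse_block(bits, table=RUN_SIZE_TABLE):
    """Return ([(run, size, coefficient_bits), ...], bits_consumed) for one block."""
    parsed, pos = [], 0
    while True:
        # Test an increasing number of bits until a codeword matches.
        for length in range(1, len(bits) - pos + 1):
            symbol = table.get(bits[pos:pos + length])
            if symbol is not None:
                pos += length
                break
        else:
            raise ValueError("bitstream ended without a matching codeword")
        run, size = symbol
        if (run, size) == (0, 0):
            return parsed, pos
        # The size tells the parser how many coefficient bits follow.
        parsed.append((run, size, bits[pos:pos + size]))
        pos += size
```

Because no codeword is a prefix of another, the first match found is the only possible one, and the size it yields locates the start of the next codeword.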

The variable-length coefficient code has to describe a bipolar coefficient, i.e. one which can be positive or negative. Figure 9.25(c) shows that for a particular size, the coding scale has a certain gap in it. For example, all values from −7 to +7 can be sent by a size 3 code, so a size 4 code only has to send the values of −15 to −8 and +8 to +15. The coefficient code is sent as a pure binary number whose value ranges from all zeros to all ones where the maximum value is a function of the size. The number range is divided into two, the lower half of the codes specifying negative values and the upper half specifying positive.

In the case of positive numbers, the transmitted binary value is the actual coefficient value, whereas in the case of negative numbers a constant must be subtracted which is a function of the size. In the case of a size 4 code, the constant is 15₁₀. Thus a size 4 parameter of 0111₂ (7₁₀) would be interpreted as 7 − 15 = −8. A size of 5 has a constant of 31 so a transmitted code of 01010₂ (10₁₀) would be interpreted as 10 − 31 = −21.

This technique saves a bit because, for example, 63 values from −31 to +31 are coded with only five bits having only 32 combinations. This is possible because that extra bit is effectively encoded into the run/size parameter.
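The coefficient code can be sketched as below. The constant is taken to be one less than two to the power of the size, which agrees with the values of 15 and 31 quoted above for sizes 4 and 5; the function names are illustrative.

```python
def encode_value(value):
    """Return (size, bit string) for a non-zero coefficient."""
    if value == 0:
        raise ValueError("zero coefficients are carried by the run length")
    size = abs(value).bit_length()
    constant = (1 << size) - 1
    code = value if value > 0 else value + constant
    return size, format(code, "0{}b".format(size))


def decode_value(size, bits):
    """Recover the coefficient from its size and its bit string."""
    code = int(bits, 2)
    constant = (1 << size) - 1
    # Upper half of the code range is positive, lower half negative.
    return code if code >= (1 << (size - 1)) else code - constant
```

Here `decode_value(4, "0111")` yields −8 and `decode_value(5, "01010")` yields −21, as in the worked examples. Positive codes always begin with a one and negative codes with a zero.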

Figure 9.26 A complete spatial coding system which can compress an I picture or the prediction error in P and B pictures. See text for details.

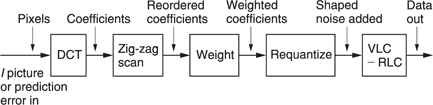

Figure 9.26 shows the whole spatial coding subsystem. Macroblocks are subdivided into DCT blocks and the DCT is calculated. The resulting coefficients are multiplied by the weighting matrix and then requantized. The coefficients are then reordered by the zig-zag scan so that full advantage can be taken of run-length and variable-length coding. The last non-zero coefficient in the scan is followed by the EOB symbol.

In predictive coding, sometimes the motion-compensated prediction is nearly exact and so the prediction error will be almost zero. This can also happen on still parts of the scene. MPEG takes advantage of this by sending a code to tell the decoder there is no prediction error data for the macroblock concerned.

The success of temporal coding depends on the accuracy of the vectors. Trying to reduce the bit rate by reducing the accuracy of the vectors is false economy as this simply increases the prediction error. Consequently for a given GOP structure it is only in the spatial coding that the overall bit rate is determined. The RLC/VLC coding is lossless and so its contribution to the compression cannot be varied. If the bit rate is too high, the only option is to increase the size of the coefficient-requantizing steps. This has the effect of shortening the wordlength of large coefficients, and rounding small coefficients to zero, so that the bit rate goes down. Clearly if taken too far the picture quality will also suffer because at some point the noise floor will become visible as some form of artifact.

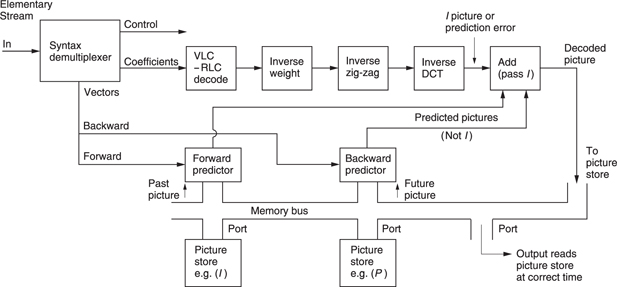

9.10 A bidirectional coder

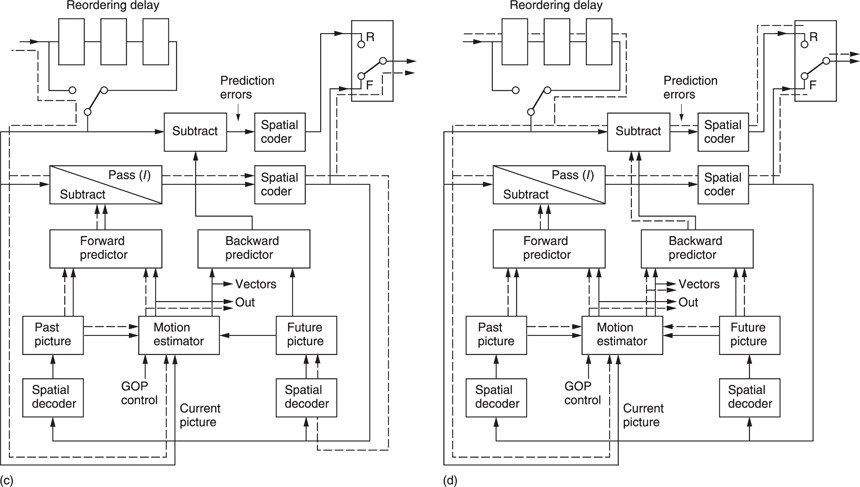

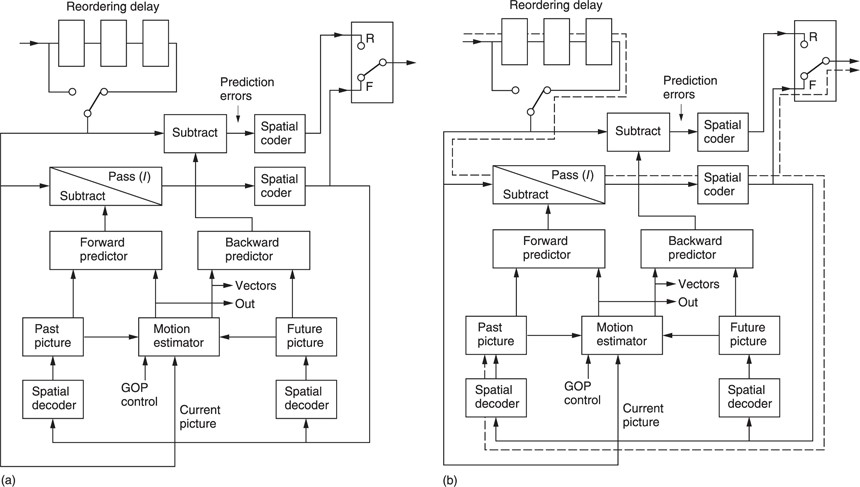

MPEG does not specify how an encoder is to be built or what coding decisions it should make. Instead it specifies the protocol of the bitstream at the output. As a result the MPEG-2 coder shown in Figure 9.27 is only an example.

Figure 9.27(a) shows the component parts of the coder. At the input is a chain of picture stores which can be bypassed for reordering purposes. This allows a picture to be encoded ahead of its normal timing when bidirectional coding is employed.

At the centre is a dual-motion estimator which can simultaneously measure motion between the input picture and both an earlier picture and a later picture. These reference pictures are held in frame stores. The vectors from the motion estimator are used locally to shift a picture in a frame store to form a predicted picture. This is subtracted from the input picture to produce a prediction error picture which is then spatially coded.

The bidirectional encoding process will now be described. A GOP begins with an I picture which is intra-coded. In Figure 9.27(b) the I picture emerges from the reordering delay. No prediction is possible on an I picture so the motion estimator is inactive. There is no predicted picture and so the prediction error subtractor is set simply to pass the input. The only processing which is active is the forward spatial coder which describes the picture with DCT coefficients. The output of the forward spatial coder is locally decoded and stored in the past picture frame store.

The reason for the spatial encode/decode is that the past picture frame store now contains exactly what the decoder frame store will contain, including the effects of any requantizing errors. When the same picture is used as a reference at both ends of a differential coding system, the errors will cancel out.
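The value of the local decode can be shown with a one-dimensional sketch. It assumes each picture is reduced to a single sample value and that spatial coding is replaced by simple rounding to a multiple of `step`; both are simplifications made for illustration and the function names are hypothetical.

```python
def quantize(value, step):
    """Round to the nearest multiple of step (a stand-in for spatial coding)."""
    return step * round(value / step)


def simulate(samples, step, closed_loop):
    """Return the decoder's reconstruction error for each picture."""
    encoder_ref = 0
    decoder_ref = 0
    errors = []
    for x in samples:
        residual = quantize(x - encoder_ref, step)  # transmitted prediction error
        decoder_ref = decoder_ref + residual        # what the decoder reconstructs
        # Closed loop: the encoder predicts from the locally decoded picture.
        # Open loop: the encoder predicts from the original picture instead.
        encoder_ref = decoder_ref if closed_loop else x
        errors.append(abs(x - decoder_ref))
    return errors


samples = [3 * i for i in range(1, 9)]  # a slowly rising brightness value
print("closed loop:", simulate(samples, 8, closed_loop=True))
print("open loop:  ", simulate(samples, 8, closed_loop=False))
```

With the local decoder in the loop, the error at the decoder never exceeds half a quantizing step. Without it, the encoder and decoder references diverge and the error grows from picture to picture.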

Having encoded the I picture, attention turns to the P picture. The input sequence is IBBP, but the transmitted sequence must be IPBB. Figure 9.27(c) shows that the reordering delay is bypassed to select the P picture. This passes to the motion estimator which compares it with the I picture and outputs a vector for each macroblock. The forward predictor uses these vectors to shift the I picture so that it more closely resembles the P picture. The predicted picture is then subtracted from the actual picture to produce a forward prediction error. This is then spatially coded. Thus the P picture is transmitted as a set of vectors and a prediction error image.
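The reordering from the input sequence to the transmitted sequence can be sketched as follows. The picture labels and the function name `transmission_order` are assumptions made for illustration; the rule applied is simply that each I or P picture is sent ahead of the B pictures which precede it at the input.

```python
def transmission_order(display_order):
    """Send every I or P picture before the B pictures that precede it."""
    output = []
    pending_b = []
    for picture in display_order:
        if picture.startswith("B"):
            pending_b.append(picture)   # held in the reordering delay
        else:
            output.append(picture)      # anchor picture bypasses the delay
            output.extend(pending_b)    # the held B pictures follow it
            pending_b = []
    output.extend(pending_b)            # B pictures with no later anchor here
    return output


print(transmission_order(["I0", "B1", "B2", "P3", "B4", "B5", "P6"]))
```

The decoder performs the opposite reordering so that the pictures are displayed in their original sequence.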

The P picture is locally decoded in the right-hand decoder. This takes the forward-predicted picture and adds the decoded prediction error to obtain exactly what the decoder will obtain.

Figure 9.27(d) shows that the encoder now contains an I picture in the left store and a P picture in the right store. The reordering delay is reselected so that the first B picture can be input. This passes to the motion estimator where it is compared with both the I and P pictures to produce forward and backward vectors. The forward vectors go to the forward predictor to make a B prediction from the I picture. The backward vectors go to the backward predictor to make a B prediction from the P picture. These predictions are simultaneously subtracted from the actual B picture to produce a forward prediction error and a backward prediction error. These are then spatially encoded. The encoder can then decide which direction of coding resulted in the best prediction; i.e. the smallest prediction error.

Not shown in the interests of clarity is a third signal path which creates a predicted B picture from the average of forward and backward predictions. This is subtracted from the input picture to produce a third prediction error. In some circumstances this prediction error may use fewer data than either forward or backward prediction alone.
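The choice between forward, backward and averaged prediction might be sketched as below. The sketch assumes that the size of the prediction error is judged by the sum of absolute differences over a macroblock, represented here by a short list of pixel values; MPEG does not mandate how an encoder makes this decision, so the criterion and the name `choose_prediction` are illustrative only.

```python
def sad(a, b):
    """Sum of absolute differences between two equal-length pixel lists."""
    return sum(abs(x - y) for x, y in zip(a, b))


def choose_prediction(actual, forward, backward):
    """Pick the prediction with the smallest error and return its residual."""
    average = [(f + b + 1) // 2 for f, b in zip(forward, backward)]
    candidates = {"forward": forward, "backward": backward, "average": average}
    costs = {name: sad(actual, pred) for name, pred in candidates.items()}
    best = min(costs, key=costs.get)
    residual = [a - p for a, p in zip(actual, candidates[best])]
    return best, residual, costs


# Hypothetical pixels from a region which is brightening steadily.
actual = [100, 102, 104, 106]
forward = [96, 98, 100, 102]     # shifted data from the earlier picture
backward = [104, 106, 108, 110]  # shifted data from the later picture
print(choose_prediction(actual, forward, backward))
```

In this example the averaged prediction gives a zero residual, which illustrates why the third signal path can use fewer data than either direction alone.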

As B pictures are never used to create other pictures, the encoder does not locally decode the B picture. After decoding and displaying the B picture the decoder will discard it. At the encoder the I and P pictures remain in their frame stores and the second B picture is input from the reordering delay.

Following the encoding of the second B picture, the encoder must reorder again to encode the second P picture in the GOP. This will be locally decoded and will replace the I picture in the left store. The stores and predictors switch designation because the left store is now a future P picture and the right store is now a past P picture. B pictures between them are encoded as before.

9.11 Slices

There is still some redundancy in the output of a bidirectional coder and MPEG is remarkably diligent in finding it. In I pictures, the DC coefficient describes the average brightness of an entire DCT block. In real video the DC component of adjacent blocks will be similar much of the time. A saving in bit rate can be obtained by differentially coding the DC coefficient.

In P and B pictures this is not done because these are prediction errors, not actual images, and the statistics are different. However, P and B pictures send vectors and instead the redundancy in these is exploited. In a large moving object, many macroblocks will be moving at the same velocity and their vectors will be the same. Thus differential vector coding will be advantageous.

As has been seen above, differential coding cannot be used indiscriminately as it is prone to error propagation. Periodically absolute DC coefficients and vectors must be sent and the slice is the logical structure which supports this mechanism. In I pictures, the first DC coefficient in a slice is sent in absolute form, whereas the subsequent coefficients are sent differentially. In P or B pictures, the first vector in a slice is sent in absolute form, but the subsequent vectors are differential.
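The slice mechanism can be illustrated with a minimal sketch following the description above: the first DC coefficient of a slice is kept in absolute form and the remainder are sent as differences. The values are invented and the function names are hypothetical; the actual bitstream syntax is not modelled.

```python
def encode_slice_dc(dc_values):
    """First value absolute, every later value as a difference."""
    if not dc_values:
        return []
    coded = [dc_values[0]]
    for previous, current in zip(dc_values, dc_values[1:]):
        coded.append(current - previous)
    return coded


def decode_slice_dc(coded):
    """Rebuild the DC values by accumulating the differences."""
    values = []
    for index, value in enumerate(coded):
        values.append(value if index == 0 else values[-1] + value)
    return values


slices = [[120, 122, 121, 125], [60, 61, 59, 62]]
coded = [encode_slice_dc(s) for s in slices]
print(coded)

coded[0][1] += 5  # simulate a transmission error in the first slice
print([decode_slice_dc(c) for c in coded])
```

The error corrupts every later value in the first slice, but the second slice starts from an absolute value and so decodes correctly. The same reasoning applies to differentially coded vectors in P and B pictures.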

Slices are horizontal picture strips which are one macroblock (16 pixels) high and which proceed from left to right across the screen. The sides of the picture must coincide with the beginning or the end of a slice in MPEG-2, but otherwise the encoder is free to decide how big slices should be and where they begin.

In the case of a central dark building silhouetted against the bright sky, there would be two large changes in the DC coefficients, one at each edge of the building. It may be advantageous to the encoder to break the width of the picture into three slices, one each for the left and right areas of sky and one for the building. In the case of a large moving object, different slices may be used for the object and the background.

Each slice contains its own synchronizing pattern, so following a transmission error, correct decoding can resume at the next slice. Slice size can also be matched to the characteristics of the transmission channel. For example, in an error-free transmission system the use of a large number of slices in a packet simply wastes data capacity on surplus synchronizing patterns. However, in a non-ideal system it might be advantageous to have frequent resynchronizing.

9.12 Handling interlaced pictures

MPEG-1 handles interlaced inputs by discarding alternate fields to produce a non-interlaced signal. In MPEG-2, spatial coding, predictive coding and motion compensation can still be performed using interlaced source material at the cost of considerable complexity. Despite that complexity, coders cannot be expected to perform as well with interlaced material.

Figure 9.28 shows that in an incoming interlaced frame there are two fields, each of which contains half of the lines in the frame. In MPEG-2 these are known as the top field and the bottom field. In video from a camera, these fields represent the state of the image at two different times. Where there is little image motion, this is unimportant and the fields can be combined, obtaining more effective compression. However, in the presence of motion the fields become increasingly decorrelated because of the displacement of moving objects from one field to the next.

This characteristic determines that MPEG-2 must be able to handle fields independently or together. This dual approach permeates all aspects of MPEG-2 and affects the definition of pictures, macroblocks, DCT blocks and zig-zag scanning.

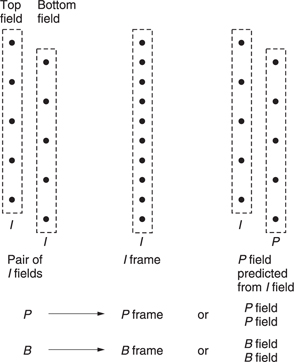

Figure 9.28 also shows how MPEG-2 designates interlaced fields. In picture types I, P and B, the two fields can be superimposed to make a frame-picture or the two fields can be coded independently as two field-pictures. As a third possibility, in I pictures only, the bottom field-picture can be predictively coded from the top field-picture to make an IP frame-picture.

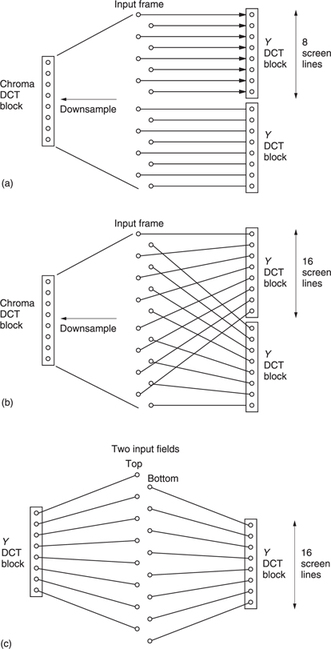

A frame-picture is one in which the macroblocks contain lines from both field types over a picture area 16 scan lines high. Each luminance macroblock contains the usual four DCT blocks but there are two ways in which these can be assembled. Figure 9.29(a) shows how a frame is divided into frame DCT blocks. This is identical to the progressive scan approach in that each DCT block contains eight contiguous picture lines. In 4:2:0, the colour difference signals have been downsampled by a factor of two and shifted as was shown in section 4.18. Figure 9.29(a) also shows how one 4:2:0 DCT block contains the chroma data from 16 lines in two fields.

Even small amounts of motion in any direction can destroy the correlation between odd and even lines and a frame DCT will result in an excessive number of coefficients. Figure 9.29(b) shows that instead the luminance component of a frame can also be divided into field DCT blocks. In this case one DCT block contains odd lines and the other contains even lines. In this mode the chroma still produces one DCT block from both fields as in Figure 9.29(a).

When an input frame is designated as two field-pictures, the macroblocks come from a screen area which is 32 lines high. Figure 9.29(c) shows that the DCT blocks contain the same data as if the input frame had been designated a frame-picture but with field DCT. Consequently it is only frame-pictures which have the option of field or frame DCT. These may be selected by the encoder on a macroblock-by-macroblock basis and, of course, the resultant bitstream must specify what has been done.
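The two ways of assembling luminance DCT blocks from a frame-picture macroblock can be sketched as follows. The sketch assumes that a macroblock is held as 16 rows of 16 samples and that row 0 belongs to the top field; the function name `split_macroblock` is illustrative.

```python
def split_macroblock(macroblock, field_dct):
    """Return four 8 x 8 luminance blocks from a 16 x 16 macroblock.

    Frame DCT: each block holds eight contiguous picture lines.
    Field DCT: the upper blocks hold one field, the lower blocks the other.
    """
    if field_dct:
        upper = macroblock[0::2]  # rows 0, 2, ... 14 (assumed top field)
        lower = macroblock[1::2]  # rows 1, 3, ... 15 (assumed bottom field)
    else:
        upper = macroblock[:8]
        lower = macroblock[8:]
    blocks = []
    for half in (upper, lower):
        blocks.append([row[:8] for row in half])  # left block
        blocks.append([row[8:] for row in half])  # right block
    return blocks


# Each sample is set to its row number so the line assignment is visible.
macroblock = [[row] * 16 for row in range(16)]
frame_blocks = split_macroblock(macroblock, field_dct=False)
field_blocks = split_macroblock(macroblock, field_dct=True)
print([r[0] for r in frame_blocks[0]], [r[0] for r in field_blocks[0]])
```

An encoder could try both arrangements for each macroblock and keep whichever yields fewer significant coefficients, signalling the choice in the bitstream.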

In a frame which contains a small moving area, it may be advantageous to encode as a frame-picture with frame DCT except in the moving area where field DCT is used. This approach may result in fewer bits than coding as two field-pictures.

In a field-picture and in a frame-picture using field DCT, a DCT block contains lines from one field type only and this must have come from a screen area sixteen scan lines high, whereas in progressive scan and frame DCT the area is only eight scan lines high. A given vertical spatial frequency in the image is sampled at points twice as far apart which is interpreted by the field DCT as a doubled spatial frequency, whereas there is no change in the horizontal spectrum.

Following the DCT calculation, the coefficient distribution will be different in field-pictures and field DCT frame-pictures. In these cases, the probability of coefficients is not a constant function of radius from the DC coefficient as it is in progressive scan, but is elliptical where the ellipse is twice as high as it is wide.

Using the standard 45° zig-zag scan with this different coefficient distribution would not have the required effect of putting all the significant coefficients at the beginning of the scan. To achieve this requires a different zig-zag scan, which is shown in Figure 9.30. This scan, sometimes known as the Yeltsin walk, attempts to match the elliptical probability of interlaced coefficients with a scan slanted at 67.5° to the vertical. This is clearly suboptimal, and is one of the reasons why MPEG-2 does not work so well with interlaced video.
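The weakness of the standard scan with a vertically stretched distribution can be shown with a short sketch. It generates the conventional 45° zig-zag order for an 8 × 8 block and assumes a hypothetical field DCT block whose only significant coefficients lie in the first column, i.e. at zero horizontal frequency and the six lowest vertical frequencies. The alternative scan of Figure 9.30 is not reproduced here.

```python
def zigzag_order(n=8):
    """Return (row, column) positions in conventional zig-zag order."""
    order = []
    for d in range(2 * n - 1):  # d indexes the anti-diagonals
        rows = range(max(0, d - n + 1), min(d, n - 1) + 1)
        diagonal = [(r, d - r) for r in rows]
        if d % 2 == 0:
            diagonal.reverse()  # even diagonals run from bottom-left upwards
        order.extend(diagonal)
    return order


order = zigzag_order()
print(order[:6])

# Assumed significant coefficients: rows 0 to 5 of column 0.
significant = {(row, 0) for row in range(6)}
last = max(order.index(position) for position in significant)
print("coefficients scanned to reach the last significant one:", last + 1)
```

Only six coefficients are significant, yet the 45° scan has to traverse 21 positions to reach the last of them. A scan which descends the block more steeply would gather them earlier and leave a longer run of zeros at the end.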

Motion estimation is more difficult in an interlaced system. Vertical detail can result in differences between fields and this reduces the quality of the match. Fields are vertically subsampled without filtering and so contain alias products. This aliasing will mean that the vertical waveform representing a moving object will not be the same in successive pictures and this will also reduce the quality of the match.

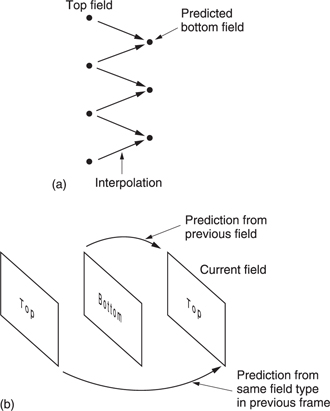

Even when the correct vector has been found, the match may be poor so the estimator fails to recognize it. If it is recognized, a poor match means that the quality of the prediction in P and B pictures will be poor and so a large prediction error or residual has to be transmitted. In an attempt to reduce the residual, MPEG-2 allows field-pictures to use motion-compensated prediction from either the adjacent field or from the same field type in another frame. In this case the encoder will use the better match. This technique can also be used in areas of frame-pictures which use field DCT.

The motion compensation of MPEG-2 has half-pixel resolution and this is inherently compatible with an interlace because an interpolator must be present to handle the half-pixel shifts. Figure 9.31(a) shows that in an interlaced system, each field contains half of the frame lines and so interpolating half-way between lines of one field type will actually create values lying on the sampling structure of the other field type. Thus it is equally possible for a predictive system to decode a given field type based on pixel data from the other field type or of the same type.

If when using predictive coding from the other field type the vertical motion vector contains a half-pixel component, then no interpolation is needed because the act of transferring pixels from one field to another results in such a shift.

Figure 9.31(b) shows that a macroblock in a given P field-picture can be encoded using a vector which shifts data from the previous field or from the field before that, irrespective of which frames these fields occupy. As noted above, field-picture macroblocks come from an area of screen 32 lines high and this means that the vector density is halved, resulting in larger prediction errors at the boundaries of moving objects.

As an option, field-pictures can restore the vector density by using 16 × 8 motion compensation where separate vectors are used for the top and bottom halves of the macroblock. Frame-pictures can also use 16 × 8 motion compensation in conjunction with field DCT. Whilst the 2 × 2 DCT block luminance structure of a macroblock can easily be divided vertically in two, in 4:2:0 the same screen area is represented by only one chroma macroblock of each component type. As it cannot be divided in half, this chroma is deemed to belong to the luminance DCT blocks of the upper field. In 4:2:2 no such difficulty arises.

MPEG-2 supports interlace simply because interlaced video exists in legacy systems and there is a requirement to compress it. However, where the opportunity arises to define a new system, interlace should be avoided. Legacy interlaced source material should be handled using a motion-compensated de-interlacer prior to compression in the progressive domain.

9.13 An MPEG-2 coder

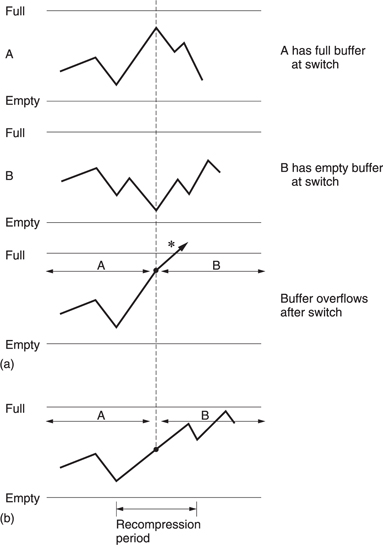

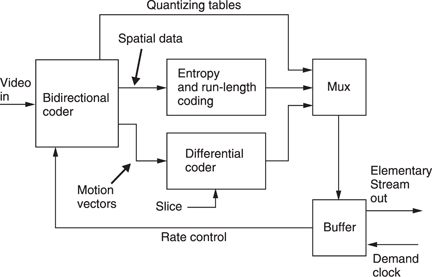

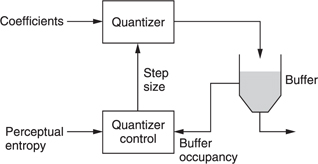

Figure 9.32 shows a complete MPEG-2 coder. The bidirectional coder outputs coefficients and vectors, and the quantizing table in use. The vectors of P and B pictures and the DC coefficients of I pictures are differentially encoded in slices and the remaining coefficients are RLC/VLC coded. The multiplexer assembles all these data into a single bitstream called an elementary stream. The output of the encoder is a buffer which absorbs the variations in bit rate between different picture types. The buffer output has a constant bit rate determined by the demand clock. This comes from the transmission channel or storage device. If the bit rate is low, the buffer will tend to fill up, whereas if it is high the buffer will tend to empty. The buffer content is used to control the severity of the requantizing in the spatial coders. The more the buffer fills, the bigger the requantizing steps get.
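The feedback from buffer content to requantizing can be sketched with a toy model. It assumes that the step size is a linear function of buffer fullness over an illustrative range of 1 to 31, and that the number of bits produced by a picture is its "complexity" divided by the step. Neither assumption comes from the standard, which leaves rate control to the encoder designer, and the function names are hypothetical.

```python
def quantizer_step(occupancy, buffer_size, min_step=1, max_step=31):
    """Map buffer fullness linearly onto a requantizing step size."""
    fullness = occupancy / buffer_size
    return min_step + round(fullness * (max_step - min_step))


def simulate(picture_complexity, channel_bits_per_picture, buffer_size):
    """Return (step, bits produced, buffer occupancy) for each picture."""
    occupancy = buffer_size // 2
    history = []
    for complexity in picture_complexity:
        step = quantizer_step(occupancy, buffer_size)
        bits = complexity // step  # toy model: coarser steps give fewer bits
        occupancy += bits - channel_bits_per_picture
        # Clamped only to keep the toy model bounded; a real coder has to
        # prevent overflow and underflow rather than clip them.
        occupancy = max(0, min(buffer_size, occupancy))
        history.append((step, bits, occupancy))
    return history


# Hypothetical complexities for an I picture followed by B, B and P pictures.
for entry in simulate([64000, 8000, 8000, 24000], 2000, 8000):
    print(entry)
```

The large I picture raises the buffer content, so the following pictures are requantized more coarsely until the constant channel rate has drained the excess.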

The buffer in the decoder has a finite capacity and the encoder must model the decoder's buffer occupancy so that it neither overflows nor underflows. An overflow might occur if an I picture is transmitted when the buffer content is already high. The buffer occupancy of the decoder depends somewhat on the memory access strategy of the decoder. Instead of defining a specific buffer size, MPEG-2 defines the size of a particular mathematical model of a hypothetical buffer. The decoder designer can use any strategy which implements the model, and the encoder can use any strategy which doesn't overflow or underflow the model. The elementary stream has a parameter called the video buffering verifier (VBV) which defines the minimum buffering assumptions of the encoder.
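A greatly simplified model of the hypothetical decoder buffer is sketched below. It assumes that the channel delivers a fixed number of bits in each picture period and that each coded picture is removed from the buffer instantaneously when it is decoded. The function name and the numbers are invented for illustration and the sketch does not reproduce the exact model defined by the standard.

```python
def check_decoder_buffer(picture_bits, bits_per_period, buffer_size, initial):
    """Return ("ok", None) or the failure type and the picture index."""
    occupancy = initial
    for index, bits in enumerate(picture_bits):
        if bits > occupancy:
            return ("underflow", index)  # picture has not fully arrived
        occupancy -= bits                # picture removed for decoding
        occupancy += bits_per_period     # channel refills during one period
        if occupancy > buffer_size:
            return ("overflow", index)   # incoming data have nowhere to go
    return ("ok", None)


pictures = [400, 100, 100, 200, 100, 100]  # hypothetical coded picture sizes
print(check_decoder_buffer(pictures, 150, 600, 450))
print(check_decoder_buffer(pictures, 150, 600, 300))
print(check_decoder_buffer([50] * 6, 150, 600, 450))
```

An encoder can run a model of this kind alongside its rate control and adjust the requantizing whenever a planned picture size would take the modelled occupancy outside its limits.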

Figure 9.32 An MPEG-2 coder. See text for details.