In this chapter, we want to put the concepts around graph databases into context and help our readers understand the historical and operational differences between older kinds of database management systems and our modern-day Neo4j installations.

To do this, we will cover the following:

- Some background information on databases in general

- A walk-through of the different approaches taken to manage and store data, from old-school navigational databases to NoSQL graph databases

- A short discussion explaining the graph database category, its strengths, and its weaknesses

This chapter should then set our readers up for some more practical discussions later in this book.

It's not always very clear when the first real database management system was formally conceived and implemented. Ever since Babbage designed the first Turing-complete computing system (the Analytical Engine, which Babbage never really managed to get built), we have known that computers would always need to have some kind of memory. This memory is responsible for holding the data upon which operations and calculations are executed. But when did this memory evolve into a proper database? What do we mean by a database anyway?

Let's tackle the latter question first. A database can be described as any kind of organized collection of data. Not all databases require a management system: think of the many spreadsheets and other file-based storage approaches that really don't have any kind of real material oversight imposed on them, let alone a true management system. A database management system, then, can technically be referred to as a set of computer programs that manage (in the broadest sense of the word) the database. It is a system that sits between the user-facing hardware and software components and the data. It can be described as any system that is responsible for and able to manage the structure of the data, is able to store and retrieve that data, and provides access to this data in a correct, trustworthy, performant, and secure fashion.

Databases as we know them, however, did not exist from the get-go of computing. At first, most computers used memory in a special-purpose, custom-made storage format that often relied on very manual, labor-intensive, and hardware-based storage operations. Many systems relied on things like punch cards for their instructions and datasets. It's not that long ago that computer systems evolved from these seemingly ancient, special-purpose technologies.

Having read many different articles on this subject, I believe that the need for "general purpose" database management systems, similar to the ones that we know today, started to increase as:

- The number of computerized systems significantly increased

- A number of breakthroughs were realized in terms of computer memory. Direct Access memory—memory that would not have to rely on lots of winding of tapes or punched cards—became available in the middle of the 1960s.

Both of these elements were necessary preconditions for any kind of multipurpose database management system to make sense. The first real database management systems seem to have cropped up in the 1960s, and I believe it would be useful to quickly run through the different phases in the development of database management systems.

We can establish the following three major phases in the half century that database management systems have been under development:

- Navigational databases

- Relational databases

- NoSQL databases

Let's look at these three system types so that we can then more accurately position graph databases such as Neo4j—the real subject of this book.

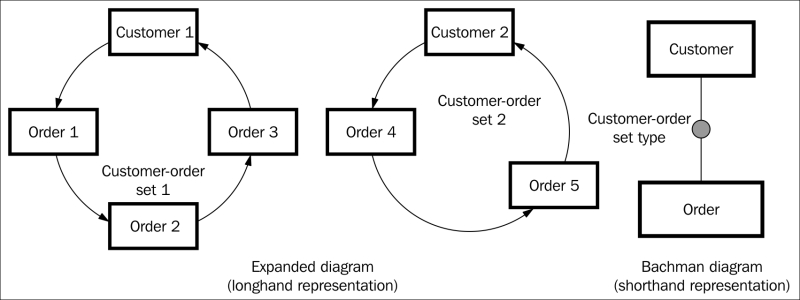

The original database management systems were developed by legendary computer scientists such as Charles Bachman, who gave a lot of thought to the way software should be built in a world of extremely scarce computing resources. Bachman invented a very natural (and as we will see later, graphical) way to model data: as a network of interrelated things. The starting point of such a database design was generally a Bachman Diagram (refer to the following diagram), which immediately feels like it expresses the model of the data structure in a very graph-like fashion:

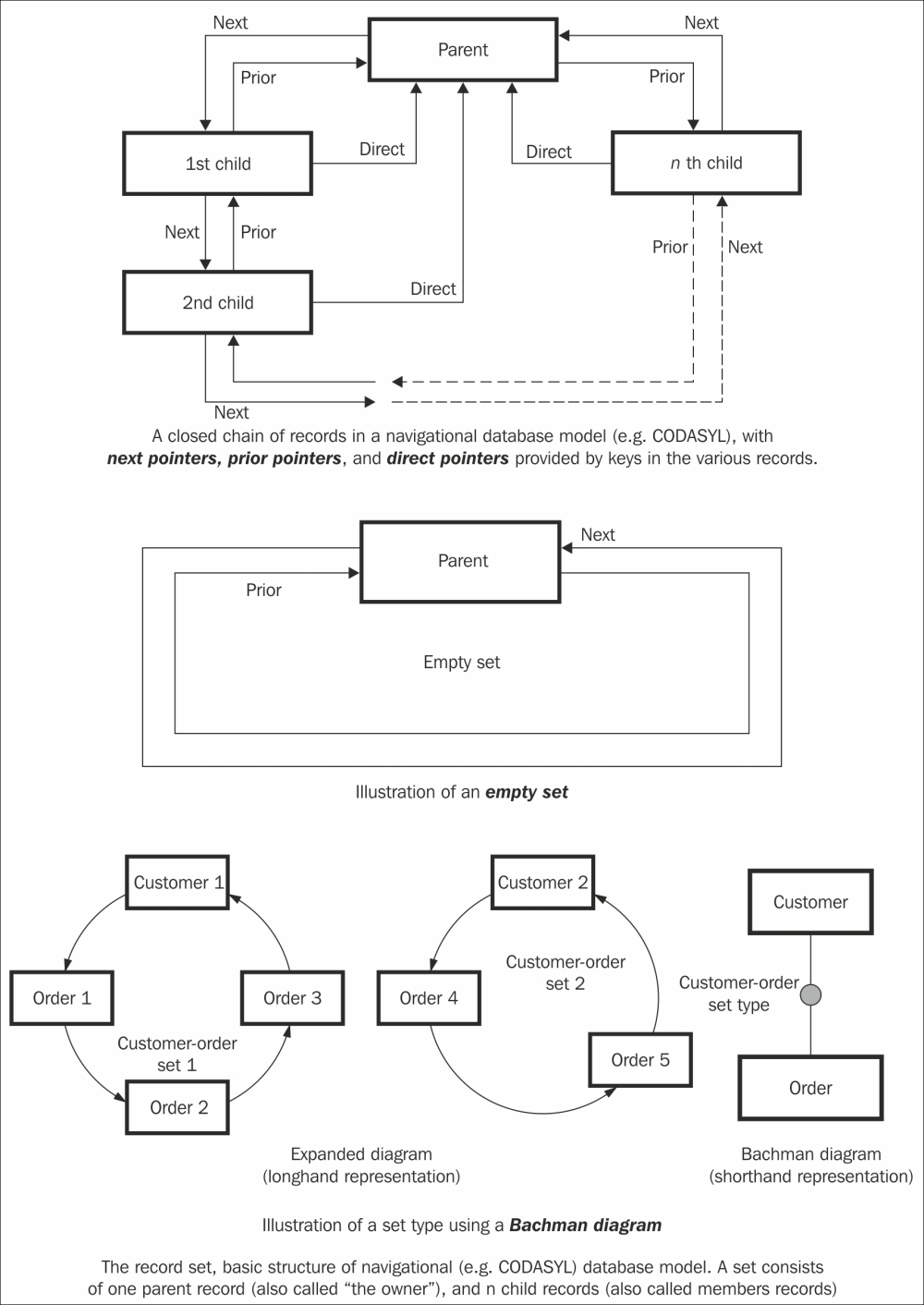

These diagrams were the starting points for database management systems that used either networks or hierarchies as the basic structure for their data. Both the network databases and the hierarchical database systems were built on the premise that data elements would be linked together by pointers.

An example of a navigational database model with pointers linking records

As you can probably imagine from the preceding discussion, these models were very interesting and resulted in a number of efforts that shaped the database industry. One of these efforts was the Conference on Data Systems Languages, better known under its acronym, CODASYL. CODASYL played a very important role in the information technology industry of the sixties and seventies. It shaped one of the world's dominant programming languages (COBOL), but also provided the basis for a whole slew of navigational databases such as IDMS (marketed by Cullinet). IBM's IMS, another navigational system of that era, is often classified as a hierarchical database, which offers a subset of the network model of CODASYL.

Navigational databases eventually gave way to a new generation of databases, the Relational Database Management Systems. Many reasons have been attributed to this shift, some technical and some commercial, but the main two reasons that seem to enjoy agreement across the industry are:

- The complexity of the models that they used. CODASYL is widely regarded as something that can only be worked with or understood by absolute experts, as we partly experienced in 1999, when the Y2K problem required many CODASYL experts to work overtime to migrate their systems into the new millennium.

- The lack of a declarative query mechanism for navigational database management systems. Most of those systems inherently provide a very imperative approach to finding data: the user has to tell the database what to do, step by step, instead of just being able to ask a question and having the database provide the answer.
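The difference between the imperative and the declarative style can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how any CODASYL product actually worked: the `Record` class, its `next_member` pointer, and the sample people are all hypothetical, and SQLite (through Python's standard `sqlite3` module) merely stands in for a declarative system.

```python
import sqlite3
from dataclasses import dataclass
from typing import Optional


@dataclass
class Record:
    """A hypothetical navigational record: data plus a pointer to the next record."""
    name: str
    city: str
    next_member: Optional["Record"] = None


# Build a chain of records linked by pointers (Ann -> Bob -> Cid).
cid = Record("Cid", "Antwerp")
bob = Record("Bob", "London", next_member=cid)
ann = Record("Ann", "Antwerp", next_member=bob)


def find_by_city_navigational(first: Optional[Record], city: str) -> list[str]:
    """Imperative style: we spell out how to walk the pointers, record by record."""
    found = []
    current = first
    while current is not None:
        if current.city == city:
            found.append(current.name)
        current = current.next_member
    return found


def find_by_city_declarative(city: str) -> list[str]:
    """Declarative style: we state what we want and the engine decides how to get it."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE person (name TEXT, city TEXT)")
    conn.executemany(
        "INSERT INTO person VALUES (?, ?)",
        [("Ann", "Antwerp"), ("Bob", "London"), ("Cid", "Antwerp")],
    )
    rows = conn.execute(
        "SELECT name FROM person WHERE city = ? ORDER BY name", (city,)
    ).fetchall()
    conn.close()
    return [name for (name,) in rows]


navigational_result = find_by_city_navigational(ann, "Antwerp")
declarative_result = find_by_city_declarative("Antwerp")
```

Both functions return the same names, but only the first one hard-codes the access path. If the way the records are chained together changes, the navigational function has to be rewritten, while the declarative query stays the same.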

These shortcomings set the stage for the transition from navigational to relational databases.

Relational Database Management Systems are probably the ones that we are most familiar with in 21st century computer science. Some of the history behind the creation of these databases is quite interesting. It started with a then little-known researcher at IBM's San Jose, CA, research facility, a gentleman called Edgar Codd. Mr. Codd was working at IBM on hard disk research projects, but was increasingly drawn into the world of the navigational database management systems that would be using these hard disks. Mr. Codd became increasingly frustrated with these systems, mostly with their lack of an intuitive query interface.

Essentially, you could store data in a network/hierarchy… but how would you ever get it back out?

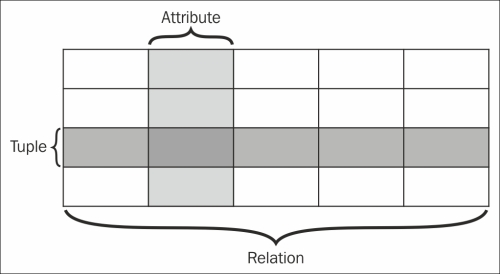

Relational database terminology

Codd wrote several papers on a different approach to database management systems that would not rely as much on linked lists of data (networks or hierarchies) but more on sets of data. He proved, using a mathematical representation called tuple calculus, that sets would be able to adhere to the same requirements that navigational database management systems were implementing. The only requirement was that there would be a proper query language that would ensure some of the consistency requirements on the database. This, then, became the inspiration for declarative query languages such as Structured Query Language, SQL. IBM's System R was one of the first implementations of such a system, but Software Development Laboratories, a small company co-founded by one illustrious Mr. Larry Ellison, actually beat IBM to the market with a commercial product. That product, Oracle, was released a couple of years later, by which time the company had been renamed Relational Software, Inc. It eventually became the flagship software product of Oracle Corporation, which we all know today.

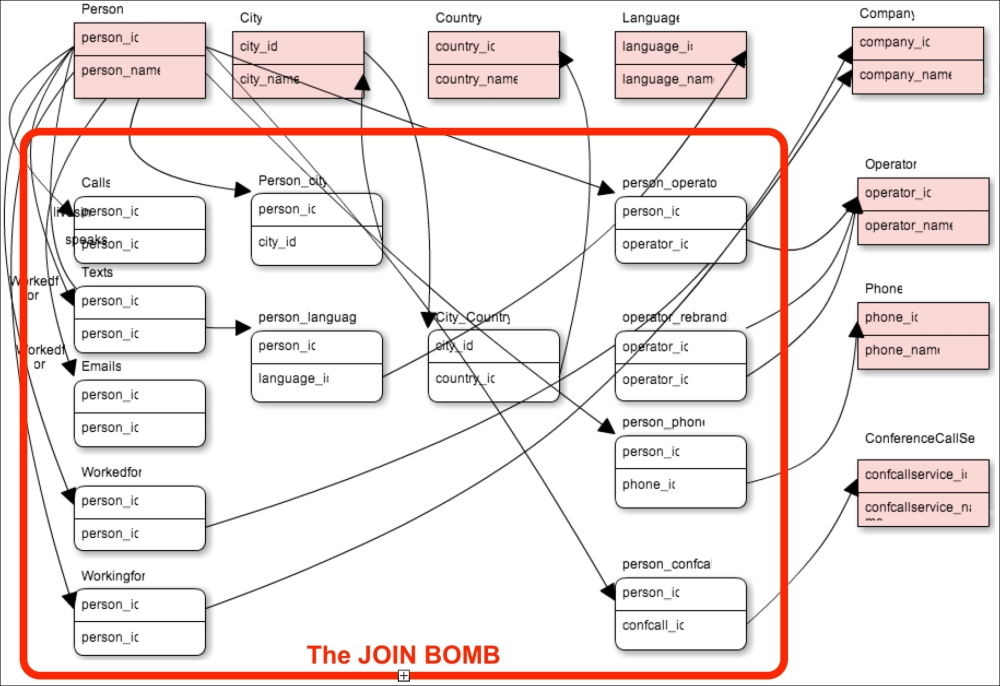

With relational databases came a process that all of us who have studied computer science know as normalization. This is the process that database modelers go through to minimize redundancy in the database and save disk storage, at the cost of introducing dependencies between tables. It involves splitting off data elements that appear more than once in a relational database table into their own table structures. Instead of storing the city where a person lives as a property of the person record, we would split the city into a separate table structure and store person entities in one table and city entities in another table. By doing so, we will often be creating the need to join these tables back together at query time. Depending on the cardinality of the relationship between these different tables (1:many, many:1, or many:many), this may require the introduction of a new type of table to execute these join operations: the join table, which links together two tables that have a many:many relationship.
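A minimal sketch of this normalization step, using SQLite through Python's standard `sqlite3` module, might look as follows. The table names, column names, and sample rows are assumptions made up for this illustration. The city is split off into its own table (a many:1 relationship from person to city), and a hypothetical `person_language` join table links people to the languages they speak (a many:many relationship).

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    -- The city is stored once and referenced from person (many:1).
    CREATE TABLE city (id INTEGER PRIMARY KEY, name TEXT UNIQUE);
    CREATE TABLE person (
        id INTEGER PRIMARY KEY,
        name TEXT,
        city_id INTEGER REFERENCES city(id)
    );
    -- A many:many relationship needs a join table between the two entities.
    CREATE TABLE language (id INTEGER PRIMARY KEY, name TEXT UNIQUE);
    CREATE TABLE person_language (
        person_id INTEGER REFERENCES person(id),
        language_id INTEGER REFERENCES language(id),
        PRIMARY KEY (person_id, language_id)
    );
    INSERT INTO city VALUES (1, 'Antwerp'), (2, 'London');
    INSERT INTO person VALUES (1, 'Ann', 1), (2, 'Bob', 2), (3, 'Cid', 1);
    INSERT INTO language VALUES (1, 'Dutch'), (2, 'English');
    INSERT INTO person_language VALUES (1, 1), (1, 2), (2, 2), (3, 1);
    """
)

# One join brings person and city back together at query time.
people_in_antwerp = [
    name
    for (name,) in conn.execute(
        """
        SELECT p.name
        FROM person p
        JOIN city c ON c.id = p.city_id
        WHERE c.name = 'Antwerp'
        ORDER BY p.name
        """
    )
]

# The many:many question needs two joins, both passing through the join table.
english_speakers = [
    name
    for (name,) in conn.execute(
        """
        SELECT p.name
        FROM person p
        JOIN person_language pl ON pl.person_id = p.id
        JOIN language l ON l.id = pl.language_id
        WHERE l.name = 'English'
        ORDER BY p.name
        """
    )
]
```

Each city name and each language name is stored exactly once, which is the storage saving that normalization is after. The price is that even simple questions now require one or more joins.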

I think it is safe to say that Relational Database Management Systems have served our industry extremely well in the past 30 years, and will probably continue to do so for a very long time to come. However, they also came with a couple of issues, which are interesting to point out as they will (again) set the stage for another generation of database management systems:

- Relational Database Systems suffer at scale. As the sets or tables of the relational systems grow longer, the query response times of the relational database systems generally get worse. Much worse. For most use cases, this was and is not necessarily a problem, but, as we all know, size does matter, and this deficiency certainly does harm the relational model.

- Relational Databases are quite "anti-relational". As the domains of our applications (and therefore, the relational models that represent those domains) become more complex, relational systems really start to become very difficult to work with. More specifically, join operations, where users ask questions of the database that pull data from a number of different sets/tables, are extremely complicated and resource intensive for the database management system. There is a true limit to the number of join operations that such a system can effectively perform, before the join bombs go off and the system becomes very unresponsive.

Relational database schema with explosive join tables

- Relational databases impose a schema even before we put any data into the database, and even if that schema turns out to be too rigid. Many of us work in domains where it is very difficult to apply a single database schema to all the elements of the domain that we are working with. Increasingly, we are seeing the need for a flexible type of schema that would cater to a more iterative, more agile way of developing software.
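To give a feel for why joins pile up as questions get more connected, here is a small sketch, again using SQLite through Python's `sqlite3` module. The `person` and `friendship` tables and the helper names are hypothetical, and friendships are treated as one-directional to keep the example short. The point is only that every extra hop in a "friend of a friend" question adds another self-join to the query.

```python
import sqlite3


def friends_at_depth_sql(depth: int) -> str:
    """Build a query for people `depth` hops away; each extra hop adds one self-join."""
    if depth < 1:
        raise ValueError("depth must be at least 1")
    joins = "".join(
        f" JOIN friendship f{i} ON f{i}.person_id = f{i - 1}.friend_id"
        for i in range(2, depth + 1)
    )
    return (
        f"SELECT DISTINCT p.name FROM friendship f1{joins}"
        f" JOIN person p ON p.id = f{depth}.friend_id"
        " WHERE f1.person_id = ? ORDER BY p.name"
    )


conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE person (id INTEGER PRIMARY KEY, name TEXT);
    -- Join table for the many:many "friend" relationship (one row per direction).
    CREATE TABLE friendship (person_id INTEGER, friend_id INTEGER);
    INSERT INTO person VALUES (1, 'Ann'), (2, 'Bob'), (3, 'Cid'), (4, 'Dee');
    INSERT INTO friendship VALUES (1, 2), (2, 3), (3, 4);
    """
)


def friends_at_depth(person_id: int, depth: int) -> list[str]:
    """Run the generated query and return the names found at exactly `depth` hops."""
    rows = conn.execute(friends_at_depth_sql(depth), (person_id,)).fetchall()
    return [name for (name,) in rows]
```

Calling `friends_at_depth(1, 3)` runs a query with three joins over four rows. On a toy dataset this is instant, but on tables with millions of rows each additional join multiplies the work the database may have to do.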

As you will see in the following sections, the next generation of database management systems is definitely not settling for what we have today, and is attempting to push innovation forward by providing solutions to some of these extremely complex problems.

The new millennium and the explosion of web content marked a new era for database management systems as well. A whole generation of new databases emerged, all categorized under the somewhat confrontational name of NOSQL databases. While it is not clear where the naming came from, it is pretty clear that it was born out of frustration with relational systems at that point in time. While most of us nowadays treat NOSQL as an acronym for Not Only SQL, the naming still remains a somewhat controversial topic among data buffs.

The basic philosophy of most NOSQL adepts, I believe, is that of the "task-oriented" database management system. It's like the old saying goes: if all you have is a hammer, everything looks like a nail. Well, now we have different kinds of hammers, screwdrivers, chisels, shovels, and many more tools up our sleeves to tackle our data problems. The underlying assumption then, of course, is that you are better off using the right tool for the job if you possibly can, and that for many workloads, the characteristics of the relational database may actually prove to be counterproductive. Other databases, not just SQL databases, are available now, and we can basically group them into four categories:

- Key-Value stores

- Column-Family stores

- Document stores

- Graph databases

Let's get into the details of each of these stores.

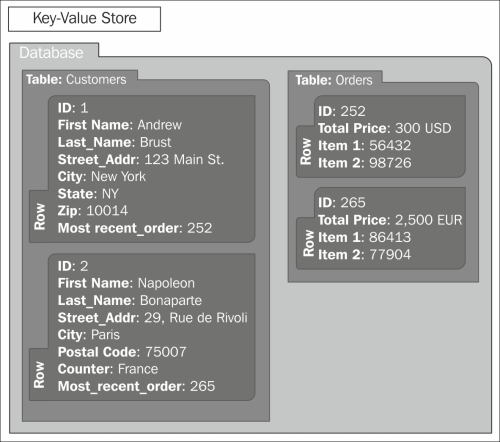

Key-Value stores are probably the simplest type of task-oriented NOSQL databases. The original task at hand did not call for a very complicated data model: Key-Value stores are mostly based on a paper, known as the Dynamo paper, that Amazon published at the biennial ACM Symposium on Operating Systems Principles. The data model discussed in that paper is that of Amazon's shopping cart system, which was required to be always available and to support extreme loads. Therefore, the underlying data model of the Key-Value store family of database management systems is indeed very simple: keys and values are aligned in an inherently schema-less data model. And indeed, scalability is typically extremely high, with clustered systems of thousands of commodity hardware machines existing at several high-end implementations such as Amazon and many others. Examples of Key-Value stores include Amazon's DynamoDB, Riak, Project Voldemort, Redis, and the newer Aerospike. The following figure illustrates the data model:

A simple Key-Value database
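The Key-Value data model described above is simple enough to sketch as a toy in-memory class. This is an illustration only and says nothing about how Dynamo or any of the products mentioned are implemented: the `KeyValueStore` class, its method names, and the shopping cart key are all made up for the example.

```python
import json
from typing import Optional


class KeyValueStore:
    """A toy in-memory key-value store: opaque values, looked up by key only."""

    def __init__(self) -> None:
        self._data: dict[str, bytes] = {}

    def put(self, key: str, value: bytes) -> None:
        """Store (or overwrite) the value for a key."""
        self._data[key] = value

    def get(self, key: str) -> Optional[bytes]:
        """Return the value for a key, or None if the key is unknown."""
        return self._data.get(key)

    def delete(self, key: str) -> None:
        """Remove a key; deleting an unknown key is not an error."""
        self._data.pop(key, None)


store = KeyValueStore()

# The store does not interpret the value; the application chooses the encoding.
cart = {"items": ["book", "pen"]}
store.put("cart:ann", json.dumps(cart).encode("utf-8"))

raw = store.get("cart:ann")
restored_cart = json.loads(raw.decode("utf-8")) if raw is not None else None
```

Because the store only ever sees a key and a blob of bytes, there is no schema to manage and no way to ask questions about what is inside the values. That trade-off is what makes this model so easy to distribute over many machines.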

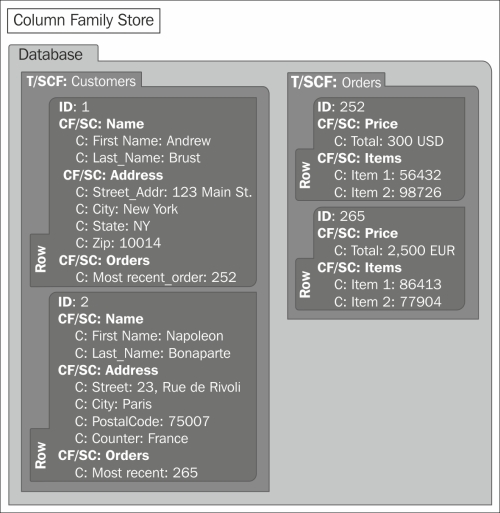

A Column-Family store is another example of a very task-oriented type of solution. The data model is a bit more complex than that of the Key-Value store, as it now includes the concept of a very wide, sparsely populated table structure in which each row is identified by a key and its columns are grouped into a number of families. Like the Dynamo system, Column-Family stores also originated from a very specific need of a very specific company (in this case, Google), which decided to roll its own solution. Google published its Bigtable paper in 2006 at the Operating Systems Design and Implementation (OSDI) symposium. The paper not only started a lot of work within Google, but also yielded interesting open source implementations such as Apache Cassandra and HBase. In many cases, these systems are combined with batch-oriented systems that use MapReduce as the processing model for advanced querying.

A simple Column-Family data model
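One way to picture the wide, sparse table of a Column-Family store is as nested maps: row key, then column family, then column name. The sketch below is a deliberately simplified, hypothetical model (the `ColumnFamilyTable` class and its methods are invented for this example) and leaves out the timestamps, versioning, and on-disk layout that real systems add.

```python
class ColumnFamilyTable:
    """A toy column-family table: row key -> column family -> column -> value."""

    def __init__(self, families):
        # Column families are fixed up front; columns inside them are not.
        self._families = set(families)
        self._rows = {}

    def put(self, row_key, family, column, value):
        """Set one cell; only the cells that are written take up any space."""
        if family not in self._families:
            raise KeyError(f"unknown column family: {family}")
        self._rows.setdefault(row_key, {}).setdefault(family, {})[column] = value

    def get_family(self, row_key, family):
        """Return a copy of all columns stored for one row in one family."""
        return dict(self._rows.get(row_key, {}).get(family, {}))


users = ColumnFamilyTable(families=["profile", "activity"])

# Rows are sparse: each row only stores the columns it actually has.
users.put("ann", "profile", "name", "Ann")
users.put("ann", "profile", "city", "Antwerp")
users.put("bob", "profile", "name", "Bob")
users.put("bob", "activity", "last_login", "2014-08-01")

ann_profile = users.get_family("ann", "profile")
ann_activity = users.get_family("ann", "activity")
```

Ann's row has no `activity` columns and Bob's row has no `city`, yet neither row carries empty placeholders for the missing cells.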

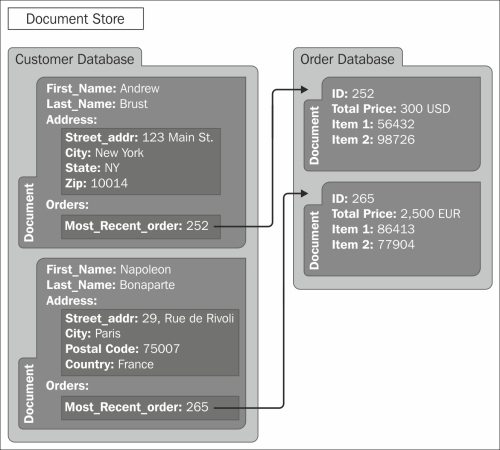

Sparked by the explosive growth of web content and applications, the Document category is probably one of the most well-known and most used types of NOSQL databases. The key concept in a Document store, as the name suggests, is that of a semi-structured unit of information often referred to as a document. This can be an XML, JSON, YAML, OpenOffice, MS Office, or whatever kind of document that you may want to use, which can simply be stored and retrieved in a schema-less fashion. Examples of Document stores include the wildly popular MongoDB, as well as Couchbase, MarkLogic, and Virtuoso.

A simple Document data model
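As a rough sketch of the Document model, the toy class below stores JSON documents by identifier and allows a simple lookup on a top-level field. The `DocumentStore` class, its methods, and the sample documents are assumptions made for this illustration and do not mirror the API of MongoDB or any other product.

```python
import json


class DocumentStore:
    """A toy document store: schema-less JSON documents saved under an id."""

    def __init__(self) -> None:
        self._docs: dict[str, str] = {}

    def save(self, doc_id: str, document: dict) -> None:
        """Serialize the whole document and store it as a single unit."""
        self._docs[doc_id] = json.dumps(document)

    def load(self, doc_id: str) -> dict:
        """Read the whole document back; raises KeyError for an unknown id."""
        return json.loads(self._docs[doc_id])

    def find(self, field: str, value) -> list[str]:
        """Return the ids of documents whose top-level `field` equals `value`."""
        return sorted(
            doc_id
            for doc_id, raw in self._docs.items()
            if json.loads(raw).get(field) == value
        )


store = DocumentStore()

# The two documents have different shapes, and no schema has to be declared first.
store.save(
    "person:ann",
    {"name": "Ann", "city": "Antwerp", "languages": ["Dutch", "English"]},
)
store.save(
    "person:bob",
    {"name": "Bob", "employer": {"name": "Acme", "since": 2012}},
)

antwerp_ids = store.find("city", "Antwerp")
bob_since = store.load("person:bob")["employer"]["since"]
```

Unlike the Key-Value sketch, the store can look inside the documents, which is what allows it to answer the `find` question. A document that lacks the field is simply skipped.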

Last but not least, and of course the subject of most of this book, are the graph-oriented databases. They are often also categorized in the NOSQL category, but as you will see later, are inherently very different. This is not least because the task that graph databases are aiming to address has everything to do with the graphs and graph theory that we discussed in Chapter 1, Graphs and Graph Theory – an Introduction. Graph databases such as Neo4j aim to provide their users with a better way to manage the complexity of the dense network of the data structure at hand. Implementations of this model are not limited to Neo4j, of course. Other closed and open source solutions such as AllegroGraph, Dex, FlockDB, InfiniteGraph, OrientDB, and Sones are examples of implementations at various maturity levels.
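To hint at what such a data model looks like, here is a minimal in-memory sketch of nodes with properties and typed relationships, plus a breadth-first traversal. It is not Neo4j's storage format or API: the `Node` class, the `relate` method, the `reachable_names` function, and the sample data are hypothetical names chosen for this illustration.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass(eq=False)  # identity-based equality keeps nodes hashable
class Node:
    """A toy graph node with a label, properties, and outgoing relationships."""
    label: str
    properties: dict
    # Outgoing relationships as (relationship_type, target_node) pairs.
    outgoing: list = field(default_factory=list)

    def relate(self, rel_type: str, other: "Node") -> None:
        """Add a directed, typed relationship from this node to `other`."""
        self.outgoing.append((rel_type, other))


def reachable_names(start: Node, rel_type: str, max_hops: int) -> list[str]:
    """Follow `rel_type` relationships breadth-first, up to `max_hops` away."""
    seen = {start}
    names = []
    queue = deque([(start, 0)])
    while queue:
        node, hops = queue.popleft()
        if hops == max_hops:
            continue  # do not expand nodes that are already at the hop limit
        for current_type, target in node.outgoing:
            if current_type == rel_type and target not in seen:
                seen.add(target)
                names.append(target.properties["name"])
                queue.append((target, hops + 1))
    return names


ann = Node("Person", {"name": "Ann"})
bob = Node("Person", {"name": "Bob"})
cid = Node("Person", {"name": "Cid"})
dee = Node("Person", {"name": "Dee"})
antwerp = Node("City", {"name": "Antwerp"})

ann.relate("KNOWS", bob)
bob.relate("KNOWS", cid)
cid.relate("KNOWS", dee)
ann.relate("LIVES_IN", antwerp)

within_two_hops = reachable_names(ann, "KNOWS", 2)
```

The traversal simply follows references from node to node. Going one hop further means raising `max_hops`, not restructuring the query, and the cost depends on the part of the network that is actually visited rather than on the total amount of data.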

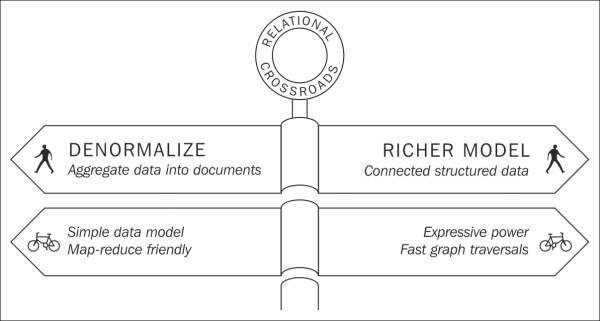

So, now that we understand the different types of NOSQL databases, it would probably be useful to provide some general classification of this broad category of database management systems, in terms of their key characteristics. In order to do that, I am going to use a mental framework that I owe to Martin Fowler (from the book NoSQL Distilled, which he co-authored with Pramod Sadalage) and Alistair Jones (in one of his many great talks on this subject). The reason for doing so is that both of these gentlemen and I share the viewpoint that NOSQL essentially falls into two categories, on two sides of the relational crossroads:

- On one side of the crossroads are the aggregate stores. These are the Key-Value-, Column-Family-, and Document-oriented databases, as they all share a number of characteristics:

  - They all have a fundamental data model that is based around a single, rich structure of closely-related data. In the field of software engineering called domain driven design, professionals often refer to this as an "aggregate", hence the reference to the fact that these NOSQL databases are all aggregate-oriented database management systems.

  - They are clearly optimized for use cases in which the read patterns align closely with the write patterns. What you read is what you have written. Any other use case, where you would potentially like to combine different types of data that you had previously written in separate key-value pairs / documents / rows, would require some kind of application-level processing, possibly in batch if at some serious scale.

  - They all give up one or more characteristics of the relational database model in order to gain benefits elsewhere. Different implementations of the aggregate stores will allow you to relax your consistency/transactional requirements and will give you the benefit of enhanced (and sometimes, massive) scalability. This, obviously, is no small thing if your problem is one of scale.

Relational crossroads, courtesy of Alistair Jones

- On the other side of the crossroads are the graph databases, such as Neo4j. One could argue that graph databases actually take relational databases:

  - One step further, by enhancing the data model with a more granular, more expressive method for storing data, thereby allowing much more complex questions to be asked of the database, and effectively, as we later will see, defusing the join bomb.

  - Back to their roots, by reusing some of the original ideas of navigational databases, but of course learning from the lessons of the relational database world by reducing complexity and facilitating easy querying capabilities.
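To make the aggregate side of the crossroads a little more tangible, consider the sketch below. It assumes a hypothetical set of order aggregates kept in a plain Python dictionary; the keys, field names, and helper functions are invented for this example. Reading back one aggregate is a single lookup, but a question that cuts across aggregates has to be answered by application code that scans all of them.

```python
# Each order is one aggregate: written as a unit and read back as a unit.
orders = {
    "order:1": {"customer": "Ann", "items": ["book", "pen"]},
    "order:2": {"customer": "Bob", "items": ["pen"]},
    "order:3": {"customer": "Cid", "items": ["lamp"]},
}


def read_order(order_id: str) -> dict:
    """The read pattern the store is optimized for: one key, one aggregate."""
    return orders[order_id]


def customers_sharing_items_with(customer: str) -> list[str]:
    """A cross-aggregate question, answered by scanning every order in application code."""
    their_items = {
        item
        for order in orders.values()
        if order["customer"] == customer
        for item in order["items"]
    }
    return sorted(
        {
            order["customer"]
            for order in orders.values()
            if order["customer"] != customer and their_items & set(order["items"])
        }
    )


first_order = read_order("order:1")
shares_with_ann = customers_sharing_items_with("Ann")
```

With three orders the scan is trivial. At serious scale, the same question would typically become a batch job, whereas a graph database would treat it as a traversal starting from the customer in question.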

With that introduction and classification behind us, we are now ready to take a closer look at graph databases.